Abstract

Introduction

The objective of the study was to evaluate the cost-effectiveness of glecaprevir/pibrentasvir versus other direct-acting antivirals (DAAs) for treating chronic hepatitis C virus (HCV) infections in Japan.

Methods

We developed a health state transition model to capture the natural history of HCV. A cost-effectiveness analysis of DAAs was performed from the perspective of a public healthcare payer in Japan, with a lifetime horizon and annual cycles. Treatment attributes, baseline demographics, transition probabilities, health-state utilities, and cost data were extracted from publications. Costs and outcomes were discounted at 2% per annum. In the base case we focused on genotype 1 (GT1) treatment-naïve patients without cirrhosis. The scenario analysis examined a pan-genotypic treatment in GT1–3 (i.e., portfolio), treatment-naïve, and treatment-experienced patients. The portfolio cost-effectiveness of DAAs was derived by calculating a weighted average of patient segments defined by treatment history, cirrhosis status, and genotype.

Results

The base case results indicated that glecaprevir/pibrentasvir was dominant (i.e., generating higher quality-adjusted life years [QALYs] and lower lifetime costs) compared to all other DAAs. The predicted lifetime risk of hepatocellular carcinoma was 3.66% for glecaprevir/pibrentasvir and sofosbuvir/ledipasvir, 4.99% for elbasvir/grazoprevir, and 5.27% for daclatasvir/asunaprevir/beclabuvir. In scenario analysis the glecaprevir/pibrentasvir (GLE/PIB) portfolio dominated the sofosbuvir (SOF)-based portfolio (namely sofosbuvir/ledipasvir in GT1–2 and sofosbuvir + ribavirin in GT3). The base case probabilistic sensitivity analysis (PSA) showed that glecaprevir/pibrentasvir was cost-effective in 93.4% of the simulations for a willingness-to-pay/QALY range of Japanese yen (JPY) 1.6–20 million. The PSA for the portfolio scenario indicated that the GLE/PIB portfolio was cost-effective in 100% of simulations until the willingness-to-pay/QALY reached JPY 5.2 million; this proportion decreased to 69.4% at a willingness-to-pay/QALY of JPY 20 million. Results were also robust in deterministic sensitivity analyses.

Conclusion

In GT1 treatment-naïve non-cirrhotic patients GLE/PIB was a cost-effective strategy compared to other DAAs. When a pan-genotypic framework was used, the GLE/PIB portfolio dominated the SOF-based portfolio.

Why carry out this study?

Glecaprevir/pibrentasvir (GLE/PIB) is the first and only ribavirin-free pan-genotypic (i.e., genotype [GT] 1–6) regimen approved to treat chronic hepatitis C virus (HCV) infections with or without compensated cirrhosis in Japan. However, no study comparing GLE/PIB to other approved direct-acting antiviral (DAA) comparators in Japan has been published to date.

This study examined the cost-effectiveness of GLE/PIB versus approved DAAs for treating GT1–3 HCV infections in Japan.

What was learned from the study?

GLE/PIB was cost-saving and had better outcomes, making GLE/PIB a dominant treatment option compared to other DAAs and no treatment in GT1 treatment-naïve patients without cirrhosis.

When a pan-genotypic framework was used, the GLE/PIB portfolio dominated (i.e., better outcomes at a lower cost) a sofosbuvir-based portfolio.

Introduction

Japan has one of the highest rates of hepatitis C virus (HCV) infection in the industrialized world, with approximately 2 million people living with the disease [1]. The prevalence of HCV in the general population is estimated to be between 0.6% and 0.9% [2]. Genotype 1b (GT1b) has been reported as the most prevalent subtype (65%), followed by GT2 (34%) [3] (see Notes). As liver disease progresses, some patients may develop cirrhosis and eventually progress from compensated cirrhosis to decompensated cirrhosis (DCC), hepatocellular carcinoma (HCC), and liver failure. Japan also has the highest prevalence of HCC amongst the industrialized countries, with HCV and its complications being the leading causes [4]. In fact, HCV-related HCC accounts for 70% of HCC cases in Japan [5]. Moreover, HCC is the fifth leading cause of death in Japan, and the societal costs associated with HCC morbidity and mortality are high [6]. In response, Japan introduced liver cancer screening programs in the 1980s, as well as awareness programs targeted at the public and healthcare providers in the 1990s [7]. Between 2000 and 2005, there was an estimated 55% decrease in undiagnosed HCV carriers [8]. In addition, the estimated societal burden of HCC fell from Japanese yen (JPY) 863.1 billion in 1996 to JPY 607.2 billion in 2014 [6].

Sustained virologic response (SVR) is a marker for viral eradication in HCV infection. The introduction of all-oral, direct-acting antivirals (DAAs) has drastically improved SVR rates and management of chronic HCV [7, 9, 10]. In addition, SVR achieved with DAA treatment has been demonstrated to persist long-term [11, 12]. In a cohort of 10,000 GT1b Japanese patients, the total economic savings of treatment with approved DAAs versus no treatment (calculated as: [savings due to treatment from avoiding projected health state costs] + [quality-adjusted life years (QALYs) gained by treatment] × [value of QALY] − weighted DAA costs) was estimated to be JPY 7.5 million and JPY 12.8 million per patient, at willingness-to-pay (WTP) thresholds of JPY 4 million and JPY 6 million per QALY, respectively. The considerable direct and indirect savings may be attributed to avoidance of HCC and DCC [13].
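The savings formula quoted above can be written out as a short calculation. The sketch below is illustrative only: the function name and every input value are hypothetical and are not taken from the cited study [13].

```python
def total_economic_savings(avoided_health_state_costs, qalys_gained,
                           value_per_qaly, weighted_daa_cost):
    """Savings = avoided health state costs + QALYs gained x value of a QALY - DAA costs."""
    return avoided_health_state_costs + qalys_gained * value_per_qaly - weighted_daa_cost


# Hypothetical per-patient inputs in JPY (assumptions, not study data).
example = total_economic_savings(
    avoided_health_state_costs=3_000_000,
    qalys_gained=2.0,
    value_per_qaly=4_000_000,   # WTP threshold used as the value of one QALY
    weighted_daa_cost=5_000_000,
)
print(example)  # 6000000.0
```

Raising the value placed on a QALY increases the estimated savings, which is why the cited estimates differ between the JPY 4 million and JPY 6 million thresholds.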

The HCV treatment landscape in Japan has some differentiating features compared to other countries. First, regulatory approval in Japan requires specific clinical trials performed in Japan amongst Japanese patients. Second, approved HCV treatments in Japan include some treatment options that are not commonly used in other countries. In September 2017, glecaprevir/pibrentasvir (GLE/PIB) became the first and only 8-week treatment option in Japan for GT1 and GT2 HCV-infected patients who do not have cirrhosis and are naïve to DAA treatment. These patients represent the majority of people living with HCV in Japan. The GLE/PIB regimen can also be prescribed as a 12-week course for patients infected with GT3–6, those with compensated cirrhosis, and those not cured with previous DAA treatment [14, 15]. However, to our knowledge, no study comparing GLE/PIB to other approved all-oral, interferon- and ribavirin-free DAA comparators in Japan has been published to date. Because of the unique HCV treatment landscape, a cost-effectiveness analysis of HCV therapies in Japan would broaden the scope of information on HCV and may offer insight into less common treatment options.

We based our model on previously published models of the natural history of chronic HCV infection, including Virabhak et al. [16], Ishida and Yotsuyanagi [17], and Hartwell et al. [18]. Most notably, we extended the same natural history model structure to capture lifetime disease progression of patients with HCV regardless of treatment history (i.e., treatment-naïve or treatment-experienced) and genotype (i.e., GT1–3).

Methods

Natural History Model

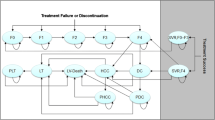

The natural history model structure is presented in Fig. 1. The model was made up of eight health states including five disease progression states (i.e., no cirrhosis [F0–F3], compensated cirrhosis [F4] (see Notes); DCC; HCC; and liver transplant), two SVR states (i.e., SVR, history of no cirrhosis; and SVR, history of compensated cirrhosis), and an absorbing mortality state (i.e., liver-related and non-liver-related death) which could be reached from any state. DCC was modeled as one health state [18, 20, 21]. Our model allowed variation in disease progression across genotypes [22, 23]. Firstly, the risks of cirrhosis and HCC have been shown to be higher in patients with GT3 compared to GT1-infected patients [24]. Secondly, GT2 patients are at significantly lower risk and GT3 patients are at higher risk for long-term morbidity and mortality relative to GT1 patients [25, 26].

Fig. 1 Natural history model schematic. DCC decompensated cirrhosis, HCC hepatocellular carcinoma, SVR sustained virologic response. Health states are depicted by ellipses, arrows represent permissible transitions between health states, while loops represent no transition. Dashed arrows depict the possibility of recovering (i.e., SVR). The dotted arrow depicts transition from “SVR, history of F0–F3” and “No cirrhosis (F0–F3)” to HCC. Death is possible from any health state. Liver-related death is possible from DCC, HCC, and liver transplant

Patients entering the model initiated treatment through one of two initial fibrosis states (i.e., F0–F3 or F4). With successful treatment patients achieved SVR and transitioned to SVR states [18]. In the absence of successful treatment patients either remained in their current health state or progressed to more severe stages of liver disease following natural disease progression.

In the model, patients could develop HCC from any SVR state, albeit at lower rates than patients who did not achieve SVR. In turn, patients who achieved SVR from compensated cirrhosis were assumed to face a higher risk of HCC than those who achieved SVR from no cirrhosis [27, 28]. A proportion of patients with compensated cirrhosis progressed to DCC [29, 30]. Some patients with DCC progressed to HCC, while a proportion received liver transplants. Patients with HCC could also receive liver transplants [21, 31, 32]. In addition, DCC, HCC, and liver transplant are commonly accepted as advanced stages of liver disease and thus we applied excess liver-related mortality risks [17, 18, 33]. Finally we assumed that spontaneous remission was not possible for patients with chronic HCV.
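A cohort version of the state transition structure described above can be sketched in a few lines. This is a minimal illustration, not the authors' model: the state names follow the text, but every transition probability and utility below is a hypothetical placeholder (the study's actual inputs are in Table 1), treatment is assumed to take effect at model entry, and a fixed 40-year horizon stands in for the lifetime horizon.

```python
STATES = ["NoCirrhosis", "CompCirrhosis", "DCC", "HCC", "Transplant",
          "SVR_NoCirrhosis", "SVR_CompCirrhosis", "Dead"]

# Hypothetical annual transition probabilities; the remainder stays in the state.
TRANSITIONS = {
    "NoCirrhosis": {"CompCirrhosis": 0.03, "HCC": 0.005, "Dead": 0.01},
    "CompCirrhosis": {"DCC": 0.04, "HCC": 0.03, "Dead": 0.01},
    "DCC": {"HCC": 0.05, "Transplant": 0.02, "Dead": 0.15},
    "HCC": {"Transplant": 0.02, "Dead": 0.25},
    "Transplant": {"Dead": 0.10},
    "SVR_NoCirrhosis": {"HCC": 0.001, "Dead": 0.01},
    "SVR_CompCirrhosis": {"HCC": 0.01, "Dead": 0.01},
    "Dead": {},  # absorbing state
}

# Hypothetical health state utilities.
UTILITY = {"NoCirrhosis": 0.85, "CompCirrhosis": 0.75, "DCC": 0.65, "HCC": 0.45,
           "Transplant": 0.65, "SVR_NoCirrhosis": 0.90, "SVR_CompCirrhosis": 0.80,
           "Dead": 0.0}


def run_cohort(svr_rate, start_state="NoCirrhosis", years=40, discount=0.02):
    """Return the final state distribution and discounted QALYs per patient."""
    cohort = {state: 0.0 for state in STATES}
    cohort["SVR_" + start_state] = svr_rate      # successfully treated
    cohort[start_state] = 1.0 - svr_rate         # treatment failure or untreated
    qalys = 0.0
    for year in range(years):
        # Accrue utility for this annual cycle, discounted to present value.
        cycle_qalys = sum(cohort[s] * UTILITY[s] for s in STATES)
        qalys += cycle_qalys / (1.0 + discount) ** year
        # Move the cohort one annual cycle forward.
        nxt = {state: 0.0 for state in STATES}
        for state, share in cohort.items():
            leaving = 0.0
            for dest, prob in TRANSITIONS[state].items():
                nxt[dest] += share * prob
                leaving += prob
            nxt[state] += share * (1.0 - leaving)
        cohort = nxt
    return cohort, qalys


final_treated, qalys_treated = run_cohort(svr_rate=0.99)
final_untreated, qalys_untreated = run_cohort(svr_rate=0.0)
print(round(qalys_treated, 2), round(qalys_untreated, 2))
```

With these placeholder inputs the treated cohort accrues more discounted QALYs than the untreated cohort, because patients in the SVR states face lower HCC risk and no fibrosis progression.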

Table 1 shows model inputs such as patient characteristics, transition probabilities associated with fibrosis and non-fibrosis disease progression, genotype-specific fibrosis and non-fibrosis progression hazard ratios, and background age- and gender-adjusted probability of death.

Study Population and Treatment Comparators

In the base case we focused on GT1, treatment-naïve non-cirrhotic patients, who comprise the largest patient segment in Japan [3]. In a post-marketing observational study (PMOS) of GLE/PIB, treatment-naïve patients accounted for 67.8% and non-cirrhotic patients accounted for 84.4% of all patients with HCV [34]. Fifty percent of patients with HCV had GT1 (of whom GT1b patients formed the vast majority), and 50.6% were male. The average age of the HCV population was 66.5 years. Using a segmented approach (i.e., the comparison of one intervention versus one comparator within a pre-specified patient segment, defined by patients’ treatment history, cirrhosis status, and/or genotype), we compared GLE/PIB versus other comparators approved for HCV treatment in Japan: sofosbuvir/ledipasvir (SOF/LDV), elbasvir/grazoprevir (EBR + GZR), daclatasvir/asunaprevir/beclabuvir (DCV/ASV/BCV), and no treatment. Since the combination of sofosbuvir and velpatasvir is only approved in Japan for patients who have failed on DAA treatment or those with DCC, it is not a relevant comparator in the current segmented analysis, which is restricted to treatment-naïve patients without cirrhosis.

Given that GLE/PIB is a pan-genotypic treatment, we also analyzed cost-effectiveness from a broader perspective to inform decision-making in the entire patient population with a portfolio approach. The portfolio approach involved the comparison of treatment strategies in combinations of patient segments (i.e., treatment history–cirrhosis status–genotype combination), which in turn enabled flexible computation of a pan-genotypic incremental cost-effectiveness ratio (ICER) for the overall HCV population of interest. Computationally, the model calculated outcomes for each segment, and aggregated costs, QALYs, and clinical outcomes by weighting each segment on the basis of the patients’ treatment history, cirrhosis status, and genotype distribution to obtain a consolidated, weighted portfolio ICER and clinical outcomes. In portfolio analysis, we compared the GLE/PIB portfolio to a portfolio comprising treatment with SOF/LDV in GT1–2 and SOF + ribavirin in GT3 patients. Although GT3–6 patients were eligible to enroll in the GLE/PIB trial in Japan, only GT3 patients ended up being recruited. Subsequently, approval of GLE/PIB in the GT3–6 segment was based on clinical trial data comprising GT3 patients only.
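The weighting step of the portfolio approach can be illustrated as follows. This is a sketch under stated assumptions: the segment labels, weights, incremental costs, and incremental QALYs are all hypothetical, and the function name `portfolio_summary` is introduced here for illustration only.

```python
def portfolio_summary(segments):
    """Aggregate segment-level incremental results into one portfolio result.

    Each segment is a dict with a population weight, incremental cost, and
    incremental QALYs of the intervention versus the comparator.
    """
    total_weight = sum(seg["weight"] for seg in segments)
    d_cost = sum(seg["weight"] * seg["delta_cost"] for seg in segments) / total_weight
    d_qaly = sum(seg["weight"] * seg["delta_qaly"] for seg in segments) / total_weight
    if d_cost <= 0 and d_qaly >= 0:
        verdict, icer = "dominant", None      # no costlier and no worse (includes ties)
    elif d_cost >= 0 and d_qaly <= 0:
        verdict, icer = "dominated", None     # no cheaper and no better
    else:
        verdict, icer = "trade-off", d_cost / d_qaly
    return {"delta_cost": d_cost, "delta_qaly": d_qaly, "icer": icer, "verdict": verdict}


# Hypothetical segments (weights sum to 1; costs in JPY).
segments = [
    {"name": "GT1, naive, no cirrhosis", "weight": 0.5, "delta_cost": -100_000, "delta_qaly": 0.01},
    {"name": "GT2, naive, no cirrhosis", "weight": 0.4, "delta_cost": -50_000, "delta_qaly": 0.02},
    {"name": "GT3, naive, no cirrhosis", "weight": 0.1, "delta_cost": 200_000, "delta_qaly": 0.10},
]
result = portfolio_summary(segments)
print(result["verdict"], round(result["delta_cost"]), round(result["delta_qaly"], 3))
```

In this made-up example the intervention costs more in one segment, yet the weighted portfolio is still dominant because that segment carries a small population weight.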

Clinical Inputs

We extracted efficacy and duration data directly from Japanese phase III clinical trials [35,36,37,38,39,40,41,42,43], on the basis of the approved label for each regimen [14, 44,45,46,47]. Adverse event (AE) rates with DAA treatment were low; thus, AE costs had a negligible impact on overall cost and were excluded from the analysis. In the case of regimens with no Japanese trials, we used data from international trials [48]. For regimens with multiple phase III trials for a given patient segment, we consolidated data across relevant trials [48]. We used an intention-to-treat (ITT) perspective.

The expected treatment duration for each regimen was computed on the basis of labeled duration and trial-based discontinuation rates [35,36,37,38,39,40,41,42,43]. Table 2 shows the treatment efficacy for all patient segments included in the analysis for both the segmented and portfolio approach. For transparency, we reported SVR rate by patient segment.

Health Utilities

Health state utilities were drawn from Ishida and Yotsuyanagi [17] (Table 1). Treatment-related health utility reflects the effect of treatment on quality of life over the treatment duration. Treatment-related health utility data were derived from published literature, when available [49, 50]. When no relevant published data existed, we made the simplifying assumption that treatment-related utility matched that observed in the AbbVie clinical trials of GLE/PIB [35, 36].

Costs

We included only direct medical costs in this study (Table 1) [51]. Direct cost estimates for health states were taken from published Japanese studies [17, 28]. As a result of negligible inflation in Japan, cost data were not inflated from 2006 (for liver transplant-related health state costs) and 2014 (for all other health state costs) to the present year. Japanese guidelines support not inflating cost estimates [51]. The cost per course of a therapy was calculated by multiplying the daily cost of the regimen [52] by the mean (trial-based) duration of treatment. The DAA treatment options generally require little monitoring. Furthermore, monitoring costs would be similar across the treatment options considered in this evaluation. Therefore, we assumed that there were no on-treatment monitoring costs. All data were deidentified when used for this analysis. This article does not contain any studies with human participants or animals performed by any of the authors and did not require institutional review.

Analysis

The model was developed following good modeling practices [53, 54]. We estimated the direct medical costs, liver outcomes, QALYs, and ICERs. Discount rates (costs, utilities, and life years) in the base case were set to 2% as per Japanese guidelines [51, 55]. We assumed a payer WTP of JPY 5 million/QALY (USD 46,015/QALY) [56] as a threshold for assessing the cost-effectiveness of GLE/PIB with the net monetary benefit (NMB) approach [57]. The NMB is a summary statistic that represents the net value of an intervention compared to an alternative health technology, considering the WTP threshold per QALY. A positive NMB indicates that the intervention is cost-effective compared to the alternative at the given WTP threshold. The NMB approach was chosen in preference to ICERs to report results because the NMB is easier to interpret in a situation where a treatment option is dominant.
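For readers less familiar with these quantities, the standard definitions of the discount factor and the NMB can be sketched as below. The incremental QALY and cost values in the example are hypothetical; only the 2% discount rate and the JPY 5 million/QALY threshold come from the text.

```python
def discount_factor(year, rate=0.02):
    """Present-value weight applied to costs or outcomes accrued in a given year."""
    return 1.0 / (1.0 + rate) ** year


def net_monetary_benefit(delta_qalys, delta_cost, wtp=5_000_000):
    """NMB = WTP x incremental QALYs - incremental cost (in JPY)."""
    return wtp * delta_qalys - delta_cost


# Hypothetical dominant intervention: slightly more QALYs and lower cost.
nmb = net_monetary_benefit(delta_qalys=0.002, delta_cost=-1_500_000)
print(round(nmb))                     # 1510000
print(round(discount_factor(10), 4))  # 0.8203
```

When an intervention is dominant, the ICER is negative and hard to interpret, whereas the NMB stays positive and can be compared directly across scenarios.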

Base Case

In the base case analysis, we compared GLE/PIB to three other DAAs (SOF/LDV, EBR + GZR, and DCV/ASV/BCV) and no treatment in treatment-naïve non-cirrhotic GT1 patients. We performed a sequential analysis to derive the cost-effectiveness frontier by sequentially eliminating dominated and extendedly dominated strategies.

In the context of multiple comparisons, pairwise comparisons of ICERs may be misleading [58]. To establish a complete comparison of treatment options, we performed a fully incremental analysis which involved calculating the incremental QALY gains and costs for treatment options and ranking them by ascending costs. Options that were dominated (i.e., more expensive and less effective than one or more alternatives) or extendedly dominated (i.e., more expensive and less effective than a combination of two alternatives) were removed. The ICERs of each of the remaining options were then calculated as the additional costs divided by the additional QALYs by comparing one option with the next least costly [59]. If one treatment dominates all the others, either by dominance or extended dominance then only that treatment option is considered cost-effective. The sets of remaining treatment options form the cost-effectiveness frontier, which represented the set of points corresponding to treatment alternatives that were considered to be cost-effective at different values of the cost-effectiveness threshold [60]. Any option above, or to the left of the frontier, represented an inefficient option (i.e., suboptimal) as more QALYs were achievable at equal or lower costs (i.e., dominated or extendedly dominated) [59].
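The elimination procedure described above can be expressed as a short routine. This is a generic sketch of a fully incremental analysis, not the authors' implementation; the option names, costs, and QALYs are hypothetical.

```python
def cost_effectiveness_frontier(options):
    """Return [(name, ICER versus the previous frontier option)], cheapest first.

    options maps a name to (lifetime cost, QALYs). The cheapest frontier
    option has no comparator, so its ICER is None.
    """
    # Rank by ascending cost; on equal cost, the option with more QALYs comes first.
    ranked = sorted(options.items(), key=lambda kv: (kv[1][0], -kv[1][1]))

    # Step 1: drop dominated options (no cheaper than, and no better than, a kept option).
    kept = []
    for name, (cost, qaly) in ranked:
        if not kept or qaly > kept[-1][2]:
            kept.append((name, cost, qaly))

    def icer(low, high):
        return (high[1] - low[1]) / (high[2] - low[2])

    # Step 2: drop extendedly dominated options (ICER higher than that of the
    # next, more effective option), rechecking neighbours after each removal.
    i = 1
    while i < len(kept) - 1:
        if icer(kept[i - 1], kept[i]) > icer(kept[i], kept[i + 1]):
            del kept[i]
            i = max(i - 1, 1)
        else:
            i += 1

    frontier = [(kept[0][0], None)]
    for prev, cur in zip(kept, kept[1:]):
        frontier.append((cur[0], icer(prev, cur)))
    return frontier


# Hypothetical options: (cost in JPY, QALYs).
options = {"A": (1_000_000, 10.0), "B": (2_000_000, 9.5),
           "C": (3_000_000, 10.5), "D": (3_500_000, 11.5)}
frontier = cost_effectiveness_frontier(options)
print(frontier)
```

Here B is dominated by A, and C is extendedly dominated because moving from A to C costs more per QALY than moving from C to D, so only A and D remain. If the cheapest option also yields the most QALYs, the frontier contains that single option.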

Scenario Analyses

In scenario analyses, we assessed the cost-effectiveness of GLE/PIB by varying the method of comparison or key model parameters. In scenario 1, we adopted a portfolio approach whereby a pan-genotypic ICER for the overall GT1–3 HCV population was derived. This overall ICER was calculated as a weighted average of patient segments defined by genotype, treatment history, and cirrhosis status, with weights based on the Japanese HCV population. In this scenario analysis, we reported findings of a GLE/PIB portfolio in GT1–3 versus a sofosbuvir (SOF)-based portfolio (namely SOF/LDV in GT1–2 and SOF + ribavirin in GT3). In scenarios 2 and 3, we varied the baseline age by ± 5 years, namely a “low” age of 61.5 years and a “high” age of 71.5 years. The impact of discount rates was explored in scenario 4 (0%) and scenario 5 (4%).

Sensitivity Analyses

Baseline demographics, background death rate, discount rates, and regimen duration and costs were not varied in deterministic sensitivity analyses (DSA) and probabilistic sensitivity analyses (PSA). The non-treatment-specific variables tested in DSA included transition probabilities related to disease progression, health state costs, and health utilities. For the PSA, 500 simulations were drawn from the variables’ distributions. For SVR rates, values of 100% were varied in the DSA and PSA using a method proposed by Briggs et al. [61]. Several parameters were tested in multi-way sensitivity analysis, including SVR rates in patients without cirrhosis and the GT-specific fibrosis and non-fibrosis progression hazard ratios. As a result of the lack of data, PSA variation on treatment-related utility change was only possible for GLE/PIB, where a normal distribution was assumed. The results of the PSAs are summarized graphically using cost-effectiveness acceptability curves (CEAC). Each CEAC is obtained by varying the payer WTP/QALY from JPY 0 to 20 million. For each treatment option, each point on its CEAC indicates the percentage of simulations in which that option yields the highest NMB among all treatment options at a specific WTP per QALY. When multiple treatment options are compared, the curves sum to 100% at each WTP/QALY. Table 1 provides details of DSA and PSA inputs.
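The construction of a CEAC from PSA output can be sketched as follows. The two strategies, their cost and QALY distributions, and the random seed are hypothetical; only the 500-simulation count and the JPY 0 to 20 million WTP range follow the text.

```python
import random


def ceac(draws, wtp_values):
    """Share of PSA draws in which each strategy has the highest NMB.

    draws: list of dicts mapping strategy name -> (cost, QALYs) for one simulation.
    Returns a dict mapping strategy name -> list of shares, one per WTP value.
    """
    names = list(draws[0])
    curves = {name: [] for name in names}
    for wtp in wtp_values:
        wins = {name: 0 for name in names}
        for draw in draws:
            # Pick the strategy with the highest net monetary benefit in this draw.
            best = max(names, key=lambda n: wtp * draw[n][1] - draw[n][0])
            wins[best] += 1
        for name in names:
            curves[name].append(wins[name] / len(draws))
    return curves


# Hypothetical PSA: 500 draws for two made-up strategies (costs in JPY).
rng = random.Random(0)
draws = [
    {
        "Strategy A": (rng.gauss(3_000_000, 200_000), rng.gauss(14.20, 0.05)),
        "Strategy B": (rng.gauss(3_500_000, 200_000), rng.gauss(14.22, 0.05)),
    }
    for _ in range(500)
]
wtp_values = [0, 5_000_000, 10_000_000, 20_000_000]
curves = ceac(draws, wtp_values)
for name, shares in curves.items():
    print(name, [f"{share:.1%}" for share in shares])
```

Because exactly one strategy wins each draw, the shares across strategies sum to 100% at every WTP value.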

Results

Base Case

In the base case segmented analysis, we compared GLE/PIB to SOF/LDV, EBR + GZR, DCV/ASV/BCV, and no treatment in GT1 treatment-naïve non-cirrhotic patients. Table 3 presents the clinical outcomes for the different treatment regimens using baseline parameter input values. In the base case the percentage of patients ever reaching more advanced liver disease (such as DCC, HCC, or liver transplant) or dying from a liver-related cause was lowest with GLE/PIB and SOF/LDV compared with the remaining DAA treatment regimens and no treatment. For instance, the lifetime risks of DCC and HCC were 0.00% and 3.66%, respectively, for both GLE/PIB and SOF/LDV. These lifetime risks were 0.25% and 4.99% for EBR + GZR, 0.30% and 5.27% for DCV/ASV/BCV, and 7.41% and 43.17% for no treatment.

Table 3 also presents results of a pairwise ICER analysis and a fully incremental analysis. In GT1 treatment-naïve non-cirrhotic patients, GLE/PIB was a dominant strategy compared to EBR + GZR, DCV/ASV/BCV, SOF/LDV, and no treatment: it conferred better outcomes at a lower cost.

Scenario Analysis

In scenario analysis, we ran an analysis using the portfolio approach where we compared a GLE/PIB portfolio versus a portfolio containing SOF/LDV in GT1–2 and SOF + ribavirin in GT3 patients (i.e., SOF portfolio). The long-term clinical outcomes of the GLE/PIB portfolio were close to those of the SOF portfolio (Table 4): GLE/PIB had a lower risk of DCC, HCC, liver transplant, and liver-related death. Table 4 also shows incremental results: with better outcomes (i.e., QALYs) at a lower cost, GLE/PIB dominated the SOF portfolio. Our base case conclusions were robust to scenarios in which we varied age insofar as GLE/PIB continued to dominate EBR + GZR, DCV/ASV/BCV, SOF/LDV, and no treatment. When we assumed a lower average age of patients with HCV, successfully treated patients gained more life years and QALYs. On the other hand, the older the average age of patients with HCV, the fewer QALYs accrued over a lifetime from successful treatment. Our scenario analyses suggested that there were higher benefits of treatment in a younger population. To further explore the impact of age, we derived the NMB assuming a WTP threshold of JPY 5 million/QALY (USD 46,015/QALY) [56]. The NMB of GLE/PIB versus no treatment decreased by about 45% as mean patient age increased from 61.5 years (NMB = JPY 29,755,761 [USD 273,843]) to 71.5 years (NMB = JPY 16,256,617 [USD 149,610]). This analysis indicated that, although a younger population experienced greater benefits from treatment because of the longer expected life duration after treatment, treatment remained cost-effective in an older population as the NMB remained positive.
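The reported decrease of about 45% follows directly from the two NMB values quoted above, as this short check shows. The variable names are illustrative; the figures are those stated in the text.

```python
# NMB of GLE/PIB versus no treatment (JPY), as reported in the text.
nmb_age_61_5 = 29_755_761
nmb_age_71_5 = 16_256_617

relative_decrease = (nmb_age_61_5 - nmb_age_71_5) / nmb_age_61_5
print(f"{relative_decrease:.1%}")  # 45.4%
```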

Increasing the discount rate lowered the present value of future costs and outcomes, leading to lower total lifetime costs and QALYs. In the scenarios where discount rates were set to 0% and 4%, the conclusions of the incremental analysis were unchanged from the base case. Quantitatively, the NMB of GLE/PIB versus the second least costly option, EBR + GZR, decreased as the discount rate increased from 0% (NMB = JPY 1,820,109 [USD 16,750]) to 4% (NMB = JPY 1,365,476 [USD 12,566]), assuming a WTP threshold of JPY 5 million/QALY (USD 46,015/QALY) [56].

Results of Uncertainty Analysis

Deterministic Sensitivity Analyses: Key Results

A DSA was conducted using the NMB approach for the cost-effectiveness analysis of GLE/PIB compared with SOF/LDV in GT1 treatment-naïve non-cirrhotic patients by varying the base case parameter values across their assumed ranges and assuming a payer WTP of JPY 5 million/QALY. The efficacy of the intervention and the comparator was equal and at the maximum value of 100%; thus the outcomes of both regimens were identical, and only varying the SVR rates affected the incremental model outcomes.

For completeness we presented results of the DSA for the portfolio approach (the cost-effectiveness analysis of the GLE/PIB portfolio compared to the SOF portfolio) (Fig. 2). Results were most sensitive to SVR rates and to a smaller extent health utility of SVR for patients (history of F4).

Fig. 2 DSA results showing the 10 most influential disease model parameters in the portfolio approach comparing the GLE/PIB portfolio to the SOF portfolio. DCC decompensated cirrhosis, DSA deterministic sensitivity analysis, F0–F3 no cirrhosis, F4 compensated cirrhosis, GLE/PIB glecaprevir/pibrentasvir, HCC hepatocellular carcinoma, JPY Japanese yen [¥], NMB net monetary benefit (in JPY), SOF sofosbuvir, SVR sustained virologic response, TP transition probability. The NMB assumes a payer willingness-to-pay of JPY 5 million per quality-adjusted life year

Probabilistic Sensitivity Analyses: Key Results

Figure 3 presents the cost-effectiveness acceptability curves for all DAAs included in the analysis in the GT1 treatment-naïve non-cirrhotic population. At a WTP threshold of JPY 5 million/QALY, GLE/PIB was the optimal treatment in 99.4% of simulations. This remained at 99.0% of simulations when we increased the WTP threshold to JPY 7 million/QALY. Furthermore GLE/PIB was the only treatment option on the cost-effectiveness acceptability frontier. At a WTP threshold of JPY 5 million/QALY, EBR + GZR was cost-effective in 0.6% of simulations. At a WTP threshold of JPY 9 million/QALY, SOF/LDV was cost-effective in 0.2% of simulations; these probabilities rose to 1.6% and 4.6% for EBR + GZR and SOF/LDV, respectively, at JPY 20 million/QALY.

Figure 4 presents the PSA in the portfolio analysis where the GLE/PIB portfolio was compared to the SOF portfolio. At a WTP threshold of JPY 5 million/QALY, GLE/PIB was the optimal treatment strategy in 100.0% of simulations. At a WTP threshold of JPY 20 million/QALY or below, GLE/PIB was the optimal treatment strategy in at least 69.4% of simulations.

Discussion

To our knowledge this is the first analysis of the cost-effectiveness of GLE/PIB against other DAAs in Japan. We found that GLE/PIB was associated with higher QALY gains due to improved SVR and long-term health outcomes. Our study suggested that in a population of Japanese patients with HCV, GLE/PIB was a dominant strategy compared to EBR + GZR, SOF/LDV, DCV/ASV/BCV, and no treatment as it conferred better outcomes at a lower cost. The QALYs for GLE/PIB and SOF/LDV were close—14,204 versus 14,202, respectively. Thus assuming QALYs were similar, GLE/PIB would be cost-minimizing compared to SOF/LDV, which is a more conservative interpretation of the results than characterizing GLE/PIB as dominant to SOF/LDV. The base case results persisted across different scenarios as well as DSA and PSA.

The model had several strengths. First, it was developed in line with previously published models [58], which improved consistency with previous health technology assessments [62, 63], facilitated comparisons with other technologies, and supported the validity of the model results. Second, the model used Japanese-based input parameters to model disease progression and health state utilities; where available, SVR data were also extracted directly from published clinical trials conducted in Japan. To the extent that some treatment options approved in Japan are not commonly used elsewhere, our study broadens our knowledge of available treatment options to treat HCV. Third, we assessed model validity in terms of technical validation, internal validation, and external validation. To assess the external validity of the model, we generated the model’s estimates of compensated cirrhosis in untreated GT1 patients with F0. The base case model predicted that 20.9% of patients would have a history of compensated cirrhosis 20 years post-infection, which was concordant with rates from other HCV studies [16, 64,65,66,67]. Fourth, we included two modeling approaches: the portfolio approach and the segmented approach; this facilitated the assessment of GLE/PIB from the perspective of both broadly and narrowly defined markets. Finally, we included probabilistic and deterministic sensitivity analyses to assess the robustness of the results.

The model also had several limitations. Most phase III HCV clinical trials had single arms and indirect evidence obtained through a common comparator was unavailable. We could not conduct a robust network meta-analysis because of the paucity of data [49, 50]. Aligned with previous cost-effectiveness analyses in HCV, we extracted and compared data directly from clinical trials [16, 18, 33]. Even though GLE/PIB is indicated in GT1–6, we only conducted the analyses in GT1–3. As reported by Mochida et al., there were no GT4–6 patients recruited in the GLE/PIB Japan PMOS [34]. Although GT3–6 patients were eligible to enroll in the GLE/PIB trial in Japan, only GT3 patients ended up being recruited. Thus the approval of GLE/PIB in GT3–6 patients in Japan was based on clinical trial data in GT3 patients. Therefore we excluded GT4–6 because of the lack of data. The model did not include monitoring costs and treatment-related AE costs, primarily because of the lack of reliable cost data. However, given that DAAs generally require little monitoring and have low AE rates, we did not believe that the overall monitoring and AE costs would be influential to the analysis. In addition, as a result of the absence of robust data, the model included the conservative assumption that there was no spontaneous remission from F0 and no viral reinfection. Future research to inform these parameters would be beneficial. There was limited information on the demographics of patients with chronic HCV in Japan. As a result, baseline data for patient distribution across genotypes, treatment history, and fibrosis distribution were estimated using the PMOS of GLE/PIB [34].

To determine how these and other limitations affected our findings, we conducted DSA and PSA where inputs were varied across a range of plausible values. For these analyses, treatment history, background mortality rate, and the duration and costs of the regimen were not varied. The DSA and PSA confirmed the robustness of our findings in our base case and scenario analyses. In DSA, SVR rates were the most influential parameters: SVR differences between the intervention and comparator were not large (or even zero); QALY differences, which are affected by SVR and treatment-related disutility, were in turn small. Thus the denominator of the cost-effectiveness ratio was small, and changes in SVR had a relatively large impact on the upper and lower bounds of the ICERs. We relied on Japanese trials which had relatively small sample sizes within each patient segment. This led to wide confidence intervals around SVR as illustrated in the tornado diagram. We also introduced the method suggested by Briggs et al. [61] to add variation to the SVR rates that were 100%, thus allowing for parameter uncertainty. In fact, the method by Briggs penalized trials with smaller samples such as the GLE/PIB trials. Though analytically robust, our results may not be broadly generalizable to the Japanese population because of the small sample sizes of the clinical trials and the lack of published data on the demographic characteristics of Japanese patients with chronic HCV. Better characterizations of these parameters will be beneficial for future assessments of the economic implications of various therapeutic options for HCV infection. Nevertheless, real-world evidence studies of GLE/PIB are emerging from multiple cohorts with close to 10,000 patients across various countries, supporting the safety and efficacy in real-world settings [68], including Asian populations: Ogawa et al. [69] in Japan and Hsu et al. in Taiwanese patients [70]. Ogawa et al. [69] studied a cohort of 314 Japanese patients: 122 GT1 and 192 GT2. They reported 12-week SVR rates in GT1 and GT2 patients of 99.2% and 98.9%, respectively. In addition, they found that serious adverse events were rare, with discontinuation due to an adverse event observed in only 1.6% of patients.

Conclusion

In GT1 treatment-naïve, non-cirrhotic patients, GLE/PIB demonstrated superior efficacy, lower probabilities of progressing to advanced stages of liver disease or dying from liver-related causes, and higher QALY gains compared with SOF/LDV, EBR + GZR, DCV/ASV/BCV, and no treatment. GLE/PIB was a dominant strategy compared to EBR + GZR, DCV/ASV/BCV, SOF/LDV, and no treatment: it conferred better outcomes at a lower cost. In the portfolio analysis, we compared the GLE/PIB portfolio with a SOF-based portfolio comprising SOF/LDV in GT1–2 and SOF + ribavirin in GT3. The GLE/PIB portfolio dominated the SOF-based portfolio. Our results remained consistent in sensitivity analyses. Our analysis offers important preliminary insight into the cost-effectiveness of novel DAA treatments for patients with HCV from the perspective of public healthcare payers in Japan.
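
As a brief illustration of the term "dominant" used above, the following sketch classifies one strategy against a comparator from lifetime costs and QALYs. The function name `classify` and the example figures are hypothetical and are not model outputs.

```python
def classify(cost_new, qaly_new, cost_old, qaly_old):
    """Classify a new strategy against a comparator.

    Returns a (label, icer) pair; icer is None unless the two
    strategies trade off costs against QALYs.
    """
    d_cost = cost_new - cost_old
    d_qaly = qaly_new - qaly_old
    if d_cost < 0 and d_qaly > 0:
        return "dominant", None    # more QALYs at lower cost
    if d_cost > 0 and d_qaly < 0:
        return "dominated", None   # fewer QALYs at higher cost
    if d_qaly == 0:
        return "no QALY difference", None
    return "trade-off", d_cost / d_qaly  # an ICER is needed to decide


# Hypothetical lifetime costs (JPY) and QALYs for two strategies.
print(classify(4_500_000, 17.2, 5_000_000, 17.0))
print(classify(5_500_000, 17.5, 5_000_000, 17.0))
```

Only in the trade-off case does the decision depend on a willingness-to-pay threshold; a dominant strategy is preferred at any threshold.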

Notes

We refer to a specific genotype (GT) in the document as GT + the genotype. Thus, for genotype 1b we use the abbreviation GT1b.

The model health states are based on the METAVIR liver fibrosis stage, which classifies chronic HCV from F0 (no fibrosis) to F4 (cirrhosis) [19].

References

Liu GG, DiBonaventura M, Yuan Y, et al. The burden of illness for patients with viral hepatitis C: evidence from a national survey in Japan. Value Health. 2012;15(1 Suppl):S65–71.

Bennett H, Waser N, Johnston K, et al. A review of the burden of hepatitis C virus infection in China, Japan, South Korea and Taiwan. Hepatol Int. 2015;9(3):378–90.

Gower E, Estes C, Blach S, Razavi-Shearer K, Razavi H. Global epidemiology and genotype distribution of the hepatitis C virus infection. J Hepatol. 2014;61(1 Suppl):S45–57.

Yatsuhashi H. Past, present, and future of Viral Hepatitis C in Japan. Euroasian J Hepatogastroenterol. 2016;6(1):49–51.

Umemura T, Ichijo T, Yoshizawa K, Tanaka E, Kiyosawa K. Epidemiology of hepatocellular carcinoma in Japan. J Gastroenterol. 2009;44(Suppl 19):102–7.

Matsumoto K, Wu Y, Kitazawa T, Fujita S, Seto K, Hasegawa T. Cost of illness of hepatocellular carcinoma in Japan: a time trend and future projections. PLoS One. 2018;13(6):e0199188.

Kudo M. Surveillance, diagnosis, treatment, and outcome of liver cancer in Japan. Liver Cancer. 2015;4(1):39–50.

Tanaka J, Koyama T, Mizui M, et al. Total numbers of undiagnosed carriers of hepatitis C and B viruses in Japan estimated by age- and area-specific prevalence on the national scale. Intervirology. 2011;54(4):185–95.

Morio K, Imamura M, Kawakami Y, et al. Advanced liver fibrosis effects on the response to sofosbuvir-based antiviral therapies for chronic hepatitis C. J Med Virol. 2018;90(12):1834–40.

Asahina Y, Hayashi N, Izumi N, et al. JSH guidelines for the management of hepatitis C virus infection: a 2014 update for genotype 1. Hepatol Res. 2014;44(Suppl S1):59–70.

Hayashi K, Ishigami M, Ishizu Y, et al. Late relapse of hepatitis C virus in patients with sustained virological response after daclatasvir and asunaprevir therapy. J Viral Hepat. 2018;25(12):1446–51.

Kozbial K, Moser S, Al-Zoairy R, et al. Follow-up of sustained virological responders with hepatitis C and advanced liver disease after interferon/ribavirin-free treatment. Liver Int. 2018;38(6):1028–35.

Younossi ZM, Tanaka A, Eguchi Y, Henry L, Beckerman R, Mizokami M. Treatment of hepatitis C virus leads to economic gains related to reduction in cases of hepatocellular carcinoma and decompensated cirrhosis in Japan. J Viral Hepat. 2018;25(8):945–51.

Pharmaceuticals and Medical Devices Agency [PMDA]. Maviret (G/P) Product Insert [In Japanese only]. http://www.info.pmda.go.jp/go/pack/6250113F1021_1_04/. Accessed 11 Sept 2019.

Pharmaceutical Technology. AbbVie’s Maviret receives Japanese MHLW approval to treat HCV. 2017. https://www.pharmaceutical-technology.com/news/newsabbvies-maviret-receives-japanese-mhlw-approval-to-treat-hcv-5935729/. Accessed 11 Sept 2019.

Virabhak S, Yasui K, Yamazaki K, et al. Cost-effectiveness of direct-acting antiviral regimen ombitasvir/paritaprevir/ritonavir in treatment-naive and treatment-experienced patients infected with chronic hepatitis C virus genotype 1b in Japan. J Med Econ. 2016;19(12):1144–56.

Ishida H, Yotsuyanagi H. Examination of the cost-effectiveness of the standard of care for chronic HCV treatment [In Japanese only]. Research on medical economic evaluation of various measures related to viral liver disease: MHLW, 2014. Report No.: 20133004B: Cat No.: 20133004B007-20133004B0010: Pages: 127–192. https://mhlw-grants.niph.go.jp/niph/search/NIDD00.do?resrchNum=201333004B. Accessed 3 Nov 2019.

Hartwell D, Jones J, Baxter L, Shepherd J. Peginterferon alfa and ribavirin for chronic hepatitis C in patients eligible for shortened treatment, re-treatment or in HCV/HIV co-infection: a systematic review and economic evaluation. Health Technol Assess. 2011;15(17):1–210 (i–xii).

Bedossa P, Poynard T. An algorithm for the grading of activity in chronic hepatitis C. The METAVIR Cooperative Study Group. Hepatology. 1996;24(2):289–93.

Grishchenko M, Grieve RD, Sweeting MJ, et al. Cost-effectiveness of pegylated interferon and ribavirin for patients with chronic hepatitis C treated in routine clinical practice. Int J Technol Assess Health Care. 2009;25(2):171–80.

Liu S, Cipriano LE, Holodniy M, Owens DK, Goldhaber-Fiebert JD. New protease inhibitors for the treatment of chronic hepatitis C: a cost-effectiveness analysis. Ann Intern Med. 2012;156(4):279–90.

EASL. Recommendations on treatment of hepatitis C 2016. J Hepatol. 2017;66(1):153–94.

AASLD-IDSA. Hepatitis C guidance: AASLD-IDSA recommendations for testing, managing, and treating adults infected with hepatitis C virus. Hepatology. 2015;62(3):932–54.

Kanwal F, Kramer JR, Ilyas J, Duan Z, El-Serag HB. HCV genotype 3 is associated with an increased risk of cirrhosis and hepatocellular cancer in a national sample of U.S. veterans with HCV. Hepatology. 2014;60(1):98–105.

McCombs J, Matsuda T, Tonnu-Mihara I, et al. The risk of long-term morbidity and mortality in patients with chronic hepatitis C: results from an analysis of data from a Department of Veterans Affairs Clinical Registry. JAMA Intern Med. 2014;174(2):204–12.

Goolsby Hunter A, Rosenblatt L, Patel C, Blauer-Peterson C, Anduze-Faris B. Clinical characteristics, healthcare costs, and resource utilization in hepatitis C vary by genotype. Curr Med Res Opin. 2017;33(5):829–36.

Maruoka D, Imazeki F, Arai M, Kanda T, Fujiwara K, Yokosuka O. Long-term cohort study of chronic hepatitis C according to interferon efficacy. J Gastroenterol Hepatol. 2012;27(2):291–9.

McEwan P, Ward T, Webster S, et al. Estimating the long-term clinical and economic outcomes of daclatasvir plus asunaprevir in difficult-to-treat Japanese patients chronically infected with hepatitis C genotype 1b. Value Health Reg Issues. 2014;3(Supplement C):136–45.

Fattovich G, Giustina G, Degos F, et al. Morbidity and mortality in compensated cirrhosis type C: a retrospective follow-up study of 384 patients. Gastroenterology. 1997;112(2):463–72.

Sangiovanni A, Prati GM, Fasani P, et al. The natural history of compensated cirrhosis due to hepatitis C virus: a 17-year cohort study of 214 patients. Hepatology. 2006;43(6):1303–10.

Achkar JP, Araya V, Baron RL, Marsh JW, Dvorchik I, Rakela J. Undetected hepatocellular carcinoma: clinical features and outcome after liver transplantation. Liver Transpl Surg. 1998;4(6):477–82.

Ryder SD. Guidelines for the diagnosis and treatment of hepatocellular carcinoma (HCC) in adults. Gut. 2003;52:iii1–8.

Shepherd J, Jones J, Hartwell D, Davidson P, Price A, Waugh N. Interferon alpha (pegylated and non-pegylated) and ribavirin for the treatment of mild chronic hepatitis C: a systematic review and economic evaluation. Health Technol Assess. 2007;11(11):1–205, iii.

Mochida S, et al. Post-marketing observational study on glecaprevir/pibrentasvir in chronic hepatitis C patients without cirrhosis or with compensated cirrhosis: an interim report. In: The 55th Annual Meeting of Japan Society of Hepatology 2019.

AbbVie GK. CERTAIN-1 study results [In Japanese only]. Summary documents on Maviret. Section 2.7.3.2.1.1. pp 29–41. http://www.pmda.go.jp/drugs/2017/P20171013002/112130000_22900AMX00973000_K102_1.pdf.

AbbVie GK. CERTAIN-2 study results [In Japanese only]. Summary documents on Maviret. Section 2.7.3.2.1.2. pp 41–48. http://www.pmda.go.jp/drugs/2017/P20171013002/112130000_22900AMX00973000_K102_1.pdf.

Chayama K, Suzuki F, Karino Y, et al. Efficacy and safety of glecaprevir/pibrentasvir in Japanese patients with chronic genotype 1 hepatitis C virus infection with and without cirrhosis. J Gastroenterol. 2018;53:557–65.

Toyota J, Karino Y, Suzuki F, et al. Daclatasvir/asunaprevir/beclabuvir fixed-dose combination in Japanese patients with HCV genotype 1 infection. J Gastroenterol. 2017;52(3):385–95.

Kumada H, Suzuki Y, Karino Y, et al. The combination of elbasvir and grazoprevir for the treatment of chronic HCV infection in Japanese patients: a randomized phase II/III study. J Gastroenterol. 2017;52(4):520–33.

Mizokami M, Yokosuka O, Takehara T, et al. Ledipasvir and sofosbuvir fixed-dose combination with and without ribavirin for 12 weeks in treatment-naive and previously treated Japanese patients with genotype 1 hepatitis C: an open-label randomised phase 3 trial. Lancet Infect Dis. 2015;15(6):645–53.

Toyoda H, Chayama K, Suzuki F, et al. Efficacy and safety of glecaprevir/pibrentasvir in Japanese patients with chronic genotype 2 hepatitis C virus infection. Hepatology. 2018;67(2):505–13.

Kumada H, Watanabe T, Suzuki F, et al. Efficacy and safety of glecaprevir/pibrentasvir in HCV-infected Japanese patients with prior DAA experience, severe renal impairment, or genotype 3 infection. J Gastroenterol. 2018;53:566–75.

Asahina Y, Itoh Y, Ueno Y, et al. Ledipasvir-sofosbuvir for treating Japanese patients with chronic hepatitis C virus genotype 2 infection. Liver Int. 2018;38(9):1552–61.

Pharmaceuticals and Medical Devices Agency [PMDA]. Harvoni (SOF/LDV) Product Insert [In Japanese only]. http://www.info.pmda.go.jp/go/pack/6250107F1026_1_12/. Accessed 11 Sept 2019.

Pharmaceuticals and Medical Devices Agency [PMDA]. Grazyna (GZR) Product Insert [In Japanese only]. http://www.info.pmda.go.jp/go/pack/6250043F1024_1_04/. Accessed 11 Sept 2019.

Pharmaceuticals and Medical Devices Agency [PMDA]. Erelsa (EBR) Product Insert [In Japanese only]. http://www.info.pmda.go.jp/go/pack/6250044F1029_1_03/. Accessed 11 Sept 2019.

Pharmaceuticals and Medical Devices Agency [PMDA]. Ximency (DCV/ASV/BCV) Product Insert [In Japanese only]. http://www.info.pmda.go.jp/go/pack/6250112F1027_1_03/. Accessed 11 Sept 2019.

Zeuzem S, Dusheiko GM, Salupere R, et al. Sofosbuvir and ribavirin in HCV genotypes 2 and 3. N Engl J Med. 2014;370(21):1993–2001.

NICE. Elbasvir-grazoprevir for treating chronic hepatitis C [ID842]. Technology appraisal guidance [TA413]. London: National Institute for Health and Care Excellence; 2016.

NICE. Ledipasvir-sofosbuvir for treating chronic hepatitis C [ID742]. London: National Institute for Health and Care Excellence; 2015.

Fukuda T, et al. Guideline for preparing cost-effectiveness evaluation to the Central Social Insurance Medical Council. Health and Labour Science Research Grants Strategic Integrated Scientific Research Project. 2019.

Japanese National Health Insurance drug price list [In Japanese only]. 2019. https://www.mhlw.go.jp/topics/2018/04/dl/tp20190403-01_01.pdf. Accessed 11 Sept 2019.

Briggs AH, Weinstein MC, Fenwick EA, Karnon J, Sculpher MJ, Paltiel AD. Model parameter estimation and uncertainty: a report of the ISPOR-SMDM Modeling Good Research Practices Task Force-6. Value Health. 2012;15(6):835–42.

Weinstein MC, O’Brien B, Hornberger J, et al. Principles of good practice for decision analytic modeling in health-care evaluation: report of the ISPOR Task Force on Good Research Practices-Modeling Studies. Value Health. 2003;6(1):9–17.

Fukuda T, Shiroiwa T, Ikeda S, et al. Guideline for economic evaluation of healthcare technologies in Japan. J Natl Inst Public Health. 2013;62:625–40.

Board of Governors of the Federal Reserve System. Historical Rates for the Japanese Yen. In: Foreign Exchange Rates - H.10. Washington DC. 2019. https://www.federalreserve.gov/releases/h10/Hist/dat00_ja.htm. Accessed 3 June 2019.

Kristensen FBIA, Neumann PJ, Goldman DP. Challenges and best practices for the Japan health technology assessment pilot program. Value Outcomes Spotlight. 2018;4:40–1.

Johnson SJ, Parise H, Virabhak S, Filipovic I, Samp JC, Misurski D. Economic evaluation of ombitasvir/paritaprevir/ritonavir and dasabuvir for the treatment of chronic genotype 1 hepatitis C virus infection. J Med Econ. 2016;19(10):983–94.

Drummond M, Sculpher M, Torrance G, O’Brien B, Stoddart G. Methods for the economic evaluation of health care programmes. 3rd ed. Oxford: Oxford University Press; 2005.

York Health Economics Consortium. Cost-effectiveness frontier. York. 2016. http://www.yhec.co.uk/glossary/cost-effectiveness-frontier/. Accessed 11 Sept 2019.

Briggs AH, Ades AE, Price MJ. Probabilistic sensitivity analysis for decision trees with multiple branches: use of the Dirichlet distribution in a Bayesian framework. Med Decis Mak. 2003;23(4):341–50.

NICE. Glecaprevir–pibrentasvir for treating chronic hepatitis C technology appraisal guidance [TA499]. London: National Institute for Health and Care Excellence; 2018.

NICE. Ombitasvir–paritaprevir–ritonavir with or without dasabuvir for treating chronic hepatitis C technology appraisal guidance [TA365]. London: National Institute for Health and Care Excellence; 2015.

Freeman AJ, Dore GJ, Law MG, et al. Estimating progression to cirrhosis in chronic hepatitis C virus infection. Hepatology. 2001;34(4 Pt 1):809–16.

Brady B, Siebert U, Sroczynski G, et al. Pegylated interferon combined with ribavirin for chronic hepatitis C virus infection: an economic evaluation [technology report no 82]. Ottawa: Canadian Agency for Drugs and Technologies in Health; 2007.

Thein HH, Yi Q, Dore GJ, Krahn MD. Estimation of stage-specific fibrosis progression rates in chronic hepatitis C virus infection: a meta-analysis and meta-regression. Hepatology. 2008;48(2):418–31.

Alter HJ, Seeff LB. Recovery, persistence, and sequelae in hepatitis C virus infection: a perspective on long-term outcome. Semin Liver Dis. 2000;20(1):17–35.

AbbVie Inc. Application for inclusion of Maviret (glecaprevir/pibrentasvir) on the WHO Model List of Essential Medicines (EML). Geneva, Switzerland. 2019. https://www.who.int/selection_medicines/committees/expert/22/applications/s6.4.4.2_glecprevir-pibrentasvir.pdf. Accessed 3 Nov 2019.

Ogawa E, Furusyo N, Nakamuta M, et al. Glecaprevir and pibrentasvir for Japanese patients with chronic hepatitis C genotype 1 or 2 infection: results from a multicenter real-world cohort study. Hepatol Res. 2019;49(6):617–26.

Hsu S-J, Chiu M-C, Fang Y-J, et al. Real-world effectiveness and safety of glecaprevir/pibrentasvir in Asian patients with chronic hepatitis C. J Formos Med Assoc. 2019;118(8):1187–92.

Suka M, Igarashi A, Kitazawa T, Yoda T. Development of the fundamental model for HCV [In Japanese only]. Research on medical economic evaluation of various measures related to viral liver disease: MHLW, 2014. Report No.: 20133004B: Cat No.: 20133004B0004-20133004B0005: Pages: 76–95. https://mhlw-grants.niph.go.jp/niph/search/NIDD00.do?resrchNum=2013330-04B. Accessed 3 Nov 2019.

Ministry of Health, Labour and Welfare. Abridged life tables for Japan 2017. In: Statistics and Information Department. Minister’s Secretariat, Government of Japan. 2017.

Foster GR, Afdhal N, Roberts SK, et al. Sofosbuvir and velpatasvir for HCV genotype 2 and 3 infection. N Engl J Med. 2015;373(27):2608–17.

Acknowledgements

Funding

Funding was provided by AbbVie Inc., Chicago, USA and AbbVie GK, Tokyo, Japan to Medicus Economics to develop the cost-effectiveness model and to provide technical writing assistance. The study sponsor participated in the interpretation of data, review, and approval of the article. All authors had full access to all of the data in this study and take complete responsibility for the integrity of the data and accuracy of the data analysis. AbbVie GK, the study sponsor, is funding the Rapid Service and Open Access Fees.

Medical Writing and Editorial Assistance

Julia Bond, MPH, of Medicus Economics provided medical writing and editing services in the development of this publication. Support for this assistance was funded by AbbVie GK.

Authorship

All named authors meet the International Committee of Medical Journal Editors (ICMJE) criteria for authorship for this article, take responsibility for the integrity of the work as a whole, and have given their approval for this version to be published.

Disclosures

Isao Kawaguchi is an employee of AbbVie GK and may own AbbVie stock. Kazuaki Chayama received research funding from AbbVie, Bristol-Myers Squibb, Dainippon Sumitomo Pharma, and Toray Industries Inc., and received payment for lectures from AbbVie, Bristol-Myers Squibb, Gilead, and MSD. Yuri Sanchez Gonzalez is an employee of AbbVie Inc. and may own AbbVie stock. Suchin Virabhak is an employee of Medicus Economics LLC, a consulting company that conducts economic evaluations in a variety of therapeutic areas for pharmaceutical companies. Dominic Mitchell is a contractor to Medicus Economics LLC. Cammy Yuen is an employee of AbbVie Pte. Ltd. and may own AbbVie stock. Hiromitsu Kumada received payment for lectures from AbbVie GK, MSD, Dainippon Sumitomo Pharma, Bristol-Myers Squibb, and Gilead. AbbVie is the manufacturer of glecaprevir/pibrentasvir.

Compliance with Ethics Guidelines

This article is based on previously conducted studies and does not contain any studies with human participants or animals performed by any of the authors.

Additional information

Enhanced Digital Features

To view enhanced digital features for this article go to https://doi.org/10.6084/m9.figshare.10610303.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution-NonCommercial 4.0 International License (http://creativecommons.org/licenses/by-nc/4.0/), which permits any noncommercial use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Kawaguchi, I., Chayama, K., Gonzalez, Y.S. et al. A Cost-Effectiveness Analysis of Glecaprevir/Pibrentasvir Versus Existing Direct-Acting Antivirals to Treat Chronic Hepatitis C in Japan. Adv Ther 37, 457–476 (2020). https://doi.org/10.1007/s12325-019-01166-3

DOI: https://doi.org/10.1007/s12325-019-01166-3