Abstract

Introduction

Increased biochemical bone turnover markers (BTMs) measured in serum are associated with bone loss, increased fracture risk and poor treatment adherence, but their role in clinical practice is presently unclear. The aim of this consensus group report is to provide guidance to clinicians on how to use BTMs in patient evaluation in postmenopausal osteoporosis, in fracture risk prediction and in the monitoring of treatment efficacy and adherence to osteoporosis medication.

Methods

A working group of clinical scientists and osteoporosis specialists was convened by the Scientific Advisory Board of the European Society on Clinical and Economic Aspects of Osteoporosis, Osteoarthritis and Musculoskeletal Diseases (ESCEO).

Results

Serum bone formation marker PINP and resorption marker βCTX-I are the preferred markers for evaluating bone turnover in the clinical setting due to their specificity to bone, performance in clinical studies, wide use and relatively low analytical variability. BTMs cannot be used to diagnose osteoporosis because of low sensitivity and specificity, but can be of value in patient evaluation where high values may indicate the need to investigate some causes of secondary osteoporosis. Assessing serum levels of βCTX-I and PINP can improve fracture prediction slightly, with a gradient of risk of about 1.2 per SD increase in the bone marker in addition to clinical risk factors and bone mineral density. For an individual patient, BTMs are not useful in projecting bone loss or treatment efficacy, but it is recommended that serum PINP and βCTX-I be used to monitor adherence to oral bisphosphonate treatment. Suppression of the BTMs greater than the least significant change or to levels in the lower half of the reference interval in young and healthy premenopausal women is closely related to treatment adherence.

Conclusion

In conclusion, the currently available evidence indicates that the principal clinical utility of BTMs is for monitoring oral bisphosphonate therapy.

Introduction

Osteoporosis—Diagnosis and Burden of Disease

Osteoporosis is a disease characterized by low bone mineral density (BMD) and deterioration of bone microarchitecture, which leads to increased risk of fragility fracture [1, 2]. Osteoporotic fractures, especially of the hip and spine, commonly result in disability, increased morbidity and mortality [3]. In 2010, the number of fractures in the European Union was estimated at 3.6 million, of which 620,000 were hip fractures [4]. Patients at high fracture risk can be identified by investigating known clinical risk factors, which can be combined using a fracture risk calculator such as FRAX to calculate the 10-year probability of major osteoporotic and hip fracture [5]. A measurement of BMD using dual-energy X-ray absorptiometry (DXA) provides a good surrogate for bone strength and is used to diagnose osteoporosis, which in postmenopausal women and men aged ≥ 50 years is defined as a BMD value 2.5 standard deviations or more below the mean of young adult women (T-score ≤ −2.5) [6, 7]. The estimation of fracture probability in FRAX can be further refined by adding femoral neck BMD to the clinical risk factors in the calculation, an approach recommended in many clinical guidelines [8].
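As a minimal illustration of the T-score definition above, the following sketch computes a T-score from a measured BMD and a young-adult reference mean and standard deviation. The function names and the reference values in the usage note are hypothetical placeholders chosen for illustration; they are not taken from any reference database or from this report.

```python
def t_score(bmd, young_mean, young_sd):
    """Return how many SDs the measured BMD lies from the young-adult mean.

    bmd, young_mean and young_sd must share the same unit (e.g. g/cm^2).
    The reference mean and SD are supplied by the caller (assumption: they
    come from an appropriate young-adult reference population).
    """
    if young_sd <= 0:
        raise ValueError("young_sd must be positive")
    return (bmd - young_mean) / young_sd


def meets_dxa_definition(t):
    """Operational definition used in the text: T-score of -2.5 or below."""
    return t <= -2.5
```

For example, with a hypothetical reference mean of 0.86 g/cm² and SD of 0.12 g/cm², a measured BMD of 0.50 g/cm² gives a T-score of about −3.0, which meets the densitometric definition.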

Measuring Bone Turnover

Bone turnover is necessary to replace damaged bone, for example, containing microcracks, with new and healthy bone and to release calcium into the circulation to maintain calcium homeostasis. Bone resorption comprises the 4–6-week process in which osteoclasts excavate bone to cause resorption pits, from which degraded bone releases calcium into the microenvironment and later the circulation. In a coupled process, bone resorption triggers bone formation by osteoblasts, a process taking 4–5 months, which fills the resorption cavity with an unmineralized osteoid, a connective tissue rich in collagen. Levels of bone turnover markers reflect the activity and number of bone-forming (osteoblasts) and bone-degrading cells (osteoclasts), providing an estimate of bone resorption and bone formation. Bone turnover markers can be measured non-invasively in either blood or urine at a fairly low cost (usually < €20).

The most widely used markers are N-terminal collagen type I extension propeptide (PINP), osteocalcin and bone alkaline phosphatase for bone formation and C-terminal cross-linking telopeptide of type I collagen (βCTX-I), N-terminal telopeptide of type I collagen (NTX), deoxypyridinoline, hydroxyproline or tartrate-resistant acid phosphatase isoform 5b (TRAP5b) for bone resorption (Table 1) [9]. Post-translational cleavage of type I collagen during bone matrix formation gives rise to PINP, which subsequently leaks out into the circulation and can be measured in serum. Osteocalcin is also produced by osteoblasts during bone formation, is excreted by the kidneys and is one of the most abundant non-collagenous proteins in bone. It is also released during bone resorption. Alkaline phosphatase (ALP) is secreted from bone to the circulation when the osteoid is mineralized, but only about half of serum ALP levels are derived from bone, and the other half emanates mainly from the liver. However, there are currently available assays that to a high degree are specific to the circulating bone ALP isoform (BALP).

Each bone marker has distinct features that reflect particular aspects of bone physiology. For example, TRAP5b reflects the number of osteoclasts and is not excreted in urine; it can therefore be useful in assessing bone and mineral disorder in chronic kidney disease, whilst measuring βCTX-I in such patients is inappropriate because this marker accumulates in serum when renal function is poor. βCTX-I reflects osteoclast activity resulting in bone degradation and is useful in evaluating, e.g., glucocorticoid-induced osteoporosis [10], in which βCTX-I increases rapidly and peaks about a week after glucocorticoids are started. Oral glucocorticoid treatment also inhibits bone formation, as reflected by a rapid and profound decline in serum osteocalcin levels, whereas the decline in PINP is considerably smaller [10].

Most clinical trials have used bone turnover markers to monitor osteoporosis treatment but the use has not been widely adopted in clinical practice [11,12,13,14].

Factors Affecting Levels of Bone Turnover Markers

Bone resorption markers, including βCTX-I, show diurnal variation, with the highest blood concentration early in the morning and the lowest at around 2 p.m. Both bone resorption and bone formation markers are suppressed by feeding, but the effect is much larger for resorption markers (except TRAP5b), which are suppressed by 20–40%, whilst formation markers are suppressed by < 10% [15, 16]. A fracture normally results in a rapid increase in bone resorption markers, which double within weeks, followed by a slower increase in bone formation markers, which double in serum after about 3 months and remain elevated for up to a year after fracture [17]. Several other factors, including glucocorticoids, menopausal state, age, gender, pregnancy/lactation, aromatase inhibitors, renal insufficiency, immobility and exercise, affect bone turnover marker levels in blood and should also be considered in their evaluation (Table 2) [18].

Current Recommendations of Use of Bone Turnover Markers in Clinical Guidelines

The use of serum bone formation marker PINP and resorption marker βCTX-I in the investigation of osteoporosis or in monitoring treatment is currently recommended in several guidelines around the world, including those issued by the UK National Osteoporosis Guideline Group (NOGG), by the National Osteoporosis Foundation in the US [19,20,21] and by the International Osteoporosis Foundation (IOF) [22, 23].

Aim

The aim of this consensus group report is to provide guidance, based on the opinion of the experts of this group, to clinicians on how to use bone turnover markers in patient evaluation, in fracture risk prediction and in monitoring treatment effect and adherence to oral bisphosphonates in postmenopausal osteoporosis. The results of this report are endorsed by the Scientific Board of the European Society on Clinical and Economic Aspects of Osteoporosis, Osteoarthritis and Musculoskeletal Diseases (ESCEO).

Methods

An international working group was convened to develop recommendations for the use of bone turnover markers in the diagnosis and treatment of osteoporosis. Specialists in internal medicine, endocrinology, rheumatology, rehabilitation, geriatrics, clinical biochemistry and epidemiology were invited to participate by the Scientific Advisory Board of the European Society on Clinical and Economic Aspects of Osteoporosis, Osteoarthritis and Musculoskeletal Diseases (ESCEO). A 1-day in-person meeting was held on 5 February 2019 in Geneva to discuss the existing scientific literature on the topic and to propose recommendations. After the meeting, members of the writing group (ML, JYR, JK, EM) drafted the first manuscript with the recommendations. The manuscript was then reviewed and commented on by all group participants from the Geneva meeting. This article is based on previously conducted studies and does not contain any studies with human participants or animals performed by any of the authors.

Results and Discussion

Preferred Bone Markers

The IOF and International Federation of Clinical Chemistry and Laboratory Medicine recommend that the bone formation marker PINP and resorption marker βCTX-I be used as reference markers and measured in serum using standardized assays. These markers were chosen based on a number of criteria, including adequate characterization of the marker, specificity to bone, performance in clinical studies, biological and analytical variability, wide availability, potential for standardization of methods, sample handling, stability and medium of measurement (serum vs. urine) [22,23,24].

The Role of Bone Turnover Markers in the Diagnosis of Osteoporosis and Patient Evaluation

Osteoporosis is operationally defined by BMD measured using DXA. There is an inverse relationship between BMD and the serum levels of bone turnover markers, but the correlation is weak to moderate [25]. In the TRIO study, only 20% of postmenopausal women diagnosed with osteoporosis using DXA had serum βCTX-I above the upper limit of the normal range for healthy premenopausal women [26]. Therefore, bone turnover markers cannot be used for the diagnosis of osteoporosis. However, abnormal levels of bone turnover markers, particularly when high, can be useful for identifying patients in whom further investigations may be needed to detect secondary causes of osteoporosis (e.g., primary hyperparathyroidism, thyrotoxicosis, malabsorption) or other bone diseases (e.g., osteomalacia, Paget’s disease, bone metastases, multiple myeloma) [27].

Predicting Bone Loss Using Bone Turnover Markers

Declining circulating estradiol levels, particularly during the menopausal transition, give rise to increased bone turnover due to an increased number of bone remodelling units with a greater increase in osteoclastic activity, causing an imbalance between bone resorption and formation that leads to bone loss. The increased bone turnover is reflected by an increase in bone turnover markers, which is associated with loss of both trabecular and cortical bone [28, 29]. Bone turnover marker levels correlate with bone loss on a group level, and this correlation can be strengthened by sampling blood on several occasions to reduce the between-samples variation. However, the proportion of the variation in BMD change that can be explained by bone turnover markers remains quite small. Thus, predicting an individual’s bone loss over time using bone turnover markers has proved challenging and cannot be recommended in a clinical setting [30,31,32]. Since bone loss from the forearm and hip has been associated with increased risk of fracture, it seems reasonable to assume that bone turnover markers, which are associated with bone loss, can predict fractures [33, 34]. In addition, bone turnover may affect fracture risk independently of BMD. Increased bone turnover can be accompanied by a high proportion of newly formed and partly mineralized bone, which is weaker than fully mineralized bone. Furthermore, poor trabecular bone microstructure, resulting from resorption cavities on the trabeculae, trabecular perforations and loss of trabecular connectivity, can have a substantial negative impact on bone strength that is not captured with DXA [35, 36].

The Role of Bone Turnover Markers in Fracture Risk Prediction

Most prospective studies investigating the associations between bone turnover markers and incident fractures in postmenopausal and older women have found that the higher the level of bone turnover markers, the greater the fracture risk [18]. With some exceptions, bone resorption markers and bone alkaline phosphatase are more strongly associated with fractures (all, multiple, spine and hip) than other bone turnover markers [32, 37,38,39]. Elevated bone turnover markers increase fracture risk independently of BMD in some but not all studies [18]. The role of serum PINP and βCTX-I in fracture prediction was investigated in a meta-analysis of six prospective cohorts of women and men. The risk of fracture was increased by 23% [hazard ratio 1.23 (95% CI 1.09–1.39)] and 18% [hazard ratio 1.18 (95% CI 1.05–1.34)] per SD increase in serum PINP and βCTX-I, respectively, but these analyses were not adjusted for BMD [40]. In a recent meta-analysis of nine studies of mostly postmenopausal women, bone turnover markers were weakly associated with fracture risk after adjustment for confounders, with gradients of risk of 1.20 for serum βCTX-I and 1.28 for serum PINP. It was concluded that PINP and βCTX-I appear to predict fracture risk independently of BMD and clinical risk factors [41], but the availability of data on confounding variables varied considerably between studies. The ability of bone turnover markers to predict fractures seems to be stronger over short (within a few years) rather than long time periods [42, 43], which likely limits their usefulness for long-term fracture prediction in risk calculators such as FRAX, but makes them more appealing for the prediction of short-term or imminent fracture risk.
Based on the relatively weak associations between bone turnover markers and fracture risk, uncertainty about the independent ability to predict fractures, the natural variability in the markers, problems with the assays and the inability to predict fracture over long time periods, the Fracture Risk Assessment Tool (FRAX) Position Development Conference members concluded that bone turnover markers should not be included in the calculation of the 10-year probability of fracture in the FRAX tool [44].
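To make the size of these gradients of risk concrete, the sketch below converts a hazard ratio per SD into the relative hazard for a person whose marker level lies a given number of SDs from the population mean. It assumes the usual log-linear interpretation of a per-SD hazard ratio (each additional SD multiplies the hazard by the same factor); this interpretation and the function name are illustrative assumptions, not a method described in the cited meta-analyses.

```python
def relative_hazard(gradient_per_sd, sd_from_mean):
    """Relative hazard versus a person at the population mean.

    Assumes a log-linear relationship, so a marker value z SDs above the
    mean corresponds to a hazard multiplied by gradient_per_sd ** z.
    """
    if gradient_per_sd <= 0:
        raise ValueError("gradient_per_sd must be positive")
    return gradient_per_sd ** sd_from_mean


# A gradient of risk of 1.2 per SD, for a marker level 2 SDs above the mean
hazard_at_plus_2_sd = relative_hazard(1.2, 2)  # approximately 1.44
```

Under this assumption, even a marker value 2 SDs above the mean corresponds to a relative hazard of only about 1.44, which illustrates why the associations are described as relatively weak.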

The Use of Bone Turnover Markers in the Monitoring of Osteoporosis Treatment

Bisphosphonates

Bisphosphonates, including alendronate, risedronate, zoledronate and ibandronate, are the most commonly used medications to treat osteoporosis [4]. They reduce bone resorption by inhibiting osteoclasts, increase BMD and lower the risk of spine, hip and non-vertebral fractures [45,46,47]. With treatment in recommended doses, βCTX-I is reduced rapidly, by approximately 50–80%, reaching maximum suppression after about 2 months, whilst PINP suppression is slightly smaller and reaches its nadir after about 6 months [48].

Several clinical trials have reported a relationship between the reduction of bone turnover markers and the reduction in vertebral and nonvertebral fracture risk following anti-resorptive treatment [18]. For example, changes in bone turnover markers have been shown to explain a considerable proportion, 54–77%, of the nonvertebral fracture risk reduction with risedronate treatment [49]. The 12-month decrease in βCTX-I and PINP with alendronate treatment in the Fracture Intervention Trial was associated with the reduction of spine fractures [50]. However, due to low sensitivity, it has been deemed inappropriate to use bone turnover markers to predict an individual patient’s response to treatment [51].

Another complicating factor is that the effect on bone marker suppression varies across the licensed bisphosphonates. For example, in the TRIO study, alendronate and ibandronate treatment given to postmenopausal osteoporotic women caused a greater suppression of βCTX-I and NTX-I levels than risedronate [26].

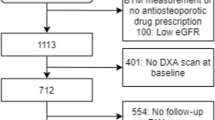

A major challenge with oral bisphosphonates is poor adherence, with fewer than half of patients still taking medication 1 year after treatment initiation [52]. Women adhering to oral bisphosphonates have greater reductions in serum bone turnover marker levels and lower fracture risk than women with poor adherence [26]. It has therefore been proposed that bone turnover markers can be used to monitor treatment adherence. For such a task to be successful and clinically useful, clear definitions of what constitutes an adequate response to treatment must exist. A blood test before and at a defined time after treatment initiation is required to determine the level of change in the bone turnover markers. Since serum PINP and βCTX-I are responsive to treatment and have low within-subject variability, their use is recommended. A commonly proposed approach to determine whether the change in the bone marker is physiologically relevant (and not due to measurement or sampling error) is to compare the observed change with the least significant change (LSC). Assuming that the change is normally distributed, a true change would have to be greater than the LSC, which equals √2 × 1.96 × intra-individual coefficient of variation (CV) = 2.77 × CV. For example, using this approach and assuming an intra-individual CV of 9.4%, which corresponds to an LSC of 26%, serum βCTX-I would need to drop from 350 ng/l to 259 ng/l or below in a treated patient to confirm a positive treatment response. In the TRIO study, 3-month bisphosphonate treatment resulted in suppression of PINP and βCTX-I larger than the LSC in 75–94% and 68–73%, respectively, of the included women. A detection level, describing the proportion of patients taking oral bisphosphonates who show decreases (larger than the LSC) in each of the markers, βCTX-I and PINP, was investigated and was found to be 84% for PINP, 87% for βCTX-I and as high as 94% when measuring both markers [26, 53].
Based on the findings of the TRIO study, the International Osteoporosis Foundation (IOF) and European Calcified Tissue Society (ECTS) Working Group recently issued a recommendation to monitor oral bisphosphonate treatment using a baseline and 3-month measurement of serum βCTX-I and PINP. According to this recommendation, if the decrease is smaller than the LSC, the treating clinician should reassess to identify problems with treatment, which usually relate to poor adherence (Fig. 1) [53].
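The LSC arithmetic described above can be sketched as follows. The formula (√2 × 1.96 × CV) and the worked numbers (baseline 350 ng/l, CV 9.4%) come from the text; the function names are illustrative, and the code is a minimal sketch rather than a validated clinical tool.

```python
import math


def least_significant_change(cv_percent, z=1.96):
    """LSC in percent: sqrt(2) * z * intra-individual CV (about 2.77 * CV)."""
    return math.sqrt(2) * z * cv_percent


def response_threshold(baseline, cv_percent):
    """Follow-up value the marker must fall below for the decrease to exceed the LSC."""
    return baseline * (1 - least_significant_change(cv_percent) / 100)


def decrease_exceeds_lsc(baseline, follow_up, cv_percent):
    """True if the observed percentage decrease is larger than the LSC."""
    decrease_percent = (baseline - follow_up) / baseline * 100
    return decrease_percent > least_significant_change(cv_percent)


# Worked example from the text: serum βCTX-I, baseline 350 ng/l, CV 9.4%
lsc = least_significant_change(9.4)         # about 26%
threshold = response_threshold(350, 9.4)    # about 259 ng/l
```

With these inputs, a 3-month value of 250 ng/l would count as a decrease larger than the LSC, whereas a value of 300 ng/l would not and would prompt reassessment under the recommendation described above.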

Algorithm proposed by an IOF-ECTS working group for monitoring bisphosphonate treatment adherence using CTX-I and/or PINP [53]

Another approach that has been proposed is to define the target for treatment as suppression of the bone turnover marker to the lower half of the reference interval in young and healthy premenopausal women [54]. This strategy is complicated by the fact that not all women are above this interval prior to receiving treatment. If bone turnover markers have not been measured prior to starting therapy, the reference interval method could still be used, which increases the clinical usefulness of the method. An analysis from the TRIO study revealed that the proportion of responders detected using the reference interval approach was very similar to the one detected using the LSC approach [26].
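The reference-interval target can be expressed as a simple check on a single on-treatment value. In the sketch below, "lower half" is interpreted as at or below the arithmetic midpoint of the premenopausal reference interval; that interpretation, the function name and the interval limits in the usage note are assumptions made for illustration and should be replaced by the laboratory's own assay-specific reference interval.

```python
def in_lower_half_of_reference(value, ref_low, ref_high):
    """True if value is at or below the midpoint of the reference interval.

    Assumption: the 'lower half' is bounded by the arithmetic midpoint of
    [ref_low, ref_high]. Values below ref_low also count as suppressed.
    No baseline measurement is needed for this check.
    """
    if ref_high <= ref_low:
        raise ValueError("ref_high must be greater than ref_low")
    midpoint = (ref_low + ref_high) / 2
    return value <= midpoint
```

For example, with a hypothetical reference interval of 100 to 600 ng/l, an on-treatment value of 300 ng/l would meet the target and a value of 400 ng/l would not.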

Denosumab

Denosumab is a human monoclonal antibody to RANKL, which is administered subcutaneously. It is the most potent inhibitor of bone resorption, as reflected by a very rapid decrease to nearly undetectable levels of the bone resorption marker βCTX-I within a few days of administration [55]. Serum PINP is also suppressed by denosumab treatment, but the decrease is not as marked as for βCTX-I and takes up to 3–6 months to be complete [56]. Biannual injections of denosumab reduce the risk of hip, vertebral and non-vertebral fractures in postmenopausal women [13]. Denosumab is more potent than bisphosphonates in increasing BMD, which continues to rise for up to 10 years of treatment [57]. However, when treatment is stopped, there is a rebound increase in bone turnover markers to well above pre-treatment levels, accompanied by accelerated bone loss [58]. During this phase the risk of multiple vertebral fractures increases [59, 60]. Pretreatment with bisphosphonates reduces this overshoot in bone turnover markers when denosumab treatment stops, and starting bisphosphonate therapy after denosumab cessation can attenuate bone loss, but the optimal regimen for bisphosphonate therapy after denosumab cessation has not yet been determined [61]. It is possible that monitoring the bone marker response may help guide the frequency and dosing of bisphosphonate treatment when denosumab treatment is stopped. Future research is needed to address this hypothesis.

Anabolic Treatment

Treatment of postmenopausal women with the parathyroid hormone analogue teriparatide causes a rapid response, within days, in bone formation markers such as PINP, which reach peak levels after 3 months [62, 63]. This increase is followed several months later by a considerably smaller rise in bone resorption markers. The response to teriparatide is dose-dependent and the increase in PINP correlates weakly or moderately with increases in BMD, which are considerably larger at bone sites rich in trabecular bone, such as the lumbar spine, than those seen with bisphosphonate therapy [64, 65]. Teriparatide is more effective than oral risedronate in reducing the risk of vertebral and clinical fractures in postmenopausal women with severe osteoporosis [66]. A systematic review of the present evidence concluded that there is insufficient evidence to recommend monitoring bone turnover markers for predicting the effect of teriparatide treatment [67].

Conclusion

Although bone turnover markers have been used extensively in clinical trials, prospective cohort studies and case-control studies, and have been included in standard patient evaluation at many clinics for many years, their value in clinical practice is not entirely clear. Challenges relating to large pre-analytical (diurnal variations, feeding, age, gender, menopausal status, etc.) and analytical variations and the use of a multitude of markers in different clinical scenarios have impaired the interpretation of their value and make recommendations for their use in the individual patient more difficult. Despite these challenges, this working group draws the following conclusions, based upon the available evidence:

- The bone formation marker serum PINP and resorption marker serum βCTX-I are the preferred markers for evaluating bone turnover in the clinical setting.

- Bone turnover markers cannot be used to diagnose osteoporosis but can be of value in patient evaluation and can improve the ability to detect some causes of secondary osteoporosis.

- Serum βCTX-I and PINP correlate only moderately with bone loss in postmenopausal women and with osteoporosis medication-induced gains in BMD. Therefore, the use of bone turnover markers cannot be recommended to monitor osteoporosis treatment effect in individual patients.

- Adding data on serum βCTX-I and PINP levels in postmenopausal women to clinical risk factors and BMD improves fracture risk prediction only slightly and therefore has limited value.

- Bisphosphonates are the most commonly used osteoporosis medications, but adherence to oral bisphosphonates falls below 50% within the first year of treatment. Monitoring PINP and βCTX-I is effective for assessing treatment adherence, with adequate adherence indicated by sufficient suppression of these markers (by more than the LSC or to the lower half of the reference interval for young and healthy premenopausal women).

References

Kanis JA, McCloskey EV, Johansson H, Oden A, Melton LJ 3rd, Khaltaev N. A reference standard for the description of osteoporosis. Bone. 2008;42:467–75.

Lorentzon M, Cummings SR. Osteoporosis: the evolution of a diagnosis. J Intern Med. 2015;277:650–61.

Marottoli RA, Berkman LF, Cooney LM Jr. Decline in physical function following hip fracture. J Am Geriatr Soc. 1992;40:861–6.

Hernlund E, Svedbom A, Ivergard M, et al. Osteoporosis in the European Union: medical management, epidemiology and economic burden. A report prepared in collaboration with the International Osteoporosis Foundation (IOF) and the European Federation of Pharmaceutical Industry Associations (EFPIA). Arch Osteoporos. 2013;8:136.

Kanis JA, Johnell O, Oden A, Johansson H, McCloskey E. FRAX and the assessment of fracture probability in men and women from the UK. Osteoporos Int. 2008;19:385–97.

Cheng XG, Lowet G, Boonen S, et al. Assessment of the strength of proximal femur in vitro: relationship to femoral bone mineral density and femoral geometry. Bone. 1997;20:213–8.

Assessment of fracture risk and its application to screening for postmenopausal osteoporosis. Report of a WHO Study Group. World Health Organ Tech Rep Ser. World Health Organisation, pp 1–129.

Kanis JA, Harvey NC, Cooper C, et al. A systematic review of intervention thresholds based on FRAX: a report prepared for the National Osteoporosis Guideline Group and the International Osteoporosis Foundation. Arch Osteoporos. 2016;11:25.

Eastell R, Szulc P. Use of bone turnover markers in postmenopausal osteoporosis. Lancet Diabetes Endocrinol. 2017;5:908–23.

Dovio A, Perazzolo L, Osella G, et al. Immediate fall of bone formation and transient increase of bone resorption in the course of high-dose, short-term glucocorticoid therapy in young patients with multiple sclerosis. J Clin Endocrinol Metab. 2004;89:4923–8.

Black DM, Delmas PD, Eastell R, et al. Once-yearly zoledronic acid for treatment of postmenopausal osteoporosis. N Engl J Med. 2007;356:1809–22.

Harris ST, Watts NB, Genant HK, et al. Effects of risedronate treatment on vertebral and nonvertebral fractures in women with postmenopausal osteoporosis: a randomized controlled trial. Vertebral Efficacy With Risedronate Therapy (VERT) Study Group. JAMA. 1999;282:1344–52.

Cummings SR, San Martin J, McClung MR, et al. Denosumab for prevention of fractures in postmenopausal women with osteoporosis. N Engl J Med. 2009;361:756–65.

McCloskey E, Selby P, Davies M, et al. Clodronate reduces vertebral fracture risk in women with postmenopausal or secondary osteoporosis: results of a double-blind, placebo-controlled 3-year study. J Bone Miner Res. 2004;19:728–36.

Clowes JA, Hannon RA, Yap TS, Hoyle NR, Blumsohn A, Eastell R. Effect of feeding on bone turnover markers and its impact on biological variability of measurements. Bone. 2002;30:886–90.

Christgau S, Bitsch-Jensen O, Hanover Bjarnason N, et al. Serum CrossLaps for monitoring the response in individuals undergoing antiresorptive therapy. Bone. 2000;26:505–11.

Cox G, Einhorn TA, Tzioupis C, Giannoudis PV. Bone-turnover markers in fracture healing. J Bone Joint Surg Br. 2010;92:329–34.

Vasikaran S, Eastell R, Bruyere O, et al. Markers of bone turnover for the prediction of fracture risk and monitoring of osteoporosis treatment: a need for international reference standards. Osteoporos Int. 2011;22:391–420.

Cosman F, de Beur SJ, LeBoff MS, et al. Clinician’s guide to prevention and treatment of osteoporosis. Osteoporos Int. 2014;25:2359–81.

Compston J, Cooper A, Cooper C, et al. UK clinical guideline for the prevention and treatment of osteoporosis. Arch Osteoporos. 2017;12:43.

Kanis JA, Burlet N, Cooper C, et al. European guidance for the diagnosis and management of osteoporosis in postmenopausal women. Osteoporos Int. 2008;19:399–428.

Vasikaran S, Cooper C, Eastell R, et al. International osteoporosis foundation and international federation of clinical chemistry and laboratory medicine position on bone marker standards in osteoporosis. Clin Chem Lab Med. 2011;49:1271–4.

Szulc P, Naylor K, Hoyle NR, Eastell R, Leary ET, National Bone Health Alliance Bone Turnover Marker P. Use of CTX-I and PINP as bone turnover markers: National Bone Health Alliance recommendations to standardize sample handling and patient preparation to reduce pre-analytical variability. Osteoporos Int. 2017;28:2541–56.

Morris HA, Eastell R, Jorgensen NR, et al. Clinical usefulness of bone turnover marker concentrations in osteoporosis. Clin Chim Acta. 2017;467:34–41.

Garnero P, Sornay-Rendu E, Chapuy MC, Delmas PD. Increased bone turnover in late postmenopausal women is a major determinant of osteoporosis. J Bone Miner Res. 1996;11:337–49.

Naylor KE, Jacques RM, Paggiosi M, et al. Response of bone turnover markers to three oral bisphosphonate therapies in postmenopausal osteoporosis: the TRIO study. Osteoporos Int. 2016;27:21–31.

Biver E, Chopin F, Coiffier G, et al. Bone turnover markers for osteoporotic status assessment? A systematic review of their diagnosis value at baseline in osteoporosis. Joint Bone Spine. 2012;79:20–5.

Sowers MR, Zheng H, Greendale GA, et al. Changes in bone resorption across the menopause transition: effects of reproductive hormones, body size, and ethnicity. J Clin Endocrinol Metab. 2013;98:2854–63.

Marques EA, Gudnason V, Lang T, et al. Association of bone turnover markers with volumetric bone loss, periosteal apposition, and fracture risk in older men and women: the AGES-Reykjavik longitudinal study. Osteoporos Int. 2016;27:3485–94.

Ivaska KK, Lenora J, Gerdhem P, Akesson K, Vaananen HK, Obrant KJ. Serial assessment of serum bone metabolism markers identifies women with the highest rate of bone loss and osteoporosis risk. J Clin Endocrinol Metab. 2008;93:2622–32.

Rogers A, Hannon RA, Eastell R. Biochemical markers as predictors of rates of bone loss after menopause. J Bone Miner Res. 2000;15:1398–404.

Szulc P, Delmas PD. Biochemical markers of bone turnover: potential use in the investigation and management of postmenopausal osteoporosis. Osteoporos Int. 2008;19:1683–704.

Riis BJ, Hansen MA, Jensen AM, Overgaard K, Christiansen C. Low bone mass and fast rate of bone loss at menopause: equal risk factors for future fracture: a 15-year follow-up study. Bone. 1996;19:9–12.

Finigan J, Greenfield DM, Blumsohn A, et al. Risk factors for vertebral and nonvertebral fracture over 10 years: a population-based study in women. J Bone Miner Res. 2008;23:75–85.

Dempster DW. The contribution of trabecular architecture to cancellous bone quality. J Bone Miner Res. 2000;15:20–3.

Follet H, Boivin G, Rumelhart C, Meunier PJ. The degree of mineralization is a determinant of bone strength: a study on human calcanei. Bone. 2004;34:783–9.

Garnero P, Sornay-Rendu E, Claustrat B, Delmas PD. Biochemical markers of bone turnover, endogenous hormones and the risk of fractures in postmenopausal women: the OFELY study. J Bone Miner Res. 2000;15:1526–36.

Ross PD, Kress BC, Parson RE, Wasnich RD, Armour KA, Mizrahi IA. Serum bone alkaline phosphatase and calcaneus bone density predict fractures: a prospective study. Osteoporos Int. 2000;11:76–82.

Sornay-Rendu E, Munoz F, Garnero P, Duboeuf F, Delmas PD. Identification of osteopenic women at high risk of fracture: the OFELY study. J Bone Miner Res. 2005;20:1813–9.

Johansson H, Oden A, Kanis JA, et al. A meta-analysis of reference markers of bone turnover for prediction of fracture. Calcif Tissue Int. 2014;94:560–7.

Tian A, Ma J, Feng K, et al. Reference markers of bone turnover for prediction of fracture: a meta-analysis. J Orthop Surg Res. 2019;14:68.

Ivaska KK, Gerdhem P, Vaananen HK, Akesson K, Obrant KJ. Bone turnover markers and prediction of fracture: a prospective follow-up study of 1040 elderly women for a mean of 9 years. J Bone Miner Res. 2010;25:393–403.

Robinson-Cohen C, Katz R, Hoofnagle AN, et al. Mineral metabolism markers and the long-term risk of hip fracture: the cardiovascular health study. J Clin Endocrinol Metab. 2011;96:2186–93.

McCloskey EV, Vasikaran S, Cooper C, Members FPDC. Official Positions for FRAX® clinical regarding biochemical markers from Joint Official Positions Development Conference of the International Society for Clinical Densitometry and International Osteoporosis Foundation on FRAX®. J Clin Densitom. 2011;14:220–2.

Reginster J, Minne HW, Sorensen OH, et al. Randomized trial of the effects of risedronate on vertebral fractures in women with established postmenopausal osteoporosis. Vertebral Efficacy with Risedronate Therapy (VERT) Study Group. Osteoporos Int. 2000;11:83–91.

Chesnut CH 3rd, Skag A, Christiansen C, et al. Effects of oral ibandronate administered daily or intermittently on fracture risk in postmenopausal osteoporosis. J Bone Miner Res. 2004;19:1241–9.

Black DM, Cummings SR, Karpf DB, et al. Randomised trial of effect of alendronate on risk of fracture in women with existing vertebral fractures. Fracture Intervention Trial Research Group. Lancet. 1996;348:1535–41.

Rosen CJ, Hochberg MC, Bonnick SL, et al. Treatment with once-weekly alendronate 70 mg compared with once-weekly risedronate 35 mg in women with postmenopausal osteoporosis: a randomized double-blind study. J Bone Miner Res. 2005;20:141–51.

Eastell R, Barton I, Hannon RA, Chines A, Garnero P, Delmas PD. Relationship of early changes in bone resorption to the reduction in fracture risk with risedronate. J Bone Miner Res. 2003;18:1051–6.

Bauer DC, Black DM, Garnero P, et al. Change in bone turnover and hip, non-spine, and vertebral fracture in alendronate-treated women: the fracture intervention trial. J Bone Miner Res. 2004;19:1250–8.

Kanis JA, McCloskey E, Branco J, et al. Goal-directed treatment of osteoporosis in Europe. Osteoporos Int. 2014;25:2533–43.

Cramer JA, Gold DT, Silverman SL, Lewiecki EM. A systematic review of persistence and compliance with bisphosphonates for osteoporosis. Osteoporos Int. 2007;18:1023–31.

Diez-Perez A, Naylor KE, Abrahamsen B, et al. International Osteoporosis Foundation and European Calcified Tissue Society Working Group. Recommendations for the screening of adherence to oral bisphosphonates. Osteoporos Int. 2017;28:767–74.

Bergmann P, Body JJ, Boonen S, et al. Evidence-based guidelines for the use of biochemical markers of bone turnover in the selection and monitoring of bisphosphonate treatment in osteoporosis: a consensus document of the Belgian Bone Club. Int J Clin Pract. 2009;63:19–26.

Miller PD, Bolognese MA, Lewiecki EM, et al. Effect of denosumab on bone density and turnover in postmenopausal women with low bone mass after long-term continued, discontinued, and restarting of therapy: a randomized blinded phase 2 clinical trial. Bone. 2008;43:222–9.

Eastell R, Christiansen C, Grauer A, et al. Effects of denosumab on bone turnover markers in postmenopausal osteoporosis. J Bone Miner Res. 2011;26:530–7.

Bone HG, Wagman RB, Brandi ML, et al. 10 years of denosumab treatment in postmenopausal women with osteoporosis: results from the phase 3 randomised FREEDOM trial and open-label extension. Lancet Diabetes Endocrinol. 2017;5:513–23.

Bone HG, Bolognese MA, Yuen CK, et al. Effects of denosumab treatment and discontinuation on bone mineral density and bone turnover markers in postmenopausal women with low bone mass. J Clin Endocrinol Metab. 2011;96:972–80.

Cummings SR, Ferrari S, Eastell R, et al. Vertebral fractures after discontinuation of denosumab: a post hoc analysis of the randomized placebo-controlled FREEDOM trial and its extension. J Bone Miner Res. 2018;33:190–8.

Lorentzon M. Treating osteoporosis to prevent fractures: current concepts and future developments. J Intern Med. 2019;285:381–94.

Uebelhart B, Rizzoli R, Ferrari SL. Retrospective evaluation of serum CTX levels after denosumab discontinuation in patients with or without prior exposure to bisphosphonates. Osteoporos Int. 2017;28:2701–5.

Dempster DW, Zhou H, Recker RR, et al. Differential effects of teriparatide and denosumab on intact PTH and bone formation indices: AVA osteoporosis study. J Clin Endocrinol Metab. 2016;101:1353–63.

Glover SJ, Eastell R, McCloskey EV, et al. Rapid and robust response of biochemical markers of bone formation to teriparatide therapy. Bone. 2009;45:1053–8.

Chen P, Satterwhite JH, Licata AA, et al. Early changes in biochemical markers of bone formation predict BMD response to teriparatide in postmenopausal women with osteoporosis. J Bone Miner Res. 2005;20:962–70.

Finkelstein JS, Wyland JJ, Lee H, Neer RM. Effects of teriparatide, alendronate, or both in women with postmenopausal osteoporosis. J Clin Endocrinol Metab. 2010;95:1838–45.

Kendler DL, Marin F, Zerbini CAF, et al. Effects of teriparatide and risedronate on new fractures in post-menopausal women with severe osteoporosis (VERO): a multicentre, double-blind, double-dummy, randomised controlled trial. Lancet. 2018;391:230–40.

Burch J, Rice S, Yang H, et al. Systematic review of the use of bone turnover markers for monitoring the response to osteoporosis treatment: the secondary prevention of fractures, and primary prevention of fractures in high-risk groups. Health Technol Assess. 2014;18:1–180.

Acknowledgements

The choice of topics, participants, content and agenda of the Working Groups as well as the writing, editing, submission and reviewing of the manuscript are the sole responsibility of the ESCEO, without any influence from third parties.

Funding

No funding or sponsorship was received for this study or publication of this article. The resulting recommendations were derived independently by the authors, without any influence from funding sources. The latter had no role in the process of writing or editing the report; any potential conflicts of interest were disclosed by each member of the working group. The ESCEO Working Group was funded by the ESCEO. The ESCEO receives unrestricted educational grants to support its educational and scientific activities from non-governmental organizations, not-for-profit organizations, non-commercial or corporate partners.

Authorship

All named authors meet the International Committee of Medical Journal Editors (ICMJE) criteria for authorship for this article, take responsibility for the integrity of the work as a whole, and have given their approval for this version to be published.

Disclosures

Mattias Lorentzon has received lecture or consulting fees from Amgen, Lilly, Meda, UCB Pharma, Renapharma, Radius Health and Consilient Health. Maria Luisa Brandi has received honoraria from Amgen, Bruno Farmaceutici and Kyowa Kirin, academic grants and/or speaker grants from Abiogen, Alexion, Amgen, Bruno Farmaceutici, Eli Lilly, Kyowa Kirin, MSD, NPS, Servier, Shire and SPA and consultation grants from Alexion, Bruno Farmaceutici, Kyowa Kirin, Servier and Shire. Jean-Yves Reginster is a shareholder of the Centre Académique de Recherche et d’Expérimentation en Santé (CARES SPRL) and is a member of the journal’s Editorial Board. René Rizzoli is a member of speaker bureaus or scientific advisory boards for Danone, Echolight, Effryx, Mylan, Nestlé, ObsEva, Pfizer, Radius Health, Sandoz and TEVA/Theramex and is a Section Editor of this journal. Cyrus Cooper has received lecture fees and honoraria from Amgen, Danone, Eli Lilly, GSK, Kyowa Kirin, Medtronic, Merck, Nestlé, Novartis, Pfizer, Roche, Servier, Shire, Takeda and UCB outside of the submitted work. Roland Chapurlat is on the advisory boards for Pfizer, Amgen, UCB, Ultragenyx and BMS and is a speaker for Amgen, UCB, Abbvie, MSD, Lilly, Janssen, Pfizer and Arrow. Richard Pikner is a speaker and a member of advisory boards for Amgen and has received honoraria for speaking from Amgen, Takeda, Roche, DiaSorin, Abbott and Beckmann-Coulter. Olivier Bruyère has received research grants from Biophytis, IBSA, MEDA, Servier and SMB and consulting or lecture fees from Amgen, Biophytis, IBSA, MEDA, Servier, SMB, TRB Chemedica and UCB. Andrea Gasparik has received speaker fees from Eli Lilly and Richter Gedeon. Etienne Cavalier is a consultant for IDS, Diasorin, Biomerieux and Fujirebio. Bernard Cortet has received fees for occasional interventions as an expert or speaker for Amgen, Expanscience, Ferring, Lilly, Medtronic, MSD, Mylan, Novartis, Roche Diagnostics and UCB. John Kanis reports grants from Amgen, Eli Lilly and Radius Health and consulting fees from Theramex, and is the architect of FRAX® but has no financial interest. Eugene McCloskey has received departmental research funding from Roche and IDS and participates in an advisory board for Roche. Jean-Marc Kaufman, Médéa Locquet, Andrea Laslop, Pawel Szulc, Jaime Branco, Adolfo Diez-Perez, Markus Herrmann, Niklas Rye Jorgensen, Radmila Matijevic, Salvatore Minisola, Mila Vlaskovska and Serge Ferrari have nothing to disclose.

Compliance with Ethics Guidelines

This article is based on previously conducted studies and does not contain any studies with human participants or animals performed by any of the authors.

Additional information

Enhanced Digital Features

To view enhanced digital features for this article go to https://doi.org/10.6084/m9.figshare.9097391.

Rights and permissions

Open Access. This article is distributed under the terms of the Creative Commons Attribution-NonCommercial 4.0 International License (http://creativecommons.org/licenses/by-nc/4.0/), which permits any noncommercial use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Lorentzon, M., Branco, J., Brandi, M.L. et al. Algorithm for the Use of Biochemical Markers of Bone Turnover in the Diagnosis, Assessment and Follow-Up of Treatment for Osteoporosis. Adv Ther 36, 2811–2824 (2019). https://doi.org/10.1007/s12325-019-01063-9

DOI: https://doi.org/10.1007/s12325-019-01063-9