Abstract

Introduction

In the clinic, the assessment of patients with multiple sclerosis (MS) is typically qualitative and non-standardized.

Objectives

To describe the MS Performance Test (MSPT), an iPad Air® 2 (Apple, Cupertino, CA, USA)-based neurological assessment platform allowing patients to input relevant information without the aid of a medical technician, creating a longitudinal, clinically meaningful, digital medical record. To report results from human factor (HF) and usability studies, and the initial large-scale implementation in a practice setting.

Methods

The HF study examined use-error patterns in small groups of MS patients and healthy controls (n = 14); the usability study assessed how effectively patients with a range of MS disability (n = 60) interacted with the tool in a clinical setting; and the implementation study deployed the MSPT across a diverse population of patients (n = 1000) in a large MS center for routine clinical care.

Results

MSPT assessments were completed by all users in the HF study; minor changes to design were recommended. In the usability study, 73% of patients with MS completed the MSPT, with an average administration time of 32 min; 85% described their experience with the tool as satisfactory. In the initial implementation for routine care, 84% of patients with MS completed the MSPT, with an average administration time of 28 min.

Conclusion

Patients with MS with varying disability levels completed the MSPT with minimal or no supervision, resulting in comprehensive, efficient, standardized, quantitative, clinically meaningful data collection as part of routine medical care, thus allowing for large-scale, real-world evidence generation.

Funding

Biogen.

Trial Registration

NCT02664324.

Introduction

Typical outcome measures used in multiple sclerosis (MS) clinical trials are the annualized relapse rate (ARR) and the Expanded Disability Status Scale (EDSS), a clinician-rated categorical scale ranging from 0 (normal neurological exam) to 10 (death resulting from MS) [1, 2]. Both ARR and EDSS are useful metrics for research trials to determine the efficacy of an intervention, but these measures do not adequately capture many important dimensions of MS, such as cognitive function, vision, depression, or fatigue. Also, these same dimensions are not captured in a consistent and standardized way in clinical practice [3]. Although clinical office notes include symptoms and neurological exam findings, there is little standardization or quantification; therefore, it has not been possible to develop systematic knowledge from routine office visits by patients with MS. In order for health care professionals (HCPs) to better understand disease progression, to enable individualized therapy decisions, and to learn from clinical practice, standardized, quantitative clinical data are needed to complement the traditional neurological examination in medical care settings [4].

Quantitative measures of MS have been used within the context of clinical trials but are rarely administered in routine clinical practice. The Multiple Sclerosis Functional Composite (MSFC) is a reliable and well-validated instrument that was developed as a multidimensional quantitative measure of neurologic disability in patients with MS [5]. The four components of the MSFC-4 include measures of upper and lower extremity function, vision, and cognition [6, 7]. Starting in 2012, the Multiple Sclerosis Outcome Assessment Consortium launched an initiative focused on functional performance outcome measures (PerfOs) that quantify walking speed, manual dexterity, vision, and cognition; measures traditionally used within MSFC-4 were highlighted (https://c-path.org/programs/msoac/) [7,8,9,10,11]. The traditional method of conducting the MSFC-4/PerfOs has some limitations, most notably the need for the tests to be administered by a trained technician. This has limited the use of MSFC-4 in clinical practice [12]. For similar reasons, comprehensive collection of standardized patient-reported quality of life (QoL) outcome data is not routine in clinical practice [13]. Although several MS-specific QoL scales have been developed, few are routinely used in the practice setting owing to the difficulty of collecting, scoring, and tracking relevant QoL outcomes [13, 14].

To enable the routine collection of PerfOs and QoL data in a clinical setting, we developed the Multiple Sclerosis Performance Test (MSPT). In this paper, we describe the technical development of the MSPT tool and report three substudies that examine human factors, usability, and initial clinical implementation of the MSPT.

Methods

The Multiple Sclerosis Performance Test

The MSPT tool was designed so that the vast majority of patients could perform the test with minimal to no supervision. The tool integrates a standardized patient history questionnaire, PerfO assessments, and a well-validated QoL instrument on one platform that can be self-administered. Summary data from these tests can be imported into the electronic medical record (EMR). The integrated MSPT reduces the logistical barriers to collecting the data that provide an objective and holistic view of a patient’s clinical status. The MSPT is an assessment tool that enables the aggregation of datasets traditionally employed in clinical trials, effectively allowing the collection and use of standardized, clinically meaningful data while minimizing resource burden to the HCP.

The MSPT is an iPad Air® 2 (Apple, Cupertino, CA, USA)-based assessment tool that is comprised of both software and hardware components, designed specifically for the collection of standardized clinical data from patients with MS during routine clinic visits (Fig. 1). Data can be collected in approximately 30 min and scored and aggregated at the time of testing, with minimal or no assistance from medical staff. The MSPT described here is a more sophisticated version of a research prototype of the tool initially developed at the Cleveland Clinic Foundation [8], comprising a software application that is an electronic adaptation of PerfOs validated against the MSFC-4. The MSPT tool described here and developed jointly by the Cleveland Clinic Foundation and Biogen has substantially improved functionality and has been re-designed to enable administration by the patient with minimal or no supervision. It includes the following modules: (1) Processing Speed Test (PST), which evaluates cognitive function including elements of attention, psychomotor speed, visual processing, and working memory, and is adapted from the Symbol Digit Modalities Test [15, 16]; (2) Contrast Sensitivity Test (CST), which measures visual acuity with 100% and 2.5% levels of contrast, and is adapted from the Sloan Low Contrast Visual Acuity Test [17]; (3) Manual Dexterity Test (MDT), which assesses upper extremity function and is adapted from the 9-Hole Peg Test [18]; and (4) Walking Speed Test (WST), which assesses lower extremity function, and is adapted from the Timed-25 Foot Walk [19]. These four modules of the MSPT provide a comprehensive assessment of the neurologic function impacted by MS. Details of each PerfO task are presented elsewhere [20].

Fig. 1 MSPT assessment tool. Upper panel: iPad Air® 2 contained within the hardware case, with grid overlay that also functions as a kickstand (a), shown over the screen (b); (c) Bluetooth remote for walking speed test; (d) aluminum pegs for manual dexterity test; (e) magnetized cover for (c) and (d); (f) headphones for audio instructions; (g) power cord. Lower left panel: grid overlay in the kickstand position used for all modules except the manual dexterity test. Lower right panel: aluminum pegs inserted into the grid overlay for the manual dexterity test. MSPT, Multiple Sclerosis Performance Test

The MSPT case enclosing the iPad Air® 2 is a lightweight unit made of injection-molded Cycoloy™ resin components (Omega Plastics, MI, USA; Fig. 1). The case includes a moveable overlay that provides a test grid configured to move in and out of physical contact with the screen, and incorporates receptacles for metal pegs to enable the MDT. The grid of apertures extending through the overlay allows pegs to mechanically and electrically connect with the iPad Air® 2 screen, which is programmed to measure individual peg insertion and removal times for the MDT. The overlay is hinged and can be rotated 260 degrees about the pivot away from the touchscreen to operate as a kickstand to support the housing and the iPad Air® 2 when positioned on a table. The kickstand specifications are specifically designed to enable correct positioning for the vision test module (CST), but also allow convenient positioning for viewing of other assessment modules. Aluminum pegs and a Bluetooth remote are housed in receptacles incorporated into the case and are stored in place with a magnetized cover.
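As an illustration of how per-peg timing could be derived from touchscreen contact events, the following minimal Python sketch may help. The event format (`PegEvent`), the function names, and the sample timestamps are hypothetical and are not taken from the MSPT software; the sketch assumes only that each peg contact and release is timestamped.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PegEvent:
    t: float    # seconds since the trial started (hypothetical clock)
    hole: int   # index of the grid aperture
    kind: str   # "insert" when a peg touches the screen, "remove" when it leaves


def insertion_intervals(events):
    """Return the time elapsed between consecutive peg insertions."""
    inserts = sorted(e.t for e in events if e.kind == "insert")
    return [round(b - a, 3) for a, b in zip(inserts, inserts[1:])]


def trial_duration(events):
    """Time from the first insertion to the last removal, or None if incomplete."""
    inserts = [e.t for e in events if e.kind == "insert"]
    removes = [e.t for e in events if e.kind == "remove"]
    if not inserts or not removes:
        return None
    return round(max(removes) - min(inserts), 3)


# Made-up events for three pegs, for illustration only.
events = [
    PegEvent(1.0, 0, "insert"), PegEvent(2.5, 1, "insert"),
    PegEvent(4.5, 2, "insert"), PegEvent(6.0, 2, "remove"),
    PegEvent(7.0, 1, "remove"), PegEvent(8.25, 0, "remove"),
]
```

Here `insertion_intervals(events)` gives the gaps between successive insertions, and `trial_duration(events)` gives a single summary time for the trial.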

Software features added to the previously reported prototype [8] of the MSPT include a clinical wrapper enabling patient and administrator log-in; a structured patient history questionnaire module termed MyHealth to record demographics, health history, use of MS disease-modifying therapies, and other questions related to a patient’s MS status; and the Quality of Life in Neurological Disorders (Neuro-QoL) measurement system (Fig. 2). The Neuro-QoL contains validated self-report measures designed to assess the health-related quality of life (HRQoL) of people with a wide range of neurological disorders [21]. These self-report measures are in the broad domains of physical, mental, and social functioning. The Neuro-QoL uses computer-adaptive test (CAT) methodology to comprehensively quantify a person’s HRQoL while minimizing the number of questions that are asked [22, 23]. The use of CAT methodology allows for the most efficient data collection by presenting the fewest questions needed to achieve a predetermined level of precision.
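The stopping logic behind computer-adaptive testing can be sketched in a few lines of Python. This is a simplified illustration, not the Neuro-QoL scoring algorithm: it assumes yes/no items under a two-parameter logistic model, a standard normal prior, and a hypothetical precision target (`se_target`); all function names and parameters are invented for the example.

```python
import math


def p_endorse(theta, a, b):
    """Two-parameter logistic probability of endorsing an item at trait level theta."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))


def item_information(theta, a, b):
    """Fisher information of a two-parameter logistic item at theta."""
    p = p_endorse(theta, a, b)
    return a * a * p * (1.0 - p)


GRID = [g / 10.0 for g in range(-40, 41)]  # trait grid from -4 to 4


def posterior_mean_sd(responses):
    """Posterior mean and SD of theta under a standard normal prior."""
    weights = []
    for theta in GRID:
        w = math.exp(-0.5 * theta * theta)
        for (a, b), y in responses:
            p = p_endorse(theta, a, b)
            w *= p if y else (1.0 - p)
        weights.append(w)
    total = sum(weights)
    mean = sum(t * w for t, w in zip(GRID, weights)) / total
    var = sum((t - mean) ** 2 * w for t, w in zip(GRID, weights)) / total
    return mean, math.sqrt(var)


def run_cat(bank, answer, se_target=0.4):
    """Ask the most informative remaining item until the posterior SD reaches se_target."""
    remaining = list(bank)
    responses = []
    theta, sd = posterior_mean_sd(responses)
    while remaining and sd > se_target:
        item = max(remaining, key=lambda ab: item_information(theta, *ab))
        remaining.remove(item)
        responses.append((item, answer(item)))
        theta, sd = posterior_mean_sd(responses)
    return theta, sd, len(responses)


# Hypothetical item bank: (discrimination, difficulty) pairs.
bank = [(2.0, -2.0 + 0.2 * i) for i in range(21)]
# Hypothetical respondent who endorses every item easier than 0.5.
theta_hat, sd_hat, n_asked = run_cat(bank, lambda item: item[1] < 0.5)
```

The loop stops as soon as the precision target is met, so a respondent typically sees only part of the bank; a looser `se_target` means fewer questions.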

The modular assessments are presented to the patient in a predefined sequence via an interface designed for ease of use and patient comprehension (Fig. 2). Each module of the MSPT includes audiovisual instructions to guide the patient through each step of the assessment. Headphones are provided, and video instructions in the patient’s preferred language (currently English, Spanish, or German) map to text instructions displayed on the screen. The functional assessment modules include tutorials and practice tests to familiarize patients with the modules.

The MSPT is designed to be used in the clinical setting with minimal supervision. A diagrammatic representation of MSPT administration in routine clinical care is presented in Fig. 3. After the patient is logged into the MSPT system (Fig. 4, left panel) and independently conducts the MSPT assessments, the MSPT software application can provide the HCP with immediate access to a comprehensive dashboard of longitudinal patient demographic and clinical data (Fig. 4, right panel). Using the iPad Air® 2 touch screen, individual data points can be selected for a more detailed review (see Supplementary Figs. 1–5). The MSPT also has a print feature allowing printing (using AirPrint®) of a summary document from the iPad Air® 2, displaying individual patient results. Alternatively, MSPT data can be viewed directly in the EMR and/or patient data can be accessed by the HCP via a secure MSPT web portal intended to support medical research.

Fig. 4 MSPT graphic user interface for health care professional (HCP) use. A clinic staff member can log a patient into the MSPT system using the administration wrapper (step 2 in the careflow). Each patient has a unique ID and information from prior visits can be recalled so that data input is reduced on subsequent visits. Data collected from the patient are aggregated and presented to the HCP at the point of care (step 5 in the careflow). A summary dashboard was designed to provide the HCP with a comprehensive overview of the patient’s functional status. MSPT, Multiple Sclerosis Performance Test

The MSPT application interfaces with a secure cloud-based system that is designed for analysis, storage, and integration with external systems (Fig. 5). A connectivity gateway consisting of application programming interfaces (APIs) was designed per Health Level-7 (HL7) standards. The gateway can be configured for any modern hospital electronic medical record (EMR) implementation by utilizing Fast Healthcare Interoperability Resources (FHIR) and Clinical Document Architecture standards. Communication between the gateway and the EMR is bidirectional and supports both Simple Object Access Protocol (SOAP) and Representational State Transfer (REST) APIs. The platform uses an industry-standard method (AES-256) to encrypt all protected health information at rest and during transmission. Access to the MSPT cloud and the data therein is restricted to the health care institution that is using the registered tool.

Fig. 5 MSPT cloud structure and data flow. Patient (1) inputs data using the MSPT software application graphic user interface (2) and files are instantaneously uploaded to the MSPT cloud, a HIPAA-compliant, secure AWS environment (3). Files are transferred in JavaScript Object Notation (JSON) format. Files can be transferred to a web server or a cloud-based database, or exported via a gateway to allow integration into the medical record (4). The HCP (5) can access patient data via the MSPT software application (6) or a secure web portal (7). API, application programming interface; EMR, electronic medical record; AWS, Amazon Web Services; HCP, health care professional; HIPAA, Health Insurance Portability and Accountability Act; HL7, Health Level-7; MSPT, Multiple Sclerosis Performance Test; SOAP, Simple Object Access Protocol
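The article does not publish the MSPT file schema, so the following Python sketch only illustrates what a JSON result message in the style of a FHIR Observation resource might look like. The field names follow the public FHIR Observation structure; the function name, patient identifier, module label, and measured value are invented for illustration, and no coded terminology is assumed.

```python
import json
from datetime import datetime, timezone


def build_observation(patient_id, module, value, unit, when):
    """Build a FHIR-style Observation dict for one module result."""
    return {
        "resourceType": "Observation",
        "status": "final",
        "code": {"text": module},  # free-text label; no code system assumed
        "subject": {"reference": f"Patient/{patient_id}"},
        "effectiveDateTime": when.isoformat(),
        "valueQuantity": {"value": value, "unit": unit},
    }


# Made-up example values, not study data.
when = datetime(2018, 9, 1, 14, 30, tzinfo=timezone.utc)
obs = build_observation("example-001", "Walking Speed Test", 6.2, "s", when)
payload = json.dumps(obs, sort_keys=True)  # JSON text ready for transfer
```

A real gateway would additionally need authentication, transport encryption, and mapping to the receiving EMR's identifiers, none of which is shown here.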

In compliance with International Organization for Standardization (ISO) 13485 requirements [24], a design-history file was developed for the MSPT that included a full risk analysis and details on the generation of software requirement specifications, design, development, testing, and release of a commercial-ready product. Software components of the MSPT were implemented using Objective-C, Ruby on Rails, and Go.

Substudy 1: Human Factors Study

A formative, simulated-use human factors study of the MSPT was conducted to identify unanticipated use-error patterns that could be related to the design of the graphical user interface (GUI). The study included 14 representative users: 7 adult patients with definite MS and 7 healthy control participants, defined as clinical staff who provide care for patients with MS on a regular basis. All participants were unfamiliar with the MSPT. The representative users interfaced with software version 1.1 (v1.1) of the MSPT under simulated clinical conditions (i.e., self-administration). The study also included a short follow-up survey regarding the MSPT GUI and its implications for patient care. Analysis comprised a heuristic evaluation with descriptive and categorical summations of the survey data.

Substudy 2: Usability Study

This was a multi-center, cross-sectional feasibility study designed to assess the usability of a fully integrated MSPT (v1.1) in a population of patients with MS, representative of those who would use the MSPT in a clinical setting. Sixty study participants were enrolled at three comprehensive MS clinical care sites (20 per site): the Cleveland Clinic, Johns Hopkins Hospital, and the New York University Langone Medical Center. Any patient with a diagnosis of MS was eligible to participate in the study unless severe visual or cognitive impairment precluded them from seeing or comprehending the informed consent or assessment tool instructions. The patients were assigned all modules of the MSPT. Self-administration was videotaped and a survey was administered after completion of the MSPT. This study probed the usability of the MSPT modules in clinical settings in terms of: (1) completion rate; (2) time to completion; (3) number and type of errors made in completion of the modules; and (4) satisfaction with the MSPT experience.

Data were analyzed descriptively with R (https://www.r-project.org/), using summary statistics for continuous variables and frequency distributions for categorical variables. Confidence intervals were calculated as appropriate. Open text responses (qualitative free text) from the MSPT Usability Survey and video recordings were analyzed to identify any specific changes/modifications that could improve the usability of the MSPT.
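The kind of descriptive summary described here can be sketched as follows. The authors used R; this Python version is illustrative only. The completion times are invented, and the Wilson score method is an assumption, since the article does not state which confidence interval method was used. The 59-of-60 count is the number of participants reported in the Results as having completed testing.

```python
import math
import statistics


def mean_sd(values):
    """Sample mean and sample standard deviation (n - 1 denominator)."""
    return statistics.mean(values), statistics.stdev(values)


def wilson_interval(successes, n, z=1.96):
    """Approximate 95% Wilson score interval for a proportion."""
    p = successes / n
    denom = 1.0 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return center - half, center + half


times_min = [30.0, 35.0, 25.0, 40.0, 20.0]  # made-up values, not study data
avg, sd = mean_sd(times_min)                # summary for a continuous variable
low, high = wilson_interval(59, 60)         # interval for a completion proportion
```

The Wilson interval is used in this sketch because it behaves reasonably for proportions close to 100%, where the simpler normal approximation can extend past 1.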

Substudy 3: Initial Clinical Implementation of the MSPT

The MSPT was deployed at the Cleveland Clinic Mellen Center for Multiple Sclerosis as part of the routine care visit for a subset of patients with MS. Patients in two physician practices were asked to arrive 30 min before their physician appointment and to self-administer the MSPT under minimal supervision. Analyses comparing results of substudies 2 and 3 were performed with R (https://www.r-project.org/).

All participants in substudies 1 and 2 provided written informed consent and the protocols for these studies were approved by appropriate local institutional review board committees. Data for substudy 3 were obtained from routine clinical practice under a registry protocol approved by the Cleveland Clinic Institutional Review Board. All procedures followed were in accordance with the ethical standards of the responsible committee on human experimentation (institutional and national) and with the Helsinki Declaration of 1964, as revised in 2013.

Results

Substudy 1: Human Factors Study

Overall, the MSPT was found to be safe and easy to use. All participants completed nearly every task without use errors. The difficulties that some participants experienced informed minor design changes. Examples of recommended changes included modification to the GUI and clarification or addition of instructions to better direct the end user.

In the follow-up survey, patients and HCPs were asked about their perceptions of using the MSPT. All participants found the MSPT experience to be generally positive. Patients generally reported that the MSPT was intuitive (6/7 respondents) and that it would help them better articulate symptoms and/or disease progression (5/7 respondents). The large majority of HCPs reported the information generated from the MSPT to be useful (6/7 respondents) and indicated that the MSPT would improve interaction with patients (6/7 respondents). HCPs indicated that the data from the MSPT would lead to patients being more “engaged in the conversation” (7/7 respondents) and more compliant with treatment recommendations (7/7 respondents).

Substudy 2: Usability Study

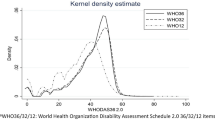

A total of 59 of the 60 enrolled participants underwent and completed the testing. One patient was not able to complete testing. Patient characteristics are presented in Table 1. Module completion rate was defined as the percentage of patients with MS who completed the modules they were assigned and initiated. Of note, study coordinators provided neither guidance nor supervision during usability testing; however, coordinators were instructed to record any specific questions from patients with MS that arose during usability testing. Table 2 indicates that completion rates were high (> 95%) for the MyHealth, Neuro-QoL, PST, MDT, and WST modules, while a lower completion rate was observed for the CST (75%) module. A total of 16 patients (27%) skipped at least one module during testing and 12 patients (20%) restarted at least one module.
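The completion-rate definition above (completed modules as a share of modules that were both assigned and initiated) can be expressed as a short Python sketch. The record layout and the sample records are hypothetical and do not reproduce study data.

```python
def completion_rates(records):
    """Percentage of assigned-and-initiated module attempts that were completed.

    records: iterable of dicts with keys "module", "assigned", "initiated", "completed".
    """
    started = {}
    done = {}
    for r in records:
        # Only attempts that were both assigned and initiated enter the denominator.
        if r["assigned"] and r["initiated"]:
            started[r["module"]] = started.get(r["module"], 0) + 1
            if r["completed"]:
                done[r["module"]] = done.get(r["module"], 0) + 1
    return {m: 100.0 * done.get(m, 0) / n for m, n in started.items()}


def rec(module, initiated, completed):
    """Helper building one hypothetical record for an assigned module."""
    return {"module": module, "assigned": True,
            "initiated": initiated, "completed": completed}


# Made-up records for illustration only.
records = [
    rec("CST", True, True), rec("CST", True, True),
    rec("CST", True, True), rec("CST", True, False),
    rec("CST", False, False),  # assigned but never initiated: excluded
    rec("PST", True, True), rec("PST", True, True),
]
rates = completion_rates(records)
```

Under this definition, a patient who skips a module before starting it does not lower that module's completion rate, which is worth keeping in mind when comparing per-module rates with the overall share of patients who finished every module.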

Table 2 provides time to completion for the six modules. The average total time taken to complete all MSPT modules was 32.7 ± 7.3 min, which included time taken to review instructions and practice each test.

Results of the MSPT satisfaction survey are shown in Fig. 6. For most questions, > 85% of the participants were satisfied/completely satisfied with their user experience. Lower satisfaction ratings were observed for the CST (27%) and MDT (72%) modules.

Fig. 6 MSPT usability study satisfaction survey. Fifty-nine participants were given a short survey at the end of usability testing, probing overall satisfaction with the MSPT tool. Responses were scored and averages are presented as percentages. CST, contrast sensitivity test; MDT, manual dexterity test; MSPT, Multiple Sclerosis Performance Test

Substudy 3: Initial Clinical Implementation of the MSPT

MSPT data from the first 1000 patient visits, pertaining to the usability of the MSPT, were analyzed. Of the 1000 visits, 792 were from unique patients using the MSPT for the first time as part of their routine clinical care. The remaining 208 visits were self-administered by return patients with prior use of the MSPT. Patient characteristics for the 792 first-time MSPT users are presented in Table 1. Patients in the initial clinical implementation were older, had a longer disease duration, and included fewer African Americans and Hispanics than patients in the usability study; no differences were observed regarding sex, education, disease course, and self-reported disability (Patient-Determined Disease Steps). Table 2 indicates that completion rates in this cohort were high (> 91%) and comparable with those of substudy 2, with the exception of completion rates for the CST (52%) and Neuro-QoL (80%) modules. The lower completion rate for the CST module has prompted design optimization of this particular module (see “Discussion”).

Completion times in substudy 3 were slightly shorter than in substudy 2 for all modules except MyHealth and Neuro-QoL. The shorter PST, CST, MDT, and WST completion times in the clinic setting than under study conditions may reflect the reduced time spent in each module on return visits, suggesting that module completion times may fall with increasing experience. The MyHealth module took longer in the clinic setting than in the study setting owing to the addition of several site-specific questions to the module. The average overall time to complete the MSPT was also slightly shorter than in substudy 2, at 27.7 ± 8.3 min.

The absolute number of patients assigned the Neuro-QoL module via the MSPT was lower than the number assigned to other modules because patients at the Mellen Center frequently complete this assessment using a unique web-based portal prior to their clinic visit.

Discussion

Following ISO 13485 standards, the MSPT was optimized, tested, and released. This neurological assessment tool was designed with the goal of enabling the routine collection of quantitative clinical assessments of patients with MS through a technology-enabled solution. The purposes were to collect standardized, longitudinal, clinically meaningful data without unnecessary burden to the patient or HCP, and to facilitate standardized clinical data aggregation for systematic learning. From September 2015 to September 2018, the MSPT was successfully implemented at the Cleveland Clinic Mellen Center for Multiple Sclerosis and at 6 other MS centers in the US, 2 in Germany, and 1 in Spain as part of a technology-enabled MS network called MS PATHS (Partners Advancing Technology for Health Solutions). Within this network, the MSPT is used as part of routine care, and de-identified data are aggregated for learning. Overall, more than 14,000 patients with MS have used the MSPT during more than 25,000 patient encounters in MS PATHS. The MSPT was granted CE marking to be used for the purpose of monitoring disease, as part of the treatment of MS, and is classified as a Class I medical device.

Patients are scheduled to come to the clinic 30 min prior to their physician appointment and complete the MSPT assessment immediately prior to their appointment. The data generated by the patient using the MSPT can be incorporated into the EMR and used at the point of care in the patient–physician consultation. By enabling the standardized collection of clinically meaningful information, the MSPT has the potential to transform care for patients with MS by making quantitative information available at the point of care, previously only rarely available to MS neurologists owing to the practical constraints of limited resources in a busy clinic environment.

The MSPT was designed for patients with MS and their physicians, with significant emphasis placed on the context of the use. The test modules were adapted from neurological assessments used in the field of MS and were further validated to demonstrate that in a digital format these assessments deliver clinically meaningful data when conducted by the patient with limited or no supervision [20] (Rao et al., submitted). Questionnaires and audio and visual instructions were developed to be easily comprehensible and the GUI was designed for a positive user experience and intuitive functioning. The hardware case surrounding the iPad Air® 2 was designed for functionality and durability and to optimize the execution of the test modules.

Through systematic development and the incorporation of human factors and usability testing, we have shown that the effectiveness of the product performance as measured in a study setting can be translated into the clinical setting. An important measure of effectiveness of an assessment tool is the extent to which the user can achieve their goals in the intended context of use [25].

Usability testing identified specific challenges with the CST—the vision assessment module of the MSPT—which were reflected in lower completion rates. Post hoc analysis revealed that 63% of participants had to re-align their heads or move the MSPT at some point during the assessment in order to stay within a viewing distance range (45–55 cm). If the patient moved outside of the range, the test was stopped and did not resume until the head moved within range. Subsequent versions of the MSPT have incorporated software modifications to improve the range-finding capability to optimize the CST module, and additional improvements are in development. These issues exemplify the value of quantitative and qualitative usability testing. Generally, no significant challenges were identified with the other MSPT components; the vast majority of patients reported high levels of acceptance of the MSPT component tests and ease of use. Initial clinical implementation generally confirmed patient acceptance found with the usability testing.
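The pause-and-resume behavior described for the CST can be illustrated with a small Python sketch. Everything here is an assumption made for illustration: the sampling format, the function names, the treatment of 45 cm and 55 cm as inclusive bounds, and the sample distances. It is not the MSPT range-finding code.

```python
VIEW_MIN_CM = 45.0  # lower bound of the viewing range reported in the text
VIEW_MAX_CM = 55.0  # upper bound; treating both bounds as inclusive is an assumption


def in_range(distance_cm):
    """True when the estimated head-to-screen distance is within the viewing range."""
    return VIEW_MIN_CM <= distance_cm <= VIEW_MAX_CM


def paused_seconds(samples):
    """Total time the test would be paused, given (time_s, distance_cm) samples.

    Each sample's state is assumed to hold until the next sample arrives.
    """
    paused = 0.0
    for (t0, d0), (t1, _) in zip(samples, samples[1:]):
        if not in_range(d0):
            paused += t1 - t0
    return paused


# Made-up distance readings, one per second.
samples = [(0.0, 50.0), (1.0, 58.0), (2.0, 60.0),
           (3.0, 52.0), (4.0, 44.0), (5.0, 47.0)]
total_paused = paused_seconds(samples)
```

A measure such as `total_paused`, logged per session, is one way a usability analysis could quantify how often patients drift out of range.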

There are some notable limitations. First, as mentioned, the CST was not easy to use, with only 52% of patients completing the test in the initial clinical implementation. Improved versions of the CST are intended to improve usability and patient experience. Second, implementing the MSPT in clinical practice requires changes to the clinic workflow, including modifying patient schedules to allow adequate time for testing, explaining the rationale for MSPT use to patients, assisting patients the first time they take the test, and gaining HCP agreement to review results with patients. Third, there are significant costs associated with the MSPT, including manufacturing costs, implementation and support costs, hosting MSPT data in the cloud, and integrating MSPT data into the provider EMR. These costs were paid by the sponsor of this project, but widespread implementation will need to take them into account. Thus, the tool is not widely available at the present time. Finally, the value of collecting high-quality, meaningful, standardized data as part of patient care will need to be demonstrated through future research. As health care systems evolve to payments for quality, and ultimately payment for specific outcomes, systematic approaches to data collection, such as the MSPT, should fill an important unmet need. Experience gained with the MSPT at 10 health care institutions participating in MS PATHS will inform future product development and commercialization decisions prior to broader availability for use in MS clinical practice and studies.

Conclusions

The MSPT is a digital assessment tool that allows the collection of standardized, quantitative, clinically meaningful data in the clinical setting for individual assessments of patients with MS, as well as real-world evidence generation. Collectively, the application of robust user interface and user experience design and testing resulted in a health care technology product that has the potential to make standardized clinical information available to HCPs during routine care, which ultimately could benefit patients with MS, MS research, and the health care system.

References

Inusah S, Sormani MP, Cofield SS, et al. Assessing changes in relapse rates in multiple sclerosis. Mult Scler. 2010;16(12):1414–21.

Karabudak R, Dahdaleh M, Aljumah M, et al. Functional clinical outcomes in multiple sclerosis: current status and future prospects. Mult Scler Relat Disord. 2015;4(3):192–201.

Meyer-Moock S, Feng YS, Maeurer M, Dippel FW, Kohlmann T. Systematic literature review and validity evaluation of the Expanded Disability Status Scale (EDSS) and the Multiple Sclerosis Functional Composite (MSFC) in patients with multiple sclerosis. BMC Neurol. 2014;14:58.

Lublin FD, Reingold SC, Cohen JA, et al. Defining the clinical course of multiple sclerosis: the 2013 revisions. Neurology. 2014;83(3):278–86.

Rudick RA, Cutter G, Reingold S. The multiple sclerosis functional composite: a new clinical outcome measure for multiple sclerosis trials. Mult Scler. 2002;8(5):359–65.

Ontaneda D, LaRocca N, Coetzee T, Rudick R, NMSS MSFC Task Force. Revisiting the multiple sclerosis functional composite: proceedings from the National Multiple Sclerosis Society (NMSS) task force on clinical disability measures. Mult Scler. 2012;18(8):1074–80.

Balcer LJ, Raynowska J, Nolan R, et al. Validity of low-contrast letter acuity as a visual performance outcome measure for multiple sclerosis. Mult Scler. 2017;23(5):734–47.

Rudick RA, Miller D, Bethoux F, et al. The Multiple Sclerosis Performance Test (MSPT): an iPad-based disability assessment tool. J Vis Exp. 2014;88:e51318.

Motl RW, Cohen JA, Benedict R, et al. Validity of the timed 25-foot walk as an ambulatory performance outcome measure for multiple sclerosis. Mult Scler. 2017;23(5):704–10.

Benedict RH, DeLuca J, Phillips G, et al. Validity of the Symbol Digit Modalities Test as a cognition performance outcome measure for multiple sclerosis. Mult Scler. 2017;23(5):721–33.

Feys P, Lamers I, Francis G, et al. The Nine-Hole Peg Test as a manual dexterity performance measure for multiple sclerosis. Mult Scler. 2017;23(5):711–20.

National Multiple Sclerosis Society. Multiple sclerosis functional composite (MSFC) scoring and training manual, revised. 2001. https://www.nationalmssociety.org/NationalMSSociety/media/MSNationalFiles/Brochures/10-2-3-31-MSFC_Manual_and_Forms.pdf. Accessed 18 Dec 2018.

Rosato R, Testa S, Oggero A, Molinengo G, Bertolotto A. Quality of life and patient preferences: identification of subgroups of multiple sclerosis patients. Qual Life Res. 2015;24(9):2173–82.

Miller DM, Allen R. Quality of life in multiple sclerosis: determinants, measurement, and use in clinical practice. Curr Neurol Neurosci Rep. 2010;10(5):397–406.

Smith A. Symbol digit modalities test. Los Angeles: Western Psychological Services; 1973.

Rao SM, NMSS Cognitive Function Study Group. A manual for the brief repeatable battery of neuropsychological tests in multiple sclerosis. New York: National Multiple Sclerosis Society; 1990.

Baier ML, Cutter GR, Rudick RA, et al. Low-contrast letter acuity testing captures visual dysfunction in patients with multiple sclerosis. Neurology. 2005;64(6):992–5.

Kraft GH, Amtmann D, Bennett SE, et al. Assessment of upper extremity function in multiple sclerosis: review and opinion. Postgrad Med. 2014;126(5):102–8.

Kaufman M, Moyer D, Norton J. The significant change for the Timed 25-foot Walk in the multiple sclerosis functional composite. Mult Scler. 2000;6(4):286–90.

Rao SM, Losinski G, Mourany L, et al. Processing speed test: validation of a self-administered, iPad®-based tool for screening cognitive dysfunction in a clinic setting. Mult Scler. 2017;23(14):1929–37.

Cella D, Lai JS, Nowinski CJ, et al. Neuro-QOL: brief measures of health-related quality of life for clinical research in neurology. Neurology. 2012;78(23):1860–7.

Miller DM, Bethoux F, Victorson D, et al. Validating neuro-QoL short forms and targeted scales with people who have multiple sclerosis. Mult Scler. 2016;22(6):830–41.

Cella D. Development and initial validation of patient-reported item banks for use in neurological research and practice. 2015. http://www.healthmeasures.net/images/neuro_qol/Neuro-QoL_Manual_Technical_Report_v2_24Mar2015.pdf. Accessed 18 Dec 2018.

International Organization for Standardization. ISO13485:2016: medical devices—quality management systems—requirements for regulatory purposes. 2016. https://www.iso.org/standard/59752.html. Accessed 18 Dec 2018.

Svanaes D, Das A, Alsos OA. The contextual nature of usability and its relevance to medical informatics. In: Andersen SK, Klein MCA, Schulz S, Aarts J, Mazzoleni MC, editors. eHealth beyond the horizon—get IT there. Clifton: IOS; 2008.

Acknowledgements

The authors would like to thank the individuals who participated in this study.

Funding

This work, the article processing charges and the open access fee were funded by Biogen, Cambridge, MA, USA.

Medical Writing, Editorial, and Other Assistance

Editorial assistance in the preparation of this manuscript was provided by Excel Scientific Communications, Southport, CT, USA. Support for this assistance was funded by Biogen.

Authorship

All named authors meet the International Committee of Medical Journal Editors (ICMJE) criteria for authorship for this manuscript, take responsibility for the integrity of the work as a whole, and have given their approval for this version to be published.

Authorship Contributions

Jane K. Rhodes, David Schindler, Stephen M. Rao, Fernando Venegas, Wendy Gabel, Jay Alberts, and Richard A. Rudick were responsible for product design and development. Jane K. Rhodes, Stephen M. Rao, Efrosini T. Bruzik, James R. Williams, Glenn A. Phillips, Colleen C. Mullen, Jaime L. Freiburger, Christine Reece, Robert A. Bermel, Lauren B. Krupp, Ellen M. Mowry, and Richard A. Rudick were responsible for the design and execution of the study. Jane K. Rhodes, David Schindler, Stephen M. Rao, James R. Williams, Glenn A. Phillips, Colleen C. Mullen, Lyla Mourany, Deborah M. Miller, Francois Bethoux, and Richard A. Rudick analyzed and interpreted the data. Jane K. Rhodes, Stephen M. Rao, and Richard A. Rudick provided intellectual guidance and mentorship. All authors had full access to all of the data in this study and take complete responsibility for the integrity of the data and accuracy of the data analysis. All authors read and approved this manuscript.

Disclosures

Biogen has obtained an exclusive license for use of the MSPT in MS and related disorders. Jane K. Rhodes is currently an employee of FORMA Therapeutics and was an employee and stock holder of Biogen during the development of the manuscript. David Schindler is an employee and stock holder of Qr8 Health and has received royalties from the Cleveland Clinic for licensing MSPT-related technology. Stephen M. Rao has received honoraria, royalties, or consulting fees from Biogen, Genzyme, Novartis, the American Psychological Association, and the International Neuropsychological Society; and research funding from the National Institutes of Health, the US Department of Defense, the National Multiple Sclerosis Society, the CHDI Foundation, Biogen, and Novartis as well as royalties from the Cleveland Clinic for licensing MSPT-related technology. Fernando Venegas is an employee and stock holder of Biogen. Efrosini T. Bruzik is an employee and stock holder of Biogen. Wendy Gabel is an employee and stock holder of Qr8 Health. James R. Williams is an employee and stock holder of Biogen. Glenn A. Phillips is currently an employee of Akcea Therapeutics and was an employee and stock holder of Biogen during the development of the manuscript. Colleen C. Mullen has received consulting fees from Biogen. Deborah M. Miller has served as a consultant for Hoffman-Roche, Ltd and has received royalties from the Cleveland Clinic for licensing MSPT-related technology. Francois Bethoux has received honoraria for speaking from Biogen; consulting fees from Flex Pharma, GW Pharma, Abide, and Ipsen; royalties from Biogen and Springer Publishing; research funding from Acorda Therapeutics, Adamas Pharmaceuticals, Atlas5D, Biogen, and the Consortium of Multiple Sclerosis Centers; and has received royalties from the Cleveland Clinic for licensing MSPT-related technology. Robert A. Bermel has served as a consultant for Biogen, Genentech, Genzyme, and Novartis; receives research support from Biogen, Genentech, and Novartis; and shares rights to intellectual property underlying the MSPT, currently licensed to Qr8 Health and Biogen. Lauren B. Krupp has received consulting fees from Biogen, EMD Serono, Novartis, Pfizer (data safety monitoring board), PK Law, Projects in Knowledge, and Teva Neuroscience; royalties from AbbVie Inc. and Grifols Worldwide; and research grant support from Biogen, the Lourie Foundation, the National Institutes of Health, the National Multiple Sclerosis Society, Novartis, Teva Neuroscience, and the US Department of Defense. Ellen M. Mowry has received research funding from Biogen and Genzyme; free medication for a clinical trial from Teva Neuroscience; royalties for editorial duties with UpToDate; and was principal investigator for trials funded by Biogen and Sun Pharma. Jay Alberts has received honoraria, royalties, or consulting fees from Biogen and the Davis Phinney Foundation; research funding from the National Institutes of Health, the US Department of Defense, and the National Science Foundation; and royalties from the Cleveland Clinic for licensing MSPT-related technology. Richard A. Rudick is an employee and stock holder of Biogen. Jaime L. Freiburger, Lyla Mourany, and Christine Reece have nothing to declare.

Compliance with Ethics Guidelines

All procedures followed were in accordance with the ethical standards of the responsible committee on human experimentation (institutional and national) and with the Helsinki Declaration of 1964, as revised in 2013. All participants in substudies 1 and 2 provided written informed consent and the protocols for these studies were approved by appropriate local institutional review board committees. Data for substudy 3 were obtained from routine clinical practice under a registry protocol approved by the Cleveland Clinic Institutional Review Board.

Data Availability

Data for substudies 1 and 2 are available upon request and detailed at this website: http://clinicalresearch.biogen.com. Data for substudy 3 are not available for sharing external to Cleveland Clinic Foundation.

Additional information

Enhanced Digital Features

To view enhanced digital features for this article go to https://doi.org/10.6084/m9.figshare.7807904.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution-NonCommercial 4.0 International License (http://creativecommons.org/licenses/by-nc/4.0/), which permits any noncommercial use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Rhodes, J.K., Schindler, D., Rao, S.M. et al. Multiple Sclerosis Performance Test: Technical Development and Usability. Adv Ther 36, 1741–1755 (2019). https://doi.org/10.1007/s12325-019-00958-x

DOI: https://doi.org/10.1007/s12325-019-00958-x