Abstract

There is a demand for evaluation of spatial data infrastructures (SDIs), to justify and monitor the relations between investments in SDI initiatives and the results obtained. It is also essential to pay particular attention to identifying user communities and eliciting their assessment of the effects deriving from an SDI. The paper introduces the concept of a multi-criteria method for assessing the effectiveness of an SDI from the user perspective. Its potential is demonstrated by applying it to the Polish Spatial Data Infrastructure (PSDI) and its main access point to spatial data and services, called ‘Geoportal 2’, with two groups of the national geoportal’s users (spatial planners and land surveyors). The total scores for Geoportal 2 indicated that the investment has potential and is quite effective, although some components of the PSDI (e.g. main access point, datasets, network services, software, hardware, procedures) may need improvements and additional analyses in the future. The contribution of this paper is the multi-criteria method, which enables the analysis of outcomes, benefits (impacts) and business value of using the SDI business project’s artifacts (outputs) considering the following dimensions: information and support provided, use process, user organizational performance, strategic alignment and business impact on user enterprise.

Introduction

The increasing popularity of spatial data infrastructures (SDIs) has resulted in building new infrastructures on different levels of government (i.e. local, national, supranational), but also in developing the existing ones through such means as including new data sources. A growing body of literature describes many different aspects of SDI initiatives, including the concepts and models of SDIs (e.g. Hjelmager et al. 2008; de By et al. 2009), perspectives (e.g. Chan et al. 2001), development (e.g. Rajabifard et al. 2002), managing metadata, spatial data sets and services (e.g. Innerebner et al. 2016), as well as standards and implementing rules (e.g. Wortman 1994).

One of the important issues within the development of SDIs is economic and financial analysis. There is a demand for SDI evaluation in order to justify and monitor in a systematic way the relationships between the investments in SDI initiatives and the results obtained (e.g. Grus et al. 2008). Many different assessment methods are considered in the area of SDI projects. To structure and organize SDI assessment, Grus et al. (2008) propose a framework that integrates various approaches and techniques. Financial methods are also used for SDI investments (e.g. Craglia and Nowak 2006; Bregt 2012). Moreover, there are examples of the application of different qualitative methods, which come from different scientific fields, including operational research, management and economy (e.g. Geudens et al. 2009; Toomanian et al. 2011).

Questions about the efficiency and effectiveness of various SDI activities are also valid in monitoring not only the development, but also transitions and changes of SDI. Lance et al. (2006) note that efficiency, rather than effectiveness, is more commonly stated as an objective of SDI initiatives. However, in the literature, deliberations on effectiveness concerning both concept and scope in the field of SDI as well as indicators and assessment methods can be found. In the area of effectiveness assessment there are references to management and organization studies (Kok and van Loenen 2005; Giff and Crompvoets 2008; Grus et al. 2011), economic studies (Craglia and Campagna 2010), as well as to information system theory (Steudler et al. 2008; Nedović-Budić et al. 2008).

Indicators for measuring effectiveness are a separate issue. Steudler et al. (2008) derive evaluation and performance indicators for SDIs from land administration principles. Evaluation involves assessing the strengths and weaknesses of programs, policies, personnel, products and organizations to improve their effectiveness. The work of Giff and Crompvoets (2008) describes a framework for designing the performance indicators for assessing the efficiency and effectiveness of SDI, which are based on organizational development theory. Craglia and Campagna (2010) present example indicators for SDI effectiveness, but also for the internal efficiency benefits of SDI and the effectiveness benefits derived by the public and local businesses in dealing with their local public administration.

Nedović-Budić et al. (2008) present the evaluation framework focusing on the effective use of SDI, by identifying the current and potential users and finding out how useful the SDI-supplied data and services are or would be for their particular needs. It derives measures mainly from information systems that are based on concepts such as: usefulness, effective use, information and organizational effectiveness. The latter is also considered by Kok and van Loenen (2005).

There is also a conceptual logic model of an SDI component, which illustrates the relationships between an SDI’s inputs, outputs, outcomes, and impact within the context of efficiency and effectiveness as discussed in Giff and Crompvoets (2008).

The aim of the paper is to present a multi-criteria method for assessment of SDI effectiveness from users’ perspectives, as the review of existing literature on this subject (e.g. Nedović-Budić et al. 2008; Vandenbrucke et al. 2013; Craglia and Nowak 2006) confirms the need for identifying user communities and assessing the value deriving from SDI use. Undoubtedly, the successful application and use of SDI products and services rely on the fulfillment of the users’ needs and expectations (Nedović-Budić et al. 2008). In the course of this paper, a case study of two user groups of the Polish Spatial Data Infrastructure (PSDI), spatial planners and surveyors, is taken into consideration. The assessment enables analysis of effects related to the PSDI business project carried out by the Polish Head Office of Geodesy and Cartography between 2008 and 2015 and concerns the project’s artifacts such as a geoportal (main access point to the PSDI) and its applications, datasets, as well as support provided.

The contribution of this paper is the qualitative method for ex-post assessment of SDI effectiveness based on the analysis of outcomes, benefits (impacts) and business value of using SDI business project’s artifacts (outputs), which includes accessibility, usefulness and usability of the project’s artifacts (e.g. main access point and its applications, spatial datasets, network services) as well as impact of the SDI on user organizational performance and business strategy.

The article is structured as follows. The Methodology section gives a brief outline of effectiveness assessment in this study, as well as presenting the concept of a method, which allows for the analysis of the dimensions of SDI effectiveness. The application of the method to spatial data and services of the PSDI, in Geoportal 2, the main Polish access point, is described in the Case study section. The results are then discussed and the solutions evaluated. A final summary follows in the conclusion.

Methodology

SDI business project

SDI could be considered as (PMBOK 2000) a temporary endeavor which is undertaken to create unique products and services. The SDI project is an IT-oriented project the purpose of which is design, implementation and installation of artifacts such as: computers, storage, networking and other physical devices, as well as software, databases and processes, and thereby constitutes the components of the SDI. Also essential for IT projects are (Murphy 2002) the business context and objectives of an organization and investors who are planning a project, have a project in progress or have just completed one. The organizations and investors implementing an SDI are mainly government authorities. However, the business context and objectives are as important to authorities as to private entities. Therefore, an SDI business project should be considered as the sum of information technology components to be designed, implemented, and installed, a whole IT lifecycle and the business objectives.

The business project viewpoint concerns SDI assessment within the SDI business project approach (Zwirowicz-Rutkowska 2014), which uses the assumptions of the Multi-View SDI Assessment Framework (Fig. 1). The essence of this framework is that it accepts multiple perspectives (views) on SDI and thus accepts its complexity in terms of multiple definitions (Grus et al. 2007, 2008). Moreover, each approach covers at least one of the three purposes of the assessment: accountability, knowledge and development. All approaches use one or more assessment methods, such as case studies, surveys, document analysis, etc., to evaluate SDIs. The application component of the assessment framework also focuses on measuring the indicators of each assessment approach. The result part of the framework has two functions: 1) evaluating the SDI and 2) evaluating each approach and the whole assessment framework.

Scope of effectiveness assessment

The proposed assessment method is intended for the SDI business project approach, which includes measuring SDI effectiveness from the perspective of the users and their organizational performance, as well as of the organization undertaking the SDI investment.

Many authors indicate the multi-dimensionality and difficulty of measuring the concept of effectiveness (e.g. Heffron 1989; Bannister 2001; Productivity Commission 2013). The term is not always defined or interpreted consistently within and across disciplines. This also applies to the area of SDI. The effectiveness issue is discussed in the field of SDI, as presented in the Introduction section, but the key aspects which influence the interpretation of effectiveness are the features which should be evaluated, the selection of methods and indicators, as well as some terminological assumptions concerning the concept of spatial data infrastructure and the specificity of the infrastructure.

This study investigates effectiveness from the perspective of the users. In this case effectiveness is manifested by outcomes, benefits (impacts) and business value of using the SDI project’s artifacts (outputs, e.g. main access point to the infrastructure and other applications, datasets, network services, metadata, hardware), which are described on four levels: 1) the SDI provides some data sources, applications and metadata which can be utilized by users and also supports the users in utilizing the functionality of the SDI, 2) the use of SDI components influences the decision-making processes of the users, 3) the use of SDI components influences user organizational performance, and 4) the SDI might help to achieve users’ strategic goals and have an impact on their ability to transform business processes. Effectiveness refers to the extent to which the performance of users’ duties and their needs are supported and covered by the SDI components in terms of usefulness, accessibility and usability of the SDI project’s artifacts. It also concerns the achievement of business objectives of organizational units utilizing the SDI. The proposed scope of effectiveness derives from concepts of information effectiveness theory, including effective use, organizational effectiveness, user satisfaction, value of geographic information and operational effectiveness (Nedović-Budić et al. 2008), as well as system effectiveness (Hamilton and Chervany 1981) and business value from information technology (Murphy 2002).

Multi-criteria method characteristics

In the area of evaluation of IT initiatives, many different methods are presented, which could be categorized (Lech 2005; Renkema and Berghout 1997) as follows: 1) financial methods, 2) qualitative methods (multi-criteria methods, strategic analysis methods), 3) probabilistic methods. For the presented business project approach, a multi-criteria method is proposed. Generally, multi-criteria methods used for IT projects, like Information Economics (Parker and Benson 1988) or the method presented by T. Murphy (2002), try to evaluate all aspects of a single IT project (Lech 2005). They are thus IT-oriented, have a wide evaluation scope (beyond the financial aspect) and a single-project level of observation. They integrate some qualitative contributions with a wide range of quantitative non-financial aspects. The characteristics of multi-criteria methods are as follows: 1) pillars, categories or domains of assessment with weighting schemas are identified, 2) indicators are grouped by each pillar, category or domain, 3) ranking, weighting or scoring schemas for indicators are assumed, 4) the results of the evaluation process are scores for each indicator, then for each pillar, and also the total score for the project, which is then interpreted.

Assessment categories

A proposed multi-criteria method dedicated to an SDI is for ex-post and monitoring assessment of effectiveness through four categories (Table 1), which adhere to the four levels presented in the Scope of effectiveness assessment subsection. Each category (C) is weighted (Wc) in accordance with its relative importance to the organization which undertakes the assessment. The sum of the category weights (Wc) is 100%.

Indicators for assessment categories

Each category is split into n indicators (I), against which each project should be assessed (Tables 2, 3, 4, and 5). For each criterion, a 0–10 scale is proposed. For all categories, a higher score means that the project fulfills more of each criterion. As the SDI and information systems have much in common (e.g. hardware, applications), there are measures (column I in Tables 3 and 4), which derive from the field of operational research, benefits of IT projects and management information system (Hamilton and Chervany 1981; Farbey et al. 1992). There are also indicators (column I in Table 5), which adhere to aspects of business value from information technology (Murphy 2002). Some indicators (column I in Tables 2 and 3) are related to the general concept of the SDI outputs and to several aspects of effectiveness described by Nedović-Budić et al. (2004, 2008) and Zwirowicz-Rutkowska (2016).

After scoring (SI) each indicator, the average score (ASc) and weighted score (WSc) for each category are calculated.

If there are m scores for each indicator (the group interview), the average score (ASI) for each indicator is calculated: \( ASI=\frac{1}{m}\sum_{i=1}^m{SI}_i \).

If there are k groups of users, each group is assigned a weight (wu) equal to its number of respondents, and the weighted average score (WASI) for each indicator is then calculated: \( WASI=\frac{\sum_{i=1}^k{wu}_i\times {ASI}_i}{\sum_{i=1}^k{wu}_i} \).
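As a minimal sketch, the two averages can be computed as below. It reads WASI as the usual weighted mean (the sum of wu × ASI divided by the sum of wu). The function names and the sample scores are hypothetical and are not taken from the survey.

```python
from typing import Sequence


def average_score(scores: Sequence[float]) -> float:
    """ASI: plain mean of the m scores given to one indicator within one group."""
    if not scores:
        raise ValueError("at least one score is required")
    return sum(scores) / len(scores)


def weighted_average_score(group_averages: Sequence[float],
                           group_weights: Sequence[float]) -> float:
    """WASI: group averages (ASI) weighted by the number of respondents (wu)."""
    if len(group_averages) != len(group_weights):
        raise ValueError("one weight per group is required")
    total_weight = sum(group_weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    weighted_sum = sum(w * a for w, a in zip(group_weights, group_averages))
    return weighted_sum / total_weight


# Hypothetical scores on the 0-10 scale (illustration only, not survey data).
planners = [6, 8, 7]     # group 1
surveyors = [5, 4, 6]    # group 2
asi = [average_score(planners), average_score(surveyors)]
wasi = weighted_average_score(asi, [len(planners), len(surveyors)])
print(asi, wasi)
```

Because each group's weight equals its number of respondents, WASI coincides with the mean over all respondents pooled together.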

To evaluate the accuracy of the averages, standard deviations (σ) are calculated as presented below.

A general equation for the standard deviation of the average score (σAS):

where ν is a deviation from the average score (AS), l is a number of observations.

A deviation from the average score for each category:

Evaluation of the average score calculations for each category:

where l is a number of indicators.

A general equation for the standard deviation of the weighted average score for each indicator (σWASI):

where wu is a weight for each group, ν is a deviation from the weighted average score (WASI), k is a number of the group observations.

A deviation from the weighted average score for each indicator:

Evaluation of the weighted average score calculations for each indicator:
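The equations announced in this subsection are not reproduced in the text. Purely as an illustrative sketch, and assuming the conventional standard-error forms for an arithmetic mean and for a weighted mean (an assumption, not a recovery of the author's exact expressions), the quantities described could be written as follows. The symbol \( x_i \) is a hypothetical name for a single observation, and the sign convention of ν is likewise assumed.

```latex
% Unweighted case (e.g. a category average over l indicators); assumed forms.
\[ \nu_i = AS - x_i, \qquad
   \sigma_{AS} = \sqrt{\frac{\sum_{i=1}^{l} \nu_i^{2}}{l\,(l-1)}}, \qquad
   \sum_{i=1}^{l} \nu_i = 0 \]

% Weighted case (one indicator over k user groups); assumed forms.
\[ \nu_i = WASI - {ASI}_i, \qquad
   \sigma_{WASI} = \sqrt{\frac{\sum_{i=1}^{k} {wu}_i\,\nu_i^{2}}{(k-1)\sum_{i=1}^{k} {wu}_i}}, \qquad
   \sum_{i=1}^{k} {wu}_i\,\nu_i = 0 \]
```

In both cases the zero-sum condition on the deviations serves only as an arithmetic check that the average itself was computed correctly.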

Total business project score and its interpretation

The total SDI business project weighted score (WSBP) is as follows: \( {WS}_{BP}=\sum_{i=1}^4{WSc}_i \).

There are no absolute rules for interpreting the overall score, but for the method dedicated to SDI presented in this paper, based on the assumptions for IT projects (Murphy 2002), the propositions of interpretation of the total SDI business project weighted score are as follows. SDI projects scoring about 25% are rather poor and their artifacts are ineffective. Projects with scores between 26 and 50% have some potential but their artifacts require significant modification in the following projects. Projects with scores between 51 and 75% are good and quite effective, although their artifacts may need some improvements and additional analyses in the future. A project above 76% is a solid investment and its artifacts are of a high level of effectiveness.
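The interpretation bands above can be sketched as a small lookup. The text leaves gaps between the bands (for example between 50 and 51%), so this sketch assumes the score is first rounded to a whole percent and that the boundaries 25, 50 and 75 are inclusive upper limits. Both choices are assumptions, and the function name is hypothetical.

```python
def interpret_total_score(ws_bp: float) -> str:
    """Map a total weighted score (in percent) to the interpretation bands."""
    if not 0 <= ws_bp <= 100:
        raise ValueError("score must be a percentage between 0 and 100")
    score = round(ws_bp)  # assumption: bands are applied to whole percents
    if score <= 25:
        return "rather poor; artifacts are ineffective"
    if score <= 50:
        return "some potential; artifacts require significant modification"
    if score <= 75:
        return "good and quite effective; some improvements may be needed"
    return "solid investment; artifacts are highly effective"


for total in (45.75, 50.75):
    print(total, "->", interpret_total_score(total))
```

Scores close to a boundary are best read together with their stated uncertainty rather than from the band label alone.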

Case study

The Polish Spatial Data Infrastructure and the Geoportal 2 business project

The Polish SDI is implemented by twelve different central government bodies. The PSDI is part of the Infrastructure for Spatial Information in the European Community (INSPIRE) and is compatible with the implementing rules, requirements and obligations established at Community level. According to the INSPIRE Directive (European Parliament and the Council 2007), the PSDI covers thirty-four INSPIRE spatial data themes. The PSDI is also developed at regional and local levels through different initiatives, and by many institutions and local authorities. The national geoportal (national access point) integrates certain thematic, regional and local geoportals and is the main access point to the PSDI resources.

The national access point, called ‘Geoportal 2’, offers the following applications: a geoportal for INSPIRE (INSPIRE broker), a national geoportal (national broker), a metadata editor and validator, mobile applications, and a statistics module. It also provides access to a wide range of datasets, network services and metadata.

Applications and other mentioned SDI components are artifacts of the Geoportal 2 project, which was carried out by the Polish Head Office of Geodesy and Cartography between 2008 and 2012, finally closed in 2015. The IT life cycle of this business project included the following phases (Polish Head Office of Geodesy and Cartography 2009): 1) documentation preparation for funding, 2) project management, 3) development and maintenance of the PSDI, 4) development and maintenance of the Land Surveying and Cartographic Infrastructure, 5) implementation and maintenance of INSPIRE network services and INSPIRE broker, 6) implementation and maintenance of trade network services and national broker, 7) training, 8) promotion. The business context of this SDI project included (Polish Head Office of Geodesy and Cartography 2010) analysis of the Land Surveying and Cartographic Service’s tasks and data resources and other stakeholders’ tasks and resources, which build the Land Surveying and Cartographic Databases and Infrastructure, as a reference part of the National Spatial Data Infrastructure, as well as interactions between the Land Surveying and Cartographic Service and other government bodies engaged in the process of the PSDI implementation in three dimensions: European, national and trade. 
Business objectives included 1) enabling access to and use of geographical information in Poland through the development of the national spatial data infrastructure in the part concerning the reference databases as well as the network services associated with these datasets, 2) use of the electronic geodetic and cartographic archive and other documents in the process of creating and maintaining registers, maps and databases for the purpose of developing the NSDI, 3) better access to geodetic and cartographic documents and their protection from damage in case of random incidents as well as from deterioration resulting from ongoing use, 4) drawing up and implementing a security policy which defines the rules for the common use of spatial data and metadata through the main access point, as well as procedures limiting the risk of an intentional or accidental breach of spatial data and metadata confidentiality, integrity and accessibility, 5) implementation of best practice in the system’s maintenance.

Application of the method

In this study, two groups of users of the PSDI and its main access point (Geoportal 2) are considered: spatial planners and land surveyors.

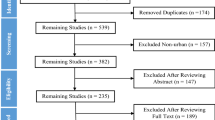

The application of the method is based on surveys conducted between July 2014 and May 2015. The survey encompassed ten topics and different types of closed questions concerning the performance of users’ duties and their needs in relation to the assessment of usefulness, accessibility and usability of the main access point. The questions referred to the categories described in the Assessment categories section (Table 1) and included the indicators presented in the Scores for categories section (Tables 2, 3, 4, and 5). A total of 63 questionnaires were mailed to companies specializing in urban and local level planning. An additional 100 questionnaires were sent or presented to land surveying companies. The completion rates were 44.4% and 21%, respectively.

Results

Scores for categories

This section presents calculations of the average scores (ASI) and the weighted average score (WASI) for each indicator in the category, for 2 groups of users (k = 2), as well as the average scores for the assessment categories (ASc) and the standard deviations (σ) of the averages (Tables 2, 3, 4, and 5). The calculations are based on the survey results: scores for indicators given by 28 spatial planners and 21 land surveyors. The weights for the groups are wu1 = 28 and wu2 = 21, respectively. Tables 6 and 7 show the evaluation of the averages calculations.

Table 2 shows the results of the assessment of data availability as well as the usefulness of support provided in Geoportal 2. The average score for this category is 4.4 points. Three indicators received the highest weighted average scores (over 6.5 points): data – thematic accuracy (1_1), data – completeness (1_2), data – lineage (1_7). The rest of the scores are equal to or below 5.0 points.

As the survey results showed, the PSDI has influence on decision makers and the decision-making process (average score for the category is 6.0 points, Table 3). From among 6 indicators considered for the Decision makers subcategory, 3 of them received the weighted average scores over 6.5 points. In the area of Decision making process 3 indicators out of 14 received weighted average scores over 6.5 points. The lowest scored indicators (weighted average scores below 5.0 points) are better/easier cooperation with different stakeholders (2_19), better/easier cooperation within an organization (2_20) and consideration of constraints and alternatives (2_12). The weighted average scores for the Applications subcategory are between 6.0 and 6.5 points.

The average score for the category of User organizational performance is 4.2 points (Table 4). Three indicators out of 11 received scores over 5.5 points: duration of procedure (3_1), change of attitude towards some procedures/tasks (3_2), improved procedures (3_3). The rest of the potential effects are of very little significance to land surveyors and spatial planners (weighted average scores are between 2.2 and 4.4 points).

The average score for the Strategic alignment and business impact on user enterprise category is 3.7 points (Table 5). From among 19 effects, 4 received 5.5 points and more: improved knowledge transfer (4_6), optimization of workflow (4_12), possibility of ICT inclusion in tasks (4_18) and ICT impact on efficiency increase of the employees and the whole company (4_19). The other potential effects are not necessarily perceived by the respondents (weighted average scores between 1.8 and 4.2 points).

Total score for the Geoportal 2 project

This section presents calculations of the weighted scores for each category (WSc) and the total score for the Geoportal 2 project (WSBP). The assessment is conducted in two variants (Tables 8 and 9). It is based on the weights (W) for categories (Table 1), as well as the average scores for categories (ASc) (Tables 2, 3, 4, and 5).

In the first case (Table 8), all categories are of the same importance (25%). The category of Use process received the highest weighted score (15%). The categories of Information and support provided as well as User organizational performance received, in round figures, the same scores (11%). The category of Strategic alignment and business impact on user enterprise received the lowest score (9.25%). The total weighted score for the Geoportal 2 project is 45.75 ± 3.25%. According to the rules for interpretation of overall scores, Geoportal 2 has some potential but some components (datasets, network services, hardware, software) require modification in subsequent projects.

In the second case (Table 9), the European Project INSPIRE and its objectives (European Parliament and the Council 2007; Commission of the European Communities 2009) are taken into account, including the need for collecting information about the use of spatial data services of the infrastructure for spatial information, as well as evidence showing the use of the infrastructure for spatial information by the general public. Therefore, the first two categories are assumed to be the most important (weight of 45% per category). In this case, the category of Use process also received the highest weighted score (27%). The score for the Information and support category is in round figures 20%. The categories of User organizational performance as well as Strategic alignment and business impact on user enterprise received very low scores (2.1% and 1.85% respectively). The total weighted score for the Geoportal 2 project is 50.75 ± 3.05%. According to the rules for interpretation of overall scores, Geoportal 2 is good and quite effective, although its components may need some improvements and additional analyses in the future. However, if the accuracy of the average scores for categories is taken into consideration, the results should be treated with caution, and some components of the main access point may require a deeper analysis and some modifications.
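The two variants can be checked with a short worked example. It assumes that a category's weighted score is WSc = Wc × ASc / 10 (the weight in percent times the average on the 0–10 scale), which is consistent with the figures reported above. The 5% weights for the last two categories in the second variant are inferred from the reported 2.1% and 1.85% and are not stated explicitly in the text. Names are hypothetical.

```python
# Average category scores (ASc, 0-10 scale) as reported in the Results section.
AS_C = {
    "Information and support provided": 4.4,
    "Use process": 6.0,
    "User organizational performance": 4.2,
    "Strategic alignment and business impact": 3.7,
}


def weighted_scores(weights_pct: dict) -> dict:
    """WSc per category, assuming WSc = Wc * ASc / 10 (result in percent)."""
    if abs(sum(weights_pct.values()) - 100.0) > 1e-9:
        raise ValueError("category weights must sum to 100%")
    return {c: weights_pct[c] * AS_C[c] / 10 for c in AS_C}


categories = list(AS_C)
variant_1 = dict(zip(categories, [25, 25, 25, 25]))  # equal importance
variant_2 = dict(zip(categories, [45, 45, 5, 5]))    # 5% values are inferred

for name, weights in (("variant 1", variant_1), ("variant 2", variant_2)):
    ws_c = weighted_scores(weights)
    total = sum(ws_c.values())  # WSBP: sum of the four weighted scores
    print(name, {c: round(v, 2) for c, v in ws_c.items()}, round(total, 2))
```

Under these assumptions the totals come out at 45.75% and 50.75%, matching the reported values. The ± terms depend on the standard deviations in the tables and are not reproduced here.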

Discussion

This ex-post analysis allows for the assessment of the effectiveness of the PSDI adhering to the Geoportal 2 project’s artifacts, i.e. main access point to the PSDI and its applications, datasets and support from the user perspective. The paper presents some preliminary findings about the availability and usefulness of the main Polish access point in professional practice of spatial planners and land surveyors. This is the first use of the multi-criteria method proposed in this study.

Average standard deviation calculations allowed for the evaluation of accuracy of the weighted average scores for each indicator, as well as the average scores for each category (Tables 2-5). The results of calculations of the sums of deviations from averages confirmed the correctness of the averages calculations (Tables 6-7).

The total weighted scores for the Geoportal 2 project (in round figures 46% for the first variant and 51% for the second) indicated that Geoportal 2 has some potential, but some components (datasets, network services, hardware, software, procedures) will require fine-tuning in the future to increase the PSDI effectiveness as indicated by the rules for interpretation of overall scores presented in this paper and the results for the category of Information and support provided as well as the subcategory Applications of the Use process category. Moreover, the variants illustrated importance of the weighting.

The study confirmed that the PSDI is used both by urban planners and surveyors, although a comparison of the average scores for indicators of the Use process category (Table 3) suggested that spatial planners value the Geoportal 2 project’s artifacts more and derive more benefits from using them. The PSDI does not have a great effect on the organizational performance of the users’ enterprises or on user enterprise strategy and business goals.

Datasets in the geoportal might be inappropriate for carrying out tasks, considering the scores (Table 2) in the temporal validity, positional accuracy and distribution formats of data categories. Moreover, the surveyor group was of the opinion that the geoportal, which integrates the datasets of selected thematic groups mainly from the central geodetic and cartographic service, is not always able to rival the district or municipality geoportals and websites. Among the different types of user support, email addresses (for spatial planners) and video tutorials (for surveyors) were the most useful ones. The weighted average scores for the applications are between 6.0 and 6.5 points (Table 3), but some technical issues were raised by the interviewees. First of all, Geoportal 2 is perceived as difficult to use because of the layout of certain tools and a lack of intuitiveness. The availability and performance of the applications is also intermittent. The list of factors influencing the potential lack of accessibility includes aspects that are both user-specific (internet access, internet speed, hardware, software, web browsers) and Geoportal 2 administrator-specific (server performance and scale to keep up with growing computing demands).

The multi-criteria method presented in this paper allows for the assessment of SDI effectiveness from the user perspective by integrating quantitative non-financial aspects with some qualitative contribution of the SDI. It adds to the variety of methods that have been used for the user perspective so far (e.g. Grus et al. 2008; Nedović-Budić et al. 2004; European Parliament and the Council 2007; Commission of the European Communities 2009): verbal description, case studies, surveys, as well as statistical tests. Financial methods, based on financial analysis tools, are the most desirable from the investors’ perspective, but include only selected costs and benefits which are defined in monetary units. They are considered for SDI projects (e.g. Craglia and Nowak 2006; Bregt 2012; Borzacchiello and Craglia 2013). However, Bregt (2012) underlines that on the one hand, cost-benefit analysis is easy to understand as it translates all aspects into monetary terms, but on the other hand, it is not the right tool for as complex a project as INSPIRE, especially during the phase of the infrastructure implementation. In this paper, it is indicated that qualitative methods, including multi-criteria and strategic analysis, are an interesting option which can be used for SDI projects of different sizes and which allow for the integration of financial aspects with non-financial ones.

For the purpose of identifying the indicators for the categories proposed in this study, some concepts from the field of SDI, operational research, management information systems and business economics (Hamilton and Chervany 1981; Farbey et al. 1992; Murphy 2002; Nedović-Budić et al. 2008) were incorporated into the method. Moreover, the author’s expert knowledge, gained from the implementation of the Geoportal 2 project, was utilized. The selected indicators capture the essence of concept categories such as effective use, organizational effectiveness, as well as value deriving from IT projects, with reference to some basic SDI components such as the main access point and its applications, datasets and support provided.

The method is subjective, as it is based on ranking, but this is (Remenyi et al. 2000) the common feature of all multi-criteria methods. The advantages of the method encompass the possibility of modifying the indicators, including adding new ones or selecting the optimum set of measures for a given context or set of circumstances, integrating with the financial category, as well as a wide scope of evaluation. This method is dedicated to organizations responsible for SDI development and monitoring the relationships between the investments in SDI initiatives and the results obtained taking into account usability, usefulness, as well as availability of the infrastructure for users’ enterprises.

Conclusion

The aim of the study was to present a multi-criteria method for ex-post assessment of SDI effectiveness from the users’ perspective and to apply the proposed method to Geoportal 2, the main access point to the spatial data and services of the Polish Spatial Data Infrastructure, and to two groups of the national geoportal’s users.

The results bring us closer to understanding the effectiveness of the PSDI in the following dimensions: information and support provided, use process, user organizational performance, strategic alignment and business impact on user enterprise. The total scores for the main access point of the PSDI indicated its potential, but the Geoportal 2 project’s artifacts (datasets, network services, hardware, software) will require fine-tuning, including improvements and additional analyses, to increase the effectiveness of the PSDI in the future. Surveyors consider the PSDI and Geoportal 2 to be a source of information supplementary to documentation from the geodetic and cartographic service centers or to field reconnaissance. They value the Geoportal 2 project’s products and use them in land surveying work. Spatial planners perceive Geoportal 2 as a data source for 14 different tasks.

A literature overview confirms that there are examples of applying different qualitative methods to the assessment of SDIs, which come from different scientific fields, including operational research, management and economics. Geudens et al. (2009) present a new multi-criteria method dedicated to SDI, intended for ex-ante assessment of new SDI policy strategies. There is also evidence in the literature of the use of the Balanced Scorecard (BSC) method for SDI (Toomanian et al. 2011). Generally, the BSC is a universal method for assessing organizational effects. As such, the BSC focuses on only one aspect of the impact of IT on the organization responsible for implementing an IT project: supporting the strategic goals (Lech 2005). The concept of a qualitative method introduced in this paper is IT-focused and designed for the SDI business project approach, and adds to the variety of viewpoints and methods identified for the Multi-View SDI Assessment Framework. It covers all three purposes of assessment linked with the SDI assessment framework: accountability, knowledge and development. It is intended for ex-post and monitoring assessment of the use of the SDI business project’s artifacts (outputs), e.g. the main access point and its applications, datasets, network services, metadata and hardware, which constitute the SDI components. It also develops the concept of SDI effectiveness and contributes to the user perspective described by Nedović-Budić et al. (2008) by referring to aspects such as usefulness and usability, as well as information and organizational effectiveness. The multi-criteria method proposed in this study widens the typology of methods used for SDI assessment from a user perspective and helps to evaluate the above-mentioned aspects using a wide range of quantitative non-financial as well as qualitative aspects. The proposed scope of effectiveness assessment and the indicators also refer to aspects of system effectiveness and business value from information technology, and derive from other scientific fields such as business economics and management information systems.

Application of the method requires a survey instrument, which should be generally accessible. Popularization of the survey among communities interested in using the SDI is recommended. Survey forms could be presented on the web pages connected with the SDI data and service source access points.

The results of the SDI assessment may serve as a basis for future improvements of SDI components, as well as for identifying goals for SDI development and for subsequent SDI projects carried out by the Polish Head Office of Geodesy and Cartography. Implementing the respondents’ recommendations concerning Geoportal 2, the main access point to the PSDI, will certainly increase the effectiveness of the PSDI and Geoportal 2. These recommendations include (1) better spatial data quality, (2) a user-friendly application layout, (3) intuitive access to application toolbars and (4) high server performance with appropriate scaling to keep up with growing computing demands.

References

Bannister F (2001) Dismantling the silos: extracting new value from IT investments in public administration. Inf Syst J 11:65–84

Borzacchiello MT, Craglia M (2013) Estimating benefits of spatial data infrastructures: a case study on e-Cadastres. Comput Environ Urban 41:276–288

Bregt A (2012) Spatial Data Infrastructures. Cost-Benefit Analysis in Perspective. Costs and Benefits of Implementing the INSPIRE Directive Workshop. JRC

Chan TO, Feeney ME, Rajabifard A, Williamson I (2001) The dynamic nature of spatial data infrastructure: a method of descriptive classification. Geomatica 55(1):451–458

Commission of the European Communities (2009) Decision of 5 June 2009 implementing Directive 2007/2/EC of the European Parliament and of the Council as regards monitoring and reporting. Official Journal of the European Union L148, Luxembourg: Publications Office of the European Union

Craglia M, Campagna M (2010) Advanced regional SDIs in Europe: comparative cost-benefit evaluation and impact assessment perspectives. International Journal of Spatial Data Infrastructures Research 5:145–167

Craglia M, Nowak J (2006) Report of International Workshop on Spatial Data Infrastructures: Cost-Benefit / Return on Investment. 12–13 January 2006. Ispra, Italy. European Commission Joint Research Centre, Institute for Environment and Sustainability

de By RA, Lemmens R, Morales J (2009) A skeleton design theory for spatial data infrastructure. Methodical construction of SDI nodes and SDI networks. Earth Sci Inform 2:299–313

European Parliament and the Council (2007) Directive 2007/2/EC of the European Parliament and of the Council of 14 March 2007 establishing an Infrastructure for Spatial Information in the European Community (INSPIRE). Official Journal of the European Union L108(50). Publications Office of the European Union

Farbey B, Land F, Targett D (1992) Evaluating investments in IT. J Inform Technol 7(2):109–122

Geudens T, Macharis C, Crompvoets J, Plastria F (2009) Assessing spatial data infrastructure policy strategies using the multi-actor multi-criteria analysis. International Journal of Spatial Data Infrastructures Research 4:265–297

Giff GA, Crompvoets J (2008) Performance indicators a tool to support spatial data infrastructure assessment. Comput Environ Urban 32(5):365–376

Grus L, Crompvoets J, Bregt AK (2007) Multi-view SDI assessment framework. International Journal of Spatial Data Infrastructures Research 2:33–53

Grus L, Crompvoets J, Bregt AK (2008) Theoretical introduction to the multi-view framework to assess SDIs. In: Crompvoets J, Rajabifard A, van Loenen B, Delgado Fernandez T (eds) A multi-view framework to assess spatial data infrastructures. The Melbourne University Press, Melbourne, pp 93–113

Grus L, Castelein W, Crompvoets J, Overduin T, van Loenen B, van Groenestijn A, Rajabifard A, Bregt AK (2011) An assessment view to evaluate whether spatial data infrastructures meet their goals. Comput Environ Urban 35(3):217–229

Hamilton S, Chervany NL (1981) Evaluating information system effectiveness — part I: comparing evaluation approaches. MIS Quart 5:55–69

Heffron F (1989) Organizational theory and public organizations: the political connection. Englewood Cliffs

Hjelmager J, Moellering H, Cooper A, Delgado T, Rajabifard A, Rapant P, Danko D, Huet M, Laurent D, Aalders H, Iwaniak A, Abad P, Düren U, Martynenko A (2008) An initial formal model for spatial data infrastructures. Int J Geogr Inf Sci 22(11–12):1295–1309

Innerebner M, Costa A, Chuprikova E, Monsorno R, Ventura B (2016) Organizing earth observation data inside a spatial data infrastructure. Earth Sci Inform. doi:10.1007/s12145-016-0276-0

Kok B, van Loenen B (2005) How to assess the success of National Spatial Data Infrastructures? Comput Environ Urban 29(6):699–717

Lance KT, Georgiadou Y, Bregt A (2006) Understanding how and why practitioners evaluate SDI performance. International Journal of Spatial Data Infrastructures Research 1:65–104

Lech P (2005) Evaluation Methods’ Matrix – A Tool for Customized IT Investment Evaluation. Proceedings of the 12th European Conference on Information Technology Evaluation: 297–306

Murphy T (2002) Business value from technology. A practical guide for today's executive, John Wiley & Sons, Inc, Hoboken

Nedović-Budić Z, Feeney MEF, Rajabifard A, Williamson I (2004) Are SDIs serving the needs of local planning? Case study of Victoria, Australia and Illinois, USA. Comput Environ Urban 28:329–351

Nedović-Budić Z, Pinto JK, Budhathoki NR (2008) SDI effectiveness from the user perspective. In: Crompvoets J, Rajabifard A, van Loenen B, Delgado Fernandez T (eds) A multi-view framework to assess spatial data infrastructures. The Melbourne University Press, Melbourne, pp 273–303

Parker M, Benson J (1988) Information economics. Prentice Hall, Upper Saddle River

PMBOK (2000) A guide to the project management body of knowledge. Project Management Institute, USA

Polish Head Office of Geodesy and Cartography (2009) Feasibility study of the geoportal 2 project

Polish Head Office of Geodesy and Cartography (2010) Architecture of the geoportal 2 information system

Productivity Commission (2013) On efficiency and effectiveness: some definitions. Staff Research Note, Canberra

Rajabifard A, Feeney MEF, Williamson IP (2002) Future directions for SDI development. Int J Appl Earth Obs 4(1):11–22

Remenyi D, Money A, Sherwood-Smith M (2000) The effective measurement and management of IT costs and benefits. Butterworth-Heinemann, Oxford

Renkema T, Berghout E (1997) Methodologies for information systems investment evaluation at the proposal stage: a comparative review. Inform Software Tech 39:1–13

Steudler D, Rajabifard A, Williamson I (2008) Evaluation and performance indicators to assess spatial data infrastructure initiatives. In: Crompvoets J, Rajabifard A, van Loenen B, Delgado Fernandez T (eds) A multi-view framework to assess spatial data infrastructures. The Melbourne University Press, Melbourne, pp 193–210

Toomanian A, Mansourian A, Harrie L, Ryden A (2011) Using balanced scorecard for evaluation of spatial data infrastructures: a Swedish case study in accordance with INSPIRE. International Journal of Spatial Data Infrastructures Research 6:311–343

Vandenbroucke D, Dessers E, Crompvoets J, Bregt AK, van Orshoven J (2013) A methodology to assess the performance of spatial data infrastructures in the context of work process. Comput Environ Urban 38:58–66

Wortman KC (1994) Developing standards for a National Spatial Data Infrastructure. Cartogr Geogr Inform 21(3):132–135

Zwirowicz-Rutkowska A (2014) A business project approach to assess spatial data infrastructures. Conference proceedings of the 14th Geoconference on informatics. Geoinformatics and Remote sensing 3:413–421

Zwirowicz-Rutkowska A (2016) Evaluating spatial data infrastructure as a data source for land surveying. Journal of Surveying Engineering-ASCE. doi:10.1061/(ASCE)SU.1943-5428.0000185

Additional information

Responsible editor: H. A. Babaie

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Zwirowicz-Rutkowska, A. A multi-criteria method for assessment of spatial data infrastructure effectiveness. Earth Sci Inform 10, 369–382 (2017). https://doi.org/10.1007/s12145-017-0292-8

DOI: https://doi.org/10.1007/s12145-017-0292-8