Abstract

In perceptual psychology, audition and introspection have not yet received as much attention as other topics (e.g., vision) and methods (third-person paradigms). Practical examples and theoretical considerations show that it nevertheless seems promising to treat both topics in conjunction to gain insights into basic structures of attention regulation and respective agentive awareness. To this end, an empirical study on voluntary auditory change was conducted with a non-reactive first-person design. Data were analyzed with a mixed methods approach and compared with an analogous study on visual reversal. Qualitative hierarchical coding and explorative statistics yield a cross-modal replication of frequency patterns of mental activity as well as significant differences between the modalities. On this basis, the role of mental agency in perception is refined in terms of different levels of intention and discussed in the context of the philosophical mental action debate as well as of the Global Workspace/Working Memory account. As a main result, this work suggests the existence and structure of a gradual and developable agentive attention awareness on which voluntary attention regulation can build, and which justifies speaking, in a certain sense, of attentional self-perception.

Introduction

In perceptual psychology and cognitive science, neither audition nor introspection has yet received as much attention as other topics and methods, and especially not in this combination. As a prime example of sensory perception, vision is mostly preferred in experimental studies, certainly because of the remarkable insights it has yielded and its great importance for our visuocentric culture (O’Callaghan, 2009). Methodologically, the detached third-person perspective dominates research designs, surely an aftereffect of introspection’s bad reputation from the formative years of modern psychology (Danziger, 1980). Before systematically arguing why it could nevertheless be of interest to explore these somewhat underrepresented topics jointly, two illustrative examples shall be introduced.

First, the philosopher H. Witzenmann describes the phenomenon of being disrupted while writing by radio noise from a neighbor and nevertheless being able to make the unwanted sound disappear by concentrating on the intended work (Witzenmann, 1989). What he highlights as even more interesting, however, is pausing from work and paying attention when the disturbing sound stops again. Then it is not an external stimulus that attracts attention; rather, its disappearance reveals the previous effort, now superfluous, to disengage from the distracting noise. Or, in other words, attention is aroused by the dynamics of one’s own attention regulation which, after an initial conscious impulse, had been working pre-reflectively until its reason vanished (see also Cowan, 1988, p. 176f.).

The second example is more common and often used in laboratory studies on auditory scene analysis (ASA) which means the segregation and grouping of stimuli to form consistent auditory objects (Bregman, 1990). At lively social events (e.g., a cocktail party), people engage in talking with one person while suppressing the noise made by other speakers, music, etc. But when one’s own name or another meaningful content is fished out of the jumble of words and noise, it becomes clear that, paradoxically speaking, a pre-attentive form of attention must have been operating before noticing the outcome (Bronkhorst, 2015; Moray, 1959; Wood & Cowan, 1995). This phenomenon can be further specified by the aspect of intention, for example, by waiting to be called by a friend who is yet to appear or being involved in something that could be discussed by other party guests. Then the identification of information in the stimulus shadowed so far is not unexpected but can be seen in the context of (sub-) intentionally divided attention (Wood & Cowan, 1995).

Common to both examples is an attentional shift between unwanted and wanted auditory events, performed by disengaging or engaging activity, and accompanied by transitions between conscious and unconscious states. In the first example, we become aware of disengaging from a previously initiated disengaging effort, and in the second we consciously take up what was so far only tracked in the background. Since in both examples attention is drawn not only to external percepts but also to the attentional dynamics itself, we can ask whether the conscious/unconscious demarcation in such transitions is intangibly fixed, e.g., by principles of our psychophysical organization, or whether first-person access can be extended to stages or levels of attentional processing mostly held to be unconscious and automatic in principle (as, e.g., by Bargh & Chartrand, 1999). Furthermore, depending on conscious accessibility, it can be asked to what extent this implies a voluntary controllability of these processes, which would allow an assessment of their agentive status.

In the context of the philosophical debate of mental action, Galen Strawson’s answer to this question is quite pessimistic as he claims mental action to be limited to triggering automatic cognitive processes that only confront the agent with their results like with the uninfluenceable consequences of a fired bullet (Strawson, 2003). On the one hand, this ‘mental ballistics’ account seems to be suitable to prevent an infinite regress of a voluntarily exerted volition (Ryle, 2009) and is furthermore supported by the argument that mental content must already be present before one has the option to think about it voluntarily (Fiebich & Michael, 2015; Ruben, 1995). On the other hand, opponents argue for a wider scope of mental action as, for instance, in belief (Boyle, 2009), memory (Arango-Muñoz & Bermúdez, 2018), and judgment (Owens, 2009). Based on various arguments that all revolve around the topic of consciousness, they maintain that cognitive processes allow in many cases much more access than Strawson has in mind. While the above examples seem to support such a broader view of mental action, they cannot be directly linked to the mental action debate since perception has not been an issue here so far, which can be partly explained by historical reasons.

Traditionally, beginning with the psychophysical prelude of experimental psychology in the nineteenth century, perception tended to be broken down to its external occasion, the stimulus. The idea that sensation and perception are fully determined by the subject’s environment boomed in classical behaviorism and was later refined in bottom-up theories which also involved internal aspects of processing (Firestone & Scholl, 2016; Gibson, 1966, 1972; Marr, 1982). Nevertheless, other approaches put a stronger focus on the inside of the perceptual system and provided evidence for top-down components (for vision: Gregory, 1970; Rock, 1997; Suzuki & Peterson, 2000; for audition: Alain et al., 2001; McAdams, 2002). Here, however, a distinction must be made between whether top-down actually means active conscious control of attention or is merely understood as a synonym for central neural mechanisms in contrast to early stages of processing, such as peripheral channeling in the sensory organs (by frequency-dependent excitation patterns or stimulus presentation to different ears; Hartmann & Johnson, 1991; Snyder et al., 2006). Therefore, to prevent confusion, it does not suffice to speak of top-down processing; rather, the extent to which conscious effort contributes to it must be clarified.

In particular, the phenomenon of bistable perception has fascinated researchers for a long time because it exhibits a relative independence of the stimulus (Helmholtz, 1867; Liebert & Burk, 1985; Necker, 1832). Here, the ambiguity of perceptual interpretations, related to one and the same physical stimulus, allows the observer to consciously influence what is perceived, at least to some extent. In recent experimental studies, however, the role of conscious attention is mostly restricted to a binary decision by the subjects (via button press) as to which of two possible percepts is perceived under a prescribed perceptual intention, equally for vision (Kornmeier et al., 2009; van Ee et al., 2005) and audition (Alain et al., 2001; Curtu et al., 2019). Then, conscious attention is only deployed to confirm a resultant percept with an appropriate content, regardless of foregoing aspects of attentional processing. Of course, for an essentially neural understanding of top-down processing it is consistent to limit the first-person perspective to passive observation, noticing only what comes in without having access to how it comes about (which is consistent with Strawson’s view). However, if we take a broader view of top-down, as suggested above and by the introductory examples, then we would have to ask for experiments considering attentional aspects beyond neural automaticity.

Transferring this into the terms of the mental action debate, we can distinguish different forms of intention as degrees of access to mental processing. In general, conscious intentions are seen as an important criterion for qualifying mental events as actions (O’Shaughnessy, 2000). More particularly, distal intentions guide our mental lives in a future-directed and goal-oriented way, such as to aim for a desirable effect or result without controlling its realization, while proximal intentions refer to initiating and continuing the individual steps and strategies required for this purpose (for action in general: Mele, 1992; for mental action: Buckareff, 2005). In our context, distal intention seems to be the level at which the conscious decision about certain content to be perceived is taken and maintained until the goal is achieved. Proximal intentions would then have to be responsible for achieving this perceptual outcome in the best possible way, which would certainly include specific aspects of attention regulation. But since perception has not been addressed in the mental action debate thus far, these considerations remain hypothetical. They are encouraged, however, by the option of a third intentional level further refining the aspect of action planning on the proximal level. At first glance, motor-intentions (M-intentions) suggested by Pacherie (2008) might look like a promising candidate, as they are intended to back up the higher levels of intentional guidance by more concrete, perception-based information. On closer inspection, however, it becomes clear that M-intentions are meant to rely on “what neuroscientists call motor representations”, reflecting “an implicit knowledge of biomechanical constraints and the kinematic and dynamic rules governing the motor system” (Pacherie, 2008, p. 186). Thus, since M-intentions are inaccessible to phenomenal consciousness, they are irrelevant for our investigation, even considering a transfer to mental agency. Moreover, one may ask whether it is at all appropriate to speak of intentions here in terms of automated neural programs.

Instead, we endorse McAdams’ phenomenal conclusion that “we actively strive to make sense out of the ever-changing array of sounds, putting together parts that belong together and separating out conflicting information” (McAdams, 2002, p. 439). Regarding such elementary activities as “separating out” and “putting together”, we suggest executive intentions as a more process-related and phenomenally conscious level, the explanatory power of which we already showcased in previous work (Wagemann & Raggatz, 2021). So, one aim of the present study is to find out whether such a set of conscious perceptual intentions can be concretized in terms of attention regulation by involving first-person observation. If successful, this could extend mental agency to perception and thus allow the scope of mental action to be widened in principle as well.

In other first-person studies, we already explored visual perceptual reversals (Wagemann et al., 2018; Wagemann, 2020) and arrived at promising results with a view to mental agency in terms of specific forms of mental activity. To further corroborate these findings, link them to the topic of intention, and investigate whether they can be generalized to another modality, we used a similar experimental design addressing multistable auditory perception. Besides vision, audition is one of the most important senses for coping with everyday life, and while the two have certain aspects in common, they also differ in some respects. Like vision, audition serves spatial orientation, but in this respect, it has a lower discriminatory power than the former (Blauert, 1997). In return, audition seems to be more inwardly oriented; for example, sounds heard with headphones are perceived between the ears inside the head (O’Callaghan, 2009; Vickers, 2009), at least if not enhanced with surround sound technology. Whereas vision is clearly focused on external objects, albeit ending at their surface, audition also externalizes objects as sound sources (Hartmann & Wittenberg, 1996), but seems much more focused on experiencing the inherent, material properties of objects (e.g., wood vs. metal) or utterances of sentient beings. Some philosophers even go so far as to deny that sound experience is essentially related to external space (Nudds, 2001; O’Shaughnessy, 2000); others argue that it is the combination of different modalities that leads to auditory objects being assessed as spatial (O’Callaghan, 2019; Strawson, 1959; Witzenmann, 1989).

Another aspect that distinguishes audition from vision is the fact that hearing relates much more to the duration or temporal unfolding of percepts than does seeing (Bregman, 1990; McAdams, 2002; O’Callaghan, 2009). Furthermore, we can close our eyes to ward off unwanted stimuli or move them toward something to be seen, both in a purely physical sense, while our ears cannot be closed without external aids and are rather limited in their spatial mobility. This suggests that in audition a higher mental effort is required from the beginning to compensate for this difference, which is also to be investigated in this study.

If a more inward-looking character of audition could be demonstrated, this might explain why this modality does not yet enjoy as much popularity among researchers as vision. Furthermore, it would make it understandable that, in the introductory examples, perception is more closely connected with attention regulation than in analogous visual examples, in which object-related externalization covers more of the perceiver’s mental contribution. This is exactly what motivates us to explore the first-person dimensions of auditory perceptual change and suggests interesting phenomena up to insights into the agentive structure of the subject. In this context, A. S. Bregman’s plea for subjective and phenomenological experience in audition research can be motivating, on the one hand, as this would not only help to discover the essential aspects, but also speed up the research process considerably (Bregman, 2005; see also Locke, 2009). On the other hand, his appreciation of an experiential approach seems limited in that he adopts pre-attentive Gestalt mechanisms (or schema-driven processes) to partially explain auditory perception (see also Bregman, 1990). But why should the principle of experience not be extendable to the question of a conscious and agentive access to the attentional aspects of audition?

Experimental Procedure

Stimulus

In research on ASA, and particularly on bistable or multistable auditory perception, two main aspects and related types of experimental paradigms must be distinguished: concurrent and sequential organization of sound events (McAdams, 2002). While the former applies to the simultaneous presentation of acoustic stimuli that could be grouped or segregated into individual auditory events, the latter deals with sequential stimuli to be identified as sound patterns unfolding in time. For several reasons, we opted for simultaneous presentation with a stimulus consisting of three sinusoidal tones, as will be explained in the following.

As suggested by Bregman (2005), we developed the task and the stimulus starting from individual everyday experience. In the context of his daily meditation practice focusing on the sound of a singing bowl, the author noticed that the sound consisted of three main frequencies which are initially heard as a whole but can also be segregated into individual tones. He realized that he was able, after some training, to switch his attention deliberately between the low, the middle, and the high tone, and thus to create different sequential patterns. Notably, the tones do not stand in a harmonic relation (183 Hz, 528 Hz, 980 Hz) but can nonetheless be experienced as a holistic impression. However, during its slow decay, the sound changes as the intensities of the tones shift and vibrations emerge and fade. To obtain a reproducible stimulus as close as possible to a real sound, we analyzed the frequencies of the singing bowl and simulated the acoustic event by an appropriate triple sine tone with homogeneous power distribution. The sound was recorded in mono, which ensures that with headphones the phantom center is perceived inside the head. During the trials, subjects heard the stimulus in an infinite loop.
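A stimulus of this kind can be approximated with a few lines of code. The following is only a minimal sketch, not the procedure actually used in the study: it assumes that "homogeneous power distribution" means equal amplitudes for the three sine components, and the sample rate, duration, output format (WAV instead of MP3), and file name are illustrative assumptions. Because all three frequencies are whole numbers of Hz, any whole number of seconds contains complete cycles of each tone, so the file can be looped without a discontinuity.

```python
import math
import struct
import wave

FREQS_HZ = (183.0, 528.0, 980.0)  # tone frequencies reported in the text
SAMPLE_RATE = 44100               # assumption: CD-quality sample rate
DURATION_S = 5.0                  # assumption: whole seconds for seamless looping


def triple_sine(freqs=FREQS_HZ, duration_s=DURATION_S, sample_rate=SAMPLE_RATE):
    """Return float samples in [-0.9, 0.9] for the sum of equal-amplitude sines."""
    n_samples = int(duration_s * sample_rate)
    scale = 0.9 / len(freqs)  # equal amplitude per tone, with headroom against clipping
    return [
        scale * sum(math.sin(2.0 * math.pi * f * i / sample_rate) for f in freqs)
        for i in range(n_samples)
    ]


def write_mono_wav(path, samples, sample_rate=SAMPLE_RATE):
    """Write float samples as a 16-bit mono WAV file."""
    frames = b"".join(struct.pack("<h", int(round(s * 32767))) for s in samples)
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)   # mono, so the sound is centered with headphones
        wav.setsampwidth(2)   # 16-bit samples
        wav.setframerate(sample_rate)
        wav.writeframes(frames)


if __name__ == "__main__":
    # Hypothetical output file name
    write_mono_wav("triple_sine_stimulus.wav", triple_sine())
```

Playback in an endless loop would then be left to the audio player, and the listening level should be set cautiously, as the instructions to participants also requested.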

Because participants were to be challenged to consciously deploy and experience attentional processes, we minimized cues in the stimulus that are known to facilitate perceptual change. First, this explains why we opted for concurrent presentation without any physical changes. In sequential presentation, by contrast, even short gaps between successive sound patterns can influence perception in that the attentional dynamic is reset (Cusack et al., 2004). Other cues that support segregation are different stimulus onsets, different intensities, and different periodicities, none of which apply to the stimulus used here. The only stimulus-related aspect that facilitates segregation is the relatively large frequency difference between the tones (van Noorden, 1975), which, however, is not arbitrary but originates in the physical properties of the singing bowl.

Task and Participants

The task consisted of two steps as follows: (1) To practice first focusing attention briefly on one of the three tones at a time and then switching to another of the three tones, so that switching can be performed voluntarily and several times in a row (approx. 2–3 days). (2) Then to describe as precisely as possible (a) how the change between two tones (e.g., the low and the high) takes place and what is experienced, (b) what is mentally exerted to bring about the change (which is to be observed for different combinations of tone patterns such as high/low or high/medium), and (c) how well one succeeds in each case. The instructions also included a short explanation of multistable perception with ambiguous stimuli and the request to use headphones and be cautious with the intensity level. Along with an MP3 file containing the stimulus, the instructions were emailed to participants, who in turn submitted their reports after one week.

The study was conducted in March 2021 with 26 undergraduate students (second year, B.A. Curative Education), who were all female and aged between 20 and 49 (M = 24.4). They participated in the context of a phenomenology course at Alanus University (Campus Mannheim) and received partial course credit in exchange. The subjects and the author did not know each other before the course, which was given completely online. As participants took this course only as a minor, it is very unlikely that they were familiar with previously published studies of the author. Of course, the study was not discussed prior to or during data collection and participants were not informed about any theoretical or hypothetical explanations of the phenomena in question.

Initially, the sample size was determined based on general recommendations for qualitative in-depth studies (N = 20–30; Dworkin, 2012) and according to the reference study (Nvisual = 25; see below) but can also be justified by more specific criteria. As Guest and colleagues (2020) conclude from their analyses, high thematic saturation can already be reached with 12 data sets; Fugard and Potts’s (2015, p. 6) model suggests 24 participants for a minimum prevalence of 50% of the themes among the population and 10 instances per theme, which is nearly consistent with the reference study. From an inferential statistics perspective, a power of 0.8 can be calculated for a desired effect size of d = 0.8 with our sample sizes, which would be a good basis to work with.
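The power statement can be checked roughly with a short calculation. The sketch below is an approximation under stated assumptions, not the computation reported here: it assumes a two-sided, two-sample comparison at α = 0.05 and replaces the noncentral t distribution with a normal approximation, so the result will be slightly optimistic compared with an exact calculation. The function name is hypothetical.

```python
from statistics import NormalDist


def approx_power_two_sample(d, n1, n2, alpha=0.05):
    """Normal-approximation power of a two-sided, two-sample test for effect size d."""
    z = NormalDist()
    z_crit = z.inv_cdf(1.0 - alpha / 2.0)
    # Expected value of the test statistic under the alternative hypothesis
    shift = d * (n1 * n2 / (n1 + n2)) ** 0.5
    # Probability of exceeding the critical value in either tail
    return z.cdf(shift - z_crit) + z.cdf(-shift - z_crit)


# Sample sizes of the visual (25) and auditory (26) studies, d = 0.8 as in the text
power = approx_power_two_sample(0.8, 25, 26)
print(f"approximate power: {power:.2f}")
```

An exact value would require the noncentral t distribution, which is available in dedicated statistics packages rather than the standard library.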

Another remark needs to be made on the one-sided gender distribution of participants. This limitation was not planned and resulted from the given composition of the study cohort with which the experiment was conducted. However, this could be seen as an advantage rather than a disadvantage, as similar studies examining gender differences in ASA tend to show higher performance by males on various tasks. For example, men seem to have a greater ability to ignore task-irrelevant acoustic distractors (Espinoza-Varas & Jajoria, 2009) or to localize target sounds in a multisource sound environment (Lewald & Hausmann, 2013; Zündorf et al., 2011). Also, for preteen children, boys outperformed girls in a frequency discrimination task, which may rather indicate inherent physiological differences between the sexes than socialization effects (Zaltz et al., 2014). We therefore assume that positive results that can be obtained with females are more likely to be generalizable across gender than vice versa, although this would of course need to be tested in further studies.

Data Acquisition

As in some of our former studies, introspective data were collected in the form of open-ended self-reports that were to be written in complete sentences. This method offers several advantages that have proven particularly useful regarding pandemic-related social distancing, since no direct contact with the subjects is necessary. Moreover, this non-reactive form of data acquisition serves to eliminate the experimenter or expectation bias that is inherent in interactive techniques such as microphenomenological interviews (Petitmengin, 2006; Vermersch, 1999). The think-aloud method (Ericsson, 2003) also does not seem to be appropriate here, as it is incompatible with the auditory task, on the one hand, and impractical for recording rapidly changing perceptual observations without interference, on the other. Another advantage of written self-reports is their quick and easy handling for analysis purposes, as they are submitted in a form which can be immediately processed.

Data Analysis

Data were analyzed with a mixed methods approach including qualitative as well as quantitative aspects. Regarding the former, the self-reports were subjected to a hierarchical coding procedure comprising an initial stage of open coding and two subsequent refinements (Corbin & Strauss, 1990). In this way, we balanced data-driven, inductive analysis and theory-driven, deductive analysis since they are two sides of the same coin. Naturally, researchers search for aspects in the data that are meaningful in the context of their research question, some of which occur unexpectedly while others are anticipated based on prior theoretical or empirical work. However, to show that one is not just cherry-picking from otherwise useless or contradictory data, it is necessary to also analyze the parts of the data that are not directly related to the research question. The latter turned out to include more obvious aspects of first-person experience, whereas the former refer to more subtle aspects, as will be explained in more detail below. In this way, the complete data could be coded (except for a few introductory or transitional sentences, numbering characters, and blank spaces). From this exploration of the data, thirty categories finally emerged, which were partially grouped by generic topics and led to 795 coded data segments. In most cases, data were segmented into whole sentences, but sometimes it was necessary to break down longer sentences into two or more parts containing information according to different categories. In cases where it was not possible to split the sentence despite multiple aspects or ambiguities, we also allowed overlapping coding, resulting in 84.8% non-overlapping and 15.2% overlapping coded segments (13.6% double; 1.6% triple; 928 encodings in total).

On the one hand, overlaps in coding can be viewed critically, as they may indicate an inconsistent category system. On the other hand, this may indicate different levels of meaning that can meet in ambiguous parts of the data. Indeed, from a theoretical perspective, not all categories proved equally relevant in the context of the research questions, which is why we reorganized the set of categories in three hierarchical levels and analyzed them further with varying depth. While the first level refers to multiple, more easily accessible aspects of first-person experience (Table 1), the second focuses on fine-grained forms of mental activity including dynamic and agentive aspects (Table 2). Originally, these activity forms were explored in our studies on visual perceptual reversal (Wagemann et al., 2018; Wagemann, 2020), but they also were present in the current data. The third level is about different aspects of intention related to the activities of the second level (Table 3). Also, this analytic level can be traced to previous work (Wagemann & Raggatz, 2021), as explained above, and thus can be seen to be driven by prior theory building, too.

Consistent with the studies mentioned (2018, 2020), the coding frame was adapted to the auditory context and formulated more concisely. For Level 2, the code definitions read as follows:

1. Turning Away (from the stimulus). Fading out, pushing back, turning away from general distractions/irritations as well as individual sounds (even if not successful), supported purely mentally or also physically, preparing a perceptual change or tone discrimination.

2. Producing. Forming helpful thoughts, ideas, associations; activating prior knowledge, memory; mental strategy (partly supported bodily); deciding on a particular tone/change; making aware of the goal.

3. Turning Toward (the stimulus). Focusing attention to the perceptual field and to auditory sensory processes; directing toward ear(s); focusing, searching, trying to perceive, listening out/filtering; aiming for the goal.

4. Perceiving. Success or failure of the intended perception, possibly only partially; actual hearing/perceiving, it goes easily or with difficulty; dwelling on the perception; the goal is reached or not reached.

While Level 2 categories refer to typical forms of mental activity, Level 3 covers parts of the data indicating that different aspects of the task (including these activities) have been performed intentionally, i.e., deliberately attempted or systematically planned. From a theoretical perspective, this aims at an even clearer relation of introspectively observable mental activity to the philosophical criteria of mental agency on different levels. The code definitions presented in the following are compatible with those developed in the 2021 study while also adapted to the current task:

1. Distal Intention. Trying to discriminate or change tones // Trying to discriminate or change tones in a certain way (e.g., fast vs. slow, easy vs. difficult, abrupt vs. continuous) // Trying to discriminate or change tones by unspecific means (e.g., concentration, effort). In fact, these expressions are just other words for discrimination and change, aimed at the result of a particular, sharply defined perception.

2. Proximal Intention. Trying to discriminate or change tones by specifying an interchangeable mental strategy (specific thoughts; content-related/image-related/body-related). This can refer to the content aspect of “Producing” (e.g., internal images, associations, prior knowledge) or to appropriate attitudes of awareness (see Level 2).

3. Executive Intention. Trying to exercise certain forms of activity (one or more) that contribute to tone discrimination or change necessarily (Turning Away, Turning Toward) or make them qualitatively more secure (Perceiving, dwelling on/verifying sounds).

To distinguish distal intentions from proximal and executive intentions: In the former, concentration/attention/awareness, etc., relate directly to the goal (a particular tone/change), but not, as in the latter two, to how one gets or wants to get to the goal. Indicative of proximal and executive intention, phrases such as “in order to” or “by” often occur; in distal intention, however, they do not, since here no details are given about a strategy or its execution. To demarcate distal from executive intention in terms of “Perceiving”: the former is still aiming at the goal, while the latter has already achieved it to a certain extent (the tone is or has already been heard) but seeks to increase the quality of hearing or the certainty of actually hearing the tone even further. Although there were also some references of these categories to aspects of the first coding level, this study was limited to the relationships between the second and the third level.

With a view to quantitative analysis, we conducted descriptive and some explorative inferential statistics. In this context, we not only analyzed the current data but also compared them with those of one previous study regarding the overall number of words written under the different conditions (visual/auditory reversal task) and the use of first-person pronouns as inspired by James Pennebaker and colleagues (Chung & Pennebaker, 2007; Seih et al., 2011). Since such objective measures of the frequency of function words in written language are thought to reflect cognitive processes that are usually pre-reflective, we see some proximity to the attentional processes we studied and decided to include this analysis to triangulate the qualitative and quantitative aspects of the data. Besides this, we analyzed the portions of encoded data on the different categorical levels and in comparison with the results of the previous study.
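To illustrate what such a word-count measure involves, the following minimal sketch computes report length and the percentage of first-person pronouns for a single text. It is not the tool used in the study: the pronoun set (I, my, me) follows the one named in the Results, while the simple regular-expression tokenizer, the assumption of English-language text, and the function names are illustrative choices. Contracted forms such as "I'm" are treated as single tokens and therefore not counted.

```python
import re

FIRST_PERSON = {"i", "my", "me"}  # pronoun set named in the Results section


def tokenize(text):
    """Split text into lowercase word tokens (assumption: a simple regex tokenizer)."""
    return re.findall(r"[a-z']+", text.lower())


def word_count(text):
    """Number of word tokens in a report."""
    return len(tokenize(text))


def first_person_share(text):
    """Percentage of tokens that are first-person singular pronouns."""
    words = tokenize(text)
    if not words:
        return 0.0
    hits = sum(1 for w in words if w in FIRST_PERSON)
    return 100.0 * hits / len(words)


# Hypothetical example sentence, not taken from the data
sample = "I turned my attention to the low tone"
print(word_count(sample), first_person_share(sample))
```

Per-participant values obtained in this way could then be averaged within each study and compared across the visual and auditory conditions.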

To give a brief overview of this reference study (Wagemann et al., 2018): It was based on an analogous task and an identical design; it used a Necker cube as a visual stimulus, and the task was to practice a voluntary perceptual reversal between the two main variants for the first 2 or 3 days and then to perform the reversal for the rest of a week and introspectively observe how one would achieve this. The 25 participants (22 females, 3 males) aged between 21 and 50 years (M = 24.0) were second year students in the same study program as in the current study. The study was integrated into the same phenomenology course (February 2018), and information about the theoretical or hypothetical background was also withheld from participants in advance of the study. Data acquisition was also conducted via open-ended written self-reports. Therefore, the experimental conditions are consistent with those of the current study except the auditory nature of the task.

In the present study, intercoder reliability was checked for Levels 2 and 3 since coding here is crucial for nominal quantification of the data. For comparison of Level 2 with the previous study, all data coded under categories 1 to 3 were used, but only subcategories 4.1 and 4.2 were included because partial success, difficulties, and failure (4.3 and 4.4) were not part of this category in the visual study. In addition, due to the uneven distribution of coded data across the four categories (see Fig. 1), only half of the encodings under subcategories 4.1 and 4.2 were included in intercoder reliability testing for statistical reasons. In this way, coding by the author resulted in 181 segments that were randomized, blinded, and then reassessed by a second coder who was not involved in the design of the coding frame (see above categories) and initially untrained (Campbell et al., 2013). Computation of Cohen’s Kappa yielded κ = 0.753, which already corresponds to substantial or good agreement (Dawson & Trapp, 2004; McHugh, 2012). Due to overlapping coding, as mentioned, seven segments had to be assigned two different categories, out of which, remarkably, six were matched in exact combinations by both coders. For further refinement, as suggested by O’Connor and Joffe (2020), out of 35 inconsistencies, 16 segments were subjected to a feedback round between coders to clarify understanding and interpretation of the coding scheme, which resolved these cases and ultimately resulted in strong agreement with κ = 0.856.
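For readers unfamiliar with the measure, Cohen’s Kappa relates the observed agreement between two coders to the agreement expected by chance from their individual category frequencies. The following sketch shows the standard calculation for the simple case of exactly one category per segment; how double-coded segments were handled in the study is not covered here, and the labels and data are hypothetical.

```python
from collections import Counter


def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two equally long sequences of nominal category labels."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("both coders must rate the same non-empty set of segments")
    n = len(coder_a)
    # Proportion of segments on which both coders chose the same category
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Chance agreement from the product of each coder's marginal frequencies
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used a single identical category throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical toy data: TA = Turning Away, P = Producing,
# TT = Turning Toward, PE = Perceiving
author = ["TA", "P", "TT", "PE", "TT", "P"]
second = ["TA", "P", "TT", "PE", "P", "P"]
kappa = cohens_kappa(author, second)
print(round(kappa, 3))
```

In this toy case the coders agree on five of six segments, but the chance correction lowers the resulting value well below the raw agreement rate.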

Fig. 1 Structured Category System and Coding Levels. Numbers indicate in how many data sets (participants) codable segments were found and how many segments were coded according to a certain category. Solid lines display intra-level connections, while dashed arrow lines show the relations between Level 3 and the other two levels

For Level 3, the same procedure was conducted with all 75 segments, 6 of which were coded twice due to ambiguity. Initially, Cohen’s Kappa yielded κ = 0.660 which is lower than for Level 2 but can still be seen as substantial agreement. Here, 10 out of 14 inconsistently coded segments were reassessed in a feedback round where it turned out that coding at this level is more dependent on the broader context of the segments in the reports. In addition to clarifying subtleties of code definitions, these 10 sentences or sentence fragments were supplemented by one or two preceding or following sentences, resulting in agreement in 9 cases and in κ = 0.828. With six double encodings, five of which were consensual, the proportion of ambiguous interpretations was somewhat higher here.

Results

Before delving into the specificities of the coding levels, the structured category system is presented as a first result indicating both the qualitative relations between the levels and categories and the frequencies of encoded data per category (Fig. 1). Horizontally, the first and last category of Level 1 (Starting; Looking Back) frame the other aspects in a diachronic manner, while vertically the different levels unfold with respect to different observational subtleties. As the most subtle level, Level 3 (Intention/Trying) dominates the schema because it relates to much of the content of the other two levels. Quantitatively, these relations are reversed as Level 1 includes 463 encodings (among all participants), Level 2 includes 384 (among all participants), and Level 3 includes 81 (among 22 participants), for a total of 928.

In comparison with the visual reversal study (see above), we found a significant difference with large effect size in the length of open-ended self-reports. While the 25 participants in the visual reversal task wrote 196.0 words on average (SD = 112.8), the 26 participants in the auditory task wrote more than twice as many (M = 459.2 words, SD = 217.9), t(49) = 5.3, p < 0.001, d = 1.48. Frequency analysis of first-person pronouns (I, my, me) in the texts yielded a slight but not significant difference between the visual case (M = 8.71%, SD = 2.9) and the auditory case (M = 9.43%, SD = 3.2; p = 0.403 in a t-test with 49 degrees of freedom). Compared with Pennebaker and colleagues’ results, this is clearly higher than normal use of first-person pronouns accumulated across genres (without spoken: 5.21%; Chung & Pennebaker, 2007, p. 348) but quite similar to a forced use of first-person pronouns (9.81% on average; Seih et al., 2011, p. 930). It should be added, however, that participants in our study were not instructed to write in the first person and that no strong emotions were involved, as in Seih et al. (2011), which puts the comparison somewhat into perspective.
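The group comparisons above can be retraced from the reported means, standard deviations, and sample sizes. The sketch below assumes an independent-samples t-test with pooled variance, which is consistent with the reported 49 degrees of freedom but is not stated explicitly. The small-sample correction (Hedges’ g) is a further assumption, included because it comes close to the reported effect size; the function name is illustrative.

```python
import math

def pooled_t_and_d(m1, s1, n1, m2, s2, n2):
    """Student's t (equal variances assumed) and effect sizes from summary statistics."""
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * s1 ** 2 + (n2 - 1) * s2 ** 2) / df
    pooled_sd = math.sqrt(pooled_var)
    t = (m2 - m1) / (pooled_sd * math.sqrt(1 / n1 + 1 / n2))
    d = (m2 - m1) / pooled_sd
    # Small-sample correction (Hedges' g); that it was applied is an assumption.
    g = d * (1 - 3 / (4 * df - 1))
    return t, d, g, df

# Report length in words: visual (n = 25) vs. auditory (n = 26).
words = pooled_t_and_d(196.0, 112.8, 25, 459.2, 217.9, 26)
# Share of first-person pronouns in percent: visual vs. auditory.
pronouns = pooled_t_and_d(8.71, 2.9, 25, 9.43, 3.2, 26)

print("words:    t(%d) = %.2f, d = %.2f, g = %.2f" % (words[3], words[0], words[1], words[2]))
print("pronouns: t(%d) = %.2f" % (pronouns[3], pronouns[0]))
```

Under these assumptions, the word-count comparison gives t(49) of about 5.4 and a corrected effect size of about 1.48, and the pronoun comparison gives t(49) of about 0.84, which is in line with the reported non-significant p-value.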

First Level: Multiple Aspects

For a qualitative impression, typical examples of encoded data segments are given in Table 1. Since the full scope of first level aspects cannot be analyzed in this study, two points are highlighted here, which relate to levels 2 and 3. In addition to the predominantly single-coded data, there were, as mentioned, some cases that resulted in overlapping coding due to ambiguous meaning. Here, of particular interest are cases that allow conclusions to be drawn about individual developmental processes, such as Participant 7 who noticed on the third day that her changing between tones was accompanied by corresponding (up/down) eye movements (3.2 Body Resonance). Then she intentionally took up this resonance and used it to support the change (3.3. Body Strategy/6.1 Learning Effect). Others observed and cultivated embodied perception of sounds (3.2 Body Resonance/4.1 Single Tones), such as Participant 14, who exhaled deeply in order to hear the lower tone, because she seemed to feel it in her thorax, or Participant 1 who hummed tones in order to be able to access them in the stimulus.

Apart from body-based strategies, purely mental aspects of practice were also reported in overlap with concentration effort (2.1). Here, enhanced performance was observed for tone change (Part. 13) or holding of one tone (Part. 18). In the context of change, concentration on one single tone (2.2) was described by Participant 16 as dependent on “mental focusing on the low tone”, which reflects the differentiation of a specific mental act and a certain content but does not reveal more fine-grained or dynamic aspects. Without reference to concentration, phenomena of the change dynamic (4.3) were characterized by several participants, for example whether the transition appeared abrupt vs. smooth or fast vs. slow, or whether the transition between certain tones was easier vs. more difficult to manage than that between others. Another interesting phenomenon is that during the change some participants (3, 21, and 24) momentarily perceived all tones together before they arrived at the intended tone. Similarly, Participants 14 and 16 reported that they were eventually able to selectively perceive all tones within the overall sound, moving one of them to the fore while relegating the others to the background. This already leads to the next section.

Second Level: Mental Micro-Activities

Here again, exemplary excerpts from the self-reports are shown in Table 2. Compared to the visual study, not only were the same four categories successfully applied again, but most of them could also be broken down into subcategories. On the one hand, this is due to the participants’ statements, which selectively referred to tone discrimination and change in the first and last category as the two directly stimulus-related ones (Turning Away, Perceiving). On the other hand, two different types of mental strategies could be distinguished in Producing, namely the deployment of prior knowledge about the tones or already experienced reversals (2.1 Anticipation) and the involvement of mental images/associations or imagined bodily regions (2.2 Mental Strategy, in the narrower sense). Notably, while the latter did include bodily or physical references, these were purely mental: inner humming, imagination of striking singing bowls, imagination of spatial movement in certain directions (e.g., up/down), visualization of physical scenarios (e.g., ladder/escalator, piano keyboard). This is important for distinguishing the mental strategies pertaining to Producing from the body strategies of Level 1, which involve physical actions or start from passively experienced resonance between tones and body regions. In contrast, what we denote as mental strategy does not rely on resonance experience but rather independently associates tones with body regions, often combined with additional imaginations (e.g., “lines” or “levels”).

Apart from these details, the results at Level 2 give a quite similar picture to the visual case, both in qualitative and quantitative regard. Before presenting the quantitative aspects, the overlapping encodings should be interpreted qualitatively. As reported in the data analysis section, 6 out of 7 double-coded segments were confirmed by coders, and now we can add that all these double encodings consisted of consecutive categories: While the combinations Turning Away/Producing and Turning Toward/Perceiving occurred once each, Producing/Turning Toward occurred four times. As argued in our earlier study (Wagemann & Raggatz, 2021), we consider this a possible indication that the nominal categories could be interpreted as successive phases of a mental process structure. This is further substantiated by the logical independence of the categories as well as their logical relations. The categories each describe a distinct mental activity gesture that can in principle be performed independently of the others. At the same time, each activity gesture contributes a necessary part without which a perceptual shift cannot be fully accomplished. Based on the decision to undertake a specific change (e.g., low to high), attention must initially be focused on the starting (e.g., low) tone. The change dynamic is opened by leaving the low tone (Turning Away) in order to orient toward the ‘high tone’. The latter, however, must be prepared by appropriate knowledge about the ‘high tone’, which otherwise remains an abstract goal. Such knowledge is activated in certain ways (Producing) and then used to reorient attention toward the stimulus (Turning Toward). As this dynamic can terminate after each step, the last step of actually perceiving the high tone is likewise not necessarily implied by the preceding steps.
This dynamic structure is in principle also described by Posner and Petersen’s phases of attentional shift (disengaging, reorienting, engaging), whereas we can support this from the first-person perspective and differentiate the middle phase even further into Producing and Turning Toward (Posner & Petersen, 1990).
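The observation that all confirmed double encodings combine neighboring categories can be checked mechanically once the four categories are placed in their proposed order. The following minimal sketch uses the counts reported above; the data structure and function name are illustrative assumptions, not part of the original analysis.

```python
# Proposed ordinal sequence of the Level 2 categories.
PHASES = ["Turning Away", "Producing", "Turning Toward", "Perceiving"]

# Double-coded Level 2 segments confirmed by both coders, as reported in the text.
DOUBLE_CODED = {
    ("Turning Away", "Producing"): 1,
    ("Producing", "Turning Toward"): 4,
    ("Turning Toward", "Perceiving"): 1,
}

def are_consecutive(first, second):
    """True if the two categories are direct neighbors in the phase sequence."""
    return abs(PHASES.index(first) - PHASES.index(second)) == 1

total = sum(DOUBLE_CODED.values())
all_consecutive = all(are_consecutive(a, b) for a, b in DOUBLE_CODED)
print(f"{total} confirmed double encodings, all consecutive: {all_consecutive}")
```

A combination such as Turning Away/Perceiving would fail this check, so the absence of non-adjacent pairs is what lends the ordinal reading its initial plausibility.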

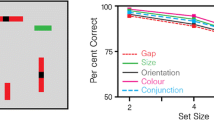

Relying on these arguments, we interpret the nominal categories of Level 2 in an ordinal context as successive phases of a mental process structure, which opens further avenues for quantitative analysis. The results are shown in Table 4 and depicted in Fig. 2a. They illustrate that the auditory and the visual data lead to structurally similar patterns which, however, deviate in a characteristic way. Phases 1 and 2 are more pronounced in the auditory case, whereas this relation is reversed for the third phase; finally, both frequencies are almost equal near one hundred percent. While a Chi-square test for the isolated categories did not yield significant differences between the modalities (see Table 4), partially significant differences between the measured values in both modalities and corresponding linear modeling could be shown (see Fig. 2b,c). More specifically, a modified Chi-square test in which the values of the linear regression were taken as expected frequencies yielded a marginally significant difference for Producing, χ²(1, N = 51) = 3.0, p = 0.081, and a significant difference for Turning Toward, χ²(1, N = 51) = 6.5, p = 0.011. The other two phases (Turning Away, Perceiving) did not differ significantly from the regression line. The question of why linear modeling is justified in this case is addressed in the discussion section. In addition, what we regard as an important result of this study, the cross-modal replication of introspectively accessible activity forms, will be debated below.
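To make the relation between the reported test statistics and p-values transparent, the following sketch converts a Chi-square value with one degree of freedom into its upper-tail probability. It relies only on the standard identity between the Chi-square distribution with one degree of freedom and the squared standard normal distribution. The function name is illustrative, and the computation of the statistics themselves from the regression-based expected frequencies is not reproduced here.

```python
import math

def chi2_p_value_df1(statistic):
    """Upper-tail probability of a chi-square variable with 1 degree of freedom."""
    # For Z ~ N(0, 1): P(Z**2 > x) = erfc(sqrt(x / 2)).
    return math.erfc(math.sqrt(statistic / 2.0))

p_producing = chi2_p_value_df1(3.0)       # reported: p = 0.081
p_turning_toward = chi2_p_value_df1(6.5)  # reported: p = 0.011
print(f"Producing:      p = {p_producing:.3f}")
print(f"Turning Toward: p = {p_turning_toward:.3f}")
```

With the rounded statistics, the sketch gives about 0.083 and 0.011. The small deviation from the reported 0.081 for Producing presumably stems from rounding of the statistic to one decimal place.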

Fig. 2 Comparison and Linear Modeling of Auditory and Visual Change Phases (2nd Level). TA: Turning Away; PR: Producing; TT: Turning Toward; PE: Perceiving. Frequencies of participants increase between TA and PE in characteristic patterns that deviate more or less from linear modeling. Differences between the two middle (stimulus-distanced) categories and respective regression lines were (marginally) significant between the auditory and visual study (Producing: p = 0.081; Turning Toward: p = 0.011)

Third Level: Intention and Trying

Since it was unclear before the study whether and to what extent the aspect of intention/trying can be observed in the context of perceptual change, we consider the results at this level exciting as well. On the one hand, there is sufficient discriminatory power between the three forms of distal, proximal, and executive intention to draw theoretical implications (see below). On the other hand, it is consistent that these aspects are reported somewhat less frequently by participants than the second-level categories, because here we are dealing with an even more subtle level of inner observation (see Table 3). In any case, it is remarkable that all forms of intention were reported by (almost) the same proportion of participants, namely 50% each. While this might imply that they are equally relevant forms of intention, it does not allow further conclusions to be drawn regarding their differences. At best, this can be done indirectly by considering the activities of Level 2 that have been intended or attempted by the various forms of intention in each case. In the previous section, we have already noticed the characteristic pattern of frequencies among participants, which now can serve to quantitatively distinguish the forms of intention. Initially, what we defined as proximal intention stands out from executive intentions insofar as Producing exceeds the regression line while the latter (especially referring to Turning Away/Toward) lie below it, clearly at least in the auditory case (see Fig. 2b,c). Furthermore, distal intention as related to the result-oriented aspect of Perceiving differs markedly from both other forms since it is the absolute maximum in this diagram. This pattern is even more clearly apparent considering the numbers of coded segments in the respective categories (see Fig. 1): Turning Away (35); Producing (63); Turning Toward (40); Perceiving (247).

Against this background, we can evaluate the double encodings and indicate a possible interpretation similarly to the two other levels. Four out of five consensual double encodings included proximal and executive intention, whereas only one referred to distal and executive intention. Here is one example of the dominant proximal/executive-type: “In addition to the association with an object that reminded me of the low tone, I also found it helpful to imagine the low tone before playing the stimulus each day and to ‘already have it in my ear’ in order to make it easier for me to hear it clearly and to focus briefly on it alone” (Part. 26). While the first part of the sentence refers to a mental strategy (association), the second also belongs to the Producing-category (anticipation) but at the same time transfers the proximal intention (which is here more implicit) into an executive intention referring to Turning Toward (third part of the sentence). In comparison, the distal intention “to make the change from high to low” appears to be superficially oriented toward the goal without referring to a possible strategy or concrete steps of execution. Therefore, the three forms of intention can be associated with different levels or types of attention pertaining to one’s own mental processes and activities, in which context the above example describes a transition between the more overarching proximal and the immediately process-related executive level. This line of interpretation will be further pursued and discussed in the next section.

Discussion

What has been elaborated so far can be understood as a mental analogy of auditory stream segregation: By means of an auditory segregation task we demonstrated that it is also possible to segregate, so to speak, the ‘mental stream’ into specific forms or phases of activity such as parsing tones or words in a complex acoustic stimulus. The difference, however, lies in the rather passive and result-oriented character with which auditory objects are noticed in everyday routines or in the context of common experimental paradigms, on the one hand, and the decidedly active and dynamic character which mental micro-gestures and corresponding intentions exhibit when performed and observed in a first-person setting, on the other. This difference, as the results suggest, can be understood in terms of a gradual and developable introspective access to different layers of the perceptual process as reflected by the three levels of hierarchical coding.

In the following, this view should be further substantiated and refined to open a new perspective for mental agency and to discuss this regarding other approaches. To this end, we briefly recapitulate the empirical results and use them for substantiating the existence and structure of an “agentive attention awareness”, as demanded by Watzl (2017, p. 234). Initially, the fact that significantly more words were written in the auditory case than in the visual case suggests the more inward character of audition which apparently invites participants to get in closer touch with the phenomenal and agentive side of their mental processing. The slight difference in first-person pronouns in the auditory and visual data also seems to confirm this, although it was not significant. Nevertheless, both results can be compiled as evidence for the high introspective accessibility of both senses with respect to their mental processing, whereas vision is less predestined in this context, likely due to stronger externalization. This view converges with the specific results of Level 2 in that the frequency patterns of mental activity in both modalities resemble each other and at the same time differ characteristically (though not significantly in statistical terms). In the context of Level 3, it is conclusive that Perceiving, as related to distal intention, is by far the most pronounced category, while Turning Away is the lowest. This can be explained as follows: Turning Away is furthest in sequence from Perceiving representing the distal goal of the task and, additionally, includes the contrary gesture in relation to the stimulus (leaving vs. arriving). Hence, the former is less present in participants’ introspective awareness than the latter, nonetheless it is sufficiently pronounced to be clearly distinguished from the other micro-gestures. 
Producing and Turning Toward are located between these two, which in this regard are consistent across modalities, with intermediate frequencies. We see this as a rationale for a linear modeling of the frequencies between more easily and less easily accessible forms of mental activity. On this basis, the shift of frequency patterns for Producing and Turning Toward can be interpreted as a further indication of a more inwardly oriented character of audition in relation to vision, because these activities are more distanced from the stimulus than the other two. That these two phases deviate (marginally) significantly upward and downward, respectively, from a linear model of attentional awareness supports their importance for a more sophisticated understanding of perceptual change.

In philosophical regard, this sheds light on the introductory question whether the conscious/unconscious demarcation in perceptual processing is apodictically fixed or if attention can be allocated to the attentional dynamics itself, which would be in favor of a “sense of mental agency”. Presumably, as for general action, this question cannot be unambiguously answered since it depends on enabling or inhibiting constraints effective on different personal and subpersonal levels (Gallagher, 2012). Even if it is unclear how agentive awareness can be specified for the mental case, this should integrate agentive experience and agentive judgment as two aspects between which many approaches navigate (e.g., Horgan et al., 2003; Mylopoulos, 2014; Synofzik et al., 2008). While agentive judgments are mostly seen as higher-level cognitive processes that build on agentive experiences but might also be independent, agentive experiences are deemed to be produced by low-level processes (e.g., neural mechanisms of motor control) which are not accessible to consciousness (Bayne & Pacherie, 2007; Vignemont & Fourneret, 2004). This double indirectness between the (presumed) source of agentive experience and the judgmental classification of a subjective event as an action seems to separate the conscious and non-conscious level quite neatly from each other; however, both move together again regarding mental action. This is because, on the one hand, judging is now itself scrutinized for its actional status and respective forms of agentive awareness (Cassam, 2010; Peacocke, 2007). On the other hand, as already assumed in the introduction, agentive experiences cannot simply be attributed to the sensorimotor system controlling external behavior, insofar as the latter is not relevant in mental actions. 
Addressing both aspects, Peacocke (2009) claims that action awareness is neither judgmental nor perceptual but rather a kind of immediate first-person access to one’s doing an action, though without clarifying this precisely. Not surprisingly, this is massively questioned from a reductionist perspective (e.g., Carruthers, 2009).

Against this background, we will outline an account associating different levels of agentive awareness with different forms of intentions, which extends mental action to perception and clarifies the role that concepts and judging play here. First, the importance of the distinction between mental activities (Level 2) and associated intentions (Level 3) should be emphasized again, as supported by Peacocke (2007, p. 373) and as we have done empirically. Introspectively observed and reported mental activities at Level 2, without explicit indication of being intended, are mental doings and thus “raw” testimonies of agentive awareness. Since they are specific forms of attention, their occurrence also implies a basic form of agentive attention awareness on which attention regulation can build. Introspectively confirmed intentions and tryings at Level 3 referring to mental activities further enhance their agentive status and thus, in view of the above-mentioned criteria, can be considered full-fledged evidence of agentive attention awareness. This shows a first gradation of somewhat more and less consciously exercised mental activities, which can be further differentiated based on the different forms of intention. Because distal, proximal, and executive intentions come differently close to the nonconceptual level of mental processing, their contribution to agentive awareness also differs. Hence, intentions can also be understood as different kinds of mediators between the nonconceptual level of executed mental activity and appropriate conceptual representations. This suggests conceiving of one and the same activity in terms of a conceptual representation of it or of its corresponding intention, on the one hand, and of its nonconceptual execution without explicit intention, on the other.

From these considerations, an important conclusion can be drawn for the role of phenomenal consciousness for cognitive processing. While in everyday life, people’s awareness is fully engaged with certain situations, things, and contents arising seemingly without their active involvement, the results of our study (on Level 2 and 3) show that under suitable conditions the underlying cognitive processes can be consciously observed and controlled to varying degrees. This supports the analogy suggested above between perception in the usual sense and “mental stream segregation,” which at least partially ascribes a “perceptual” dimension to mental life. What there is to discover in each case, whether in the auditory stimulus or in the perceptual process itself, is represented by concepts whose actualization and application make conscious what is represented in each case. However, this does not entail that agentive awareness is necessarily linked to judgmental attributions, but only that the latter make the former explicit and communicable. Without making it explicit, the pre-reflective performance of mental activity might be felt as a vague sense of being actively involved in the process, possibly associated with investing concentration, having certain emotions, and becoming fatigued, as covered by first-level categories. This is also the decisive difference between perception related to sensory data and self-perception related to one’s own activity. For the execution of mental or attentional activity that structures consciousness already affords the agent an immediate experience of this activity (Watzl, 2017), whereas in the case of sensory perception experience approaches the mental agent as something initially alien (Steiner, 1964; Wagemann et al., 2018; Waldenfels, 2011; Witzenmann, 1983).
Apart from this difference, however, corresponding “conceptual capacities” are required in both cases with which subjects make the respective experience rationally accessible and thus explicit (Watzl, 2017, p. 278). Before having applied these capacities, we are in “states of pre-judgmental awareness” which “subjects enjoy […] even if they lack the concepts needed to think about them and critically evaluate their justificatory standing” (Garcia-Carpintero, 2006, p. 76). This spectrum ranging between an intrinsic but provisional agentive experience and perception-like observation of one’s mental actions might reconcile the long-standing dispute about observational (e.g., Lycan, 1996) and non-observational (e.g., Shoemaker, 1994) models of introspection.

In the context of the present study, the concepts helping to make explicit the levels of mental agency can be construed as a subject-level version of Baars’ “goal hierarchy” or as what Watzl calls a “priority system” or “centrality structure” (Baars, 1988; Watzl, 2017; see Fig. 3). Attentional dynamics take place between a prioritized concept and its application to the nonconceptual stimulus, which, as explained, can be sensory or mental. This distinction results from the levels of the priority structure that correspond to the different forms of intention. While distal intentions refer primarily to a certain auditory percept, proximal intentions refer to flexible action plans and thus, in terms of content, aim at an effect of mental activity (Producing). Executive intentions refer directly to invariant forms of mental activity that are indispensable for actualizing and pursuing an action plan and eventually achieving the perceptual goal. Depending on the agent’s previous experience on the perception side (e.g., success, failure), each update of conceptual content (via Producing) may confirm or change conceptual priority for the next attempt. Obviously, prioritizing a more process-related intention need not dismiss the higher-level intentions but can just relegate them to the periphery of attention so that they can continue to guide the process from there. Conversely, however, prioritization of a higher (e.g., distal) intention need not encompass the deeper (proximal and executive) intentions, especially if the agent has not been aware of their phenomenal targets so far. Hence, selection of an intentional level can lead to a narrow focus without periphery, as suggested by the tick mark in Fig. 3 (left side), or to a wider range around a focal concept and across the levels which can be deliberately shifted, expanded, or narrowed as needed (see Watzl, 2017, p. 183f., for a similar account; see also Arvidson, 2006), which, on a personal level, is analogous to a subpersonal “hierarchical shifting of attention” according to the Global Workspace/Working Memory account (Cowan, 2001, p. 93; Dehaene et al., 2014).

Fig. 3 Action-State Approach/Levels of Intention. Different levels of intention, conceptually organized in the goal hierarchy (or priority system), reflect different depths of agentive attention awareness. Whether implicitly or explicitly, intentions refer to the execution of characteristic forms of mental activity mediating between the conceptual and the nonconceptual (stimulus) level. Mental states are defined by certain conceptual or perceptual content, while mental actions are independent due to their invariant features

On this view, executed mental activities can have the status of pre-reflective and potential or consciously actualized mental actions, which in any case mediate between conceptual and perceptual states. According to the results, perceptual states are more likely to be conscious than concomitant conceptual states (due to externalization), and states in general seem to be more conscious than mental activities or actions. While states can be defined by certain conceptual or perceptual content, actions, although they refer to such content, are specific kinds of interacting with it and are thus set apart (Wagemann, 2021). As mental actions possess their own structural content, so to speak, in Turning Away, Producing, Turning Toward, and Perceiving, they are in principle independent of the mental states between which they range. This independence is supported by the empirical evidence of mental activities being connected with the different forms of intention. However, regardless of which “intention-attention” (with a narrower or wider periphery) is guiding a discrimination or perceptual change, respective mental activities must be executed in any case. When they are consciously executed, as indicated by much of the data, their conceptual (state) side converges with their perceptual (activity) side, which justifies speaking literally of self-perception in the context of agentive attention awareness. Otherwise, if only distal and proximal intentions are conscious, the executive layer of the mental process remains pre-reflective and seems to be phenomenally nonexistent for skeptics. In this gradual sense, intentions can be seen as “mediators of introspection” (Watzl, 2017, p. 228) and, thus, introspection as a legitimate and developable tool of philosophical and psychological inquiry.

Introspection, if deployed in such a systematic and rigorous way, is not too far from meditation which recently turned out to provide promising cues for an understanding of mental action beyond content dependency (Upton & Brent, 2018). Aggregating the two stimulus-oriented (TT, PE) and the two stimulus-averted (TA, PR) activities, respectively, we obtain what in meditation research is known as focused attention and open monitoring (see, e.g., Lutz et al., 2008) and which can also serve as an access route to agentive aspects of cognitive and perceptual problem-solving (Wagemann & Raggatz, 2021). Apart from content-dependent attentional processing, a meditation-based approach to mental action and agentive awareness can already be found in Steiner’s 1918 edition of the Philosophy of freedom in which he grants mental activity the option of self-perception: “On the one hand, intuitively experienced thinking is an active process taking place in the human spirit, on the other hand it is also a spiritual percept grasped without a physical sense organ. It is a percept in which the perceiver is himself active, and a self-activity which is at the same time perceived” (Steiner, 1964, p. 130). Replacing “intuitively experienced thinking” with “introspectively observed cognition”, “spirit” with “consciousness”, and “spiritual” with “mental” yields the philosophical conclusion of this study.

Conclusion

To also provide some psychologically oriented implications, we finally summarize the most important findings, point to limitations of the study, and propose further avenues for experimental research. First, on a qualitative level, this study shows that introspective data pertaining to auditory perceptual change can be reliably coded with cross-modal categories that together form a dynamic structure of conscious and voluntarily controllable attentional activities. Second, from a quantitative perspective, we found that this structure adapts to audition and vision based on characteristic frequency patterns of the two stimulus-distanced activity forms (Producing, Turning Toward), which, moreover, suggests a differentiation of Posner and Petersen’s model of attentional shift regarding the middle phase of reorienting. While the external validity of this mental activity structure is initially strengthened in view of our previous studies (Wagemann et al., 2018; Wagemann, 2020, 2021; Wagemann & Raggatz, 2021; Wagemann & Weger, 2021), it will have to be further tested in experimental work. To this end, we pinpoint two exemplary aspects to be taken up in further research: On the one hand, the limitations of the current sample in terms of gender, age, and educational background, for example, must be overcome. The investigation of more controlled and naïve samples would be desirable and possibly achievable through the development of an appropriate questionnaire. This would also allow the study of larger samples, which could lead to statistical clarification of differences between modalities. Moreover, the question of which mental strategies lead to successful perceptual change, and to what extent, could also be explored. This already addresses the second desideratum of an expanded triangulation of qualitative data analysis with elaborated quantitative instruments.
Here we can also mention the still pending combination of our first-person approach with neurophysiological measures, which could provide more insight into the temporal structure of perceptual change. However, these are only a few options for continuations of this study, and we optimistically look forward to further work devoted to this task in the borderland of psychology and philosophy.

Data Availability

The auditory stimulus and full datasets generated and analyzed during this study are available under https://osf.io/fz26c/?view_only=cdffc44d03534171a59a3d4e61c3b632

References

Alain, C., Arnott, S. R., & Picton, T. W. (2001). Bottom-up and top-down influences on auditory scene analysis: Evidence from event-related brain potentials. Journal of Experimental Psychology: Human Perception and Performance, 27, 1072–1089.

Arango-Muñoz, S., & Bermúdez, J. P. (2018). Remembering as a mental action. In K. Michaelian, D. Debus, & D. Perrin (Eds.), New directions in the philosophy of memory (pp. 75–96). Routledge.

Arvidson, P. S. (2006). The sphere of attention: Context and margin. Springer.

Baars, B. J. (1988). A cognitive theory of consciousness. Cambridge University Press.

Bargh, J., & Chartrand, T. L. (1999). The unbearable automaticity of being. American Psychologist, 54(7), 462–479.

Bayne, T., & Pacherie, E. (2007). Narrators and comparators: The architecture of agentive self-awareness. Synthese, 159, 475–491.

Blauert, J. (1997). Spatial Hearing: The Psychophysics of Human Sound Localization. MIT Press.

Boyle, M. (2009). Active belief. Canadian Journal of Philosophy, 39(1), 119–147.

Bregman, A. S. (1990). Auditory Scene Analysis: The Perceptual Organization of Sound. MIT Press.

Bregman, A. S. (2005). Auditory scene analysis and the role of phenomenology in experimental psychology. Canadian Psychology, 46(1), 32–40.

Bronkhorst, A. W. (2015). The cocktail-party problem revisited: Early processing and selection of multi-talker speech. Attention, Perception, & Psychophysics, 77, 1465–1487.

Buckareff, A. A. (2005). How (not) to think about mental action. Philosophical Explorations, 8(1), 83–89.

Campbell, J. L., Quincy, C., Osserman, J., & Pedersen, O. K. (2013). Coding in-depth semistructured interviews: Problems of unitization and intercoder reliability and agreement. Sociological Methods & Research, 42, 294–320.

Carruthers, P. (2009). Action-awareness and the active mind. Philosophical Papers, 38(2), 133–156.

Cassam, Q. (2010). Judging, believing and thinking. Philosophical Issues, 20, 80–95.

Chung, C. K., & Pennebaker, J. W. (2007). The psychological functions of function words. In K. Fiedler (Ed.), Social Communication (pp. 343–359). Psychology Press.

Corbin, J., & Strauss, A. (1990). Grounded theory research: Procedures, canons, and evaluative criteria. Qualitative Sociology, 13, 3–21.

Cowan, N. (1988). Evolving conceptions of memory storage, selective attention, and their mutual constraint within the human information processing system. Psychological Bulletin, 104, 163–191.

Cowan, N. (2001). The magical number 4 in short-term memory: A reconsideration of mental storage capacity. Behavioral and Brain Sciences, 24, 87–185.

Cusack, R., Deeks, J., Aikman, G., & Carlyon, R. P. (2004). Effects of location, frequency region, and time course of selective attention on Auditory Scene Analysis. Journal of Experimental Psychology: Human Perception and Performance, 30(4), 643–656.

Curtu, R., Wang, X., Brunton, B. W., & Nourski, K. V. (2019). Neural signatures of auditory perceptual bistability revealed by large-scale human intracranial recordings. The Journal of Neuroscience, 39(33), 6482–6497.

Danziger, K. (1980). The history of introspection reconsidered. Journal of the History of the Behavioral Sciences, 16, 242–261.

Dawson, B., & Trapp, R. G. (2004). Basic and clinical biostatistics. (4th ed.). Lange Medical Books.

Dehaene, S., Charles, L., King, J.-R., & Marti, S. (2014). Toward a computational theory of conscious processing. Current Opinion in Neurobiology, 25, 76–84.

Dworkin, S. L. (2012). Sample size policy for qualitative studies using in-depth interviews. Archives of Sexual Behavior, 41, 1319–1320.

Ericsson, K. A. (2003). Valid and non-reactive verbalization of thoughts during performance of tasks: Towards a solution to the central problems of introspection as a source of scientific data. Journal of Consciousness Studies, 10(9–10), 1–18.

Espinoza-Varas, B., & Jajoria, P. (2009). Gender differences in auditory selective attention in the context of variable-frequency post-target distractors. Proceedings of Meetings on Acoustics, USA, 157(6). https://doi.org/10.1121/2.0000665

Fiebich, A., & Michael, J. (2015). Mental actions and mental agency. Review of Philosophy and Psychology, 6(4), 683–693.

Firestone, C., & Scholl, B. J. (2016). Cognition does not affect perception: Evaluating the evidence for ‘top-down’ effects. The Behavioral and Brain Sciences, 39, 1–77.

Fugard, A. J. B., & Potts, H. W. W. (2015). Supporting thinking on sample sizes for thematic analyses: A quantitative tool. International Journal of Social Research Methodology: Theory & Practice, 18(6), 669–684.

Gallagher, S. (2012). Multiple aspects in the sense of agency. New Ideas in Psychology, 30, 15–31.

Garcia-Carpintero, M. (2006). The structure of nonconceptual content. European Review of Philosophy, 6, 65–79.

Gibson, J. J. (1966). The senses considered as perceptual systems. Houghton Mifflin.

Gibson, J. J. (1972). A Theory of Direct Visual Perception. In J. Royce & W. Rozeboom (Eds.), The Psychology of Knowing. Gordon & Breach.

Gregory, R. L. (1970). The Intelligent Eye. Weidenfeld and Nicolson.

Guest, G., Namey, E., & Chen, M. (2020). A simple method to assess and report thematic saturation in qualitative research. PLoS ONE, 15(5), e0232076.

Hartmann, W. M., & Johnson, D. (1991). Stream segregation and peripheral channeling. Music Perception, 9, 155–184.

Hartmann, W. M., & Wittenberg, A. (1996). On the Externalization of Sound Images. Journal of the Acoustical Society of America, 99(6), 3678–3688.

Horgan, T., Tienson, J., & Graham, G. (2003). The phenomenology of first-person agency. In S. Walter & H. D. Heckmann (Eds.), Physicalism and mental causation: The metaphysics of mind and action (pp. 323–340). Imprint Academic.

Kornmeier, J., Hein, C. M., & Bach, M. (2009). Multistable perception: When bottom-up and top-down coincide. Brain and Cognition, 69, 138–147.

Lewald, J., & Hausmann, M. (2013). Effects of sex and age on auditory spatial scene analysis. Hearing Research, 299, 46–52.

Liebert, R. M., & Burk, B. (1985). Voluntary control of reversible figures. Perceptual & Motor Skills, 61(2), 1307–1310.

Locke, E. A. (2009). It’s time we brought introspection out of the closet. Perspectives on Psychological Science, 4(1), 24–25.

Lutz, A., Slagter, H., Dunne, J., & Davidson, R. (2008). Attention regulation and monitoring in meditation. Trends in Cognitive Sciences, 12(4), 163–169.

Lycan, W. (1996). Consciousness and experience. MIT Press.

Marr, D. (1982). Vision. A computational investigation into the human representation and processing of visual information. W. H. Freeman.

McAdams, S. (2002). Auditory perception and cognition. In H. Pashler & D. Medin (Eds.), Stevens' handbook of experimental psychology: Sensation and perception (Vol. I; pp. 397–452). John Wiley & Sons Inc.

McHugh, M. L. (2012). Interrater reliability: The kappa statistic. Biochemia Medica, 22(3), 276–282.

Mele, A. R. (1992). Springs of action. Oxford University Press.

Moray, N. (1959). Attention in dichotic listening: Affective cues and the influence of instructions. The Quarterly Journal of Experimental Psychology, 11, 56–60.

Mylopoulos, M. I. (2014). Agentive awareness is not sensory awareness. Philosophical Studies, 172(3), 761–780.

Necker, L. (1832). Observations on some remarkable optical phaenomena seen in Switzerland; and on an optical phaenomenon which occurs on viewing a Figure of a crystal or geometrical solid. Philosophical Magazine and Journal of Science, 1, 329–337.

Nudds, M. (2001). Experiencing the production of sounds. European Journal of Philosophy, 9, 210–229.

O’Callaghan, C. (2009). Audition. In S. Robins, J. Symons, & P. Calvo (Eds.), Routledge Companion to the Philosophy of Psychology (pp. 579–591). Routledge.

O’Callaghan, C. (2019). A Multisensory Philosophy of Perception. Oxford University Press.

O’Connor, C., & Joffe, H. (2020). Intercoder reliability in qualitative research: Debates and practical guidelines. International Journal of Qualitative Methods, 19, 1–13.

O’Shaughnessy, B. (2000). Consciousness and the world. Oxford University Press.

Owens, D. (2009). Freedom and practical judgement. In L. O’Brien & M. Soteriou (Eds.), Mental actions (pp. 121–237). Oxford University Press.

Pacherie, E. (2008). The phenomenology of action: A conceptual framework. Cognition, 107(1), 179–217.

Peacocke, C. (2007). Mental action and self-awareness (I). In B. McLaughlin & J. D. Cohen (Eds.), Contemporary debates in philosophy of mind (pp. 358–376). Blackwell.

Peacocke, C. (2009). Mental action and self-awareness (II). In L. O’Brien & M. Soteriou (Eds.), Mental actions (pp. 192–214). Oxford University Press.

Petitmengin, C. (2006). Describing one’s subjective experience in the second person: An interview method for the science of consciousness. Phenomenology and the Cognitive Sciences, 5, 229–269.

Posner, M. I., & Petersen, S. E. (1990). The attention system of the human brain. Annual Reviews in Neuroscience, 13, 25–42.

Rock, I. (1997). Indirect Perception. MIT Press.

Ruben, D. (1995). Mental overpopulation and the problem of action. Journal of Philosophical Research, 20, 511–524.

Ryle, G. (2009). The concept of mind (60th anniversary edition). Routledge.

Seih, Y. T., Chung, C. K., & Pennebaker, J. W. (2011). Experimental manipulations of perspective taking and perspective switching in expressive writing. Cognition and Emotion, 25(5), 926–938.

Shoemaker, S. (1994). Self-Knowledge and ‘Inner-Sense.’ Philosophy and Phenomenological Research, 54, 249–314.

Snyder, J. S., Alain, C., & Picton, T. W. (2006). Effects of attention on neuroelectric correlates of auditory stream segregation. Journal of Cognitive Neuroscience, 18(1), 1–13.

Steiner, R. (1964). The philosophy of freedom (M. Wilson, Trans.). Forest Row: Rudolf Steiner Press. (Original work published 1918)

Strawson, G. (2003). Mental ballistics or the involuntariness of spontaneity. Proceedings of the Aristotelian Society, 103, 227–256.

Strawson, P. F. (1959). Individuals. Routledge.

Suzuki, S., & Peterson, M. A. (2000). Multiplicative effects of intention on the perception of bistable apparent motion. Psychological Science, 11, 202–209.

Synofzik, M., Vosgerau, G., & Newen, A. (2008). Beyond the comparator model: A multifactorial two-step account of agency. Consciousness and Cognition, 17(1), 219–239.

Upton, C. L., & Brent, M. (2018). Meditation and the scope of mental action. Philosophical Psychology, 32(1), 52–71.

van Ee, R., Dam, L. E., & Brouwer, G. J. (2005). Voluntary control and the dynamics of perceptual bi-stability. Vision Research, 45, 45–55.

van Noorden, L. P. (1975). Temporal coherence in the perception of tone sequences. Unpublished doctoral dissertation, Eindhoven University of Technology, Eindhoven, the Netherlands.

Vermersch, P. (1999). Introspection as practice. Journal of Consciousness Studies, 6(2–3), 17–42.

Vickers, E. (2009). Fixing the phantom center: Diffusing acoustical crosstalk. The Audio Engineering Society, 127th Convention, Paper 7916.

Vignemont, F., & Fourneret, P. (2004). The sense of agency: A philosophical and empirical review of the “Who” system. Consciousness and Cognition, 13, 1–19.

von Helmholtz, H. (1867). Handbuch der physiologischen Optik. Leopold Voss.

Wagemann, J. (2020). Mental action and emotion—What happens in the mind when the stimulus changes but not the perceptual intention. New Ideas in Psychology, 56, Article 100747.

Wagemann, J. (2021). Exploring the structure of mental action in directed thought. Philosophical Psychology. Advance online publication. https://doi.org/10.1080/09515089.2021.1951195

Wagemann, J., & Raggatz, J. (2021). First-person dimensions of mental agency in visual counting of moving objects. Cognitive Processing, 22(3), 453–473.

Wagemann, J., & Weger, U. (2021). Perceiving the other self. An experimental first-person account to nonverbal social interaction. American Journal of Psychology, 134(4), 441–461.

Wagemann, J., Edelhäuser, F., & Weger, U. (2018). Outer and inner dimensions of the brain-consciousness relation—Refining and integrating the phenomenal layers. Advances in Cognitive Psychology, 14(4), 167–185.

Waldenfels, B. (2011). Phenomenology of the alien: Basic concepts. Northwestern University Press.

Watzl, S. (2017). Structuring mind. The nature of attention and how it shapes consciousness. Oxford University Press.

Witzenmann, H. (1983). Strukturphänomenologie. Vorbewusstes Gestaltbilden im erkennenden Wirklichkeitenthüllen [Structure phenomenology. Preconscious formation in the epistemic disclosure of reality]. Dornach: Gideon Spicker.

Witzenmann, H. (1989). Sinn und Sein [Sense and being]. Freies Geistesleben.