Abstract

Interpreting other’s actions is a very important ability not only in social life, but also in interactive sports. Previous experiments have demonstrated good estimation performances for the weight of lifted objects through point-light displays. The basis for these performances is commonly assigned to the concept of motor simulation regarding observed actions. In this study, we investigated the weak version of the motor simulation hypothesis which claims that the goal of an observed action strongly influences its understanding (Fogassi, Ferrari, Gesierich, Rozzi, Chersi, & Rizzolatti, 2005). Therefore, we conducted a weight judgement task with point-light displays and showed participants videos of a model lifting and lowering three different weights. The experimental manipulation consisted of a goal change of these actions by showing the videos normal and in a time-reversed order of sequence. The results show a systematic overestimation of weights for time-reversed lowering actions (thus looking like lifting actions) while weight estimations for time-reversed lifting actions did not differ from the original playback direction. The results are discussed in terms of motor simulation and different kinematic profiles of the presented actions.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

Introduction

In order to interpret or anticipate what others intend to do, we are able to extrapolate their intentions by “reading” their movements (or their minds) (Sebanz, Bekkering, & Knoblich, 2006). Not only is this a very important ability of social interaction in everyday life, but also a crucial competence for athletes in interactive sports, like table tennis or fencing. To prevail in sport competitions, athletes need to know in advance what their opponents are intending on doing. With this knowledge, they can prepare in a sufficient amount of time for an appropriate (re)action. Therefore, it is not surprising that humans are very sensitive to biological motion. Neurophysiological studies show that biological motion selectively activates specific areas/domains in the human brain (e.g. areas in the inferior frontal gyrus and the superior temporal sulcus) (Giese & Poggio, 2003). A variety of behavioural studies showed that humans can extract a great amount of information of another person’s actions even though information is limited. For example, we can identify gender (Kozlowski & Cutting, 1977; Pollick, Kay, Heim, & Stringer, 2005) and mood of a walking person (Dittrich, Troscianko, Lea, & Morgan, 1996). Further, we can identify and categorise the action a model is performing (Johansson, 1973), and – this especially is interesting for this study – we can judge the weight a person is lifting (Bingham, 1993; Grierson, Ohson, & Lyons, 2013; Runeson & Frykholm, 1981; Shim, Hecht, Lee, Yook, & Kim, 2009) by observing those actions in point-light (or stick diagram) displays. These performances are based on the analysis of salient point lights fixed to the main joints of a human model. That is, the kinematic profiles of these moving points provide sufficient information for the observer to dissect the action (Blake & Shiffrar, 2007; Viviani, Figliozzi, & Lacquaniti, 2011b).

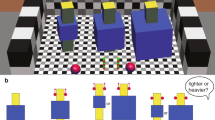

In weight estimation tasks, a person is displayed lifting objects of different weights and participants are asked to judge the weight. It is not possible to identify the weight from the object (because they all look alike, e.g. different heavy boxes with the same dimensions) but by observing the body movement of the lifter. Participants are usually very successful in accomplishing those tasks even though the lifter is just depicted as point light figure or the lifted object is not displayed (Bingham, 1993; Grierson et al., 2013; Runeson & Frykholm, 1981; Shim et al., 2009). It is an open debate whether good estimation performances of observed lifting actions are based on visual or motor experiences. This study aims to provide new insights by using stimuli that look plausible in normal and time-reversed video play back (lifting and lowering an object). The alteration of the video play back direction makes it possible to change the goal of an action (e.g. from lifting to placing an object) while its average kinematic profile is preserved.

There are two main hypotheses discussed to explain good identification performances of observed biological motion: the motor simulation hypothesis (MSH, e.g. Gallese, Fadiga, Fogassi, & Rizzolatti, 1996; Gallese & Goldman, 1998; sometimes referred to as the direct matching hypothesis, Rizzolatti, Fogassi, & Gallese, 2001) and the visual analysis hypothesis (VAH, e.g. Johansson, 1973; Rizzolatti et al., 2001). The MSH assumes that our own motor system contributes to the understanding of another person’s actions. That is, we simulate the observed actions of others through our own motor system. By doing so, we can identify the goals or inner states of observed persons, which help us to anticipate their future actions (Gallese & Goldman, 1998). At the neurological level this ability is based on the mirror neuron system that was first found in macaque monkeys (di Pellegrino, Fadiga, Fogassi, Gallese, & Rizzolatti, 1992; Rizzolatti, Fadiga, Gallese, & Fogassi, 1996a; Gallese et al., 1996) and was soon afterwards also found in the human brain (Fadiga, Fogassi, Pavesi, & Rizzolatti, 1995; Rizzolatti et al., 1996b). “MNs [mirror neurons] respond both when a particular action is performed by the recorded monkey and when the same action performed by another individual is observed” (Gallese & Goldman, 1998, p. 495). The MSH postulates that an externally triggered action plan is activated in the observer – not for its execution – but for putting oneself in the observed person’s situation. The activation creates analogous mental occurrences in the observer as in the observed person, which allows ‘mind-reading’ of the person’s goals and intentions (Gallese & Goldman, 1998).

Two variations of the MSH have been postulated: a strong version (Gallese, Keysers, & Rizzolatti, 2004; Rizzolatti et al., 2001) and a weak version (Fogassi et al., 2005). The weak version of the MSH assumes that the goal of the observed action is mainly represented by motor simulation in the observer whereas the kinematic details of that action are less important. This assumption is consistent with the finding that the mirror neuron system of the monkey codes the same act (in this case grasping) differently depending on the goal of the action (Fogassi et al., 2005). Thereby, other factors like grasp intensity or movement kinematics were ruled out to be responsible for the different activation patterns. The strong version suggests that all parts of the action plan of observed actions (for example, the exerted force and the movement kinematics) are simulated by the motor system of the observer based on the mirror neuron system. This assumption refers to the observation that the human mirror neuron system, in contrast to that of monkeys, is also active when objectless movements of an actor are observed (Gallese et al., 2004).

The visual analysis hypothesis assumes that every element which forms an action are analysed purely based on visual information without involving the observer’s motor system (Giese & Poggio, 2003; Rizzolatti et al., 2001). Giese and Poggio (2003) proposed a neurophysiological model with two pathways: one for form processing (related to features like orientation, body shapes, etc.) and one for motion processing. This model assumes that biological motion is recognized by a set of learned patterns. “These patterns are encoded as sequences of ‘snapshots’ of body shapes by neurons in the form pathway, and by sequences of complex optic flow patterns in the motion pathway” (Giese & Poggio, 2003, p. 181). Thereby, the form pathway is located in the ventral processing stream and the motion pathway is located in the dorsal processing stream referring to the two visual pathways hypothesis by Goodale and Milner (1992).

Several studies tested the contributions of the aforementioned hypotheses to explain weight judgement performances by humans observing an actor lifting different weights (Auvray, Hoellinger, Hanneton, & Roby-Brami, 2011; Hamilton, Joyce, Flanagan, Frith, & Wolpert, 2007; Maguinness, Setti, Roudaia, & Kenny, 2013). Auvray et al. (2011) tested all three explanations with several experiments. They found evidence favouring the weak version of the MSH. Their main argument against the strong version of the MSH was based on the findings in Experiment 2. Here, five different kinematic parameters were identified that varied consistently with the lifting action (action execution) with different weights. However, observer’s weight judgements were mainly based on one parameter - in particular acceleration - although this parameter “explained a relatively small part of the variance of the information related to weight during action execution” (Auvray et al., 2011, p. 1100). Furthermore, showing the observer more details of the executed action (only the hand and the lifted object, each represented as one moving point vs. a stick diagram of the whole grasping arm) did not increase weight judgement performance (Experiment 1). The strongest evidence against the visual analysis hypothesis results from Experiment 3, where subjects were confronted with their own lifting movements and those of others. Even though subjects failed to recognise their own actions above chance level, they relied on different kinematic parameters when judging their own lifting movements compared to the movements of others.

Hamilton et al. (2007) also found evidence against the strong version of the MSH. In line with Auvray et al. (2011), they investigated lifting actions with displays showing just the arm of a model grasping a small box. They also found a difference between kinematic parameters that correlated with weight and the kinematic information used for weight judgements. Subjects did not use grasp information for their judgements even though grasp duration was a good predictor of weight.

In contrast, there are several neurophysiological studies that have found evidence in favour of the strong version of the MSH (Alaerts, Senot, Swinnen, Craighero, Wenderoth, & Fadiga, 2010; Aziz-Zadeh, Maeda, Zaidel, Mazziotta, & Iacoboni, 2002; Borroni & Baldissera, 2008; Montagna, Cerri, Borroni, & Baldissera, 2005; Strafella & Paus, 2000). By using transcranial magnetic stimulation (TMS), these studies showed activity in the primary motor cortex elicited by different parameters of an observed action. The authors detected that this activity is effector specific (Aziz-Zadeh et al., 2002), muscle specific (Strafella & Paus, 2000), and synchronized to the temporal characteristics of the observed action (Montagna et al., 2005). Furthermore, Alaerts et al., (2010) showed that corticospinal excitability while observing a model grasping and lifting an object was not only modulated by the identity of the involved muscles, but also by the exerted force of the observed action. Results revealed higher corticospinal activity in the observer when the model lifted a heavy weight as compared to a light weight.

In summary, it remains unclear to what extent the motor system of an observer is involved in weight judgement tasks. The depicted behavioural studies found evidence in favour of the weaker version of the MSH, whereas the findings of the neurophysiological studies correspond to the assumptions of the strong version of the MSH more. Therefore, this study aimed at testing the weak version of the MSH. Hence, the action goal serves as a crucial hint in the interpretation of other’s actions. Therefore, the experimental manipulation was to alter the action goal of lifting and lowering actions. We implemented this by showing lifting and lowering actions normal and in a time-reversed order of sequence. Using this procedure, to lift an object changes to placing an object while the average kinematic profiles of the actions remain the same (Lestou, Pollick, & Kourtzi, 2008). Based on the weak version of the MSH, we hypothesize that weight judgements will differ between the video play back directions even though the average movement kinematics remain constant.

Method

Participants

Thirty students (7 females, mean age: 23.3 ± 2.8 years) from the University of Kassel participated in the experiment. They were not informed about the manipulations and the hypotheses of the experiment. All individual participants included in the study gave their written consent.

Apparatus and Stimuli

The experiment was conducted on a laptop (Fujitsu Lifebook, Windows 7 SP1). Stimulus presentations and response recordings were performed/controlled by the software E-Prime 2.0 (Psychology Software Tools (PST), Inc., Sharpsburg, USA). The keys “8”, “5 “and “2” from the numeric keypad on the right side of the laptop keyboard were used as response inputs.

A male person (181 cm, 75 kg, 23 years) served as a model to produce the point-light stimuli. Eighteen 15 mm reflective markers were attached bilaterally to bony points of reference (the second metatarsal toe, heel, ankle, knee, great trochanter, acromion, elbow, wrist, middle finger and right above the ears). Marker trajectories were captured by a six-camera motion capture system (Oqus 3+, Qualisys AB, Gothenburg, Sweden) operating at 100 Hz. The model lifted and lowered each weight consecutively (12, 24 and 36 kg). The three recordings were divided into the lifting and the lowering part of the action. The 3-D coordinates of all markers were exported and entered into Matlab R2012b (Version 8.0.0.783) for video production of point-light displays and manipulation. We created an artificial marker at the midpoint between the head-markers. This virtual marker was the only head-marker visual to the participants. All movements were shown from a lateral perspective with a camera position of 1.75 m in vertical height and 3 m apart from the model. The markers were displayed as small-sized white circles on a black background. The stimulus size was 7.4° in height and 1.7° in width for the upright posture, and 5° in height and 2.5° in width for the stooped posture (see supplementary material available with this article online). All videos were cut to the same length depending on the longest movement duration (2.5 s, lowering of 36 kg). This means that the first frame of lowering 36 kg showed the model standing upright holding a box (not visible) in front of their body. In the second frame the lowering movement of the box begun. The last frame showed the moment the lowered object was placed on the ground. For the 24 kg- and 12 kg-condition, the model was shown 12 and four frames longer standing in the upright posture holding the box. The first frame of all lifting movements showed the model grasping the box but not yet starting to lift it. The movement begun within the second frame. Lifting movements took less time than lowering movements. To achieve equal stimuli lengths, the model was shown standing upright with the lifted box for 14 (12 kg), 10 (24 kg), and 9 (36 kg) frames. Videos were extended in the upright posture in order to avoid an attentional focus of the observer on the grasp phase of time-reversed lowering and original lifting movements, since grasp duration is a crucial factor for weight estimation performance (Hamilton et al., 2007).

Finally, the videos of all (original) lifting and lowering movements were time-reversed (see Table 1). That is, the sequence of frames was rendered from the last to the first frame. Furthermore, all videos were mirrored by 180° (at the vertical midline) to avoid a bias based on viewing preferences a moving person is directed to (Maass, Pagani, & Berta, 2007). The 24 final videos were sized 720 × 576 pixels, downsampled to 25 fps and were saved in mp4-file format.

Kinematic Analyses of the Stimuli

The movement kinematics of the depicted actions in the stimulus set were analysed for lifting and lowering the three different weights. Therefore, the centre of mass (CoM) of the male model performing the lifting and lowering action was calculated using Simi Motion (Version 8.0). The CoM reflects a hypothetical point were the mass of a human body is concentrated und thus allows for a simplification of human body motions. We applied the centre of mass model by Dempster (1955) to determine the velocity and acceleration of the CoM for the duration of the different actions. Velocity and acceleration have previously been demonstrated to be relevant cues for weight estimation performances (Auvray et al., 2011; Shim et al., 2009).

Design and Procedure

The experiment consisted of a within-subject design with the following conditions: two movement types (lifting and lowering), three weights (light, middle, and heavy), two video playback directions (N = normal and R = reversed) and two directions of view (to the left, to the right).

The experiment took part in a softly lit room. Participants were seated at a desk approximately 50 cm away from the laptop display. All participants were instructed in written form at the beginning of the experimental session. Afterwards, they received an explanation about the realisation of a point-light figure by showing them videos of a real person (prepared with markers) lifting and lowering a box accompanied by a corresponding point-light figure performing the same actions at the same time. Subsequently, each participant completed four blocks with 24 trials in random order (3 lifting videos with 3 weights, 3 lowering videos with 3 weights × 2 (time reversed) × 2 viewing directions). Please note that participants were not aware of the video play back manipulation. Furthermore, the videos contained no piece of information that made it possible to distinguish between a normal or reversed movement. At the beginning of the block two anchor videos of a lifting and a lowering movement were shown as a reference (normal play back direction). Participants were informed about the relative weight being moved here (middle weight). Then, they were prompted to make estimates of the weights within the next eight trials before the two anchor videos were shown again. This sequence of events was repeated eleven times, resulting in a total of 96 video ratings. Participants entered their weight judgements after each trial by pressing one of three keys of the numeric keypad on the laptop’s keyboard. Therefore, the number “2” was assigned to a light weight, the number “5” to a middle weight and the number “8” to a heavy weight. Participants had no time pressure for their judgement. The experiment waited until an input was registered. Every 24 trials a pause was offered. Participants were able to continue as soon as they felt ready.

Statistics

The weight judgements of all participants were assigned to an ordinal scale ranging from 1 to 3 (1 representing the light weight and 3 the heavy weight). The median judgements for every participant and the condition movement type, weight and video play back direction were analysed using non-parametric statistical tests. We performed Friedman Tests for every movement type (lifting, lowering) combined with every video playback direction (N, R). For post hoc analyses, Wilcoxon tests were used. We performed Bonferroni-Holm adjustments to avoid alpha error inflation (Holm, 1979). An alpha error of 5% was set for all analyses.

Results

Experiment

Friedman Tests revealed significant effects for every movement type combined with every video playback direction, lifting (N): χ2(2) = 28.8; p < .001; lifting (R): χ2(2) = 14.3; p = .002; lowering (N): χ2(2) = 15.5; p < .001; lowering (R): χ2(2) = 8.7; p = .013. Post hoc Wilcoxon Test showed significant differences in weight judgements of all weights (1.4 vs. 1.8 vs. 2.1) in the lifting (N) condition (see Table 2), light vs. middle Z = −2.9; p = .008; r = −.52; middle vs. heavy Z = −2.9; p = .008; r = −.52; light vs. heavy Z = −4.4; p < .001; r = −.8. For weight judgments in the lifting (R) condition (1.6 vs. 1.9 vs. 2.1) the post hoc test results were comparable except for the difference between the middle and the heavy weight, light vs. middle Z = −2.5; p = .024; r = −.46; middle vs. heavy Z = −1.7; p = .098; r = −.3; light vs. heavy Z = −3.2; p = .003; r = −.6. The distinction between the different weights for the lowering (N) condition (2.3 vs. 2.0 vs. 2.5) was less clear than for both lifting conditions. Post hoc tests showed a significant difference in weight judgements of the middle and heavy weight, only, light vs. middle Z = −2.2; p = .058; r = −.4; middle vs. heavy Z = −3; p = .009; r = −.54; light vs. heavy Z = −1; p = .32; r = −.18. For the lowering (R) condition there were no significant differences within the median weight judgements (2.5, 2.4, 2.7), light vs. middle Z = −0.3; p = .771; r = −.05; middle vs. heavy Z = −2.2; p = .063; r = −.4; light vs. heavy Z = −2.3; p = .063; r = −.4.

To test the impact of video playback direction on weight estimations a Wilcoxon test was performed. Results show no impact of normal and time-reversed video playback direction for lifting movements (1.85 vs. 1.8), Z = −0.5; p = .59; r = −.1. However, the Wilcoxon test revealed an impact of normal and time-reversed video playback direction for lowering movements (2.25 vs. 2.57) (see Table 2), Z = −2.7; p = .014; r = −.5.

Kinematics of the Stimulus Material

Figs. 1 and 2 show the time course of the velocity and acceleration of the CoM calculated for lifting and lowering actions performed by the stimulus model. Both parameters reflect the motion of the CoM calculated from the joint markers of the model in the two-dimensional plane of the presented videos.

Discussion

In this study, we investigated the weak version of the MSH by analysing weight judgements deduced from observed lifting and lowering movements. According to this hypothesis, we assumed different weight judgments for time-reversed videos compared to videos played back in normal temporal order. Our results could partially confirm the weak version of the MSH as weight judgements differed from the observation of both lowering conditions, but not for lifting movements. The observation of time-reversed lowering movements (thus looking like lifting movements) led to a significant general overestimation of the moved weights. In contrast, the observation of time-reversed lifting movements (thus looking like lowering movements) did not affect weight judgement performance.

Participants showed an excellent performance in their weight judgements for lifting (N) actions by just observing an acting point-light figure. On average, all three weights were judged significantly different from one another. This result is in line with literature using weight judgement tasks with full body point-light displays (e.g. Bingham, 1993; Grierson et al., 2013; Runeson & Frykholm, 1981; Shim, Carlton, & Kim, 2004). On the other hand, weight judgement performances for lowering (N) movements were poor. Participants classified the middle weight as lighter as the lightest weight, and they were not able to differentiate between the light and the heavy weight. This result could be the consequence of the less systematic variations of several kinematic parameters (see Figs. 1 and 2) within the different weights compared to the lifting action. Lifting velocity (of the box or the joints) represents a crucial cue for weight judgements (Shim et al., 2009; Shim & Carlton, 1997).

However, the weight estimation results for lowering movements do not correspond with the assumption of the use of peak lift velocity (represented in this study by the velocity of the model) as the main cue. The middle weight was judged as the lightest weight in this condition although peak velocity was nearly the same for the middle and the light weight. Hence, the peak velocity of the CoM was a less valid cue to judge the weight in lowering movements compared to lifting movements. However, as previously shown by Auvray et al. (2011), acceleration seemed to be the more informative cue here (see Fig. 2). For the lowering movements, the downward acceleration of the CoM was overall highest for the middle weight with a smaller deceleration compared to the lightest weight. Thus, participants might have relied on this parameter more in their weight judgements.

We investigated the impact of the action goal for weight judgements by playing back the original actions in reversed temporal order. This way, we preserved the kinematics (on average) but changed the goal of the action as described by Lestou et al. (2008). Changing the action goal of a lowering movement seemed to activate top-down processes of motor-related areas leading to a re-evaluation of the same kinematic profile (Viviani, Figliozzi, Campione, & Lacquaniti, 2011a). Since a differentiation between the three weights was not successful participants overestimated the moved weights significantly. Thus, imprecise weight estimations for time-reversed lowering movements seem to be the result of motor simulations of the goal of the observed action and their kinematics. This interpretation of our results corresponds with findings of Lestou et al. (2008) who showed by a fMRI analysis that the kinematic details as well as the action goal were processed concurrently but in different regions of the brain. They presented subjects two successive videos of actions that were (a) identical, (b) had different kinematics (on temporal level) but the same goal, (c) had different kinematics and different goals or (d) had different goals but the same average kinematics. The latter condition was achieved by video playback in reversed direction (like in the present study). The displayed actions (just the right arm from the right sagittal plane as point-light figure) were lifting and throwing movements that changed to placing or catching (or pulling something towards oneself) when displayed time-reversed. Subjects were either demanded to solely observe the videos, or to mentally imitate the observed actions. The authors analysed the brain scans of the subjects while observing or imitating the two sequentially presented stimuli. Their results showed increased brain activity in the parietal cortex, premotor cortex and superior temporal cortex for condition (d). For condition (b), brain activity was increased in the parietal cortex and superior temporal cortex but not in the premotor cortex. Thus, the authors argue “that processing in the PMv [ventral premotor cortex] may mediate the exact copying of complex movements, whereas processing in parietal and superior temporal areas may support the interpretation of abstract action goals and movement styles” (Lestou et al., 2008, p. 334). This neurophysiological evidence and the results of our study for the lowering (R) condition underline the importance of action goals in the interpretations of others.

Albeit, from a biomechanical point of view, the question arises if the procedure of video playback direction change truly results in two actions with the same kinematics. Kinematics describes the motion of points or objects with the dimensions time, position, velocity and acceleration. Reversing the timeline changes the geometry of an action in such a way that it begins with its end and ends with its beginning. By this, acceleration converts to deceleration and vice versa. Furthermore, geometric “shapes” resulting of the motion move in the opposite direction and thus take place to different points in time compared to its original. With this in mind, the kinematics of an action is not the same when the timeline is changed. In spite of this problem, we follow the method and use the labels of changing the goal of an action by changing the video playback direction as described by Lestou et al. (2008).

Even though our results for the lowering (R) condition emphasise the importance of the action goal, it is alleviated in our results for lifting actions. Although the kinematic profiles of lifting (N) and lowering (N) actions differ in many aspects, there seemed to be no substantial influence of top-down processes inherent in participant’s weight estimations. A lowering action is characterised by a distinct deceleration of the CoM at the end of the movement in order to place the object safely on the floor (see Fig. 2). In addition, the movement velocity is slower in general (see Fig. 1). These specific kinematic features are not inherent in time-reversed lifting movements. If goal processing is an important factor in motor simulation, then an underestimation of the perceived weights should be expected. Indeed, compared to the estimated weights in the lowering (N) condition, weight estimations were significantly reduced in the lifting (R) condition. Nevertheless, it is striking that there are no differences for weight estimations between the lifting (N) and lifting (R) condition. However, there are indications that the kinematics of the place phase are not important to the observers for their weight judgements (Auvray et al., 2011; Hamilton et al., 2007). This finding might be the reason for the null effect of video play back direction for lowering actions here. Additionally, it demonstrates the importance of movement kinematics for judging the observed actions of others.

There is an abundance of evidence that suggests that movement velocity influences weight estimations of observed lifting actions. The weight of a lift is estimated to be lighter, the faster the movement appears (Shim et al., 2009; Shim & Carlton, 1997). Furthermore, grasp duration (Hamilton et al., 2007) and acceleration (Auvray et al., 2011) were identified as crucial indicators which observers rely on in their weight judgements. Obviously, movement execution of lowering actions is slower in general compared to lifting actions (see Fig. 1). In addition, acceleration is considerably reduced and grasp duration or the first lift phase, respectively, is distinctly longer compared to an original lifting action (see Fig. 2b, deceleration at the end of the action). According to the ideomotor principle (James, 1890), which the concept of motor simulation is based on, the sensory consequences caused by the execution of an action are assigned to the respective motor program. Therefore, the motor program of a certain action can be activated by the anticipation or observation of its effects. Projecting oneself in a lifting situation with a slow first lift phase seemed to activate effect representations that are usually perceivable while lifting heavy weights. In addition, the light acceleration and thus deceleration led to the impression that very heavy weights were moved in that condition, too.

In conclusion, our findings favour the weak version of the MSH. Our study showed on the one hand, that the action goal is crucial for the interpretation of other person’s actions, but this was not the case for all conditions. On the other hand, we showed that specific kinematics of an observed action are crucial for action interpretations while others seemed to play only a minor role. This outcome does not correspond with the assumptions of the strong version of the MSH. The most interesting finding here is the re-evaluation of movement kinematics depending on the goal of an action as shown for lowering actions. This result is a convincing demonstration for the assignment of action effects (movement kinematics) to motor commands, whose execution lead to those effects to reach a superior action effect, namely the action goal.

Data Availability Statement

The datasets generated during and analysed during the current study are available from the corresponding author on reasonable request.

References

Alaerts, K., Senot, P., Swinnen, S. P., Craighero, L., Wenderoth, N., & Fadiga, L. (2010). Force requirements of observed object lifting are encoded by the observer's motor system: A TMS study. The European Journal of Neuroscience, 31(6), 1144–1153. https://doi.org/10.1111/j.1460-9568.2010.07124.x

Auvray, M., Hoellinger, T., Hanneton, S., & Roby-Brami, A. (2011). Perceptual weight judgments when viewing one’s own and others’ movements under minimalist conditions of visual presentation. Perception, 40, 1081–1103. https://doi.org/10.1068/p6879 .

Aziz-Zadeh, L., Maeda, F., Zaidel, E., Mazziotta, J., & Iacoboni, M. (2002). Lateralization in motor facilitation during action observation: A TMS study. Experimental Brain Research, 144, 127–131. https://doi.org/10.1007/s00221-002-1037-5 .

Bingham, G. P. (1993). Scaling judgments of lifted weight: Lifter size and the role of the standard. Ecological Psychology, 5, 31–64. https://doi.org/10.1207/s15326969eco0501_2 .

Blake, R., & Shiffrar, M. (2007). Perception of human motion. Annual Review of Psychology, 58, 47–73. https://doi.org/10.1146/annurev.psych.57.102904.190152 .

Borroni, P., & Baldissera, F. (2008). Activation of motor pathways during observation and execution of hand movements. Social Neuroscience, 3, 276–288. https://doi.org/10.1080/17470910701515269 .

Dempster, W. (1955). Space requirements of the seated operator: Geometrical, kinematic, and mechanical aspects of the body with special reference to the limbs. Wright-Patterson Air Force Base Ohio: Wright Air Development Center.

di Pellegrino, G., Fadiga, L., Fogassi, L., Gallese, V., & Rizzolatti, G. (1992). Understanding motor events: A neurophysiological study. Experimental Brain Research, 91, 176–180.

Dittrich, W. H., Troscianko, T., Lea, S. E., & Morgan, D. (1996). Perception of emotion from dynamic point-light displays represented in dance. Perception, 25, 727–738. https://doi.org/10.1068/p250727 .

Fadiga, L., Fogassi, L., Pavesi, G., & Rizzolatti, G. (1995). Motor facilitation during action observation: A magnetic stimulation study. Journal of Neurophysiology, 73(6), 2608–2611.

Fogassi, L., Ferrari, P. F., Gesierich, B., Rozzi, S., Chersi, F., & Rizzolatti, G. (2005). Parietal lobe: From action organization to intention understanding. Science, 308, 662–667. https://doi.org/10.1126/science.1106138 .

Gallese, V., & Goldman, A. (1998). Mirror neurons and the simulation theory of mind-reading. Trends in Cognitive Sciences, 2(12), 493–501. https://doi.org/10.1016/S1364-6613(98)01262-5

Gallese, V., Fadiga, L., Fogassi, L., & Rizzolatti, G. (1996). Action recognition in the premotor cortex. Brain, 119, 593–609. https://doi.org/10.1093/brain/119.2.593 .

Gallese, V., Keysers, C., & Rizzolatti, G. (2004). A unifying view of the basis of social cognition. Trends in Cognitive Sciences, 8, 396–403. https://doi.org/10.1016/j.tics.2004.07.002 .

Giese, M. A., & Poggio, T. A. (2003). Neural mechanisms for the recognition of biological movements. Nature Reviews. Neuroscience, 4, 179–192. https://doi.org/10.1038/nrn1057 .

Goodale, M. A., & Milner, A. D. (1992). Separate visual pathways for perception and action. Trends in Neurosciences, 15, 20–25. https://doi.org/10.1016/0166-2236(92)90344-8 .

Grierson, L. E. M., Ohson, S., & Lyons, J. (2013). The relative influences of movement kinematics and extrinsic object characteristics on the perception of lifted weight. Attention, Perception & Psychophysics, 75, 1906–1913. https://doi.org/10.3758/s13414-013-0539-5 .

Hamilton, A. F. D. C., Joyce, D. W., Flanagan, J. R., Frith, C. D., & Wolpert, D. M. (2007). Kinematic cues in perceptual weight judgement and their origins in box lifting. Psychological Research, 71, 13–21. https://doi.org/10.1007/s00426-005-0032-4 .

Holm, S. (1979). A simple sequentially rejective multiple test procedure. Scandinavian Journal of Statistics, 6(2), 65–70.

James, W. (1890). The principles of psychology, Vol. I, II. Cambridge, MA: Harvard University Press.

Johansson, G. (1973). Visual perception of biological motion and a model for its analysis. Perception & Psychophysics, 14, 201–211. https://doi.org/10.3758/BF03212378 .

Kozlowski, L. T., & Cutting, J. E. (1977). Recognizing the sex of a walker from a dynamic point-light display. Perception & Psychophysics, 21, 575–580. https://doi.org/10.3758/BF03198740 .

Lestou, V., Pollick, F. E., & Kourtzi, Z. (2008). Neural substrates for action understanding at different description levels in the human brain. Journal of Cognitive Neuroscience, 20, 324–341. https://doi.org/10.1162/jocn.2008.20021 .

Maass, A., Pagani, D., & Berta, E. (2007). How beautiful is the goal and how violent is the fistfight?: Spatial Bias in the interpretation of human behavior. Social Cognition, 25, 833–852. https://doi.org/10.1521/soco.2007.25.6.833 .

Maguinness, C., Setti, A., Roudaia, E., & Kenny, R. A. (2013). Does that look heavy to you? Perceived weight judgment in lifting actions in younger and older adults. Frontiers in Human Neuroscience, 7, 795. https://doi.org/10.3389/fnhum.2013.00795 .

Montagna, M., Cerri, G., Borroni, P., & Baldissera, F. (2005). Excitability changes in human corticospinal projections to muscles moving hand and fingers while viewing a reaching and grasping action. The European Journal of Neuroscience, 22, 1513–1520. https://doi.org/10.1111/j.1460-9568.2005.04336.x .

Pollick, F. E., Kay, J. W., Heim, K., & Stringer, R. (2005). Gender recognition from point-light walkers. Journal of Experimental Psychology. Human Perception and Performance, 31, 1247–1265. https://doi.org/10.1037/0096-1523.31.6.1247 .

Rizzolatti, G., Fadiga, L., Gallese, V., & Fogassi, L. (1996a). Premotor cortex and the recognition of motor actions. Cognitive Brain Research, 3, 131–141. https://doi.org/10.1016/0926-6410(95)00038-0 .

Rizzolatti, G., Fadiga, L., Matelli, M., Bettinardi, V., Paulesu, E., Perani, D., & Fazio, F. (1996b). Localization of grasp representations in humans by PET: 1. Observation versus execution. Experimental brain research, 111, 246–252.

Rizzolatti, G., Fogassi, L., & Gallese, V. (2001). Neurophysiological mechanisms underlying the understanding and imitation of action. Nature Reviews. Neuroscience, 2, 661–670. https://doi.org/10.1038/35090060 .

Runeson, S., & Frykholm, G. (1981). Visual perception of lifted weight. Journal of Experimental Psychology. Human Perception and Performance, 7, 733–740. https://doi.org/10.1037/0096-1523.7.4.733 .

Sebanz, N., Bekkering, H., & Knoblich, G. (2006). Joint action: Bodies and minds moving together. Trends in Cognitive Sciences, 10, 70–76. https://doi.org/10.1016/j.tics.2005.12.009 .

Shim, J., & Carlton, L. G. (1997). Perception of kinematic charcteristics in the motion of lifted weight. Journal of Motor Behavior, 29, 131–146. https://doi.org/10.1080/00222899709600828 .

Shim, J., Carlton, L. G., & Kim, J. (2004). Estimation of lifted weight and produced effort thruough perception of point-light display. Perception, 33, 277–291. https://doi.org/10.1068/p3434 .

Shim, J., Hecht, H., Lee, J.-E., Yook, D.-W., & Kim, J.-T. (2009). The limits of visual mass perception. Quarterly Journal of Experimental Psychology, 62, 2210–2221. https://doi.org/10.1080/17470210902730597 .

Strafella, A. P., & Paus, T. (2000). Modulation of cortical excitability during action observation: A transcranial magnetic stimulation study. Neuroreport, 11(10), 2289–2292.

Viviani, P., Figliozzi, F., Campione, G. C., & Lacquaniti, F. (2011a). Detecting temporal reversals in human locomotion. Experimental Brain Research, 214, 93–103. https://doi.org/10.1007/s00221-011-2809-6 .

Viviani, P., Figliozzi, F., & Lacquaniti, F. (2011b). The perception of visible speech: Estimation of speech rate and detection of time reversals. Experimental Brain Research, 215, 141–161. https://doi.org/10.1007/s00221-011-2883-9 .

Funding

Open Access funding enabled and organized by Projekt DEAL.

Author information

Authors and Affiliations

Corresponding author

Ethics declarations

Conflict of Interest

The authors declare that they have no conflict of interest.

Ethical Approval

All procedures performed in studies involving human participants were in accordance with the ethical standards of the local ethics committee and with the 1964 Helsinki declaration and its later amendments.

Additional information

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

(MP4 38 kb)

(MP4 39 kb)

(MP4 36 kb)

(MP4 36 kb)

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Braun, C., Fischer, S. & Eckardt, N. Weight estimations with time-reversed point-light displays. Curr Psychol 41, 7032–7040 (2022). https://doi.org/10.1007/s12144-020-01196-z

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s12144-020-01196-z