Abstract

Following other contributions to this journal about the MAX accidents, this paper explores the role of betrayal and moral injury in safety engineering in connection with the U.S. federal regulator’s approval of the Boeing 737 MAX, a plane involved in two crashes that together killed 346 people. It discusses the tension between humility and hubris when engineers are faced with complex systems that create ambiguity, uncertain judgements, and equivocal test results from unstructured situations. It considers the relationship between moral injury, principled outrage and rebuke when the technology ends up involved in disasters. It examines the corporate backdrop against which calls for enhanced employee voice are typically made, and argues that when engineers need to rely on various protections and moral inducements to ‘speak up,’ then the ethical essence of engineering (skepticism, testing, checking, and questioning) has already failed.

The Repentance

Not long ago in this journal, Herkert et al. (2020) analyzed and commented on the Boeing 737 MAX accidents and their ethical implications for engineering. Among their key calls are fostering moral courage (the courage to act on one’s moral convictions, including adherence to codes of ethics) and strengthening the voice of engineers within large organizations. At the end of their article, Herkert and colleagues intimate a sense of déjà vu: large-scale tragedies involving engineering decision-making are not new, nor are the calls for engineers to speak up and for their organizations to empower them (Feldman, 2004; Vaughan, 2005; Verhezen, 2010). Even more recently in this journal, Englehardt, Werhane and Newton (2021) pick up on this and point to the immense difficulties and ethical challenges engineers confront when faced with organizational malfeasance. We agree with Englehardt and colleagues: if an engineer needs ‘moral courage’ simply to do their job, then there is a major moral and systemic (or, as Herkert and colleagues say, ‘macroethical’) failure whose remedies cannot sustainably or reliably come from front-line moral heroism (cf. Tkakic, 2019). In this paper, we take this a step further, tracing the repentance and rebuke of one particular safety engineer (employed by the regulator, the Federal Aviation Administration) and exploring what they mean for the question of moral courage in engineering.

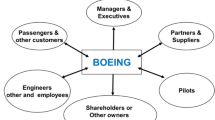

Early in 2021, a Federal Aviation Administration (FAA) safety engineer based in Seattle publicly denounced the federal regulator’s role in approving the Boeing 737 MAX, a plane involved in two crashes that together killed 346 people. What drove him to speak out while still employed was his renewed faith, deeply intertwined with an active repentance for his role in failing to prevent the disasters (Gates, 2021). In a detailed letter sent to one of the families involved in the second crash, and in several interviews, the safety engineer explained that he should have been, but was not, among the regulator’s specialists who assessed the MAX’s critical new flight control software. The safety engineer had no involvement until after the first MAX accident, in Indonesia in 2018. Senior FAA managers had focused on fulfilling the demands of industry, while the agency itself struggled to meet its regulatory mandate under conditions of deregulation and limited resources (Englehardt et al., 2021). As a result, manufacturers did much of their own certification, a process that could leave senior engineers at the regulator unaware of critical new systems or of changes and additions to existing systems. Boeing had put pressure on its employees to keep the implications of design and software changes to the 737 minor or invisible, and to get the aircraft through regulatory approval without extra pilot training requirements (Defazio & Larsen, 2020).

While the safety engineer’s exclusion might have mitigated feelings of remorse and guilt, he was nonetheless the most experienced engineer in the regulator’s Seattle office. He admitted afterward that he “felt a strong conviction that I should help with healing the families of the 737 MAX crashes” (Gates, 2021, p. 13). He indicated that his regret arose from his failure to exercise the responsibilities of his role and to deploy his expert knowledge. Sharpe (2004) reminds us that this is not so much about exacting retrospective responsibility, or ‘backward-looking accountability,’ which focuses on finding someone to blame for (not) having done something. Rather, it is a reminder of forward-looking accountability, linked to goal-setting and moral deliberation, which are themselves embedded in the particular roles that people occupy. Prospective responsibility, or forward-looking accountability, is oriented toward the deliberative and practical processes involved in setting and meeting goals. In the case of safety regulation, the overriding goal of the entire system is avoiding harm to other people. A failure of forward-looking accountability, then, can be seen here as a failure to deploy the roles and knowledge within the system in the service of risk reduction and harm avoidance.

The Moral Injury

‘Moral injury’ is increasingly recognized as one consequence when one’s ethical framework is broadsided or even broken by the actions of others or oneself. The term was first used by Shay in the mid-1990s to describe the “betrayal of what is right by someone in legitimate authority in a high stakes situation” (Shay, 2014, p. 181). Moral injury can be triggered by self-accusation over something one did or failed to do. But things can also be done to someone, who suffers moral injury as a result. This means that a betrayal by those in authority within the manufacturer or the regulator would have been but one aspect of the moral injury. Moral injury also arises when one transgresses one’s own moral compass, leaving what Litz et al. (2009, p. 697) describe as “the lasting psychological, biological, spiritual, behavioral and social impact of perpetrating, failing to prevent, or bearing witness to acts that transgress deeply held moral beliefs and expectations.” In that case, the safety engineer would have suffered a double moral wound.

Betrayal is a central theme for those who are morally injured and has been an almost ubiquitous human experience over the ages. Individuals such as Judas, Brutus, and Cassius betrayed leaders and nations, leading Dante to place them at the very center of hell, in the circle reserved for betrayers. Accounts of these historical betrayals have often focused on the individual as the traitor who breaks the fraternal bonds of ‘thick’ human relationships (Margalit, 2017). Organizations, too, can betray individuals, with destructive consequences (Smith & Freyd, 2014). Smith and Freyd focused on organizations that betray individuals who report sexual abuse, but others have identified institutional betrayal in cases of public safety, finding that distrust and a sense of betrayal are heightened when the disaster or crisis can be traced to human agency: to human-made decisions, judgments or errors (Sobeck et al., 2020). When engineers believe they are part of an organization dedicated to safety in a high-stakes context such as aviation, only to find that this dedication is being (or has been) undermined, the concept of institutional betrayal must be considered. Roger Boisjoly’s failed attempts to stop the launch of the Challenger in 1986 are a well-publicized precedent (Vaughan, 1996).

Hindsight and outcome knowledge, of course, tend to single out the possible role of one’s omissions. They connect the bad outcome to one’s inaction in a way that (over-)emphasizes one’s role and tends to finesse the contribution of a host of other factors (Baron & Hershey, 1988). Many of those factors would likely have overridden whatever one might have done in an attempt to alter the organizational course of action (Dekker et al., 2011). Memories of such events, however, are vividly organized around the counterfactual: ‘what if I had acted differently at that juncture or opportunity?’ (Dekel & Bonanno, 2013; Fischhoff & Beyth, 1975). This implies that repentance is called for because not doing the right thing was supposedly a rational choice (Dekker, 2007).

The Humility and Hubris

Which, of course, it wasn’t. Technologies, says Wynne, are ‘unruly.’ They are less orderly, less rule-bound, less controlled and less universally reliable than we think. Technology evolves uncertainly according to innumerable ad hoc judgments and assumptions. It may present itself with a neat aesthetic of tidy practices, compliant regulatory submissions and formal procedures, but engineers, Wynne reminds us, operate with far greater levels of ambiguity; they need to make judgements in less than clearly structured situations. This is particularly the case when a completed system is seen as no more than the sum of its parts (indeed, a version of the ‘many hands’ problem noted by Herkert et al.), one whose essence will not be changed by some minor aerodynamic or software tweaks. This view ignores the complex interactions and the possible emergence of novel behaviors that were not in the original equation set (the aerodynamic pitch-up problem that eventually led Boeing to introduce MCAS, the Maneuvering Characteristics Augmentation System, is itself an example). Eventually, only the technological system, unleashed into practice, becomes the ‘real’ test of its own reliability and safety (Weingart, 1991). This realization, and the humility it requires in the face of complexity and uncertainty, demands, at least in theory, an entirely different response:

After the unthinkable has happened — ‘Why did you ever think there was such a thing as zero risk, and how naive can you be to imagine that technical knowledge does not harbour areas of uncertainty, even legitimate ignorance? We cannot be blamed for the inevitable uncertainties that exist in developing expert knowledge and technological systems’. (Wynne, 1988, p. 150)

But that may not be typical of how an engineer feels about her or his contribution to the success and failure of a technology. An engineer may be forgiven for, and indeed be professionally committed to, trying to reduce the uncertainties inherent in a technology and its deployment. To that end, engineering encompasses a wide range of activities that are, at their core, trade-offs among numerous design goals under conditions of limited means, knowledge and technological capability. Gaining experience with a legacy system, and making seemingly ‘minor’ tweaks to it, can actively contribute to a sense of certainty that risks are both known and under control. Ironically, it is a lack of failure with the system in question, at least in recent history, that can help set it on course for failure (Petroski, 2000). The greatest risk of already safe but complex systems, indeed, lies in their apparent absence of risk: their continued success (Dekker, 2011). As Petroski explains:

Success and failure in design are intertwined. Though a focus on failure can lead to success, too great a reliance on successful precedents can lead to failure. Success is not simply the absence of failure; it also masks potential modes of failure. Emulating success may be efficacious in the short term, but such behavior invariably and surprisingly leads to failure itself. (Petroski, 2018, p. 3)

Petroski warns that a strategy of emulating one’s own past success is braided through the human tendency toward hubris about safety (Petroski, 2006). As Pidgeon and O’Leary (2000, p. 16) put it: “despite the best intentions of all involved, the objective of safely operating technological systems could be subverted by some very familiar and ‘normal’ processes of organizational life.” One of these processes is securing a market for the technology developed. For the MAX, this involved an early commitment to maximum interoperability across the various generations of 737s, particularly to avoid the imposition of additional pilot training requirements by the regulator. Control software modifications were necessary to achieve that. They seemed small, meant to yield local advantages in similarity, efficiency or versatility (Woods & Dekker, 2000).

In dealings with the FAA, this meant that nothing noteworthy would or should be revealed outside the narrow scope of the earliest tests, which helped cement the classification of an addition to the control system as only ‘hazardous.’ This in turn meant that limited (or no further) testing was required, because earlier tests had shown that no tests were required (an engineering circularity that has been referred to as ‘the self-licking ice cream cone’ (Worden, 1992)). In the meantime, however, to handle new aerodynamic discoveries, the manufacturer had to enhance the authority of the automatic control system, with limited disclosure to the regulator and other stakeholders, even internal ones (FAA, 2020).

What the reforms proposed by the FAA in the wake of the MAX accidents fail to do assertively, even as more technology and automation will surely penetrate legacy systems and form the basis of novel ones, is to acknowledge that tests must have the potential to reveal factors that fall outside of what engineering had thought needed to be considered up to that point. Testing has to be able to reveal things that can matter: things that engineers did not know would come up, did not think needed to be considered, or assumed would not have mattered even if they did. There is no (systems) engineering unless it actualizes a capability to recognize anomalies that lead to re-assessments and re-designs. The risk that engineering will uncover problems and require change and further testing has to be accepted as part of engineering and engineering oversight, despite the budgetary and timeline implications. In the face of increasingly complex systems, regulatory work runs the risk of being piecemeal and narrow, and may not be able to uncover bigger gaps or orphaned concerns. The regulator, specifically, has to answer the question of whether engineering has sufficiently questioned or re-assessed the proposed designs, and it has to remain vigilant for other pressures that undercut or eliminate engineering’s role in questioning and testing its own work.

The Decay and the Disaster

Such pressures, however, were rife, even within the FAA. The engineer’s repentance does not stem from one employee’s failure to muster the heroism to ‘voice’ his concerns. Instead, the entire system of prospective accountability on which a safety regulator’s work is supposedly founded had eroded due to a number of factors. A US Senate inquiry in the aftermath of the MAX accidents found:

common themes among the allegations including insufficient training, improper certification, FAA management acting favorably toward operators, and management undermining of frontline inspectors. The investigation revealed that these trends were often accompanied by retaliation against those who report safety violations and a lack of effective oversight, resulting in a failed FAA safety management culture. (Wicker, 2020, p. 2)

The ‘failed safety management culture’ of the regulator was itself a complex result of forces that had been decades in the making. Deregulation and cutbacks at some point leave a government agency with few options but to rely for technical know-how and expertise on the very manufacturer it is supposed to regulate (Bier et al., 2003). The resulting safety sacrifices are predictable; they are the systemic effects of deliberate political choices related to deregulation. Erosion of the organization’s core functions continues even while people inside cling to the aesthetics of its former authority and an idealized image of its own character. As Schwartz observes:

The most important consequence of such decay is a condition of generalized and systemic ineffectiveness. It develops when an organization shifts its activities from coping with reality to presenting a dramatization of its own ideal character. In [such an] organization, flawed decision making of the sort that leads to disaster is normal activity, not an aberration. (Schwartz, 1989, p. 319)

According to the safety engineer, by the time the MAX was assessed and certified, the FAA had become an organization with a militaristic chain of command, in which lower-level employees could offer opinions when asked but otherwise had to ‘sit down and shut up.’ The engineer now says he wishes he had not gone along with that (Gates, 2021). After the second crash of a Boeing 737 MAX, the engineer still was not assigned to work on the fix, so he attended meetings of his own accord and offered his expertise. A program manager asked him why he was there, and the engineer answered “because I’m pissed” (Gates, 2021, p. 13). Such repentant outrage at having been betrayed and excluded, “restrained by reason and a resolve to see justice” (Fox, 2019, p. 211), can serve as a rebuke only to those who are attentive and receptive.

The Remedies

One popular remedy (or putative way to pre-empt the very situation that might lead to moral injury) is telling engineers to try harder: the requirement or expectation that engineers and knowledgeable others ‘speak up,’ using their voice even in the face of pressures to remain silent. ‘Employee (or engineer) voice’ in this sense is defined in the literature as:

any attempt at all to change, rather than to escape from, an objectionable state of affairs, whether through individual or collective petition to the management directly in charge, through appeal to a higher authority with the intention of forcing a change in management, or through various types of actions or protests, including those that are meant to mobilize public opinion. (Hirschman, 1970, p. 30)

Margalit (2017) recognizes the possibility of retaliation. Insiders who speak up against the express (or tacit but dominant) goals of their organization can be portrayed as traitors to their employer, community and colleagues. Speaking up can be a relationally fraught exercise, regardless of legal protections or professional exhortations. To do so, then,

…engineers need support. They cannot be expected to sacrifice their jobs and perhaps their careers by standing up to management power. In addition to government whistle-blowing protection, professional engineering associations need to provide legal, financial and employment services to individuals who are unfairly punished for speaking out on unsafe engineering projects. This is an example of ‘countervailing powers’ … needed in organizations working in high-risk environments. (Feldman, 2004, p. 714)

The FAA should have been the right place for this; that perhaps should have gone without saying. Not only is the very role of a regulator to discharge its prospective accountability (and hearing bad news from employees is very much part of that), but organization science also suggests that speaking up should actually be easier in government organizations. Research shows that:

employees who experienced psychological safety were more likely to exhibit voice behavior; employee voice, in turn, promoted work engagement. (Ge, 2020, p. 1)

Private enterprise doesn’t enjoy the best preconditions for such a climate of psychosocial safety (Becher & Dollard, 2016; Zadow et al., 2017) and employee engagement (Kaufman, 1960). Everything (and everyone) needs to be focused, in principle, on the company’s mission and on short-term results (Galic et al., 2017). Middle managers in private business are more rule-bound and protocol-conscious, and pay more deference to formal structure, than their government counterparts. One explanation lies in the contingent nature of private employment and in hierarchical control and accountability:

Business organizations clearly have a greater capacity to exert such control, and by implication to heighten individual sensitivity to the structural instruments of control. Such organizations may coerce compliance, if necessary up to the point of discharging an employee … this is a critical power. A middle manager in an economic organization is accordingly under constant competitive pressure to produce results and to display the norms and values prevailing in his administrative climate. Security, advancement, and ultimate success are conditioned on acceptable performance and behavior throughout the managerial career. (Buchanan, 1975, p. 436)

In government agencies, the relationship between individual work and organizational mission is less clear-cut. Government agencies also have stronger systems of appeal that acknowledge the procedural due process rights of individuals (whether employees or citizens). Buchanan (1975) found that in government agencies, control and accountability operate largely through persuasion rather than through the control, surveillance, counting and coercion found in private corporations. The differences, however, are perhaps too easily overstated, and are less clear-cut than they once were. There are complex interpersonal, social, cultural, emotional, hierarchical, ethical and operational factors that all enter into deliberations about whether or not to speak up (Orasanu & Martin, 1998; Salas et al., 2006; Weber et al., 2018). The convergence of constraints and expectations across government and private organizations since the 1980s has gradually erased some of the unique aspects of federal employment, such as the greater autonomy and ‘voice’ it once offered. Engineers have lost their voice before in a government agency under significant financial strain. Vaughan studied the institutional arrangements within NASA in the 1980s and found that its engineers were

‘servants of power:’ carriers of a belief system that caters to dominant industrial … interests. The argument goes as follows: Located in and dependent on organizations whose survival is linked to the economies of technological production and to rationalized administrative procedures, the engineering worldview includes a preoccupation with (1) cost and efficiency, (2) conformity to rules and hierarchical authority, and (3) production goals… Engineering loyalty, job satisfaction, and identity come from the relationship with the employer, not from the profession. Engineers do not resist the organizational goals of their employers; they use their technical skills in the interest of those goals. (Vaughan, 1996, p. 205)

Vaughan’s observation suggests not only that calls for engineers to speak up mischaracterize the relationship between engineers and their organizations, whether private or governmental, but also that these calls are misguided. It isn’t as if engineers resist goals that arise at the level of an organization in interaction with its environment. They make those goals their own, or come to see them as such. These are no longer decisions and trade-offs made by the organization, but problems proudly owned by individuals or teams of engineers. It is an insidious but desired delegation, an internalization of external pressure facilitated by Vaughan’s “loyalty, job satisfaction and identity” and by engineers’ pride of workmanship (Deming, 1982): demonstrating that they can do more with less, that they can find solutions where others have given up. This might render calls for engineers to ‘speak up’ slightly ridiculous. To whom, after all, would they be speaking up?

In hindsight, outrage about an engineer not speaking up can lead some to make demands and call for leverage that not only insult the professional ethic and commitment of engineers in general, but also actively work against a climate of psychological safety inside the organizations that employ them:

once support is available to counter loss of one’s career from speaking out about dangerous technologies, engineering societies need to require engineers to act in accordance with the prevent-harm ethic. This requirement must include both training to inculcate the prevent-harm ethic and sanctions—up to losing one’s license—when the ethic is violated. (Feldman, 2004, p. 714)

Feldman’s is not just a call for ‘safety heroes’ (Reason, 2008); it is a threat to vilify those who aren’t. But thinking about the problem this way is more than a mischaracterization. It is a capitulation. It is an admission that the system of prospective accountability, on which the entire role of a regulator and engineering oversight is premised, has already failed. An engineer employed by a regulator for the explicit purpose of evaluating, assessing, checking and assuring the engineering of a manufacturer’s software or system should not have to be told what a ‘test’ is, or rely on whistleblower protection or on individual ethical heroics to probe further and be heard when something is uncovered. She or he should not have to be threatened with losing professional certification to feel compelled to look further and raise her or his voice. If that is what the field has come down to, then something much deeper, and wider, is amiss. This, of course, is the argument some have made: what is amiss is a steady, decades-long drift into disaster by extractive capitalism itself (Albert, 1993; Saull, 2015; Tkakic, 2019).

The Rebuke

Guilt arising from moral injury can lead to (self-)destructive tendencies (Jamieson et al., 2020). For the FAA safety engineer, however, it led to repentance, and to growth through the expression of constructive anger. His rebuke, or principled moral outrage (Rushton, 2013), led him to review the disaster and the wider conditions around it, and to express compassion and empathy. These, too, are hallmarks of principled moral outrage. Let’s turn to the context so that we might better understand the reach of the rebuke.

In 2004, not long after Boeing had relocated its headquarters to Chicago from Seattle, the then COO remarked that “When people say I changed the culture at Boeing, that was the intent, so it’s run like a business rather than a great engineering firm… people want to invest in a company because they want to make money” (Defazio & Larsen, 2020, p. 34). By the time the 737 MAX was launched, Boeing—by the deliberate design of its leaders—may no longer really have been an engineering company. Where there had been twenty engineers on a part of a similar project before, there was only one such engineer for the 737 MAX. In 2014 the FAA accepted Boeing’s initial certification basis for the 737 MAX. Boeing’s stock price had been rising for a year as MAX orders rolled in. Additional sales with record profits were predicted. The company decided that from 2014 on,

a significant portion of our named executive officers’ long-term incentive compensation will be tied to Boeing’s total shareholder return as compared to a group of 24 peer companies. (Lazonick & Sakinc, 2019, p. 2)

Shareholder value and shareholder returns became a priority. As some have observed, this priority offered a bounty to those who had a lot of shares to begin with, including the company’s senior management. Yet the connection between rewards in shares and product safety problems was identified around the same time:

Stock options are thought to align the interests of CEOs and shareholders, but scholars have shown that options sometimes lead to outcomes that run counter to what they are meant to achieve. Building on this research, we argue that options promote a lack of caution in CEOs that manifests in a higher incidence of product safety problems. (Wowak et al., 2015, p. 1082)

Under extractive financial pressure, engineering trade-offs became biased toward maximal grandfathering, a reduced need for oversight, fewer people allocated, accelerated approvals and no additional training requirements (Denning, 2013; Imberman, 2001). Subsequent research suggested (but could, of course, not prove) that with less of a singular focus on stock price during these years, resources could have been available for safe design and development, including adequate testing and safety analyses (Lazonick & Sakinc, 2019). Nudging trade-offs away from extractive tendencies requires a change in leadership posture, from corporate warrior to guardian spirit (Baker, 2020; Blumberg et al., 2022): listening to staff and customers, and committing not just to vague aspirations of accountability and transparency (Woodhead, 2022), but to the testable specificities of honesty and truth-telling. Repentance involves a commitment to tell the truth despite its costs. The language used both reflects and creates culture, particularly around honesty (Schauer & Zeckhauser, 2007). This means resisting the concealment of truth, economy with the truth, and linguistic contortions that do not outright lie but do deceive (The United States Department of Justice, 2021). To create a culture where truth is valued, it is important to actually use the word ‘truth,’ and to nurture “a talent for speaking differently, rather than for arguing well, [as] the chief instrument of cultural change” (Rorty, 1989, p. 7). Accountability, if that word must be used, should be of the forward-looking kind (Sharpe, 2003): deeply connected to roles and responsibilities, and focused on what needs to be done to set people inside the organization, and those tasked with its oversight, up for success.

The rebuke of the FAA safety engineer, though, goes further. It reads less like the critical scrutiny of a specific act, situation or person and more like an impeachment of the political-economic and societal arrangements that enabled the disaster to incubate at the nexus between Wall Street, the regulator and the manufacturer. The irony is that the aircraft in question was going to be a huge commercial success no matter what. Yet ever more resources were drained from the project through extractive shareholder-capitalist arrangements (e.g., share buybacks) in which major shareholders are also the chief internal decision-makers of the company. Such arrangements have made proud organizations drift into failure before (Mandis, 2013; McLean & Elkind, 2004). Going beyond its utility as personal repentance, the rebuke in the case described here perhaps serves as a political statement about ideological fandoms: deregulation, free markets and the sanctity of share price on the one hand, versus meaningful government involvement, engineering professionalism and old-fashioned metrics like earnings and operating margins on the other. The rebuke’s real subject is indignation over the kind of corporatization, deregulation and financialization that makes some shareholders wildly rich while deprioritizing engineering norms, expertise and technical know-how. It was principled moral outrage that inspired the safety engineer to step up in the first place: in his own words, as quoted, ‘enraged by sinful greed’ while ‘the righteous perish’ (Gates, 2021, p. 13).

References

Albert, M. (1993). Capitalism against capitalism. Whurr Publishers.

Baker, D. P. (2020). Morality and ethics at war: Bridging the gaps between the soldier and the state. Bloomsbury Publishing.

Baron, J., & Hershey, J. C. (1988). Outcome bias in decision evaluation. Journal of Personality and Social Psychology, 54(4), 569–579.

Becher, H., & Dollard, M. (2016). Psychosocial safety climate and better productivity in Australian workplaces: Costs, productivity, presenteeism, absenteeism. Safe Work Australia.

Bier, V., Joosten, J., Glyer, D., Tracey, J., & Welsh, M. (2003). Effects of deregulation on safety: Implications drawn from the aviation, rail, and United Kingdom nuclear power industries. Kluwer.

Blumberg, D. M., Papazoglou, K., & Schlosser, M. D. (2022). The POWER manual: A step-by-step guide to improving police officer wellness, ethics, and resilience [Kindle].

Buchanan, B. (1975). Red tape and the service ethic: Some unexpected differences between public and private managers. Administration & Society, 6, 423–444.

Defazio, P. A., & Larsen, R. (2020). The design, development and certification of the Boeing 737 MAX (Final Committee Report). The House Committee on Transportation and Infrastructure.

Dekel, S., & Bonanno, G. A. (2013). Changes in trauma memory and patterns of posttraumatic stress. Psychological Trauma: Theory, Research, Practice, and Policy, 5(1), 26–34.

Dekker, S. W. A. (2007). Eve and the serpent: A rational choice to err. Journal of Religion & Health, 46(1), 571–579.

Dekker, S. W. A. (2011). Drift into failure: From hunting broken components to understanding complex systems. Ashgate Publishing Co.

Dekker, S. W. A., Cilliers, P., & Hofmeyr, J. (2011). The complexity of failure: Implications of complexity theory for safety investigations. Safety Science, 49(6), 939–945.

Deming, W. E. (1982). Out of the crisis. MIT Press.

Denning, S. (2013). What went wrong at Boeing. Strategy & Leadership, 41(3), 36–41. https://doi.org/10.1108/10878571311323208

Englehardt, E., Werhane, P. H., & Newton, L. H. (2021). Leadership, engineering and ethical clashes at Boeing. Science and Engineering Ethics, 27(1), 12. https://doi.org/10.1007/s11948-021-00285-x

FAA. (2020). Timeline of activities leading to the certification of the Boeing 737 MAX 8 aircraft and actions taken after the October 2018 Lion Air Accident (Report No. AV2020037). Federal Aviation Administration, U.S. Department of Transportation, Office of Inspector General.

Feldman, S. P. (2004). The culture of objectivity: Quantification, uncertainty, and the evaluation of risk at NASA. Human Relations, 57(6), 691–718.

Fischhoff, B., & Beyth, R. (1975). “I knew it would happen”: Remembered probabilities of once-future things. Organizational Behavior and Human Performance, 13(1), 1–16.

Fox, P. (2019). Walking towards thunder. Hachette Australia.

Galic, M., Timan, T., & Koops, B. J. (2017). Bentham, Deleuze and beyond: An overview of surveillance theories from the panopticon to participation. Philosophy and Technology, 30(1), 9–37.

Gates, D. (2021). FAA safety engineer goes public to slam the agency’s oversight of Boeing’s 737 MAX. The Seattle Times, p. 13.

Ge, Y. (2020). Psychological safety, employee voice, and work engagement. Social Behavior and Personality, 48(3), 1–7. https://doi.org/10.2224/sbp.8907

Herkert, J., Borenstein, J., & Miller, K. (2020). The Boeing 737 MAX: Lessons for engineering ethics. Science and Engineering Ethics, 26, 2957–2974.

Hirschman, A. O. (1970). Exit, voice, and loyalty: Responses to decline in firms, organizations, and states. Harvard University Press.

Imberman, W. (2001). Why engineers strike—The Boeing story. Business Horizons, 44(6), 35–44. https://doi.org/10.1016/S0007-6813(01)80071-9

Jamieson, N., Maple, M., Ratnarajah, D., & Usher, K. (2020). Military moral injury: A concept analysis. International Journal of Mental Health Nursing, 29(6), 1049–1066. https://doi.org/10.1111/inm.12792

Kaufman, H. (1960). The forest ranger: A study in administrative behavior. Johns Hopkins University Press.

Lazonick, W., & Sakinc, M. E. (2019, May 31). Make passengers safer? Boeing just made shareholders richer. The American Prospect, 30(2), 66.

Litz, B. T., Stein, N., Delaney, E., Lebowitz, L., Nash, W. P., Silva, C., & Maguen, S. (2009). Moral injury and moral repair in war veterans: A preliminary model and intervention strategy. Clinical Psychology Review, 29, 695–706. https://doi.org/10.1016/j.cpr.2009.07.003

Mandis, S. G. (2013). What happened to Goldman Sachs: An insider’s story of organizational drift and its unintended consequences. Harvard Business Review Press.

Margalit, A. (2017). On betrayal. Harvard University Press.

McLean, B., & Elkind, P. (2004). The smartest guys in the room: The amazing rise and scandalous fall of Enron. Portfolio.

Orasanu, J. M., & Martin, L. (1998). Errors in aviation decision making: A factor in accidents and incidents. Human Error, Safety and Systems Development Workshop (HESSD) 1998. Retrieved from http://www.dcs.gla.ac.uk/~johnson/papers/seattle_hessd/judithlynnep

Petroski, H. (2000). Vanities of the bonfire. American Scientist, 88(6), 486–491.

Petroski, H. (2006). Patterns of failure. Modern Steel Construction, 47(7), 43–46.

Petroski, H. (2018). Success through failure: The paradox of design. Princeton University Press.

Pidgeon, N. F., & O’Leary, M. (2000). Man-made disasters: Why technology and organizations (sometimes) fail. Safety Science, 34(1–3), 15–30.

Reason, J. T. (2008). The human contribution: Unsafe acts, accidents and heroic recoveries. Ashgate Publishing Co.

Rorty, R. (1989). Contingency, irony, and solidarity. Cambridge University Press.

Rushton, C. H. (2013). Principled moral outrage: An antidote to moral distress? AACN Advanced Critical Care, 24(1), 82–89. https://doi.org/10.1097/NCI.0b013e31827b7746

Salas, E., Wilson, K. A., & Burke, C. S. (2006). Does crew resource management training work? An update, an extension, and some critical needs. Human Factors, 48(2), 392–413.

Saull, R. (2015). Capitalism, crisis and the far-right in the neoliberal era. Journal of International Relations and Development, 18(8), 25–51.

Schauer, F., & Zeckhauser, R. J. (2007). Paltering. Retrieved from https://ssrn.com/abstract=832634

Schwartz, H. S. (1989). Organizational disaster and organizational decay: The case of the National Aeronautics and Space Administration. Industrial Crisis Quarterly, 3, 319–334.

Sharpe, V. A. (2003). Promoting patient safety: An ethical basis for policy deliberation. Hastings Center Report, 33(5), S2–19.

Sharpe, V. A. (2004). Accountability: Patient safety and policy reform. Georgetown University Press.

Shay, J. (2014). Moral injury. Psychoanalytic Psychology, 31(2), 182–191. https://doi.org/10.1037/a0036090

Smith, C. P., & Freyd, J. J. (2014). Institutional betrayal. American Psychologist, 69(6), 575–587. https://doi.org/10.1037/a0037564

Sobeck, J., Smith-Darden, J., Hicks, M., Kernsmith, P., Kilgore, P. E., Treemore-Spears, L., & McElmurry, S. (2020). Stress, coping, resilience and trust during the Flint water crisis. Behavioral Medicine, 46(3–4), 202–216. https://doi.org/10.1080/08964289.2020.1729085

The United States Department of Justice. (2021). Boeing charged with 737 Max fraud conspiracy and agrees to pay over $2.5 Billion. Retrieved from https://www.justice.gov/opa/pr/boeing-charged-737-max-fraud-conspiracy-and-agrees-pay-over-25-billion

Tkakic, M. (2019). Crash course: How Boeing’s managerial revolution created the 737MAX disaster. New Republic, 105(10), 5–31.

Vaughan, D. (1996). The Challenger launch decision: Risky technology, culture, and deviance at NASA. University of Chicago Press.

Vaughan, D. (2005). System effects: On slippery slopes, repeating negative patterns, and learning from mistake? In W. H. Starbuck, & M. Farjoun (Eds.), Organization at the limit: Lessons from the Columbia disaster (pp. 41–59). Blackwell.

Verhezen, P. (2010). Giving voice in a culture of silence: From a culture of compliance to a culture of integrity. Journal of Business Ethics, 96, 187–206.

Weber, D. E., MacGregor, S. C., Provan, D. J., & Rae, A. R. (2018). We can stop work, but then nothing gets done. Factors that support and hinder a workforce to discontinue work for safety. Safety Science, 108, 149–160.

Weingart, P. (1991). Large technical systems, real life experiments, and the legitimation trap of technology assessment: The contribution of science and technology to constituting risk perception. In T. R. LaPorte (Ed.), Social responses to large technical systems: Control or anticipation (pp. 8–9). Kluwer.

Wicker, R. F. (2020). Aviation safety oversight (Committee Investigation Report, December 2020). US Senate Committee on Commerce, Science, and Transportation.

Woodhead, L. (2022). Truth and deceit in institutions. Studies in Christian Ethics, 35(1), 87–103. https://doi.org/10.1177/09539468211051162

Woods, D. D., & Dekker, S. W. A. (2000). Anticipating the effects of technological change: A new era of dynamics for human factors. Theoretical Issues in Ergonomics Science, 1(3), 272–282.

Worden, S. P. (1992). On self-licking ice cream cones. In M. S. Giampapa, & J. A. Bookbinder (Eds.), Seventh Cambridge workshop on cool stars, stellar systems, and the Sun (Vol. 26, pp. 599–603). Astronomical Society of the Pacific.

Wowak, A. J., Mannor, M. J., & Wowak, K. D. (2015). Throwing caution to the wind: The effect of CEO stock option pay on the incidence of product safety problems. Strategic Management Journal, 36(7), 1082–1092. https://doi.org/10.1002/smj.2277

Wynne, B. (1988). Unruly technology: Practical rules, impractical discourses and public understanding. Social Studies of Science, 18(1), 147–167.

Zadow, A. J., Dollard, M. F., McLinton, S. S., Lawrence, P., & Tuckey, M. R. (2017). Psychosocial safety climate, emotional exhaustion, and work injuries in healthcare workplaces. Stress and Health, 33(5), 558–569. https://doi.org/10.1002/smi.2740

Funding

Open Access funding enabled and organized by CAUL and its Member Institutions.

Additional information

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Dekker, S.W.A., Layson, M.D. & Woods, D.D. Repentance as Rebuke: Betrayal and Moral Injury in Safety Engineering. Sci Eng Ethics 28, 56 (2022). https://doi.org/10.1007/s11948-022-00412-2

DOI: https://doi.org/10.1007/s11948-022-00412-2