Abstract

Purpose of Review

The present overview addresses the importance of voice in human-technology interactions within the sexual realm. It introduces empirical evidence within two key domains: (a) sexualized interactions involving artificial entities and (b) communication about sexuality-related health. Moreover, the review underscores existing research gaps.

Recent Findings

Theories and first empirical studies underline the importance of voice as a social cue within sexualized interactions with voice assistants or conversational agents. However, research on voice usage in sexual health-related contexts reveals contradictory results, mainly because these technologies ask users to vocalize potentially sensitive topics.

Summary

Although the utilization of voice in technology is steadily advancing, the question of whether voice serves as the optimal medium for social interactions involving sexually related artificial entities and sexual health-related communication remains unanswered. This uncertainty stems from the fact that certain information must be conveyed verbally, which could also be communicated through alternative means, such as text-based interactions.

Introduction

Computers operate in binary, which significantly differs from the familiar natural language used by humans. To bridge this gap and enhance user-friendliness, user interfaces were developed to make interactions with technology more intuitive. Traditionally, many of these interfaces depended on clicking or typing. However, advancements in natural language processing (NLP), which can be understood as the application of computational techniques for the automatic analysis and representation of human languages [1], along with text-to-speech (TTS) technologies and large language models (LLMs), have enabled vocal interactions with computers. This development allows users to communicate with technology using spoken language.

While there are technologies that rely on a mix of different input modalities (e.g., conversational agents that allow text-based chatting and verbal communication), there are also those that focus primarily on voice interaction. The prevalent example of the latter would be voice assistants, which are defined as “software agents that can interpret human speech and respond via synthesized voices” (p. 81) [2]. This technology uses NLP and TTS algorithms to compute an output in natural language that is highly probable to be an appropriate answer to an inquiry. This internal process can be implemented in smartphones or designated loudspeaker systems. About 40% of the US population is estimated to communicate with voice assistants, with smartphones being the most commonly used device [3].
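The processing chain described above (interpreting speech, computing a reply, and answering with a synthesized voice) can be illustrated with a minimal sketch. Everything in it is a hypothetical stand-in rather than any vendor's implementation: the function names are invented for illustration, the "audio" is simply UTF-8 text, and intent detection is a keyword rule instead of a trained NLP model.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    """One request/response cycle of the toy assistant."""
    transcript: str
    intent: str
    reply_text: str
    reply_audio: bytes


def recognize_speech(audio: bytes) -> str:
    # Assumption: stand-in for a speech recognizer; "audio" is just UTF-8 text here.
    return audio.decode("utf-8").strip().lower()


def interpret(transcript: str) -> str:
    # Assumption: keyword rules replace a real NLP intent classifier.
    if "light" in transcript:
        return "smart_home"
    if transcript.startswith(("what", "who", "when", "how")):
        return "fact_question"
    return "unknown"


def generate_reply(intent: str) -> str:
    # Canned replies; a real system would compute a probable answer.
    replies = {
        "smart_home": "Okay, switching the light.",
        "fact_question": "Here is what I found.",
    }
    return replies.get(intent, "Sorry, I did not understand that.")


def synthesize(text: str) -> bytes:
    # Assumption: stand-in for a TTS engine; returns the text as bytes.
    return text.encode("utf-8")


def handle_request(audio: bytes) -> Turn:
    """Run the full chain: speech -> text -> intent -> reply -> speech."""
    transcript = recognize_speech(audio)
    intent = interpret(transcript)
    reply_text = generate_reply(intent)
    return Turn(transcript, intent, reply_text, synthesize(reply_text))
```

Calling `handle_request(b"Turn on the light")` walks through all four stages and returns the intermediate results together, which makes it easy to see where a real recognizer, language model, or TTS engine would be substituted.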

The use of speech as a control element makes the technology particularly accessible and usable, as it can draw on linguistic patterns that humans use in interpersonal contexts [4]. It primarily addresses efficiency-oriented needs such as controlling smart home applications or fact-checking [5]. At the same time, the ability to communicate socially will continue to improve based on rapidly evolving LLMs (e.g., GPT-4).

Within the realm of sexuality-related applications, these “software agents” can not only serve as social partners with whom one can have sexualized interactions but also as an information source for sexual health communication. Both aspects will be discussed in the following.

The Importance of Voice Within Sexuality-Related Technologies

Technologies used in the broader field of sexuality can be divided into arousal-oriented interactions and non-arousal activities [6 ••]. The former covers technologies under the umbrella term “sexual interaction in digital contexts (SIDC)”. Here, users have sexualized interactions with humans or artificial entities via or through technologies. While SIDC would also include video calls with another person in which the voice plays an integral part (sexuality via technology), this article focuses on technologies (or, respectively, artificial entities) that allow interactions with the system itself.

When discussing intimacy with voice technologies, the science fiction movie Her is frequently mentioned as an example of how people form bonds with voice agents [7]. In this story, the main character develops a romantic connection and engages in intimate interactions with a voice assistant. In reality, the applications (also known as skills or actions) that can be implemented on a voice assistant are restricted by the companies selling the voice assistant systems.

However, first smartphone applications provide a mix of input modalities (e.g., text or voice) that permit romantic and sexualized interactions, such as the companion application Replika. A romantic partner mode can be activated via a Pro membership, enabling romantic and sexualized conversations [8]. The human voice comes into play here by allowing users to talk on the phone with their persona. The smartphone thus becomes the shell of a person with whom people can exchange whatever is on their mind at any time of the day or night. Technological developments will moreover enable increasingly realistic communication: filler words (e.g., I mean, like, uh, um), pauses, breathing, and other very characteristic forms of vocal communication can now be imitated by algorithms (e.g., Genny by Lovo AI) [9].

In addition to these arousal-oriented interactions, technologies facilitate so-called “sexuality-related non-arousing activities”, such as communication about sexual health. These activities can be categorized as digital health interventions (DHIs), encompassing interventions delivered through digital technologies such as smartphones or websites. DHIs can foster healthy behaviors and facilitate remote access to effective treatments [10].

In the context of sexual health promotion, DHIs have gained popularity and have undergone significant advancements in response to the increasing adoption of the internet and mobile devices. They offer a desirable approach for reaching at-risk populations, e.g., adolescents, sexual or ethnic minorities, and sex workers, who may be hesitant or unable to seek professional advice due to lack of resources or stigmatization. Notably, a recent trend in this field involves incorporating new technologies, including text- and voice-based conversational agents [11]. Widely known examples of conversational agents using NLP for DHIs are digital voice assistants such as Apple’s Siri or Amazon’s Alexa [12••]. Beyond their convenience of home accessibility, voice assistants’ ability to engage in private and natural conversations with users amplifies their value as healthcare assistants [13••].

One example of a virtual assistant teaching teenagers about sex and sexual health is the voice assistant skill Hush Hush, developed by Healthy Teen Network. Hush Hush is a “trusted, confidential mentor that engages students in relevant, thoughtful, and personalized sex education conversations.” The voice assistant skill aims to create a confidential environment for adolescents to explore matters related to gender and sexuality, evaluate relationships, and select appropriate birth control methods. The goal is to provide non-judgmental guidance on complex issues of love and sexuality [14].

Method

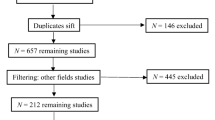

In the following, an overview of theoretical and empirical insights for both arousal-oriented interactions and sexual health communication will be presented. The papers provided represent pertinent research derived from a thorough review of the existing literature. For this purpose, common scientific meta-data services (e.g., search engines) were used, such as Google Scholar, Web of Science, ResearchGate, and Elicit. Search terms were composed of keywords related to “sexuality,” “sexual health,” “pleasure,” “sex,” “voice assistants,” “voice bots,” and “smart home device.” Given that the field is heavily underrepresented and employs diverse terminology, Google Scholar’s reverse search of cited papers was also extensively utilized. Because both theoretical and empirical research on voice usage in the broader field of sexuality-related technologies is scarce, research gaps in arousal-oriented interactions and sexual health communication are presented at the end of each subsection. The discussion includes a table that summarizes relevant papers that address the usage of voice in both arousal-oriented interactions and sexual health communication.
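To make the composition of search terms more concrete, the following sketch shows one way the listed keywords could be combined into Boolean queries. It is an illustration only: the split into a sexuality-related group and a voice-technology group, the use of AND/OR operators, and the function names are assumptions and are not taken from the search procedure reported above.

```python
from itertools import product

# Keywords as listed in the text; the grouping into two sets is an assumption.
SEXUALITY_TERMS = ["sexuality", "sexual health", "pleasure", "sex"]
VOICE_TERMS = ["voice assistants", "voice bots", "smart home device"]


def quote(term: str) -> str:
    """Wrap multi-word terms in double quotes so they are searched as phrases."""
    return f'"{term}"' if " " in term else term


def build_pairwise_queries(topic_terms, tech_terms):
    """Return one 'topic AND technology' query per keyword pair."""
    return [f"{quote(a)} AND {quote(b)}" for a, b in product(topic_terms, tech_terms)]


def build_combined_query(topic_terms, tech_terms):
    """Return a single query: (topic OR ...) AND (technology OR ...)."""
    left = " OR ".join(quote(t) for t in topic_terms)
    right = " OR ".join(quote(t) for t in tech_terms)
    return f"({left}) AND ({right})"


if __name__ == "__main__":
    for query in build_pairwise_queries(SEXUALITY_TERMS, VOICE_TERMS):
        print(query)
    print(build_combined_query(SEXUALITY_TERMS, VOICE_TERMS))
```

Pairwise queries are convenient for services with limited Boolean support, whereas the single combined query suits databases that accept nested operators.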

Theoretical and Empirical Insights on Arousal-Oriented Interactions with Sexualized Voice Technologies

Theoretical Basis for Arousal-Oriented Interactions with Sexualized Voice Technologies

The theoretical basis for humans engaging in social, and specifically intimate or sexualized, interactions with an artificial persona has been postulated in the sexual interaction illusion model (SIIM) by Szczuka and colleagues [15]. The model is based on the media equation theory and suspension of disbelief, both of which can help explain why people interact with artificial entities within intimate settings. In terms of the media equation theory, numerous empirical studies have already shown that people respond to media that meet the criteria of natural language, interactivity, and social role similarly to contact with another person [16••]. Moreover, the so-called suspension of disbelief could play a role, especially in short-term interactions [17]. This theory originates from theater and film research and states that people quite willingly engage with fictional content for the sake of entertainment or the hoped-for benefit and block out stimuli of the artificial for the moment (cf. the reception of fictional stories, which are not constantly scrutinized for their degree of reality).

Impressions of Human Authenticity and Uniqueness as Important Variable for Empirical Studies

While research in the field of digitized intimacy and sexuality is still underrepresented, there are a few empirical studies on how people respond to intimate interactions with communicative assistants. An empirical study by Szczuka experimentally investigated social responses to a flirtatious voice assistant (including sexualized communication) [18••]. In this study, heterosexual male participants were exposed either to the flirting voice assistant or to the same content/messages from another human in the form of voice messages, as can be sent via state-of-the-art messenger applications. The results demonstrated that voice assistants arouse more interest than human messages (one explanation could be novelty effects), yet the humans were perceived to be more flirtatious. Similar patterns are found in other comparative studies of digitized intimacy/sexuality with artificial counterparts. Motivational and intentional processes are experienced differently in humans than in artificial interaction partners. In this case, this refers to flirtatious behaviors. Within humans, this behavior is characterized by motivations and intentions that can be driven by the self (e.g., a boost of self-esteem) or the wish to contact another person. These distinctive states are based on consciousness, which at the moment can only be imitated but not authentically implemented in machines. More research is needed on whether there is a threshold, for instance in terms of contextual or personal factors, that influences the degree to which users accept the imitation of distinctive motivations and emotions.

Based on the SIIM, it is imaginable that the mere imitation will, at some point, underline the artificiality of a technological system as a form of disbelief in the authenticity of the communicated content. A similar effect is presented in the science fiction movie Her [7]. Throughout the movie the protagonist is confronted with the reality that the voice assistant that imitated all different aspects of an intimate relationship does not only operate on his device but that multiple other users also use the algorithm. This emphasizes the persona’s artificiality, which makes the user recognize that the relation is neither unique nor authentic.

Yet, this does not mean that humans will not engage in such interactions at all. Accompanying sexual arousal may direct cognitive capacities precisely to need satisfaction rather than to cognitions that might negatively affect the situation, for example, considerations of whether such an entity consciously decides to engage in a sexualized interaction or whether a sexualized interaction with a voice assistant might deviate from certain norms [15]. Research on hyper-realistic sex dolls, including users who use technological “extensions” such as the smartphone applications mentioned earlier to communicate with the persona, agrees that users do not succumb to an illusion when using them and understand that the interaction partners are not existing persons. This is evident, for example, in interview studies in which “it” is mainly used as a pronoun despite strong personification [19, 20].

However, Pentina and colleagues conducted a mixed-method study on communication with the companion application Replika. They supported the notion that the perceived social qualities of the technology, in combination with the user’s motivation, are vital in developing an attachment to the technology [21••]. More research needs to be done to understand motivational factors and their potential power to suspend impressions of the artificiality of the technologies or, more generally, cognitions centering around the systems’ artificialness.

Research Gaps: Long-term vs. Short-term Interactions, Relevance of Authenticity/Uniqueness, and Temporary Benefits as Transitional Objects

The present state of theoretical research indicates variances between short- and long-term associations with artificial entities, though further empirical investigation is required to strengthen this understanding [22]. Short-term interactions might be an excellent outlet to explore and/or act out social and sexual needs in a controlled environment. Nevertheless, prolonged interactions over extended periods may be associated with unique difficulties. This is the case for users who actively establish and sustain a lasting bond with an artificial entity, requiring them to suppress or handle indications of artificiality actively.

Moreover, the technology involved inevitably exposes its artificial nature during repeated interactions. Dialogue systems may, for instance, verbally express implemented desires (which are based on what other people labeled as a desire), such as the wish to build a shared future with the user. Still, when it comes to practical implementation (e.g., creating a family or a shared living space), users might quickly be confronted with the limits of such a future in practice simply because resources and capabilities are lacking (e.g., financial resources or physical abilities).

However, research indicates that (temporary) relationships to artificial companions (embodied in terms of sexualized robots, but also non-embodied conversational agents such as Replika AI) can potentially benefit different users. These artificial companions can, for instance, function as a form of transitional object to overcome difficult life stages (e.g., difficult breakups or severe loneliness) [19, 23]. More research is needed to understand the use of technologies only for a temporary life phase (e.g., accompanying therapy concepts as described in [19]).

Empirical Insights on Non-arousal Activities Through Voice: Sexual Health Communication

Usage of Voice Assistants for Sexual Health Promotion

First studies have explored the potential of voice-based conversational agents in the context of mental health [24, 25]. However, the current research on conversational agents for sexual health is predominantly focussed on text-based platforms [11, 26, 27]. Consequently, investigations into using voice assistants for sexual health advice are limited. Thus far, they have primarily addressed specific facets of sexual health communication (e.g., particular diseases) and compared different modalities.

For instance, Wilson and colleagues examined the efficacy of smartphones and their digital assistants, specifically Siri and Google Assistant, in providing accurate sexual health advice. They compared the results with a laptop-based Google search [28]. The findings revealed that the laptop-based Google search outperformed both voice assistants, with Google Assistant’s performance superior to Siri’s. Although the smartphone assistants understood the questions the authors addressed, they either dismissed necessary inquiries related to general health or did not provide appropriate information. A significant implication of this study is that attempts to use voice assistants for sexual health in the “real world” could be worse than in the conducted research due to influences of slang words or colloquialisms and accents.

Another example is a study by Napolitano and colleagues that concentrates on the ability of voice assistants to recognize and answer questions about male sexual health concerning different subjects with high prevalence among men, such as erectile dysfunction, premature ejaculation, or male infertility [29•]. Consistent with the findings of Wilson and colleagues, overall, the answers given by the voice assistants were classified as intermediate-low quality [28], [29•]. The study, however, underlines that different companies provide answers of differing quality and can therefore create knowledge gaps that are based on a system’s capabilities to react to users’ needs.

Since no human is involved in the interactions between voice assistants and a patient, voice assistants can represent an accessible and anonymous technology that can be particularly useful in health-related areas associated with stigma. This extends not only to acquiring knowledge but also to managing specific medical conditions. One example is the human immunodeficiency virus (HIV), as HIV is among the eight most prevalent sexually transmitted infections (STIs). Consequently, there is a high interest in the potential of DHIs in this field. While studies have previously focused on evaluating the initial effectiveness of text-based chatbots (e.g., [30, 31]), according to Garett and Young, there is currently no available research investigating the utilization of voice-based conversational agents for pre-test counseling to encourage HIV testing [12••]. However, they point out the potential conversational agents have in this field to alleviate specific challenges faced by high-risk individuals, such as experiencing stigma or limited test kit access. They can be programmed to provide accurate information about available tests, enabling users to compare and select the most suitable option.

Furthermore, voice-based conversational agents can serve as reliable sources of scientifically grounded information, counteracting for instance prevalent HIV-related misconceptions on the internet and social media [12••]. Nevertheless, it is essential to acknowledge that their investigation solely focuses on the theoretical potential of voice assistants in this particular context. Considering the findings of Wilson and colleagues and Napolitano and colleagues, it is debatable whether voice assistants are presently serving as a reliable and accurate source of scientifically correct information [28], [29•].

The Influence of Modality on Sexual Health Communication

As stated earlier, using speech to interact with voice assistants represents a distinctive characteristic that enhances their accessibility [4]. However, there is a lack of research addressing the appropriateness of employing voice assistants in a domain as sensitive and often stigmatized as sexual health. It is debatable whether the modality, i.e., voice usage, is the best choice for gathering sexual health advice. In this context, a text-based conversational agent may be a more comfortable choice for most potential users. Cho investigated whether user perceptions are influenced by the modality (voice vs. text) and device (smartphone vs. smart home device) when attempting to access sensitive health information from voice assistants [13••]. Participants were instructed to ask less and more sensitive health questions. The high-sensitivity information condition comprised sexual health questions such as “Do you have to have sex to get an STD?” or “Do condoms affect orgasms?”. The findings suggest that voice interactions can enhance positive evaluations of the agent due to the perception of a social conversation between the user and the voice assistant. However, these patterns were only observed when the requested information was less sensitive and the participants reported low privacy concerns.

Research Gap: High Sensitivity Information and Privacy

The study by Cho [13••] showed that, contrary to the assumption that voice interactions would reduce the social perceptions of a voice assistant in highly sensitive contexts involving information sensitivity and privacy concerns, text interactions had a significant impact on perceived social presence similar to that of voice interactions. That implies that in sensitive settings, interactions with voice assistants become more engaging and stimulating for users, thereby enhancing the social presence of the assistant regardless of the interaction modality [13••]. There is evidence that individuals exhibit a higher level of comfort when disclosing personal information to voice assistants that utilize a human-recorded voice without a visible face (i.e., disembodied), as opposed to an agent with synthetic facial features that simulates a human appearance [16••]. A systematic review by Balaji and colleagues analyzing the effectiveness and acceptability of conversational agents for sexual health promotion, however, noted that social presence was only found to be adequate in half of the systems studied, possibly due to the utilization of multimedia and embodiment through avatars [11]. Incorporating social presence into a system often praised for facilitating anonymous and non-human interactions raises an intriguing challenge, highlighting the necessity to strike a delicate balance. Although social presence has been implicated in the user acceptance of conversational agents in other domains, its specific impact in the sexual health field remains to be elucidated.

Discussion

It became evident that voice is utilized differently in arousal-oriented interactions than in sexual health communication. Thus, it underlines the importance of this taxonomy provided by Döring and colleagues [6 ••]. While it might be an essential feature in social settings to convey impressions of human likeness, it functions as a user-friendly interface for inquiries made in health communication. Table 1 summarizes relevant work that uses voice in sexuality-related technologies.

The table demonstrates that the primary research emphasis often does not revolve around the utilization of voice. This contributes to the existence of numerous gaps in research. While specific gaps have already been tackled in the sections discussing arousal-oriented interactions with sexualized voice technologies and health communication, broader areas also require research, which will be further detailed ahead.

Examining Modalities: Voice- vs. Text-Based Conversational Agents

One important research gap concerns the comparison of modalities (voice- vs. text-based conversational agents) and, consequently, the applicability of voice assistants for arousal-oriented and sexual health communication. As previously stated, the research on the impact of modality on user perceptions remains insufficiently explored in the field of sexual health. Similarly, a knowledge gap exists in the realm of intimate communication. Therefore, it is crucial to investigate the role of modality to uncover the specific contribution of voice in close social interactions and knowledge acquisition. This approach can provide a comprehensive understanding of the significance and impact of voice within these domains. Taking this one step further, comparing voice assistants and embodied agents is also necessary. For example, Dworkin and colleagues investigated the application of embodied conversational agents for HIV medication adherence in young HIV-positive African American men [32]. Embodied agents exhibit the potential to function as customizable relational agents that can foster a socio-emotional connection with the user. Those agents can effectively facilitate education, encourage positive behavior change, and enhance user engagement through various modalities, including audio, graphics, animation, and text [32]. On the other hand, existing evidence suggests that individuals prefer to disclose personal information to disembodied conversational agents [16••]. Based on these findings, it can be postulated that voice, as a human-like cue, may hold greater relevance in facilitating intimate social interactions than knowledge acquisition in sexual health.

Diverse (and Inclusive) Perspective on Sexualized Interactions with Artificial Voices

In the following, an outlook on important but underrepresented perspectives in the usage of voice technologies will be provided. Firstly, research on the arousal- and non-arousal-oriented usage of voice assistants does not sufficiently address more diverse and inclusive user groups, although the technology has the potential to be used by non-heteronormative groups.

One already discussed but still under-researched aspect is the gendering of the artificial voice: even when a voice is synthetic, and even when it is ambiguous, users tend to gender artificial voices [33]. In line with media equation theory, this can activate gender stereotypes [16••], concerning, for instance, the system’s trustworthiness (e.g., [34, 35]) or likeability [36]. Therefore, the gender perception of the voice has a high relevance for sexual and intimate interactions, as well as for non-arousal activities. Given that voice assistants are utilized by individuals of all genders, it is imperative that representation in voice assistants reflects this diversity. However, this is not yet the reality. The majority of voice assistants on the market have a feminine name [37] and are represented as feminine [38]. This contributes to the potential replication of stereotypes, as the mostly female voice fulfills the social role of a servant, which in some cases is heavily sexualized and degraded [39]. For example, Amazon’s voice assistant system Alexa consistently assumes a subservient role. The system establishes and sustains a link between women and obedience. Among other factors, this leads to sexual harassment within interactions with voice assistants [40, 41]. Therefore, several studies investigate how conversational technologies respond to sexual harassment and verbal abuse (e.g., [40, 42]). While this can also be a mechanism of playing the system (testing out social norms regarding what is not accepted in interpersonal interactions with other humans), it is nevertheless a behavior that is observed and needs to be researched longitudinally, especially in the realm of arousal-oriented tasks. While a more optimistic view conceptualizes the female artificial voice as the new superpower that is able to answer all imaginable questions within milliseconds, it still needs to be highlighted that this might be more important in the realm of non-arousal-oriented tasks.
While first voice assistant systems are equipped with the option to switch to a masculine artificial voice [33], a notable gap exists in the availability of voices that encompass the complete spectrum of gender presentations, including non-binary or gender-ambiguous voices [37].

Diversity is also a question of the data a system is trained with. Extensive research has underscored both explicit and implicit biases present in algorithms and datasets, which are used to train NLP systems, concerning gender [43,44,45], race (leading to discrimination and racism, e.g., [46, 47]), age (leading to ageism [48]), as well as their intersections [46, 49]. The predominant focus has been on gender stereotypes, harassment, and offensive language, particularly emphasizing restricted and/or unfavorable associations with femininities and individuals identifying as genderqueer [50]. Yet, according to Seaborn and colleagues, a disparity is evident in the way the “gender problem” is conceptualized, as most of the present work is guided by a sex/gender binary model of male and female. Therefore, they propose a more comprehensive examination of masculine biases and gender to expose imbalances and disparities in voice assistant-oriented NLP datasets [50].

When talking about more diverse and inclusive user groups, it is important to highlight people of different ages, sexualities, and users that are not in line with affordances of heteronormative user groups, such as people with physical or intellectual disabilities or medical conditions.

Adolescents, who often seek health-related information online, represent a significant user group. A study by Rideout and Fox found that approximately 87% of Americans aged 14 to 22 use online platforms for health-related data [51]. Additionally, the internet is a valuable resource for young individuals exploring their sexuality, particularly for LGBTQAI+ youth seeking connections with like-minded individuals [52, 53]. Moreover, the growing group of older adults exploring the possibilities the digital world offers for sex and love is another significant user segment. A recent study revealed that the online activities among older adults can be categorized into three groups: non-arousal activities (e.g., visiting educational websites or chatting on dating platforms), solitary arousal activities (e.g., watching pornography), and partnered arousal activities, which involve at least one other individual (e.g., engaging in webcam sex) [54, 55]. Hence, older adults are utilizing the digital space in domains that align with the application areas discussed in this work. Yet, there exists a significant gap in understanding the potential role of voice for this user group. Voice-only technologies may offer relief for older individuals who, for example, have problems with typing. However, they could also pose a challenge for those who are unfamiliar with communicating with technical devices through speech or with expressing intimate thoughts aloud as commands to a voice assistant.

Additionally, voice-only technologies offer a new potential from an accessibility perspective, as individuals experiencing motor, linguistic, and cognitive impairments can engage effectively with voice assistants, given they possess particular levels of remaining cognitive and linguistic abilities [56]. Studies show that despite the presence of certain accessibility challenges, individuals with a range of disabilities already utilize state-of-the-art voice assistant systems. This usage extends to unexpected scenarios such as speech therapy and providing support for caregivers [57]; it is therefore also imaginable that sexualized interaction could be of benefit for these user groups.

The Potential of Personalized LLMs

As LLMs improve, personalization of voice interactions is evolving to become a key factor in satisfying users’ expectations for customized experiences that correspond to their individual needs and preferences [58]. A stronger personification can, for instance, in turn affect how users align their speech models with a system [59] or the level of parasocial relation they form with the voice [59], and consequently affect variables such as heightened preference, trust in the agent [60], and smoother conversations [61, 62]. In terms of arousal-oriented interactions, this might even be the key to ongoing dialogues and, in some cases, even romantic connections. And while personalized LLMs are likely to enhance usage among heteronormative user groups, they will be especially important for user groups with more diverse affordances.

One conceivable example involves users with hearing or speech impairments, as well as neurodivergent people: personalization could allow adjustments such as altering the voice assistant’s speed or using appropriate language to enhance their overall user experience, in both arousal and non-arousal applications.

Psychological Aspects and Ethical Considerations

However, further research is needed to explore psychological components related to voice assistants, encompassing their capabilities and quality as well as factors like acceptability, trust, and trustworthiness. Previous studies on conversational agents in health care have highlighted various limitations. These include privacy concerns, limited conversational responsiveness, user-perceived undesirable personality (e.g., rude, lacking sympathy, patronizing, or judgmental), and a lack of trust in the creators of the digital assistants [12, 63]. It must be noted that providers of such technologies bear immense social responsibility; users can form deep relationships with artificial communication partners, and sudden updates or discontinuation of specific services can lead to severe social reactions (cf. the change to the romantic partner mode offered in Replika and reports from users that went as far as suicidal thoughts [64]). At the same time, it is essential to highlight that voice assistants in particular are heavily controlled by providers. The applications offered by the most widely used devices are subject to the rules of the respective providers, and these are often heavily regulated, especially in the area of sexuality. It therefore remains to be seen to what extent dedicated devices will be developed for intimate interactions with voice assistants or whether greater reliance will be placed on voice interaction via smartphones. This responsibility also extends to the realm of sexual health communication, encompassing social implications and considerations regarding privacy and data security. Previous studies have identified data privacy and confidentiality concerns as obstacles to the regular use of virtual assistants, particularly in contexts involving sensitive information, such as health contexts [65, 66].
Thus, it is reasonable to posit that these factors also carry significant weight when users address inquiries on sexuality, which involve deeply intimate subject matter and are widely regarded as highly sensitive.

Moreover, handling sensitive data brings focus to the notion of trust. As a result, inquiries emerge regarding the trustworthiness of these systems and the features they possess, which can influence the willingness of patients to trust them within a medical setting [67]. Those aspects can also be connected to the abovementioned point, as certain user groups, such as children and adolescents, necessitate particular attention, especially concerning data security. Because a substantial proportion of children aged two to eight already engage with voice assistants daily, it is essential to consider the potential and risks associated with utilizing voice assistants for sexual health education and intimate communication in general [68]. Particularly in this context, it is crucial to comprehend the mechanisms involved in data storage and access, as research conducted by Szczuka and colleagues demonstrated that such understanding is negatively associated with children’s inclination to disclose private information to a voice assistant. More precisely, the language employed by the voice assistant in their study (e.g., using the phrase “I am silent as a grave” when asked about entrusting a secret) holds the potential to prompt children to disclose sensitive information, which can subsequently be accessed by unauthorized individuals [69]. As the act of safeguarding information is integral to the process of identity formation, this naturally includes highly sensitive details concerning body perception and sexuality. Voice assistants that are used in a sexuality-related context should therefore disclose their data policies and operate in the users’ best interests. This could, for instance, be realized by providing easy options for data deletion, straightforward access to a data management system that outlines authorized access, and perhaps even preventive prompts that remind users of privacy considerations.
To achieve this, however, it would also be important to have adaptive systems that recognize users and their different needs, a capability that still needs to be implemented in state-of-the-art systems.

Limitations

Due to the limited empirical literature, this article can only be considered an overview of the usage of voice as a medium for social interactions involving sexually related artificial entities and sexual health-related communication. However, we believe that the findings of this work can serve as valuable resources for researchers, informing future work to broaden the presently limited scope of empirical knowledge in this field.

Conclusion

Empirical research on arousal-oriented interaction with sexualized voice technologies and non-arousal activities through voice is still underrepresented. Although initial studies suggest that voice assumes a crucial role in establishing connections with artificial agents by offering significant human and, consequently, social cues, findings concerning the significance of voice in health-related contexts remain varied. These results tend to lean towards perceiving voice more prominently as an interface type. Because natural language processing is rapidly becoming more sophisticated, voice will likely play a more critical role in the broader field of sex-related technologies soon. The present article outlines pertinent gaps in research to spark future studies aimed at gaining deeper insights into the significance of voice in the realm of technology associated with sexuality.

References

Papers of particular interest, published recently, have been highlighted as: • Of importance •• Of major importance

Chowdhary KR. Natural Language Processing. In: Fundamentals of artificial intelligence [Internet]. New Delhi: Springer India; 2020 [cited 2023 Dec 9]. 603–49. Available from: http://link.springer.com/10.1007/978-81-322-3972-7_19

Hoy MB. Alexa, Siri, Cortana, and more: an introduction to voice assistants. Med Ref Serv Q. 2018;37(1):81–8.

Lis J. How big is the voice assistant market? [Internet]. Insider Intelligence. 2022. Available from: https://www.insiderintelligence.com/content/how-big-voice-assistant-market

Zwakman DS, Pal D, Arpnikanondt C. Usability evaluation of artificial intelligence-based voice assistants: the case of Amazon Alexa. SN Comput Sci. 2021;2(1):28.

Al-Kaisi AN, Arkhangelskaya AL, Rudenko-Morgun OI. The didactic potential of the voice assistant “Alice” for students of a foreign language at a university. Educ Inf Technol. 2021;26(1):715–32.

•• Döring N, Krämer N, Mikhailova V, Brand M, Krüger THC, Vowe G. Sexual interaction in digital contexts and its implications for sexual health: a conceptual analysis. Front Psychol. 2021;12:769732. (This document classifies technologies into categories based on arousal orientation, distinguishing between arousal-oriented and non-arousal-oriented technologies, thereby offering a crucial foundational definition)

Jonze S. Her [Internet]. 2013. Available from: https://www.imdb.com/title/tt1798709/

Luka Inc. Replika. 2023; Available from: https://replika.com

LOVO AI. Genny by LOVO. AI text to speech and generative AI [Internet]. 2022. Available from: https://genny.lovo.ai/signin

Murray E, Hekler EB, Andersson G, Collins LM, Doherty A, Hollis C, et al. Evaluating digital health interventions: key questions and approaches. Am J Prev Med. 2016;51(5):843–51.

Balaji D, He L, Giani S, Bosse T, Wiers R, de Bruijn GJ. Effectiveness and acceptability of conversational agents for sexual health promotion: a systematic review and meta-analysis. Sex Health. 2022;19(5):391–405.

•• Garett R, Young SD. Potential application of conversational agents in HIV testing uptake among high-risk populations. J Public Health. 2023 Mar 14;45(1):189–92. (This theoretical paper explores the application of conversational agents in increasing HIV testing among high-risk populations and points out the potential of their application within the field of sexual health.)

•• Cho E. Hey Google, can I ask you something in private? In: Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems [Internet]. Glasgow Scotland UK: ACM; 2019 [cited 2023 Jun 28]. 1–9. Available from: https://dl.acm.org/doi/10.1145/3290605.3300488. (This empirical work underscores the significance of modalities and their impact on the communication of sensitive inquiries)

Healthy Teen Network. Hush Hush [Internet]. 2023. Available from: https://www.healthyteennetwork.org/project/hush-hush/

Szczuka JM, Hartmann T, Krämer NC. Negative and positive influences on the sensations evoked by artificial sex partners: a review of relevant theories, recent findings, and introduction of the sexual interaction illusion model. In: Zhou Y, Fischer MH, editors. AI Love You [Internet]. Cham: Springer International Publishing; 2019 [cited 2023 Jun 28]. p. 3–19. Available from: https://link.springer.com/10.1007/978-3-030-19734-6_1

•• Nass CI, Brave S. Wired for speech: how voice activates and advances the human-computer relationship. Cambridge, Mass: MIT Press; 2005. 296. (This book establishes a theoretical foundation for the utilization of voice as a social cue and explores how it subsequently triggers social cues and elicits corresponding responses)

Coleridge ST. Biographia literaria, Chapter XIV. [Internet]. 1871. Available from: https://www.gutenberg.org/files/6081/6081-h/6081-h.htm.

•• Szczuka JM. Flirting with or through media: how the communication partners’ ontological class and sexual priming affect heterosexual males’ interest in flirtatious messages and their perception of the source. Front Psychol. 2022;13:719008. (This empirical study tests differences between reactions to computer-mediated voice communication and voice assistants)

Knafo D, LoBosco R. The age of perversion: desire and technology in psychoanalysis and culture. New York: Routledge; 2017. 283. (Psychoanalysis in a new key).

Lievesley R, Reynolds R, Harper CA. The ‘perfect’ partner: understanding the lived experiences of men who own sex dolls. Sex Cult. 2023;27(4):1419–41.

•• Pentina I, Hancock T, Xie T. Exploring relationship development with social chatbots: a mixed-method study of Replika. Comput Hum Behav. 2023 Mar;140:107600. (This work introduces a model of how and why humans build a relationship with conversational agents)

Szczuka JM, Dehnert. Sexualized robots: use cases, normative debates, and the need for research. In: Emerging Issues for Emerging Technologies: Informed Provocations for Theorizing Media Future.

Skjuve M, Følstad A, Fostervold KI, Brandtzaeg PB. My chatbot companion - a study of human-chatbot relationships. Int J Hum-Comput Stud. 2021;149:102601.

Parmar P, Ryu J, Pandya S, Sedoc J, Agarwal S. Health-focused conversational agents in person-centered care: a review of apps. Npj Digit Med. 2022;5(1):21.

Miner AS, Milstein A, Schueller S, Hegde R, Mangurian C, Linos E. Smartphone-based conversational agents and responses to questions about mental health, interpersonal violence, and physical health. JAMA Intern Med. 2016;176(5):619.

Nadarzynski T, Bayley J, Llewellyn C, Kidsley S, Graham CA. Acceptability of artificial intelligence (AI)-enabled chatbots, video consultations and live webchats as online platforms for sexual health advice. BMJ Sex Reprod Health. 2020;46(3):210–7.

Nadarzynski T, Puentes V, Pawlak I, Mendes T, Montgomery I, Bayley J, et al. Barriers and facilitators to engagement with artificial intelligence (AI)-based chatbots for sexual and reproductive health advice: a qualitative analysis. Sex Health. 2021;18(5):385–93.

Wilson N, MacDonald EJ, Mansoor OD, Morgan J. In bed with Siri and Google Assistant: a comparison of sexual health advice. BMJ. 2017;13:j5635.

• Napolitano L, Barone B, Spirito L, Trama F, Pandolfo SD, Capece M, et al. Voice assistants as consultants for male patients with sexual dysfunction: a reliable option? Int J Environ Res Public Health. 2023;20(3):2612. (This empirical investigation provides a direct comparison of the recognition and response capabilities of the presently dominant voice assistants in the context of inquiries pertaining to male sexual health.)

Van Heerden A, Ntinga X, Vilakazi K. The potential of conversational agents to provide a rapid HIV counseling and testing services. In: 2017 International Conference on the Frontiers and Advances in Data Science (FADS) [Internet]. Xi’an: IEEE; 2017 [cited 2023 Aug 7]. p. 80–5. Available from: http://ieeexplore.ieee.org/document/8253198/

Liu AY, Laborde ND, Coleman K, Vittinghoff E, Gonzalez R, Wilde G, et al. DOT Diary: developing a novel mobile app using artificial intelligence and an electronic sexual diary to measure and support PrEP adherence among young men who have sex with men. AIDS Behav. 2021;25(4):1001–12.

Dworkin MS, Lee S, Chakraborty A, Monahan C, Hightow-Weidman L, Garofalo R, et al. Acceptability, feasibility, and preliminary efficacy of a theory-based relational embodied conversational agent mobile phone intervention to promote HIV medication adherence in young HIV-positive African American MSM. AIDS Educ Prev. 2019;31(1):17–37.

Abercrombie G, Curry AC, Pandya M, Rieser V. Alexa, Google, Siri: What are your pronouns? Gender and anthropomorphism in the design and perception of conversational assistants [Internet]. arXiv; 2021 [cited 2023 Dec 12]. Available from: http://arxiv.org/abs/2106.02578

O’Connor JJM, Barclay P. The influence of voice pitch on perceptions of trustworthiness across social contexts. Evol Hum Behav. 2017;38(4):506–12.

Tolmeijer S, Zierau N, Janson A, Wahdatehagh JS, Leimeister JMM, Bernstein A. Female by default? – exploring the effect of voice assistant gender and pitch on trait and trust attribution. In: Extended Abstracts of the 2021 CHI Conference on Human Factors in Computing Systems [Internet]. Yokohama Japan: ACM; 2021 [cited 2023 Dec 8]. p. 1–7. Available from: https://dl.acm.org/doi/10.1145/3411763.3451623

Ernst CPH, Herm-Stapelberg N. The impact of gender stereotyping on the perceived likability of virtual assistants. AMCIS 2020 Proceedings [Internet]. 4th ed. 2020; Available from: https://aisel.aisnet.org/amcis2020/cognitive_in_is/cognitive_in_is/4

Danielescu A, Horowit-Hendler SA, Pabst A, Stewart KM, Gallo EM, Aylett MP. Creating inclusive voices for the 21st century: a non-binary text-to-speech for conversational assistants. In: Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems [Internet]. Hamburg Germany: ACM; 2023 [cited 2023 Dec 7]. p. 1–17. Available from: https://dl.acm.org/doi/10.1145/3544548.3581281

Balfour LA. #TimesUp for Siri and Alexa: sexual violence and the digital domestic. In: Patrick S, Rajiva M, editors. The Forgotten Victims of Sexual Violence in Film, Television and New Media [Internet]. Cham: Springer International Publishing; 2022 [cited 2023 Dec 7]. p. 163–77. Available from: https://link.springer.com/10.1007/978-3-030-95935-7_9

UNESCO. https://en.unesco.org/Id-blush-if-I-could. 2021.

Cercas Curry A, Rieser V. #MeToo Alexa: how conversational systems respond to sexual harassment. In: Proceedings of the Second ACL Workshop on Ethics in Natural Language Processing [Internet]. New Orleans, Louisiana, USA: Association for Computational Linguistics; 2018 [cited 2023 Dec 8]. p. 7–14. Available from: http://aclweb.org/anthology/W18-0802

Leisten LM, Rieser V. Voice assistants’ response strategies to sexual harassment and their relation to gender. 2022 [cited 2023 Dec 8]; Available from: https://freidok.uni-freiburg.de/data/223817

Curry AC, Rieser V. A crowd-based evaluation of abuse response strategies in conversational agents. 2019 [cited 2023 Dec 9]; Available from: https://arxiv.org/abs/1909.04387

Chen Y, Mahoney C, Grasso I, Wali E, Matthews A, Middleton T, et al. Gender bias and under-representation in natural language processing across human languages. In: Proceedings of the 2021 AAAI/ACM Conference on AI, Ethics, and Society [Internet]. Virtual Event USA: ACM; 2021 [cited 2023 Dec 8]. p. 24–34. Available from: https://dl.acm.org/doi/10.1145/3461702.3462530

Cirillo D, Gonen H, Santus E, Valencia A, Costa-jussà MR, Villegas M. Sex and gender bias in natural language processing. In: Sex and Gender Bias in Technology and Artificial Intelligence [Internet]. Elsevier; 2022 [cited 2023 Dec 8]. p. 113–32. Available from: https://linkinghub.elsevier.com/retrieve/pii/B9780128213926000091

Strengers Y, Qu L, Xu Q, Knibbe J. Adhering, steering, and queering: treatment of gender in natural language generation. In: Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems [Internet]. Honolulu HI USA: ACM; 2020 [cited 2023 Dec 8]. p. 1–14. Available from: https://dl.acm.org/doi/10.1145/3313831.3376315

Manzini T, Yao Chong L, Black AW, Tsvetkov Y. Black is to criminal as Caucasian is to police: detecting and removing multiclass bias in word embeddings. In: Proceedings of the 2019 Conference of the North [Internet]. Minneapolis, Minnesota: Association for Computational Linguistics; 2019 [cited 2023 Dec 8]. p. 615–21. Available from: http://aclweb.org/anthology/N19-1062

Schlesinger A, O’Hara KP, Taylor AS. Let’s talk about race: identity, chatbots, and AI. In: Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems [Internet]. Montreal QC Canada: ACM; 2018 [cited 2023 Dec 8]. p. 1–14. Available from: https://doi.org/10.1145/3173574.3173889

Diaz M, Johnson I, Lazar A, Piper AM, Gergle D. Addressing age-related bias in sentiment analysis. In: Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems [Internet]. Montreal QC Canada: ACM; 2018 [cited 2023 Dec 8]. p. 1–14. Available from: https://doi.org/10.1145/3173574.3173986

Ghai B, Hoque MN, Mueller K. WordBias: an interactive visual tool for discovering intersectional biases encoded in word embeddings. In: Extended Abstracts of the 2021 CHI Conference on Human Factors in Computing Systems [Internet]. Yokohama Japan: ACM; 2021 [cited 2023 Dec 8]. p. 1–7. Available from: https://doi.org/10.1145/3411763.3451587

Seaborn K, Chandra S, Fabre T. Transcending the “male code”: implicit masculine biases in NLP contexts. In: Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems [Internet]. Hamburg Germany: ACM; 2023 [cited 2023 Dec 8]. p. 1–19. Available from: https://doi.org/10.1145/3544548.3581017

Rideout V, Fox S. Digital health practices, social media use, and mental well-being among teens and young adults in the US. Artic Abstr Rep. 2018;1093.

Gámez-Guadix M, Incera D. Homophobia is online: sexual victimization and risks on the internet and mental health among bisexual, homosexual, pansexual, asexual, and queer adolescents. Comput Hum Behav. 2021;119:106728.

Lucassen M, Samra R, Iacovides I, Fleming T, Shepherd M, Stasiak K, et al. How LGBT+ young people use the internet in relation to their mental health and envisage the use of e-therapy: exploratory study. JMIR Serious Games. 2018;6(4):e11249.

Scandurra C, Mezza F, Esposito C, Vitelli R, Maldonato NM, Bochicchio V, et al. Online sexual activities in Italian older adults: the role of gender, sexual orientation, and permissiveness. Sex Res Soc Policy. 2022;19(1):248–63.

Gewirtz-Meydan A, Opuda E, Ayalon L. Sex and love among older adults in the digital world: a scoping review. Gerontologist. 2023;63(2):218–30.

Masina F, Orso V, Pluchino P, Dainese G, Volpato S, Nelini C, et al. Investigating the accessibility of voice assistants with impaired users: mixed methods study. J Med Internet Res. 2020;22(9):e18431.

Pradhan A, Mehta K, Findlater L. “Accessibility came by accident”: use of voice-controlled intelligent personal assistants by people with disabilities. In: Proceedings of the 2018 CHI Conference on Human Factors in Computing Systems [Internet]. Montreal QC Canada: ACM; 2018 [cited 2023 Dec 9]. p. 1–13. Available from: https://dl.acm.org/doi/10.1145/3173574.3174033

Salemi A, Mysore S, Bendersky M, Zamani H. LaMP: when large language models meet personalization. 2023 [cited 2023 Dec 9]; Available from: https://arxiv.org/abs/2304.11406

Gampe A, Zahner-Ritter K, Müller JJ, Schmid SR. How children speak with their voice assistant Sila depends on what they think about her. Comput Hum Behav. 2023;143:107693.

Hoegen R, Aneja D, McDuff D, Czerwinski M. An end-to-end conversational style matching agent. In: Proceedings of the 19th ACM International Conference on Intelligent Virtual Agents [Internet]. Paris France: ACM; 2019 [cited 2023 Dec 9]. p. 111–8. Available from: https://doi.org/10.1145/3308532.3329473

Srivastava S, Theune M, Catala A. The role of lexical alignment in human understanding of explanations by conversational agents. In: Proceedings of the 28th International Conference on Intelligent User Interfaces [Internet]. Sydney NSW Australia: ACM; 2023 [cited 2023 Dec 9]. p. 423–35. Available from: https://doi.org/10.1145/3581641.3584086

Thomas P, McDuff D, Czerwinski M, Craswell N. Expressions of style in information seeking conversation with an agent. In: Proceedings of the 43rd International ACM SIGIR Conference on Research and Development in Information Retrieval [Internet]. Virtual Event China: ACM; 2020 [cited 2023 Dec 9]. p. 1171–80. Available from: https://doi.org/10.1145/3397271.3401127

Prakash AV, Das S. Intelligent conversational agents in mental healthcare services: a thematic analysis of user perceptions. Pac Asia J Assoc Inf Syst. 2023;12(2):1.

Verma P. They fell in love with AI bots. A software update broke their hearts. The Washington Post [Internet]. 2023 Mar 30; Available from: https://www.washingtonpost.com/technology/2023/03/30/replika-ai-chatbot-update/

Cowan BR, Pantidi N, Coyle D, Morrissey K, Clarke P, Al-Shehri S, et al. “What can I help you with?”: infrequent users’ experiences of intelligent personal assistants. In: Proceedings of the 19th International Conference on Human-Computer Interaction with Mobile Devices and Services [Internet]. Vienna Austria: ACM; 2017 [cited 2023 Jun 28]. p. 1–12. Available from: https://doi.org/10.1145/3098279.3098539

Rheu M, Shin JY, Peng W, Huh-Yoo J. Systematic review: trust-building factors and implications for conversational agent design. Int J Human-Computer Interact. 2021;37(1):81–96.

Wienrich C, Reitelbach C, Carolus A. The trustworthiness of voice assistants in the context of healthcare investigating the effect of perceived expertise on the trustworthiness of voice assistants, providers, data receivers, and automatic speech recognition. Front Comput Sci. 2021;17(3):685250.

Common Sense Media. What’s that you say? Smart speakers and voice assistants toplines. 2019; Available from: https://www.commonsensemedia.org/sites/default/files/research/report/2019_cs-sm_smartspeakers-toplines_final-release.pdf

Szczuka JM, Strathmann C, Szymczyk N, Mavrina L, Krämer NC. How do children acquire knowledge about voice assistants? A longitudinal field study on children’s knowledge about how voice assistants store and process data. Int J Child-Comput Interact. 2022;33:100460.

Funding

Open Access funding enabled and organized by Projekt DEAL.

Ethics declarations

Conflict of Interest

The authors declare no competing interests.

Human and Animal Rights and Informed Consent

This article does not contain any studies with human or animal subjects performed by any of the authors.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Szczuka, J.M., Mühl, L. The Usage of Voice in Sexualized Interactions with Technologies and Sexual Health Communication: An Overview. Curr Sex Health Rep 16, 47–57 (2024). https://doi.org/10.1007/s11930-024-00383-4

DOI: https://doi.org/10.1007/s11930-024-00383-4