Abstract

This paper discusses closed-loop identification of unstable systems. In particular, we apply the joint input–output identification method, which converts the identification problem of an unstable system into that of stable systems; the latter can be tackled by kernel-based regularization methods. We propose to identify two transfer functions by kernel regularization: the one from the reference signal to the input, and the one from the reference signal to the output. Since these transfer functions are stable, kernel regularization methods can construct accurate models of them. The model of the unstable system is then constructed as the ratio of these two transfer functions. The effectiveness of the proposed method is demonstrated by a numerical example and a practical experiment with a DC motor.

1 Introduction

A finite impulse response (FIR) model is a reasonable choice for identifying linear time-invariant stable discrete-time systems, since it is linear in the parameters and can approximate any such system by increasing the number of parameters (see, e.g., Sect. 3.3 of [1]). The main drawback of the FIR model is overfitting: when the amount of data is small, the least squares estimate is significantly affected by noise. To avoid overfitting, kernel regularization methods have been proposed and have attracted much attention in recent years [1,2,3,4,5]. Kernel regularization methods estimate the FIR parameters through regularized least squares, where the regularization term is designed based on a priori knowledge of the system, e.g., stability [1, 2], McMillan degree [6], relative degree [7, 8], or frequency response [9, 10].

One of the important challenges in kernel regularization is the identification of unstable systems. In general, the FIR model is not suitable for unstable systems because their impulse responses diverge; thus, kernels designed for stable systems [11, 12] are not appropriate for unstable systems.

This paper proposes a simple and effective way to identify unstable systems with kernel regularization. The main idea comes from the joint input–output identification approach (see, e.g., Sect. 13.5 of [13]). We consider a closed-loop system stabilized by a controller and first identify two stable transfer functions, one from the reference to the input and one from the reference to the output. The unstable target system is then estimated as the ratio of these two transfer functions. Note that these two transfer functions are stable whenever the controller stabilizes the target system, and thus kernel regularization is applicable.

The advantages of the proposed method over typical joint input–output identification are as follows.

-

Compared with parametric approaches to joint input–output identification, exact knowledge of the structure of the target transfer function is not required.

-

Compared with the case where the two transfer functions are estimated by least squares with FIR models, kernel regularization improves identification accuracy.

A preliminary version of this paper was presented at the SICE Annual Conference 2022 [14]. The main differences between the preliminary version and this paper are that (1) the main part of the paper (Sect. 4) is significantly revised, (2) the target in the numerical simulation is changed to a more complicated one, and (3) a practical experiment is added.

Notation The set of real numbers is denoted by \({\mathbb {R}}\). The transpose of a matrix A is denoted by \(A^\textrm{T}\). In this paper, z denotes the complex frequency in the z-transform. The discrete convolution between two time series \(g_t\) and \(u_t\) (with \(g_t=u_t=0\) for \(t<0\)), i.e., \(\sum _{i=0}^{\infty } g_i u_{t-i}\), is denoted by \(g_t * u_t\). We also use this notation for truncated time series: if \(g_t=0\) for \(t\ge m\), then \(g_t*u_t=\sum _{i=0}^{m-1} g_i u_{t-i}\).
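The truncated convolution can be checked numerically. The sketch below (Python with NumPy; the function names are hypothetical) computes \(g_t*u_t\) directly and through an \(N\times m\) lower-triangular Toeplitz matrix, the matrix form on which regularized least squares estimators are usually built. Both agree with NumPy's full convolution truncated to the first N samples.

```python
import numpy as np


def truncated_convolution(g, u):
    """g_t * u_t = sum_{i=0}^{m-1} g_i u_{t-i} for t = 0..N-1, with u_t = 0 for t < 0."""
    g = np.asarray(g, dtype=float)
    u = np.asarray(u, dtype=float)
    y = np.zeros(len(u))
    for t in range(len(u)):
        for i in range(min(len(g), t + 1)):
            y[t] += g[i] * u[t - i]
    return y


def toeplitz_regressor(u, m):
    """N x m matrix Phi with Phi[t, i] = u_{t-i} (zero for t < i), so that Phi @ g = g_t * u_t."""
    u = np.asarray(u, dtype=float)
    N = len(u)
    if m > N:
        raise ValueError("this sketch assumes m <= N")
    Phi = np.zeros((N, m))
    for i in range(m):
        Phi[i:, i] = u[:N - i]
    return Phi


g = np.array([1.0, 0.5, 0.25])
u = np.array([1.0, 2.0, 0.0, -1.0, 3.0])
y = truncated_convolution(g, u)  # [1.0, 2.5, 1.25, -0.5, 2.5]
```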

2 Problem setting

This paper focuses on a single-input single-output linear discrete-time system G(z). The system G(z) can be unstable, i.e., G(z) may have poles outside the unit circle in the complex plane. We consider the closed-loop setting illustrated in Fig. 1.

Here, \(u_t, y_t, r_t\) and \(\varepsilon _t\) denote the input, output, reference and observation noise at time step t, respectively. The controller K(z) is assumed to stabilize the system, i.e., the poles of \(\frac{G(z)K(z)}{1+G(z)K(z)}\) lie inside the unit circle.

Based on these assumptions, this paper discusses the following problem.

Problem 1

Assume that the data \(\{(r_t, u_t, y_t)\}_{t=0}^{N-1}\) generated from the system illustrated in Fig. 1 are given. Estimate the frequency response of the system, i.e., \(G(e^{ {\mathrm i}\omega })\).

Remark 1

This paper assumes \(r_t=0\) for \(t<0\) for ease of notation. The result can be extended to the case with \(r_t\ne 0\) for \(t<0\) by revising the matrix R defined later.

3 Brief introduction of kernel regularization

This section briefly summarizes kernel regularization methods.

Since kernel regularization is usually formulated for open-loop experiments, we consider the system illustrated in Fig. 2. To avoid confusion, we use separate notation, \(u^o_t, y^o_t,\) and \(\varepsilon _t^o\), for the input, output, and noise at time step t, respectively. The target to be estimated is denoted by H(z), its impulse response is denoted by \(h_t\), and we assume \(h_t =0\) for \(t\ge m\). With this notation, the input–output relationship is given by the discrete convolution

$$y^o_t = h_t * u^o_t + \varepsilon ^o_t = \textstyle \sum \limits _{i=0}^{m-1} h_i u^o_{t-i} + \varepsilon ^o_t.$$

The goal of kernel regularization is to estimate \(h_0, \ldots , h_{m-1}\) from \(\{(u_t^o, y_t^o)\}_{t=0}^{N-1}\).

Let

The estimate of impulse response

by kernel regularization is then given as follows.

Here, \(K \in {\mathbb {R}}^{m\times m}\) is a positive definite matrix whose (i, j) element is given by a bivariate function k(i, j). This function k is called a kernel function, and an appropriate kernel design can improve the identification accuracy significantly.
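For reference, a commonly used form of the kernel-regularized FIR estimate (see, e.g., [1, 3]) is sketched below. The stacked vectors \(y^o=[y^o_0,\ldots ,y^o_{N-1}]^\textrm{T}\) and \(h=[h_0,\ldots ,h_{m-1}]^\textrm{T}\), the \(N\times m\) Toeplitz regressor \(\Phi \) whose (t+1, i+1) element is \(u^o_{t-i}\) (zero for \(t<i\)), and the scalar weight \(\gamma >0\) (often taken as the noise variance) are assumed notation, and the exact scaling used in this paper may differ. K denotes the kernel matrix here, not the controller.

$$\hat{h}=\mathop {\textrm{argmin}}\limits _{h\in {\mathbb {R}}^{m}}\ \Vert y^o-\Phi h\Vert ^2+\gamma \, h^\textrm{T}K^{-1}h=\left( \Phi ^\textrm{T}\Phi +\gamma K^{-1}\right) ^{-1}\Phi ^\textrm{T}y^o=K\Phi ^\textrm{T}\left( \Phi K\Phi ^\textrm{T}+\gamma I_N\right) ^{-1}y^o.$$

The last equality follows from \(\left( \Phi ^\textrm{T}\Phi +\gamma K^{-1}\right) K\Phi ^\textrm{T}=\Phi ^\textrm{T}\left( \Phi K\Phi ^\textrm{T}+\gamma I_N\right) \); the first form requires an \(m\times m\) solve and the second an \(N\times N\) one.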

The three most widely used kernels are the tuned-correlated (TC) [1], diagonal-correlated (DC) [1], and stable-spline (SS) [2] kernels, defined as

Here, the pair \((\beta , \alpha )\) (or the triplet \((\beta , \alpha , \rho )\) for the DC kernel) is called the hyperparameter.
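For concreteness, the sketch below builds the three kernel matrices from their standard forms in [1, 2]: \(k_{\textrm{TC}}(i,j)=\beta \alpha ^{\max (i,j)}\), \(k_{\textrm{DC}}(i,j)=\beta \alpha ^{(i+j)/2}\rho ^{|i-j|}\), and \(k_{\textrm{SS}}(i,j)=\beta \left( \frac{\alpha ^{i+j+\max (i,j)}}{2}-\frac{\alpha ^{3\max (i,j)}}{6}\right) \), with \(\beta >0\), \(0<\alpha <1\), and \(|\rho |\le 1\). Indexing i, j from 1 to m and the function names are assumptions made for illustration.

```python
import numpy as np


def tc_kernel(m, beta, alpha):
    """Tuned-correlated: k(i, j) = beta * alpha**max(i, j), i, j = 1..m."""
    idx = np.arange(1, m + 1)
    return beta * alpha ** np.maximum.outer(idx, idx)


def dc_kernel(m, beta, alpha, rho):
    """Diagonal-correlated: k(i, j) = beta * alpha**((i + j) / 2) * rho**|i - j|."""
    idx = np.arange(1, m + 1)
    decay = alpha ** (np.add.outer(idx, idx) / 2)
    corr = rho ** np.abs(np.subtract.outer(idx, idx))
    return beta * decay * corr


def ss_kernel(m, beta, alpha):
    """Stable-spline: k(i, j) = beta * (alpha**(i + j + max(i, j)) / 2 - alpha**(3 max(i, j)) / 6)."""
    idx = np.arange(1, m + 1)
    mx = np.maximum.outer(idx, idx)
    return beta * (alpha ** (np.add.outer(idx, idx) + mx) / 2 - alpha ** (3 * mx) / 6)
```

All three matrices are symmetric and positive definite for these parameter ranges, which is what the regularized estimate requires.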

It should be noted that these kernels are derived for stable systems, i.e., systems with exponentially decaying impulse responses. Therefore, they are not directly applicable to unstable systems.

4 Proposed method

Let us consider the transfer functions from \(r_t\) to \(u_t\) and \(y_t\): \(G_{ru}(z)=\frac{K(z)}{1+G(z)K(z)}\) and \(G_{ry}(z)=\frac{G(z)K(z)}{1+G(z)K(z)}\). Then, the target system G(z) can be computed from these two stable transfer functions as \(G(z)=\frac{G_{ry}(z)}{G_{ru}(z)}\). This idea is used in system identification: G(z) can be estimated by estimating the two transfer functions \(G_{ru}(z)\) and \(G_{ry}(z)\). Such an identification method is called joint input–output identification (see, e.g., Sect. 13.5 of [13]).

Typical difficulties of joint input–output identification are the following:

-

The structure of G(z) is unknown in general. Therefore, if we employ a parametric approach, selecting the structures of \(G_{ru}(z)\) and \(G_{ry}(z)\) is itself a difficult task.

-

If we employ a nonparametric approach, overfitting arises, especially when the amount of data is limited.

To overcome these difficulties, this paper proposes to combine joint input–output identification with kernel regularization. Note that the transfer functions \(G_{ru}(z)\) and \(G_{ry}(z)\) are stable since K(z) stabilizes the closed-loop system; thus, kernel regularization is applicable.

Let \(g^{ru}_t\) and \(g^{ry}_t\) be impulse responses of \(G_{ru}(z)\) and \(G_{ry}(z)\), respectively. This paper proposes the following procedure to identify the unstable system G(z).

-

Step 1

Select a sufficiently large natural number \(m\le N\) such that \(g^{ru}_t\) and \(g^{ry}_t\) can be truncated for \(t\ge m\).

-

Step 2

Estimate \(g^{ru}_t\) and \(g^{ry}_t\) (\(0\le t \le m-1\)) by a kernel regularization method. In more detail, let

Then,

where \(K_{ru} \in {\mathbb {R}}^{m\times m}\) and \(K_{ry} \in {\mathbb {R}}^{m\times m}\) are kernel matrices with respect to \(g^{ru}_t\) and \(g^{ry}_t\), respectively, whose (i, j) element is defined by k(i, j).

Remark 2

The hyperparameters of \(K_{ru}\) and \(K_{ry}\) can be different. How to tune them is discussed later in this section.

-

Step 3

Compute the discrete-time Fourier transforms of \(\hat{g}^{ru}_t\) and \(\hat{g}^{ry}_t\):

$$\hat{G}_{ru}(e^{{\mathrm i}\omega })=\textstyle \sum \limits _{j=0}^{m-1} \hat{g}^{ru}_j e^{-{\mathrm i}j \omega }, \tag{12}$$

$$\hat{G}_{ry}(e^{{\mathrm i}\omega })=\textstyle \sum \limits _{j=0}^{m-1} \hat{g}^{ry}_j e^{-{\mathrm i}j \omega }. \tag{13}$$

Construct \(\hat{G}(e^{{\mathrm i}\omega })\) as \(\frac{\hat{G}_{ry}(e^{{\mathrm i}\omega })}{\hat{G}_{ru}(e^{{\mathrm i}\omega })}\).
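Steps 1–3 can be sketched in Python (NumPy) as below. This is a minimal illustration under assumptions of our own, not the authors' implementation: a hypothetical unstable plant \(G(z)=1/(z-1.2)\), a static controller \(K(z)=0.7\) acting on \(r_t-y_t\) (so that \(G_{ru}(z)=0.7(z-1.2)/(z-0.5)\) and \(G_{ry}(z)=0.7/(z-0.5)\) are stable), white noise added to the measured output, a TC kernel with fixed hyperparameters, and the regularized least squares form with weight \(\gamma \) equal to the noise variance. All function names are hypothetical.

```python
import numpy as np


def tc_kernel(m, beta, alpha):
    """TC kernel matrix k(i, j) = beta * alpha**max(i, j), i, j = 1..m."""
    idx = np.arange(1, m + 1)
    return beta * alpha ** np.maximum.outer(idx, idx)


def simulate_closed_loop(r, k_ctrl, a, sigma, rng):
    """Hypothetical plant G(z) = 1/(z - a) (unstable for |a| > 1) under the static
    controller u_t = k_ctrl (r_t - y_t); y_t = x_t + eps_t is the measured output."""
    N = len(r)
    u, y = np.zeros(N), np.zeros(N)
    x_prev, u_prev = 0.0, 0.0
    for t in range(N):
        x = a * x_prev + u_prev                  # x_t = a x_{t-1} + u_{t-1}
        y[t] = x + sigma * rng.standard_normal()
        u[t] = k_ctrl * (r[t] - y[t])
        x_prev, u_prev = x, u[t]
    return u, y


def estimate_fir(r, s, m, kernel, gamma):
    """Step 2: argmin_g ||s - Phi g||^2 + gamma g^T K^{-1} g, with Phi the N x m
    Toeplitz matrix of r (r_t = 0 for t < 0). Solved as
    (K Phi^T Phi + gamma I) g = K Phi^T s, which avoids inverting K."""
    N = len(r)
    Phi = np.zeros((N, m))
    for i in range(m):
        Phi[i:, i] = r[:N - i]
    A = kernel @ Phi.T @ Phi + gamma * np.eye(m)
    return np.linalg.solve(A, kernel @ Phi.T @ s)


def dtft(g, omega):
    """Step 3: sum_{j=0}^{m-1} g_j exp(-i j omega) for each omega."""
    j = np.arange(len(g))
    return np.exp(-1j * np.outer(omega, j)) @ g


rng = np.random.default_rng(0)
N, m, k_ctrl, a, sigma = 1000, 30, 0.7, 1.2, 0.005
r = rng.standard_normal(N)
u, y = simulate_closed_loop(r, k_ctrl, a, sigma, rng)

K_tc = tc_kernel(m, beta=1.0, alpha=0.8)       # Step 1: m chosen so g_t ~ 0 for t >= m
g_ru = estimate_fir(r, u, m, K_tc, sigma**2)    # Step 2: reference -> input
g_ry = estimate_fir(r, y, m, K_tc, sigma**2)    # Step 2: reference -> output

omega = np.linspace(0.05, np.pi, 50)
G_hat = dtft(g_ry, omega) / dtft(g_ru, omega)   # Step 3: ratio of the two DTFTs
G_true = 1.0 / (np.exp(1j * omega) - a)
```

Because \(\hat{G}_{ry}\) is divided by \(\hat{G}_{ru}\), the ratio is well conditioned only where \(\hat{G}_{ru}(e^{{\mathrm i}\omega })\) stays away from zero; in this example \(|G_{ru}(e^{{\mathrm i}\omega })|\ge 0.28\), attained at \(\omega =0\).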

The main advantages of the proposed method are as follows:

-

The structure of G(z) need not be known.

-

Thanks to kernel regularization, the identification accuracy is improved compared with the least squares estimate, especially when data are scarce.

The remaining problem is how to tune the hyperparameters of \(K_{ru}\) and \(K_{ry}\). To this end, the effect of the observation noise \(\varepsilon _t\) should be noted. From Fig. 1, the relations between \(r_t\) and \(u_t, y_t\) are given as

with a slight abuse of notation.Footnote 1 This shows that, when the relations between \(r_t\) and \(u_t, y_t\) are considered, the observation noise is filtered by unknown transfer functions and is therefore colored. Consequently, standard hyperparameter tuning methods such as empirical Bayes cannot be used directly, since they assume white observation noise.Footnote 2
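As an illustration, suppose Fig. 1 has the structure \(u_t=K(z)(r_t-y_t)\) and \(y_t=G(z)u_t+\varepsilon _t\); this structure is our assumption, chosen because it is consistent with the expressions for \(G_{ru}(z)\) and \(G_{ry}(z)\) given earlier in this section. Eliminating the loop then gives

$$\begin{aligned} u_t&=K(z)\left( r_t-G(z)u_t-\varepsilon _t\right) \ \Longrightarrow \ u_t=G_{ru}(z)r_t-G_{ru}(z)\varepsilon _t,\\ y_t&=G(z)u_t+\varepsilon _t=G_{ry}(z)r_t+\left( 1-G_{ry}(z)\right) \varepsilon _t=G_{ry}(z)r_t+\frac{1}{1+G(z)K(z)}\varepsilon _t, \end{aligned}$$

where \(G(z)G_{ru}(z)=G_{ry}(z)\) was used. Even if \(\varepsilon _t\) is white, the noise terms \(-G_{ru}(z)\varepsilon _t\) and \(\frac{1}{1+G(z)K(z)}\varepsilon _t\) are shaped by filters that depend on the unknown G(z), so their spectra are unknown.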

We therefore use validation data to tune the hyperparameters. In the following, \(\rho \) denotes the whole hyperparameter, e.g., \(\rho =(\beta , \alpha )\) for the TC kernel. The estimation of \(g_t^{ru}\) and \(g_t^{ry}\), including hyperparameter tuning, is summarized as follows.

-

Step 0

Collect two data sets with the same fixed controller, \(\{(r_t, u_t, y_t)\}_{t=0}^{N-1}\) and \(\{(\bar{r}_t, \bar{u}_t, \bar{y}_t)\}_{t=0}^{\bar{N}-1}\). Here, \(\bar{r}_t, \bar{u}_t, \bar{y}_t\) are the reference, input, and output signals of the second experiment.

-

Step 1

Generate hyperparameter candidates \(\{\rho _i\}_{i=1}^{N_{\rho }}\).

-

Step 2

For each \(\rho _i\), estimate the impulse responses of \(G_{ru}(z)\) and \(G_{ry}(z)\) from the first data set, and denote them by \(\hat{g}^{ru}_t(\rho _i)\) and \(\hat{g}^{ry}_t(\rho _i)\).

-

Step 3

Compute

$$E_{ru}(\rho _i)=\textstyle \sum \limits _{t=0}^{\bar{N}-1} \left( \bar{u}_t-\hat{g}^{ru}_t(\rho _i)*\bar{r}_t\right) ^2, \tag{16}$$

$$E_{ry}(\rho _i)=\textstyle \sum \limits _{t=0}^{\bar{N}-1} \left( \bar{y}_t-\hat{g}^{ry}_t(\rho _i)*\bar{r}_t\right) ^2. \tag{17}$$

-

Step 4

Select

$$\rho _{ru}^*=\mathop {\textrm{argmin}}\limits _{\rho _i}\ E_{ru}(\rho _i), \tag{18}$$

$$\rho _{ry}^*=\mathop {\textrm{argmin}}\limits _{\rho _i}\ E_{ry}(\rho _i), \tag{19}$$

and return \(\hat{g}_t^{ru}(\rho _{ru}^*)\) and \(\hat{g}_t^{ry}(\rho _{ry}^*)\) as the estimates.
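Steps 0–4 amount to a grid search scored on the second data set. The sketch below is a minimal illustration under our own assumptions: a TC kernel with candidates \(\rho =(\beta ,\alpha )\), the regularized least squares form with unit weight on \(g^\textrm{T}K^{-1}g\) (so that \(\beta \) also sets the regularization strength), and hypothetical function names.

```python
import numpy as np


def tc_kernel(m, beta, alpha):
    """TC kernel matrix k(i, j) = beta * alpha**max(i, j), i, j = 1..m."""
    idx = np.arange(1, m + 1)
    return beta * alpha ** np.maximum.outer(idx, idx)


def estimate_fir(r, s, m, kernel):
    """argmin_g ||s - Phi g||^2 + g^T K^{-1} g (unit weight assumed),
    solved as (K Phi^T Phi + I) g = K Phi^T s."""
    N = len(r)
    Phi = np.zeros((N, m))
    for i in range(m):
        Phi[i:, i] = r[:N - i]
    return np.linalg.solve(kernel @ Phi.T @ Phi + np.eye(m), kernel @ Phi.T @ s)


def validation_error(g, r_val, s_val):
    """Step 3: sum_t (s_val_t - (g * r_val)_t)^2, cf. (16) and (17)."""
    pred = np.convolve(g, r_val)[:len(r_val)]
    return float(np.sum((s_val - pred) ** 2))


def tune_by_validation(r, s, r_val, s_val, m, candidates):
    """Steps 2-4 for one channel: fit on the first data set for every
    candidate (beta, alpha), score on the second, keep the minimizer."""
    estimates, errors = [], []
    for beta, alpha in candidates:
        g = estimate_fir(r, s, m, tc_kernel(m, beta, alpha))
        estimates.append(g)
        errors.append(validation_error(g, r_val, s_val))
    best = int(np.argmin(errors))
    return candidates[best], estimates[best], np.array(errors)
```

Calling `tune_by_validation(r, u, r_bar, u_bar, m, candidates)` returns \(\rho _{ru}^*\) and \(\hat{g}^{ru}_t(\rho _{ru}^*)\); passing the outputs \(y_t\) and \(\bar{y}_t\) instead gives the ry counterparts, so the two channels are tuned independently.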

Remark 3

There are several alternative approaches to the above hyperparameter tuning method such as two-fold cross validation, SURE, or generalized cross validation (see e.g., [15, 16]). As shown in the following examples, however, the above simple tuning method also works well.

5 Numerical example

To demonstrate the effectiveness of the proposed method, this section presents a numerical example with the magnetic levitation model used in [17]:

A stabilizing controller for this system is selected as

The experiment length N and the FIR length m are both set to 255, and an M-sequence (maximum-length sequence) is used as the reference signal \(r_t\). The noise variance is selected so that the SNR is 20 dB. The TC kernel is employed as the kernel function, and the hyperparameter candidates are \(\{\alpha _i\}_{i=1}^{10} \times \{\beta _i\}_{i=1}^{10}\), where \(\alpha _i\) and \(\beta _i\) are logarithmically spaced from 0.8 to 0.99 and from \(10^{-4}\) to \(10^4\), respectively.
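The ingredients of this setting can be generated as follows. This is a sketch under assumptions not stated in the paper: the M-sequence comes from a linear recurrence whose characteristic polynomial \(x^8+x^4+x^3+x^2+1\) is primitive over GF(2), giving period \(2^8-1=255\) (matching N); the SNR is taken as the ratio of the variances of the noise-free output and the noise; and the function names are hypothetical.

```python
import numpy as np


def m_sequence_255():
    """One period (255 samples) of a +/-1 maximum-length sequence from the
    recurrence a_k = a_{k-4} ^ a_{k-5} ^ a_{k-6} ^ a_{k-8}, whose characteristic
    polynomial x^8 + x^4 + x^3 + x^2 + 1 is primitive over GF(2)."""
    a = [1] * 8                       # any nonzero initial state works
    while len(a) < 255:
        k = len(a)
        a.append(a[k - 4] ^ a[k - 5] ^ a[k - 6] ^ a[k - 8])
    return 2.0 * np.array(a) - 1.0    # map {0, 1} -> {-1, +1}


def noise_std_for_snr(clean_output, snr_db):
    """Noise standard deviation such that 10 log10(var(clean) / var(noise)) = snr_db."""
    return np.sqrt(np.var(clean_output) / 10 ** (snr_db / 10))


# Hyperparameter grid {alpha_i} x {beta_i}: 10 x 10 logarithmically spaced values.
alphas = np.geomspace(0.8, 0.99, 10)
betas = np.geomspace(1e-4, 1e4, 10)
candidates = [(beta, alpha) for alpha in alphas for beta in betas]

r = m_sequence_255()
```

A useful property of the M-sequence is its nearly flat spectrum: its circular autocorrelation equals 255 at lag zero and \(-1\) at every other lag, so one period excites all frequencies almost equally.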

Based on the above setting, we repeated the identification 30 times with independent noise realizations.

Figures 3 and 4 show the impulse responses of \(G_{ru}(z)\) and \(G_{ry}(z)\), respectively, together with their estimates. The horizontal and vertical axes show time and impulse response, respectively.Footnote 3 The 30 estimates are shown with gray lines, and the true responses with red lines.

We have several observations from these figures.

-

The behavior of these impulse responses is complicated; thus, a parametric approach to joint input–output identification is not easy unless the structure of P is known.Footnote 4

-

Although the impulse responses behave in a complicated way, the estimates approximate the true ones well thanks to kernel regularization.

-

The convergence rates and scales of the impulse responses of \(G_{ru}(z)\) and \(G_{ry}(z)\) differ. Hence, it is natural to tune the hyperparameters of \(K_{ru}\) and \(K_{ry}\) separately.

Based on these estimates, we computed \(\hat{G}(e^{{\mathrm i}\omega })\).

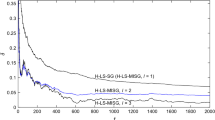

Figure 5 shows the estimated Bode diagram. The horizontal axes show the frequency in rad/sample, and the vertical axes show the gain and phase, respectively. The red lines show the true responses, and the gray lines show the 30 estimated responses. We can confirm that the estimates approximate the true response well.

This result suggests the following advantage of the proposed method.

-

Although the proposed method is nonparametric, with the number of parameters m equal to the number of data N, the estimates approximate the true response well; that is, overfitting is avoided.

-

The proposed method can identify an unstable system using kernel regularization, which was developed for stable systems.

-

Unlike [18], the reference signal does not need to be periodic.

To show the effectiveness of the proposed method more clearly, we compare the results with a parametric approach to joint input–output identification. We used the tfest function in the MATLAB System Identification Toolbox (ver. 9.14). The numbers of poles of \(G_{ru}(z)\) and \(G_{ry}(z)\) are set to 6 and 7, and the numbers of zeros to 5 and 6, respectively. Note that these are the true values.

Figures 6 and 7 show the impulse responses of \(G_{ru}(z)\) and \(G_{ry}(z)\), respectively, estimated with the parametric approach. The axes and lines are defined as in Figs. 3 and 4. We can see that \(G_{ru}(z)\) is approximated well, but \(G_{ry}(z)\) is not.

Figure 8 shows the frequency response estimated with the parametric approach. The axes and lines are defined as in Fig. 5. Since the models are parametric, the frequency responses are smooth. However, the identification accuracy is worse than that of the proposed method, especially in the low-frequency range.

Table 1 shows the mean, variance, and median of the Fit values of the estimated impulse responses, where Fit is defined by

where \(\hat{g}^{\bullet }_t\) and \(g^{\bullet }_t\) are the estimated and true impulse responses, respectively, and \(\bullet \) denotes either ru or ry. The table indicates that, in this case, the proposed method estimates the impulse responses with smaller variance than the parametric approach. In particular, \(G_{ry}\) is estimated much better by the proposed method than by the parametric approach.
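As an illustration, the sketch below assumes a Fit definition common in the kernel regularization literature, \(\textrm{Fit}=100\left( 1-\Vert \hat{g}^{\bullet }-g^{\bullet }\Vert /\Vert g^{\bullet }-\bar{g}^{\bullet }\Vert \right) \) with \(\bar{g}^{\bullet }\) the mean of \(g^{\bullet }_t\), together with the summary statistics reported in Table 1. The paper's exact definition, and whether its variance is the population or sample variance, may differ; the function names are hypothetical.

```python
import numpy as np


def fit_percent(g_hat, g_true):
    """Assumed Fit definition: 100 * (1 - ||g_hat - g|| / ||g - mean(g)||).
    A perfect estimate gives 100; very poor estimates (e.g., overfitted ones)
    give large negative values."""
    g_hat = np.asarray(g_hat, dtype=float)
    g_true = np.asarray(g_true, dtype=float)
    return 100.0 * (1.0 - np.linalg.norm(g_hat - g_true)
                    / np.linalg.norm(g_true - g_true.mean()))


def summarize_fits(fits):
    """Mean, variance (population, ddof=0 assumed), and median over repeated runs."""
    fits = np.asarray(fits, dtype=float)
    return fits.mean(), fits.var(), np.median(fits)
```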

These results show the effectiveness of the proposed method: even when the structure of the target system is known, the proposed method achieves slightly better identification accuracy than the parametric approach. As mentioned above, the structure of the target system itself is often unknown in practice, which further underlines the importance of the proposed method.

Remark 4

Since \(N=m\) and the SNR is 20 dB, the least squares method overfits. Its Fit values are on the order of \(10^{20}\) in magnitude and cannot be plotted on the same scale, so we omit the least squares results.

6 Practical experiment

To demonstrate the effectiveness of the proposed method, we show a practical experiment with a DC motor in this section.

The target system is the Quanser QUBE Servo 2 shown in Fig. 9. The input is the voltage, and the output is the angular velocity. The sampling time is 0.002 s. We attached a 15 g load to the motor and consider a model around the inverted position so that the system is unstable.

To describe the experimental setup clearly, we define \(\theta \) as the angle of the motor measured from the upward direction, as shown in Fig. 10. With this notation, our goal is to model the behavior of \(\dot{\theta }\) around \(\theta =0\).

To achieve this, we employed the closed-loop system shown in Fig. 11. The block \(\frac{1}{z-1}\) represents the integrator. Although the output \(y_t\) is the angular velocity, the reference \(r_t\) is given for the angle. Note that the proposed method can also be used in this setting, since \(G_{ru}(z)\) and \(G_{ry}(z)\) become \(\frac{K(z)}{1+P(z)K(z)\frac{1}{z-1}}\) and \(\frac{P(z)K(z)}{1+P(z)K(z)\frac{1}{z-1}}\), respectively, and their ratio \(\frac{G_{ry}(z)}{G_{ru}(z)}\) still equals the plant P(z), which plays the role of G(z) in this setting.

Based on the above setting, we collected the input/output data. We first set \(\theta =\frac{1}{16}\pi \) and then set \(\theta =0\).

We applied the proposed method with \(N=m=750\). The TC kernel is employed as the kernel function, and the hyperparameter candidates are the same as those used in the simulation. Figures 12 and 13 show the measured input and output, respectively, together with those reproduced from \(\hat{g}_t^{ru}\) and \(\hat{g}^{ry}_t\). The solid lines show the measured signals, and the broken lines show the reproduced ones. These results indicate that the estimated impulse responses reproduce the measured signals well.

Figures 14 and 15 show the estimated impulse responses of \(G_{ru}(z)\) and \(G_{ry}(z)\), respectively. Thanks to kernel regularization, the estimates appear to avoid overfitting.

Figure 16 shows the Bode diagram of the estimated target transfer function. The horizontal axis shows the frequency in Hz. The gain diagram suggests that the system exhibits a high-frequency decay of 20 dB/dec. This is reasonable, since (stable) DC motors are often modeled as \(\frac{b}{s+a}\), where s denotes the complex frequency in the Laplace transform.
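A quick check of the 20 dB/dec slope, assuming the first-order model \(\frac{b}{s+a}\) with real a and \(b>0\): on the imaginary axis \(s={\mathrm i}\omega \),

$$\left| \frac{b}{{\mathrm i}\omega +a}\right| =\frac{b}{\sqrt{\omega ^2+a^2}}\approx \frac{b}{\omega }\quad (\omega \gg |a|),\qquad 20\log _{10}\frac{b}{10\,\omega }=20\log _{10}\frac{b}{\omega }-20,$$

so each tenfold increase in frequency lowers the gain by 20 dB. The slope is the same whether frequency is measured in rad/s or Hz, and since the magnitude depends on a only through \(a^2\), the same asymptote holds for an unstable first-order model with \(a<0\).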

From the above result, the effectiveness of the proposed method is experimentally validated.

7 Conclusion

This paper proposes an identification procedure for unstable systems with kernel regularization. In particular, the proposed method employs the joint input–output identification framework: it estimates two transfer functions, from the reference signal to the input and to the output, and then constructs the model of the system from these two models. A numerical example is shown to demonstrate the effectiveness of the proposed method. In particular, the proposed method gives slightly better estimates than the parametric approach even when the latter is applicable, i.e., when the true structure of the system is known. When the structure is unknown, the proposed method is expected to perform much better than the parametric approach. In addition to the simulation, a practical experiment with a DC motor is also presented.

Estimation of a noise model is left for future work.

Notes

We consider \(G_{ru}, G_{ry}, G, K\) as transfer functions defined with time shift operator.

Strictly speaking, empirical Bayes is applicable if the correlation of the noise is known. In our setting, however, it is unknown, which makes empirical Bayes hyperparameter tuning hard to employ.

In fact, as shown later, the parametric approach is difficult even when the structure of P is known.

References

[1] Chen, T., Ohlsson, H., & Ljung, L. (2012). On the estimation of transfer functions, regularizations and Gaussian processes-Revisited. Automatica, 48(8), 1525–1535.

[2] Pillonetto, G., & De Nicolao, G. (2010). A new kernel-based approach for linear system identification. Automatica, 46(1), 81–93.

[3] Pillonetto, G., Dinuzzo, F., Chen, T., De Nicolao, G., & Ljung, L. (2014). Kernel methods in system identification, machine learning and function estimation: A survey. Automatica, 50(3), 657–682.

[4] Dinuzzo, F. (2015). Kernels for linear time invariant system identification. SIAM Journal on Control and Optimization, 53(5), 3299–3317.

[5] Pillonetto, G., Chen, T., Chiuso, A., De Nicolao, G., & Ljung, L. (2022). Regularized System Identification: Learning Dynamic Models from Data. Cham: Springer.

[6] Prando, G., & Chiuso, A. (2015). Model reduction for linear Bayesian System Identification. In Proceedings of the 54th IEEE Conference on Decision and Control, pp. 2121–2126. Osaka, Japan.

[7] Fujimoto, Y., Maruta, I., & Sugie, T. (2017). Extension of First-Order Stable Spline Kernel to Encode Relative Degree. In Proceedings of the 20th IFAC World Congress, pp. 15481–15486. Toulouse, France.

[8] Fujimoto, Y., & Chen, T. (2019). On the coordinate change to the first-order spline kernel for regularized impulse response estimation. arXiv:1901.10835

[9] Fujimoto, Y. (2020). Kernel regularization in frequency domain: Encoding high-frequency decay property. IEEE Control Systems Letters, 5(1), 367–372.

[10] Fujimoto, Y. (2021). Kernel regularization for low-frequency decay systems. In Proceedings of the 60th IEEE Conference on Decision and Control, pp. 3018–3023. IEEE.

[11] Bisiacco, M., & Pillonetto, G. (2020). Kernel absolute summability is sufficient but not necessary for RKHS stability. SIAM Journal on Control and Optimization, 58(4), 2006–2022.

[12] Bisiacco, M., & Pillonetto, G. (2020). On the mathematical foundations of stable RKHSs. Automatica, 118, 109038.

[13] Ljung, L. (1999). System Identification: Theory for the User (2nd ed.). New Jersey: Prentice Hall.

[14] Fujimoto, Y., & Sugie, T. (2022). Joint input-output identification of unstable systems with Kernel regularization. In Proceedings of the SICE Annual Conference 2022, pp. 679–681.

[15] Mu, B., Chen, T., & Ljung, L. (2018). On asymptotic properties of hyperparameter estimators for kernel-based regularization methods. Automatica, 94, 381–395.

[16] Ju, Y., Chen, T., Mu, B., & Ljung, L. (2021). On asymptotic distribution of generalized cross validation hyper-parameter estimator for regularized system identification. In Proceedings of the 60th IEEE Conference on Decision and Control (CDC), pp. 1598–1602. IEEE.

[17] Maruta, I., & Sugie, T. (2022). Closed-loop subspace identification for stable/unstable systems using data compression and nuclear norm minimization. IEEE Access, 10, 21412–21423.

[18] Fujimoto, Y. (2022). Kernel-based regularization for unstable systems. In Proceedings of the 61st IEEE Conference on Decision and Control, pp. 209–214. Cancún, Mexico.

Additional information

This work was supported by JSPS KAKENHI (Nos. 22K14281, 22H01653).

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Fujimoto, Y., Sugie, T. Joint input–output identification of unstable systems with kernel regularization. Control Theory Technol. 22, 195–202 (2024). https://doi.org/10.1007/s11768-024-00216-8
