ABSTRACT

BACKGROUND

There have been no prior population-based studies of variation in performance of hospitalists.

OBJECTIVE

To measure the variation in performance of hospitalists.

DESIGN

Retrospective research design of 100 % Texas Medicare data using multilevel, multivariable models.

SUBJECTS

131,710 hospitalized patients cared for by 1,099 hospitalists in 268 hospitals from 2006–2009.

MAIN MEASURES

For each hospitalist, we calculated their patients' average length of stay, rate of discharge home or to a skilled nursing facility (SNF), and rates of 30-day mortality, readmission and emergency room (ER) visits, each adjusted for patient and disease factors (case mix).

KEY RESULTS

In two-level models (admission and hospitalist), there was significant variation in average length of stay and discharge location among hospitalists, but very little variation in 30-day mortality, readmission or emergency room visit rates. There was stability over time (2008–2009 vs. 2006–2007) in hospitalist performance. In three-level models including admissions, hospitalists and hospitals, the variation among hospitalists was substantially reduced. For example, hospitals, hospitalists and case mix contributed 1.02 %, 0.75 % and 42.15 % of the total variance in 30-day mortality rates, respectively.

CONCLUSIONS

There is significant variation among hospitalists in length of stay and discharge destination of their patients, but much of the variation is attributable to the hospitals where they practice. The very low variation among hospitalists in 30-day readmission rates suggests that hospitalists are not important contributors to variations in those rates among hospitals.

Hospitalists are physicians who specialize in the care of hospitalized patients. There are advantages and disadvantages to the “hospitalist” model. The potential advantages stem from greater efficiency and expertise from physicians concentrating just on inpatient care.1–5 The potential disadvantages derive from discontinuities in care: the unfamiliarity of the hospitalist with the patient and the communication errors that might occur during transitions from outpatient to inpatient, and vice versa, between different physicians.6–16 The negative impact of discontinuity on quality of care may be greater in the elderly.

We have used 5 % national Medicare data to describe the growth of hospitalists from 1996 through 2006,17,18 to evaluate the association of care by hospitalists with length of stay,17–20 to assess how the impact of hospitalists varies by patient and hospital characteristics,18 to examine how hospitalist care affects continuity of care,21–23 to describe the growing role of hospitalists in caring for surgical patients,24 and to describe the outcomes of hospitalist care.19,20,23,25 We found that hospitalist care was associated with shorter length of stay and lower hospital costs, but with higher medical costs post-discharge.19,20 In addition, patients receiving hospitalist care were less likely to be discharged to their homes and more likely to be seen in an emergency room (ER) in the 30 days after discharge.19,20

Variation in outcomes and quality of care that cannot be explained by illness severity or patient preference, or “unwarranted variation”, indicates an opportunity to decrease the cost or improve the effectiveness of healthcare.26–29 There is a substantial literature demonstrating that quality and outcomes of medical care vary among providers, and that this can be measured.30–34 However, to our knowledge, there have been no prior studies of variation in care among hospitalists. For example, are there significant, reproducible differences among hospitalists in the length of stay of their patients, in the percent of patients who are discharged home compared to a skilled nursing facility (SNF), or in 30-day readmission rates? What are the relative contributions of hospitalists, hospitals and patient case mix to readmission rates and other measures? In this report, we use 100 % Texas Medicare data to study 1,099 hospitalists practicing at 268 hospitals in Texas, and use multilevel models to study variation in length of stay and outcomes of care at the level of the individual hospitalist.

METHODS

Source of Data

Claims from the years 2005 to 2009 for 100 % of Texas Medicare beneficiaries were used, including Medicare beneficiary summary files, Medicare Provider Analysis and Review (MedPAR) files, Outpatient Standard Analytical Files (OutSAF), and Medicare Carrier files. Information associated with diagnosis-related groups (DRGs), including weights, Major Diagnostic Categories (MDC), and geometric mean length of stay, was obtained from the Centers for Medicare & Medicaid Services (https://www.cms.gov/Medicare/Medicare-Fee-for-Service-Payment/AcuteInpatientPPS/index.html).

Identification of Hospitalists

Hospitalists are defined as generalist physicians (general practitioner, family physician, internist or geriatrician) who had at least 100 evaluation-and-management (E&M) billings in a given year and generated at least 90 % of their total E&M billings in that year from inpatient services.17 Inpatient E&M billings were identified by Current Procedural Terminology (CPT) codes 99221-99223, 99231-99233 and 99251-99255. Outpatient E&M billings were identified by CPT codes 99201-99205, 99211-99215 and 99241-99245 from Carrier files.17 In sensitivity analyses, we varied the minimum number of E&M billings required for identification of hospitalists, and also the percentage of those bills from inpatient services. This had a relatively small effect on the number of hospitalists identified. For example, raising the number of E&M charges to 200 from 100 decreased the number of hospitalists identified from 1,099 to 1,068, while reducing the percentage of E&M charges from 90 % to 75 % increased the number from 1,099 to 1,123.
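The identification rule above can be illustrated with a minimal Python sketch. It assumes a hypothetical input layout (one list of integer CPT codes per generalist physician per year) and assumes that "total E&M billings" means the sum of the inpatient and outpatient code ranges quoted in the text; the function and parameter names are illustrative and are not taken from the study's actual programs.

```python
# CPT code ranges quoted in the text for inpatient and outpatient E&M billings.
INPATIENT_RANGES = [(99221, 99223), (99231, 99233), (99251, 99255)]
OUTPATIENT_RANGES = [(99201, 99205), (99211, 99215), (99241, 99245)]


def _in_ranges(code, ranges):
    """Return True if the CPT code falls inside any (low, high) range."""
    return any(low <= code <= high for low, high in ranges)


def is_hospitalist(cpt_codes, min_billings=100, min_inpatient_share=0.90):
    """Classify one generalist physician from one year of E&M CPT codes.

    cpt_codes is a hypothetical input: an iterable of integer CPT codes.
    Assumption: total E&M billings = inpatient + outpatient codes above.
    """
    codes = list(cpt_codes)
    inpatient = sum(1 for c in codes if _in_ranges(c, INPATIENT_RANGES))
    outpatient = sum(1 for c in codes if _in_ranges(c, OUTPATIENT_RANGES))
    total = inpatient + outpatient
    if total < min_billings:
        return False
    return inpatient / total >= min_inpatient_share


# Illustrative physician: 95 inpatient and 5 outpatient E&M billings.
print(is_hospitalist([99232] * 95 + [99213] * 5))
```

Passing `min_billings=200` or `min_inpatient_share=0.75` mirrors the sensitivity analyses described above.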

Establishment of the Study Cohort

This process is outlined in Table 1. From 2008 and 2009 MedPAR files, we started with all admissions and selected hospital admissions with a medical DRG from acute care hospitals in Texas. We excluded admissions with obstetric services, major trauma and intensive care unit (ICU) services. We excluded admissions with ICU stays, because the algorithm for identifying hospitalists cannot distinguish regular hospitalists from generalist physicians who are full-time intensive care physicians. We next identified admissions cared for by hospitalists. To identify these admissions, we first identified all the treating physicians for each hospitalization by linking inpatient E&M billings in the Carrier files to the admission record in MedPAR files. If all of the E&M billings by generalist physicians for a given admission were from hospitalists, the admission was considered an admission cared for by hospitalists. Among those admissions, we selected those in which one hospitalist was responsible for > 50 % of all hospitalist charges. For patients with more than one admission in a given year, we randomly selected one admission per patient per year, in order to avoid clustering at the patient level. In additional analyses with 30-day readmission rate as the outcome, we included all admissions for those patients with multiple admissions in a year. The results were almost identical. We further excluded patients who were enrolled in health maintenance organizations (HMOs) or did not have continuous Medicare Parts A and B coverage in the 12 months prior to the admission of interest, because such individuals may have incomplete information on covariates (such as comorbidity). This resulted in 138,761 admissions in the initial study cohort. From these, we selected admissions associated with a major hospitalist who cared for at least 30 admissions during the study period, leaving 131,710 admissions and 1,099 hospitalists. 
Hofer et al.35 have shown that provider-level performance measures have a reliability greater than 0.8 for a panel of 100 patients with an intraclass correlation coefficient (ICC) of 0.04. Depending on the particular outcome, additional selection criteria described in the Study Outcomes section were applied. We also built a cohort in the same manner from 2006 and 2007 MedPAR files, in order to study the consistency in performance of the hospitalists across two time periods.
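The quoted reliability figure is consistent with the standard Spearman-Brown relationship between panel size, ICC and the reliability of a provider-level mean. The sketch below is a worked check under that assumption; whether reference 35 computed reliability in exactly this way is not stated in the text, and the function name is illustrative.

```python
def panel_reliability(n_patients, icc):
    """Spearman-Brown reliability of a provider-level mean.

    reliability = n * ICC / (1 + (n - 1) * ICC)
    """
    return n_patients * icc / (1 + (n_patients - 1) * icc)


# Panel of 100 patients with an ICC of 0.04, the case quoted in the text.
print(round(panel_reliability(100, 0.04), 3))
```

For the quoted case the numerator is 4 and the denominator is 4.96, which gives a reliability just above 0.8.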

Covariates

We categorized beneficiaries by age, gender and ethnicity using Medicare beneficiary summary files. We used the Medicaid indicator as a proxy for low income. Information on weekday vs. weekend admission, emergent admission, and DRG was obtained from MedPAR files. Elixhauser medical conditions were identified using the claims from MedPAR, Carrier and OutSAF files in the year prior to that of the admission of interest.36 We also assessed whether a patient had a primary care physician (PCP). A PCP was defined as a general practitioner, family physician, internist or geriatrician who saw the patient on three or more occasions in an outpatient setting (CPT E&M codes 99201-99205 and 99211-99215) in the prior year.37 Total hospitalizations and outpatient visits in the prior year were identified from MedPAR files and Carrier files, respectively.

Study Outcomes

Hospital length of stay was obtained from MedPAR files. For each admission, we calculated a difference in length of stay by subtracting the geometric mean length of stay for that DRG, obtained from the Centers for Medicare & Medicaid Services, from the actual length of stay. This measure intrinsically controls for case mix among hospitalists, because geometric mean length of stay differs for each DRG. We excluded outliers more than three standard deviations from the mean in order to approximate the normal distribution and analyze with a hierarchical general linear model, leaving 129,491 admissions and 1,099 hospitalists.
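The length-of-stay measure described above can be sketched in Python as follows. The input layout, the DRG label and its geometric mean value are hypothetical, and the use of the population standard deviation for trimming is an assumption; the text does not say which estimator was used.

```python
from statistics import mean, pstdev


def los_differences(admissions, drg_gmlos):
    """Actual length of stay minus the geometric mean length of stay for the DRG.

    admissions: list of (drg, actual_los) pairs (hypothetical layout).
    drg_gmlos: dict mapping DRG to its geometric mean length of stay.
    """
    return [actual_los - drg_gmlos[drg] for drg, actual_los in admissions]


def trim_outliers(values, n_sd=3.0):
    """Keep values within n_sd standard deviations of the mean.

    Assumption: population standard deviation (pstdev) is used.
    """
    centre = mean(values)
    spread = pstdev(values)
    return [v for v in values if abs(v - centre) <= n_sd * spread]


# Illustrative data: 19 typical stays and one extreme stay in a made-up DRG.
gmlos = {"DRG-A": 4.0}
stays = [("DRG-A", 4.0)] * 19 + [("DRG-A", 104.0)]
diffs = los_differences(stays, gmlos)
kept = trim_outliers(diffs)
print(len(diffs), len(kept))
```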

Mortality within 30 days of admission was calculated from date of death in the Medicare beneficiary summary file. These analyses included all 131,710 admissions and 1,099 hospitalists in the cohort. We chose mortality within 30 days of admission rather than from discharge to avoid bias from differences in hospital length of stay among hospitalists. However, our analyses of 30-day post-discharge mortality produced almost identical results.

We calculated the rate of admissions discharged home and the rate discharged to a Skilled Nursing Facility (SNF), obtained from MedPAR files. We excluded those who were discharged dead, transferred to another acute care hospital or had stayed in a nursing facility any time in the three months prior to the admission of interest, leaving 99,522 admissions and 990 hospitalists.

ER visits were identified by CPT E&M codes 99281-99285 and 99288 from Carrier files. To study readmissions and ER visits within 30 days of discharge, we excluded those who were discharged dead or transferred to another acute care hospital, or died in the 30 days post discharge without an event (readmission or ER visit), leaving 108,547 admissions and 1,019 hospitalists in the study cohort for 30-day readmission, and 108,226 admissions and 1,018 hospitalists for 30-day ER visits. Readmissions and ER visits were not mutually exclusive; i.e., most readmissions also had an ER visit.

Statistical Analyses

Multilevel analyses were used to account for the clustering of patients within hospitalists and hospitalists within hospitals. For differences in length of stay, a hierarchical general linear model was used. For other outcomes, we used hierarchical generalized linear models with binomial distribution. The hospitalist-specific estimates were derived from two-level models adjusted for patient characteristics and then plotted by rank, and from three-level models including hospitals. Patient characteristics included age, race/ethnicity, gender, Medicaid eligibility, emergency admission, weekend admission, DRG weight, MDC, Elixhauser medical conditions (29 individual indicators), number of hospitalizations, number of physician visits and having a PCP in the year prior to the admission of interest. For the model analyzing differences in length of stay, we did not adjust for DRG weight because it was a within-DRG comparison. Because some hospitalists cared for admissions at more than one hospital, in the three-level models we assigned hospitalists to the hospital in which > 50 % of their E&M charges occurred, and excluded admissions by those hospitalists to other hospitals. All analyses were performed with SAS version 9.2 (SAS Inc., Cary, NC). The threshold models for the partitioned variances were performed with MLwiN version 2.02.38
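The text does not give the formula behind the partitioned variances. One common way to partition variance for a binary outcome is the latent-variable (threshold) formulation, in which the patient-level residual of a logistic model is assigned a fixed variance of π²/3. The Python sketch below illustrates that approach only; it is an assumption that the study's calculation resembles it, and the variance inputs are made-up values, not estimates from this study.

```python
import math

# Residual variance of the standard logistic distribution, about 3.29.
LOGISTIC_RESIDUAL_VARIANCE = math.pi ** 2 / 3


def partition_variance(var_hospital, var_hospitalist, var_case_mix):
    """Percentage of total latent variance attributed to each component.

    var_hospital, var_hospitalist: random-intercept variances on the logit scale.
    var_case_mix: variance of the fixed-effects linear predictor (patient factors).
    All three inputs are hypothetical here.
    """
    components = {
        "hospital": var_hospital,
        "hospitalist": var_hospitalist,
        "case_mix": var_case_mix,
        "residual": LOGISTIC_RESIDUAL_VARIANCE,
    }
    total = sum(components.values())
    return {name: 100.0 * value / total for name, value in components.items()}


# Made-up variances, for illustration only.
shares = partition_variance(var_hospital=0.1, var_hospitalist=0.1, var_case_mix=1.0)
for name, pct in shares.items():
    print(f"{name}: {pct:.2f} %")
```

Under this formulation the shares for hospitals, hospitalists and case mix do not sum to 100 %, because the remainder is unexplained patient-level variation.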

RESULTS

The final sample was 131,710 admissions cared for by 1,099 hospitalists. The median number of Medicare admissions cared for by each hospitalist was 98, and the 25th and 75th percentiles were 54 and 156. The characteristics of those admissions are summarized in Table 2. The average length of stay was 4.2 days. For patients admitted from home, 81.5 % were discharged back to their home, with the remainder going to a SNF, rehabilitation or other inpatient facilities. The 30-day readmission rate was 15.8 %; 19.8 % were seen in an ER within 30 days of discharge; and mortality within 30 days after admission was 7.7 %.

Our primary interest was variation in patient length of stay, discharge location and 30-day outcomes, at the level of each hospitalist. For this, we conducted a series of two-level models, controlling for the characteristics in Table 2.

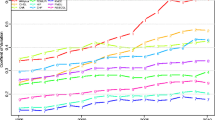

Figure 1a shows the variation in length of stay for each hospitalist. This is a cumulative distribution showing the mean value and 95 % confidence intervals for each hospitalist, derived from the two-level multivariable model. Dark vertical lines indicate hospitalists whose average length of stay is significantly different from the mean. The patients of 198 hospitalists (18 %) had significantly shorter lengths of stay, while the patients of 214 hospitalists (19 %) had significantly longer lengths of stay. A similar pattern is shown for the percent of patients discharged home (Fig. 1b) and discharged to a SNF (Fig. 1c), but with fewer hospitalists being significantly different from the mean. Very few hospitalists were significantly different from the mean in 30-day mortality (Fig. 1d). There were no significant differences among hospitalists in 30-day readmission rates. For 30-day ER visit rates, only one hospitalist was significantly higher and two significantly lower than the mean (data not shown).

Figure 1. Differences in length of stay (a); rates of admissions discharged home (b); rates discharged to a skilled nursing facility (c); and 30-day mortality rates (d) for Texas hospitalists, ranked from lowest to highest. The differences or rates were estimated by two-level analyses, adjusted for patient characteristics. The horizontal line represents the overall mean. Error bars represent 95 % confidence intervals of the estimate for the individual hospitalist. Black error bars represent hospitalists with significantly higher or lower estimates.

We evaluated the stability of hospitalist performance over time by assessing the average adjusted length of stay for hospitalists in 2008–2009 compared to their length of stay in 2006–2007. We ranked the 633 hospitalists with > 30 admissions in both 2006–2007 and 2008–2009 by quintile of their average adjusted length of stay in 2008–2009 (Table 3). Of hospitalists in the first quintile in length of stay in 2008–2009, 50.8 % were in the first quintile and 25.4 % were in the second quintile in 2006–2007. For those in the fifth quintile in 2008–2009, 56.3 % were in the fifth quintile and 30.2 % in the fourth quintile in 2006–2007.
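The quintile comparison described above can be sketched as a small cross-tabulation. The Python example below assumes hypothetical inputs (a dict of adjusted length-of-stay estimates per hospitalist for each period) and breaks ties by sort order; it is not the procedure used to build Table 3, only an illustration of the idea.

```python
def quintile(values_by_id):
    """Map each id to a quintile from 1 (lowest values) to 5 (highest values)."""
    ranked = sorted(values_by_id, key=values_by_id.get)
    n = len(ranked)
    return {pid: (i * 5) // n + 1 for i, pid in enumerate(ranked)}


def transition_percentages(early, late):
    """Row = quintile in the later period, column = quintile in the earlier period.

    Each row is expressed as percentages that sum to 100.
    Only hospitalists present in both periods are included.
    """
    common = sorted(set(early) & set(late))
    q_early = quintile({h: early[h] for h in common})
    q_late = quintile({h: late[h] for h in common})
    counts = {r: {c: 0 for c in range(1, 6)} for r in range(1, 6)}
    for h in common:
        counts[q_late[h]][q_early[h]] += 1
    table = {}
    for r, row in counts.items():
        row_total = sum(row.values())
        table[r] = {c: (100.0 * k / row_total if row_total else 0.0)
                    for c, k in row.items()}
    return table


# Made-up estimates for ten hospitalists whose ranking is identical in both periods.
early_los = {f"h{i}": float(i) for i in range(10)}
late_los = {h: v + 0.5 for h, v in early_los.items()}
table = transition_percentages(early_los, late_los)
print(table[1])
```

With perfectly stable rankings every row has 100 % on the diagonal; the percentages reported above (for example, 50.8 % of the first quintile remaining in the first quintile) correspond to the diagonal cells of such a table.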

The two-level models described above do not account for the fact that hospitalists cluster within hospitals. Therefore, to distinguish variation at the hospitalist level from variation among hospitals, we constructed three-level models examining the contribution of patient, hospitalist and hospital to variations in length of stay, discharge destination and 30-day outcomes. Table 4 presents the proportion of the variation (ICC) at the hospitalist and hospital level for each of these measures. Also shown is the partitioned variance, which is the percentage of total variance contributed by hospitals, hospitalists and measurable patient factors (case mix). For length of stay, hospitals and hospitalists contributed roughly equally to the variation, while for discharge destination (home or SNF), the hospital contribution was larger than that of hospitalists. The variances at the hospital and hospitalist levels in 30-day readmission, 30-day ER visit and mortality rates were small, but significantly greater than 0. Similarly, the ICCs for these outcomes were small. For the 30-day outcomes, the contribution of patient-level factors was one to two orders of magnitude higher than that of hospital- and hospitalist-level factors.

In all the analyses above, if a patient had multiple hospitalizations in a given year, we selected one at random to avoid clustering at the patient level. However, this method would tend to lower estimates of the rehospitalization rate. Accordingly, in supplemental analyses we included all admissions. This had almost no effect on the estimates of the ICC for readmission.

DISCUSSION

The purpose of this study was to assess the extent of variation in the care provided by hospitalists by measures of their practice: average adjusted length of stay, discharge destination, 30-day mortality, and rates of readmission and ER visits within 30 days of discharge. We found significant variation in length of stay and discharge destination among hospitalists, a variation that was stable over time. However, much of the variation among hospitalists was because of clustering of hospitalists within hospitals. We found little to no variation among hospitalists in 30-day mortality, rehospitalization or ER visit rates, either in the two-level or three-level models.

The variations in lengths of stay and discharge destination, between hospitalists and between hospitals, suggest underlying variations in hospitalist practice styles and hospital-based systems of care. The relative lack of variation at the hospitalist level in 30-day outcomes is not likely due to insufficient power, given the large number of hospitalists and substantial number of admissions per hospitalist. This suggests that the hospitalist practice styles that lead to variations in length of stay and discharge destination do not have a noticeable impact on mortality, readmission rates or ER visit rates. Several prior studies have also suggested a weak link between care in hospital and readmission rates.39–41 First, practice styles leading to better performance on measures of hospital discharge planning are not associated with significant improvement in readmission rates.39 Second, regional baseline admission rates (a measure of primary care practice styles and systems) have a much greater influence on readmission rates than patient or hospital factors.40 Third, most interventions (largely impacting hospital-based practice styles or systems) have failed to substantially reduce the risk of readmission.41

Hospitals are being held accountable for readmissions of patients they discharge.42 Hospitals are likely to shift some of this accountability to their hospitalists. Our findings suggest that this shift may be misguided. In the three-level model apportioning variance in readmission rates among hospitals, hospitalists and measurable patient characteristics (case mix), the percent of total variance from hospitals (0.09 %) and hospitalists (0.18 %) was more than two orders of magnitude lower than that attributable to case mix (24.03 %).
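The "orders of magnitude" comparison can be checked directly from the three percentages quoted above. The short Python sketch below does only that arithmetic; the function name is illustrative.

```python
import math


def orders_of_magnitude(larger, smaller):
    """Base-10 logarithm of the ratio between two positive quantities."""
    return math.log10(larger / smaller)


# Percentages of total variance in 30-day readmission quoted in the text.
case_mix, hospitals, hospitalists = 24.03, 0.09, 0.18
vs_hospitals = orders_of_magnitude(case_mix, hospitals)
vs_hospitalists = orders_of_magnitude(case_mix, hospitalists)
print(round(vs_hospitals, 2), round(vs_hospitalists, 2))
```

Both values come out a little above 2, which matches the statement that the case-mix share is more than two orders of magnitude larger than either the hospital or the hospitalist share.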

Our study has limitations. It is an observational study and susceptible to bias and confounding. We studied patients with fee-for-service Medicare who received care in a single large state in the USA over a two-year period. It is possible that our results may not apply to a younger population, those in other states, or during a different time period. In particular, there are substantial variations in hospital readmission rates among different regions of the USA.39,40 This variation would be missed in the current analyses. We excluded patients with ICU stays in this study, so our results do not apply to critically ill patients. We focused on variation among hospitalists, but did not examine the impact of characteristics of individual hospitalists (years of experience, training, etc.) on that variation. We also did not look at continuity of care, i.e., whether the patient was cared for by one, two or several hospitalists while hospitalized.

As hospitalist care continues to increase in prevalence in the USA, so does the ability of hospitalists to impact the cost and quality of hospital care.17 Our study suggests a potential opportunity for hospitalists to further impact the cost of care by decreasing the variability in their clinical practice that leads to variation in length of stay and discharge location. Our study also suggests that an approach of making hospitalists accountable for decreasing the cost of care related to readmissions may be flawed, and could lead to unintended negative consequences.43

REFERENCES

Wachter RM, Goldman L. The emerging role of “hospitalists” in the American health care system. N Engl J Med. 1996;335:514–7.

Bryant DC. Hospitalists and ‘officists’ preparing for the future of general internal medicine. J Gen Intern Med. 1999;14:182–5.

Coffman J, Rundall TG. The impact of hospitalists on the cost and quality of inpatient care in the United States: a research synthesis. Med Care Res Rev. 2005;62:379–406.

Wachter RM. Reflections: the hospitalist movement a decade later. J Hosp Med. 2006;1:248–52.

Wachter RM. The state of hospital medicine in 2008. Med Clin North Am. 2008;92:265–73. vii.

Hinami K, Farnan JM, Meltzer DO, Arora VM. Understanding communication during hospitalist service changes: a mixed methods study. J Hosp Med. 2009;4:535–40.

Kripalani S, LeFevre F, Phillips CO, Williams MV, Basaviah P, Baker DW. Deficits in communication and information transfer between hospital-based and primary care physicians: implications for patient safety and continuity of care. JAMA. 2007;297:831–41.

Pham HH, Grossman JM, Cohen G, Bodenheimer T. Hospitalists and care transitions: the divorce of inpatient and outpatient care. Health Aff (Millwood). 2008;27:1315–27.

Meltzer D. Hospitalists and the doctor-patient relationship. J Legal Stud. 2001;30:589–606.

Coleman EA, Berenson RA. Lost in transition: challenges and opportunities for improving the quality of transitional care. Ann Intern Med. 2004;141:533–6.

Roy CL, Poon EG, Karson AS, et al. Patient safety concerns arising from test results that return after hospital discharge. Ann Intern Med. 2005;143:121–8.

Moore C, Wisnivesky J, Williams S, McGinn T. Medical errors related to discontinuity of care from an inpatient to an outpatient setting. J Gen Intern Med. 2003;18:646–51.

Coleman EA, Min SJ, Chomiak A, Kramer AM. Posthospital care transitions: patterns, complications and risk identification. Health Serv Res. 2004;39:1449–65.

Frustrations with hospitalist care: need to improve transitions and communication. Ann Intern Med. 2010;152:469.

Adult MJ. The relationship between hospitalist and primary care physicians. Ann Intern Med. 2010;152:475.

Snow V, Beck D, Budnitz T, et al. Transitions of Care Consensus policy statement: American College of Physicians, Society of General Internal Medicine, Society of Hospital Medicine, American Geriatrics Society, American College of Emergency Physicians, and Society of Academic Emergency Medicine. J Gen Intern Med. 2009;24:971–6.

Kuo YF, Sharma G, Freeman JL, Goodwin JS. Growth in the care of older patients by hospitalists in the United States. N Engl J Med. 2009;360:1102–12.

Kuo YF, Goodwin JS. Effect of hospitalist on length of stay in the Medicare population: variation according to hospital and patient characteristics. J Am Geriatr Soc. 2010;58:1649–57.

Howrey B, Kuo YF, Goodwin JS. Association of care by hospitalists on discharge destination and 30-day outcomes following acute ischemic stroke. Med Care. 2011;49:701–7.

Kuo YF, Goodwin JS. Association of hospitalist care with medical utilization after discharge: evidence or cost shifting. Ann Intern Med. 2011;155:152–9.

Fletcher KE, Sharma G, Zhang DD, Kuo YF, Goodwin JS. Trends in inpatient continuity of care for a cohort of Medicare patients 1996–2006. J Hosp Med. 2011;6:434–44.

Sharma G, Fletcher KE, Zhang DD, Kuo Y-F, Freeman JL, Goodwin JS. Continuity of outpatient and inpatient care for hospitalized older adults. JAMA. 2009;301:1671–80.

Sharma G, Kuo YF, Freeman JL, Zhang DD, Goodwin JS. Outpatient follow-up with a usual care provider and 30-day emergency room visit and readmission in patients hospitalized for chronic obstructive pulmonary disease. Arch Intern Med. 2010;170:1664–70.

Sharma G, Kuo YF, Freeman J, Goodwin JS. Comanagement of hospitalized surgical patients by medicine physicians in the United States. Arch Intern Med. 2010;170:363–8.

Sharma G, Freeman JL, Zhang DD, Goodwin JS. Continuity of care and Intensive Care Unit use at the end of life. Arch Intern Med. 2009;169:81–6.

Wennberg JE. Unwarranted variations in healthcare delivery: implications for academic medical centres. BMJ. 2002;325(7370):4.

Wennberg DE, Wennberg JE. Addressing variations: Is there hope for the future? Health Aff (Millwood). 2003;Suppl Web Exclusives:W3-614-7.

Mercuri M, Gafni A. Medical practice variations: what the literature tells us (or does not) about what are warranted and unwarranted variations. J Eval Clin Pract. 2011;17:671–7.

Wennberg JE. Time to tackle unwarranted variations in practice. BMJ. 2011;342:d1513.

Schroeder SA, Schliftman BA, Piemme TE. Variation among physicians in use of laboratory tests: relation to quality of care. Med Care. 1974;12:709–13.

Scholle SH, Roski J, Adams JL, et al. Benchmarking physician performance: reliability of individual and composite measures. Am J Manag Care. 2008;14:833–8.

NBCH: National Business Coalition on Health. Value-based Purchasing Guide. Chapter 2: Physician Performance Measurement and Reporting. Available at: http://www.nbch.org/VBPGuide. Accessed November 30, 2011.

Westert GP, Nieboer AP, Groenewegen PP. Variation in duration of hospital stay between hospitals and between doctors within hospitals. Soc Sci Med. 1993;37:833–9.

de Jong JD, Westert GP, Lagoe R, Groenewegen PP. Variation in hospital length of stay: do physicians adapt their length of stay decisions to what is usual in the hospital where they work? Health Serv Res. 2006;41:374–94.

Hofer TP, Hayward RA, Greenfield S, Wagner EH, Kaplan SH, Manning WG. The unreliability of individual physician “report cards” for assessing the costs and quality of care of a chronic disease. JAMA. 1999;281(22):2098–105.

Elixhauser A, Steiner C, Harris DR, Coffey RM. Comorbidity measures for use with administrative data. Med Care. 1998;36:8–27.

Shah BR, Hux JE, Laupacis A, Zinman B, Cauch-Dudek K, Booth GL. Administrative data algorithms can describe ambulatory physician utilization. Health Serv Res. 2007;42:1783–93.

Rasbash J, Charlton C, Browne WJ, Healy M, Cameron B. MLwiN Version 2.02. Centre for Multilevel Modelling, University of Bristol; 2005.

Jha AK, Orav EJ, Epstein AM. Public reporting of discharge planning and rates of readmissions. N Engl J Med. 2009;361(27):2637–45.

Epstein AM, Jha AK, Orav EJ. The relationship between hospital admission rates and rehospitalizations. N Engl J Med. 2011;365:2287–95.

Hansen LO, Young RS, Hinami K, Leung A, Williams MV. Interventions to reduce 30-day rehospitalization: a systematic review. Ann Intern Med. 2011;155:520–8.

Medicare Program, Hospital Inpatient Prospective Payment Systems for Acute Care Hospitals and the Long-Term Care Hospital Prospective Payment System and FY 2012 Rates, Hospitals’ FTE Resident Caps for Graduate Medical Education Payment. Fed Regist Rules Regul. 2011;76(160):51660–76.

Axon RN, Williams MV. Hospital readmission as an accountability measure. JAMA. 2011;305:504–5.

Acknowledgements

Funders

Supported by grants from the National Institutes of Health: 1R01-AG033134, K05-CA134923, 5P30AG024832 and RP101207.

Prior Presentations

None.

Conflict of Interest

The authors declare that they do not have a conflict of interest.

Open Access

This article is distributed under the terms of the Creative Commons Attribution License which permits any use, distribution, and reproduction in any medium, provided the original author(s) and the source are credited.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Goodwin, J.S., Lin, YL., Singh, S. et al. Variation in Length of Stay and Outcomes among Hospitalized Patients Attributable to Hospitals and Hospitalists. J GEN INTERN MED 28, 370–376 (2013). https://doi.org/10.1007/s11606-012-2255-6

DOI: https://doi.org/10.1007/s11606-012-2255-6