Abstract

Phytophthora infestans, the causal agent of late blight, is a major threat to potato production in northwestern Europe. Before 1980, the worldwide population of P. infestans outside Mexico appeared to be asexual and to consist of a single clonal lineage of A1 mating type characterized by a single genotype. It is widely believed that new strains migrated into Europe in 1976 and that this led to subsequent population changes including the introduction of the A2 mating type. The population characteristics of recently collected isolates in NW Europe show a diverse population including both mating types, sexual reproduction and oospores, although differences are observed between regions. Although it is difficult to find direct evidence that new strains are more aggressive, there are several indications from experiments and field epidemics that the aggressiveness of P. infestans has increased in the past 20 years. The relative importance of the different primary inoculum sources and specific measures for reducing their role, such as covering dumps with plastic and preventing seed tubers from becoming infected, is described for the different regions. In NW Europe, varieties with greater resistance tend not to be grown on a large scale. From the grower’s perspective, the savings in fungicide input that can be achieved with these varieties are not compensated by the higher (perceived) risk of blight. Fungicides play a crucial role in the integrated control of late blight. The spray strategies in NW Europe and a table of the specific attributes of the most important fungicides in Europe are presented. The development and use of decision support systems (DSSs) in NW Europe are described. In The Netherlands, it is estimated that almost 40% of potato growers use recommendations based on commercially available DSS. In the Nordic countries, a new DSS concept with a fixed 7-day spray interval and a variable dose rate is being tested. 
In the UK, commercially available DSSs are used for c. 8% of the area. The validity of Smith Periods for the new population of P. infestans in the UK is currently being evaluated.

Introduction

Potato late blight, caused by the oomycete pathogen Phytophthora infestans (Mont.) de Bary, first occurred in Europe in the 1840s when it led to the devastating Great Irish Potato Famine (Bourke 1991). Over 160 years later, it continues to pose a major threat to potato production, particularly in the cooler, wetter northern European countries despite the efforts of potato breeders and fungicide producers. In the first half of the twentieth century, breeding programmes concentrated on introducing major genes for blight resistance, but this approach was largely abandoned after the 1960s when it was found that even multiple R-genes were soon overcome by the pathogen. Subsequent breeding efforts have produced cultivars with substantial levels of race-nonspecific resistance (also known as horizontal, partial, or field resistance), but the great majority of European potato production remains devoted to susceptible varieties. Varietal choice is dictated by end users who demand cultivars with specific agronomic characters, and these are difficult to combine with blight resistance. Production is thus reliant on the application of fungicides despite pressures from governments, supermarkets, and consumers to reduce inputs of pesticides. The situation has been further complicated by major pathogen population changes which have occurred since the late 1970s, resulting in the displacement of the apparently clonal ‘old’ A1 mating type P. infestans population by a new, more diverse and presumably fitter population containing strains of both the A1 and A2 mating types. To achieve control of this pathogen population, it is essential to exploit the range of available control measures in an integrated programme. In the context of the EuroBlight network (www.euroblight.net), recent results regarding the pathogen and integrated control strategies are presented and discussed.
The objective of this review is to describe the present situation regarding the pathogen and integrated control strategies in The Netherlands, the Nordic countries and the UK. The population characteristics and epidemiology of P. infestans are presented and discussed. Five best practices to control late blight are presented: control of primary inoculum sources, use of more resistant varieties, use of fungicides, use of decision support systems (DSSs) and prevention of tuber blight.

The Pathogen

The sexual oospores of P. infestans were first identified in the 1950s in Mexico, and the presence of two mating types, named A1 and A2, established (Gallegly and Gallindo 1957; Niederhauser 1956). Subsequently, single R-genes conferring resistance to late blight were identified from the wild species Solanum demissum in Mexico, and it became possible to identify virulence phenotypes (races) which could overcome one or more R-genes. Eventually, a set of 11 differential potato clones, each carrying a different single R-gene, was used to identify complementary avirulence/virulence phenotypes in collections of P. infestans (Malcolmson 1969; Malcolmson and Black 1966). Until the 1980s, mating type and virulence to R-genes were the only markers available for characterizing P. infestans, but since then a range of genotypic markers has been developed and used to study population changes (Cooke and Lees 2004).

Before 1980, the worldwide population of P. infestans outside Mexico appeared to be asexual and to consist of a single clonal lineage (US-1) of A1 mating type characterized by a single genotype (one mitochondrial DNA haplotype, one multi-locus restriction fragment length polymorphism (RFLP) genotype and one di-locus isozyme genotype). In contrast, the population in highland Mexico was sexual and consisted of both A1 and A2 mating types which were genotypically highly diverse (Grünwald and Flier 2005). It is widely believed that new strains migrated within consignments of ware potatoes imported into Europe in the dry summer of 1976 (Niederhauser 1991) and that this led to subsequent population changes including the introduction of the A2 mating type.

Population Characteristics (Genotypic, Phenotypic)

The Netherlands

The first recording of the A2 mating type in The Netherlands was in 1981 (Frinking et al. 1987). Before 1980, the P. infestans population in The Netherlands consisted of only A1 mating types. Among isolates collected after 1980, the A2 mating type appeared along with the A1 mating type. Between 1987 and 1990, large numbers of isolates were collected and the percentage of A2 mating type isolates varied from 11% to 18% (Drenth et al. 1993b). Of 36 isolates gathered in 1995, 12 were of the A2 mating type (Flier et al. 1998). The frequency of the A2 mating type was 75% in a survey of 570 isolates collected throughout The Netherlands in 2005. In just over 60% of the fields sampled, both A1 and A2 mating types were present (A Evenhuis and HMG Van Raaij, unpublished data). This indicates that sexual reproduction can occur within a field and that oospores might readily be formed in the canopy.

Among 253 isolates collected between 1981 and 1991 and tested for specific virulence, 73 races were identified (Drenth et al. 1994). These races contained many unnecessary virulence alleles (e.g. 5, 6, 7, 8 and 11) for which there are no corresponding resistance genes in the array of potato cultivars grown in The Netherlands. One isolate, collected in 1992, was virulent against all 11 known R-genes (Drenth 1994; LJ Turkensteen, unpublished data). Virulence patterns of 36 isolates gathered in 1995 were complex. All virulence factors were present, except for virulence factor 9 (Flier et al. 1998). Currently, complex isolates still dominate the P. infestans population. On average, eight virulence factors were present in 50 isolates collected in 2004 (Van Raaij et al. 2007).

DNA fingerprinting was used to estimate genotypic diversity among 153 isolates of P. infestans collected from potato and tomato plants in 14 fields distributed over six regions in The Netherlands in 1989. The DNA fingerprint probe, RG57, hybridized to 21 fragments of genomic DNA, 16 of which were polymorphic. Thirty-five RG57 genotypes were identified among the 153 isolates. Half of the isolates had the most widely distributed genotype, which was found in 10 fields in five of the six regions sampled. However, 89% of the genotypes were detected in only one field, and 60% occurred only once. Two mitochondrial DNA types, designated A and B (= Ia/Ib, IIa/IIb, respectively, sensu Griffith and Shaw 1998), were found. Type A occurred in 143 isolates and was found in all fields in every region. Type B, in contrast, was found in only 10 isolates, all collected in community gardens. Partitioning of the genotypic diversity into components with the Shannon diversity index revealed that 52% of the diversity was associated with differences occurring within fields, 8% was due to differences among fields within regions and 40% was accounted for by differences among regions. Genotypic differentiation was observed between isolates collected in community gardens and in commercial potato fields. Canonical variate analysis grouped isolates from commercial potato fields together, regardless of the geographic distance between the fields. Isolates from community gardens differed among regions and differed from the isolates collected in commercial potato fields (Drenth et al. 1993a).
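The hierarchical partitioning described above can be illustrated with a minimal sketch. It assumes a simple additive decomposition of the Shannon index, H = -Σ p ln p, with sample-size weights, which is not necessarily the exact procedure used by Drenth et al. (1993a). The function names and the example genotype labels are hypothetical and are not data from the survey.

```python
import math
from collections import Counter


def shannon_index(genotypes):
    """Shannon index H = -sum(p * ln p) over genotype frequencies."""
    counts = Counter(genotypes)
    n = sum(counts.values())
    return -sum((c / n) * math.log(c / n) for c in counts.values())


def partition_diversity(fields_by_region):
    """Additively split total diversity into three hierarchical components.

    fields_by_region maps region -> field -> list of genotype labels.
    Assumption: components are weighted by the number of isolates sampled.
    """
    pooled_all = [g for fields in fields_by_region.values()
                  for isolates in fields.values() for g in isolates]
    n_total = len(pooled_all)
    h_total = shannon_index(pooled_all)

    # Weighted mean diversity within individual fields.
    h_fields = sum(len(isolates) / n_total * shannon_index(isolates)
                   for fields in fields_by_region.values()
                   for isolates in fields.values())

    # Weighted mean diversity within regions (fields pooled per region).
    h_regions = 0.0
    for fields in fields_by_region.values():
        pooled = [g for isolates in fields.values() for g in isolates]
        h_regions += len(pooled) / n_total * shannon_index(pooled)

    return {
        "within_fields": h_fields,
        "among_fields_within_regions": h_regions - h_fields,
        "among_regions": h_total - h_regions,
        "total": h_total,
    }


if __name__ == "__main__":
    # Hypothetical genotype labels, for illustration only.
    example = {
        "north": {"field_1": ["g1", "g1", "g2", "g2"],
                  "field_2": ["g1", "g1", "g1", "g1"]},
        "south": {"field_3": ["g3", "g3", "g3", "g3"]},
    }
    parts = partition_diversity(example)
    for name in ("within_fields", "among_fields_within_regions", "among_regions"):
        print(f"{name}: {100 * parts[name] / parts['total']:.0f}% of total diversity")
```

Because the Shannon index is concave, pooling samples never lowers it under these weights, so the three components are non-negative and sum to the total. This is what allows each one to be reported as a percentage of the overall diversity.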

Nordic Countries

In Sweden, the A2 mating type was first observed in 1985 (Kadir and Umaerus 1987). In Norway and Finland, A2 was found in the early 1990s (Hermansen et al. 2000), in Denmark in 1996 (Bødker et al. 1998) and in Iceland in 1999 (Olafsson and Hermansen 2001). More recent Nordic mating type surveys have disclosed that the distribution of P. infestans mating types is close to 1:1 (Lehtinen et al. 2007, 2008). Coexistence of both mating types enables sexual reproduction, which increases genotypic variation and thereby the adaptability of the pathogen. Studies of genetic variation using RFLP, amplified fragment length polymorphism and simple sequence repeat markers have indicated that sexual reproduction is an important contributing factor to the genetic variation in the Nordic populations of P. infestans (Brurberg et al. 1999, 2011; Widmark et al. 2007).

Sexual reproduction of P. infestans results in the formation of oospores. Field surveys in Norway and Sweden have shown that oospores are commonly produced in infected plants under field conditions (Dahlberg et al. 2002). Oospores have the ability to survive for at least 18 months outside the host plant (Andersson 2007) and will result in late blight becoming a soil-borne disease. Early symptoms of late blight, presumably originating from oospores, have been reported in the Nordic countries (Andersson et al. 1998; Lehtinen and Hannukkala 2004; Widmark et al. 2007).

During the 1990s, the frequency of metalaxyl resistance in some years reached nearly 60% in Norway and Finland (Hermansen et al. 2000). Later studies of isolates sampled in 2003 showed that the frequency of resistant isolates had dropped to low levels. The majority of the isolates were also sensitive to the fungicide propamocarb. However, some resistant isolates (growing at 1,000 ppm propamocarb) were found in Sweden and Finland in isolates sampled in 2003 (Lehtinen et al. 2007, 2008).

Mapping of virulence in Nordic P. infestans populations has shown that complex races are common. All known virulence genes were found both in Finland and Norway during the 1990s, and race 1.3.4.7.10.11 was the most common (Hermansen et al. 2000). Later studies of virulence in the Nordic populations from 2003 confirmed these findings (Lehtinen et al. 2007, 2008).

UK

In the UK, the A2 mating type was first detected in samples from 1981, and new genotypes of both A1 and A2 mating types became increasingly common before they came to replace the old single clonal lineage by the end of the 1980s. The A2 mating type remained at a low frequency (<20% of sites sampled) during the 1980s and 1990s in England, Wales and Scotland (Cooke et al. 2003; Day et al. 2004) and at even lower frequencies in Northern Ireland and the Republic of Ireland (0–5% of sites sampled; Cooke et al. 1995; O’Sullivan et al. 1995).

Molecular analysis of UK and Irish populations in the 1990s identified several common genotypes which were detected at many sites (Carlisle et al. 2001; Cooke et al. 2003, 2006; Day et al. 2004; Griffin et al. 2002; Purvis et al. 2001; Shaw and Wattier 2002). These were thought to be separate clonal lineages, widely but not evenly distributed over the region. Other genotypes were also detected which were unique to a single site in a single year. These were considered to be a product of sexual recombination. It has been concluded that despite the presence of both mating types, commercial fields were usually infected by a single clonal lineage but that some crops had a more diverse population with one or more clonal lineages and one or more unique genotypes. The latter were suspected to be sites associated with sexual reproduction and oospore production.

In a survey of 99 sites in Great Britain in 2005, the A2 mating type was detected at 38 sites, and the genotypes found differed from those found in the 1990s (Shaw et al. 2007). For another 165 outbreaks sampled in Great Britain in 2006, 65.5% contained A2 and 21.8% yielded both mating types (Cooke et al. 2007). However, there was no evidence for oospore-derived outbreaks. This rather sudden increase in A2 frequency, which was associated with an increase in a single A2 genotype known as 13_A2, paralleled that in NE France in 2004/2005 and brought Great Britain into line with the situation in north and central Europe. This same A2 genotype was identified in Northern Ireland in 2007 and has subsequently also increased in frequency there (Cooke et al. 2008).

Surveys in the 1960s and 1970s identified great diversity for virulence phenotype and showed that virulent strains able to overcome certain R-genes which had not been bred into any widely grown cultivars were common in the UK. This diversity presumably resulted from mutation in the old clonal lineage, US-1, before it was replaced by new, variable populations. The common occurrence of strains with virulence to many R-genes (strains with complex virulence) spelled the speedy demise of some cultivars with single-gene resistance and resulted in a change of breeding strategy away from R-genes towards polygenic, race-nonspecific resistance.

Epidemiology

The Netherlands

The aggressiveness of P. infestans in The Netherlands has increased in the past 20 years, resulting in shorter life cycles (by 30%), more leaf spots/life cycle, shorter infection period (6 h instead of 8 h), greater temperature range (between 5 °C and 27 °C instead of 10–25 °C) and occurrence of stem lesions (Turkensteen and Mulder 1999). As a consequence, shorter spray intervals have been adopted.

Comparison of aggressiveness to tubers of P. infestans isolates from three potato-growing regions showed variation in their ability to infect tubers of cv. Bintje. The most aggressive isolate of the old population matched the average level of the new population in its ability to infect tubers (Flier et al. 1998). These results show that increased aggressiveness to both foliage and tubers had become characteristic of Dutch P. infestans populations. No correlation between components of tuber pathogenicity and pathogenicity to foliage was found (Flier et al. 1998, 2001). The introduction of a sexually reproducing population of P. infestans in The Netherlands has had a major impact on late blight epidemics and population biology of the late blight pathogen. Sexual reproduction has led to a genetically more diverse population of P. infestans in The Netherlands that is marked by an increased adaptability to host and environment (Flier and Turkensteen 1999; Flier 2001; Flier et al. 2003).

Nordic Countries

During the last decade, there have been indications that infections occur earlier and that more frequent applications of fungicides are needed to control late blight in the Nordic countries. Data from Finland show that the first findings of blight occur 1 month earlier now than 20 years ago. In addition, blight epidemics have become frequent in northernmost production areas, where the disease rarely caused damage before 1999 (Hannukkala et al. 2007). However, in northern Norway, late blight epidemics are still not present every year (A Hermansen, unpublished data). In South Sweden, three to four sprays were common around 1975, while 30 years later eight to 10 sprays are normally used (B Andersson, unpublished data). In Denmark, there has been a steady increase in fungicide applications from four to five sprays in 1992–1994 to eight to nine sprays in 2007–2009 (Anonymous 2010).

Aggressiveness studies of Nordic samples of P. infestans collected in 2003 showed that the latent period ranged from 3.41 to 5.45 days (81.8 to 130.8 h) in different isolates on leaves of cv. Bintje at 15 °C. This study also disclosed differences between isolates in other aggressiveness parameters, e.g., sporulation capacity and lesion growth. Complementary experiments showed a good correlation between the aggressiveness indices obtained in the laboratory and results from inoculated field trials (Lehtinen et al. 2009). However, no earlier experimental data from this region are available for comparison, so it cannot be shown whether epidemiological parameters have changed. There have been no recent Nordic studies focusing on aggressiveness of different isolates to tubers. Tuber blight is normally not a big problem in the Nordic countries due to the good control of foliar infection obtained by frequent fungicide applications.

UK

The rapid replacement of the single clonal lineage by diverse genotypes of the pathogen in the 1980s in the UK (as in other European countries) suggests that the new genotypes left more progeny and out-competed the old genotype within a few years, i.e. they were fitter. However, the relationship between aggressiveness to foliage and tubers and long-term fitness is far from clear, since very aggressive genotypes may fail to survive if they destroy their host before they are able to initiate epidemics in the succeeding season.

While the increasing use of fungicides indicates that the new blight strains have been more destructive and more difficult to control, there is still little direct evidence to suggest that the new strains in the UK are more aggressive than the old. Laboratory studies (Carlisle et al. 2002; Day and Shattock 1997) have shown that new strains from culture are variable in their ability to infect and in their ability to sporulate on detached leaflets. However, the few comparisons that have been made of isolates of the old genotype with isolates of the new genotype have not shown clear evidence that new isolates were more ‘aggressive’. Notwithstanding, Day and Shattock (1997) produced evidence that infection efficiency and sporulation did increase in new strains collected in England and Wales over the period 1982–1995. Also, Carlisle et al. (2002) showed that latent periods in new genotypes from Northern Ireland ranged from 74 to 94 h on susceptible cv. Bintje. In contrast, the latent period of the strains from USA, presumably of the old genotype, used by Crosier in the 1930s (Crosier 1934), ranged from 96 to 108 h on several cultivars. In the interpretation of results from studies using stored isolates, it is always necessary to be aware that losses in pathogenicity can occur in vitro, and this is a particular problem for comparison of old and new genotypes.

A further confounding factor is the role of both short-term climatic variation and long-term climate change in making late blight more difficult to control in the UK and elsewhere. In The Netherlands, a change in weather variables at the transition from 1979 to 1980, when there was a resurgence of severe late blight epidemics after a ‘10-year truce’ (1969–1978), was one possible factor (along with the occurrence of phenylamide resistance and the introduction of the new population), which Zwankhuizen and Zadoks (2002) suggested might have contributed to increased disease in the period 1979–1986. How such changes may have affected late blight in the UK (and elsewhere in Europe) is unclear.

Control Strategies

Control of Primary Inoculum Sources

The Netherlands

The first step in integrated control is reducing the primary sources of inoculum. In The Netherlands, it has been shown that in most years blight epidemics start from infected plants on dumps (Zwankhuizen et al. 2000). Therefore, farmers were thoroughly informed about a nationwide regulation to cover dumps before April 15 with black plastic throughout the season. This campaign organised by the Masterplan Phytophthora, launched by the Agricultural and Horticultural Organisation LTO-Nederland in 1999, resulted in a significant reduction in the number of uncovered dumps (Schepers et al. 2000). The better ‘control’ of dumps led to the increased importance of (latently) infected seed tubers as a source of primary inoculum (Turkensteen et al. 2002). Unfortunately, an increase in uncovered dumps was observed in 2005.

These regulations also force farmers to control late blight in the field. A field is considered to contain an excessive amount of blight when more than 1,000 infected leaflets in 20 m² or 2,000 infected leaflets in 100 m² are observed. The regulation forces growers to take measures to control this disease either by spraying eradicant fungicides or by desiccation of the crop with propane burners (organic growers). In one of the Phytophthora Umbrella Plan projects, the efficiency of propane burners to kill late blight spores and mycelium was investigated. The aim of the Umbrella Plan, which was launched in 2003, is to reduce the negative impact on the environment of the use of fungicides by 75% by 2012 using three strategies: first, to integrate all present and new research and to focus all research on the aim of fungicide reduction; second, to hand over the steering of all research to a board of representatives from the potato sector to ensure commitment to and application of the results of all short-term and long-term research; and third, to combine the three parties (research, policy and potato sector) in one consortium to ensure that each party takes responsibility for reaching the 2012 aim (Boonekamp 2005).
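The field threshold quoted above can be written as a small decision rule. The following is only an illustrative sketch. The function and constant names are hypothetical, and it assumes that the count must strictly exceed the threshold for both plot sizes, which the text states explicitly only for the 20 m² plot.

```python
# Plot area in m² -> number of infected leaflets that must be exceeded.
# Values are taken from the text; strict ">" for both is an assumption.
EXCESSIVE_BLIGHT_THRESHOLDS = {20: 1000, 100: 2000}


def is_excessive_blight(infected_leaflets: int, plot_area_m2: int) -> bool:
    """Return True if the leaflet count exceeds the threshold for the plot size."""
    if plot_area_m2 not in EXCESSIVE_BLIGHT_THRESHOLDS:
        raise ValueError("thresholds are only defined for 20 m² and 100 m² plots")
    if infected_leaflets < 0:
        raise ValueError("infected_leaflets must be non-negative")
    return infected_leaflets > EXCESSIVE_BLIGHT_THRESHOLDS[plot_area_m2]


if __name__ == "__main__":
    # Hypothetical field observations, for illustration only.
    print(is_excessive_blight(1200, 20))
    print(is_excessive_blight(1200, 100))
```

Keeping the thresholds in one table makes it clear that the same count can be excessive in a 20 m² plot but not in a 100 m² plot.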

Volunteer potatoes can readily be found in The Netherlands, since winters are usually mild. Volunteers are self-set potatoes from a previous commercial crop growing as weeds in other crops. In the same way as potatoes in outgrade piles or discard heaps, they may carry blight inoculum and, if they survive the winter conditions, can act as a primary infection source. Equally important, as they are not protected by fungicide sprays, the developing foliage may be infected at any time during the season and become an ongoing source of inoculum to nearby crops. Volunteers tend to emerge and senesce over long periods of time making it difficult to achieve good control with herbicides. Farmers tend to hire land from cattle holders and grow potatoes for 1 or 2 years. After that period, these fields are quite often used to grow maize, and volunteer plants can be found. Volunteers are also found in other crops which are grown in rotation with potatoes. Usually, the role of volunteers is to accelerate the epidemic rather than to serve as a primary inoculum source. To reduce their role in producing inoculum, volunteers must be controlled after July 1 when more than two plants are present per square metre in a 300-m² section of the field. In one of the Phytophthora Umbrella Plan projects, an automated system for detection and control of potato volunteers is being developed for use in sugar beet.
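The volunteer rule stated above (control after July 1 when density exceeds two plants per square metre in a 300-m² section) can be sketched as follows. The function name and parameters are hypothetical, and the sketch assumes that "after July 1" means strictly later than that date within the same calendar year.

```python
from datetime import date


def volunteer_control_required(observed_on: date,
                               plants_counted: int,
                               section_area_m2: float = 300.0,
                               max_plants_per_m2: float = 2.0) -> bool:
    """Return True if volunteers must be controlled under the rule in the text.

    Assumption: the date must be strictly after July 1 and the density
    strictly greater than the limit.
    """
    if section_area_m2 <= 0:
        raise ValueError("section_area_m2 must be positive")
    after_cutoff = (observed_on.month, observed_on.day) > (7, 1)
    density = plants_counted / section_area_m2
    return after_cutoff and density > max_plants_per_m2


if __name__ == "__main__":
    # Hypothetical scouting record: 700 volunteers counted in a 300 m² section.
    print(volunteer_control_required(date(2005, 7, 15), 700))
```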

Infested seed tubers are a major inoculum source. A survey of early infections during 2003–2005 showed that epidemics were driven by infested seed in 39% of the cases investigated (Evenhuis et al. 2007; Turkensteen et al. 2004, 2005).

Oospores are readily produced in unsprayed crops and volunteer potatoes. Their incidence varied between 15% and 78% of sampled leaflets with two or more lesions, for the southwestern and northeastern (starch) regions (Flier et al. 2004). Especially with short crop rotation schemes, oospores are a threatening primary inoculum source. Sandy and clay soils contaminated with oospores remained infectious for 48 and 34 months, respectively (Turkensteen et al. 2000). In the starch potato region, potatoes are usually grown every 2 or 3 years. Moreover, late blight control at the end of the growing season is not strictly carried out. As a consequence, oospores can survive in the soil until the next potato crop. Early infections of potato crops probably originated from oospores in the northeast of The Netherlands in 2002 (Turkensteen 2003). Infections from seed and dumps could be ruled out in these crops. A survey of early infections during 2003–2005 showed that the epidemics were driven by oospores in 18% of the cases investigated. Usually, these were found in the starch-growing region in the northeast of The Netherlands.

Early crops covered with perforated polythene can act as a source of inoculum for neighbouring potato fields: First outbreaks are regularly reported to originate from polythene-covered crops (Schepers and Spits 2006). Usually, late blight is not controlled under polythene, and if primary inoculum sources are present in the field, infection is discovered only when the polythene is lifted. Trials showed that spraying fungicides (+ adjuvants) over polythene-covered crops resulted in a certain level of protection of the potato leaves (Spits et al. 2003). Combining this strategy with warning neighbouring growers when the cover is removed, removing the cover in dry sunny weather and immediately spraying the crop after removal of the cover will help to reduce the impact of covered crops as an early infection source.

Usually, the first part of the growing season is characterized by low levels of blight. By using early-maturing cultivars, pre-sprouting the seed and early planting, the time during which the crop develops during the low risk period is increased. The efficacy of this strategy to control blight was investigated in the EU project Blight-MOP (Tiemens-Hulscher et al. 2002). In addition, moderate nitrogen fertilization is often recommended as a cultural practice to delay the development of late blight. In some experiments, a direct effect of nitrogen on late blight is observed, but the indirect effect on haulm growth is probably more important. Crops with abundant haulm growth dry up relatively slowly after dew or rain and can be more sensitive in some situations to late blight infection. A field trial with different fertilization regimes carried out in the Blight-MOP project did not result in significant differences in late blight development between treatments (Tiemens-Hulscher et al. 2002).

Nordic Countries

In the Nordic countries, the temperature often drops below 0 °C during winter. Thus, potato tubers in upper parts of dumps and soil profiles are usually killed by frost. However, in some years and situations, plants might develop from surviving tubers, especially in the southern parts of the region. In northern parts of the region, thick snow cover often prevents freezing of the soil and tubers can remain viable in spite of the very cold air temperatures. Volunteer plants are therefore also an increasing problem in northern production regions.

As discussed above, the problems with inoculum from dumps and volunteer plants are of less importance in cold climates. Thus, infected seed tubers, together with oospores, are the most important primary infection sources in the Nordic countries (Widmark et al. 2007). Control of seed tuber inoculum is not easy. The most important strategy is to use seed tubers free of late blight. However, seed lots might contain blighted tubers even though the mother plants have been treated regularly against blight during the growing season. Even low levels of leaf blight can cause severe tuber blight problems (Nærstad et al. 2007a). No seed treatment with fungicides effective against P. infestans is allowed in the Nordic countries. If the seed lot is infected, use of systemic fungicides early in the season might delay the first infections in the field. In Norway, farmers are advised to use this strategy if they suspect primary infection from the seed lot (Hermansen and Nærstad 2009). The same strategy is also recommended in the other Nordic countries.

The importance of oospores as soil-borne inoculum is determined both by their formation in plant tissue and their survival in soil. Cold winters with frozen soil will conserve oospores between growing seasons and result in a germination peak in spring coinciding with the planting of the potato crop. This will further increase the importance of oospores as a source of inoculum. In an inoculated field trial in Sweden, it was not possible to recover isolates from the soil after 15 months (Widmark et al. 2011). Experiments starting in 1998 with buried oospores in soils in the Nordic countries indicate, using tetrazolium bromide as a vital stain (Sutherland and Cohen 1983), that the oospores are capable of surviving at least five winters in the harsh weather conditions (Nordskog et al., unpublished). Danish and Finnish studies of the correlation between crop rotation and early late blight infections show a clear trend that infections started earliest in fields without rotations. The decline in early infections was most pronounced with rotations with three or more years between the potato crops (Bødker et al. 2006; Hannukkala et al. 2007). The same correlation between rotation and early infections has been observed in early potatoes in south Sweden (Andersson, unpublished). This indicates that a sound crop rotation is important and is an effective way of reducing the risk of soil-borne infections of P. infestans. Although 3 years between potato crops seems to be sufficient in practice, even longer rotations are probably needed to rule out the potential influence of surviving oospores.

UK

In parts of the UK in recent years, there has been less segregation of production systems and often early production, even under crop covers, is not restricted to ‘traditional’ early areas such as the southwest of England, west Wales and the southeastern counties. In many areas, early plantings may be closely integrated with second early and main crop plantings. The area of crop grown under fleece in the UK has increased substantially in recent years, and this can increase the risk of early development of blight because fungicide sprays cannot be applied until the fleece is removed (the crop cover used on potatoes in the UK is almost exclusively woven polypropylene, known as fleece). Also, the presence of the fleece increases the temperature and humidity within the crop canopy, encouraging blight development. The relative importance of seed-borne inoculum compared with that from infected waste potatoes on discard heaps is difficult to determine. Nevertheless, since the 1960s when aerial photography was used to study the patterns of early outbreaks of blight and demonstrated the importance of waste potatoes as primary sources of inoculum (Cock 1990), the emphasis in the UK has continued to focus on farm hygiene. In 2003, the British Potato Council (BPC) launched an initiative called the ‘Fight against Blight’ campaign. The aim of the campaign was to provide up-to-date, quality-controlled information on blight outbreaks and the development of the epidemic in England, Scotland and Wales (Bradshaw et al. 2004), so that better informed judgments could be made of blight risk. Scouts are recruited on a voluntary basis each growing season and are requested to send suspect samples of tissue with late blight symptoms for laboratory confirmation. The results are communicated back to the scouts by SMS text messaging, and they are also presented on the Potato Council (formerly the British Potato Council) website (www.potato.org.uk/blight) which is updated daily.

Information from the campaign confirmed the importance of outgrade piles as a primary source of inoculum in Great Britain in 2003; 17% of verified outbreaks were on piles of waste potatoes. Raised awareness of the outgrade pile issue resulted in fewer blighted outgrade piles in 2004 and 2005, i.e. 2% and 3% of outbreaks, respectively. Information on best practice aspects of potato blight control has been made available as Grower Advice Sheets, which can also be downloaded from the website and are updated as necessary. In Northern Ireland, initial outbreaks of blight are reported by staff of the Department of Agriculture & Rural Development (DARD), and information on these is available to growers through Blight-Net (via www.ruralni.gov.uk) and the Blightline recorded telephone service.

In the UK, it is recommended that waste potatoes are placed in readily accessible parts of the farm well away from nearby potato fields and ideally on land not intended for crop production in the near future (Anonymous 2009). It is also important that these outgrade piles or discard heaps are located well away from watercourses to avoid any risk of pollution as the potatoes rot. It is strongly recommended that growers regularly check for potato haulm growth, and this is much easier if the waste is readily accessible. It is recommended that haulm growth is treated with a foliar acting herbicide (diquat or glyphosate) to destroy haulm growth or re-growth. Glyphosate will not destroy tubers within the outgrade pile that have not produced haulm. Glyphosate has a slower action than diquat and may still permit lesions to sporulate as the tissue dies. This means that regular inspections, early treatment and several applications will be part of this process. For smaller quantities of waste, covering with black plastic sheeting may suffice. The sheeting must be kept in place and remain intact until the tubers are no longer viable. This will prevent re-growth and the proliferation of spores on the outgrade pile, reducing the risk to nearby crops.

Volunteer potato control will need to be considered throughout the crop rotation (Anonymous 1999). Cultivations for the crop following potatoes should be such as to maximize the chances of frost kill of tubers during the winter. There are selective herbicides available for volunteer control in many crops, including cereals, sugar beet and oilseed rape. Leaving a field fallow after potatoes increases herbicide options. Other options include the use of maleic hydrazide (but not on seed crops, first early crops or those grown under polythene). The number of tubers left in the field after harvest can be minimized through good agronomic practices to produce the correct tuber size. Setting the harvester to lift small tubers where possible and making sure that returned tubers are near to the surface will also help.

Seed tubers have been recognized as a source of P. infestans for many years. However, their relative importance as a primary source of inoculum in the UK compared with outgrade piles, volunteers and other crops has not been quantified, and although growers are now more aware of primary inoculum sources, the initial stages of an outbreak initiated from a seed source may often be undetected (Kelly et al. 2004; Thompson and Cooke 2005). The extent to which blight is introduced into crops with the seed is minimized where classified seed is used. The current tolerances for blighted tubers in Scottish seed stocks are 0.2% for pre-basic seed and for basic seed stocks to be marketed outside the EU and 0.5% for basic seed to be marketed within the EU. Northern Ireland seed tolerances for blighted tubers are 0.1% for pre-basic seed and 0.2% for basic seed. The rate of transmission of P. infestans from infected seed onto the growing plants varies considerably, and many factors influence this rate. The blighted seed tuber needs to survive long enough to allow infection of the haulm, and there may also be an interaction between the pathogen strain causing infection and the potato cultivar (Thompson and Cooke 2005). It is not surprising that factors facilitating seed tuber decay shortly after planting, e.g., high soil moisture content (Fairclough 1995) and the presence of Erwinia soft rot bacteria (Sicilia et al. 2002), limit the spread of P. infestans to the haulm. A synergistic interaction between stem canker caused by Rhizoctonia solani Kühn and stem blight has been reported (Kelly et al. 2006).

It is now possible to test seed stocks for infection by P. infestans using a PCR assay specific to P. infestans (Hussain et al. 2005). This technology can detect as little as 0.5 pg of P. infestans DNA from tubers (Hussain 2003) and allows infected stocks to be identified. However, the efficiency of detection depends on the sampling strategy employed, and detecting low level infection is labour-intensive. In addition, it may be difficult to predict the risk posed by stocks identified as positive (Kelly et al. 2006), partly because it is unclear to what extent symptomless seed tubers can initiate epidemics. Tubers in which P. infestans DNA has been detected may not initiate infection in the field if the pathogen is not sufficiently viable, if the inoculum is not in the right location or if the right environmental conditions are not present.
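The dependence of detection on sampling effort can be illustrated with a simple binomial calculation. The sketch below is not taken from the cited studies: it assumes tubers are sampled at random, tested individually, and that the assay never misses an infected tuber. The function names are hypothetical, and the incidences are chosen to match the seed tolerances quoted earlier in this section (0.1%, 0.2% and 0.5%).

```python
import math


def detection_probability(incidence: float, sample_size: int) -> float:
    """Probability that a random sample contains at least one infected tuber.

    Assumes independent tubers and a perfectly sensitive assay (both are
    simplifying assumptions, not properties reported in the source).
    """
    return 1.0 - (1.0 - incidence) ** sample_size


def tubers_needed(incidence: float, confidence: float = 0.95) -> int:
    """Smallest sample size that reaches the requested detection probability."""
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - incidence))


if __name__ == "__main__":
    # Incidences equal to the blighted-tuber tolerances quoted in the text.
    for incidence in (0.001, 0.002, 0.005):
        n = tubers_needed(incidence)
        print(f"incidence {incidence:.1%}: about {n} tubers for 95% detection")
```

Under these assumptions, roughly 600 tubers are needed for a 95% chance of finding infection present at 0.5%, and about 1,500 at 0.2%, which shows why detecting low-level infection is labour-intensive.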

In Great Britain, in the years up to 2005, the A2 mating type was generally found only at low levels, and although sexual recombination probably occurred occasionally, there was no evidence of infection of commercial crops by oospores. In Northern Ireland, the A2 mating type was less frequent than in Great Britain. Control of oospores and oospore-initiated infection has therefore not formed part of the UK blight control strategy. A much greater percentage of the A2 mating type in Great Britain was reported in 2005 (Shaw et al. 2007). By 2007, the A2 mating type was dominant in Great Britain with genotype 13_A2 accounting for 82% of the population (Lees et al. 2008), while in Northern Ireland it increased to over 50% of the population in 2009 (Kildea et al. 2010). The role of oospores in initiating infection in the UK is currently under investigation, but to date, there is no evidence that this occurs other than very rarely.

Use of Resistant Cultivars

Both partial resistance (lower susceptibility) and fungicides can slow down the development of late blight. Many reports show that partial resistance in the foliage may be used to complement fungicide applications to allow savings of fungicide by reduced application rates or extended intervals between applications (Bain et al. 2008; Bradshaw and Bain 2007; Bus et al. 1995; Clayton and Shattock 1995; Fry 1975, 1977, 1978; Fry et al. 1983; Gans et al. 1995; Grünwald et al. 2000; Nielsen and Bødker 2001; Nielsen 2004; Nærstad et al. 2007a; Shtienberg et al. 1994). Nærstad et al. (2007a) showed that exploiting high foliage resistance to reduce fungicide input was risky when field resistance to tuber blight was low. When field resistance to tuber blight was high, a medium-high resistance in the foliage could be exploited to reduce the fungicide input. In a number of European countries, trials have been carried out in which the possibilities of reducing the fungicide input in resistant varieties have been investigated. In western Europe, resistant cultivars are not grown on a large scale because commercially important characteristics such as quality, yield and earliness are usually not combined in the same cultivar with late blight resistance. From the grower’s perspective, the savings in fungicide input that can be achieved with resistant cultivars are generally outweighed by the higher perceived risk of blight. In countries where fungicides are not available or very expensive, the use of resistant cultivars is one of the most important ways to reduce damage from blight. In the future, the EU Thematic Strategy for Pesticides is likely to provide additional motivation for greater use of integrated control. The Strategy proposes a Sustainable Use of Pesticides Directive (Stark 2008). As part of this Directive, sustainable use will be delivered through member state action plans, including the use of integrated pest management. One of the aims of the UK Pesticides Strategy, published in 2006, is to encourage the uptake of non-chemical treatments.

The Netherlands

As a part of the Phytophthora Umbrella Plan, field trials were carried out to estimate the infection efficiency and the minimum dose rate of fluazinam required to achieve protection of the 30 most important cultivars in The Netherlands (Kessel et al. 2004; Spits et al. 2004). In 2006, recommendations based on these results were published. These recommendations to reduce fungicide inputs when using resistant cultivars will have to be validated and demonstrated in a range of practical situations (with low and high disease pressure) to convince growers of their robustness.

Nordic Countries

While the cultivars’ foliar resistance may be used to reduce fungicide inputs for late blight control, it is very risky to exploit this foliar resistance if the field resistance to tuber blight is low (Nærstad et al. 2007a). In Norway, resistance to tuber blight is tested by application of inoculum to freshly harvested tubers (Bjor 1987). In field trials with cultivars with different tuber resistance scores and reduced fungicide inputs, it was found that the amount of tuber infection in the field was mainly determined by the cultivar, and the differences between treatments were marginal in most cultivars. However, the correlation between the tuber resistance score and the observed tuber infection frequency in the field was low, probably because of the effect of cultivar-specific avoidance of inoculum (Nærstad et al. 2007a). In Denmark, resistance to tuber blight is tested by application of inoculum in the field after partial defoliation, and methods are closely related to the EUCABLIGHT protocol: ‘Field test for tuber blight resistance’ [www.eucablight.org; EUCABLIGHT, an EU-funded Concerted Action, was a consortium of European blight researchers and breeders, which is continued as part of the EuroBlight network (www.euroblight.net)]. This type of field test for resistance to tuber blight also includes the cultivar-specific effect of avoidance of inoculum in addition to intrinsic tuber resistance.

UK

As in other European countries, the vast majority of varieties planted in the UK today have limited blight resistance (Bradshaw 2008) and therefore depend on fungicidal protection to survive pathogen attack. However, late-blight resistance breeding in the UK (reviewed by Wastie 1991 and Mackay 2002) has resulted in a number of cultivars with slow-blighting phenotypes. Although planted in small quantities, many of these would seem to have a stable, race-nonspecific resistance, but there is evidence that some have a combination of race-specific (R-gene) and horizontal resistance. Stewart et al. (2003) suggested that increased resistance was conferred by defeated R-genes or linked genes for field resistance. In seasons and at locations where disease pressures remained low, these resistant varieties have been grown and have yielded well without protection and are therefore of interest to organic farmers, particularly if varieties have high levels of tuber-blight resistance. However, under sustained high disease pressures, these partially resistant varieties suffer total foliage loss and related yield loss. Higher levels of stable resistance are required as some customers, retailers and governments demand lower inputs of chemicals.

During the Soviet Era, breeders in Hungary were able to produce clones with very high blight resistance and also resistance to common virus diseases. The Sárvári family has continued to breed from these lines and has collaborated with the Sárvári Research Trust in North Wales to produce two varieties for the UK market which produce high yields under the heaviest disease pressures. Since these cultivars are of late foliage maturity and have high dry matter concentration, efforts continue to assess resistant clones with earlier maturity, a range of dry matter concentrations together with an absence of major weaknesses. It remains to be seen how far the resistance in Sárpo material becomes eroded with time and exposure to an increasingly variable blight population in the UK and elsewhere.

EUCABLIGHT has agreed on improved methods for assessment of resistant germplasm in field trials and in the laboratory. The inclusion of common standards with a range of blight resistance will allow comparison of the expression of foliage- and tuber-blight resistance in clones grown in different climates and exposed to different strains and populations of P. infestans. The 1–9 scores for ranking resistant varieties are already widely adopted in different countries for the benefit of growers. These scores can now be determined using the same agreed EUCABLIGHT protocol so that results in different countries will be more comparable. Differences in scores will then indicate more accurately when the resistance of a variety is expressed less when exposed to a particular pathogen population—an indication that the resistance in question is race-specific.

Use of Fungicides

Within the EU, pesticides are regulated at a national level and at the community level where they are subject to regular review to ensure that their safety is evaluated to modern standards. The basis of this review programme was originally set out in Council Directive 91/414; this has been superseded by Regulation 1107/2009, which came into force in December 2009 and requires additional review criteria to be met. The cost of producing supporting data for the review programme has resulted in the withdrawal of many pesticides from the market including some fungicides used for the control of late blight. More information on the legislative control of pesticides in Europe can be found on the UK Chemicals Regulation Directorate website (www.pesticides.gov.uk).

The Netherlands

Fungicides play a crucial role in the integrated control of late blight. In order to optimize the use of fungicides, it is important to know the effectiveness and type of activity of the active ingredients to control blight. What is their effectiveness on leaf blight, stem blight and tuber blight and do they protect the new growing point (Evenhuis et al. 1996, 2006b; Schepers and Van Soesbergen 1995)? Are the fungicides protectant, curative or eradicant (Schepers 2000)? What is their rainfastness and mobility (Evenhuis et al. 1998; Schepers 1996)? During the EuroBlight workshops on integrated control of potato late blight held every 18 months, the fungicide characteristics of the most important fungicide active ingredients used for control of late blight in Europe are discussed and ratings are given. The ratings are based on the consensus of experience of scientists in countries present during the workshop and special field trials of EuroBlight standards (Table 1). The frequency and timing of fungicide applications should depend on the foliar resistance of the cultivar, fungicide characteristics, rate of growth of new foliage, weather conditions, irrigation and incidence of blight in the region.

In The Netherlands, the average number of sprays per season varies from seven to 20 depending on the weather, disease pressure and crop (seed, ware, starch). To prevent the crop from being infected, the best policy is to protect the crop whenever an infectious period occurs. The first sprays should start immediately after emergence of the crop, when weather conditions are favourable for late blight. The most widely used protectant product is fluazinam (Shirlan). By using Shirlan in a flexible way (interval, dose rate), effective control of leaf and tuber blight can be achieved during the complete growing season. Curzate M (mancozeb, cymoxanil) is the most widely used fungicide which combines a protectant (mancozeb) and a translaminar product (cymoxanil). It is mainly used in the first part of the growing season because of its good protectant and curative properties. Shirlan and Curzate M are the products with the largest market share. All active ingredients mentioned in Table 1 are at present registered in The Netherlands except benalaxyl (Schepers and Spits 2006).

The characteristics of the fungicides can be used to optimize their efficacy by matching their strong points with specific situations in the growing season concerning infection pressure and plant growth. When early disease pressure (originating from infected tubers or oospores) coincides with a rapid crop growth, field trials have shown that products containing metalaxyl-M or cymoxanil result in good control. Since monitoring shows that the frequency of resistant strains only increases later on in the season, the efficacy of metalaxyl-M in the beginning of the growing season is usually not influenced by the occurrence of resistant strains. However, the risk of failures due to metalaxyl resistance and the availability of a range of alternative fungicides that can also protect the new growth in a rapidly developing canopy have led growers to increasingly exclude metalaxyl-M from the spraying programme. In the second half of the growing season when conditions are critical for the occurrence of tuber blight, fluazinam and cyazofamid have been shown to control tuber blight very well.

The benefits of air-assisted spraying have been assessed in trials in The Netherlands, in which a cymoxanil + mancozeb formulation was applied in 200 l/ha with and without air assistance (van de Zande et al. 2005). Significantly better control of foliar blight with air assistance at individual assessments was observed but not at the end of the growing season after many applications had been made. Differences in the control of tuber blight were small.

Different strategies, in which efficacy, costs and environmental side effects are recorded, are tested on five regional farms situated in important potato-growing regions in The Netherlands. During the growing season, data from these trials, including types of products used, timing and occurrence of late blight, are recorded and can be followed on the Internet (www.kennisakker.nl) by farmers and advisors. The results of these trials are used in discussions with farmers, and comparisons are made between the trial results and their spraying schedules regarding efficacy, costs and environmental side effects. Each year, the control strategy is discussed with all stakeholders in potatoes and published in farmers’ journals and in a brochure that is sent to all potato growers and advisors.

Nordic Countries

Previously fluazinam (Shirlan) was the most widely used contact fungicide in the Nordic countries, but now a broader spectrum of fungicides is on the market. Products containing cyazofamid (Ranman) and mandipropamid (Revus) are now important components in the control strategies. Mancozeb products are still widely used in the Nordic countries. However, in Sweden, products containing mancozeb as the only active ingredient were withdrawn from the market in the mid-1990s. The use of products containing metalaxyl-M varies among Nordic countries. Metalaxyl-M is sold only in mixtures with fluazinam (Epok) and mancozeb (Ridomil Gold) (www.evira.fi/portal/en/, www.kemi.se, www.mst.dk). In Sweden, about 15% of sprays include metalaxyl-M with no restrictions on its use in seed production. In Finland, the market share of products containing metalaxyl-M is close to 5%, but their use on seed potatoes is not permitted. In Norway and Denmark, only one application of metalaxyl-M per season is permitted. Recently registered fungicides in the Nordic countries include cyazofamid (Ranman) and mandipropamid (Revus) mentioned above and mixtures of zoxamide and mancozeb (Electis), famoxadone and cymoxanil (Tanos), cymoxanil and mancozeb (Curzate M), fenamidone and propamocarb (Tyfon, Consento) and fenamidone and mancozeb (Sereno). To date, not all of these products are registered in each of the four Nordic countries.

The behaviour and efficacy of blight fungicides in Europe have been regularly evaluated in EU.NET.ICP consortium meetings and later in EuroBlight (Table 1), and the efficacy of different products in the Nordic region does not differ significantly from these ratings. In Denmark and southern Sweden, which are the largest potato production regions in Nordic countries, the rate of crop development is relatively similar to that in the UK and The Netherlands. However, potato is an economically profitable crop up to the Polar Circle and beyond. The northernmost production areas, especially in Finland, Norway and the central and northern parts of Sweden, are characterized by a short growing period, but extremely rapid crop growth in the nearly 24-h daylight, which occurs in the middle of the season. Therefore, the generally recommended spray intervals (7–10 days) for contact fungicides are definitely too long when high inoculum pressure is present in these conditions and where new unprotected growth can be 10–15 cm within 1 week.
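The consequence of the spray interval for unprotected foliage can be made concrete with simple arithmetic. The sketch below takes the growth rate of 10–15 cm per week from the text and assumes, purely for illustration, that growth is linear over the interval. The function name is hypothetical.

```python
def unprotected_growth_cm(interval_days: float, growth_cm_per_week: float) -> float:
    """New haulm growth (cm) emerging between two sprays.

    Assumes a constant (linear) growth rate, which is a simplification
    introduced here and not a model from the source.
    """
    return growth_cm_per_week * interval_days / 7.0


if __name__ == "__main__":
    # Compare a short interval with the generally recommended 7-10 days.
    for interval in (5, 7, 10):
        low = unprotected_growth_cm(interval, 10.0)
        high = unprotected_growth_cm(interval, 15.0)
        print(f"{interval:>2}-day interval: {low:.1f} to {high:.1f} cm of new growth")
```

On this simplified basis, a 10-day interval leaves up to about 21 cm of new growth without a contact fungicide deposit, compared with about 11 cm for a 5-day interval.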

The blight risk and the number of fungicide applications needed for adequate blight control vary considerably between seasons, between climatologically diverse regions within Nordic countries and with the type of production. Roughly three to eight applications are carried out in Finland, Norway and mid to north Sweden with an average number of approximately five to six sprays per season. In Denmark and South Sweden, one to two more applications are typically needed (Schepers 2004). Until the late 1990s in production areas beyond latitude 64° N, zero to two mancozeb applications were sufficient to control blight. Due to the new sexually reproducing blight population and climate change, epidemics in some of these regions start 1 month earlier than before the 1990s and more sprays than before are needed (Hannukkala et al. 2007).

Two major strategies are used in fungicide programmes in Nordic countries. In large farms, fixed 5- to 7-day spray intervals with effective protectant fungicides have proved feasible. The dose rate can be adjusted according to the prevailing blight risk (Nielsen 2004). In smaller farms, it is possible to adjust spray intervals between 5 and 14 days and choose translaminar or systemic and contact fungicides according to the weather forecast, crop growth stage and blight risk (see “Decision Support Systems” section). Fluazinam and cyazofamid are recommended at the end of the spray programme due to their good tuber blight control.

Experience from Denmark shows that spraying with effective fungicides (e.g., cyazofamid and mandipropamid) before periods with high risk of infections can give very effective control of late blight. Field trials in 2009 showed that it was possible to reduce the use of cyazofamid and mandipropamid by 30% by adjusting the dose according to resistance level in the variety and the infection pressure. However, late blight is not the only target pathogen and considerations should also be taken to include fungicides that are effective against early blight (Alternaria solani, Alternaria alternata; Nielsen et al. 2010).

Traditionally, large spray volume rates have been recommended in Denmark (and other countries) when applying fungicides for control of potato late blight. Using conventional hydraulic field sprayers, however, volume rate has been decreased and 150–200 l ha⁻¹ seems to be optimal with current cultivars and cultivation systems. Control of potato late blight using conventional technique, air assistance with the Hardi Twin system or the Danfoil Airsprayer has been investigated in a number of field experiments. The influence of air assistance was inconsistent, and no general advantage was found. Using the Danfoil Airsprayer at 30 l ha⁻¹, a slightly reduced efficacy was seen compared to the other treatments. The influence of volume rate and nozzle angling on potato late blight control with flat fan, pre-orifice and air induction nozzles was also investigated. A slightly reduced efficacy was seen using 80 l ha⁻¹ whereas efficacy was similar using the two higher volume rates. At 160 l ha⁻¹, control of late blight was compared using traditional flat fan nozzles, pre-orifice nozzles and air induction nozzles. Surprisingly, the control of late blight was comparable with the three different nozzle types despite the large difference in droplet size and coverage (Jensen and Nielsen 2008a, b).

Resistance to metalaxyl appeared in pathogen populations in the Nordic potato production areas very soon after introduction of the fungicide in the 1980s as elsewhere in the world (see “Population Characteristics (Genotypic, Phenotypic)” section). Severe crop failures following metalaxyl use were recorded especially in Sweden (Olofsson 1989) and Finland (Hannukkala 1994). Increased resistance to metalaxyl has also been reported from Denmark (Holm 1989) and Norway (Hermansen et al. 2000). The development of resistance of P. infestans to propamocarb hydrochloride has recently been monitored in the Nordic countries. There are no indications of failures in the efficacy of the product although individual isolates capable of growing on high concentrations have been found (Lehtinen et al. 2007, 2008). Resistance development against other fungicides has not been monitored in Nordic countries.

UK

As part of the ‘Fight against Blight’ campaign, one of the knowledge gaps identified was in relation to fungicide efficacy and from 2003 to 2005 the BPC commissioned independent evaluation of certain fungicides including newly developed active substances. These experiments were designed to compare certain proprietary formulations under field conditions and measure their effects on the control of both foliar and tuber blight (Bradshaw and Bain 2005). When to start a spray programme has long been a concern for UK growers. It is invariably a compromise in terms of justifying the cost and not wasting expensive fungicide on bare soil when the plants are relatively small. These experiments showed that there was a clear benefit to the control of foliar blight from applying fungicides at an early stage of crop development in the presence of very low levels of pathogen inoculum. This was the case where both severe and less severe blight epidemics developed and the effect remained evident for several weeks in some situations. Even where disease pressure was low when fungicides were applied but became high afterwards, there was a clear benefit from the early use of fungicides for the control of foliar blight. This suggests that fungicides were suppressing pathogen inoculum well before visible symptoms became evident.

Since the revocation of the approval for fentin-based fungicides (Directive 91/414 EC), there has been concern in the UK about the availability of fungicides which would give effective control of tuber infection. The BPC-commissioned experiments also showed that low levels of foliar blight and a ‘slow blight epidemic’ could give rise to a high incidence of tuber infection. This presents a good example of the relationship between prolonged production of inoculum on the foliage and high levels of tuber infection. It is also a strong reminder of the need to maintain fungicide programmes up to the end of haulm desiccation. Differences in performance between products were recorded, and these are reflected in the ratings given in Table 1. Control of tuber blight by fungicides is said to be ‘indirect’ when the fungicide treatment affects tuber blight only through its effect on the severity of foliar blight. It is considered to be a ‘direct’ effect on the tuber infection process itself when fungicide treatments which result in similar levels of foliar blight differentially affect tuber blight.

The choice of fungicide product depends on a number of factors including disease risk, weather conditions, fungicide mode of action, resistance management issues and the stage of growth of the crop. In the UK, it is recommended that fungicides for late blight control are used protectively and are applied before crop infection occurs. Starting the spray programme early enough and maintaining the correct spray intervals during the season for the prevailing risk are just as important as the choice of fungicide. Assessment of disease risk using information on disease activity in the locality, recent infection periods and weather forecasts is used to guide the frequency of spray applications.

Some growers select fungicide products taking account of their specific attributes (Table 1) according to specific stages of crop development. These are emergence to start of rapid haulm growth, rapid haulm growth, the end of rapid haulm growth to start of senescence and the start of senescence to complete haulm destruction. An example of how fungicide characteristics affect choice would be the use of systemic products during the rapid haulm growth phase and the choice of products with tuber blight activity after the rapid haulm growth phase leading up to senescence. In the UK, the minimum interval between applications of the same product is governed by UK legislation (Control of Pesticides Regulations 1986) and must be adhered to if stated on the product label. In many cases, the minimum interval between applications of the same product is 7 days. However, when disease risk is extremely high, closer intervals may be appropriate and the use of different products for consecutive applications is necessary.

Where irrigation is used, this should also influence selection of fungicides and application timing. Careful scheduling of irrigation around fungicide spray programmes is essential to minimize disease risk as often it will not be possible to use a sprayer for several days. Growers are recommended to choose fungicides that have good rainfastness and to allow sufficient time between spraying and irrigation to ensure rainfastness. Information on rainfastness is usually available from the fungicide manufacturers. Trickle irrigation is less risky than the use of rain guns or other forms of overhead irrigation, but it is rarely used (Buckley, personal communication).

The range of fungicides approved in the UK is similar to that in other European countries. Recently approved fungicides include, for the 2006 season, benthiavalicarb-isopropyl (in Valbon, a co-formulation with mancozeb) and fluopicolide (in Infinito, co-formulated with propamocarb); for the 2007 season, mandipropamid (Revus); for the 2008 season, amisulbrom (Shinkon); and, for the 2010 season, ametoctradin (Resplend and Decabane, co-formulated with dimethomorph and mancozeb, respectively). Surveys of Pesticide Usage are carried out every 2 years for arable crops in Great Britain (Pesticide Usage Survey Team, the Food and Environment Research Agency and also Science and Advice for Scottish Agriculture) and in Northern Ireland (Pesticide Usage Monitoring Group, AFBI), and the most up-to-date results can be found on the Internet (www.fera.defra.gov.uk, www.sasa.gov.uk and www.afbini.gov.uk, respectively).

In Great Britain, fungicides accounted for 65% of the total pesticide-treated area of ware potatoes in the 2008 survey (Garthwaite et al. 2008), and most fungicides were applied at or near full label rate for the sole purpose of protecting from late blight. Three formulations, cymoxanil + mancozeb, cyazofamid and fluazinam (in various products), accounted for over half of all fungicides applied. The total fungicide-treated area exceeded 1.6 million hectares, and these three formulations accounted for 23%, 20% and 16% of this area, respectively. Other fungicides which were widely used were propamocarb hydrochloride + fluopicolide (Infinito), mancozeb, dimethomorph + mancozeb (Invader) and mandipropamid (Revus). A similar range of fungicides was applied to seed potatoes, with cymoxanil + mancozeb, cyazofamid and fluazinam being used on over two thirds of the growing area.
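The shares reported for Great Britain can be converted into approximate treated areas. The sketch below uses only the figures given in the text. Because the total is reported as "over 1.6 million hectares", the computed areas are lower bounds, and the variable and function names are hypothetical.

```python
# Figures from the 2008 Great Britain survey as quoted in the text.
TOTAL_TREATED_HA = 1_600_000  # reported as "over 1.6 million", so a lower bound
SHARES_PCT = {
    "cymoxanil + mancozeb": 23,
    "cyazofamid": 20,
    "fluazinam": 16,
}


def treated_area_ha(total_ha: int, shares_pct: dict) -> dict:
    """Treated hectares per formulation, using integer arithmetic."""
    return {name: total_ha * pct // 100 for name, pct in shares_pct.items()}


if __name__ == "__main__":
    areas = treated_area_ha(TOTAL_TREATED_HA, SHARES_PCT)
    for name, area in areas.items():
        print(f"{name}: at least {area:,} treated ha")
    print(f"combined share: {sum(SHARES_PCT.values())}%")
```

The combined share of 59% is consistent with the statement that the three formulations accounted for over half of all fungicides applied.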

In Northern Ireland, fungicides accounted for 70% of the area of main crop potatoes treated with pesticides in 2008 (Withers et al. 2008). Fluazinam (various products) was the most widely used fungicide, accounting for 34% of the total spray hectares, while cymoxanil + mancozeb (various products), fluopicolide + propamocarb hydrochloride (Infinito) and chlorothalonil + propamocarb hydrochloride (Merlin) accounted for 19%, 13% and 11% of the treated area, respectively. Mancozeb used alone accounted for 8% of the treated area, but when co-formulations with other fungicides were included, the total area receiving products containing mancozeb was 29%. On seed potatoes, fluazinam and fluopicolide + propamocarb hydrochloride were the most widely used fungicides.

In the UK, the first detection of strains of P. infestans resistant to phenylamides occurred in 1981, 3 years after these fungicides were registered (Cooke 1981; Holmes and Channon 1984). In the UK, phenylamides were only approved for late blight control in mixtures with mancozeb. Although it is tempting to relate this appearance of phenylamide-resistant strains to the introduction of the new population, the two events are probably coincidental; indeed one of the first resistant isolates found in the Republic of Ireland belonged to the ‘old’ US-1 population. Annual monitoring during the 1980s in Great Britain and Northern Ireland showed the incidence of isolates containing resistant strains to be generally well under 40% until the late 1980s when it abruptly increased to over 70%. Introduction of formulations containing higher rates of non-systemic fungicides and the implementation of anti-resistance strategies involving use of only two or three phenylamide applications early in the spray programme contributed to a subsequent decline in the incidence of phenylamide-resistant strains in Northern Ireland (Cooke and Little 2006) and also in Great Britain. However, the situation has changed since the appearance of genotype 13_A2 and its domination of the populations in Great Britain and Northern Ireland since 2007 and 2009, respectively. To date, all isolates of 13_A2 have proved to be phenylamide-resistant, and manufacturers of metalaxyl-containing products have responded by reducing the number of recommended applications during a single season to one. Many advisors have not recommended the use of phenylamides in fungicide programmes since 2008. There is no evidence that P. infestans has developed resistance to any other fungicides used in the UK: limited monitoring has shown isolates continue to be very sensitive to fluazinam and to zoxamide. 
In the UK, guidance on fungicide resistance management is provided by the Fungicide Resistance Action Group (FRAG-UK), which brings together members from independent organisations and agrochemical companies; the latest version of the FRAG-UK publication ‘Potato late blight: Guidelines for managing fungicide resistance’ can be downloaded from the Chemicals Regulation Directorate’s website (www.pesticides.gov.uk).

A survey in 2004 for the BPC found that for potato blight fungicide application, 85% of the area was treated using conventional hydraulic sprayers, 11% air-assisted and 4% twin fluid (Anonymous 2004). Sixty-two percent of blight sprays were applied through flat fan nozzles, 30% through angled nozzles, 4% twin fluid and 3% using air assistance. The use of angled nozzles increased from 22% at the rosette stage of crop growth to 35% at full canopy. Sixty percent of growers changed their water volume during the growing season. The most popular water volume was 150 to 200 l ha⁻¹. The area treated with more than 200 l ha⁻¹ increased from 17% at the rosette stage to 49% at full canopy. In 2008, nearly 70% of fungicides were applied to crops at 151–200 l ha⁻¹, with 22% above 200 l ha⁻¹ and the remainder below 150 l ha⁻¹ (Garthwaite et al. 2008). In three trials in different years, the contact fungicides mancozeb or fluazinam were applied in fixed water volumes, i.e. 150, 200 or 300 l ha⁻¹, and also in a managed programme in which water volume was matched to the size of the crop canopy. It was observed that the levels of foliar and tuber blight were consistently the lowest where the water volume had been managed (RA Bain, unpublished). The effectiveness of some fixed volume treatments was inconsistent.

Decision Support Systems

DSSs integrate and organise all available information on the life cycle of P. infestans, the weather (historical and forecast), plant growth, fungicide characteristics, cultivar resistance and disease pressure that is required to make decisions concerning the management of late blight. Computer-based DSSs that require weather information and regular late blight scouting inputs have been developed and validated in a number of European countries (Hansen et al. 2002). DSSs can deliver general or very site-specific information to users via extension officers, telephone, fax, e-mail, SMS, PC and websites. The Internet, with its databases and web tools, has become the most important platform for dissemination. Information is delivered directly to farmers via web pages, SMS or e-mail. Delivery via extension officers, who use the DSS and then advise farmers on specific control actions by fax, phone or e-mail, is also common.

Six different DSSs were tested in validation trials across Europe in 2001: Simphyt (Germany), PLANT-Plus (The Netherlands), NegFry (Denmark), ProPhy (The Netherlands), Guntz-Divoux/Milsol (France) and PhytoPre + 2000 (Switzerland). The use of DSSs reduced fungicide input by 8–62% compared to routine treatments. The level of foliar disease at the end of the season was the same or lower using a DSS compared to a routine treatment in 26 of 29 validations (Hansen et al. 2002). During this test, all DSSs were PC-based. Since then, all of the DSSs, or parts of them, have become available on the Internet. In 2010, partners from the EuroBlight network created a freely available platform that allows testing and comparing the weather-based late blight sub-models from seven existing and new European DSSs (www.euroblight.net). The different models for disease risk or infection risk gave similar but by no means identical results. The tool is intended to improve the quality of existing sub-models, and it will be used to analyse the weather-based risk of late blight development in different regions of Europe and beyond (Hansen et al. 2010).
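
The validation figures can be put into proportion with a little arithmetic. The sketch below uses only the numbers quoted above (26 of 29 validations, and input reductions of 8–62%). The routine programme of 10 units of fungicide input is a hypothetical value chosen purely for illustration, and the function name is likewise an assumption.

```python
# Summary arithmetic for the 2001 validation trials
# (figures as cited from Hansen et al. 2002 in the text).
VALIDATIONS_TOTAL = 29
VALIDATIONS_SAME_OR_LOWER = 26  # foliar disease same or lower with a DSS

share_same_or_lower = VALIDATIONS_SAME_OR_LOWER / VALIDATIONS_TOTAL


def input_with_dss(routine_input: float, reduction: float) -> float:
    """Fungicide input left after a fractional reduction (0.08 to 0.62 in the trials)."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be a fraction between 0 and 1")
    return routine_input * (1.0 - reduction)


# HYPOTHETICAL routine programme of 10 units of input, for illustration only.
ROUTINE_INPUT = 10.0
lowest = input_with_dss(ROUTINE_INPUT, 0.62)   # largest reported reduction
highest = input_with_dss(ROUTINE_INPUT, 0.08)  # smallest reported reduction

print(f"same or lower disease in {share_same_or_lower:.1%} of validations")
print(f"DSS input between {lowest:.1f} and {highest:.1f} units per {ROUTINE_INPUT:.0f} routine units")
```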

The Netherlands

The presence of the new, more aggressive P. infestans population in The Netherlands will encourage growers to take more information into account when making spray decisions, because the risk associated with fixed-interval spray regimes will often be too high (Turkensteen et al. 2002). An important task for the near future is therefore to update the DSSs with information on the epidemiology of the new aggressive population of P. infestans. Issues such as the influence of temperature and relative humidity on the infection process, the role of primary and secondary inoculum sources and the cultivar resistance ratings for foliar and tuber blight will have to be addressed again. In addition, the control of early blight caused by Alternaria spp. will have to be integrated into control strategies for late blight, since early blight has recently become more threatening to potato cultivation in northwestern Europe.

In The Netherlands, the DSSs ProPhy (Opticrop B.V.) and PLANT-Plus (DACOM PLANT Service B.V.) are used commercially. In total, about 4,500 Dutch potato growers (36%) use the recommendations of these DSSs, delivered via PC, Internet, e-mail or fax. Moreover, recommendations based on PLANT-Plus reach all Dutch potato growers through ALPHI (an automated telephone service) and through automated phone calls for high-risk situations issued by the Masterplan Phytophthora. Both companies have an extensive network of weather stations in The Netherlands.
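
The two figures given for DSS uptake imply an approximate size for the Dutch potato-grower population. The calculation below is a back-of-envelope sketch: both inputs are rounded in the text ("about 4,500", 36%), so the result is indicative only, and the variable names are illustrative.

```python
# Implied number of Dutch potato growers from the uptake figures in the text.
# Both inputs are approximate in the source, so the outputs are indicative only.
DSS_USERS = 4_500      # growers using ProPhy or PLANT-Plus recommendations
DSS_SHARE_PCT = 36     # the same group as a percentage of all growers

implied_total = round(DSS_USERS * 100 / DSS_SHARE_PCT)
not_direct_users = implied_total - DSS_USERS

print(f"implied total: about {implied_total:,} growers")
print(f"growers not using a commercial DSS directly: about {not_direct_users:,}")
```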

PLANT-Plus and ProPhy are reliable tools to aid control of potato late blight. Although cultivar resistance is used in DSS recommendations, recent research has shown that higher levels of late blight resistance can be used to even further reduce the input of protectant fungicides. In a series of field experiments from 2002 to 2005, experimental versions of PLANT-Plus and ProPhy, purpose-built to further exploit cultivar resistance, were compared to their respective commercial versions for development purposes (Wander et al. 2003, 2006). Two approaches were used: longer spray intervals and/or reduced fluazinam dose rates. Cultivars included in the experiments were (foliar resistance rating in brackets): Bintje (3), Santé (4½), Agria (5½), Remarka (6½), Aziza (7½) and starch potato cultivars Starga (5½), Karakter (6), Seresta (7) and Karnico (8). The date of first spray was usually not affected by using the different cultivars. The date of the first observation of potato late blight symptoms was relatively late in the highly resistant cultivar Aziza, but relatively early in the highly resistant cultivar Karnico. With the cultivars Seresta and Aziza, it was possible to substantially reduce the fluazinam dose rate except at the end of the growing season. Current resistance figures according to the Dutch National Variety List seemed not to be appropriate for this purpose (Kessel and Flier, unpublished data).

Based on the research results, it was concluded that:

- Fluazinam dose rates can be reduced for higher levels of potato blight resistance.
- Spray interval can be increased for higher levels of potato late blight resistance.
- Experimental DSS versions successfully incorporated cultivar resistance and performed reasonably well.
- More research is necessary to develop resistance specific recommendations for all cultivars.

Nordic Countries

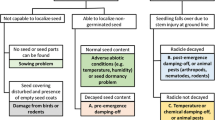

To protect the crop against late blight, potato growers need information related to the environment, the host and the pathogen. In all Nordic countries, information and decision support aimed at late blight control are disseminated via the Internet (www.planteInfo.dk; www.vips-landbruk.no; www.sjv.se; www.agronet.fi). The dataflow, infrastructure and organisation are quite similar in all four countries (Fig. 1). One applied research institute in each country is responsible for dissemination of agrometeorological and related information to the agricultural sector. This is done in close collaboration with the national meteorological institute and the extension service in each country. Weather data are available from weather station networks, owned and operated by the national meteorological offices. Additional stations were established in important agricultural areas in the 1980s in Denmark and in the 1990s in Norway and Sweden (Magnus et al. 1991; www.plant-net.com/lantmet_new/index.htm). All information is disseminated via the Internet and increasingly via SMS to mobile phones (Jensen and Thysen 2004).

The driving force behind the implementation of late blight DSS in the Nordic countries was the introduction of political action plans in the 1980s, requiring growers to reduce pesticide use. The PC-NegFry system was developed in the early 1990s and was tested in all the Nordic countries during 1993–1996 (Hansen et al. 1995; Hermansen and Amundsen 2003). Subsequently, it was also tested in several other countries in Europe (Hansen et al. 2000, 2002; Leonard et al. 2001). Further development of PC-NegFry stopped in 2002, but modified versions of NegFry are now in use via the Internet in all Nordic countries. In Sweden, users can download weather data files directly accessible by the PC programme. In Norway, accumulated and daily risk values from NegFry are available in VIPS (www.vips-landbruk.no) together with the Försund rules developed in the late 1950s (Hermansen and Amundsen 2003) and a new improved late blight model (Nærstad et al. 2009). In Finland (www.agronet.fi), NegFry accumulated and daily risk values are calculated for 25 weather stations of the Finnish Meteorological Institute.

Due to the dramatic change in the biology of the late blight pathogen, it was decided to initiate basic biological studies so that models and decision rules could be updated (Hansen et al. 2006; Nielsen et al. 2007). A common Nordic framework for development of a core Internet-based late blight DSS was established in 2003. This framework facilitates future development of late blight DSS applications and allows components to be exchanged between partner countries. Collaborative Internet applications had already been established in 1998 with the development of the Internet-based Web-blight system (Hansen et al. 2001). Through Web-blight, all Nordic countries, the Baltic countries and Poland use the same system for monitoring the initial symptoms of late blight in commercial fields. Maps and tables with information on early outbreaks of disease in the whole region were available in Web-blight, and the national maps were integrated in national Internet-based information and DSSs. Additionally, the Web-blight system analyses primary disease data from field tests of foliage blight resistance (Hansen et al. 2005). This service was taken over by EUCABLIGHT (www.eucablight.org; Colon et al. 2005) in 2005, and the derived information on level, type and stability of resistance of cultivars will be exploited in the DSSs. The Internet-based system for surveillance of early attacks of P. infestans is now a part of the EuroBlight research platform (www.euroblight.net).

Due to structural changes in Nordic agriculture, the number of farmers has decreased, but the potato area and the number of potato fields grown by each farmer have increased. This is a barrier to using complex models and decision rules that operate at field level, because their input requires detailed observations in each field. Consequently, a simple DSS with a 7-day spray schedule (Blight Management) was developed, integrating reduced dosages of fluazinam (Shirlan), host resistance, infection pressure and epidemic phase (Nielsen 2004; Hansen et al. 2006). The method for calculation of infection pressure is described at www.euroblight.net. The blight season was divided into three phases to take into account the presence of inoculum and possible adaptation of local pathogen populations to host resistance (Table 2). Blight attacks in conventional and organic fields are monitored in all the Nordic countries using the EuroBlight Nordic Blight Surveillance system.
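
The structure of such a fixed-interval, variable-dose rule can be sketched in a few lines. This is an illustration of the concept only, not the Blight Management rule set: the actual dose recommendations are in Table 2, which is not reproduced here. The resistance and infection-pressure classes, the dose fractions, the floor of 0.25 and the treatment of phase 3 are all hypothetical values chosen to make the example run.

```python
from dataclasses import dataclass

SPRAY_INTERVAL_DAYS = 7  # fixed interval, as described in the text


@dataclass(frozen=True)
class FieldStatus:
    resistance: str          # "low", "medium" or "high" (hypothetical classes)
    infection_pressure: str  # "low", "medium" or "high" (hypothetical classes)
    phase: int               # epidemic phase 1, 2 or 3


# HYPOTHETICAL fractions of the full fluazinam dose; the real values are
# given in Table 2 of the source and are NOT reproduced here.
BASE_DOSE = {"low": 1.0, "medium": 0.75, "high": 0.5}
PRESSURE_STEP = {"low": -0.25, "medium": 0.0, "high": 0.25}
MIN_DOSE, MAX_DOSE = 0.25, 1.0


def dose_fraction(status: FieldStatus) -> float:
    """Return an illustrative fraction of the full dose for the next weekly spray."""
    if status.phase not in (1, 2, 3):
        raise ValueError("phase must be 1, 2 or 3")
    step = PRESSURE_STEP[status.infection_pressure]
    dose = BASE_DOSE[status.resistance] + step
    if status.phase == 3:
        # ASSUMPTION: in the last phase the pathogen may have adapted to the
        # host, so no credit is given for cultivar resistance.
        dose = max(dose, BASE_DOSE["low"] + step)
    return min(MAX_DOSE, max(MIN_DOSE, dose))


if __name__ == "__main__":
    example = FieldStatus(resistance="high", infection_pressure="low", phase=1)
    print(f"spray every {SPRAY_INTERVAL_DAYS} days at {dose_fraction(example):.2f} of full dose")
```

Keeping the interval constant and varying only the dose means the grower needs regional inputs (infection pressure, epidemic phase) and the cultivar rating rather than detailed observations in every field, which is the motivation given above for the simpler design.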