Abstract

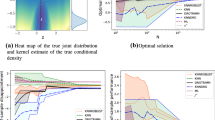

Large-dimensional predictors are often introduced in regressions to attenuate possible modeling bias. We consider stable direction recovery in single-index models in which we assume only that the response \( Y \) is conditionally independent of the diverging-dimensional predictors \( X \) given \( \beta_0^{T} X \), where \( \beta_0 \) is a \( p_n \times 1 \) vector and \( p_n \to \infty \) as the sample size \( n \to \infty \). We first explore sufficient conditions under which the least squares estimator \( \beta_{n0} \) recovers the direction \( \beta_0 \) consistently even when \( p_n = o(\sqrt{n}) \). To enhance model interpretability by excluding irrelevant predictors, we suggest an \( \ell_1 \)-regularization algorithm with a quadratic constraint on the magnitude of the least squares residuals to search for a sparse estimator of \( \beta_0 \). Not only does the \( \ell_1 \)-regularized solution \( \beta_n \) recover \( \beta_0 \) consistently, it also produces sufficiently sparse estimators, which enable us to select “important” predictors and facilitate model interpretation while maintaining prediction accuracy. Simulations and an application to car price data suggest that the proposed estimation procedures have good finite-sample performance and are computationally efficient.
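The two steps described above can be illustrated with a small simulation. This is a minimal sketch under stated assumptions, not the authors' procedure: the predictors are Gaussian (so \( E(X \mid \beta_0^{T} X) \) is linear in \( \beta_0^{T} X \), the setting in which least squares is proportional to \( \beta_0 \)), the cubic link is hypothetical, and the \( \ell_1 \) step is swapped for its Lagrangian (Lasso) form solved by ISTA. For a suitable \( \lambda \), this penalized problem shares solutions with a constrained problem of the form \( \min \|b\|_1 \) subject to \( \|y - Xb\|_2 \le \varepsilon \). The helper names `simulate`, `ols_direction`, `lasso_ista`, `cosine` and the tuning value `lam=0.25` are illustrative choices.

```python
import numpy as np


def simulate(n, p, beta0, rng):
    """Draw (X, y) from a hypothetical single-index model y = g(beta0^T X) + noise."""
    X = rng.standard_normal((n, p))  # Gaussian X: E(X | beta0^T X) is linear in beta0^T X
    index = X @ beta0
    y = index + 0.5 * index**3 + 0.5 * rng.standard_normal(n)  # hypothetical monotone link g
    return X, y


def ols_direction(X, y):
    """Least squares slope on centered data; estimates beta0 only up to scale."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    b, *_ = np.linalg.lstsq(Xc, yc, rcond=None)
    return b


def soft_threshold(z, t):
    """Elementwise soft-thresholding operator, the proximal map of t * ||.||_1."""
    return np.sign(z) * np.maximum(np.abs(z) - t, 0.0)


def lasso_ista(X, y, lam, n_iter=1000):
    """ISTA for min_b (1/(2n)) ||yc - Xc b||_2^2 + lam * ||b||_1 (Lagrangian stand-in)."""
    n, p = X.shape
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    step = n / np.linalg.norm(Xc, 2) ** 2  # 1 / Lipschitz constant of the gradient
    b = np.zeros(p)
    for _ in range(n_iter):
        grad = Xc.T @ (Xc @ b - yc) / n
        b = soft_threshold(b - step * grad, step * lam)  # thresholding yields exact zeros
    return b


def cosine(a, b):
    """|cos| of the angle between two vectors; sign and scale are not identified."""
    return abs(a @ b) / (np.linalg.norm(a) * np.linalg.norm(b))


rng = np.random.default_rng(0)
n, p = 2000, 50
beta0 = np.zeros(p)
beta0[:3] = 1.0
beta0 /= np.linalg.norm(beta0)  # only the first 3 predictors are relevant

X, y = simulate(n, p, beta0, rng)
b_ols = ols_direction(X, y)
b_l1 = lasso_ista(X, y, lam=0.25)

print(f"|cos(b_ols, beta0)| = {cosine(b_ols, beta0):.3f}")
print(f"|cos(b_l1, beta0)|  = {cosine(b_l1, beta0):.3f}")
print("selected predictors:", np.flatnonzero(b_l1))
```

Because only the direction of \( \beta_0 \) is identified, both estimates are compared with \( \beta_0 \) through the absolute cosine rather than coordinate by coordinate. Least squares would not recover the direction for a link with \( \operatorname{Cov}(\beta_0^{T} X, Y) = 0 \), such as the symmetric link \( Y = (\beta_0^{T} X)^2 \).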




About this article

Cite this article

Zhu, L., Zhu, L. Stable direction recovery in single-index models with a diverging number of predictors. Sci. China Math. 53, 1817–1826 (2010). https://doi.org/10.1007/s11425-010-4026-3


DOI: https://doi.org/10.1007/s11425-010-4026-3

Keywords

- ℓ1-minimization

- diverging parameters

- inverse regression

- restricted orthonormality

- sparsity

- sufficient dimension reduction