Abstract

Gathering information from students’ answers to open-ended questions helps to assess the quality of teachers’ practices and its relations with students’ motivation. The present study aimed to use sentiment analysis, an artificial intelligence-based tool, to examine students’ responses to open-ended questions about their teacher’s communication. Using the obtained sentiment scores, we studied the effect of teachers engaging messages on students’ sentiment. Subsequently, we analysed the mediating role of this sentiment on the relation between teachers’ messages and students’ motivation to learn. Results showed that the higher the students’ perceived use of engaging messages, the more positive their sentiments towards their teacher’s communication. This is an important issue for future research as it shows the usefulness of sentiment analysis for studying teachers’ verbal behaviours. Findings also showed that sentiment partially mediates the effect of teachers engaging messages on students’ motivation to learn. This research paves the way for using sentiment analysis to better study the relations of teachers’ behaviours, students’ sentiments and opinions, and their outcomes.

Introduction

Teachers can engage in a variety of classroom practices to improve the quality of their teaching and have a positive impact on students’ learning and performance (Smith & Baik, 2021). Among these practices there is evidence that communication during class plays a key role, as it influences students’ well-being, behaviour, engagement, academic performance, and motivation (Caldarella et al., 2020; Chickering & Gamson, 1987; Hattie, 2008; Putwain & Best, 2011; Putwain & Remedios, 2014; Putwain & Roberts, 2009; Ramsden, 2003). To date, several studies have investigated dialogue, rules, feedback, and teacher’s questions to students (Brooks et al., 2019; Howe & Abedin, 2013; Lipnevich & Panadero, 2021). Recently, researchers have shown increased interest in the effect of teachers’ messages on students’ outcomes (Buma & Nyamupangedengu, 2020; Floress et al., 2018; Putwain et al., 2021). Evidence suggests that these messages influence students’ academic performance, motivation to learn and anxiety (Belcher et al., 2021; Nicholson et al., 2019; Santana-Monagas, Putwain, et al., 2022). Consequently, the study of teachers’ messages is a growing concern, as improving teachers’ use of them could lead to learners’ positive outcomes (Gregory et al., 2017).

Studying the mediators that explain the relation between variables allows researchers to focus on the transmission of effects, to find causal relationships (understood as relationships in which a change in the independent variable causes a change in the dependent variable), and to conduct more effective interventions (Hamaker et al., 2020; Kazdin, 2007; Preacher & Kelley, 2011; VanderWeele, 2015). Although several investigations have been carried out on the association between teachers’ practices, students’ perceptions of those practices, and different student outcomes (Adediwura & Tayo, 2007; De Meyer et al., 2014; Geier, 2022; Haerens et al., 2015), much less is known about which variables mediate these relations. Previous studies have established that one of the factors that students focus on most when assessing and expressing their sentiments and opinions towards teachers’ practices is communication (Catano & Harvey, 2011). In turn, students’ sentiments and opinions about their learning experience have been found to be related to their motivation (Hasan et al., 2013; Shen et al., 2009). Taken together, these studies support the hypothesis that students’ sentiments about teachers’ communication may mediate the effect that teachers’ messages have on students’ motivation.

Research on the subject has been mostly restricted to self-reported measures (Nicholson et al., 2019; Putwain & Remedios, 2014; Santana-Monagas et al., 2023; Santana–Monagas, Núñez, SantanaMonagas et al., 2022a, b). However, gathering information from students’ answers to open-ended questions helps to assess the quality of teachers’ practices and students’ motivation, and even to establish causality (Maxwell, 2012; Stupans et al., 2016). Thanks to advances in natural language processing (Hirschberg & Manning, 2015), a considerable amount of literature has been published on the use of sentiment analysis to examine students’ feedback. This artificial intelligence-based tool has been mainly used to assess learners’ satisfaction with teachers or content in massive open online courses (Zhou et al., 2020). Nevertheless, few studies have analysed the relations between sentiment, defined in this study as the positive, negative, or neutral opinion of the students, and other variables (Nimala & Jebakumar, 2021). The empirical work presented here is one of the first to explore the mediating role of students’ sentiments about their teachers’ communication using open-ended questions coded with sentiment analysis.

This study aims to contribute to this growing area of research through the following two objectives: (1) using sentiment analysis on students’ responses to an open-ended question to analyse whether teachers’ messages affect students’ sentiment; and (2) testing the mediating role of sentiment in the effect of teachers engaging messages on students’ motivation to learn. The first subsections of this paper provide information about teachers engaging messages and students’ motivation to learn; a more in-depth conceptualisation of sentiment analysis and its use in the educational field; how to study the relations between these variables; and the research questions of the study.

Teachers engaging messages: the link to students’ motivation to learn

‘If you work hard, you will feel fulfilled’, ‘Unless you work hard, you will be disappointed with yourself’. Those are some examples of teachers engaging messages (TEM). TEM push pupils to engage in school tasks (Santana–Monagas, Putwain, et al., 2022), rather than posing questions that facilitate learning, giving them information about how they performed on a task, or instructing them. They are characterised by focusing on the positive consequences and highlighting the benefits of engaging in a task or warning the students if the task is not carried out (Rothman & Salovey, 1997). In addition, these messages also focus on different types of learners’ motivation (Santana-Monagas, Núñez, et al., 2022). Teachers can appeal to external forms of motivation like rewards and punishments (i.e., extrinsic motivation) or feelings (i.e., introjected motivation), or to internal forms like the value of studies (i.e., identified motivation) or the pleasure of engaging (i.e., intrinsic motivation). Therefore, TEM can be contextualised following two theories: Message Framing Theory (MFT; Rothman & Salovey 1997) and Self-Determination Theory (SDT; Deci & Ryan 2016; Ryan & Deci, 2000, 2020).

Research on teachers’ messages based on these theories found that messages focussed on warning the students can have positive effects on students’ anxiety, behavioural engagement, and performance (Putwain et al., 2019, 2021; Putwain & Symes, 2011). Moreover, research has found that students who are internally motivated are more engaged, perform better, and acquire higher-quality learning (Taylor et al., 2014).

Prior research has established that teachers’ messages are related to students’ motivation to learn (MTL; Collie et al., 2019; Vansteenkiste et al., 2012). Students’ MTL is a complex psychological construct that refers to the desire, drive, and persistence to engage in learning activities (Núñez et al., 2005; Vallerand et al., 1992). One perspective that has been used to study MTL is also SDT, which suggests that students’ MTL can be classified as intrinsic (i.e., sign of competence and self-determination), extrinsic (i.e., participation in an activity to obtain rewards or avoid punishments), or amotivated (i.e., lack of interest or engagement), depending on the level of self-determination and autonomy involved.

Teachers can use different messages to appeal to and influence students’ MTL, for example, when they ask students to study to make their parents proud, appealing to an introjected motivation (Deci & Ryan, 2016). Up to now, studies that explored the impact of TEM on students’ MTL have found that TEM predicted students’ MTL, and this, in turn, predicted students’ performance (Santana-Monagas, Putwain, et al., 2022). These findings made an important contribution to establishing the importance of teachers’ messages, as they impact motivation, which plays a fundamental role in students’ lives (Ryan & Deci, 2017). When students are motivated, they do not only change their behaviour, but there are also benefits in other essential aspects of their lives (Behzadnia et al., 2018; Liu et al., 2017; Marshik et al., 2017; Oostdam et al., 2019). Consequently, improving students’ MTL through effective interventions focused on enhancing the use of TEM is a potential need that could be realised.

To design effective interventions, it is important to detect the variables that mediate the relations between the independent and the dependent variables. Knowledge of mediators helps to achieve the expected results once the causes are modified, especially when contexts vary (Preacher & Kelley, 2011). When it comes to selecting the mediating variables, Hamaker et al. (2020) recommend a theory-based approach. We have therefore decided to rely on the aforementioned MFT and SDT, since they indicate that students’ motivation depends on how they feel, which is also determined by their environment.

Several studies have shown associations between teachers’ practices, students’ perceptions and opinions of these practices, and different outcomes (Adediwura & Tayo, 2007; Behzadnia et al., 2018; De Meyer et al., 2014; Haerens et al., 2015), yet little attention has been paid to the mediating role that one of them may be playing. Several studies have shown that teachers’ communication is related to students’ satisfaction and sentiments (Dhillon & Kaur, 2021; Goodboy et al., 2009). In an analysis to determine which teachers’ dimensions are most highly valued by students, Catano and Harvey (2011) found that communication is one of the competences that students focus on the most. In turn, research on students’ motivation shows the impact of their satisfaction and sentiments towards the teacher and the learning experience (Baños et al., 2017; Shen et al., 2009). For instance, the study conducted by Hasan et al. (2013) concluded that students’ satisfaction with teachers’ performance was among the strongest predictors of students’ motivation. The evidence presented in this section suggests that students’ sentiment about how their teachers communicate could be an intervening variable that may account for the relation between TEM and students’ MTL.

Previous research measuring these variables is limited to the use of self-reports as the method of data collection. Questionnaires allow for quick and accessible measurements (Robins et al., 2007), but they have limitations or may be biased (Álvarez–Álvarez et al., 2019; Putwain & Roberts, 2009). Likert scale questions are restricted to a predefined set of options, which may not fully capture the range of students’ opinions and sentiments (Bielick, 2017; Joshi et al., 2015). For example, students may not fully agree or disagree with a statement, but instead have a more nuanced opinion that cannot be captured by a simple one-to-five or one-to-seven response option. Recently, a considerable amount of literature has emerged around the use of mixed methods of data collection because they allow researchers to gain a greater understanding of the problem studied (Greene, 2005; Molina–Azorin, 2016). However, much of the research carried out until now has been limited by the complexity of analysing and coding the data collected through open methods (Rodgers & Cowles, 1993; Walker, 1989).

Nowadays, advances in the field of natural language processing have made it possible to easily and reliably carry out the analysis and coding of the information gathered (Hirschberg & Manning, 2015). One example of these advances is sentiment analysis, a tool widely used to study satisfaction and opinions through the analysis of responses to open-ended questions or comments (Feldman, 2013). Sentiment analysis enables the analysis of a vast amount of text data from answers to open-ended questions, which allows for a more detailed and nuanced understanding of students’ opinions and sentiments. Additionally, the analysis of a large amount of data allows the identification of patterns and trends that are not easily apparent through manual analysis, which can provide insights that would have been missed with traditional methods. Prior studies have already used this tool for students’ evaluation of teaching, proving its usefulness (Rybinski & Kopciuszewska, 2021). This study follows this approach and uses sentiment analysis to obtain information about students’ opinions and sentiments about their teachers’ communication.

The potential of sentiment analysis in education

Sentiment analysis (SA) is an artificial intelligence-based tool used to extract sentiment, defined as the positive, negative, or neutral emotional state or opinion that a person expresses towards a particular subject, from large amounts of text (Rani & Kumar, 2017). It is already integrated into many applications such as chatbots or transcription services (Solangi et al., 2018). SA is also widely used to monitor users’ opinions and sentiments about products, as it allows companies to select which products are worth investing in (Feldman, 2013). There are three main methods for using SA: creating and training your own model (Kang et al., 2018), fine-tuning a pre-trained one (Rybinski & Kopciuszewska, 2021), or using a non-fine-tuned pre-trained model (Andersson et al., 2018). The methods differ in the time required for creation and training and in their reliability (Kagklis et al., 2015). The first two lead to reliable results in terms of Cohen’s Kappa, Fleiss’ Kappa, and average pairwise percent agreement (Lin et al., 2019). Unfortunately, these methods come with some drawbacks: part of the data is used to train the model and then cannot be used in analyses; results are not replicable; and they require time to be created and trained. Although smaller reliability values are obtained, non-fine-tuned pre-trained models can also be used. For instance, Andersson et al. (2018) used this type of model to compare the average hours of study outside the class with the sentiment of student feedback, finding a moderate negative correlation between them. They concluded that, although it would have been more reliable to train a model, this method was less time-consuming. Therefore, the most useful option for applied researchers interested in the analysis of relations between sentiment and other variables seems to be non-fine-tuned pre-trained models.
Additionally, when analysing the sentiment polarity, results can be the sentiment labels (positive, negative, or neutral), or the score of belonging to each sentiment (Hujala et al., 2020; Nimala & Jebakumar, 2021). Based on the aim of this study, analysing sentiment polarity scores of students’ responses can be a good way to examine their sentiments and their relations with other variables.

So far, several studies have investigated the use of SA in education. They have mainly dealt with students’ evaluation of teaching in higher education and massive open online courses (Geng et al., 2020; Rybinski & Kopciuszewska, 2021; Zhou et al., 2020). Prior research suggests that information obtained with SA is helpful in examining the impression of the courses (Cunningham-Nelson et al., 2019), improving the courses (Leong et al., 2012), and evaluating the teachers (Pong-inwong & Songpan, 2019). Nevertheless, few studies have ventured to use SA as a method of data collection when the goal is to test an explanatory model of achievement and performance (Nimala & Jebakumar, 2021). Among these, Liu et al. (2018) found relations between the positive sentiments extracted from students’ feedback and their academic performance in online courses. As noted by Burić et al. (2016), it is necessary to test explanatory models, as the results could be used to explain the behaviour of outstanding teachers (Tseng et al., 2018). The evidence reviewed here seems to suggest the pertinence of using SA to analyse students’ sentiments on their teachers’ communication using an open-ended question. By doing so, we can test whether teachers’ messages influence students’ sentiment, and then examine the mediating effect of the sentiment polarity scores on the impact of TEM on students’ MTL. Following the advice of Zhou et al. (2020), this work will provide new insights into the relations between sentiment, teacher behaviours and students’ outcomes.

TEM, students’ sentiment, and MTL: a multilevel analysis

The type of design and data analysis must be considered when trying to understand the effect of TEM on students’ MTL and the mediating role of the students’ sentiment. A teacher may use different messages with each student, so each learner may report differently on the engaging messages used by their teacher. However, this variable does not assess a characteristic of the student but that of the teacher. For studying these kinds of variables, it is necessary to follow a multilevel approach, in which a variable can be situated at two levels (Marsh et al., 2012; Morin et al., 2014). At the teachers’ level (L2) we would find the overall tendency of teachers’ use of TEM, which would allow us to test whether TEM affect the average classroom sentiment. At the students’ level (L1) we would find TEM, students’ sentiments and their MTL, thus enabling us to examine the mediating role of sentiments in the impact of TEM on MTL. Consequently, the methodological approach adopted in this study is a multilevel analysis, since this is the most appropriate way to analyse the data.
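The two-level idea can be illustrated with a minimal Python sketch that splits each student rating into a teacher-level component (the classroom mean) and a student-level deviation from it. This is a simplification that uses observed means, whereas the study relies on a multilevel structural equation model; the function name `split_levels`, the teacher identifiers, and the ratings are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def split_levels(ratings):
    """Split (teacher_id, rating) pairs into teacher means and student deviations."""
    by_teacher = defaultdict(list)
    for teacher_id, rating in ratings:
        by_teacher[teacher_id].append(rating)

    # L2: one aggregated value per teacher (the classroom mean).
    teacher_means = {t: mean(values) for t, values in by_teacher.items()}

    # L1: each student's deviation from their own teacher's mean.
    deviations = [(t, r - teacher_means[t]) for t, r in ratings]
    return teacher_means, deviations


# Hypothetical TEM ratings on the 1-7 scale for two teachers.
ratings = [("t1", 6), ("t1", 4), ("t2", 3), ("t2", 1)]
teacher_means, deviations = split_levels(ratings)
print(teacher_means)
print(deviations)
```

Separating the two components in this way is what allows a teacher-level question (does TEM use relate to average classroom sentiment?) to be kept apart from a student-level one (does a student's own perception relate to their own sentiment?).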

Research questions

This research proposed the following research questions:

- RQ1: Is sentiment analysis a useful tool for assessing teachers’ verbal behaviours in the educational context?
- RQ2: Do teachers engaging messages affect students’ sentiment about their teachers’ communication?
- RQ3: Does students’ sentiment mediate the effect of teachers engaging messages on students’ motivation to learn?

Materials and methods

Participants

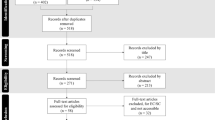

A total of 39 teachers (22 females and 17 males; mean age = 45.98, SD = 7.99) and their 963 students (468 females, 494 males and 1 unspecified; mean age = 16.39, SD = 1.27) participated in the study. They belonged to 16 secondary schools in both urban and rural settings of Gran Canaria, Tenerife, and Santander (Spain). To reduce potential bias, all participating teachers taught mathematics, and all students attended the same number of hours per week.

Procedure

Data collection took place in the first and second trimesters of the school year. Although the results pertain to data from the second term, measures of students’ MTL from the first term were taken to control for their MTL in the second term. The aims of the study were explained to teachers and students, emphasising that their participation was voluntary and confidential. Variables were evaluated by using two questionnaires provided through Google Forms and conducted in the classroom under the teacher’s supervision.

Instruments

Teachers engaging messages

Teachers engaging messages were assessed through the Teachers’ Engaging Messages Scale (Santana-Monagas, Putwain, et al., 2022; Appendix A). The scale contains a total of 36 items preceded by the phrase ‘My teacher tells me that…’. An example of an item to which students were asked to respond was, ‘My teacher tells me that…If I work hard, I will feel important’. Using a 7-point Likert scale, students were asked to report on their teacher’s use of TEM. A 7 indicates that students strongly agree with the fact that their teacher uses a considerable amount of these messages, while a 1 indicates the opposite. In this study, we only selected the items from the subscale of introjected messages focused on the benefits of engaging in tasks. McDonald’s Omega was used to examine the reliability of the instrument; it is more accurate than Cronbach’s alpha (McNeish, 2018). McDonald’s Omega was estimated using factor loadings from a congeneric CFA for each variable. The reliability and validity of this scale have been confirmed, with values of McDonald’s Omega above 0.81 for each factor. In the present study, McDonald’s Omega was 0.91 for the factor analysed.
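As an illustration of how McDonald’s Omega can be obtained from factor loadings, the minimal Python sketch below applies the usual formula for a one-factor congeneric model, assuming standardised loadings and uncorrelated errors. The function name `mcdonald_omega` and the loadings are hypothetical and are not the values estimated in this study.

```python
def mcdonald_omega(loadings):
    """Omega for a one-factor model with standardised loadings and uncorrelated errors."""
    # Variance explained by the common factor: squared sum of the loadings.
    common = sum(loadings) ** 2
    # With standardised loadings, each item's error variance is 1 - loading^2.
    error = sum(1 - loading ** 2 for loading in loadings)
    return common / (common + error)


# Hypothetical loadings for a four-item subscale.
print(round(mcdonald_omega([0.8, 0.8, 0.8, 0.8]), 3))
```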

Students’ motivation to learn

Motivation to learn was measured using the Spanish version of the Échelle de Motivation en Éducation (Núñez et al., 2005). This scale consists of 20 items, beginning with the question, ‘Why do you study?’, followed by a series of statements such as ‘Because it will make me feel important’ or ‘To prove to me that I am an intelligent person’. The items were measured through a seven-point Likert scale ranging from 1 (absolutely not true) to 7 (absolutely true). We selected the items from the subscale of introjected motivation. McDonald’s Omega was also used to examine the reliability of the instrument, and it was estimated using factor loadings from a congeneric CFA for each variable. In this case, McDonald’s Omega was 0.89 for the first term items and 0.88 for the second term items.

Students’ sentiment

Following previous studies that ask questions to examine specific elements with SA (Hynninen et al., 2019), and that have provided guidance on crafting effective open-ended questions (Bielick, 2017; Shilo, 2015), we took great care in creating an open-ended question that was not ambiguous and that minimised the chance that students would give a Yes/No or very brief answer. The question, which was asked at the beginning of the Teachers’ Engaging Messages Scale, was: ‘If you had to tell a classmate how your teacher talks to you, what would you say?’. To ensure the question was suitably framed, we also consulted with experts in the field and considered the potential sources of bias in the question (lack of specificity, social desirability bias, etc.).

We decided to use the pre-trained model provided by Microsoft (2022) to perform sentiment analysis in our study. The model uses a combination of n-gram and word-embedding features to classify text data. It has been pre-trained on a large dataset of text data, and it uses natural language processing techniques, such as tokenization, to extract features from text data. Tokenization breaks the text down into smaller units called tokens; the model then uses mathematical algorithms to understand the context and meaning of the text and classify it into different sentiments.
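To make the notions of tokens and n-grams concrete, the following minimal Python sketch splits a response into lower-cased word tokens and builds bigrams from them. It is only an illustration of the general technique; the internal pipeline of the Microsoft model is not described in this study, and the functions `tokenize` and `ngrams` are hypothetical.

```python
import re


def tokenize(text):
    """Lower-case the text and split it into word tokens."""
    return re.findall(r"\w+", text.lower())


def ngrams(tokens, n):
    """Return all runs of n consecutive tokens."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


tokens = tokenize("My teacher talks nicely")
print(tokens)
print(ngrams(tokens, 2))
```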

To use the service, we created an executable file using the API key provided by Microsoft. The input was an Excel worksheet with one column containing all the students’ answers to the open-ended question. After analysing the data, the service returned another Excel worksheet with the original column plus a sentiment label (i.e., positive, negative, or neutral) and a numeric sentiment score between 0 and 1, where scores closer to 1 represent highly positive comments and scores closer to 0 represent highly negative comments. We analysed a total of 6072 words at no cost, because this amount of data is small enough that Microsoft does not charge for it. The analysis took approximately 10 minutes, which is much less time-consuming than coding the data by hand.
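The shape of this workflow (one column of answers in, the same column plus a label and a score out) can be sketched in Python as follows. This is not the executable used in the study: the `fake_analyse` function is a stand-in for the call to the sentiment service, CSV is used instead of Excel to keep the sketch dependency-free, and all names and example answers are hypothetical.

```python
import csv
import io


def annotate(answers, analyse):
    """Return one row per answer: the text, its sentiment label, and its score."""
    rows = []
    for answer in answers:
        label, score = analyse(answer)
        rows.append({"answer": answer, "label": label, "score": score})
    return rows


def to_csv(rows):
    """Serialise the annotated rows as CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["answer", "label", "score"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def fake_analyse(answer):
    """Stand-in for the sentiment service; a real run would call the API here."""
    return ("positive", 0.9) if "well" in answer else ("negative", 0.1)


rows = annotate(["She explains well", "He shouts"], fake_analyse)
print(to_csv(rows))
```

Passing the analysis step in as a function keeps the input and output handling independent of whichever sentiment model is used.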

Once the data were analysed, it was first necessary to test the reliability of the SA model. To determine it, two researchers independently classified the messages according to the sentiment label. We then compared their results with those of the Microsoft model and examined the inter-annotator agreement by calculating the average pairwise percent agreement, Fleiss’ Kappa, and Cohen’s Kappa coefficient using ReCal3: Reliability Calculator for 3 or more annotators (Freelon, 2010). Results showed an average pairwise percent agreement of 80%, which is quite satisfactory. The average pairwise Cohen’s Kappa was 0.51 and Fleiss’ Kappa was 0.50, values that indicate moderate agreement (Fleiss, 1971; Landis & Koch, 1977).
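For readers who wish to reproduce this kind of reliability check without ReCal3, the three coefficients can be computed directly from the annotators’ labels. The Python sketch below uses the standard definitions of percent agreement, Cohen’s Kappa, and Fleiss’ Kappa; the function names and the example labels are hypothetical and do not correspond to the study data.

```python
from collections import Counter
from itertools import combinations


def percent_agreement(a, b):
    """Share of items on which two annotators chose the same label."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


def cohen_kappa(a, b):
    """Chance-corrected agreement between two annotators."""
    n = len(a)
    p_o = percent_agreement(a, b)
    count_a, count_b = Counter(a), Counter(b)
    # Expected agreement if both annotators labelled independently.
    p_e = sum((count_a[c] / n) * (count_b[c] / n) for c in set(a) | set(b))
    return (p_o - p_e) / (1 - p_e)


def fleiss_kappa(annotations):
    """Chance-corrected agreement among several annotators (one label list each)."""
    n_raters = len(annotations)
    items = list(zip(*annotations))
    totals = Counter()
    p_bar = 0.0
    for item in items:
        counts = Counter(item)
        totals.update(counts)
        # Proportion of agreeing annotator pairs for this item.
        p_bar += (sum(c * c for c in counts.values()) - n_raters) / (n_raters * (n_raters - 1))
    p_bar /= len(items)
    p_e = sum((t / (len(items) * n_raters)) ** 2 for t in totals.values())
    return (p_bar - p_e) / (1 - p_e)


def average_pairwise(annotations, metric):
    """Average a two-annotator metric over every pair of annotators."""
    pairs = list(combinations(annotations, 2))
    return sum(metric(a, b) for a, b in pairs) / len(pairs)


# Hypothetical labels from two researchers and the model.
researcher_1 = ["pos", "pos", "neg", "neu", "pos", "neg"]
researcher_2 = ["pos", "neu", "neg", "neu", "pos", "neg"]
model = ["pos", "pos", "neg", "pos", "pos", "neu"]
annotations = [researcher_1, researcher_2, model]
print(average_pairwise(annotations, percent_agreement))
print(average_pairwise(annotations, cohen_kappa))
print(fleiss_kappa(annotations))
```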

Data analysis

All data analyses were conducted with Mplus 8.6 (Muthén & Muthén, 2021). Means, standard deviations, and correlations among variables were computed before the statistical models were analysed.

To determine the relations between variables at the two different levels, we used a multilevel structural equation model (ML-SEM) approach (Morin et al., 2014). This model helps us control for measurement error at the students’ and teachers’ levels and for sampling error by aggregating individual students’ responses to represent teacher-level constructs. As previously mentioned, when performing an ML-SEM, students’ responses to teacher-related questions can be aggregated to serve as a measure of the teachers’ tendency. As evidence that a variable pertains to the teachers’ level, we expect students’ responses about one teacher to be similar. Following the recommendations of Lüdtke et al. (2009), we decided to use the intraclass correlation coefficient (ICC) to quantify the similarity observed across student ratings of TEM and sentiment. The multilevel analysis was carried out using the following variables: TEM, students’ sentiment, and students’ MTL at students’ level (L1); and TEM and students’ sentiments at teachers’ level (L2).
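As a rough illustration of what the ICC captures, the Python sketch below computes ICC(1) from a one-way analysis of variance, that is, the share of rating variance attributable to differences between teachers. This ANOVA-based estimator is a swapped-in simplification (the study obtained its values from Mplus), it assumes that every teacher has the same number of students, and the function name `icc1` and the ratings are hypothetical.

```python
def icc1(groups):
    """ICC(1) from a one-way ANOVA; assumes every group has the same size."""
    k = len(groups[0])  # students per teacher
    n_groups = len(groups)
    grand_mean = sum(sum(g) for g in groups) / (n_groups * k)
    group_means = [sum(g) / k for g in groups]
    # Mean square between teachers and mean square within teachers.
    ms_between = k * sum((m - grand_mean) ** 2 for m in group_means) / (n_groups - 1)
    ms_within = sum(
        (x - m) ** 2 for g, m in zip(groups, group_means) for x in g
    ) / (n_groups * (k - 1))
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)


# Hypothetical ratings: two teachers with two students each.
print(icc1([[1, 2], [3, 4]]))
```

Values close to 1 mean that students of the same teacher answer alike, while values near 0 mean that most of the variance lies between students within a classroom.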

We conducted an analysis of mediation at L1 to test if students’ sentiments mediated the relation between TEM and students’ MTL. To establish whether the mediation effect was full or partial (Morin et al., 2014), we tested two alternative models. L2 of both models was the same: TEM predicted students’ sentiments about their teacher’s communication. At level 1, when introducing the variable students’ MTL, relations changed between the models. In the first one (Fig. 1), TEM effects on students’ MTL were postulated to be fully mediated by the students’ sentiment. In the second model (Fig. 2), these effects were partially mediated by the student’s sentiment.

To search for evidence of mediation, we compared both models using a χ² test and fit indices (Morin et al., 2014). If there were no differences between the models, we would retain the most parsimonious one. Finally, we calculated the indirect effect and its standard error using the delta method (Sobel, 1982).
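The first-order delta method for an indirect effect can be sketched in Python as follows: the indirect effect is the product of the two paths, and its standard error combines the standard errors of both. The function name `sobel` and the input values are hypothetical; they are not the path estimates of this study, and the sketch assumes a normal reference distribution for the test statistic.

```python
import math


def sobel(a, se_a, b, se_b):
    """Indirect effect a*b with its first-order delta-method (Sobel) standard error."""
    indirect = a * b
    se = math.sqrt(a ** 2 * se_b ** 2 + b ** 2 * se_a ** 2)
    z = indirect / se
    # Two-sided p-value under a standard normal distribution.
    p = math.erfc(abs(z) / math.sqrt(2))
    return indirect, se, z, p


# Hypothetical paths: predictor -> mediator (a) and mediator -> outcome (b).
indirect, se, z, p = sobel(a=0.5, se_a=0.1, b=0.4, se_b=0.2)
print(indirect, se, z, p)
```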

Results

Table 1 shows relevant examples of the results obtained after analysing the students’ answers with sentiment analysis:

Answers 1 and 6 are elaborated and well classified. In answer 2, although there is a spelling mistake, the model could successfully classify it. Responses 3 and 7 are examples of sentences that convey two things in the same message, which can be well appreciated in the score of number 3 (neutral score). Answers 4 and 5 are poorly elaborated feedback, which could sometimes be misinterpreted (9.42%). Finally, response 8 denotes other factors of teachers’ communication not covered in this study (4.90%).

Preliminary analyses

Descriptive statistics (mean, SD, and ICC) of TEM, students’ sentiment, and students’ MTL are represented in Table 2. ICC values observed for the sentiment (0.07) and the teachers’ engaging messages (0.06) were acceptable (Marsh et al., 2008).

Bivariate correlations between sentiment, TEM, and students’ MTL are displayed in Table 3. All variables were positively and significantly correlated at level 1 (below the diagonal). However, at level 2 (above the diagonal), only sentiment and TEM, and these with students’ MTL, were significantly correlated, showing a positive correlation.

Multilevel mediation analysis

Fit indices comparison of the full and partial mediation models is displayed in the table below (Table 4).

Comparison of these two models in terms of fit favours the partial mediation model in all respects. Adding the direct path resulted in a decrease in information criteria values: AIC (Akaike, 1974) and BIC (Schwarz, 1978) were lower in the partial mediation model (4295.33 and 4339.18) than in the full mediation model (4353.97 and 4393.44). The RMSEA value of the full mediation model (0.25) indicates a poor fit, as it is higher than 0.10 (Browne & Cudeck, 1992), while the partial mediation model takes a value of 0.10, which indicates a much better fit. For CFI and TLI, the full mediation model has values too low to be considered good or acceptable on either index, while the partial mediation model has values considered good on both, as they are above 0.90 (Bentler & Bonett, 1980; Schreiber et al., 2006). We observed that the SRMR at the students’ level of the full mediation model is higher than 0.08. In contrast, in the partial mediation model, the SRMR takes values below 0.08 at both the students’ and teachers’ levels, indicating an approximate fit (Asparouhov & Muthén, 2018). These results suggest that the best fitting ML-SEM model is the partial mediation model, as it provided an adequate representation of relations among the variables (Morin et al., 2014).
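The size of the difference in information criteria can be made explicit with a short Python calculation using the AIC and BIC values reported above; lower values indicate the preferred model. The dictionary layout and variable names are illustrative only.

```python
# AIC and BIC values as reported in the text for the two models.
fit = {
    "full": {"AIC": 4353.97, "BIC": 4393.44},
    "partial": {"AIC": 4295.33, "BIC": 4339.18},
}

# Positive differences mean the partial mediation model has the lower value.
deltas = {
    criterion: round(fit["full"][criterion] - fit["partial"][criterion], 2)
    for criterion in ("AIC", "BIC")
}
print(deltas)  # {'AIC': 58.64, 'BIC': 54.26}
```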

After comparing fit indices of both models and observing that the partial mediation model fitted better, its results were examined (Table 5).

As shown in Table 5, all hypothesised relations (see Fig. 2) were significant at both levels 1 and 2. In general terms, it can be observed that TEM predict students’ sentiment well, especially at level 2, where β takes a value of 0.95. At level 1, all relations were also positive, with the strongest relationship between TEM and students’ MTL (β = 0.28). The standardised indirect effect between TEM and students’ MTL was significantly different from 0 (β = 0.012; S.E. = 0.006; p = .041).

Discussion

The present study aimed to prove that sentiment analysis is a useful tool for assessing teachers’ verbal behaviour in the educational context (RQ1), and to use it for studying the relations between students’ sentiments and teachers engaging messages (RQ2) as well as the mediating role of students’ sentiment in the effect of TEM on students’ motivation to learn (RQ3). Firstly, the reliability of the model used was analysed and it was found to be moderately good. With respect to the first research question, we have provided evidence of the utility of the SA to measure students’ sentiments about their teacher’s communication. Specifically, we found that SA can accurately capture the positive, negative, and neutral opinions of students about their teacher’s communication, and this information can be used to improve the quality of teachers’ practices and enhance students’ motivation to learn.

Regarding RQ2 and RQ3, it was discovered that when teachers rely on more engaging messages, students manifest more positive sentiments when asked about their teacher’s communication. In addition, we found that sentiment partially mediates the effect of TEM on students’ MTL. The following sections will discuss these results.

The role of students’ sentiment in engaging messages and motivation to learn

An initial objective of the study was to examine the relations of TEM, students’ sentiment, and students’ MTL, for which we compared two models (Morin et al., 2014). Answering the RQ2, model results showed that student sentiment is strongly influenced by teachers’ use of TEM (β = 0.95). We found that the higher the students’ perceived use of TEM, the more positive their sentiments towards their teacher’s communication. This finding broadly supports the work of prior studies in SA linking students’ sentiment with students’ evaluation of teachers’ performance and behaviours (Nimala & Jebakumar, 2021; Pong-inwong & Songpan, 2019; Sindhu et al., 2019; Tseng et al., 2018). Our findings support the idea that valuable information can also be gathered from students’ feedback from open-ended questions to gain insights into teacher behaviours and performance using AI-based tools.

RQ3 asked whether students’ sentiment mediates the effect of TEM on students’ MTL. Results indicated that the best-fitting model was the one in which sentiment acted as a partial mediator (Fig. 2). Both paths, from TEM to students’ sentiment and from sentiment to students’ MTL, were in line with our hypotheses, providing evidence of mediation. However, because the direct path from TEM to students’ MTL remained strong, we cannot rule out other possible mediators; our results therefore provide evidence of partial rather than total mediation (MacKinnon et al., 2007). This finding was unexpected and suggests that sentiment is not the only target to consider when developing interventions that seek to improve students’ MTL. Indeed, it reinforces the idea that motivation is a complex psychological construct that can have a variety of causes (Judd & Kenny, 1981). The findings of this study are nevertheless significant in at least two major respects: we have provided evidence of the utility of SA for measuring students’ sentiments about their teacher’s communication, and we have shown that this variable is relevant to the study of relations between teacher and student variables.
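The logic of partial mediation can be illustrated with a minimal single-level sketch. It is not the model estimated in this study: it uses ordinary least squares on simulated, purely hypothetical data, and the variable names (`tem`, `sentiment`, `mtl`), the sample size, and the generating coefficients are assumptions chosen only for illustration. The sketch estimates the path from predictor to mediator (a), the path from mediator to outcome (b), and the direct path (c'), and reports the indirect effect a·b with a first-order Sobel (1982) standard error.

```python
import numpy as np


def ols(y, X):
    """Return OLS coefficients and their standard errors.

    X must already contain an intercept column.
    """
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    dof = X.shape[0] - X.shape[1]
    sigma2 = resid @ resid / dof
    cov = sigma2 * np.linalg.inv(X.T @ X)
    return beta, np.sqrt(np.diag(cov))


def simple_mediation(x, m, y):
    """Single-mediator model: x -> m -> y, plus a direct path x -> y."""
    ones = np.ones_like(x)
    # Path a: mediator regressed on the predictor.
    (_, a), (_, se_a) = ols(m, np.column_stack([ones, x]))
    # Paths c' (direct) and b: outcome regressed on predictor and mediator.
    beta_y, se_y = ols(y, np.column_stack([ones, x, m]))
    c_prime, b, se_b = beta_y[1], beta_y[2], se_y[2]
    # Total effect c: outcome regressed on the predictor alone.
    (_, c), _ = ols(y, np.column_stack([ones, x]))
    indirect = a * b
    # First-order Sobel standard error of the indirect effect.
    sobel_se = np.sqrt(b**2 * se_a**2 + a**2 * se_b**2)
    return {
        "a": a,
        "b": b,
        "direct": c_prime,
        "indirect": indirect,
        "total": c,
        "sobel_se": sobel_se,
        "sobel_z": indirect / sobel_se,
    }


# Hypothetical simulated data; the coefficients below are illustrative
# assumptions and are NOT estimates from the study.
rng = np.random.default_rng(0)
n = 500
tem = rng.normal(size=n)
sentiment = 0.6 * tem + rng.normal(size=n)
mtl = 0.3 * tem + 0.4 * sentiment + rng.normal(size=n)

result = simple_mediation(tem, sentiment, mtl)
for name, value in result.items():
    print(f"{name}: {value:.3f}")
```

In an ordinary least squares setting the total effect equals the direct effect plus the indirect effect, so a sizeable direct effect alongside a non-zero indirect effect corresponds to the partial mediation pattern described above. A multilevel structural equation model, as typically fitted in dedicated software, would additionally account for students being nested in classrooms, which this sketch ignores.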

Limitations and future perspectives

Despite the contributions of this study, some limitations need to be addressed. First, the reliability of the sentiment classification was moderate. One possible explanation is the simplicity of students’ answers. Because the SA model was not specifically trained for the educational context, it sometimes misinterpreted simple responses. Similarly, the model did not correctly assess sarcastic and metaphorical responses, whereas the researchers were able to understand the context of these responses and classify them correctly. In this study, we have established that non-fine-tuned pre-trained models, despite having lower reliability values than more complex models, have some important advantages: they are more accessible and they save time. Future studies using this type of model could nevertheless take steps to increase the reliability of SA. One such step is to pay attention to how open-ended questions are formulated, so as to discourage overly simple, sarcastic, or metaphorical responses. Special attention must also be paid to potential bias in students’ feedback when analysing its relations with other variables. Other researchers have reported a potential bias towards the positive evaluation of teaching (Alhija & Fresko, 2009; Cunningham-Nelson et al., 2019; Sengkey et al., 2019), which is also seen when applying sentiment analysis (Hynninen et al., 2020).
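Agreement between an automatic classifier and human coders of the kind discussed above is often summarised with a kappa-type coefficient. The following is a minimal sketch of Fleiss’ kappa (Fleiss, 1971), assuming each response is labelled as negative, neutral, or positive by the same number of raters. The toy labels are hypothetical, the function names are introduced here for illustration only, and the exact reliability procedure used in the study may differ.

```python
import numpy as np

CATEGORIES = ("negative", "neutral", "positive")


def labels_to_counts(label_rows, categories=CATEGORIES):
    """Turn per-item label lists into an items x categories count matrix."""
    index = {c: j for j, c in enumerate(categories)}
    counts = np.zeros((len(label_rows), len(categories)), dtype=float)
    for i, row in enumerate(label_rows):
        for label in row:
            counts[i, index[label]] += 1
    return counts


def fleiss_kappa(counts):
    """Fleiss' kappa for an items x categories matrix of rating counts.

    Every item must be rated by the same number of raters. The value is
    undefined (division by zero) if all ratings fall in a single category.
    """
    counts = np.asarray(counts, dtype=float)
    n_items = counts.shape[0]
    n_raters = counts[0].sum()
    if not np.all(counts.sum(axis=1) == n_raters):
        raise ValueError("each item needs the same number of ratings")
    # Observed agreement per item, then averaged over items.
    p_item = (np.sum(counts**2, axis=1) - n_raters) / (n_raters * (n_raters - 1))
    p_bar = p_item.mean()
    # Agreement expected by chance from the overall category proportions.
    p_cat = counts.sum(axis=0) / (n_items * n_raters)
    p_exp = np.sum(p_cat**2)
    return (p_bar - p_exp) / (1 - p_exp)


# Hypothetical labels: each row is one student response rated by three
# raters (for example, the SA model and two researchers).
toy_labels = [
    ["positive", "positive", "positive"],
    ["negative", "negative", "neutral"],
    ["neutral", "positive", "neutral"],
    ["negative", "negative", "negative"],
    ["positive", "neutral", "positive"],
]
kappa = fleiss_kappa(labels_to_counts(toy_labels))
print(f"Fleiss' kappa: {kappa:.3f}")
```

Under the benchmarks proposed by Landis and Koch (1977), values between 0.41 and 0.60 are commonly read as moderate agreement. Responses on which the raters disagree, such as sarcastic or very short answers, lower the per-item agreement and therefore the overall coefficient.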

There is abundant room for further progress in determining the mediators that influence the relations between TEM and MTL. It is also possible to consider students’ MTL as the mediator between TEM and students’ sentiment (Bronstein et al., 2005; Morin et al., 2014). Unlike our cross-sectional study, a longitudinal study could help examine the direction of these relations (Arens et al., 2015). Further research is also needed to explore other partial mediators when examining teachers’ behaviours and students’ motivation (Kunter et al., 2007; Moran, 2023). The possibility of moderators influencing the strength and shape of the mediated effect should be considered as well (MacKinnon et al., 2007). In this regard, Zhou and Ye (2020) recommend investigating the role and impact of demographic variables (gender, age, group, academic background, etc.) on students’ emotions and performance using SA.

Lastly, when students assess their teachers’ engaging messages, they are reporting their perceptions, and the indirect nature of these data can introduce bias. When using students’ reports to assess a classroom characteristic (e.g., teachers’ verbal behaviours), it is recommended to combine the indirect data with objective observational data (Urdan, 2004). For this reason, future studies could incorporate direct classroom observations to measure teachers’ engaging messages (Falcon et al., 2023).

Conclusions

The present study performed a sentiment analysis on students’ responses to open-ended questions about their teacher’s communication. We then examined whether teachers’ engaging messages affected students’ sentiment, and the mediating role of this sentiment in the relation between teachers’ messages and students’ motivation to learn. Results showed that sentiment analysis is a useful tool for measuring students’ opinions and sentiments towards teachers’ verbal behaviour, particularly engaging messages. We also found that students’ sentiment towards their teacher’s communication was strongly influenced by teachers’ use of engaging messages. Specifically, the higher the students’ perceived use of engaging messages, the more positive their sentiments towards their teacher’s communication (β = 0.95). Another major finding was that sentiment partially mediates the effect of teachers’ engaging messages on students’ motivation to learn. Both paths, direct and indirect, were in line with our hypotheses, providing evidence of mediation, but as the direct path from engaging messages to motivation to learn was strong (β = 0.28), we could not rule out other possible mediators. These findings open the path to using sentiment analysis to study the relations between students’ sentiment and their outcomes. The findings will also be of interest for designing interventions focused on improving teachers’ use of engaging messages. Improving teachers’ comprehension and perception of their engaging messages could lead to great progress in students’ outcomes, such as the sentiment of their feedback and their motivation to learn.

References

Adediwura, A. A., & Tayo, B. (2007). Perception of teachers’ knowledge, attitude and teaching skills as predictor of academic performance in Nigerian secondary schools. Educational Research and Review, 2(7), 165–171. http://www.academicjournals.org/ERR.

Akaike, H. (1974). A new look at the statistical model identification. IEEE Transactions on Automatic Control, 19(6), 716–723. https://doi.org/10.1109/TAC.1974.1100705.

Alhija, F. N. A., & Fresko, B. (2009). Student evaluation of instruction: What can be learned from students’ written comments? Studies in Educational Evaluation, 35(1), 37–44. https://doi.org/10.1016/j.stueduc.2009.01.002.

Álvarez-Álvarez, C., Sanchez-Ruiz, L., Ruthven, A., & Montoya, J. (2019). Innovating in university teaching through classroom interaction. Journal of Education Innovation and Communication, 1(1), 8–18. https://doi.org/10.34097/jeicom_1_1_1.

Andersson, E., Dryden, C., & Variawa, C. (2018). Applying machine learning to student feedback through sentiment analysis. 2018 Canadian Engineering Education Association (CEEA-ACEG18) Conference, 2–7. https://doi.org/10.24908/pceea.v0i0.13059

Arens, A. K., Morin, A. J. S., & Watermann, R. (2015). Relations between classroom disciplinary problems and student motivation: Achievement as a potential mediator? Learning and Instruction, 39, 184–193. https://doi.org/10.1016/j.learninstruc.2015.07.001.

Asparouhov, T., & Muthén, B. (2018). SRMR in Mplus. http://www.statmodel.com/download/SRMR2.pdf

Baños, R., Ortiz-Camacho, M. M., Baena-Extremera, A., & Tristán-Rodríguez, J. L. (2017). Satisfaction, motivation and academic performance in students of secondary and high school: Background, design, methodology and proposal of analysis for a research paper. Espiral Cuadernos Del Profesorado, 10(20), 40–50.

Behzadnia, B., Adachi, P. J. C., Deci, E. L., & Mohammadzadeh, H. (2018). Associations between students’ perceptions of physical education teachers’ interpersonal styles and students’ wellness, knowledge, performance, and intentions to persist at physical activity: A self-determination theory approach. Psychology of Sport and Exercise, 39, 10–19. https://doi.org/10.1016/j.psychsport.2018.07.003.

Belcher, J., Wuthrich, V. M., & Lowe, C. (2021). Teachers use of fear appeals: Association with student and teacher mental health. British Journal of Educational Psychology. https://doi.org/10.1111/bjep.12467.

Bentler, P. M., & Bonett, D. G. (1980). Significance tests and goodness of fit in the analysis of covariance structures. Psychological Bulletin, 88(3), 588–606. https://doi.org/10.1037/0033-2909.88.3.588.

Bielick, S. (2017). Surveys and Questionnaires. In D. Wyse, N. Selwyn, E. Smith, & L. E. Suter (Eds.), The BERA/SAGE Handbook of Educational Research (pp. 640–659).

Bronstein, P., Ginsburg, G. S., & Herrera, I. S. (2005). Parental predictors of motivational orientation in early adolescence: A longitudinal study. Journal of Youth and Adolescence, 34(6), 559–575. https://doi.org/10.1007/s10964-005-8946-0.

Brooks, C., Carroll, A., Gillies, R. M., & Hattie, J. (2019). A matrix of feedback for learning. Australian Journal of Teacher Education, 44(4), 14–32. https://doi.org/10.14221/ajte.2018v44n4.2.

Browne, M. W., & Cudeck, R. (1992). Alternative ways of assessing model fit. Sociological Methods & Research, 21(2), 230–258. https://doi.org/10.1177/0049124192021002005

Buma, A., & Nyamupangedengu, E. (2020). Investigating teacher talk moves in lessons on basic genetics concepts in a teacher education classroom. African Journal of Research in Mathematics Science and Technology Education, 24(1), 92–104. https://doi.org/10.1080/18117295.2020.1731647

Burić, I., Sorić, I., & Penezić, Z. (2016). Emotion regulation in academic domain: Development and validation of the academic emotion regulation questionnaire (AERQ). Personality and Individual Differences, 96, 138–147. https://doi.org/10.1016/j.paid.2016.02.074.

Caldarella, P., Larsen, R. A. A., Williams, L., Downs, K. R., Wills, H. P., & Wehby, J. H. (2020). Effects of teachers’ praise-to-reprimand ratios on elementary students’ on-task behaviour. Educational Psychology, 40(10), 1306–1322. https://doi.org/10.1080/01443410.2020.1711872.

Catano, V. M., & Harvey, S. (2011). Student perception of teaching effectiveness: Development and validation of the evaluation of teaching competencies scale (ETCS). Assessment and Evaluation in Higher Education, 36(6), 701–717. https://doi.org/10.1080/02602938.2010.484879

Chickering, A., & Gamson, Z. (1987). Seven principles for good practice in undergraduate education. AAHE Bulletin. https://doi.org/10.5551/jat.Er001

Collie, R. J., Granziera, H., & Martin, A. J. (2019). Teachers’ motivational approach: Links with students’ basic psychological need frustration, maladaptive engagement, and academic outcomes. Teaching and Teacher Education. https://doi.org/10.1016/j.tate.2019.07.002

Cunningham-Nelson, S., Baktashmotlagh, M., & Boles, W. (2019). Visualizing student opinion through text analysis. IEEE Transactions on Education, 62(4), 305–311. https://doi.org/10.1109/TE.2019.2924385

De Meyer, J., Speleers, L., Tallir, I. B., Soenens, B., Vansteenkiste, M., Aelterman, N., Van den Berghe, L., & Haerens, L. (2014). Does observed controlling teaching behavior relate to students’ motivation in physical education? Journal of Educational Psychology, 106(2), 541–554. https://doi.org/10.1037/a0034399.

Deci, E., & Ryan, R. (2016). Optimizing students’ motivation in the era of testing and pressure: A self-determination theory perspective. In W. C. Liu, J. C. K. Wang, & R. M. Ryan (Eds.), Building autonomous learners: perspectives from research and practice using self-determination theory (pp. 9–29). Springer. https://doi.org/10.1007/978-981-287-630-0_2

Dhillon, N., & Kaur, G. (2021). Self-assessment of teachers’ communication style and its impact on their communication effectiveness: A study of Indian higher educational institutions. SAGE Open. https://doi.org/10.1177/21582440211023173

Falcon, S., Admiraal, W., & Leon, J. (2023). Teachers’ engaging messages and the relationship with students’ performance and teachers’ enthusiasm. Learning and Instruction, 86, 101750.

Feldman, R. (2013). Techniques and applications for sentiment analysis. Communications of the ACM, 56(4), 82–89. https://doi.org/10.1145/2436256.2436274.

Fleiss, J. L. (1971). Measuring nominal scale agreement among many raters. Psychological Bulletin, 76(5), 378–382. https://doi.org/10.1037/h0031619.

Floress, M. T., Jenkins, L. N., Reinke, W. M., & McKown, L. (2018). General education teachers’ natural rates of praise: A preliminary investigation. Behavioral Disorders, 43(4), 411–422. https://doi.org/10.1177/0198742917709472

Freelon, D. G. (2010). ReCal: Intercoder reliability calculation as a web service. International Journal of Internet Science, 1, 20–33.

Geier, M. T. (2022). The teacher behavior checklist: The mediation role of teacher behaviors in the relationship between the students’ importance of teacher behaviors and students’ effort. Teaching of Psychology, 49(1), 14–20. https://doi.org/10.1177/0098628320979896

Geng, S., Niu, B., Feng, Y., & Huang, M. (2020). Understanding the focal points and sentiment of learners in MOOC reviews: A machine learning and SC-LIWC-based approach. British Journal of Educational Technology, 51(5), 1785–1803. https://doi.org/10.1111/bjet.12999

Goodboy, A. K., Martin, M. M., & Bolkan, S. (2009). The development and validation of the student communication satisfaction scale. Communication Education, 58(3), 372–396. https://doi.org/10.1080/03634520902755441.

Greene, J. C. (2005). The generative potential of mixed methods inquiry. International Journal of Research and Method in Education, 28(2), 207–211. https://doi.org/10.1080/01406720500256293.

Gregory, A., Ruzek, E., Hafen, C. A., Mikami, A. Y., Allen, J. P., & Pianta, R. C. (2017). My teaching partner-secondary: A video-based coaching model. Theory into Practice, 56(1), 38–45. https://doi.org/10.1080/00405841.2016.1260402

Haerens, L., Aelterman, N., Vansteenkiste, M., Soenens, B., & Van Petegem, S. (2015). Do perceived autonomy-supportive and controlling teaching relate to physical education students’ motivational experiences through unique pathways? Distinguishing between the bright and dark side of motivation. Psychology of Sport and Exercise, 16(P3), 26–36. https://doi.org/10.1016/j.psychsport.2014.08.013.

Hamaker, E. L., Mulder, J. D., & van IJzendoorn, M. H. (2020). Description, prediction and causation: Methodological challenges of studying child and adolescent development. Developmental Cognitive Neuroscience, 46, 100867. https://doi.org/10.1016/J.DCN.2020.100867.

Hasan, N., Malik, S. A., & Khan, M. M. (2013). Measuring relationship between students’ satisfaction and motivation in secondary schools of Pakistan. Middle East Journal of Scientific Research, 18(7), 907–915. https://doi.org/10.5829/idosi.mejsr.2013.18.7.11793.

Hattie, J. (2008). Visible learning: A synthesis of over 800 meta-analyses relating to achievement. In Visible Learning: A Synthesis of Over 800 Meta-Analyses Relating to Achievement. https://doi.org/10.4324/9780203887332

Hirschberg, J., & Manning, C. D. (2015). Advances in natural language processing. Science, 349(6245), 261–266. https://www.science.org.

Howe, C., & Abedin, M. (2013). Classroom dialogue: A systematic review across four decades of research. Cambridge Journal of Education, 43(3), 325–356. https://doi.org/10.1080/0305764X.2013.786024.

Hujala, M., Knutas, A., Hynninen, T., & Arminen, H. (2020). Improving the quality of teaching by utilising written student feedback: A streamlined process. Computers and Education, 157, 103965. https://doi.org/10.1016/j.compedu.2020.103965

Hynninen, T., Knutas, A., Hujala, M., & Arminen, H. (2019). Distinguishing the themes emerging from masses of open student feedback. 2019 42nd International Convention on Information and Communication Technology, Electronics and Microelectronics, MIPRO 2019 - Proceedings, 557–561. https://doi.org/10.23919/MIPRO.2019.8756781

Hynninen, T., Knutas, A., & Hujala, M. (2020). Sentiment analysis of open-ended student feedback. 2020 43rd International Convention on Information, Communication and Electronic Technology, MIPRO 2020 - Proceedings, 755–759. https://doi.org/10.23919/MIPRO48935.2020.9245345

Joshi, A., Kale, S., Chandel, S., & Pal, D. (2015). Likert scale: Explored and explained. British Journal of Applied Science & Technology, 7(4), 396–403. https://doi.org/10.9734/bjast/2015/14975

Judd, C. M., & Kenny, D. A. (1981). Estimating the Effects of Social Interventions. Cambridge University Press. https://doi.org/10.1093/sw/28.2.169

Kagklis, V., Karatrantou, A., Tantoula, M., Panagiotakopoulos, C. T., & Verykios, V. S. (2015). A Learning Analytics Methodology for detecting sentiment in Student Fora: A Case Study in Distance Education. European Journal of Open Distance and E-Learning, 18(2), 74–94. https://doi.org/10.1515/eurodl-2015-0014.

Kang, L., Liu, Z., Su, Z., Li, Q., & Liu, S. (2018). Analyzing the relationship among learners’ social characteristics, sentiments in a course forum and learning outcomes. Proceedings – 2018 7th International Conference of Educational Innovation through Technology, EITT 2018, 5, 210–213. https://doi.org/10.1109/EITT.2018.00049

Kazdin, A. E. (2007). Mediators and mechanisms of change in psychotherapy research. Annual Review of Clinical Psychology, 3, 1–27. https://doi.org/10.1146/annurev.clinpsy.3.022806.091432.

Kunter, M., Baumert, J., & Köller, O. (2007). Effective classroom management and the development of subject-related interest. Learning and Instruction, 17(5), 494–509. https://doi.org/10.1016/j.learninstruc.2007.09.002.

Landis, J. R., & Koch, G. G. (1977). The measurement of observer agreement for categorical data. Biometrics. https://doi.org/10.2307/2529310

Leong, C. K., Lee, Y. H., & Mak, W. K. (2012). Mining sentiments in SMS texts for teaching evaluation. Expert Systems with Applications, 39, 2584–2589. https://doi.org/10.1016/j.eswa.2011.08.113.

Lin, Q., Zhu, Y., Zhang, S., Shi, P., Guo, Q., & Niu, Z. (2019). Lexical based automated teaching evaluation via students’ short reviews. Computer Applications in Engineering Education, 27(1), 194–205. https://doi.org/10.1002/cae.22068.

Lipnevich, A. A., & Panadero, E. (2021). A review of feedback models and theories: Descriptions, definitions, and conclusions. Frontiers in Education. https://doi.org/10.3389/feduc.2021.720195

Liu, J., Bartholomew, K., & Chung, P. K. (2017). Perceptions of Teachers’ interpersonal Styles and Well-Being and Ill-Being in secondary School Physical Education students: The role of need satisfaction and need frustration. School Mental Health, 9(4), 360–371. https://doi.org/10.1007/s12310-017-9223-6.

Liu, Z., Zhang, W., Cheng, H. N. H., Sun, J., & Liu, S. (2018). Investigating relationship between discourse behavioral patterns and academic achievements of students in SPOC discussion forum. International Journal of Distance Education Technologies, 16(2), 37–50. https://doi.org/10.4018/IJDET.2018040103.

Lüdtke, O., Robitzsch, A., Trautwein, U., & Kunter, M. (2009). Assessing the impact of learning environments: How to use student ratings of classroom or school characteristics in multilevel modeling. Contemporary Educational Psychology, 34(2), 120–131. https://doi.org/10.1016/j.cedpsych.2008.12.001.

MacKinnon, D. P., Fairchild, A. J., & Fritz, M. S. (2007). Mediation analysis. Annual Review of Psychology, 58, 593–614. https://doi.org/10.1146/annurev.psych.58.110405.085542.

Marsh, H. W., Martin, A. J., & Cheng, J. H. S. (2008). A multilevel perspective on gender in classroom motivation and climate: Potential benefits of male teachers for boys? Journal of Educational Psychology, 100(1), 78–95. https://doi.org/10.1037/0022-0663.100.1.78.

Marsh, H. W., Lüdtke, O., Nagengast, B., Trautwein, U., Morin, A. J. S., Abduljabbar, A. S., & Köller, O. (2012). Classroom climate and contextual effects: Conceptual and methodological issues in the evaluation of group-level effects. Educational Psychologist, 47(2), 106–124. https://doi.org/10.1080/00461520.2012.670488

Marshik, T., Ashton, P., & Algina, J. (2017). Teachers’ and students’ needs for autonomy, competence, and relatedness as predictors of students’ achievement. Social Psychology of Education, 20, 1–29. https://doi.org/10.1007/s11218-016-9360-z.

Maxwell, J. A. (2012). The importance of qualitative research for causal explanation in Education. Qualitative Inquiry, 18(8), 655–661. https://doi.org/10.1177/1077800412452856.

McNeish, D. (2018). Thanks coefficient alpha, we’ll take it from here. Psychological Methods, 23(3), 412–433. https://doi.org/10.1037/met0000144.

Microsoft Corporation. (2022). What is sentiment analysis and opinion mining in Azure Cognitive Service for Language?

Molina-Azorin, J. F. (2016). Mixed methods research: An opportunity to improve our studies and our research skills. European Journal of Management and Business Economics, 25(2), 37–38. https://doi.org/10.1016/j.redeen.2016.05.001.

Moran, S. (2023). Educating the youth to develop life purpose: An eco-systemic approach. Revista de Investigación Educativa, 41(1), 15–31. https://doi.org/10.6018/rie.539521

Morin, A. J. S., Marsh, H. W., Nagengast, B., & Scalas, L. F. (2014). Doubly latent multilevel analyses of classroom climate: An illustration. Journal of Experimental Education, 82(2), 143–167. https://doi.org/10.1080/00220973.2013.769412.

Muthén, L. K., & Muthén, B. O. (2021). Mplus: Statistical Analysis with Latent Variables: User’s Guide (Version 8.6). Authors.

Nicholson, L., Putwain, D. W., Nakhla, G., Porter, B., Liversidge, A., & Reece, M. (2019). A person-centered approach to students’ evaluations of perceived fear appeals and their association with engagement. Journal of Experimental Education, 87(1), 139–160. https://doi.org/10.1080/00220973.2018.1448745

Nimala, K., & Jebakumar, R. (2021). Sentiment topic emotion model on students feedback for educational benefits and practices. Behaviour and Information Technology, 40(3), 311–319. https://doi.org/10.1080/0144929X.2019.1687756.

Núñez, J. L., Martín-Albo, J., & Navarro, J. G. (2005). Validación de la versión española de la Échelle de motivation en Éducation. Psicothema, 17(2), 344–349.

Oostdam, R. J., Koerhuis, M. J. C., & Fukkink, R. G. (2019). Maladaptive behavior in relation to the basic psychological needs of students in secondary education. European Journal of Psychology of Education, 34(3), 601–619. https://doi.org/10.1007/s10212-018-0397-6.

Pong-inwong, C., & Songpan, W. (2019). Sentiment analysis in teaching evaluations using sentiment phrase pattern matching (SPPM) based on association mining. International Journal of Machine Learning and Cybernetics, 10(8), 2177–2186. https://doi.org/10.1007/s13042-018-0800-2.

Preacher, K. J., & Kelley, K. (2011). Effect size measures for mediation models: Quantitative strategies for communicating indirect effects. Psychological Methods, 16(2), 93–115. https://doi.org/10.1037/a0022658.

Putwain, D. W., & Best, N. (2011). Fear appeals in the primary classroom: Effects on test anxiety and test grade. Learning and Individual Differences, 21(5), 580–584. https://doi.org/10.1016/j.lindif.2011.07.007.

Putwain, D. W., & Remedios, R. (2014). The scare tactic: Do fear appeals predict motivation and exam scores? School Psychology Quarterly, 29(4), 503–516. https://doi.org/10.1037/spq0000048

Putwain, D. W., & Roberts, C. (2009). The development of an instrument to measure teachers’ use of fear appeals in the GCSE classroom. British Journal of Educational Psychology, 79(4), 643–661. https://doi.org/10.1348/000709909X426130.

Putwain, D. W., & Symes, W. (2011). Teachers’ use of fear appeals in the Mathematics classroom: Worrying or motivating students? The British Journal of Educational Psychology, 81, 456–474. https://doi.org/10.1348/2044-8279.002005.

Putwain, D. W., Symes, W., & McCaldin, T. (2019). Teacher use of loss-focused, utility value messages, prior to high-stakes examinations, and their appraisal by students. Journal of Psychoeducational Assessment, 37(2), 169–180. https://doi.org/10.1177/0734282917724905.

Putwain, D. W., Symes, W., Nicholson, L. J., & Remedios, R. (2021). Teacher motivational messages used prior to examinations: What are they, how are they evaluated, and what are their educational outcomes? In A. J. Elliot (Ed.), Advances in motivation science (Vol. 8, pp. 63–103). NY: Elsevier. https://doi.org/10.1016/bs.adms.2020.01.001

Ramsden, P. (2003). Learning to teach in higher education studies in higher education. Routledge. https://doi.org/10.1080/03075079312331382498

Rani, S., & Kumar, P. (2017). A sentiment analysis system to improve teaching and learning. Computer, 50(5), 36–43.

Robins, R. W., Fraley, R. C., & Krueger, R. F. (Eds.). (2007). Handbook of research methods in personality psychology. The Guilford Press.

Rodgers, B. L., & Cowles, K. V. (1993). The qualitative research audit trail: A complex collection of documentation. Research in Nursing & Health, 16(3), 219–226. https://doi.org/10.1002/nur.4770160309.

Rothman, A. J., & Salovey, P. (1997). Shaping perceptions to motivate healthy behavior: The role of message framing. Psychological Bulletin, 121(1), 3–19. https://doi.org/10.1037/0033-2909.121.1.3.

Ryan, R., & Deci, E. (2000). Self-determination theory and the facilitation of intrinsic motivation, social development, and well-being. American Psychologist, 55(1), 68–78. https://doi.org/10.1037/0003-066X.55.1.68.

Ryan, R., & Deci, E. (2017). Self-determination theory: Basic psychological needs in motivation, development, and wellness. The Guilford Press. https://doi.org/10.1521/978.14625/28806

Ryan, R., & Deci, E. (2020). Intrinsic and extrinsic motivation from a self-determination theory perspective: Definitions, theory, practices, and future directions. Contemporary Educational Psychology, 61, 101860. https://doi.org/10.1016/j.cedpsych.2020.101860.

Rybinski, K., & Kopciuszewska, E. (2021). Will artificial intelligence revolutionise the student evaluation of teaching? A big data study of 1.6 million student reviews. Assessment and Evaluation in Higher Education, 46, 1127–1139. https://doi.org/10.1080/02602938.2020.1844866.

Santana-Monagas, E., Núñez, J. L., Loro, J. F., Huéscar, E., & León, J. (2022a). Teachers’ engaging messages: The role of perceived autonomy, competence and relatedness. Teaching and Teacher Education, 109, 103556. https://doi.org/10.1016/j.tate.2021.103556.

Santana-Monagas, E., Putwain, D. W., Núñez, J., Loro, J., & León, J. (2022b). Do teachers’ engaging messages predict motivation to learn and performance? Revista de Psicodidáctica (English Ed), 27(1), 86–95. https://doi.org/10.1016/j.psicoe.2021.11.001.

Santana-Monagas, E., Núñez, J. L., Loro, J. F., Moreno-Murcia, J. A., & León, J. (2023). What makes a student feel vital? Links between teacher-student relatedness and teachers’ engaging messages. European Journal of Psychology of Education. https://doi.org/10.1007/s10212-022-00642-9.

Schreiber, J. B., Stage, F. K., King, J., Nora, A., & Barlow, E. A. (2006). Reporting structural equation modeling and confirmatory factor analysis results: A review. Journal of Educational Research, 99(6), 323–338. https://doi.org/10.3200/JOER.99.6.323-338.

Schwarz, G. (1978). Estimating the dimension of a model. The Annals of Statistics, 6(2), 461–464. https://doi.org/10.1214/aos/1176344136.

Sengkey, D. F., Jacobus, A., & Manoppo, F. J. (2019). Implementing support vector machine sentiment analysis to students’ opinion toward lecturer in an Indonesian Public University. Journal of Sustainable Engineering: Proceedings Series, 1(2), 194–198. https://doi.org/10.35793/joseps.v1i2.27

Shen, L., Wang, M., & Shen, R. (2009). Affective e-Learning: Using “Emotional” data to improve learning in pervasive learning environment. Educational Technology & Society, 12, 176–189.

Shilo, G. (2015). Formulating good open-ended questions in assessment. Educational Research Quarterly, 38(4), 3–30.

Sindhu, I., Muhammad Daudpota, S., Badar, K., Bakhtyar, M., Baber, J., & Nurunnabi, M. (2019). Aspect-based opinion mining on student’s feedback for faculty teaching performance evaluation. IEEE Access, 7, 108729–108741. https://doi.org/10.1109/ACCESS.2019.2928872

Smith, C. D., & Baik, C. (2021). High-impact teaching practices in higher education: A best evidence review. Studies in Higher Education, 46(8), 1696–1713. https://doi.org/10.1080/03075079.2019.1698539.

Sobel, M. E. (1982). Asymptotic confidence intervals for Indirect Effects in Structural equation models. Sociological Methodology, 13, 290–312. https://doi.org/10.2307/270723.

Solangi, Y. A., Solangi, Z. A., Aarain, S., Abro, A., Mallah, G. A., & Shah, A. (2018). Review on Natural Language Processing (NLP) and Its Toolkits for Opinion Mining and Sentiment Analysis. 2018 IEEE 5th International Conference on Engineering Technologies and Applied Sciences (ICETAS) (pp. 1–4). https://doi.org/10.1109/ICETAS.2018.8629198

Stupans, I., McGuren, T., & Babey, A. M. (2016). Student evaluation of teaching: A study exploring student rating instrument free-form text comments. Innovative Higher Education, 41(1), 33–42. https://doi.org/10.1007/s10755-015-9328-5

Taylor, G., Jungert, T., Mageau, G. A., Schattke, K., Dedic, H., Rosenfield, S., & Koestner, R. (2014). A self-determination theory approach to predicting school achievement over time: The unique role of intrinsic motivation. Contemporary Educational Psychology, 39(4), 342–358. https://doi.org/10.1016/J.CEDPSYCH.2014.08.002.

Tseng, C. W., Chou, J. J., & Tsai, Y. C. (2018). Text mining analysis of teaching evaluation questionnaires for the selection of outstanding teaching faculty members. IEEE Access, 6, 72870–72879. https://doi.org/10.1109/ACCESS.2018.2878478

Urdan, T. (2004). Using multiple methods to assess students’ perceptions of classroom goal structures. European Psychologist, 9(4), 222–231. https://doi.org/10.1027/1016-9040.9.4.222

Vallerand, R. J., Pelletier, L. G., Blais, M. R., Briere, N. M., Senecal, C., & Vallieres, E. F. (1992). The academic motivation scale: A measure of intrinsic, extrinsic, and amotivation in education. Educational and Psychological Measurement, 52(4), 1003–1017.

VanderWeele, T. J. (2015). Explanation in causal inference: Methods for mediation and interaction. Oxford University Press.

Vansteenkiste, M., Sierens, E., Goossens, L., Soenens, B., Dochy, F., Mouratidis, T., Aelterman, N., Haerens, L., & Beyers, W. (2012). Identifying configurations of perceived teacher autonomy support and structure: Associations with self-regulated learning, motivation and problem behavior. Learning and Instruction, 22, 431–439. https://doi.org/10.1016/j.learninstruc.2012.04.002.

Walker, M. (1989). Analysing qualitative data: Ethnography and the evaluation of medical education. Medical Education, 23(6), 498–503. https://doi.org/10.1111/j.1365-2923.1989.tb01575.x.

Zhou, J., & Ye, J. M. (2020). Sentiment analysis in education research: A review of journal publications. Interactive Learning Environments. https://doi.org/10.1080/10494820.2020.1826985

Funding

Open Access funding provided thanks to the CRUE-CSIC agreement with Springer Nature. This work was supported by the Ministry of Science, Innovation and Universities of Spain (PID2019-106948RA-I00/AEI/10.13039/501100011033). It has also been funded by the University of Las Palmas de Gran Canaria, Cabildo de Gran Canaria, and Banco Santander through the pre-doctoral training programme for research personnel.

Author information

Contributions

SF: Formal analysis, Investigation, Writing - Original Draft, Writing - Review & Editing. JL: Conceptualization, Methodology, Resources, Writing - Review & Editing, Supervision, Project administration, Funding acquisition.

Ethics declarations

Conflict of interest

The authors have no relevant financial or non-financial interests to disclose.

Additional information

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Falcon, S., Leon, J. How do teachers engaging messages affect students? A sentiment analysis. Education Tech Research Dev 71, 1503–1523 (2023). https://doi.org/10.1007/s11423-023-10230-3

DOI: https://doi.org/10.1007/s11423-023-10230-3