Abstract

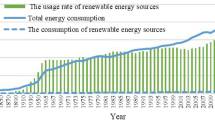

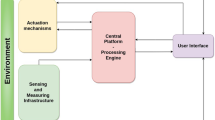

Conventional wireless sensors struggle with limited energy, especially in sensor networks deployed in extreme, hard-to-reach areas. Because such sensors are difficult to recharge in the field from conventional energy sources, energy harvesting wireless sensors are designed to draw their power from renewable sources. Renewable energy generation, however, is uncertain and not known in advance, so the sensor's energy must be managed effectively. In this paper, a Node Power Control Optimization (NPCO) algorithm is proposed to solve the power allocation problem of a wireless sensor node in each time slot. In addition, to address the unknown and random nature of energy arrivals, this paper proposes a deep-learning-based CLSTM model to predict energy arrivals. Continuous, autonomous energy management of wireless sensor nodes is achieved by feeding the CLSTM predictions into the NPCO algorithm. The algorithm applies to continuous state spaces and performs well when validated on real solar data. It achieves a higher long-term average net bit rate than the existing DDPG, actor-critic (AC), and Lyapunov optimization algorithms.
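
The abstract does not give the NPCO formulation, so the following is only a minimal sketch of the general loop it describes: predict the next energy arrival, then choose a per-slot transmit power that respects the energy stored in the battery. Everything here is an assumption for illustration, not the authors' method: a moving-average predictor stands in for the CLSTM model, the rate model is log2(1 + p·g/N0) with unit slot length, energy harvested in slot t becomes usable from slot t+1, and the names `simulate`, `moving_average_predictor`, `b_max`, and `noise` are hypothetical.

```python
import math
from collections import deque


def moving_average_predictor(window):
    """Hypothetical stand-in for the CLSTM energy-arrival predictor."""
    history = deque(maxlen=window)

    def predict():
        # Mean of the last `window` observed arrivals (0.0 before any data).
        return sum(history) / len(history) if history else 0.0

    def observe(energy):
        history.append(energy)

    return predict, observe


def simulate(arrivals, gains, b_max=5.0, b0=0.0, noise=1.0, window=3):
    """Run a hypothetical prediction-aware per-slot power policy.

    Returns (powers, average_rate). Assumptions (not from the paper):
    unit slot length, harvested energy usable from the next slot,
    rate = log2(1 + p * g / noise).
    """
    predict, observe = moving_average_predictor(window)
    battery = b0
    powers, rates = [], []
    for energy, gain in zip(arrivals, gains):
        pred = predict()
        # Spend roughly what we expect to harvest, plus any energy that
        # would otherwise overflow the battery; never exceed what is stored.
        target = max(pred, battery + pred - b_max)
        power = min(max(target, 0.0), battery)
        powers.append(power)
        rates.append(math.log2(1.0 + power * gain / noise))
        # Discharge, then store this slot's arrival, capped at capacity.
        battery = min(battery - power + energy, b_max)
        observe(energy)
    return powers, sum(rates) / len(rates)
```

For example, `simulate([1, 1, 1, 1], [1, 1, 1, 1])` returns `([0.0, 1.0, 1.0, 1.0], 0.75)`: nothing is transmitted in the first slot because the battery starts empty, after which the node spends exactly the predicted arrival each slot. The greedy "spend the prediction" rule is chosen only because it is easy to verify; the paper's NPCO algorithm and its net-bit-rate objective may differ substantially.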

Acknowledgements

This research was funded by the National Natural Science Foundation of China (Grant No. 60971088).

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Liu, Z., Wang, J. Power control algorithm for wireless sensor nodes based on energy prediction. Wireless Netw 30, 517–532 (2024). https://doi.org/10.1007/s11276-023-03504-4

DOI: https://doi.org/10.1007/s11276-023-03504-4