Abstract

If the laws of nature are deterministic, then it seems possible that a Laplacean intelligence that knows the initial conditions and the laws would be able to accurately predict everything that will ever happen. However, it would be easy to construct a counterpredictive device that falsifies any revealed prediction about its future behavior. What would then occur if a Laplacean intelligence encountered a counterpredictive device? This is the paradox of predictability. A number of philosophers have proposed solutions to it, though part of my aim here is to argue that the paradox is more pernicious than has thus far been appreciated, and therefore that extant solutions are inadequate. My broader aim is to argue that the paradox motivates Humeanism about laws of nature.

Notes

When I talk of Laplacean intelligences and counterpredictive devices not being compossible, I mean to imply that (i) the intelligence makes a prediction about the output of the device, (ii) the device is provided that prediction as input, and (iii) the device does not malfunction. It is the specific situation where all of (i)–(iii) obtain that is impossible. If any of these conditions does not obtain, then Laplacean intelligences and counterpredictive devices may perfectly well coexist.

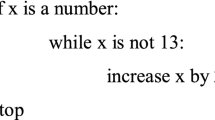

Garrett and Joaquin (2021) present a compelling case for the idea that we need no explanation of this logical impossibility. To see the point more clearly, we might compare our situation to Turing’s proof that no Turing machine constitutes a general solution to the Halting Problem. Turing showed that if there were such a “general halting machine,” then we could easily create another Turing machine that was bound to frustrate it. Given a description of this “counterpredictive machine” with an arbitrary input, we ask the supposed general halting machine if the counterpredictive machine will halt with that input. If it says yes, the counterpredictive machine goes into an infinite loop and never halts. If it says no, the counterpredictive machine halts. So a general halting machine is logically impossible.

That situation is structurally analogous to our present one. But Turing and other computability theorists didn’t think we needed an explanation of that impossibility. So why should we think we need one here? (Gijsbers, 2021 argues that the structural analogy between Turing’s proof and the paradox of predictability is in fact relevant to the paradox’s solution.)
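The structure of Turing's argument can be sketched in a few lines of Python. This is only an illustrative toy, not a real halting oracle: the names `counter_machine_behavior` and `oracle_is_correct` are hypothetical, and the machine's behavior is represented by a string label rather than by actually looping, so that the sketch itself terminates. It shows that whichever verdict the supposed general halting machine issues about the counterpredictive machine, that verdict turns out wrong.

```python
def counter_machine_behavior(oracle_says_halts: bool) -> str:
    """Behavior of the counterpredictive machine, given the verdict that the
    supposed general halting machine issues about it (illustrative model)."""
    # Do the opposite of whatever was predicted.
    return "loops forever" if oracle_says_halts else "halts"


def oracle_is_correct(oracle_says_halts: bool) -> bool:
    """Check the oracle's verdict against what the machine then does."""
    actually_halts = counter_machine_behavior(oracle_says_halts) == "halts"
    return oracle_says_halts == actually_halts


# Try both possible verdicts; neither one comes out right.
verdict_outcomes = {v: oracle_is_correct(v) for v in (True, False)}
```

The same two-case check applies to a Laplacean intelligence whose revealed prediction is fed to a counterpredictive device.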

See Lange (2016, especially chapters 7–9) for some illustrative examples.

I take it that this is also the kind of explanation that has been sought by other authors writing on the paradox, e.g. those cited in footnote 1. None of them thought that the paradox could be solved simply by noting the relevant logical impossibility.

Cf. Gijsbers (2021), p. 6: “But of course we need to say more in order to dispel the paradox. We need to explain why or in what sense determinism fails to deliver predictability.”

I am assuming here a particular form of determinism, namely forward determinism, where the initial state plus the laws determines all subsequent states. Other kinds of determinism would make the intelligence’s task even easier. If in addition the laws were reverse deterministic, the intelligence would arguably have an easier time discerning the total state at some particular time. Stronger forms of determinism might require only that the intelligence knows the boundary conditions inside some highly circumscribed region of spacetime (even just a single event) in order to use the laws to infer the content of the entire spacetime manifold.

When I talk of the intelligence’s “predictive scope” or “horizon” I mean to denote everything that is entailed by its knowledge of the physical variables in question. You might then think that the intelligence’s predictive scope is never going to include the output of a counterpredictive device, no matter how nearby in the future that device operates, because any uncertainty at all in the initial variables will result in some degree of uncertainty about the output of the counterpredictive device. But it would not be difficult to design a counterpredictive device whose operations were “resilient” to this kind of uncertainty. For example, we might imagine that so long as the position of a lever is within a certain allowed range, the device will definitely turn on its green light. In that case, as long as the initial uncertainty does not ramify into uncertainty greater than the allowed range on the position of the lever, the output of the counterpredictive device falls within the intelligence’s predictive scope.
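The resilience condition can be made concrete with a small interval check. The following is a minimal sketch under stated assumptions: the function name `within_predictive_scope`, the representation of the intelligence's knowledge as an estimate plus a symmetric uncertainty, and the numerical values are all hypothetical and chosen only for illustration.

```python
from typing import Tuple


def within_predictive_scope(lever_estimate: float,
                            uncertainty: float,
                            allowed_range: Tuple[float, float]) -> bool:
    """True if every lever position compatible with the intelligence's
    knowledge lies inside the range that guarantees the green light."""
    low, high = allowed_range
    return (low <= lever_estimate - uncertainty
            and lever_estimate + uncertainty <= high)


# Hypothetical numbers: the green light is guaranteed for positions in [0.4, 0.6].
small_error = within_predictive_scope(0.5, 0.05, (0.4, 0.6))  # interval fits
large_error = within_predictive_scope(0.5, 0.2, (0.4, 0.6))   # interval spills over
```

When the whole uncertainty interval fits inside the allowed range, residual uncertainty about the lever makes no difference to the device's output, which is all the paradox requires.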

Roberts (2008, p. 345) also suggests that the mathematical problems the intelligence would have to solve may be uncomputable.

Moreover, even if \(f_L\) is not effectively computable, it is likely that there are approximations of \(f_L\) that are effectively computable, and are accurate within limited timescales. Such approximations would then have a built-in predictive horizon beyond which they are insufficiently accurate, but again, we would only need to posit that the counterpredictive device operates within that horizon to generate the paradox.
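As a loose illustration of an approximation with a built-in predictive horizon, consider a toy case that is not drawn from the paper: the Euler method applied to \(dx/dt = x\) with \(x(0) = 1\), whose exact solution is \(e^t\). The dynamics, the step size, the tolerances, and the function name `predictive_horizon` are all assumptions made for the sake of the example, and nothing here is meant to model \(f_L\) itself. The sketch finds the latest time up to which the approximation stays within a given tolerance of the exact value.

```python
import math


def predictive_horizon(tolerance: float, step: float = 0.1,
                       t_max: float = 50.0) -> float:
    """Latest time up to which the Euler approximation of dx/dt = x, x(0) = 1
    stays within `tolerance` of the exact solution exp(t)."""
    x = 1.0
    n = 0
    while (n + 1) * step <= t_max:
        x += step * x              # one Euler step
        n += 1
        if abs(x - math.exp(n * step)) > tolerance:
            return (n - 1) * step  # last time the error was acceptable
    return n * step                # tolerance never exceeded within t_max


tight = predictive_horizon(tolerance=0.1)  # stricter accuracy demand
loose = predictive_horizon(tolerance=1.0)  # more permissive demand
```

A stricter tolerance yields a shorter horizon, but in either case the horizon is positive, so a device operating early enough falls inside it.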

See Lewis (1973, 1986, 1994) for canonical statements of the view. Various developments of the BSA have been proposed over the years. See, for example, Loewer (1996, 2007); Cohen and Callender (2009), and Demarest (2017) for modifications of the account of the particular matters of fact that get systematized. And see Callender (2017), Hicks (2018), Dorst (2018), Jaag and Loew (2020), and Loewer (2020) for modifications of the systematizing standards that get applied to those facts.

See Beebee (2000, pp. 578–579) for a similar way of contrasting Humeanism and anti-Humeanism.

Setting aside unusual situations like Norton’s dome; see Norton (2008).

It is worth noting that nothing about this solution is incompatible with Humeanism about laws. The important point is just that it is compatible with anti-Humeanism.

One problem that I will only mention, but not pursue: the Nomic Preclusion solution seems to imply much too strong a result about the limits of our knowledge, namely that any contingent universally-quantified claim of unrestricted scope (e.g. “There are no gold cubes with a mass greater than 100,000,000 kg”) is unknowable to an embedded agent, since it relies on a totality fact. This conclusion seems untenable. An embedded agent may have good enough evidence to count as “knowing” such a claim, even if its evidence does not entail that claim. In the ensuing discussion, however, I will grant that an embedded agent could not know such things, because I want to focus on a more revealing problem with the proposal.

If we consider deterministic theories (like those mentioned in fn. 7) in which the intelligence only needs to know a very limited region of spacetime, such as a single event, to use the laws to infer the contents of the rest of the spacetime manifold, then the predictive task becomes even easier.

“Near” future, to guarantee that the counterpredictive device is within the predictive horizon of the intelligence, taking into account measurement errors and calculational shortcuts that it must take as an embedded agent.

To be clear, it is perfectly obvious why the intelligence cannot correctly guess the output of the device (assuming that the device is given the prediction, does not malfunction, etc.). That is not what’s happening here. Rather, the intelligence is guessing the total state at t, and then calculating the device’s output based on the laws and its guess about the total state. What we want an explanation of is why, in that situation, the intelligence would be guaranteed to guess wrong.

Given the amount of calculation involved, it wouldn’t actually be that quick. Rather, it would be the most laborious publication, in terms of total work required, in the history of humankind.

References

Armstrong, D. (1983). What is a law of nature? Cambridge: Cambridge University Press.

Beebee, H. (2000). The non-governing conception of laws of nature. Philosophy and Phenomenological Research, 61, 571–594.

Beebee, H., & Mele, A. (2002). Humean compatibilism. Mind, 111, 201–223.

Bird, A. (2007). Nature’s metaphysics. New York: Oxford University Press.

Bishop, R. C. (2003). On separating predictability and determinism. Erkenntnis, 58, 169–188.

Boyd, R. (1972). Determinism, laws, and predictability in principle. Philosophy of Science, 39, 431–450.

Callender, C. (2017). What makes time special? New York: Oxford University Press.

Carroll, J. (1994). Laws of nature. New York: Cambridge University Press.

Cartwright, N. (1980). Do the laws of physics state the facts? Pacific Philosophical Quarterly, 61, 75–84.

Cohen, J., & Callender, C. (2009). A better best system account of lawhood. Philosophical Studies, 145, 1–34.

Demarest, H. (2017). Powerful properties, powerless laws. In J. Jacobs (Ed.), Putting powers to work: Causal powers in contemporary metaphysics. Oxford: Oxford University Press.

Dorst, C. (2018). Towards a best predictive system account of laws of nature. British Journal for the Philosophy of Science, 70, 877–900.

Dretske, F. (1977). Laws of nature. Philosophy of Science, 44, 248–268.

Ellis, B. (2001). Scientific essentialism. Cambridge, UK: Cambridge University Press.

Garrett, B., & Joaquin, J. J. (2021). Ismael on the paradox of predictability. Philosophia. https://doi.org/10.1007/s11406-021-00381-z.

Geroch, R. (1977). Prediction in general relativity. In J. Earman, C. Glymour, & J. Stachel (Eds.), Foundations of space-time theories (Vol. 8, pp. 81–93). Minneapolis: University of Minnesota Press.

Gijsbers, V. (2021). The paradox of predictability. Erkenntnis. https://doi.org/10.1007/s10670-020-00369-3.

Hall, N. (ms). Humean reductionism about laws of nature. https://philpapers.org/archive/HALHRA.pdf

Hicks, M. (2018). Dynamic Humeanism. British Journal for the Philosophy of Science, 69, 987–1007.

Ismael, J. (2016). How physics makes us free. New York: Oxford University Press.

Ismael, J. (2019). Determinism, counterpredictive devices, and the impossibility of Laplacean intelligences. The Monist, 102, 478–498.

Jaag, S., & Loew, C. (2020). Making best systems best for us. Synthese.

Landsberg, P. T., & Evans, D. A. (1970). Free will in a mechanistic universe? The British Journal for the Philosophy of Science, 21, 343–358.

Lange, M. (2016). Because without cause: Non-causal explanations in science and mathematics. New York: Oxford University Press.

Lewis, D. (1973). Counterfactuals. Malden: Blackwell.

Lewis, D. (1986). Philosophical papers (Vol. II). New York: Oxford University Press.

Lewis, D. (1994). Humean supervenience debugged. Mind, 103, 474–490.

Loewer, B. (1996). Humean supervenience. Philosophical Topics, 24, 101–127.

Loewer, B. (2007). Laws and natural properties. Philosophical Topics, 35, 313–328.

Loewer, B. (2020). The package deal account of laws and properties. Synthese. https://doi.org/10.1007/s11229-020-02765-2.

Mackay, D. M. (1960). On the logical indeterminacy of a free choice. Mind, 69, 31–40.

Malament, D. (1996). In defense of dogma: Why there cannot be a relativistic quantum mechanics of (localizable) particles. In R. Clifton (Ed.), Perspectives on quantum reality. Dordrecht: Springer.

Manchak, J. (2008). Is prediction possible in general relativity? Foundations of Physics, 38, 317–321.

Maudlin, T. (2007). The metaphysics within physics. Oxford: Oxford University Press.

Maudlin, T. (2011). Quantum non-locality and relativity (3rd ed.). Malden: Wiley-Blackwell.

Mumford, S. (2004). Laws in nature. London: Routledge.

Norton, J. (2008). The dome: An unexpectedly simple failure of determinism. Philosophy of Science, 75, 786–798.

Putnam, H. (1967). Time and physical geometry. The Journal of Philosophy, 64, 240–247.

Roberts, J. (2008). The law-governed universe. New York: Oxford University Press.

Rummens, S., & Cuypers, S. E. (2010). Determinism and the paradox of predictability. Erkenntnis, 72, 233–249.

Scriven, M. (1965). An essential unpredictability in human behaviour. In B. B. Wolman & E. Nagel (Eds.), Scientific psychology: Principles and approaches (pp. 411–425). New York: Basic Books.

Shoemaker, S. (1980). Causality and properties. In P. van Inwagen (Ed.), Time and cause (pp. 109–135). Dordrecht: Reidel.

Stone, M. A. (1989). Chaos, prediction, and Laplacean determinism. American Philosophical Quarterly, 26, 123–131.

Tooley, M. (1977). The nature of laws. Canadian Journal of Philosophy, 7, 667–698.

van Inwagen, P. (1983). An essay on free will. Oxford: Clarendon Press.

Thanks to John Biro, Rodrigo Borges, Taylor Cyr, Finnur Dellsén, Kevin Dorst, Cameron Gibbs, James Gillespie, Jenann Ismael, Nic Koziolek, Greg Ray, and two anonymous referees for helpful advice, comments, and suggestions. Thanks also to an audience at the 2021 Central APA for very valuable feedback.

Cite this article

Dorst, C. Laws, melodies, and the paradox of predictability. Synthese 200, 40 (2022). https://doi.org/10.1007/s11229-022-03595-0

DOI: https://doi.org/10.1007/s11229-022-03595-0