Abstract

Inference of interaction networks represented by systems of differential equations is a challenging problem in many scientific disciplines. In the present article, we follow a semi-mechanistic modelling approach based on gradient matching. We investigate the extent to which key factors, including the kinetic model, statistical formulation and numerical methods, impact upon performance at network reconstruction. We emphasize general lessons for computational statisticians when faced with the challenge of model selection, and we assess the accuracy of various alternative paradigms, including recent widely applicable information criteria and different numerical procedures for approximating Bayes factors. We conduct the comparative evaluation with a novel inferential pipeline that systematically disambiguates confounding factors via an ANOVA scheme.

Similar content being viewed by others

Explore related subjects

Find the latest articles, discoveries, and news in related topics.Avoid common mistakes on your manuscript.

1 Introduction

A topical and challenging problem for computational statistics and machine learning is to infer the structure of complex systems of interacting units. This research area has been particularly motivated by the cognate research discipline of computational systems biology, where researchers aim to reconstruct the structure of biopathways or regulatory networks from postgenomic data; see e.g. Smolen et al. (2000), De Jong (2002) and Lawrence et al. (2010). Two principled approaches can be distinguished. The first paradigm aims to apply generic models like sparse Lasso-type regression, Bayesian networks, or hierarchical Bayesian models. A recent overview and comparative evaluation was published by Aderhold et al. (2014). The advantage of this approach is that the computational complexity of inference is comparatively low, and the application of these methods to problems of genuine interest is computationally feasible. The disadvantage is that interactions are modelled at a high level of abstraction, which ignores the detailed nature of the underlying mechanisms. The second paradigm is based on mechanistic models and the detailed mathematical description of the underlying interaction processes, typically in the form of ordinary or stochastic differential equations (DEs). Two pioneering examples of this approach were published by Vyshemirsky and Girolami (2008) and Toni et al. (2009). The advantage of this paradigm is a more detailed and faithful mathematical representation of the interactions in the system. The disadvantage is the substantially higher computational costs of inference, which stem from the fact that each parameter adaptation requires a numerical integration of the differential equations. A novel approach, presented by Oates et al. (2014) and termed ’chemical model averaging’ (CheMA), aims for a compromise that combines the strengths of both paradigms. The underlying principle is that of gradient matching, first proposed by Ramsay et al. (2007). Given the concentration time series of some quantities whose interactions are to be inferred, the temporal derivatives of the concentrations are directly estimated from the data. These derivatives are then matched against those predicted from the DEs. Formally, on the assumption that the mismatch can be treated like observational noise of known distributional form, we can derive the likelihood and thus apply standard statistical inference techniques. The model is effectively a non-linear regression model, whose computational complexity of inference sits between the two paradigms discussed above: it is lower than for proper mechanistic models, since the DEs do not have to be integrated numerically; it is higher than for standard models of the first category, since the model is non-linear in its parameters and an analytic marginalization is intractable. Henceforth, we refer to it as a ’semi-mechanistic’ model.

Overview of the presented work and how it extends the CheMA model of Oates et al. (2014)

This article takes the work of Oates et al. (2014), which won the best paper award at the European Conference on Computational Biology (ECCB) in 2014, further in four respects, related to accuracy and efficiency, model selection, benchmarking, and application expansion. An overview can be found in Fig. 1.

1.1 Accuracy and efficiency

Robust gradient estimation is absolutely critical for semi-mechanistic modelling. The numerical differentiation proposed in Oates et al. (2014) is known to be susceptible to noise amplification. We here propose the application of Gaussian process (GP) regression and the exploitation of the fact that under fairly general assumptions, GPs are closed under differentiation. Our approach effectively implements a low-pass filter that counteracts the noise amplification of the differentiation step, and we quantify the boost in network reconstruction accuracy that can be achieved in this way. We further critically assess the influence of the parameter prior in the underlying Bayesian hierarchal model. In particular, we compare the g-prior with the ridge regression prior (see e.g. Chapter 3 in Marin and Robert (2007)) in the context of the proposed semi-mechanistic model and demonstrate that the latter significantly improves both accuracy and computational efficiency.

1.2 Model selection

Network reconstruction is effectively based on statistical model selection. The model selection paradigm applied in Oates et al. (2014)—computing the log marginal likelihood (MLL) with Chib’s method—is not uncontroversial. Conceptually, alternatives to the MLL based on predictive performance have been promoted (see e.g. Sect. 7.4 in Gelman et al. (2014a)). Numerically, Chib’s method can give inaccurate results, as discussed e.g. in Murphy (2012), Chapter 24. In this article, we assess four numerical approximation procedures for the MLL in the context of semi-mechanistic models: Chib’s original method (Chib and Jeliazkov 2001), Chib’s method with a numerical stabilization, thermodynamic integration (Friel and Pettitt 2008), and a numerically stabilized version of thermodynamic integration (Friel et al. 2013). We further carry out a comparative evaluation between the MLL and four information criteria (IC) as approximations to the predictive performance paradigm promoted in Gelman et al. (2014a): ’divergence IC’ (DIC), ’widely applicable IC’ (WAIC), ’cross-validation IC’ (CVIC), and ’widely applicable Bayesian IC’ (WBIC).

Overview of the ANOVA pipeline. Based on a mathematical formulation in terms of Markov jump processes, realistic mRNA concentration time series are generated from a collection of gene regulatory networks, and the transcription rate (temporal gradient) is computed with two alternative methods: numerical differentiation versus GP regression. The proposed iCheMA model is compared with 10 established state-of-the-art network reconstruction methods, each predicting a ranking of the potential interactions (edges) in the network. From these rankings, the areas under the ROC (AUROC) and precision-recall curve (AUPRC) are computed, and provide a score for the accuracy of network reconstruction. An ANOVA scheme is applied to disentangle the effects of network topology, gradient computation and reconstruction method, for clearer recognition of trends and patterns

1.3 Benchmarking

Assessing methodological innovation calls for an objective performance evaluation. We have carried out a comprehensive comparative evaluation of the proposed semi-mechanistic model with 11 state-of-the-art network inference methods from computational statistics and machine learning, based on a realistic stochastic process model of the underlying molecular processes (Guerriero et al. 2012) and six distinct regulatory networks with different degrees of connectivity. The analysis of such a complex simulation study is hampered by the influence of various confounding factors, which tend to blur naive graphical representations. We therefore apply an ANOVA scheme, which enables us to disentangle the various effects and thereby extract clear trends and patterns in the results. In this way we can show that by integrating prior domain knowledge via a system-specific mathematical representation, the resulting semi-mechanistic model can significantly outperform state-of-the-art generic machine learning and computational statistics methods. We provide an application pipeline (Fig. 2) with which a user can objectively quantify this performance gain.

1.4 Application extension

Finally, we adapt the method from the modelling of protein signalling cascades in Oates et al. (2014) to transcriptional gene regulation and include an explicit model of transcriptional time delays. In Appendix 4 we provide a novel application of the proposed semi-mechanistic model to plant systems biology, where the objective is to infer the structure of the key gene regulatory network controlling circadian regulation in Arabidopsis thaliana.

Probabilistic graphical model representation of semi-mechanistic models. The figure shows a probabilistic graphical model representation of the semi-mechanistic models investigated in our study. Top panel CheMA, as proposed by Oates et al. (2014). Bottom panel The new variant of CheMA (iCheMA), proposed here

2 Model

2.1 Interaction model

Inference of networks from data has become a topical theme in various scientific disciplines, particularly in systems biology. Here, rather than merely aiming for a descriptive representation of associations, the objective is a quantitative mathematical description of the processes that lead to the formation of an interaction network (e.g. a ‘biopathway’ or a ‘biochemical reaction network’). A standard approach is to model this network with a system of ordinary differential equations (ODEs):

Here, \(i\in \{1,\ldots ,n\}\) denotes one of n components of the system, which is called a ‘node’. In systems biology, this is typically a gene or a protein. The variable \(x_i(t)\) denotes a measurable concentration of node i at time t. This can, for instance, be a gene expression or mRNA concentration. The vector \(\pi _i(t)\) contains the concentrations of the regulators of node i. In a network, the regulators of node i are those nodes with a directed edge (or arrow) pointing to node i. Finally, the differential equations depend on a parameter vector \(\varvec{\theta }\). In systems biology, these parameters are typically reaction rates that determine the kinetics of the underlying reactions. A specific example, taken from Barenco et al. (2006), is given in Sect. 2.3. What makes network inference in the context of such a mechanistic description particularly challenging is the fact that the parameters \(\varvec{\theta }\) are typically not measurable, or that only a small fraction of them can be measured. Hence, the elucidation of the interaction network structure requires these parameters to be inferred from concentration time series, which are typically sparse and noisy. To avoid the computational complexity of numerically solving the ODEs, we follow Oates et al. (2014) and use gradient matching. The idea, first proposed by Ramsay et al. (2007), is to estimate the time derivatives \(\frac{d x_i}{dt}\) directly from the data, then treat the problem as nonlinear regression. On the assumption that the estimated derivatives can be treated like noisy data distributed around the predicted derivatives, and this distribution is iid normal, we obtain for the likelihood:

where \(y_i(t_j)= \frac{d x_i(t)}{dt}|_{t=t_j}\), and \(\mathscr {N}(.|\mu ,\sigma ^2)\) is a normal distribution with mean \(\mu \) and variance \(\sigma ^2\). Oates et al. (2014) obtained the temporal derivatives \(y_i(t_j)\) (\(j=1,\ldots ,T\)) by differencing the time series \(x_i(t_1),\ldots ,x_i(t_T)\), based on the Euler equation. However, differencing is known to lead to noise amplification (see e.g. Chatfield (1989)). In the present work, we apply a GP to smooth interpolation and exploit the fact that GPs are closed under differentiation, i.e. provided the kernel is differentiable, the derivative of a GP is also a GP, and its covariance matrix can be derived (Solak et al. 2002; Holsclaw et al. 2013).Footnote 1 We provide more details in the following section.

2.2 Rate (or gradient) estimation

The fundamental concept of the interaction model is the matching of gradients between the regulator variables on the right-hand side and the rate of mRNA concentration change \(\frac{dx_i(t)}{dt}\) on the left-hand side of Eq. (1). Since direct measurements of these rates are typically missing, we derive a rate estimate from the available concentration measurements at the time points \(t^{\star }\in \{t_1,\ldots ,t_T\}\). A common procedure, which is also used by Oates et al. (2014), is to calculate the slope of the concentration change at each time point \(t^{\star }\) with the finite difference quotients

This numerical procedure can yield good approximations to the true rates of concentration change if the data \(x_i\) is relatively precise, i.e. the signal-to-noise ratio is high. If the data is noisy, however, the rates from the difference quotient are susceptible to distortions as a consequence of noise amplification, as mentioned above. We here propose the application of GP regression to counteract this noise amplification. A GP defines a prior distribution over functions g(.) that transform input data points, defined here as time points \(\mathbf{t}= (t_1, \ldots , t_T)\), into output data points, defined here as the concentration vector \(\mathbf{x}_i= (x_i(t_1), \ldots , x_i(t_T))\) for species i such that \(x_i(t^*) = g(t^*)\). The joint prior distribution over the functions \(p(g(t_1), \ldots , g(t_T))\) is Gaussian distributed and commonly has a zero mean and a covariance matrix \(\mathbf{G}\) with independent and identically distributed (iid) additive noise \(\sigma ^2_n\):

where I is the identity matrix. The key idea of the GP is that the elements \(p, q \in \{1, \ldots , T\}\) of the covariance matrix \(\mathbf{G}\) are calculated from a kernel function with \(G_{pq} = \kappa (t_p, t_q)\), which is typically chosen in such a way that for similar points \(t_p\) and \(t_q\), the corresponding values \(x_i(t_p)\) and \(x_i(t_q)\) are stronger correlated than for dissimilar arguments. Widely used kernel functions that are also applied in this paper are the radial basis function (RBF), the periodic function (PER), or the Matérn class function (MAT); see Chapter 4 in Rasmussen and Williams (2006) for the explicit mathematical expressions. By taking the first derivative of the kernel function \(\kappa '(t_p, t_q)\) we obtain a prior distribution \(p(\mathbf{g})\) over functions that define the first temporal derivative, i.e. the concentration gradients, for each of the time points in \(\mathbf{t}\). Provided the kernel function is differentiable, this is again a valid GP. The simplest approach is to compute the expectation over these functions and thus obtain a mean estimate of the analytical solution for the gradients at each time point. For the explicit mathematical expression, see e.g. Eq. (1) in Holsclaw et al. (2013). This acts as a proxy for the missing rates \(y_i(t)\) on the left-hand side of Eq. (1).Footnote 2 In Appendix 2 we describe the details of the GP application and the software we used.

2.3 Model and prior distributions

Equation (1) typically takes the form (Barenco et al. 2006)

Setting \(c_i=0\) and employing Michaelis–Menten kinetics yields the CheMA approach (Oates et al. 2014):Footnote 3

where the sum is over all species u that are in the set of regulators \(\pi _i\) of species i, and the indicator functions \(I_{u,i}\) indicate whether species u is an activator (\(I_{u,i}=1\)) or inhibitor (\(I_{u,i}=0\)). The first term, \(-v_{0,i} x_i(t^{\star })\), takes the degradation of \(x_i\) into account, while \(v_{u,i}\) and \(k_{u,i}\) are the maximum reaction rate and Michaelis–Menten parameters for the regulatory effect of species \(u\in \pi _i\) on species i, respectively. Equation (6) represents the typical form of transcriptional regulation without complex formation; see e.g. the supplementary material in Pokhilko et al. (2010) and Pokhilko et al. (2012). We discuss the limitations caused by complex formation in Sect. 6.5. Without loss of generality, we now assume that \(\pi _i\) is given by \(\pi _i= \{x_1,\ldots ,x_s\}\). Eq. (6) can then be written in vector notation:

where \({\mathbf{V}}_i=(v_{0,i},v_{1,i}\ldots ,v_{s,i})^{\top }\) is the vector of the maximum reaction rate parameters, and the vector \({\mathbf{D}}_{i,t^{\star }}\) depends on the measured concentrations \(x_u(t^{\star })\) and the Michaelis–Menten parameters \(k_{u,i}\) (\(u\in \pi _i\)) via Eq. (6):

We combine the Michaelis–Menten parameters \(k_{u,i}\) (\(u\in \pi _i\)) in a vector \({\mathbf{K}}_i\), and we arrange the T row vectors \({\mathbf{D}}_{i,t^{\star }}^{\top }\) (\(t^{\star }\in \{t_1,\ldots ,t_T\}\)) in a T-by-\((|\pi _i|+1)\) design matrix \({\mathbf{D}}_i={\mathbf{D}}_i({\mathbf{K}}_i)\). The likelihood is then:

where \({\mathbf{y}}_i:=(y_i(t_1),\ldots ,y_i(t_T))^{\top }\) is the vector of the rates or gradients for species i. To ensure non-negative Michaelis–Menten parameters, truncated Normal prior distributions are used:

where \({\mathbf{1}}\) is a vector of ones, \({\mathbf{I}}\) is the identity matrix, \(\nu >0\) is a hyperparameter, and the subscript, \(\left\{ {\mathbf{K}}_i\ge 0 \right\} \), indicates the truncation condition, i.e. that each element of \({\mathbf{K}}_i\) has to be non-negative. In the original CheMA model (Oates et al. 2014) a truncated g-prior is imposed on the maximum reaction rate vectors \({\mathbf{V}}_i\):

where \({\mathbf{D}}_i={\mathbf{D}}_i({\mathbf{K}}_i)\), and a Jeffrey prior is used for the noise variance: \(p(\sigma _i^2)\propto \sigma _i^{-2}\). In Sect. 6.2 we demonstrate an intrinsic shortcoming of the g-prior, and we show that the model can be significantly improved by employing a truncated ridge regression prior instead:

where \(\delta ^2_i\) is a new hyperparameter which regulates the prior strength. For \(\sigma _i^2\) and \(\delta ^2_i\) we use inverse Gamma priors, \(\sigma _i^{2}\sim IG(a_{\sigma },b_{\sigma })\) and \(\delta ^2_i \sim IG(a_{\delta },b_{\delta })\). Graphical model representations for both models CheMA and iCheMA are provided in Fig. 3. For inference of the iCheMA model the MCMC sampling scheme in Oates et al. (2014) has to be modified. The new full conditional distributions can be derived from the equations in Sect. 3.2 of Marin and Robert (2007). The details are given in Sect. 3.1, and pseudo-code of the MCMC algorithm is provided in Table 1. Table 2 shows that the replacement of Eq. (11) by Eq. (12) yields a substantial reduction of the computational costs of the MCMC scheme. Figure 6 in Sect. 6.2 shows that this replacement can also lead to a significantly improved network reconstruction accuracy.

3 Inference

3.1 Posterior inference

We refer to the proposed new variant of the CheMA model, which employs an analytical rather than a numerical gradient and replaces the truncated g-prior in Eq. (11) by the truncated ridge regression prior in Eq. (12), as the improved CheMA (iCheMA) model. For iCheMA, as outlined in Sect. 2.3, the Metropolis-within-Gibbs Markov Chain Monte Carlo (MCMC) sampling scheme proposed by Oates et al. (2014) has to be modified. In the new variant (iCheMA) we replace the truncated g-prior on \({\mathbf{V }}_i\) by the truncated ridge regression prior, we use a conjugate inverse Gamma prior rather than a Jeffrey’s prior for the noise variance \(\sigma _i^2\), and we introduce a new hyperparameter \(\delta _i^2\). We thus have to revise the sampling steps of the original MCMC inference algorithm. We also replace the approximate collapsed Gibbs sampling step for the noise variance \(\sigma _i^2\) from Oates et al. (2014) by an exact uncollapsed Gibbs sampling step. For computing the posterior distribution of the noise variance \(\sigma _i^2\),

Oates et al. (2014) approximate the marginalization integral

with the closed form solution from Marin and Robert (2007), Chapter 3. This is exact if there are no restrictions on the integration bounds. However, given the underlying positivity constraint for \({\mathbf{V}}_i\), symbolically \(\{{\mathbf{V}}_i\ge 0\}\), the integral is no longer analytically tractable and the expressions for Eqs. (13, 14) used in Oates et al. (2014) become an approximation.Footnote 4 We therefore switch to an uncollapsed Gibbs sampling step, where \(\sigma _i^2\) is sampled from the full conditional distribution \(p(\sigma _i^2|{\mathbf{K}}_i,{\mathbf{V}}_i,{\mathbf{y}}_i)\) and the marginalization integral from Eq. (14) becomes obsolete.Footnote 5

For species i and a given regulator set \(\pi _i\) we have to sample the maximum reaction rate vector \({\mathbf{V }}_i\), the Michaelis–Menten parameter vector \({\mathbf{K}}_i\), the noise variance \(\sigma _{i}^{2}\), and the new hyperparameter \(\delta _{i}^{2}\) from the posterior distribution:

where \({\mathbf{y}}_i:=(y_i(t_1),\ldots ,y_i(t_T))^{\top }\) is the vector of rates or gradients. For the full conditional distribution of \({\mathbf{V}}_i\) we get:

Since \({\mathbf{K}}_i\), \(\sigma _i^2\), and \(\delta _i^2\) are fixed in Eq. (16) and the (truncated) Gaussian prior on \({\mathbf{V}}_i\) from Eq. (12) is conjugate to the likelihood in Eq. (9), we obtain:

where \(\tilde{\varvec{\varSigma }} = \delta _{i}^{2} ({\mathbf{I}} + \delta _{i}^{2} {\mathbf{D}}_i^{\top } {\mathbf{D}}_i)^{-1}\), \(\tilde{\varvec{\mu }} = \tilde{\varvec{\varSigma }} (\delta _{i}^{-2} {\mathbf{1}} + {\mathbf{D}}_i^{\top } {\mathbf{y}}_i )\), and \({\mathbf{D}}_i = {\mathbf{D}}_i({\mathbf{K}}_i)\) is the design matrix, built from the rows given in Eq. (8). For the full conditional distribution of \(\delta _i^2\) we have:

As \({\mathbf{V}}_i\) and \(\sigma _i^2\) are fixed in Eq. (18) and the inverse Gamma prior on \(\delta _i^2\) is conjugate for \(p({\mathbf{V}}_i|\sigma _i^2,\delta _i^2)\), defined in Eq. (12), we obtain:

with \(\tilde{b}_{\delta } = b_{\delta } + \frac{1}{2} \sigma _{i}^{2} ({\mathbf{V }}_i - {\mathbf{1}})^{\top } ({\mathbf{V }}_i - {\mathbf{1}})\), and \(\tilde{a}_{\delta } = a_{\delta } + \frac{1}{2} ((|\pi _i|+1)\). For the full conditional distribution of \(\sigma _i^2\) we have:

As \({\mathbf{K}}_i\), \({\mathbf{V}}_i\), and \(\delta _i^2\) are fixed in Eq. (20) and the Gaussian–Inverse–Gamma prior on \(({\mathbf{V }}_i,\sigma _i^2)\) is conjugate for the likelihood in Eq. (9), we get:

where \(\tilde{b}_{\sigma } = b_{\sigma } + \frac{1}{2} [ ( {\mathbf{y}}_i - {\mathbf{D}}_i{\mathbf{V }}_i)^{\top } ({\mathbf{y}}_i - {\mathbf{D}}_i{\mathbf{V }}_i) + \delta _{i}^{2} ({\mathbf{V }}_i - {\mathbf{1}})^{\top } ({\mathbf{V }}_i - {\mathbf{1}})]\), and \(\tilde{a}_{\sigma } = a_{\sigma } + \frac{1}{2} (T + |\pi _i|+1)\). For the mathematical details see, e.g., Chapter 3 of Marin and Robert (2007).

The full conditional distribution of \({\mathbf{K}}_i\) cannot be computed in closed-form so that the Michaelis–Menten parameters have to be sampled by Metropolis–Hastings (MH) MCMC steps. Given the current vector \({\mathbf{K}}_i\), a realization \({\mathbf{u}}\) from a multivariate Gaussian distribution with expectation vector \({\mathbf{0}}\) and covariance matrix \({\varvec{\varSigma }}=0.1 \cdot {\mathbf{I}}\) is sampled, and we propose the new parameter vector \({\mathbf{K}}_i^{\star }={\mathbf{K}}_i+{\mathbf{u}}\) subject to a reflection of negative values into the positive domain. The MH acceptance probability for the new vector \({\mathbf{K}}_i^{\star }\) is then \(A({\mathbf{K}}_i,{\mathbf{K}}_i^{\star })=\min \left\{ 1,R({\mathbf{K}}_i,{\mathbf{K}}_i^{\star })\right\} \), with

where the Hastings-Ratio (HR) is equal to one, and the prior probability ratio (PR) depends on the model variant.Footnote 6 For the original CheMA model (Oates et al. 2014) we obtain from Eq. (11):

For the proposed new variant (iCheMA) we get from Eq. (12):

If the move is accepted, we replace \({\mathbf{K}}_i\) by \({\mathbf{K}}_i^{\star }\), or otherwise we leave \({\mathbf{K }}_i\) unchanged. Pseudo code of the MCMC sampling scheme for the new model variant (iCheMA) is provided in Table 1. Table 2 shows that the replacement of Eq. (23) by Eq. (24) yields a substantial reduction of the computational costs of the MCMC inference.

3.2 Model selection

The ultimate objective of inference is model selection, i.e. to infer the n regulator sets \(\pi _i\) (\(i=1,\ldots ,n\)) of the interaction processes described by Eq. (6). We compare and critically assess five alternative paradigms: the divergence information criterion (DIC), proposed by Spiegelhalter et al. (2002), the ’widely applicable information criterion’ (WAIC), proposed by Watanabe (2010), the ’widely applicable Bayesian information criterion’ (WBIC), proposed by Watanabe (2013), the cross-validation information criterion (CVIC) , proposed by Gelfand et al. (1992), and the marginal likelihood (also called ’model evidence’). For the ’marginal likelihood’ paradigm, we compare two numerical methods: Chib’s method (Chib), proposed by Chib and Jeliazkov (2001), and thermodynamic integration (TI), proposed by Friel and Pettitt (2008). For the latter, we have further assessed the numerical stabilization of the numerical integration proposed by Friel et al. (2013) in a simulation study, which can be found in Appendix 3.

3.3 Posterior probabilities of interactions

For the improved variant of the CheMA model (iCheMA) we follow Oates et al. (2014) and perform ’model averaging’ to compute the marginal posterior probabilities of all regulator-regulatee interactions (i.e. the ’edges’ in the interaction graph). The marginal posterior probability for species u being a regulator of i is given by:

where \(\varPi \) is the set of all possible regulator sets \(\pi _i\) of species i, and \(\varPi ^{(u\rightarrow i)}\) is the set of all regulator sets \(\pi _i\) of i that contain the regulator u. For simplicity, we chose a uniform prior for \(\pi _i\) subject to a maximum cardinality of 3 for the set of regulators (’parents’) of a node.

3.4 Network inference scoring scheme

For the CheMA model (Oates et al. (2014)) and the novel model variant (iCheMA) the marginal interaction posterior probabilities in Eq. (25) can be used to rank the network interactions in descending order. If the true regulatory network is known, this ranking defines the receiver operating characteristic (ROC) curve (Hanley and McNeil 1982), where for all possible threshold values, the sensitivity (or recall) is plotted against the complementary specificity. By numerical integration we then obtain the area under the curve (AUROC) as a global measure of network reconstruction accuracy, where larger values indicate a better performance, starting from AUROC = 0.5 to indicate random expectation, to AUROC = 1 for perfect network reconstruction. A second well established measure that is closely related to the AUROC score is the area under the precision recall curve (AUPREC), which is the area enclosed by the curve defined by the precision plotted against the recall (Davis and Goadrich 2006). AUPREC scores have the advantage over AUROC scores that the influence of large quantities in false positives can be identified better through the precision. These two scores (AUROC and AUPREC) are widely applied in the systems biology community to score the global network reconstructions accuracy (Marbach et al. 2012).

3.5 Causal sufficiency

In Appendix 1 we discuss causal sufficiency and how its violation affects the inference of regulatory network structures.

Hypothetical gene regulatory network of the circadian clock in A. thaliana, based on Pokhilko et al. (2010). The network displayed in the top left panel is the P2010 network proposed by Pokhilko et al. (2010). The other networks are pruned versions of that network, corresponding to certain protein knock-downs, as noted in each network title. These networks are used for the realistic data simulations, described in Sect. 5.2. Grey boxes group sets of regulators or regulated components. Arrows symbolize activations and bars inhibitions. Solid lines show protein–gene interactions; dashed lines show protein interactions; and the regulatory influence of light is symbolized by a sun symbol. Figure reproduced from Aderhold et al. (2014)

4 Evaluation

4.1 ANOVA

Like other network reconstruction models, CheMA and the novel iCheMA model yield a ranking of the regulatory interactions. Hence, if the true interaction network is known, AUROC and AUPREC scores can be computed, as explained in Sects. 3.3 and 3.4. For our performance evaluation on realistic network data, described in Sect. 5.2, we were running simulations for different settings, e.g. related to different regulatory network structures, shown in Fig. 4, and different inference methods. In order to distinguish the relevant effects from the confounding factors, we adopted the DELVE evaluation procedure for comparative assessment of classification and regression methods in Machine Learning (Rasmussen 1996; Rasmussen et al. 1996). That is, we set up a multi-way analysis of variance (ANOVA) scheme to disentangle the factors of interest. For instance, if there are three main effects (A, B, and C), and \(y_{ijkl}\) is the l-th AUROC (or AUPREC) score obtained for the constellation \(A=i\), \(B=j\) and \(C=k\), then we set up a 3-way ANOVA scheme:

where \(\varepsilon _{ijkl}{\sim }N(0,\sigma ^2)\) is zero-mean white additive Gaussian noise. We then computed ANOVA confidence intervals, e.g. for the effects of \(A=1\), \(A=2,\ldots \) on the AUROC scores (i.e. confidence intervals for the parameters \(A_1, A_2,\ldots \)); see, e.g., Brandt (1999) for details.

Thereby the main effects of the ANOVA models varied in dependence on the addressed research question. Throughout Sect. 6 we use the following five main effect symbols:

-

\({\mathbf{N_n}}\) (\(n=1,\ldots ,6\)) is the effect of the Network structure, see Fig. 4 for the six structures,

-

\({\mathbf{K_k}}\) (\(k=1,\ldots 4\)) is the effect of the GP Kernel, used for the computation of the analytical gradient (’RBF’, ’PER’, ’MAT32’, or ’MAT52’),

-

\({\mathbf{G_g}}\) (\(g=1,2\)) is the effect of the Gradient type, i.e. numerical (’difference quotient’) versus analytical (’GP interpolation’),

-

\({\mathbf{M_m}}\) (\(m=1,\ldots ,12\)) is the effect of the inference Method, i.e. the iCheMA model and eleven competing methods, listed in Table 5,

-

and \({\mathbf{P_p}}\) (\(p=1,2\)) is the effect of the Prior on \({\mathbf{V}}_i\) (i.e. the g-prior from Eq. (11) versus the ridge regression prior from Eq. (12).

In Appendix 2, we test the statistical assumptions of the ANOVA scheme in Eq. (26), and we include additional results related to the improvement of GP regression over numerical differentiation, and the influence of the network topology.

4.2 Simulation details

In our study we have included the four IC: DIC, WAIC, CVIC, and WBIC, and we have employed four different numerical methods to approximate the marginal likelihood: Chib’s original method (Chib and Jeliazkov 2001) (Chib naive), a stabilized version of Chib’s method (Chib), proposed here, thermodynamic integration with the trapezoid rule (TI), see Eq. (38), and thermodynamic integration with the numerical correction (TI-STAB), see Eq. (41).

The computation of the model selection scores (DIC, WAIC, CVIC, WBIC and the MLL with both Chib’s method and TI) requires MCMC simulations; pseudo code can be obtained from Table 1. We monitored the convergence of the MCMC chains with standard convergence diagnostics based on potential scale reduction factors (Gelman and Rubin 1992). The application of Chib’s method is based on the selection of a particular ’pivot’ parameter vector \(\tilde{\varvec{\theta }}\), as described under Eq. (34). Initially, we chose \(\tilde{\varvec{\theta }}\) to be the MAP (maximum a posteriori) estimator from the entire MCMC simulation. This was found to lead to numerical instabilities, though, as seen from the right panel of Fig. 8. We found a way to numerically stabilize Chib’s method, which we discuss in Appendix 1. We also found that the transition from TI to TI-STAB proposed by Friel et al. (2013) can be counter-productive, as seen from Table 4. We have investigated this unexpected effect in more detail in a simulation study in Appendix 3. We also found that the improved variant of CheMA (iCheMA) substantially reduces the computational costs of the MCMC-based inference, as shown in Table 2 and discussed in more detail in Appendix 1.

5 Data

5.1 Synthetic data

We generate \(T=240\) data points \(x_s(t_1),\ldots ,x_s(t_T)\) for \(n=4\) species (\(s=1,\ldots ,4\)) from iid standard Gaussian distributions. Subsequently, to obtain non-negative concentrations, the observations of each individual species are shifted such that the lowest value is equal to 0, before we follow Oates et al. (2014) and re-scale the observations of each species to mean 1. With \(x_1\) taking the role of the degradation process and \(x_2\) being an activating regulator (\(I_{2,y}=1\)) of a target species, whose gradient y(.) we here assume to be directly observable, we generate target observations \(y(t_j)\) with Eq. (6). We then have for \(j=1,\ldots ,T\):

where \({\mathbf{V}}_y=(v_{0,y},v_{2,y})^\mathsf{T}\) is the vector of maximum reaction rate parameters, \({\mathbf{K}}_y=(k_{2,y})\) contains the Michaelis–Menten parameter(s), and \(\epsilon _{t_j} \sim \mathscr {N}(0,\sigma ^2)\) is additive iid Gaussian noise (\(j=1,\ldots ,T\)). We keep the Michaelis–Menten parameter fixed at \(k_{2,y}=1\), while we vary the rates \(v_{0,y}\) and \(v_{2,y}\), as indicated in Table 3. Our goal is to infer the set of regulators \(\varvec{\pi }\!_{y}\) of y out of all subsets of \(\left\{ x_2,x_3,x_4 \right\} \), where \(\varvec{\pi }\!_{y}=\left\{ x_2 \right\} \) is the true regulator set. The effect of the degradation, taken into account by \(x_1\), is included in all 8 models.

5.2 Realistic data

For an objective model evaluation, we use the benchmark data from Aderhold et al. (2014), which contain simulated gene expression and protein concentration time series for ten genes in the circadian clock of A. thaliana. The time series correspond to measurements in 2-h intervals over 24 h, and are repeated 11 times, corresponding to different experimental conditions. We use time series generated from six variants of the circadian gene regulatory network in A. thaliana, shown in Fig. 4; these variants correspond to different protein, i.e. transcription factor, knock-downs. The molecular interactions in these graphs were modelled as individual discrete events with a Markov jump process, using the mathematical formulation from Guerriero et al. (2012) and practically simulated with Biopepa (Ciocchetta and Hillston 2009), based on the Gillespie algorithm (Gillespie 1977). For large volumes of cells, the concentration time series converge to the solutions of ODEs of the form in Eq. (5). However, for smaller volumes, time series simulated with Markov jump processes contain stochastic fluctuations that mimic the mismatch between the ODE model and genuine molecular processes, and the volume size was chosen as described in Guerriero et al. (2012) so as to match the fluctuations observed in real quantitative reverse transcription polymerase chain reaction (qRT-PCR) profiles. For the network reconstruction task, we only kept the gene expression time series and discarded the protein concentrations; this emulates the common problem of systematically missing values for certain types of molecular species (in our case: protein concentrations).

5.3 Real data

We have applied the iCheMa model to gene expression time series obtained with real-time polymerase chain reaction experiments to predict the circadian regulatory network in Arabidopsis thaliana. All details and the results can be found in Appendix 4.

6 Results

This section discusses the effect of gradient approximation (Sect. 6.1), the influence of the prior (Sect. 6.2), the accuracy of model selection (Sect. 6.3), the relative performance compared to the current state of the art (Sect. 6.4), and the problem of model mismatch (Sect. 6.5).

6.1 Evaluating the effect of the gradient computation

To illustrate the difference in the accuracy of network inference between a numerically calculated gradient using the difference quotient defined in Eq. (3) and an analytical gradient using a GP, we applied both gradient types to the realistic data of Sect. 5.2 and evaluated the performance of the methods listed in Table 5 together with iCheMA. The difference quotient was calculated with a time difference of \(\delta _t = 2\) hFootnote 7, and the analytical gradient was calculated with a GP using a RBF kernel as described in Sect. 2.2. The results of a preliminary study, in which we investigated the effect of the GP kernel on the network reconstruction accuracy, can be found in Appendix 2. We have recorded the AUROC and AUPREC scores for all the different conditions mentioned in Sect. 5.2, and summarize the outcome with an ANOVA analysis that treats the different conditions and methods as distinct effects. Fig. 5a shows that the results for the data with the analytical rate estimation significantly improves the performance over the numerical difference quotient while taking all methods of Table 5 and iCheMA into account. The same trend can be observed in Fig. 5b, which only considers the results for iCheMA. Hence, we conclude that network reconstruction accuracy significantly improves when an analytically derived gradient using a GP is used instead of the numerical difference quotient used in Oates et al. (2014). This can be observed for both a broad variety of different network reconstruction methods as well as specifically for the iCheMA method.

Effect of the gradient type: numerical versus analytical. For the realistic network data from Sect. 5.2 we compared the network reconstruction accuracy of a numerical and an analytical gradient. The numerical gradient was computed with the difference quotient, as proposed in Oates et al. (2014), and a time difference of \(\delta _t = 2\) h. The analytical gradient, proposed here, was derived from the derivative of a Gaussian process (GP) using the radial basis function kernel with optimized parameters, as implemented in the Matlab library gpstuff. Both panels show the mean AUROC and AUPREC scores with confidence intervals for the effect of the gradient type derived from ANOVA models. In a all methods listed in Table 5 and iCheMA were included so that the ANOVA model has 3 effects: gradient type \(G_g\), network structure \(N_n\), and method \(M_m\): \(y_{gnml} = G_g + N_n + M_m + \varepsilon _{gnml}\), see Sect. 4.1 for details. b shows the confidence intervals for iCheMA only, i.e. for an ANOVA model with \(M_m\) being removed

6.2 Evaluating the influence of the parameter prior

To evaluate the influence of the parameter prior on model selection, we computed the MLL for the g-prior in Eq. (11) and the ridge regression prior in Eq. (12) for the synthetic data from Sect. 5.1. For each of the 9 parameter settings, shown in Table 3, and 4 different noise variances \(\sigma ^2\) we generated 10 independent data instantiations from Eq. (27). We then applied the stabilized Chib method (Chib) for approximating the MLLs for all possible regulator–regulatee configurations, and computed the logarithmic Bayes factors (i.e. the differences of the MLLs) between the true and each wrong model. Fig. 6 gives the average logarithmic Bayes factors (averaged across the \(9\times 4=36\) parameter settings) for 10 independent replications. In Fig. 6 the distributions of the MLL score differences are represented with boxplots, where positive values indicate that the true structure is correctly selected, whereas negative values indicate that the wrong alternative structure is erroneously selected. It can be seen that regulator sets that do not contain the true regulator (corresponding to the three rightmost box plots) are clearly rejected. However, for the over-complex alternative models (three leftmost box plots), which contain spurious regulators, the MLL score difference obtained with the g-prior fails to consistently favour the true model. Two out of three differences are negative in Fig. 6a, indicating that an over-complex model is preferred to the true one. For the proposed ridge regression prior, on the other hand, the MLL score difference does succeed in consistently favouring the true structure, as displayed in Fig. 6b. We had a closer look at the results for the individual parameter sets, shown in Fig. 7. This figure reveals that the g-prior performed well for some parameter settings, but not for others. In particular, we found that the g-prior systematically fails when ’\(v_{0,y}<1\le v_{2,y}\)’ (see Table 3). In Appendix 1 we provide a theoretical explanation for this trend.

Comparison of CheMA and iCheMA: effect of the prior. The plots show the differences in the log marginal likelihoods (MLL) between the true regulator–regulatee model and six alternative wrong models, obtained with CheMA/iCheMA for the data from Sect. 5.1. There are three potential regulators \(\{x_2,x_3,x_4\}\) in the system, with \(\varvec{\pi }\!_{y}=\{x_2\}\) (’[2]’) being the true regulator of the response y. The configurations on the horizontal axis define alternative regulator configurations. The log score differences have been averaged across the nine parameter configurations, shown in Table 3, and four noise settings (\(\sigma ^2= 0.05,0.1,0.2,0.4\)). The box plots show the distributions of the average log score differences for 10 independent data instantiations. Positive values indicate that the true model was identified correctly; for negative differences, the wrong model had a higher score and would thus be erroneously selected. The results for the g-prior (CheMA) from Eq. (11) are shown in (a); the results for the ridge regression prior (iCheMA) from Eq. (12) are shown in (b)

Detailed Chib log marginal likelihood (MLL) scores for the original CheMA method with g-Prior (a) and a modified method with a ridge prior (b). Detailed plots from which the average score distributions in Fig. 6 are derived. Each of the nine slots along the horizontal axis corresponds to one parameter configuration of \((v_{0,y},k_{2,y},v_{2,y})\) as displayed in Table 3 and used for the synthetic data in Sect. 5.1. The slots contain the Chib MLL difference scores between a wrong parent configurations (the parent configurations are aligned from left to right in each slot according to the order in the legend) and the true parent set ([2]). Positive values indicate that the true parent configuration receives a higher score

6.3 Model selection

We used the synthetic data from Sect. 5.1 to cross-compare the performance of the model selection schemes; for an overview see Appendix 1. The MLL based selection procedures score the individual models with respect to the differences in the MLL (or log Bayes factors). We compare these results with the score differences of the various IC. For inference we used the novel iCheMA model, and we varied the hyperparameters of the prior distributions so as to obtain increasingly diffuse prior distributions. In a first scenario we set the hyperparameter \(\delta ^2\) in Eq. (12) to a value sf, which we refer to as ’spread-factor’, and we kept \(\nu =0.5\) in Eq. (10) fixed. In the second scenario we set both hyperparameters \(\delta ^2\) and \(\nu \) to the spread factor sf. Figure 8 shows boxplots of the log score differences between the true model and an over-complex alternative model (with one redundant regulator-variable) for both scenarios and increasing spread factors (sf). Again positive differences indicate that the true structure is correctly selected, whereas negative differences indicate that the alternative over-complex structure is erroneously selected. The overall trend revealed in Fig. 8 is that the log score difference decreases with the spread-factor (i.e. with the diffuseness of the prior) for most of the model selection criteria.

Score difference between the true and an over complex regulator-regulatee configuration for iCheMA, applied with different model selection schemes. Each box plot includes the average results from 10 independent data instantiations for the model of Sect. 5.1. The over-complex configuration includes a spurious regulator. Positive values indicate that the true model is favoured over the over-complex model. DIC, WAIC, CVIC, and WBIC are the IC described in Appendix 1; the methods for calculating the MLL are: a naive implementation of Chib (Chib-naiv), a stabilized version of Chib’s method (Chib), and thermodynamic integration in two variants (TI-4 and TI-8). Both TI-variants were applied with \(K=10\) discretization points and differ w.r.t. the power \(m\in \{4,8\}\) in Eq. (42). The panels demarcated by horizontal lines correspond to different prior distributions of the interaction parameters, characterized by the spread factor sf, ranging from 0.01 to 1e+20. Left panel The prior variance of the Michaelis–Menten parameters in Eq. (10) was kept fixed at \(\nu =0.5\). The spread hyperparameter \(\delta ^2\) for the prior of the reaction rate parameters in Eq. (12) was varied, i.e. \(\delta ^2=sf\). Right panel Both \(\delta ^2\) and \(\nu \) take the value sf, i.e. \(\delta ^2=\nu =sf\). A higher resolution for the IC (plotted on a different scale) is available from Fig. 9

Score differences for iCheMA, for different information criteria. This figure replicates the results from Fig. 8 for the information criteria DIC, WAIC and CVIC, on a different scale for improved resolution, for spread factors (sf) ranging from 10 to \(1e+20\). a is extracted from the left panel of Fig. 8, and b is extract from right panel of Fig. 8. Negative score differences indicate that the over-complex model is favoured over the true one

The parameter priors in Eqs. (10) and (12) are Gaussians centred on \(\mu =1\), with different variances. For low spread-factors sf (i.e. for low prior variances), both groups of criteria (IC and MLL) clearly favour the true model, since the prior ’pulls’ the spurious interaction parameter from its true value of zero towards a wrong value of \(\mu =1\). As the prior becomes more diffuse, the score differences become less pronounced, but still select the true model up to spread factors of about \(sf\approx 100\). As the prior becomes more diffuse, with the spread factor exceeding \(sf> 100\), the IC occasionally fail to select the correct model. A more detailed representation focusing on the IC and larger spread factors is given in Fig. 9. It is seen that among the IC it is mainly DIC that repeatedly fails to select the true model (the central inter-quartile range of the score difference distribution, between the first and third quartile, includes negative values), whereas for the other information criteria the selection of the wrong model is relatively unlikely (the central inter-quartile range does not include negative values). Two of the four MLL methods, namely TI-8 and Chib, start to increasingly favour the true model as the spread factor further increases beyond \(sf> 1000\). This is a consequence of Lindley’s paradox, whereby MLL increasingly penalizes the over-complex model for increasingly vague priors. TI-4, in principle, shows a very similar trend but the score difference is lower than for TI-8, indicating that the choice of the discretization points (i.e. the applied temperature ladder) implied by the power \(m\in \{4,8\}\) in Eq. (42) can critically affect the result.

Among the MLL methods, the naive application of Chib’s method, Chib naive, as proposed in Chib and Jeliazkov (2001), shows a completely different pattern and systematically fails to select the correct model for large spread factors. A theoretical explanation for this instability is provided in Appendix 1. We achieve a stabilization of Chib’s method, referred to as Chib, by selecting the pivot parameter set \(\tilde{\varvec{\theta }}\) with the highest posterior probability within the set of actually sampled parameters (excluding the parameter states from the burn-in phase). We refer to Appendix 1 for details.

The left panel of Fig. 8 shows that the different ways of computing the MLL give very similar results up to a prior spread factor of about 1e+08. For spread factors exceeding this value, the results differ. The MLL computed with Chib’s method (Chib) monotonically increases, as expected from Lindley’s paradox. The MLL computed with TI is obtained without numerical stabilization and reaches a plateau, with different values obtained for different trapezium sum discretization schemes (determined by m in Eq. (42)). This is a numerical discretization error that results from the form of the integrand in Eq. (37), which has most of its area concentrated on values near \(\tau =0\). We tried to stabilize TI with the corrected trapezium rule, replacing Eq. (38) by Eq. (41). Interestingly, this transition from TI to TI-STAB turned out to be occasionally counter-productive, as shown in Table 4. We have investigated the effect of the numerical “stabilization” more thoroughly in a simulation study based on a Bayesian linear regression model. We found that TI-STAB can fail for small numbers of discretization points and diffuse priors. The study and its results can be found in Appendix 3. In our subsequent simulations we use the numerically stabilized variant of Chib’s method (Chib), which has a 10-fold lower numerical complexity compared to TI (because we used 10 different temperatures \(\tau \) for TI).

Network reconstruction for the wildtype network. The scatter plot shows the network reconstruction accuracy for the CheMA model and its new variant iCheMA, using realistic data (see Sect. 5.2) generated from the wildtype network in Fig. 4. Both methods, CheMA and iCheMA, were applied with both a numerical and an analytical gradient

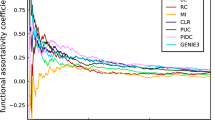

6.4 Comparison with state-of-the-art network reconstruction methods

We have compared the prediction accuracy of the proposed new novel variant (iCheMA) of the semi-mechanistic CheMA model of Oates et al. (2014) with the original CheMA model and 11 state-of-the-art machine learning methods, assessed in Aderhold et al. (2014). These methods are listed in Table 5 and were applied as described in Aderhold et al. (2014). The network reconstruction accuracy performance was tested on the realistic gene expression profiles from Sect. 5.2, based on the six network structures from Fig. 4. The results in terms of AUROC and AUPREC scores are shown in Fig. 11 and demonstrate that the iCheMA model outperforms all alternative methods. The CheMA model of Oates et al. is not included in this comparison. Due to the substantially higher computational costs, the simulations for all six networks in Fig. 4 would require several weeks of computing time on a medium-size cluster, as indicated by Table 2. In order to keep the computational complexity manageable, we compared CheMA and iCheMA in a separate study, using only data from the wildtype network, proposed by Pokhilko et al. (2010) and shown in the top left panel of Fig. 4. Note that for all methods included in Fig.11, the gradient for the response \(\frac{d x_i(t)}{dt}\) of Eq. (6) is derived from an analytical solution of the derivative using a GP with RBF kernel, as described in Sect. 2.2. The original definition of CheMA, on the other hand, uses a numerical gradient estimation. To separate out the effects of gradient estimation and the other differences between the methods, we applied both CheMA and iCheMA with both gradients: the numerical and the analytical gradient. The results are shown in Fig. 10. The two findings of this study are: iCheMA consistently outperforms CheMA, and the analytical gradient leads to a significant improvement in the prediction accuracy over the numerical gradient.

Comparison between the iCheMA model and state-of-the-art network reconstruction methods. The scatter plot shows the network reconstruction accuracy for the novel iCheMA model and the eleven alternative methods from Table 5, using the realistic network data from Sect. 5.2, generated from the six network structures of Fig. 4. All network reconstruction methods used the analytical gradient, obtained from GP regression with an RBF kernel, and the displayed AUROC and AUPREC values are the effects of the method, derived from an ANOVA with 2 effects: method \(M_m\) and network structure \(N_n\): \(y_{mnl} = M_m + N_n + \varepsilon _{mnl}\); see Sect. 4.1 for details

Network structure selection with iCheMA. The figure assesses the network structure identification with iCheMA based on the Biopepa data described in Sect. 5.2. a Heatmaps showing the average ranks (averaged over 5 independent data instantiations) of six candidate networks (shown in Fig. 4) based on MLL (computed with Chib’s method). The rows show the true network from which the data were generated. The columns show the candidate networks used for inference. A rank of 1 (black) in the diagonal indicates that the true network is consistently selected. The mathematical model is based on Eq. (6), i.e. Michaelis–Menten kinetics with interactions between regulators modelled as additive effects. b: Like a, but with multiplicative terms added to the interaction model to allow for protein complex formation

6.5 Model selection for network identification

As a final test, we evaluated the accuracy of model selection for network identification, using the data from Sect. 5.2. These data contain gene expression time series from the six gene regulatory networks of Fig. 4, which contain one wildtype and five mutant networks from protein knock-down experiments. We computed the MLL (with Chib’s method) for each of the candidate networks, for each data set in turn. The evaluation was repeated over five independent data instantiations. For each data set, all models were ranked based on the MLL, and the ranks were averaged over all data sets. This leads to the six-by-six confusion matrix of Fig. 12a, where the rows represent the networks used for data generation, and the columns represent the candidate networks ranked with the MLL. Note that we have emulated the mismatch between data generation and inference characteristic for real applications. For data generation, molecular interactions corresponding to the edges in the network were modelled with complex Markov jump processes, as described in Sect. 5.2, with the intention to mimic real biological processes. For inference and model selection, interactions were modelled with Michaelis–Menten kinetics, corresponding to Eq. (6), and the interactions between different regulators were modelled additively, by adding the Michaelis–Menten terms—this reduction in complexity is required for general computational tractability and scalability. The results are shown in Fig. 12a. The diagonal elements of the matrix show the average ranks for the correct network. Two of the six network structures are consistently correctly identified (average rank 1), but for the other four structures, the average ranks vary between 1.6 and 3. This failure to consistently identify the true network tallies with the fact that the AUROC scores in Fig. 11 are significantly below 1.0. The explanation is that the iCheMA model is currently restricted to additive interactions, as shown in Eq. (6). The data generation process, summarized in Sect. 5.2 and available in complete mathematical description from Guerriero et al. (2012), contains molecular processes related to complex formation (e.g. protein heterodimerization). Complex formation involving two transcription factors a and b acting on target gene i is mathematically described by a product of Michaelis–Menten terms of the form

where the symbols have the same meaning as in Eq. (6); see Pokhilko et al. (2010) for explicit mathematical expressions.

We included prior knowledge about molecular complex formation and expanded the iCheMA model accordingly to include the corresponding product of Michaelis–Menten terms in Eq. (6). We then computed the MLL as before and repeated the analysis. The results are shown in Fig. 12b and demonstrate that, by making the model more faithful to the data-generating process, model selection has substantially improved: the average ranks of the true network (shown in the diagonal elements of the matrix) are never worse than a value of 2 (out of 6), and reach the optimal value of 1 in 50 % of the cases. The corresponding results for the various IC are displayed in Table 6. When restricting the model to additive terms (greater mismatch between data and model), MLL outperforms the IC, as presumably expected. Interestingly, when reducing the mismatch between data and model by including the product terms, two IC, WAIC and CVIC, are competitive with MLL and perform even slightly better. DIC is substantially outperformed by MLL and the competitive information criteria WAIC and CVIC; these findings are consistent with the earlier results from Sect. 6.3. The performance of WBIC lies between WAIC /CVIC and DIC.

7 Discussion

Automatic inference of regulatory structures in our study is based on a bi-partition of the variables into putative regulators (transcription factor proteins) and regulatees (mRNAs) and a physical model of the regulation processes based on Michaelis–Menten kinetics. This effectively conditions the inference on assumed prior knowledge and is, as such, contingent on the accuracy of these assumptions. In our study we have allowed for a mismatch between the assumed prior knowledge and the ground truth. First, the assumed model is deterministic, defined in terms of ordinary differential equations, while the data-generation mechanism is stochastic (simulated with a Markov jump process). Second, the interaction model is additive, while the data-generation mechanism includes multiplicative terms. Third, we have allowed for the possibility of missing data (missing protein concentrations). Our results show that due to this mismatch, the true causal system cannot be learned (see e.g. Fig. 11, which shows AUROC and AUPREC scores clearly below 1). However, our work suggests that causal inference based on a simplified physical model achieves significantly better results than inference based on an empirical model. (See Fig. 11. Note that only iCheMA is based on a physical model; all the other methods use machine learning methods based on empirical modelling). Our study also quantifies how the performance improves as the physical model is made more realistic; see Fig. 12.

Semi-mechanistic modelling is a topical research area, as evidenced by the recent publication by Babtie et al. (2014). Our article complements this work by addressing a different research question. The objective of Babtie et al. is to investigate how uncertainty about the model structure (i.e. the interaction network defined by the ODEs) impacts on parameter uncertainty, and how parameter confidence or credible intervals are systematically underestimated when not allowing for model uncertainty. Our article addresses questions that have not been investigated by Babtie et al. : how accurate is the network reconstruction or ODE model selection, which factors determine it, and to what extent? Our work has been motivated by Oates et al. (2014), and we have shown that the authors’ seminal work, which won the best paper award at ECCB 2014, can be further improved with two methodological modifications: a different gradient computation, based on GP regression, and a different parameter prior, replacing the g-prior used by Oates et al. by the ridge regression prior more commonly used in machine learning. These two priors have e.g. been discussed in Chapter 3 of Marin and Robert (2007), but without any conclusions about their relative merits. Our study provides empirical evidence for the superiority of the ridge regression prior (Fig. 6) in the context of semi-mechanistic models, and a theoretical explanation for the reason behind it (Sect. 6.2). Table 2 shows that the new iCheMA variant reduces the computational costs drastically; a theoretical explanation for the reduction is provided in Appendix 1.

Our work has led to deeper insight into the strengths and shortcomings of different scoring schemes and numerical procedures. We have investigated the effectiveness of DIC as a method of semi-mechanistic model ranking. DIC is routinely used for model selection in Winbugs (Lunn et al. 2012), and the paper in which it was introduced (Spiegelhalter et al. 2002) has got over 5000 citations at the time of the submission of the present article. However, our findings that in the context of network learning DIC often prefers a model with additional spurious complexity over the true model (Fig. 9) questions its viability as a selection tool for semi-mechanistic models.

We have further compared different methods for computing the MLL. We have shown that Chib’s method (Chib and Jeliazkov 2001) can lead to numerical instabilities. These instabilities have also been reported by Lunn et al. (2012) and are presumably the reason why Chib’s method is not available in Winbugs. We have identified the cause of the numerical instability (see Appendix 1), and propose a modified implementation that substantially improves the robustness and practical viability of the method. This modification appears to be even preferable to thermodynamic integration, which at higher computational complexity shows noticeable variation with the discretization of the integral in Eq. (37) and the number of ‘temperatures’. It has been suggested (Friel et al. 2013) that the accuracy of thermodynamic integration can be improved by including second-order terms in the trapezium sum—see Eq. (41)—but the findings of our study are that this correction is no panacea for a general improvement in numerical accuracy, and that there are scenarios where the second-order correction can be counter-productive. It has come to our attention that a more recent method for improving thermodynamic integration has been proposed by Oates et al. (2016). Including this method in our benchmark study would be an interesting project for future research.

Due to the high computational complexity and potential instability of the MLL computation, several articles in the recent computational statistics literature have investigated faster approximate but numerically more stable alternatives. In our work, we have included WAIC, CVIC and WBIC as alternatives to MLL and evaluated their potential for model selection in two benchmark studies. It turns out that these more recent IC significantly outperform DIC (Figs. 8 and 9), and that WAIC and CVIC are compatible in performance with model selection based on the MLL (Table 6). It is advisable that several independent studies for different systems be carried out by independent researchers in the near future, but our study points to the possibility that statistical model selection in complex systems may be feasible at a comparable degree of accuracy but with substantially lower computational costs than with MLL.

Notes

Within this paper we refer to the resulting gradients as the numerical (Oates et al. 2014) and the analytical gradient, proposed here.

Using the whole distribution, as on page 58 of Holsclaw et al. (2013), would give us additional indication of uncertainty akin to a distribution of measurement errors. Due to the increased computational costs (additional matrix operations) this has not been attempted, though.

In the original CheMA model, a different decay term is used (for protein dephosphorylation), and no inhibitory interactions are included for numerical reasons. The linear decay term in Eq. (6) is more appropriate for transcriptional regulation, and the inclusion of inhibitory interactions achieves better results (as shown in Appendix 2).

This issue applies to the CheMA model, as proposed by Oates et al. and to the new improved variant (iCheMA), proposed here. For both models we implement the exact uncollapsed rather than the approximate collapsed Gibbs sampling step for \(\sigma _i^2\).

The truncation of \({\mathbf{V}}_i\) is then automatically properly taken into account, as it will only be conditioned on \({\mathbf{V}}_i\) that fulfil the required constraint.

The HR is equal to 1, as the proposal moves are symmetric. New candidates \({\mathbf{K}}_i^{\star }\) with negative elements are never accepted, as they have the prior probability zero, \(P({\mathbf{K}}_i^{\star })=0\).

In the realistic data study, we assume gene measurements to take place in intervals of \(\delta _t = 2\) h. This mimics typical rates for the qRT-PCR sampling experiments in Flis et al. (2015).

\(\int _a^b f(x) dx = {(b-a)} \frac{f(b)+f(a)}{2} - \frac{(b-a)^3}{12} f``(c)\) for some \(c\in [a,b]\).

Loosely speaking, under ’ideal circumstances’ the MCMC sample should contain parameters with high posterior probabilities, while parameters deviating from the sampled ones, such as \(\tilde{\varvec{\theta }}\), should be assumed to be very unlikely.

Note that \(\mu =1\) is the prior expectation of \(v_{u,i}\) and \(v_{0,i}\), see Eq. (11).

In real-world applications the maximal number of regulators for each species can be restricted to a maximal ’fan-in’ (or ’in-degree’) value, but this fan-in is rarely set to to a value lower than 3. Hence, taking the degradation process (i.e. the first column of the design matrix \({\mathbf{D}}_i\)) into account, the MCMC inference requires at least 4-dimensional multivariate Gaussian integrals to be computed. For completeness, we note that substantially more effective algorithms for computing multivariate Gaussian CDFs are only available for the bivariate and trivariate case, see, e.g., the algorithms in Drezner and Weslowsky (1989) and Genz (2004).

The score in Eq. (60) is the deviation between the true and an approximated log Bayes factor.

A full list of growing conditions and strains is available from the MocklerLab (Mockler et al. 2007) at ftp://www.mocklerlab.org/diurnal/expression_data/Arabidopsis_thaliana_conditions.xlsx

The equation for protein translation and degradation of P only depends on the status of light and darkness. Thus, protein P is produced throughout darkness with a sharp decline at dawn. By multiplication with a continuous (or binary) light indicator in the range [0,1] we obtain a sharp peak at dawn for Light*P. This peak might act as an initial ‘start of day’ impulse for some of the clock genes.

References

Aderhold, A., Husmeier, D., Grzegorczyk, M.: Statistical inference of regulatory networks for circadian regulation. Stat. Appl. Genet. Mol. Biol. 13(3), 227–273 (2014)

Babtie, A.C., Kirk, P., Stumpf, M.P.H.: Topological sensitivity analysis for systems biology. PNAS 111(51), 18,507–18,512 (2014)

Barenco, M., Tomescu, D., Brewer, D., Callard, R., Stark, J., Hubank, M.: Ranked prediction of p53 targets using hidden variable dynamic modeling. Genome Biol. 7(3), r25 (2006)

Brandt, S.: Data Analysis: Statistical and Computational Methods for Scientists and Engineers. Springer, New York (1999)

Chatfield, C.: The Analysis of Time Series. Chapman & Hall, Boca Raton (1989). iSBN 0-412-31820-2

Chib, S., Jeliazkov, I.: Marginal likelihood from the Metropolis-Hastings output. J. Am. Stat. Assoc. 96(453), 270–281 (2001)

Ciocchetta, F., Hillston, J.: Bio-PEPA: a framework for the modelling and analysis of biological systems. Theor. Comput. Sci. 410(33), 3065–3084 (2009)

Davis, J., Goadrich, M.: The relationship between precision-recall and ROC curves. In: Proceedings of the 23rd International Conference on Machine Learning (ICML), ACM, pp. 233–240 (2006)

De Jong, H.: Modeling and simulation of genetic regulatory systems: a literature review. J. Comput. Biol. 9(1), 67–103 (2002)

Drezner, Z., Weslowsky, G.: On the computation of the bivariate normal integral. J. Stat. Comput. Simul. 35, 101–107 (1989)

Flis, A., Fernández, A.P., Zielinski, T., Mengin, V., Sulpice, R., Stratford, K., Hume, A., Pokhilko, A., Southern, M.M., Seaton, D.D., et al.: Defining the robust behaviour of the plant clock gene circuit with absolute rna timeseries and open infrastructure. Open Biol. 5(10), 150,042 (2015)

Fogelmark, K., Troein, C.: Rethinking transcriptional activation in the arabidopsis circadian clock. PLoS Comput. Biol. 10(7), e1003,705 (2014)

Friel, N., Pettitt, A.: Marginal likelihood estimation via power posteriors. J. R. Stat. Soc.: Ser. B (Stat. Methodol.) 70, 589–607 (2008)

Friel, N., Hurn, M., Wyse, J.: Improving power posterior estimation of statistical evidence. Stati. Comput. 24(5), 709–723 (2013)

Gelfand, A.E., Dey, D.K., Chang, H.: Model determination using predictive distributions with implementation via sampling-based methods. Bayesian Stat. 4, 147–167 (1992)

Gelman, A., Rubin, D.B.: Inference from iterative simulation using multiple sequences. Stat. Sci. 1, 457–472 (1992)

Gelman, A., Carling, J.B., Stern, H., Dunson, D.B., Vehtari, A., Rubin, D.: Bayesian Data Analysis, 3rd edn. Chapman & Hall, Boca Raton (2014a)

Gelman, A., Hwang, J., Vehtari, A.: Understanding predictive information criteria for Bayesian models. Stat. Comput. 24(6), 997–1016 (2014b)

Genz, A.: Numerical computation of rectangular bivariate and trivariate normal and t probabilities. Stat. Comput. 14(3), 251–260 (2004)

Genz, A., Bretz, F.: Numerical computation of multivariate t probabilities with application to power calculation of multiple contrasts. J. Stat. Comput. Simul. 63, 361–378 (1999)

Genz, A., Bretz, F.: Comparison of methods for the computation of multivariate t probabilities. J. Comput. Graph. Stat. 11(4), 950–971 (2002)

Gillespie, D.T.: Exact stochastic simulation of coupled chemical reactions. J. Phys. Chem. 81(25), 2340–2361 (1977)

Grzegorczyk, M., Aderhold, A., Husmeier, D.: Inferring bi-directional interactions between circadian clock genes and metabolism with model ensembles. Stat. Appl. Genet. Mol. Biol. 14(2), 143–167 (2015)

Guerriero, M.L., Pokhilko, A., Fernández, A.P., Halliday, K.J., Millar, A.J., Hillston, J.: Stochastic properties of the plant circadian clock. J. R. Soc. Interface 9(69), 744–756 (2012)

Hanley, J.A., McNeil, B.J.: The meaning and use of the area under a receiver operating characteristic (ROC) curve. Radiology 143(1), 29–36 (1982)

Holsclaw, T., Sansó, B., Lee, H.K., Heitmann, K., Habib, S., Higdon, D., Alam, U.: Gaussian process modeling of derivative curves. Technometrics 55(1), 57–67 (2013)

Lawrence, N.D., Girolami, M., Rattray, M., Sanguinetti, G.: Learning and Inference in Computational Systems Biology. Computational Molecular Biology. MIT Press, Cambridge (2010)

Lindley, D.V.: A statistical paradox. Biometrika 1, 187–192 (1957)

Lunn, D., Jackson, C., Best, N., Thomas, A., Spiegelhalter, D.: The BUGS Book: A Practical Introduction to Bayesian Analysis. Chapman & Hall, Boca Raton (2012)

Marbach, D., Costello, J.C., Küffner, R., Vega, N.M., Prill, R.J., Camacho, D.M., Allison, K.R., Kellis, M., Collins, J.J., Stolovitzky, G., et al.: Wisdom of crowds for robust gene network inference. Nat. Methods 9(8), 796–804 (2012)

Marin, J.M., Robert, C.P.: Bayesian Core: A Practical Approach to Computational Bayesian Statistics. Springer, New York (2007)

Mockler, T., Michael, T., Priest, H., Shen, R., Sullivan, C., Givan, S., McEntee, C., Kay, S., Chory, J.: The DIURNAL project: DIURNAL and circadian expression profiling, model-based pattern matching, and promoter analysis. In: Cold Spring Harbor Symposia on Quantitative Biology, Cold Spring Harbor Laboratory Press, vol. 72, pp. 353–363 (2007)

Murphy, K.: Machine Learning: A Probabilistic Perspective. MIT Press, Cambridge (2012)

Oates, C.J., Dondelinger, F., Bayani, N., Korkola, J., Gray, J.W., Mukherjee, S.: Causal network inference using biochemical kinetics. Bioinformatics 30(17), i468–i474 (2014)

Oates, C.J., Papamarkou, T., Girolami, M.: The controlled thermodynamic integral for Bayesian model evidence evaluation. J. Am. Stat. Assoc. (2016). doi:10.1080/01621459.2015.1021006

Ota, K., Yamada, T., Yamanishi, Y., Goto, S., Kanehisa, M.: Comprehensive analysis of delay in transcriptional regulation using expression profiles. Genome Inform. 14, 302–303 (2003)

Pokhilko, A., Hodge, S., Stratford, K., Knox, K., Edwards, K., Thomson, A., Mizuno, T., Millar, A.: Data assimilation constrains new connections and components in a complex, eukaryotic circadian clock model. Mol. Syst. Biol. 6(1), 416 (2010)

Pokhilko, A., Fernández, A., Edwards, K., Southern, M., Halliday, K., Millar, A.: The clock gene circuit in Arabidopsis includes a repressilator with additional feedback loops. Mol. Syst. Biol. 8, 574 (2012)

Pokhilko, A., Mas, P., Millar, A.: Modelling the widespread effects of TOC1 signalling on the plant circadian clock and its outputs. BMC Syst. Biol. 7(1), 1–12 (2013)

Ramsay, J., Hooker, G., Campbell, D., Cao, J.: Parameter estimation for differential equations: a generalized smoothing approach. J. R. Stat. Soc.: Ser. B 69(5), 741–796 (2007)

Rasmussen, C.E.: Evaluation of Gaussian processes and other methods for non-linear regression. PhD thesis, University of Toronto, Dept. of Computer Science (1996)

Rasmussen, C.E., Williams, C.K.I.: Gaussian Processes for Machine Learning. MIT Press, Cambridge (2006)

Rasmussen CE, Neal RM, Hinton GE, van Camp D, Revow M, Ghahramani Z, Kustra R, Tibshirani R (1996) The DELVE manual. http://www.cs.toronto.edu/~delve

Smolen, P., Baxter, D.A., Byrne, J.H.: Modeling transcriptional control in gene networks—methods, recent results, and future directions. Bull. Math. Biol. 62(2), 247–292 (2000)

Solak E, Murray-Smith R, Leithead WE, Leith DJ, Rasmussen CE (2002) Derivative observations in Gaussian process models of dynamic systems. In: Proceedings of Advances in Neural Information Processing Systems (NIPS), MIT Press, Cambridge (2002)

Spiegelhalter, D.J., Best, N.G., Carlin, B.P., Van Der Linde, A.: Bayesian measures of model complexity and fit. J. R. Stat. Soc.: Ser. B (Stat. Methodol.) 64(4), 583–639 (2002)

Toni, T., Welch, D., Strelkowa, N., Ipsen, A., Stumpf, M.P.: Approximate Bayesian computation scheme for parameter inference and model selection in dynamical systems. J. R. Soc. Interface 6(31), 187–202 (2009)

Vanhatalo, J., Riihimäki, J., Hartikainen, J., Jylänki, P., Tolvanen, V., Vehtari, A.: GPstuff: Bayesian modeling with Gaussian processes. J. Mach. Learn. Res. 14(1), 1175–1179 (2013)

Vyshemirsky, V., Girolami, M.A.: Bayesian ranking of biochemical system models. Bioinformatics 24(6), 833–839 (2008)

Watanabe, S.: Asymptotic equivalence of Bayes cross validation and widely applicable information criterion in singular learning theory. J. Mach. Learn. Res. 11, 3571–3594 (2010)

Watanabe, S.: A widely applicable Bayesian information criterion. J. Mach. Learn. Res. 14, 867–897 (2013)

Yang, Z.: Maximum likelihood phylogenetic estimation from DNA sequences with variable rates over sites: approximate methods. J. Mol. Evol. 39, 306–314 (1994)

Acknowledgments

This project was partially supported by a grant from the Engineering and Physical Sciences Research Council (EPSRC) of the UK, grant reference number EP/L020319/1, and a grant from the European Commission FP7, “Timet”, grant agreement 245143. We would like to thank Andrew Millar for helpful discussions.

Author information

Authors and Affiliations

Corresponding author

Appendices

Appendices

1.1 Appendix 1: Methodology

1.1.1 Model selection

The ultimate objective of inference is model selection, i.e. to infer the n regulator sets \(\pi _i\) (\(i=1,\ldots ,n\)) of the interaction processes described by Eq. (6). Thereby each regulator set \(\pi _i\) also includes a binary variable that indicates, for each regulator, the type of regulation: activation versus inhibition. For notational clarity, let \(\varvec{\theta }_i=({\mathbf{V }}_i,{\mathbf{K}}_i,\sigma _{i}^{2},\delta _{i}^{2})\) denote the model-specific parameters, and recall that \({\mathbf{y}}_i=(y_i(t_1),\ldots ,y_i(t_T))^{\top }\) denotes the observed (or approximated) gradients for species \(x_i\).

DIC is an improvement on the classical information criteria AIC and BIC and is defined as

where \( \overline{\varvec{\theta }_i} = \int \varvec{\theta }_i p(\varvec{\theta }_i|\pi _i,{\mathbf{y}}_i) d\varvec{\theta }_i\) is the posterior mean of the parameters. In practice, the integrals are approximated by sums over parameters approximately sampled from the posterior distribution \(p(\varvec{\theta }_i|\pi _i,{\mathbf{y}}_i)\) with MCMC. The marginal likelihood is defined as

The essential difference between Eqs. (28) and (29) is that DIC is defined as an expectation with respect to the posterior distribution, \(p(\varvec{\theta }_i|\pi _i,{\mathbf{y}}_i)\), whereas the marginal likelihood is defined as an expectation with respect to the prior distribution, \(p(\varvec{\theta }_i|\pi _i)\). This has two consequences. First, the marginal likelihood is affected by the choice of prior, and in particular is known to increasingly penalize a more complex model as the prior \(p(\varvec{\theta }_i|\pi _i)\) becomes more diffuse (Lindley’s paradox, see Lindley 1957). DIC, on the other hand, is unaffected by diffuse priors: the more diffuse a prior, the less of an influence it has on the posterior. The second consequence is that computing the log marginal likelihood (MLL) is more onerous, as we will discuss below.