Abstract

Estimation with large amounts of data can be facilitated by stochastic gradient methods, in which model parameters are updated sequentially using small batches of data at each step. Here, we review early work and modern results that illustrate the statistical properties of these methods, including convergence rates, stability, and asymptotic bias and variance. We then survey modern applications where these methods are useful, ranging from an online version of the EM algorithm to deep learning. In light of these results, we argue that stochastic gradient methods are poised to become benchmark principled estimation procedures for large datasets, especially those in the family of stable proximal methods, such as implicit stochastic gradient descent.

Notes

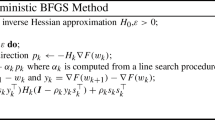

Second-order methods typically use the Hessian matrix of second-order derivatives of the log-likelihood and are discussed in detail in Sect. 3.

Procedure (2) is actually an ascent algorithm because it aims to maximize the log-likelihood, and thus a more appropriate name would be stochastic gradient ascent. However, we will use the term “descent” in order to keep in line with the relevant optimization literature, which traditionally considers minimization problems through descent algorithms.

The solution of the fixed-point equation (3) requires additional computations per iteration. However, Toulis et al. (2014) derive a computationally efficient implicit algorithm in the context of generalized linear models. Furthermore, approximate solutions of implicit updates are possible for any statistical model (see Eq. (4)).
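
As an illustration of why the implicit update can be cheap, here is a minimal sketch for one special case: a linear model with squared-error loss. This case is an assumption chosen for illustration and is not taken from the equations referenced in this note. There, the implicit update \(\theta_n = \theta_{n-1} + \gamma_n (y_n - x_n^\top \theta_n)\, x_n\) can be solved in closed form, giving \(\theta_n = \theta_{n-1} + \frac{\gamma_n}{1 + \gamma_n \Vert x_n \Vert^2} (y_n - x_n^\top \theta_{n-1})\, x_n\). The function names below are hypothetical.

```python
def dot(u, v):
    """Inner product of two equal-length lists."""
    return sum(ui * vi for ui, vi in zip(u, v))


def explicit_sgd_step(theta, x, y, gamma):
    """Explicit update: the gradient is evaluated at the previous iterate."""
    residual = y - dot(x, theta)
    return [t + gamma * residual * xi for t, xi in zip(theta, x)]


def implicit_sgd_step(theta, x, y, gamma):
    """Implicit update for squared-error loss (illustrative assumption).

    Solves theta_new = theta + gamma * (y - x.theta_new) * x exactly.
    The solution is the explicit step shrunk by 1 / (1 + gamma * ||x||^2).
    """
    residual = y - dot(x, theta)
    shrink = 1.0 / (1.0 + gamma * dot(x, x))
    return [t + gamma * shrink * residual * xi for t, xi in zip(theta, x)]


if __name__ == "__main__":
    theta, x, y, gamma = [0.0, 0.0], [1.0, 2.0], 3.0, 1.0
    steps = [("explicit", explicit_sgd_step), ("implicit", implicit_sgd_step)]
    for name, step in steps:
        new_theta = step(theta, x, y, gamma)
        print(name, new_theta, "residual after step:", y - dot(x, new_theta))
```

With \(\theta = (0, 0)\), \(x = (1, 2)\), \(y = 3\) and \(\gamma = 1\), the explicit step moves to \((3, 6)\) and overshoots (the residual goes from 3 to -12), whereas the implicit step moves to \((0.5, 1)\) and shrinks the residual to 0.5. The per-iteration cost of the implicit step in this special case is one extra inner product.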

This is an important distinction because, traditionally, the focus in optimization has been to obtain fast convergence to some point \(\widehat{\varvec{\theta }}\) that minimizes the empirical loss, e.g., the maximum-likelihood estimator. From a statistical viewpoint, under variability of the data, there is a trade-off between convergence to an estimator and its asymptotic variance (Le et al. 2004).

Similarly, a sequence of matrices \({\varvec{C}}_n\) can be designed such that \({\varvec{C}}_n \rightarrow \varvec{\mathcal {I}}(\varvec{\theta _\star })^{-1}\) (Sakrison 1965).

The acronym ASGD is also used in machine learning to denote asynchronous SGD, i.e., a variant of SGD that can be parallelized on multiple machines. We will not consider this variant here.

References

Amari, S.-I.: Natural gradient works efficiently in learning. Neural Comput. 10(2), 251–276 (1998)

Amari, S.-I., Park, H., Fukumizu, K.: Adaptive method of realizing natural gradient learning for multilayer perceptrons. Neural Comput. 12(6), 1399–1409 (2000)

Bather, J.A.: Stochastic Approximation: A Generalisation of the Robbins–Monro Procedure, vol. 89. Cornell University, Mathematical Sciences Institute, New York (1989)

Beck, A., Teboulle, M.: Mirror descent and nonlinear projected subgradient methods for convex optimization. Oper. Res. Lett. 31(3), 167–175 (2003)

Bengio, Y.: Learning deep architectures for AI. Found. Trends Mach. Learn. 2, 1–127 (2009)

Bengio, Y., Delalleau, O.: Justifying and generalizing contrastive divergence. Neural Comput. 21(6), 1601–1621 (2009)

Benveniste, A., Métivier, M., Priouret, P.: Adaptive Algorithms and Stochastic Approximations. Springer, New York (2012)

Bertsekas, D.P., Tsitsiklis, J.N.: Neuro-dynamic programming: an overview. In: Proceedings of the 34th IEEE Conference on Decision and Control, vol. 1, pp. 560–564 (1995)

Bordes, A., Bottou, L., Gallinari, P.: SGD-QN: careful quasi-Newton stochastic gradient descent. J. Mach. Learn. Res. 10, 1737–1754 (2009)

Bottou, L.: Large-scale machine learning with stochastic gradient descent. In: Proceedings of COMPSTAT’2010, pp. 177–186. Springer, New York (2010)

Bottou, L., Le Cun, Y.: On-line learning for very large data sets. Appl. Stoch. Models Bus. Ind. 21(2), 137–151 (2005)

Bousquet, O., Bottou, L.: The tradeoffs of large scale learning. Adv. Neural Inf. Process. Syst. 20, 161–168 (2008)

Broyden, C.G.: A class of methods for solving nonlinear simultaneous equations. Math. Comput. 19, 577–593 (1965)

Cappé, O.: Online EM algorithm for hidden Markov models. J. Comput. Graph. Stat. 20(3), 728–749 (2011)

Cappé, O., Moulines, E.: On-line expectation-maximization algorithm for latent data models. J. R. Stat. Soc. Ser. B 71(3), 593–613 (2009)

Carreira-Perpinan, M.A., Hinton, G.E.: On contrastive divergence learning. In: Proceedings of the Tenth International Workshop on Artificial Intelligence and Statistics, pp. 33–40. Citeseer (2005)

Cheng, L., Vishwanathan, S.V.N., Schuurmans, D., Wang, S., Caelli, T.: Implicit online learning with kernels. In: Proceedings of the 2006 Conference Advances in Neural Information Processing Systems 19, vol. 19, p. 249. MIT Press, Cambridge (2007)

Chung, K.L.: On a stochastic approximation method. Ann. Math. Stat. 25, 463–483 (1954)

Dempster, A., Laird, N., Rubin, D.: Maximum likelihood from incomplete data via the EM algorithm. J. R. Stat. Soc. Ser. B 39, 1–38 (1977)

Duchi, J., Hazan, E., Singer, Y.: Adaptive subgradient methods for online learning and stochastic optimization. J. Mach. Learn. Res. 12, 2121–2159 (2011)

Dupuis, P., Simha, R.: On sampling controlled stochastic approximation. IEEE Trans. Autom. Control 36(8), 915–924 (1991)

El Karoui, N.: Spectrum estimation for large dimensional covariance matrices using random matrix theory. Ann. Stat. 36, 2757–2790 (2008)

Fabian, V.: On asymptotic normality in stochastic approximation. Ann. Math. Stat. 39, 1327–1332 (1968)

Fabian, V.: Asymptotically efficient stochastic approximation; the RM case. Ann. Stat. 1, 486–495 (1973)

Fisher, R.A.: On the mathematical foundations of theoretical statistics. Philos. Trans. R. Soc. Lond. Ser. A 222, 309–368 (1922)

Fisher, R.A.: Statistical Methods for Research Workers. Oliver and Boyd, Edinburgh (1925a)

Fisher, R.A.: Theory of statistical estimation. In: Mathematical Proceedings of the Cambridge Philosophical Society, vol. 22, pp. 700–725. Cambridge University Press, Cambridge (1925b)

Geman, S., Geman, D.: Stochastic relaxation, Gibbs distributions, and the Bayesian restoration of images. IEEE Trans. Pattern Anal. Mach. Intell. 6, 721–741 (1984)

George, A.P., Powell, W.B.: Adaptive stepsizes for recursive estimation with applications in approximate dynamic programming. Mach. Learn. 65(1), 167–198 (2006)

Girolami, M.: Riemann manifold Langevin and Hamiltonian Monte Carlo methods. J. R. Stat. Soc. Ser. B 73(2), 123–214 (2011)

Gosavi, A.: Reinforcement learning: a tutorial survey and recent advances. INFORMS J. Comput. 21(2), 178–192 (2009)

Green, P.J.: Iteratively reweighted least squares for maximum likelihood estimation, and some robust and resistant alternatives. J. R. Stat. Soc. Ser. B 46, 149–192 (1984)

Hastie, T., Tibshirani, R., Friedman, J.: The Elements of Statistical Learning: Data Mining, Inference, and Prediction, 2nd edn. Springer, New York (2011)

Hennig, P., Kiefel, M.: Quasi-Newton methods: a new direction. J. Mach. Learn. Res. 14(1), 843–865 (2013)

Hinton, G.E.: Training products of experts by minimizing contrastive divergence. Neural Comput. 14(8), 1771–1800 (2002)

Hoffman, M.D., Blei, D.M., Wang, C., Paisley, J.: Stochastic variational inference. J. Mach. Learn. Res. 14(1), 1303–1347 (2013)

Huber, P.J.: Robust estimation of a location parameter. Ann. Math. Stat. 35(1), 73–101 (1964)

Huber, P.J.: Robust Statistics. Springer, New York (2011)

Johnson, R., Zhang, T.: Accelerating stochastic gradient descent using predictive variance reduction. Adv. Neural Inf. Process. Syst. 26, 315–323 (2013)

Kivinen, J., Warmuth, M.K.: Additive versus exponentiated gradient updates for linear prediction. In: Proceedings of the Twenty-Seventh Annual ACM Symposium on Theory of Computing, pp. 209–218

Kivinen, J., Warmuth, M.K., Hassibi, B.: The p-norm generalization of the LMS algorithm for adaptive filtering. IEEE Trans. Signal Process. 54(5), 1782–1793 (2006)

Korattikara, A., Chen, Y., Welling, M.: Austerity in MCMC land: cutting the Metropolis-Hastings budget. In: Proceedings of the 31st International Conference on Machine Learning, pp. 181–189 (2014)

Kulis, B., Bartlett, P.L.: Implicit online learning. In: Proceedings of the 27th International Conference on Machine Learning (ICML-10), pp. 575–582 (2010)

Lai, T.L., Robbins, H.: Adaptive design and stochastic approximation. Ann. Stat. 7, 1196–1221 (1979)

Lange, K.: A gradient algorithm locally equivalent to the EM algorithm. J. R. Stat. Soc. Ser. B 57, 425–437 (1995)

Lange, K.: Numerical Analysis for Statisticians. Springer, New York (2010)

Le Cun, Y., Bottou, L.: Large scale online learning. Adv. Neural Inf. Process. Syst. 16, 217 (2004)

Lehmann, E.L., Casella, G.: Theory of Point Estimation, 2nd edn. Springer, New York (2003)

Li, L.: A worst-case comparison between temporal difference and residual gradient with linear function approximation. In: Proceedings of the 25th International Conference on Machine Learning, ACM, pp. 560–567

Liu, Z., Almhana, J., Choulakian, V., McGorman, R.: Online EM algorithm for mixture with application to Internet traffic modeling. Comput. Stat. Data Anal. 50(4), 1052–1071 (2006)

Ljung, L., Pflug, G., Walk, H.: Stochastic Approximation and Optimization of Random Systems, vol. 17. Springer, New York (1992)

Martin, R.D., Masreliez, C.: Robust estimation via stochastic approximation. IEEE Trans. Inf. Theory 21(3), 263–271 (1975)

Murata, N.: A Statistical Study of On-line Learning. Online Learning and Neural Networks. Cambridge University Press, Cambridge (1998)

Nagumo, J.-I., Noda, A.: A learning method for system identification. IEEE Trans. Autom. Control 12(3), 282–287 (1967)

National Research Council: Frontiers in Massive Data Analysis. The National Academies Press, Washington, DC (2013)

Neal, R.M., Hinton, G.E.: A view of the EM algorithm that justifies incremental, sparse, and other variants. In: Learning in Graphical Models, pp. 355–368. Springer, New York (1998)

Neal, R.: MCMC using Hamiltonian dynamics. Handbook of Markov Chain Monte Carlo 2 (2011)

Nemirovski, A.S., Yudin, D.B.: Problem Complexity and Method Efficiency in Optimization. Wiley, Chichester (1983)

Nemirovski, A., Juditsky, A., Lan, G., Shapiro, A.: Robust stochastic approximation approach to stochastic programming. SIAM J. Optim. 19(4), 1574–1609 (2009)

Nevelson, M.B., Khasminskiĭ, R.Z.: Stochastic Approximation and Recursive Estimation, vol. 47. American Mathematical Society, Providence (1973)

Nowlan, S.J.: Soft Competitive Adaptation: Neural Network Learning Algorithms Based on Fitting Statistical Mixtures. Carnegie Mellon University, Pittsburgh (1991)

Parikh, N., Boyd, S.: Proximal algorithms. Found. Trends Optim. 1(3), 123–231 (2013)

Pillai, N.S., Smith, A.: Ergodicity of approximate MCMC chains with applications to large data sets. arXiv preprint http://arxiv.org/abs/1405.0182 (2014)

Polyak, B.T., Tsypkin, Y.Z.: Adaptive algorithms of estimation (convergence, optimality, stability). Autom. Remote Control 3, 74–84 (1979)

Polyak, B.T., Juditsky, A.B.: Acceleration of stochastic approximation by averaging. SIAM J. Control Optim. 30(4), 838–855 (1992)

Robbins, H., Monro, S.: A stochastic approximation method. Ann. Math. Stat. 22, 400–407 (1951)

Rockafellar, R.T.: Monotone operators and the proximal point algorithm. SIAM J. Control Optim. 14(5), 877–898 (1976)

Rosasco, L., Villa, S., Công Vũ, B.: Convergence of stochastic proximal gradient algorithm. arXiv preprint http://arxiv.org/abs/1403.5074 (2014)

Ruppert, D.: Efficient estimations from a slowly convergent Robbins-Monro process. Technical report, Cornell University Operations Research and Industrial Engineering (1988)

Ryu, E.K., Boyd, S.: Stochastic proximal iteration: a non-asymptotic improvement upon stochastic gradient descent. Working paper. http://web.stanford.edu/~eryu/papers/spi.pdf (2014)

Sacks, J.: Asymptotic distribution of stochastic approximation procedures. Ann. Math. Stat. 29(2), 373–405 (1958)

Sakrison, D.J.: Efficient recursive estimation; application to estimating the parameters of a covariance function. Int. J. Eng. Sci. 3(4), 461–483 (1965)

Salakhutdinov, R., Mnih, A., Hinton, G.: Restricted Boltzmann machines for collaborative filtering. In: Proceedings of the 24th International Conference on Machine Learning, ACM, pp. 791–798 (2007)

Sato, M.-A., Ishii, S.: On-line EM algorithm for the normalized Gaussian network. Neural Comput. 12(2), 407–432 (2000)

Sato, I., Nakagawa, H.: Approximation analysis of stochastic gradient Langevin dynamics by using Fokker-Planck equation and Ito process. JMLR W&CP 32(1), 982–990 (2014)

Schapire, R.E., Warmuth, M.K.: On the worst-case analysis of temporal-difference learning algorithms. Mach. Learn. 22(1–3), 95–121 (1996)

Schaul, T., Zhang, S., LeCun, Y.: No more pesky learning rates. arXiv preprint http://arxiv.org/abs/1206.1106 (2012)

Schraudolph, N.N., Yu, J., Günter, S.: A stochastic quasi-Newton method for online convex optimization. In: Meila M., Shen X. (eds.) Proceedings of the 11th International Conference on Artificial Intelligence and Statistics (AISTATS), vol. 2, pp. 436–443. San Juan, Puerto Rico (2007)

Slock, D.T.M.: On the convergence behavior of the LMS and the normalized LMS algorithms. IEEE Trans. Signal Process. 41(9), 2811–2825 (1993)

Sutton, R.S.: Learning to predict by the methods of temporal differences. Mach. Learn. 3(1), 9–44 (1988)

Tamar, A., Toulis, P., Mannor, S., Airoldi, E.: Implicit temporal differences. In: Neural Information Processing Systems, Workshop on Large-Scale Reinforcement Learning (2014)

Taylor, G.W., Hinton, G.E., Roweis, S.T.: Modeling human motion using binary latent variables. Adv. Neural Inf. Process. Syst. 19, 1345–1352 (2006)

Titterington, D.M.: Recursive parameter estimation using incomplete data. J. R. Stat. Soc. Ser. B 46, 257–267 (1984)

Toulis, P., Airoldi, E.M.: Implicit stochastic gradient descent for principled estimation with large datasets. arXiv preprint http://arxiv.org/abs/1408.2923 (2014)

Toulis, P., Airoldi, E., Rennie, J.: Statistical analysis of stochastic gradient methods for generalized linear models. JMLR W&CP 32(1), 667–675 (2014)

Venter, J.H.: An extension of the Robbins-Monro procedure. Ann. Math. Stat. 38, 181–190 (1967)

Wang, C., Chen, X., Smola, A., Xing, E.: Variance reduction for stochastic gradient optimization. Adv. Neural Inf. Process. Syst. 26, 181–189 (2013)

Wang, M., Bertsekas, D.P.: Stabilization of stochastic iterative methods for singular and nearly singular linear systems. Math. Oper. Res. 39(1), 1–30 (2013)

Wei, C.Z.: Multivariate adaptive stochastic approximation. Ann. Stat. 3, 1115–1130 (1987)

Welling, M., Teh, Y.W.: Bayesian learning via stochastic gradient Langevin dynamics. In: Proceedings of the 28th International Conference on Machine Learning (ICML-11), pp. 681–688 (2011)

Xu, W.: Towards optimal one pass large scale learning with averaged stochastic gradient descent. arXiv preprint http://arxiv.org/abs/1107.2490 (2011)

Younes, L.: On the convergence of Markovian stochastic algorithms with rapidly decreasing ergodicity rates. Stochastics 65(3–4), 177–228 (1999)

Zhang, T.: Solving large scale linear prediction problems using stochastic gradient descent algorithms. In: Proceedings of the Twenty-First International Conference on Machine Learning, ACM, p. 116 (2004)

Acknowledgments

The authors wish to thank Leon Bottou, Bob Carpenter, David Dunson, Andrew Gelman, Brian Kulis, Xiao-Li Meng, Natesh Pillai, Neil Shephard, Daniel Sussman and Alexander Volfovsky for useful comments and discussion. This research was sponsored, in part, by NSF CAREER award IIS-1149662, ARO MURI award W911NF-11-1-0036, and ONR YIP award N00014-14-1-0485. PT is a Google Fellow in Statistics. EMA is an Alfred P. Sloan Research Fellow.

Cite this article

Toulis, P., Airoldi, E.M. Scalable estimation strategies based on stochastic approximations: classical results and new insights. Stat Comput 25, 781–795 (2015). https://doi.org/10.1007/s11222-015-9560-y

DOI: https://doi.org/10.1007/s11222-015-9560-y