Abstract

Using a global set of ~300 institutions, standard, collaboration and fractional Category Normalised Citation Impact (CNCI) indicators are compared between 2009 and 2018 to demonstrate the complementarity of the three variants for research evaluation. Web of Science data show that Chinese institutions appear immune to the indicator used, as CNCI changes, generally improvements, are similar for all three variants. Other regions tend to show greater increases in standard CNCI than in collaboration CNCI, which in turn are greater than those in fractional CNCI; however, decreases in CNCI values, particularly in established research economies like North America and western Europe, are not uncommon. These findings may highlight the differing extent to which the number of collaborating countries and institutions on papers affects each variant. Other factors affecting CNCI values may be citation practices and hiring of Highly Cited Researchers. Evaluating and comparing the performance of institutions is a main driver of policy, research and funding direction. Decision makers must understand all aspects of CNCI indicators, including the secondary factors illustrated here, by using a ‘profiles not metrics’ approach.


Introduction

The Category Normalised Citation Impact (CNCI) indicator puts citations in context. By normalising citation counts by publication year, document type and subject category, reasonable comparisons between the influence of papers within and without a given subject field are permitted. It is a standard indicator for national and institutional research performance comparisons (Jappe, 2020) and has been used to inform government policy (BIS, 2009), hire and promote academic staff (Holden et al., 2005) and allocate resources (Carlsson, 2009). CNCI, which is used in Clarivate products, is not the only indicator of its type; Elsevier, for example, offer the Field-weighted citation impact (FWCI).

The need for citation normalisation is well justified (Schubert et al., 1988), given field (e.g., Garfield, 1979) and national cultural (Adams, 2018) differences in citation growth, and continues to be a central theme of bibliometrics and scientometrics (Waltman & van Eck, 2019). CNCI, however, considers only three factors in its normalisation. There are arguments that other factors should be considered when normalising citations, such as the referencing behaviour of citing publications or citing journals (e.g., source normalisation: Waltman & van Eck, 2013; citing-side normalisation: Zitt & Small, 2008), or the use of citation percentiles (Bornmann, 2020; Bornmann & Williams, 2020). A weighted CNCI, based on citations within a short time window and a fixed longer window, has also been suggested (Wang & Zhang, 2020; Zhang & Wang, 2021). Others (Adams et al., 2007, 2019a, 2023) suggest that indicators should instead be used as part of a profile, not as a standalone metric. CNCI also relies on citations acknowledging the usefulness of work (Garfield, 1955) and on the broad correlation between citation accumulation and peer judgements of research significance (i.e., more highly cited papers are judged to be more significant; e.g., Moed, 2005, 2017). Though this is generally true, citations can be made for numerous reasons (e.g., Garfield, 1977; Small, 1982), including those of a nefarious nature (e.g., Heneberg, 2016; Bartneck & Kokkelmans, 2011; Fister Jr et al., 2016).

A further complication is that academic research has become increasingly team-oriented (Bozeman & Youtie, 2017) and international in the last 40 years (Adams, 2013; Narin et al., 1991). Motivations for international collaboration include knowledge transfer, access to equipment and financial aid and it is generally viewed positively (e.g., Hicks & Katz, 1996; Katz & Martin, 1997). Bilateral partnerships, which flourished into the 2000s, are beginning to be superseded by more multilateral partnerships (Adams & Szomszor, 2022; Adams et al., 2019b) and the formation of a global research network (Adams & Szomszor, 2022; Wagner & Leydesdorff, 2005), which is increasingly necessary to tackle global scale issues such as pandemics (e.g., COVID-19) and climate change, and large-scale technical research projects such as CERN.

Due to the normalisation process, the benchmark value of CNCI is one, which represents world average. A paper with a CNCI value of 2 would be above world average, having twice the number of citations as the average paper; a paper with a CNCI value of 0.5 would be below world average, having half the number of citations of the average paper. However, because of this single value outcome, CNCI offers only a snapshot of a document’s performance. How this performance credit is subsequently attributed to authors (and therefore institutions and countries), especially as more collaborative work generally results in higher CNCI (Adams et al., 2019b; Glänzel & Schubert, 2004; Narin et al., 1991; Thelwall, 2020; Waltman & van Eck, 2015), creates issues.

Numerous counting methods have been devised (see Gauffriau, 2021) – 32 since 1981 – to assign publication credit to authors. The most common of these is fractional counting, where an entity is assigned a fractional share of paper credit (e.g., Aksnes et al., 2012; Burrell & Rousseau, 1995; Egghe et al., 2000; van Hooydonk, 1997), which itself has many variations (e.g., Sivertsen et al., 2019; Waltman & van Eck, 2015). Fractional counting has been applied to institutions (e.g., Leydesdorff & Shin, 2011), countries (e.g., Glänzel & De Lange, 2002), and used by national research councils to evaluate work (e.g., Sweden: Kronman et al., 2010). However, as author numbers on publications increase, sometimes into the hundreds or even thousands, the assignment of fractional credit and, consequently any evaluation of such work, becomes futile to the detriment of research management.

Given the collaborative nature of research, it appears appropriate to consider the type of collaboration when attempting to apportion credit. Collaborative CNCI (collab CNCI) was formulated (Potter et al., 2020, 2022) with this in mind, as an approach that does not presume credit (as fractional counting does). This alternative approach assigns documents to one of five types (discussed later), depending on the level of collaboration, and normalises citation counts by this type, in addition to the three parameters standard CNCI already considers.

Results have demonstrated, at both the institutional and national level, that collab CNCI values are generally lower than standard CNCI, due to the additional normalisation, and agree well with fractional values (Adams et al., 2022; Potter et al., 2020, 2022). Collab CNCI can also highlight situations where an institution’s domestic articles outperform its internationally collaborative articles (Adams et al., 2022; Potter et al., 2022). Additionally, division of papers into the five collaboration types provides an in-depth profile, rather than a single metric, view of an entity’s research profile for assessment. Without this additional normalisation and subdivision, crucial aspects of an entity’s research profile would be overlooked and potentially impact management decision making.

Here, building upon our previous work (Potter & Kovač, 2023; Potter et al., 2022), these three alternative CNCI indicators (standard, collaboration, and fractional) are used in concert to analyse and explain institutional and, by extension, regional CNCI changes over a ten-year period. This demonstrates the ‘profiles not metrics’ approach and offers insights into the practicality of combining approaches and its importance in performance evaluation and informing research management decision making.

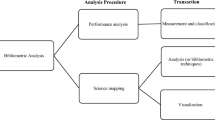

Methods

This work used the same data source as Potter et al. (2022) and Potter and Kovač (2023). This consisted of documents with the type ‘article’ published in the Web of Science Core Collection between 2009 and 2018 inclusive. Building upon Potter and Kovač (2023), this set was filtered to include 30 institutions from each of nine predefined regions: North America (defined as just USA and Canada), Mexico and South America (MexSAm), Western Europe (WEur), Eastern Europe (EEur), Nordic Europe (Nordic), Middle East-North Africa-Turkey (MENAT), Southeast Asia (SEAsia), China, and Asia–Pacific (APAC). Institutions for each region were chosen semi-randomly to reflect high, middle and low volume article-producing institutions, with a minimum threshold of 5000 (co)authored articles over the 10-year period. Africa, a tenth region, had only 11 institutions covering three countries (Nigeria, South Africa, Uganda) that met the minimum criteria, but was included for completeness. The dataset included almost 5.5 M articles. Institutions were collated from author address information available within Web of Science. Specifically, these were the ‘Affiliation’ data provided as part of the Author Information in the Web of Science metadata for a given document. This ‘Affiliation’ provides a ‘unified’ institution name that merges names from Web of Science addresses including name variants, such as previous names, affiliated sub-organisations and spelling variants, and is a combination of background research by Clarivate editorial staff and feedback from organisations. Though some address information was incomplete, this did not affect result interpretation because the set of incomplete data was relatively small (e.g., ~3% of articles when considering the top 20 institutions by article count).

CNCI for each institution’s articles was calculated in the standard manner: citation counts were normalised by document type, year of publication, and Web of Science subject category. This indicator is hereafter referred to as standard (s-) CNCI. The Web of Science subject categories cover 254 subjects, with journals sometimes assigned to multiple ones; in such cases, the average CNCI of all an article’s categories was assigned to the article.
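The calculation described above can be illustrated with a minimal sketch. The baseline table, its values, and the function name `standard_cnci` are hypothetical and introduced only for illustration; in practice the expected citation rates come from Web of Science data.

```python
from statistics import mean

# Hypothetical baselines: mean citations per (subject category, year, document type).
# These numbers are invented for illustration only.
BASELINES = {
    ("Physics, Applied", 2009, "article"): 20.0,
    ("Materials Science", 2009, "article"): 10.0,
}


def standard_cnci(citations, categories, year, doc_type="article", baselines=BASELINES):
    """Return observed / expected citations, averaged over the article's categories."""
    if not categories:
        raise ValueError("an article needs at least one subject category")
    ratios = [citations / baselines[(cat, year, doc_type)] for cat in categories]
    return mean(ratios)


# An article with 30 citations in a journal assigned to two categories:
# 30 / 20 = 1.5 and 30 / 10 = 3.0, so the article's CNCI is their mean.
two_category_cnci = standard_cnci(30, ["Physics, Applied", "Materials Science"], 2009)
```

A value of 1.0 corresponds to the world average for articles of the same category, year and document type.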

Collab CNCI, defined by Potter et al. (2020), and sometimes referred to as c-CNCI in the following text, was calculated in the same manner as standard CNCI but with the additional normalisation by collaboration type. The five possible collaboration types are: domestic single institutional, domestic multi-institutional, international bilateral, international trilateral, and international quadrilateral plus. International collaborations were based only on the number of unique countries; number of unique institutions was irrelevant. In theory, a paper with a single author could fall into any one of these collaboration types, depending on their affiliations. It is important to note that highly multilateral work (i.e., international quadrilateral plus papers) was rare. This set of papers accounted for only 4% of all output though this is likely to increase in the future.
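The assignment of an article to a collaboration type could be sketched as follows. This is an illustrative reading of the rules in the paragraph above, not the implementation used for the analysis; the function name, the input format of (institution, country) pairs and the short labels are assumptions.

```python
def collaboration_type(addresses):
    """Classify an article from its (institution, country) address pairs.

    International types depend only on the number of unique countries;
    the number of institutions matters only for domestic articles.
    """
    institutions = {inst for inst, _ in addresses}
    countries = {country for _, country in addresses}
    if not countries:
        raise ValueError("no address information available")
    if len(countries) == 1:
        return "dom:single" if len(institutions) == 1 else "dom:multi"
    if len(countries) == 2:
        return "int:bilat"
    if len(countries) == 3:
        return "int:trilat"
    return "int:quad+"


# A single author with affiliations in two countries still counts as bilateral.
single_author_two_countries = collaboration_type(
    [("Institution A", "Denmark"), ("Institution B", "China")]
)
```

Collab CNCI would then use an expected citation rate keyed by this type in addition to category, year and document type.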

The standard and collab CNCI values for an article were assigned to all institutions on that article. For example, if an article with three institutions had a CNCI of 1.5, each institution was awarded an article count of 1.0 and a CNCI value of 1.5. An institution’s mean CNCI was then calculated by summing the CNCI of all the articles on which the institution was recorded in an author address and dividing this by the number of such articles, over the entire dataset.
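As a minimal sketch of this whole-counting step, assuming each article is represented by its CNCI and the set of institutions in its author addresses (the function name and data layout are hypothetical):

```python
from collections import defaultdict


def mean_cnci_whole(articles):
    """Mean CNCI per institution under whole counting.

    articles: iterable of (cnci, institutions) pairs. Every institution on an
    article receives an article count of 1.0 and the article's full CNCI.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for cnci, institutions in articles:
        for inst in set(institutions):  # count each institution once per article
            totals[inst] += cnci
            counts[inst] += 1
    return {inst: totals[inst] / counts[inst] for inst in totals}


# The three-institution article from the text (CNCI 1.5) plus one invented
# single-institution article (CNCI 0.5).
whole_means = mean_cnci_whole([(1.5, {"A", "B", "C"}), (0.5, {"A"})])
```

Here institution A averages its two articles, while B and C each retain the full 1.5 from their only article.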

Fractional CNCI, also referred to as f-CNCI in the following text, was calculated at the author level following Waltman and van Eck (2015). This method assigns credit to each author, institution, country etc. on a publication based upon the total, deduplicated number of each. This credit is then multiplied by the article’s CNCI to calculate the fractional CNCI value. An institution’s mean CNCI was then calculated by summing the CNCIs on which that institution was recorded in an author address and dividing this by the sum of its total fractional CNCI value. The reader is referred to Waltman and van Eck (2015), as well as Potter et al. (2020), for more thorough descriptions and methodology of each indicator. To investigate temporal changes between each of the CNCI approaches, the absolute differences in CNCI values between the years 2009 and 2018 were compared.
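One common way to express an author-level fractional mean is as a weighted average, with each article weighted by the institution's share of its authors. The sketch below takes that reading and additionally assumes one affiliation per author, which is a simplification; it is not a reproduction of the procedure in Waltman and van Eck (2015), and all names are hypothetical.

```python
from collections import Counter, defaultdict


def fractional_mean_cnci(articles):
    """Weighted mean CNCI per institution under author-level fractional counting.

    articles: iterable of (cnci, author_affiliations), where author_affiliations
    lists one institution per author (assumption: one affiliation per author).
    """
    weighted_sum = defaultdict(float)
    weight_sum = defaultdict(float)
    for cnci, author_affiliations in articles:
        n_authors = len(author_affiliations)
        if n_authors == 0:
            continue  # no address data, so no credit can be assigned
        for inst, n in Counter(author_affiliations).items():
            weight = n / n_authors  # the institution's fractional share
            weighted_sum[inst] += weight * cnci
            weight_sum[inst] += weight
    return {inst: weighted_sum[inst] / weight_sum[inst] for inst in weight_sum}


frac_means = fractional_mean_cnci([
    (2.0, ["A", "A", "B", "C"]),  # A holds 2 of 4 authors: weight 0.5
    (1.0, ["A"]),                 # A holds the only author: weight 1.0
])
# A: (0.5 * 2.0 + 1.0 * 1.0) / (0.5 + 1.0)


def absolute_change(value_2009, value_2018):
    """Absolute difference in an indicator between the two reference years."""
    return value_2018 - value_2009
```

Under this weighting, a heavily co-authored article contributes little to an institution's mean however well cited it is, which is the behaviour the text attributes to fractional counting.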

Results

Temporal CNCI indicator changes

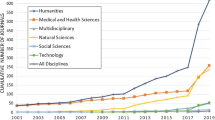

Figure 1 presents CNCI values for all institutions across all three indicator variants for the years 2009 and 2018. All regions, bar North America and Western Europe, show a general increase in s-CNCI over the period. For the other regions, some tend to show a far greater spread in s-CNCI values in 2018 compared to 2009, particularly Eastern Europe. However, some of this spread may be due to the choice of institutions. These results contrast with those seen for fractional and collab CNCI, where there is little difference in overall trends between the two years. North America and Western Europe appear to show a general decline in fractional and collab CNCI. China is the only region where the general trend is an increase in indicator values. Overall, fractional and collab CNCI have largely subdued any improvements seen in s-CNCI.

Fig. 1 A comparison of standard, fractional and collaboration CNCI values between 2009 and 2018 for selected institutions covering 10 global regions. Regional labels refer to: USA and Canada, Eastern Europe (EEur), Middle East-North Africa-Turkey (MENAT), China, Asia–Pacific (APAC), Southeast Asia (SEAsia), Nordic Europe (Nordic), Western Europe (WEur), Africa, and Mexico and South America (MexSAm)

Between the two reference years, some institutions distance themselves from their peers for s-CNCI, notably University of Adelaide, Australia; Shanghai Institute of Biological Sciences, China; and Niels Bohr Institute, Copenhagen, Denmark. However, when considering fractional and collab CNCI, many of these are no longer unique (e.g., another institution has a similar value) or fall back into the main group. Only the University of Adelaide remains in a unique position across all three indicators. Massachusetts Institute of Technology (MIT), by contrast, declines across all three indicators though remains the top performing institution. Uniquely, King Abdullah University of Science and Technology (KAUST), Saudi Arabia, was an anomaly in s-CNCI, though not the other indicators, in 2009 (incidentally the year in which the university was officially established). However, by 2018, it had become an outlier for fractional and collab CNCI. Though its s-CNCI value increased, it is now joined by King Abdulaziz University, Saudi Arabia, as a standout performer in the MENAT region.

Figure 2 converts the data in Fig. 1 to absolute differences plotting s-CNCI and f-CNCI against c-CNCI to show relative relationships between the indicators. When comparing absolute s-CNCI and c-CNCI changes over the ten-year period, institutions generally have a greater s-CNCI improvement (s-CNCI = 1.158 × c-CNCI). When comparing f-CNCI and c-CNCI changes, institutions generally have greater c-CNCI improvements (f-CNCI = 0.904 × c-CNCI).
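The relationships quoted above have the form y = b × x with no intercept. The fitting procedure is not stated in the text, so the sketch below simply assumes an ordinary least-squares line forced through the origin; the function name and the sample values are invented for illustration.

```python
def slope_through_origin(x, y):
    """Least-squares slope b for the no-intercept model y = b * x."""
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    sum_xx = sum(xi * xi for xi in x)
    if sum_xx == 0:
        raise ValueError("x must contain at least one non-zero value")
    return sum(xi * yi for xi, yi in zip(x, y)) / sum_xx


# Invented 2009-to-2018 changes for four hypothetical institutions.
delta_c = [0.10, 0.20, -0.10, 0.40]   # change in c-CNCI
delta_s = [0.12, 0.22, -0.12, 0.48]   # change in s-CNCI
slope_s_vs_c = slope_through_origin(delta_c, delta_s)
```

A slope above 1 indicates that changes in the indicator on the y-axis are, on average, larger than the corresponding changes in c-CNCI; a slope below 1 indicates the reverse.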

Chinese institutions strongly follow the identity (x = y) line (gradient of 0.97 and 0.89 for s-CNCI and f-CNCI, respectively, vs c-CNCI; Supplementary Fig. 1, Supplementary Table 1) with the vast majority showing increases in all three CNCI variants and consequently plotting in the first quadrant (+ x, + y). African institutions show a similar trend, but with the caveat of only 11 institutions included. Southeast Asia and MENAT institutions also tend to follow the identity line, though more institutions fall into the second (+ x, − y) and third (− x, − y) quadrants showing decreases in at least one variant; North American institutions mainly plot in these quadrants too. APAC, Western Europe, Nordic Europe, and Mexico/South America institutions are generally concentrated around the origin; Mexico/South America tend to follow the identity line, while the other regions are more concentrically clustered, with Western Europe more dispersed among the four quadrants with many of its institutions showing decreasing values. Eastern Europe institutions tend to plot in the first quadrant, but with a far greater s-CNCI increase than c-CNCI increase.

Institutions of note include Northwestern Polytechnical University (NPU), Xi’an, China, which exhibits significant increases across all CNCI variants (0.78 for s-CNCI, 0.71 for f-CNCI, and 0.74 for c-CNCI) and MIT, Cambridge, USA, which exhibits a decline in all variants (−0.24 in s-CNCI, −0.49 in f-CNCI, and −0.38 in c-CNCI). Some institutions have mixed outcomes; for example, Tulane University, New Orleans, USA, has s-CNCI and c-CNCI increases of 0.49 and 0.16, respectively, but a decline of 0.17 in f-CNCI. However, for most institutions with mixed outcomes, one change is usually minor (i.e., < 0.05).

Fractional and collab CNCI rely, to differing extents, on the number of collaborating countries and institutions on papers. Figure 3 illustrates the division between article outputs and their citation share for each collaboration type for selected institutions.

Fig. 3 Total articles and total citations by collaboration type for selected institutions over the period 2009–2018. Collaboration types: domestic single institutional (dom:single), domestic multi-institutional (dom:multi), international bilateral (int:bilat), trilateral (int:trilat) and quadrilateral plus (int:quad+). Note different scales

Over 50% of Niels Bohr Institute output is international quadrilateral plus. Additionally, these papers account for 60% of all citations received. Overall, the institute’s output is dominated by international collaboration (~ 90%). There is no domestic single output as the institute is part of the University of Copenhagen.

NPU’s output is heavily domestic (77%). International quadrilateral plus output is negligible (< 1%). Notably, the share of citations per group closely follows the article share, though international papers received a slightly larger share of citations. Seoul National University is also highly domestic (72%), mainly domestic multi (54%). Domestic single and international bilateral have similar output (~18%). International quadrilateral plus accounts for ~6% of output but nearly 19% of citations.

MIT has an almost equal split between domestic (49%) and international (51%) output. International quadrilateral plus articles account for 20% of citations compared to 12% of output.

Table 1 compares the average number of institutional partners at each collaboration level in 2009 and 2018 for the same institutions presented in Fig. 2. Almost all institutions increase their average partnerships across all collaboration types. Some increases are incremental (NPU international trilateral partnerships increase from 5.3 to 5.4), while others amount to one whole institution (e.g., University of Adelaide international trilateral from 5.0 to 6.0); for international quadrilateral plus, the increase can be tens of partners (e.g., Tulane University from ~21 to ~88; Niels Bohr Institute from ~17 to 109; note that NPU had no international quadrilateral plus papers in 2009). Similar patterns are seen for median values (Supplementary Table 2); these values are almost identical to the means apart from international quadrilateral plus, where medians are generally far lower than means (e.g., Niels Bohr Institute), though there are exceptions (MIT and Seoul National University in 2009). Table 1 also illustrates that the relative percentage of international papers has increased for all shown institutions. International papers were the main output of MIT and University of Adelaide in 2018. NPU’s international output more than doubled from 12 to 28%, though the institution is still very much domestically focused. Across the complete dataset, 20% of articles had only a single institution; 26% had two institutions; 22% had five or more institutions; 4% had 10 or more.

Discussion

General trends

Well-established research regions (such as North America and Western Europe) generally saw stagnation or declines in CNCI values; however, their values remained competitive compared to other regions’ institutions. For example, MIT, which is likely seen as a prestigious institution to partner with, declined in all three indicators over the period but remained the top ranked institution in 2018. Such trends are likely a consequence of well performing institutions having only marginal increases in indicator values, or decreases, as they cannot continue to perform at the same level. This is analogous to the Red Queen Race in game theory where one must run fast to stay in the same place and even faster to move forward. Africa and Mexico/South America institutional gains in CNCI were generally nullified when looking at f-CNCI or c-CNCI. This is corroborated by previous results, though at the national level, that showed fractional counting generally benefits large research economies (Potter et al., 2020). Eastern Europe and China do see increases in c-CNCI over the period in question. This suggests that their research (unlike that of Africa and Mexico/South America), when analysed by collaboration type, is performing better than that of their peers. Overall, results illustrate that international collaboration is generally increasing over the period, further corroborating the results of Potter et al. (2022) as well as others (e.g., Adams & Gurney, 2018; Ribeiro et al., 2018).

Chinese citation patterns

Chinese institutions appear relatively immune to the choice of CNCI indicator, showing similar increases across all three; accounting for collaboration either fractionally or by collaboration type seems to make little difference, in contrast to other world regions (Figs. 1, 2, Supplementary Fig. 1, Supplementary Table 1). Several factors could explain this phenomenon. First, China’s scientific output has grown rapidly over the last 20 years (Leydesdorff & Zhou, 2005; Stahlschmidt & Hinze, 2018; Wang, 2016), making it the second largest scientific producer globally (behind USA) in the most recent year of this analysis (2018). Secondly, Chinese publication citation characteristics differ from the worldwide citation distribution (Stahlschmidt & Hinze, 2018); Chinese researchers tend to cite their fellow citizens more often (Bakare & Lewison, 2017; Shehatta & Al-Rubaish, 2019), as well as citing authors from some Asian countries more than others (Tang et al., 2015). Additionally, Chinese authored research tends to have longer reference lists than the world average (Stahlschmidt & Hinze, 2018). These all provide more opportunities for Chinese citations. Finally, articles authored by Chinese researchers are increasing their presence in the references of other countries’ publications, while USA authored articles, for example, are experiencing a slow decline (Khelfaoui et al., 2020).

Highly cited researchers

Unusual increases in CNCI indicator values may also be due in part to hiring policies, particularly hiring of Highly Cited Researchers. These researchers, selected by the Institute for Scientific Information (ISI) at Clarivate, have demonstrated significant and broad influence reflected in their publication of multiple highly cited papers over the last decade. These highly cited papers rank in the top 1% by citations for a field (or fields) and publication year in the Web of Science. Two institutions that showed anomalously high CNCI values leaving behind their peers in their respective regions were KAUST and University of Adelaide. These institutions saw their number of highly cited researchers increase from 5 to 13 (KAUST) and 5 to 10 (University of Adelaide) between 2014 and 2018 (R. Fry, personal communication, November 7, 2022). While further assessment is outside the focus of this study, the hiring and potential citation impact of HCRs could positively influence an institution’s CNCI.

Collaboration and research focus

Changes and differences in CNCI indicator values could also be due to individual institutions’ research foci. For example, institutions focused on large, highly multi-lateral projects would likely receive low fractional credit regardless of how many citations such work received. This is the case for the Niels Bohr Institute (Figs. 2 and 3, Table 1), whose papers with the most institutions (494, 298, 297) were all CERN-based collaborations (ATLAS Collaboration 2018a, 2018b, 2018c). The increasing trend of highly multi-institutional, multi-lateral papers is seen in Table 1. How these papers perform relative to their peers (i.e., same collaboration type) would influence an institution’s c-CNCI value (e.g., NPU; Fig. 2). Additionally, institutions that mainly publish with no international co-authors may see the likelihood of their work being noticed by others diminished, but this could depend on the size of their national research base (e.g., NPU, located in China; Figs. 2, 3). We should expect collaboration to be influenced by many factors, of which research discipline will be one because of links between the research and the need for teams and facilities. As fields of research vary between institutions, this could impact the type and size of collaborations formed. These topics will be covered in future analyses.

These results, therefore, suggest that the collaborations institutions make may influence CNCI changes over time. This could include the hiring of Highly Cited Researchers, who may relatively quickly amass citations, directly influencing CNCI values. Additionally, collab CNCI demonstrates how research performs relative to its collaboration group. As previously shown (Potter et al., 2022), such analysis could highlight cases where an institution’s domestic research outperforms its more international and collaborative output. However, such insights should not discourage increased collaboration; they merely highlight that CNCI as a single metric does not provide a thorough view of performance and that other factors may be influential and should be considered by research and policy managers.

Conclusions

The standard CNCI indicator provides only a snapshot of an institution’s performance, hiding underlying factors. By including fractional and collaboration CNCI alongside the standard approach, a fuller ‘profiles not metrics’ view can be produced, demonstrating the complementarity of these indicators. However, to fully appreciate and understand the meaning of CNCI value changes, research and policy managers must understand the formulation behind each CNCI indicator, recognising the (dis)advantages. Additionally, in an increasingly collaborative world, it is vital to understand how an institution’s collaboration profile is changing over time, as collaboration complicates analysis and requires more nuanced approaches. Furthermore, collaboration partnerships and citation patterns, which directly influence CNCI calculations, must be considered. Evaluating and comparing the performance of institutions is a main driver of funding and research direction decisions. Managers must understand all aspects of CNCI indicators, including secondary factors, to be able to make better informed decisions.

Data availability

All the background data are available to academic researchers in institutions that subscribe to the Web of Science.

References

Adams, J. (2013). The fourth age of research. Nature, 497(7451), 557–560. https://doi.org/10.1038/497557a

Adams, J. (2018). Information and misinformation in bibliometric time-trend analysis. Journal of Informetrics, 12, 1063–1071. https://doi.org/10.1016/j.joi.2018.08.009

Adams, J., & Gurney, K. A. (2018). Bilateral and multilateral coauthorship and citation impact: Patterns in UK and US international collaboration. Frontiers in Research Metrics and Analysis, 3, 12. https://doi.org/10.3389/frma.2018.00012

Adams, J., Gurney, K., & Marshall, S. (2007). Profiling citation impact: A new methodology. Scientometrics, 72(2), 325–344. https://doi.org/10.1007/s11192-007-1696-x

Adams, J., McVeigh, M., Pendlebury, D. & Szomszor, M. (2019a). Profiles, not metrics. Global Research Report, Clarivate Analytics, London

Adams, J., Pendlebury, D. A., & Potter, R. (2022). Making it count: research credit management in a collaborative world. Clarivate.

Adams, J., Pendlebury, D., Potter, R., & Rogers, G. (2023). Unpacking research profiles: Moving beyond metrics. Clarivate.

Adams, J., Pendlebury, D., Potter, R., & Szomszor, M. (2019b). Multi-authorship and research analytics. Clarivate Analytics.

Adams, J., & Szomszor, M. (2022). A converging global research system. Quantitative Science Studies, 3(3), 715–731. https://doi.org/10.1162/qss_a_00208

Aksnes, D. W., Schneider, J. W., & Gunnarsson, M. (2012). Ranking national research systems by citation indicators. A comparative analysis using whole and fractionalised counting methods. Journal of Informetrics, 6, 36–43. https://doi.org/10.1016/j.joi.2011.08.002

ATLAS Collaboration, Aaboud, M., Aad, G., et al. (2018a). Combination of inclusive and differential tt̄ charge asymmetry measurements using ATLAS and CMS data at √s=7 and 8 TeV. Journal of High Energy Physics, 33. https://doi.org/10.1007/JHEP04(2018)033

ATLAS Collaboration, Aaboud, M., Aad, G., et al. (2018b). Search for dark matter and other new phenomena in events with an energetic jet and large missing transverse momentum using the ATLAS detector. Journal of High Energy Physics, 126. https://doi.org/10.1007/JHEP01(2018)126

ATLAS Collaboration, Aaboud, M., Aad, G., et al. (2018c). Search for electroweak production of supersymmetric states in scenarios with compressed mass spectra at √s=13 TeV with the ATLAS detector. Physical Review D, 97, 052010. https://doi.org/10.1103/PhysRevD.97.052010

Bakare, V., & Lewison, G. (2017). Country over-citation ratios. Scientometrics, 113, 1199–1207. https://doi.org/10.1007/s11192-017-2490-z

Bartneck, C., & Kokkelmans, S. (2011). Detecting h-index manipulation through self-citation analysis. Scientometrics, 87, 85–98. https://doi.org/10.1007/s11192-010-0306-5

BIS. (2009). International comparative performance of the UK research base. Department for Business, Innovation and Skills.

Bornmann, L. (2020). How can citation impact in bibliometrics be normalized? A new approach combining citing-side normalization and citation percentiles. Quantitative Science Studies, 1(4), 1553–1569. https://doi.org/10.1162/qss_a_00089

Bornmann, L., & Williams, R. (2020). An evaluation of percentile measures of citation impact, and a proposal for making them better. Scientometrics, 124, 1457–1478. https://doi.org/10.1007/s11192-020-03512-7

Bozeman, B., & Youtie, J. (2017). The strength in numbers: The new science of team science. Princeton University Press. https://doi.org/10.2307/j.ctvc77bn7

Burrell, Q., & Rousseau, R. (1995). Fractional counts for authorship attribution: A numerical study. Journal of the American Society for Information Science, 46, 97–102.

Carlsson, H. (2009). Allocation of research funds using bibliometric indicators—asset and challenge to Swedish higher education sector. InfoTrend, 64(4), 82–88.

Egghe, L., Rousseau, R., & van Hooydonk, G. (2000). Methods for accrediting publications to authors or countries: Consequences for evaluation studies. Journal of the American Society for Information Science and Technology, 51(2), 145–157. https://doi.org/10.1002/(SICI)1097-4571(2000)51:2%3c145::AID-ASI6%3e3.0.CO;2-9

Fister, I., Jr., Fister, I., & Perc, M. (2016). Toward the discovery of citation cartels in citation networks. Frontiers in Physics. https://doi.org/10.3389/fphy.2016.00049

Garfield, E. (1955). Citation indexes for science: A new dimension in documentation through association of ideas. Science, 122(3159), 108–111. https://doi.org/10.1126/science.122.3159.108

Garfield, E. (1977). Can citation indexing be automated? Essay of an information scientist (Vol. 1, pp. 84–90). ISI Press.

Garfield, E. (1979). Is citation analysis a legitimate evaluation tool? Scientometrics, 1(4), 359–375. https://doi.org/10.1007/BF02019306

Gauffriau, M. (2021). Counting methods introduced into the bibliometric research literature 1970–2018: A review. Quantitative Science Studies, 2(3), 932–975. https://doi.org/10.1162/qss_a_00141

Glänzel, W., & De Lange, C. (2002). A distributional approach to multinationality measures of international scientific collaboration. Scientometrics, 54(1), 75–89. https://doi.org/10.1023/a:1015684505035

Glänzel, W., & Schubert, A. (2004). Analyzing scientific networks through co-authorship. In H. F. Moed, W. Glänzel, & U. Schmoch (Eds.), Handbook of quantitative science and technology research: The use of publication and patent statistics in studies of S&T systems (pp. 257–276). Kluwer Academic Publishers.

Heneberg, P. (2016). From excessive journal self-cites to citation stacking: Analysis of journal self-citation kinetics in search for journals, which boost their scientometric indicators. PLoS ONE, 11(4), e0153730. https://doi.org/10.1371/journal.pone.0153730

Hicks, D., & Katz, J. S. (1996). Science policy for a highly collaborative science system. Science and Public Policy, 23, 39–44. https://doi.org/10.1093/spp/23.1.39

Holden, G., Rosenberg, G., & Barker, K. (2005). Bibliometrics: A potential decision making aid in hiring, reappointment, tenure and promotion decisions. In G. Holden, G. Rosenberg, & K. Barker (Eds.), Bibliometrics in social work (pp. 67–92). Routledge.

Jappe, A. (2020). Professional standards in bibliometric research evaluation? A meta-evaluation of European assessment practice 2005–2019. PLoS ONE, 15(4), e0231735. https://doi.org/10.1371/journal.pone.0231735

Katz, J. S., & Martin, B. R. (1997). What is research collaboration? Research Policy, 26, 1–18. https://doi.org/10.1016/S0048-7333(96)00917-1

Khelfaoui, M., Larrègue, J., Larivière, V., & Gingras, Y. (2020). Measuring national self-referencing patterns of major science producers. Scientometrics, 123(2), 979–996. https://doi.org/10.1007/s11192-020-03381-0

Kronman, U., Gunnarsson, M., & Karlsson, S. (2010). The bibliometric database at the Swedish Research Council: Contents, methods and indicators. Swedish Research Council.

Leydesdorff, L., & Shin, J. C. (2011). How to evaluate universities in terms of their relative citation impacts: Fractional counting of citations and the normalization of differences among disciplines. Journal of the American Society for Information Science and Technology, 62(6), 1146–1155. https://doi.org/10.1002/asi.21511

Leydesdorff, L., & Zhou, P. (2005). Are the contributions of China and Korea upsetting the world system of science? Scientometrics, 63, 617–630. https://doi.org/10.1007/s11192-005-0231-1

Moed, H. F. (2005). Citation analysis in research evaluation (p. 347). Springer. https://doi.org/10.1007/1-4020-3714-7

Moed, H. F. (2017). Applied evaluative informetrics (p. 317). Springer. https://doi.org/10.1007/978-3-319-60522-7

Narin, F., Stevens, K., & Whitlow, E. S. (1991). Scientific co-operation in Europe and the citation of multinationally authored papers. Scientometrics, 21, 313–323. https://doi.org/10.1007/BF02093973

Potter, R. W. K., & Kovač, M. (2023). Tracking Category Normalized Citation Impact (CNCI) changes: Benefits of combining standard, collaboration and fractional CNCI for performance evaluation and understanding. Proceedings of ISSI 2023: The 19th International Conference on Scientometrics and Informetrics, 2, 363–369. https://doi.org/10.5281/zenodo.8428859

Potter, R. W. K., Szomszor, M., & Adams, J. (2020). Interpreting CNCIs on a country-scale: The effect of domestic and international collaboration type. Journal of Informetrics, 14(4), 101075. https://doi.org/10.1016/j.joi.2020.101075

Potter, R. W. K., Szomszor, M., & Adams, J. (2022). Comparing standard, collaboration and fractional CNCI at the institutional level: Consequences for performance evaluation. Scientometrics, 127, 7435–7448. https://doi.org/10.1007/s11192-022-04303-y

Ribeiro, L. C., Rapini, M. S., Silva, L. A., & Albuquerque, E. A. (2018). Growth patterns of the network of international collaboration in science. Scientometrics, 114, 159–179. https://doi.org/10.1007/s11192-017-2573-x

Schubert, A., Glänzel, W., & Braun, T. (1988). Against absolute methods: Relative scientometric indicators and relational charts as evaluation tools. In A. F. J. van Raan (Ed.), Handbook of quantitative studies of science and technology. Elsevier.

Shehatta, I., & Al-Rubaish, A. M. (2019). Impact of country self-citations on bibliometric indicators and ranking of most productive countries. Scientometrics, 120, 775–791. https://doi.org/10.1007/s11192-019-03139-3

Sivertsen, G., Rousseau, R., & Zhang, L. (2019). Measuring scientific contributions with modified fractional counting. Journal of Informetrics, 13(2), 679–694. https://doi.org/10.1016/j.joi.2019.03.010

Small, H. G. (1982). Citation context analysis. In B. Dervin & M. Voigt (Eds.), Progress in communication sciences (Vol. 3, pp. 287–310). Ablex.

Stahlschmidt, S., & Hinze, S. (2018). The dynamically changing publication universe as a reference point in national impact evaluation: A counterfactual case study on the Chinese publication growth. Frontiers in Research Metrics and Analytics, 3, 30. https://doi.org/10.3389/frma.2018.00030

Tang, L., Shapira, P., & Youtie, J. (2015). Is there a clubbing effect underlying Chinese research citation increases? Journal of the Association for Information Science and Technology, 66(9), 1923–1932. https://doi.org/10.1002/asi.23302

Thelwall, M. (2020). Large publishing consortia produce higher citation impact research but coauthor contributions are hard to evaluate. Quantitative Science Studies, 1(1), 290–302. https://doi.org/10.1162/qss_a_00003

van Hooydonk, G. (1997). Fractional counting of multiauthored publications: Consequences for the impact of author. Journal of the American Society for Information Science, 48(10), 944–945. https://doi.org/10.1002/(SICI)1097-4571(199710)48:10%3c944::AID-ASI8%3e3.0.CO;2-1

Wagner, C. S., & Leydesdorff, L. (2005). Network structure, self-organization, and the growth of international collaboration in science. Research Policy, 34, 1608–1618. https://doi.org/10.1016/j.respol.2005.08.002

Waltman, L., & van Eck, N. J. (2013). Source normalized indicators of citation impact: An overview of different approaches and an empirical comparison. Scientometrics. https://doi.org/10.1007/s11192-012-0913-4

Waltman, L., & van Eck, N. J. (2015). Field-normalized citation impact indicators and the choice of an appropriate counting method. Journal of Informetrics, 9(4), 872–894. https://doi.org/10.1016/j.joi.2015.08.001

Waltman, L., & van Eck, N. J. (2019). Field normalization of scientometric indicators. In W. Glänzel, H. F. Moed, U. Schmoch, & M. Thelwall (Eds.), Springer handbook of science and technology indicators. Springer Nature.

Wang, L. (2016). The structure and comparative advantages of China’s scientific research: Quantitative and qualitative perspectives. Scientometrics, 106(1), 435–452. https://doi.org/10.1007/s11192-015-1650-2

Wang, X., & Zhang, Z. H. (2020). Improving the reliability of short-term citation impact indicators by taking into account the correlation between short- and long-term citation impact. Journal of Informetrics, 14(2), 101019. https://doi.org/10.1016/j.joi.2020.101019

Zhang, L. H., & Wang, X. (2021). Two new field normalization indicators considering the reliability of citation time window: Some theoretical considerations. 18th International Conference on Scientometrics and Informetrics (ISSI) (pp. 1307–1317).

Zitt, M., & Small, H. (2008). Modifying the journal impact factor by fractional citation weighting: The audience factor. Journal of the American Society for Information Science and Technology, 59(11), 1856–1860. https://doi.org/10.1002/asi.20880

Acknowledgements

This paper is an extended version of a conference paper originally presented at the 19th International Society for Scientometrics and Informetrics (ISSI) conference in 2023.

Funding

No external funding was required for this research.

Ethics declarations

Conflict of interest

All authors are associated with the Institute for Scientific Information, which is part of Clarivate, the owner of the Web of Science.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Potter, R.W.K., Kovač, M. & Adams, J. Tracking changes in CNCI: the complementarity of standard, collaboration and fractional CNCI in understanding and evaluating research performance. Scientometrics (2024). https://doi.org/10.1007/s11192-024-05028-w