Abstract

As the open access movement has gained widespread popularity in the scientific community, academic publishers have gradually adapted to the new environment. The pioneer open access journals have turned themselves into megajournals, and the subscription-based publishers have established open access branches and have turned subscription-based journals into hybrid ones. Perhaps the most dramatic outcome of the open access boom is the market entry of such fast-growing open access publishers as Frontiers and Multidisciplinary Digital Publishing Institute (MDPI). By 2021, in terms of the number of papers published, MDPI had become one of the largest academic publishers worldwide. However, the publisher’s market shares across countries and regions show an uneven pattern. Whereas in such scientific powers as the United States and China, MDPI has remained a relatively small-scale player, it has gained a high market share in Europe, particularly in the Central and Eastern European (CEE) countries. In 2021, 28 percent of the SCI/SSCI papers authored/co-authored by researchers from CEE countries were published in MDPI journals, a share that was as high as the combined share of papers published by Elsevier and Springer Nature, the two largest academic publishers in the world. This paper seeks to explain the rapidly growing share of MDPI in the publication outputs of CEE countries by choosing Hungary as a case study. To do this, we first reveal some unique features of MDPI through data analysis. Then, we present the results of a questionnaire survey conducted among Hungary-based researchers regarding MDPI and the factors that motivated them to publish in MDPI journals. Our results show that researchers generally consider MDPI journals sufficiently prestigious, emphasizing the importance of the inclusion of MDPI journals in the Scopus and Web of Science databases and their high ranks and impacts. However, most researchers posit that the quick turnaround time that MDPI journals offer is the top driver of publishing in such journals.

Introduction

Since the early 1990s, open access publishing has become an increasingly popular way of disseminating research findings (Björk & Solomon, 2012a). In contrast to publishers and journals operating according to the traditional subscription-based business model, open access publishers and journals do not impose subscriptions, licensing fees, or pay-per-view fees; they remove licensing restrictions and, in most cases, allow authors to retain the copyright (Suber, 2019). According to a report issued by Frontiers, in 2017, 16 percent of peer-reviewed papers worldwide were published in fully open access journals (Frontiers, 2018), whereas, according to Pinfield and Johnson (2018), in 2016, 25 percent of peer-reviewed papers indexed in Scopus were available in an open access form. A recent report by Pollock and Michael (2021) estimates that in 2020, 36 percent of all scholarly articles were published as paid-for open access. Publishing in open access journals is also fostered by major funders such as the National Institutes of Health and the Wellcome Trust, which require their grantees to publish their research results in open access journals or deposit them in open access repositories (Björk & Solomon, 2012a). Furthermore, the open access movement might receive additional impetus from the launch of Plan S, an initiative of cOAlition S, a consortium of leading research funders (Van Noorden, 2020). It must be noted that in 2020, ERC, one of the most reputed research funders in Europe, announced its withdrawal from cOAlition S. However, ERC’s decision will most probably not jeopardize the further development of the open access movement because the funder expressed its commitment to support open access, but independently from cOAlition S. Overall, the share of papers published in an open access way in the global publication output has significantly increased in the past decades, and the trend is expected to continue (see, for example, Laakso et al., 2011; Piwowar et al., 2018; Simard et al., 2020).

As open access has gained tremendous support from the scientific community in the past decades, many open access publishers have been established and many fully open access journals have been launched (see, for example, Asai, 2020). Björk and Solomon (2012b) point out that in the early 2000s, BioMed Central and Public Library of Science (PLoS), the two most notable advocates of the new business model, emerged, and soon became prominent actors in the arena of academic publishing. As a response to the open access boom and the success of open access publishers, such traditional subscription-based publishers as Elsevier, Springer (now Springer Nature), Wiley, BMJ Publishing Group and IEEE have gradually become involved in the open access business by either launching fully open access journals or transforming subscription-based journals into hybrid ones, offering authors the opportunity to make their article openly accessible upon payment of the article processing charge (Erfanmanesh & Teixeira da Silva, 2019; Pinfield et al., 2017; Zhang & Watson, 2017).

Many open access journals have turned themselves into so-called megajournals. Synthesizing the megajournal definitions of Björk (2015, 2018), Norman (2012), Siler et al. (2020), and Spezi et al. (2017), the main criteria of megajournals are as follows: big publication volume, soundness-only peer review, broad subject area or multidisciplinary scope, full open access with moderate APC, and fast peer review and rapid publishing. Most researchers agree that PLOS ONE can be considered the pioneer of megajournals (Petersen, 2019; Siler et al., 2020), and it is still one of the most widely known representatives of its kind. Since its launch in 2006, the business model of PLOS ONE has been successfully adopted by other open access publishers such as PeerJ, Frontiers, and Hindawi, and the open access branches of such subscription-based publishers as Elsevier (Heliyon), Springer Nature (Scientific Reports), IEEE (IEEE Access), and BMJ Publishing Group (BMJ Open). As a result, open access megajournals have significantly contributed to the increase in the publication volume of most leading publishing houses. For example, in 2021, 10.64 percent of all articles and review articles published by Springer Nature appeared in just three open access (mega) journals (Scientific Reports, Nature Communications, and Journal of High Energy Physics), although the publisher’s total SCI/SSCI-indexed journal portfolio reached 1828 titles (data come from Web of Science’s InCites).

However, none of the pioneer open access publishers and the open access branches of the subscription-based publishers have experienced such a robust expansion in the number of open access articles as Frontiers and, above all, Multidisciplinary Digital Publishing Institute (commonly known as MDPI) (Petrou, 2020). MDPI launched its first journal, Molecules, in 1996, the same year it was founded under the name of “Molecular Diversity Preservation International”. This was followed by the launch of International Journal of Molecular Sciences in 1999. In 2001, Sensors, the next journal of MDPI, was founded, followed by Marine Drugs in 2003. In its quiet early years, MDPI’s portfolio covered only a handful of journals that published a slowly growing number of articles; however, around 2006–2008, MDPI implemented a change in its business strategy, allowing it to publish an explosively growing number of articles in a rapidly increasing number of journals. In 2021, MDPI published approximately 390 peer-reviewed journals, of which 205 were included in Web of Science, and 99 were listed by Science Citation Index (SCI) and Social Sciences Citation Index (SSCI) (MDPI, 2022). In parallel, based on the number of SCI/SSCI articles published annually, by 2021, MDPI had positioned itself as the third largest academic publisher in the world.

It is well documented that Elsevier, Springer Nature, and other major subscription-based publishers such as Wiley and Taylor & Francis have been dominating the global academic publishing market for a long time, occupying leading positions among the academic publishers in most countries worldwide (Hagve, 2022; Larivière et al., 2015). However, MDPI has only recently joined the exclusive group of top publishers, and the geographical pattern of its market shares across countries has not yet been investigated. Nevertheless, there are some hints that some regions are particularly important for MDPI. For example, the fact that five out of the publisher’s 15 offices are located in Central and Eastern European (CEE) countries (Serbia: Belgrade and Novi Sad; Romania: Bucharest and Cluj; Poland: Krakow) might be a clue to the importance of that region for MDPI.

In this paper, we will demonstrate that MDPI has gained extremely high shares in the publication outputs of CEE countries. Then, we will seek an explanation of this pattern by analyzing some major features of MDPI journals that are welcomed by CEE researchers. By surveying scholars from Hungarian institutions, we will map what factors motivated researchers to publish in MDPI journals and how they judged the prestige of MDPI and the journals it publishes. The main goal of this analysis is to explain why MDPI offers a perfect venue for CEE researchers to publish their findings, and how some historical factors characterizing CEE countries help MDPI gain a strong position in the region. For CEE researchers, it is not only a rational choice to publish in MDPI journals, but also a decision that is affected by a sort of path dependency.

The paper’s organization is as follows: After the Introduction, we will include the Data and Methodology section to present the databases we used for the data analysis and the methodology of the compilation of the questionnaire. Then, in the Results section, we will map the geographical pattern of MDPI’s market shares worldwide and demonstrate some unique features of MDPI journals that might lure CEE researchers to publish in them. Following this, we will analyze the results of the survey. Finally, we discuss the results, draw conclusions, present the research limitations, and sketch out some future research directions.

Data and methods

Mapping the market shares of MDPI worldwide

First, we analyzed and mapped the geographical pattern of MDPI’s market shares among academic publishers worldwide. To do this, we scrutinized and compared the output data of leading publishers by country in 2011, 2016, and 2021. Data for this analysis were retrieved from Clarivate’s InCites Benchmarking and Analytics platform. We considered only SCI and SSCI articles and review articles (henceforward: articles). For the mapping, we used ArcGIS 10.6 software. Only those countries whose researchers produced at least 2000 articles in 2021 were included in the mapping. After carrying out this analysis phase, we obtained a clear picture of the worldwide market share pattern of MDPI. Then, we picked the CEE region and Hungary for further analysis.

Data analysis of MDPI journals

In the second data analysis phase, we aimed to show and compare the turnaround times of articles produced or co-produced by Hungary-based researchers in 2021. The turnaround time (TaT) of an article was defined as the interval between the date when the journal received the article and the date when the article was first published online. We reviewed the publication history of 2575 SCI/SSCI articles, grouped by the academic publishers that have a significant market share in Hungary (see the list of journals in Appendix 1). In 2021, Hungary-based researchers produced/co-produced 10,499 SCI/SSCI articles, of which 70 percent were published by Elsevier, MDPI, Springer Nature, Wiley, Frontiers, Taylor & Francis, Oxford University Press, and Public Library of Science. Naturally, there were considerable differences between the market shares of academic publishers; for example, Elsevier published about nine times as many articles as Oxford University Press (i.e., 2160 vs. 242). For Elsevier and Springer Nature, we reviewed the publication histories of more than 300 articles each; for Wiley and Frontiers, more than 200 articles each; and for Taylor & Francis and Oxford University Press, more than 100 articles each. This means we scrutinized the publication history of 1178 articles published by subscription-based academic publishers. For subscription-based publishers, we paid particular attention to including gold open access, hybrid gold open access, and non-open access journals in the analysis with roughly the same shares (the ratios are as follows: 39% GOA, 30% GHIB, 31% NOA).

For MDPI, we reviewed the publication history of 1005 articles, so the total numbers of articles reviewed for subscription-based publishers and for MDPI were comparable. Besides the fully open access Frontiers journals (which are very popular across the CEE region), we included PLOS ONE (published by Public Library of Science) and IEEE Access (published by IEEE) in the analysis. We did this for two reasons: First, we realized that PLOS ONE and IEEE Access were popular journals for Hungarian researchers, making it reasonable to study them carefully. Second, we wanted to compare the turnaround time statistics of journals whose publishers employed a business model similar to that of MDPI. Finally, because Scientific Reports, the leading open access journal of Springer Nature, seemed to be the most popular journal for Hungarian researchers in terms of the number of articles published (and one of the top non-MDPI journals in other CEE countries), we decided to pay special attention to reviewing the turnaround times of Scientific Reports’ articles.

The review of turnaround times was impossible to automate, so we had to check the publication history of every single article one by one.
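The turnaround-time definition used in this section can be illustrated with a minimal sketch. It assumes that the two dates of each publication history have already been collected by hand; the dates below are hypothetical examples rather than data from the study, and the function name `turnaround_days` is introduced only for illustration.

```python
from datetime import date
from statistics import mean, median


def turnaround_days(received: date, published_online: date) -> int:
    """Days between receipt by the journal and first online publication."""
    if published_online < received:
        raise ValueError("publication date precedes received date")
    return (published_online - received).days


# Hypothetical publication histories: (received, first published online).
histories = [
    (date(2021, 1, 4), date(2021, 2, 12)),
    (date(2021, 3, 1), date(2021, 4, 15)),
    (date(2021, 5, 10), date(2021, 10, 1)),
]

tats = [turnaround_days(received, online) for received, online in histories]
median_tat = median(tats)  # robust to a few very slow articles
mean_tat = mean(tats)      # pulled upward by long outliers
print(tats, median_tat, mean_tat)
```

Reporting both statistics is useful because a single article with a very long publication history raises the mean considerably while leaving the median almost unchanged.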

Questionnaire survey among Hungary-based researchers

Whereas the results of the data analysis let us expose some special features of MDPI journals and compare those features with those of other publishers’ journals, the data can provide only an indirect explanation of why researchers published in MDPI journals. To obtain a clearer picture of researchers’ motivation, we surveyed Hungary-based researchers who authored or co-authored at least one article published in SCI/SSCI-listed MDPI journals in 2021. In that year, Web of Science contained 1765 SCI/SSCI-indexed articles with Hungarian authorship/co-authorship. We retrieved and carefully reviewed the articles’ affiliation data reported by the authors and extracted the Hungarian email addresses from them. By doing this, we compiled a dataset of 5356 email addresses. Then, to increase accuracy, we removed duplicates from the dataset, thereby reducing the number of email addresses by 27 percent, and finally obtained 3694 items. Following the compilation of the dataset of email addresses, we asked the researchers to fill out a questionnaire.
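The compilation of the address list can be sketched as follows. This is only an illustration under stated assumptions: the text does not say how Hungarian addresses were identified, so the `.hu` top-level-domain filter is a hypothetical rule, and the function name `unique_emails` and the sample addresses are invented for the example.

```python
def unique_emails(addresses, tld=".hu"):
    """Return de-duplicated addresses ending in `tld`, in first-seen order.

    Assumption: an address counts as Hungarian if it ends in ".hu";
    the study may have used a different criterion.
    """
    seen = set()
    result = []
    for raw in addresses:
        email = raw.strip().lower()  # normalize case and stray whitespace
        if not email.endswith(tld) or email in seen:
            continue
        seen.add(email)
        result.append(email)
    return result


# Hypothetical input extracted from affiliation data.
raw_addresses = [
    "Anna.Kovacs@example.hu",
    "anna.kovacs@example.hu ",
    "coauthor@example.org",
    "b.nagy@example.hu",
]
cleaned = unique_emails(raw_addresses)
print(cleaned)
```

Normalizing case and whitespace before comparing matters because the same author often appears on several articles with slightly different spellings of the same address.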

In the questionnaire, we asked 16 questions classified into five main categories. The first category contained questions about the factors that had made researchers submit their manuscript(s) to MDPI journal(s). Then, we wanted to find out if researchers were engaged in producing review reports for MDPI journals and whether the vouchers provided as rewards for creating the reviews motivated them to publish in MDPI journals. In the third question-category, we asked researchers whether their employer applied performance assessment to determine one’s salary and whether articles published in MDPI journals are fully considered in the assessment of individuals’ publication performance. The fourth question-category was included in the questionnaire to map what researchers think about the prestige of MDPI and the journals in which they published. Furthermore, we requested researchers to express their opinion about the prestige of MDPI compared to other publishers. Finally, we asked researchers to report their personal details, including their age, their highest academic degree/title, their research area, and the type of the institutions they were affiliated with. This latter question package let us sort out the responses received for the questions in the first four categories based on the features of the researchers (e.g., age cohorts).

We sent out the invitation emails with LimeSurvey 1.92 software between May 18 and June 1, 2022, to the 3694 email addresses in the dataset. Due to the unavailability of researchers and the deletion of resigned employees’ email accounts, 150 of the emails we sent were rejected automatically in the survey period. Furthermore, 30 individuals unsubscribed instantly from the mailing list. By June 1, 2022, when we closed the survey, we had collected 629 fully completed questionnaires and 89 incomplete ones. During the analysis, we considered only the fully completed questionnaires.

Results

MDPI: A major global publisher with uneven market share patterns across countries and regions

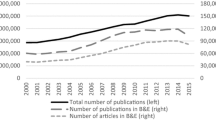

From its foundation in 1996 until the mid-2000s, the number of SCI/SSCI articles published by MDPI slowly increased. However, due to a shift in its business strategy around 2006–2008, the publisher has extensively broadened its portfolio and has published an explosively growing number of articles. For example, in 2011, MDPI published approximately 4000 SCI/SSCI articles and review articles (henceforward: articles), slightly fewer than Journal of Applied Physics, a journal of the American Institute of Physics, published on its own; however, in 2021, with more than 210,000 SCI/SSCI articles, MDPI was ranked third among the largest academic publishers, right behind Elsevier and Springer Nature. Between 2011 and 2016, both MDPI and Frontiers (and Hindawi to a lesser extent) witnessed robust growth in the number of articles published (477 and 721 percent, respectively); however, between 2016 and 2021, MDPI published a dramatically growing number of articles year by year, resulting in a growth rate of 1085 percent and leaving Frontiers far behind (Table 1). In other words, in that 5-year period, MDPI’s growth rate in the number of articles published was 7.13 and 8.89 times higher than that of Elsevier and Springer Nature, respectively, a pace that might challenge the currently leading publishers in the future.

Table 1 shows that Elsevier currently tops the ranking as the largest publisher in the world, followed by Springer Nature and MDPI. These data might suggest that the three publishers have a homogeneous market share pattern across countries. Indeed, in 2021, in terms of the number of SCI/SSCI articles published, Elsevier was the largest academic publisher in 83 percent of the countries involved in the analysis (see Appendices 2−4), and Springer Nature occupied the second position in 65 percent of the countries. In 15 percent of the countries, MDPI was the top-ranked academic publisher in terms of market share, and in 22 percent of countries, it came second in the ranking. However, if we observe the countries where MDPI has a leading role among academic publishers (Fig. 1), we can discover a unique geographical pattern. Whereas China- and United States-based authors accounted for the largest share of MDPI articles in 2021 (30 percent of the articles), the publisher acquired only low market shares in these scientific powers. In China, the market share of MDPI in terms of the number of articles published was 5.92 percent, and in the United States, MDPI had a market share of 5.17 percent. Furthermore, the market share of MDPI among academic publishers is relatively low in such leading countries in science as Canada (6.18 percent), Denmark (7.05 percent), India (3.62 percent), Japan (8.07 percent), the Netherlands (7.34 percent), Singapore (5.54 percent), Sweden (7.86 percent), Switzerland (7.31 percent), and the United Kingdom (6.26 percent).

However, MDPI has managed to acquire powerful market positions in Central and Eastern European (CEE) and, to a lesser extent, South European countries (Greece, Italy, Malta, Portugal, and Spain) (Fig. 1).

In 2021, 34.18 percent and 33.41 percent of the articles authored by Romania- and Poland-based authors, respectively, were published in MDPI journals; in both countries, this share was higher than that of Elsevier and Springer Nature combined. The market share of MDPI was above 30 percent in Lithuania as well, reached almost 30 percent in Latvia and Slovakia, and exceeded 20 percent in Croatia and Slovenia. In Bulgaria, with almost 19 percent of the market share, MDPI was the top academic publisher, whereas, in the Czech Republic and Hungary, MDPI occupied the second position behind Elsevier, with a difference of 2–3 percent between them. However, considering the explosive growth of the market share of MDPI in CEE countries in the past 11 years (2011–2021) (Fig. 2), we can expect a further increase in the dominance of the publisher. Furthermore, if we focus only on articles produced without international collaboration, the shares of MDPI in CEE countries become even higher (Appendix 5). In Latvia, Lithuania, and Poland, the share of articles published in MDPI journals by only domestic authors exceeded 40 percent in each country. In Romania, it reached almost 50 percent (i.e., every second paper authored by only Romania-based researchers was published in an MDPI journal). In contrast, in countries where MDPI has a low market share, such as the United States, the United Kingdom, and Japan, the shares were even lower when only articles produced by domestic authors were considered.

Obviously, there must be many reasons behind the uneven worldwide market share patterns of MDPI. However, we hypothesize that the extreme dominance of MDPI in CEE countries can be explained by some factors common to the region, including the similar evolution path of these countries’ science systems, the favoring of metrics over prestige in research evaluation, and the increasing pressure on researchers to publish more and more papers. If we combine these factors, MDPI seems to be the panacea for meeting these challenges.

Rise of megajournals in MDPI’s portfolio

After reviewing MDPI’s Annual Report of 2021, we can conclude that expansion is a core strategy for the publisher (MDPI, 2022). In Sect. 3.1, we demonstrated that the number of articles published by MDPI has been increasing explosively in the past decade. However, there is a less tangible but highly impactful component of MDPI’s strategy, according to which the flagship journals have gradually been transformed into megajournals (see, for example, Orduña-Malea & Aguillo, 2022; Repiso et al., 2021). In 2011, many of the now-leading MDPI journals did not even exist. Sustainability, one of the flagship journals of MDPI, published 121 articles in 2011, and by publishing 1331 articles in 2016, it was ranked 91st on the list of the top journals. However, in 2021, after experiencing approximately 1000 percent growth in the number of articles published within six years, Sustainability (along with International Journal of Molecular Sciences, International Journal of Environmental Research and Public Health, and Applied Sciences-Basel) was included in the exclusive group of journals publishing more than 10,000 articles per year (Table 2, Appendix 6). Furthermore, it seems quite possible that in the next few years, PLOS ONE (which published 28 percent fewer articles in 2021 than in 2016) will be surpassed by Sustainability, which would then become the number two megajournal behind Scientific Reports. Although MDPI published 99 SCI/SSCI-indexed journals in 2021, 45.5 percent of its articles appeared in its top 10 megajournals.

As mentioned earlier, megajournals are generally characterized by high inclusivity, broad disciplinary or multidisciplinary scope, and soundness-only peer review. In the Discussion section, we will demonstrate that, in one way or another, these features also characterize MDPI megajournals, fundamentally impacting the decision of CEE researchers on where to submit their manuscripts.

Applying extremely short turnaround times

According to Norman (2012), Petrou (2020), and Siler et al. (2020), open access megajournals tend to apply relatively fast peer review and editing, and rapid publishing. For these journals, one of the main tools to accelerate the publishing process is to request referees to produce their review reports within a period of 7–10 days (e.g., most of the MDPI journals and Scientific Reports) (see, MDPI: The MDPI Editorial Process, and Scientific Reports: Guide to referees). By doing this, the turnaround times of articles could be shortened significantly.

As Table 3 demonstrates, for traditional subscription-based publishers (i.e., Elsevier, Oxford University Press, Springer Nature, Taylor & Francis, and Wiley), the median turnaround time is 141 days, while the average turnaround time is higher (177.06 days). According to the general belief, open access journals are characterized by a rapid publishing model; however, we found that both the median and the average turnaround times of articles published by Frontiers journals, PLOS ONE, and Scientific Reports were broadly similar to those of subscription-based publishers (median turnaround times: 3.90 months [117 days] to 4.90 months [147 days]; average turnaround times: 4.51 months [135.34 days] to 5.46 months [163.70 days]).

Nevertheless, neither the subscription-based publishers nor Frontiers, PLOS ONE, and Scientific Reports apply such short turnaround times as IEEE Access and MDPI. For IEEE Access, both the median and the average turnaround times of articles were slightly shorter than for MDPI, which can be explained by the fact that IEEE Access’ disciplinary scope is quite narrow (it publishes only engineering papers). As for MDPI, we found that the median turnaround time of articles was 1.3 months (39 days), and the average turnaround time was 1.40 months (41.99 days). That is, for authors, MDPI offers turnaround times that are only one-third to one-quarter as long as those of its rivals.
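The relation between these figures can be checked with a short calculation. The sketch below uses only the median values reported in this section; the 30-day month is an assumption inferred from the reported day/month pairs (e.g., 117 days = 3.90 months), and the dictionary labels are descriptive names chosen for illustration.

```python
DAYS_PER_MONTH = 30  # assumption inferred from the reported day/month pairs


def days_to_months(days: float) -> float:
    """Convert days to months using a fixed 30-day month."""
    return round(days / DAYS_PER_MONTH, 2)


mdpi_median_days = 39

# Median turnaround times (days) reported in this section.
comparator_medians = {
    "subscription-based publishers": 141,
    "other open access, lower bound": 117,
    "other open access, upper bound": 147,
}

# MDPI's median expressed as a fraction of each comparator's median.
ratios = {
    name: round(mdpi_median_days / days, 2)
    for name, days in comparator_medians.items()
}

print(days_to_months(mdpi_median_days))
print(ratios)
```

The resulting fractions fall between roughly one-quarter and one-third, which is consistent with the comparison made in the text.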

In addition, if we compare the maximum time that passed between the submission and the first publication of an article, we can see that for some publishers, it exceeded 1000 days. Frontiers journals and Scientific Reports also experienced maximum turnaround times of over 500–600 days. In contrast, for MDPI, the maximum turnaround time of an article was only 155 days, which is shorter than even the average turnaround time of articles published by subscription-based publishers (177.06 days).

Figure 3 demonstrates the turnaround times of 2575 SCI/SSCI articles authored/co-authored by Hungary-based researchers in 2021. The articles are classified into five turnaround time categories: less than 1 month, 1−3 months, 4−6 months, 7−12 months, and more than 12 months. As we can see, the highest share of articles for almost all publishers and journals falls into the turnaround time category of 4−6 months. However, publishers differ in which turnaround time category contains the second highest share of articles. For example, for subscription-based publishers and PLOS ONE, the category of 7−12 months contains the second highest share of articles, whereas, for Frontiers, the second highest share of articles has turnaround times between 1 and 3 months.

However, for MDPI, two-thirds of the articles belong to the turnaround time category of 1−3 months, and almost one-third of them have a turnaround time of less than one month. If we investigate the median and average turnaround times of the MDPI articles (671 items) in the category of 1−3 months, we find that they are 45 and 47.48 days, respectively (i.e., ca. 1.5 months). That is, in the category containing the highest share of MDPI articles authored/co-authored by Hungarian researchers, the median and average turnaround times are much closer to the minimum threshold value (31 days) than to the maximum one (91 days).
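The classification into turnaround time categories can be sketched as follows. Only the 31-day and 91-day thresholds of the 1−3 months category are stated in the text; the remaining cut-offs (182 and 365 days) are assumptions made for illustration, as are the function name `tat_category` and the sample values.

```python
from collections import Counter


def tat_category(days: int) -> str:
    """Assign a turnaround time in days to one of five categories.

    The 31- and 91-day thresholds come from the text; the 182- and
    365-day cut-offs are assumed for this sketch.
    """
    if days < 31:
        return "less than 1 month"
    if days <= 91:
        return "1-3 months"
    if days <= 182:
        return "4-6 months"
    if days <= 365:
        return "7-12 months"
    return "more than 12 months"


# Hypothetical turnaround times in days.
sample_tats = [25, 39, 45, 47, 120, 400]
counts = Counter(tat_category(d) for d in sample_tats)
shares = {cat: n / len(sample_tats) for cat, n in counts.items()}
print(shares)
```

Computing shares per category rather than raw counts keeps publishers with very different sample sizes comparable.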

The short time (7−10 days) provided for the referees by most MDPI journals is sometimes criticized as a factor that might negatively affect the quality of the review and is considered a characteristic of predatory journals by some (see, Oviedo-García, 2021). However, we must note that such highly reputed open access journals as Scientific Reports, Scientific Data (both published by Springer Nature) and PLOS ONE also request the referees to conduct the review within 10 days. Nevertheless, none of the publishers and open access megajournals can offer as short turnaround times as MDPI. As discussed in Sect. 3.4, the short turnaround times are crucial for CEE researchers, significantly influencing their decision regarding the submission of the manuscripts.

Results of the survey

The survey analysis is based on 629 fully completed questionnaires produced by Hungary-based researchers (see the questionnaire in Appendix 7). In the first section of the questionnaire, we wanted to determine why researchers had chosen an MDPI journal to publish their papers. We found that 79.81 percent of the respondents considered the journals’ Scimago Journal Rank (SJR) Q1/Q2 classification to be an important factor affecting their decision to publish in MDPI journals (Fig. 4). With 71.70 percent of the responses, “fast reviewing and publishing” (i.e., a specialty of MDPI) was ranked second among the factors. Another quality measure was the journals’ high impact factor value (Saha et al., 2003), which was indicated by 62.80 percent of the respondents as an attractive feature of MDPI journals. Based on the percentage of the responses (i.e., 61.69 percent), this was followed by the open access publishing offered by MDPI. Every other factor was indicated to be important by less than 50 percent of the respondents. We asked researchers if the low/moderate APCs offered by MDPI journals were an attractive factor, but surprisingly, only 7.47 percent of the respondents marked this to be important. That is, either the APCs of MDPI journals were higher than we had assumed, or the researchers had enough funds to pay the APC, or they were able to reduce the APC by receiving discounts and exemptions.

In conclusion, the decision of Hungary-based researchers about choosing MDPI journals for publishing research results is basically affected by three factors: 1) (relatively) high quality indicators of journals, 2) short turnaround times, and 3) open access publishing option.

Then we asked researchers if they had been involved in reviewing publications submitted to MDPI journals. Results showed that 61.05 percent of the respondents produced reviews for MDPI journals in 2021 or before. Of the respondents involved in the reviewing process, 59.90 percent used the reward vouchers they received from the journals to reduce the amount of the APC. However, only 48.26 percent of these voucher users (i.e., 28.90 percent of all who carried out reviews) asserted that their decision to publish in an MDPI journal was affected by the opportunity to reduce the APC by redeeming the vouchers. The voucher-based reward model applied by MDPI thus appears to be an attractive factor for only about half of the reviewers who redeemed vouchers (who represent 17.65 percent of all respondents). While acknowledging the effectiveness of MDPI’s voucher-based reward model, the results suggest that the authors had to find additional funding to cover the APC.
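The nested percentages in this paragraph can be reproduced by chaining the reported shares. The sketch below uses only the three percentages given in the text; the helper name `chain` is introduced for illustration, and small deviations in the second decimal are expected because the published shares are themselves rounded.

```python
def chain(*percentages: float) -> float:
    """Multiply nested percentage shares and return the result in percent."""
    share = 1.0
    for p in percentages:
        share *= p / 100
    return share * 100


reviewed_for_mdpi = 61.05        # % of all respondents
used_vouchers = 59.90            # % of those who reviewed
influenced_by_vouchers = 48.26   # % of those who used vouchers

# Share of all reviewers whose decision was influenced (about 28.9 percent).
influenced_among_reviewers = chain(used_vouchers, influenced_by_vouchers)

# Share of all respondents whose decision was influenced (about 17.65 percent).
influenced_among_respondents = chain(
    reviewed_for_mdpi, used_vouchers, influenced_by_vouchers
)

print(round(influenced_among_reviewers, 2))
print(round(influenced_among_respondents, 2))
```

The calculation makes explicit that each percentage refers to a progressively smaller group: all respondents, then reviewers, then voucher users.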

Concerning the above topic, we wanted to find out what funding the authors used to cover the APC. As we expected, the highest share of the respondents (i.e., 64.07 percent of them) indicated that they fully or partly paid the APC of the papers published in MDPI journals from national and EU research funding and scholarships. In addition, 39.11 percent of the respondents redeemed the vouchers received for reviewing to offset or reduce the APC. Furthermore, for one-third of the researchers, the employer financially supported the payment of the APC, and in the case of 29.09 percent of the respondents, the APC was paid by co-authors. Finally, 10.97 percent of the respondents indicated that they used their own money to fully or partly cover the APC. We wanted to obtain information about the sources the authors used to pay the APC because some universities (e.g., University of Szeged) and research institutions (e.g., ELRN Centre for Economic and Regional Studies) have decided to no longer provide financial support for researchers to cover the APC of MDPI journals, and we have been unofficially informed that in Poland, governmental funds may not be used for this purpose in the future.

Following this, we asked researchers whether their employers applied performance assessment to determine employees’ salaries and, if so, whether the publication performance of individuals was considered as part of the assessment. In response to this question, 59.94 percent of the respondents indicated that the employer applied performance assessment to determine salaries, but according to 4.77 percent of the respondents, publication performance was not considered by the employer. We also wanted to find out which of the quality parameters of journals met the assessment requirements applied by the employer. Of the respondents, 49.76 percent indicated that only journals with SJR Q1/Q2 classification were included in the assessment. For this question, such quality indicators as the journals’ indexation in Web of Science/Scopus and the journals having an impact factor were mentioned by almost the same share of respondents (i.e., 29.57 and 29.73 percent, respectively). This response, along with the one given to the journal selection question, indicates that the employers primarily consider the Scimago Journal Rank; however, some of them also take the impact factor value into account, suggesting that there is no uniform position or standard in the Hungarian institutions regarding the application of quality indicators.

Finally, the last question in this section of the questionnaire aimed to investigate whether articles published in MDPI journals were considered in the performance assessment. Of the respondents, 85.88 percent informed us that MDPI journals were included in the assessment, and only 2.88 percent replied that they were excluded from the procedure.

The core of the final section of the questionnaire was to obtain information about how the researchers judge the prestige of MDPI and that of the journals in which their papers were published. We asked the researchers to rate the reputation of MDPI and of MDPI journals on a scale ranging from 1 to 5, where 1 indicated low and 5 indicated high reputation. As for the reputation of the publisher, a third of the respondents (i.e., 32.75 percent of them) chose 3 on the scale, judging the reputation of MDPI to be moderate. However, 29.25 percent and 14.31 percent of the respondents chose 4 and 5 on the scale, respectively. The overall reputation of MDPI thereby reached a score of 3.41, so researchers placed the reputation of MDPI between moderate and good. We also asked researchers to judge the reputation of the MDPI journals in which they published their articles. Naturally, we expected that the researchers would be biased towards the reputation of the journal that they had chosen as a venue for their publications. The findings confirm our expectations: 40.38 percent of the respondents gave a rating of 4, and 23.85 and 22.89 percent of them gave a rating of 3 and 5, respectively. The average score of MDPI journals’ reputation is 3.81, which lets us conclude that researchers considered the reputation of the journals to be good.
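An average reputation score of this kind is the weighted mean of the scale values, with the number of responses at each value as the weights. The sketch below illustrates the calculation only; the response counts are entirely hypothetical (they are not the survey data), and the function name `likert_mean` is an illustrative choice.

```python
def likert_mean(counts):
    """Return the weighted mean of a Likert scale given {score: n_responses}."""
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no responses to average")
    return sum(score * n for score, n in counts.items()) / total


# Hypothetical counts for a 1-5 scale; NOT taken from the survey.
hypothetical_counts = {1: 10, 2: 20, 3: 30, 4: 25, 5: 15}

mean_score = likert_mean(hypothetical_counts)
print(f"Average reputation score: {mean_score:.2f}")
```

Only respondents who actually answered the item enter the denominator, so the mean cannot be reconstructed from percentages that are expressed relative to a different base.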

Concerning the above issue, we asked researchers to pick three academic publishers that published the most reputed journals in their research field. For this question, we compiled a list of ten publishers having the highest market shares in CEE countries (Fig. 5). Most researchers (76.95 percent) indicated Elsevier as the publisher of the most reputed journals, followed by Springer Nature (58.66 percent) and Wiley (33.23 percent). With a share of 25.76 percent, MDPI was ranked fourth on that list, surpassing Taylor & Francis (12.72 percent) and Oxford University Press (12.24 percent).

Discussion

Before discussing the results of the analysis, we need to provide an insight into the evolution of the science systems of CEE countries, revealing some common and unique features of the process that might help us understand why MDPI has been able to gain a leading position in the region.

In the socialist era that started around the late 1940s in most CEE countries and lasted until the end of the 1980s or the beginning of the 1990s, science was under strict political control, and basic research had to serve the interests of industry and the military (Kozlowski et al., 1999). Graham (1993) points out that due to the Soviet ideological influence, sociology, political sciences, and public health were neglected and underprivileged disciplines. During this period, the political isolation of universities and research institutions hindered researchers from getting involved in international research collaborations with Western partners (Dobbins & Kwiek, 2017; Kozak et al., 2015). However, there were some notable exceptions. According to Teodorescu and Andrei (2011: 713), “the scientific communities in Poland and Hungary were already open to the West and enjoyed relatively high levels of international collaboration even before the fall of communism.” Naturally, researchers in communist CEE countries were required to publish scientific papers, but due to the isolation of the national science systems, they primarily published in national journals. As Grančay et al. (2017: 1817) put it, “publishing in international journals were not the main criteria for academic success.” Furthermore, scholars with good political connections could maneuver themselves into a more advantageous position for academic promotions even if they did not meet the academic criteria regarding the quantity and quality of publications. In conclusion, most researchers in the CEE region could not get involved in research collaborations with Western partners and were not required to publish in international journals.

Following the collapse of the communist regimes around 1989/1990, science in CEE countries was liberated, and scholars were free to collaborate with their Western peers and publish in international journals. However, many scholars lacked proper knowledge about which international journals were considered the most prestigious in their respective fields. During the transition, some worrying trends emerged: many scholars started to publish in poor quality “international” journals and predatory journals, or they kept publishing in the local journals in which they used to publish prior to the fall of the regime (Grančay et al., 2017). To halt this negative trend, the governments, academies, and universities developed and launched new assessment criteria for scholars, paying particular attention to the quality measures of publications. It is well documented that in CEE countries, the performance assessment of researchers has become highly metric-based; such indicators as the impact factor and the SJR indicator (more precisely, the journals’ classification in Q1/Q2 categories based on the SJR indicator value) have therefore become crucial factors of the assessment (see, for example, Antonowicz et al., 2017; Csomós, 2020; Grančay et al., 2017; Hladchenko & Moed, 2021; Kristapsons & Tjunina, 1995; Kulczycki et al., 2017; Pajić, 2015; Paruzel-Czachura et al., 2021). When applying for promotions, academic degrees and titles, and national research grants and scholarships, scholars must demonstrate the aggregate bibliometric values of their academic accomplishments, which are considered more important than what research results they have published in which journals. We can conclude that the performance assessment practice in CEE countries prompts researchers to publish in journals with a (relatively high) impact factor and/or SJR Q1/Q2 positions rather than in the journals considered the most prestigious in their respective fields.

However, the case of Hungary is exceptional. Whereas metric-based performance assessment has been applied for decades in CEE countries, the Hungarian government has recently launched a massive reform of the higher education sector that might further increase the pressure on researchers to publish more and faster. Since the middle of 2021, the government has transferred almost all public universities to quasi-public foundations; therefore, the universities may no longer be eligible for direct funding from the governmental budget but must operate similarly to private companies and make special agreements with the government about financing. In return for the financial support, among other indicators that need to be fulfilled, the government requires that universities produce a particular number of SJR Q1/Q2 papers every year. As part of the reform, the salaries of the academic staff have been increased by 30–50 percent, half of which will be provided following the assessment of individuals’ annual research performance. Naturally, a key indicator of the performance assessment is the number of SJR Q1/Q2 papers one publishes in a year. There is little doubt that the new performance assessment environment will reshape the publication strategy of researchers, who will tend to conduct research that requires less time and submit manuscripts to journals that will most probably publish them within a reasonable time.

In parallel with the shift towards a highly metric-based performance assessment that CEE countries have experienced, MDPI journals have gained remarkable market shares in the region. In the following, we will discuss what makes MDPI the perfect solution for researchers to overcome the challenges of the new circumstances.

The first finding of the data analysis is that the flagship MDPI journals have tended to position themselves among the leading megajournals, publishing thousands or even more than ten thousand items yearly. The explosive increase in the publication volume of MDPI journals is worth noting because this growth can only be maintained if the journals gradually adopt a multidisciplinary approach and publish articles from a broad range of disciplines. The megajournal character also implies that the peer review focuses on evaluating the scientific soundness of articles rather than their novelty and impact (Siler et al., 2020). Global science is characterized by a core-periphery structure where the core is occupied by such English-speaking nations as the United States, the United Kingdom, and Australia (Demeter & Toth, 2020; Gui et al., 2019; Leydesdorff & Wagner, 2008; Paasi, 2005). This core group also contains some non-native English-speaking countries from Western Europe and East Asia. However, the CEE countries are still located on the periphery of global science (see, for example, Leydesdorff et al., 2013; Marginson, 2021; Zelnio, 2012), and for researchers from the periphery, it is highly challenging to compete with researchers from core countries when they want to get published in journals that apply highly rigid selection methodologies and publish only limited numbers of articles per year. In contrast, MDPI megajournals offer high inclusivity and pay particular attention to the assessment of scientific soundness, suggesting that researchers can avoid the fierce competition with researchers from core countries. These features of MDPI journals make them attractive publication venues for researchers located in CEE countries.

The second finding of the data analysis is that MDPI journals are characterized by very short turnaround times. Considering the median turnaround time, MDPI journals conduct the peer review, editing, and online publishing of papers in 39 days. In contrast, both subscription-based publishers and other open access publishers and journals have considerably longer turnaround times, making them uncompetitive with MDPI journals from a CEE perspective. Short turnaround times seem to be one of the most important factors influencing the decision of researchers located in CEE countries about which journals they should publish in. Because the performance assessment criteria applied at promotions focus on journal metrics rather than journal prestige, and the performance-based salaries are determined on an annual basis (i.e., one’s annual publication output is considered), fast publishing has gained key importance for researchers.

The survey results reinforce the importance of the short turnaround times offered by MDPI journals. The indexation of MDPI journals in Web of Science and Scopus is a fundamental factor affecting researchers’ decision to submit manuscripts, primarily if the journals are classified into SJR Q1/Q2 classes and have an impact factor. The voucher-based reward system seems to be somewhat beneficial for CEE researchers engaged in reviewing for MDPI journals because it helps reduce the APC.

One may conclude that for CEE researchers, publishing in MDPI journals is nothing more than the apparent outcome of a rational choice: if they want to climb higher on the career ladder relatively quickly, if they want to publish scientifically sound but less innovative and competitive papers, and if they want to earn more money in the upcoming years, MDPI journals offer the best solution to achieve these goals. However, the survey revealed that the picture was slightly more complex because, despite some criticisms of MDPI journals raised in the scientific community (see, for example, de Vrieze, 2018; Copiello, 2019; Oviedo-García, 2021), Hungary-based researchers considered MDPI journals sufficiently prestigious. Even though researchers judged some subscription-based publishers to be more prestigious, the position of MDPI was rather good in the ranking. In addition, after reviewing the opinions about MDPI that researchers sent us via email, we can state that prestige is a flexible concept strongly linked with such features as trustworthiness and predictability. It is beyond question that MDPI journals put great emphasis on setting the boundaries of the peer review process, an effort that contributes significantly to the improving image of the journals.

Conclusions

The results of our research demonstrate that MDPI has gained remarkable market share in most of the CEE countries. By conducting data analysis focusing on MDPI and the journals it publishes, and a questionnaire survey regarding MDPI among Hungary-based researchers, we managed to look behind the curtain. By offering high inclusivity, short turnaround times, and such journal quality indicators that meet the requirements of performance assessments, MDPI journals perfectly satisfy the demands of researchers in CEE countries who are under high pressure to get published rapidly. This latent cooperation seems to be quite fruitful for both parties and predicts that the market share of MDPI will further increase in the region.

It must be noted that some universities and research institutions (e.g., University of Szeged and ELRN Centre for Economic and Regional Studies) have imposed or are about to impose measures to hold back further increase of MDPI’s share in the publication output. There might be several reasons institution leaders urge affiliated researchers to consider publishing in other journals rather than those published by Frontiers, MDPI, and Public Library of Science. However, publicly disclosed concerns are mostly centered on financials: paying the APCs of papers published in MDPI journals (along with some additional costs) has become a substantial burden for the institutions. To understand this issue, we raise the case of Hungary. In the framework of the Electronic Information Service National Programme (EIS), Hungary has made agreements with the leading subscription-based publishers (including Elsevier, Springer Nature, and Wiley) so that researchers can publish their research open access in both hybrid and gold open access journals without having to pay an APC. In 2022, consortium members of the EIS program paid approximately 5.2 million Euros to Elsevier to access the ScienceDirect database and the open access publishing option in eligible journals (Footnote 2). However, despite all efforts made by the EIS program (i.e., the consortium members) to satisfy the demands of Hungarian researchers for open access publishing, a substantially increasing number of papers are published in MDPI journals. Furthermore, due to the explosively growing number of articles published in MDPI journals with SJR Q1/Q2 classification, institutions are required to pay a considerable amount of money as publication reward and performance-based salaries, further exacerbating the financial issues.

To change this trend, that is, to reduce the ratio of papers published by MDPI and some other open access publishers in the total output, one of the measures introduced by the institutions is the removal of MDPI journals from the list of those open access journals of which APCs can be funded from the institutional budget (Footnote 3). Nevertheless, the effectiveness of such measures is questionable because the APC of many papers published in MDPI journals is funded by national and EU research funders partly or fully. Furthermore, if institution leaders decide to cut back the amount of the publication rewards, researchers may lose their motivation to publish, jeopardizing the accomplishment of the institutional goals needed to obtain governmental funds. In addition, some institution leaders posit that as long as such prominent databases as Web of Science and Scopus include the journals published by MDPI, thereby guaranteeing their quality, there is nothing to worry about (Footnote 4).

Our research has certain limitations. In the questionnaire survey, we involved Hungary-based researchers who published in MDPI journals in 2021, so we took only a snapshot of a single country in the CEE region. However, we think that due to the similar evolution path of the science systems and the dominance of metric-based performance assessments applied in CEE countries, there is not much difference between the circumstances and motivations of researchers across the region. In addition, we did not investigate the attitude of researchers towards other open access publishers and journals (e.g., Frontiers, PLOS ONE, and Scientific Reports) and subscription-based publishers. As for the questionnaire survey, it seems reasonable to repeat it from a different angle and find out the opinion of researchers who have never published in MDPI journals.

Finally, in the future, the geographical scope of the research should be widened. Firstly, more information should be acquired from other CEE countries to corroborate our findings. Secondly, when mapping MDPI’s market share worldwide, we realized that the pattern we detected for the CEE region is similar to that of the Mediterranean region. It would therefore be reasonable to investigate the latter region more deeply to see whether we can find common features with the CEE region. Thirdly, we should find out why MDPI has remained a marginal player in the United States, the United Kingdom, and China. We have taken the first step in this research, but many questions remain that we should answer in the future.

Notes

ERC, 2020: ERC Scientific Council calls for open access plans to respect researchers’ needs. https://erc.europa.eu/news/erc-scientific-council-calls-open-access-plans-respect-researchers-needs.

The EIS contract between Elsevier and the Library and Information Centre of the Hungarian Academy of Sciences is available at this link: https://eisz.mtak.hu/images/szerzodesek/ScienceDirect_2022.pdf

See, for example, the announcement of the University of Szeged Klebelsberg Kuno Library indicating that, due to financial issues, the Library will no longer be able to pay the APCs of MDPI (and Frontiers) journals: http://szerzoknek.ek.szte.hu/szabalyvaltozas-az-open-access-tamogatas-es-a-lektoralasi-szolgaltatasokban/

References

Antonowicz, D., Kohoutek, J., Pinheiro, R., & Hladchenko, M. (2017). The roads of ‘excellence’ in Central and Eastern Europe. European Educational Research Journal, 16(5), 547–567.

Asai, S. (2020). Market power of publishers in setting article processing charges for open access journals. Scientometrics, 123(2), 1037–1049.

Björk, B.-C. (2015). Have the "mega-journals" reached the limits to growth? PeerJ, 2015(5), e981, 1–11.

Björk, B.-C. (2018). Evolution of the scholarly mega-journal, 2006–2017. PeerJ. https://doi.org/10.7717/peerj.4357

Björk, B.-C., & Solomon, D. (2012a). Open access versus subscription journals: A comparison of scientific impact. BMC Medicine, 10(73), 1–10.

Björk, B.-C., & Solomon, D. (2012b). Pricing principles used by scholarly open access publishers. Learned Publishing, 25(2), 132–137.

Chawla, D. S. (2021). Hundreds of ‘predatory’ journals indexed on leading scholarly database. Nature News. https://doi.org/10.1038/d41586-021-00239-0

Copiello, S. (2019). On the skewness of journal self-citations and publisher self-citations: Cues for discussion from a case study. Learned Publishing, 32(3), 249–258.

Csomós, G. (2020). Introducing recalibrated academic performance indicators in the evaluation of individuals’ research performance: A case study from Eastern Europe. Journal of Informetrics. https://doi.org/10.1016/j.joi.2020.101073

de Vrieze, J. (2018). Open-access journal editors resign after alleged pressure to publish mediocre papers. Science Insider, https://www.science.org/content/article/open-access-editors-resign-after-alleged-pressure-publish-mediocre-papers.

Demeter, M., & Toth, T. (2020). The world-systemic network of global elite sociology: The western male monoculture at faculties of the top one-hundred sociology departments of the world. Scientometrics, 124(3), 2469–2495.

Dobbins, M., & Kwiek, M. (2017). Europeanisation and globalisation in higher education in Central and Eastern Europe: 25 years of changes revisited (1990–2015). European Educational Research Journal, 16(5), 519–528.

Erfanmanesh, M., & Teixeira da Silva, J. A. (2019). Is the soundness-only quality control policy of open access mega journals linked to a higher rate of published errors? Scientometrics, 120(2), 917–923.

Frontiers (2018). Scientific Excellence at Scale: Open Access journals have a clear citation advantage over subscription journals. Frontiers Announcements, https://blog.frontiersin.org/2018/07/11/scientific-excellence-at-scale-open-access-journals-have-a-clear-citation-advantage-over-subscription-journals/.

Graham, L. R. (1993). Science in Russia and the Soviet Union: A Short History. Cambridge University Press.

Grančay, M., Vveinhardt, J., & Šumilo, Ē. (2017). Publish or perish: How Central and Eastern European economists have dealt with the ever-increasing academic publishing requirements 2000–2015. Scientometrics, 111(3), 1813–1837.

Gui, Q., Liu, C., & Du, D. (2019). Globalization of science and international scientific collaboration: A network perspective. Geoforum, 105, 1–12.

Hagve, M. (2022). The money behind academic publishing. Tidsskrift for Den Norske Legeforening, 140(11), 1–5.

Hladchenko, M., & Moed, H. F. (2021). The effect of publication traditions and requirements in research assessment and funding policies upon the use of national journals in 28 post-socialist countries. Journal of Informetrics. https://doi.org/10.1016/j.joi.2021.101190

Kozak, M., Bornmann, L., & Leydesdorff, L. (2015). How have the Eastern European countries of the former Warsaw Pact developed since 1990? A bibliometric study. Scientometrics, 102(2), 1101–1117.

Kozlowski, J., Radosevic, S., & Ircha, D. (1999). History matters: The inherited disciplinary structure of the post-communist science in countries of Central and Eastern Europe and its restructuring. Scientometrics, 45(1), 137–166.

Kristapsons, J., & Tjunina, E. (1995). Changes in Latvia’s science indicators in the transformation period. Research Evaluation, 5(2), 151–160.

Kulczycki, E., Korzeń, M., & Korytkowski, P. (2017). Toward an excellence-based research funding system: Evidence from Poland. Journal of Informetrics, 11(1), 282–298.

Laakso, M., Welling, P., Bukvova, H., Nyman, L., Björk, B.-C., & Hedlund, T. (2011). The development of open access journal publishing from 1993 to 2009. PLoS ONE. https://doi.org/10.1371/journal.pone.0020961

Larivière, V., Haustein, S., & Mongeon, P. (2015). The oligopoly of academic publishers in the digital era. PLoS ONE. https://doi.org/10.1371/journal.pone.0127502

Leydesdorff, L., & Wagner, C. S. (2008). International collaboration in science and the formation of a core group. Journal of Informetrics, 2(4), 317–325.

Leydesdorff, L., Wagner, C., Park, H.-W., & Adams, J. (2013). International collaboration in science: The global map and the network. Profesional De La Informacion, 22(1), 87–95.

Marginson, S. (2021). What drives global science? The four competing narratives. Studies in Higher Education, 47(8), 1566–1584.

MDPI (2022). Annual Report 2021. MDPI, Communications & Marketing Department, Basel, https://mdpi-res.com/data/2021_annual_report_mdpi.pdf.

Norman, F. (2012). Megajournals, blog post, Occam’s typewriter, http://occamstypewriter.org/trading-knowledge/2012/07/09/megajournals/.

Orduña-Malea, E., & Aguillo, I. F. (2022). Are link-based and citation-based journal metrics correlated? An Open Access megapublisher case study. https://doi.org/10.1162/qss_a_00199

Oviedo-García, M. A. (2021). Journal citation reports and the definition of a predatory journal: The case of the Multidisciplinary Digital Publishing Institute. Research Evaluation, 30(3), 405–419.

Paasi, A. (2005). Globalisation, academic capitalism, and the uneven geographies of international journal publishing spaces. Environment and Planning A, 37(5), 769–789.

Pajić, D. (2015). Globalization of the social sciences in Eastern Europe: Genuine breakthrough or a slippery slope of the research evaluation practice? Scientometrics, 102(3), 2131–2150.

Paruzel-Czachura, M., Baran, L., & Spendel, Z. (2021). Publish or be ethical? Publishing pressure and scientific misconduct in research. Research Ethics, 17(3), 375–397.

Petersen, A. M. (2019). Megajournal mismanagement: Manuscript decision bias and anomalous editor activity at PLOS ONE. Journal of Informetrics, 13(4), 100974.

Petrou, C. (2020). Guest Post – MDPI’s Remarkable Growth. The Scholarly Kitchen, https://scholarlykitchen.sspnet.org/2020/08/10/guest-post-mdpis-remarkable-growth/.

Pinfield, S., & Johnson, R. (2018). Adoption of open access is rising – but so too are its costs. LSE Impact Blog, https://blogs.lse.ac.uk/impactofsocialsciences/2018/01/22/adoption-of-open-access-is-rising-but-so-too-are-its-costs/.

Pinfield, S., Salter, J., & Bath, P. A. (2017). A “Gold-centric” implementation of open access: Hybrid journals, the “Total cost of publication”, and policy development in the UK and beyond. Journal of the Association for Information Science and Technology, 68(9), 2248–2263.

Piwowar, H., Priem, J., Larivière, V., Alperin, J. P., Matthias, L., Norlander, B., Farley, A., West, J., & Haustein, S. (2018). The state of OA: A large-scale analysis of the prevalence and impact of Open Access articles. PeerJ, 2018(2), e4375.

Pollock, D., & Michael, A. (2021). News & Views: Open Access Market Sizing Update 2021. Delta Think, 2021. https://deltathink.com/news-views-open-access-market-sizing-update-2021.

Repiso, R., Merino-Arribas, A., & Cabezas-Clavijo, Á. (2021). El año que nos volvimos insostenibles: Análisis de la producción española en sustainability (2020). Profesional De La Informacion, 30(4), e300409.

Saha, S., Saint, S., & Christakis, D. A. (2003). Impact factor: A valid measure of journal quality? Journal of the Medical Library Association, 91(1), 42–46.

Severin, A., & Low, N. (2019). Readers beware! Predatory journals are infiltrating citation databases. International Journal of Public Health, 64(8), 1123–1124.

Siler, K., Larivière, V., & Sugimoto, C. R. (2020). The diverse niches of megajournals: Specialism within generalism. Journal of the Association for Information Science and Technology, 71(7), 800–816.

Simard, M.-A., Ghiasi, G., Mongeon, P., & Lariviere, V. (2020). National differences in dissemination and use of open access literature. PLoS ONE, 17, e0272730.

Spezi, V., Wakeling, S., Pinfield, S., Creaser, C., Fry, J., & Willett, P. (2017). Open-access mega-journals: The future of scholarly communication or academic dumping ground? A review. Journal of Documentation, 73(2), 263–283.

Suber, P. (2019). Open Access. The MIT Press Essential Knowledge Series.

Teodorescu, D., & Andrei, T. (2011). The growth of international collaboration in East European scholarly communities: A bibliometric analysis of journal articles published between 1989 and 2009. Scientometrics, 89(2), 711–722.

Van Noorden, R. (2020). Open-access Plan S to allow publishing in any journal. Nature. https://doi.org/10.1038/d41586-020-02134-6

Zelnio, R. (2012). Identifying the global core-periphery structure of science. Scientometrics, 91(2), 601–615.

Zhang, L., & Watson, E. M. (2017). Measuring the Impact of Gold and Green Open Access. Journal of Academic Librarianship, 43(4), 337–345.

Funding

Open access funding provided by University of Debrecen.

Ethics declarations

Conflict of interest

The authors have no relevant financial or non-financial interests to disclose.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Csomós, G., Farkas, J.Z. Understanding the increasing market share of the academic publisher “Multidisciplinary Digital Publishing Institute” in the publication output of Central and Eastern European countries: a case study of Hungary. Scientometrics 128, 803–824 (2023). https://doi.org/10.1007/s11192-022-04586-1

DOI: https://doi.org/10.1007/s11192-022-04586-1