Abstract

The average Category Normalised Citation Impact (CNCI) of an institution’s publication output is a widely used indicator for research performance benchmarking. However, it combines all entity contributions, obscuring individual inputs and preventing clear insight and sound policy recommendations if it is not correctly understood. Here, variations (Fractional and Collaboration [Collab] CNCI)—which aim to address the obscurity problem—are compared to the Standard CNCI indicator for over 250 institutions, spread globally, covering a ten-year period using Web of Science data. Results demonstrate that both Fractional and Collab CNCI methods produce lower index values than Standard CNCI. Fractional and Collab results are often near-identical despite fundamentally different calculation approaches. Collab-CNCI, however, avoids assigning fractional credit (which is potentially incorrect) and is relatively easy to implement. As single metrics obscure individual inputs, institutional output is also deconstructed into five collaboration groups. These groups track the increasing international collaboration trend, particularly highly multi-lateral studies and the decrease in publications authored by single institutions. The deconstruction also shows that both Standard and Fractional CNCI increase with the level of collaboration. However, Collab-CNCI does not necessarily follow this pattern thus enabling the identification of institutions where, for example, their domestic single articles are their best performing group. Comparing CNCI variants and deconstructing portfolios by collaboration type is, when understood and used correctly, an essential tool for interpreting institutional performance and informing policy making.

Introduction

While the primary aim of academic research is the enrichment and furthering of knowledge, the proximate unit of research output (particularly in the sciences) is generally a published set of results. Publication rates, however, can vary dramatically between fields, as can citation accumulation (e.g., Garfield, 1977; Hurt, 1987). Citations are widely seen as an indicator of the impact, significance, and influence of academic publications (Cole & Cole, 1973; Garfield, 1955) and, for reasonably large samples, there appears to be a sound relationship between citation counts (specifically, to journal articles) and peer judgments of quality (i.e., higher citation counts are associated with better peer judgment) in science, technology, and many social science fields, although this is less evident in the arts and humanities (Aksnes et al., 2019; Evidence, 2007; Waltman, 2016).

Because citation accumulation is variable in its development, normalisation of accumulated citation counts is required to analyse citation impact across different fields and time periods in order to enable comparisons between institutions, countries etc. Although citation accumulation broadly correlates with peer judgments, there is no definitive ‘reference set’ of research performance measures to which any specific analytical model can be compared. The standard normalisation approach for researchers drawing on data in the Web of Science is to use the Category Normalised Citation Impact (CNCI). This calculates a ratio between an observed count and an expected global average by normalising citation counts by the three principal variables that affect counts to a research paper: document type (e.g., article, review); publication year; and Web of Science subject category.
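As an illustration of the ratio described above, the following minimal sketch computes an observed-over-expected value for a handful of papers. The records, the `expected_citations` and `cnci` function names, and the use of a simple mean as the expected count are assumptions made for this example; they are not the Web of Science implementation.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical toy records: (paper_id, doc_type, year, category, citations)
papers = [
    ("p1", "article", 2015, "Ecology", 10),
    ("p2", "article", 2015, "Ecology", 30),
    ("p3", "article", 2015, "Optics", 4),
    ("p4", "article", 2015, "Optics", 4),
]

def expected_citations(records):
    """Mean citation count for each (doc_type, year, category) cell."""
    cells = defaultdict(list)
    for _, doc_type, year, category, cites in records:
        cells[(doc_type, year, category)].append(cites)
    return {cell: mean(values) for cell, values in cells.items()}

def cnci(records):
    """Observed citations divided by the expected count for the paper's cell.

    Assumes every cell has a non-zero mean citation count.
    """
    baseline = expected_citations(records)
    return {
        pid: cites / baseline[(doc_type, year, category)]
        for pid, doc_type, year, category, cites in records
    }

scores = cnci(papers)
# p2 has 30 citations against a cell mean of 20, so its value is 1.5;
# p3 matches its cell mean exactly, so its value is 1.0.
```

In this toy set, a paper with only four citations can sit at the average for its cell while a paper with ten citations falls below it, which is the point of normalising before comparing across fields.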

CNCI is widely used (as well as other measures) as a standard indicator for both national and institutional comparisons (Jappe, 2020) and considered a key measure by many policymakers. Consequently, it greatly impacts public perception of an entity’s (researcher, institution, country) research portfolio quality and, particularly at the institutional level, can influence funding allocation (Carlsson, 2009). However, a weakness of the CNCI indicator is that it combines all entity contributions, obscuring information on individual inputs and, thereby, preventing clear insight, sound comparisons and policy recommendations (e.g., Szomszor et al., 2021). This is especially important given that the greater the collaboration (i.e., more authors or, particularly, countries) on a published work, the higher that work’s CNCI (Adams et al., 2019; Thelwall, 2020; Waltman & van Eck, 2015). Consequently, despite its prevalence as an indicator, CNCI may have more limited value for management purposes than is often recognised.

It has been suggested that fractional counting (whereby an entity’s share on a research paper is made equal to the fraction of the author addresses from that entity) offers an approach that ‘corrects’ standard CNCI with a more ‘accurate’ reflection of entity input (Waltman & van Eck, 2015). Outcomes of fractional counting have been discussed by several authors (e.g., Aksnes et al., 2012; Burrell & Rousseau, 1995; Egghe et al., 2000; van Hooydonk, 1997); investigated relative to institutions (e.g., Leydesdorff & Shi, 2011) and countries (e.g., Glänzel & De Lange, 2002); and used by national research councils to evaluate work (e.g., Sweden: Kronman et al., 2010); a ‘modified fractional counting’ method has also now been formulated (Sivertsen et al., 2019). Furthermore, Gauffriau (2021), in her review of counting methods, noted that almost 70% (22/32) of suggested counting methods from 1981 to 2018 involved fractionalisation. However, while fractionally assigning credit based purely on address data may reflect participation, it may reflect contribution no more accurately than the standard method. Furthermore, the summation of fractional credits continues to obscure the real distribution of the effort contributed and credit acquired through single and variously multiple-authored papers. This results in a continuing deficit in valid information to support research management as opposed to a relatively simplistic point metric of past achievement.

The Institute for Scientific Information (ISI) proposed an alternative approach to calculating citation impact to address the challenge of turning citation data into management information (Potter et al., 2020). This variant index (Collab-CNCI) clarifies entity contributions by considering different levels of domestic and international authorship collaboration through an additional normalisation in the CNCI calculation. The benefit of distinguishing between different national and collaborative authorship patterns was reported by Gorraiz et al. (2012), who analysed these separately to show that Austria’s international collaboration with European neighbours was a major influence on citation outcomes.

Using the Collab-CNCI index, Potter et al. (2020) were able to provide deeper insight into a country’s research portfolio, including article and citation share for each collaboration type, and compare results to other CNCI indicators. This illustrated the importance of highly multi-lateral articles, particularly via citation share, for developing research economies. Economies such as Georgia and Sri Lanka were more negatively affected when ranked by Collab-CNCI, revealing lower relative performance for the article types compared to more developed research economies (e.g., UK, USA, Australia). Potter et al. (2020) recommended that partitioning by collaboration type should be carried out by analysts to enable improved interpretation for research policy and management decision making. However, Potter et al. (2020) considered their chosen dataset, which covered ten years, as a single block (i.e., they did not consider temporal changes in CNCI indicators), which meant that changes in countries’ level of collaboration and any consequent variation in CNCI indicators over time could not be investigated.

Here, we apply the Collab-CNCI indicator at the institutional level comparing outcomes with those of the standard (henceforth referred to as Standard) and Fractional (i.e., Waltman & van Eck, 2015) CNCI. By analysing a set of global institutions annually over ten years, we compare and examine trends in CNCI indicators, as well as the role of collaborations, to better understand (1) differences between the indicator variants, (2) the performance of articles within each collaboration group and (3) how indicator variants influence perceptions of institutional performance. This work builds upon that of Potter et al. (2021).

Methods

The data source was all documents of type “article” indexed in the Web of Science Core Collection (i.e., including the Emerging Sources Citation Index, ESCI) over the ten-year period from 2009 to 2018. This was the same source as that used in Potter et al. (2020, 2021). As this methodology used only Web of Science data, an equivalent study using data from other indexing services may produce different outcomes.

Institution names were taken from the Enhanced Organisation labels assigned in address data within the Web of Science. To be considered for analysis, institutions had to have (co)authored at least 5000 articles over the ten-year period.

For global coverage, and to expand the work of Potter et al. (2021), institutions were chosen from each of nine geo-political regions (USA and Canada, Mexico and South America, Western Europe, Eastern Europe, Nordic Europe, Middle East-North Africa-Turkey [MENAT], Southeast Asia, China, and Asia-Pacific [APAC]) for analysis (Supplementary Tables 1 and 2). The list of thirty institutions for each region was manually compiled by choosing a structured selection of institutions with high, medium, and low publication volume. For completeness, Africa was included as an additional region even though only 11 institutions covering three countries (Nigeria, South Africa, Uganda) met the 5000-article threshold. The chosen institutions produced a dataset covering 5,470,998 unique articles. This covered 35% of all articles in the original data source (i.e., 5,470,998 articles out of a possible 15,650,080). The calculated Standard, Collab, and Fractional CNCI indicators (explained below) for each institution were then compared to one another. We note that a value of one for any calculated CNCI value from each method represents world average. Consequently, a CNCI value of > 1 represents performance above world average, and vice versa.

Standard CNCI was calculated conventionally by normalising an article’s citation count by document type, year of publication, and Web of Science journal-based subject category. These categories cover 254 subjects with journals sometimes assigned to multiple ones; in these cases, the average CNCI of all an article’s categories was assigned to the article. Each unique institution listed in the article address was allocated full credit. Thus, if an article with two institutions had a CNCI of 1.1, each institution was awarded an article count of 1.0 and a CNCI value of 1.1. An institution’s mean CNCI was then calculated by summing the CNCI of all the articles on which the institution was recorded in an author address and dividing this by the number of such articles, over the entire dataset.
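The full-counting procedure described above can be sketched as follows. The article records, institution names, and the `article_cnci` and `standard_mean_cnci` helpers are hypothetical; the per-category CNCI values are assumed to have been computed already.

```python
from statistics import mean

# Hypothetical inputs: per-category CNCI values for each article, plus the
# deduplicated institutions found in its author addresses.
articles = {
    "a1": {"category_cnci": [1.1], "institutions": {"Inst A", "Inst B"}},
    "a2": {"category_cnci": [0.6, 1.0], "institutions": {"Inst A"}},
}

def article_cnci(article):
    """Average the CNCI over all subject categories assigned to the article."""
    return mean(article["category_cnci"])

def standard_mean_cnci(articles, institution):
    """Full counting: every listed institution receives the article's whole CNCI."""
    values = [
        article_cnci(article)
        for article in articles.values()
        if institution in article["institutions"]
    ]
    if not values:
        raise ValueError(f"no articles found for {institution}")
    return sum(values) / len(values)

mean_a = standard_mean_cnci(articles, "Inst A")  # (1.1 + 0.8) / 2
mean_b = standard_mean_cnci(articles, "Inst B")  # 1.1, from the shared article alone
```

Both institutions on article `a1` are credited with the full value of 1.1, matching the two-institution example in the text.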

Collab-CNCI, defined by Potter et al. (2020), uses collaboration type as an additional normalisation factor to those already considered by Standard CNCI. The five collaboration types, also based on author address data, are: (i) domestic single (authors from the same institution); (ii) domestic multi (authors from more than one institution within the same country); (iii) international bilateral (two countries); (iv) international trilateral (three countries); and (v) international quadrilateral-plus (four or more countries). Though this latter, most collaborative, group covered ~ 4% of the articles, it accounted for ~ 9% of all citations (a similar share to that of international trilateral). The international allocations are made regardless of the number of institutions. Note that, due to multiple affiliations, a single author article could fall into any of these types, although this is exceptional. Each institution on every article was assigned the appropriate collaboration type. As with Standard CNCI, each institution was given full credit and the full CNCI value for an article, but the mean CNCI for each collaboration-type was calculated separately (e.g., only an institution’s international trilateral articles were considered when calculating the institution’s CNCI for the international trilateral article type). We refer the reader to Potter et al. (2020) for further method justification.
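A minimal sketch of the two steps described above, assigning a collaboration type from address data and then normalising within that type, is given below. The `(institution, country)` address format, the function names, and the toy citation counts are assumptions for illustration; Potter et al. (2020) should be consulted for the actual method.

```python
from collections import defaultdict
from statistics import mean

def collaboration_type(addresses):
    """Classify an article from its (institution, country) address pairs.

    International types depend only on the number of countries,
    regardless of how many institutions are involved.
    """
    institutions = {inst for inst, _ in addresses}
    countries = {country for _, country in addresses}
    if len(countries) >= 4:
        return "international quadrilateral-plus"
    if len(countries) == 3:
        return "international trilateral"
    if len(countries) == 2:
        return "international bilateral"
    if len(institutions) > 1:
        return "domestic multi"
    return "domestic single"

def collab_cnci(records):
    """Normalise citations within (doc_type, year, category, collaboration type)."""
    cells = defaultdict(list)
    for _, doc_type, year, category, ctype, cites in records:
        cells[(doc_type, year, category, ctype)].append(cites)
    return {
        pid: cites / mean(cells[(doc_type, year, category, ctype)])
        for pid, doc_type, year, category, ctype, cites in records
    }

# Hypothetical toy records: (paper_id, doc_type, year, category, collab_type, citations)
records = [
    ("p1", "article", 2015, "Ecology", "domestic single", 2),
    ("p2", "article", 2015, "Ecology", "domestic single", 6),
    ("p3", "article", 2015, "Ecology", "international quadrilateral-plus", 30),
    ("p4", "article", 2015, "Ecology", "international quadrilateral-plus", 50),
]
scores = collab_cnci(records)
```

In this toy set the domestic single paper `p2` scores 1.5 against its own group while the quadrilateral-plus paper `p4` scores 1.25, even though `p4` has far more citations. This is the like-for-like effect that the additional normalisation is intended to expose.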

Fractional CNCI was calculated at the author level following the method of Waltman and van Eck (2015). This method assigns credit to each author, institution, country etc. on a publication based upon the total, deduplicated number of each on a document. This credit is then multiplied by the article’s CNCI to calculate the fractional CNCI value. An institution’s mean CNCI was then calculated by summing the CNCIs on which that institution was recorded in an author address and dividing this by the sum of its total fractional CNCI value. We refer the reader to Waltman and van Eck (2015), as well as Potter et al. (2020), for more thorough descriptions and methodology. However, at the author level, not all researchers could be assigned to an organisation (or a country) due to incomplete address data. Consequently, when analysing data at the author level, approximately 3% of articles were not included (when considering the top 20 organisations by article count). For example, the University of Toronto was assigned to 108,047 articles at the article level, but 103,840 articles (∼96%) at the author level. However, given the size of the dataset, there is no reason to expect that outcomes for any one institution would be consistently affected by this.
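The sketch below illustrates one common form of author-level fractional counting. It rests on several assumptions that are not confirmed by the text: each author receives an equal share of the article, an author with several affiliations splits that share equally between them, and the institutional mean is the credit-weighted mean of article CNCI values. The function names and toy data are hypothetical; Waltman and van Eck (2015) give the full method.

```python
from collections import defaultdict

def institution_credits(author_affiliations):
    """Fractional credit per institution for one article.

    author_affiliations holds one list of institutions per author.
    Authors with no recorded affiliation contribute no credit, which
    mirrors the incomplete address data noted in the text.
    """
    credits = defaultdict(float)
    n_authors = len(author_affiliations)
    for affiliations in author_affiliations:
        for inst in affiliations:
            # Assumption: equal split across authors, then across affiliations.
            credits[inst] += 1 / n_authors / len(affiliations)
    return dict(credits)

def fractional_mean_cnci(articles, institution):
    """Credit-weighted mean CNCI (assumed aggregation, see lead-in)."""
    weighted_sum = 0.0
    total_credit = 0.0
    for author_affiliations, cnci in articles:
        credit = institution_credits(author_affiliations).get(institution, 0.0)
        weighted_sum += credit * cnci
        total_credit += credit
    if total_credit == 0:
        raise ValueError(f"no credit found for {institution}")
    return weighted_sum / total_credit

# Hypothetical toy data: (affiliations per author, article CNCI)
articles = [
    ([["A"], ["B"]], 2.0),  # collaborative article, half credit each
    ([["A"]], 1.0),         # single-institution article, full credit
]
frac_a = fractional_mean_cnci(articles, "A")  # (0.5 * 2.0 + 1.0 * 1.0) / 1.5
```

Under full counting, institution A would average 1.5 across these two articles. The fractional value is lower because the more highly cited collaborative article carries only half the weight.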

Results

To illustrate temporal trends in each of the CNCI indicators, the mean Standard, Collab, and Fractional CNCI were plotted for sample institutions from six of the regions. These six provide an initial global comparison of institutions within strongly developed (Denmark, USA), recently developed (China), and developing (Estonia, Malaysia, Saudi Arabia) research economies. Calculated indicator values for all institutions are listed in Supplementary Table 1.

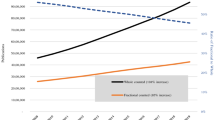

The data show that, in general, Standard CNCI values tend to be higher and show greater variation over time than Collab and Fractional CNCI. Consequently, institutions perform closer to, or below, world average (which, by definition, has a mean value of one) using these latter indicators (Fig. 1).

For example, University of Tartu, Estonia, remained above world Standard CNCI average over all years, substantially increasing its value (1.05 in 2009 compared to 1.85 in 2018). However, this institution is initially below world average using Collab and Fractional CNCI, only exceeding Collab-CNCI world average in 2014 (where it remains) and surpassing the Fractional CNCI world average in only two years (2012 and 2016). Conversely, Tsing Hua University, Beijing, China, sees consistent improvement and little variation across all indicators (though this flattens off and slightly decreases in 2018). University of Copenhagen, Denmark, shows a notable increase in Standard CNCI from 2009 to 2012 before stabilising around 1.8. Collab and Fractional values, however, have remained largely static (around 1.25), with only a minor improvement between 2009 and 2018 (~ 0.06). By contrast, the University of California San Diego, USA, changes from a relatively consistent performer for Standard CNCI (~ 1.9), to a slight decline (0.09 decrease) with Collab-CNCI, and a more moderate decline with Fractional CNCI (0.2 decrease). King Saud University, Riyadh, Saudi Arabia, shows a decrease in Standard CNCI performance from 2011 to 2014 but a significant increase (~ 25%) afterwards. However, its Fractional and Collab CNCI were still only just approaching world average in 2018. The University of Malaya, Kuala Lumpur, Malaysia, shows a continual increase in Standard CNCI, though at a slower rate post-2014, and was significantly above world average in 2018; Fractional and Collab CNCI values, however, never surpassed world average and have decreased post-2015.

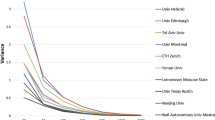

As noted previously, the gross indicator values only provide a single snapshot of an institution’s performance. To deconstruct this, Fig. 2 illustrates the indicator values by collaboration group type (data for all institutions are in Supplementary Table 2). As research becomes more collaborative the indicator value generally increases. This is particularly noticeable for the Standard and Fractional indicators: domestic single articles have the lowest value; international quadrilateral-plus articles have the highest value. In fact, for these two indicators, quadrilateral-plus articles provide the highest values for all institutions over all years bar one (2011 for the University of Tartu where international trilateral is the best performing group). For Standard CNCI, quadrilateral-plus values are generally ~ 2–3 times greater than the other collaborative groups and show greater variance over the ten years.

The collaboration normalising ‘adjustment’ of Collab-CNCI is clear: values for international quadrilateral-plus articles are suppressed resulting in net CNCI values comparable to the other collaboration groups (which are also suppressed in terms of credit or value). King Saud University, for example, has all international quadrilateral-plus articles above world average by the Standard and Fractional indicators, but this is only the case for 2016 to 2018 when considering Collab-CNCI.

For Collab-CNCI, unlike the other two indicators, greater collaboration does not necessarily relate to higher index values because of the like-for-like normalisation within collaboration groups. In fact, all institutions show crossovers between the different collaboration groups. For example, domestic single articles were the best performing group for the University of Malaya in 2011 and 2013, though its more recent performance has rapidly declined. International trilateral articles were the best performing for Tsing Hua University in 2011, 2013 and 2014; international quadrilateral-plus was not the best performing group for UC San Diego until 2012. Indeed, domestic single articles regularly outperform articles with greater institutional collaboration (e.g., University of Copenhagen, where domestic single articles are either the second or third best performing group in any year within the period).

There are broadly similar trend profiles between the three indicators for a given institution, despite the suppression. There are, however, some exceptions. For example, the value of Fractional CNCI for University of Tartu in 2012 spiked above five times world average, while both Standard and Collab CNCI values followed their respective trends with values around 4 and 1.5, respectively.

The deconstruction by collaboration type highlights the changes in article output over the period. While the CNCI indicator values (both overall and by collaboration type) remain stable, the frequency of internationally collaborative articles continuously increases over the period (Fig. 3). King Saud University dramatically increased its proportion of internationally collaborative output between 2009 and 2011 from ~ 45 to ~ 70%, increasing again to ~ 80% in 2016. This institution remains the most internationally collaborative of those highlighted here while others typically show a more gradual increase in international collaboration.

Conversely, domestic single output decreases in percentage share for all institutions. Tsing Hua University, University of Malaya, and King Saud University saw domestic single output halved; University of Copenhagen and University of Tartu almost halved. Domestic multi-institutional output has remained relatively stable for University of Copenhagen and University of Malaya, while decreasing ~ 20% for UC San Diego (64% to 52%), and almost 50% for University of Tartu and King Saud University. Tsing Hua University is the only institution to see an increase in domestic multi-institutional output (37% to 43%). Arguably, international bilateral output has remained the most stable with only incremental changes (King Saud University bilateral output increased ~ 40% between 2009 and 2011 but has remained relatively stable since). International trilateral trends vary substantially: King Saud University output doubled; University of Copenhagen remained constant; while Tsing Hua University and UC San Diego growth matched that of their international quadrilateral-plus articles. Increases in the quadrilateral-plus group have been most significant in some cases (University of Tartu has almost tripled quadrilateral-plus output; University of Malaya output increased over four-fold).

Though the share of total output has decreased for domestic single articles, their absolute volume has remained generally stable for all institutions. Considering other collaboration groups, absolute output has increased steadily for UC San Diego, University of Copenhagen, King Saud University, and University of Malaya. These absolute increases are, however, dwarfed by Tsing Hua University, which saw a considerable absolute increase in international bilateral and domestic multi-institutional articles. University of Tartu article output over the period increased ~ 50%, driven by greater volumes of international collaborative papers as domestic output remained static. Importantly, this resulted in international quadrilateral-plus articles becoming its largest contributor (in 2018) in absolute and percentage terms. For University of Copenhagen, King Saud University, and University of Malaya, international quadrilateral-plus articles had, by the end of the period, a similar output share to domestic multi-institutional articles. UC San Diego and Tsing Hua University doubled and quadrupled, respectively, their quadrilateral-plus output but, given their substantial output in other groups, this group (as well as international trilateral) still provides the lowest share.

Discussion

The results presented in this paper demonstrate clear differences in outcome between the variant methodologies used to calculate Category Normalised Citation Impact (CNCI). The application of both Fractional and Collab CNCI results in lower (and similar) net values compared with Standard CNCI but Collab-CNCI adds significant management information content in revealing the deconstructed sources of citation impact and the role of collaboration in raising the indicator value.

Indicator comparisons

Fractional and Collab CNCI almost always produced a lower indicator value than Standard CNCI. This is because, in the case of Fractional (Waltman & van Eck, 2015), as the label implies, institutions will generally be rewarded with only an arithmetical fraction of an article’s CNCI value where that article is the product of multi-institutional research. For Collab-CNCI (Potter et al., 2020), other institutions’ contributions are considered but this is done by normalising the citation count at the level of the collaboration group (where groups are demarcated by the degree of collaboration).

Fractional and Collab CNCI produced similar values and trends to one another. Since collaborative authorship almost universally results in enhanced citation counts, it can be argued that an indicator that accounts for the collaboration component by partitioning will provide a ‘truer’ depiction of normalised impact. As noted, ‘truth’ is contestable but we would agree that partitioning may indeed be fairer. This similarity in outcome, therefore, raises the question of whether one is better suited to evaluative purposes or if they could be used interchangeably or in a complementary fashion.

Aksnes et al. (2012) and Potter et al. (2020) found similar trends (i.e., citation indicator values were lower using fractional counting compared to full counting) when analysing countries and this can also be applied to institutional analysis. Both those papers also stated that the difference between whole and fractionalised counts was greatest for those countries with the highest proportion of internationally co-authored articles.

Based on their analysis, Aksnes et al. (2012) believe strong arguments exist to favour fractional counting over full counting (especially at national level) stating that national citation indicators “will have greater validity and better justification when fractionalised counts are used.” This was based upon entities being credited with the contributions of other entities’ scientists in whole counting, where those outside contributions may have been greater.

Fractional counting, while seemingly straightforward, can be complicated to calculate (Waltman & van Eck, 2015) and can take many different forms (Gauffriau, 2021). Aksnes et al. (2012), however, used a simplified version of fractional counting relative to the method of Waltman and van Eck (2015), which is used here and in Potter et al. (2020). The method of Aksnes et al. (2012) was based on the number of authors assigned to each country relative to the total number of authors (e.g., if two of three total authors are assigned to country A, then country A would receive two-thirds [2/3] of the credit and CNCI value).
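The two-of-three-authors example can be reproduced with a short sketch. The `country_shares` helper and its input format (one country label per author) are assumptions for illustration and are not taken from Aksnes et al. (2012).

```python
from collections import Counter
from fractions import Fraction

def country_shares(author_countries):
    """Share of credit per country: its authors divided by all authors."""
    counts = Counter(author_countries)
    total = len(author_countries)
    return {country: Fraction(n, total) for country, n in counts.items()}

# Three authors, two assigned to country A and one to country B.
shares = country_shares(["A", "A", "B"])

# Country A's portion of a hypothetical article CNCI of 1.2.
credit_a = float(shares["A"]) * 1.2
```

Here `shares["A"]` is exactly two-thirds, so country A would receive two-thirds of both the article count and the CNCI value.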

Aksnes et al. (2012) stated that the application of these variant indicators would have a major impact on science policy and therefore indicators should be based on sound and consistent methodology, a sentiment with which we agree. However, based on results presented here, and in Potter et al. (2020), we advocate the use of Collab CNCI over those fractional counting methods.

The assignation of credit according to the number of entities (countries, institutions, etc.) listed in author affiliations does not necessarily reflect contributed efforts (though it may reflect participation). This is exemplified by Vollset et al. (2020), a Global Burden of Disease study, whereby contributions, as presented in the article, were not evenly distributed between all 23 authors for the required tasks; and Lozano et al. (2020), a global study on health service effective coverage, with well over 250 contributors, though only six contributed to the first manuscript draft. Though Aksnes et al. (2012) stated that whole counting can provide entities with the contributions of others, fractional counting may still assign incorrect contributions. Indeed, the summed value of fractional credits is a ‘black box’ in which the component contributions are obscured, whereas the Standard method is an overt calculation. Any fractional credit arithmetically assigned to any one entity based on author affiliations must generally be subject to reinterpretation on peer review or (better) author agreement. Notably, Nordic countries have used fractional counting in analyses to allocate institutional funding (e.g., Research Council of Sweden: Kronman et al., 2010; Vetenskapsrådet, 2014; Nordforsk, 2010, 2011), with the Research Council of Sweden choosing fractional counting, in part, because, in whole counting, “the same article can be counted several times” (Vetenskapsrådet, 2014).

Fractional counting, by its nature and by explicit statements from its proponents, suggests a refinement on, or correction to, the Standard method. This cannot be tested since no ‘correct’ reference value exists, but it may thereby falsely suggest greater ‘accuracy’ when this is not the case. Conversely, Collab-CNCI does not assign partial (and thus potentially incorrect) credit and, while the contributions of others may be applied to an entity, normalising by collaboration type addresses this and allows similarly collaborative articles to be compared to one another. Collab-CNCI is also no more problematic to describe or understand than Standard CNCI.

It is important to add that, to use any of these metrics, research and policy managers must understand their inherent differences and the relationship between different results. Without this, policy making will remain partly uninformed.

Collaboration

All highlighted institutions demonstrated a continuous increase in international collaboration over the period (Fig. 3), confirming the results of others (e.g., Adams & Gurney, 2018; Ribeiro et al., 2018). The increase in international collaboration in most circumstances is due to a combination of decreases in domestic single article output and increases in quadrilateral-plus article output (Fig. 2); institutions are becoming more collaborative, particularly highly internationally collaborative.

Importantly, however, the CNCI indicator values did not correspondingly show a continuous increase over time (Fig. 1). Figure 2 demonstrated that most of the ‘value’ of net CNCI is sourced from the most international collaborative articles. This most collaborative group is also the most affected by using a Fractional or Collab CNCI approach and contains the highest variance temporally, due to this group being the smallest by article count (international quadrilateral-plus articles accounted for ~ 4% of the dataset).

The rapid increase in multilateral articles, combined with their inherently greater CNCI capability (e.g., Adams et al., 2019; Thelwall, 2020; Waltman & van Eck, 2015), is likely to influence institutional indicators even more heavily in the future. Crucially, in terms of Collab-CNCI, it is the performance of these multilateral articles relative to their direct peers (e.g., how do an institution’s international bilateral articles compare to other institutions’ international bilateral articles?) which matters. This can highlight what may seem like atypical behaviour, whereby less collaborative articles perform the best on a true like-for-like comparison, as highlighted by University of Malaya (Fig. 2) and in Adams et al. (2022). Deconstructing an institution’s output into collaboration types is therefore essential to assess whether greater (international) collaboration is positively or negatively influencing an institution’s CNCI.

Conclusions

The Standard method for calculating Category Normalised Citation Impact (CNCI), which is widely used in research assessment and policy, is flawed in subsuming all entity contributions, thus hiding individual input and weakening like-for-like comparisons. Our results show not only that variant methods (Fractional and Collaboration [Collab] CNCI) offer alternatives but also that, once the influence of collaboration is accounted for, they produce similar values and trends. Fractional CNCI, however, appears to ‘correct’ the Standard method yet hides disaggregated component contributions. While the methods complement one another, we advocate the use of Collab-CNCI as it explicitly takes collaboration into account without fractionating contributions, follows the established normalisation approach of Standard CNCI and calculates outcomes that retain a clear origin. Deconstructing research output into collaboration types is essential and provides an additional level of information and insight that cannot be gained from the single CNCI values. This highlights the continuous trend of increasing international collaboration, across all regions, in contrast to other CNCI metrics. Deconstruction also reveals that an institution’s most internationally collaborative articles are not necessarily its best performers. Research managers and funders can better contextualise relative performance across and within institutions by deconstructing research output through Collab-CNCI and, consequently, make better informed funding decisions.

References

Adams, J., & Gurney, K. A. (2018). Bilateral and multilateral coauthorship and citation impact: Patterns in UK and US international collaboration. Frontiers in Research Metrics and Analysis. https://doi.org/10.3389/frma.2018.00012

Adams, J., Pendlebury, D. A., & Potter, R. W. K. (2022). Making it count: Research credit management in a collaborative world. Clarivate, London. ISBN 978-1-8382799-7-4

Adams, J., Pendlebury, D. A., Potter, R. W. K., & Szomszor, M. (2019). Multi-authorship and research analytics. Clarivate Analytics, London. ISBN 978-1-9160868-6-9

Aksnes, D. W., Langfeldt, L., & Wouters, P. (2019). Citations, citation indicators, and research quality: An overview of basic concepts and theories. SAGE Open, 9(1), 1–17. https://doi.org/10.1177/2158244019829575

Aksnes, D. W., Schneider, J. W., & Gunnarsson, M. (2012). Ranking national research systems by citation indicators. A comparative analysis using whole and fractionalised counting methods. Journal of Informetrics, 6(1), 36–43. https://doi.org/10.1016/j.joi.2011.08.002

Burrell, Q., & Rousseau, R. (1995). Fractional counts for authorship attribution: A numerical study. Journal of the American Society for Information Science, 46, 97–102. https://doi.org/10.1002/(SICI)1097-4571(199503)46:2%3c97::AID-ASI3%3e3.0.CO;2-L

Carlsson, H. (2009). Allocation of research funds using bibliometric indicators—Asset and challenge to Swedish higher education sector. InfoTrend, 64(4), 82–88.

Cole, J. R., & Cole, S. (1973). Social stratification in science. The University of Chicago Press.

Egghe, L., Rousseau, R., & van Hooydonk, G. (2000). Methods for accrediting publications to authors or countries: Consequences for evaluation studies. Journal of the American Society for Information Science, 51(2), 145–157. https://doi.org/10.1002/(SICI)1097-4571(2000)51:2%3c145::AID-ASI6%3e3.0.CO;2-9

Evidence. (2007). The use of bibliometrics to measure research quality in UK higher education institutions. Report to Universities UK. Universities UK. ISBN 978 1 84036 165 4. Retrieved September 17, 2021 from https://dera.ioe.ac.uk//26316/

Garfield, E. (1955). Citation indexes for science. A new dimension in documentation through association of ideas. Science, 122, 108–111. https://doi.org/10.1126/science.122.3159.108

Garfield, E. (1977). Can citation indexing be automated? Essays of an information scientist, 1 (pp. 84–90). ISI Press.

Gauffriau, M. (2021). Counting methods introduced into the bibliometric research literature 1970–2018: A review. Quantitative Science Studies, 2(3), 932–975. https://doi.org/10.1162/qss_a_00141

Glänzel, W., & De Lange, C. (2002). A distributional approach to multinationality measures of international scientific collaboration. Scientometrics, 54(1), 75–89. https://doi.org/10.1023/a:1015684505035

Gorraiz, J., Reimann, R., & Gumpenberger, C. (2012). Key factors and considerations in the assessment of international collaboration: A case study for Austria and six countries. Scientometrics, 91(2), 417–433. https://doi.org/10.1007/s11192-011-0579-3

Hurt, C. D. (1987). Conceptual citation differences in science, technology, and social sciences literature. Information Processing & Management, 23, 1–6. https://doi.org/10.1016/0306-4573(87)90033-1

Jappe, A. (2020). Professional standards in bibliometric research evaluation? A meta-evaluation of European assessment practice 2005–2019. PLoS ONE, 15(4), e0231735. https://doi.org/10.1371/journal.pone.0231735

Kronman, U., Gunnarsson, M., & Karlsson, S. (2010). The bibliometric database at the Swedish Research Council—Contents, methods and indicators. Swedish Research Council, Stockholm

Leydesdorff, L., & Shin, J. C. (2011). How to evaluate universities in terms of their relative citation impacts: Fractional counting of citations and the normalization of differences among disciplines. Journal of the American Society for Information Science and Technology, 62(6), 1146–1155. https://doi.org/10.1002/asi.21511

Lozano, R., et al. (2020). Measuring universal health coverage based on an index of effective coverage of health services in 204 countries and territories, 1990–2019: A systematic analysis for the Global Burden of Disease Study 2019. The Lancet, 396(10258), 1250–1284. https://doi.org/10.1016/S0140-6736(20)30750-9

Nordforsk. (2010). Bibliometric research performance indicators for the Nordic Countries. A publication from the NORIA-net. In J. W. Schneider (Ed.), The use of bibliometrics in research policy and evaluation activities. NordForsk.

Nordforsk. (2011). Comparing research at Nordic Universities using bibliometric indicators. A publication from the NORIA-net. In F. Piro (Ed.), Bibliometric indicators for the Nordic Universities. NordForsk.

Potter, R. W. K., Szomszor, M., & Adams, J. (2020). Interpreting CNCIs on a country-scale: The effect of domestic and international collaboration type. Journal of Informetrics, 14(4), 101075. https://doi.org/10.1016/j.joi.2020.101075

Potter, R. W. K., Szomszor, M., & Adams, J. (2021). Research performance indicators and management decision making: Using Collab-CNCI to understand institutional impact. In Proceedings of the 18th international conference on scientometrics and informetrics (pp. 913–920).

Ribeiro, L. C., Rapini, M. S., Silva, L. A., & Albuquerque, E. A. (2018). Growth patterns of the network of international collaboration in science. Scientometrics, 114, 159–179. https://doi.org/10.1007/s11192-017-2573-x

Sivertsen, G., Rousseau, R., & Zhang, L. (2019). Measuring scientific contributions with modified fractional counting. Journal of Informetrics, 13(2), 679–694. https://doi.org/10.1016/j.joi.2019.03.010

Szomszor, M., Adams, J., Fry, R., Gebert, C., Pendlebury, D. A., Potter, R. W. K., & Rogers, G. (2021). Interpreting bibliometric data. Frontiers in Research Metrics and Analytics, 5, 30. https://doi.org/10.3389/frma.2020.628703

Thelwall, M. (2020). Large publishing consortia produce higher citation impact research but coauthor contributions are hard to evaluate. Quantitative Science Studies, 1(1), 290–302. https://doi.org/10.1162/qss_a_00003

van Hooydonk, G. (1997). Fractional counting of multiauthored publications: Consequences for the impact of author. Journal of the American Society for Information Science, 48(10), 944–945. https://doi.org/10.1002/(SICI)1097-4571(199710)48:10%3c944::AID-ASI8%3e3.0.CO;2-1

Vetenskapsrådet. (2014). Guidelines for using bibliometrics at the Swedish Research Council. The Swedish Research Council, Stockholm.

Vollset, S. E., et al. (2020). Fertility, mortality, migration, and population scenarios for 195 countries and territories from 2017 to 2100: A forecasting analysis for the Global Burden of Disease Study. The Lancet, 396(10258), 1285–1306. https://doi.org/10.1016/S0140-6736(20)30677-2

Waltman, L. (2016). A review of the literature on citation impact indicators. Journal of Informetrics, 10, 365–391. https://doi.org/10.1016/j.joi.2016.02.007

Waltman, L., & van Eck, N. J. (2015). Field-normalized citation impact indicators and the choice of an appropriate counting method. Journal of Informetrics, 9(4), 872–894. https://doi.org/10.1016/j.joi.2015.08.001

Acknowledgements

This paper is an extended version of a conference proceeding originally presented at the 18th International Society for Scientometrics and Informetrics conference in 2021. We thank the editor and two anonymous reviewers for their comments.

Ethics declarations

Conflict of interest

All authors are associated with the Institute for Scientific Information, which is part of Clarivate, the owner of the Web of Science.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Potter, R.W.K., Szomszor, M. & Adams, J. Comparing standard, collaboration and fractional CNCI at the institutional level: Consequences for performance evaluation. Scientometrics 127, 7435–7448 (2022). https://doi.org/10.1007/s11192-022-04303-y

DOI: https://doi.org/10.1007/s11192-022-04303-y