Abstract

Citation count is a flawed measure, but it is still the most common way of measuring academic impact, used by scholarly journals (Impact Factor), individual researchers (h-index) and funding agencies (as a proxy for quality of research). Individual papers should attract citations depending upon the importance and usefulness of the results presented. However, large enough data sets reveal that there are parameters independent of individual papers' quality that can determine an average citation rate. Here, we examine papers (4756 in total) published in six selected tribology journals in a six-year window between January 2010 and December 2015. Citations were retrieved from the Web of Science and compared with each paper's (1) manuscript length, (2) number of authors, (3) number of affiliated institutions, (4) number of international co-authors, (5) number of cited references, (6) number of words in the title, and (7) mode of publication (open versus paid access). The results revealed that citations received by papers published in tribology journals are affected by all of these parameters. This is a significant finding for authors wishing to increase the impact of their research. This knowledge can be used effectively at the manuscript planning and writing stages to support scientific merit. We suggest that the significance of parameters not directly related to the quality of a scholarly paper will become more critical with the rise of alternative ways of measuring impact, including novel generations of paper metrics (e.g., Eigenfactor, SJR), social mentions, and viral outreach.

Introduction

The judgment of the quality of science is necessary, as individual scientists and research fields compete for limited resources. Citations are used to evaluate a scientist's reputation (h-index: h papers with at least h citations each) (Bornmann and Daniel 2009), to measure the quality of research (number of citing articles excluding self-citations) (Flatt et al. 2017) and to gauge the impact of scholarly journals (Impact Factor (IF): the number of citations divided by the number of published papers) (Amin and Mabe 2000). It was found that the chances of becoming a Principal Investigator (PI) are directly related to the number of papers that receive more citations than the average for the journal in which they were published (citations/IF > 1) (Van Dijk et al. 2014). Many international funding agencies use the h-index to assess the PI's ability to conduct the proposed research (Edwards and Roy 2017). However, there are signs that the citation-based system is affected by a flood of mediocre papers, self-citation cartels, and lengthy reference lists, which suggests the need for a new way of measuring success in science (Fire and Guestrin 2019).
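The h-index definition quoted above (h papers with at least h citations each) can be illustrated with a minimal Python sketch. The function name `h_index` and the sample citation counts are hypothetical and are not taken from the data analysed in this study.

```python
def h_index(citations):
    """Return the largest h such that h papers have at least h citations each."""
    # Rank papers from most cited to least cited.
    ranked = sorted(citations, reverse=True)
    h = 0
    for rank, count in enumerate(ranked, start=1):
        if count >= rank:
            h = rank  # the top `rank` papers all have at least `rank` citations
        else:
            break
    return h


# Hypothetical researcher with five papers.
example_counts = [10, 8, 5, 4, 3]
example_h = h_index(example_counts)
```

In this illustrative case four papers have at least four citations each, but there are not five papers with at least five citations, so the index is 4.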

Ideally, there should be a direct correlation between the paper citations and the importance and usefulness of the results presented. One of the early attempts to grasp the nature of citations was Merton's normative citation theory. He simply stated that documents are cited when they have influenced the reader (Merton 1973). Later, a number of other factors, besides cognitive influence and peer recognition, were identified.

Reputation and esteem are factors that not only increase the chances of academic promotion (Petersen et al. 2014) or of acquiring research funding (Bol et al. 2018), but can also influence the readership of outputs (Petersen et al. 2014). The more successful the researcher is, the more citations their publications are likely to receive, which allows them to become even more successful. This is called the Matthew Effect, a term coined, again, by Merton (1968). Such a positive feedback loop also exists in the case of the "reputation" of an individual publication: early citation counts correlate with the overall number of citations gathered over a longer time frame (Adams 2005).

It takes time to build an academic reputation through high-quality outputs. However, data sets provide supportive evidence that there are parameters not directly related to the quality of a paper that affect the mean citation rate. Moreover, authors can easily take advantage of these parameters, as long as they understand the underlying principles. For instance, several studies confirmed the importance of the title length, with a majority of reports showing a negative correlation between the number of words in the title and citation count (Letchford et al. 2015; Bramoullé and Ductor 2018), i.e., shorter titles generate more citations. However, differences between fields have been observed, e.g., Wesel and co-workers showed that in sociology and applied physics, shorter titles seemed to result in more citations, but in medicine the effect was the opposite (Wesel et al. 2014). Haustein and co-workers reported similar differences between life and earth sciences, natural sciences and engineering (negative) versus mathematics and computer science, social sciences and humanities (positive) (Haustein et al. 2015). This correlation might also vary within a field over time. Guo and co-workers showed dynamic changes in the influence of the title length on citation count by analysing over 300,000 papers in economics (Guo et al. 2018). The authors observed that the correlation changed around the year 2000 from negative to positive. This was explained by the increasing importance of online search engines (Web of Knowledge was launched in 2002 and Scopus in 2004). "Catchy" and short titles perform better when searches are done manually. However, longer and more descriptive titles generate more "hits" in online searches, resulting in improved discoverability and higher citation counts.
It is worth noting that Wesel and co-workers (who claimed differences in correlation between fields) completed their survey based on data from 1996 to 2005, i.e., exactly during the transition stage, which could affect their results (Wesel et al. 2014). Nevertheless, Haustein and co-workers focused on papers published in 2012 and still observed differences between the fields (Haustein et al. 2015). One way to increase the length of the title (if one judiciously wishes to) is to use a colon (Wesel et al. 2014; Hudson 2016). It also appears that longer titles correlate, to some extent, with a larger number of co-authors (Hudson 2016).

In fact, the number of authors is an important factor influencing the overall citation count. Two main drivers explain this. Firstly, more authors bring more competencies and knowledge, theoretically increasing the paper's scientific quality. Secondly, more collaborators increase a paper's visibility by exposure to broader networks of professional contacts (who will share, like, comment and might eventually cite the given publication). Such a positive association between the number of co-authors and citation counts has been repeatedly reported (Haustein et al. 2015; Wesel 2016; Fox et al. 2016). Surprisingly, this is not true for all scientific domains. For instance, Bornmann and co-workers did not find any correlation between the number of authors and citations in chemistry (journal studied: Angewandte Chemie International Edition) (Bornmann et al. 2012). A lack of such correlation was also observed in an investigation of factors driving citations for Finnish articles (Didegah et al. 2018). The authors' surname is also a factor: articles by authors whose surname begins with a letter close to the beginning of the alphabet gather more citations than those by authors whose surname falls at the end of the alphabet (Tregenza 1997). The nationality of the authors also plays a role (Royle et al. 2013).

In summary, there is some ambiguity regarding the title length and the authorship and their impact on citations. This is mainly due to specific characteristics of different academic fields. However, some factors seem to be somewhat universal across disciplines. For instance, there is a consensus that increases in the manuscript length (Fox et al. 2016) and the number of references (Fox et al. 2016; Corbyn 2010), as well as publishing in an open access model (Lewis 2018; Piwowar et al. 2018), result in increased citations. In this study, we provide insight into factors affecting citations of papers published explicitly in the field of tribology.

Methods

We analysed citations data for papers published in six selected tribology journals, with their 2018 impact factors ranging from 1.037 to 3.517. The journal titles are not relevant for this study; hence we label them (A) to (F). Citations data sets were retrieved from the Web of Science in July/August 2018 for the six-year window between January 2010 and December 2015. We analysed 4,756 papers in total. Citations were correlated with (i) manuscript length, (ii) number of references cited, (iii) title length, (iv) number of co-authors, (v) number of author institutions, (vi) number of author countries, and (vii) whether the paper was published behind the paywall or in Open Access (OA).

We analysed the Guide for Authors of each journal for any recommendations regarding manuscript formatting and Open Access charges. Three out of six journals recommend maximum manuscript length for original papers, with limits of 3000, 5000 and 7000 words. However, these are only recommendations, and longer articles are typically considered if the content justifies the article's length. The authors are usually advised that the titles should be concisely worded and informative. For multi-authored papers, the recommendation is that all authors that have made a significant contribution to the paper should be listed, and those who have not contributed to the research but provided support, should be mentioned in an acknowledgements section. All six journals provide an Open Access option, and the article processing charge (APC) varies between 2995 and 3600 USD.

We recognise that the longer the period after paper publication, the more citations that paper is likely to attract. While analysing citations data in 2018, a paper published in 2010 will have much more time to be discovered and cited, than a paper published in 2015. Hence, in this study, we used a "citations per year" measure to normalise citations data by the number of years that passed since the publication.
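The normalisation described above can be sketched in a few lines of Python. The exact formula is not given in the text, so this is an assumption: the number of elapsed years is taken as the difference between the retrieval year (2018) and the publication year. The function name and the sample values are hypothetical.

```python
def citations_per_year(total_citations, publication_year, retrieval_year=2018):
    """Normalise a citation count by the years elapsed since publication.

    Assumption: elapsed time is counted in whole calendar years. A floor of
    one year avoids division by zero for a paper retrieved in the same year
    it was published.
    """
    years_elapsed = max(retrieval_year - publication_year, 1)
    return total_citations / years_elapsed


# Two hypothetical papers with different ages but the same annual rate.
older_paper = citations_per_year(24, 2010)   # 24 citations over 8 years
newer_paper = citations_per_year(9, 2015)    # 9 citations over 3 years
```

Both hypothetical papers end up with the same rate of three citations per year, although the older one has collected far more citations in total, which is the effect the normalisation is meant to remove.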

The distribution of citations as a function of time after publication normally follows a characteristic citation curve, as described by Amin and Mabe (2000); see Fig. 1. We analysed citations for our data set and plotted relevant citation curves for six journals, as shown in Fig. 2. The citation distribution is flat in all cases, suggesting that it has reached the steady-state part of the curve after the initial citation peak, which can be observed in Fig. 1. This justifies using a general "citations per year" measure to compare citation performance in this study.

Results

Manuscript length

This part of the study included all journals except journal B, owing to a technical difficulty in extracting the number of pages from the journal B data set. Each plot in Fig. 3 contains a cloud of data points corresponding to individual papers published in the analysed period. Solid lines represent the root mean square (RMS) trends. The correlation is clearly positive for all journals, with the proportionality constants ranging between 0.033 (Journal D) and 0.1802 (Journal A). Taken at face value, this means that each additional page is associated with a rise of between 0.03 and 0.18 in the average citation count per year. However, one needs to keep in mind the low values of the coefficient of determination R2. In this kind of analysis, the data scatter is expected to be very high: the citation count depends primarily on the paper quality, and the influence of the analysed factors (if present at all) is not easy to distinguish. This problem is well known in studies of this kind. Even the dependence between journal impact factor and future article citations is characterised by a relatively low coefficient of determination, R2 = 0.385 (Times Higher Education 2018). When different factors are analysed, such as the manuscript length, number of authors, and references cited, the R2 becomes even smaller (Fox et al. 2016). This is why the R2 values are systematically listed and compared with each other in this study. Given the large number of data points, even trends with low R2 can be considered representative and informative.
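A proportionality constant and a coefficient of determination of the kind reported here can be obtained from a straight-line fit. The sketch below assumes an ordinary least-squares fit of citations per year against page count; the text does not specify the fitting procedure in more detail, and the function name and data points are hypothetical.

```python
def linear_fit(x, y):
    """Ordinary least-squares line y = slope * x + intercept, with R^2."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    syy = sum((yi - mean_y) ** 2 for yi in y)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    if sxx == 0:
        raise ValueError("all x values are identical; slope is undefined")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # For a straight-line fit, R^2 equals the squared Pearson correlation.
    # If y is constant, R^2 is undefined; this sketch reports 0.0 in that case.
    r_squared = (sxy * sxy) / (sxx * syy) if syy > 0 else 0.0
    return slope, intercept, r_squared


# Hypothetical papers: page counts and citations per year.
pages = [1, 2, 3, 4]
cites_per_year = [2.0, 1.0, 4.0, 3.0]
slope, intercept, r_squared = linear_fit(pages, cites_per_year)
```

With these made-up points the slope is positive while R2 stays well below one, which mirrors the situation described above: a visible trend combined with high scatter.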

References cited

Figure 4 presents clouds of data points corresponding to individual papers published in the analysed period. The correlation is clearly positive for all journals, with the proportionality constant ranging between 0.01 (journal D) and 0.03 (journal E). Again, this might be interpreted as follows: the addition of one reference to the bibliography is associated with a 0.01 to 0.03 increase in the average citation count per year. The R2 values are higher than in the previous case but still indicate a relatively weak trend. Nevertheless, the correlation is present for all analysed journals.

Number of words in the title

For most journals, the correlation between the number of words in the title and citations is negative; the exceptions are journals A and C (Fig. 5). However, in those two cases, the proportionality constants are relatively low (0.0136 and 0.003, respectively), with the lowest observed coefficients of determination (0.0004 and 0.0001). Hence, the title length is not an important factor influencing the mean number of citations of papers published in tribology journals.

Number of authors, institutions, and countries

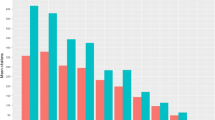

Figures 6a–c present the relationship between citations and the number of authors, affiliated institutions, and affiliated countries involved in each paper, respectively. Each column represents the average number of citations per year (left-hand side axis), while the black dots represent the sample size in terms of the number of papers in each category (right-hand side axis).

Fig. 6 Average annual number of citations for papers published in journals A–F plotted as a function of (a) number of authors, (b) number of affiliated institutions, (c) number of affiliated countries involved in each paper. Black dots represent the sample size in terms of the number of papers included in the average citations value calculation.
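The columns and black dots in such a plot correspond to a grouped average and a group size. The following Python sketch shows one way to compute both; the record layout, the field names and the cap that merges large values into a single "or more" category are assumptions made for illustration, and the sample records are invented.

```python
from collections import defaultdict


def mean_citations_by_group(papers, key, cap=5):
    """Average citations per year and sample size for each value of `key`.

    `papers` is an iterable of dicts holding `key` (e.g. a hypothetical
    "n_authors" field) and "citations_per_year". Values of `cap` or more
    are merged into one category, labelled by `cap`.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for paper in papers:
        group = min(paper[key], cap)
        totals[group] += paper["citations_per_year"]
        counts[group] += 1
    # Map each group to (mean citations per year, number of papers).
    return {g: (totals[g] / counts[g], counts[g]) for g in sorted(counts)}


# Invented records, not taken from the journals analysed here.
sample_papers = [
    {"n_authors": 1, "citations_per_year": 1.0},
    {"n_authors": 1, "citations_per_year": 2.0},
    {"n_authors": 5, "citations_per_year": 5.0},
    {"n_authors": 7, "citations_per_year": 3.0},
]
by_authors = mean_citations_by_group(sample_papers, "n_authors", cap=5)
```

Reporting the group size next to each mean matters because categories with only a handful of papers can produce averages that are dominated by one or two outliers.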

Open and paid access models

Figure 7 presents how citations in each analysed journal are affected by the publishing model: classic paid access versus open access. Again, the black dots represent the sample size.
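A comparison of this kind reduces to two group means and their relative difference. The sketch below is a minimal illustration under the assumption that each paper is represented by a pair (open access flag, citations per year); the function name and the sample values are hypothetical.

```python
def access_model_comparison(papers):
    """Compare mean citations per year of open access and paid access papers.

    `papers` is an iterable of (is_open_access, citations_per_year) pairs.
    Returns (mean_oa, mean_paid, relative_change_percent, n_oa, n_paid).
    """
    sums = {True: 0.0, False: 0.0}
    counts = {True: 0, False: 0}
    for is_open_access, rate in papers:
        sums[bool(is_open_access)] += rate
        counts[bool(is_open_access)] += 1
    if counts[True] == 0 or counts[False] == 0:
        raise ValueError("both access models need at least one paper")
    mean_oa = sums[True] / counts[True]
    mean_paid = sums[False] / counts[False]
    if mean_paid == 0:
        raise ValueError("paid access mean is zero; relative change undefined")
    relative_change = (mean_oa - mean_paid) / mean_paid * 100.0
    return mean_oa, mean_paid, relative_change, counts[True], counts[False]


# Invented sample: two open access and two paid access papers.
sample = [(True, 3.0), (True, 3.0), (False, 2.0), (False, 2.0)]
mean_oa, mean_paid, change, n_oa, n_paid = access_model_comparison(sample)
```

Returning the two sample sizes alongside the means makes it easy to flag journals where the open access group is too small for the difference to be meaningful.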

Discussion

Researchers tend to focus on designing reliable experiments, collecting and analysing data, and finally presenting their arguments in a clear and informative way. For instance, in the article entitled "The Criteria Considered in Preparing Manuscripts for Submission to Biomedical Journals" (Ghahramani and Mehrabani 2013), the authors list the following "essential features of a good manuscript": specific goal and time of the study, having the Ethical Approval, good methodology/study design, good statistical analysis, appropriate and correct interpretation of data, good figures/illustrations, appropriate use of language/grammar. In our study, we argue that a responsible author will carry out high-quality research and write a paper in such a way that it is easily discoverable and attractive to read, resulting in more citations. This is crucial to prevent the publication from going completely uncited. For example, according to the 2013 study (Ghahramani and Mehrabani 2013), almost 77 per cent of publications in the visual and performing arts were still uncited five years after publication. The same study showed that STEM subjects also had relatively high rates of uncited work, e.g., 44 per cent of papers in industrial and manufacturing engineering, and 40 per cent of papers in automotive, aerospace and ocean engineering were still uncited five years after publication.

In the five journals for which we were able to extract pagination, we observed a clear correlation between the annual number of citations and the number of pages (Fig. 3). The page limits specified by three out of six journals do not seem to influence the observed relationship. Similar trends have been observed in other disciplines such as ecology (Fox et al. 2016) and general medicine (Falagas et al. 2013). Longer papers present more content, giving the readers more opportunity to find relevant information, leading to more citations. We have also observed that papers with a higher number of references in the bibliography generated more citations in all six journals. One could expect a positive correlation between the length of the paper and the number of references. Indeed, we confirmed such a correlation using Journal A as a representative case (Fig. 8). As with more comprehensive studies and longer manuscripts, papers with a larger number of references have a wider reach and more opportunity for being discovered and subsequently cited. Other authors also observed that a larger number of references results in a higher citation count (Fox et al. 2016; Corbyn 2010). The citation alerts that authors receive each time their paper is cited perhaps play a role here, by efficiently increasing the reach and disseminating the content automatically to a larger pool of potential readers.

Several studies showed that articles with shorter titles generate more citations (Letchford et al. 2015; Bramoullé and Ductor 2018). However, a recent study claims that papers with longer titles attract fewer citations not because of the number of words in the title but because, on average, longer titles include more hyphens (Zhou 2019). The authors of that study showed that the main factor behind the alleged advantage of short titles is a single punctuation mark. Our results suggest that this correlation is not conclusive, at least not in the case of tribology. We observed a weak relationship between the number of words in the title and the number of citations, with two out of six journals actually showing a positive correlation, i.e., papers with longer titles attracted slightly more citations (Fig. 5).

We aimed to explore the relationship between authorship and the citation count. We evaluated three parameters: number of authors, number of cooperating institutions, and number of countries affiliated with the paper. Intuitively, one could expect a positive correlation between these factors and the citation count. A larger number of authors brings more knowledge and insight, as well as personal networks of contacts, which should directly impact citations. Such an effect was reported (Wesel 2016; Fox et al. 2016), with some notable exceptions related to specific fields, e.g., in chemistry Bornmann and co-workers did not find any correlation between the number of authors and the citation count (Bornmann et al. 2012). It is somewhat surprising that tribology differs from chemistry in this respect. We found that the average citation count for papers authored by a single researcher is the lowest in all studied journals (Fig. 6a). The increase was up to 60% (journal A), with an average citation count increasing from 1.68 to 2.83 when the number of authors increased from one to five or more. Similar reasoning might be applied to the number of cooperating institutions (Fig. 6b) and the number of affiliated countries (Fig. 6c). The more diverse the environment, the more knowledge and the broader the expertise, leading to higher quality of the publication and more interest among readers. An increase in the number of authors, cooperating institutions and affiliated countries also broadens the network of acquaintances and co-workers, who are likely to be notified upon publication and cite it as appropriate. Some discrepancies in the results, e.g., the decrease of average citations in journals C and D when 2 and 3+ affiliated countries are compared (Fig. 6c), are likely due to a small sample size (see black dots in Fig. 6a–c).

The intuitive belief that it is desirable for authors to allow as many people as possible to freely access the publication is confirmed by several studies (Piwowar et al. 2018). However, there are also proven differences (in magnitude, not in the trend) in the open access effect depending on the field (Li et al. 2018). It was therefore interesting to evaluate the magnitude of the OA effect in tribology (Fig. 7). Out of the six journals studied, we excluded three (journals D, E and F) due to an insufficient number of open access papers published in the analysed time window (e.g., only one OA paper in the case of journal D). However, in the case of journals A and B, it is clear that there is a positive effect of publishing in OA, with up to a 55% increase in citations in journal B, for which the number of OA papers was the highest among the studied journals. It seems that the OA effect is not only field specific but also journal specific: from virtually non-existent to over 50% in this study. The OA article processing charges are very similar for all six journals (2995–3600 USD), and we assume that this variable is negligible.

All the above dependencies can be summarised with reference to Table 1. The average values of the paper features provide some interesting examples of how journal policy might affect the papers published therein. We observed that the average paper length varied from journal to journal. In Journal D the papers were generally shorter, with an average of 8.17 pages, while in Journal E the average paper length was as high as 13.47 pages. Journals A, B and F have a significantly higher average number of cited references. This could be partially explained by those journals publishing a higher fraction of review papers, which are known to have a much higher number of references and higher citation rates (Amin and Mabe 2000). Journal A has a relatively high average number of institutions, countries, and authors per paper.

Our considerations confirm the weakness of current methods for the evaluation of scientific journals. Statistically significant dependencies that were previously observed in other disciplines were also confirmed in the case of tribology. It was shown that paper citation count depends on its length, number of references, title length, type of affiliations and the number of authors. All those factors are independent of the scientific merit of publication. This supports the ongoing discussion about the best way of evaluating academic papers.

The most popular journal-level metric is the Impact Factor. However, IF depends on many elements not directly related to scientific quality. According to Althouse et al. (2009), the average IF attained in journals representing well-connected branches of science (such as medicine), could be even one order of magnitude higher than IF in weakly related fields (such as social science). The measures that capture these differences were named the second-generation metrics by Moed and Plume (2011). The third-generation metrics provide quality measures based on the analysis of the overall relationships between cited papers. This allows including not only the citation count, but also its quality, and is characterised by such measures as the Eigenfactor (Bergstrom et al. 2008) or Scimago Journal Rank (SJR) (Falagas et al. 2008).

Taylor and Kamalaski have supplemented the above list by adding two further journal metrics (2012). The fourth-generation metrics include PDF downloads and HTML views, while the fifth considers the overall activity related to the paper across social media, blogs, Wikipedia pages, reference managers, etc. The best-known example of this kind of metric is Altmetrics (Piwowar 2013), which allows tracking researchers' influence related to their papers and the overall impact that might also be exerted by other outputs such as data sets, software, etc. Understandably, the number of social media mentions correlates directly with classic citation counts (Eysenbach 2011). Nevertheless, Altmetrics is not fully aligned with citations and could be treated as an excellent supplement to more traditional metrics (Costas et al. 2015).

However, all generations of metrics have an inalienable weakness that makes them not fully adequate for measuring research performance. J. Z. Muller has very well addressed this matter (Costas et al. 2015). He shows numerous examples where metrics fixation draws our attention towards the easily measured factors, which are not necessarily the most relevant. The current measure of academic productivity generates a bias towards short-term publication cycle, rather than a long-term research capacity. This creates a danger of a closed-loop where new generations of metrics stimulate new gaming methods. Authors might feel the rising temptation to use dependencies, like those presented in this paper, to improve their personal academic performance rankings (Fire and Guestrin 2019).

To summarise, in this study, we have shown examples of non-scientific paper features that influence the citation counts. We believe that understanding the significance of those features should be part of a researcher's toolkit but should not be the main factor driving the writing behaviour. We suggest that those lessons should be treated as a source of reflection on becoming a better researcher and writing better papers. We appreciate the fact that some results presented in this paper confirm similar findings observed in other disciplines. Indeed, this study confirms that the conclusions drawn here are common to tribology and other research disciplines. We offer an original interpretation of the results, formulating a deeper understanding of the subject. This should be of interest to a broader research community, especially to tribologists publishing their findings.

Conclusions

In this paper, we looked at factors affecting citations of papers published in tribology journals. We suggested that a responsible author would carry out high-quality research and make sure that their research is easily discoverable and attractive to read, resulting in improved impact. We established that:

- Mean citation count correlates positively with manuscript length and number of cited references. We wish to underline that this is not an incentive to write long and diluted texts, but rather to publish wholesome research and multi-aspect analysis. The more content a publication provides, the more likely it is to gather citations. This is an intrinsic reward system, discouraging "salami publishing", i.e., partitioning data into the smallest publishable pieces. The positive impact of a higher number of references is also due to reciprocity: cited authors are more likely to cite us back.

- The number of collaborating authors, institutions, and countries also positively impacts the citation rate. Again, this should not be a stimulus to create publishing cartels, but rather to include co-authors with complementary expertise. Diversity is a foundation for multi-dimensional analysis of the collected results. There is also an impact of the more extensive networks associated with a longer author list. Such larger networks provide better visibility of the publication and are a potential source of future citations.

- We confirmed that publishing in OA provides some advantage over paid access in terms of the number of citations gathered by a given publication.

- Surprisingly, the title length is not an important factor influencing the mean number of citations of papers published in tribology journals. The majority of reports show a field-specific correlation, but we showed only a very weak dependence, which additionally is journal specific.

We expect that the above factors' significance will become more critical with the rise of alternative ways of measuring impact including novel generation metrics (e.g., Eigenfactor, SJR), social mentions, and viral outreach. Hence, there is a need for a holistic approach in publishing, which combines scientific merit, quality in data presentation, and effective knowledge of non-scientific factors contributing to improved citations. However, we should not lose ourselves in anticipating the effect and taking excessive actions that alter its outcome. It is good to remember Goodhart's Law, according to which "When a measure becomes a target, it ceases to be a good measure".

References

Adams, J. (2005). Early citation counts correlate with accumulated impact. Scientometrics, 63, 567–581. https://doi.org/10.1007/s11192-005-0228-9.

Althouse, B. M., West, J. D., Bergstrom, C. T., & Bergstrom, T. (2009). Differences in impact factor across fields and over time. Journal of American Society Information Science Technology, 60, 27–34. https://doi.org/10.1002/asi.20936.

Amin, M., & Mabe, M. (2000). Impact factor: Use and abuse. Perspectives in Publishing, 1, 1–6.

Bergstrom, C. T., West, J. D., & Wiseman, M. A. (2008). The Eigenfactor™ metrics. Journal of Neuroscience, 28, 11433–11434. https://doi.org/10.1523/JNEUROSCI.0003-08.2008.

Bol, T., de Vaan, M., & van de Rijt, A. (2018). The Matthew effect in science funding. Proceedings of the National Academy of Sciences U S A, 115, 4887–4890. https://doi.org/10.1073/pnas.1719557115.

Bornmann, L., & Daniel, H.-D. (2009). The state of h index research. Is the h index the ideal way to measure research performance? EMBO Reports, 10, 2–6. https://doi.org/10.1038/embor.2008.233.

Bornmann, L., Schier, H., Marx, W., & Daniel, H. D. (2012). What factors determine citation counts of publications in chemistry besides their quality? Journal of Informetrics, 6, 11–18. https://doi.org/10.1016/j.joi.2011.08.004.

Bramoullé, Y., & Ductor, L. (2018). Title length. Journal of Economic Behavior Organization, 150, 311–324. https://doi.org/10.1016/j.jebo.2018.01.014.

Corbyn, Z. (2010). An easy way to boost a paper’s citations. Nature. https://doi.org/10.1038/news.2010.406.

Costas, R., Zahedi, Z., & Wouters, P. (2015). Do “altmetrics” correlate with citations? Extensive comparison of altmetric indicators with citations from a multidisciplinary perspective. Journal of Association Information Science Technology, 66, 2003–2019. https://doi.org/10.1002/asi.23309.

Van Dijk, D., Manor, O., & Carey, L. B. (2014). Publication metrics and success on the academic job market. Current Biology, 24, R516–R517. https://doi.org/10.1016/j.cub.2014.04.039.

Didegah, F., Bowman, T. D., & Holmberg, K. (2018). On the differences between citations and altmetrics: An investigation of factors driving altmetrics versus citations for Finnish articles. Journal of the Association for Information Science Technology, 69, 832–843. https://doi.org/10.1002/asi.23934.

Eysenbach, G. (2011). Can tweets predict citations? Metrics of social impact based on twitter and correlation with traditional metrics of scientific impact. Journal of Medical Internet Research. https://doi.org/10.2196/jmir.2012.

Edwards, M. A., & Roy, S. (2017). Academic research in the 21st century: Maintaining scientific integrity in a climate of perverse incentives and hypercompetition. Environment Engineering Science, 34, 51–61. https://doi.org/10.1089/ees.2016.0223.

Falagas, M. E., Kouranos, V. D., Arencibia-Jorge, R., & Karageorgopoulos, D. E. (2008). Comparison of SCImago journal rank indicator with journal impact factor. FASEB Journals, 22, 2623–2628. https://doi.org/10.1096/fj.08-107938.

Falagas, M. E., Zarkali, A., Karageorgopoulos, D. E., Bardakas, V., & Mavros, M. N. (2013). The impact of article length on the number of future citations: A bibliometric analysis of general medicine journals. PLoS ONE, 8, 1–8. https://doi.org/10.1371/journal.pone.0049476.

Fire, M., & Guestrin, C. (2019). Over-optimization of academic publishing metrics: Observing Goodhart’s law in action. GigaScience, 8, 1–20. https://doi.org/10.1093/gigascience/giz053.

Flatt, J. W., Blasimme, A., & Vayena, E. (2017). Improving the measurement of scientific success by reporting a self-citation index. Publications, 5, 1–6. https://doi.org/10.3390/publications5030020.

Fox, C. W., Paine, C. E. T., & Sauterey, B. (2016). Citations increase with manuscript length, author number, and references cited in ecology journals. Ecology and Evolution, 6, 7717–7726. https://doi.org/10.1002/ece3.2505.

Ghahramani, Z., & Mehrabani, G. (2013). The criteria considered in preparing manuscripts for submission to biomedical journals. Bulletin of Emergency and Trauma, 1, 56–569.

Guo, F., Ma, C., Shi, Q., & Zong, Q. (2018). Succinct effect or informative effect: The relationship between title length and the number of citations. Scientometrics, 116, 1531–1539. https://doi.org/10.1007/s11192-018-2805-8.

Haustein, S., Costas, R., & Larivière, V. (2015). Characterizing social media metrics of scholarly papers: The effect of document properties and collaboration patterns. PLoS ONE, 10, 1–21. https://doi.org/10.1371/journal.pone.0120495.

Hudson, J. (2016). An analysis of the titles of papers submitted to the UK REF in 2014: authors, disciplines, and stylistic details. Scientometrics, 109, 871–889. https://doi.org/10.1007/s11192-016-2081-4.

Letchford, A., Moat, H. S., & Preis, T. (2015). The advantage of short paper titles. Royal Society Open Science, 2, 10–15. https://doi.org/10.1098/rsos.150266.

Lewis, C. (2018). The open access citation advantage: Does it exist and what does it mean for libraries? Information Technology and Libraries, 37, 50–65. https://doi.org/10.6017/ital.v37i3.10604.

Li, Y., Wu, C., Yan, E., & Li, K. (2018). Will open access increase journal CiteScores? An empirical investigation over multiple disciplines. PLoS ONE, 13, 1–21. https://doi.org/10.1371/journal.pone.0201885.

Merton, R. K. (1968). The Matthew effect in science: The reward and communication systems of science are considered. Science, 159, 56–63. https://doi.org/10.1126/science.159.3810.56.

Merton, R. K. (1973). The sociology of science: Theoretical and empirical investigations (Vol. 15). United States: The University of Chicago Press. https://doi.org/10.2307/3102264.

Moed, H., & Plume, A. (2011). The multi-dimensional research assessment matrix. Research Trends.

Petersen, A. M., Fortunato, S., Pan, R. K., Kaski, K., Penner, O., Rungi, A., et al. (2014). Reputation and impact in academic careers. Proceedings of the National Academy of Sciences of the United States of America, 111, 15316–15321. https://doi.org/10.1073/pnas.1323111111.

Piwowar, H. (2013). Value all research products. Nature, 493, 159. https://doi.org/10.1038/493159a.

Piwowar, H., Priem, J., Larivière, V., Alperin, J. P., Matthias, L., Norlander, B., et al. (2018). The state of OA: A large-scale analysis of the prevalence and impact of open access articles. PeerJ, 2018, 1–23. https://doi.org/10.7717/peerj.4375.

Royle, P., Kandala, N. B., Barnard, K., & Waugh, N. (2013). Bibliometrics of systematic reviews: Analysis of citation rates and journal impact factors. Systematic Reviews, 2, 74. https://doi.org/10.1186/2046-4053-2-74.

Taylor, M., & Kamalski, J. (2012). The changing face of journal metrics. https://www.elsevier.com/connect/the-changing-face-of-journal-metrics.

Times Higher Education. (2018). How much research goes completely uncited? https://www.timeshighereducation.com/news/how-much-research-goes-completely-uncited.

Tregenza, T. (1997). Darwin a better name than Wallace? Nature, 385, 480. https://doi.org/10.1038/385480a0.

van Wesel, M. (2016). Evaluation by citation: Trends in publication behavior, evaluation criteria, and the strive for high impact publications. Science and Engineering Ethics, 22, 199–225. https://doi.org/10.1007/s11948-015-9638-0.

van Wesel, M., Wyatt, S., & ten Haaf, J. (2014). What a difference a colon makes: How superficial factors influence subsequent citation. Scientometrics, 98, 1601–1615. https://doi.org/10.1007/s11192-013-1154-x.

Zhou, Z. Q., Tse, T. H., & Witheridge, M. (2019). Metamorphic robustness testing: Exposing hidden defects in citation statistics and journal impact factors. IEEE Transactions on Software Engineering. https://doi.org/10.1109/tse.2019.2915065.

Acknowledgements

JP was supported by the National Science Centre within SONATA BIS Grant 2017/26/E/ST4/00041.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Liskiewicz, T., Liskiewicz, G. & Paczesny, J. Factors affecting the citations of papers in tribology journals. Scientometrics 126, 3321–3336 (2021). https://doi.org/10.1007/s11192-021-03870-w

DOI: https://doi.org/10.1007/s11192-021-03870-w