Abstract

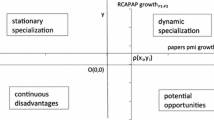

Newcomer nations, promoted by developmental states, have poured resources into nanotechnology development, and have dramatically increased their nanoscience research influence, as measured by research citation. Some achieved these gains by producing significantly higher impact papers rather than by simply producing more papers. Those nations gaining the most in relative strength did not build specializations in particular subfields, but instead diversified their nanotechnology research portfolios and emulated the global research mix. We show this using a panel dataset covering the nanotechnology research output of 63 countries over 12 years. The inverse relationship between research specialization and impact is robust to several ways of measuring both variables, the introduction of controls for country identity, the volume of nanoscience research output (a proxy for a country’s scientific capability) and home-country bias in citation, and various attempts to reweight and split the samples of countries and journals involved. The results are consistent with scientific advancement by newcomer nations being better accomplished through diversification than specialization.

Notes

Suggesting that the case for diversification may well vary with a country’s stage of development, Imbs and Wacziarg (2003) show that poorer and richer countries tend to have lower levels of industrial diversity than modestly rich countries. Similar forces could yield similar effects for scientific diversification.

Indeed, despite significant efforts, we have been unable to locate any studies of this relationship at the national level. At the firm level, Lee et al. (2012) find that firms with more specialized research portfolios filed more high-impact patents, while Matusik and Fitza (2012) show that venture capital firms that are either highly specialized or highly diversified were more successful than moderately diversified ones in taking the firms they invest in public.

In keeping with previous literature, we define the developmental state simply as a government that, motivated by desire for economic advancement, intervenes in industrial affairs (Woo-Cumings 1999). For our purposes, technological affairs are an aspect of industrial ones.

Iran could certainly be included in this list, given the dramatic rise in its nanotechnology research output, which surpassed that of Brazil by 2009. However, for most of the sample period, there are too few papers from Iran to analyze its patterns of specialization.

To the extent that research is cheaper in lower-income countries, the dollar figures provided in this section overstate the research budgets of high-income relative to low-income countries. There is no index comparing the cost of scientific research across countries that would permit us to compare these budgets in terms of purchasing power.

We have re-run the analyses appearing in the next section for k = 8, and the results did not change qualitatively. The only exception is Fig. 8, which involves the Hirschman-Herfindahl Index (HHI) of concentration. The HHI is known to be sensitive to changes in the number of subfields across which concentration is measured.
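The sensitivity of the HHI to the number of subfields can be illustrated with a minimal sketch. It assumes the standard sum-of-squared-shares definition of the index; the function name `hhi` and the values of k used below are illustrative choices, not taken from the analysis.

```python
def hhi(counts):
    """Hirschman-Herfindahl Index: the sum of squared portfolio shares.

    counts: publication counts (or any non-negative weights) per subfield.
    """
    total = sum(counts)
    if total <= 0:
        raise ValueError("counts must have a positive total")
    return sum((c / total) ** 2 for c in counts)


# Hypothetical, perfectly even portfolios: the minimum attainable HHI is 1/k,
# so the same "fully diversified" country scores differently as k changes.
for k in (8, 16):
    print(k, hhi([1] * k))
```

Because the lower bound of the index is 1/k, HHI values computed over different numbers of subfields are not directly comparable without rescaling.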

The citation rate for a subfield in some year is the ratio between the average citation rate of papers published in that subfield that year and the average citation rate of all nanotechnology papers published that year.
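As a rough sketch of this ratio, the function below computes a relative citation rate for each subfield and year. The input layout (one `(year, subfield, citations)` tuple per paper) and the assumption that each paper belongs to exactly one subfield are simplifications made for illustration.

```python
from collections import defaultdict


def subfield_citation_rates(papers):
    """Return {(year, subfield): relative citation rate}.

    papers: iterable of (year, subfield, citations) tuples. Assumes each
    paper is assigned to exactly one subfield (an illustrative simplification).
    """
    field = defaultdict(lambda: [0, 0])  # year -> [citations, papers]
    sub = defaultdict(lambda: [0, 0])    # (year, subfield) -> [citations, papers]
    for year, subfield, cites in papers:
        field[year][0] += cites
        field[year][1] += 1
        sub[(year, subfield)][0] += cites
        sub[(year, subfield)][1] += 1

    rates = {}
    for (year, subfield), (cites, n) in sub.items():
        field_avg = field[year][0] / field[year][1]
        if field_avg > 0:  # the ratio is undefined if the field has no citations
            rates[(year, subfield)] = (cites / n) / field_avg
    return rates


# Hypothetical data: two subfields in a single year.
example = [(2005, "a", 10), (2005, "a", 20), (2005, "b", 0), (2005, "b", 10)]
print(subfield_citation_rates(example))
```

A value above 1 indicates a subfield whose papers from that year are cited more often, on average, than nanotechnology papers from that year as a whole.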

Using a share-of-citations measure allows for the fact that more recent publications have had less time to be cited and compete for recognition in a larger pool of publications. We emphasize that this is a measure of relative influence: an increase tells us only that the number of citations to papers involving country c has grown more rapidly than citations to all papers in the field.

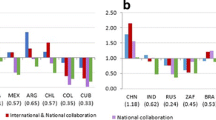

One obvious drawback to this simple share-of-citations measure is that it gives greater weight to internationally coauthored papers (Aksnes 2006). Recalculating the shares of citations and publications so that each paper is attributed to countries in inverse proportion to the number of countries that authored it yields the same qualitative findings as the figures and table presented here, indicating that our results are not driven by an over-counting of internationally coauthored papers. Fractional attribution of internationally coauthored papers yields only two minor changes: (1) the growth over time in China’s RCI is slightly more pronounced, because the citation rates of Chinese-only papers have converged on those of papers involving authors from both China and other countries; (2) Russia’s relative RCI drops with fractional attribution, as its internationally collaborative papers are more highly cited than Russian-only papers.
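The difference between whole and fractional attribution can be sketched as follows. The data layout (a set of country codes plus a citation count per paper), the function name `citation_shares`, and the example figures are hypothetical; the sketch assumes every paper lists at least one country.

```python
def citation_shares(papers, fractional=False):
    """Return {country: share of all citations in the field}.

    papers: list of (countries, citations), where countries is a non-empty
    set of country codes. With fractional=False each authoring country is
    credited with the paper's full citation count (whole counting); with
    fractional=True the credit is split equally across authoring countries.
    """
    total = sum(cites for _, cites in papers)
    if total <= 0:
        raise ValueError("the field must have at least one citation")
    shares = {}
    for countries, cites in papers:
        weight = 1 / len(countries) if fractional else 1.0
        for country in countries:
            shares[country] = shares.get(country, 0.0) + weight * cites / total
    return shares


# Hypothetical field of three papers, one of them internationally coauthored.
field = [({"CN"}, 10), ({"CN", "US"}, 30), ({"US"}, 60)]
print(citation_shares(field))                   # whole counting
print(citation_shares(field, fractional=True))  # fractional counting
```

Under whole counting the shares sum to more than one whenever papers are internationally coauthored, which is the over-counting that fractional attribution removes.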

Some countries may tend to cite their own work, and lower-income countries are more likely to cite work in lower-ranked journals (Didegah et al. 2012). There is little we can do with the data available to correct our estimates of influence and impact for these tendencies, but we will check that our estimates of the relationship between diversification and impact are plausibly robust to such problems.

We tried using a re-based impact factor (RBI; see Sect. 3.3) as a proxy for impact. The RBI normalizes citations in each subfield by the subfield norm and then takes the country’s publication-share-weighted average of these normalized citations across subfields (King 2004). Rebasing has imperceptible effects on Fig. 5, indicating that results in this section are not sensitive to the weighting of citations in different subfields.
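One way to read this verbal definition is sketched below. It assumes the subfield norm is the world average number of citations per paper in that subfield; the formal definition is given in Sect. 3.3, and the function name, data layout and figures here are illustrative only.

```python
def rebased_impact(country, world):
    """Publication-share-weighted average of subfield-normalized citation rates.

    country, world: {subfield: (n_papers, n_citations)}.
    Assumes the subfield norm is the world citations-per-paper rate.
    """
    total_papers = sum(n for n, _ in country.values())
    if total_papers == 0:
        raise ValueError("country has no papers")
    rbi = 0.0
    for subfield, (n, cites) in country.items():
        if n == 0:
            continue  # no papers in this subfield, so it carries zero weight
        world_n, world_cites = world[subfield]
        norm = world_cites / world_n        # subfield norm (citations per paper)
        normalized = (cites / n) / norm     # country rate relative to the norm
        rbi += (n / total_papers) * normalized
    return rbi


# Hypothetical country: strong in subfield "a", weak in the larger subfield "b".
country = {"a": (10, 100), "b": (30, 60)}
world = {"a": (100, 500), "b": (100, 400)}
print(rebased_impact(country, world))
```

A value of 1 would mean that the country's papers are cited at exactly the world rate once differences in citation practices across subfields are taken into account.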

Eigenfactor scores as reported by Web of Science, 2010 Journal Rankings. The cutoff presented here for prestigious journals was provided by a nanotechnologist at a top-ranked engineering department, whom we asked to identify the lowest-ranked journal to which they would support their graduate students submitting papers. Lowering the bar does not alter our results qualitatively.

Some countries do rank differently by CV and by Nonconformity. As noted, this is unsurprising given that coefficients of variation are very sensitive to changes in the country-mean value of RLA and RLAM.
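The sensitivity of the coefficient of variation to the mean can be seen in a small sketch. It uses the usual definition (standard deviation divided by mean) with the population standard deviation; the two value lists are hypothetical and merely stand in for a country's subfield-level RLA or RLAM values.

```python
from statistics import mean, pstdev


def coefficient_of_variation(values):
    """Population standard deviation divided by the mean."""
    m = mean(values)
    if m == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return pstdev(values) / m


# Two hypothetical countries with identical spread but different means.
low_mean = [0.5, 1.0, 1.5]
high_mean = [1.5, 2.0, 2.5]
print(coefficient_of_variation(low_mean), coefficient_of_variation(high_mean))
```

The spread across subfields is the same in both cases, yet the first CV is twice the second, so a shift in the country mean alone can reorder countries by CV.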

We have chosen not to present estimates using Top5 because the measure is censored at zero. Estimates from Tobit models with country fixed effects are not consistent, due to the incidental parameters problem. We have, however, analyzed the behavior of Top5 using linear regressions with fixed effects (ignoring censoring), and the results are qualitatively identical to those using RBI and RCI, although the coefficient on the diversification measure is sometimes less statistically significant. Tobit models without country fixed effects yield negative and highly significant coefficients on the diversification measure.

Brazil provides an illustrative example. While Brazil is now developing a research mix that resembles that of the rest of the world, it had, until at least 2005, focused on nanobio and alternative energy applications, not only within nanotechnology but also across all of science and technology (Fink et al. 2012). As its nanotechnology research mix has become more concentrated, its research impact has declined; it is the only one of our six Newcomer nations for which this is the case.

References

Abramo, G., Cicero, T., & D’Angelo, C. A. (2012). Revisiting the scaling of citations for research assessment. Journal of Informetrics, 6(4), 470–479.

Aksnes, D. W. (2006). Citation rates and perceptions of scientific contribution. Journal of the American Society for Information Science and Technology, 57(2), 169–185. doi:10.1002/asi.20262.

Aksnes, D. W., Schneider, J. W., & Gunnarsson, M. (2012). Ranking national research systems by citation indicators. A comparative analysis using whole and fractionalised counting methods. Journal of Informetrics, 6(1), 36–43.

Andersson, M., & Ejermo, O. (2008). Technology specialization and the magnitude and quality of exports. Econ. Innov. New Techn., 17(4), 355–375.

Appelbaum, R. P., Parker, R., & Cao, C. (2011). Developmental state and innovation: Nanotechnology in China. Global Networks, 11(3), 298–314.

Archibugi, D., & Pianta, M. (1992). Specialization and size of technological activities in industrial countries: The analysis of patent data. Research Policy, 21(1), 79–93.

Avila-Robinson, A., & Miyazaki, K. (2012). Emerging micro/nanofabrication technologies as drivers of nanotechnological change: Paths of knowledge evolution and international patterns of specialization. Technology Management for Emerging Technologies (PICMET), 2012 Proceedings of PICMET’12: (pp. 2652–2662): IEEE.

Balassa, B. (1965). Trade liberalisation and “revealed” comparative advantage. The Manchester School, 33(2), 99–123.

Blei, D. M., & Lafferty, J. D. (2007). A correlated topic model of science. Annals of Applied Statistics, 1(1), 17–35. doi:10.1214/07-Aoas114.

Blei, D. M., Ng, A. Y., & Jordan, M. I. (2003). Latent Dirichlet allocation. Journal of Machine Learning Research, 3(4–5), 993–1022. doi:10.1162/Jmlr.2003.3.4-5.993.

Braun, T., Zsindely, S., Dióspatonyi, I., & Zádor, E. (2007). Gatekeeping patterns in nano-titled journals. Scientometrics, 70(3), 651–667. doi:10.1007/s11192-007-0306-2.

Cantwell, J., & Vertova, G. (2004). Historical evolution of technological diversification. Research Policy, 33(3), 511–529.

Chang, J., Gerrish, S., Wang, C., Boyd-Graber, J. L., & Blei, D. M. (2009). Reading tea leaves: How humans interpret topic models. In Advances in neural information processing systems (Vol. 22, pp. 288–296). https://papers.nips.cc/paper/3700-reading-tea-leaves-how-humans-interpret-topic-models.

Chen, H., & Roco, M. C. (2008). Mapping nanotechnology innovations and knowledge: global and longitudinal patent and literature analysis (Vol. 20): Springer Science & Business Media.

Commission of the European Communities. (2007). Nanosciences and Nanotechnologies: An action plan for Europe 2005-2009. First Implementation Report 2005–2007 Brussels.

Cowan, N. (2001). The magical number 4 in short-term memory: A reconsideration of mental storage capacity. Behavioral and Brain Sciences, 24(01), 87–114. doi:10.1017/S0140525X01003922.

Didegah, F., Thelwall, M., & Gazni, A. (2012). An international comparison of journal publishing and citing behaviours. Journal of Informetrics, 6(4), 516–531.

Editorial (2008). Location, location, location. Nature Nanotechnology, 3(6), 309.

European Commission. (2006). FP7—Tomorrow’s answers start today. In Community Research and Development Information Service (CORDIS) (Ed.): European Commission. https://ec.europa.eu/research/fp7/pdf/fp7-factsheets_en.pdf.

Feldman, M. P., & Audretsch, D. B. (1999). Innovation in cities: Science-based diversity, specialization and localized competition. European economic review, 43(2), 409–429.

Fink, D., Kwon, Y., Rho, J. J., & So, M. (2012). S&T knowledge production from 2000 to 2009 in two periphery countries: Brazil and South Korea. Scientometrics, 99, 1–18.

Garfield, E. (1979). Citation indexing: Its theory and application in science, technology, and humanities. New York: Wiley.

Glanzel, W., Meyer, M., du Plessis, B., Magerman, T., Schlemmer, B., Debackere, K., et al. (2003). Nanotechnology: Analysis of an emerging domain of scientific and technological endeavour. Leuven, Belgium: Steunpunt O&O Statistieken.

Guan, J., & Ma, N. (2007). China’s emerging presence in nanoscience and nanotechnology: A comparative bibliometric study of several nanoscience ‘giants’. Research Policy, 36(6), 880–886.

Hall, D., Jurafsky, D., & Manning, C. D. (2008). Studying the history of ideas using topic models. In Proceedings of the conference on empirical methods in natural language processing (pp. 363–371): Association for Computational Linguistics.

Harper, T. (2011). Global Funding of Nanotechnologies and Its Impact, Cientifica. Available at http://cientifica.com/wp-content/uploads/downloads/2011/07/Global-Nanotechnology-Funding-Report-2011.pdf.

Hausmann, R., Hidalgo, C., Bustos, S., Coscia, M., Chung, S., Jimenez, J., et al. (2011). The atlas of economic complexity: mapping paths to prosperity. Cambridge, MA: Center for International Development, Harvard University.

Hidalgo, C. A., & Hausmann, R. (2009). The building blocks of economic complexity. Proceedings of the National Academy of Sciences, 106(26), 10570–10575.

Hidalgo, C. A., Klinger, B., Barabási, A. L., & Hausmann, R. (2007). The product space conditions the development of nations. Science, 317(5837), 482–487.

Horlings, E., & Van den Besselaar, P. (2011). Convergence in science: Growth and structure of worldwide scientific output, 1993–2008. In 2011 Atlanta Conference on Science and Innovation Policy (pp. 1–19): IEEE.

Huang, C., Notten, A., & Rasters, N. (2011). Nanoscience and technology publications and patents: a review of social science studies and search strategies. The Journal of Technology Transfer, 36(2), 145–172.

Imbs, J., & Wacziarg, R. (2003). Stages of diversification. American Economic Review, 93, 63–86.

Jin, B., & Rousseau, R. (2005). Evaluation of research performance and scientometric indicators in China. In H. F. Moed, W. Glanzel & U. Schmoch (Eds.), Handbook of quantitative science and technology research (pp. 497–514): Springer.

Khramova, E., Meissner, D., & Sagieva, G. (2013). Statistical patent analysis indicators as a means of determining country technological specialisation. WP BRP: Higher School of Economics Research Paper No. 9.

King, D. A. (2004). The scientific impact of nations. Nature, 430(6997), 311–316.

Kostoff, R. N. (1998). The use and misuse of citation analysis in research evaluation—Comments on theories of citation? Scientometrics, 43(1), 27–43.

Kostoff, R. N. (2012). China/USA nanotechnology research output comparison—2011 update. Technological Forecasting and Social Change, 79(5), 986–990.

Kostoff, R., Murday, J., Lau, C., & Tolles, W. (2006). The seminal literature of nanotechnology research. Journal of Nanoparticle Research. doi:10.1007/s11051-005-9034-9.

Lee, W.-L., Chiang, J.-C., Wu, Y.-H., & Liu, C.-H. (2012). How knowledge exploration distance influences the quality of innovation. Total Quality Management & Business Excellence, 23(9–10), 1045–1059.

Lenoir, T., & Herron, P. (2009). Tracking the current rise of Chinese pharmaceutical bionanotechnology. Journal of Biomedical Discovery and Collaboration, 4, 8.

Leydesdorff, L. (1998). Theories of citation? Scientometrics, 43(1), 5–25.

Leydesdorff, L. (2013). An evaluation of impacts in “Nanoscience & nanotechnology”: steps towards standards for citation analysis. Scientometrics, 94(1), 35–55.

Leydesdorff, L., & Wagner, C. (2009). Is the United States losing ground in science? A global perspective on the world science system. Scientometrics, 78(1), 23–36.

Leydesdorff, L., & Zhou, P. (2007). Nanotechnology as a field of science: Its delineation in terms of journals and patents. Scientometrics, 70(3), 693–713. doi:10.1007/s11192-007-0308-0.

Lundberg, J. (2007). Lifting the crown—citation z-score. Journal of Informetrics, 1(2), 145–154.

MacRoberts, M. H., & MacRoberts, B. R. (1996). Problems of citation analysis. Scientometrics, 36(3), 435–444.

Mangàni, A. (2007). Technological variety and the size of economies. Technovation, 27(11), 650–660.

Matusik, S. F., & Fitza, M. A. (2012). Diversification in the venture capital industry: Leveraging knowledge under uncertainty. Strategic Management Journal, 33(4), 407–426. doi:10.1002/smj.1942.

Mehta, A., Herron, P., Motoyama, Y., Appelbaum, R., & Lenoir, T. (2012). Globalization and de-globalization in nanotechnology research: The role of China. Scientometrics, 93(2), 439–458.

Miller, G. A. (1956). The magical number seven, plus or minus two: Some limits on our capacity for processing information. Psychological Review, 63(2), 81.

Mimno, D., & McCallum, A. (2007). Organizing the OCA: Learning faceted subjects from a library of digital books. Proceedings of the 7th ACM/IEEE Joint Conference on Digital Libraries (pp. 376–385). doi:10.1145/1255175.1255249.

Moed, H. F., De Bruin, R. E., & Van Leeuwen, T. N. (1995). New bibliometric tools for the assessment of national research performance: Database description, overview of indicators and first applications. Scientometrics, 33(3), 381–422.

Mogoutov, A., & Kahane, B. (2007). Data search strategy for science and technology emergence: A scalable and evolutionary query for nanotechnology tracking. Research Policy. doi:10.1016/J.Respol.02.005.

Newman, D., Chemudugunta, C., Smyth, P., & Steyvers, M. (2006). Analyzing entities and topics in news articles using statistical topic models. Intelligence and Security Informatics, Proceedings, 3975, 93–104.

Noyons, E., Buter, R., van Raan, A., Schmoch, U., Heinze, T., Hinze, S., et al. (2003). Mapping excellence in science and technology across Europe (Part 2: Nanoscience and nanotechnology) (Draft report of project EC-PPN CT-2002-0001 to the European Commission).

OECD. (2013). Research and development statistics: Gross domestic expenditure on R-D by sector of performance and source of funds. OECD science, technology and R&D statistics (database). doi:10.1787/data-00189-en. Accessed 26 Oct 2013.

OECD. (2014). Main science and technology indicators, Issue 1. Paris: OECD Press.

OECD Working Party on Nanotechnology. (2012). Finance and Investor Models in Nanotechnology. (Background Paper 2: OECD /NNI International Symposium on Assessing the Economic Impact of Nanotechnology. Paris, France).

Onel, S., Zeid, A., & Kamarthi, S. (2011). The structure and analysis of nanotechnology co-author and citation networks. Scientometrics, 89(1), 119–138. doi:10.1007/s11192-011-0434-6.

Palmberg, C., Dernis, H., & Miguet, C. (2009). Nanotechnology: An overview based on indicators and statistics. STI Working Paper Series. 2 rue André-Pascal, 75775 Paris Cedex 16, France: OECD, Directorate for Science, Technology and Industry.

PCAST. (2012). Report to the President and Congress on the fourth assessment of the National Nanotechnology Initiative. President's Council of Advisors on Science and Technology. http://whitehouse.gov/sites/default/files/microsites/ostp/PCAST_2012_Nanotechnology_FINAL.pdf.

Pianta, M., & Archibugi, D. (1991). Specialization and size of scientific activities: A bibliometric analysis of advanced countries. Scientometrics, 22(3), 341–358.

Porter, A., Youtie, J., & Shapira, P. (2008). Refining search terms for nanotechnology. Journal of Nanoparticle Research, 10(5), 715–728.

Porter, A. L., & Zhang, Y. (2012). Text Clumping for Technical Intelligence.

Pouris, A. (2007). Nanoscale research in South Africa: A mapping exercise based on scientometrics. Scientometrics, 70(3), 541–553. doi:10.1007/s11192-007-0301-7.

Raje, J. (2011). Commercialization of nanotechnology: Global overview and European position. Budapest: Lux Research.

Řehůřek, R., & Sojka, P. (2010). Software framework for topic modelling with large corpora. In Proceedings of LREC 2010 workshop New Challenges for NLP Frameworks (pp. 46–50).

Roco, M. C. (2007). National nanotechnology initiative: past, present, future. In W. A. Goddard, D. Brenner, S. E. Lyshevski & G. J. Iafrate (Eds.), Handbook on nanoscience, engineering and technology (2nd ed., pp. 3.1–3.26). Boca Raton: Taylor and Francis.

Roco, M. C., Mirkin, C. A., & Hersam, M. C. (Eds.). (2010). Nanotechnology research directions for societal needs in 2020: Retrospective and outlook. Berlin: Springer.

Royal Society of London. (2011). Knowledge, networks and nations: global scientific collaboration in the 21st century. London: Elsevier.

Schubert, A., & Braun, T. (1986). Relative indicators and relational charts for comparative assessment of publication output and citation impact. Scientometrics, 9(5–6), 281–291.

Schubert, T., & Grupp, H. (2011). Tests and confidence intervals for a class of scientometric, technological and economic specialization ratios. Applied Economics, 43(8), 941–950.

Shaikh, A. (2007). Globalization and the Myths of Free Trade: History, Theory and Empirical Evidence: Taylor & Francis.

Shapira, P., & Wang, J. (2010). Follow the money. Nature, 468(7324), 627–628.

UNCTAD (2006). UNCTAD Handbook of Statistics. In U.N.C.O.T.A. Development (Ed.). Geneva: United Nations Conference on Trade and Development.

Van Noorden, R. (2014). China tops Europe in R&D intensity. Nature, 505, 144–145.

van Zeebroeck, N., van Pottelsberghe de la Potterie, B., & Han, W. (2006). Issues in measuring the degree of technological specialisation with patent data. Scientometrics, 66(3), 481–492.

Wagner, C. S. (2008). The new invisible college: Science for development. Washington, D.C.: Brookings Institution Press.

Waltman, L., & van Eck, N. J. (2013). Source normalized indicators of citation impact: An overview of different approaches and an empirical comparison. Scientometrics, 96(3), 699–716.

Woo-Cumings, M. (1999). The developmental state: Cornell University Press.

Youtie, J., Shapira, P., & Porter, A. L. (2008). Nanotechnology publications and citations by leading countries and blocs. [Editorial Material]. Journal of Nanoparticle Research, 10(6), 981–986. doi:10.1007/s11051-008-9360-9.

Zakaria, F. (2008). The post-American world. New York: W.W. Norton & Co.

Zhang, H., Giles, C. L., Foley, H. C., & Yen, J. (2007). Probabilistic community discovery using hierarchical latent gaussian mixture model. AAAI, 7, 663–668.

Zitt, M., & Bassecoulard, E. (2006). Delineating complex scientific fields by an hybrid lexical-citation method: An application to nanosciences. Inform Process Manag, 42(6), 1513–1531. doi:10.1016/j.ipm.2006.03.016.

Acknowledgments

We are grateful to Richard Appelbaum, Matthew Gebbie, Shirley Han, Barbara Harthorn, Luciano Kay, Sumita Pennathur and Galen Stocking for support and for useful discussions of our results. Rachael Drew, Quinn McCreight, Caitlin Vejby and Chris Wegemer provided invaluable research assistance. This material is based upon work supported by the National Science Foundation under Grant No. SES 0531184. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the authors and do not necessarily reflect the views of the National Science Foundation. This work was conducted under the auspices of the University of California at Santa Barbara’s Center for Nanotechnology in Society (www.cns.ucsb.edu).

Cite this article

Herron, P., Mehta, A., Cao, C. et al. Research diversification and impact: the case of national nanoscience development. Scientometrics 109, 629–659 (2016). https://doi.org/10.1007/s11192-016-2062-7

DOI: https://doi.org/10.1007/s11192-016-2062-7