Abstract

Modeling distributions of citations to scientific papers is crucial for understanding how science develops. However, there is a considerable empirical controversy on which statistical model fits the citation distributions best. This paper is concerned with rigorous empirical detection of power-law behaviour in the distribution of citations received by the most highly cited scientific papers. We have used a large, novel data set on citations to scientific papers published between 1998 and 2002 drawn from Scopus. The power-law model is compared with a number of alternative models using a likelihood ratio test. We have found that the power-law hypothesis is rejected for around half of the Scopus fields of science. For these fields of science, the Yule, power-law with exponential cut-off and log-normal distributions seem to fit the data better than the pure power-law model. On the other hand, when the power-law hypothesis is not rejected, it is usually empirically indistinguishable from most of the alternative models. The pure power-law model seems to be the best model only for the most highly cited papers in “Physics and Astronomy”. Overall, our results seem to support theories implying that the most highly cited scientific papers follow the Yule, power-law with exponential cut-off or log-normal distribution. Our findings suggest also that power laws in citation distributions, when present, account only for a very small fraction of the published papers (less than 1 % for most of science fields) and that the power-law scaling parameter (exponent) is substantially higher (from around 3.2 to around 4.7) than found in the older literature.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

Introduction

It is often argued in scientometrics, social physics and other sciences that distributions of some scientific “items” (e.g., articles, citations) produced by some scientific sources (e.g., authors, journals) have heavy tails that can be modelled using a power-law model. These distributions are then said to conform to the Lotka’s law (Lotka 1926). Examples of such distributions include author productivity, occurrence of words, citations received by papers, nodes of social networks, number of authors per paper, scattering of scientific literature in journals, and many others (Egghe 2005). In fact, power-law models are widely used in many sciences as physics, biology, earth and planetary sciences, economics, finance, computer science, and others (Newman 2005; Clauset et al. 2009). Models equivalent to Lotka’s law are known as Pareto’s law in economics (Gabaix 2009) and as Zipf’s law in linguistics (Baayen 2001). Appropriate measuring and providing scientific explanations for power laws plays an important role in understanding the behaviour of various natural and social phenomena.

This paper is concerned with empirical detection of power-law behaviour in the distribution of citations received by scientific papers. The power-law distribution of citations for the highly cited papers was first suggested by SollaPrice (1965), who also proposed a “cumulative advantage” mechanism that could generate the power-law distribution (SollaPrice 1976). More recently, a growing literature has developed that aims at measuring power laws in the right tails of citation distributions. In particular, Redner (1998), Redner (2005) found that the right tails of citation distributions for articles published in Physical Review over a century and of articles published in 1981 in journals covered by Thomson Scientific’s Web of Science (WoS) follow power laws. The latter data set was also modelled with power-law techniques by Clauset et al. (2009) and Peterson et al. (2010). The latter study also used data from 2007 list of the living highest h-index chemists and from Physical Review D between 1975 and 1994. VanRaan (2006) observed that the top of the distribution of around 18,000 papers published between 1991 and 1998 in the field of chemistry in Netherlands follows a power law distribution. Power-law models were also fitted to data from high energy physics (Lehmann et al. 2003), data for most cited physicists (Laherrère and Sornette 1998), data for all papers published in journals of the American Physical Society from 1983 to 2008 (Eom and Fortunato 2011), and to data for all physics papers published between 1980 and 1989 (Golosovsky and Solomon 2012).

Recently, Albarrán and Ruiz-Castillo (2011) tested for the power-law behavior using a large WoS dataset of 3.9 million articles published between 1998 and 2002 categorized in 22 WoS research fields. The same dataset was also used to search for the power laws in the right tail of citation distributions categorized in 219 WoS scientific sub-fields (Albarrán et al. 2011a, b). These studies offer the largest existing body of evidence on the power-law behaviour of citation distributions. Three major conclusions appear from them. First, the power-law behavior is not universal. The existence of power law cannot be rejected in the WoS data for 17 out of 22 and for 140 out of 219 sub-fields studied in Albarrán and Ruiz-Castillo (2011) and in Albarrán et al. (2011a, b), respectively. Secondly, in opposition to previous studies, these papers found that the scaling parameter (exponent) of the power-law distribution is above 3.5 in most of the cases, while the older literature suggested that the parameter value is between 2 and 3 (Albarrán et al. 2011). Third, power laws in citation distributions are rather small—on average they cover just about 2 % of the most highly cited articles in a given WoS field of science and account for about 13.5 % of all citations in the field.

The main aim of this paper is to use a statistically rigorous approach to answer the empirical question of whether the power-law model describes best the observed distribution of highly cited papers. We use the statistical toolbox for detecting power-law behaviour introduced by Clauset et al. (2009). There are two major contributions of the present paper. First, we use a very large, previously unused data set on the citation distributions of the most highly cited papers in several fields of science. This data set comes from Scopus, a bibliographic database introduced in 2004 by Elsevier, and contains 2.2 million articles published between 1998 and 2002 and categorized in 27 Scopus major subject areas of science. Most of the previous studies used rather small data sets, which were not suitable for rigorous statistical detecting of the power-law behaviour. In contrast, our sample is even bigger with respect to the most highly cited papers than the large sample used in the recent contributions based on WoS data (Albarrán and Ruiz-Castillo 2011; Albarrán et al. 2011a, b). This results from the fact that Scopus indexes about 70 % more sources compared to the WoS (López-Illescas et al. 2008; Chadegani et al. 2013) and therefore gives a more comprehensive coverage of citation distributions.Footnote 1

The second major contribution of the paper is to provide a rigorous statistical comparison of the power-law model and a number of alternative models with respect to the problem which theoretical distribution fits better empirical data on citations. This problem of model selection has been previously studied in some contributions to the literature. It has been argued that models like stretched exponential (Laherrère and Sornette 1998), Yule (SollaPrice 1976), log-normal (Redner 2005; Stringer et al. 2008; Radicchi et al. 2008), Tsallis (Tsallis and deAlbuquerque 2000; Anastasiadis et al. 2010; Wallace et al. 2009) or shifted power law (Eom and Fortunato 2011) fit citation distributions equally well or better than the pure power-law model. However, previous papers have either focused on a single alternative distribution or used only visual methods to choose between the competing models. The present paper fills the gap by providing a systematic and statistically rigorous comparison of the power-law distribution with such alternative models as the log-normal, exponential, stretched exponential (Weibull), Tsallis, Yule and power-law with exponential cut-off. The comparison between models was performed using a likelihood ratio test (Vuong 1989; Clauset et al. 2009).

Materials and methods

Fitting power-law model to citation data

We follow Clauset et al. (2009) in choosing methods for fitting power laws to citation distributions. These authors carefully show that, in general, the appropriate methods depend on whether the data are continuous or discrete. In our case, the latter is true as citations are non-negative integers. Let \(x\) be the number of citations received by an article in a given field of science. The probability density function (pdf) of the discrete power-law model is defined as

where \(\zeta (\alpha ,x_0)\) is the generalized or Hurwitz zeta function. The \(\alpha \) is a shape parameter of the power-law distribution, known as the power-law exponent or scaling parameter. The power-law behaviour is usually found only for values greater than some minimum, denoted by \(x_0\). In case of citation distributions, the power-law behaviour has been found on average only in the top 2 % of all articles published in a field of science (Albarrán et al. 2011a, b).

The lower bound on the power-law behaviour, \(x_0\), should be therefore estimated if we want to measure precisely in which part of a citation distribution the model applies. Moreover, we need an estimate of \(x_0\) if we want to obtain an unbiased estimate of the power-law exponent, \(\alpha \).

We estimate \(\alpha \) using the maximum likelihood (ML) estimation. The log-likelihood function corresponding to (1) is

where \(x_i\) is the number of citations received by the \(i\hbox {th}\) paper \((i = 1,\dots , n)\).

The ML estimate for \(\alpha \) is found by numerical maximization of (2).Footnote 2

Following Clauset et al. (2009), we use the following procedure to estimate the lower bound on the power-law behaviour, \(x_{0}\). For each \(x\geqslant x_{\rm min}\), we calculate the ML estimate of the power-law exponent, \(\hat{\alpha }\), and then we compute the well-known Kolmogorov–Smirnov (KS) statistic for the data and the fitted model. The KS statistic is defined as

where \(S(x)\) is the cumulative distribution function (cdf) for the observations with value at least \(x_0\), and \(P(x,\hat{\alpha })\) is the cdf for the fitted power-law model to observations for which \(x\geqslant x_0\). The estimate \(\hat{x}_0\) is then chosen as a value of \(x_0\) for which the KS statistic is the smallest. The standard errors for both estimated parameters, \(\hat{\alpha }\) and \(\hat{x}_0\), are computed with standard bootstrap methods with 1,000 replications.

Goodness-of-fit and model selection tests

The next step in measuring power laws involves testing goodness of fit. A positive result of such a test allows to conclude that a power-law model is consistent with data. Following Clauset et al. (2009) again, we use a test based on a semi-parametric bootstrap approach.Footnote 3 The procedure starts with fitting a power-law model to data and calculating a KS statistic (see Eq. 3) for this fit, denoted by \(k\). Next, a large number of synthetic data sets is generated that follow the originally fitted power-law model above the estimated \(x_{0}\) and have the same non-power-law distribution as the original data set below \(\hat{x}_{0}\). Then, a power-law model is fitted to each of the generated data sets using the same methods as for the original data set, and the KS statistics are calculated. The fraction of data sets for which their own KS statistic is larger than \(k\) is the p value of the test. It represents a probability that the KS statistics computed for data drawn from the power-law model fitted to the original data is at least as large as \(k\). The power-law hypothesis is rejected if the p value is smaller than some chosen threshold.Footnote 4 Following Clauset et al. (2009), we rule out the power-law model if the estimated p value for this test is smaller than 0.1. In the present paper, we use 1,000 generated data sets.

If the goodness-of-fit test rejects the power-law hypothesis, we may conclude that the power law has not been found. However, if a data set is fitted well by a power law, the question remains if there is an alternative distribution, which is an equally good or better fit to this data set. We need, therefore, to fit some rival distributions and evaluate which distribution gives a better fit. To this aim, we use the likelihood ratio test, which tests if the compared models are equally close to the true model against the alternative that one is closer. The test computes the logarithm of the ratio of the likelihoods of the data under two competing distributions, LR, which is negative or positive depending on which model fits data better. Specifically, let us consider two distributions with pdfs denoted by \(p_1(x)\) and \(p_2(x)\). The LR is defined as:

A positive value of the LR suggests that model \(p_1(x)\) fits the data better. However, the sign of the LR can be used to determine which model should be favored only if the LR is significantly different from zero. Vuong (1989) showed that in the case of non-nested models the normalized log-likelihood ratio \(\hbox {NLR}=n^{-1/2}\hbox {LR}/\sigma \), where \(\sigma \) is the estimated standard deviation of LR, has a limit standard normal distribution.Footnote 5 This result can be used to compute a p value for the test discriminating between the competing models. If the p value is small (for example, smaller than 0.1), then the sign of the LR can probably be trusted as an indicator of which model is preferred. However, if the p value is large, then the test is unable to choose between the compared distributions.

We have followed Clauset et al. (2009) in choosing the following alternative discrete distributions: exponential, stretched exponential (Weibull), log-normal, Yule and the power law with exponential cut-off.Footnote 6 Most of these models have been considered in previous literature on modeling citation distribution. As another alternative, we also use the Tsallis distribution, which has been also proposed as a model for citation distributions (Wallace et al. 2009; Anastasiadis et al. 2010). Finally, we also consider a “digamma” model using exponential functions of a digamma function, which was recently introduced for distributions with heavy tails in a statistical physics framework based on the principle of maximum entropy (Peterson et al. 2013).Footnote 7

The definitions of our alternative distributions are given in Table 1.

Data

We use citation data from Scopus, a bibliographic database introduced in 2004 by Elsevier. Scopus is a major competitor to the most-widely used data source in the literature on modeling citation distributions—Web of Science (WoS) from Thomson Reuters. Scopus covers 29 million records with references going back to 1996 and 21 million pre-1996 records going back as far as 1823. An important limitation of the database is that it does not cover cited references for pre-1996 articles. Scopus contains 21,000 peer-reviewed journals from more than 5,000 international publishers. It covers about 70 % more sources compared to the WoS (López-Illescas et al. 2008), but a large part of the additional sources are low-impact journals. A recent literature review has found that the quite extensive literature that compares WoS and Scopus from the perspective of citation analysis offers mixed results (Chadegani et al. 2013). However, most of the studies suggest that, at least for the period from 1996 on, the number of citations in both databases is either roughly similar or higher in Scopus than in WoS. Therefore, is seems that Scopus constitutes a useful alternative to WoS from the perspective of modeling citation distributions.

Journals in Scopus are classified under four main subject areas: life sciences (4,200 journals), health sciences (6,500 journals), physical sciences (7,100 journals) and social sciences including arts and humanities (7,000 journals). The four main subject areas are further divided into 27 major subject areas and more than 300 minor subject areas. Journals may be classified under more than one subject area.

The analysis in this paper was performed on the level of 27 Scopus major subject areas of science.Footnote 8 From the various document types contained in Scopus, we have selected only articles. For the purpose of comparability with the recent WoS-based studies (Albarrán and Ruiz-Castillo 2011; Albarrán et al. 2011a), only the articles published between 1998 and 2002 were considered. Following previous literature, we have chosen a common 5-year citation window for all articles published in 1998–2002.Footnote 9 See Albarrán and Ruiz-Castillo (2011) for a justification of choosing the 5-year citation window common for all fields of science.

In order to measure the power-law behaviour of citations, we need data on the right tails of citation distributions. To this end, we have used the Scopus Citation Tracker to collect citations for \(\min (100{,}000; x)\) of the highest cited articles, where \(x\) is the actual number of articles published in a given field of science during 1998–2002. This analysis was performed separately for each of the 27 science fields categorized by Scopus.

Descriptive statistics for our data sets are presented in Table 2.

In some cases, there was less than 100,000 articles published in a field of science during 1998–2002 and we were able to obtain complete or almost complete distributions of citations (see columns 2–4 of Table 2).Footnote 10 In other cases, we have obtained only a part of the relevant distribution encompassing the right tail and some part of the middle of the distribution. The smallest portions of citation distributions were obtained for Medicine (8.4 % of total papers), Biochemistry, Genetics and Molecular Biology (15.7 %) and Physics and Astronomy (18.4 %). However, using the WoS data for 22 science categories, Albarrán and Ruiz-Castillo (2011) found that power laws account usually only for less than 2 % of the highest-cited articles. Therefore, it seems that the coverage of the right tails of citation distributions in our samples is satisfactory for our purposes.

Results and discussion

Table 3 presents results of fitting the discrete power-law model to our data sets consisting of citations to scientific articles published over 1998–2002 (with a common 5-year citation window), separately for each of the 27 Scopus major subject areas of science. The last row gives also results for all subject areas combined (“All sciences”). Beside estimates of the power-law exponent \((\hat{\alpha })\) and the lower bound on the power-law behaviour \((\hat{x}_0)\), the table gives also the estimated number and the percentage of power-law distributed papers, as well as the p value for our goodness-of-fit test.

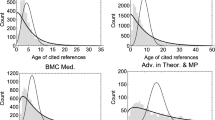

Results with respect to the goodness-of-fit suggest that the power-law hypothesis cannot be rejected for the following 14 Scopus science fields: “Agricultural and Biological Sciences”, “Biochemistry, Genetics and Molecular Biology”, “Chemical Engineering”, “Chemistry”, “Energy”, “Environmental Science”, “Materials Science”, “Neuroscience”, “Nursing”, “Pharmacology, Toxicology and Pharmaceutics”, “Physics and Astronomy”, “Psychology”, “Health Professions”, and “Multidisciplinary”. The remaining 13 Scopus fields of science for which the power-law model is rejected include humanities and social sciences (“Arts and Humanities”, “Business, Management and Accounting”, “Economics, Econometrics and Finance”, “Social Sciences”), but also formal sciences (“Computer Science”, “Decision Sciences”, “Mathematics”), life sciences (“Immunology and Microbiology”, “Medicine”, “Veterinary”, “Dentistry”), as well as “Earth and Planetary Sciences” and “Engineering”. The best power-law fits for these fields of science are shown on Fig. 1.

For most of the distributions shown on Fig. 1, it can be clearly seen that their right tails decay faster than the pure power-law model indicates. This suggest that the largest observations for these distributions should be rather modeled with a distribution having a lighter tail than the pure power-law model like the log-normal or power-law with exponential cut-off models.

The p value for our goodness-of-fit test in case of “All Sciences” is 0.076, which is below our acceptance threshold of 0.1. However, this p value is non-negligible and significantly higher than p values for most of the 13 Scopus fields of science for which we reject the power-law hypothesis. For this reason, we conclude that the evidence is not conclusive in this case. Our result for “All Sciences” is, however, in a stark contrast with that of Albarrán and Ruiz-Castillo (2011), who using the WoS data found that the fit for a corresponding data set was very good (with a p value of 0.85).Footnote 11

The estimates of the power-law exponent for the 14 Scopus science fields for which the power law seems to be a plausible hypothesis range from 3.24 to 4.69. This is in a good agreement with Albarrán and Ruiz-Castillo (2011) and confirms their assessment that the true value of this parameter is substantially higher than found in the earlier literature (Redner 1998; Lehmann et al. 2003; Tsallis and deAlbuquerque 2000), which offered estimates ranging from around 2.3 to around 3. We also confirm the observation of Albarrán and Ruiz-Castillo (2011) that power laws in citation distributions are rather small—they account usually for less than 1 % of total articles published in a field of science. The only two fields in our study with slightly “bigger” power laws are “Chemistry” (2 %) and “Multidisciplinary” (2.8 %).

The comparison between the power-law hypothesis and alternatives using the Vuong’s test is presented in Table 4. It can be observed that the exponential model can be ruled out in most of the cases. We discuss other results first for the 13 Scopus fields of science that did not pass our goodness-of-fit test. For all of these fields, except for “Veterinary”, the Yule and power-law with exponential cut-off models fit the data better than the pure power-law model in a statistically significant way. The log-normal model is better than the pure power-law model in 10 of the discussed fields; the same holds for the Weibull distribution in case of 5 fields and for the digamma distribution in case of 4 fields. However, these results do not imply that the distributions, which give a better fit to the non-power-law distributed data than the pure power-law model are plausible hypotheses for these data sets. This issue should be further studied using appropriate goodness-of-fit tests.

We now turn to results for the remaining Scopus fields of science that were not rejected by our goodness-of-fit test. The power-law hypothesis seems to be the best model only for “Physics and Astronomy”. In this case, the test statistics is always non-negative implying that the power-law model fits the data as good as or better than each of the alternatives. For the remaining 13 fields of science, the log-normal, Yule and power-law with exponential cut-off models have always higher log-likelihoods suggesting that these models may fit the data better than the pure power-law distribution. However, only in a few cases the differences between models are statistically significant. For “Chemistry” and “Multidisciplinary” both the Yule and power-law with exponential cut-off models are favoured over the pure power-law model. The power-law with exponential cut-off is also favoured in case of “Health Professions”. In other cases, the p values for the likelihood ratio test are large, which implies that there is no conclusive evidence that would allow to distinguish between the pure power-law, log-normal, Yule and power-law with exponential cut-off distributions. Comparing the power-law distribution with the Weibull and Tsallis distributions, we observe that the sign of the test statistic is positive in roughly half of the cases, but the p values are always large and neither model can be ruled out. For the considered 13 fields of science, the digamma model is never better than the power law, judging by the sign of the test statistic. Our likelihood ratio tests suggest therefore that when the power law is a plausible hypothesis according to our goodness-of-fit test it is often indistinguishable from some alternative models.

Overall, our results show that the evidence in favour of the power-law behaviour of the right-tails of citation distributions is rather weak. For roughly half of the Scopus fields of science studied, the power-law hypothesis is rejected. Other distributions, especially the Yule, power-law with exponential cut-off and log-normal seem to fit the data from these fields of science better than the pure power-law model. On the other hand, when the power-law hypothesis is not rejected, it is usually empirically indistinguishable from all alternatives with the exception of the exponential distribution. The pure power-law model seems to be favoured over alternative models only for the most highly cited papers in “Physics and Astronomy”. Our results suggest that theories implying that the most highly cited scientific papers follow the Yule, power-law with exponential cut-off or log-normal distribution may have slightly more support in data than theories predicting the pure power-law behaviour.

Conclusions

We have used a large, novel data set on citations to scientific papers published between 1998 and 2002 drawn from Scopus to test empirically for the power-law behaviour of the right-tails of citation distributions. We have found that the power-law hypothesis is rejected for around half of the Scopus fields of science. For the remaining fields of science, the power-law distribution is a plausible model, but the differences between the power law and alternative models are usually statistically insignificant. The paper also confirmed recent findings of Albarrán and Ruiz-Castillo (2011) that power laws in citation distributions, when they are a plausible, account only for a very small fraction of the published papers (less than 1 % for most of science fields) and that the power-law exponent is substantially higher than found in the older literature.

Notes

From the perspective of measuring power laws in citation distributions, the most important part of the distribution is the right tail. It seems that the database used in this paper has a better coverage of the right tail of citation distributions. The most highly cited paper in our database has received 5,187 citations (see Table 2), while the corresponding number for the database based on WoS is 4,461 (Li and Ruiz-Castillo 2013). Our database is further described in “Materials and methods” section.

If our data were drawn from a given model, then we could use the KS statistic in testing goodness of fit, because the distribution of the KS statistic is known in such a case. However, when the underlying model is not known or when its parameters are estimated from the data, which is our case, the distribution of the KS statistic must be obtained by simulation.

In this goodness-of-fit test we are interested in verifying if the power-law model is a plausible hypothesis for our data sets. Hence, high p values suggest that the power law is not ruled out. This approach is to be contrasted with the usual approach, which for a given null hypothesis interprets low p values as evidence in favor of the alternative hypothesis. See Clauset et al. (2009) for a more detailed discussion of these interpretations of p values.

In case of nested models, \(2\hbox {LR}\) has a limit a Chi squared distribution (Vuong 1989).

The power-law with exponential cut-off behaves like the pure power-law model for smaller values of \(x\), \(x\geqslant x_0\),while for larger values of \(x\) it behaves like an exponential distribution. The pure power-law model is nested within the power-law with exponential cut-off, and for this reason the latter always provides a fit at least as good as the former.

I would like to thank an anonymous referee for suggesting the inclusion of this distribution in our comparison of alternative models.

See Table 2 for a list of the analyzed Scopus areas of science.

For example, for articles published in 1998 we have analyzed citations received during 1998–2002, while for articles published in 2002, those received during 2002–2006.

For all fields of science analyzed, there were some articles with missing information on citations. These articles were removed from our samples. However, this has usually affected only about 0.1 % of our samples.

In Albarrán and Ruiz-Castillo (2011), the power-law hypothesis is found plausible for 17 out of 22 WoS fields of science. It is rejected for “Pharmacology and Toxicology”, “Physics”, “Agricultural Sciences”, “Engineering”, and “Social Sciences, General”. These results are not directly comparable with those of the present paper as Scopus and WoS use different classification systems to categorize journals.

References

Albarrán, P., Crespo, J. A., Ortuño, I., & Ruiz-Castillo, J. (2011). The skewness of science in 219 sub-fields and a number of aggregates. Scientometrics, 88(2), 385–397.

Albarrán, P., Crespo, J.A., Ortuño, I., & Ruiz-Castillo, J. (2011). The skewness of science in 219 sub-fields and a number of aggregates. Working paper 11–09, Universidad Carlos III.

Albarrán, P., & Ruiz-Castillo, J. (2011). References made and citations received by scientific articles. Journal of the American Society for Information Science and Technology, 62(1), 40–49.

Anastasiadis, A. D., deAlbuquerque, M. P., deAlbuquerque, M. P., & Mussi, D. B. (2010). Tsallis q-exponential describes the distribution of scientific citations—A new characterization of the impact. Scientometrics, 83(1), 205–218.

Baayen, R. H. (2001). Word frequency distributions. Dordrecht: Kluwer.

Chadegani, A. A., Salehi, H., Md Yunus, M., Farhadi, H., Fooladi, M., Farhadi, M., et al. (2013). A comparison between two main academic literature collections: Web of Science and Scopus databases. Asian Social Science, 9(5), 18–26.

Clauset, A., Shalizi, C. R., & Newman, M. E. (2009). Power-law distributions in empirical data. SIAM Review, 51(4), 661–703.

de Solla Price, D. (1965). Networks of scientific papers. Science, 149, 510–515.

de Solla Price, D. (1976). A general theory of bibliometric and other cumulative advantage processes. Journal of the American Society for Information Science, 27(5), 292–306.

Egghe, L. (2005). Power laws in the information production process: Lotkaian informetrics. Oxford: Elsevier.

Eom, Y. H., & Fortunato, S. (2011). Characterizing and modeling citation dynamics. PloS One, 6(9), e24,926.

Gabaix, X. (2009). Power laws in economics and finance. Annual Review of Economics, 1(1), 255–294.

Golosovsky, M., & Solomon, S. (2012). Runaway events dominate the heavy tail of citation distributions. The European Physical Journal Special Topics, 205(1), 303–311.

Laherrère, J., & Sornette, D. (1998). Stretched exponential distributions in nature and economy:“Fat tails” with characteristic scales. The European Physical Journal B, 2(4), 525–539.

Lehmann, S., Lautrup, B., & Jackson, A. (2003). Citation networks in high energy physics. Physical Review E, 68(2), 026,113.

Li, Y., & Ruiz-Castillo, J. (2013). The impact of extreme observations in citation distributions. Tech. rep., Universidad Carlos III, Departamento de Economía.

López-Illescas, C., deMoya-Anegón, F., & Moed, H. F. (2008). Coverage and citation impact of oncological journals in the Web of Science and Scopus. Journal of Informetrics, 2(4), 304–316.

Lotka, A. (1926). The frequency distribution of scientific productivity. Journal of Washington Academy Sciences, 16(12), 317–323.

Newman, M. E. (2005). Power laws, Pareto distributions and Zipf’s law. Contemporary Physics, 46(5), 323–351.

Peterson, G. J., Pressé, S., & Dill, K. A. (2010). Nonuniversal power law scaling in the probability distribution of scientific citations. Proceedings of the National Academy of Sciences, 107(37), 16,023–16,027.

Peterson, J., Dixit, P. D., & Dill, K. A. (2013). A maximum entropy framework for nonexponential distributions. Proceedings of the National Academy of Sciences, 110(51), 20,380–20,385.

Radicchi, F., Fortunato, S., & Castellano, C. (2008). Universality of citation distributions: Toward an objective measure of scientific impact. Proceedings of the National Academy of Sciences, 105(45), 17,268–17,272.

Redner, S. (1998). How popular is your paper? An empirical study of the citation distribution. The European Physical Journal B, 4(2), 131–134.

Redner, S. (2005). Citation statistics from 110 years of Physical Review. Physics Today, 58, 49–54.

Shalizi, C.R. (2007). Maximum likelihood estimation for q-exponential (Tsallis) distributions. Tech. rep., arXiv preprint math/0701854.

Stringer, M. J., Sales-Pardo, M., & Amaral, L. A. N. (2008). Effectiveness of journal ranking schemes as a tool for locating information. PLoS One, 3(2), e1683.

Tsallis, C., & deAlbuquerque, M. P. (2000). Are citations of scientific papers a case of nonextensivity? The European Physical Journal B, 13(4), 777–780.

VanRaan, A. F. J. (2006). Statistical properties of bibliometric indicators: Research group indicator distributions and correlations. Journal of the American Society for Information Science and Technology, 57(3), 408–430.

Vuong, Q. H. (1989). Likelihood ratio tests for model selection and non-nested hypotheses. Econometrica, 57(2), 307–333.

Wallace, M. L., Larivière, V., & Gingras, Y. (2009). Modeling a century of citation distributions. Journal of Informetrics, 3(4), 296–303.

Acknowledgments

I would like to thank two anonymous referees for helpful comments and suggestions that improved this paper. The use of Matlab and R software accompanying the papers by Clauset et al. (2009), Shalizi (2007) and Peterson et al. (2013) is gratefully acknowledged. Any remaining errors are my responsibility.

Author information

Authors and Affiliations

Corresponding author

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution License which permits any use, distribution, and reproduction in any medium, provided the original author(s) and the source are credited.

About this article

Cite this article

Brzezinski, M. Power laws in citation distributions: evidence from Scopus. Scientometrics 103, 213–228 (2015). https://doi.org/10.1007/s11192-014-1524-z

Received:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s11192-014-1524-z