Abstract

How does artificial intelligence (AI) impact audit quality and efficiency? We explore this question by leveraging a unique dataset of more than 310,000 detailed individual resumes for the 36 largest audit firms to identify audit firms’ employment of AI workers. We provide a first look into the AI workforce within the auditing sector. AI workers tend to be male and relatively young and hold mostly but not exclusively technical degrees. Importantly, AI is a centralized function within the firm, with workers concentrating in a handful of teams and geographic locations. Our results show that investing in AI helps improve audit quality, reduces fees, and ultimately displaces human auditors, although the effect on labor takes several years to materialize. Specifically, a one-standard-deviation change in recent AI investments is associated with a 5.0% reduction in the likelihood of an audit restatement, a 0.9% drop in audit fees, and a reduction in the number of accounting employees that reaches 3.6% after three years and 7.1% after four years. Our empirical analyses are supported by in-depth interviews with 17 audit partners representing the eight largest U.S. public accounting firms, which show that (1) AI is developed centrally; (2) AI is widely used in audit; and (3) the primary goal for using AI in audit is improved quality, followed by efficiency.

1 Introduction

How does artificial intelligence (AI) affect firms’ product quality and efficiency? AI investments by firms in all sectors have skyrocketed in recent years (Bughin et al. 2017; Furman and Seamans 2019) and have been associated with increased firm sales (Babina et al. 2020) and market value (Rock 2020). However, the evidence on whether AI can help make firms more productive remains mixed (see Footnote 1). Providing compelling empirical evidence of AI’s impact on firms’ product quality and efficiency is challenging for two reasons. First, there is a dearth of firm-level data necessary to quantify AI adoption in individual firms (Raj and Seamans 2018). Second, occupations differ in the extent to which they can be substituted or complemented by AI algorithms, indicating that AI’s effects may concentrate in specific industries, with muted or null effects overall. We circumvent these challenges by bringing together two key elements: (1) a large proprietary dataset of employee resumes, which allows us to capture actual employment of AI workers by individual firms; and (2) a focus on the auditing sector, which is especially well-suited for studying potential effects of artificial intelligence. AI algorithms help recognize patterns and make predictions from large amounts of data (tasks that auditors heavily rely on), placing auditors among the occupations that are most exposed to new technologies such as AI (Frey and Osborne 2017). Focusing on the audit sector, our paper is the first to demonstrate the gains from AI investments for firms’ product quality and efficiency.

To measure firms’ investments in AI, we adopt the novel measure of AI human capital proposed by Babina et al. (2020). Their approach empirically determines the most relevant terms (e.g., machine learning, deep learning, TensorFlow, neural networks) in AI jobs using job postings data and then locates employees with these terms in the comprehensive resume data, which come from Cognism Inc. and cover the broad cross-section of both U.S. and foreign firms. Importantly, this measure does not rely on any mandated reporting (e.g., R&D expenditures) and as such is not limited to public firms. The novel measure enables us to assess the impact of AI in a comprehensive empirical exploration. Our sample spans 2010–2019, covers the 36 largest U.S. public accounting firms with at least 100 employees, and analyzes more than 310,000 employee profiles.
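As a rough illustration of a keyword-based measure of this kind, the following Python sketch flags resumes that mention AI-related terms and computes the share of AI workers per firm. The term list reuses the examples quoted above; the record layout (the `firm` and `text` keys), the function names, and the simple substring rule are assumptions made for illustration. This is not the procedure of Babina et al. (2020), which determines the relevant terms empirically from job postings data.

```python
import re
from collections import defaultdict

# Example terms taken from the text; the actual term list is derived empirically.
AI_TERMS = ["machine learning", "deep learning", "tensorflow", "neural network"]
AI_PATTERN = re.compile("|".join(re.escape(t) for t in AI_TERMS), re.IGNORECASE)


def is_ai_worker(resume_text: str) -> bool:
    """Return True if the resume text mentions any AI-related term."""
    return AI_PATTERN.search(resume_text) is not None


def ai_share_by_firm(resumes):
    """Compute the fraction of each firm's employees flagged as AI workers.

    `resumes` is an iterable of dicts with hypothetical keys 'firm' and 'text'.
    """
    total = defaultdict(int)
    ai = defaultdict(int)
    for record in resumes:
        total[record["firm"]] += 1
        if is_ai_worker(record["text"]):
            ai[record["firm"]] += 1
    return {firm: ai[firm] / total[firm] for firm in total}


# Made-up records, for illustration only.
sample = [
    {"firm": "Firm A", "text": "Built deep learning models with TensorFlow"},
    {"firm": "Firm A", "text": "Senior audit associate, revenue testing"},
    {"firm": "Firm B", "text": "Tax manager"},
]
shares = ai_share_by_firm(sample)
```

In this toy input, one of the two Firm A resumes is flagged, so its AI share is one half, while Firm B has no flagged resumes.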

To guide our empirical tests, we conduct in-depth semi-structured interviews with 17 audit partners representing the largest eight audit firms (nine partners from Big 4 firms and eight partners from non-Big 4 firms). These interviews reveal that (1) AI is widely used in audit; (2) adoption of AI in the audit sector is highly centralized and top-down; (3) the main focus of AI is enhanced audit quality, which is achieved through improved anomaly and fraud detection, risk assessment, and the ability to refocus human labor on more advanced and high-risk areas; (4) while auditors’ AI investments are instrumental for audit quality, clients’ AI investments play a much smaller role; (5) AI adoption in recent years has been accompanied by noticeable shifts in labor demand and composition, such as eliminating lower-level tasks; and (6) the main barrier to widespread adoption of AI is onboarding and training skilled human capital.

Based on these insights, we focus our empirical analysis on the role of AI in improving audit quality. We document that audit firms investing in AI are able to measurably lower the incidence of restatements, material restatements, restatements related to accruals and revenue recognition, and restatement-related SEC investigations. Specifically, a one-standard-deviation increase in the share of a firm’s AI workers over the course of the prior three years translates into a 5.0% reduction in the likelihood of a restatement, a 1.4% reduction in the likelihood of a material restatement, a 1.9% reduction in the likelihood of a restatement related to accruals and revenue recognition, and a 0.3% reduction in the likelihood of a restatement-related SEC investigation (see Footnotes 2 and 3). Consistent with the rapid growth in the use of AI technologies in recent years, when slicing our sample into earlier (2010–2013) and later (2014–2017) time periods, we observe substantially larger effects of AI on audit quality in the later period.

We document two additional important findings on AI and audit quality. First, we test the insight, from our interviews with audit partners, that the main driver of audit quality is auditors’ use of AI, not their clients’ use of AI. We take advantage of the extensive coverage of the Cognism data to measure not only auditors’ AI investments but also their clients’ AI investments. The reduction in restatements comes entirely from auditors’ AI, consistent with the findings in Austin et al. (2021). Second, we leverage the detailed location data in the employee resumes to test whether it is firm-level (national) AI investments or office-level (local) AI investments that trigger audit quality improvements. Reflecting the centralized function of AI employees, the results become substantially weaker when the hiring of AI workers is measured at the office level rather than at the firm level.

The resume data also allow us to explore several additional important factors. First, since our data provide extensive coverage for the 36 largest audit firms, we can show that the effect of AI is present and consistent in both Big 4 and non-Big 4 firms. Second, we use the approach developed by Fedyk and Hodson (2020) to measure firms’ investments in general technical skills such as software engineering and data analysis and show that our results are driven specifically by AI and not by other technologies. Third, we conduct several cross-sectional tests to show that our results are stronger in subsamples where we expect AI to have greater effects. Specifically, we find stronger results for audits of older firms (which are likely to have more extensive operations and larger accumulated datasets), on auditors’ new clients (consistent with insights from our interviews with audit partners and with the evidence provided by Eilifsen et al. (2020)), and in the retail industry (which many of our interviewees highlighted as the most suitable industry for AI tools).

In the final part of the paper, we provide preliminary evidence that AI not only improves product quality but also enables audit firms to deliver the product more efficiently—a novel result for the audit industry that has not been shown in other sectors. Looking at fees, we show that a one-standard-deviation increase in the share of AI workers over the previous three years predicts a reduction of 0.9%, 1.5%, and 2.1% in audit fees over the following one, two, and three years, respectively. At the same time, we document that firms investing more in AI are able to cut down their labor force, although this organizational change takes several years to implement. A one-standard-deviation increase in the share of AI workers over the past three years translates into an economically sizable decrease of 3.6% in the total number of accounting employees at the firm three years later and an even greater drop of 7.1% four years out. The reduction in the labor force suggests that audit firms’ ongoing costs of conducting audits decrease with investment in AI, and we see evidence of this in fees earned per employee, which increase with AI adoption.

The result on the displacement of auditors when firms invest in AI is especially noteworthy given the ongoing discussion of how technological advances such as AI might affect the labor market and the limited evidence on actual labor displacement to date. Accounting and auditing is precisely the type of industry where the effects of AI on the labor force are likely to be most visible, even when previous technical innovations (including robotics) have been less disruptive (see Footnote 4). AI’s focus on tasks such as decision-making, prediction, and anomaly identification suggests a potential for the displacement of white-collar jobs (in contrast to the unskilled manual labor displaced by previous technologies). Focusing on the audit sector, we provide early evidence that this displacement is occurring at the firm level. This suggests that audit firms’ increased reliance on technology may be a potential mechanism for the trends of increased departures and turnover among auditors (Knechel et al. 2021).

We perform a deeper dive into the type of workers displaced by AI. Specifically, we classify all employees in the sample into junior level (e.g., analysts and associates), mid-tier (e.g., managers and team leads), and senior level (e.g., principals, directors, and partners) based on their job titles. We do not find any significant displacement effects at the mid-tier and senior levels. Instead, the reduction in accounting employees concentrates at the junior level. For a one-standard-deviation increase in the share of AI workers over the previous three years, firms reduce their junior workforce by an average of 5.7% three years later and by an average of 11.8% after four years. This is consistent with firms’ investments in AI enabling them to automate human analysis tasks at the expense of inexperienced workers, while employees with more experience and accumulated institutional capital are less affected.

Taken together, our results show that audit firms are able to leverage AI to improve their processes, providing higher audit quality while operating with fewer employees and lower audit fees. This offers novel insight to the recent literature on the adoption and economic impact of AI (Alderucci et al. 2020; Rock 2020; Babina et al. 2020) by providing the first systematic evidence that AI helps improve product quality.

Our results also speak to the literature on audit quality, which shows that firm-, office-, and partner-level expertise and characteristics influence audit quality (Francis and Yu 2009; Choi et al. 2010; Francis et al. 2013; Bills et al. 2016; Singer and Zhang 2017; Beck et al. 2018). We build on this foundation by considering a technical characteristic that has the potential to be especially disruptive: the adoption of AI technologies. In doing so, we contribute a novel perspective to the literature on the effects of new technologies on audit. A number of papers have pointed to the ways in which data analytics and machine learning can improve prediction in audit and fraud detection processes (Cao et al. 2015; Brown et al. 2020; Perols et al. 2017; Kogan et al. 2014; Yoon et al. 2015). However, despite the increasing interest in AI applications from academics and practitioners alike, large-scale empirical evidence on the effects of AI adoption in the auditing space remains scarce. Two exceptions are Law and Shen (2021) and Ham et al. (2021), who focus on office-level AI job postings to characterize the evolution of audit firms’ demand for AI skills. In contrast, we emphasize actual hiring of AI employees. By analyzing real employees’ profiles, roles, locations, skills, and job histories, ours is the first study to provide an overview of what the AI workforce in audit firms actually looks like: its composition, organizational structure, and applications within the firm (see Footnote 5).

The remainder of the paper proceeds as follows. Section 2 discusses the related literature, summarizes interviews with 17 audit partners, and draws out testable empirical predictions. Section 3 introduces our comprehensive resume dataset, discusses the construction of the firm-level measure of AI human capital, and presents a detailed background on AI workers in the audit sector, their roles, and their locations within firms. Section 4 discusses empirical results concerning audit quality. Section 5 contains the evidence on efficiency and labor displacement. Section 6 concludes.

2 Related literature, interviews with audit partners, and empirical predictions

2.1 Related literature: Technology in audit

Technology use in audit falls mostly under the umbrella of data analytics, which entails “the science and art of discovering and analyzing patterns, identifying anomalies, and extracting other useful information in data underlying or related to the subject matter of an audit through analysis, modeling, and visualization for the purpose of planning or performing the audit” (AICPA 2017). The benefits of using audit data analytics can include improved understanding of an entity’s operations and associated risks, increased potential for detecting fraud and misstatements, and improved communications with those charged with governance of audited entities. Prior research has recognized the potential for data analytics to increase audit effectiveness (Appelbaum et al. 2017). Firms employ multiple levels and various types of data analytics tools in audits (Deloitte 2016; EY 2017; KPMG 2017, 2018). Regulators recognize the increasing use of technology in audit, seek input on the impact of these trends, and underscore the need for additional standards regulating technology use (PCAOB 2017; PCAOB 2019; IAASB 2018).

Prior research has concentrated on a conceptual understanding of the trends, potential applications, and challenges of the use of data analytics (e.g., Alles 2015; Brown-Liburd et al. 2015; Cao et al. 2015; Gepp et al. 2018; Cooper et al. 2019). For example, Brown-Liburd et al. (2015) acknowledge that Big Data provides an opportunity to use powerful analytical tools and that it is important for audit firms to use these new tools to enhance the audit process. Gray and Debreceny (2014) discuss the increasing value of data mining as a financial statement auditing tool and a de rigueur part of e-discovery in lawsuits. Rose et al. (2017), in an experimental setting, demonstrate that Big Data visualizations affect an auditor’s evaluation of evidence and professional judgments. Al-Hashedi and Magalingam (2021) provide a review of the extensive research related to detecting financial fraud (bank fraud, insurance fraud, financial statement fraud, and cryptocurrency fraud) from 2009 to 2019, highlighting that 34 data mining techniques were used to identify fraud in various financial applications.

Preliminary evidence on the use of data analytics tools supports the idea of an overall positive attitude towards the tools’ implementation but leaves open questions of the scope and efficiency of their use. Salijeni et al. (2019) provide evidence of a growing reliance on data analytics tools in the audit process while voicing some concerns over whether these changes actually have a substantial effect on the nature of audit and audit quality. The U.K. Financial Reporting Council (FRC 2017) reviews the use of audit data analytics (ADA) at the six largest U.K. audit firms in 2015. The review finds that all firms were investing heavily in ADA capability (hardware, software, or skills) and that all cited audit quality as a main driver of ADA implementation. Similarly, CPA Canada (2017) surveys 394 auditors from large-, mid-, and small-sized firms regarding the use of ADA and finds ADA use in all major phases of the audit. Eilifsen et al. (2020) interview international public accounting firms in Norway and find overall positive attitudes towards ADA usefulness while suggesting that the use of more advanced ADA is relatively limited.

More recent research collects additional evidence on how various data analytics tools are utilized by auditors and perceived by their clients and standard-setters. Walker and Brown-Liburd (2019) interview audit partners and find that (1) auditors are influenced by competition, clients/management, and regulatory bodies to incorporate data analytics; (2) auditors are starting to use more complex tools; and (3) training is a significant factor in making audit data analytics an institutionalized activity. Focusing on interactions between the main stakeholders, Austin et al. (2021) conduct interviews with matched dyads of company managers and their respective audit partners, as well as regulators and data analysts, to examine the dissemination of data analytics through financial reporting and auditing. Their main findings highlight that (1) auditors are “pushing faster” on incorporating data analytics to stay competitive; (2) auditors’ clients are supportive of the data analytics applications and improve their data practices to provide high-quality data; and (3) there are currently no clear rules from the standard-setters on how data analytics should be used in financial reporting, and normative rules (with private feedback from regulators) are applied instead of formal rules. Christ et al. (2021) provide evidence of superior audit quality driven by technology-enabled inventory audits.

2.2 Related literature: Artificial intelligence (AI)

The Organization for Economic Co-operation and Development (OECD) defines Artificial Intelligence (AI) as a “machine-based system that can, for a given set of human-defined objectives, make predictions, recommendations or decisions influencing real or virtual environments.” Correspondingly, we define AI in audit as machine-based methods used to represent, structure, and model data (including large quantities of unstructured data), leading to more accurate predictions and inference. What differentiates AI from previous data analytics techniques is that AI is able to model highly non-linear relationships in the data and process both large volumes of data and unstructured data such as text and images. AI algorithms can complement other recent technologies, which provide data that can be analyzed by AI (e.g., images from drones) or specific applications for AI algorithms (e.g., robotic process automation).

A nascent literature has been exploring the adoption and economic impact of AI (Alderucci et al. 2020; Rock 2020; Babina et al. 2020). To date, there is limited evidence of AI replacing human labor, despite the ongoing attention to this possibility in both policy discussions and the theoretical literature (e.g., Agrawal et al. 2019). For example, Acemoglu et al. (2022) document that AI is associated with some job replacement at individual establishments but not at the aggregate occupation or industry level, suggesting that AI has no discernible aggregate effects on labor to date. Several papers document that AI helps firms grow faster (e.g., Rock 2020; Babina et al. 2020). The most relevant paper to ours in this space is Babina et al. (2020), which introduces the measure of AI investments based on employee profiles. Babina et al. (2020) look at U.S. public firms in all sectors and document that AI increases firm growth by boosting product innovation. Although we adopt their measure, our work is substantially different, because our focus on a particular sector (audit) allows us to go much more in depth on specific applications of AI and to consider unique economic implications such as product quality.

The audit sector presents a unique setting to study the impact of AI, with predictions that differ from other economic sectors for two reasons. First, the audit process features a single product with rigid rules and standards, offering limited scope for applying AI towards rapid growth through inventing new products, unlike in the industries studied by Babina et al. (2020). Instead, the audit process’s clearly defined objectives and reliance on accurate predictions, especially anomaly detection, provide the scope to increase both the quality (by reducing the error rate) and the efficiency (by automating tasks such as fraud detection) of the auditing process. Second, the auditing sector offers a unique opportunity to study the impact of AI on human labor in firms that focus on the tasks that are most exposed to potential disruption from AI (Frey and Osborne 2017).

Due to the difficulty in obtaining data on individual firms’ adoption and use of technology, most of the empirical research on technology in audit examines survey or experimental evidence. Our paper contributes the first large-scale study of audit firms’ use of technology and its effect on audit quality, using detailed information from employee resumes. We focus on artificial intelligence as the most sophisticated available technology for data analysis that economists have touted as a general-purpose technology with the potential to transform firm operations and high-skilled jobs (Aghion et al. 2019; Mihet and Philippon 2019; Acemoglu et al. 2022). Additionally, our data allow us to measure other human-capital-intensive technological investments, including more basic (non-AI) data analytics and software engineering. This enables us to compare and contrast these technologies with AI. In contemporaneous work, Law and Shen (2021) and Ham et al. (2021) offer a complementary investigation to ours, using job postings data to look at the evolution of audit firms’ demand for AI workers in recent years. By contrast, our resume data allow us to capture how AI is already being used in the audit process.

2.3 AI in audit: Insights from interviews with audit partners and empirical predictions

To better understand the scope, timing, potential benefits, and challenges of AI adoption by U.S. public accounting firms, we conducted 17 semi-structured interviews with audit partners and national technology leaders from the eight largest U.S. public accounting firms (see Footnote 6). Big 4 firms were represented by nine partners, including three national technology leaders; mid-tier audit firms (Grant Thornton, BDO, RSM/McGladrey, and Moss Adams) were represented by eight partners, including two national technology leaders (e.g., Chief Transformation Officer). The interviewees had different firm tenures and years of experience (from eight to 35 years). Interviews took place over the three-month period from November 2021 to January 2022. Each interview lasted from 29 to 63 minutes and was recorded conditional on formal approval from the interviewee, in addition to notes taken by interviewers. The interviewees were assured of their anonymity. We developed the semi-structured interview script in line with best practices in the literature (Austin et al. 2021). Our interview questions centered on the use of technology in audit, with a specific focus on the use of AI.

The first set of questions was related to the extent to which different types of technologies are used in audit. Responses reveal that all firms use technologies like robotic process automation (mostly as a tool for internal operations in administrative tasks, though the technology is “becoming old-school” and declining in use in recent years), data analytics, and AI (all but one interviewee from a non-Big 4 firm report extensive use of AI in audit practices in their firms). While a few of our interviewees indicated that drones could be useful in specific industries like timber or agriculture, only one interviewee from a Big 4 firm mentioned drones as being actually used in audits (see Footnote 7). Overall, we received a strong message that audit firms (Big 4 and non-Big 4) extensively use technology, with AI being one of the most promising and effective instruments to improve quality and efficiency. As one of the Big 4 partners summarizes it: “It’s hard to argue against the value of AI, especially for anomaly detection and fraud prevention. We also feel pressure from our clients to use cutting-edge technologies like AI. The focus is all on AI. … We are slowly becoming a tech firm.”

In terms of specific AI applications, the main areas where AI is used in audits are anomaly detection and fraud prevention (by using machine learning for pattern analysis), revenue analysis (e.g., order/invoice matching, mapping receivables with cash receipts), financial risk assessment, bank secrecy and anti-money laundering, optical character recognition to review contracts and leases, and analysis of large public databases (big data) for benchmarking. When asked about specific industries, interviewees consistently estimate that AI has the greatest impact on industries with many small repeat transactions, such as retail.

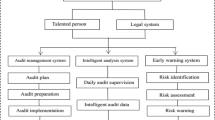

The second set of questions was designed to provide an in-depth understanding of the development and implementation of AI in audit practices. According to our interviewees, AI tools used in audit are predominately developed in centralized hubs and implemented as top-down standard procedures. We also find that Big 4 firms use a combination of in-house algorithm development and external software purchases. By contrast, smaller firms rely more on external solutions and often coordinate and co-invest by partnering with AICPA. In terms of the size of the AI-labor force, one technology leader summarized it as follows: “There is no need for hundreds of engineers; a few people who understand the business can direct the process and teach and educate others.”

Our interviews yield interesting insights about how audit firms incentivize and educate their personnel for wide adoption of new technologies. Firms have up to 30–40 AI-based tools that are available for auditors’ practical use (some tools are required, some are optional, and some are only relevant to specific engagements), and partners are expected to use these tools on at least 2–3 clients; expectations are even stronger for new and large clients. These centralized incentives are “baked into the performance matrices,” and AI is accounted for in performance evaluations like ROI, because it “creates revenues, reduces costs, increases capacity.” Trained human capital is recognized as the main barrier to AI implementation, and significant investments are made to overcome the challenge by investing in employee upskilling: “Developing new tools is relatively easy, it’s scaling and deploying that takes the most effort. A few years ago, we strategically decided to digitally upskill all 60K employees, from first years to partners.” The overall perception is that the entire audit industry is changing, and “whoever doesn’t invest in new technologies will get eaten up.” In terms of the timeline of AI adoption, most of our conversations reveal that investment in AI began around 2010–2012, with some indication that it may have “plateaued in the recent 1–2 years” (see Footnote 8).

Partners report that client demand plays an important role in AI implementation. Clients either expect or request AI usage by auditors, and auditors “have to follow suit as clients do expect [them] to do the work in a specific fashion: expectations on what the auditor brings to the table get higher and higher.” At the same time, almost every partner indicates that clients expect audit fees to decrease as auditors use more advanced technologies. Finally, most clients rely on auditors’ AI efforts in the audit space instead of pursuing their own: “Clients almost never invest in AI for audit purposes; they don’t understand audit well enough to make such moves. Auditors do.” The interviewees also note that regulators are generally receptive to the new technologies, with only limited concerns related to regulation: “A couple of years ago PCAOB acknowledged the emergence of new technologies and started to provide more guidance, and there is additional demand for clear guidance given how many firms are now using these technologies.”

The third set of questions was focused on the potential benefits of AI in terms of audit quality and efficiency. All of our interviewees who report the usage of AI in audit note that AI has a positive effect on audit quality: “Quality is a must. Our main goal is improved quality, and if we are lucky, we are more efficient.” In particular, AI enables the implementation of new audit models, directs auditors to the areas that are likely to be most problematic, and frees human auditors from routine jobs, allowing them to concentrate on more important tasks. Most interviewees agree that AI can also lead to improved efficiency from reduced manual work (e.g., analyzing data/testing for accuracy in a fraction of the time it used to take), faster data extraction, and centralized models in place of custom solutions. However, audit partners point out that efficiency effects might take time to realize: “Efficiency takes a bit of time—not one year, but years later.” In terms of the labor effects, all interviewees agree that “labor effects concentrate on removing lower-level tasks and asking people to take on more complex tasks and/or handle larger volume of tasks.”

To summarize, our interviews reveal that AI is considered a key technology for audit quality and efficiency. The highest potential impact of AI is anticipated in areas such as fraud prevention, risk assessment, money-laundering detection, bank secrecy, and cybersecurity. Importantly, AI algorithms are able to process a variety of data formats, supporting tasks such as image recognition, parsing leases and contracts, and examining firms’ networks (e.g., supplier networks or ownership structures) for potential signs of money laundering (see Footnote 9). Based on the prior literature and our interviews, we make the following predictions related to the effects of AI on audit:

- Prediction 1: AI improves audit quality.
- Prediction 2: AI improves audit efficiency, lowering the cost of the audit product.
- Prediction 3: AI reduces the audit workforce, especially the entry-level employees.

3 Sample, data, and measures

Our approach to measuring AI investments leverages detailed resume data to identify actual AI talent at each audit firm at each point in time. Our data are unique in their ability to capture technology adoption in audit firms comprehensively, complementing previous mostly survey-based evidence (Salijeni et al. 2019; Christ et al. 2021; Krieger et al. 2021). We begin by describing the data and then detail the construction of the AI measure.

3.1 Data

The dataset of individual employee resumes comes from Cognism, a client relationship management company that combines and curates individual resumes from third party providers, partner organizations, and online profiles. The dataset is maintained in compliance with the latest EU General Data Protection Regulation (GDPR), the California Consumer Privacy Act (CCPA), and a number of other data protection policies. The data contain 3.4 billion work experiences across 535 million individuals, spanning more than 30 years and over 22 million organizations globally, including private firms, public firms, small and medium-sized enterprises, family-run businesses, non-profits, governmental entities, universities, and military organizations. For each individual in our sample, we have the following general information: a unique identifier, city and country level location, an approximate age derived from the individual’s educational record, gender classified based on the first name, social media linkages, and a short bio sketch (where provided). For each employment record listed by the individual, we see the start and end dates, the job title, the company name, and the job description (where provided). Similarly, each education record includes start and end dates, the name of the institution, the degree earned, and the major. In addition, individuals may volunteer self-identified skills and list their patents, awards, publications, and similar attainments.

The data are enriched with state-of-the-art machine learning techniques to identify employees’ departments and seniority. Over 20,000 individual job titles are classified manually based on markers of seniority (e.g., “Partner”) and department (e.g., “Assurance”). The remaining job titles are then classified into departments using a probabilistic language model and into seniority levels using an artificial neural network. For employees of accounting firms, where the organizational structure is relatively streamlined, we assess the model’s output by manually reviewing an additional sample of over 10,000 positions and correcting all discrepancies for job titles with more than one associated employee. This procedure confirms that Cognism’s original model covering all firms has a very high accuracy rate (over 95% for seniority and 93% for departments).

In order to observe the auditor-client network and measure outcome variables such as restatements and fees, we merge the Cognism data to Audit Analytics. This process is not straightforward, because the resume data are not standardized, with employees potentially listing their company names in very different ways (e.g., “PricewaterhouseCoopers” vs. “PwC”). For each firm in Audit Analytics, we use textual analysis to identify different references to the same firm name in the resume data.Footnote 10 We restrict our final sample to all firms in Audit Analytics that are matched to at least 100 employees in the Cognism resume data and at least one employee identified as an AI worker over the sample period (2010–2019).Footnote 11 This procedure results in a sample of 36 unique firms, covering more than 310,000 employees’ profiles. Our sample includes the Big 4 firms (PwC, Deloitte, KPMG, and Ernst & Young) and 32 additional firms (e.g., Grant Thornton, BDO USA, RSM US, McGladrey, Moss Adams, CohnReznick, Baker Tilly, Crowe Horwath).
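
The name-matching step can be illustrated with a minimal sketch. It assumes a simple normalize-then-look-up approach; the alias table, the suffix list, and the function names are hypothetical and are not the textual analysis actually used for the merge.

```python
import re

# Hypothetical alias table (illustrative only): canonical firm -> known spellings.
ALIASES = {
    "PwC": ["pwc", "pricewaterhousecoopers", "pricewaterhouse coopers"],
    "KPMG": ["kpmg"],
}

# Assumed list of legal/regional suffixes to ignore when comparing names.
_SUFFIXES = re.compile(r"\b(llp|llc|inc|us|usa)\b")


def normalize(name: str) -> str:
    """Lowercase, replace punctuation with spaces, drop suffixes, collapse spaces."""
    cleaned = re.sub(r"[^a-z0-9 ]", " ", name.lower())
    cleaned = _SUFFIXES.sub(" ", cleaned)
    return " ".join(cleaned.split())


# Reverse index from normalized spelling to canonical firm name.
_LOOKUP = {normalize(alias): canon
           for canon, names in ALIASES.items()
           for alias in names}


def match_firm(raw_name: str):
    """Return the canonical firm name for a resume entry, or None if unmatched."""
    return _LOOKUP.get(normalize(raw_name))
```

In this sketch, unmatched names return `None` rather than a best guess, so that ambiguous entries can be reviewed separately.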

Table 1 Panel A provides descriptive statistics of the sample. On average, our comprehensive data cover 61,564 U.S.-based employees per year for Deloitte, 49,424 for PwC, 42,639 for EY, and 31,498 for KPMG. Our sample contains 15 non-Big 4 firms with over 1,000 employees (the two largest non-Big 4 firms are RSM and Grant Thornton with 11,784 and 8,138 employees per year, respectively). Table 1 Panel B presents the descriptive statistics by year from 2010 to 2019. As can be seen from this panel, the total number of people employed in the auditing industry more than doubled over the last decade, from 151,352 employees in 2010 to 310,422 employees in 2019. To validate these numbers, we compare employee counts in the Cognism data against the firms’ official U.S. employment numbers, as reported in Accounting Today. We find that Cognism covers approximately 86% of public accounting employees, which provides excellent coverage and adds external validity to our analyses.

3.2 Measure of AI investments

Given AI’s heavy reliance on human rather than physical capital, we measure AI investment based on firms’ employees.Footnote 12 Babina et al. (2020) propose and validate a procedure that empirically determines the most relevant employee skills for the implementation of AI by U.S. firms. We leverage this list of relevant terms (e.g., “machine learning,” “deep learning,” “TensorFlow,” “neural networks,” etc.) and the detailed resume data to identify individual workers skilled in the area of Artificial Intelligence. The classification is performed at the level of each individual job record of each worker. For a particular job record, we classify the individual as a direct AI employee if at least one of the following conditions holds: (1) the job (role and description) is AI-related, (2) the individual produced any AI-related patents or publications during the job, or (3) the individual received any AI-related awards during the job.
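
The three classification conditions can be sketched as follows. This is a simplified illustration that assumes plain substring matching against a small subset of the terms quoted above; the record schema and function names are hypothetical, and the validated term list and matching procedure of Babina et al. (2020) are not reproduced here.

```python
from dataclasses import dataclass, field

# Illustrative subset of AI terms quoted in the text (not the full validated list).
AI_TERMS = ("machine learning", "deep learning", "tensorflow", "neural network")


def mentions_ai(text: str) -> bool:
    """True if any illustrative AI term appears in the text (case-insensitive)."""
    lowered = text.lower()
    return any(term in lowered for term in AI_TERMS)


@dataclass
class JobRecord:
    """Hypothetical schema for one job record on a resume."""
    title: str
    description: str = ""
    patents_and_publications: list = field(default_factory=list)
    awards: list = field(default_factory=list)


def is_ai_job(job: JobRecord) -> bool:
    """Apply conditions (1)-(3): AI-related role, patents/publications, or awards."""
    if mentions_ai(job.title) or mentions_ai(job.description):
        return True  # condition (1)
    if any(mentions_ai(item) for item in job.patents_and_publications):
        return True  # condition (2)
    return any(mentions_ai(item) for item in job.awards)  # condition (3)
```

Because the conditions are joined by "at least one," the function returns as soon as any single condition is met.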

We aggregate the individual AI and non-AI jobs up to the firm level by computing, for each firm in each year, the percentage of employees who are classified as AI-related. Figure 1 depicts the percentage of AI employees relative to all employees for the six largest audit firms in terms of the number of completed audits per year: Deloitte, PwC, EY, KPMG, Grant Thornton, and BDO USA. The percentage of AI employees has steadily increased for all six firms over the last decade, with an especially pronounced increase for Big 4 firms. This finding is generalized in Panel B of Table 1, which reports descriptive statistics for all audit firms in our sample: the percentage of AI employees steadily increased from 0.08% in 2010 to 0.39% (0.37%) in 2018 (2019). While the share of AI workers is low in absolute terms, in terms of the technological and innovative nature of their work, AI workers are most similar to inventors, who represent around 0.13%–0.24% of the U.S. workforce but have a disproportionate impact on firm operations (Babina et al. 2021). Interestingly, when compared to different industries’ percentages of AI employees reported in Babina et al. (2020), the percentage of AI employees in audit firms ranks quite high—only slightly lower than the percentage of AI employees in the information industry and higher than the percentage of AI employees in all other industries (based on NAICS-2 digit industry codes). In Appendix B, we provide a sample of actual job descriptions of identified AI workers to further demonstrate that our measure of AI investments comprehensively captures relevant AI activities in audit firms.
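
As a rough illustration of the aggregation, the sketch below computes the firm-year share of AI employees and its change over a three-year window. It assumes one record per employee-year and that the change is a simple difference in percentage shares; the input layout and function names are hypothetical.

```python
from collections import defaultdict


def ai_share_by_firm_year(records):
    """records: iterable of (firm, year, is_ai) tuples, one per employee-year.

    Returns {(firm, year): percentage of employees classified as AI-related}.
    """
    totals = defaultdict(int)
    ai_counts = defaultdict(int)
    for firm, year, is_ai in records:
        totals[(firm, year)] += 1
        ai_counts[(firm, year)] += int(is_ai)
    return {key: 100.0 * ai_counts[key] / totals[key] for key in totals}


def delta_ai(shares, firm, year, window=3):
    """Change in the AI share between year - window and year (assumed: a simple
    difference in percentage shares). Returns None if either year is missing."""
    now = shares.get((firm, year))
    before = shares.get((firm, year - window))
    if now is None or before is None:
        return None
    return now - before
```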

3.3 Descriptive statistics of AI workers

Table 2 reports summary statistics on individual AI employees across the 36 audit firms in our sample. AI workers tend to be predominantly (70%) male and relatively young (with a median age of 34 and an interquartile range of 28–41 years old). A small set (approximately 11%) of AI workers hold doctorate degrees (including PhD and JD). The incidence of MBA degrees is similar at 13%. The most common maximal educational attainments of AI workers are bachelor’s degrees (38% of the sample) and non-MBA master’s degrees (31% of the sample). In terms of disciplines of study, AI workers tend to be largely technical (e.g., 28% hold majors in engineering, 16% in computer science, 9% in mathematics, and 7% in information systems). A substantially smaller but still sizable portion of AI workers hold business-related degrees (e.g., 15% hold majors in finance, 14% in economics, and 9% in accounting). The skills most commonly reported by AI workers include data analysis (42% of all AI workers who self-report at least one specific skill), Python (37%), SQL (36%), machine learning (32%), the language R (30%), research (24%), and analytics (23%).

In terms of professional experience, AI workers in audit firms tend to grow within the audit industry rather than come from outside (e.g., from tech firms). Over 38% of AI workers in audit firms worked in the Professional, Scientific, and Technical Services industry (NAICS two-digit code 54, which houses accounting firms) at their immediately preceding job experience. The next most frequent prior industry is Administrative Services (NAICS 56) at 14%, followed by Finance (NAICS 52) at 11%, Education (NAICS 61) and Information (NAICS 51) at 9% each, and Manufacturing (NAICS 31, 32, and 33) at 5%.

In terms of functional organization, the descriptive statistics in Table 2 show that the majority of AI workers (58%) tend to be relatively junior within the firm. The remainder comprise 24% in middle management (i.e., managers and team leads) and 18% in senior roles (corresponding to principal or partner level positions). This mirrors the seniority distribution of all employees in audit firms (62% junior, 26% mid-level, and 12% senior), supporting the notion that AI is a function with a similar internal structure to other departments and functions.

The location data of AI workers confirm that AI is a centralized function within audit firms. Figure 2 shows the distribution of AI workers across the United States. There are two main hubs (with several hundred AI workers across the 36 firms in our sample) in New York and California, and smaller but noticeable hubs in Washington D.C., Illinois, and Texas. Together, these five locations account for more than half of all AI workers in audit firms. This is consistent with comments by audit partners in our interviews, who point out that employees involved in implementing new technology tend to work in centralized groups aimed specifically at developing technical tools.

Table 3 provides descriptive statistics of firm-level investments in AI, juxtaposed with key audit quality measures, audit fees, and characteristics of audit clientele for our sample during 2010–2019. The share of AI employees (AI %) and the change in the share of AI employees over the past three years (ΔAI (−3, 0) (%)) show considerable cross-sectional variation across audits. For example, the mean of ΔAI (−3, 0) (%) is 0.10 with a standard deviation of 0.07. This allows for a meaningful cross-sectional analysis of the impact of the change in the share of AI workers on audit quality and efficiency. In terms of audit quality, on average, approximately 14% of issuers experience future restatements (Restatement), and approximately 3% experience material future restatements disclosed in Form 8-K item 4.02 (Material Restatement). SEC involvement in the restatement process is an even rarer event, experienced by only 1% of issuers (SEC investigation). Average audit and non-audit fees are $2.63 million and $0.64 million, respectively. Overall, the descriptive statistics of our sample are similar to those reported in prior studies examining audit quality and efficiency (Aobdia 2019; Hoopes et al. 2018).

4 AI and audit quality

Our main research question, motivated by the insights from our interviews with audit partners regarding AI applications in audit, is whether AI improves audit quality.

4.1 AI and audit restatements: Main analysis

DeFond and Zhang (2014) define audit quality as “greater assurance that the financial statements faithfully reflect the firm’s underlying economics, conditioned on its financial reporting system and innate characteristics.” While there are multiple empirical proxies used in the literature to assess audit quality, financial restatements are considered to be one of the most robust and universally applicable indicators of low audit quality (Knechel et al. 2013; DeFond and Zhang 2014; Christensen et al. 2016; Aobdia 2019). In their recent study, Rajgopal et al. (2021) analyze specific accusations related to audit deficiencies detailed in 141 AAERs and 153 securities class action lawsuits over a period of almost 40 years (1978–2016) and conclude that restatements consistently predict the most cited audit deficiencies.Footnote 13,Footnote 14

We analyze the association between the probability of restatements (I(RSTi,t)) and changes in the total share of AI workers over the course of the prior three-year period (ΔAI(−3,0)i,t) using the following modelFootnote 15:
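
One way to write regression (1), as a sketch consistent with the variable definitions in this section, is given here. It assumes a linear index; the estimator (for example, a linear probability model or a logit) is not stated in this text, and the symbols $\beta$, $\gamma$, $\alpha$, and $\mathbf{X}_{i,t}$ (the vector of client-level controls) are assumed notation.

```latex
\[
I(\mathit{RST}_{i,t}) = \beta_0
  + \beta_1\,\Delta\mathit{AI}(-3,0)_{i,t}
  + \beta_2\,\mathit{Big4}_{i,t}
  + \gamma^{\top}\mathbf{X}_{i,t}
  + \alpha_{\mathit{ind}} + \alpha_{t} + \varepsilon_{i,t}
\]
```

Here $\alpha_{\mathit{ind}}$ and $\alpha_{t}$ stand for the issuer industry and year fixed effects.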

Following the prior literature (e.g., DeFond and Zhang 2014; Aobdia 2019), we control for whether the audit is issued by a Big 4 auditor (Big4) and for firm characteristics that can affect the likelihood of restatements, such as size measured as the natural logarithm of total assets (Size), market value of equity scaled by book value of equity (MB), operating cash flows scaled by total assets (CFO), cash flow volatility measured as the three-year standard deviation of CFO (CFO_volatility), one-year percentage growth in property, plant, and equipment (PPE_growth), one-year percentage growth in sales (Sales_growth), the ratio of sales to lagged total assets (Asset_turn), current ratio (Current_ratio), quick ratio (Quick_ratio), leverage (Leverage), foreign income (Foreign_income), short- and long-term investments (Investments), net income scaled by average total assets (ROA), lagged total accruals (Lag total accruals), an indicator variable equal to one if a firm issued new equity in the prior year (Issued_equity), an indicator variable equal to one if a firm issued new debt in the prior year (Issued_debt), an indicator variable equal to one if a firm issued new equity or debt in the subsequent year (Future_finance), number of business segments (Num_busseg), number of geographic segments (Num_geoseg), and an indicator variable equal to one if a firm operates in a highly litigious industry (Litigation).Footnote 16 Appendix A provides detailed variable definitions. Continuous control variables are winsorized at the 1st and 99th percentiles to reduce the impact of outliers. To facilitate the comparison and interpretation of the coefficient estimates, we standardize all continuous independent variables to have standard deviations equal to one. We also include issuer industry and year fixed effects based on two-digit industry codes and cluster standard errors at the issuer level. 
We estimate regression (1) over 2010–2017 to allow up to three years for mistakes to be discovered and reported in restatements, but our results remain qualitatively unchanged if we extend the period to 2019.
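
The two preprocessing steps applied to continuous variables can be sketched as follows. The percentile convention (nearest rank) and the use of the population standard deviation are assumptions, as is the choice to rescale without demeaning; the text states only that variables are winsorized at the 1st and 99th percentiles and scaled to unit standard deviation.

```python
import math
import statistics


def winsorize(values, lower_pct=1.0, upper_pct=99.0):
    """Clip values to the given empirical percentiles (nearest-rank convention,
    which is an assumption of this sketch)."""
    ordered = sorted(values)
    n = len(ordered)

    def percentile(pct):
        # Nearest-rank index, clamped to the valid range.
        idx = max(0, min(n - 1, math.ceil(pct * n / 100.0) - 1))
        return ordered[idx]

    lo, hi = percentile(lower_pct), percentile(upper_pct)
    return [min(max(v, lo), hi) for v in values]


def scale_to_unit_sd(values):
    """Divide by the standard deviation so the result has SD equal to one.
    Uses the population SD and does not demean (both are assumptions)."""
    sd = statistics.pstdev(values)
    return [v / sd for v in values]
```

With every continuous regressor scaled this way, each coefficient can be read as the change in the outcome associated with a one-standard-deviation change in that regressor.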

Table 4 presents the results for four measures of the restatement variable I(RSTi,t). Column 1 looks at I(Restatementi,t), an indicator variable equal to one if the financial statements for firm i for year t are restated. Column 2 considers I(Material restatementi,t), an indicator variable equal to one if firm i reports a material restatement for year t, which is disclosed in a Form 8-K item 4.02. Column 3 investigates I(Revenue and accrual restatementi,t), an indicator variable equal to one if firm i reports a restatement for year t related to either revenue recognition or accruals issues. Column 4 considers I(SEC investigationi,t), an indicator variable equal to one if there is SEC involvement in the restatement process; such involvement can take the form of either an SEC comment letter triggering the restatement or a formal or informal SEC inquiry into the circumstances surrounding the restatement.

Negative and statistically significant coefficients on ΔAI(−3,0)i,t in all specifications indicate that adverse audit outcomes decline with recent investments in AI by the corresponding audit firms. These findings are true after controlling for auditee-specific characteristics and auditees’ industry and year fixed effects. Importantly, year fixed effects remove the broader time trend in the probability of restatements and allow us to truly explore the effect of cross-sectional differences in AI investments among audit firms. The estimated effects are economically and statistically significant. For example, a one-standard-deviation increase in an audit firm’s share of AI employees over the course of the prior three years is associated with a 5% reduction in the likelihood of restatements, a 1.4% reduction in the likelihood of material restatements, a 1.9% reduction in the likelihood of restatements related to accrual and revenue recognition, and a 0.3% reduction in the likelihood of restatements related to SEC investigations.

4.2 AI and restatements: Additional analyses

We bolster our analysis of the effect of AI on restatements with several additional tests. First, we show that the effects strengthen over time, consistent with the growing emphasis on AI technologies in recent years. Second, we document that the positive effects of AI are present for both Big 4 and non-Big 4 firms. Third, we measure and control for audit firms’ investments in non-AI data analytics and software engineering to show that our results are indeed specific to AI.

Audit firms have been investing in AI since the early 2010s. For example, KPMG in Singapore has investigated and researched the use of AI and machine learning in forensic accounting since 2012, using NLP and unsupervised machine learning in anomaly detection and text clustering to identify fraudulent vendors (Goh et al. 2019). Deloitte filed a patent for “Fraud Detection Methods and Systems” in 2012, using cluster analysis and unsupervised machine learning to detect fraud. However, AI investments have skyrocketed in recent years, as evidenced by the growth in AI workers from 0.08% of the audit workforce in 2010 to 0.39% in 2018. As a result, we expect the effect of AI on audit quality to be stronger in the second half of our period. In Table 5 Panel A we estimate specification (1) separately for two subperiods: 2010–2013 and 2014–2017. As predicted, the results are stronger in the latter period for all outcome variables, with the difference significant when looking at all restatements. For example, a one-standard-deviation increase in AI investments reduces the incidence of restatements by 2.9% during 2010–2013 and by 4.9% during 2014–2017.

We next consider whether our results reflect universal effects of AI or are merely driven by a small subset of firms—specifically, Big 4 firms, which, due to their size and importance, house the bulk of AI employees. In Table 5 Panel B we estimate specification (1) separately for audits performed by Big 4 firms and non-Big 4 firms. Overall, the results are very consistent across the two subsamples. The reduction in restatements from AI is higher among Big 4 firms (with the difference statistically significant), but the reduction in material restatements is slightly higher among non-Big 4 firms (with the difference not significant).

Finally, we address the concern that our results are confounded by an important omitted variable: that firms’ AI investments may be correlated with more general contemporaneous technological investments. We draw on the methodology developed in Fedyk and Hodson (2020) for identifying generic technical skills from employee resumes. The approach leverages topic modeling to classify hundreds of thousands of self-reported skills from individual resumes into 44 concrete skillsets ranging from Legal to Product Management. Some of the skillsets reflect broad areas of technical focus, which are more generic than AI. We take the two general technical skillsets that are most likely to be confounds to AI: Software Engineering (which captures programming abilities and reflects skills such as Java, Linux, and C++) and Data Analysis (which captures generic data analysis methods that are less sophisticated than AI, reflecting skills in statistics, Microsoft Office, and SPSS). Figure 3 contextualizes recent growth in AI against growth in Software Engineering and Data Analysis, highlighting differences between AI and these more traditional technologies. The share of AI workers at audit firms increases fivefold from 2010 to 2018. There is also a more general trend towards technical workers at audit firms, as reflected by the threefold increase in employees with Software Engineering skills, but this trend is not as stark as the one for AI. The share of employees with general (old-school) skills in Data Analysis remains practically flat from 2010 to 2018.

We estimate the following specification analyzing the relationship between the likelihood of adverse restatement outcomes, I(RSTi,t), and changes in the share of Software Engineering workers and Data Analysis workers over the course of the prior three-year period (ΔSE(−3,0)i,t and ΔDA(−3,0)i,t, respectively), with and without accounting for the change in AI (ΔAI(−3,0)i,t):
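
A sketch of specification (2) that is consistent with the description above, assuming a linear index, is shown here. $\mathbf{X}_{i,t}$ collects the controls of regression (1) (including Big4), $\alpha_{\mathit{ind}}$ and $\alpha_{t}$ are industry and year fixed effects, and the coefficient symbols are assumed notation.

```latex
\[
I(\mathit{RST}_{i,t}) = \beta_0
  + \beta_1\,\Delta\mathit{SE}(-3,0)_{i,t}
  + \beta_2\,\Delta\mathit{DA}(-3,0)_{i,t}
  + \beta_3\,\Delta\mathit{AI}(-3,0)_{i,t}
  + \gamma^{\top}\mathbf{X}_{i,t}
  + \alpha_{\mathit{ind}} + \alpha_{t} + \varepsilon_{i,t}
\]
```

In the variant estimated without the change in AI, the $\beta_3$ term is omitted.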

The dependent variables are I(Restatementi,t), I(Material restatementi,t), I(Revenue and accrual restatementi,t), and I(SEC investigationi,t). The controls are the same as in Table 4. The odd columns of Table 5 Panel C estimate specification (2) without including ∆AI(−3, 0)i, t. We see that investments in generic Data Analysis are not associated with improvements in audit quality, consistent with the lack of growth in this domain shown in Fig. 3 and with prior literature that documents limited effects of data analytics on audit processes (e.g., Eilifsen et al., 2020). Software Engineering does predict a reduction in the likelihood of revenues and accruals-related restatements, but its effect is muted when we also include AI in the analysis. Importantly, the effect of AI remains almost unchanged when we add Data Analysis and Software Engineering, which confirms that our results are driven specifically by AI and not by more general technological investments by audit firms. For example, a one-standard-deviation increase in AI investments reduces the incidence of restatements by 5.4% and 5% with (Table 5 Panel C column 2) and without (Table 4 column 1) controls for growth in software engineering and data analysis skills, respectively.

4.3 AI and restatements: Firm-level versus office-level analysis

In our next analysis, we leverage the detailed location data in Cognism resumes to test whether the effect of AI adoption on audit quality is a national firm-wide phenomenon or an office-specific effect. We augment regression (1) with ΔAI_office_level(−3,0)i,t, the change in the office-level share of AI workforce over the course of the prior three-year period:
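
A sketch of the augmented regression, under the same linear-index assumption, is given here. $\mathbf{X}_{i,t}$ denotes the controls of regression (1) (including Big4), $\alpha_{\mathit{ind}}$ and $\alpha_{t}$ are industry and year fixed effects, and the coefficient symbols are assumed notation.

```latex
\[
I(\mathit{RST}_{i,t}) = \beta_0
  + \beta_1\,\Delta\mathit{AI\_office\_level}(-3,0)_{i,t}
  + \beta_2\,\Delta\mathit{AI}(-3,0)_{i,t}
  + \gamma^{\top}\mathbf{X}_{i,t}
  + \alpha_{\mathit{ind}} + \alpha_{t} + \varepsilon_{i,t}
\]
```

Dropping the $\beta_2$ term gives the variant that uses only the office-level measure.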

Table 6 reports the results for the dependent variable I(Restatementi,t) in columns 1 and 2, I(Material restatementi,t) in columns 3 and 4, I(Revenue and accrual restatementi,t) in columns 5 and 6, and I(SEC investigationi,t) in columns 7 and 8. In odd columns the main explanatory variable is the change in the office-level share of the AI workforce over the course of the prior three-year period, while in even columns we include the change in the share of AI workers both at the national firm-wide level (ΔAI(−3,0)i,t) and at the office level (ΔAI_office_level(−3,0)i,t). For all types of restatements and in all specifications, the coefficient estimates on office-level AI investments are significantly lower than those on firm-level AI investments. Furthermore, when we control for firm-wide adoption of AI, the coefficient estimates on ΔAI_office_level(−3,0)i,t become statistically insignificant in all but one specification. These results statistically confirm the centralized function of AI employees that was pointed out by the partners in the interviews and that is reflected in geographically concentrated AI hubs in Fig. 2.

4.4 Clients’ AI and restatements

Auditors’ investments in AI may correlate with the technological sophistication of their clients, raising the question of the real source of our documented effect: Is it auditors’ investments that drive improvements in audit quality, or do auditors’ AI investments mirror their clients’ investments, which in turn are the main driver of the results? We exploit the wide scope of our resume data to construct analogous measures of AI investments at each client of the auditors in our sample. Specifically, for each audit client in each year, we compute ∆AI _ client(−3, 0)i, t as the change in the share of AI workers at the client firm over the past three years. In Table 7, we report estimates from the following specification with and without the main measure of auditors’ AI investments (∆AI(−3, 0)i, t):
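
A sketch of this specification, assuming a linear index, is shown here. $\mathbf{X}_{i,t}$ denotes the controls of regression (1) (including Big4), $\alpha_{\mathit{ind}}$ and $\alpha_{t}$ are industry and year fixed effects, and the coefficient symbols are assumed notation.

```latex
\[
I(\mathit{RST}_{i,t}) = \beta_0
  + \beta_1\,\Delta\mathit{AI\_client}(-3,0)_{i,t}
  + \beta_2\,\Delta\mathit{AI}(-3,0)_{i,t}
  + \gamma^{\top}\mathbf{X}_{i,t}
  + \alpha_{\mathit{ind}} + \alpha_{t} + \varepsilon_{i,t}
\]
```

The variant without auditors' AI investments omits the $\beta_2$ term.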

As before, the dependent variable is I(Restatementi,t) in columns 1 and 2, I(Material restatementi,t) in columns 3 and 4, I(Revenue and accrual restatementi,t) in columns 5 and 6, and I(SEC investigationi,t) in columns 7 and 8. In the odd columns, we look only at the effect of clients’ AI, and in even columns we consider both clients’ AI and auditors’ AI jointly. While clients’ AI has a negligible effect on audit quality, the effect of auditors’ AI is virtually unchanged from that in Table 4, confirming that it is audit firms’ investments in AI—not AI investments by their clients—that help reduce restatements. These results are consistent with Austin et al. (2021), who conduct a survey on client-audit data analytics and observe that “typically, managers and auditors indicate that the auditor has more sophisticated data analytics practices than the client” and that clients contribute to the data analytics journey by supporting their auditors’ use of technology through, for example, providing higher quality data. The results are also in line with the insights from our interviews with audit partners, who clearly indicate that clients tend to rely on auditors’ AI.

4.5 AI and restatements: Cross-sectional heterogeneity

To provide further support for our empirical results, we conduct several additional analyses that show that our main effects are stronger in audits where one would ex ante expect AI to play a stronger role.

First, Table 8 Panel A estimates our main empirical specification (1) separately in each tercile of audits based on client firm age.Footnote 17 Successful implementation of AI algorithms relies on having extensive data, and older firms are likely to have accumulated more data through their ongoing economic activity. As a result, we expect AI to have a greater impact on audits of older firms. This is exactly what we see in the results. For example, a one-standard-deviation increase in the share of AI workers decreases the probability of a restatement by 4.3% among the youngest tercile of client firms and by 6.5% among the oldest tercile of client firms.

Second, Eilifsen et al. (2020) suggest that new technologies are more likely to be used on new clients. This is echoed in our interviews with audit partners, who stress how audit firms highlight new technologies such as AI to attract new clients. In Table 8 Panel B, we estimate the following regression:
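
A sketch of an interaction specification consistent with the description of Table 8 Panel B, assuming a linear index, is given here. $\mathbf{X}_{i,t}$ denotes the controls of regression (1) (including Big4), $\alpha_{\mathit{ind}}$ and $\alpha_{t}$ are industry and year fixed effects, and the coefficient symbols are assumed notation.

```latex
\[
I(\mathit{RST}_{i,t}) = \beta_0
  + \beta_1\,\Delta\mathit{AI}_{i,t}
  + \beta_2\,\mathit{NewClient}_{i,t}
  + \beta_3\,\Delta\mathit{AI}_{i,t}\times\mathit{NewClient}_{i,t}
  + \gamma^{\top}\mathbf{X}_{i,t}
  + \alpha_{\mathit{ind}} + \alpha_{t} + \varepsilon_{i,t}
\]
```

In this form, $\beta_3$ measures how the association between AI and restatements differs for new clients relative to established ones.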

The dependent variable is I(Restatementi,t) in columns 1 and 2, I(Material restatementi,t) in columns 3 and 4, I(Revenue and accrual restatementi,t) in columns 5 and 6, and I(SEC investigationi,t) in columns 7 and 8. NewClienti, t is a dummy variable equal to one if the audit is being performed on a client who has been audited by the current auditor for three years or less.Footnote 18 The positive coefficients on NewClienti, t show that, in general, new clients are more difficult to audit. Consistent with our predictions, this challenge is partially offset with AI: the coefficients on ∆AIi, t × NewClienti, t are negative for all restatement variables and significant for material restatements.

Finally, our interviews with audit practitioners suggest that the retail industry—with its high transaction volumes, rapidly growing e-commerce sector, and need for predictions regarding inventory impairment—is particularly suited to reap the benefits from AI. In Table 8 Panel C, we estimate the following regression:
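
A sketch of the corresponding interaction specification, assuming a linear index, is given here. $\mathbf{X}_{i,t}$ denotes the controls of regression (1) (including Big4), $\alpha_{\mathit{ind}}$ and $\alpha_{t}$ are industry and year fixed effects, and the coefficient symbols are assumed notation. Whether the main effect of the retail indicator is separately identified alongside the industry fixed effects is not stated in this text.

```latex
\[
I(\mathit{RST}_{i,t}) = \beta_0
  + \beta_1\,\Delta\mathit{AI}_{i,t}
  + \beta_2\,\mathit{Retail}_{i,t}
  + \beta_3\,\Delta\mathit{AI}_{i,t}\times\mathit{Retail}_{i,t}
  + \gamma^{\top}\mathbf{X}_{i,t}
  + \alpha_{\mathit{ind}} + \alpha_{t} + \varepsilon_{i,t}
\]
```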

The dependent variable is I(Restatementi,t) in column 1, I(Material restatementi,t) in column 2, I(Revenue and accrual restatementi,t) in column 3, and I(SEC investigationi,t) in column 4. Retaili, t is a dummy variable that equals one if a client belongs to the retail sector (two-digit SIC codes between 52 and 59) and zero otherwise. Consistent with our prediction that AI is especially beneficial for audit quality in retail, the coefficient on ∆AIi, t × Retaili, t is negative for all dependent variables and significant for revenue and accruals restatements.

To summarize, our cross-sectional analysis shows that the effect of AI on reducing the probability of restatements is stronger in situations where the application of AI is expected to be more promising.

5 AI and audit process efficiency

While our primary focus is on audit quality, we test two additional hypotheses related to audit process efficiency: that AI helps reduce the costs of the audit product, and that AI helps decrease the reliance of the audit process on human labor. While our results about increased efficiency are more preliminary than our main findings on audit quality, they provide an important complementary perspective to the quality angle.

5.1 Audit fees and AI adoption

Audit fees are the outcome of both supply and demand factors. On the demand side, audit fees can be associated with higher board or audit committee independence and competency (Carcello et al. 2002; Abbott et al. 2003; Engel et al. 2010). On the supply side, audit fees are sometimes used to proxy for audit quality because they are expected to measure the auditor’s effort level or a client’s risk (Caramanis and Lennox 2008; Seetharaman et al. 2002). Audit fees also capture audit efficiency (Felix et al. 2001; Abbott et al. 2012). For example, Abbott et al. (2012) find evidence of audit fee reduction from the assistance provided by outsourced internal auditors, who are viewed as more efficient and independent than in-house internal auditors.

In the context of our study, we use audit fees as a measure of audit efficiency. If AI adoption improves efficiency by streamlining the audit process, reducing man-hours, saving time, improving accuracy, and increasing insight into clients’ business processes, then we can expect a negative association between audit costs and AI adoption. However, even if audit efficiency increases with AI adoption, audit firms might not pass their savings on to clients. Indeed, Austin et al. (2021) document interesting tension between the expectations of auditors versus clients with respect to how technology should impact fees. Clients believe that fees should decline as the audit process becomes more efficient, while auditors push back, calling for higher fees due to additional investments in innovation. We received similar insights from our interviews with audit partners: clients expect audit fees to decrease as auditors use more advanced technologies, but auditors point to high upfront costs and lagged efficiency gains. Thus, the association between audit fees and AI adoption is an open empirical question.

We estimate the relationship between AI adoption and fees via the following regression:
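
A sketch of the fee regression that is consistent with the description in this subsection is shown here. The horizon index $h$, the coefficient symbols, and the fixed-effect notation are assumptions; $\mathbf{X}_{i,t}$ denotes the controls of regression (1).

```latex
\[
\log(\mathit{AuditFees}_{i,t+h}) = \beta_0
  + \beta_1\,\Delta\mathit{AI}(-3,0)_{i,t}
  + \beta_2\,\log(\mathit{AuditFees}_{i,t})
  + \gamma^{\top}\mathbf{X}_{i,t}
  + \alpha_{\mathit{ind}} + \alpha_{t} + \varepsilon_{i,t+h},
\qquad h = 1, 2, 3
\]
```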

The dependent variable is Audit Feesi,t, the natural logarithm of audit fees of firm i in year t reported in Audit Analytics. We use the log transformation because differences in logs are a close approximation for small percentage changes (Abbott et al. 2012). The control variables are the same as in regression (1) and capture firm characteristics that the prior literature has found to be associated with fees charged by the auditor to compensate for audit complexity or risk. Additionally, we control for log fees at time t = 0 to capture fee stickiness and account for unobservable characteristics of the client firm. Similarly to regression (1), we standardize all continuous independent variables to have standard deviations equal to one (to facilitate the comparison and interpretation of the coefficient estimates), include issuer’s industry and year fixed effects based on two-digit industry codes, and cluster standard errors at the issuer level.
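
The log approximation can be checked with a short calculation. The sketch below converts a coefficient on a log outcome into the exact implied proportional change; the function name and the example coefficient values are illustrative and are not estimates from the paper.

```python
import math


def pct_change_from_log_coef(beta: float) -> float:
    """Exact proportional change in the outcome implied by a change of `beta`
    in its natural logarithm: exp(beta) - 1."""
    return math.exp(beta) - 1.0


# Illustrative values only: for a small coefficient the log difference and the
# exact proportional change nearly coincide; for a large one they diverge.
small = pct_change_from_log_coef(-0.01)   # close to -0.01
large = pct_change_from_log_coef(-0.50)   # noticeably different from -0.50
```

For coefficients of around one or two log points, the gap between the log difference and the exact percentage change is negligible.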

Table 9 displays the results for fees one year ahead (t = 1) in column 1, two years ahead (t = 2) in column 2, and three years ahead (t = 3) in column 3. In all specifications, our results indicate that audit fees are negatively associated with recent AI adoption. For example, based on column 1, a one-standard-deviation increase in the share of AI workers over the previous three years predicts a 0.9% reduction in per-audit fees in the following year. The effect of AI adoption becomes even stronger over longer time horizons: a one-standard-deviation increase in the share of AI employees over the previous three years forecasts a 1.5% (2.1%) reduction in log per-audit fees after two (three) years.

Fees exhibit strong stickiness at the client level (for instance, in column 1, the coefficient estimate on audit fees at time t = 0 is 0.778). The statistically and economically significant effect of AI adoption on fees in the following years even after controlling for prior year fees, other observable factors influencing auditors’ fees, and industry and year fixed effects gives us confidence that our results are most likely not driven by unobservable confounds.

5.2 Labor effects of AI adoption

If AI adoption is meant to improve the efficiency of audit firms by streamlining the audit process and reducing manual tasks, it might lead to a reduction in the accounting workforce. This potential effect comes across from our interviews with partners at the largest audit firms. For example, one partner noted that the primary way in which AI affects the audit process is by automating analysis that would previously be performed by staff members, thereby “reducing human error” and making employees more efficient (with an average auditor being expected to handle up to ten clients, when a decade ago that number was only three). Using the resume data, we offer a large-scale analysis of the time-series association between AI adoption and the numbers of employees in audit and tax practices.Footnote 19 Table 1 Panel B shows an increase in the percentage of AI-related employees in accounting firms, from 0.08% in 2010 to 0.37% in 2019. Importantly, while the total workforce and the share of AI employees are both increasing for the firms in our sample, the percentage of accounting (audit and tax) employees actually decreases by 11% over the last decade, giving us some preliminary evidence of a decline in the accounting-related workforce.

Our evidence on U.S. employment supports recent results by Chen and Wang (2016) and Knechel et al. (2021), who document substantial departures and turnover of auditors in Taiwan and China. Furthermore, we suggest that some of this trend can be attributed to audit firms’ investments in AI, and we formally investigate the association between the decline in accounting employees and AI adoption by estimating the following regression:
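A specification consistent with the variable definitions given in the text can be written as follows. This is a sketch only: the symbol names, in particular $\Delta \mathit{AI}_{j,[t-3,t]}$ for the three-year change in the share of AI workers and $\gamma_t$ for year fixed effects, are illustrative assumptions, and the time-invariant subscript on Big4 is likewise assumed.

```latex
\begin{equation}
\Delta \mathit{AccountingEmployees}_{j,[t,\,t+n]}
  = \beta_0
  + \beta_1\, \Delta \mathit{AI}_{j,[t-3,\,t]}
  + \beta_2\, \mathit{Big4}_{j}
  + \beta_3\, \mathit{log\_total\_fees}_{j,t}
  + \gamma_t
  + \varepsilon_{j,t}
  \tag{7}
\end{equation}
```

Here $n$ ranges over the one- to four-year horizons reported in Table 10, and $\beta_1$ is the coefficient of interest.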

where the dependent variable is the percent change in accounting employees at auditor j from year t to t + n. We control for Big 4 status (Big4) and log total fees (log_total_fees_{j,t}) of auditor j in year t to capture each auditor’s size and reputation. Year fixed effects enable a cross-sectional comparison among auditors with various degrees of AI adoption while controlling for aggregate time trends in accounting employment due to unobservable factors.
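To illustrate why year fixed effects yield a within-year, cross-sectional comparison, the sketch below estimates a slope after subtracting year means from both variables, which is equivalent to including year dummies. It is a simplified illustration, not the estimation code behind Table 10: it omits the Big4 and fee controls, and the function names and toy numbers are hypothetical.

```python
from collections import defaultdict


def demean_by_group(values, groups):
    """Subtract each group's mean from its members (the 'within' transformation)."""
    totals, counts = defaultdict(float), defaultdict(int)
    for v, g in zip(values, groups):
        totals[g] += v
        counts[g] += 1
    return [v - totals[g] / counts[g] for v, g in zip(values, groups)]


def slope_with_year_fe(y, x, years):
    """OLS slope of y on a single regressor x with year fixed effects.

    Demeaning y and x within each year removes any shock common to all
    auditors in that year, so the slope is identified only from
    differences across auditors observed in the same year.
    """
    y_dm = demean_by_group(y, years)
    x_dm = demean_by_group(x, years)
    sxx = sum(a * a for a in x_dm)
    if sxx == 0:
        raise ValueError("x has no within-year variation")
    return sum(a * b for a, b in zip(x_dm, y_dm)) / sxx


# Hypothetical toy panel (made-up numbers): three auditors in each of two years.
years = [2015, 2015, 2015, 2016, 2016, 2016]
delta_ai = [0.0, 1.0, 2.0, 2.0, 3.0, 4.0]      # standardized change in AI share
year_shock = {2015: 5.0, 2016: -3.0}           # aggregate trend common to all auditors
growth = [year_shock[t] - 2.0 * a for t, a in zip(years, delta_ai)]

beta = slope_with_year_fe(growth, delta_ai, years)
print(beta)
```

In this toy panel the aggregate year shocks are absorbed entirely by the demeaning step, so the estimated slope recovers the within-year relationship built into the data.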

Additionally, to investigate the levels of seniority at which the workforce displacement takes place, we separate accounting employees into junior level (e.g., staff accountant/auditor, assistant and associate accountant/auditor, analyst, senior accountant/auditor), mid-tier (e.g., team lead, manager, senior manager), and senior level (e.g., director, managing director, partner), estimating regression (7) separately for each level of seniority.
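The three-way split can be pictured as a keyword rule applied to job titles. The sketch below is a hypothetical illustration built only from the example titles listed above; the keyword lists, the matching order, and the function name are assumptions, and the actual classification may rely on richer information in the resume data.

```python
# Keyword lists taken from the example titles in the text; illustrative only.
SENIOR_KEYWORDS = ("managing director", "director", "partner")
MID_KEYWORDS = ("senior manager", "manager", "team lead")
JUNIOR_KEYWORDS = ("staff", "assistant", "associate", "analyst",
                   "accountant", "auditor")


def seniority_level(title):
    """Assign a job title to 'senior', 'mid', 'junior', or 'unclassified'.

    Levels are checked from most to least senior, so 'Senior Manager'
    resolves to mid-tier (via 'manager') and 'Senior Accountant' resolves
    to junior (via 'accountant'), matching the grouping in the text.
    """
    t = title.lower()
    for level, keywords in (("senior", SENIOR_KEYWORDS),
                            ("mid", MID_KEYWORDS),
                            ("junior", JUNIOR_KEYWORDS)):
        if any(k in t for k in keywords):
            return level
    return "unclassified"


for example in ("Senior Accountant", "Senior Manager", "Managing Director"):
    print(example, "->", seniority_level(example))
```

Checking the most senior keywords first is a design choice: it keeps the word "senior" in a title from deciding the level on its own.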

Table 10 Panel A reports the aggregate results (pooled across all accounting employees, regardless of seniority). Columns 1, 2, 3, and 4 show the relationship between the change in the share of AI employees in the past three years and the growth in accounting employees over the next one, two, three, and four years, respectively. We find that growth in accounting employees over the next three and four years (columns 3 and 4) is negatively impacted by audit firms’ AI investments. The economic effect is large: a one-standard-deviation increase in the share of AI workers over the past three years translates into a decrease of 3.6% in accounting employees three years later and an even greater drop of 7.1% four years out. By contrast, the lack of significant results in the first and second years indicates that the reduction in human labor due to AI takes time to implement.

Panel B displays the effect of AI adoption on accounting employees at various levels of seniority. In columns 1 and 2, we regress growth in junior-level accounting employees three and four years out, respectively. In columns 3 and 4, the dependent variable is growth in mid-tier employees three and four years out, while in columns 5 and 6, the dependent variable is growth in senior employees three and four years out. We find a significant displacement effect of AI adoption on the labor force only at the junior level. A one-standard-deviation increase in the share of AI workers over the past three years predicts a decrease of 5.7% in the number of junior accounting employees three years later and an even larger decrease of 11.8% four years later. Overall, our results provide consistent evidence of accounting job displacement by AI that concentrates at the junior level.

The reduction in the accounting labor force supports the notion, raised by audit partners in the interviews, that AI makes individual audit employees more productive, helping audit firms achieve more per employee. We investigate how fees earned per employee relate to the change in the share of AI workers over the past three years by estimating the following regression:
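A specification consistent with the description of Table 11 can be sketched as follows. The symbol names are illustrative assumptions, with $\Delta \mathit{AI}_{j,[t-3,t]}$ denoting the three-year change in the share of AI workers at auditor $j$ and $\gamma_t$ denoting year fixed effects.

```latex
\begin{equation*}
\log\!\left(\frac{\mathit{AuditFees}_{j,t+3}}{\mathit{AccountingEmployees}_{j,t+3}}\right)
  = \beta_0
  + \beta_1\, \Delta \mathit{AI}_{j,[t-3,\,t]}
  + \beta_2\, \log\!\left(\frac{\mathit{AuditFees}_{j,t}}{\mathit{AccountingEmployees}_{j,t}}\right)
  + \gamma_t
  + \varepsilon_{j,t}
\end{equation*}
```

A positive $\beta_1$ indicates that auditors with larger recent increases in AI share earn more audit fees per accounting employee three years later, conditional on their starting level.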

Table 11 reports the results. The dependent variable is the natural logarithm of three-years-ahead audit fees divided by the number of accounting employees. We control for the respective fees per employee in year t = 0 and estimate this regression with year fixed effects. Our results suggest that AI adoption is indeed associated with an increase in log audit fees per employee, significant at the 5% level.

6 Conclusion

Our research provides the first comprehensive empirical evidence on the artificial intelligence talent in audit firms and its effects on product quality and labor. We analyze a unique dataset covering the majority of employees of large audit firms. We document a significant rise in AI talent from 2010 to 2019, showcasing that AI workers tend to be relatively young, predominantly male, and hold bachelor’s or master’s degrees in technical fields such as statistics, applied mathematics, and computer science. Importantly, the organization of AI talent tends to be centralized within firms, with the majority of AI workers located in a handful of hubs such as New York, Washington D.C., and San Francisco.

Guided by insights from detailed interviews with 17 audit partners from the eight largest U.S. public accounting firms, we investigate whether AI improves the audit process. Our empirical results document substantial gains in quality, as AI investments by audit firms are associated with significant declines in the incidence of restatements, including material restatements and restatements related to accruals and revenue recognition. These results are robust to controlling for auditors’ investments in other technologies and are driven specifically by auditors rather than by their clients. Moreover, we find preliminary evidence that improved audit quality is accompanied by a move towards a leaner process: as audit firms invest in AI, they are able to lower the fees they charge while reducing their audit workforces and showing increased productivity, as measured by total fees per employee.

Altogether, our results shed light on the positive impacts of AI on audit quality and efficiency. At the same time, our findings on the labor impacts of AI caution that the gains from new technologies may not be distributed equally across the population. While partners of audit firms benefit from increased product quality, greater efficiency, and reductions in personnel costs, junior employees may suffer from the displacement we observe several years after AI investments. We hope that our findings will help inform industry leaders and policy makers, and that our detailed datasets on firm-level AI investments and labor will open the doors for further large-scale empirical research on the broader impacts of new technologies in accounting and auditing, as well as in other service-oriented industries.

Notes

Alderucci et al. (2020) find that firms that patent AI innovations (i.e., producers of AI technologies) see significant increases in total factor productivity. However, looking more broadly at public firms across all industries, Babina et al. (2020) do not find any effect of AI investments on total factor productivity or sales per worker.

For ease of exposition, we standardize the continuous independent variable to have a sample standard deviation of one throughout our empirical analysis.

Based on the evidence in Babina et al. (2020), the benefits of AI investments tend to accrue after two years. Correspondingly, our main specification aggregates the independent variable across a three-year time period. In additional analyses, we confirm that our results are robust to alternative specifications.

Using a Gaussian process classifier, Frey and Osborne (2017) demonstrate that the accounting profession is among the most at-risk of being replaced by new technologies such as AI.

See Section 3 for a detailed analysis of AI workforce organization within audit firms.

The interviews were approved by the Institutional Review Board (IRB) at the University of San Francisco.

Smart glasses were mentioned once for remote inventory counts. Another technology mentioned was a blockchain built for intra-company transfers, but it is currently “on the shelf.”

On July 24, 2012, Deloitte filed patent No. 61/675,095, “Fraud Detection Methods and Systems,” which consists of an unsupervised statistical approach to detect fraud by utilizing cluster analysis to identify specific clusters of claims or transactions for additional investigation and utilizing association rules as tripwires to identify outliers. The patent is cited by 115 other patents mostly developed by big tech and big data companies like Palantir, Intuit, IBM, and Microsoft.

For example, one of the Big 4 accounting firms implemented a system to evaluate credit information related to a bank’s commercial loans by including unstructured data from social media and applying machine learning to identify “problematic” loan transactions.

Specifically, we strip out common endings (e.g., “L.P.”) from the firm names and first look for the exact match. If the exact match is not found, we use fuzzy matching based on edit distance between pairs of names. Finally, we manually check and correct the matches.
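The first two matching steps can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the procedure's actual implementation: the list of endings (only “L.P.” is named above), the distance threshold of 2, the firm names, and all function names are hypothetical, and the final manual check is not represented.

```python
# Hypothetical list of legal-form endings; the text gives only "L.P." as an example.
COMMON_ENDINGS = {"l.p.", "lp", "llp", "llc", "inc", "inc.", "corp", "corp.", "ltd", "ltd."}


def normalize(name):
    """Lowercase a firm name and strip trailing legal-form endings."""
    tokens = name.lower().replace(",", " ").split()
    while tokens and tokens[-1] in COMMON_ENDINGS:
        tokens.pop()
    return " ".join(tokens)


def edit_distance(a, b):
    """Levenshtein distance: minimum insertions, deletions, and substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def match_firm(name, candidates, max_distance=2):
    """Return (matched candidate, method): exact match first, then fuzzy."""
    target = normalize(name)
    normalized = {c: normalize(c) for c in candidates}
    for original, norm in normalized.items():
        if norm == target:
            return original, "exact"
    best = min(candidates,
               key=lambda c: edit_distance(target, normalized[c]),
               default=None)
    if best is not None and edit_distance(target, normalized[best]) <= max_distance:
        return best, "fuzzy"
    return None, "unmatched"


# Made-up firm names for illustration.
firms = ["Alpha Audit LLP", "Beta Assurance LLC"]
print(match_firm("Alpha Audit L.P.", firms))
print(match_firm("Alpa Audit", firms))
print(match_firm("Gamma Consulting", firms))
```

A tight distance threshold keeps false matches rare at the cost of leaving more names unmatched, which is the trade-off a subsequent manual review is meant to resolve.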

For robustness, we confirm that our main result on the association between restatements and AI remains unchanged if we remove the restriction of having at least one employee identified as an AI worker. The results of this robustness check are reported in Online Appendix Table OA.1.

We believe that the hiring of the AI workforce accurately reflects audit firms’ overall AI investments for the following reasons. From the interviews with audit partners, we find that the workforce is considered to be the largest cost to investing in AI. The other item that audit partners mentioned as a cost of (though not as much of a barrier to) AI adoption was the purchase of external software. However, when it comes to purchasing external software, Babina et al. (2020) find that the purchase of external AI-related software tends to be complementary to internal hiring of AI-skilled labor. Furthermore, empirically, they find that incorporating external software in the overall measure does not change any of the results. Therefore, internal AI-skilled hiring appears to be a sufficient statistic for firms’ overall AI investments, and we adopt this measure in our paper.

The SEC issues Accounting and Auditing Enforcement Releases (AAERs) during or at the conclusion of an investigation against a company, an auditor, or an officer for alleged accounting and/or auditing misconduct.

In additional analyses reported in Tables OA.2 and OA.3 in the Online Appendix, we also analyze absolute and signed total accruals, signed and absolute discretionary accruals, signed and absolute performance-matched accruals, and discretionary accruals (Dechow et al. 1995; Dechow and Dichev 2002; Kothari et al. 2005; Reichelt and Wang 2010). Overall, we do not find evidence of a significant association between the adoption of AI and accruals. Similarly, Table OA.3 also demonstrates that AI is not associated with differences in the time lag between the fiscal year end and the audit report or with the incidence of Type 1 or Type 2 errors in going concern opinions.

The three-year horizon is motivated by the evidence in Babina et al. (2020) that AI investments tend to predict firm operations with a lag of two years. For robustness, we also estimate model (1) using changes in AI workers over the prior two years. Results are robust to this specification, as reported in Online Appendix Table OA.4.

As a robustness check, we include additional controls for auditor size, growth, and industry specialization in Online Appendix Table OA.5. Auditor size is proxied by the total number of employees; auditor growth is computed as the growth in an audit firm’s personnel from the prior year; and auditor industry specialization is an indicator variable equal to one if the audit firm has the largest market share in the industry. We measure market share using the number of clients audited by an audit firm within an industry (Balsam et al. 2003).

The results are robust to alternative specifications—for example, comparing the audits in the top versus the bottom quartile of client age.

Like the client age results, these results are robust to alternative cutoffs, including defining new clients as those that only started with the auditor up to one year prior.

Based on our detailed examination of job titles and job descriptions in the Cognism data, a number of employees reference both audit and tax functions simultaneously. As such, we take the conservative approach of considering all accounting (audit and tax) employees together in our analyses and referring to them as accounting employees. All results are even stronger when we restrict our attention to employees whose job titles focus exclusively on audit, as we show in Online Appendix Table OA.6.

References

Abbott, L.J., S. Parker, G.F. Peters, and K. Raghunandan. 2003. An empirical investigation of audit fees, nonaudit fees, and audit committees. Contemporary Accounting Research 20 (2): 215–234.

Abbott, L.J., S. Parker, and G.F. Peters. 2012. Audit fee reductions from internal audit-provided assistance: The incremental impact of internal audit characteristics. Contemporary Accounting Research 29 (1): 94–118.

Acemoglu, D., D. Autor, J. Hazell, and P. Restrepo. 2022. AI and jobs: Evidence from online vacancies (no. w28257). National Bureau of Economic Research (NBER). Available at: https://www.nber.org/system/files/working_papers/w28257/w28257.pdf

Aghion, P., B.F. Jones, and C.I. Jones. 2019. Artificial intelligence and economic growth. National Bureau of Economic Research (NBER). Available at: https://www.nber.org/system/files/chapters/c14015/c14015.pdf