Abstract

Purpose

To select and scale items for the seven domains of the Patient-Reported Inventory of Self-Management of Chronic Conditions (PRISM-CC) and assess its construct validity.

Methods

Using an online survey, data on 100 potential items, and other variables for assessing construct validity, were collected from 1055 adults with one or more chronic health conditions. Based on a validated conceptual model, confirmatory factor analysis (CFA) and item response theory (IRT) models were used to select and scale potential items and assess the internal consistency and structural validity of the PRISM-CC. To further assess construct validity, hypothesis testing of known relationships was conducted using structural equation models.

Results

Of 100 potential items, 36 (4–8 per domain) were selected, providing excellent fit to our hypothesized correlated factors model and demonstrating internal consistency and structural validity of the PRISM-CC. Hypothesized associations between PRISM-CC domains and other measures and variables were confirmed, providing further evidence of construct validity.

Conclusion

The PRISM-CC overcomes limitations of assessment tools currently available to measure patient self-management of chronic health conditions. This study provides strong evidence for the internal consistency and construct validity of the PRISM-CC as an instrument to assess patient-reported difficulty in self-managing different aspects of daily life with one or more chronic conditions. Further research is needed to assess its measurement equivalence across patient attributes, ability to measure clinically important change, and utility to inform self-management support.


Plain English summary

Most of the daily work to manage life with chronic health conditions such as diabetes, heart disease, mental illness, arthritis, and many others is done by patients and their families. We know that this kind of self-management improves people’s health outcomes and quality of life. Assessment tools are needed to help people and health providers identify the kinds of difficulties people are experiencing so that appropriate programs, resources and supports can be accessed. Unfortunately, existing assessment tools do not measure the areas of difficulty most important to people and are inadequate for people who are living with multiple conditions. This study presents a new assessment tool to fill this gap and evaluates its validity. The Patient-Reported Inventory of Self-Management of Chronic Conditions (PRISM-CC) assesses patients’ perceived difficulty in self-managing seven different aspects of life with chronic health conditions. This study provides evidence that the PRISM-CC measures these domains. The PRISM-CC is now ready for testing in clinical settings.

Introduction

Enabling people to better self-manage their chronic conditions is widely regarded as critical to improving patient outcomes and alleviating the growing demand for medical care [1, 2]. Self-management is defined by the Institute of Medicine as the daily tasks that “individuals must undertake to live well with one or more chronic conditions” [3]. Self-management is more than just medical management such as monitoring symptoms and adhering to therapies. It also includes management of emotions, roles, social relationships and daily activities [4,5,6,7,8,9]. Self-management of the different tasks is reciprocal: management of broader areas affects the ability to manage symptoms effectively, and vice versa [5, 8, 10]. Self-management strategies and needed supports change over time as chronic conditions and life circumstances change [1, 11,12,13,14].

Feasible, valid, and reliable measures that pinpoint patients’ self-management difficulties are required to guide patient care and research on the effectiveness of self-management interventions. Two scoping reviews of 35 existing self-management measures have identified major deficiencies [15, 16]. First, the majority were developed for specific health conditions [15, 16]. While these have a role, generic measures relevant to persons living with multiple chronic conditions are needed to inform integrated, interdisciplinary, and client-centered care [5, 14, 17]. Second, most self-management measures do not differentiate the aspects of self-management shown to be important to patients, nor the self-management supports required [15, 16, 18]. Most measures are unidimensional, providing only a single score across domains or limiting focus to specific aspects of self-management [16, 18]. Among the multidimensional measures, all lack content validity when assessed against reviews of self-management tasks important to patients [15]. Finally, many existing measures lack a cohesive theoretical or conceptual framework [15, 16].

The Patient-Reported Inventory of Self-Management of Chronic Conditions (PRISM-CC) is a new, patient-reported instrument designed to address the limitations of existing measures [19]. Designed to be applicable to patients living with multiple chronic conditions, it is intended to differentiate domains of self-management relevant to patient experiences and to be feasible for use in diverse settings. Its intended purpose is to inform individualized self-management support plans, program evaluation, and research on chronic disease management.

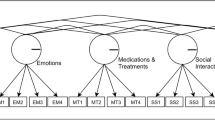

The conceptual foundation of the PRISM-CC is a validated, patient-centered framework describing seven domains of self-management strategies relevant to the experience of patients with one or more chronic conditions: the Taxonomy of Everyday Self-Management Strategies (TEDSS) [9, 10]. The TEDSS was first developed using concept mapping of research studies, then further refined and validated using longitudinal, qualitative data from 117 interviews with individuals with neurological conditions [7]. Given the diverse ages of onset, varied symptoms, and unpredictable trajectories of neurological conditions, these data were well suited to refining and validating the TEDSS framework. The TEDSS was also compared with, and found to be consistent with, other conceptual frameworks developed for patients with other chronic conditions [1, 5, 20, 21].

The PRISM-CC is a patient-reported measure of perceived success (or difficulty) in self-managing each of the seven TEDSS domains (Table 1): Resource, Process, Internal, Activity, Social Interactions, Healthy Behaviors, and Disease Controlling domains. This paper reports item selection, scaling, and construct validation of the PRISM-CC. Our objectives were to: (1) select and scale items to measure the seven domains of the PRISM-CC, and (2) assess the internal consistency and construct validity of the PRISM-CC.

Methods

Preliminary item generation

Development of the PRISM-CC followed the Patient-Reported Outcomes Measurement Information System (PROMIS)® Scientific Standard [19] and the COSMIN Study Design checklist for patient-reported outcome measures [22,23,24]. The first step in development was to operationally reframe the seven TEDSS domain definitions to reflect perceived success or difficulty in self-managing each domain (Table 1). Item generation (Phase 1) and assessment of relevance and understandability to people living with chronic conditions (Phase 2) have been reported previously [19]. Briefly, item development and selection were conducted by an international, multidisciplinary study team, which included clinicians, researchers and people living with chronic conditions. Potential items (N = 250) were generated across the domains using the qualitative data employed to develop the TEDSS, and by examining items from other tools [9, 10]. After an initial conceptual review, 231 items were retained and administered to 40 persons living with chronic conditions to assess their relevance and understandability. Poorly performing items were discarded. Moderately performing items were further assessed during face-to-face cognitive interviews with ten of the 40 participants [25, 26]. We retained 100 items (11–17 items per domain, none taken from existing scales) for item selection, scaling, and validation.

Participants and data collection

Between February 2020 and April 2021, 1,213 persons aged 18 years and over, who self-reported having at least one chronic condition, and who could read English, completed an anonymous, 20–30-min online survey. To be eligible, respondents had to select at least one of 13 conditions from a list (see footnote, Table 2) or specify “other” conditions in a free-text field. Recruitment sought a diverse sample with respect to sociodemographic characteristics and types of chronic conditions through posters displayed in health care settings; information distributed to patient groups, health charities and organizations; social media (Facebook and Twitter); and online advertising sites (e.g., Kijiji). Some (N = 159) were also recruited using Amazon Mechanical Turk (https://www.mturk.com). We excluded 158 persons (13%) who had missing data on 50% or more of the items, for a final sample size of 1055. Those excluded were not statistically different from those included with respect to age, gender, household type, number of reported chronic conditions, self-reported health status, or self-reported mental health; however, they had lower educational attainment.
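The 50% missingness rule can be expressed as a simple filter. The Python sketch below is illustrative only (the study itself used R), and its data layout and function names are hypothetical: each respondent is a list of item responses with `None` marking a missing item. The text does not state whether "not applicable" answers counted as missing for this rule, so the sketch leaves that decision to whoever assigns `None`.

```python
from typing import Optional, Sequence

# Hypothetical layout: one list of item responses per respondent,
# with None marking an item that has no usable response.
Responses = Sequence[Optional[int]]


def proportion_missing(responses: Responses) -> float:
    """Fraction of items with no recorded response."""
    return sum(r is None for r in responses) / len(responses)


def split_by_missingness(
    sample: list[Responses], threshold: float = 0.5
) -> tuple[list[Responses], list[Responses]]:
    """Return (included, excluded) respondents.

    A respondent missing `threshold` (50%) or more of the items is excluded.
    """
    included: list[Responses] = []
    excluded: list[Responses] = []
    for responses in sample:
        if proportion_missing(responses) >= threshold:
            excluded.append(responses)
        else:
            included.append(responses)
    return included, excluded


# Usage with three toy respondents answering four items each.
toy = [[4, 5, None, 3], [None, None, 2, None], [1, None, None, 2]]
kept, dropped = split_by_missingness(toy)
print(len(kept), len(dropped))  # 1 2
```

Applied to the reported counts, excluding 158 of 1,213 respondents leaves 1,213 - 158 = 1,055, and 158 / 1,213 ≈ 0.13, matching the 13% given in the text.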

The study procedure adhered to the Canadian Tri-Council Policy Statement on Ethical Conduct for Research Involving Humans, and ethics approval was obtained from the Nova Scotia Health Research Ethics Board (Romeo File #: 1025263). Informed consent was obtained from all survey respondents.

Measures

The survey included 100 potential PRISM-CC items in two versions with different item orders. The preferred response scale assessed difficulty: unable to do, with extreme difficulty, with much difficulty, with a little difficulty, easily, very easily (66 items). When this was not appropriate, two other response scales were used: (1) never, rarely, sometimes, often, almost always, always (24 items), and (2) strongly disagree, disagree, moderately disagree, moderately agree, agree, strongly agree (10 items). For each item, high scores indicated self-management success. Each item included a “not applicable” (NA) response option.
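One way to code these response options so that higher scores mean greater self-management success is sketched below in Python. It is a hypothetical illustration, not the study's scoring code: the 0 to 5 integer coding and the `reverse` flag for negatively worded items are assumptions, and "not applicable" is mapped to missing, consistent with how NA responses are treated in the analyses.

```python
from typing import Optional

# Response options as listed in the text, ordered from least to most
# self-management success and coded 0-5 (the coding is an assumption).
DIFFICULTY = ["unable to do", "with extreme difficulty", "with much difficulty",
              "with a little difficulty", "easily", "very easily"]
FREQUENCY = ["never", "rarely", "sometimes", "often", "almost always", "always"]
AGREEMENT = ["strongly disagree", "disagree", "moderately disagree",
             "moderately agree", "agree", "strongly agree"]
NOT_APPLICABLE = "not applicable"


def code_response(answer: Optional[str], scale: list[str],
                  reverse: bool = False) -> Optional[int]:
    """Convert a response label to an ordinal score.

    Item non-response (None) and "not applicable" both return None, so they
    are treated as missing downstream. Set reverse=True for a (hypothetical)
    negatively worded item so that a high score still means success.
    """
    if answer is None or answer == NOT_APPLICABLE:
        return None
    position = scale.index(answer)
    return len(scale) - 1 - position if reverse else position


print(code_response("easily", DIFFICULTY))               # 4
print(code_response("always", FREQUENCY, reverse=True))  # 0
print(code_response(NOT_APPLICABLE, AGREEMENT))          # None
```

Whether a frequency or agreement item needs reversing depends on its wording, which this sketch cannot know; that choice would be made item by item.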

The survey also included sociodemographic questions (age, gender, country of residence, current living situation, and educational status), type(s) of chronic conditions, self-reported general health and mental health status, a question on the impact of chronic condition(s) on daily life, and the 6-item Self-Efficacy for Managing Chronic Disease (SEMCD) scale [27]. The SEMCD is widely used to evaluate patients’ confidence in managing chronic conditions and is strongly correlated with other measures of self-management [18, 28]. Self-reported general health (and mental health) was measured with a single question, “In general, would you say your (mental) health is”: excellent, very good, good, fair, or poor [29,30,31]. Impact on daily life was measured with the question: “Overall, how much do you feel that your chronic condition(s) affect(s) your life?”: not at all, a little bit, moderately, quite a bit, or extremely.

Analytic approach

As per our published protocol [19], analyses proceeded through item selection, model refinement, and then assessment of construct validity. Confirmatory factor analysis (CFA) and item response theory (IRT) models provided complementary approaches to inform item selection, scaling, and assessment of construct validity of the PRISM-CC [32, 33]. Models appropriate for ordinal response scales were employed. CFA models were estimated using the R ‘lavaan’ package, based on pairwise polychoric correlations and diagonally weighted least squares [34, 35]. For IRT, multidimensional graded response models were estimated with full information maximum likelihood using the R ‘mirt’ package [36, 37].

Missing data resulted from both item non-response and “NA” responses. Items with high proportions of “NA” responses resulting from life contexts uncommon to many respondents (e.g., managing chronic conditions at work) were excluded; however, missing and “NA” responses are unlikely to be missing completely at random [38]. While our estimation methods handled item non-response, sensitivity analyses were conducted to assess the impact of non-random patterns of missingness on reported model parameters and fit statistics. Multivariate imputation by chained equations was used to impute 10 sets of missing and “NA” item responses, using the “mice v2.9” package in R with all items in our final model as predictors [39]. Parameter estimates and fit statistics were then re-estimated, pooled across the 10 imputed data sets, and compared to our reported results.
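Pooling a parameter across imputed data sets is commonly done with Rubin's rules, sketched below in Python for a single parameter such as one standardized loading. This is an assumption about the pooling method, since the text does not name it; fit statistics such as the RMSEA are usually combined in other ways (for example, by averaging across imputations) rather than with Rubin's variance formula. The function name and toy inputs are hypothetical.

```python
import math
from statistics import fmean, variance


def pool_rubin(estimates: list[float], sampling_vars: list[float]) -> tuple[float, float]:
    """Pool one parameter over m imputed data sets with Rubin's rules.

    estimates:     point estimate from each imputed data set
    sampling_vars: squared standard error from each imputed data set
    Returns (pooled estimate, pooled standard error).
    """
    m = len(estimates)
    q_bar = fmean(estimates)            # pooled point estimate
    within = fmean(sampling_vars)       # mean within-imputation variance
    between = variance(estimates)       # between-imputation variance (m - 1 denominator)
    total = within + (1 + 1 / m) * between
    return q_bar, math.sqrt(total)


# Toy values for one loading estimated in three imputed data sets.
est, se = pool_rubin([0.70, 0.72, 0.74], [0.0004, 0.0004, 0.0004])
print(round(est, 3), round(se, 4))  # 0.72 0.0306
```

The `(1 + 1 / m)` factor inflates the between-imputation variance to account for using a finite number of imputations (here m would be 10).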

The item selection process identified the best-performing set of items to create a parsimonious measurement instrument for the seven PRISM-CC domains while differentiating between domains. Item selection proceeded through six iterations of modeling and item assessment. Only a few items were excluded at each iteration, and models were refitted before further decisions were made. At later iterations, previously discarded items were reconsidered by adding them back into more refined model versions to evaluate how they performed. Item selection decisions considered multiple statistical criteria combined with ongoing assessment of face and content validity by our interdisciplinary team. Cognitive interview data and the qualitative analyses that informed item development were often used to inform decisions. Statistical information informing item selection decisions included: (1) low item variance or very low item-rest correlations; (2) high rates of item non-response or not applicable responses resulting from context-specific items; (3) weak standardized factor loadings (< 0.6) or weak discrimination parameters in the IRT models (< 1.35) [40, 41]; (4) examination of IRT item location parameters, item-category response function plots and item information plots to assess the contribution of each item to measuring the latent variable; (5) indications of problems with item performance based on the 1–2 largest modification indices at each model iteration, such as correlated errors (which violate the conditional independence assumption) or cross-loading of an item between domains (indicative of weak discriminant validity of an item); (6) evidence of differential item functioning by sociodemographic variables; and (7) areas of local strain based on residual correlations. A more detailed description of how each of these criteria was used is provided in the Supplementary Appendix.
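Criteria (1) and (3) are numeric screens that can be computed item by item. The Python sketch below is purely illustrative: it computes item-rest correlations (each item against the sum of the remaining items in its domain) and flags items against the loading (< 0.6) and discrimination (< 1.35) thresholds stated above. The 0.30 item-rest cut-off is a hypothetical value, because the text only says "very low", and the function names are assumptions. In the study, such flags fed into team judgment rather than triggering automatic deletion.

```python
import math
from statistics import fmean


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation; assumes neither input is constant."""
    mx, my = fmean(x), fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def item_rest_correlations(data: list[list[float]]) -> list[float]:
    """Correlate each item with the sum of the *other* items in its domain.

    data: complete-case rows (respondents) x columns (items of one domain).
    """
    totals = [sum(row) for row in data]
    result = []
    for j in range(len(data[0])):
        item = [row[j] for row in data]
        rest = [t - x for t, x in zip(totals, item)]  # total minus the item itself
        result.append(pearson(item, rest))
    return result


def statistical_flags(loading: float, discrimination: float, item_rest: float,
                      min_item_rest: float = 0.30) -> list[str]:
    """List the screening criteria an item fails (empty list = no flags)."""
    flags = []
    if item_rest < min_item_rest:   # hypothetical cut-off; the text says "very low"
        flags.append("low item-rest correlation")
    if loading < 0.60:              # criterion (3): weak standardized loading
        flags.append("weak factor loading")
    if discrimination < 1.35:       # criterion (3): weak IRT discrimination
        flags.append("weak IRT discrimination")
    return flags


print(statistical_flags(loading=0.55, discrimination=1.20, item_rest=0.45))
# ['weak factor loading', 'weak IRT discrimination']
```

Removing the item from its own total before correlating avoids the upward bias of a plain item-total correlation.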

Once items for the PRISM-CC were selected, we assessed construct validity by assessing the extent to which data corresponded to our conceptual framework (structural validity), and hypothesis testing based on known relationships [42].

Structural validity was assessed by the fit of the data to correlated domain CFA and IRT measurement models reflecting our full conceptual framework, and to individual measurement models for each of the seven domains. Internal consistency of each domain was assessed with Cronbach’s alpha and measures of marginal reliability. To assess global fit of models, we computed the Root Mean Square Error of Approximation (RMSEA), Standardized Root Mean Square Residual (SRMR), Tucker Lewis Index (TLI) and Comparative Fit Index (CFI). Standard cut-offs widely used in the literature as indicative of good fit were applied (SRMR < 0.05, RMSEA < 0.06, and TLI and CFI > 0.95) [43]. However, an RMSEA of 0.06 can indicate poor fit in a model with low standardized factor loadings (e.g., 0.40), while an RMSEA of 0.20 can indicate good fit in a model with high standardized factor loadings (e.g., > 0.90) [40]. We therefore also assessed the fit of individual items using CFA standardized factor loadings, IRT item discrimination parameters, and the infit and outfit mean square fit statistics. Standardized factor loadings in the range 0.60–0.74 were interpreted as high, and values ≥ 0.75 as very high [40]. IRT item discrimination parameters in the range 1.35–1.69 were considered high, and those ≥ 1.70 very high [41]. Good infit and outfit statistics have values close to 1.0 and should be in the range of 0.5 to 1.5 [44]. Residuals between observed and model-predicted inter-item correlations were examined to identify local areas of poor fit. To further assess structural validity, discrimination between latent variables was assessed by testing for collapsibility of domains that were highly correlated (≥ 0.80).
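The internal consistency statistic and the fit cut-offs described above can be written down directly. The Python sketch below is illustrative: `cronbach_alpha` uses the standard formula α = k/(k − 1) · (1 − sum of item variances / variance of the total score) on complete cases, and the check functions simply encode the thresholds quoted in the text. The fit indices themselves would come from the CFA or IRT software (lavaan and mirt in this study); nothing here re-estimates a model, and the function names are hypothetical.

```python
from statistics import pvariance


def cronbach_alpha(data: list[list[float]]) -> float:
    """Cronbach's alpha for complete-case rows (respondents) x columns (items)."""
    k = len(data[0])
    item_vars = [pvariance([row[j] for row in data]) for j in range(k)]
    total_var = pvariance([sum(row) for row in data])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Conventional global-fit cut-offs quoted in the text.
CUTOFFS = {"srmr": ("<", 0.05), "rmsea": ("<", 0.06),
           "tli": (">", 0.95), "cfi": (">", 0.95)}


def check_global_fit(indices: dict[str, float]) -> dict[str, bool]:
    """Map each fit index to True if it meets its conventional cut-off."""
    report = {}
    for name, (direction, cut) in CUTOFFS.items():
        value = indices[name]
        report[name] = value < cut if direction == "<" else value > cut
    return report


def mean_square_in_range(mean_square: float, low: float = 0.5, high: float = 1.5) -> bool:
    """Infit/outfit mean squares are treated as acceptable within [0.5, 1.5]."""
    return low <= mean_square <= high


print(round(cronbach_alpha([[1, 2], [2, 3], [3, 4]]), 2))  # 1.0 (perfectly consistent toy items)
print(check_global_fit({"srmr": 0.04, "rmsea": 0.055, "tli": 0.96, "cfi": 0.97}))
```

Population variances are used for both the items and the total score; because the same denominator appears in numerator and denominator of the ratio, the choice of n or n − 1 does not change alpha.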

Non-reflective responses in online surveys can result in underestimation of model fit [45, 46]. Of the 1,055 respondents included in our analysis, 5% spent, on average, less than 3.6 s per question, lending plausibility to this concern. Accordingly, sensitivity analyses were conducted to assess the potential impact of non-reflective responses on the model fit of each PRISM-CC domain (see Supplementary Appendix).
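A per-respondent speed flag of the kind described above might be computed as follows. This Python sketch assumes, hypothetically, that the survey platform exports each respondent's total completion time and the number of questions answered; the text does not describe exactly how time per question was measured. Respondents flagged this way would then be dropped in a sensitivity analysis and the domain models refitted.

```python
def flag_fast_responders(durations_s: list[float], n_answered: list[int],
                         threshold_s: float = 3.6) -> list[bool]:
    """True where a respondent averaged fewer than `threshold_s` seconds per question."""
    return [d / n < threshold_s for d, n in zip(durations_s, n_answered)]


def share_flagged(flags: list[bool]) -> float:
    """Proportion of respondents flagged as potentially non-reflective."""
    return sum(flags) / len(flags)


# Toy data: total survey time (seconds) and number of questions answered.
flags = flag_fast_responders([300.0, 1500.0, 420.0], [120, 120, 100])
print(flags)                           # [True, False, False]
print(round(share_flagged(flags), 2))  # 0.33
```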

Finally, as detailed in our protocol [19], we further assessed construct validity of the PRISM-CC using structural equation models to test hypothesized associations between each domain and variables previously shown to be associated with self-management. Briefly, we hypothesized that the SEMCD would be positively associated with all domains; higher education would be associated with higher Process, Resource, and Healthy Behaviors domain scores; a higher number of chronic conditions would be associated with lower Disease Controlling domain scores; higher self-perceived general health would be associated with higher Disease Controlling domain scores; and higher self-perceived mental health would be associated with higher Internal and Social Interaction domain scores.

Results

The sample included respondents with a diverse range of chronic conditions and social circumstances (Table 2). Younger adults and females are likely overrepresented relative to the adult population of persons with chronic conditions, and a large proportion of respondents, especially younger respondents, reported mental health conditions. Chronic conditions such as cardiovascular disease and chronic obstructive pulmonary disease (COPD) are likely underrepresented relative to the adult population of persons with chronic conditions, while many rarer chronic conditions are likely overrepresented.

Item selection

Thirty-six items, with 4–8 items per domain, were selected for the PRISM-CC (Table 1). Early in the selection process, items were most frequently eliminated because of poor item-rest correlations and weak factor loadings, combined with evidence of poor face validity. Later in the item selection process, content validity (e.g., redundant items), poor discriminant validity, and evidence of differential item functioning were more common reasons for item elimination. We selected items for each domain that best captured the construct while resulting in very good to excellent model fit. See the Supplementary Appendix for a detailed description of the item selection.

Face and content validity of the selected items were assessed by our team to be high for all domains except the Resource domain, which was assessed as moderate because some content of the domain definition was not captured. None of the selected domain items explicitly ask about locating or using community and/or social services, or informal supports, though the fourth item may do so implicitly. Two potential items about self-perceived difficulty in finding “community” and “social” services were eliminated due to ambiguity in meaning. Potential items to measure difficulty accessing informal supports (e.g., “When I need to, I ask family or friends to help me.”) were eliminated due to poor discriminant validity with the Social Interaction domain.

Construct validity and internal consistency

Evidence for the structural validity of the PRISM-CC is strong. The data provided excellent fit to our hypothesized correlated factors CFA model and the corresponding multidimensional IRT graded response model. The seven-factor CFA model had an RMSEA of 0.057 (90% CI 0.055–0.060), an SRMR of 0.039, a CFI of 0.951, and a TLI of 0.947. Table 3 shows that items for all domains had high measurement quality. All standardized loadings were high or very high (19 of 36 are very high), and nearly all IRT graded response item discrimination parameters were very high (34 of 36). Infit and outfit statistics were generally close to 1.0, and all met fit criteria. Measurement quality for items in the Healthy Behaviors domain was weaker, on average, than for other domains.

Evidence of model fit and internal consistency was also assessed individually for each PRISM-CC domain (Table 4). Internal consistency, as measured by Cronbach’s alpha and marginal reliability, exceeded 0.80 for all domains. The CFI, TLI and SRMR fit statistics indicated excellent fit for all domains in both CFA and IRT models. However, the upper confidence limits of the RMSEAs for both the CFA and IRT models were higher than the commonly used cut-off of 0.06. Examination of residual correlations did not indicate localized areas of poor fit in any domain, and modification indices did not identify substantial model misspecifications. Sensitivity analyses (see Supplementary Appendix) indicated that non-reflective responses resulted in underestimation of the fit of the PRISM-CC: dropping potentially non-reflective respondents increased standardized factor loadings by 0.05 or more and substantially improved indices of model fit, including the RMSEA. Sensitivity analyses re-estimating all CFA models using multiply imputed missing and not applicable responses resulted in small changes to standardized factor loadings and fit statistics but did not alter any study conclusions.

Estimated correlations between PRISM-CC domains were positive and exceeded 0.50 for both the CFA and IRT graded response models (Table 5). The Healthy Behaviors domain had particularly high estimated correlations with the Internal (~ 0.85) and Activity (~ 0.87) domains. However, the conceptual distinction between these domains was clear, and statistical tests for each pair, comparing a two-factor model with a constrained one-factor model, indicated better fit for the two-factor models. Furthermore, modification indices for the CFA model did not identify unspecified cross-loadings contributing to high correlations between these domains.
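The screening and pairwise test described above can be sketched as follows, in Python and for illustration only. `highly_correlated_pairs` applies the ≥ 0.80 screen from the Methods, and `naive_chi2_difference_p` compares a one-factor with a two-factor model using an ordinary 1-df chi-square difference. This is a simplification and an assumption about the test used: with DWLS estimation on polychoric correlations, a raw difference of test statistics is not chi-square distributed, and software such as lavaan's `lavTestLRT()` applies a scaled difference test instead. Fixing a correlation at 1 also places the null hypothesis on the boundary of the parameter space, which the naive test ignores.

```python
import math


def highly_correlated_pairs(corr: dict[tuple[str, str], float],
                            threshold: float = 0.80) -> list[tuple[str, str]]:
    """Domain pairs whose estimated correlation is at or above `threshold`."""
    return [pair for pair, r in corr.items() if r >= threshold]


def naive_chi2_difference_p(chi2_one_factor: float, chi2_two_factor: float) -> float:
    """p-value for a 1-df chi-square difference test (one- vs two-factor model).

    Collapsing two factors into one fixes their correlation at 1, removing one
    free parameter, so the difference has 1 degree of freedom. For df = 1 the
    chi-square survival function equals erfc(sqrt(x / 2)).
    """
    diff = max(chi2_one_factor - chi2_two_factor, 0.0)
    return math.erfc(math.sqrt(diff / 2))


pairs = highly_correlated_pairs({("Healthy Behaviors", "Internal"): 0.85,
                                 ("Healthy Behaviors", "Activity"): 0.87})
print(pairs)
print(naive_chi2_difference_p(250.0, 200.0))  # toy chi-square values
```

A small p-value means collapsing the pair into a single factor worsens fit, which is the pattern the text reports for both Healthy Behaviors pairs.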

Testing of pre-specified hypotheses provided further evidence of construct validity. All hypotheses were confirmed (Tables 5 and 6). There were strong and statistically significant associations between the SEMCD and each of the PRISM-CC domains (Table 5). As shown in Table 6, higher education was associated with higher Process, Resource, and Healthy Behaviors domain scores; a larger number of chronic conditions was associated with lower Disease Controlling domain scores; higher self-perceived general health was associated with higher Disease Controlling domain scores; and higher self-perceived mental health was associated with higher Internal and Social Interaction domain scores.

Discussion

Key findings and limitations

The PRISM-CC includes 36 items with 4–8 items per domain. All domains, with the partial exception of the Resource domain, have good face and content validity when measured against our conceptual framework. The operational definition of the Resource domain was “self-perceived success in seeking, pursuing and/or managing needed formal or informal supports and resources”. Items retained in this domain measure success in managing formal health care but do not explicitly capture success managing informal supports (help from family and friends) or accessing community and social services. Items generated to measure management of informal supports had poor discriminant validity with the Social Interaction domain, and so were not retained. This suggests that management of informal supports, while important to patients, is indistinguishable from the management of social contacts and networks, which is captured by the Social Interaction domain. As well, items expected to measure difficulty managing community and social service supports performed poorly and were not retained, possibly because respondents defined social and community services differently across contexts.

Evidence of the PRISM-CC’s structural validity, one aspect of construct validity, is strong. Our data had very good to excellent fit to the pre-specified, correlated factor CFA and IRT models. While the upper confidence limits of the RMSEA in many of the domain-specific CFA and IRT models were above the widely used cut-off of 0.06, we do not consider this a serious concern. An RMSEA greater than 0.06 may still indicate good fit when standardized factor loadings exceed 0.70, which is the case for most of our items [40]. Moreover, sensitivity analyses of the potential impact of non-reflective responses to survey questions suggest that our estimates of standardized factor loadings and model fit are likely conservative relative to what would be obtained when the PRISM-CC is applied in clinical settings.

Sample characteristics may limit the generalizability of results. While we had a large and diverse sample, data came from people living in the community, not from clinical settings. The online nature of the survey likely contributed to a relatively young sample, whose chronic disease patterns may differ from those of older and/or clinical populations. Further assessment of measurement equivalence across patients with different sociodemographic and chronic disease attributes is therefore needed.

Confirmation of a priori, hypothesized associations between PRISM-CC domains and variables, as detailed in the study protocol [19], provides additional evidence of construct validity. While this is encouraging, additional evidence of validity is needed. The critical next step is to assess the ability of the PRISM-CC to inform patient care, measure clinically meaningful change, and evaluate the critical ingredients of self-management interventions.

While strong evidence for the internal consistency of each domain was obtained, the test–retest reliability, measurement error, and responsiveness of the PRISM-CC remain to be assessed.

Clinical utility

Self-management is the work done by people themselves [3, 5,6,7]. The TEDSS articulates that work in seven domains, identified and described by people living with chronic conditions and labelled using non-medical language [9, 10]. Built on this foundation, the PRISM-CC is expected to be understandable, intuitive, and relevant to patients’ experiences. It is not a measure of underlying ability or capacity, but rather patients’ personal assessment of their success/difficulty within each domain. For example, difficulty in the Disease Controlling domain represents perceived difficulty managing “medications and treatments, monitoring symptoms and limiting complications” regardless of whether it is the result of managing a complex medication and treatment regimen or having insufficient knowledge to manage a single medication. In either case, and regardless of the underlying reason, perceived difficulty is uncovered so that self-management support can be offered.

By measuring perceived difficulty, the PRISM-CC stands in contrast to tools that focus on single constructs believed to bolster self-management, such as self-efficacy or patient activation [27, 47]. However, many other factors, such as health literacy, social support networks, access to transportation, use of technologies or tools, and the development of specific skills or strategies, also enable self-management [48,49,50]. The PRISM-CC facilitates a patient-centered approach in which patients identify areas of difficulty. If indicated, contributing factors can then be investigated. Given that the success of most self-management support interventions depends on the actions of patients, and often necessitates behaviour change, patients are most likely to engage in interventions that address areas they perceive as difficult [51].

With its multidimensionality and focus on patient-perceived difficulty, the PRISM-CC is positioned to facilitate tailored interventions, addressing a concern with “one size fits all” self-management interventions [14, 52, 53]. Its seven domains explicate, from the perspective of the patient, strengths or challenges in self-managing the traditional areas of medical management (Disease Controlling and Healthy Behaviors domains), role management (Activity and Social Interaction domains), and emotional management (Internal domain), while also assessing problem solving (Process domain) and aspects of health navigation (Resource domain), providing valuable insight into how to tailor support [6]. For example, a newly diagnosed patient who identifies difficulty finding information, problem solving, and decision-making (Process domain) but no difficulty with routine exercise and healthy food choices (Healthy Behaviors domain) needs different support than a patient with multiple conditions and limited mobility who self-reports the greatest difficulty in the Activity and Social Interaction domains. When the PRISM-CC is used to pinpoint areas of patient concern, coupled with skillful clinical assessment, tailored interventions can be offered and appropriate referrals made. Success or difficulty in some domains will likely cluster together, as indicated by the high correlations between them.

The PRISM-CC also has the potential to improve the functioning and patient-centeredness of multidisciplinary and interprofessional teams, for example by informing the assignment of disciplinary case managers based on patient needs and provider scope of practice. This work could be strengthened by using the Team Assessment of Self-Management Strategies (TASMS), also based on the TEDSS framework, to better understand the types of self-management support teams provide [54].

Finally, the PRISM-CC is expected to be feasible in both research and clinical settings, and for individuals living with one or multiple chronic conditions. It is quick to administer (36 items) and requires no specific training. Visualized reporting tools are under development, and licensing and apps for administration and linkage to electronic clinical records are being investigated.

Conclusions

The PRISM-CC was developed through a process grounded in a validated conceptual model, followed by systematic item generation and item selection. This study provides evidence for the internal consistency and construct validity of the PRISM-CC. Data from a large and diverse sample of adults with chronic conditions were found to have very good to excellent fit to the conceptual model. PRISM-CC domains were confirmed to have the hypothesized associations with other measures and variables. The PRISM-CC holds promise for use in clinical care, program evaluation, and self-management research, but further validation in these settings is needed.

References

Schulman-Green, D., Jaser, S., Martin, F., Alonzo, A., Grey, M., McCorkle, R., Redeker, N. S., Reynolds, N., & Whittemore, R. (2012). Processes of self-management in chronic illness. Journal of Nursing Scholarship, 44(2), 136–144. https://doi.org/10.1111/j.1547-5069.2012.01444.x

Van de Velde, D., De Zutter, F., Satink, T., Costa, U., Janquart, S., Senn, D., & De Vriendt, P. (2019). Delineating the concept of self-management in chronic conditions: A concept analysis. BMJ Open, 9(7), e027775. https://doi.org/10.1136/bmjopen-2018-027775

Corrigan, J. M., Adams, K., & Greiner, A. C. (2004). 1st Annual Crossing the Quality Chasm Summit: A Focus on Communities. National Academies Press.

Simmons, L. A., Wolever, R. Q., Bechard, E. M., & Snyderman, R. (2014). Patient engagement as a risk factor in personalized health care: A systematic review of the literature on chronic disease. Genome Medicine, 6(2), 16. https://doi.org/10.1186/gm533

Liddy, C., Blazkho, V., & Mill, K. (2014). Challenges of self-management when living with multiple chronic conditions: Systematic review of the qualitative literature. Canadian Family Physician, 60(12), 1123–1133.

Corbin, J., & Strauss, A. (1985). Managing chronic illness at home: Three lines of work. Qualitative Sociology, 8(3), 224–247.

Audulv, Å., Packer, T., Hutchinson, S., Roger, K. S., & Kephart, G. (2016). Coping, adapting or self-managing—what is the difference? A concept review based on the neurological literature. Journal of Advanced Nursing, 72(11), 2629–2643. https://doi.org/10.1111/jan.13037

Audulv, Å., Asplund, K., & Norbergh, K.-G. (2012). The integration of chronic illness self-management. Qualitative Health Research, 22(3), 332–345. https://doi.org/10.1177/1049732311430497

Audulv, Å., Ghahari, S., Kephart, G., Warner, G., & Packer, T. L. (2019). The Taxonomy of Everyday Self-management Strategies (TEDSS): A framework derived from the literature and refined using empirical data. Patient Education and Counseling, 102(2), 367–375. https://doi.org/10.1016/j.pec.2018.08.034

Audulv, Å., Hutchinson, S., Warner, G., Kephart, G., Versnel, J., & Packer, T. L. (2021). Managing everyday life: Self-management strategies people use to live well with neurological conditions. Patient Education and Counseling, 104(2), 413–421. https://doi.org/10.1016/j.pec.2020.07.025

Grey, M., Knafl, K., & McCorkle, R. (2006). A framework for the study of self- and family management of chronic conditions. Nursing Outlook, 54(5), 278–286. https://doi.org/10.1016/j.outlook.2006.06.004

Audulv, Å. (2013). The over time development of chronic illness self-management patterns: A longitudinal qualitative study. BMC Public Health, 13, 452. https://doi.org/10.1186/1471-2458-13-452

Satink, T., Cup, E. H. C., de Swart, B. J. M., & Nijhuis-van der Sanden, M. W. G. (2015). How is self-management perceived by community living people after a stroke? A focus group study. Disability and Rehabilitation, 37(3), 223–230. https://doi.org/10.3109/09638288.2014.918187

Bratzke, L. C., Muehrer, R. J., Kehl, K. A., Lee, K. S., Ward, E. C., & Kwekkeboom, K. L. (2014). Self-management priority setting and decision-making in adults with multimorbidity: A narrative review of literature. International Journal of Nursing Studies. https://doi.org/10.1016/j.ijnurstu.2014.10.010

Packer, T. L., Fracini, A., Audulv, Å., Alizadeh, N., van Gaal, B. G. I., Warner, G., & Kephart, G. (2018). What we know about the purpose, theoretical foundation, scope and dimensionality of existing self-management measurement tools: A scoping review. Patient Education and Counseling, 101(4), 579–595. https://doi.org/10.1016/j.pec.2017.10.014

Hudon, É., Hudon, C., Lambert, M., Bisson, M., & Chouinard, M. C. (2021). Generic self-reported questionnaires measuring self-management: A scoping review. Clinical Nursing Research, 30(6), 855–865. https://doi.org/10.1177/1054773820974149

Hopman, P., Schellevis, F. G., & Rijken, M. (2016). Health-related needs of people with multiple chronic diseases: Differences and underlying factors. Quality of Life Research, 25(3), 651–660. https://doi.org/10.1007/s11136-015-1102-8

Kephart, G., Packer, T. L., Audulv, Å., & Warner, G. (2019). The structural and convergent validity of three commonly used measures of self-management in persons with neurological conditions. Quality of Life Research, 28(2), 545–556. https://doi.org/10.1007/s11136-018-2036-8

Packer, T., Kephart, G., Audulv, Å., Keddy, A., Warner, G., Peacock, K., & Sampalli, T. (2020). Protocol for development, calibration and validation of the Patient-Reported Inventory of Self-Management of Chronic Conditions (PRISM-CC). BMJ Open, 10(9), e036776. https://doi.org/10.1136/bmjopen-2020-036776

Schulman-Green, D., Jaser, S. S., Park, C., & Whittemore, R. (2016). A metasynthesis of factors affecting self-management of chronic illness. Journal of Advanced Nursing, 72(7), 1469–1489. https://doi.org/10.1111/jan.12902

Eton, D. T., Ridgeway, J. L., Egginton, J. S., Tiedje, K., Linzer, M., Boehm, D. H., Poplau, S., Ramalho de Oliveira, D., Odell, L., Montori, V. M., May, C. R., & Anderson, R. T. (2015). Finalizing a measurement framework for the burden of treatment in complex patients with chronic conditions. Patient Related Outcome Measures, 6, 117–126. https://doi.org/10.2147/PROM.S78955

PROMIS Instrument Development and Validation Scientific Standards Version 2.0 (revised May 2013).

Gagnier, J. J., Lai, J., Mokkink, L. B., & Terwee, C. B. (2021). COSMIN reporting guideline for studies on measurement properties of patient-reported outcome measures. Quality of Life Research, 30(8), 2197–2218. https://doi.org/10.1007/s11136-021-02822-4

Mokkink, L. B., de Vet, H. C. W., Prinsen, C. A. C., Patrick, D. L., Alonso, J., Bouter, L. M., & Terwee, C. B. (2018). COSMIN risk of bias checklist for systematic reviews of patient-reported outcome measures. Quality of Life Research, 27(5), 1171–1179. https://doi.org/10.1007/s11136-017-1765-4

Drennan, J. (2003). Cognitive interviewing: Verbal data in the design and pretesting of questionnaires. Journal of Advanced Nursing, 42(1), 57–63. https://doi.org/10.1046/j.1365-2648.2003.02579.x

Peterson, C. H., Peterson, N. A., & Powell, K. G. (2017). Cognitive interviewing for item development: Validity evidence based on content and response processes. Measurement and Evaluation in Counseling and Development, 50(4), 217–223. https://doi.org/10.1080/07481756.2017.1339564

Lorig, K., Chastain, R. L., Ung, E., Shoor, S., & Holman, H. R. (1989). Development and evaluation of a scale to measure perceived self-efficacy in people with arthritis. Arthritis and Rheumatism, 32(1), 37–44.

Ritter, P. L., & Lorig, K. (2014). The English and Spanish Self-Efficacy to Manage Chronic Disease Scale measures were validated using multiple studies. Journal of Clinical Epidemiology, 67(11), 1265–1273. https://doi.org/10.1016/j.jclinepi.2014.06.009

Layes, A., Asada, Y., & Kephart, G. (2012). Whiners and deniers—what does self-rated health measure? Social Science and Medicine, 75(1), 1–9. https://doi.org/10.1016/j.socscimed.2011.10.030

Ahmad, F., Jhajj, A. K., Stewart, D. E., Burghardt, M., & Bierman, A. S. (2014). Single item measures of self-rated mental health: A scoping review. BMC Health Services Research, 14, 398. https://doi.org/10.1186/1472-6963-14-398

Bombak, A. E. (2013). Self-rated health and public health: A critical perspective. Frontiers in Public Health, 1, 15. https://doi.org/10.3389/fpubh.2013.00015

Brown, T. A. (2015). Confirmatory factor analysis for applied research. Guilford.

Kamata, A., & Bauer, D. J. (2008). A note on the relation between factor analytic and item response theory models. Structural Equation Modeling: A Multidisciplinary Journal, 15(1), 136–153. https://doi.org/10.1080/10705510701758406

Rosseel, Y. (2012). lavaan: An R package for structural equation modeling. Journal of Statistical Software, 48(2), 1–36. https://doi.org/10.18637/jss.v048.i02

Gana, K., & Broc, G. (2019). Structural equation modeling with Lavaan. Wiley.

Chalmers, R. P. (2012). mirt: A multidimensional item response theory package for the R environment. Journal of Statistical Software, 48(1), 1–29. https://doi.org/10.18637/jss.v048.i06

Reckase, M. D. (2011). Multidimensional item response theory. Springer.

Holman, R., Glas, C. A., Lindeboom, R., Zwinderman, A. H., & de Haan, R. J. (2004). Practical methods for dealing with ‘not applicable’ item responses in the AMC Linear Disability Score project. Health and Quality of Life Outcomes, 2, 29. https://doi.org/10.1186/1477-7525-2-29

van Buuren, S., & Groothuis-Oudshoorn, K. (2011). mice: Multivariate imputation by chained equations in R. Journal of Statistical Software, 45(3), 1–67. https://doi.org/10.18637/jss.v045.i03

McNeish, D., An, J., & Hancock, G. R. (2018). The thorny relation between measurement quality and fit index cutoffs in latent variable models. Journal of Personality Assessment, 100(1), 43–52. https://doi.org/10.1080/00223891.2017.1281286

Baker, F. B., & Kim, S.-H. (2017). The basics of item response theory using R. Springer.

Mokkink, L. B., Terwee, C. B., Patrick, D. L., Alonso, J., Stratford, P. W., Knol, D. L., Bouter, L. M., & de Vet, H. C. (2010). The COSMIN study reached international consensus on taxonomy, terminology, and definitions of measurement properties for health-related patient-reported outcomes. Journal of Clinical Epidemiology, 63(7), 737–745. https://doi.org/10.1016/j.jclinepi.2010.02.006

Hu, L., & Bentler, P. M. (1999). Cutoff criteria for fit indexes in covariance structure analysis: Conventional criteria versus new alternatives. Structural Equation Modeling: A Multidisciplinary Journal, 6(1), 1–55. https://doi.org/10.1080/10705519909540118

Smith, R. M., Schumacker, R. E., & Bush, M. J. (1998). Using item mean squares to evaluate fit to the Rasch model. Journal of Outcome Measurement, 2(1), 66–78.

Beck, M. F., Albano, A. D., & Smith, W. M. (2019). Person-fit as an index of inattentive responding: A comparison of methods using polytomous survey data. Applied Psychological Measurement, 43(5), 374–387. https://doi.org/10.1177/0146621618798666

Schneider, S., May, M., & Stone, A. A. (2018). Careless responding in internet-based quality of life assessments. Quality of Life Research, 27(4), 1077–1088. https://doi.org/10.1007/s11136-017-1767-2

Hibbard, J. H., Mahoney, E. R., Stockard, J., & Tusler, M. (2005). Development and testing of a short form of the patient activation measure. Health Services Research, 40(6 Pt 1), 1918–1930. https://doi.org/10.1111/j.1475-6773.2005.00438.x

Glasgow, R. E., Huebschmann, A. G., Krist, A. H., & Degruy, F. V. (2019). An Adaptive, Contextual, Technology-Aided Support (ACTS) system for chronic illness self-management. Milbank Quarterly, 97(3), 669–691. https://doi.org/10.1111/1468-0009.12412

Hyman, I., Shakya, Y., Jembere, N., Gucciardi, E., & Vissandjée, B. (2017). Provider- and patient-related determinants of diabetes self-management among recent immigrants: Implications for systemic change. Canadian Family Physician, 63(2), e137–e144.

Koetsenruijter, J., van Eikelenboom, N., van Lieshout, J., Vassilev, I., Lionis, C., Todorova, E., Portillo, M. C., Foss, C., Serrano, G. M., Roukova, P., Angelaki, A., Mujika, A., Knutsen, I. R., Rogers, A., & Wensing, M. (2016). Social support and self-management capabilities in diabetes patients: An international observational study. Patient Education and Counseling, 99(4), 638–643. https://doi.org/10.1016/j.pec.2015.10.029

Michie, S., van Stralen, M. M., & West, R. (2011). The behaviour change wheel: A new method for characterising and designing behaviour change interventions. Implementation Science, 6, 42. https://doi.org/10.1186/1748-5908-6-42

Coulter, A., Entwistle, V. A., Eccles, A., Ryan, S., Shepperd, S., & Perera, R. (2015). Personalised care planning for adults with chronic or long-term health conditions. Cochrane Database of Systematic Reviews, 2015(3), CD010523. https://doi.org/10.1002/14651858.CD010523.pub2

Kastner, M., Hayden, L., Wong, G., Lai, Y., Makarski, J., Treister, V., Chan, J., Lee, J. H., Ivers, N. M., Holroyd-Leduc, J., & Straus, S. E. (2019). Underlying mechanisms of complex interventions addressing the care of older adults with multimorbidity: A realist review. BMJ Open, 9(4), e025009. https://doi.org/10.1136/bmjopen-2018-025009

Keddy, A. C., Packer, T. L., Audulv, Å., Sutherland, L., Sampalli, T., Edwards, L., & Kephart, G. (2021). The Team Assessment of Self-Management Support (TASMS): A new approach to uncovering how teams support people with chronic conditions. Healthcare Management Forum, 34(1), 43–48. https://doi.org/10.1177/0840470420942262

Acknowledgements

Kylie Peacock and Adele Mansour were instrumental in survey development and recruitment. We are grateful for the time and insights of our two patient advisors. Tara Sampalli, Shannon Ryan Carson, Rob Dickson, Lindsay Sutherland, Lynn Edwards and other administrators and staff in Nova Scotia Health have given their time and expertise to support the development of the PRISM-CC.

Funding

This research is funded by the Canadian Institutes of Health Research (Award Number 152932) and the Nova Scotia Health Research Foundation (Award Number 222).

Author information

Contributions

Conceptualisation: GK, TP, AA, and GW; Methodology: GK, TP, AA, GW, and AR; Software: GK, AR, YC, and IO; Validation: AR, YC, and IO; Formal analysis: GK, AR, YC, and IO; Project administration: TP and GK; Data curation: TP, GK, AA, and AR; Supervision: GK and TP; Funding acquisition: TP, GK, AA, and GW; Methodology: GK, TP, and GW; Writing, reviewing and editing: all authors.

Ethics declarations

Conflict of interest

The authors have no relevant financial or non-financial interests to disclose.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Below is the link to the electronic supplementary material.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Kephart, G., Packer, T., Audulv, Å. et al. Item selection, scaling and construct validation of the Patient-Reported Inventory of Self-Management of Chronic Conditions (PRISM-CC) measurement tool in adults. Qual Life Res 31, 2867–2880 (2022). https://doi.org/10.1007/s11136-022-03165-4

DOI: https://doi.org/10.1007/s11136-022-03165-4