Abstract

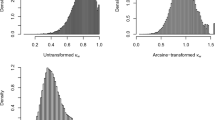

Many intercoder reliability indices exist, and the choice among them remains debated. This study uses a Monte Carlo simulation to test empirically the determinants of these agreement indices. The chance agreement of Bennett’s S is affected only by the number of categories; S is therefore a category-based index. The chance agreements of Krippendorff’s \(\alpha \), Scott’s \(\pi \) and Cohen’s \(\kappa \) are affected by the marginal distribution, the level of difficulty and the interaction between them, yet the difficulty level influences their chance agreements abnormally. These three indices are hence, in general, distribution-based indices. Gwet’s \(AC_1\) reverses the direction of the three aforementioned indices, but its chance agreement is additionally affected by the number of categories and by the interaction between the number of categories and the marginal distribution. \(AC_1\) can therefore be placed in a class based on the number of categories, the marginal distribution and the level of difficulty. Theoretical and practical implications are discussed.
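To illustrate what "chance agreement" refers to, the following minimal Python sketch computes the chance-agreement term of four of the indices for two coders. It uses the standard textbook definitions of these terms, not the simulation code from the article's appendix. The function names and the toy data are hypothetical. Krippendorff’s \(\alpha \) is left out because its chance term is computed from pooled counts with a small-sample correction.

```python
from collections import Counter


def marginals(codes, categories):
    """Proportion of units that one coder assigned to each category."""
    counts = Counter(codes)
    n = len(codes)
    return [counts[c] / n for c in categories]


def chance_agreements(coder1, coder2, categories):
    """Chance-agreement terms for two coders (standard definitions)."""
    k = len(categories)
    p1 = marginals(coder1, categories)
    p2 = marginals(coder2, categories)
    # Average of the two coders' marginal proportions per category.
    mean = [(a + b) / 2 for a, b in zip(p1, p2)]
    return {
        "S": 1 / k,                                       # depends on k only
        "pi": sum(m * m for m in mean),                   # pooled marginals
        "kappa": sum(a * b for a, b in zip(p1, p2)),      # each coder's own marginals
        "AC1": sum(m * (1 - m) for m in mean) / (k - 1),  # marginals and k
    }


# Hypothetical toy data: ten units, two categories, skewed marginals.
coder1 = ["a"] * 9 + ["b"] * 1
coder2 = ["a"] * 8 + ["b"] * 2
result = chance_agreements(coder1, coder2, ["a", "b"])
print(result)
```

With these skewed toy marginals, the chance terms of \(\pi \) and \(\kappa \) are high (about 0.745 and 0.74), the chance term of \(AC_1\) is low (about 0.255), and that of S stays at 1/k = 0.5.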

Notes

Finn’s r is designed for continuous ratings, but it can be used for binary nominal data.

Its formula for chance agreement is \(\frac{1}{k^{(n-1)}}\), where n is the number of raters. Therefore, it is the only one in the S family applicable to multiple raters.
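The formula can be checked numerically. The sketch below assumes that k denotes the number of categories and that "chance agreement" means all n raters picking the same category when each rater chooses uniformly at random. Both are interpretations of this note, not statements taken from the article. The function names are hypothetical.

```python
from itertools import product


def s_family_chance_agreement(k, n):
    """Closed form from the note: 1 / k^(n-1)."""
    return 1 / k ** (n - 1)


def enumerated_chance_agreement(k, n):
    """Share of all k^n equally likely rating patterns that are unanimous."""
    outcomes = list(product(range(k), repeat=n))
    unanimous = sum(1 for o in outcomes if len(set(o)) == 1)
    return unanimous / len(outcomes)


# Two raters reduce to the familiar 1/k of Bennett's S.
print(s_family_chance_agreement(2, 2))
# Brute-force enumeration matches the closed form for 3 categories, 4 raters.
print(enumerated_chance_agreement(3, 4), s_family_chance_agreement(3, 4))
```

Under this reading, exactly k of the \(k^n\) rating patterns are unanimous, which gives \(k / k^n = 1/k^{(n-1)}\).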

Although \(I_r\) cannot be classified into the S family according to Feng (2012), the two share the same formula for calculating chance agreement. Consequently, the results for S also apply to \(I_r\).

The correlation with the unfolded distribution is higher, but their relationship is actually nonlinear. Therefore, correlation is not applicable.

References

Aickin, M.: Maximum likelihood estimation of agreement in the constant predictive probability model, and its relation to Cohen’s kappa. Biometrics 46(2), 293–302 (1990). http://www.jstor.org/stable/2531434

Bennett, E.M., Alpert, R., Goldstein, A.C.: Communications through limited-response questioning. Public Opin. Q. 18(3), 303–308 (1954). doi:10.1086/266520. http://poq.oxfordjournals.org/content/18/3/303.abstract, http://poq.oxfordjournals.org/content/18/3/303.full.pdf+html

Brennan, R., Prediger, D.: Coefficient kappa: Some uses, misuses, and alternatives. Educ. Psychol. Meas. 41(3), 687–699 (1981)

Cohen, J.: A coefficient of agreement for nominal scales. Educ. Psychol. Meas. 20(1), 37–46 (1960). doi:10.1177/001316446002000104

Feng, G.C.: Factors affecting intercoder reliability: a Monte Carlo experiment. Qual. Quant., 1–24 (2012). doi:10.1007/s11135-012-9745-9

Finn, R.: A note on estimating the reliability of categorical data. Educ. Psychol. Meas. 30(1), 71–76 (1970). doi:10.1177/001316447003000106

Grove, W., Andreasen, N., McDonald-Scott, P., Keller, M., Shapiro, R.: Reliability studies of psychiatric diagnosis: theory and practice. Arch. Gen. Psychiatry 38(4), 408 (1981). doi:10.1001/archpsyc.1981.01780290042004

Gwet, K.: Inter-rater reliability: dependency on trait prevalence and marginal homogeneity. Stat. Methods Inter Rater Reliab. Assess. Ser. 2, 1–9 (2002)

Gwet, K.: Computing inter-rater reliability and its variance in the presence of high agreement. Br. J. Math. Stat. Psychol. 61(1), 29–48 (2008)

Gwet, K.: Handbook of Inter-rater Reliability: A Definitive Guide to Measuring the Extent of Agreement Among Multiple Raters. Advanced Analytics, LLC, Gaithersburg (2010)

Hayes, A., Krippendorff, K.: Answering the call for a standard reliability measure for coding data. Commun. Methods Meas. 1(1), 77–89 (2007)

Holley, J., Guilford, J.: A note on the G index of agreement. Educ. Psychol. Meas. 24(4), 749–753 (1964). doi:10.1177/001316446402400402. http://epm.sagepub.com/content/24/4/749.short, http://epm.sagepub.com/content/24/4/749.full.pdf+html

Holsti, O.: Content Analysis for the Social Sciences and Humanities. Addison-Wesley, Reading (1969)

Janson, S., Vegelius, J.: On generalizations of the G index and the phi coefficient to nominal scales. Multivar. Behav. Res. 14(2), 255–269 (1979). doi:10.1207/s15327906mbr1402_9

Krippendorff, K.: Bivariate agreement coefficients for reliability of data. Sociol. Methodol. 2, 139–150 (1970). http://www.jstor.org/stable/270787

Krippendorff, K.: Content Analysis: An Introduction to Its Methodology, 2nd edn. Sage Publications, Thousand Oaks (2004a)

Krippendorff, K.: Reliability in content analysis: some common misconceptions and recommendations. Hum. Commun. Res. 30(3), 411–433 (2004b). doi:10.1111/j.1468-2958.2004.tb00738.x

Krippendorff, K.: Agreement and information in the reliability of coding. Commun. Methods Meas. 5(2), 93–112 (2011). doi:10.1080/19312458.2011.568376

Krippendorff, K.: A dissenting view on so-called paradoxes of reliability coefficients. Commun. Yearbook 36, 519–591 (2012)

Lord, F., Novick, M., Birnbaum, A.: Statistical Theories of Mental Test Scores. Addison-Wesley, Don Mills (1968)

Maxwell, A.E.: Coefficients of agreement between observers and their interpretation. Br. J. Psychiatry 130(1), 79–83 (1977). doi:10.1192/bjp.130.1.79. http://bjp.rcpsych.org/content/130/1/79.abstract, http://bjp.rcpsych.org/content/130/1/79.full.pdf+html

Partchev, I.: Irtoys: simple interface to the estimation and plotting of IRT models. R package version 01 2 (2009)

Perreault Jr., W.D., Leigh, L.E.: Reliability of nominal data based on qualitative judgments. J. Market. Res. 26(2), 135–148 (1989). http://www.jstor.org/stable/3172601

Potter, W., Levine-Donnerstein, D.: Rethinking validity and reliability in content analysis. J. Appl. Commun. Res. 27(3), 258–284 (1999)

Schuster, C., Smith, D.A.: Indexing systematic rater agreement with a latent-class model. Psychol. Methods 7(3), 384–395 (2002). http://www.sciencedirect.com/science/article/pii/S1082989X02001900

Scott, W.: Reliability of content analysis: the case of nominal scale coding. Public Opin. Q. 19, 321–325 (1955). doi:10.1086/266577

Shrout, P.: Measurement reliability and agreement in psychiatry. Stat. Methods Med. Res. 7(3), 301–317 (1998)

Tinsley, H.E., Weiss, D.J.: Interrater reliability and agreement. In: Tinsley, H., Brown, S. (eds.) Handbook of Applied Multivariate Statistics and Mathematical Modeling, Chap 4, pp. 95–124. Academic Press, San Diego (2000)

Whitehurst, G.: Interrater agreement for journal manuscript reviews. Am. Psychol. 39(1), 22 (1984)

Zhao, X.: A Reliability Index (ai) that Assumes Honest Coders and Variable Randomness. Association for Education in Journalism and Mass Communication, Chicago (2012)

Acknowledgments

The author would like to thank Prof. Xinshu Zhao for his helpful comments and suggestions.

Appendix: R code used in simulation

Cite this article

Feng, G.C. Underlying determinants driving agreement among coders. Qual Quant 47, 2983–2997 (2013). https://doi.org/10.1007/s11135-012-9807-z

DOI: https://doi.org/10.1007/s11135-012-9807-z