Abstract

The basic Bayesian model of credence states, where each individual’s belief state is represented by a single probability measure, has been criticized as psychologically implausible, unable to represent the intuitive distinction between precise and imprecise probabilities, and normatively unjustifiable due to a need to adopt arbitrary, unmotivated priors. These arguments are often used to motivate a model on which imprecise credal states are represented by sets of probability measures. I connect this debate with recent work in Bayesian cognitive science, where probabilistic models are typically provided with explicit hierarchical structure. Hierarchical Bayesian models are immune to many classic arguments against single-measure models. They represent grades of imprecision in probability assignments automatically, have strong psychological motivation, and can be normatively justified even when certain arbitrary decisions are required. In addition, hierarchical models show much more plausible learning behavior than flat representations in terms of sets of measures, which—on standard assumptions about update—rule out simple cases of learning from a starting point of total ignorance.

Notes

Note that the term “imprecise credences” is sometimes used to designate a specific formal model of belief based on sets of probability measures, rather than the phenomenon being modeled. To avoid confusion between the model and the thing modeled, I will avoid the term “imprecise credences” altogether, using “credal imprecision” as a name for the phenomenon and “sets-of-measures” for the formal model under discussion.

Representations based on probability intervals or on upper and lower probabilities can for present purposes be treated as a special case of sets-of-measures models.

A fourth way to deal with the inability of sets-of-measures models to allow serious learning from a starting point of ignorance is suggested by Rinard (2013): we can conclude that a precise formal model of belief states is not possible. This might well be correct, but it would be defeatist to draw this conclusion simply because sets-of-measures models cannot account for simple cases of inductive learning. In particular, the hierarchical approach that I will sketch momentarily gives us another reason not to abandon hope for a formal model of belief.
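
The learning contrast at issue can be illustrated with a minimal sketch. It is not the model developed in the article: it assumes a coin-tossing setup, uses a finite family of near-dogmatic Beta priors as a hypothetical stand-in for the set of all measures, and uses a single uniform distribution over the coin's bias as the simplest case of a measure with an explicit latent chance variable. All function names are illustrative.

```python
import math


def beta_posterior(a, b, heads, tails):
    """Conjugate update: Beta(a, b) prior plus observed coin-toss counts."""
    return a + heads, b + tails


def beta_mean(a, b):
    """Mean of Beta(a, b); this is the predictive probability of heads."""
    return a / (a + b)


def beta_sd(a, b):
    """Standard deviation of Beta(a, b); a rough gauge of imprecision."""
    return math.sqrt(a * b / ((a + b) ** 2 * (a + b + 1)))


def ignorance_set(strength=1e6, steps=999):
    """Assumed stand-in for 'all priors': Beta priors whose means run
    from 0.001 to 0.999, each held with near-dogmatic strength."""
    means = [(i + 1) / (steps + 1) for i in range(steps)]
    return [(strength * m, strength * (1 - m)) for m in means]


def credal_interval(priors, heads, tails):
    """Update every member separately; return the range of P(next = heads)."""
    posts = [beta_mean(*beta_posterior(a, b, heads, tails)) for a, b in priors]
    return min(posts), max(posts)


heads, tails = 80, 20

# Sets-of-measures: the interval of predictive probabilities after the data.
low, high = credal_interval(ignorance_set(), heads, tails)
print(f"set of measures: [{low:.3f}, {high:.3f}]")

# Single measure with a uniform distribution over the latent bias.
a, b = beta_posterior(1, 1, heads, tails)
print(f"single measure: mean {beta_mean(a, b):.3f}, "
      f"sd {beta_sd(1, 1):.3f} -> {beta_sd(a, b):.3f}")
```

Under these assumptions the interval for the set stays close to the whole unit interval after 100 tosses, because the near-dogmatic members barely move, while the single measure both shifts its estimate toward the observed frequency and narrows its spread over the latent bias.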

The part that still hits home is the accusation that precise credence models give rise to “very specific inductive policies” which are not justified by evidence. This is closely related to the impossibility of assumption-free learning noted above, as well as the question of whether and how rules like the Principle of Indifference can be used to justify certain choices of priors. We will return to this issue in Sect. 6 below.

For discussion of richer languages based on probabilistic programming principles that can describe hierarchical Bayesian models with uncertainty over individuals, properties, relations, etc., see, for example, Milch et al. (2007), Goodman et al. (2008, 2016), Tenenbaum et al. (2011), Goodman and Lassiter (2015), Pfeffer (2016), and Icard (2017).

The model is directly inspired by the Microsoft TrueSkill system, which is used to rank Xbox Live players in order to ensure engaging match-ups in online games; see Bishop (2013). It is conceptually close to the more complex tug-of-war model, with quantification and inference over individuals and their properties and relations, that is explored by Gerstenberg and Goodman (2012) and Goodman and Lassiter (2015).

References

Al-Najjar, N. I., & Weinstein, J. (2009). The ambiguity aversion literature: A critical assessment. Economics & Philosophy, 25(3), 249–284.

Bishop, C. M. (2013). Model-based machine learning. Philosophical Transactions of the Royal Society of London A: Mathematical, Physical and Engineering Sciences, 371(1984), 20120222.

Bradley, S. (2014). Imprecise probabilities. In E. N. Zalta (Ed.), The Stanford encyclopedia of philosophy (Winter 2014 ed.).

Danks, D. (2014). Unifying the mind: Cognitive representations as graphical models. Cambridge: MIT Press.

de Finetti, B. (1977). Probabilities of probabilities: A real problem or a misunderstanding? In A. Aykac & C. Brumat (Eds.), New developments in the applications of Bayesian methods. Amsterdam: North-Holland.

Elga, A. (2010). Subjective probabilities should be sharp. Philosophers’ Imprint, 10(5), 1–11.

Ellsberg, D. (1961). Risk, ambiguity, and the Savage axioms. The Quarterly Journal of Economics, 75, 643–669.

Gerstenberg, T., & Goodman, N. D. (2012). Ping pong in church: Productive use of concepts in human probabilistic inference. In Proceedings of the 34th annual conference of the Cognitive Science Society (pp. 1590–1595).

Gigerenzer, G. (1991). How to make cognitive illusions disappear: Beyond heuristics and biases. European Review of Social Psychology, 2(1), 83–115.

Glymour, C. N. (2001). The mind’s arrows: Bayes nets and graphical causal models in psychology. Cambridge: MIT Press.

Goodman, N., Mansinghka, V., Roy, D., Bonawitz, K., & Tenenbaum, J. (2008). Church: A language for generative models. Uncertainty in Artificial Intelligence, 22, 23.

Goodman, N. D., & Lassiter, D. (2015). Probabilistic semantics and pragmatics: Uncertainty in language and thought. In S. Lappin & C. Fox (Eds.), Handbook of contemporary semantic theory (2nd ed.). London: Wiley-Blackwell.

Goodman, N. D., Tenenbaum, J. B., & The ProbMods Contributors. (2016). Probabilistic models of cognition. Retrieved February 14, 2019 from http://probmods.org.

Griffiths, T., Tenenbaum, J. B., & Kemp, C. (2012). Bayesian inference. In R. G. Morrison (Ed.), The Oxford handbook of thinking and reasoning (pp. 22–35). Oxford: Oxford University Press.

Griffiths, T. L., Kemp, C., & Tenenbaum, J. B. (2008). Bayesian models of cognition. In R. Sun (Ed.), Cambridge handbook of computational psychology (pp. 59–100). Cambridge: Cambridge University Press.

Griffiths, T. L., & Tenenbaum, J. B. (2006). Optimal predictions in everyday cognition. Psychological Science, 17(9), 767–773.

Grove, A. J., & Halpern, J. Y. (1998). Updating sets of probabilities. In Proceedings of the fourteenth conference on uncertainty in artificial intelligence (pp. 173–182). Morgan Kaufmann.

Hájek, A., & Smithson, M. (2012). Rationality and indeterminate probabilities. Synthese, 187(1), 33–48.

Halpern, J. Y. (2003). Reasoning about uncertainty. Cambridge: MIT Press.

Hoff, P. D. (2009). A first course in Bayesian statistical methods. Berlin: Springer.

Icard, T. (2017). From programs to causal models. In A. Cremers, T. van Gessel & F. Roelofsen (Eds.), Proceedings of the 21st Amsterdam colloquium (pp. 35–44).

Jaynes, E. (2003). Probability theory: The logic of science. Cambridge: Cambridge University Press.

Jeffrey, R. (1983). Bayesianism with a human face. In J. Earman (Ed.), Testing scientific theories (pp. 133–156). Minneapolis: University of Minnesota Press.

Joyce, J. M. (2005). How probabilities reflect evidence. Philosophical Perspectives, 19(1), 153–178.

Joyce, J. M. (2010). A defense of imprecise credences in inference and decision making. Philosophical Perspectives, 24(1), 281–323.

Kahneman, D., Slovic, P., & Tversky, A. (1982). Judgment under uncertainty: Heuristics and biases. Cambridge: Cambridge University Press.

Keynes, J. M. (1921). A treatise on probability. New York: Macmillan.

Koller, D., & Friedman, N. (2009). Probabilistic graphical models: Principles and techniques. Cambridge: MIT Press.

Kolmogorov, A. (1933). Grundbegriffe der Wahrscheinlichkeitsrechnung. Berlin: Springer.

Körding, K. P., & Wolpert, D. M. (2004). Bayesian integration in sensorimotor learning. Nature, 427(6971), 244–247.

Levi, I. (1974). On indeterminate probabilities. The Journal of Philosophy, 71(13), 391–418.

Levi, I. (1985). Imprecision and indeterminacy in probability judgment. Philosophy of Science, 52(3), 390–409.

Macmillan, N., & Creelman, C. (2005). Detection theory: A user’s guide. London: Lawrence Erlbaum.

Milch, B., Marthi, B., Russell, S., Sontag, D., Ong, D. L., & Kolobov, A. (2007). BLOG: Probabilistic models with unknown objects. In L. Getoor & B. Taskar (Eds.), Introduction to statistical relational learning (pp. 373–398). Cambridge: MIT Press.

Nisbett, R. E., & Wilson, T. D. (1977). Telling more than we can know: Verbal reports on mental processes. Psychological Review, 84(3), 231.

Pearl, J. (1988). Probabilistic reasoning in intelligent systems: Networks of plausible inference. Los Altos: Morgan Kaufmann.

Pearl, J. (2000). Causality: Models, reasoning and inference. Cambridge: Cambridge University Press.

Pedersen, A. P., & Wheeler, G. (2014). Demystifying dilation. Erkenntnis, 79(6), 1305–1342.

Pfeffer, A. (2016). Practical probabilistic programming. New York: Manning Publications.

Rinard, S. (2013). Against radical credal imprecision. Thought: A Journal of Philosophy, 2(2), 157–165.

Russell, S., & Norvig, P. (2010). Artificial intelligence: A modern approach. Englewood Cliffs: Prentice Hall.

Seidenfeld, T., & Wasserman, L. (1993). Dilation for sets of probabilities. The Annals of Statistics, 21, 1139–1154.

Simeone, O. (2017). A brief introduction to machine learning for engineers. arXiv:1709.02840v1.

Sloman, S. A. (2005). Causal models: How we think about the world and its alternatives. Oxford: Oxford University Press.

Spirtes, P., Glymour, C., & Scheines, R. (1993). Causation, prediction, and search. Cambridge: MIT Press.

Tenenbaum, J. B., Kemp, C., Griffiths, T. L., & Goodman, N. D. (2011). How to grow a mind: Statistics, structure, and abstraction. Science, 331(6022), 1279–1285.

Trommershäuser, J., Maloney, L. T., & Landy, M. S. (2008). Decision making, movement planning and statistical decision theory. Trends in Cognitive Sciences, 12(8), 291–297.

Tversky, A., & Kahneman, D. (1974). Judgment under uncertainty: Heuristics and biases. Science, 185(4754), 1124–1131.

van Fraassen, B. C. (1990). Figures in a probability landscape. In J. Dunn & A. Gupta (Eds.), Truth or consequences (pp. 345–356). Berlin: Springer.

van Fraassen, B. C. (2006). Vague expectation value loss. Philosophical Studies, 127(3), 483–491.

Vul, E., Goodman, N., Griffiths, T., & Tenenbaum, J. (2014). One and done? Optimal decisions from very few samples. Cognitive Science, 38(4), 599–637.

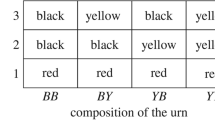

Walley, P. (1996). Inferences from multinomial data: Learning about a bag of marbles. Journal of the Royal Statistical Society, Series B (Methodological), 58, 3–57.

White, R. (2010). Evidential symmetry and mushy credence. In Oxford studies in epistemology (Vol. 3, pp. 161–186). Oxford: Oxford University Press.

Wilson, T. D. (2004). Strangers to ourselves: Discovering the adaptive unconscious. Cambridge: Harvard University Press.

Wolpert, D. H. (1996). The lack of a priori distinctions between learning algorithms. Neural Computation, 8(7), 1341–1390.

Woodward, J. (2003). Making things happen: A theory of causal explanation. Oxford: Oxford University Press.

Cite this article

Lassiter, D. Representing credal imprecision: from sets of measures to hierarchical Bayesian models. Philos Stud 177, 1463–1485 (2020). https://doi.org/10.1007/s11098-019-01262-8

DOI: https://doi.org/10.1007/s11098-019-01262-8