Abstract

Electric circuits manipulate electric charge and magnetic flux via a small set of discrete components to implement useful functionality over continuous time-varying signals represented by currents and voltages. Much of the same functionality is useful to biological organisms, where it is implemented by a completely different set of discrete components (typically proteins) and signal representations (typically via concentrations). We describe how to take a linear electric circuit and systematically convert it to a chemical reaction network of the same functionality, as a dynamical system. Both the structure and the components of the electric circuit are dissolved in the process, but the resulting chemical network is intelligible. This approach provides access to a large library of well-studied devices, from analog electronics, whose chemical network realization can be compared to natural biochemical networks, or used to engineer synthetic biochemical networks.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Living organisms perform a variety of functions that can be described in abstract terms as information processing and regulation. Analogies have been drawn between the biochemical reaction networks that perform such functions and electric circuits of similar nature (Arkin 2000; Del Vecchio et al. 2008). The comparison is useful in systems biology, in trying to understand the function of natural systems, and in synthetic biology, in trying to engineer desired functionality in a biochemical context.

An obstacle to exploiting this analogy is that the fundamental components of biochemistry and electronics are very different: the phenomena of resistance, induction, and capacitance based on the interplay between electric and magnetic fields have no immediate parallel in terms of chemical concentrations. Hence, it is not clear how to take systematic advantage of engineering knowledge developed in electronics in understanding biochemical systems. Instead, the search for components of biological network has progressed in different and certainly more appropriate directions (Hart et al. 2012; Milo et al. 2002).

Even within electronics, though, the precise nature of electric components is incidental to the desired function. For example, one may wish to filter the high frequencies of a signal represented by an oscillating voltage. There are countless electric circuits that can perform this function, based on different classes of components in different configurations. Some of those circuits are based on operational amplifiers, which are themselves built from a large network of components to perform an abstract function to which they are incidental. The nature of the fundamental components is inessential, as long as they can be combined to provide a wide range of functionality.

A common way to describe essential function, both in electronics and in biochemistry, is through a system of ordinary differential equations (ODEs). Once the function of a circuit is reduced to this form, it does not matter if the quantities represented are voltages or concentrations: it only matters the way in which they vary over time. Conversely, given an ODE system, one may ask the engineering question of how to realize a circuit (electronic or biochemical) that can perform that function. An early example of this inverse process is the synthesis of mechanical and then electric analog circuits from differential equations (Bush 1931; Shannon 1941).

Certain classes of polynomial ODE systems can be systematically turned into chemical reaction networks (CRNs) that obey the same kinetics (Hars and Toth 1979). Further, CRNs can be compiled systematically into a collection of molecules that can be engineered to obey the kinetics of those reactions (Soloveichik et al. 2010). In this paper we wish to go one step further on the front end of this process. Taking advantage of the large libraries of known electric circuits, we wish to take an arbitrary (but linear, for now) electric circuit and show how to turn it into a set of molecules that obey the kinetics of the quantities in that circuit.

The first obstacle we need to confront is that even common electric circuits describe behaviors that go beyond ODEs. Algorithmic approaches for the analysis of linear circuits such as Modified Nodal Analysis produce, in general, systems of differential algebraic equations (DAEs), where the algebraic equations are induced by classical node analysis based on Kirchhoff laws (Ho et al. 1975). Hence we first reduce DAEs to ODEs, after which we can apply some further techniques. The second obstacle is to take an ODE that may be about voltages and currents, and turn it into a form that can be interpreted chemically. This means that each variable should only take nonnegative quantities (for concentrations), and that appropriate chemical reactions should be derived about those quantities.

This approach has a dual purpose. From a systems biology point of view, we may want to compare the CRNs derived from electric circuits to the ones occurring in nature. This might help elucidate the function of natural networks. Or, at least, it will provide a spectrum of possible chemical networks of known function, whose structure may not be obvious, therefore broadening our expectations of what is possible chemically. From a systems biology point of view, we take it as given that an abstract CRN can be turned into a collection of molecules. In fact, multiple target architectures are possible, from short oligonucleotides in solution (Soloveichik et al. 2010) to gene networks (Schwarz-Schilling et al. 2016). The ability to generate molecular configurations from a vast existing library of (electric, or other) circuits is appealing for systematizing the generations of synthetic organisms. In extending the known techniques from ODEs to DAEs, we extend the scope of potential libraries we can draw from.

Contributions Our main result is a systematic technique that transforms linear DAE systems into CRNs. Our technique is, to the best of our knowledge, novel. DAE systems are either solved symbolically by relying on index reduction (Pantelides 1988), or numerically by relying on numerical methods that compute the trajectories for a given initial condition (Kunkel and Mehrmann 2006). In contrast to our approach, index reduction introduces additional derivatives of signals that may not be available to the circuit, while numerical methods do not transform the DAEs into ODEs, which seems necessary for transforming DAE systems into CRNs. We analyze, as an example, an electric high pass filter and provide a chemical reaction network for it, whose function and architecture can be independently interpreted in a biological context.

2 Outline of methods

We start from a linear electric circuit composed of resistors, capacitors and inductors, with variables ranging over voltages and currents, and we systematically derive an equivalent CRN where chemical species (or more precisely their differences) approximate the trajectories of the original variables.

The basis for this process is the so-called Hungarian Lemma (Hars and Toth 1979), which provides a method for converting certain polynomial ODEs into CRNs by converting each monomial on the right hand side of a differential equation into a separate chemical reaction. Polynomial ODEs can represent, exactly, a much broader class of ODEs, including fractional, trigonometric, and exponential terms (Liu et al. 2015), thus covering a broad range of chemical behavior including Hill kinetics (Cardelli 2014).

The Hungarian Lemma, however, has specific requirements. First, the concentrations of the chemical species must be nonnegative, while ordinary ODE variables, and in particular voltages and currents, may be negative. Second, if a monomial has a negative sign, then the differential variable on the left-hand side of the equation must appear as a factor in the monomial. This means, for example, that the ODE \(\partial _{t}x = y\) (where the growth rate of x is given by the concentration of y, with \(\partial _{t}x\) denoting the time derivative of x) can be reduced to the reaction \(y \rightarrow x + y\). And the ODE \(\partial _{t}x = -xy\) can be reduced to the reaction \(x+y \rightarrow y\). But the ODE \(\partial _{t}x = -y\) (where the decrease in rate of x is given by the concentration of y) cannot be reduced: for x to decrease it must appear on the left-hand-side of a chemical reaction, which implies it should appear in a monomial for \(\partial _{t}x\) by the law of mass action. An ODE system with no such forbidden negative monomial is said to be Hungarian, and any Hungarian ODE system can be reduced to a CRN (although not uniquely) whose mass action kinetics reproduces the original ODE. We use a technique to reduce a polynomial non-Hungarian ODE to an Hungarian one in twice as many variables, thus allowing us to produce CRNs also for non-Hungarian ODEs. In the same step, we make all trajectories nonnegative so that they can be realized by chemical species.

Some simple electric circuits yield ODE systems that can be converted to CRNs as outlined. Pure resistor circuits yield simple algebraic equations. But more complex circuits yield general DAEs (Ho et al. 1975), which we must be prepared to handle. Our main technique applies to linear DAE systems of the form

Here, \(x \in \mathbb {R}^n\) is the (column) vector of dependent variables, \(E \in \mathbb {R}^{n \times n}\) produces a linear combination of their derivatives, \(A \in \mathbb {R}^{n \times n}\) produces a linear combination of the variables, and the term \(B \in \mathbb {R}^{n \times m}\) is the input matrix, and \(u \in \mathbb {R}^m\) is the vector of inputs, such as voltage or current sources. That is, A and E are real matrices which produce linear combinations of variables and their derivatives by multiplication with the corresponding column vectors.

In general, inputs are assumed to be arbitrary, known functions of time. However, for the purposes of converting electric circuits into CRNs, it is necessary to appropriately encode also the inputs as chemical species. In this paper, we will assume that the input vector u can be itself described as the solution of a system of equations. Specifically, we assume that it satisfies an affine ODE system of the form

for some matrix \(D \in \mathbb {R}^{(m+k) \times (m+k)}\) and \(\left( u(0),z(0) \right) ^T, d \in \mathbb {R}^{m+k}\). Intuitively, the input u in (1) is part of an ODE solution which may depend on auxiliary ODE variables z that do not appear in the DAE. This is a rather general setting that allows us to encode arbitrary time-varying inputs by approximating them with Fourier series, which can be expressed as solutions of linear ODEs (see Sect. 4).

Our technique converts the overall system (1), (2) into an ODE system over the same variables, up to a controllable approximation. That ODE system can then be transformed into a CRN as discussed above.

We now describe in detail the entire process of converting linear electric circuits to CRNs, through a small textbook example involving a single differential equation and a single algebraic equation for the well-known RL (resistor–inductor) circuit in Fig. 1 (Agarwal and Lang 2005). The analysis of the circuit proceeds as follows. We let \(v_{ in }\) denote the input voltage (measured with respect to the ground node); the output is the voltage \(v_{ out }\) across the inductor. By standard node analysis, using Kirchhoff’s current law at each node, we obtain that the three currents are equal, so \(i \triangleq i_T = i_R = i_L\). Faraday’s law for the inductor L, and Ohm’s law for the resistor R, then give us:

This is a DAE, where (3a) is a differential equation, and (3b) is an algebraic equation. To find its solution, we can replace \(v_{ out }= v_{ in }- iR\) from (3b) in (3a), obtaining (4a), which can be integrated to obtain i(t). From that solution we can then obtain \(v_{ out }(t)\) via (4b).

If we briefly assume that \(v_{ in }\) is a non-negative input source, then (4a) can be converted to a mass action CRN as follows (using chemical species with the same names as our variables):

where with the symbol \(\emptyset \) we have denoted the empty set of products. These two chemical reactions yield (4a) for the evolution of the concentration of species i. However, a voltage \(v_{ in }\) may be negative, which cannot be modeled with chemical concentrations. Additionally, we are interested in the output \(v_{ out }\), not i, and therefore we need to find a way to realize chemically Eq. (4b) as well.

Because of those difficulties, we cannot make much progress without a more general technique to implement DAEs as CRNs. Our technique involves an approximation, like the one that seems necessary just for algebraic equations, but it can be used in the general case of linear DAEs. We now illustrate it by applying it to the example of Fig. 1. We first rearrange our DAE as (5a,5b). Setting \(x=(i, v_{ out })^T\) we have:

which can be arranged into the form (1):

where we have fixed the constant vector \(b := Bu\) under the assumption of a constant input source \(u := v_{ in }\).

We approximate the DAE, for a parameter \(h>0\), by symbolically computing \(F_h(x) = (E-hA)^{-1}(Ax+b)\), which is related to the numerical backward Euler method. Taking \(R = L = 1\) for simplicity, we obtain:

We next use \(F_h(x)\) as the right-hand side of a new ODE system \(\partial _{t}x = F_h(x)\), which is such that for \(h \rightarrow 0\) the solution of the ODE system (7a), (7b) converges to the solution of the original DAE system (5a), (5b) (see Theorem 1).

Indeed, we can easily see that for \(h \rightarrow 0\), (7a) converges exactly to the ODE (4a). As for (7b), this is now a differential equation approximating Eq. (5b) for \(h \rightarrow 0\), where we notice that a small value of h makes (7b) evolve much faster than (7a).

We have reduced a DAE to an ODE, but (7a), (7b) is not Hungarian because of the \(-i\) monomial in (7b). Keeping in mind that we need to deal eventually with non-Hungarian ODEs, we now apply a positivation technique in the style of Oishi and Klavins (2011) and Fages et al. (2017), also known as dual-rail encoding, where each variable is represented as the difference of two non-negative variables:

Let us now abbreviate \(p=1/(1+h)\), \(q=1/(h+h^2)\), and \(r=1/h\), and consider the ODE system where we separate the positive and negative monomials of each ODE in (7a), (7b) into two ODEs:

The initial conditions for this new system must satisfy \(i^{+}_0 - i^{-}_0 = i_0\) with \(i^{+}_0,i^{-}_0\ge 0\), etc. Since differentiation is a linear operator, the solutions of (7a), (7b) can be recovered as differences from the solutions of (8a), (8b): \(\partial _{t}i^{+}- \partial _{t}i^{-}= \partial _{t}i\) and \(\partial _{t}v_{ out }^{+}- \partial _{t}v_{ out }^{-}= \partial _{t}v_{ out }\). Although the goal was to make all variables non-negative, we now also have a Hungarian ODE system because all the monomials in (8a), (8b) are positive. Hence there is no further difficulty in converting these ODEs to mass action reactions, obtaining the following CRN with unary reagents, with one reaction for each monomial in (8a), (8b), and with the parameter h appearing in the reaction rates:

Here the input \(v_{ in }^\pm \) always acts as a simple catalyst. We note that the CRN implementation does not depend on the actual value of \(v_{ in }\), which only affects the initial condition of the chemical species that represent \(v_{ in }^\pm \). This decoupling between the CRN implementation of the circuit and that of the input sources carries over to the more general case when the sources are time-varying solutions of the ODEs (2), see Theorem 3 and the subsequent discussion.

Inspecting (9), the chemical species \(v_{ out }^\pm \) and \(i^\pm \) are involved in autocatalytic cycles. For example both \(i^{+}\) and \(i^{-}\) grow exponentially over time, while their difference i remains bounded. It is possible to eliminate such exponential growths by adding non-linear dampening reactions to the CRN:

The first reaction, for example, preserves the difference \(i^{+}- i^{-}\), and results in two new identical monomials in the ODEs for \(i^{+}\) and \(i^{-}\), that then cancel in \(\partial _{t}i^{+}- \partial _{t}i^{-}\). Hence that reaction does not change the i solution, but keeps \(i^{+}\) and \(i^{-}\) bounded.

The network consisting of reactions (9), (10) is depicted in Fig. 2, where for small h we have \(1 \approx p \ll q \approx r\), and we can take \(\gamma = r\). This network has the flavor of an incoherent feedforward motif (Milo et al. 2002), considering parallel pairs \(x^\pm \rightarrow y^\pm \) as activations and cross pairs \(x^\pm \rightarrow y^\mp \) as inhibitions, thereby \(v_{ in }\) activates both i and \(v_{ out }\), and i ‘incoherently’ inhibits \(v_{ out }\). Additionally, the motif of mutual catalysis and join degradation around \(i^\pm \) makes that pair stabilize to a copy of its input \(v_{ in }^\pm \) (in the sense that at steady state \(i^{+}- i^{-}= v_{ in }^{+}- v_{ in }^{-}\)) regardless of the value of the rate p. This motif is repeated around \(v_{ out }^\pm \). When the input \(v_{ in }^\pm \) remains constant, \(i^\pm \) becomes a copy of \(v_{ in }^\pm \), and \(v_{ out }^\pm \) becomes a copy of the sum of its two opposite inputs, \(v_{ in }^\pm \) and \(i^\mp \), and so it converges to a baseline output of \(v_{ out }^{+}- v_{ out }^{-}\approx 0\). When the input \(v_{ in }\) changes, it affects \(v_{ out }\) quickly and i slowly, with a delayed inhibition of \(v_{ out }\) by i. It has been shown that feedforward motifs can behave like high-pass filters (de Ronde et al. 2012).

The subnetwork in Fig. 2 consisting of \(v_{ in }^\pm \), \(v_{ out }^\pm \), and the connecting q,r,\(\gamma \) arcs, is in itself also a low-pass filter. It is exactly what is obtained when replacing the inductor with a capacitor of capacitance C in Fig. 1, yielding a well known low-pass filter, and deriving the CRN from it by positivation (with \(q=r=1/RC\)). The process is simpler in this case, since a single ODE is generated from that circuit, and no approximation via \(h \rightarrow 0\) is required.

In summary, we have derived an intelligible chemical reaction network from an electric circuit, and we are guaranteed that it implements the same functionality, as shown in the next section.

3 Methods

3.1 From DAEs to ODEs

We first show how to convert a linear DAE system into a linear ODE system. To this end we start with a DAE system in the form

which corresponds to (1) under the assumption of constant inputs u. Following Hadamard’s concept of well-posedness, we next assume regularity. A DAE system is regular if there exists no initial condition \(x(0) \in \mathbb {R}^n\) which admits more than one solution. We let \(\mathcal {D}\) denote the set of initial conditions for which a regular DAE admits solutions. Elements of \(\mathcal {D}\) are called consistent initial conditions (see, e.g., Kunkel and Mehrmann 2006, Section 2.1 for details). In the case of electric circuits, for instance, non-regular DAE systems may arise in the presence of short circuits and other erroneous designs.

If E is invertible, this DAE can be directly recast into a linear ODE system via

However, in the case of linear electric circuits E is in general not invertible. In this case, a transformation of a DAE system into an ODE system requires index reduction (Pantelides 1988; Kunkel and Mehrmann 2006), which relies on expensive symbolic computations. Our method consists in circumventing this analysis by considering an explicit scheme arising from numerical methods for the solution of DAE systems.

A numerical method is an algorithm that generates, for a given small time step \(h > 0\) and initial condition x(0), a sequence \((x[i])_i\) such that \(x[i] \approx x(i h)\), where \(x : [0;\infty ) \rightarrow \mathbb {R}^n\) denotes the solution of (11). Numerical methods are guaranteed to converge to the solution x when h approaches zero. That is, that for any error threshold \(\varepsilon > 0\) and finite time horizon \(T > 0\), one can find a sufficiently small time step \(h > 0\) such that \(\max _{0 \le i \le N} ||x[i] - x(i h) || \le \varepsilon \) and \(T / h = N \in \mathbb {N}\).

A common numerical method for the solution of DAE systems is the backward Euler method (Kunkel and Mehrmann 2006, Section 5.2) which is given by

The function \(F_h\) is well-defined for sufficiently small \(h > 0\) because the DAE system is regular (Kunkel and Mehrmann 2006, Section 2.1).

Noting that the “slope” of the Euler method at point x[i], \((x[i + 1] - x[i]) / h\), is given by \(F_h(x[i])\), we expect that the Euler sequence \((x[i])_{i \ge 0}\) will match the solution of the ODE system \(\partial _t x_h = F_h(x_h)\). That is, we expect that \(x_h(i h) \approx x[i]\) for all \(0 \le i \le N\). Then, the convergence of the Euler sequence to the DAE solution x would allow us to conclude that \(x_h(i h) \approx x[i] \approx x(i h)\).

The next theorem is our first main result and proves that this is indeed the case.

Theorem 1

For any \(\varepsilon > 0\), \(x(0) \in \mathcal {D}\) and \(T > 0\), there exists an \(h > 0\) such that \(\sup _{0 \le t \le T} ||x(t) - x_h(t) || \le \varepsilon \), where \(\partial _{t}x_h = F_h(x_h)\) and \(x_h(0) = x(0)\).

We now extend Theorem 1 to systems (1), (2).

Theorem 2

Given a DAE system via (1) and (2), consider the ODE system

where \(h > 0\) is small and the functions \(u_h^{\langle 0 \rangle }, u_h^{\langle 1 \rangle } \in \mathbb {R}^m\) satisfy

with initial conditions

Then, for any \(\varepsilon > 0\), \(x(0) \in \mathcal {D}\) and \(T > 0\), there exists an \(h > 0\) such that \(\sup _{0 \le t \le T} ||x(t) - x_h(t) || \le \varepsilon \) if \(x_h(0) = x(0)\).

Note that \(I - h D\) is strictly diagonal dominant and therefore invertible for sufficiently small values of h. It can be shown that \((u^{\langle 0 \rangle }_h, v^{\langle 0 \rangle }_h)^T\) converges to \((u,v)^T\) from (2) as \(h \rightarrow 0\). Hence, Theorem 2 essentially replaces the constant vector b in \(F_h(x) = (E - hA)^{-1}(A x + b)\) by the function Bu.

3.2 From ODEs to CRNs

We next present a technique that transforms the ODE approximation from Sect. 3.1 into a CRN. The approach borrows ideas from Oishi and Klavins (2011) that transforms linear ODE systems (i.e., systems without algebraic constraints) into CRNs. We wish to point out, however, that our approach considers state space representation, while (Oishi and Klavins 2011) acts on the frequency domain.

In the following, let \(\partial _{t}x = \hat{A}x + \hat{b}\) denote some ODE system with initial condition x(0).

Proposition 1

Any non-negative quadruple \((\hat{A}^+,\hat{A}^-,\hat{b}^+,\hat{b}^-)\) satisfying \(\hat{A}= \hat{A}^+ - \hat{A}^-\) and \(\hat{b}= \hat{b}^+ - \hat{b}^-\) induces the positivation

If (15) is subject to non-negative \(x^+(0), x^-(0) \in \mathbb {R}^n_{\ge 0}\) with \(x(0) = x^+(0) - x^-(0)\), the corresponding solution \((x^+, x^-)\) is non-negative and satisfies \(x = x^+ - x^-\).

While positivations trivially satisfy the properties of the Hungarian lemma discussed in Sect. 2 and can therefore be readily translated into CRNs, they may exhibit divergence even if the original system is bounded, see for instance (8a), which implies that \(i^+\) and \(i^-\) diverge. Fortunately, one can apply a correction that leads to bounded positivations.

Proposition 2

Given a positivation \((\hat{A}^+,\hat{A}^-,\hat{b}^+,\hat{b}^-)\), define the quadratic function \(Q(x^+,x^-) = (x_1^+ x_1^-,\ldots ,x_n^+ x_n^-)^T\). For any \(\gamma > 0\), the corresponding Hungarization is given by

If (16) is subject to non-negative \(x^+(0), x^-(0) \in \mathbb {R}^n_{\ge 0}\) with \(x(0) = x^+(0) - x^-(0)\), ODE system (16) admits a non-negative solution on \([0;\infty )\) that satisfies \(x = x^+ - x^-\). Moreover, if x is bounded on \([0;\infty )\), then so is \((x^+, x^-)\).

By applying the law of mass action, it can be easily seen that the Q terms in (16) are captured by the annihilation reactions \(x^{+}_1 + x^{-}_1 \mathop {\longrightarrow }\limits _{}^{\gamma } \emptyset , \ldots , x^{+}_n + x^{-}_n \mathop {\longrightarrow }\limits _{}^{\gamma } \emptyset \). Combining this with Proposition 2, we arrive at the following statement.

Proposition 3

Define the chemical reactions of the Hungarization (16) as

Then, the reactions \(\mathcal {R}\) induce, via the law of mass action, the ODE system (16).

For instance, if the positivation is given by (7a, 7b), then (9, 10) constitute \(\mathcal {R}\).

Proposition 3 and Theorem 2 yield our main result.

Theorem 3

Given a DAE system via (1) and (2), let

\(\mathcal {H}_{1,h}\) and \(\mathcal {H}_{2,h}\) denote the Hungarization of (12) and (13, 14), respectively.

\(\mathcal {R}_{1,h}\) and \(\mathcal {R}_{2,h}\) refer to the chemical reactions of \(\mathcal {H}_{1,h}\) and \(\mathcal {H}_{2,h}\), respectively.

Then, the following holds.

- (a)

The solution of the CRN given by \(\mathcal {R}_{1,h} \cup \mathcal {R}_{2,h}\) converges to the DAE solution as \(h \rightarrow 0\).

- (b)

A change of D and d affects the reactions of \(\mathcal {R}_{2,h}\) but does not alter the reactions of \(\mathcal {R}_{1,h}\).

As anticipated in Sect. 2, Theorem 3 ensures that a) our encoding is correct up to a controllable error and b) that the CRN implementation of the circuit, \(\mathcal {R}_{1,h}\), does not depend on the CRN implementation of the input, \(\mathcal {R}_{2,h}\).

Theorems 2 and 3 allow for composition of circuits: a circuit expressed as a DAE (1), with input provided by an ODE (2), yields another ODE (12) that can be supplied as input to a further circuit. The corresponding CRNs can be composed as well.

4 Methods applied to example

The RL circuit discussed in Sect. 2 is a high-pass filter, attenuating the low frequencies of the input while transmitting the high frequencies to the output. The cutoff frequency is the frequency at which the input signal is attenuated by \(\frac{1}{2}\) its power, or equivalently its amplitude is attenuated by \(-3\,\text {dB} \approx \sqrt{\frac{1}{2}} \approx 0.707\). In our circuit, the cutoff frequency is \(f_c = \frac{R}{2\pi L} \text {Hz}\), where R is the value of the resistance (measured in ohm) and L is the inductance (in henry).

Here we show how we can apply Theorem 3 to the RL circuit with a time-varying input represented by an arbitrary differentiable function. Such a function can be approximated arbitrarily well by a Fourier series u(t) given by

where \(\alpha \), \(\beta _i\), \(\omega _i\), and \(\gamma _i\) are constant parameters. It can be seen that u(t) can be written as the solution of the ODE system:

with initial conditions \(z_i(0) = \sin (\gamma _i)\), \(\bar{z}_i(0) = \cos (\gamma _i)\) and \(u(0) = \alpha + \sum _{i = 1}^N \beta _i \sin (\gamma _i)\) with \(1 \le i \le N\), whereby \(z_i\) and \(\bar{z}_i\) are auxiliary variables whose solutions give the sinusoidal components of the series. We can recognize the system (17) to be in the required form (2).

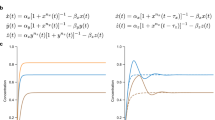

Left: simulation of the CRN from Fig. 2, plotting variables differences, with \(L=R=1\), input \(v_{ in }= v_{ in }^{+}- v_{ in }^{-}\) of frequency \(\frac{1}{2\pi } \text {Hz}\), and output \(v_{ out }= v_{ out }^{+}- v_{ out }^{-}\). Horizontal axis is time. Right: the same, but plotting the individual variables

As a first example of an input waveform, we consider the ODE system

With initial conditions \(u(0) = 0\) and \(z(0) = 1\), this yields the solution \(u(t) = \sin (t)\) for all t, whose frequency is the cutoff frequency. Figure 3 shows simulations of the \(\sin (t)\) CRN composed with the high-pass filter CRN, taking \(h = 0.01\) for a sufficiently good approximation, and \(\gamma = r\) for a sufficiently fast degradation. As expected, the output \(v_{ out }\) is attenuated by \(-3\text {dB} \approx 0.707\), and its phase is shifted by \(45^{\circ }\). The variation of the underlying non-negative variables is shown on the right.

The circuit from Fig. 2 exhibiting perfect adaptation. Input \(v_{ in }= v_{ in }^{+}- v_{ in }^{-}\), and output \(v_{ out }= v_{ out }^{+}- v_{ out }^{-}\). Horizontal axis is time

As a second example, in a biological context, high pass filters exhibit perfect adaptation (Ferrell 2016): they adapt to slow but possibly large variations in input stimulus and still react to quick changes. In Fig. 4 we supply stepped inputs via an appropriate Fourier series to our filter. At each sudden increase or decrease, the output reacts quickly and then settles back to its original level. The size of the transient response is proportional to the step size, but independent of the level of the input. The adaptation level can be set to any level, not just zero, by adding a constant contribution to \(v_{ out }^+\), so that the output can represent the (positive) concentration of a certain protein.

5 Discussion

We have presented a method to convert linear DAEs to CRNs which hinges on a transformation into an approximate linear ODE system with arbitrary accuracy. This is then translated into a set of reactions where the time-course evolutions of the concentrations of the chemical species can be directly related to the original DAE solution.

In principle, any DAE system can be exactly transformed into an ODE system by means of the so-called index reduction (Kunkel and Mehrmann 2006). However, this relies on symbolic computations. Moreover, the so-obtained ODE system will contain derivatives of the input signal, thus requiring for additional approximations. For instance, by differentiating (3b) and using (3a) we obtain \(\partial _t v_{ out }= \partial _t v_{ in }- \frac{R}{L}v_{ out }\), which together with (3a) is a simple ODE system in the dependent variables with no algebraic equations. This system now depends on the derivative of the input, \(\partial _t v_{ in }\), and would have to be combined with another circuit to supply that derivative from the given input \(v_{ in }\). In contrast to index reduction our technique does not require input derivatives. Moreover, while index reduction requires symbolic computations, the matrix inversion at the basis of the construction of the approximate linear ODE system can also be performed using numerical techniques.

An electric circuit which gives the DAE system (19) that is not in semi-explicit form. Here, \(C_1\), \(C_2\) and R refer, respectively, to the first capacitor, the second capacitor and the resistor

The precision of the linear ODE depends on a parameter which, when taken asymptotically small, has the effect of rapidly equilibrating certain components of the ODE system. Therefore, in this respect our approach can be related to quasi steady-state approximation (QSSA, Verhulst 2005; Pantea et al. 2014), which applies to semi-explicit DAEs in the special form

Essentially, QSSA replaces the algebraic constraints \(0 = A_2 y + B_2 u\) with \(\varepsilon \partial _t y = A_2 y + B_2 u\) for some \(\varepsilon \approx 0\). However, DAEs of electric circuits are not semi-explicit in general. For instance, the circuit given in Fig. 5 yields the DAE system

which is not semi-explicit because it has two differential variables on the left-hand side.

Extensions of this work are needed to tackle non-linear DAE systems arising from non-linear electronic components such as diodes and transistors. The conversion of polynomial ODEs to Hungarian and positive ones, and thus to CNRs, works essentially unchanged also for non-linear polynomial systems, and further extends to ODE systems including trigonometric and exponential functions, which can model transistors. However, this must be coupled with a general method for converting non-linear DAEs arising from electronic circuits to ODEs.

References

Agarwal A, Lang J (2005) Foundations of analog and digital electronic circuits. Morgan Kaufmann, Burlington

Arkin A (2000) Signal processing by biochemical reaction networks. Cambridge University Press, Cambridge, pp 112–144

Bush V (1931) The differential analyzer. A new machine for solving differential equations. J Frankl Inst 212(4):447–488

Cardelli L (2014) Morphisms of reaction networks that couple structure to function. BMC Syst Biol 8(1):84

de Ronde WH, Tostevin F, ten Wolde PR (2012) Feed-forward loops and diamond motifs lead to tunable transmission of information in the frequency domain. Phys Rev E 86:021913

Del Vecchio D, Ninfa AJ, Sontag ED (2008) Modular cell biology: retroactivity and insulation. Mol Syst Biol 4(1):2008. http://msb.embopress.org/content/4/1/161

Fages F, Le Guludec G, Bournez O, Pouly A (2017) Strong turing completeness of continuous chemical reaction networks and compilation of mixed analog–digital programs. In: Feret J, Koeppl H (eds) Computational methods in systems biology. Springer, Cham, pp 108–127

Ferrell JE (2016) Perfect and near-perfect adaptation in cell signaling. Cell Syst 2(2):62–67

Hars V, Toth J (1979) On the inverse problem of reaction kinetics. In: Qualitative theory of differential equations, vol 30

Hart Y, Antebi YE, Mayo AE, Friedman N, Alon U (2012) Design principles of cell circuits with paradoxical components. PNAS 109(21):8346–8351

Ho C-W, Ruehli A, Brennan P (1975) The modified nodal approach to network analysis. IEEE Trans Circ Syst 22(6):504–509

Kunkel P, Mehrmann V (2006) Differential–algebraic equations. Analysis and numerical solution. EMS Publishing House, Zurich

Liu J, Zhan N, Zhao H, Zou L (2015) Abstraction of elementary hybrid systems by variable transformation. In: Bjørner N, de Boer F (eds) Formal methods, pp 360–377

Milo R, Shen-Orr S, Itzkovitz S, Kashtan N, Chklovskii D, Alon U (2002) Network motifs: simple building blocks of complex networks. Science 298(5594):824–827

Oishi K, Klavins E (2011) Biomolecular implementation of linear I/O systems. IET Syst Biol 5(4):252–260

Pantea C, Gupta A, Rawlings JB, Craciun G (2014) The QSSA in chemical kinetics: as taught and as practiced. Springer, Berlin, pp 419–442

Pantelides CC (1988) The consistent initialization of differential–algebraic systems. SIAM J Sci Stat Comput 9(2):213–231

Schwarz-Schilling M, Kim J, Cuba C, Weitz M, Franco E, Simmel F (2016) Building a synthetic transcriptional oscillator. Methods Mol Biol 1342:185–99

Shannon CE (1941) Mathematical theory of the differential analyzer. J Math Phys 20(1–4):337–354

Soloveichik D, Seelig G, Winfree E (2010) DNA as a universal substrate for chemical kinetics. PNAS 107(12):5393–5398

Verhulst F (2005) Methods and applications of singular perturbations: boundary layers and multiple timescale dynamics. Springer, Cham

Author information

Authors and Affiliations

Corresponding author

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendices

Appendix

Proof of Proposition 1

Since \(\hat{A}^+,\hat{A}^-,\hat{b}^+\) and \(\hat{b}^-\) are non-negative, the theory of differential inequalities (or monotonic systems) readily implies that the solution \((x^+,x^-)\) of (15) is non-negative whenever \((x^+(0), x^-(0))\) is non-negative. To see the remainder of the statement, let \((x^+,x^-)\) solve (15) for \(x(0) = x^+(0) - x^-(0)\). Then

Since \(\partial _t{x} = \hat{A}x + \hat{b}\) admits a unique solution, it must hold \(x = x^+ - x^-\). \(\square \)

Proof of Proposition 2

Write (16) as \(\partial _t{x}^+_i = g^+_i(x^+,x^-)\) and \(\partial _t{x}^-_i = g^-_i(x^+,x^-)\). Since \(g^+_i(z^+,z^-) \ge - \gamma z_i^+ z_i^-\) and \(g^-_i(z^+,z^-) \ge - \gamma z_i^+ z_i^-\) for any \((z^+,z^-) \in \mathbb {R}^{2n}_{\ge 0}\) and the ODE system

remains non-negative if initialized with non-negative values, we conclude that \((x^+,x^-)\) remains non-negative. Moreover, since \(\gamma Q\) is non-positive on \(\mathbb {R}^{2n}_{\ge 0}\), the solution of (16) is defined on \([0;\infty )\) and does not exhibit a finite explosion time. Since the second claim follows trivially from Proposition 1 because the Q terms cancel each other out in \(\partial _t{x}^+ - \partial _t{x}^-\), let us focus on the third claim and set \(\xi := \sup _{0 \le t \le \infty } ||x(t) ||_\infty < \infty \). Note that \(x_i = x^{+}_i - x^{-}_i\), hence we get

Since a similar calculation implies that

we infer that there exists a \(\zeta > 0\) such that, for all i and \((z^+,z^-) \in \mathbb {R}^{2n}_{\ge 0}\), it holds that

\(g^+_i(z^+,z^-) \le -1\) if \(z^+_i \ge \zeta \) and;

\(g^-_i(z^+,z^-) \le -1\) when \(z^-_i \ge \zeta \).

This ensures that for any initial condition \((x^+(0),x^-(0)) \in \mathbb {R}^{2n}_{\ge 0}\), the solution \((x^{+},x^{-})\) enters eventually \([0;\zeta ]^{2n}\) in order to remain there forever. \(\square \)

Proof of Proposition 3

Straightforward. \(\square \)

Proof of Theorem 3

Follows from a direct combination of Proposition 3 and Theorem 2. \(\square \)

Proof of Theorems 1 and 2

Before proving Theorems 1 and 2, we first have to establish some auxiliary results. To allow for a compact notation, we denote in the present section the i-th step of the numeric sequence by \(x_i\) rather than x[i].

Proposition 4

Consider the ODE systems \(\partial _t{x} = F(x)\) and \(\partial _t{x}_h = F_h(x)\) where F and \(F_h\) are assumed to be Lipschitz continuous on some bounded domain \(B \subseteq \mathbb {R}^n\) and \(L \ge 0\) denotes the Lipschitz constant of F. Let us assume further that both ODE systems have solutions on [0; T] which remain in B and that \(\sup \{ ||F(x) - F_h(x) || \mid x \in B \} \le \eta \). Then, if \(x(0) = x_h(0)\), for all \(0 \le t \le T\) it holds that

Proof

We first show a modified version of Gronwall’s inequality. To be more specific, let \(\xi _1\) and \(\xi _2\) be positive constants and v a continuous function on \(0 \le t < \infty \) such that

Then, it holds that \(v(t) \le \tfrac{\xi _2}{\xi _1} (\mathrm {e}^{\xi _1 t} - 1)\). To see this, we first rewrite (20) to

Since this rewrites to \(\tilde{v}(t) \le \tilde{\alpha } + \int _0^t \tilde{v}(s) \tilde{w}(s) ds\) for \(\tilde{v}(s) := v(s) + \tfrac{\xi _2}{\xi _1}\), \(\tilde{\alpha } := \tfrac{\xi _2}{\xi _1}\) and \(\tilde{w}(s) := \xi _1\), Gronwall’s inequality ensures that \(\tilde{v}(t) \le \tilde{\alpha } \cdot \mathrm {e}^{\int _0^t \tilde{w}(s) ds}\) and we infer the auxiliary statement. This, in turn, yields

\(\square \)

Proposition 5

Let \(E \partial _t{x} = A x + b\) be a regular linear DAE system and let \(\mathcal {D}\subseteq \mathbb {R}^n\) denote the corresponding set of consistent initial conditions. Then, \(\mathcal {D}\) is an affine subspace of \(\mathbb {R}^n\) and \(x + h F_h(x) \in \mathcal {D}\) whenever \(x \in \mathcal {D}\).

Proof

To see that \(\mathcal {D}\) is an affine subspace of \(\mathbb {R}^n\), we refer to (Kunkel and Mehrmann 2006, Section 2.1). Note further that \(x_i = x_{i-1} + h F_h(x_{i-1})\) defines the backward Euler scheme which is applied to the DAE system \(E \partial _t{x} = A x + b\), see (Kunkel and Mehrmann 2006, Section 5.2). Consider the BDF-1 scheme (Kunkel and Mehrmann 2006, Section 5.3) which is given by

if applied to \(E \partial _t{x} = A x + b\). With this, we first observe that

where the inversion in the last line can always be performed for sufficiently small h because \(E \partial _t{x} = A x + b\) is regular. This, in turn, yields

This shows that the backward Euler scheme and the BDF-1 scheme are identical if applied to \(E \partial _t{x} = A x + b\). With this, the statement of the proposition is closely related to (Kunkel and Mehrmann 2006, Remark 5.25). To see this, we may assume without loss of generality (see proof of Kunkel and Mehrmann 2006, Theorem 5.24) that \(E \partial _t{x} = A x + b\) is such that \(A = I\) and \(E = N\) for some nilpotent N with \(N^\nu = 0\) and \(N^{\nu - 1} \ne 0\). It can be easily seen that in such a case the solution is \(x \equiv -b\), thus implying in particular that the set of consistent initial conditions is \(\mathcal {D}= \{-b\}\). Moreover, the BDF-1 scheme rewrites to

where the last equivalence is due to the Neumann series and the nilpotency of N. This, in turn, implies that

thus showing that \(x_i = -b\) whenever \(x_{i-1} = -b\). \(\square \)

Proposition 6

Let \(E \partial _t{x} = A x + b\) be a regular linear DAE system and let \(\mathcal {D}\subseteq \mathbb {R}^n\) denote the corresponding set of consistent initial conditions. Then

-

The solution of \(E \partial _t{x} = A x + b\) is contained in \(\mathcal {D}\).

-

There exist \(\hat{A} \in \mathbb {R}^{n \times n}\) and \(\hat{b} \in \mathbb {R}^n\) such that the solution of the ODE system \(\partial _t{x} = \hat{A} x + \hat{b}\) coincides with that of \(E \partial _t{x} = A x + b\) for all \(x(0) \in \mathcal {D}\).

-

Together with \(F_h(x) := (E - hA)^{-1}(A x + b)\), where \(h > 0\), it holds that \(F_h\) converges uniformly, as \(h \rightarrow 0\), to \(\hat{A} x + \hat{b}\) on any bounded subset of \(\mathcal {D}\).

Proof

The first two points are well-known in the theory of linear DAE systems, see Kunkel and Mehrmann (2006, Section 2.1) [it is interesting to note that an efficient computation of \(\hat{A} \in \mathbb {R}^{n \times n}\) and \(\hat{b} \in \mathbb {R}^n\) is difficult because it relies on index reduction (Pantelides 1988)].

To see the third claim, we observe that \(x_i = x_{i-1} + h F_h(x_{i-1})\) defines the backward Euler scheme applied to the DAE system \(E \partial _t{x} = A x + b\), see Kunkel and Mehrmann (2006, Section 5.2). We next show that \(x_0 \mapsto \frac{1}{h}(x_1 - x_0)\) converges uniformly on any bounded subset of \(\mathcal {D}\) to \(x_0 \mapsto \hat{A} x_0 + \hat{b}\) when \(h \rightarrow 0\). To this end, we may assume without loss of generality (see discussion after Equation 5.25 in Kunkel and Mehrmann 2006) that the DAE system \(E \partial _t{x} = A x + b\) is such that

where N is such that \(N^\nu = 0\) and \(N^{\nu -1} \ne 0\) for some \(\nu \ge 1\). This implies that the solution of \(E \partial _t{x} = A x + b\) is characterized by a pair of decoupled dynamical systems, namely by the ODE system \(\partial _t{x}^{\mathrm{I}} = J x^{\mathrm{I}} + b^{\mathrm{I}}\) and the DAE system \(N \partial _t{x}^{\mathrm{II}} = x^{\mathrm{II}} + b^{\mathrm{II}}\), where \(x = (x^{\mathrm{I}}, x^{\mathrm{II}})\) and \(b =(b^{\mathrm{I}}, b^{\mathrm{II}})\). Thanks to this, it suffices to consider \(x^{\mathrm{I}}_1 - x^{\mathrm{I}}_0\) and \(x^{\mathrm{II}}_1 - x^{\mathrm{I}I}_0\) separately.

Since \(x_{\mathrm{II}} \equiv - b_{\mathrm{II}}\) solves \(N \partial _t{x}^{\mathrm{II}} = x^{\mathrm{II}} + b^{\mathrm{II}}\), we infer that \(\mathcal {D}= \{ (x^{\mathrm{I}}, x^{\mathrm{II}}) \mid x^{\mathrm{II}} = - b^{\mathrm{II}} \}\). Hence, Proposition 5 shows that \(x^{\mathrm{II}}_1 - x^{\mathrm{II}}_0 = 0\) whenever \(x_0 \in \mathcal {D}\).

We next focus on \(x^{\mathrm{I}}_1 - x^{\mathrm{I}}_0\). Thanks to the fact that \(\partial _t{x}^{\mathrm{I}} = J x^{\mathrm{I}} + b^{\mathrm{I}}\), we have to investigate the local truncation error of the backward Euler scheme in the context of a linear ODE system. Despite the fact that this is discussed in many books about ODEs, we provide here a proof because most texts do not show that the local truncation error converges uniformly to zero on arbitrarily large compact sets. To this end, we first observe that the Taylor expansion of \(x^{\mathrm{I}}\) around zero yields

for some \(\xi \in (0;h)\). With \(\tilde{F}_h(x^{\mathrm{I}}_0) = (I - hJ)^{-1}(J x^{\mathrm{I}}_0 + b^{\mathrm{I}})\), the proof of Proposition 5 implies that \(\tilde{F}_h(x^{\mathrm{I}}_0) = \frac{1}{h}(x^{\mathrm{I}}_1 - x^{\mathrm{I}}_0)\). This, in turn, implies that

In the case \(h \le 1 / (2 ||J ||)\), the Neumann series allows us to deduce that

with \(||\sum _{k=0}^\infty (h J)^k || \le \sum _{k = 0}^\infty 2^{-k} = 2\). Moreover, a differentiation of \(\partial _t{x}^{\mathrm{I}} = J x^{\mathrm{I}} + b^{\mathrm{I}}\) yields \(\ddot{x}^{\mathrm{I}} = J^2 x^{\mathrm{I}} + J b^{\mathrm{I}}\). This and the last statement imply the existence of constants \(\zeta _1, \zeta _2 \ge 0\) that neither depend on \(x^{\mathrm{I}}_0\) nor on h and that satisfy

for all \(0 \le h \le 1\). This shows that \(x^{\mathrm{I}}_0 \mapsto \frac{1}{h}(x^{\mathrm{I}}_1 - x^{\mathrm{I}}_0)\) converges uniformly on any bounded set to \(x^{\mathrm{I}}_0 \mapsto J x^{\mathrm{I}}_0 + b^{\mathrm{I}}\). \(\square \)

We are in a position to prove Theorem 1.

Proof of Theorem 1

Let \(\hat{A} \in \mathbb {R}^{n \times n}\) and \(\hat{b} \in \mathbb {R}^n\) be as in Proposition 6 and fix \(T > 0\) and \(x(0) \in \mathcal {D}\). Since the solution of \(E \partial _t{x} = A x + b\) solves the linear ODE system \(\partial _t{x} = \hat{A} x + \hat{b}\), this implies that x exists and is bounded on [0; T]. Hence, there exists a closed ball \(B_\rho (0)\) centered at \(0 \in \mathbb {R}^n\) with radius \(\rho > 0\) such that \(x(t) \in B_\rho (0)\) for all \(0 \le t \le T\). Since \(B_\rho (0)\) is bounded, Proposition 6 ensures that x is contained in \(\mathcal {D}\) and that for any \(\eta > 0\) there exists an \(h > 0\) such that

Moreover, Proposition 5 ensures that the solution \(x_h\) of \(\partial _t{x}_h = F(x_h)\) is contained in \(\mathcal {D}\). By combining the foregoing statements, Proposition 4 yields the claim. \(\square \)

The following auxiliary results are needed for the proof of Theorem 2

Proposition 7

Fix \(E, A \in \mathbb {R}^{n \times n}\), \(B \in \mathbb {R}^{n \times (k + m)}\), \(D \in \mathbb {R}^{(k + m) \times (k + m)}\), \(d \in \mathbb {R}^{(k + m)}\) and consider the linear DAE system

Then, \((\hat{E}- h \hat{A})^{-1} (\hat{A}\hat{x}+ \hat{b})\) is given by

Proof

By relying on the inversion formula for block matrices, we obtain

Armed with this, we infer that

and

A summation of the foregoing statements yields (21). \(\square \)

Corollary 1

Fix an arbitrary consistent initial condition \((x(0),u(0))^T \in \mathbb {R}^{n + k + m}\) of the DAE system from Proposition 7. The corresponding ODE approximation is then

with \(u^{\langle 0 \rangle }_h(0) = u(0)\) and \(u^{\langle 1 \rangle }_h(0) = (I - hD)^{-1} D u(0)\).

Proof

Follows directly from Proposition 7. \(\square \)

Proof of Theorem 2

In Theorem 2, replace u with \(\hat{u}\), z with \(\hat{z}\) and B with \(\hat{B}\). Afterwards, apply Corollary 1 to the case where \(u := \left( {\begin{array}{c}\hat{u}\\ \hat{z}\end{array}}\right) \in \mathbb {R}^{k + m}\) and

\(\square \)

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Cardelli, L., Tribastone, M. & Tschaikowski, M. From electric circuits to chemical networks. Nat Comput 19, 237–248 (2020). https://doi.org/10.1007/s11047-019-09761-7

Published:

Issue Date:

DOI: https://doi.org/10.1007/s11047-019-09761-7