Abstract

This study addresses the problem that user data cannot be received precisely from sensors in invoked reality (IR) space because of limited sensing regions, distortion of colors or patterns by lighting, and blocking or overlapping of a user by other users. The sensing scope is expanded by using multiple sensors in the IR space, so that a user whose data are only partly sensed by one sensor, because they overlap with the data of other users, can still be sensed by another sensor. In the proposed approach, all clients share the user feature data from the multiple sensors, and each client recognizes that a user is the same individual on the basis of the shared data. Identification accuracy is further improved by building the user features from colors and patterns that are less affected by lighting, which enables accurate identification of the user feature data even under lighting changes. The proposed system was implemented based on system performance analysis standards. The practicality and performance of the proposed method in identifying the same person were verified through an experiment.

1 Introduction

Invoked reality (IR) has been the focus of many studies on loading virtual world content into the real world using a variety of natural user interface (NUI) technologies [1, 2, 6, 8, 12, 14]. The IR space enables users to interact based on diverse types of content. The NUI can be configured by applying the IR technology to the indoor space.

Recognition of a single user by employing a single sensor or multiple sensors in the IR space produces only one user event. Therefore, the recognition technology, including recognition of a user’s motion or voice, can be easily implemented. However, when multiple users interact in the IR space, it is difficult for the sensors to accurately identify the events respectively performed by each user because the users block each other or overlap [3, 5, 7, 9–11, 13, 16].

Furthermore, the IR space does not continually maintain a specific environment. The amount of sunshine or brightness of the interior lighting impacts user identification [3, 4, 11, 13, 16].

The objective of this study is to expand the sensing scope in the IR space and to minimize the impact of lighting, so that user data are identified accurately even when a user is blocked or overlapped by other users. To this end, the sensing scope is expanded using multiple sensors in the IR space: when one sensor cannot sense all of a user’s data because they overlap with the data of other users, another sensor is employed. In the proposed approach, all clients share the user feature data from the multiple sensors, and each client recognizes that a given user is the same individual on the basis of the shared data. Furthermore, the identification accuracy is improved by identifying the user features based on colors and patterns that are less affected by lighting, so that the user’s feature data are identified accurately even when the lighting changes. This approach to identifying multiple users can be applied to IR-based conferencing systems or military training systems based on body motion recognition. For these applications, the approach would be combined with technologies such as gesture, voice, and face recognition.

The remainder of this paper is organized as follows. In Section 2, the trends and features of related research are outlined. Section 3 presents the proposed approach for identifying the features of multiple users in the IR space. Section 4 describes the experiment conducted on the system implementation, and the results are analyzed. Section 5 concludes the paper.

2 Related works

Users can receive personalized content by intuitively adapting themselves to the IR space. Identification of multiple users has been actively investigated as a basic technology for providing the services required by each user at any location in the IR space [3, 4].

In daily life, sensors detect physical quantities such as light, heat, and temperature, as well as changes in these quantities, depending on the application. The measured values are reported as specific signals. For example, lighting sensors measure brightness, infrared sensors detect object movements by emitting infrared rays, and webcams enable video conferencing over the Internet by transferring video images in real time. In particular, Microsoft’s Kinect sensor was developed to recognize the physical motions and voice of a user without controllers.

In some approaches, multiple users are identified by employing a single sensor that uses colors and patterns as features. Color features alone are sensitive to lighting changes in the obtained images. This disadvantage has been compensated for by combining them with pattern features to enhance robustness to lighting changes. Moreover, the identification of multiple users based on a single sensor is limited by the sensing scope. To address this limitation, the identification of multiple users based on multiple sensors has been actively investigated.

Han’s method [3] employs color histograms that combine color and texture information extracted from RGB data captured by a Kinect sensor. In this approach, the head of a user entering the sensing scope is identified, and the user’s silhouette is then quickly estimated by fixing the length ratios of the other body parts relative to the head area. When more than two users enter the sensing region, clothing is selected as the user feature on the assumption that two users rarely wear the same clothing. Han’s approach extracts the silhouette of each user entering the sensing scope range using the Canny edge detector. It concatenates all histograms into the user’s final histogram and estimates the angle between two such histograms. When the angle is below the given threshold, the two observations are identified as the same person.

Yumak [15] focused on re-identifying a narrator. The user’s skeleton is detected and an image patch is then extracted. The local binary pattern (LBP) and a hue, saturation, and value (HSV) color histogram are computed on the image patch. LBP is widely used because it is minimally affected by lighting changes and has a simple structure. The color histogram collects discriminative data from multiple color channels. Using a Kinect sensor, a matching score is estimated by comparing a narrator who enters or leaves the sensing scope range with the saved user templates. If the matching score is higher than the given threshold, the relevant user identity is selected. If the narrator does not match any user template, the user is classified as new and is added to a list.

In the present study, user re-identification is used to address the above limitations, following the method of Yumak [15] described above. Each Kinect sensor identifies multiple users using colors and patterns as features, as in the single-sensor approaches, while all clients share the user feature data from the multiple sensors. Pattern features are used together with color features to reduce the impact of lighting. Accordingly, each client recognizes that the user is the same individual on the basis of the shared data, and the user feature data are identified accurately even under lighting changes.

3 Extracting user features for user identification

In this section, the proposed algorithm for extracting user features to identify the same person in the IR space is described. In addition, the method for integrating the user feature data from each sensor in the server is outlined.

3.1 Identifying the same person based on multiple sensors

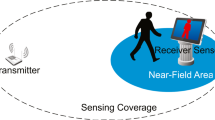

Multiple users enter the sensing scope range in the IR space. Clients extract the features of each user. The server estimates the similarity between the user’s feature data acquired from multiple clients and the user features in the server database. The user with the highest similarity value is determined to be the same person. Synchronization is performed by sending the data of the user who is deemed the same person to all clients. Figure 1 illustrates the structure for identifying the same person based on multiple sensors.

3.2 Extracting features of multiple users based on colors and patterns

When multiple sensors are used in the IR space, the same person can be identified only when all sensors accurately recognize the features of each individual and share the user data. If only a single feature is used to this end in the procedure, it is difficult to identify the user when several users with similar features enter the sensing scope range. Thus, multiple features are applied to identify the user, even when one kind of feature is the same.

In image processing, colors can be distorted, or patterns or textures can become ambiguous on account of the lighting. Furthermore, lighting can cause errors when extracting the features of each object. Constant lighting cannot be maintained in a real experiment environment.

3.2.1 Extraction of features using the CIELAB color space

The CIELAB color space is applied to minimize errors caused by lighting, and the color data are used as features. CIELAB is designed around human color perception, so that distances in the space correspond to perceived color similarity. The L coordinate represents lightness. The A coordinate indicates proximity to red as it approaches +a and proximity to green as it approaches –a. Likewise, the B coordinate indicates proximity to yellow as it approaches +b and proximity to blue as it approaches –b.

To use the CIELAB color space, the 2D image is converted from RGB to CIELAB. Histograms are then calculated for only the A and B channels; the L channel is excluded to eliminate the impact of lighting. We extract CIELAB-based histogram features from three parts of a user: the head, the upper body, and the lower body. Only these three parts of the 2D image, each with a given size, are converted to CIELAB.
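The conversion and histogram step can be sketched as follows. This is a minimal pure-Python illustration, not the authors' implementation: it assumes 8-bit sRGB input with a D65 white point, and the function names, the 16-bin resolution, and the [-128, 128) value range used for binning are all hypothetical choices made for the example.

```python
from typing import List, Sequence, Tuple

# D65 reference white (assumed; the paper does not state the illuminant).
WHITE = (0.95047, 1.0, 1.08883)


def srgb_to_lab(r: int, g: int, b: int) -> Tuple[float, float, float]:
    """Convert one 8-bit sRGB pixel to CIELAB (L*, a*, b*)."""
    def linearize(c: int) -> float:
        v = c / 255.0
        return v / 12.92 if v <= 0.04045 else ((v + 0.055) / 1.055) ** 2.4

    rl, gl, bl = linearize(r), linearize(g), linearize(b)
    # Linear sRGB -> CIE XYZ.
    x = 0.4124564 * rl + 0.3575761 * gl + 0.1804375 * bl
    y = 0.2126729 * rl + 0.7151522 * gl + 0.0721750 * bl
    z = 0.0193339 * rl + 0.1191920 * gl + 0.9503041 * bl

    def f(t: float) -> float:
        delta = 6.0 / 29.0
        if t > delta ** 3:
            return t ** (1.0 / 3.0)
        return t / (3.0 * delta ** 2) + 4.0 / 29.0

    fx, fy, fz = f(x / WHITE[0]), f(y / WHITE[1]), f(z / WHITE[2])
    return 116.0 * fy - 16.0, 500.0 * (fx - fy), 200.0 * (fy - fz)


def ab_histograms(
    pixels: Sequence[Tuple[int, int, int]], bins: int = 16
) -> Tuple[List[float], List[float]]:
    """Normalized histograms of a* and b* for one body-part patch.

    L* is computed but discarded, mirroring the idea of ignoring lightness.
    """
    hist_a = [0.0] * bins
    hist_b = [0.0] * bins
    for r, g, b in pixels:
        _, a_star, b_star = srgb_to_lab(r, g, b)
        for value, hist in ((a_star, hist_a), (b_star, hist_b)):
            # Map the assumed range [-128, 128) to a bin, clamping outliers.
            idx = int((value + 128.0) / 256.0 * bins)
            hist[min(max(idx, 0), bins - 1)] += 1.0
    n = max(len(pixels), 1)
    return [h / n for h in hist_a], [h / n for h in hist_b]
```

In use, `ab_histograms` would be called once per body part (head, upper body, lower body) on the pixels of that patch, giving six histograms per user.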

3.2.2 Extraction of texture and pattern features based on LBP

The most important properties of LBP are its simple operation and its robustness to lighting changes. After the color space of an image is converted to CIELAB, LBP is used to identify the textures and patterns of the image. LBP encodes local texture by comparing each neighboring pixel in a 3 × 3 window with the center pixel: the LBP bit is one when the neighbor is brighter than the center pixel and zero when it is darker. We calculate a histogram of the LBP values for each color channel and each body part image and use these histograms as features.
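The basic 3 × 3 LBP operator described here can be sketched in a few lines. This is a minimal illustration under stated assumptions: the image is a list of rows of numeric values for a single channel, ties with the center pixel count as one (a common convention that the text does not specify), and the neighbor ordering and function names are hypothetical.

```python
from typing import List, Sequence

# Clockwise (row, col) offsets of the 8 neighbours, starting at the top-left.
NEIGHBOURS = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
              (1, 1), (1, 0), (1, -1), (0, -1)]


def lbp_code(image: Sequence[Sequence[float]], row: int, col: int) -> int:
    """8-bit LBP code of the pixel at (row, col); must not be a border pixel."""
    center = image[row][col]
    code = 0
    for bit, (dr, dc) in enumerate(NEIGHBOURS):
        # Assumption: a neighbour equal to the centre is treated as "brighter".
        if image[row + dr][col + dc] >= center:
            code |= 1 << bit
    return code


def lbp_histogram(image: Sequence[Sequence[float]]) -> List[float]:
    """Normalized 256-bin histogram of LBP codes over all interior pixels."""
    hist = [0.0] * 256
    count = 0
    for row in range(1, len(image) - 1):
        for col in range(1, len(image[0]) - 1):
            hist[lbp_code(image, row, col)] += 1.0
            count += 1
    return [h / count for h in hist] if count else hist
```

Because each code depends only on comparisons with the center pixel, adding a constant brightness offset to every pixel leaves the histogram unchanged, which is the robustness property the text relies on.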

3.2.3 Extraction of user height features

In some cases, multiple users wear clothing of the same colors, textures, and/or patterns. In these cases, a feature computed with a trigonometric function from the distance to a sensor and the user’s height is applied to identify the user.

Figure 2 describes the extraction of a user’s height and distance between the sensor and user when multiple users enter the sensing scope range.

The feature of a user’s height is determined with Formula (1), where x_2 is the distance between the user and the sensor in the present frame, and x_1 is the distance between the user and the sensor in the previous frame. In addition, y_2 is the user’s height in the present frame, and y_1 is the user’s height in the previous frame. Figure 3 illustrates the distance between the sensor and user, along with the user’s height.
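Formula (1) itself is not reproduced in this text. Given the symbol definitions above and the statement in Section 3.3 that a tangent value is estimated per frame, one plausible reading is the ratio of the change in height to the change in distance between consecutive frames. This is an assumption, and the angle symbol \theta is introduced here only for illustration:

```latex
\tan\theta = \frac{y_2 - y_1}{x_2 - x_1} \tag{1}
```

Under this reading the feature is undefined when x_2 = x_1, so an implementation would need to handle a user who does not move toward or away from the sensor between frames.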

3.3 Extracting and identifying user features

Similarity is estimated by comparing the user’s features saved in the server with the features of multiple users extracted in the IR space in real time. The comparison is performed by applying the Bhattacharyya distance to the histograms of the color and pattern features extracted above. The Bhattacharyya distance measures the similarity of two discrete or continuous probability distributions and is frequently used to measure the similarity between two histograms.
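For two discrete histograms p and q, the Bhattacharyya coefficient is the sum over bins of sqrt(p_i · q_i), and the Bhattacharyya distance is its negative logarithm. The sketch below shows this standard definition in pure Python; the function names are hypothetical, and how the authors map the distance to a similarity value is not stated in the text.

```python
import math
from typing import Sequence


def bhattacharyya_coefficient(p: Sequence[float], q: Sequence[float]) -> float:
    """Overlap of two histograms in [0, 1]; inputs are normalized internally."""
    if len(p) != len(q):
        raise ValueError("histograms must have the same number of bins")
    sum_p, sum_q = sum(p), sum(q)
    if sum_p <= 0 or sum_q <= 0:
        raise ValueError("histograms must contain positive mass")
    return sum(math.sqrt((a / sum_p) * (b / sum_q)) for a, b in zip(p, q))


def bhattacharyya_distance(p: Sequence[float], q: Sequence[float]) -> float:
    """0 for identical histograms, infinity for histograms with no overlap."""
    bc = bhattacharyya_coefficient(p, q)
    if bc <= 0.0:
        return math.inf
    # Clamp against rounding so the distance is never slightly negative.
    return max(0.0, -math.log(min(bc, 1.0)))
```

A smaller distance means more similar histograms, so a matcher that wants "highest similarity wins" could rank candidates by the coefficient, or by the negated distance.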

The user’s feature data are saved in the server. Height features are compared per frame and the tangent value is estimated using Formula (1). The tangent value becomes the user’s height feature in the final similarity formula.

Various features are extracted and applied to determine that the user is the same person by comparing the data from all sensors. Formula (2) gives the final similarity, which combines the individual feature values.

Here, i is a user ID, n is the number of features, a_j is a feature value, b_j is a weight value, and S_i is the final similarity.
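Formula (2) is not reproduced in this text. The symbol definitions above suggest a weighted sum of the feature values, so the following form is offered as an assumption rather than as the authors' confirmed notation:

```latex
S_i = \sum_{j=1}^{n} a_j \, b_j \tag{2}
```

In this reading, each a_j is the similarity obtained for feature j when the observed user is compared with stored user i, and the weights b_j set the relative influence of the color, pattern, and height features.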

3.4 Same-user identification system structure

The same-user identification system separates the client and server roles. The client extracts the user’s color and pattern data, as well as the user’s height ratio, as features using the extraction approach described above. Next, the client transfers the extraction results to the server. The server compares the user data from each client with the user data saved in the server and estimates the degree of similarity between them. It then identifies the saved user with the highest similarity and transfers the data on that user to the client. The same user is identified among multiple users through this procedure.
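The server-side selection step can be sketched as below. This is a minimal illustration that assumes the final similarity is a weighted sum of per-feature similarity values, as the symbol definitions in Section 3.3 suggest; the function names, the example weights, and the per-feature scores are hypothetical and not taken from the paper.

```python
from typing import Dict, Optional, Sequence, Tuple


def final_similarity(features: Sequence[float], weights: Sequence[float]) -> float:
    """Weighted sum of per-feature similarity values (assumed form)."""
    if len(features) != len(weights):
        raise ValueError("one weight is required per feature")
    return sum(a * b for a, b in zip(features, weights))


def best_match(
    scores_by_user: Dict[int, Sequence[float]], weights: Sequence[float]
) -> Optional[Tuple[int, float]]:
    """Return (user_id, similarity) of the stored user with the highest score.

    scores_by_user maps each stored user ID to the per-feature similarity
    values between that user and the observation sent by a client.
    """
    best: Optional[Tuple[int, float]] = None
    for user_id, features in scores_by_user.items():
        score = final_similarity(features, weights)
        if best is None or score > best[1]:
            best = (user_id, score)
    return best


# Hypothetical example: (color, pattern, height) similarities for two users,
# with the color feature weighted highest.
weights = (0.5, 0.3, 0.2)
observation_scores = {1: (0.9, 0.8, 0.5), 2: (0.4, 0.9, 0.9)}
match = best_match(observation_scores, weights)
```

Here user 1 wins because its strong color similarity outweighs user 2's better pattern and height scores under the chosen weights.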

4 Experiment and analysis

The proposed system was implemented to identify multiple users by employing multiple sensors using the proposed approach. The experiment was performed to analyze the system identification performance.

4.1 Experiment objective

The goal of the experiment was to determine the system’s performance in accurately determining the user identity, Kinect player index, and similarity to each client by using multiple sensors when more than two users were involved.

Problems common to existing research were selected as the experiment conditions. The experiment was additionally intended to verify statistically the accuracy of the proposed method in identifying the same user among multiple users. The three main experiment goals were to verify:

1) The accuracy of user identification when a user leaves the sensing scope of multiple sensors in the IR space and re-enters it.

2) The identification of multiple users when lighting changes in the IR space.

3) The accuracy of identification when more than two users wearing clothing in similar colors and patterns enter the sensing scope.

The accuracy of identification as described above was statistically measured and the practicality of the proposed algorithm was verified using the analysis results.

4.2 Experiment methodology

The experiment development environment is described in the following subsections.

4.2.1 Environment for the experiment development

Two personal computers (PCs) were used as the system to identify the same user among multiple users by employing multiple sensors. Each had an Intel(R) Core(TM) i5-4690 processor and a GeForce GTX 960 graphics card, and ran Windows 8 with the Microsoft Visual Studio 2013 development platform. Two Kinect for Windows sensors (version 1) were connected for the evaluation.

4.2.2 Experiment environment

The experiment was performed in a rectangular IR space that was 2 m wide and 4 m long. When multiple users were detected using only one Kinect sensor, the users could block each other or overlap. In this case, the skeleton could not be recognized exactly; thus, it was difficult to obtain accurate user data. To solve this problem, multiple Kinect sensors were used. The sensing scope was expanded using the multiple sensors, and the user’s feature data were extracted at various angles. One client PC was connected to each Kinect sensor, and the users were identified in real time. The remaining PC ran both the server and a client. The server integrated the user’s feature data, compared it with the user’s data in the server, and synchronized the user identity. Figure 4 shows the experiment environment with the arrangement of multiple sensors.

4.2.3 Experiment sample data

To identify the same person among multiple users in the space where the multiple sensors were arranged, the experiment was executed using data on six users. Table 1 lists the sample data on the six users participating in the experiment.

4.2.4 Experiment procedure

The experiment was executed as follows. First, the server saved the user’s feature data obtained using the sensors in front of and behind the user in the IR space. Next, the participants entered the IR space one after another. Then, each client visualized and confirmed the same person among the multiple participants (users). Finally, the user exited the sensing scope range of the multiple sensors together with the other users.

4.3 Experiment contents

The user focus areas were designated as the pre-conditioning process before extracting the user’s color features when entering the IR space. The user’s head, abdomen area, and a portion of the lower body were designated as the user focus areas. The color and pattern features of those focus areas were identified and employed as the user feature data.

4.3.1 Extraction of user color feature

The user color features were extracted using the Kinect sensor. Because the captured color data were in RGB, they were converted using the CIELAB color model conversion formula. The histograms of the A and B values were extracted and used as the features; the L values, which correspond to brightness in CIELAB, were excluded.

Figure 5 illustrates the RGB image of the user focus areas, and the image converted into CIELAB. Figure 6 presents the histograms on A and B channels of CIELAB. The highest weight was applied to the user color feature in the experiment. From the experiment, it was determined that, when the color feature weight was increased, the accuracy in identifying the same person in multiple users showed the highest value.

4.3.2 Extraction of user material and pattern features

As the experiment pre-process, histograms of material and patterns of user focus areas were extracted by applying LBP on those areas. Figure 7 represents an application of LBP on images of the areas. Figure 8 depicts histograms of LBP applied to these images.

4.3.3 Extraction of user height features

The distance between the user and sensor, as well as the user height, were used as features. When the user height feature was weighted more heavily than the color, texture, or pattern features, the identification accuracy was not satisfactory: color, textures, and patterns have relatively distinctive properties, whereas the user height was measured by the sensors with some error. Nevertheless, good performance was shown when a significant difference existed in the heights of multiple users.

4.3.4 User feature data synchronization

The following procedure was executed to present the identification result by synchronizing the same-person data to all clients. First, the user feature data were extracted for each user, and the collected feature data were saved in the user feature database in the server. Each client extracted the user feature data per frame and transferred the data to the server. The server estimated the similarity by comparing the feature data from each client with the feature data saved in it. The feature data from every client went through this similarity estimation against the user feature database. The user identity with the highest similarity and the final similarity after the estimation were transferred to all clients for synchronization.
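The synchronization flow can be illustrated with a small in-memory sketch. It is not the authors' implementation: the class names, the message fields, and the `overlap` placeholder similarity (a simple histogram intersection standing in for the final similarity of Section 3.3) are hypothetical, and real clients would communicate over a network rather than through method calls.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Sequence


@dataclass
class SyncMessage:
    """Result broadcast to every client: matched identity and its similarity."""
    user_id: int
    similarity: float


@dataclass
class Client:
    name: str
    inbox: List[SyncMessage] = field(default_factory=list)

    def receive(self, message: SyncMessage) -> None:
        self.inbox.append(message)


@dataclass
class Server:
    similarity: Callable[[Sequence[float], Sequence[float]], float]
    database: Dict[int, Sequence[float]] = field(default_factory=dict)
    clients: List[Client] = field(default_factory=list)

    def register(self, user_id: int, features: Sequence[float]) -> None:
        """Save a user's feature data in the user feature database."""
        self.database[user_id] = features

    def on_frame(self, features: Sequence[float]) -> SyncMessage:
        """Match one client's per-frame features and synchronize all clients."""
        if not self.database:
            raise ValueError("user feature database is empty")
        user_id, score = max(
            ((uid, self.similarity(features, stored))
             for uid, stored in self.database.items()),
            key=lambda pair: pair[1],
        )
        message = SyncMessage(user_id, score)
        for client in self.clients:
            client.receive(message)
        return message


def overlap(p: Sequence[float], q: Sequence[float]) -> float:
    """Placeholder similarity: histogram intersection of two feature vectors."""
    return sum(min(a, b) for a, b in zip(p, q))


front, back = Client("front"), Client("back")
server = Server(similarity=overlap, clients=[front, back])
server.register(1, [0.7, 0.2, 0.1])
server.register(2, [0.1, 0.2, 0.7])
result = server.on_frame([0.6, 0.3, 0.1])
```

After the call, both clients hold the same message, so a user seen by only one sensor still carries the same identity on every client.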

Figure 9 illustrates the system structure for identifying the same person.

4.4 Experimental results and analysis

The experiment was executed based on the experiment conditions described in Section 4.1. Because our system runs at 30 fps, it does not drop any frame captured from Kinect. The system’s accuracy in identifying the same person among multiple users was analyzed.

4.4.1 Analyzing the accuracy in identifying the same person exiting and re-entering sensing scope range

The experiment analyzed the accuracy in properly assigning the user identity when a user exited the sensing scope range of multiple sensors and then re-entered it.

Table 2 presents the accuracy of identification for the single user entering the sensing scope range using the sample data from Table 1. In accordance with the experiment results, User 5 shows lower accuracy in the identification than other users. However, all other users have a high accuracy in identification when they exit the sensing scope for a short time and re-enter it.

Table 3 shows the identification accuracy when multiple users enter the sensing scope range. In multi-user identification, the average identification accuracy decreases. Because the skeleton information captured even from a stationary user differs slightly from frame to frame, the color features taken from the user’s body parts also differ. This variation can affect the extraction of color features from the three body parts. To reduce this influence, a method for extracting the same feature from each body part across different frames should be studied in the future. Figure 10 illustrates the experimental results of identifying the same person among multiple users.

4.4.2 Same-person identification under IR space lighting changes

Most previous studies that selected color as the user feature showed that the identification accuracy is significantly reduced when the lighting changes. Accordingly, the present experiment identified multiple users both in bright and dark environments. Moreover, the lighting intensity was changed during the experiment for identifying multiple users. Based on the results, User 1 with a patterned top, User 2 with a pink top, User 3 with a black top and pants, and User 6 with a top with yellow lettering were identified among the six users. However, User 4 was frequently misidentified as User 3, which made it difficult to identify User 4. Table 4 presents the user identification status in accordance with lighting intensity changes.

4.4.3 Identification accuracy when more than two users wore similarly colored and patterned clothing

The proposed algorithm used the color and pattern of the user’s head, along with the color and pattern of the clothing top and pants, as the user features. The experiment was executed to analyze the identification when more than two users wore similarly colored and patterned clothing. Table 5 outlines the results.

5 Conclusion

In existing studies on identifying the same person among multiple users, blocking or overlapping of a user by other users under lighting intensity changes is an obstacle to accurately extracting the user features. This obstacle makes it difficult to identify the same person when a user exits and then re-enters the sensing scope range.

In this study, the CIELAB color model and LBP were used because they are not significantly affected by lighting intensity changes. The sensing scope range was expanded using multiple sensors, and the user feature data were synchronized so that additional sensors could identify a user when one sensor failed to do so. This approach reduced the effect of users blocking or overlapping each other. The performance of the proposed approach was assessed against the stated performance assessment standards. According to the results, the identification accuracy of the approach is 89.75 % when multiple users exit the sensing scope range and then re-enter it. Under lighting intensity changes, the system identifies that four out of five users are the same person. The same person can be identified among multiple users because the user identity is retained without changing, even when the users are blocking or overlapping each other. Because a newer version of Kinect is now available, we plan to upgrade our system to run with Kinect v2. In our continuing research on this system, the proposed method will also be evaluated under a wider range of user states. For instance, the method needs to work even when the distance between the sensor and a user varies and the users perform random gestures.

References

1. Blanco-Gonzalo R, Poh N, Wong R, Sanchez-Reillo R (2015) Time evolution of face recognition in accessible scenarios. HCIS 5(24):1–11

2. Gao Y, Lee HJ (2015) Viewpoint unconstrained face recognition based on affine local descriptors and probabilistic similarity. J Inf Process Syst 11(4):643–654

3. Han J, Pauwels EJ, de Zeeuw PM, de With PHN (2012) Employing a RGB-D sensor for real-time tracking of humans across multiple re-entries in a smart environment. IEEE Trans Consum Electron 58(2):255–263

4. Hu N, Bormann R, Zwölfer T, Kröse B (2014) Multi-user identification and efficient user approaching by fusing robot and ambient sensors. 2014 IEEE International Conference on Robotics and Automation (ICRA), IEEE, pp. 5299–5306

5. Jo HI, Yu HB, Kim K, Sung JH (2015) Motion tracking system for multi-user with multiple Kinects. Int J u-and e-Serv Sci Technol 8(7):99–108

6. Kim JK, Kang WM, Park JH, Kim JS (2014) GWD: gesture-based wearable device for secure and effective information exchange on battlefield environment. J Convergence 5(4):6–10

7. Maes P, Mistry P (2009) Unveiling the “Sixth Sense”. Game-changing wearable tech. TED 2009

8. O’Hara K, Gonzalez G, Sellen A, Penney G, Varnavas A, Mentis H, Criminisi A, Corish R, Rouncefield M, Dastur N, Carrell T (2014) Touchless interaction in surgery. Commun ACM 57(1):70–77

9. Satta R, Pala F, Fumera G, Roli F (2013) Real-time appearance-based person re-identification over multiple Kinect™ cameras. 8th International Conference on Computer Vision Theory and Applications (VISAPP 2013), pp. 407–410

10. Shimura K, Ando Y, Yoshimi T, Mizukawa M (2014) Research on person following system based on RGB-D features by autonomous robot with multi-Kinect sensor. 2014 IEEE/SICE International Symposium on System Integration (SII), IEEE, pp. 304–309

11. Sim S (2015) Simulator for invoked reality environment design. Thesis, Graduate School of Dongguk University

12. Song W, Xi Y, Ikram W, Cho S, Cho K, Um K (2014) Design and implementation of a web camera-based natural user interface engine. Lecture Notes in Electrical Engineering. Future Inf Technol 309:497–502

13. Tran C, Trivedi MM (2012) 3-D posture and gesture recognition for interactivity in smart spaces. IEEE Trans Ind Inf 8(1):178–187

14. Williamson BM, LaViola JJ, Roberts T, Garrity P (2012) Multi-Kinect tracking for dismounted soldier training. Proceedings of the Interservice/Industry Training, Simulation, and Education Conference (I/ITSEC), pp. 1–9

15. Yumak Z, Ren J, Thalmann NM, Yuan J (2014) Tracking and fusion for multiparty interaction with a virtual character and a social robot. SIGGRAPH Asia 2014 Autonomous Virtual Humans and Social Robot for Telepresence. ACM, pp. 3

16. Zerroug A, Cassinelli A, Ishikawa M (2011) Invoked computing: spatial audio and video AR invoked through miming. Proceedings of Virtual Reality International Conference, pp. 31–32

Acknowledgments

This research was supported by the MSIP (Ministry of Science, ICT and Future Planning), Korea, under the ITRC (Information Technology Research Center) support program (IITP-2016-H8501-16-1014) supervised by the IITP (Institute for Information & communications Technology Promotion), and by the Basic Science Research Program through the National Research Foundation of Korea (NRF) funded by the Ministry of Science, ICT and Future Planning (NRF-2015R1A2A2A01003779).

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Jung, Y., Xi, Y., Cho, S. et al. Design and implementation of a same-user identification system in invoked reality space. Multimed Tools Appl 76, 11429–11447 (2017). https://doi.org/10.1007/s11042-016-4117-4

DOI: https://doi.org/10.1007/s11042-016-4117-4