Abstract

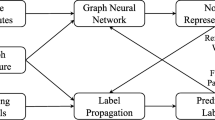

Node classification is a crucial task for analyzing graph-structured data efficiently. Semi-supervised methods have been studied extensively to address the scarcity of labeled data in emerging classes. However, two fundamental weaknesses limit their performance: they either lack the ability to mine latent semantic information between nodes, or fail to capture local and global coupling dependencies between nodes simultaneously. To address these limitations, we propose a novel semantic-enhanced graph neural network with global context representation for semi-supervised node classification. Specifically, we first use a graph convolutional network to learn short-range local dependencies. It considers not only the topological structure relationships between nodes but also the semantic correlations between them, which enhances the representational ability of the node embeddings. Second, we introduce an improved Transformer model to reason about long-range global pairwise relationships. This model has linear computational complexity, which is particularly important for large datasets. Finally, the proposed model performs strongly on various publicly available datasets, demonstrating the superiority of our approach.
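
The abstract describes two stages: a GCN that aggregates over both the topological graph and a semantic-correlation graph, followed by a Transformer-style module with linear complexity. The abstract does not say how the semantic graph is built, which linear attention variant is used, or how the two branches are fused. The NumPy sketch below is therefore illustrative only and is not the authors' implementation (that is in the released code). It assumes three things: a cosine-similarity k-nearest-neighbour graph for semantic correlation, a softmax-separable linear attention in the style of "efficient attention", and a residual sum for fusion. All function names (`knn_semantic_graph`, `linear_attention`, `forward`) are hypothetical, and dense matrices are used for readability.

```python
import numpy as np


def relu(z: np.ndarray) -> np.ndarray:
    return np.maximum(z, 0.0)


def softmax(z: np.ndarray, axis: int) -> np.ndarray:
    """Numerically stable softmax along the given axis."""
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)


def normalized_adjacency(adj: np.ndarray) -> np.ndarray:
    """GCN propagation matrix D^{-1/2} (A + I) D^{-1/2} with self-loops."""
    a_hat = adj + np.eye(adj.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def knn_semantic_graph(x: np.ndarray, k: int) -> np.ndarray:
    """ASSUMPTION: semantic graph = symmetric k-NN graph over cosine feature similarity."""
    xn = x / (np.linalg.norm(x, axis=1, keepdims=True) + 1e-12)
    sim = xn @ xn.T
    np.fill_diagonal(sim, -np.inf)             # never pick a node as its own neighbour
    nbrs = np.argsort(-sim, axis=1)[:, :k]     # k most similar nodes per row
    adj = np.zeros(sim.shape)
    adj[np.repeat(np.arange(x.shape[0]), k), nbrs.ravel()] = 1.0
    return np.maximum(adj, adj.T)              # make the graph undirected


def linear_attention(h, wq, wk, wv):
    """ASSUMPTION: softmax-separable attention, cost O(N d^2) instead of O(N^2 d)."""
    q = softmax(h @ wq, axis=1)   # each node's query normalized over features
    k = softmax(h @ wk, axis=0)   # each key feature normalized over nodes
    v = h @ wv
    context = k.T @ v             # (d, d) global summary; no N x N matrix is built
    return q @ context


def forward(x, adj, params, k=2):
    """Local (topology + semantic GCN) branch, then global linear-attention branch."""
    h = relu(normalized_adjacency(adj) @ x @ params["w_topo"])
    h = h + relu(normalized_adjacency(knn_semantic_graph(x, k)) @ x @ params["w_sem"])
    g = linear_attention(h, params["wq"], params["wk"], params["wv"])
    return (h + g) @ params["w_out"]  # ASSUMPTION: residual sum fuses local and global


rng = np.random.default_rng(0)
n, f, d, c = 6, 5, 8, 3                       # nodes, input features, hidden size, classes
x = rng.normal(size=(n, f))
adj = np.zeros((n, n))
for i in range(n - 1):                        # a simple path graph 0-1-2-3-4-5
    adj[i, i + 1] = adj[i + 1, i] = 1.0
params = {
    "w_topo": rng.normal(size=(f, d)),
    "w_sem": rng.normal(size=(f, d)),
    "wq": rng.normal(size=(d, d)),
    "wk": rng.normal(size=(d, d)),
    "wv": rng.normal(size=(d, d)),
    "w_out": rng.normal(size=(d, c)),
}
logits = forward(x, adj, params)
print(logits.shape)  # (6, 3)
```

The linear cost comes from associativity. Computing `k.T @ v` first yields a d x d summary, so the N x N attention matrix `q @ k.T` is never materialized, yet the result is identical.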

Code availability

All code has been released at https://github.com/GridBard/SEGNN.

Acknowledgements

This work is supported by the National Science Foundation of China (No. 62306152). Additionally, we express our heartfelt gratitude to the reviewers for their invaluable comments and insightful suggestions.

Funding

This work is supported by the National Science Foundation of China (No. 62306152).

Author information

Contributions

All authors contributed to the work. Youcheng Qian and Xueyan Yin wrote the paper together. Youcheng Qian designed and conducted the experiments, and Xueyan Yin suggested improvements. All authors have read and approved the final manuscript.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Ethics approval

Not applicable.

Consent to participate

Not applicable.

Consent for publication

Not applicable.

Additional information

Editors: Dino Ienco, Roberto Interdonato, Pascal Poncelet.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Qian, Y., Yin, X. Semantic-enhanced graph neural networks with global context representation. Mach Learn (2024). https://doi.org/10.1007/s10994-024-06523-0

DOI: https://doi.org/10.1007/s10994-024-06523-0