Abstract

It is conventional wisdom in machine learning and data mining that logical models such as rule sets are more interpretable than other models, and that among such rule-based models, simpler models are more interpretable than more complex ones. In this position paper, we question this latter assumption by focusing on one particular aspect of interpretability, namely the plausibility of models. Roughly speaking, we equate the plausibility of a model with the likeliness that a user accepts it as an explanation for a prediction. In particular, we argue that—all other things being equal—longer explanations may be more convincing than shorter ones, and that the predominant bias for shorter models, which is typically necessary for learning powerful discriminative models, may not be suitable when it comes to user acceptance of the learned models. To that end, we first recapitulate evidence for and against this postulate, and then report the results of an evaluation in a crowdsourcing study based on about 3000 judgments. The results do not reveal a strong preference for simple rules, whereas we can observe a weak preference for longer rules in some domains. We then relate these results to well-known cognitive biases such as the conjunction fallacy, the representative heuristic, or the recognition heuristic, and investigate their relation to rule length and plausibility.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

In their classical definition of the field, Fayyad et al. (1996) have defined knowledge discovery in databases as “the non-trivial process of identifying valid, novel, potentially useful, and ultimately understandable patterns in data.” Research has since progressed considerably in all of these dimensions in a mostly data-driven fashion. The validity of models is typically addressed with predictive evaluation techniques such as significance tests, hold-out sets, or cross validation (Japkowicz and Shah 2011), techniques which are now also increasingly used for pattern evaluation (Webb 2007). The novelty of patterns is typically assessed by comparing their local distribution to expected values, in areas such as novelty detection (Markou and Singh 2003a, b), where the goal is to detect unusual behavior in time series, subgroup discovery (Kralj Novak et al. 2009), which aims at discovering groups of data that have unusual class distributions, or exceptional model mining (Duivesteijn et al. 2016), which generalizes this notion to differences with respect to data models instead of data distributions. The search for useful patterns has mostly been addressed via optimization, where the utility of a pattern is defined via a predefined objective function (Hu and Mojsilovic 2007) or via cost functions that steer the discovery process into the direction of low-cost or high-utility solutions (Elkan 2001). To that end, Kleinberg et al. (1998) formulated a data mining framework based on utility and decision theory.

Arguably, the last dimension, understandability or interpretability, has received the least attention in the literature. The reason why interpretability has rarely been explicitly addressed is that it is often equated with the presence of logical or structured models such as decision trees or rule sets, which have been extensively researched since the early days of machine learning. In fact, much of the research on learning such models has been motivated with their interpretability. For example, Fürnkranz et al. (2012) argue that rules “offer the best trade-off between human and machine understandability”. Similarly, it has been argued that rule induction offers a good “mental fit” to decision-making problems (van den Eijkel 1999; Weihs and Sondhauss 2003). Their main advantage is the simple logical structure of a rule, which can be directly interpreted by experts not familiar with machine learning or data mining concepts. Moreover, rule-based models are highly modular, in the sense that they may be viewed as a collection of local patterns (Fürnkranz 2005; Knobbe et al. 2008; Fürnkranz and Knobbe 2010), whose individual interpretations are often easier to grasp than the complete predictive theory. For example, Lakkaraju et al. (2016) argued that rule sets (which they call decision sets) are more interpretable than decision lists because they can be decomposed into individual local patterns.

Only recently, with the success of highly precise but largely inscrutable deep learning models, has the topic of interpretability received serious attention, and several workshops in various disciplines have been devoted to the topic of learning interpretable models at conferences like ICML (Kim et al. 2016, 2017, 2018), NIPS (Wilson et al. 2016; Tosi et al. 2017; Müller et al. 2017) or CHI (Gillies et al. 2016). Moreover, several books on the subject have already appeared, or are in preparation (Jair Escalante et al. 2018; Molnar 2019), funding agencies like DARPA have recognized the need for explainable AI,Footnote 1 and the General Data Protection Regulation of the EC includes a “right to explanation”, which may have a strong impact on machine learning and data mining solutions (Piatetsky-Shapiro 2018).

The strength of many recent learning algorithms, most notably deep learning (LeCun et al. 2015; Schmidhuber 2015), feature learning (Mikolov et al. 2013), fuzzy systems (Alonso et al. 2015) or topic modeling (Blei 2012), is that latent variables are formed during the learning process. Understanding the meaning of these hidden variables is crucial for transparent and justifiable decisions. Consequently, visualization of such model components has recently received some attention (Chaney and Blei 2012; Zeiler and Fergus 2014; Rothe and Schütze 2016). Alternatively, some research has been devoted to trying to convert such arcane models to more interpretable rule-based or tree-based theories (Andrews et al. 1995; Craven and Shavlik 1997; Schmitz et al. 1999; Zilke et al. 2016) or to develop hybrid models that combine the interpretability of logic with the predictive strength of statistical and probabilistic models (Besold et al. 2017; Tran and d’Avila Garcez 2018; Hu et al. 2016).

Instead of making the entire model interpretable, methods like LIME (Ribeiro et al. 2016) are able to provide local explanations for inscrutable models, allowing to trade off fidelity to the original model with interpretability and complexity of the local model. In fact, Martens and Provost (2014) report on experiments that illustrate that such local, instance-level explanations are preferable to global, document-level models. An interesting aspect of rule-based theories is that they can be considered as hybrids between local and global explanations (Fürnkranz 2005): A rule set may be viewed as a global model, whereas the individual rule that fires for a particular example may be viewed as a local explanation.

Nevertheless, in our view, many of these approaches fall short in that they take the interpretability of rule-based models for granted. Interpretability is often considered to correlate with complexity, with the intuition that simpler models are easier to understand. Principles like Occam’s Razor (Blumer et al. 1987) or Minimum Description Length (MDL) (Rissanen 1978) are commonly used heuristics for model selection, and have shown to be successful in overfitting avoidance. As a consequence, most rule learning algorithms have a strong bias towards simple theories. Despite the necessity of bias for simplicity for overfitting avoidance, we argue in this paper that simpler rules are not necessarily more interpretable, at least not when other aspects of interpretability beyond the mere syntactic readability are considered. This implicit equation of comprehensibility and simplicity was already criticized by, e.g., Pazzani (2000), who argued that “there has been no study that shows that people find smaller models more comprehensible or that the size of a model is the only factor that affects its comprehensibility.” There are also a few systems that explicitly strive for longer rules, and recent evidence has shed some doubt on the assumption that shorter rules are indeed preferred by human experts. We will discuss the relation of rule complexity and interpretability at length in Sect. 2.

Other criteria than accuracy and model complexity have rarely been considered in the learning process. For example, Gabriel et al. (2014) proposed to consider the semantic coherence of its conditions when formulating a rule. Pazzani et al. (2001) show that rules that respect monotonicity constraints are more acceptable to experts than rules that do not. As a consequence, they modify a rule learner to respect such constraints by ignoring attribute values that generally correlate well with other classes than the predicted class. Freitas (2013) reviews these and other approaches, compares several classifier types with respect to their comprehensibility and points out several drawbacks of model size as a single measure of interpretability.

In his pioneering framework for inductive learning, Michalski (1983) stressed its links with cognitive science, noting that “inductive learning has a strong cognitive science flavor”, and postulates that “descriptions generated by inductive inference bear similarity to human knowledge representations” with reference to Hintzman (1978), an elementary text from psychology on human learning. Michalski (1983) considers adherence to the comprehensibility postulate to be “crucial” for inductive rule learning, yet, as discussed above, it is rarely ever explicitly addressed beyond equating it with model simplicity. Miller (2019) makes an important first step by providing a comprehensive review of what is known in the social sciences about explanations and discusses these findings in the context of explainable artificial intelligence.

In this paper, we primarily intend to highlight this gap in machine learning and data mining research. In particular, we focus on the plausibility of rules, which, in our view, is an important aspect that contributes to interpretability (Sect. 2). In addition to the comprehensibility of a model, which we interpret in the sense that the user can understand the learned model well enough to be able to manually apply it to new data, and its justifiability, which specifies whether the model is in line with existing knowledge, we argue that a good model should also be plausible, i.e., be convincing and acceptable to the user. For example, as an extreme case, a default model that always predicts the majority class is very interpretable, but in most cases not very plausible. We will argue that different models may have different degrees of plausibility, even if they have the same discriminative power. Moreover, we believe that the plausibility of a model is—all other things being equal—not related or in some cases even positively correlated with the complexity of a model.

To that end, we also report the results of a crowdsourcing evaluation of learned rules in four domains (Sect. 3). Overall, the performed experiments are based on nearly 3000 judgments collected from 390 distinct participants. The results show that there is indeed no evidence that shorter rules are preferred by humans. On the contrary, we could observe a preference for longer rules in two of the studied domains (Sect. 4). In the following, we then relate this finding to related results in the psychological literature, such as the conjunctive fallacy (Sect. 5) and insensitivity to sample size (Sect. 6). Section 7 is devoted to a discussion of the relevance of conditions in rules, which may not always have the expected influence on one’s preference, in accordance with the recently described weak evidence effect. The remaining sections focus on the interplay of cognitive factors and machine readable semantics: Sect. 8 covers the recognition heuristic, Sect. 9 discusses the effect of semantic coherence on interpretability, and Sect. 10 briefly highlights the lack of methods for learning structured rule-based models.

2 Aspects of interpretability

Interpretability is a very elusive concept which we use in an intuitive sense. Kodratoff (1994) has already observed that it is an ill-defined concept, and has called upon several communities from both academia and industry to tackle this problem, to “find objective definitions of what comprehensibility is”, and to open “the hunt for probably approximate comprehensible learning”. Since then, not much has changed. Lipton (2016) still suggests that the term interpretability is ill-defined. In fact, the concept can be found under different names in the literature, including understandability, interpretability, comprehensibility, plausibility, trustworthiness, justifiability and others. They all have slightly different semantic connotations.

A thorough clarification of this terminology is beyond the scope of this paper, but in the following, we briefly highlight different aspects of interpretability and then proceed to clearly define and distinguish comprehensibility and plausibility, the two aspects that are pertinent to this work.

2.1 Three aspects of interpretability

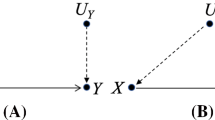

In this section, we attempt to bring some order into the multitude of terms that are used in the context of interpretability. Essentially, we distinguish three aspects of interpretability (see also Fig. 1):

Syntactic interpretability: This aspect is concerned with the ability of the user to comprehend the knowledge that is encoded in the model, in very much the same way as the definition of a term can be understood in a conversation or a textbook.

Epistemic interpretability: This aspect assesses to what extent the model is in line with existing domain knowledge. A model can be interpretable in the sense that the user can operationalize and apply it, but the encoded knowledge or relationships are not well correlated with the user’s prior knowledge. For example, a model which states that the temperature is rising on odd-numbered days and falling on even-numbered days has a high syntactic interpretability but a low epistemic interpretability.

Pragmatic interpretability: Finally, we argue that it is important to capture whether the model serves the intended purpose. A model can be perfectly interpretable in the syntactic and epistemic sense but have a low pragmatic value for the user. For example, the simple model that the temperature tomorrow will be roughly the same as today is obviously very interpretable in the syntactic sense, it is also quite consistent with our experience and therefore interpretable in the epistemic sense, but it may not be satisfying as an acceptable explanation for a weather forecast.

Note that these three categories essentially correspond to the grouping of terms pertinent to interpretability, which has previously been introduced by Bibal and Frénay (2016). They treat terms like comprehensibility, understandability, and mental fit, as essentially synonymous to interpretability, and use them to denote syntactic interpretability. In a second group, Bibal and Frénay (2016) bring notions such as interestingness, usability, and acceptability together, which essentially corresponds to our notion of pragmatic interpretability. Finally, they have justifiability as a separate category, which essentially corresponds to what we mean by epistemic interpretability. We also subsume their notion of explanatory as explainability in this group, which we view as synonymous to justifiability. A key difference to their work lies in our view that all three of the above are different aspects of interpretability, whereas Bibal and Frénay (2016) view the latter two groups as different but related concepts.

We also note in passing that this distinction loosely corresponds to prominent philosophical treatments of explanations (Mayes 2001). Classical theories, such as the deductive-nomological theory of explanation (Hempel and Oppenheim 1948), are based on the validity of the logical connection between premises and conclusion. Instead, Van Fraassen (1977) suggests a pragmatic theory of explanations, according to which the explanation should provide the answer to a (why-)question. Therefore, the same proposition may have different explanations, depending on the information demand. For example, an explanation for why a patient was infected with a certain disease may relate to her medical conditions (for the doctor) or to her habits (for the patient). Thus, pragmatic interpretability is a much more subjective and user-centered notion than epistemic interpretability.

However, clearly, these aspects are not independent. As already noted by Bibal and Frénay (2016), syntactic interpretability is a prerequisite to the other two notions. We also view epistemic interpretability as a prerequisite to pragmatic interpretability: In case a model is not in line with the user’s prior knowledge and therefore has a low epistemic value, it also will have a low pragmatic value to the user. Moreover, the differences between the terms shown in Fig. 1 are soft, and not all previous studies have used them in consistent ways. For example, Muggleton et al. (2018) employ a primarily syntactic notion of comprehensibility (as we will see in Sect. 2.2) and evaluate it by testing whether the participants in their study can successfully apply the acquired knowledge to new problems. In addition, it is also measured whether they can give meaningful names to the explanations they deal with, and whether these names are helpful in applying the knowledge. Thus, these experiments try to capture epistemic aspects as well.

2.2 Comprehensibility

One of the few attempts for an operational definition of interpretability is given in the works of Schmid et al. (2017) and Muggleton et al. (2018), who related the concept to objective measurements such as the time needed for inspecting a learned concept, for applying it in practice, or for giving it a meaningful and correct name. This gives interpretability a clearly syntactic interpretation in the sense defined in Sect. 2.1. Following Muggleton et al. (2018), we refer to this type of syntactic interpretability as comprehensibility and define it as follows:

Definition 1

(Comprehensibility) A model \(\mathbf {m}_1\) is more “comprehensible” than a model \(\mathbf {m}_2\) with respect to a given task if a human user makes fewer mistakes in the application of model \(\mathbf {m}_1\) to new samples drawn randomly from the task domain than when applying \(\mathbf {m}_2\).

Thus, a model is considered to be comprehensible if a user is able to understand all the mental calculations that are prescribed by the model, and can successfully apply the model to new tasks drawn from the same population. A model is more comprehensible than another model if the user’s error rate in doing so is smaller.Footnote 2 Muggleton et al. (2018) study various related, measurable quantities, such as the inspection time, the rate with which the meaning of the predicate is recognized from its definition, or the time used for coming up with a suitable name for a definition.

Relation to alternative notions of interpretability.Piltaver et al. (2016) use a very similar definition when they study how the response time for various data- and model-related tasks such as “classify”, “explain”, “validate”, or “discover” varies with changes in the structure of learned decision trees. Another variant of this definition was suggested by Dhurandhar et al. (2017; 2018), who consider interpretability relative to a target model, typically (but not necessarily) a human user. More precisely, they define a learned model as \(\delta \)-interpretable relative to a target model if the target model can be improved by a factor of \(\delta \) (e.g., w.r.t. predictive accuracy) with information obtained by the learned model. All these notions have in common that they relate interpretability to a performance aspect, in the sense that a task can be performed better or performed at all with the help of the learned model.

As illustrated in Fig. 1, we consider understandability, readability and mental fit as alternative terms for syntactic interpretability. Understandability is considered as a direct synonym for comprehensibility (Bibal and Frénay 2016). Readability clearly corresponds to the syntactic level. The term mental fit may require additional explanation. We used it in the sense of van den Eijkel (1999) to denote the suitability of the representation (i.e. rules) for a given purpose (to explain a classification model).

2.3 Justifiability

A key aspect on interpretability is that a concept is consistent with available domain knowledge, which we call epistemic interpretability. Martens and Baesens (2010) have introduced this concept under the name of justifiability. They consider a model to be more justifiable if it better conforms to domain knowledge, which may be viewed as constraints to which a justifiable model has to conform (hard constraints) or should better conform (soft constraints). Martens et al. (2011) provide a taxonomy of such constraints, which include univariate constraints such as monotonicity as well as multivariate constraints such as preferences for groups of variables.

We paraphrase and slightly generalize this notion in the following definition:

Definition 2

(Justifiability) A model \(\mathbf {m}_1\) is more “justifiable” than a model \(\mathbf {m}_2\) if \(\mathbf {m}_1\) violates fewer constraints that are imposed by the user’s prior knowledge.

Martens et al. (2011) also define an objective measure for justifiability, which essentially corresponds to a weighted sum over the fractions of cases where each variable is needed in order to discriminate between different class values.

Relation to comprehensibility and plausibility. Definition 1 (comprehensibility) addresses the syntactical level of understanding, which is a prerequisite for justifiability. What this definition does not cover are facets of interpretability that relate to one’s background knowledge. For example, an empty model or a default model, classifying all examples as positive, is very simple to interpret, comprehend and apply, but such model will hardly be justifiable.

Clearly, one needs to be able to comprehend the definition of a concept before it can be checked whether it corresponds to existing knowledge. Conversely, we view justifiability as a prerequisite to our notion of plausibility, which we will define more precisely in the next section: a theory that does not conform to domain knowledge is not plausible, but, on the other hand, the user may nevertheless assess different degrees of plausibility to different explanations that are all consistent with our knowledge. In fact, many scientific and in particular philosophical debates are about different, conflicting theories, which are all justifiable but have different degrees of plausibility for different groups of people.

Relation to alternative notions of interpretability. Referring to Fig. 1, we view plausibility as an aspect of epistemic interpretability, similar to notions like explainability, trustworthiness and credibility. Both trustworthiness and credibility imply an evaluation of the model against domain knowledge. Explainability is harder to define and has received multiple definitions in the literature. We essentially follow Gall (2019), who makes a distinction that is similar to our notions of syntactic and epistemic interpretability: in his view, interpretability is to allow the user to grasp the mechanics of a process (similar to the notion of mental fit that we have used above), whereas explainability also implies a deeper understanding of why the process works in this way. This requires the ability to relate the notion to existing knowledge, which is why we view it primarily as an aspect of epistemic interpretability.

Good discriminative rules for the quality of living of a city (Paulheim 2012b)

2.4 Plausibility

In this paper, we focus on a pragmatic aspect of interpretability, which we refer to as plausibility. We primarily view this notion in the sense of “user acceptance” or “user preference”. However, as discussed in Sect. 2.1, this also means that it has to rely on aspects of syntactic and epistemic interpretability as prerequisites. For the purposes of this paper, we define plausibility as follows:

Definition 3

(Plausibility) A model \(\mathbf {m}_1\) is more “plausible” than a model \(\mathbf {m}_2\) if \(\mathbf {m}_1\) is more likely to be accepted by a user than \(\mathbf {m}_2\).

Within this definition, the word “accepted” bears the meaning specified by the Cambridge English DictionaryFootnote 3 as “generally agreed to be satisfactory or right”.

Our definition of plausibility is less objective than the above definition of comprehensibility because it always relates to the subject’s perception of the utility of a given explanation, i.e., its pragmatic aspect. Plausibility, in our view, is inherently subjective, i.e., it relates to the question how useful a model is perceived by a user. Thus, it needs to be evaluated in introspective user studies, where the users explicitly indicate how plausible an explanation is, or which of two explanations appears to be more plausible. Two explanations that can equally well be applied in practice (and thus have the same syntactic interpretability) and are both consistent with existing knowledge (and thus have the same epistemic interpretability), may nevertheless be perceived as having different degrees of plausibility.

Relation to comprehensibility and justifiability. A model may be consistent with domain knowledge, but nevertheless appear implausible. Consider, e.g., the rules shown in Fig. 2, which have been derived by the Explain-a-LOD system (Paulheim and Fürnkranz 2012). The rules provide several possible explanations for why a city has a high quality of living, using Linked Open Data as background knowledge. Clearly, all rules are comprehensible and can be easily applied in practice. They also appear to be justifiable in the sense that all of them appear to be consistent with prior knowledge. For example, while the number of records made in a city is certainly not a prima facie aspect of its quality of living, it is reasonable to assume a correlation between these two variables. Nevertheless, the first three rules appear to be more plausible to a human user, which was also confirmed in an experimental study (Paulheim 2012a, b).

Relation to alternative notions of interpretability. In Fig. 1 we consider interestingness, usability, and acceptability as related terms. All these notions imply some degree of user acceptance or fitness for given purpose.

In the remainder of the paper, we will typically talk about “plausibility” in the sense defined above, but we will sometimes use terms like “interpretability” as a somewhat more general term. We also use “comprehensibility”, mostly when we refer to syntactic interpretability, as discussed and defined above. However, all terms are meant to be interpreted in an intuitive, and non-formal way.Footnote 4

3 Setup of crowdsourcing experiments on plausibility

In the remainder of the paper, we focus on the plausibility of rules. In particular, we report on a series of five crowdsourcing experiments, which relate the perceived plausibility of a rule to various factors such as rule complexity, attribute importance or centrality. As a basis, we used pairs of rules generated by machine learning systems, typically one rule representing a shorter, and the other a longer explanation. Participants were then asked to indicate which one of the pair they preferred.

The selection of crowdsourcing as a means of acquiring data allows us to gather thousands of responses in a manageable time frame while at the same time ensuring our results can be easily replicated.Footnote 5 In the following, we describe the basic setup that is common to all performed experiments. Most of the setup is shared for the subsequent experiments and will not be repeated, only specific deviations will be mentioned. Cognitive science research has different norms for describing experiments than those that are commonly employed in machine learning research.Footnote 6 Also, the parameters of the experiments, such as the amount of payment, is described in somewhat greater detail than usual in machine learning, because of the general sensitivity of the participants to such conditions.

We tried to respect these differences by dividing experiment descriptions here and in subsequent sections into subsections entitled “Material”, “Participants”, “Methodology”, and “Results”, which correspond to the standard outline of an experimental account in cognitive science. In the following, we describe the general setup that applies to all experiments in the following sections, where then the main focus can be put on the results.

3.1 Material

For each experiment, we generated rule pairs generated with two different learning algorithms, and asked users about their preference. The details of the rule generation and selection process are described in this section.

3.1.1 Domains

For the experiment, we used learned rules in four domains (Table 1):

- Mushroom:

contains mushroom records drawn from Field Guide to North American Mushrooms (Lincoff 1981). Being available at the UCI repository (Dua and Karra Taniskidou 2017), it is arguably one of the most frequently used datasets in rule learning research, its main advantage being discrete, understandable attributes.

- Traffic:

is a statistical dataset of death rates in traffic accidents by country, obtained from the WHO.Footnote 7

- Quality:

is a dataset derived from the Mercer Quality of Living index, which collects the perceived quality of living in cities world wide.Footnote 8

- Movies:

is a dataset of movie ratings obtained from MetaCritic.Footnote 9

The last three datasets were derived from the Linked Open Data (LOD) cloud (Ristoski et al. 2016). Originally, they consisted only of a name and a target variable, such as a city and its quality-of-living index, or a movie and its rating. The names were then linked to entities in the public LOD dataset DBpedia, using the method described by Paulheim and Fürnkranz (2012). From that dataset, we extracted the classes to which the entities belong, using the deep classification of YAGO, which defines a very fine grained class hierarchy of several thousand classes. Each class was added as a binary attribute. For example, the entity for the city of Vienna would get the binary features European Capitals, UNESCO World Heritage Sites, etc.

The goal behind these selections was that the domains are general enough so that the participants are able to comprehend a given rule without the need for additional background knowledge, but are nevertheless not able to reliably judge the validity of a given rule. Thus, participants will need to rely on their common sense in order to judge which of the two rules appears to be more convincing. This also implies that we specifically did not expect the users to have expert knowledge in these domains.

3.1.2 Rule generation

We used two different approaches to generate rules for each of the four domains mentioned in the previous section.

Class Association Rules: We used a standard implementation of the Apriori algorithm for association rule learning (Agrawal et al. 1993; Hahsler et al. 2011) and filtered the output for class association rules with a minimum support of 0.01, minimum confidence of 0.5, and a maximum length of 5. Pairs were formed between all rules that correctly classified at least one shared instance. Although other more sophisticated approaches (such as a threshold on the Dice coefficient) were considered, it turned out that the process outlined above produced rule pairs with quite similar values of confidence (i.e., most equal to 1.0), except for the Movies dataset.

Classification Rules: We used a simple top-down greedy hill-climbing algorithm that takes a seed example and generates a pair of rules, one with a regular heuristic (Laplace) and one with its inverted counterpart. As shown by Stecher et al. (2016) and illustrated in Fig. 5, this results in rule pairs that have approximately the same degree of generality but different complexities.

From the resulting rule sets, we selected several rule pairs consisting of a long and a short rule that have the same or a similar degree of generality.Footnote 10 For Quality and Movies, all rule pairs were used. For the Mushroom dataset, we selected rule pairs so that every difference in length (one to five) is represented. All selected rule pairs were pooled, so we did not discriminate between the learning algorithm that was used for generating them. For the Traffic dataset, the rule learner generated a higher number of rules than for the other datasets, which allowed us to select the rule pairs for annotation in such a way that various types of differences between rules in each pair were represented. Since this stratification procedure, detailed in (Kliegr 2017), applied only to one of the datasets, we do not expect this design choice to have a profound impact on the overall results and omit a detailed description here.

As a final step, we automatically translated all rule pairs into human-friendly HTML-formatted text, and randomized the order of the rules in the rule pair. Example rules for the four datasets are shown in Fig. 3. The first column of Table 1 shows the final number of rule pairs generated in each domain.

3.2 Methodology

The generated rule pairs were then evaluated in a user study on a crowdsourcing platform, where participants were asked to issue a preference between the plausibility of the shown rules. This was then correlated to various factors that could have an influence on plausibility.

3.2.1 Definition of crowdsourcing experiments

As the experimental platform we used the CrowdFlower crowdsourcing service.Footnote 11 Similar to the better-known Amazon Mechanical Turk, CrowdFlower allows to distribute questionnaires to participants around the world, who complete them for remuneration. The remuneration is typically a small payment in US dollars—for one judgment relating to one rule we paid 0.07 USD—but some participants may receive the payment in other currencies, including in game currencies (“coins”).

A crowdsourcing task performed in CrowdFlower consists of a sequence of steps:

- 1.

The CrowdFlower platform recruits participants, so-called workers for the task from a pool of its users, who match the level and geographic requirements set by the experimenter. The workers decide to participate in the task based on the payment offered and the description of the task.

- 2.

Participants are presented assignments which contain an illustrative example.

- 3.

If the task contains test questions, each worker has to pass a quiz mode with test questions. Participants learn about the correct answer after they pass the quiz mode, and have the option to contest the correct answer if they consider it incorrect.

- 4.

Participants proceed to the work mode, where they complete the task they have been assigned by the experimenter. The task typically has the form of a questionnaire. If test questions were defined by the experimenter, the CrowdFlower platform randomly inserts test questions into the questionnaire. Failing a predefined proportion of hidden test questions results in removal of the worker from the task. Failing the initial quiz or failing a task can also reduce participants’ accuracy on the CrowdFlower platform. Based on the average accuracy, participants can reach one of the three levels. A higher level gives a user access to additional, possibly better paying tasks.

- 5.

Participants can leave the experiment at any time. To obtain payment for their work, they need to submit at least one page of work. After completing each page of work, the worker can opt to start another page. The maximum number of pages per participant is set by the experimenter. As a consequence, two workers can contribute a different number of judgments to the same task.

- 6.

If a bonus was promised, the qualifying participants receive extra credit.

Example instructions for experiments 1–3. The example rule pair was adjusted based on the dataset. For Experiment 3, the box with the example rule additionally contained values of confidence and support, formatted as shown in Fig. 8

The workers were briefed with task instructions, which described the purpose of the task, gave an example rule, and explained plausibility as the elicited quantity (cf. Fig. 4). As part of the explanation, the participants were given definitions of “plausible” sourced from the Oxford DictionaryFootnote 12 and the Cambridge DictionaryFootnote 13 (British and American English). The individual task descriptions differed for the five tasks and will be described in more detail later in the paper in the corresponding sections. Table 2 shows a brief overview of the factors variables and their data types for the five experiments.

3.2.2 Evaluation

Rule plausibility was elicited on a five-level linguistic scale ranging from “Rule 2 (strong preference)” to “Rule 1 (strong preference)”, which were interpreted as ordinal values from \(-2\) to \(+2\). Evaluations were performed at the level of individual judgments, also called micro-level, i.e., each response was considered to be a single data point, and multiple judgments for the same pair were not aggregated prior to the analysis. By performing the analysis at the micro-level, we avoided the possible loss of information as well as the aggregation bias (Clark and Avery 1976). Also, as shown, for example, by Robinson (1950), the ecological (macro-level) correlations are generally larger than the micro-level correlations, therefore by performing the analysis on the individual level, we obtain more conservative results.

We report rank correlation between a factor and the observed evaluation (Kendall’s \(\tau \), Spearman’s \(\rho \)) and tested whether the coefficients are significantly different from zero. We will refer to the values of Kendall’s \(\tau \) as the primary measure of rank correlation, since according to Kendall and Gibbons (1990) and Newson (2002), the confidence intervals for Spearman’s \(\rho \) are less reliable than confidence intervals for Kendall’s \(\tau \).

For all obtained correlation coefficients we compute the p-value, which is the probability of obtaining a correlation coefficient at least as extreme as the one that was actually observed assuming that the null hypothesis holds, i.e., that there is no correlation between the two variables. The typical cutoff value for rejecting the null hypothesis is \(\alpha =0.05\).

3.3 Participants

The workers in the CrowdFlower platform were invited to participate in individual tasks. CrowdFlower divides the available workforce into three levels depending on the accuracy they obtained on earlier tasks. As the level of the CrowdFlower workers, we chose Level 2, which was described as follows: “Contributors in Level 2 have completed over a hundred Test Questions across a large set of Job types, and have an extremely high overall Accuracy.”.

In order to avoid spurious answers, we also employed a minimum threshold of 180 s for completing a page; workers taking less than this amount of time to complete a page were removed from the job. The maximum time required to complete the assignment was not specified, and the maximum number of judgments per contributor was not limited.

For quality assurance, each participant who decided to accept the task first faced a quiz consisting of a random selection of previously defined test questions. These had the same structure as regular questions but additionally contained the expected correct answer (or answers) as well as an explanation for the answer. We used swap test questions where the order of the conditions was randomly permuted in each of the two pairs so that the participant should not have a preference for either of the two versions. The correct answer and explanation was only shown after the worker had responded to the question. Only workers achieving at least 70% accuracy on test questions could proceed to the main task.

3.3.1 Statistical information about participants

CrowdFlower does not publish demographic data about its base of workers. Nevertheless, for all executed tasks, the platform makes available the location of the worker submitting each judgment. In this section, we use this data to elaborate on the number and geographical distribution of workers participating in Experiments 1–5 described later in this paper.

Table 3a reports on workers participating in Experiments 1–3, where three types of guidelines were used in conjunction with four different datasets, resulting in 9 tasks in total (not all combinations were tried). Experiments 4–5 involved different guidelines (for determining attribute and literal relevance) and the same datasets. The geographical distribution is reported in Table 3b. In total, the reported results are based on 2958 trusted judgments.Footnote 14 Actually, more judgments were collected, but some were excluded due to automated quality checks.

In order to reduce possible effects of language proficiency, we restricted our participants to English-speaking countries. Most judgments (1417) were made by workers from United States, followed by the United Kingdom (837) and Canada (704). The number of distinct participants for each crowdsourcing task is reported in detailed tables describing the results of the corresponding experiments (part column in Tables 4–8). Note that some workers participated in multiple tasks. The total number of distinct participants across all tasks reported in Tables 3a and 3b is 390.

3.3.2 Representativeness of crowdsourcing experiments

There is a number of differences between crowdsourcing and the controlled laboratory environment previously used to run psychological experiments. The central question is to what extent do the cognitive abilities and motivation of participants differ between the crowdsourcing cohort and the controlled laboratory environment. Since there is a small amount of research specifically focusing on the population of the CrowdFlower platform, which we use in our research, we present data related to Amazon Mechanical Turk, under the assumption that the descriptions of the populations will not differ substantially.Footnote 15 This is also supported by previous work such as Wang et al. (2015), which has indicated that the user distribution of CrowdFlower and AMT is comparable.

The population of crowdsourcing workers is a subset of the population of Internet users, which is described in a recent meta study by Paolacci and Chandler (2014) as follows: “Workers tend to be younger (about 30 years old), overeducated, underemployed, less religious, and more liberal than the general population.” While there is limited research on workers’ cognitive abilities, Paolacci et al. (2010) found “no difference between workers, undergraduates, and other Internet users on a self-report measure of numeracy that correlates highly with actual quantitative abilities.” According to a more recent study by Crump et al. (2013), workers learn more slowly than university students and may have difficulties with complex tasks. Possibly the most important observation related to the focus of our study is that according to Paolacci et al. (2010) crowdsourcing workers “exhibit the classic heuristics and biases and pay attention to directions at least as much as subjects from traditional sources.”

4 Interpretability, plausibility, and model complexity

The rules shown in Fig. 2 may suggest that simpler rules are more acceptable than longer rules because the highly rated rules (a) are shorter than the lowly rated rules (b). In fact, there are many good reasons why simpler models should be preferred over more complex models. Obviously, a shorter model can be interpreted with less effort than a more complex model of the same kind, in much the same way as reading one paragraph is quicker than reading one page. Nevertheless, a page of elaborate explanations may be more comprehensible than a single dense paragraph that provides the same information (as we all know from reading research papers).

Other reasons for preferring simpler models include that they are easier to falsify, that there are fewer simpler theories than complex theories, so the a priori chances that a simple theory fits the data are lower, or that simpler rules tend to be more general, cover more examples and their quality estimates are therefore statistically more reliable.

However, one can also find results that throw doubt on this claim. In particular, in cases where not only syntactic interpretability is considered, there are some previous works where it was observed that longer rules are preferred by human experts. In the following, we discuss this issue in some depth, by first reviewing the use of a simplicity bias in machine learning (Sect. 4.1), then taking the alternative point of view and recapitulating works where more complex theories are preferred (Sect. 4.2), and then summarizing the conflicting past evidence for either of the two views (Sect. 4.3). Finally, in Sect. 4.4, we report on the results of our first experiment, which aimed at testing whether rule length has an influence on the interpretability or plausibility of found rules at all, and, if so, whether people tend to prefer longer or shorter rules.

4.1 The bias for simplicity

Michalski (1983) already states that inductive learning algorithms need to incorporate a preference criterion for selecting hypotheses to address the problem of the possibly unlimited number of hypotheses and that this criterion is typically simplicity, referring to philosophical works on simplicity of scientific theories by Kemeny (1953) and Post (1960), which refine the initial postulate attributed to Ockham, which we discuss further below. According to Post (1960), judgments of simplicity should not be made “solely on the linguistic form of the theory”.Footnote 16 This type of simplicity is referred to as linguistic simplicity. A related notion of semantic simplicity is described through the falsifiability criterion (Popper 1935, 1959), which essentially states that simpler theories can be more easily falsified. Third, Post (1960) introduces pragmatic simplicity, which relates to the degree to which the hypothesis can be fitted into a wider context.

Machine learning algorithms typically focus on linguistic or syntactic simplicity, by referring to the description length of the learned hypotheses. The complexity of a rule-based model is typically measured with simple statistics, such as the number of learned rules and their length, or the total number of conditions in the learned model (cf., e.g., Todorovski et al. 2000; Lakkaraju et al. 2016; Minnaert et al. 2015; Wang et al. 2017). Inductive rule learning is typically concerned with learning a set of rules or a rule list that discriminates positive from negative examples (Fürnkranz et al. 2012; Fürnkranz and Kliegr 2015). For this task, a bias towards simplicity is necessary because, for a contradiction-free training set, it is trivial to find a rule set that perfectly explains the training data, simply by converting each example to a maximally specific rule that covers only this example.

Occam’s Razor, “Entia non sunt multiplicanda sine necessitate”,Footnote 17 which is attributed to English philosopher and theologian William of Ockham (c. 1287–1347), has been put forward as support for a principle of parsimony in the philosophy of science (Hahn 1930). In machine learning, this principle is generally interpreted as “given two explanations of the data, all other things being equal, the simpler explanation is preferable” (Blumer et al. 1987), or simply “choose the shortest explanation for the observed data” (Mitchell 1997). While it is well-known that striving for simplicity often yields better predictive results—mostly because pruning or regularization techniques help to avoid overfitting—the exact formulation of the principle is still subject to debate (Domingos 1999), and several cases have been observed where more complex theories perform better (Murphy and Pazzani 1994; Webb 1996; Bensusan 1998).

Much of this debate focuses on the aspect of predictive accuracy. When it comes to understandability, the idea that simpler rules are more comprehensible is typically unchallenged. A nice counterexample is due to Munroe (2013), who observed that route directions like “take every left that doesn’t put you on a prime-numbered highway or street named for a president” could be most compressive but considerably less comprehensive. Although Domingos (1999) argues in his critical review that it is theoretically and empirically false to favor the simpler of two models with the same training-set error on the grounds that this would lead to lower generalization error, he concludes that Occam’s Razor is nevertheless relevant for machine learning but should be interpreted as a preference for more comprehensible (rather than simple) models. Here, the term “comprehensible” clearly does not refer to syntactical length.

A particular implementation of Occam’s razor in machine learning is the minimum description length (MDL; Rissanen 1978) or minimum message length (MMLFootnote 18; Wallace and Boulton 1968) principle which is an information-theoretic formulation of the principle that smaller models should be preferred (Grünwald 2007). The description length that should be minimized is the sum of the complexity of the model plus the complexity of the data encoded given the model. In this way, both the complexity and the accuracy of a model can be traded off: the description length of an empty model consists only of the data part, and it can be compared to the description length of a perfect model, which does not need additional information to encode the data. The theoretical foundation of this principle is based on the Kolmogorov complexity (Li and Vitányi 1993), the essentially uncomputable length of the smallest model of the data. In practice, different coding schemes have been developed for encoding models and data and have, e.g., been used as selection or pruning criteria in decision tree induction (Needham and Dowe 2001; Mehta et al. 1995), inductive rule learning (Quinlan 1990; Cohen 1995; Pfahringer 1995) or for pattern evaluation (Vreeken et al. 2011). The ability to compress information has also been proposed as a basis for human comprehension and thus forms the backbone of many standard intelligence tests, which aim at detecting patterns in data. Psychometric artificial intelligence (Bringsjord 2011) extends this definition to AI in general. For an extensive treatment of the role of compression in measuring human and machine intelligence, we refer the reader to Hernández-Orallo (2017).

Many works make the assumption that the interpretability of a rule-based model can be measured by measures that relate to the complexity of the model, such as the number of rules or the number conditions. A maybe prototypical example is the Interpretable Classification Rule Mining (ICRM) algorithm, which “is designed to maximize the comprehensibility of the classifier by minimizing the number of rules and the number of conditions” via an evolutionary process (Cano et al. 2013). Similarly, Minnaert et al. (2015) investigate a rule learner that is able to optimize multiple criteria and evaluate it by investigating the Pareto front between accuracy and comprehensibility, where the latter is measured with the number of rules. Lakkaraju et al. (2016) propose a method for learning rule sets that simultaneously optimizes accuracy and interpretability, where the latter is again measured by several conventional data-driven criteria such as rule overlap, coverage of the rule set, and the number of conditions and rules in the set. Most of these works clearly focus on syntactic interpretability.

4.2 The bias for complexity

Even though most systems have a bias toward simpler theories for the sake of overfitting avoidance and increased accuracy, some rule learning algorithms strive for more complex rules and have good reasons for doing so. Already Michalski (1983) has noted that there are two different kinds of rules, discriminative and characteristic. Discriminative rules can quickly discriminate an object of one category from objects of other categories. A simple example is the rule

which states that an animal with a trunk is an elephant. This implication provides a simple but effective rule for recognizing elephants among all animals. However, it does not provide a very clear picture of the properties of the elements of the target class. For example, from the above rule, we do not understand that elephants are also very large and heavy animals with a thick gray skin, tusks and big ears.

Characteristic rules, on the other hand, try to capture all properties that are common to the objects of the target class. A rule for characterizing elephants could be

Note that here the implication sign is reversed: we list all properties that are implied by the target class, i.e., by an animal being an elephant. Even though discriminative rules are easier to comprehend in the syntactic sense, we argue that characteristic rules are often more interpretable than discriminative rules from a pragmatic point of view. For example, in a customer profiling application, we might prefer to not only list a few characteristics that discriminate one customer group from the other, but are interested in all characteristics of each customer group.

The distinction between characteristic and discriminative rule is also reminiscent of the distinction between defining and characteristic features of categories. Smith et al. (1974)Footnote 19 argue that both of them are used for similarity-based assessments of categories to objects, but that only the defining features are eventually used when similarity-based categorization over all features does not give a conclusive positive or negative answer.

Characteristic rules are very much related to formal concept analysis (Wille 1982; Ganter and Wille 1999). Informally, a concept is defined by its intent (the description of the concept, i.e., the conditions of its defining rule) and its extent (the instances that are covered by these conditions). A formal concept is then a concept where the extension and the intension are Pareto-maximal, i.e., a concept where no conditions can be added without reducing the number of covered examples. In Michalski’s terminology, a formal concept is both discriminative and characteristic, i.e., a rule where the head is equivalent to the body.

It is well-known that formal concepts correspond to closed itemsets in association rule mining, i.e., to maximally specific itemsets (Stumme et al. 2002). Closed itemsets have been primarily mined because they are a unique and compact representative of equivalence classes of itemsets, which all cover the same instances (Zaki and Hsiao 2002). However, while all itemsets in such an equivalence class are equivalent with respect to their support, they may not be equivalent with respect to their understandability or interestingness.

Gamberger and Lavrač (2003) introduce supporting factors as a means for complementing the explanation delivered by conventional learned rules. Essentially, they are additional attributes that are not part of the learned rule, but nevertheless have very different distributions with respect to the classes of the application domain. In a way, enriching a rule with such supporting factors is quite similar to computing the closure of a rule. In line with the results of Kononenko (1993), medical experts found that these supporting factors increase the plausibility of the found rules.

Stecher et al. (2014) introduced so-called inverted heuristics for inductive rule learning. The key idea behind them is a rather technical observation based on a visualization of the behavior of rule learning heuristics in coverage space (Fürnkranz and Flach 2005), namely that the evaluation of rule refinements is based on a bottom-up point of view, whereas the refinement process proceeds top-down, in a general-to-specific fashion. As a remedy, it was proposed to “invert” the point of view, resulting in heuristics that pay more attention to maintaining high coverage on the positive examples, whereas conventional heuristics focus more on quickly excluding negative examples. Somewhat unexpectedly, it turned out that this results in longer rules, which resemble characteristic rules instead of the conventionally learned discriminative rules. For example, Fig. 5 shows the two decision lists that have been found for the Mushroom dataset with the conventional Laplace heuristic \(\text {h}_{\text {Lap}}\) (top) and its inverted counterpart  (bottom). Although fewer rules are learned with

(bottom). Although fewer rules are learned with  , and thus the individual rules are more general on average, they are also considerably longer. Intuitively, these rules also look more convincing, because the first set of rules often only uses a single criterion (e.g., odor) to discriminate between edible and poisonous mushrooms. Thus, even though the shorter rules may be more comprehensible in the syntactic sense, the longer rules appear to be more plausible. Stecher et al. (2016) and Valmarska et al. (2017) investigated the suitability of such rules for subgroup discovery, with somewhat inconclusive results.

, and thus the individual rules are more general on average, they are also considerably longer. Intuitively, these rules also look more convincing, because the first set of rules often only uses a single criterion (e.g., odor) to discriminate between edible and poisonous mushrooms. Thus, even though the shorter rules may be more comprehensible in the syntactic sense, the longer rules appear to be more plausible. Stecher et al. (2016) and Valmarska et al. (2017) investigated the suitability of such rules for subgroup discovery, with somewhat inconclusive results.

4.3 Conflicting evidence

The above-mentioned examples should help to motivate that the complexity of models may have an effect on the interpretability of a model. Even in cases where a simpler and a more complex rule covers the same number of examples, shorter rules are not necessarily more interpretable, at least not when other aspects of interpretability beyond syntactic comprehensibility are considered. There are a few isolated empirical studies that add to this picture. However, the results on the relation between the size of representation and interpretability are limited and conflicting, partly because different aspects of interpretability are not clearly discriminated.

Larger models are less interpretable. Huysmans et al. (2011) were among the first that actually tried to empirically validate the often implicitly made claim that smaller models are more interpretable. In particular, they related increased complexity to measurable events such as a decrease in answer accuracy, an increase in answer time, and a decrease in confidence. From this, they concluded that smaller models tend to be more interpretable, proposing that there is a certain complexity threshold that limits the practical utility of a model. However, they also noted that in parts of their study, the correlation of model complexity with utility was less pronounced. The study also does not report whether the participants of their study had any domain knowledge relating to the used data, so that it cannot be ruled out that the obtained result was caused by lack of domain knowledge.Footnote 20 A similar study was later conducted by Piltaver et al. (2016), who found a clear relationship between model complexity and interpretability in decision trees.

In most previous works, interpretability was interpreted in the sense of syntactic comprehensibility, i.e., the pragmatic or epistemic aspects of interpretability were not addressed.

Larger models are more interpretable. A direct evaluation of the perceived interpretability of classification models has been performed by Allahyari and Lavesson (2011). They elicited preferences on pairs of models, which were generated from two UCI datasets: Labor and Contact Lenses. What is unique to this study is that the analysis took into account the participants’ estimated knowledge about the domain of each of the datasets. On Labor, they were expected to have good domain knowledge but not so for Contact Lenses. The study was performed with 100 students and involved several decision tree induction algorithms (J48, RIDOR, ID3) as well as rule learners (PRISM, Rep, JRip). It was found that larger models were considered as more comprehensible than smaller models on the Labor dataset, whereas the users showed the opposite preference for Contact Lenses. Allahyari and Lavesson (2011) explain the discrepancy with the lack of prior knowledge for Contact Lenses, which makes it harder to understand complex models, whereas in the case of Labor, “...the larger or more complex classifiers did not diminish the understanding of the decision process, but may have even increased it through providing more steps and including more attributes for each decision step.” In an earlier study, Kononenko (1993) found that medical experts rejected rules learned by a decision tree algorithm because they found them to be too short. Instead, they preferred explanations that were derived from a Naïve Bayes classifier, which essentially showed weights for all attributes, structured into confirming and rejecting attributes.

To some extent, the results may appear to be inconclusive because the different studies do not clearly discriminate between different aspects of interpretability. Most of the results that report that simpler models are more interpretable refer to syntactic interpretability, whereas, e.g., Allahyari and Lavesson (2011) tackle epistemic interpretability by taking the users’ prior knowledge into account. Similarly, the study of Kononenko (1993) has aspects of epistemic interpretability, in that “too short” explanations contradict the experts’ experience. pragmatic interpretability of the models has not been explicitly addressed, nor are we aware of any studies that explicitly relate plausibility to model complexity.

4.4 Experiment 1: Are shorter rules more plausible?

Motivated by the somewhat inconclusive evidence in previous works on interpretability and complexity, we set up a crowdsourcing experiment that specifically focuses on the aspect of plausibility. In this and the experiments reported in subsequent sections, the basic experimental setup follows the one discussed in Sect. 3. Here, we only note task-specific aspects.

Example rule pair used in experiments 1–3. For Experiment 3, the description of the rule also contained values of confidence and support, formatted as shown in Fig. 8

Material. The questionnaires presented pairs of rules as described in Sect. 3.1.2, and asked the participants to give (a) judgment which rule in each pair is more preferred and (b) optionally a textual explanation for the judgment. A sample question is shown in Fig. 6. The judgments were elicited using a drop down box, where the participants could choose from the following five options: “Rule 1 (strong preference)”, “Rule 1 (weak preference)”, “No preference”, “Rule 2 (weak preference)”, “Rule 2 (strong preference)”. As shown in Fig. 6, the definition of plausibility was accessible to participants at all times since it was featured below the drop-down box. As optional input, the workers could provide a textual explanation of their reasoning behind the assigned preference, which we informally evaluated but which is not further considered in the analyses reported in this paper.

Participants. The number of judgments per rule pair for this experiment was 5 for the Traffic, Quality, and Movies datasets. The Mushroom dataset had only 10 rule pairs, therefore we opted to collect 25 judgments for each rule pair in this dataset.

Results. Table 4 summarizes the results of this crowdsourcing experiment. In total, we collected 1002 responses, which is on average 6.3 judgments for each of the 158 rule pairs. On two of the datasets, Quality and Mushroom, there was a strong, statistically significant positive correlation between rule length and the observed plausibility of the rule, i.e., longer rules were preferred. In the other two datasets, Traffic and Movies, no significant difference could be observed in either way.

In any case, these results show that there is no negative correlation between rule length and plausibility. In fact, in two of the four datasets, we even observed a positive correlation, meaning that in these cases, longer rules were preferred.

5 The conjunction fallacy

Human-perceived plausibility of a hypothesis has been extensively studied in cognitive science. The best-known cognitive phenomenon related to our focus area of the influence of the number of conditions in a rule on its plausibility is the conjunctive fallacy. This fallacy falls into the research program on cognitive biases and heuristics carried out by Amos Tversky and Daniel Kahneman since the 1970s. The outcome of this research program can be succinctly summarized by a quotation from Kahneman’s Nobel Prize lecture at Stockholm University on December 8, 2002:

“\(\ldots \), it is safe to assume that similarity is more accessible than probability, that changes are more accessible than absolute values, that averages are more accessible than sums, and that the accessibility of a rule of logic or statistics can be temporarily increased by a reminder.” (Kahneman 2003)

In this section, we will briefly review some aspects of this program, highlighting those that seem to be important for inductive rule learning. For a more thorough review, we refer to Kahneman et al. (1982) and Gilovich et al. (2002), a more recent, very accessible introduction can be found in Kahneman (2011).

The Linda problem (Tversky and Kahneman 1983)

5.1 The Linda problem

The conjunctive fallacy is in the literature often defined via the “Linda” problem. In this problem, participants are asked whether they consider it more plausible that a person Linda is more likely to be (a) a bank teller or (b) a feminist bank teller (Fig. 7). Tversky and Kahneman (1983) report that based on the provided characteristics of Linda, 85% of the participants indicate (b) as the more probable option. This was essentially confirmed in by various independent studies, even though the actual proportions may vary. In particular, similar results could be observed across multiple settings (hypothetical scenarios, real-life domains), as well as for various kinds of participants (university students, children, experts, as well as statistically sophisticated individuals) (Tentori and Crupi 2012).

However, it is easy to see that the preference for (b) is in conflict with elementary laws of probabilities. Essentially, in this example, participants are asked to compare conditional probabilities \(\Pr (F \wedge B \mid L)\) and \(\Pr (B\mid L)\), where B refers to “bank teller”, F to “active in feminist movement” and L to the description of Linda. Of course, the probability of a conjunction, \(\Pr (A \wedge B)\), cannot exceed the probability of its constituents, \(\Pr (A)\) and \(\Pr (B)\) (Tversky and Kahneman 1983). In other words, as it always holds for the Linda problem that \(\Pr (F \wedge B \mid L) \le \Pr (B \mid L)\), the preference for alternative \(F\wedge B\) (option (b) in Fig. 7) is a logical fallacy.

5.2 The representativeness heuristic

According to Tversky and Kahneman (1983), the results of the conjunctive fallacy experiments manifest that “a conjunction can be more representative than one of its constituents”. It is a symptom of a more general phenomenon, namely that people tend to overestimate the probabilities of representative events and underestimate those of less representative ones. The reason is attributed to the application of the representativeness heuristic. This heuristic provides humans with means for assessing a probability of an uncertain event. According to the representativeness heuristic, the probability that an object A belongs to a class B is evaluated “by the degree to which A is representative of B, that is by the degree to which A resembles B” (Tversky and Kahneman 1974).

This heuristic relates to the tendency to make judgments based on similarity, based on a rule “like goes with like”. According to Gilovich and Savitsky (2002), the representativeness heuristic can be held accountable for a number of widely held false and pseudo-scientific beliefs, including those in astrology or graphology.Footnote 21 It can also inhibit valid beliefs that do not meet the requirements of resemblance.

A related phenomenon is that people often tend to misinterpret the meaning of the logical connective ‘and’. Hertwig et al. (2008) hypothesized that the conjunctive fallacy could be caused by “a misunderstanding about conjunction”, i.e., by a different interpretation of ‘probability’ and ‘and’ by the participants than assumed by the experimenters. They discussed that ‘and’ in natural language can express several relationships, including temporal order, causal relationship, and most importantly, can also indicate a collection of sets instead of their intersection. For example, the sentence “He invited friends and colleagues to the party” does not mean that all people at the party were both colleagues and friends. According to Sides et al. (2002), ‘and’ ceases to be ambiguous when it is used to connect propositions rather than categories. The authors give the following example of a sentence that is not prone to misunderstanding: “IBM stock will rise tomorrow and Disney stock will fall tomorrow”. Similar wording of rule learning results may be, despite its verbosity, preferred. We further conjecture that representations that visually express the semantics of ‘and’ such as decision trees may be preferred over rules, which do not provide such visual guidance.

5.3 Experiment 2: misunderstanding of ‘and’ in inductively learned rules

Given its omnipresence in rule learning results, it is vital to assess to what degree the ‘and’ connective is misunderstood when rule learning results are interpreted. In order to gauge the effect of the conjunctive fallacy, we carried out a separate set of crowdsourcing tasks, To control for a misunderstanding of ‘and’, the group of workers approached in Experiment 2 additionally received intersection test questions which were intended to ensure that all participants understand the and conjunction the same way it is defined in the probability calculus. In order to correctly answer these, the respondent had to realize that the antecedent of one of the rules contains mutually exclusive conditions. The correct answer was a weak or strong preference for a rule which did not contain the mutually exclusive conditions.

Material. The participants were presented with the same rule pairs as in Experiment 1 (Group 1). The difference between Experiment 1 and Experiment 2 was only one manipulation: instructions in Experiment 2 additionally contained the intersection test questions, not present in Experiment 1. We refer to the participants that received these test questions as Group 2.

Participants. Same as for Experiment 1 described earlier. There was one small change for the Mushroom dataset, where for economical constraints we collected 15 judgments for each rule pair within Experiment 2, instead of 25 collected in Experiment 1.

Results. We state the following proposition: The effect of higher perceived interpretability of longer rules disappears when it is ensured that participants understand the semantics of the ‘and’ conjunction. The corresponding null hypothesis is that the correlation between rule length and plausibility is no longer statistically significantly different from zero for participants that successfully completed the intersection test questions (Group 2). We focus on the analysis on Mushroom and Quality datasets on which we had initially observed a higher plausibility of longer rules.

The results presented in Table 5 show that the correlation coefficient is still statistically significantly different from zero for the Mushroom dataset with Kendall’s \(\tau \) at 0.28 (\(p<0.0001\)), but not for the Quality dataset, which has \(\tau \) not different from zero at \(p<0.05\) (albeit at a much higher variance). This suggests that at least on the Mushroom dataset, there are other factors apart from “misunderstanding of and” that cause longer rules to be perceived as more plausible. We will take a look at some possible causes in the following sections.

6 Insensitivity to sample size

In the previous sections, we have motivated that rule length is by itself not an indicator for the plausibility of a rule if other factors such as the support and the confidence of the rule are equal. In this and following sections, we will discuss the influence of these and a few alternative factors, partly motivated by results from the psychological literature. The goal is to motivate some directions for future research on the interpretability and plausibility of learned concepts.

6.1 Support and confidence

In the terminology used within the scope of cognitive science (Griffin and Tversky 1992), confidence corresponds to the strength of the evidence and support to the weight of the evidence. Results in cognitive science for the strength and weight of evidence suggest that the weight is systematically undervalued while the strength is overvalued. According to Camerer and Weber (1992), this was, e.g., already mentioned by Keynes (1922), who drew attention to the problem of balancing the likelihood of the judgment and the weight of the evidence in the assessed likelihood. In particular, Tversky and Kahneman (1971) have argued that human analysts are unable to appreciate the reduction of variance and the corresponding increase in reliability of the confidence estimate with increasing values of support. This bias is known as insensitivity to sample size, and essentially describes the human tendency to neglect the following two principles: a) more variance is likely to occur in smaller samples, b) larger samples provide less variance and better evidence. Thus, people underestimate the increased benefit of higher robustness of estimates made on a larger sample.

In the previous experiments, we controlled the rules selected into the pairs, so they mostly had identical or nearly identical confidence and support. Furthermore, the confidence and support values of the shown rules were not revealed to the participants during the experiments. However, in real situations, rules in the output of inductive rule learning have varying quality, which is communicated mainly by the values of confidence and support. Given that longer rules can fit the data better, they tend to be higher on confidence and lower on support. This implies that if confronted with two rules of different lengths, where the longer has a higher confidence and the shorter a higher support, the analyst may prefer the longer rule with higher confidence (all other factors equal). These deliberations lead us to the following proposition: When both confidence and support are explicitly revealed, confidence but not support will positively increase rule plausibility.

6.2 Experiment 3: Is rule confidence perceived as more important than support?

We aim to evaluate the effect of explicitly revealed confidence (strength) and support (weight) on rule preference. In order to gauge the effect of rule quality measures confidence and support, we performed an additional experiment.

Material. The participants were presented with rule pairs, like in the previous two experiments. We used only rule pairs from the Movies dataset, where the differences in confidence and support between the rules in the pairs were largest. The only difference in the setup between Experiment 1 and Experiment 3 was that participants now also received information about the number of correctly and incorrectly covered instances for each rule, along with the support and confidence values. Figure 8 shows an example of this additional information provided to the participants. Workers that received this extra information are referred to as Group 3.

Participants. This setup was the same as for the preceding two experiments.

Results. Table 6 shows the correlations of the rule quality measures confidence and support with plausibility. It can be seen that there is a relation to confidence but not to support, even though both were explicitly present in descriptions of rules for Group 3. Thus, our result supports the hypothesis that insensitivity to sample size effect is applicable to the interpretation of inductively learned rules. In other words, when both confidence and support are stated, confidence positively affects the preference for a rule, whereas support tends to have no impact.

The results also show that the relationship between revealed rule confidence and plausibility is causal. This follows from confidence not being correlated with plausibility in the original experiment (Group 1 in Fig. 6), which differed only via the absence of the explicitly revealed information about rule quality. While such a conclusion is intuitive, to our knowledge it has not yet been empirically confirmed before.

7 Relevance of conditions in rule

An obvious factor that can determine the perceived plausibility of a proposed rule is how relevant it appears to be. Of course, rules that contain more relevant conditions will be considered to be more acceptable. One way of measuring this could be in the strength of the connection between the condition (or conjunction of conditions) with the conclusion. However, in our crowdsourcing experiments, we only showed sets of conditions that are equally relevant in the sense that their conjunction covers about the same number of examples in the shown rules or that the rules have a similar strength of the connection. Nevertheless, the perceived or subjective relevance of a condition may be different for different users.