Abstract

The aim of this paper is to develop tractable large deviation approximations for the empirical measure of a small noise diffusion. The starting point is the Freidlin–Wentzell theory, which shows how to approximate via a large deviation principle the invariant distribution of such a diffusion. The rate function of the invariant measure is formulated in terms of quasipotentials, quantities that measure the difficulty of a transition from the neighborhood of one metastable set to another. The theory provides an intuitive and useful approximation for the invariant measure, and along the way many useful related results (e.g., transition rates between metastable states) are also developed. With the specific goal of design of Monte Carlo schemes in mind, we prove large deviation limits for integrals with respect to the empirical measure, where the process is considered over a time interval whose length grows as the noise decreases to zero. In particular, we show how the first and second moments of these integrals can be expressed in terms of quasipotentials. When the dynamics of the process depend on parameters, these approximations can be used for algorithm design, and applications of this sort will appear elsewhere. The use of a small noise limit is well motivated, since in this limit good sampling of the state space becomes most challenging. The proof exploits a regenerative structure, and a number of new techniques are needed to turn large deviation estimates over a regenerative cycle into estimates for the empirical measure and its moments.


1 Introduction

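Among the many interesting results proved by Freidlin and Wentzell in the 1970s and 1980s concerning small random perturbations of dynamical systems, one of particular note is the large deviation principle for the invariant measure of such a system. Consider the small noise diffusion

$$\begin{aligned} dX_{t}^{\varepsilon }=b(X_{t}^{\varepsilon })dt+\sqrt{\varepsilon }\sigma (X_{t}^{\varepsilon })dW_{t},\quad X_{0}^{\varepsilon }=x, \end{aligned}$$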

where \(X_{t}^{\varepsilon }\in {\mathbb {R}}^{d}\), \(b:{\mathbb {R}}^{d}\rightarrow {\mathbb {R}}^{d}\), \(\sigma :{\mathbb {R}}^{d}\rightarrow {\mathbb {R}}^{d} \times {\mathbb {R}}^{k}\) (the \(d\times k\) matrices) and \(W_{t}\in {\mathbb {R}}^{k}\) is a standard Brownian motion. Under mild regularity conditions on b and \(\sigma \), one has that for any \(T\in (0,\infty )\) the processes \(\{X_{\cdot }^{\varepsilon }\}_{\varepsilon >0}\) satisfy a large deviation principle on \(C([0,T]:{\mathbb {R}}^{d})\) with rate function

$$\begin{aligned} I_{T}(\phi )\doteq \int _{0}^{T}\sup _{\alpha \in {\mathbb {R}}^{d}}\left[ \left\langle {\dot{\phi }}_{t}-b(\phi _{t}),\alpha \right\rangle -\frac{1}{2}\left\| \sigma (\phi _{t})^{\prime }\alpha \right\| ^{2}\right] dt \end{aligned}$$

when \(\phi \) is absolutely continuous and \(\phi (0)=x\), and \(I_{T}(\phi )=\infty \) otherwise. If \(\sigma (x)\sigma (x)^{\prime }>0\) (in the sense of symmetric square matrices) for all \(x\in {\mathbb {R}}^{d}\), then one can evaluate the supremum and find

$$\begin{aligned} I_{T}(\phi )=\frac{1}{2}\int _{0}^{T}\left\langle {\dot{\phi }}_{t}-b(\phi _{t}),\left[ \sigma (\phi _{t})\sigma (\phi _{t})^{\prime }\right] ^{-1}({\dot{\phi }}_{t}-b(\phi _{t}))\right\rangle dt. \end{aligned}\tag{1.1}$$

To simplify the discussion, we will assume this non-degeneracy condition. It is also assumed by Freidlin and Wentzell in [12], but can be weakened.

Define the quasipotential V(x, y) for \(x,y \in {\mathbb {R}}^{d}\) by

$$\begin{aligned} V(x,y)\doteq \inf \left\{ I_{T}(\phi ):\phi (0)=x,\phi (T)=y,T<\infty \right\} . \end{aligned}$$

Suppose that \(\{X^{\varepsilon }\}\) is ergodic on a compact manifold \(M\subset {\mathbb {R}}^{d}\) with invariant measure \(\mu ^{\varepsilon } \in {\mathcal {P}}(M)\). Then, under a number of additional assumptions, including assumptions on the structure of the dynamical system \({\dot{X}}_{t}^{0} =b(X_{t}^{0})\), Freidlin and Wentzell [12, Chapter 6] show how to construct a function \(J:M\rightarrow [0,\infty ]\) in terms of V, such that J is the large deviation rate function for \(\{\mu ^{\varepsilon }\}_{\varepsilon >0}\): J has compact level sets, and

$$\begin{aligned}&\liminf _{\varepsilon \rightarrow 0}\varepsilon \log \mu ^{\varepsilon }(G)\ge -\inf _{y\in G}J(y)\text { for open }G\subset M, \nonumber \\&\limsup _{\varepsilon \rightarrow 0}\varepsilon \log \mu ^{\varepsilon }(F)\le -\inf _{y\in F}J(y)\text { for closed }F\subset M. \end{aligned}$$

This gives a very useful approximation to \(\mu ^{\varepsilon }\), and along the way many interesting related results (e.g., transition rates between metastable states) are also developed.

The aim of this paper is to develop large deviation type estimates for a quantity that is closely related to \(\mu ^{\varepsilon }\), which is the empirical measure over an interval \([0,T^{\varepsilon }]\). This is defined by

$$\begin{aligned} \rho ^{\varepsilon }(A)\doteq \frac{1}{T^{\varepsilon }}\int _{0}^{T^{\varepsilon }}1_{A}(X_{t}^{\varepsilon })dt \end{aligned}\tag{1.2}$$

for \(A\in {\mathcal {B}}(M)\). For reasons that will be made precise later on, we will assume \(T^{\varepsilon }\rightarrow \infty \) as \(\varepsilon \rightarrow 0\), and typically \(T^{\varepsilon }\) will grow exponentially in the form \(e^{c/\varepsilon }\) for some \(c>0\).
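To make the empirical measure concrete, the following is a minimal simulation sketch. It assumes that the empirical measure is the normalized occupation time \(\frac{1}{T}\int _{0}^{T}1_{A}(X_{t}^{\varepsilon })dt\), and it uses an Euler–Maruyama discretization of a one-dimensional diffusion with \(\sigma =1\). The double-well drift, the state space \({\mathbb {R}}\) (rather than a compact manifold), the step size, and all function names are assumptions made only for illustration.

```python
import math
import random


def drift(x):
    # Hypothetical double-well drift b(x) = x - x^3 (stable points at -1 and +1).
    return x - x**3


def empirical_measure(eps, T, dt, x0, in_A, seed=0):
    """Euler-Maruyama estimate of the fraction of [0, T] that X spends in A."""
    rng = random.Random(seed)
    n_steps = int(round(T / dt))
    x = x0
    steps_in_A = 0
    for _ in range(n_steps):
        if in_A(x):
            steps_in_A += 1
        # One Euler-Maruyama step for dX = b(X) dt + sqrt(eps) dW (sigma = 1).
        x += drift(x) * dt + math.sqrt(eps * dt) * rng.gauss(0.0, 1.0)
    return steps_in_A / n_steps


def left_well(x):
    # Indicator of the set A = (-inf, 0), i.e. the basin of the left well.
    return x < 0.0


rho = empirical_measure(eps=0.1, T=200.0, dt=0.01, x0=-1.0, in_A=left_well)
print(f"fraction of time spent in the left well: {rho:.3f}")
```

For small \(\varepsilon \) and a fixed horizon, the simulated path rarely leaves the well it starts in, so the occupation fraction says little about the invariant measure. This is one way to see why the time horizon must grow as \(\varepsilon \rightarrow 0\).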

There is of course a large deviation theory for the empirical measure when \(\varepsilon >0\) is held fixed and the length of the time interval tends to infinity (see, e.g., [7, 8]). However, it can be hard to extract information from the corresponding rate function. Our interest in proving large deviations estimates when \(\varepsilon \rightarrow 0\) and \(T^{\varepsilon }\rightarrow \infty \) is in the hope that one will find it easier to extract information in this double limit, analogous to the simplified approximation to \(\mu ^{\varepsilon }\) just mentioned. These results will be applied in [10] to analyze and optimize a Monte Carlo method known as infinite swapping [9, 15] when the noise is small. Small noise models are common in applications and are also the setting in which Monte Carlo methods can have the greatest difficulty. We expect that the general set of results will be useful for other purposes as well.

We note that while developed in the context of small noise diffusions, the collection of results due to Freidlin and Wentzell that are discussed in [12] also hold for other classes of processes, such as scaled stochastic networks, when appropriate conditions are assumed and the finite time sample path large deviation results are available (see, e.g., [19]). We expect that such generalizations are possible for the results we prove as well.

The outline of the paper is as follows. In Sect. 2, we explain our motivation and the relevance of studying the particular quantities that are the topic of the paper. In Sect. 3, we provide definitions and assumptions that are used throughout the paper, and Sect. 4 states the main asymptotic results as well as a related conjecture. Examples that illustrate the results are given in Sect. 5. In Sect. 6, we introduce an important tool for our analysis, the regenerative structure, and with this concept we decompose the original asymptotic problem into two sub-problems that require very different forms of analysis. These two types of asymptotic problems are then analyzed separately in Sects. 7 and 8. In Sect. 9, we combine the partial asymptotic results from Sects. 7 and 8 to prove the main large deviation type results that are stated in Sect. 4. Section 10 gives the proof of a key theorem from Sect. 8, which asserts an approximately exponential distribution for return times that arise in the decomposition based on regenerative structure, as well as a tail bound needed for some integrability arguments. The last section of the paper, Sect. 11, presents the proof of an upper bound for the rate of decay of the variance per unit time in a special case, thereby showing that in this case the lower bounds of Sect. 4 are in a sense tight. To focus on the main discussion, proofs of some lemmas are collected in an Appendix.

Remark 1.1

There are certain time-scaling parameters that play key roles throughout this paper. For the reader’s convenience, we record here where they are first described: \(h_1\) and w are defined in (4.1) and (4.2); c is introduced and its relation to \(h_1\) and w is given in Theorem 4.3; m is introduced at the beginning of Sect. 6.2.

2 Quantities of Interest

The quantities we are interested in are the higher order moments, and in particular second moments, of an integral of a risk-sensitive functional with respect to the empirical measure \(\rho ^{\varepsilon }\) defined in (1.2). To be more precise, the integral is of the form

$$\begin{aligned} \int _{A}e^{-\frac{1}{\varepsilon }f\left( x\right) }\rho ^{\varepsilon }(dx) \end{aligned}\tag{2.1}$$

for some nice (e.g., bounded and continuous) function \(f:M\rightarrow {\mathbb {R}}\) and a closed set \(A\in {\mathcal {B}}(M)\). Note that this integral can also be expressed as

$$\begin{aligned} \frac{1}{T^{\varepsilon }}\int _{0}^{T^{\varepsilon }}e^{-\frac{1}{\varepsilon }f\left( X_{t}^{\varepsilon }\right) }1_{A}\left( X_{t}^{\varepsilon }\right) dt. \end{aligned}\tag{2.2}$$

In order to understand the large deviation behavior of moments of such an integral, we must identify the correct scaling to extract meaningful information. Moreover, as will be shown, there is an important difference between centered moments and ordinary (non-centered) moments.

By the use of the regenerative structure of \(\{X_{t}^{\varepsilon }\}_{t\ge 0} \), we can decompose (2.2) [equivalently (2.1)] into the sum of a random number of independent and identically distributed (iid) random variables, plus a residual term which here we will ignore. To simplify the notation, we temporarily drop the \(\varepsilon \), and without being precise about how the regenerative structure is introduced, let \(Y_{j}\) denote the integral of \(e^{-\frac{1}{\varepsilon }f\left( X_{t}^{\varepsilon }\right) }1_{A}\left( X_{t}^{\varepsilon }\right) \) over a regenerative cycle. (The specific regenerative structure we use will be identified later on.)

Thus, we consider a sequence \(\{Y_{j}\}_{j\in {\mathbb {N}} }\) of iid random variables with finite second moments and want to compare the scaling properties of, for example, the second moment and the second centered moment of \(\frac{1}{n}\sum _{j=1}^{n}Y_{j}\). When used for the small noise system, both n and moments of \(Y_{i}\) will scale exponentially in \(1/\varepsilon \), and n will be random, but for now we assume n is deterministic. The second moment is

$$\begin{aligned} E\left( \frac{1}{n}\sum _{j=1}^{n}Y_{j}\right) ^{2}=\frac{1}{n}EY_{1}^{2}+\frac{n-1}{n}\left( EY_{1}\right) ^{2}, \end{aligned}$$

and the second centered moment is

$$\begin{aligned} \mathrm {Var}\left( \frac{1}{n}\sum _{j=1}^{n}Y_{j}\right) =\frac{1}{n}\mathrm {Var}\left( Y_{1}\right) =\frac{1}{n}\left[ EY_{1}^{2}-\left( EY_{1}\right) ^{2}\right] . \end{aligned}$$

When analyzing the performance of Monte Carlo schemes, one is of course concerned with both bias and variance, but in the situations where we would like to apply the results of this paper one assumes \(T^{\varepsilon }\) is large enough that the bias term is unimportant, so that all we are concerned with is the variance. However, some care will be needed to determine a suitable measure of quality of the algorithm, since as noted \(Y_{i}\) could scale exponentially in \(1/\varepsilon \) with a negative coefficient (exponentially small), while n will be exponentially large.

In the analysis of unbiased accelerated Monte Carlo methods for small noise systems over bounded time intervals (e.g., to estimate escape probabilities), it is standard to use the second moment, which is often easier to analyze, in lieu of the variance [3, Chapter VI], [4, Chapter 14]. This situation corresponds to \(n=1\). The alternative criterion is more convenient since by Jensen’s inequality one can easily establish a best possible rate of decay of the second moment, and estimators are deemed efficient if they possess the optimal rate of decay [3, 4]. However, with n exponentially large this is no longer true. Using the previous calculations, we see that the second moment of \(\frac{1}{n}\sum _{j=1}^{n}Y_{j}\) can be completely dominated by \(\left( EY_{1}\right) ^{2}\), and therefore using this quantity to compare algorithms may be misleading, since our true concern is the variance of \(\frac{1}{n}\sum _{j=1}^{n}Y_{j}\).
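The domination of the second moment by \(\left( EY_{1}\right) ^{2}\) can be checked with a small exact computation. The sketch below evaluates the second moment and the variance of \(\frac{1}{n}\sum _{j=1}^{n}Y_{j}\) for iid \(Y_{j}\), using only the standard identities for iid sums. The numerical values of \(EY_{1}\) and \(EY_{1}^{2}\) and the function name are hypothetical.

```python
from fractions import Fraction


def moments_of_sample_mean(m1, m2, n):
    """Second moment and variance of (1/n) * sum_{j<=n} Y_j for iid Y_j.

    m1 = E[Y_1] and m2 = E[Y_1^2] are supplied by the caller.
    """
    second_moment = m2 / n + Fraction(n - 1, n) * m1**2
    variance = (m2 - m1**2) / n
    return second_moment, variance


# Hypothetical moments: E[Y] = 1/10 and E[Y^2] = 1/50, so Var(Y) = 1/100.
m1 = Fraction(1, 10)
m2 = Fraction(1, 50)
for n in (1, 10, 10**6):
    second, var = moments_of_sample_mean(m1, m2, n)
    print(n, float(second), float(var))
```

As n grows, the second moment stays close to \(\left( EY_{1}\right) ^{2}\) while the variance decays like 1/n, so ranking estimators by second moment alone can hide large differences in variance.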

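This observation suggests that in our study of moments of the empirical measure we should consider only centered moments, and in particular quantities like

$$\begin{aligned} T^{\varepsilon }\mathrm {Var}\left( \int _{A}e^{-\frac{1}{\varepsilon }f\left( x\right) }\rho ^{\varepsilon }(dx)\right) , \end{aligned}$$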

which is the variance per unit time. For Monte Carlo, one wants to minimize the variance per unit time, and to make the problem more tractable we instead try to maximize the decay rate of the variance per unit time. Assuming the limit exists, this is defined by

$$\begin{aligned} \lim _{\varepsilon \rightarrow 0}-\varepsilon \log \left[ T^{\varepsilon }\mathrm {Var}\left( \int _{A}e^{-\frac{1}{\varepsilon }f\left( x\right) }\rho ^{\varepsilon }(dx)\right) \right] , \end{aligned}$$

and so we are especially interested in lower bounds on this decay rate.

Thus, our goal is to develop methods that allow the approximation of at least first and second moments of (2.2). In fact, the methods we introduce can be developed further to obtain large deviation estimates of higher moments if that were needed or desired.

3 Setting of the Problem, Assumptions and Definitions

The process model we would like to consider is an \({\mathbb {R}}^{d}\)-valued solution to an Itô stochastic differential equation (SDE), where the drift so strongly returns the process to some compact set that events involving exit of the process from some larger compact set are so rare that they can effectively be ignored when analyzing the empirical measure. However, to simplify the analysis we follow the convention of [12, Chapter 6], and work with a small noise diffusion that takes values in a compact and connected manifold \(M\subset {\mathbb {R}}^{d}\) of dimension r and with smooth boundary. The precise regularity assumptions for M are given on [12, page 135]. With this convention in mind, we consider a family of diffusion processes \(\{X^{\varepsilon }\}_{\varepsilon \in (0,\infty )},X^{\varepsilon }\in C([0,\infty ):M)\), that satisfy the following condition.

Condition 3.1

Consider continuous \(b:M\rightarrow {\mathbb {R}}^{d}\) and \(\sigma :M\rightarrow {\mathbb {R}}^{d}\times {\mathbb {R}}^{d}\) (the \(d\times d\) matrices) and assume that \(\sigma \) is uniformly nondegenerate, in that there is \(c>0\) such that for any x and any v in the tangent space of M at x, \(\langle v,\sigma (x)\sigma (x)^{\prime }v\rangle \ge c\langle v,v\rangle \). For absolutely continuous \(\phi \in C([0,T]:M)\) define \(I_{T}(\phi )\) by (1.1), where the inverse \(\left[ \sigma (x)\sigma (x)^{\prime }\right] ^{-1}\) is relative to the tangent space of M at x. Let \(I_{T}(\phi )=\infty \) for all other \(\phi \in C([0,T]:M)\). Then, we assume that for each \(T<\infty \), \(\{X^{\varepsilon }_t\}_{0\le t\le T}\) satisfies the large deviation principle with rate function \(I_{T}\), uniformly with respect to the initial condition [4, Definition 1.13].

We note that for such diffusion processes nondegeneracy of the diffusion matrix implies there is a unique invariant measure \(\mu ^{\varepsilon } \in {\mathcal {P}}(M)\). A discussion of weak sufficient conditions under which Condition 3.1 holds appears in [12, Sect. 3, Chapter 5].

Remark 3.2

There are several ways one can approximate a diffusion of the sort described at the beginning of this section by a diffusion on a smooth compact manifold. One such “compactification” of the state space can be obtained by assuming that, for some bounded but large enough rectangle, trajectories that exit the rectangle do not affect the large deviation behavior of quantities of interest, and then extending the coefficients of the process periodically and smoothly off an even larger rectangle to all of \({\mathbb {R}}^{d}\) (a technique sometimes used to bound the state space for purposes of numerical approximation). One can then map \({\mathbb {R}}^{d}\) to a manifold that is topologically equivalent to a torus, and even arrange that the metric structure on the part of the manifold corresponding to the smaller rectangle coincides with a Euclidean metric.

Define the quasipotential \(V(x,y):M\times M\rightarrow [0,\infty )\) by

$$\begin{aligned} V(x,y)\doteq \inf \left\{ I_{T}(\phi ):\phi \in C([0,T]:M),\phi (0)=x,\phi (T)=y,T<\infty \right\} . \end{aligned}\tag{3.1}$$

For a given set \(A\subset M,\) define \(V(x,A)\doteq \inf _{y\in A}V(x,y)\) and \(V(A,y)\doteq \inf _{x\in A}V(x,y).\)

Remark 3.3

For any fixed y and set A, V(x, y) and V(x, A) are both continuous functions of x. Similarly, for any given x and any set A, V(x, y) and V(A, y) are also continuous in y.
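As an illustration of the quasipotential, consider the simplest setting in which it can be computed by hand: a one-dimensional diffusion on the real line with \(\sigma =1\). There, moving along the flow of \({\dot{x}}=b(x)\) is free, while moving against it costs \(2|b|\) per unit length, so V(x, y) is twice the total work done against the drift between x and y. The sketch below evaluates this by the midpoint rule. The double-well drift, the restriction to the real line (so that paths cannot go around a compact manifold), and the function names are assumptions for illustration only.

```python
def b(z):
    # Hypothetical drift b = -U' for the double-well potential U(z) = z**4/4 - z**2/2.
    return z - z**3


def quasipotential_1d(x, y, n=10_000):
    """V(x, y) on the real line with sigma = 1, via the midpoint rule.

    Only the portions of the segment where the drift opposes the direction
    of travel contribute, each at rate 2*|b| per unit length.
    """
    if x == y:
        return 0.0
    direction = 1.0 if y > x else -1.0
    h = abs(y - x) / n
    total = 0.0
    for k in range(n):
        z = x + direction * (k + 0.5) * h
        total += 2.0 * max(-direction * b(z), 0.0) * h
    return total


print(quasipotential_1d(-1.0, 0.0))  # climbing out of the left well
print(quasipotential_1d(0.0, 1.0))   # sliding down into the right well
```

In this example the cost of climbing from the bottom of the left well to the saddle is \(2(U(0)-U(-1))=1/2\), and the descent into the other well is free, which matches the interpretation of V as the difficulty of a transition.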

Definition 3.4

We say that a set \(N\subset M\) is stable if for any \(x\in N,y\notin N\) we have \(V(x,y)>0.\) A set which is not stable is called unstable.

Definition 3.5

We say that \(O\in M\) is an equilibrium point of the ordinary differential equation (ODE) \({\dot{x}}_{t}=b(x_{t})\) if \(b(O)=0.\) Moreover, we say that this equilibrium point O is asymptotically stable if for every neighborhood \({\mathcal {E}}_{1}\) of O (relative to M) there exists a smaller neighborhood \({\mathcal {E}}_{2}\) such that the trajectories of system \({\dot{x}}_{t}=b(x_{t})\) starting in \({\mathcal {E}}_{2}\) converge to O without leaving \({\mathcal {E}}_{1}\) as \(t\rightarrow \infty .\)

Remark 3.6

An asymptotically stable equilibrium point is a stable set, but a stable set might contain no asymptotically stable equilibrium point.

The following restrictions on the structure of the dynamical system in M will be used. These restrictions include the assumption that the equilibrium points are a finite collection. This is a more restrictive framework than that of [12], which allows, e.g., limit cycles. In a remark at the end of this section, we comment on what would be needed to extend to the general setup of [12].

Condition 3.7

There exists a finite number of points \(\{O_{j} \}_{j\in L} \subset M\) with \(L\doteq \{1,2,\ldots ,l\}\) for some \(l\in {\mathbb {N}} \), such that \(\cup _{j\in L}\{O_j\}\) coincides with the \(\omega \)-limit set of the ODE \({\dot{x}}_{t}=b(x_{t})\).

Without loss of generality, we may assume that \(O_{j}\) is stable if and only if \(j\in L_{\mathrm{{s}}}\) where \(L_{\mathrm{{s}}}\doteq \{1,\ldots ,l_{\mathrm{{s}}}\}\) for some \(l_{\mathrm{{s}}}\le l.\)

Next, we give a definition from graph theory which will be used in the statement of the main results.

Definition 3.8

Given a subset \(W\subset L=\{1,\ldots ,l\},\) a directed graph consisting of arrows \(i\rightarrow j\) \((i\in L\setminus W,j\in L,i\ne j)\) is called a W-graph on L if it satisfies the following conditions.

1. Every point \(i\in L\setminus W\) is the initial point of exactly one arrow.

2. For any point \(i\in L\setminus W,\) there exists a sequence of arrows leading from i to some point in W.

We note that we could replace the second condition by the requirement that there are no closed cycles in the graph. We denote by G(W) the set of W-graphs; we shall use the letter g to denote graphs. Moreover, if \(p_{ij}\) (\(i,j\in L,j\ne i\)) are numbers, then \(\prod _{(i\rightarrow j)\in g}p_{ij}\) will be denoted by \(\pi (g).\)

Remark 3.9

We mostly consider the set of \(\{i\}\)-graphs, i.e., \(G(\{i\})\) for some \(i\in L\), and also use G(i) to denote \(G(\{i\}).\) We occasionally consider the set of \(\{i,j\}\)-graphs, i.e., \(G(\{i,j\})\) for some \(i,j\in L\) with \(i\ne j.\) Again, we also use G(i, j) to denote \(G(\{i,j\}).\)

Definition 3.10

For all \(j\in L\), define

$$\begin{aligned} W\left( O_{j}\right) \doteq \min _{g\in G\left( j\right) }\left[ {\textstyle \sum _{\left( m\rightarrow n\right) \in g}} V\left( O_{m},O_{n}\right) \right] \end{aligned}$$

and

$$\begin{aligned} W\left( O_{1}\cup O_{j}\right) \doteq \min _{g\in G\left( 1,j\right) }\left[ {\textstyle \sum _{\left( m\rightarrow n\right) \in g}} V\left( O_{m},O_{n}\right) \right] . \end{aligned}$$

Remark 3.11

Heuristically, if we interpret \(V\left( O_{m},O_{n}\right) \) as the “cost” of moving from \(O_{m}\) to \(O_{n},\) then \(W\left( O_{j}\right) \) is the “least total cost” of reaching \(O_{j}\) from every \(O_{i}\) with \(i\in L\setminus \{j\}.\) According to [12, Theorem 4.1, Chapter 6], one can interpret \(W(O_i)-\min _{j \in L}W(O_j)\) as the decay rate of \(\mu ^\varepsilon (B_\delta (O_i))\), where \(B_\delta (O_i)\) is a small open neighborhood of \(O_i\).
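The "least total cost" interpretation can be made concrete by brute-force enumeration of W-graphs. The sketch below follows Definition 3.8: each point outside W gets exactly one outgoing arrow, and a graph is kept only if every such point leads to W. It then minimizes the sum of costs over the arrows. The three-point cost table V is hypothetical, the function names are assumptions, and the enumeration is exponential in the number of points, so it is meant only for small examples.

```python
from itertools import product


def leads_to(i, g, W):
    # Follow the unique outgoing arrows from i; fail if a cycle occurs before reaching W.
    seen = set()
    while i not in W:
        if i in seen:
            return False
        seen.add(i)
        i = g[i]
    return True


def w_graphs(nodes, W):
    """Yield every W-graph on `nodes` as a dict {i: j} encoding the arrows i -> j."""
    free = [i for i in nodes if i not in W]
    for targets in product(nodes, repeat=len(free)):
        g = dict(zip(free, targets))
        if any(i == j for i, j in g.items()):
            continue  # arrows must join distinct points
        if all(leads_to(i, g, W) for i in free):
            yield g


def min_cost(nodes, W, V):
    """Minimum over W-graphs g of the sum of V[m][n] over the arrows m -> n of g."""
    return min(sum(V[m][n] for m, n in g.items()) for g in w_graphs(nodes, W))


# Hypothetical costs V[m][n], standing in for V(O_m, O_n), on L = {1, 2, 3}.
L = [1, 2, 3]
V = {1: {2: 3, 3: 5}, 2: {1: 1, 3: 4}, 3: {1: 2, 2: 1}}
for j in L:
    print(j, min_cost(L, {j}, V))
```

For this table the minimizing \(\{1\}\)-graph is \(3\rightarrow 2\rightarrow 1\) with total cost 2, and the point with index 1 has the smallest value, so it plays the role of the dominant equilibrium.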

Definition 3.12

We use \(G_{\mathrm{{s}}}\left( W\right) \) to denote the collection of all W-graphs on \(L_{\mathrm{{s}}}=\{1,\ldots ,l_{\mathrm{{s}}}\}\) with \(W\subset L_{\mathrm{{s}}}.\)

We make the following technical assumptions on the structure of the SDE. Let \(B_{\delta }(K)\) denote the \(\delta \)-neighborhood of a set \(K\subset M.\) Recall that \(\mu ^{\varepsilon }\) is the unique invariant probability measure of the diffusion process \(\{X^{\varepsilon }_t\}_{t}.\) The existence of the limits appearing in the first part of the condition is ensured by Theorem 4.1 in [12, Chapter 6].

Condition 3.13

1. There exists a unique asymptotically stable equilibrium point \(O_{1}\) of the system \({\dot{x}}_{t}=b(x_{t})\) such that

$$\begin{aligned}&\lim _{\delta \rightarrow 0}\lim _{\varepsilon \rightarrow 0}-\varepsilon \log \mu ^{\varepsilon }(B_{\delta }(O_{1}))=0, \text { and } \nonumber \\&\lim _{\delta \rightarrow 0}\lim _{\varepsilon \rightarrow 0}-\varepsilon \log \mu ^{\varepsilon }(B_{\delta }(O_{j})) >0 \text{ for } \text{ any } j\in L\setminus \{1\}. \end{aligned}$$

2. All of the eigenvalues of the matrix of partial derivatives of b at \(O_\ell \) relative to M have negative real parts for \(\ell \in L_{\mathrm{{s}}}\).

3. \(b:M\rightarrow {\mathbb {R}}^{d}\) and \(\sigma :M\rightarrow {\mathbb {R}}^{d}\times {\mathbb {R}}^{d}\) are \(C^{1}\).

Remark 3.14

According to [12, Theorem 4.1, Chapter 6] and the first part of Condition 3.13, we know that \(W(O_{j})>W(O_{1})\) for all \(j\in L\setminus \{1\}.\)

Remark 3.15

We comment on the use of the various parts of the condition. Part 1 means that neighborhoods of \(O_{1}\) capture more of the mass as \(\varepsilon \rightarrow 0\) than neighborhoods of any other equilibrium point. It simplifies the analysis greatly, but we expect it could be weakened if desired. Parts 2 and 3 are assumed in [6], which gives an explicit exponential bound on the tail probability of the exit time from the domain of attraction. It is largely because of our reliance on the results of [6] that we must assume that equilibrium sets are points in Condition 3.7, rather than the more general compacta as considered in [12]. Both Condition 3.7 and 3.13 could be weakened if the corresponding versions of the results we use from [6] were available.

Remark 3.16

The quantities \(V(O_i,O_j)\) determine various key transition probabilities and time scales in the analysis of the empirical measure. The more general framework of [12], as well as the one-dimensional case (i.e., \(r=1\)) in the present setting, requires some closely related but slightly more complicated quantities. These are essentially the analogues of \(V(O_i,O_j)\) under the assumption that trajectories used in the definition are not allowed to pass through equilibrium compacta (such as a limit cycle) when traveling from \(O_i\) to \(O_j\). The related quantities, which are designated using notation of the form \({\tilde{V}}(O_i,O_j)\) in [12], are needed since the probability of a direct transition from \(O_i\) to \(O_j\) without passing through another equilibrium structure may be zero, which means that transitions from \(O_i\) to \(O_j\) must be decomposed according to these intermediate transitions. To simplify the presentation, we do not provide the details of the one-dimensional case in our setup, but simply note that it can be handled by the introduction of these additional quantities.

Consider the filtration \(\{{\mathcal {F}}_{t}\}_{t\ge 0}\) defined by \({\mathcal {F}}_{t}\doteq \sigma (X_{s}^{\varepsilon },s\le t)\) for any \(t\ge 0.\) For any \(\delta >0\) smaller than a quarter of the minimum of the distances between \(O_{i}\) and \(O_{j}\) for all \(i\ne j\), we consider two types of stopping times with respect to the filtration \(\{{\mathcal {F}}_{t}\}_{t}\). The first type are the hitting times of \(\{X^{\varepsilon }_t\}_{t}\) at the \(\delta \)-neighborhood of all equilibrium points \(\{O_{j}\}_{j\in L}\) after traveling a reasonable distance away from those neighborhoods. More precisely, we define stopping times by \(\tau _{0}\doteq 0,\)

$$\begin{aligned} \sigma _{n}\doteq \inf \{t>\tau _{n}:X_{t}^{\varepsilon }\in {\textstyle \mathop {\cup }\nolimits _{j\in L}} \partial B_{2\delta }(O_{j})\}\quad \text {and}\quad \tau _{n}\doteq \inf \{t>\sigma _{n-1}:X_{t}^{\varepsilon }\in {\textstyle \mathop {\cup }\nolimits _{j\in L}} \partial B_{\delta }(O_{j})\}. \end{aligned}$$

The second type of stopping times is the return times of \(\{X^{\varepsilon }_t\}_{t}\) to the \(\delta \)-neighborhood of \(O_{1}\), where as noted previously \(O_{1}\) is in some sense the most important equilibrium point. The exact definitions are \(\tau _{0}^{\varepsilon }\doteq 0,\)

$$\begin{aligned} \sigma _{n}^{\varepsilon }\doteq \inf \{t>\tau _{n}^{\varepsilon }:X_{t}^{\varepsilon }\in {\textstyle \mathop {\cup }\nolimits _{j\in L\setminus \{1\}}} \partial B_{\delta }(O_{j})\}\quad \text {and}\quad \tau _{n}^{\varepsilon }\doteq \inf \{t>\sigma _{n-1}^{\varepsilon }:X_{t}^{\varepsilon }\in \partial B_{\delta }(O_{1})\}. \end{aligned}$$

We then define two embedded Markov chains \(\{Z_{n}\}_{n\in {\mathbb {N}} _{0}}\doteq \{X_{\tau _{n}}^{\varepsilon }{}\}_{n\in {\mathbb {N}} _{0}}\) with state space \( {\textstyle \mathop {\cup }\nolimits _{j\in L}} \partial B_{\delta }(O_{j})\), and \(\{Z_{n}^{\varepsilon }\}_{n\in {\mathbb {N}} _{0}}\doteq \{X_{\tau _{n}^{\varepsilon }}^{\varepsilon }{}\}_{n\in {\mathbb {N}} _{0}}\) with state space \(\partial B_{\delta }(O_{1}).\)

Let \(p(x,\partial B_{\delta }(O_{j}))\) denote the one-step transition probabilities of \(\{Z_{n}\}_{n\in {\mathbb {N}} _{0}}\) starting from a point \(x\in {\textstyle \mathop {\cup }\nolimits _{i\in L}} \partial B_{\delta }(O_{i}),\) namely,

$$\begin{aligned} p(x,\partial B_{\delta }(O_{j}))\doteq P_{x}\left( Z_{1}\in \partial B_{\delta }(O_{j})\right) . \end{aligned}$$

We have the following estimates on \(p(x,\partial B_{\delta }(O_{j}))\) in terms of V. The lemma is a consequence of [12, Lemma 2.1, Chapter 6] and the fact that under our conditions \(V(O_i,O_j)\) and \({\tilde{V}}(O_i,O_j)\) as defined in [12] coincide.

Lemma 3.17

For any \(\eta >0,\) there exists \(\delta _{0}\in (0,1)\) and \(\varepsilon _{0}\in (0,1),\) such that for any \(\delta \in (0,\delta _{0})\) and \(\varepsilon \in (0,\varepsilon _{0}),\) for all \(x\in \partial B_{\delta }(O_{i}),\) the one-step transition probability of the Markov chain \(\{Z_{n}\}_{n\in {\mathbb {N}} }\) on \(\partial B_{\delta }(O_{j})\) satisfies

$$\begin{aligned} e^{-\frac{1}{\varepsilon }\left( V(O_{i},O_{j})+\eta \right) }\le p(x,\partial B_{\delta }(O_{j}))\le e^{-\frac{1}{\varepsilon }\left( V(O_{i},O_{j})-\eta \right) } \end{aligned}$$

for any \(i,j\in L.\)

Remark 3.18

According to Lemma 4.6 in [16], Condition 3.1 guarantees the existence and uniqueness of invariant measures for \(\{Z_{n}\}_{n}\) and \(\{Z_{n}^{\varepsilon }\}_{n}.\) We use \(\nu ^{\varepsilon }\in {\mathcal {P}}(\cup _{i\in L}\partial B_{\delta }(O_{i}))\) and \(\lambda ^{\varepsilon }\in {\mathcal {P}}(\partial B_{\delta }(O_{1}))\) to denote the associated invariant measures.

4 Results and a Conjecture

The following main results of this paper assume Conditions 3.1, 3.7 and 3.13. Although moments higher than the second moment are not considered in this paper, as noted previously one can use arguments such as those used here to identify and prove the analogous results.

Recall that \(\{O_{j}\}_{j\in L}\) are the equilibrium points and that they satisfy Conditions 3.7 and 3.13. In addition, \(O_{j}\) is stable if and only if \(j\in L_{\mathrm{{s}}}\), where \(L_{\mathrm{{s}}}\doteq \{1,\ldots ,l_{\mathrm{{s}}}\}\) for some \(l_{\mathrm{{s}}}\le l=\left| L\right| \), and \(\tau ^\varepsilon _1\) is the first return time to the \(\delta \)-neighborhood of \(O_1\) after having first visited the \(\delta \)-neighborhood of any other equilibrium point.

Lemma 4.1

For any \(\delta \in (0,1)\) smaller than a quarter of the minimum of the distances between \(O_{i}\) and \(O_{j}\) for all \(i\ne j\), any \(\varepsilon >0\) and any nonnegative measurable function \(g:M\rightarrow {\mathbb {R}} \)

$$\begin{aligned} E_{\lambda ^{\varepsilon }}\left( \int _{0}^{\tau _{1}^{\varepsilon }}g\left( X_{s}^{\varepsilon }\right) ds\right) =E_{\lambda ^{\varepsilon }}\tau _{1}^{\varepsilon }\cdot \int _{M}g\left( x\right) \mu ^{\varepsilon }\left( dx\right) , \end{aligned}$$

where \(\lambda ^{\varepsilon }\in {\mathcal {P}}(\partial B_{\delta }(O_{1}))\) is the unique invariant measure of \(\{Z_{n}^{\varepsilon }\}_{n}=\{X_{\tau _{n}^{\varepsilon }}^{\varepsilon }\}_{n}\) and \(\mu ^{\varepsilon }\in {\mathcal {P}}(M)\) is the unique invariant measure of \(\{X_{t}^{\varepsilon }\}_{t}.\)

Proof

We define a measure on M by

$$\begin{aligned} {\hat{\mu }}^{\varepsilon }\left( B\right) \doteq E_{\lambda ^{\varepsilon }}\left( \int _{0}^{\tau _{1}^{\varepsilon }}1_{B}\left( X_{t}^{\varepsilon }\right) dt\right) \end{aligned}$$

for \(B\in {\mathcal {B}}(M),\) so that for any nonnegative measurable function \(g:M\rightarrow {\mathbb {R}} \)

$$\begin{aligned} \int _{M}g\left( x\right) {\hat{\mu }}^{\varepsilon }\left( dx\right) =E_{\lambda ^{\varepsilon }}\left( \int _{0}^{\tau _{1}^{\varepsilon }}g\left( X_{t}^{\varepsilon }\right) dt\right) . \end{aligned}$$

According to the proof of Theorem 4.1 in [16], the measure given by \({\hat{\mu }}^{\varepsilon }\left( B\right) /{\hat{\mu }}^{\varepsilon }\left( M\right) \) is an invariant measure of \(\{X^{\varepsilon }_t\}_{t}.\) Since we already know that \(\mu ^{\varepsilon }\) is the unique invariant measure of \(\{X^{\varepsilon }_t\}_{t},\) this means that \(\mu ^{\varepsilon }(B)=\hat{\mu }^{\varepsilon }\left( B\right) /{\hat{\mu }}^{\varepsilon }\left( M\right) \) for any \(B\in {\mathcal {B}}(M).\) Therefore, for any nonnegative measurable function \(g:M\rightarrow {\mathbb {R}} \)

$$\begin{aligned} \int _{M}g\left( x\right) \mu ^{\varepsilon }\left( dx\right) =\frac{\int _{M}g\left( x\right) {\hat{\mu }}^{\varepsilon }\left( dx\right) }{{\hat{\mu }}^{\varepsilon }\left( M\right) }=\frac{E_{\lambda ^{\varepsilon }}\left( \int _{0}^{\tau _{1}^{\varepsilon }}g\left( X_{s}^{\varepsilon }\right) ds\right) }{E_{\lambda ^{\varepsilon }}\tau _{1}^{\varepsilon }}. \end{aligned}$$

\(\square \)
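The identity in Lemma 4.1 is an instance of the general principle that the expected reward over one regenerative cycle equals the expected cycle length times the stationary average. A discrete-time analogue can be verified exactly on a two-state Markov chain, with the return time to state 0 playing the role of \(\tau _{1}^{\varepsilon }\). The chain, its transition probabilities, the reward values, and the function names below are hypothetical and are not taken from the paper.

```python
from fractions import Fraction


def cycle_reward(a, b, g):
    """E_0[ sum_{n < tau} g(X_n) ], where tau is the first return time to state 0.

    Two-state chain on {0, 1} with P(0 -> 1) = a and P(1 -> 0) = b.
    """
    # Expected reward collected from state 1 until hitting 0 solves u = g[1] + (1 - b) * u.
    u = g[1] / b
    return g[0] + a * u


def stationary_average(a, b, g):
    """Integral of g against the invariant distribution (b, a) / (a + b)."""
    return (b * g[0] + a * g[1]) / (a + b)


a, b = Fraction(1, 10), Fraction(1, 3)
g = (Fraction(2), Fraction(7))
ones = (Fraction(1), Fraction(1))

lhs = cycle_reward(a, b, g)                                     # reward over one cycle
rhs = cycle_reward(a, b, ones) * stationary_average(a, b, g)    # E[tau] times stationary average
print(lhs, rhs)
```

Taking g identically equal to one recovers the expected cycle length, which mirrors the normalization \({\hat{\mu }}^{\varepsilon }(M)=E_{\lambda ^{\varepsilon }}\tau _{1}^{\varepsilon }\) used in the proof.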

Recall the definitions of \(W(O_j)\) and \(W(O_1\cup O_j)\) in Definition 3.10, as well as the definition of the quasipotential V(x, y) in (3.1). For any \(k\in L\), we define

$$\begin{aligned} h_{k}\doteq \min _{j\in L\setminus \{k\}}V\left( O_{k},O_{j}\right) . \end{aligned}\tag{4.1}$$

In addition, define

$$\begin{aligned} w\doteq W\left( O_{1}\right) -\min _{j\in L\setminus \{1\}}W\left( O_{1}\cup O_{j}\right) . \end{aligned}\tag{4.2}$$

Remark 4.2

The quantity \(h_k\) is related to the time that it takes for the process to leave a neighborhood of \(O_k\), and \(W(O_1)-W(O_1\cup O_j)\) is related to the transition time from a neighborhood of \(O_j\) to one of \(O_1\). It turns out that our results and arguments depend on which of \(h_1\) or w is larger. Throughout the paper, the constructions used in the case when \(h_1>w\) will be in terms of what we call a single cycle, and those for the case when \(h_1\le w\) in terms of a multicycle.
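The comparison between \(h_1\) and w can be illustrated on a small example. The sketch below assumes that (4.1) and (4.2) take the forms \(h_{k}=\min _{j\ne k}V(O_{k},O_{j})\) and \(w=W(O_{1})-\min _{j\ne 1}W(O_{1}\cup O_{j})\), which is how we read Remark 4.2. It computes the graph minima by brute force. The cost table and all function names are hypothetical.

```python
from itertools import product


def reaches(i, g, W, max_steps):
    # Follow outgoing arrows for at most max_steps steps and report whether W was reached.
    for _ in range(max_steps):
        if i in W:
            return True
        i = g[i]
    return i in W


def min_graph_cost(nodes, W, V):
    """Least total cost over all W-graphs on `nodes` (brute-force enumeration)."""
    free = [i for i in nodes if i not in W]
    best = None
    for targets in product(nodes, repeat=len(free)):
        g = dict(zip(free, targets))
        if any(i == j for i, j in g.items()):
            continue  # arrows must join distinct points
        if not all(reaches(i, g, W, len(nodes)) for i in free):
            continue  # some point never reaches W, so g is not a W-graph
        cost = sum(V[m][n] for m, n in g.items())
        best = cost if best is None else min(best, cost)
    return best


def classify(nodes, V):
    """Return (h_1, w, regime) under the assumed forms of (4.1) and (4.2)."""
    others = [j for j in nodes if j != 1]
    h1 = min(V[1][j] for j in others)
    w = min_graph_cost(nodes, {1}, V) - min(min_graph_cost(nodes, {1, j}, V) for j in others)
    return h1, w, "single cycle" if h1 > w else "multicycle"


# Hypothetical costs V[m][n], standing in for V(O_m, O_n).
nodes = [1, 2, 3]
V = {1: {2: 3, 3: 5}, 2: {1: 1, 3: 4}, 3: {1: 2, 2: 1}}
print(classify(nodes, V))
```

Here leaving the neighborhood of the point with index 1 costs 3, while returning to it from the other points costs at most 1, so this example falls in the single cycle regime.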

Theorem 4.3

Let \(T^{\varepsilon }=e^{\frac{1}{\varepsilon }c}\) for some \(c>h_1\vee w\). Given \(\eta >0,\) a continuous function \(f:M\rightarrow {\mathbb {R}} \) and any compact set \(A\subset M,\) there exists \(\delta _{0}\in (0,1)\) such that for any \(\delta \in (0,\delta _{0})\)

where \(W(x)\doteq \min _{j\in L}[W(O_{j})+V(O_{j},x)]\).

Remark 4.4

Since \(W(x)=\min _{j\in L}[W(O_{j})+V(O_{j},x)],\) the lower bound appearing in Theorem 4.3 is equivalent to

The next result gives an upper bound on the variance per unit time, or equivalently a lower bound on its rate of decay. In the design of a Markov chain Monte Carlo method, one would maximize this rate of decay to improve the method’s performance.

Theorem 4.5

Let \(T^{\varepsilon }=e^{\frac{1}{\varepsilon }c}\) for some \(c>h_1\vee w\). Given \(\eta >0,\) a continuous function \(f:M\rightarrow {\mathbb {R}} \) and any compact set \(A\subset M,\) there exists \(\delta _{0}\in (0,1)\) such that for any \(\delta \in (0,\delta _{0})\)

where

and for \(j\in L\setminus \{1\}\)

Remark 4.6

If one mistakenly treated a single cycle case as a multicycle case in the application of Theorem 4.5, then the result is the same since with \(h_1>w\), (4.2) implies that \(R_{j}^{(3)}\ge R_{j}^{(2)}\) for any \(j\in L\).

Remark 4.7

Although Theorems 4.3 and 4.5 as stated assume the starting distribution \(\lambda ^\varepsilon \), they can be extended to general initial distributions by using results from Sect. 10, which show that the process essentially forgets the initial distribution before leaving the neighborhood of \(O_1\).

Remark 4.8

In this remark, we interpret the use of Theorems 4.3 and 4.5 in the context of Monte Carlo and also explain the role of the time scaling \(T^\varepsilon \).

There is a minimum amount of time that must elapse before the process can visit all stable equilibrium points often enough that good estimation of risk-sensitive integrals is possible. As is well known, this time scales exponentially in the form \(T^\varepsilon = e^{c/\varepsilon }\), and the issue is the selection of the constant \(c>0\), which motivates the assumptions on \(T^\varepsilon \) for the two cases. However, when designing a scheme there typically will be parameters available for selection. The growth constant in \(T^\varepsilon \) will then depend on these parameters, which will be chosen (either directly or indirectly, depending on the criteria used) to reduce the size of \(T^\varepsilon \). For a compelling example, we refer to [10], which shows that for a system with fixed well depths and any given \(a>0\), an infinite swapping scheme can be designed so that an interval of length \(e^{a/\varepsilon }\) suffices.

Theorem 4.3 is concerned with the bias, which for \(T^\varepsilon \) as above gives a negligible contribution to the total error in comparison with the variance. Thus, it is Theorem 4.5 that determines the performance of the scheme and serves as a criterion for optimization. Of particular note is that the value of c does not appear in the variational problem appearing in Theorem 4.5.

Theorem 4.5 gives a lower bound on the rate of decay of variance per unit time. For applications to the design of Monte Carlo schemes as in [10], there is an a priori bound on the best possible performance, and so this lower bound (which yields an upper bound on variances) is sufficient to determine if a scheme is nearly optimal. However, for other purposes an upper bound on the decay rate could be useful, and we expect the other direction holds as well.

The proofs of Theorems 4.3 and 4.5 for single cycles and multicycles are almost identical with a few key differences. We focus on providing proofs in the single cycle case, and then point out the required modifications in the proofs for the multicycle case.

Theorem 4.9

The bound in Theorem 4.3 can be calculated using only stable equilibrium points. Specifically,

1. \(W(x)=\min _{j\in L_{\mathrm{{s}}}}[W(O_{j})+V(O_{j},x)]\)

2. \(W\left( O_{j}\right) =\min _{g\in G_{\mathrm{{s}}}\left( j\right) }\left[ {\textstyle \sum _{\left( m\rightarrow n\right) \in g}} V\left( O_{m},O_{n}\right) \right] \)

3. \(W(O_{1}\cup O_{j})=\min _{g\in G_{\mathrm{{s}}}\left( 1,j\right) }\left[ {\textstyle \sum _{\left( m\rightarrow n\right) \in g}} V\left( O_{m},O_{n}\right) \right] \)

4. \( \min _{j\in L}( \inf _{x\in A}[ f( x) +V(O_{j},x)] +W(O_{j}) ) =\min _{j\in L_{\mathrm{{s}}}}( \inf _{x\in A}[ f(x)+V(O_{j},x)] +W(O_{j}) ) \).

Remark 4.10

Theorem 4.9 says that the bound appearing in Theorem 4.3 depends on the set of indices of only stable equilibrium points. This is not surprising, since in [12, Chapter 6], it has been shown that the logarithmic asymptotics of the invariant measure of a Markov process in this framework can be characterized in terms of graphs on the set of indices of just stable equilibrium points. It is natural to ask if the same property holds for the lower bound appearing in Theorem 4.5. Notice that part 4 of Theorem 4.9 implies \(\min _{j\in L}R_{j}^{(1)}=\min _{j\in L_{\mathrm{{s}}}}R_{j}^{(1)}\), so if one can prove (possibly under extra conditions, for example, by considering a double-well model as in Sect. 11) that \(\min _{j\in L}R_{j}^{(2)}=\min _{j\in L_{\mathrm{{s}}}}R_{j}^{(2)}\), then these two equations assert the property we want for the single cycle case, namely, \( \min _{j\in L}( R_{j}^{(1)}\wedge R_{j}^{(2)}) =\min _{j\in L_{\mathrm{{s}}}}( R_{j}^{(1)}\wedge R_{j} ^{(2)}). \) An analogous comment applies for the multicycle case.

Conjecture 4.11

Let \(T^{\varepsilon }=e^{\frac{1}{\varepsilon }c}\) for some \(c>h_1\vee w\). Let f be continuous and suppose that A is the closure of its interior. Then for any \(\eta >0,\) there exists \(\delta _{0}\in (0,1)\) such that for any \(\delta \in (0,\delta _{0})\)

In Section 11, we outline the proof of Conjecture 4.11 for a special case.

5 Examples

Example 5.1

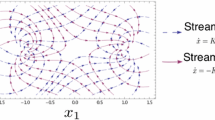

We first consider the situation depicted in Fig. 1. Values of \(W(O_{j})\) are given in the figure. If one interprets the figure as a potential with minimum zero, then the corresponding heights of the equilibrium points are given by \(W(O_{j})-W(O_{1})\). We take \(f=0\) and A to be a small closed interval about \(O_{5}\). As we will see and should be clear from the figure, this example can be analyzed using single regenerative cycles.

Recall that

and for \(j>1\)

If one traces through the proof of Theorem 4.5 for the case of a single cycle, then one finds that the constraining bound is given in Lemma 7.23, which is in turn based on Lemma 7.9. As we will see, in the minimization problem \(\min _{j\in L}(R_{j}^{(1)}\wedge R_{j}^{(2)})\) the min on j turns out to be achieved at \(j=5\). This is of course not surprising, since A is an interval about \(O_{5}\). It is then the minimum of \(R_{5}^{(1)}\) and \(R_{5}^{(2)}\) which determines the dominant source of the variance of the estimator.

We recall that \(\tau _{1}^{\varepsilon }\) is the time for a full regenerative cycle, and that \(\tau _{1}\) is the time to first reach the \(2\delta \) neighborhood of an equilibrium point and then reach a \(\delta \) neighborhood of a (perhaps the same) equilibrium point. The quantities that are relevant in Lemma 7.9 are

for \(R_{j}^{(1)}\) and

for \(R_{j}^{(2)}\). Decay rates are in turn determined by (see the proof of Lemma 7.23)

and

respectively. Thus, for this example it is only the term \(W(O_{1})-W(O_{1}\cup O_{5})\) that distinguishes between the two. Since this is always greater than zero and it appears in \(R_{j}^{(2)}\) in the form \(-(W(O_{1})-W(O_{1}\cup O_{5}))\), it must be the case that \(R_{5}^{(2)}<R_{5}^{(1)}\).

The numerical values for the example are

and \(R_{1}^{(2)}=16-4=12\), \(h_{1}=4\) and \(w=5-2=3\). Since \(w<h_{1}\), the example falls into the single cycle case. We therefore find that \(\min _{j}R_{j}^{(1)}\wedge R_{j}^{(2)}\) equals 0, attained with superscript 2 at \(j=5\).

For an example where the dominant contribution to the variance is through the quantities associated with \(R_{j}^{(1)}\), we move the set A further to the right of \(O_{5}\). All other quantities are unchanged save

whose decay rates are governed (for \(j=5\)) by \( \inf _{x\in A}[V(O_{5},x)]\text { and }2\inf _{x\in A} [V(O_{5},x)], \) respectively. If A is chosen so that \(\inf _{x\in A}[V(O_{5},x)]>3\), then \(R_{5}^{(1)}<R_{5}^{(2)}\).

Example 5.2

We consider the situation depicted in Fig. 2. In this example, we again take \(f=0\) and A to be a small closed interval about \(O_{3}\). Since the well at \(O_5\) is deeper than that at \(O_1\), we expect that multicycles will be needed, and so recall

The needed values are

and \(R_{1}^{(2)}=8-4=4\), \(h_{1}=4\) and \(w=7-2=5\). Since \(w>h_{1}\), a single cycle cannot be used for the analysis of the variance, and we need to use multicycles. We find that \(\min _{j} R_{j}^{(1)}\wedge R_{j}^{(2)}\wedge R_{j}^{(3)}\) is equal to \(-1\), attained with superscript 3 at \(j=3\).
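The choice between the two regimes in Examples 5.1 and 5.2 depends only on the comparison of \(h_{1}\) and \(w\). The following minimal sketch records that comparison, using the convention of Section 6 that the single cycle case is \(h_{1}>w\) and the multicycle case is \(w\ge h_{1}\). The function name is hypothetical.

```python
def cycle_regime(h1, w):
    """Return which regenerative structure is used for the variance analysis:
    single cycles when h1 > w, multicycles when w >= h1."""
    return "single cycle" if h1 > w else "multicycle"

# Values quoted in the examples: w = 5 - 2 = 3 and w = 7 - 2 = 5, with h1 = 4.
example_5_1 = cycle_regime(h1=4, w=5 - 2)  # "single cycle"
example_5_2 = cycle_regime(h1=4, w=7 - 2)  # "multicycle"
```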

6 Wald’s Identities and Regenerative Structure

To prove Theorems 4.3 and 4.5, we will use the regenerative structure to analyze the system over the interval \([0,T^{\varepsilon }]\). Since the number of regenerative cycles will be random, Wald’s identities will be useful.

Recall that \(\tau _{n}^{\varepsilon }\) is the n-th return time to \(\partial B_{\delta }\left( O_{1}\right) \) after having visited the neighborhood of a different equilibrium point, and \(\lambda ^{\varepsilon }\in {\mathcal {P}}(\partial B_{\delta }(O_{1}))\) is the invariant measure of the Markov process \(\{X_{\tau _{n}^{\varepsilon }}^{\varepsilon }\}_{n\in {\mathbb {N}}_{0}}\) with state space \(\partial B_{\delta }(O_{1}).\) If we let the process \(\{X^{\varepsilon }_t \}_{t}\) start with \(\lambda ^{\varepsilon }\) at time 0, that is, assume the distribution of \(X^{\varepsilon }_0\) is \(\lambda ^{\varepsilon },\) then by the strong Markov property of \(\{X^{\varepsilon }_t \}_{t},\) we find that \(\{X^{\varepsilon }_t \}_{t}\) is a regenerative process and the cycles \(\{\{X^{\varepsilon }_{\tau _{n-1}^{\varepsilon }+t}:0\le t<\tau _{n}^{\varepsilon }-\tau _{n-1}^{\varepsilon }\},\tau _{n}^{\varepsilon } -\tau _{n-1}^{\varepsilon }\}\) are iid objects. Moreover, \(\{\tau _{n}^{\varepsilon }\}_{n\in {\mathbb {N}} _{0}}\) is a sequence of renewal times under \(\lambda ^{\varepsilon }.\)

6.1 Single cycle

Define the filtration \(\{{\mathcal {H}}_{n}\}_{n\in {\mathbb {N}} },\) where \({\mathcal {H}}_{n}\doteq {\mathcal {F}}_{\tau _{n}^{\varepsilon }}\) and \({\mathcal {F}}_{t}\doteq \sigma (\{X^{\varepsilon }_s;s\le t\})\). With respect to this filtration, in the single cycle case (i.e., when \(h_1>w\)), we consider the stopping times \( N^{\varepsilon }\left( T\right) \doteq \inf \left\{ n\in {\mathbb {N}}:\tau _{n}^{\varepsilon }>T\right\} . \) Note that \(N^{\varepsilon }\left( T\right) -1\) is the number of complete single renewal intervals contained in \([0,T]\).

With this notation, we can bound \(\frac{1}{T^{\varepsilon }}\int _{0} ^{T^{\varepsilon }}e^{-\frac{1}{\varepsilon }f\left( X_{t}^{\varepsilon }\right) }1_{A}\left( X_{t}^{\varepsilon }\right) dt\) from above and below by

where

Applying Wald’s first identity shows

Therefore, the logarithmic asymptotics of \(E_{\lambda ^{\varepsilon }}(\int _{0}^{T^{\varepsilon }}e^{-\frac{1}{\varepsilon }f\left( X_{t}^{\varepsilon }\right) }1_{A}\left( X_{t}^{\varepsilon }\right) dt/T^{\varepsilon })\) are determined by those of \(E_{\lambda ^{\varepsilon }}\left( N^{\varepsilon }\left( T^{\varepsilon }\right) \right) /T^{\varepsilon }\) and \(E_{\lambda ^{\varepsilon }}S_{1}^{\varepsilon }.\) Likewise, to understand the logarithmic asymptotics of \(T^{\varepsilon }\cdot \) \(\hbox {Var}_{\lambda ^{\varepsilon }}(\int _{0}^{T^{\varepsilon }}e^{-\frac{1}{\varepsilon }f\left( X_{t}^{\varepsilon }\right) }1_{A}\left( X_{t}^{\varepsilon }\right) dt/T^{\varepsilon }),\) it is sufficient to identify the corresponding logarithmic asymptotics of \(\hbox {Var}_{\lambda ^{\varepsilon }}\left( N^{\varepsilon }\left( T^{\varepsilon }\right) \right) /T^{\varepsilon }\), \(\hbox {Var}_{\lambda ^{\varepsilon }}(S_{1}^{\varepsilon }),\) \(E_{\lambda ^{\varepsilon }}\left( N^{\varepsilon }\left( T^{\varepsilon }\right) \right) /T^{\varepsilon }\) and \(E_{\lambda ^{\varepsilon }}S_{1}^{\varepsilon }\). This can be done with the help of Wald’s second identity, since

In the next two sections, we derive bounds on \(E_{\lambda ^{\varepsilon }} S_{1}^{\varepsilon }\), \(\hbox {Var}_{\lambda ^{\varepsilon }}(S_{1}^{\varepsilon })\) and \(E_{\lambda ^{\varepsilon }}\left( N^{\varepsilon }\left( T^{\varepsilon }\right) \right) \), \(\hbox {Var}_{\lambda ^{\varepsilon }}\left( N^{\varepsilon }\left( T^{\varepsilon }\right) \right) \), respectively.
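To illustrate how Wald's first identity enters, the following sketch simulates a toy renewal-reward process and compares the mean of \(\sum _{n=1}^{N(T)}S_{n}\) with \(E[N(T)]\cdot E[S_{1}]\). The exponential cycle lengths, the uniform reward fraction and all names are assumptions made for the illustration; they are not the diffusion cycles considered in this paper.

```python
import random

def one_run(T, rng):
    """Simulate cycles until the renewal time exceeds T.
    Returns (N(T), sum of the rewards S_1, ..., S_{N(T)})."""
    t, total, n = 0.0, 0.0, 0
    while t <= T:
        length = rng.expovariate(1.0)    # toy cycle length with mean 1
        reward = rng.random() * length   # toy reward, so that E[S_1] = 1/2
        t += length
        total += reward
        n += 1
    return n, total

def wald_check(T=5.0, runs=20000, seed=0):
    """Monte Carlo estimates of E[N(T)] and of E[sum_{n <= N(T)} S_n]."""
    rng = random.Random(seed)
    sum_n, sum_total = 0.0, 0.0
    for _ in range(runs):
        n, total = one_run(T, rng)
        sum_n += n
        sum_total += total
    return sum_n / runs, sum_total / runs

mean_n, mean_total = wald_check()
# Wald's first identity predicts mean_total to be close to mean_n * 0.5.
```

In this toy model \(N(T)-1\) is a Poisson count with mean \(T\), so both sides of the identity can also be checked against the closed-form value \((T+1)/2\).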

6.2 Multicycle

Recall that in the case of a multicycle, we have \(w\ge h_1\). For any \(m>0\) such that \(h_1+m>w\) and for any \(\varepsilon >0\), on the same probability space as \(\{\tau ^{\varepsilon }_n\}\), one can define a sequence of independent and geometrically distributed random variables \(\{{\mathbf {M}}^{\varepsilon }_i\}_{i\in {\mathbb {N}}}\) with parameter \(e^{-m/\varepsilon }\) that are independent of \(\{\tau ^{\varepsilon }_n\}\). We then define multicycles according to

Consider the stopping times \( {\hat{N}}^{\varepsilon }\left( T\right) \doteq \inf \left\{ n\in {\mathbb {N}} :{\hat{\tau }}_{n}^{\varepsilon }>T\right\} . \) Note that \({\hat{N}}^{\varepsilon }\left( T\right) -1\) is the number of complete multicycles contained in [0, T]. With this notation and by following the same idea as in the single cycle case, we can bound \(\frac{1}{T^{\varepsilon }}\int _{0} ^{T^{\varepsilon }}e^{-\frac{1}{\varepsilon }f\left( X_{t}^{\varepsilon }\right) }1_{A}\left( X_{t}^{\varepsilon }\right) dt\) from above and below by

where

Therefore, by applying Wald’s first and second identities, we know that the logarithmic asymptotics of \(E_{\lambda ^{\varepsilon }}(\int _{0}^{T^{\varepsilon }}e^{-\frac{1}{\varepsilon }f\left( X_{t}^{\varepsilon }\right) }1_{A}\left( X_{t}^{\varepsilon }\right) dt/T^{\varepsilon })\) are determined by those of \(E_{\lambda ^{\varepsilon }}( {\hat{N}}^{\varepsilon }\left( T^{\varepsilon }\right) ) /T^{\varepsilon }\) and \(E_{\lambda ^{\varepsilon }}{\hat{S}}_{1}^{\varepsilon }\), and the asymptotics of \(T^{\varepsilon }\cdot \) \(\hbox {Var}_{\lambda ^{\varepsilon }}(\int _{0}^{T^{\varepsilon }}e^{-\frac{1}{\varepsilon }f\left( X_{t}^{\varepsilon }\right) }1_{A}\left( X_{t}^{\varepsilon }\right) dt/T^{\varepsilon })\) by those of \(\hbox {Var}_{\lambda ^{\varepsilon }}( {\hat{N}}^{\varepsilon }\left( T^{\varepsilon }\right) ) /T^{\varepsilon }\), \(\hbox {Var}_{\lambda ^{\varepsilon }}({\hat{S}}_{1}^{\varepsilon })\), \(E_{\lambda ^{\varepsilon }}( {\hat{N}}^{\varepsilon }\left( T^{\varepsilon }\right) ) /T^{\varepsilon }\) and \(E_{\lambda ^{\varepsilon }}{\hat{S}}_{1}^{\varepsilon }\). In particular, we have

and

In the next two sections, we derive bounds on \(E_{\lambda ^{\varepsilon }} {\hat{S}}_{1}^{\varepsilon }\), \(\hbox {Var}_{\lambda ^{\varepsilon }}({\hat{S}}_{1}^{\varepsilon })\) and \(E_{\lambda ^{\varepsilon }}( {\hat{N}}^{\varepsilon }\left( T^{\varepsilon }\right) ) \), \(\hbox {Var}_{\lambda ^{\varepsilon }}( {\hat{N}}^{\varepsilon }\left( T^{\varepsilon }\right) )\), respectively.

Remark 6.1

It should be kept in mind that \({\hat{\tau }}^{\varepsilon }_n, {\hat{N}}^{\varepsilon }\left( T^{\varepsilon }\right) \) and \({\hat{S}}_{n}^{\varepsilon }\) all depend on m, although this dependence is not explicit in the notation.

Remark 6.2

In general, for any quantity in the single cycle case, we use analogous notation with a “hat” on it to represent the corresponding quantity in the multicycle version. For instance, we use \(\tau ^{\varepsilon }_n\) for a single regenerative cycle, and \({\hat{\tau }}^{\varepsilon }_n\) for a multi-regenerative cycle.

7 Asymptotics of Moments of \(S_{1}^{\varepsilon }\) and \({\hat{S}}_{1}^{\varepsilon }\)

In this section, we will first introduce the elementary theory of an irreducible finite state Markov chain \(\{Z_{n}\}_{n\in {\mathbb {N}} _{0}}\) with state space L, and then state and prove bounds for the asymptotics of moments of \(S_{1}^{\varepsilon }\) and \({\hat{S}}_{1}^{\varepsilon }\).

For the asymptotic analysis, the following useful facts will be used repeatedly.

Lemma 7.1

For any nonnegative sequences \(\left\{ a_{\varepsilon }\right\} _{\varepsilon >0}\) and \(\left\{ b_{\varepsilon }\right\} _{\varepsilon >0}\), we have

7.1 Markov Chains and Graph Theory

In this subsection, we state some elementary theory for finite state Markov chains taken from [1, Chapter 2]. For a finite state Markov chain, the invariant measure, the mean exit time, etc., can be expressed explicitly as the ratio of certain determinants, i.e., sums of products consisting of transition probabilities, and these sums only contain terms with a plus sign. Which products should appear in the various sums can be described conveniently by means of graphs on the set of states of the chain. This method of linking graphs and quantities associated with a finite state Markov chain was introduced by Freidlin and Wentzell in [12, Chapter 6].

Consider an irreducible finite state Markov chain \(\{Z_{n}\}_{n\in {\mathbb {N}} _{0}}\) with state space L. For any \(i,j\in L,\) let \(p_{ij}\) be the one-step transition probability of \(\{Z_{n}\}_{n}\) from state i to state j. Write \(P_{i}(\cdot )\) and \(E_{i}(\cdot )\) for probabilities and expectations of the chain started at state i at time 0. Recall the notation \(\pi (g)\doteq \prod _{(i\rightarrow j)\in g}p_{ij}\).

Lemma 7.2

The unique invariant measure of \(\{Z_{n}\}_{n\in {\mathbb {N}} }\) can be expressed

Proof

See Lemma 3.1, Chapter 6 in [12]. \(\square \)
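The graph representation can be checked directly on a small chain. The sketch below assumes the standard form of this representation, \(\lambda _{i}=\sum _{g\in G(i)}\pi (g)/\sum _{j\in L}\sum _{g\in G(j)}\pi (g)\), with \(G(i)\) the set of graphs rooted at \(i\) in the usual Freidlin–Wentzell sense. The transition matrix `P` and the function names are hypothetical. Exact rational arithmetic is used so that stationarity can be verified without rounding.

```python
from fractions import Fraction as F
from itertools import product

def rooted_graphs(states, root):
    """Yield graphs (dicts i -> j) where each non-root state has one outgoing
    arrow and every arrow path leads to `root` without cycling."""
    others = [s for s in states if s != root]
    for targets in product(states, repeat=len(others)):
        arrows = dict(zip(others, targets))
        if any(i == j for i, j in arrows.items()):
            continue
        ok = True
        for start in others:
            seen, node = set(), start
            while node != root and node not in seen:
                seen.add(node)
                node = arrows[node]
            ok = ok and node == root
        if ok:
            yield arrows

def graph_weight(g, P):
    """pi(g): product of the transition probabilities along the arrows of g."""
    out = F(1)
    for i, j in g.items():
        out *= P[i][j]
    return out

def invariant_measure(P):
    """Invariant measure computed from sums of pi(g) over rooted graphs."""
    states = list(P)
    weight = {i: sum(graph_weight(g, P) for g in rooted_graphs(states, i))
              for i in states}
    total = sum(weight.values())
    return {i: weight[i] / total for i in states}

# Hypothetical irreducible 3-state chain.
P = {1: {1: F(1, 2), 2: F(1, 4), 3: F(1, 4)},
     2: {1: F(1, 3), 2: F(1, 3), 3: F(1, 3)},
     3: {1: F(1, 6), 2: F(1, 2), 3: F(1, 3)}}
lam = invariant_measure(P)  # {1: 20/59, 2: 21/59, 3: 18/59}
```

Note that the diagonal entries \(p_{ii}\) never enter \(\pi (g)\), since graphs contain no self-arrows.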

To analyze the empirical measure, we will need additional results, including representations for the number of visits to a state during a regenerative cycle. Write

for the first hitting time of state i, and write

Observe that \(T_{i}^{+}=T_{i}\) unless \(Z_{0}=i,\) in which case we call \(T_{i}^{+}\) the first return time to state i.

Let \({\hat{N}}\doteq \inf \{n\in {\mathbb {N}} _{0}:Z_{n}\in L\setminus \{1\}\}\) and \(N\doteq \inf \{n\in {\mathbb {N}} :Z_{n}=1,n\ge {\hat{N}}\}.\) \({\hat{N}}\) is the first time of visiting a state other than state 1, and N is the first time of visiting state 1 after \({\hat{N}}.\) For any \(j\in L,\) let \(N_{j}\) be the number of visits (including time 0) of state j before N, i.e., \(N_{j}=\left| \{n\in {\mathbb {N}} _{0}:n<N\text { and }Z_{n}=j\}\right| .\) We would like to understand \(E_{1}N_{j}\) and \(E_{j}N_{j}\) for any \(j\in L.\) These quantities will appear later on in Subsection 7.2. The next lemma shows how they can be related to the invariant measure of \(\{Z_{n}\}_{n}\).

Lemma 7.3

1. For any \(j\in L\setminus \{1\}\)

$$\begin{aligned} E_{j}N_{j}=\frac{\sum _{g\in G\left( 1,j\right) }\pi \left( g\right) }{\sum _{g\in G\left( 1\right) }\pi \left( g\right) }\text { and }E_{j} N_{j}=\lambda _{j}\left( E_{j}T_{1}+E_{1}T_{j}\right) . \end{aligned}$$

2. For any \(i,j\in L,\) \(j\ne i\)

$$\begin{aligned} P_{i}\left( T_{j}<T_{i}^{+}\right) =\frac{1}{\lambda _{i}\left( E_{j} T_{i}+E_{i}T_{j}\right) }. \end{aligned}$$

3. For any \(j\in L\)

$$\begin{aligned} E_{1}N_{j}=\frac{1}{1-p_{11}}\frac{\lambda _{j}}{\lambda _{1}}. \end{aligned}$$

Proof

See Lemma 3.4 in [12, Chapter 6] for the first assertion of part 1 and see Lemma 2.7 in [1, Chapter 2] for the second assertion of part 1. For part 2, see Corollary 2.8 in [1, Chapter 2]. For part 3, since \( E_{1}N_{j}=\sum _{\ell =1}^{\infty }P_{1}\left( N_{j}\ge \ell \right) , \) we need to understand \(P_{1}\left( N_{j}\ge \ell \right) \), which means we need to know how to count all the ways to get \(N_{j}\ge \ell \) before returning to state 1.

We first have to move away from state 1, so the types of sequences are of the form

for some \(i,q\in {\mathbb {N}} \) and \(k_{1}\ne 1,\cdots ,k_{q}\ne 1\). When \(j=1,\) we do not care about \(k_{1},k_{2},\ldots ,k_{q},\) and therefore

For \(j\in L\setminus \{1\},\) the event \(\{N_{j}\ge \ell \}\) requires that within \(k_{1},k_{2},\ldots ,k_{q},\) we

1. first visit state j before returning to state 1, which has corresponding probability \(P_{1}(T_{j}<T_{1}^{+})\),

2. then start from state j and again visit state j before returning to state 1, which has corresponding probability \(P_{j}(T_{j}^{+}<T_{1}).\)

Step 2 needs to happen at least \(\ell -1\) times in a row, and after that we do not care. Thus,

and

The third equality comes from part 2. \(\square \)
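Part 3 of Lemma 7.3 lends itself to a quick simulation check. The sketch below runs many cycles of a chain started at state 1, counts the visits to each state before the time \(N\) defined above, and compares the averages with \(\frac{1}{1-p_{11}}\frac{\lambda _{j}}{\lambda _{1}}\). The transition matrix `P`, the use of power iteration to obtain \(\lambda \), and all function names are assumptions made for the illustration.

```python
import random

# Hypothetical irreducible 3-state chain; states are labelled 1, 2, 3.
P = {1: {1: 0.5, 2: 0.25, 3: 0.25},
     2: {1: 1 / 3, 2: 1 / 3, 3: 1 / 3},
     3: {1: 1 / 6, 2: 0.5, 3: 1 / 3}}

def stationary(P, iters=500):
    """Approximate the invariant measure by power iteration."""
    lam = {s: 1.0 / len(P) for s in P}
    for _ in range(iters):
        lam = {j: sum(lam[i] * P[i][j] for i in P) for j in P}
    return lam

def visits_in_one_cycle(P, rng):
    """Start at state 1 and count visits at times n < N, where N is the first
    return to state 1 after the chain has visited some other state."""
    counts = {s: 0 for s in P}
    z, left_state_1 = 1, False
    while True:
        counts[z] += 1
        nxt = rng.choices(list(P[z]), weights=list(P[z].values()))[0]
        if nxt != 1:
            left_state_1 = True
        elif left_state_1:
            return counts  # time N reached; the visit at time N is not counted
        z = nxt

def mean_visits(P, cycles=40000, seed=1):
    """Monte Carlo estimate of E_1 N_j for every state j."""
    rng = random.Random(seed)
    totals = {s: 0 for s in P}
    for _ in range(cycles):
        for s, c in visits_in_one_cycle(P, rng).items():
            totals[s] += c
    return {s: totals[s] / cycles for s in P}

def formula(P):
    """Right-hand side of part 3: lambda_j / ((1 - p_11) * lambda_1)."""
    lam = stationary(P)
    return {j: lam[j] / ((1.0 - P[1][1]) * lam[1]) for j in P}

estimate, exact = mean_visits(P), formula(P)
```

For \(j=1\) the formula reduces to \(1/(1-p_{11})\), the mean of the geometric number of steps spent at state 1 before leaving it.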

To apply the preceding results using the machinery developed by Freidlin and Wentzell, one must have analogues that allow for small perturbations of the transition probabilities due to the fact that initial conditions are to be taken in small neighborhoods of the equilibrium points. The addition of a tilde will be used to identify the corresponding objects, such as hitting and return times. Take as given a Markov chain \(\{\tilde{Z}_{n}\}_{n\in {\mathbb {N}} _{0}}\) on a state space \({\mathcal {X}}= {\textstyle \cup _{i\in L}} {\mathcal {X}}_{i},\) with \({\mathcal {X}}_{i}\cap {\mathcal {X}}_{j}=\emptyset \) \((i\ne j),\) and assume there is \(a\in [1,\infty )\) such that for any \(i,j\in L\) and \(j\ne i,\) the transition probability of the chain from \(x\in {\mathcal {X}}_{i}\) to \({\mathcal {X}}_{j}\) (denoted by \(p\left( x,{\mathcal {X}}_{j}\right) \)) satisfies the inequalities

for any \(x\in {\mathcal {X}}_{i}\). Write \(P_{x}(\cdot )\) and \(E_{x}(\cdot )\) for probabilities and expectations of the chain started at \(x\in {\mathcal {X}}\) at time 0. Write

for the first hitting time of \({\mathcal {X}}_{i},\) and write

Observe that \({\tilde{T}}_{i}^{+}={\tilde{T}}_{i}\) unless \({\tilde{Z}}_{0} \in {\mathcal {X}}_{i},\) in which case we call \({\tilde{T}}_{i}^{+}\) the first return time to \({\mathcal {X}}_{i}.\) Recall that \(l=|L|\).

Remark 7.4

Observe that given \(j\in L\) and for any \(x\in {\mathcal {X}}_{j}\), \( 1-p\left( x,{\mathcal {X}}_{j}\right) =\textstyle \sum _{k\in L\setminus \left\{ j\right\} }p\left( x,{\mathcal {X}}_{k}\right) . \) Therefore, we can apply (7.3) to obtain

Lemma 7.5

1. Consider distinct \(i,j,k\in L\). Then, for \(x\in {\mathcal {X}}_{k},\)

$$\begin{aligned} a^{-4^{l-2}}P_{k}\left( T_{j}<T_{i}\right) \le P_{x}( {\tilde{T}} _{j}<{\tilde{T}}_{i}) \le a^{4^{l-2}}P_{k}\left( T_{j}<T_{i}\right) . \end{aligned}$$

2. For any \(i\in L\), \(j\in L\setminus \{i\}\) and \(x\in {\mathcal {X}}_{i},\)

$$\begin{aligned} a^{-4^{l-2}-1}P_{i}\left( T_{j}<T_{i}^{+}\right) \le P_{x}( \tilde{T}_{j}<{\tilde{T}}_{i}^{+}) \le a^{4^{l-2}+1}P_{i}\left( T_{j} <T_{i}^{+}\right) . \end{aligned}$$

Proof

For part 1, see Lemma 3.3 in [12, Chapter 6]. We only need to prove part 2. Note that by a first step analysis on \(\{{\tilde{Z}}_{n}\}_{n\in {\mathbb {N}} _{0}}\), for any \(i\in L\), \(j\in L\setminus \{i\}\) and \(x\in {\mathcal {X}}_{i},\)

The first inequality comes from the use of (7.3) and part 1; the last equality holds since we can do a first step analysis on \(\{Z_{n}\}_{n}.\) Similarly, we can show the lower bound. \(\square \)

Let \({\check{N}}\doteq \inf \{n\in {\mathbb {N}} _{0}:{\tilde{Z}}_{n}\in \cup _{j\in L\setminus \{1\}}{\mathcal {X}}_{j}\}\) and \({\tilde{N}}\doteq \inf \{n\in {\mathbb {N}} :{\tilde{Z}}_{n}\in {\mathcal {X}}_{1},n\ge {\check{N}}\}.\) For any \(j\in L,\) let \(\tilde{N}_{j}\) be the number of visits (including time 0) to the set \({\mathcal {X}} _{j}\) before \({\tilde{N}},\) i.e., \({\tilde{N}}_{j}=| \{n\in {\mathbb {N}} _{0}:n<{\tilde{N}}\text { and }{\tilde{Z}}_{n}\in {\mathcal {X}}_{j}\}| .\) We would like to understand \(E_{x}{\tilde{N}}_{j}\) for any \(j\in L\) and \(x\in {\mathcal {X}}_{1}\) or \(x\in {\mathcal {X}}_{j}.\)

Lemma 7.6

For any \(j\in L\) and \(x\in {\mathcal {X}}_{1}\)

Moreover, for any \(j\in L\setminus \{1\}\)

and

Proof

For any \(x\in {\mathcal {X}}_{1},\) note that for any \(\ell \in {\mathbb {N}} ,\) by a conditioning argument as in the proof of Lemma 7.3 (3), we find that for \(j\in L\setminus \{1\}\)

and

Thus, for any \(x\in {\mathcal {X}}_{1}\) and for \(j\in L\setminus \{1\}\)

The second inequality is from Remark 7.4 and Lemma 7.5 (2); the third equality comes from Lemma 7.3 (2); the last equality holds due to Lemma 7.2. Also,

The last inequality is from Remark 7.4. This completes the proof of part 1.

Turning to part 2, since for any \(\ell \in {\mathbb {N}} \)

we have

Furthermore, we use the conditioning argument again to find that for any \(j\in L\setminus \{1\}\) and \(\ell \in {\mathbb {N}} \)

This implies that

We use Lemma 7.5 (2) to obtain the second inequality and Lemma 7.3, parts (2) and (1), for the penultimate and last equalities. \(\square \)

7.2 Asymptotics of Moments of \(S_{1}^{\varepsilon }\)

Recall that \(\{X^{\varepsilon }\}_{\varepsilon \in (0,\infty )}\subset C([0,\infty ):M)\) is a sequence of stochastic processes satisfying Conditions 3.1, 3.7 and 3.13. Moreover, recall that \(S_{1}^{\varepsilon }\) is defined by

As mentioned in Section 6, we are interested in the logarithmic asymptotics of \(E_{\lambda ^{\varepsilon }}S_{1}^{\varepsilon }\) and \(E_{\lambda ^{\varepsilon }}(S_{1}^{\varepsilon })^{2}.\) To find these asymptotics, the main tool we will use is Freidlin–Wentzell theory [12]. In fact, we will generalize the results of Freidlin–Wentzell to the following: For any given continuous function \(f:M\rightarrow {\mathbb {R}} \) and any compact set \(A\subset M,\) we will provide lower bounds for

and

As will be shown, these two bounds can be expressed in terms of the quasipotentials \(V(O_{i},O_{j})\) and \(V(O_{i},x).\)

Remark 7.7

The Freidlin–Wentzell theory as presented in [12] only considers bounds for

Thus, their result is a special case of (7.5) with \(f\equiv 0\) and \(A=M\). Moreover, we generalize their result further by considering the logarithmic asymptotics of higher moment quantities such as (7.6).

Before proceeding, we recall that \(L=\{1,\ldots ,l\}\) and for any \(\delta >0,\) we define \(\tau _{0}\doteq 0,\)

Moreover, \(\tau _{0}^{\varepsilon }\doteq 0,\)

In addition, \(\{Z_{n}\}_{n\in {\mathbb {N}} _{0}}\doteq \{X_{\tau _{n}}^{\varepsilon }\}_{n\in {\mathbb {N}} _{0}}\) is a Markov chain on \( {\textstyle \bigcup \nolimits _{j\in L}} \partial B_{\delta }(O_{j})\) and \(\{Z_{n}^{\varepsilon }\}_{n\in {\mathbb {N}} _{0}}\) \(\doteq \{X_{\tau _{n}^{\varepsilon }}^{\varepsilon }\}_{n\in {\mathbb {N}} _{0}}\) is a Markov chain on \(\partial B_{\delta }(O_{1}).\) It is essential to keep the distinction clear: when there is an \(\varepsilon \) superscript the chain makes transitions between neighborhoods of distinct equilibria, while if absent such transitions are possible, but for stable equilibria there will be many more transitions between the \(\delta \) and \(2\delta \) neighborhoods.

Following the notation of Subsect. 7.1, let \({\hat{N}}\doteq \inf \{n\in {\mathbb {N}} _{0}:Z_{n}\in {\textstyle \bigcup \nolimits _{j\in L\setminus \{1\}}} \partial B_{\delta }(O_{j})\}\), \(N\doteq \inf \{n\ge {\hat{N}}:Z_{n}\in \partial B_{\delta }(O_{1})\}\), and recall \({\mathcal {F}}_{t}\doteq \sigma (\{X_{s} ^{\varepsilon };s\le t\})\). Then, since \(\{\tau _{n}\}_{n\in {\mathbb {N}} _{0}}\) are stopping times with respect to the filtration \(\{{\mathcal {F}} _{t}\}_{t\ge 0},\) \({\mathcal {F}}_{\tau _{n}}\) are well-defined for any \(n\in {\mathbb {N}} _{0}\) and we use \({\mathcal {G}}_{n}\) to denote \({\mathcal {F}}_{\tau _{n}}.\) One can prove that \({\hat{N}}\) and N are stopping times with respect to \(\{{\mathcal {G}} _{n}\}_{n\in {\mathbb {N}} }.\) For any \(j\in L,\) let \(N_{j}\) be the number of visits of \(\{Z_{n}\}_{n\in {\mathbb {N}} _{0}}\) to \(\partial B_{\delta }(O_{j})\) (including time 0) before N.

The proofs of the following two lemmas are given in the Appendix.

Lemma 7.8

Given \(\delta >0\) sufficiently small, for any \(x\in \partial B_{\delta }(O_{1})\) and any nonnegative measurable function \(g:M\rightarrow {\mathbb {R}} \),

Lemma 7.9

Given \(\delta >0\) sufficiently small, for any \(x\in \partial B_{\delta }(O_{1})\) and any nonnegative measurable function \(g:M\rightarrow {\mathbb {R}} \),

Although the proofs are deferred to the Appendix, these results follow in a straightforward way: decompose the excursion away from \(O_{1}\) during \([0,\tau _{1}^{\varepsilon }]\), which only stops when returning to a neighborhood of \(O_{1}\), into excursions between pairs of equilibrium points, count the number of such excursions that start near a particular equilibrium point, and use the strong Markov property.

Remark 7.10

By an argument analogous to the proofs of Lemmas 7.8 and 7.9, we can prove the following: Given \(\delta >0\) sufficiently small, for any \(x\in \partial B_{\delta }(O_{1})\) and any nonnegative measurable function \(g:M\rightarrow {\mathbb {R}} \),

and

The main difference is that if the integration starts from \(\sigma _{0}^{\varepsilon }\) (the first visiting time of \( {\textstyle \bigcup \nolimits _{j\in L\setminus \{1\}}} \partial B_{\delta }(O_{j})\)), then any summation appearing in the upper bounds should sum over all indices in \(L\setminus \{1\}\) instead of L.

Owing to its frequent appearance but with varying arguments, we introduce the notation

and write \(I^{\varepsilon }(t;f,A)\) if \(t_{1}=0\) and \(t_{2}=t\) so that, e.g., \(S_{1}^{\varepsilon }=I^{\varepsilon }(\tau _{1}^{\varepsilon };f,A)\).

Corollary 7.11

Given any measurable set \(A\subset M\), a measurable function \(f:M\rightarrow {\mathbb {R}} ,\) \(j\in L\) and \(\delta >0,\) we have

and

where

and

Proof

For the first part, applying Lemma 7.8 with \(g(x)=e^{-\frac{1}{\varepsilon }f\left( x\right) }1_{A}\left( x\right) \) and using (7.1) and (7.2) completes the proof. For the second part, using Lemma 7.9 with \(g(x)=e^{-\frac{1}{\varepsilon }f\left( x\right) }1_{A}\left( x\right) \) and using (7.1) and (7.2) again completes the proof. \(\square \)

Remark 7.12

Owing to Remark 7.10, we can modify the proof of Corollary 7.11 and show that given any set \(A\subset M,\) a measurable function \(f:M\rightarrow {\mathbb {R}} ,\) \(j\in L\) and \(\delta >0,\)

Moreover,

where the definitions of \({\hat{R}}_{j}^{(1)}\) and \({\hat{R}}_{j}^{(2)}\) can be found in Corollary 7.11.

We next consider lower bounds on

for \(j\in L\). We state some useful results before studying the lower bounds. Recall also that \(\tau _{1}\) is the time to reach the \(\delta \)-neighborhood of any of the equilibrium points after leaving the \(2\delta \)-neighborhood of one of the equilibrium points.

Lemma 7.13

For any \(\eta >0,\) there exist \(\delta _{0}\in (0,1)\) and \(\varepsilon _{0}\in (0,1)\), such that for all \(\delta \in (0,\delta _{0})\) and \(\varepsilon \in (0,\varepsilon _{0})\)

Proof

If x is not in \(\cup _{j\in L}B_{2\delta }(O_{j})\), then a uniform (in x and small \(\varepsilon \)) upper bound on these expected values follows from the corollary to [12, Lemma 1.9, Chapter 6].

If \(x\in \cup _{j\in L}B_{2\delta }(O_{j})\), then we must wait until the process reaches \(\cup _{j\in L}\partial B_{2\delta }(O_{j})\), after which we can use the uniform bound (and the strong Markov property). Since there exists \(\delta >0\) such that the lower bound \(P_{x}(\inf \{t\ge 0:X_{t}^{\varepsilon }\in \cup _{j\in L}\partial B_{2\delta }(O_{j})\}\le 1)\ge e^{-\eta /2\varepsilon }\) is valid for all \(x\in \cup _{j\in L}B_{2\delta }(O_{j})\) and small \(\varepsilon >0\), upper bounds of the desired form follow from the Markov property and standard calculations. \(\square \)

For any compact set \(A\subset M\), we use \(\vartheta _{A}\) to denote the first hitting time

Note that \(\vartheta _{A}\) is a stopping time with respect to the filtration \(\{{\mathcal {F}}_{t}\}_{t\ge 0}.\) The following result is relatively straightforward given the bound on the distribution of \(\tau _{1}\) just discussed, and follows by partitioning according to \(\tau _{1} \ge T\) and \(\tau _{1} < T\) for large but fixed T.

Lemma 7.14

For any compact set \(A\subset M,\) \(j\in L\) and any \(\eta >0,\) there exist \(\delta _{0}\in (0,1)\) and \(\varepsilon _{0}\in (0,1)\), such that for all \(\varepsilon \in (0,\varepsilon _{0})\) and \(\delta \in (0,\delta _{0})\)

Lemma 7.15

Given a compact set \(A\subset M\), any \(j\in L\) and \(\eta >0,\) there exists \(\delta _{0}\in (0,1),\) such that for any \(\delta \in (0,\delta _{0})\)

and

Proof

The idea of this proof follows from the proof of Theorem 4.3 in [12, Chapter 4]. Since \(I^{\varepsilon }(\tau _{1};0,A)=\int _{0}^{\tau _{1}} 1_{A}\left( X_{s}^{\varepsilon }\right) ds\), for any \(x\in \partial B_{\delta }(O_{j}),\)

The inequality is due to \( E_{X_{\vartheta _{A}}^{\varepsilon }}I^{\varepsilon }(\tau _{1};0,A)\le E_{X_{\vartheta _{A}}^{\varepsilon }}\tau _{1}\le \sup _{y\in \partial A}E_{y} \tau _{1}. \) We then apply Lemmas 7.13 and 7.14 to find that for the given \(\eta >0,\) there exists \(\delta _{0}\in (0,1)\) and \(\varepsilon _{0} \in (0,1)\), such that for all \(\varepsilon \in (0,\varepsilon _{0})\) and \(\delta \in (0,\delta _{0}),\)

Thus,

This completes the proof of part 1.

For part 2, following the same conditioning argument as for part 1 with the use of Lemmas 7.13 and 7.14 gives that for the given \(\eta >0,\) there exists \(\delta _{0}\in (0,1)\) and \(\varepsilon _{0}\in (0,1)\), such that for all \(\varepsilon \in (0,\varepsilon _{0})\) and \(\delta \in (0,\delta _{0}),\)

Therefore,

\(\square \)

Lemma 7.16

Given compact sets \(A_{1},A_{2}\subset M\), \(j\in L\) and \(\eta >0,\) there exists \(\delta _{0}\in (0,1),\) such that for any \(\delta \in (0,\delta _{0})\)

Proof

We set \(\vartheta _{A_{i}}\doteq \inf \left\{ t\ge 0:X_{t}^{\varepsilon }\in A_{i}\right\} \) for \(i=1,2.\) For any \(x\in \partial B_{\delta }(O_{j}),\) using a conditioning argument as in the proof of Lemma 7.15 we obtain that for any \(\eta >0,\) there exists \(\delta _{0}\in (0,1)\) and \(\varepsilon _{0} \in (0,1)\), such that for all \(\varepsilon \in (0,\varepsilon _{0})\) and \(\delta \in (0,\delta _{0}),\)

The last inequality holds since for \(i=1,2\)

and owing to Lemma 7.13, for all \(\varepsilon \in (0,\varepsilon _{0})\)

Furthermore, for the given \(\eta >0,\) by Lemma 7.14, there exists \(\delta _{i}\in (0,1)\) such that for any \(\delta \in (0,\delta _{i})\)

for \(i=1,2.\) Hence, letting \(\delta _{0}=\delta _{1}\wedge \delta _{2},\) for any \(\delta \in (0,\delta _{0})\)

The first inequality is from (7.9). \(\square \)

Remark 7.17

The next lemma considers asymptotics of the first and second moments of a certain integral that will appear in a decomposition of \(S^{\varepsilon }_{1}\). It is important to note that the variational bounds for both moments have the same structure as an infimum over \(x \in A\). While one might consider it possible that the variational problem for the second moment could require a pair of parameters (e.g., infimum over \(x,y \in A\)), the infimum is in fact achieved on the “diagonal” \(x=y\). This means that the biggest contribution to the second moment is likewise due to mass along the “diagonal.”

Lemma 7.18

Given a compact set \(A\subset M,\) a continuous function \(f:M\rightarrow {\mathbb {R}} ,\) \(j\in L\) and \(\eta >0,\) there exists \(\delta _{0}\in (0,1),\) such that for any \(\delta \in (0,\delta _{0})\)

and

Proof

Since a continuous function is bounded on a compact set, there exists \(m\in (0,\infty )\) such that \(-m\le f(x)\le m\) for all \(x\in A.\) For \(n\in {\mathbb {N}} \) and \(k\in \{1,2,\ldots ,n\},\) consider the sets

Note that \(A_{n,k}\) is a compact set for any n, k. In addition, for any n fixed, \( {\textstyle \bigcup _{k=1}^{n}} A_{n,k}=A.\) With this expression, for any \(x\in \partial B_{\delta }(O_{j})\) and \(n\in {\mathbb {N}} \)

The second inequality holds because by definition of \(A_{n,k},\) for any \(x\in A_{n,k}\), \(f(x)\ge F_{n,k} -2m/n\) with \(F_{n,k}\doteq \sup _{y\in A_{n,k}}f\left( y\right) \).

Next, we first apply (7.2) and then Lemma 7.15 with compact sets \(A_{n,k}\) for \(k\in \{1,2,\ldots ,n\}\) to get

Finally, we know that \(V\left( O_{j},x\right) \) is bounded below by 0, and then we use the fact that for any two functions \(f,g: {\mathbb {R}} ^{d}\rightarrow {\mathbb {R}} \) with g being bounded below (to ensure that the right hand side is well defined) and any set \(A\subset {\mathbb {R}} ^{d},\) \( \inf _{x\in A}\left( f\left( x\right) +g\left( x\right) \right) \le \sup _{x\in A}f\left( x\right) +\inf _{x\in A}g\left( x\right) \) to find that the last minimum in the previous display is greater than or equal to

Therefore,

Since n is arbitrary, sending \(n\rightarrow \infty \) completes the proof for the first part.

Turning to part 2, we follow the same argument as for part 1. For any \(n\in {\mathbb {N}} ,\) we use the decomposition of A into \( {\textstyle \bigcup _{k=1}^{n}} A_{n,k}\) to have that for any \(x\in \partial B_{\delta }(O_{j}),\)

Recall that \(F_{n,k}\) is used to denote \(\sup _{y\in A_{n,k}}f\left( y\right) \). Using the definition of \(A_{n,k}\) gives that for any \(k,\ell \in \{1,\ldots ,n\}\)

Applying (7.2) first and then Lemma 7.16 with compact sets \(A_{n,k}\) and \(A_{n,\ell }\) pairwise for all \(k,\ell \in \{1,2,\ldots ,n\}\) gives that

Sending \(n\rightarrow \infty \) completes the proof for the second part. \(\square \)

Our next interest is to find lower bounds for

We first recall that \(N_{j}\) is the number of visits of the embedded Markov chain \(\{Z_{n}\}_{n}=\{X_{\tau _{n}}^{\varepsilon }\}_{n}\) to \(\partial B_{\delta }(O_{j})\) within one regenerative cycle. Also, the definitions of G(i) and G(i, j) for any \(i,j\in L\) with \(i\ne j\) are given in Definition 3.8 and Remark 3.9.

Lemma 7.19

For any \(\eta >0,\) there exists \(\delta _{0}\in (0,1),\) such that for any \(\delta \in (0,\delta _{0})\) and for any \(j\in L\)

where

Proof

According to Lemma 3.17, we know that for any \(\eta >0,\) there exist \(\delta _{0}\in (0,1)\) and \(\varepsilon _{0}\in (0,1),\) such that for any \(\delta \in (0,\delta _{0})\) and \(\varepsilon \in (0,\varepsilon _{0}),\) for all \(x\in \partial B_{\delta }(O_{i}),\) the one-step transition probability of the Markov chain \(\{Z_{n}\}_{n}\) on \(\partial B_{\delta }(O_{j})\) satisfies the inequalities

We can then apply Lemma 7.6 with \(p_{ij}=e^{-\frac{1}{\varepsilon }V\left( O_{i},O_{j}\right) }\) and \(a=e^{\frac{1}{\varepsilon }\eta /4^{l-1}}\) to obtain that

Thus,

Hence, it suffices to show that

Observe that by definition for any \(j\in L\) and \(g\in G\left( j\right) \)

which implies that

The inequality is from Lemma 7.1; the last equality holds due to the definition of \(W\left( O_{j}\right) \).

\(\square \)

Recall the definition of \(W(O_{1}\cup O_{j})\) in (3.3). In the next result, we obtain bounds on, for example, a quantity close to the expected number of visits to \(B_{\delta }(O_{j})\) before visiting a neighborhood of \(O_{1}\), after starting near \(O_{j}\).

Lemma 7.20

For any \(\eta >0,\) there exists \(\delta _{0}\in (0,1),\) such that for any \(\delta \in (0,\delta _{0})\)

and for any \(j\in L\setminus \{1\}\)

Proof

We again use that by Lemma 3.17, for any \(\eta >0\) there exist \(\delta _{0}\in (0,1)\) and \(\varepsilon _{0}\in (0,1),\) such that (7.10) holds for any \(\delta \in (0,\delta _{0})\), \(\varepsilon \in (0,\varepsilon _{0})\) and all \(x\in \partial B_{\delta }(O_{i}).\) Then, by Lemma 7.6 with \(p_{ij}=e^{-\frac{1}{\varepsilon }V\left( O_{i},O_{j}\right) }\) and \(a=e^{\frac{1}{\varepsilon }\eta /4^{l-1}}\)

and for any \(j\in L\setminus \{1\}\)

Thus,

and

Following the same argument as for the proof of Lemma 7.19, we can use Lemma 7.1 to obtain that

and

Recalling (3.2) and (3.3), we are done. \(\square \)

As mentioned at the beginning of this subsection, our main goal is to provide lower bounds for

and

for a given continuous function \(f:M\rightarrow {\mathbb {R}} \) and compact set \(A\subset M.\) We now state the main results of the subsection. Recall that \(h_{1}=\min _{\ell \in L\setminus \{1\}}V\left( O_{1},O_{\ell }\right) \), \(S_{1}^{\varepsilon }\doteq \int _{0}^{\tau _{1}^{\varepsilon }}e^{-\frac{1}{\varepsilon }f\left( X_{s}^{\varepsilon }\right) }1_{A}\left( X_{s}^{\varepsilon }\right) ds\) and \(W\left( O_{j}\right) \doteq \min _{g\in G\left( j\right) }[\sum _{\left( m\rightarrow n\right) \in g}V\left( O_{m},O_{n}\right) ]\) and the definitions (7.8).

Lemma 7.21

Given a compact set \(A\subset M,\) a continuous function \(f:M\rightarrow {\mathbb {R}} \) and \(\eta >0,\) there exists \(\delta _{0}\in (0,1),\) such that for any \(\delta \in (0,\delta _{0})\)

Proof

Recall that by Lemma 7.18, we have shown that for the given \(\eta ,\) there exists \(\delta _{1}\in (0,1),\) such that for any \(\delta \in (0,\delta _{1})\) and \(j\in L\)

In addition, by Lemma 7.19, we know that for the same \(\eta ,\) there exists \(\delta _{2}\in (0,1),\) such that for any \(\delta \in (0,\delta _{2})\)

Hence, for any \(\delta \in (0,\delta _{0})\) with \(\delta _{0}=\delta _{1} \wedge \delta _{2},\) we apply Corollary 7.11 to get

where \(\tau _{1}^{\varepsilon }\) is the length of a regenerative cycle and \(\tau _{1}\) is the first time the process visits a neighborhood of an equilibrium point after having been a certain distance away from them. \(\square \)

Remark 7.22

According to Remark 7.12 and using the same argument as in Lemma 7.21, we can find that given a compact set \(A\subset M,\) a continuous function \(f:M\rightarrow {\mathbb {R}} \) and \(\eta >0,\) there exists \(\delta _{0}\in (0,1),\) such that for any \(\delta \in (0,\delta _{0})\)

Lemma 7.23

Given a compact set \(A\subset M,\) a continuous function \(f:M\rightarrow {\mathbb {R}} \) and \(\eta >0,\) there exists \(\delta _{0}\in (0,1),\) such that for any \(\delta \in (0,\delta _{0})\)

where \(S_{1}^{\varepsilon }\doteq \int _{0}^{\tau _{1}^{\varepsilon }}e^{-\frac{1}{\varepsilon }f\left( X_{s}^{\varepsilon }\right) }1_{A}\left( X_{s}^{\varepsilon }\right) ds\) and \(h_{1}=\min _{\ell \in L\setminus \{1\}}V\left( O_{1},O_{\ell }\right) \), and

and for \(j\in L\setminus \{1\}\)

Proof

Following a similar argument as for the proof of Lemma 7.21, given any \(\eta >0,\) owing to Lemmas 7.18, 7.19 and 7.20, there exists \(\delta _{0}\in (0,1)\) such that for any \(\delta \in (0,\delta _{0})\) and for any \(j\in L\)

and for any \(j\in L\setminus \{1\}\),

Hence, for any \(\delta \in (0,\delta _{0})\) we apply Corollary 7.11 to get

where

and

and for \(j\in L\setminus \{1\}\)

\(\square \)

7.3 Asymptotics of Moments of \({\hat{S}}_{1}^{\varepsilon }\)

Recall that

where \({\hat{\tau }}_{i}^{\varepsilon }\) is a multicycle defined according to (6.4) and with \(\{{\mathbf {M}}^{\varepsilon }_i\}_{i\in {\mathbb {N}}}\) being a sequence of independent and geometrically distributed random variables with parameter \(e^{-m/\varepsilon }\) for some \(m>0\) such that \(m+h_1>w\). Moreover, \(\{{\mathbf {M}}^{\varepsilon }_i\}\) is also independent of \(\{\tau ^{\varepsilon }_n\}\). Using the independence of \(\{{\mathbf {M}}^{\varepsilon }_i\}\) and \(\{\tau ^{\varepsilon }_n\}\), and the fact that \(\{\tau ^{\varepsilon }_n\}\) and \(\{S_{n}^{\varepsilon }\}\) are both iid under \(P_{\lambda ^{\varepsilon }}\), we find that \(\{{\hat{S}}_{n}^{\varepsilon }\}\) is also iid under \(P_{\lambda ^{\varepsilon }}\) and

and

On the other hand, since \({\mathbf {M}}^{\varepsilon }_1\) is geometrically distributed with parameter \(e^{-m/\varepsilon }\), this gives that

Therefore, by combining (7.11), (7.12) and (7.13) with Lemma 7.21 and Lemma 7.23, we have the following two lemmas.

Lemma 7.24

Given a compact set \(A\subset M,\) a continuous function \(f:M\rightarrow {\mathbb {R}} \) and \(\eta >0,\) there exists \(\delta _{0}\in (0,1),\) such that for any \(\delta \in (0,\delta _{0})\)

Lemma 7.25

Given a compact set \(A\subset M,\) a continuous function \(f:M\rightarrow {\mathbb {R}} \) and \(\eta >0,\) there exists \(\delta _{0}\in (0,1),\) such that for any \(\delta \in (0,\delta _{0})\)

where \(R_{j}^{(1)}\) and \(R_{j}^{(2)}\) are defined as in Lemma 7.23, and

Later on, we will optimize over m to obtain the largest lower bound. This requires that we first consider \(m>w-h_1\), so that, as shown in the next section, \(N^{\varepsilon }(T^{\varepsilon })\) can be suitably approximated in terms of a Poisson distribution, and then send \(m\downarrow w-h_1\).
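
The moment computations of this subsection rest on standard facts about random sums, which can be sketched as follows. The assumptions here are made for illustration only: \(M\) is geometric on \(\{1,2,\ldots \}\) with success probability \(p\in (0,1)\) (the support convention is an assumption), and \(M\) is independent of an iid sequence \(\{S_{i}\}\) with finite second moment. Then

\[
EM=\frac{1}{p},\qquad \hbox {Var}(M)=\frac{1-p}{p^{2}},\qquad EM^{2}=\frac{2-p}{p^{2}},
\]

and

\[
E\left[ \sum _{i=1}^{M}S_{i}\right] =EM\cdot ES_{1},\qquad \hbox {Var}\left( \sum _{i=1}^{M}S_{i}\right) =EM\cdot \hbox {Var}(S_{1})+\hbox {Var}(M)\cdot \left( ES_{1}\right) ^{2}.
\]

With \(p=e^{-m/\varepsilon }\), and since \(1\le 2-p\le 2\), the logarithmic asymptotics are

\[
\lim _{\varepsilon \rightarrow 0}\varepsilon \log EM=m,\qquad \lim _{\varepsilon \rightarrow 0}\varepsilon \log EM^{2}=2m,
\]

so each factor of \(M\) contributes \(-m\) on the scale \(-\varepsilon \log \).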

8 Asymptotics of Moments of \(N^{\varepsilon }(T^{\varepsilon })\) and \({\hat{N}}^{\varepsilon }(T^{\varepsilon })\)

Recall that the number of single cycles in the time interval \([0,T^{\varepsilon }]\) plus one is defined as

where the \(\tau _{n}^{\varepsilon }\) are the return times to \(B_{\delta }(O_{1})\) after having visited the \(\delta \)-neighborhood of one of the equilibrium points other than \(O_{1}.\) In addition, \(\lambda ^{\varepsilon }\) is the unique invariant measure of \(\{Z_{n}^{\varepsilon }\}_{n}=\{X_{\tau _{n}^{\varepsilon } }^{\varepsilon }\}_{n}.\) The number of multicycles in the time interval \([0,T^{\varepsilon }]\) plus one is defined as

where \({\hat{\tau }}^\varepsilon _i\) are defined as in (6.4).

In this section, we will find the logarithmic asymptotics of the expected value and the variance of \(N^{\varepsilon }\left( T^{\varepsilon }\right) \) with \(T^{\varepsilon }=e^{\frac{1}{\varepsilon }c}\) for some \(c>h_1\) in Lemmas 8.2 and 8.4 under the assumption that \(h_1>w\) (i.e., single cycle case), and the analogous quantities for \({\hat{N}}^{\varepsilon }\left( T^\varepsilon \right) \) with \(T^{\varepsilon }=e^{\frac{1}{\varepsilon }c}\) for some \(c>w\) in Lemmas 8.19 and 8.21 under the assumption that \(w\ge h_1\) (i.e., multicycle case).

Remark 8.1

While the proofs of these asymptotic results are quite detailed, it is essential that we obtain estimates good enough for a relatively precise comparison of the expected value and the variance of \(N^{\varepsilon }\left( T^{\varepsilon }\right) \), and likewise for \({\hat{N}}^{\varepsilon }\left( T^\varepsilon \right) \). For this, the key result needed is the characterization of \(N^{\varepsilon }\left( T^\varepsilon \right) \) (and \({\hat{N}}^{\varepsilon }\left( T^\varepsilon \right) \)) as having an approximately Poisson distribution. This characterization follows by exploiting the asymptotically exponential character of \(\tau _{n}^{\varepsilon }\) (and \({\hat{\tau }}_{n}^{\varepsilon }\)), together with some uniform integrability properties.
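
The following idealized computation is a heuristic only, not the argument of the paper. It indicates why a Poisson approximation gives a precise comparison of mean and variance. Suppose, as an assumption, that the cycle lengths were exactly iid exponential with mean \(E\tau \), and write \(\Gamma \doteq T/E\tau \). The number of cycles completed in \([0,T]\) would then be Poisson with parameter \(\Gamma \), so that for \(N\) equal to this number plus one,

\[
EN=1+\Gamma ,\qquad \hbox {Var}(N)=\Gamma ,\qquad \frac{\hbox {Var}(N)}{(EN)^{2}}=\frac{\Gamma }{(1+\Gamma )^{2}}\le \frac{1}{\Gamma }.
\]

In this idealized setting the variance is of the same order as the mean, rather than the square of the mean, whenever \(\Gamma \) is large.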

Lemmas 8.2 and 8.4 below are proved in Sect. 8.3.

Lemma 8.2

If \(h_1>w\) and \(T^{\varepsilon }=e^{\frac{1}{\varepsilon }c}\) for some \(c>h_1\), then there exists \(\delta _{0}\in (0,1)\) such that for any \(\delta \in (0,\delta _{0})\)

Corollary 8.3

If \(h_1>w\) and \(T^{\varepsilon }=e^{\frac{1}{\varepsilon }c}\) for some \(c>h_1\), then there exists \(\delta _{0}\in (0,1)\) such that for any \(\delta \in (0,\delta _{0})\)

where \(\varkappa _{\delta }\doteq \min _{y\in \cup _{k\in L\setminus \{1\}}\partial B_{\delta }(O_{k})}V(O_{1},y)\).

Lemma 8.4

If \(h_1>w\) and \(T^{\varepsilon }=e^{\frac{1}{\varepsilon }c}\) for some \(c>h_1\), then for any \(\eta >0,\) there exists \(\delta _{0}\in (0,1)\) such that for any \(\delta \in (0,\delta _{0})\)

Before proceeding, we mention a result from [11] and define some notation which will be used in this section. Results in Section 5 and Section 10 of [11, Chapter XI] say that for any \(t>0,\) the first and second moments of \(N^{\varepsilon }\left( t\right) \) can be represented as

Let \(\Gamma ^{\varepsilon }\doteq T^{\varepsilon }/E_{\lambda ^{\varepsilon }}\tau _{1}^{\varepsilon }\) and \(\gamma ^{\varepsilon }\doteq \left( \Gamma ^{\varepsilon }\right) ^{-\ell }\) with some \(\ell \in (0,1)\) which will be chosen later. Intuitively, \(\Gamma ^{\varepsilon }\) is the typical number of regenerative cycles in \([0,T^{\varepsilon }]\) since \(E_{\lambda ^{\varepsilon }}\tau _{1}^{\varepsilon }\) is the expected length of one regenerative cycle. To simplify notation, we pretend that \(\left( 1+2\gamma ^{\varepsilon }\right) \Gamma ^{\varepsilon }\) and \(\left( 1-2\gamma ^{\varepsilon }\right) \Gamma ^{\varepsilon }\) are positive integers so that we can divide \(E_{\lambda ^{\varepsilon }}\left( N^{\varepsilon }\left( T^{\varepsilon }\right) \right) \) into three partial sums which are

and

Similarly, we divide \(E_{\lambda ^{\varepsilon }}\left( N^{\varepsilon }\left( T^{\varepsilon }\right) \right) ^{2}\) into

and

The next step is to find upper bounds for these partial sums, and these bounds will help us to find suitable lower bounds for the logarithmic asymptotics of \(E_{\lambda ^{\varepsilon }}\left( N^{\varepsilon }\left( T^{\varepsilon }\right) \right) \) and \(\hbox {Var}_{\lambda ^{\varepsilon }}\left( N^{\varepsilon }\left( T^{\varepsilon }\right) \right) \). Before looking into the upper bound for partial sums, we establish some properties.
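
Series representations of the moments of a counting variable are typically of tail-sum type. As a sketch under stated assumptions (the exact form quoted from [11] may differ), for any random variable \(N\) with values in \(\{1,2,\ldots \}\), the identities \(N=\sum _{n=0}^{N-1}1\) and \(N^{2}=\sum _{n=0}^{N-1}(2n+1)\) give

\[
EN=\sum _{n=0}^{\infty }P(N>n),\qquad EN^{2}=\sum _{n=0}^{\infty }(2n+1)P(N>n).
\]

If, as assumed here, \(N(t)=\inf \{n\in {\mathbb {N}}:\tau _{n}>t\}\) for an increasing sequence of times with \(\tau _{0}=0\), then \(\{N(t)>n\}=\{\tau _{n}\le t\}\), and therefore

\[
EN(t)=\sum _{n=0}^{\infty }P(\tau _{n}\le t),\qquad EN(t)^{2}=\sum _{n=0}^{\infty }(2n+1)P(\tau _{n}\le t).
\]

Splitting such a series according to whether \(n\) lies below, near, or above the typical number of cycles is what produces three partial sums for each moment.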

Theorem 8.5

If \(h_1>w\), then for any \(\delta >0\) sufficiently small,

Moreover, there exist \(\varepsilon _{0}\in (0,1)\) and a constant \({\tilde{c}}>0\) such that

for any \(t>0\) and any \(\varepsilon \in (0,\varepsilon _{0}).\)

Remark 8.6

For any \(\delta >0,\) \(\varkappa _{\delta }\le h_1.\)
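
A short justification of this remark can be sketched as follows. It assumes that \(\delta \) is small enough that \(O_{1}\) is disjoint from the closed ball \({\bar{B}}_{\delta }(O_{\ell })\) for each \(\ell \ne 1\), and that \(V\) is an infimum of a nonnegative cost over paths. Any path from \(O_{1}\) to \(O_{\ell }\) is continuous, starts outside \({\bar{B}}_{\delta }(O_{\ell })\) and ends inside it, so it hits \(\partial B_{\delta }(O_{\ell })\) at some intermediate time. The cost accumulated up to that time is no larger than the total cost. Hence

\[
V(O_{1},O_{\ell })\ge \min _{y\in \partial B_{\delta }(O_{\ell })}V(O_{1},y)\ge \min _{y\in \cup _{k\in L\setminus \{1\}}\partial B_{\delta }(O_{k})}V(O_{1},y)=\varkappa _{\delta },
\]

and minimizing over \(\ell \in L\setminus \{1\}\) gives \(h_{1}\ge \varkappa _{\delta }\).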

The proof of Theorem 8.5 will be given in Section 10. In that section, we will first prove an analogous result for the exit time (or first visiting time to other equilibrium points to be more precise) and then show how one can extend those results to the return time. The proof of the following lemma is straightforward and hence omitted.

Lemma 8.7

If \(h_1>w\) and \(T^{\varepsilon }=e^{\frac{1}{\varepsilon }c}\) for some \(c>h_1\), then for any \(\eta >0,\) there exists \(\delta _{0}\in (0,1)\) such that for any \(\delta \in (0,\delta _{0})\),

Lemma 8.8