Abstract

In this paper, we use the calculus of variations to derive a sensitivity analysis for ordinary differential equations with events. This sweeping gradient method (SGM) requires a forward sweep to evaluate the original model and a backward sweep of the adjoint to compute the sensitivity. The method is applied to canonical optimal control problems with numerical examples, including the sampled linear quadratic regulator and optimal time switching and state switching for the minimum-time transfer of the double integrator. We show that, for these examples, the gradient computed with the SGM matches the analytically derived gradient. Numerical examples are produced using gradient-based optimization algorithms. The emphasis of this work is on modeling considerations for the effective application of this method.

References

Agrawal, A., Barratt, S., Boyd, S., Stellato, B.: Learning convex optimization control policies. In: Learning for Dynamics and Control, pp. 361–373. PMLR (2020)

Backer, W.D.: Jump conditions for sensitivity coefficients. In: Sensitivity Methods in Control Theory, pp. 168–175. Elsevier (1966). https://doi.org/10.1016/b978-1-4831-9822-4.50016-9

Betts, J.T.: Sparse Jacobian updates in the collocation method for optimal control problems. J. Guid. Control. Dyn. 13(3), 409–415 (1990). https://doi.org/10.2514/3.25352

Betts, J.T.: Survey of numerical methods for trajectory optimization. J. Guid. Control. Dyn. 21(2), 193–207 (1998). https://doi.org/10.2514/2.4231

Betts, J.T.: Practical methods for optimal control and estimation using nonlinear programming. Soc. Ind. Appl. Math. (2010). https://doi.org/10.1137/1.9780898718577

Betts, J.T., Frank, P.D.: A sparse nonlinear optimization algorithm. J. Optim. Theory Appl. 82(3), 519–541 (1994). https://doi.org/10.1007/bf02192216

Boccadoro, M., Wardi, Y., Egerstedt, M., Verriest, E.: Optimal control of switching surfaces in hybrid dynamical systems. Discrete Event Dyn. Syst. 15(4), 433–448 (2005). https://doi.org/10.1007/s10626-005-4060-4

Bryson, A.E., Denham, W.F.: A steepest-ascent method for solving optimum programming problems. J. Appl. Mech. 29(2), 247–257 (1962). https://doi.org/10.1115/1.3640537

Bryson, A.E., Ho, Y.C.: Applied Optimal Control. Routledge, Abingdon (1975). https://doi.org/10.1201/9781315137667

Bu, J., Mesbahi, A., Mesbahi, M.: LQR via first order flows. In: 2020 American Control Conference (ACC). IEEE (2020). https://doi.org/10.23919/acc45564.2020.9147853

Bu, J., Mesbahi, A., Mesbahi, M.: Policy gradient-based algorithms for continuous-time linear quadratic control (2020)

Bulirsch, R., Nerz, E., Pesch, H.J., von Stryk, O.: Combining direct and indirect methods in optimal control: range maximization of a hang glider. In: Optimal Control, pp. 273–288. Birkhäuser Basel (1993). https://doi.org/10.1007/978-3-0348-7539-4_20

Cao, Y., Li, S., Petzold, L.: Adjoint sensitivity analysis for differential-algebraic equations: algorithms and software. J. Comput. Appl. Math. 149(1), 171–191 (2002). https://doi.org/10.1016/s0377-0427(02)00528-9

Cao, Y., Li, S., Petzold, L., Serban, R.: Adjoint sensitivity analysis for differential-algebraic equations: the adjoint DAE system and its numerical solution. SIAM J. Sci. Comput. 24(3), 1076–1089 (2003). https://doi.org/10.1137/s1064827501380630

Conte, S.D., de Boor, C.: Elementary numerical analysis. Society for Industrial and Applied Mathematics (2017). https://doi.org/10.1137/1.9781611975208

Courant, R.: On the first variation of the Dirichlet–Douglas integral and on the method of gradients. Proc. Natl. Acad. Sci. 27(5), 242–248 (1941). https://doi.org/10.1073/pnas.27.5.242

Courant, R.: Variational methods for the solution of problems of equilibrium and vibrations. Bull. Am. Math. Soc. 49(1), 1–23 (1943)

Denham, W.F., Bryson, A.E.: Optimal programming problems with inequality constraints. II—solution by steepest-ascent. AIAA Journal 2(1), 25–34 (1964). https://doi.org/10.2514/3.2209

Dopico, D., Zhu, Y., Sandu, A., Sandu, C.: Direct and adjoint sensitivity analysis of ordinary differential equation multibody formulations. J. Comput. Nonlinear Dyn. (2014). https://doi.org/10.1115/1.4026492

D’Souza, S.N., Kinney, D., Garcia, J.A., Llama, E., Sarigul-Klijn, N.: Potential for integrating entry guidance into the multi-disciplinary entry vehicle optimization environment. In: AIAA Scitech 2019 Forum. American Institute of Aeronautics and Astronautics (2019). https://doi.org/10.2514/6.2019-0015

Eberhard, P., Bischof, C.: Automatic differentiation of numerical integration algorithms. Math. Comput. 68(226), 717–732 (1999). https://doi.org/10.1090/s0025-5718-99-01027-3

Egerstedt, M., Wardi, Y., Axelsson, H.: Transition-time optimization for switched-mode dynamical systems. IEEE Trans. Autom. Control 51(1), 110–115 (2006). https://doi.org/10.1109/tac.2005.861711

Eichmeir, P., Lauß, T., Oberpeilsteiner, S., Nachbagauer, K., Steiner, W.: The adjoint method for time-optimal control problems. J. Comput. Nonlinear Dyn. (2020). https://doi.org/10.1115/1.4048808

Fatkhullin, I., Polyak, B.: Optimizing static linear feedback: gradient method. SIAM J. Control. Optim. 59(5), 3887–3911 (2021). https://doi.org/10.1137/20m1329858

Fazel, M., Ge, R., Kakade, S., Mesbahi, M.: Global convergence of policy gradient methods for the linear quadratic regulator. In: Dy, J., Krause, A. (eds.) Proceedings of the 35th International Conference on Machine Learning, Proceedings of Machine Learning Research, vol. 80, pp. 1467–1476. PMLR (2018). https://proceedings.mlr.press/v80/fazel18a.html

Filippov, A.F.: Differential Equations with Discontinuous Righthand Sides. Springer, Cham (1988). https://doi.org/10.1007/978-94-015-7793-9

Galán, S., Feehery, W.F., Barton, P.I.: Parametric sensitivity functions for hybrid discrete/continuous systems. Appl. Numer. Math. 31(1), 17–47 (1999). https://doi.org/10.1016/s0168-9274(98)00125-1

Garg, D., Patterson, M.A., Francolin, C., Darby, C.L., Huntington, G.T., Hager, W.W., Rao, A.V.: Direct trajectory optimization and costate estimation of finite-horizon and infinite-horizon optimal control problems using a Radau pseudospectral method. Comput. Optim. Appl. 49(2), 335–358 (2009). https://doi.org/10.1007/s10589-009-9291-0

Gavrilović, M., Petrović, R., Šiljak, D.: Adjoint method in the sensitivity analysis of optimal systems. J. Frankl. Inst. 276(1), 26–38 (1963). https://doi.org/10.1016/0016-0032(63)90307-7

Gershwin, S.B., Jacobson, D.H.: A discrete-time differential dynamic programming algorithm with application to optimal orbit transfer. AIAA J. 8(9), 1616–1626 (1970). https://doi.org/10.2514/3.5955

Gill, P.E., Murray, W., Wright, M.H.: Practical Optimization. Society for Industrial and Applied Mathematics (1981). https://doi.org/10.1137/1.9781611975604

Griesse, R., Walther, A.: Evaluating gradients in optimal control: continuous adjoints versus automatic differentiation. J. Optim. Theory Appl. 122(1), 63–86 (2004). https://doi.org/10.1023/B:JOTA.0000041731.71309.f1

Griffiths, D., Walborn, S.: Dirac deltas and discontinuous functions. Am. J. Phys. 67(5), 446–447 (1999). https://doi.org/10.1119/1.19283

Gronwall, T.H.: Note on the derivatives with respect to a parameter of the solutions of a system of differential equations. Ann. Math. 20(4), 292 (1919). https://doi.org/10.2307/1967124

Hadamard, J.: Mémoire sur le problème d’analyse relatif à l’équilibre des plaques élastiques encastrées. Académie des sciences. Mémoires. Imprimerie nationale (1908). https://books.google.com/books?id=8wSUmAEACAAJ

Hager, W.W.: Runge–Kutta methods in optimal control and the transformed adjoint system. Numer. Math. 87(2), 247–282 (2000). https://doi.org/10.1007/s002110000178

Hájek, O.: Discontinuous differential equations, I. J. Differ. Equ. 32(2), 149–170 (1979). https://doi.org/10.1016/0022-0396(79)90056-1

Hale, M.T., Wardi, Y., Jaleel, H., Egerstedt, M.: Hamiltonian-based algorithm for optimal control (2016)

Hargraves, C., Paris, S.: Direct trajectory optimization using nonlinear programming and collocation. J. Guid. Control. Dyn. 10(4), 338–342 (1987). https://doi.org/10.2514/3.20223

Herman, A.L., Conway, B.A.: Direct optimization using collocation based on high-order Gauss–Lobatto quadrature rules. J. Guid. Control. Dyn. 19(3), 592–599 (1996). https://doi.org/10.2514/3.21662

Holtz, D., Arora, J.S.: An efficient implementation of adjoint sensitivity analysis for optimal control problems. Struct. Optim. 13(4), 223–229 (1997). https://doi.org/10.1007/bf01197450

Hung, J.: Method of adjoint systems and its engineering applications, Technical Note No. 1. Technical report (1964). https://ntrs.nasa.gov/citations/19650003538

Hwang, J.T., Martins, J.R.: A computational architecture for coupling heterogeneous numerical models and computing coupled derivatives. ACM Trans. Math. Softw. 44(4), 1–39 (2018). https://doi.org/10.1145/3182393

Jittorntrum, K.: An implicit function theorem. J. Optim. Theory Appl. 25(4), 575–577 (1978). https://doi.org/10.1007/bf00933522

Jurovics, S.A., McIntyre, J.E.: The adjoint method and its application to trajectory optimization. ARS J. 32(9), 1354–1358 (1962). https://doi.org/10.2514/8.6284

Kálmán, R.E.: Contributions to the theory of optimal control (1960)

Kelley, H.J.: Gradient theory of optimal flight paths. ARS J. 30(10), 947–954 (1960). https://doi.org/10.2514/8.5282

Lantoine, G., Russell, R.P.: A hybrid differential dynamic programming algorithm for constrained optimal control problems. Part 1: theory. J. Optim. Theory Appl. 154(2), 382–417 (2012). https://doi.org/10.1007/s10957-012-0039-0

Lantoine, G., Russell, R.P.: A hybrid differential dynamic programming algorithm for constrained optimal control problems. Part 2: application. J. Optim. Theory Appl. 154(2), 418–442 (2012). https://doi.org/10.1007/s10957-012-0038-1

Levis, A.H., Schlueter, R.A., Athans, M.: On the behaviour of optimal linear sampled-data regulators. Int. J. Control 13(2), 343–361 (1971). https://doi.org/10.1080/00207177108931949

Li, H., Wensing, P.M.: Hybrid systems differential dynamic programming for whole-body motion planning of legged robots. IEEE Robot. Autom. Lett. 5(4), 5448–5455 (2020). https://doi.org/10.1109/lra.2020.3007475

Li, S., Petzold, L., Zhu, W.: Sensitivity analysis of differential–algebraic equations: a comparison of methods on a special problem. Appl. Numer. Math. 32(2), 161–174 (2000). https://doi.org/10.1016/s0168-9274(99)00020-3

Lugo, R., Litton, D., Qu, M., Shidner, J., Powell, R.: A robust method to integrate end-to-end mission architecture optimization tools. In: 2016 IEEE Aerospace Conference. IEEE (2016). https://doi.org/10.1109/aero.2016.7500621

Ma, Y., Dixit, V., Innes, M.J., Guo, X., Rackauckas, C.: A comparison of automatic differentiation and continuous sensitivity analysis for derivatives of differential equation solutions. In: 2021 IEEE High Performance Extreme Computing Conference (HPEC). IEEE (2021). https://doi.org/10.1109/hpec49654.2021.9622796

Malyuta, D., Reynolds, T.P., Szmuk, M., Lew, T., Bonalli, R., Pavone, M., Acikmese, B.: Convex optimization for trajectory generation: A tutorial on generating dynamically feasible trajectories reliably and efficiently. IEEE Control. Syst. 42(5), 40–113 (2022). https://doi.org/10.1109/mcs.2022.3187542

Margolis, B.W.L.: SimuPy: a python framework for modeling and simulating dynamical systems. J. Open Source Softw. 2(17), 396 (2017). https://doi.org/10.21105/joss.00396

Mårtensson, K.: Gradient methods for large-scale and distributed linear quadratic control. Ph.D. Thesis, Department of Automatic Control, Lund University (2012)

Mayne, D.: A second-order gradient method for determining optimal trajectories of non-linear discrete-time systems. Int. J. Control 3(1), 85–95 (1966). https://doi.org/10.1080/00207176608921369

McIlroy, M.D.: Mass produced software components. In: Software Engineering: Report of a Conference Sponsored by the NATO Science Committee, Garmisch, Germany, pp. 7–11 (1968)

McReynolds, S.R.: The successive sweep method and dynamic programming. J. Math. Anal. Appl. 19(3), 565–598 (1967). https://doi.org/10.1016/0022-247x(67)90012-1

McReynolds, S.R., Bryson Jr, A.E.: A successive sweep method for solving optimal programming problems. Techreport AD0459518 (1965). https://apps.dtic.mil/sti/citations/AD0459518

McShane, E.J.: Integration. Princeton University Press, Princeton (1944)

Meurer, A., Smith, C.P., Paprocki, M., Čertík, O., Kirpichev, S.B., Rocklin, M., Kumar, A., Ivanov, S., Moore, J.K., Singh, S., Rathnayake, T., Vig, S., Granger, B.E., Muller, R.P., Bonazzi, F., Gupta, H., Vats, S., Johansson, F., Pedregosa, F., Curry, M.J., Terrel, A.R., Roučka, Š., Saboo, A., Fernando, I., Kulal, S., Cimrman, R., Scopatz, A.: SymPy: symbolic computing in Python. PeerJ Comput. Sci. 3, e103 (2017). https://doi.org/10.7717/peerj-cs.103

Papageorgiou, A., Tarkian, M., Amadori, K., Ovander, J.: Multidisciplinary optimization of unmanned aircraft considering radar signature, sensors, and trajectory constraints. J. Aircr. 55(4), 1629–1640 (2018). https://doi.org/10.2514/1.c034314

Pellegrini, E., Russell, R.P.: On the computation and accuracy of trajectory state transition matrices. J. Guid. Control. Dyn. 39(11), 2485–2499 (2016). https://doi.org/10.2514/1.g001920

Polak, E.: Optimization. Springer, New York (1997). https://doi.org/10.1007/978-1-4612-0663-7

Pontryagin, L.S., Boltyanskii, V.G., Gamkrelidze, R.V., Mishchenko, E.F.: Mathematical Theory of Optimal Processes. Classics of Soviet Mathematics. Interscience Publishers (1962). https://books.google.com/books?id=kwzq0F4cBVAC

Roth, W.E.: On direct product matrices. Bull. Am. Math. Soc. 40(6), 461–468 (1934)

Rozenvasser, E.: General sensitivity equations of discontinuous systems. Avtomat. i Telemekh. 3, 52–56 (1967)

Sengupta, B., Friston, K., Penny, W.: Efficient gradient computation for dynamical models. Neuroimage 98, 521–527 (2014). https://doi.org/10.1016/j.neuroimage.2014.04.040

Shampine, L., Thompson, S.: Event location for ordinary differential equations. Comput. Math. Appl. 39(5–6), 43–54 (2000). https://doi.org/10.1016/s0898-1221(00)00045-6

Squire, W., Trapp, G.: Using complex variables to estimate derivatives of real functions. SIAM Rev. 40(1), 110–112 (1998). https://doi.org/10.1137/s003614459631241x

Stapor, P., Fröhlich, F., Hasenauer, J.: Optimization and profile calculation of ODE models using second order adjoint sensitivity analysis. Bioinformatics 34(13), i151–i159 (2018). https://doi.org/10.1093/bioinformatics/bty230

Stewart, D.E., Anitescu, M.: Optimal control of systems with discontinuous differential equations. Numer. Math. 114(4), 653–695 (2009). https://doi.org/10.1007/s00211-009-0262-2

Sutherland, B., Mattis, D.C.: Ambiguities with the relativistic \(\delta \)-function potential. Phys. Rev. A 24(3), 1194–1197 (1981). https://doi.org/10.1103/physreva.24.1194

Tassa, Y., Erez, T., Todorov, E.: Synthesis and stabilization of complex behaviors through online trajectory optimization. In: 2012 IEEE/RSJ International Conference on Intelligent Robots and Systems. IEEE (2012). https://doi.org/10.1109/iros.2012.6386025

Tassa, Y., Mansard, N., Todorov, E.: Control-limited differential dynamic programming. In: 2014 IEEE International Conference on Robotics and Automation (ICRA). IEEE (2014). https://doi.org/10.1109/icra.2014.6907001

Vasudevan, R., Gonzalez, H., Bajcsy, R., Sastry, S.S.: Consistent approximations for the optimal control of constrained switched systems—part 1: a conceptual algorithm. SIAM J. Control. Optim. 51(6), 4463–4483 (2013). https://doi.org/10.1137/120901490

Vasudevan, R., Gonzalez, H., Bajcsy, R., Sastry, S.S.: Consistent approximations for the optimal control of constrained switched systems—part 2: an implementable algorithm. SIAM J. Control. Optim. 51(6), 4484–4503 (2013). https://doi.org/10.1137/120901507

Virtanen, P., Gommers, R., Oliphant, T.E., Haberland, M., Reddy, T., Cournapeau, D., Burovski, E., Peterson, P., Weckesser, W., Bright, J., van der Walt, S.J., Brett, M., Wilson, J., Millman, K.J., Mayorov, N., Nelson, A.R.J., Jones, E., Kern, R., Larson, E., Carey, C.J., Polat, İ., Feng, Y., Moore, E.W., VanderPlas, J., Laxalde, D., Perktold, J., Cimrman, R., Henriksen, I., Quintero, E.A., Harris, C.R., Archibald, A.M., Ribeiro, A.H., Pedregosa, F., van Mulbregt, P., SciPy 1.0 contributors: SciPy 1.0: fundamental algorithms for scientific computing in python. Nat. Methods 17, 261–272 (2020). https://doi.org/10.1038/s41592-019-0686-2

Whiffen, G.: Mystic: implementation of the static dynamic optimal control algorithm for high-fidelity, low-thrust trajectory design. In: AIAA/AAS Astrodynamics Specialist Conference and Exhibit. American Institute of Aeronautics and Astronautics (2006). https://doi.org/10.2514/6.2006-6741

Xie, Z., Liu, C.K., Hauser, K.: Differential dynamic programming with nonlinear constraints. In: 2017 IEEE International Conference on Robotics and Automation (ICRA). IEEE (2017). https://doi.org/10.1109/icra.2017.7989086

Additional information

Communicated by Ryan Russell.

Appendix A: Continuous-Time Linear Quadratic Regulator

Appendix A.1: Problem Model and Application of the Algorithm

In this section, we apply the sweeping gradient method (SGM) to the continuous-time linear quadratic regulator (LQR) problem. We consider a linear time-invariant (LTI) system under full-state feedback, represented by the dynamics equations

\[ \dot{x}\left( t\right) =Ax\left( t\right) +Bu\left( t\right) , \qquad (32) \]

where \(x\in {\mathbb {R}}^{n}\) is the system state and \(u\in {\mathbb {R}}^{m}\) is the control input; the state matrix \(A\in {\mathbb {R}}^{n\times n}\) and input matrix \(B\in {\mathbb {R}}^{n\times m}\) are taken as problem data. The LQR problem is to optimize the feedback gains \(K\in {\mathbb {R}}^{m\times n}\) with respect to the quadratic performance index

where \(Q\in {\mathbb {R}}^{n\times n}\) and \(R\in {\mathbb {R}}^{m\times m}\) are weighting matrices that shape the closed-loop performance (and are typically treated as design parameters), and \(t_{f}\) is a specified final time that sets the trajectory duration.

To evaluate the gradient with SGM, we need the partial derivatives of the dynamics (32) and performance index (33) with respect to the state \(x\left( t\right) \) and the parameters \(\theta ={\text {vec}}\left( K\right) =\left[ K_{1,1},K_{2,1},\ldots ,K_{m,n}\right] ^{T}\), where \({\text {vec}}\) stacks the columns of K into a single vector.
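In NumPy, the column stacking that defines \(\theta ={\text {vec}}\left( K\right) \) corresponds to Fortran (column-major) ordering. The following is a minimal sketch; the helper names `vec` and `unvec` and the example matrix are illustrative and do not come from the paper.

```python
import numpy as np


def vec(M):
    """Stack the columns of M into a single vector (column-major order)."""
    return np.asarray(M).reshape(-1, order="F")


def unvec(theta, m, n):
    """Inverse of vec: rebuild an m-by-n matrix from its stacked columns."""
    return np.asarray(theta).reshape((m, n), order="F")


# Hypothetical 2-by-3 gain matrix whose entry K[i, j] encodes its 1-based indices as 10*i + j.
K = np.array([[11, 12, 13],
              [21, 22, 23]])
theta = vec(K)
print(theta)  # [11 21 12 22 13 23], i.e. K_11, K_21, K_12, K_22, K_13, K_23
```

Using NumPy's default row-major `ravel()` instead would silently order θ as \(K_{1,1},K_{1,2},\ldots \), which no longer matches the Kronecker-product formulas below.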

Under state feedback, the ODE model becomes

The quadratic performance index (33) under state feedback is given by

Then, the partial derivatives required to find the co-state and evaluate the variational derivative (5) are given by

where \(\otimes \) indicates the Kronecker product. The partial derivatives with respect to the state are standard derivatives of linear and quadratic forms from matrix calculus. The partial derivatives with respect to the parameter vector can be found using the matrix calculus properties of the \({\text {vec}}\) operator or by taking the appropriate element-wise partial derivatives. For example, the partial derivative of the dynamics f with respect to the parameters yields the \(n\times nm\) matrix
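This \(n\times nm\) Jacobian can be checked numerically. The sketch below assumes the feedback law \(u=-Kx\), so that \(f=\left( A-BK\right) x\) and \(\partial f/\partial \theta =-\left( x^{T}\otimes B\right) \); under the opposite sign convention \(u=Kx\) the sign flips. Random data stand in for a real model.

```python
import numpy as np

rng = np.random.default_rng(0)
n, m = 4, 2
A = rng.standard_normal((n, n))
B = rng.standard_normal((n, m))
K = rng.standard_normal((m, n))
x = rng.standard_normal(n)


def f(theta):
    """Closed-loop dynamics f = (A - B K) x, assuming the feedback law u = -K x."""
    K_ = theta.reshape((m, n), order="F")  # theta = vec(K), column-stacked
    return (A - B @ K_) @ x


theta = K.reshape(-1, order="F")
analytic = -np.kron(x[None, :], B)  # -(x^T kron B): blocks [-x_1 B, ..., -x_n B]

# Central finite differences, one column per entry of theta.
h = 1e-6
numeric = np.empty((n, n * m))
for j in range(n * m):
    e = np.zeros(n * m)
    e[j] = h
    numeric[:, j] = (f(theta + e) - f(theta - e)) / (2 * h)

print(analytic.shape, np.allclose(analytic, numeric, atol=1e-8))  # (4, 8) True
```

Because f is linear in θ, the central difference is exact up to rounding, so agreement to tight tolerance is expected.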

Using these partial derivatives, the co-state FVP is given by

and the variational derivative is given by
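A minimal SGM sketch for this problem follows. It rests on assumptions of our own: the feedback law \(u=-Kx\), symmetric Q and R, and the performance index \(J=\tfrac{1}{2}\int _{0}^{t_{f}}x^{T}\left( Q+K^{T}RK\right) x\,\textrm{d}t\) with no terminal cost (the factor 1/2 is chosen so that the co-state satisfies \(\lambda =Xx\) as in Appendix A.2.2). Under these assumptions the co-state obeys \(\dot{\lambda }=-\left( Q+K^{T}RK\right) x-\left( A-BK\right) ^{T}\lambda \) with \(\lambda \left( t_{f}\right) =0\), and the gradient integrand is \({\text {vec}}\left( \left( RKx-B^{T}\lambda \right) x^{T}\right) \). SciPy's `solve_ivp` stands in for the SimuPy simulations used in the paper, and the function name `sgm_lqr` is hypothetical.

```python
import numpy as np
from scipy.integrate import solve_ivp


def sgm_lqr(theta, A, B, Q, R, x0, tf, rtol=1e-9, atol=1e-11):
    """Return J and dJ/dtheta using a forward state sweep and a backward co-state sweep.

    Assumed conventions (illustrative): u = -K x, theta = vec(K) (column stacking),
    J = 1/2 * int_0^tf x^T (Q + K^T R K) x dt with symmetric Q, R and no terminal cost.
    """
    n, m = B.shape
    K = theta.reshape((m, n), order="F")
    Acl = A - B @ K          # closed-loop state matrix
    Qbar = Q + K.T @ R @ K   # effective state weight under feedback

    # Forward sweep: state x(t), with the running cost appended as an extra state.
    def forward(t, z):
        x = z[:n]
        return np.append(Acl @ x, 0.5 * x @ Qbar @ x)

    fwd = solve_ivp(forward, (0.0, tf), np.append(x0, 0.0),
                    dense_output=True, rtol=rtol, atol=atol)
    J = fwd.y[n, -1]

    # Backward sweep from tf to 0: co-state FVP with lambda(tf) = 0, plus the gradient
    # quadrature G(t) = int_t^tf vec((R K x - B^T lambda) x^T) ds, so dG/dt = -integrand.
    def backward(t, w):
        lam = w[:n]
        x = fwd.sol(t)[:n]
        dlam = -Qbar @ x - Acl.T @ lam
        integrand = np.outer(R @ K @ x - B.T @ lam, x)
        return np.append(dlam, -integrand.reshape(-1, order="F"))

    bwd = solve_ivp(backward, (tf, 0.0), np.zeros(n + m * n), rtol=rtol, atol=atol)
    return J, bwd.y[n:, -1]


# Usage on the double integrator of Appendix A.3 (standard A, B assumed) at a hypothetical K = [1, 1.5].
A = np.array([[0.0, 1.0], [0.0, 0.0]])
B = np.array([[0.0], [1.0]])
Q, R = np.eye(2), np.eye(1)
x0, tf = np.array([1.0, 0.1]), 32.0
J, grad = sgm_lqr(np.array([1.0, 1.5]), A, B, Q, R, x0, tf)
print(J, grad)
```

Folding the gradient quadrature into the backward sweep as extra states avoids storing the co-state trajectory; only the forward solution needs dense output.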

Appendix A.2: Relationship to Direct Solution

The derivative of the performance index (35) with respect to the feedback gains can also be found directly, a result first proved by Kalman [46] and more recently analyzed for gradient-based optimization by Fatkhullin and Polyak [24] and by Bu et al. [11]. For completeness, we include the direct formulation of the derivative and then show that it is equivalent to the SGM derivative (37).

Appendix A.2.1: Direct Solution

This method uses the fact that the performance index (35) can be expressed as

where \(X\left( t\right) \) is the symmetric solution to the differential Lyapunov equation

The differential of (38) is given by

where \(\textrm{d}X\left( t\right) \) satisfies the differential Lyapunov equation

found by applying the Implicit Function Theorem [44] to (39). The form of the Lyapunov equation (41) allows us to express (40) by the integral

Since \(\textrm{d}J\) is a scalar, it equals its own trace, so the cyclic property of the trace lets us factor \(\textrm{d}K^{T}\) out of the integral without changing its value:

This gives us the derivative of the performance index (35) with respect to the gain matrix K as
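The direct route can also be sketched numerically. Under the same assumed conventions as for the SGM sketch (\(u=-Kx\), a factor of 1/2 in the performance index, no terminal cost, symmetric Q and R), the Lyapunov solution satisfies \(-\dot{X}=\left( A-BK\right) ^{T}X+X\left( A-BK\right) +Q+K^{T}RK\) with \(X\left( t_{f}\right) =0\), the cost is \(J=\tfrac{1}{2}x\left( 0\right) ^{T}X\left( 0\right) x\left( 0\right) \), and the derivative is \(\int _{0}^{t_{f}}\left( RK-B^{T}X\right) xx^{T}\,\textrm{d}t\). Equations (38) to (42) may differ from this sketch by constant factors depending on how (33) is normalized; `direct_lqr` is a hypothetical name.

```python
import numpy as np
from scipy.integrate import solve_ivp


def direct_lqr(theta, A, B, Q, R, x0, tf, rtol=1e-9, atol=1e-11):
    """Return J and dJ/dtheta through the differential Lyapunov equation.

    Assumed conventions (illustrative): u = -K x, theta = vec(K), symmetric Q and R,
    J = 1/2 * int_0^tf x^T (Q + K^T R K) x dt = 1/2 * x0^T X(0) x0.
    """
    n, m = B.shape
    K = theta.reshape((m, n), order="F")
    Acl = A - B @ K
    Qbar = Q + K.T @ R @ K

    # -dX/dt = Acl^T X + X Acl + Qbar, integrated backward from X(tf) = 0.
    def lyapunov(t, xflat):
        X = xflat.reshape(n, n)
        return -(Acl.T @ X + X @ Acl + Qbar).ravel()

    lyap = solve_ivp(lyapunov, (tf, 0.0), np.zeros(n * n),
                     dense_output=True, rtol=rtol, atol=atol)
    J = 0.5 * x0 @ lyap.y[:, -1].reshape(n, n) @ x0

    # Forward sweep of the state, accumulating int_0^tf (R K - B^T X) x x^T dt.
    def forward(t, z):
        x = z[:n]
        X = lyap.sol(t).reshape(n, n)
        integrand = (R @ K - B.T @ X) @ np.outer(x, x)
        return np.append(Acl @ x, integrand.reshape(-1, order="F"))

    fwd = solve_ivp(forward, (0.0, tf), np.append(x0, np.zeros(m * n)), rtol=rtol, atol=atol)
    return J, fwd.y[n:, -1]


# Usage on the double integrator (standard A, B assumed) at a hypothetical gain K = [1, 1.5].
A = np.array([[0.0, 1.0], [0.0, 0.0]])
B = np.array([[0.0], [1.0]])
Q, R = np.eye(2), np.eye(1)
x0, tf = np.array([1.0, 0.1]), 32.0
J, grad = direct_lqr(np.array([1.0, 1.5]), A, B, Q, R, x0, tf)
print(J, grad)
```

The direct route integrates an \(n\times n\) matrix equation, whereas the SGM co-state is only an n-vector; the two gradients should agree to integration tolerance.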

Appendix A.2.2: Equivalence to SGM

The derivative (42) is the same as (37). To see this, we first follow Bryson and Ho [9] and assume that the co-state has the form

where \(S\left( t\right) \) is an as-yet unknown time-varying matrix. Taking the time derivative of (43) and substituting the dynamics of the state (34) and co-state (36) shows that \(S\left( t\right) \) must satisfy the matrix differential equation

Since \(S\left( t\right) \) must satisfy the same differential Lyapunov equation as \(X\left( t\right) \), with the same terminal condition, and the solution to this final-value problem is unique, we have \(S\left( t\right) =X\left( t\right) \) and therefore \(\lambda \left( t\right) =X\left( t\right) x\left( t\right) \).

Next, we use Roth’s theorem [68], which states that, for matrices T, U, and V of dimensions compatible with the products,

\[ {\text {vec}}\left( TUV\right) =\left( V^{T}\otimes T\right) {\text {vec}}\left( U\right) . \]

We also use the transpose property of the Kronecker product,

\[ \left( T\otimes U\right) ^{T}=T^{T}\otimes U^{T}. \]
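Both identities are easy to confirm numerically with random matrices. A short NumPy check, with `vec` implemented as column stacking (the helper name is ours):

```python
import numpy as np


def vec(M):
    """Column-stacking vec operator."""
    return M.reshape(-1, order="F")


rng = np.random.default_rng(0)
T = rng.standard_normal((2, 3))
U = rng.standard_normal((3, 4))
V = rng.standard_normal((4, 5))

# Roth's theorem: vec(T U V) = (V^T kron T) vec(U).
roth_holds = np.allclose(vec(T @ U @ V), np.kron(V.T, T) @ vec(U))
# Transpose property: (T kron U)^T = T^T kron U^T.
transpose_holds = np.allclose(np.kron(T, U).T, np.kron(T.T, U.T))
print(roth_holds, transpose_holds)  # True True
```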

Then, we can manipulate (42) by substituting \(S\left( t\right) \) for \(X\left( t\right) \), distributing the constant matrices into the integral, applying the \({\text {vec}}\) operator, and noting that the \({\text {vec}}\) operator applied to a vector like \(x\left( t\right) \) leaves it unchanged, to find

as desired.
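At a single instant, the equivalence reduces to an identity between the two integrands, which can be checked with random data. This sketch reuses the assumed conventions of the earlier sketches (\(u=-Kx\), symmetric R, running cost \(\tfrac{1}{2}x^{T}\left( Q+K^{T}RK\right) x\)), so the SGM integrand is \(\left( xx^{T}\otimes R\right) {\text {vec}}\left( K\right) -\left( x^{T}\otimes B\right) ^{T}\lambda \) and the direct integrand is \({\text {vec}}\left( \left( RK-B^{T}X\right) xx^{T}\right) \). The matrix `X` below is a hypothetical symmetric value standing in for \(X\left( t\right) \).

```python
import numpy as np


def vec(M):
    """Column-stacking vec operator."""
    return M.reshape(-1, order="F")


rng = np.random.default_rng(1)
n, m = 4, 2
B = rng.standard_normal((n, m))
K = rng.standard_normal((m, n))
R = np.diag([2.0, 3.0])        # symmetric input weight
S = rng.standard_normal((n, n))
X = S @ S.T                    # hypothetical symmetric X(t) at one instant
x = rng.standard_normal(n)     # state at that instant
lam = X @ x                    # co-state of the assumed form lambda = X x

# SGM integrand: (dL/dtheta)^T + (df/dtheta)^T lambda, where Roth's theorem gives
# vec(R K x x^T) = (x x^T kron R) vec(K), and df/dtheta = -(x^T kron B).
sgm_integrand = np.kron(np.outer(x, x), R) @ vec(K) - np.kron(x[None, :], B).T @ lam
# Direct integrand: vec((R K - B^T X) x x^T).
direct_integrand = vec((R @ K - B.T @ X) @ np.outer(x, x))
print(np.allclose(sgm_integrand, direct_integrand))  # True
```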

Appendix A.3: Numerical Examples

We consider two canonical systems for the LQR numerical examples. They were selected to illustrate behavior across different state dimensions (and therefore parameter dimensions) and stability properties. The first system is a double integrator with state and input matrices

\[ A=\begin{bmatrix} 0 & 1\\ 0 & 0 \end{bmatrix},\qquad B=\begin{bmatrix} 0\\ 1 \end{bmatrix}. \]
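For reference, the ARE gains for the double integrator with \(Q=I\) and \(R=1\) (the weights used below) can be computed with SciPy's `solve_continuous_are`. The sketch assumes position-velocity state ordering and the usual convention \(u=-Kx\) with \(K=R^{-1}B^{T}P\); for these data the gains have the closed form \(K=\left[ 1,\sqrt{3}\right] \).

```python
import numpy as np
from scipy.linalg import solve_continuous_are

# Double integrator (state: position, velocity) and the weights used in this example.
A = np.array([[0.0, 1.0], [0.0, 0.0]])
B = np.array([[0.0], [1.0]])
Q = np.eye(2)
R = np.eye(1)

P = solve_continuous_are(A, B, Q, R)   # stabilizing solution of the ARE
K_are = np.linalg.solve(R, B.T @ P)    # K = R^{-1} B^T P, applied as u = -K x
print(K_are)  # approximately [[1.0, 1.732]], i.e. [1, sqrt(3)]
```

Substituting \(P=\begin{bmatrix}\sqrt{3} & 1\\ 1 & \sqrt{3}\end{bmatrix}\) into \(A^{T}P+PA-PBR^{-1}B^{T}P+Q=0\) confirms the closed form by hand.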

The second system is a linearized cart and pendulum model with state and input matrices

where the pendulum is modeled as a point mass \(m=1\) kg located \(L=0.5\) m from its pivot on a cart of mass \(M=3\) kg, and \(g=9.81\) m/s\(^{2}\) is the gravitational acceleration. The state consists of the translational position of the cart’s center of mass and the pendulum angle from vertical, followed by their respective velocities. The input is the horizontal force acting on the cart.
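The cart and pendulum matrices depend on sign and angle conventions. The following sketch builds one standard linearization, assuming the angle is measured from the upright vertical, a point-mass pendulum, a frictionless cart, and the state ordering given above. It illustrates the model under these assumptions and is not a transcription of the paper’s matrices. It confirms that the open-loop system is unstable yet controllable, which is why the optimization must start from stabilizing gains.

```python
import numpy as np

# Parameter values from the text (m_pend is the pendulum mass m).
m_pend, L, M, g = 1.0, 0.5, 3.0, 9.81  # [kg], [m], [kg], [m/s^2]

# Assumed conventions: state [p, phi, dp/dt, dphi/dt], phi measured from the upright
# vertical, linearized about phi = 0, input = horizontal force F on the cart.
# Linearized equations: M p'' = F - m g phi, and M L phi'' = (M + m) g phi - F.
A = np.array([
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
    [0.0, -m_pend * g / M, 0.0, 0.0],
    [0.0, (M + m_pend) * g / (M * L), 0.0, 0.0],
])
B = np.array([[0.0], [0.0], [1.0 / M], [-1.0 / (M * L)]])

eigs = np.linalg.eigvals(A)
ctrb = np.hstack([np.linalg.matrix_power(A, k) @ B for k in range(4)])
print(max(eigs.real))                # about 5.115 = sqrt((M + m) g / (M L)): unstable
print(np.linalg.matrix_rank(ctrb))   # 4: controllable
```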

For the numerical examples in this and subsequent sections, the original and adjoint systems are solved in sequence using SimuPy [56]. The performance index and its derivative are evaluated by quadrature. The gradient-based optimization is performed using SciPy’s implementation of the conjugate gradient method [80].
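The optimization loop can be sketched with SciPy alone. This is an assumption-laden stand-in rather than the paper’s code: `solve_ivp` replaces SimuPy, the gradient quadrature is folded into the backward sweep as extra states, and the conventions (\(u=-Kx\), a factor of 1/2 in the performance index, no terminal cost) are ours. It uses the double integrator data described below and calls `scipy.optimize.minimize` with `method="CG"` and `jac=True`; the function name `cost_and_grad` is hypothetical.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.linalg import solve_continuous_are
from scipy.optimize import minimize

# Double integrator example (standard A, B assumed; Q, R, x(0), and horizon as in the text).
A = np.array([[0.0, 1.0], [0.0, 0.0]])
B = np.array([[0.0], [1.0]])
Q, R = np.eye(2), np.eye(1)
x0, tf = np.array([1.0, 0.1]), 32.0
n, m = B.shape


def cost_and_grad(theta, rtol=1e-7, atol=1e-9):
    """SGM sketch: forward state sweep, backward co-state sweep, gradient by quadrature.

    Assumes u = -K x, theta = vec(K), and J = 1/2 int_0^tf x^T (Q + K^T R K) x dt.
    """
    K = theta.reshape((m, n), order="F")
    Acl, Qbar = A - B @ K, Q + K.T @ R @ K

    def forward(t, z):  # state plus running cost
        x = z[:n]
        return np.append(Acl @ x, 0.5 * x @ Qbar @ x)

    fwd = solve_ivp(forward, (0.0, tf), np.append(x0, 0.0),
                    dense_output=True, rtol=rtol, atol=atol)

    def backward(t, w):  # co-state plus reversed-time gradient quadrature
        lam, x = w[:n], fwd.sol(t)[:n]
        integrand = np.outer(R @ K @ x - B.T @ lam, x)
        return np.append(-Qbar @ x - Acl.T @ lam, -integrand.reshape(-1, order="F"))

    bwd = solve_ivp(backward, (tf, 0.0), np.zeros(n + m * n), rtol=rtol, atol=atol)
    return fwd.y[n, -1], bwd.y[n:, -1]


# Nonlinear conjugate gradient from zero gains; jac=True means cost_and_grad returns (J, gradient).
res = minimize(cost_and_grad, np.zeros(m * n), jac=True, method="CG")

P = solve_continuous_are(A, B, Q, R)
K_are = np.linalg.solve(R, B.T @ P)
print("CG gains: ", res.x, "J =", res.fun)
print("ARE gains:", K_are.ravel(), "J =", cost_and_grad(K_are.reshape(-1, order="F"))[0])
```

Because the ARE gains minimize the infinite-horizon cost for every initial condition, and the 32 s horizon is long compared with the closed-loop time constants, the CG result should land very close to the ARE gains, with any remaining difference at the level of the integration error.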

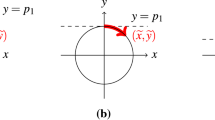

For the double integrator, we use \(Q=I\in {\mathbb {R}}^{2\times 2}\) and \(R=I\in {\mathbb {R}}^{1\times 1}\) for the performance index. The feedback gains are initialized to zero in \({\mathbb {R}}^{1\times 2}\). The initial condition is \(x\left( 0\right) =\left[ 1,0.1\right] ^{T}\), and each simulation runs for 32 s. Figure 10 shows the progression of several metrics over the optimizer iterations and establishes the iteration-number colormap shared by all figures for this example. The iteration number is mapped to a perceptually uniform sequential colormap running from purple to yellow. Red generally denotes the “true” solution, in this case the LQR gains found by solving the algebraic Riccati equation (ARE). Figure 10a shows the progression of the performance index on a log scale, offset by the best performance (indicated above the vertical axis), to capture a wide dynamic range. In this case, the optimized gains perform marginally better than the LQR solution from the ARE, shown as a dashed red line; this may be an artifact of evaluating performance from an approximate numerical solution of the ODE with a single initial condition. The performance index could instead be averaged over multiple initial conditions spanning the state space. The performance index at the final iteration of the optimizer is indicated by both the half-circle and the extended bar marking the floor of the plot. Figure 10b shows the normalized progression along the line from the initial feedback gains to the LQR gains found by solving the ARE. Figure 10c shows the progression of the norm of the gradient. Figure 11 shows the simulated trajectories of the state, control, and co-state, where the color of each curve indicates the optimizer iteration using the colormap of Fig. 10.

Since the double integrator problem has a two-dimensional parameter space, we can visualize the performance index and its sensitivity over the parameter space in Fig. 12. The value of the performance index is indicated notionally by a grayscale colormap on the circular markers and by contour lines. The sensitivity is shown by arrows whose lengths are proportional to the magnitude of the gradient and which point antiparallel to the gradient, that is, in the steepest-descent direction. The figure also shows the gains and sensitivity at each step of the optimization, similar to central path illustrations for interior point methods. The color along this path indicates the iteration number, using the same colormap as Fig. 10. Both the performance colormap and the arrow lengths use a log scale to accommodate the wide dynamic range. The contour lines suggest that the performance index is convex over the plotted region, and visual inspection of the gradient field suggests that the descent directions point toward the optimum, as expected.

For the linearized cart and pendulum, we use \(Q=I\in {\mathbb {R}}^{4\times 4}\) and \(R=I\in {\mathbb {R}}^{1\times 1}\) for the performance index. Since the method assumes a meaningful trajectory \(x\left( t\right) \), we initialize the optimization with stabilizing feedback gains obtained by solving the ARE with \(Q=0\in {\mathbb {R}}^{4\times 4}\). The initial condition is \(x\left( 0\right) =\left[ 1,-1,0,0\right] ^{T}\), and each simulation runs for 32 s. Figure 13 shows metrics over the optimizer iterations and establishes the iteration-number colormap for this example. In this case, the gains found using the ARE marginally outperform the gradient-based optimizer, so the ARE solution forms the floor of Fig. 13a. The final value from the optimization algorithm is indicated by a full circle at the corresponding vertical position. Figure 14 shows the trajectories of the state, control, and co-state for each iteration.

About this article

Cite this article

Margolis, B.W.L. A Sweeping Gradient Method for Ordinary Differential Equations with Events. J Optim Theory Appl 199, 600–638 (2023). https://doi.org/10.1007/s10957-023-02303-3

DOI: https://doi.org/10.1007/s10957-023-02303-3

Keywords

- Variational derivative

- Ordinary differential equations with events

- Parameter optimization

- Adjoint

- Sweeping method

- LQR

- Switching schedule