Abstract

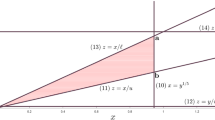

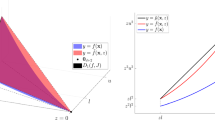

We study mixed-integer nonlinear optimization (MINLO) formulations of the disjunction \(x\in \{0\}\cup [\ell ,u]\), where z is a binary indicator for \(x\in [\ell ,u]\) (\(0 \le \ell <u\)), and y “captures” f(x), which is assumed to be convex and positive on its domain \([\ell ,u]\), but otherwise \(y=0\) when \(x=0\). This model is very useful in nonlinear combinatorial optimization, where there is a fixed cost c for operating an activity at level x in the operating range \([\ell ,u]\), and then, there is a further (convex) variable cost f(x). So the overall cost is \(cz+f(x)\). In applied situations, there can be N 4-tuples \((f,\ell ,u,c)\), and associated (x, y, z), and so, the combinatorial nature of the problem is that for any of the \(2^N\) choices of the binary z-variables, the non-convexity associated with each of the \((f,\ell ,u)\) goes away. We study relaxations related to the perspective transformation of a natural piecewise-linear under-estimator of f, obtained by choosing linearization points for f. Using 3-d volume (in (x, y, z)) as a measure of the tightness of a convex relaxation, we investigate relaxation quality as a function of f, \(\ell \), u, and the linearization points chosen. We make a detailed investigation for convex power functions \(f(x):=x^p\), \(p>1\).

Notes

A square matrix \(A=[a_{ij}]\) (not necessarily symmetric) is called a Z-matrix if all of its off-diagonal entries are non-positive.

A Z-matrix A is an M-matrix if it is positive stable, that is, all of its eigenvalues have positive real parts. In fact, the following conditions are equivalent for a Z-matrix to be an M-matrix: (1) All real eigenvalues of A are positive; (2) A is non-singular and \(A^{-1}\) is nonnegative; (3) \(A=LU\) where L is lower triangular and U is upper triangular and all of the diagonal elements of L, U are positive; (4) there exists a vector \(x>0\) such that \(Ax>0\); see [11, Theorem 2.5.3].

The result follows from: if \({\hat{x}}>0\) and \(LU{\hat{x}}>0\), then \(F'({\varvec{\xi }}){\hat{x}}\ge LU{\hat{x}}>0\). (See [11, Theorem 2.5.4].)
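As a small illustration of the equivalent conditions above, the following Python sketch checks the Z-matrix property and conditions (2) and (4) on a 3-by-3 example. The matrix `A`, the vector `x`, and all helper names are assumptions chosen for illustration; they do not come from the paper.

```python
from fractions import Fraction

def is_z_matrix(A):
    """True if every off-diagonal entry of the square matrix A is non-positive."""
    n = len(A)
    return all(A[i][j] <= 0 for i in range(n) for j in range(n) if i != j)

def inverse(A):
    """Exact Gauss-Jordan inverse; raises ValueError if A is singular."""
    n = len(A)
    # Augment A with the identity matrix, using exact rational arithmetic.
    M = [[Fraction(v) for v in row] + [Fraction(int(i == j)) for j in range(n)]
         for i, row in enumerate(A)]
    for col in range(n):
        pivot = next((r for r in range(col, n) if M[r][col] != 0), None)
        if pivot is None:
            raise ValueError("matrix is singular")
        M[col], M[pivot] = M[pivot], M[col]
        pv = M[col][col]
        M[col] = [v / pv for v in M[col]]
        for r in range(n):
            if r != col and M[r][col] != 0:
                factor = M[r][col]
                M[r] = [a - factor * b for a, b in zip(M[r], M[col])]
    return [row[n:] for row in M]

def matvec(A, x):
    """Matrix-vector product A x."""
    return [sum(a * b for a, b in zip(row, x)) for row in A]

# Hypothetical example: a tridiagonal Z-matrix.
A = [[2, -1, 0], [-1, 2, -1], [0, -1, 2]]
A_inv = inverse(A)
x = [2, 3, 2]  # hypothetical positive vector used to test condition (4)

cond2 = all(v >= 0 for row in A_inv for v in row)  # (2): inverse is nonnegative
cond4 = all(v > 0 for v in matvec(A, x))           # (4): x > 0 and Ax > 0
print(is_z_matrix(A), cond2, cond4)
```

Exact rational arithmetic is used so that the sign tests on the inverse are not affected by rounding.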

References

Aktürk, M.S., Atamtürk, A., Gürel, S.: A strong conic quadratic reformulation for machine-job assignment with controllable processing times. Oper. Res. Lett. 37(3), 187–191 (2009)

Anđelić, M., Da Fonseca, C.: Sufficient conditions for positive definiteness of tridiagonal matrices revisited. Positivity 15(1), 155–159 (2011)

Berenguel, J.L., Casado, L.G., García, I., Hendrix, E.M., Messine, F.: On interval branch-and-bound for additively separable functions with common variables. J. Global Optim. 56(3), 1101–1121 (2013)

Boyd, S., Vandenberghe, L.: Convex Optimization. Cambridge University Press, Cambridge (2004)

Charnes, A., Lemke, C.E.: Minimization of nonlinear separable convex functionals. Naval Res. Logistics Q. 1, 301–312 (1954)

Dudek, G., Tsotsos, J.K.: Decomposition and representation of planar curves using curvature-tuned smoothing. In: Ferrari, L.A., de Figueiredo, R.J.P. (eds.) Curves and Surfaces in Computer Vision and Graphics, vol. 1251, pp. 142–150. International Society for Optics and Photonics, SPIE (1990)

Frangioni, A., Gentile, C.: Perspective cuts for a class of convex 0–1 mixed integer programs. Math. Program. 106(2), 225–236 (2006)

Günlük, O., Linderoth, J.: Perspective reformulations of mixed integer nonlinear programs with indicator variables. Math. Program. Ser. B 124, 183–205 (2010)

Hijazi, H., Bonami, P., Ouorou, A.: An outer-inner approximation for separable mixed-integer nonlinear programs. INFORMS J. Comput. 26(1), 31–44 (2014)

Hiriart-Urruty, J.B., Lemaréchal, C.: Convex Analysis and Minimization Algorithms. I: Fundamentals, Grundlehren der Mathematischen Wissenschaften, vol. 305. Springer, Berlin (1993)

Horn, R.A., Johnson, C.R.: Topics in Matrix Analysis. Cambridge University Press, Cambridge (1994)

Hudson, D.J.: Fitting segmented curves whose join points have to be estimated. J. Am. Stat. Assoc. 61(316), 1097–1129 (1966)

Lee, J., Morris, W.D., Jr.: Geometric comparison of combinatorial polytopes. Discret. Appl. Math. 55(2), 163–182 (1994)

Lee, J., Skipper, D., Speakman, E.: Algorithmic and modeling insights via volumetric comparison of polyhedral relaxations. Math. Program. Ser. B 170, 121–140 (2018)

Lee, J., Skipper, D., Speakman, E.: Gaining or losing perspective. In: H.A. Le Thi, H.M. Le, T. Pham Dinh (eds.) Optimization of Complex Systems: Theory, Models, Algorithms and Applications, pp. 387–397. Springer (2020)

Lee, J., Skipper, D., Speakman, E.: Gaining or losing perspective. J. Global Optim. 82, 835–862 (2022)

Lee, J., Skipper, D., Speakman, E., Xu, L.: Gaining or losing perspective for piecewise-linear under-estimators of convex univariate functions. In: C. Gentile, G. Stecca, P. Ventura (eds.) Graphs and Combinatorial Optimization: from Theory to Applications: CTW2020 Proceedings, pp. 349–360. Springer (2021)

Lee, J., Wilson, D.: Polyhedral methods for piecewise-linear functions I: the lambda method. Discret. Appl. Math. 108(3), 269–285 (2001)

Meek, D.S., Walton, D.J.: Approximation of discrete data by \(G^1\) arc splines. Comput. Aided Des. 24(6), 301–306 (1992)

Mott, T.E.: Newton’s method and multiple roots. Am. Math. Mon. 64(9), 635–638 (1957)

Ortega, J.M., Rheinboldt, W.C.: Iterative Solution of Nonlinear Equations in Several Variables. SIAM, Philadelphia (2000)

Pavlidis, T.: Polygonal approximations by Newton’s method. IEEE Trans. Comput. 26(8), 800–807 (1977)

Pavlidis, T., Horowitz, S.L.: Segmentation of plane curves. IEEE Trans. Comput. 23(8), 860–870 (1974)

Toriello, A., Vielma, J.P.: Fitting piecewise linear continuous functions. Eur. J. Oper. Res. 219(1), 86–95 (2012)

Vielma, J.P., Ahmed, S., Nemhauser, G.: Mixed-integer models for nonseparable piecewise-linear optimization: unifying framework and extensions. Oper. Res. 58(2), 303–315 (2010)

Wilson, D.G.: Algorithm 510: piecewise linear approximations to tabulated data. ACM Trans. Math. Softw. 2(4), 388–391 (1976)

Zwillinger, D.: CRC Standard Mathematical Tables and Formulas, 33rd edn. CRC Press, Boca Raton (2018)

Acknowledgements

J. Lee was supported in part by ONR grants N00014-17-1-2296 and N00014-21-1-2135. D. Skipper was supported in part by ONR grant N00014-18-W-X00709. E. Speakman was supported by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) - 314838170, GRK 2297 MathCoRe. Lee and Skipper gratefully acknowledge additional support from the Institute of Mathematical Optimization, Otto-von-Guericke-Universität, Magdeburg, Germany.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

A short preliminary version of some of this work is in the proceedings of CTW 2020; see [17].

Appendix

Lemma A.1

For \(x\in (0,1)\cup (1,\infty )\) and \(p>1\), we have \(x^p + (p-1) - px>0\) and \((p-1)x^p + 1 - px^{p-1}>0\).

Proof

\(x^p + (p-1) - px = x^p - 1 - p(x-1)>0\) because of the strict convexity of \(x^p\) on \((0,\infty )\) for \(p>1\). \((p-1)x^p + 1 - px^{p-1} = 1 - x^p - px^{p-1}(1-x)>0\) because of the strict convexity of \(x^p\) on \((0,\infty )\) for \(p>1\). \(\square \)
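Both inequalities in this proof are instances of the tangent-line inequality \(g(b) > g(a) + g'(a)(b-a)\), which holds for a strictly convex differentiable function \(g\) and \(a\ne b\). As a sketch with \(g(x):=x^p\) (the symbols \(g\), \(a\), \(b\) are introduced here for illustration only):

\[
\begin{aligned}
a=1,\ b=x:&\quad x^p > 1 + p(x-1),\\
a=x,\ b=1:&\quad 1 > x^p + p x^{p-1}(1-x).
\end{aligned}
\]

The first line is the tangent at 1 evaluated at \(x\); the second is the tangent at \(x\) evaluated at 1.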

Lemma A.2

Letting \(h(x):=(p-2)(x^p-1) - p(x^{p-1}-x)\), we have

- (i) if \(1<p<2\), then \(h(x)>0\) for \(x\in (0,1)\);

- (ii) if \(p>2\), then \(h(x)<0\) for \(x\in (0,1)\).

Proof

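We have \(h'(x) = p(p-2)x^{p-1} - p(p-1)x^{p-2} + p\) and \(h''(x) = p(p-1)(p-2)x^{p-3}(x-1)\), so that \(h(1)=h'(1)=0\).

(i) If \(1<p<2\), then \(h''(x)>0\) on (0, 1), which implies that \(h'(x)\) is increasing. Thus, \(h'(x)<h'(1)=0\), which implies that h(x) is decreasing. Therefore, \(h(x)>h(1)=0\). (ii) Similarly, if \(p>2\), then \(h''(x)<0\) on (0, 1), so \(h'(x)>h'(1)=0\), h(x) is increasing, and \(h(x)<h(1)=0\) on (0, 1). \(\square \)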

Remark A.1

Because \(h(x) = -x^p h(1/x)\), Lemma A.2 implies that \(h(x)<0\) on \((1,\infty )\) when \(1<p<2\), and \(h(x)>0\) on \((1,\infty )\) when \(p>2\).
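The sign pattern of Lemma A.2 and the reflection identity of Remark A.1 can be spot-checked numerically. The following Python sketch evaluates h on a coarse grid; the grid, the sample values of p, and the variable names are assumptions made for illustration, and a finite check of this kind is not a proof.

```python
def h(x, p):
    """h(x) = (p-2)(x^p - 1) - p(x^(p-1) - x), as defined in Lemma A.2."""
    return (p - 2) * (x**p - 1) - p * (x**(p - 1) - x)

# Hypothetical sample points in (0, 1); their reciprocals lie in (1, infinity).
grid = [i / 20 for i in range(1, 20)]

# 1 < p < 2: expect h > 0 on (0, 1) and h < 0 on (1, infinity).
small_p_ok = all(h(x, p) > 0 and h(1 / x, p) < 0
                 for p in (1.2, 1.5, 1.8) for x in grid)

# p > 2: expect h < 0 on (0, 1) and h > 0 on (1, infinity).
large_p_ok = all(h(x, p) < 0 and h(1 / x, p) > 0
                 for p in (2.5, 3.0, 4.0) for x in grid)

# Reflection identity h(x) = -x^p h(1/x), up to floating-point rounding.
max_gap = max(abs(h(x, p) + x**p * h(1 / x, p))
              for p in (1.2, 1.5, 1.8, 2.5, 3.0, 4.0) for x in grid)

print(small_p_ok, large_p_ok, max_gap)
```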

Lemma A.3

Letting \(\delta (x) := (x^{p-1}-1)^2 - (p-1)^2 x^{p-2}(x-1)^2\), we have

- (i) if \(1<p<2\), then \(\delta (x)<0\) on \((0,1)\cup (1,\infty )\);

- (ii) if \(p>2\), then \(\delta (x)> 0\) on \((0,1)\cup (1,\infty )\).

Proof

Because \(\delta (x) = x^{2p-2}\delta (1/x)\), we only need to show the results on (0, 1). Letting

we have

(i) \(\varphi '(x)> 0\) because of the strict concavity of \(x^{p/2}\) when \(1<p<2\). Along with \(\varphi (1)=0\), we obtain that \(\varphi (x)<0\) on (0, 1). (ii) Similarly, because of the strict convexity of \(x^{p/2}\) when \(p>2\), we obtain that \(\varphi (x)>0\) on (0, 1). \(\square \)
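The display defining \(\varphi \) is not reproduced in this proof. As a sketch only, one choice that is consistent with every step stated here (an assumption, not necessarily the authors' definition) is

\[
\varphi (x) := 1 - x^{p-1} - (p-1)x^{\frac{p}{2}-1}(1-x),
\qquad
\varphi '(x) = (p-1)x^{\frac{p}{2}-2}\left( 1 + \tfrac{p}{2}(x-1) - x^{p/2}\right) .
\]

The bracket is the gap between the tangent line of \(x^{p/2}\) at 1 and the function itself, so it is positive when \(x^{p/2}\) is strictly concave (\(1<p<2\)) and negative when it is strictly convex (\(p>2\)). With \(\varphi (1)=0\), this gives \(\varphi (x)<0\) on (0, 1) in the first case and \(\varphi (x)>0\) in the second. Under this choice, on (0, 1),

\[
\varphi (x)<0 \iff 0< 1-x^{p-1} < (p-1)x^{\frac{p}{2}-1}(1-x) \iff \delta (x)<0,
\]

where the last equivalence follows by squaring both (positive) sides; the case \(\varphi (x)>0\) gives \(\delta (x)>0\) in the same way.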

Lemma A.4

For \(x\in (0,1)\cup (1,\infty )\),

Proof

We have

By Lemma A.1 and the inequality \(t-1\ge \log t\), we have \(\frac{d}{dx}\left( \frac{\phi '(x)}{x^{p-2}}\right) >0\). Because \(\phi '(1)=0\), we have \(\phi '(x)<0\) for \(x\in (0,1)\) and \(\phi '(x)>0\) for \(x\in (1,\infty )\). Combined with \(\phi (1)=0\), we obtain \(\phi (x)>0\) for \(x\in (0,1)\cup (1,\infty )\), which proves the lemma. \(\square \)

Lemma A.5

For \(1<p<2\), \(x\in (0,1)\),

Proof

Let \(K(x):=x^{\frac{2-p}{3}}(1+(p-1)x^p-p x^{p-1})-x^p-(p-1)+px\).

The last inequality follows from the strict concavity of the function \(x^{\frac{p+1}{3}}\) when \(1<p<2\). Because \(K_2(1)=0\), we have \(K_2(x)< 0\) on (0, 1), which implies that \(K_1(x)\) is decreasing on (0, 1). Along with \(K_1(1)=0\), this implies \(K_1(x)> 0\) on (0, 1). Therefore, K(x) is increasing on (0, 1), and \(K(x)< K(1)=0\), which proves the lemma. \(\square \)

Proof of Theorem 3.13

For \(p>2\), we know that for \(k\ge 0\),

because of the concavity of \(F_i(x)\) from Lemma 3.8 (ii). Along with \(F(x^0)\le 0\) (Proposition 3.12) and \([F'(x^k)]^{-1}\ge 0\) from Lemma 3.7 (ii), we know that \(x^{k+1}\ge x^k\) for \(k\ge 0\). Also by concavity, we have

which implies \(x^k\le u{\mathbf {1}}\) because \([F'(x^k)]^{-1}\) is nonnegative. Therefore, the increasing bounded sequence \(\{x^k\}\) has a limit \(x^*=\lim _{k\rightarrow \infty } x^k\) and \(F(x^*) = 0\).

For \(1<p<2\), similarly, we know that for \(k\ge 0\),

because of the convexity of \(F_i(x)\) from Lemma 3.8 (i). Along with \(F(x^0)\ge 0\) (Proposition 3.12) and \([F'(x^k)]^{-1}\ge 0\) from Lemma 3.7 (i), we know that \(x^{k+1}\le x^k\) for \(k\ge 0\). Also by convexity, we have

which implies \(x^k\ge \ell {\mathbf {1}}\) because \([F'(x^k)]^{-1}\) is nonnegative. Therefore, the decreasing bounded sequence \(\{x^k\}\) has a limit \(x^*=\lim _{k\rightarrow \infty } x^k\) and \(F(x^*) = 0\). \(\square \)
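The monotone behavior used in this proof can be seen in a one-dimensional analogue. The following Python sketch applies Newton's method to a scalar stand-in \(g(x)=x^p-c\), which is convex and increasing on \((0,\infty )\), starting from a point with \(g(x^0)\ge 0\). The function `g`, the constant `c`, the starting point, and the helper name are assumptions for illustration; the paper's multivariate F is not reproduced here.

```python
def newton_iterates(g, dg, x0, tol=1e-12, max_iter=100):
    """Return the Newton iterates x^{k+1} = x^k - g(x^k) / g'(x^k), starting at x0."""
    xs = [x0]
    for _ in range(max_iter):
        x = xs[-1]
        step = g(x) / dg(x)
        xs.append(x - step)
        if abs(step) <= tol:
            break
    return xs

# Hypothetical scalar example: g is convex with positive derivative for x > 0.
p, c = 1.5, 2.0
g = lambda x: x**p - c
dg = lambda x: p * x**(p - 1)

xs = newton_iterates(g, dg, x0=3.0)  # g(3.0) >= 0, so the start is right of the root
root = c ** (1 / p)

for k, x in enumerate(xs):
    print(k, x, g(x))
```

By convexity, each iterate satisfies \(g(x^{k+1})\ge g(x^k)+g'(x^k)(x^{k+1}-x^k)=0\), so the sequence decreases and stays to the right of the root, mirroring the convex case of the argument above.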

Proof of Claim in Theorem 3.19 (i)

Letting

the claim is that \(\varTheta (t)>0\) for \(1<p<2\) and \(0<t<1\). We have

Let \(\varTheta _1(t):=6t^p-p(p+1)t^2+2p(p-2)t-(p-2)(p-3)\), \(\varTheta _2(t):=4t^p-(p^2-1)t^2+2(p^2-2p-1)t-(p-3)(p-1)\). We first show that \(t^p-1-p(t-1)\le (p-1)(1-t)^2\). This follows from the fact that

Then we have

Thus, \(\varTheta _2'(t)<\varTheta _2'(1) = 0\), which implies \(\varTheta _2(t)\) is decreasing and \(\varTheta _2(t)>\varTheta _2(1)=0\). Because \(\varTheta _1(t)<0\) and \(\varTheta _2(t)>0\), we have that \(\varTheta '''(t)<0\). Therefore, \(\varTheta ''(t)>\varTheta ''(1)=0\), which implies that \(\varTheta '(t)\) is increasing. Thus, \(\varTheta '(t)<\varTheta '(1)=0\), which implies \(\varTheta (t)\) is decreasing, i.e., \(\varTheta (t)>\varTheta (1)=0\). Then the claim follows directly. \(\square \)

Proof of Claim in Theorem 3.19 (ii)

Letting

the claim is that \(\varPhi (t)<0\) for \(p>2\) and \(0<t<1\). We have

Let \(\varPhi _1(t):=\frac{\varPhi ''(t)}{p(p-1)t^{p-3}} = -2(p-2)(2p-1)t^{p+1} +2p(2p-1)t^p -p^2(p+1)t^2 +2(p-2)(p^2+p-1)t - p(p-1)(p-2)\). Then

Therefore, we have that \(\varPhi _1''(t)\) is increasing on \((0,\frac{p}{p+1})\) and decreasing on \((\frac{p}{p+1},1)\). We have \(\varPhi _1''(t)\le \varPhi _1''(\frac{p}{p+1})=2p^2(2p-1)\left( \frac{p}{p+1}\right) ^{p-2}-2p^2(p+1)\). Letting \(\varPhi _2(p):=(p-2)\log \left( \frac{p}{p+1}\right) -\log \left( \frac{p+1}{2p-1}\right) \), we have

Therefore, \(\varPhi _2'(p)\) is increasing on \((2,\infty )\). Along with \(\lim _{p\rightarrow \infty }\varPhi _2'(p)=0\), we have that \(\varPhi _2'(p)<0\) on \((2,\infty )\), which implies that \(\varPhi _2(p)<\varPhi _2(2)=0\). Thus, \(\varPhi _1''(t)<0\). Then we have that \(\varPhi _1'(t)\) is decreasing on (0, 1), which implies that \(\varPhi _1'(t)>\varPhi _1'(1) =0\). Therefore, we have \(\varPhi _1(t)<\varPhi _1(1)=0\), i.e., \(\varPhi ''(t)<0\). Then we conclude that \(\varPhi '(t)\) is decreasing on (0, 1), which implies that \(\varPhi '(t)>\varPhi '(1)=0\). Therefore, \(\varPhi (t)\) is increasing on (0, 1) and \(\varPhi (t)<\varPhi (1)=0\), which proves the claim. \(\square \)
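As a check on the monotonicity step for \(\varPhi _1''\) in this proof, differentiating \(\varPhi _1\) as defined above term by term gives the following (a worked computation added for illustration, not copied from the original displays):

\[
\begin{aligned}
\varPhi _1'(t) &= -2(p-2)(2p-1)(p+1)t^{p} + 2p^2(2p-1)t^{p-1} - 2p^2(p+1)t + 2(p-2)(p^2+p-1),\\
\varPhi _1''(t) &= -2p(p-2)(2p-1)(p+1)t^{p-1} + 2p^2(p-1)(2p-1)t^{p-2} - 2p^2(p+1),\\
\varPhi _1'''(t) &= 2p(p-1)(p-2)(2p-1)\,t^{p-3}\bigl( p-(p+1)t\bigr) .
\end{aligned}
\]

For \(p>2\) and \(0<t<1\), the factor \(2p(p-1)(p-2)(2p-1)t^{p-3}\) is positive, so \(\varPhi _1'''(t)\) has the sign of \(p-(p+1)t\). This is positive on \((0,\frac{p}{p+1})\) and negative on \((\frac{p}{p+1},1)\). Substituting \(t=\frac{p}{p+1}\) into \(\varPhi _1''\) collapses the first two terms to \(2p^2(2p-1)\left( \frac{p}{p+1}\right) ^{p-2}\), in agreement with the value quoted in the proof.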

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Lee, J., Skipper, D., Speakman, E. et al. Gaining or Losing Perspective for Piecewise-Linear Under-Estimators of Convex Univariate Functions. J Optim Theory Appl 196, 1–35 (2023). https://doi.org/10.1007/s10957-022-02144-6

DOI: https://doi.org/10.1007/s10957-022-02144-6

Keywords

- Convex relaxation

- Perspective function/transformation

- Volume

- Piecewise linear

- Univariate

- Indicator variable

- Global optimization

- Mixed-integer nonlinear optimization