Abstract

Dual decomposition has been successfully employed in a variety of distributed convex optimization problems solved by a network of computing and communicating nodes. Often, when the cost function is separable but the constraints are coupled, the dual decomposition scheme involves local parallel subgradient calculations and a global subgradient update performed by a master node. In this paper, we propose a consensus-based dual decomposition to remove the need for such a master node and still enable the computing nodes to generate an approximate dual solution for the underlying convex optimization problem. In addition, we provide a primal recovery mechanism to allow the nodes to have access to approximate near-optimal primal solutions. Our scheme is based on a constant stepsize choice, and the dual and primal objective convergence are achieved up to a bounded error floor dependent on the stepsize and on the number of consensus steps among the nodes.


1 Introduction

Lagrangian relaxation and dual decomposition are extremely effective in solving large-scale convex optimization problems [1–6]. Dual decomposition has also been employed successfully in the field of distributed convex optimization, where the optimization problem needs to be decomposed among cooperative computing entities (referred to simply as nodes in the following). In this case, the solution procedure is generally divided into two steps: a first step pertaining to the calculation of the local subgradients of the Lagrangian dual function, and a second step consisting of the global update of the dual variables by projected subgradient ascent. The first step can typically be performed in parallel on the nodes, whereas the second step often has to be performed centrally, by a so-called master node (or data-gathering node, or fusion center), which combines the local subgradient information.

Even though by solving the dual problem, one obtains a lower bound on the optimal value of the original convex problem, in practical situations, one would also like to have access to an approximate primal solution. However, even with the availability of an approximate dual optimal solution, a primal one cannot be easily obtained. The reason is that the Lagrangian dual function is generally nonsmooth at an optimal point, thus an optimal primal solution is not a trivial combination of the extreme subproblem solutions. Methods to recover approximate (near-optimal) primal solutions from the information coming from dual decomposition have been proposed in the past [4, 7–13] (and references therein). In one way or another, all these methods use a combination of all the approximate primal solutions that are generated, while the dual decomposition scheme converges to a near-optimal dual solution. A possible choice for the combination is the ergodic mean [4, 11, 14].

Among the dual decomposition schemes with primal recovery mechanism available in the literature, we are interested here in the ones that employ a constant stepsize in the projected dual subgradient update. The reasons are twofold. First of all, a constant stepsize yields faster convergence to a bounded error floor, which is fundamental in real-time applications (e.g., control of networked systems). In addition, the error floor can be tuned by trading off the number of iterations required against the value of the stepsize. The second reason is that in many situations, the underlying convex optimization problem is not stationary, but changes over time. Having in mind the development of methods to update the dual variables while the optimization problem varies [15–17], it is of key importance to employ a constant stepsize. In this way, the capability of the subgradient scheme to track the dual optimal solutions does not degrade over time, as it would with a vanishing stepsize.

In this paper, we propose a way to remove the need for a master node to collect the local subgradient information coming from the different nodes and generate a global subgradient. The reason is that in distributed systems, the nodes are connected via an ad-hoc network and the communication is often limited to geographically nearby nodes. It is therefore impractical to collect the local subgradient information in one physical location, whereas it is advisable to enable the nodes themselves to have access to a suitable approximation of the global subgradient. We use consensus-based mechanisms to construct such an approximation. Consensus-based mechanisms have been used in the primal domain both with constant stepsizes [18, 19] and with vanishing ones [19–21]; however, to the best of the authors’ knowledge, they have not been used in the dual domain, and not together with primal recovery. An interesting, but different, approach applying consensus on the cutting-plane algorithm to solve the master problem has been very recently proposed in [22]. Our main contributions can be described as follows.

First, we develop a constant stepsize consensus-based dual decomposition. Our method enables the different nodes to generate a sequence of approximate dual optimal solutions whose dual cost eventually converges to the optimal dual cost within a bounded error floor. Under the assumptions of convexity, compactness of the feasible set, and Slater’s condition, the convergence goes as O(1 / k), where k is the number of iterations. The error depends on the stepsize and on the number of consensus steps between subsequent iterations k. Furthermore, the nodes are exchanging subgradient information only with their nearby neighboring nodes.

Then, since in our method each node maintains its own approximate dual sequence, we provide an upper bound on the disagreement among the nodes and we prove its convergence to a bounded value.

Finally, we propose a primal recovery scheme to generate approximate primal solutions from consensus-based dual decomposition. This scheme is proven to converge to the optimal primal cost up to a bounded error floor. Once again, under the same assumptions, the convergence goes as O(1 / k) and the error depends on the stepsize and on the number of consensus steps.

Organization Section 2 describes the problem setting, our main research question, and some sample applications. In Sect. 3, we cover the basics of dual decomposition to pinpoint the main limitation, i.e., the need for a master node. We propose, develop, and investigate the convergence results of our algorithm in Sects. 4 and 5. All the proofs are contained in Sects. 6 and 7. In Sect. 8, we collect numerical simulation results. Future research questions and conclusions are discussed in Sects. 9 and 10, respectively.

2 Problem Formulation

Notation

For any two vectors \(\varvec{x},\varvec{y}\in {\mathbb {R}}^n\), the standard inner product is indicated as \(\langle \varvec{x}, \varvec{y}\rangle \), while its induced (Euclidean) norm is represented as \(\Vert \varvec{x}\Vert _{2}\). A vector \(\varvec{x}\) belongs to \({\mathbb {R}}^n_+\) iff it is of size n, and all its components are nonnegative (i.e., \({\mathbb {R}}^n_+\) is the nonnegative orthant). For any vector \(\varvec{x}\in {\mathbb {R}}^n\), its components are indicated by \(x_i\), \(i\in \{1, \ldots , n\}\). The vector \({\mathbf {1}}_n\) is the column vector of length n containing only ones. We indicate by \({\mathbf {I}}_n\) the identity matrix of size n. For any real-valued square matrix \(\varvec{X}\in {\mathbb {R}}^{n\times n}\), we say \(\varvec{X}\succeq 0 \) or \(\varvec{X}\preceq 0\) iff the matrix is positive semi-definite or negative semi-definite, respectively. We also write \(\varvec{X}\in {\mathbb {S}}_{+}^n\), iff \(\varvec{X}\succeq 0\). For any real-valued square matrix \(\varvec{X}\in {\mathbb {R}}^{n\times n}\), the norm \(\Vert \varvec{X}\Vert _{{\mathrm {F}}}\) represents the Frobenius norm, while the trace is indicated by \({\mathrm {tr}}[\varvec{X}]\). The symbol \((\cdot )^{\mathsf {T}}\) is the transpose operator, \(\otimes \) represents the Kronecker product, \(\circ \) stands for map composition, \({\mathrm {conv}}[\cdot ]\) is the convex hull, \({\mathrm {vec}}(\cdot )\) is the vectorization operator, while \({\mathsf {P}}_{{X}}[\cdot ]\) is the projection operator onto the set X. The \(\epsilon \)-subgradient of a concave function \(q(\varvec{x}): {X}\subseteq {\mathbb {R}}^n \rightarrow {\mathbb {R}}\), for the nonnegative scalar \(\epsilon \geqslant 0\), at \(\varvec{x}{'}\in X\) is a vector \(\tilde{\varvec{g}}\in {\mathbb {R}}^n\) such that

$$\begin{aligned} q(\varvec{x}) \leqslant q(\varvec{x}{'}) + \epsilon + \langle \tilde{\varvec{g}}, \varvec{x}- \varvec{x}{'}\rangle , \quad \hbox {for all } \varvec{x}\in X. \qquad \hbox {(1)} \end{aligned}$$

Furthermore, the collection of \(\epsilon \)-subgradients of \(q(\varvec{x})\) at \(\varvec{x}{'}\) is called the \(\epsilon \)-subdifferential set, denoted by \(\partial _{\epsilon } q_{\varvec{x}} (\varvec{x}{'})\). If \(\epsilon = 0\), the \(\epsilon \)-subgradient is the regular subgradient and we drop the \(\epsilon \) in the notation of the subdifferential.

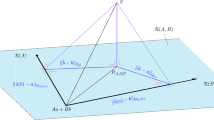

Formulation We consider a convex optimization problem defined on a network of computing and communicating nodes. Let the nodes be labeled with \(i\in {V} = \{1,\ldots ,n\}\), and we equip each of them with the local (private) convex function \(f_i(x_i):{\mathbb {R}} \rightarrow {\mathbb {R}}\). Let \(\varvec{x}\) be the stacked vector of all the local decision variables, i.e., \(\varvec{x}= (x_1, \ldots , x_n)^{\mathsf {T}}\). Let the functions \(g_{i}(x_i): {\mathbb {R}} \rightarrow {\mathbb {R}}, i\in {V}\) be convex. Let \(\varvec{A}_{0}, \varvec{A}_{i}, i\in {V}\) be \(d\times d\) real-valued square and symmetric matrices. Let \(X_i \subset {\mathbb {R}}, i\in {V}\) be convex and compact sets, and let \(X:= \prod _{i\in {V}} X_i\). We are interested in solving decomposable convex optimization problems of the form,

In order to simplify our notation (and without loss of generality), we have chosen to work with scalar decision variables \(x_i\), with one scalar inequality and with one linear matrix inequality. The following assumptions are in place.
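Written out, a problem of this class that is consistent with the constraint labels (2b) and (2c) used throughout, with the subgradients \(g_i(\tilde{x}_i)\) and \(-\varvec{A}_0/n-\varvec{A}_i \tilde{x}_i\) of Sect. 3, and with the quantity \(\gamma \) of Lemma 4.1 takes the following form. The arrangement and the definition \(f(\varvec{x}) := \sum _{i\in {V}} f_i(x_i)\) are a reconstruction under those assumptions, not a quotation.

$$\begin{aligned} \mathop {\hbox {minimize}}\limits _{x_1\in X_1, \ldots , x_n \in X_n} \quad&f(\varvec{x}) := \sum _{i\in {V}} f_i(x_i) \qquad \hbox {(2a)}\\ \hbox {subject to} \quad&\sum _{i\in {V}} g_i(x_i) \leqslant 0, \qquad \hbox {(2b)}\\&\varvec{A}_0 + \sum _{i\in {V}} \varvec{A}_i x_i \succeq 0. \qquad \hbox {(2c)} \end{aligned}$$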

Assumption 2.1

(Convexity and compactness) The cost functions \(f_i(x_i)\) and the constraint functions \(g_i(x_i)\) are convex in \(x_i\) for each i. The sets \(X_i\) are convex and compact (thus, bounded). The matrices \(\varvec{A}_{0}, \varvec{A}_{i}, i\in {V}\) are real-valued square and symmetric.

Assumption 2.2

(Existence of solution) The feasible set \( F: = \{\varvec{x}\in X| (2\hbox {b}) \) and \( (2\hbox {c})\} \) is nonempty; for all \(\varvec{x}\in F\), the cost function \(f(\varvec{x})>-\infty \), and there exists a vector \(\varvec{x}\in F\) such that \(f(\varvec{x})<\infty \).

Assumption 2.3

(Slater condition) There exists a vector \(\bar{\varvec{x}} \in {\mathbb {R}}^n\) that is strictly feasible for problem (2), i.e.,

Assumption 2.4

(Communication network) The computing nodes communicate synchronously via undirected time-invariant communication links.

Assumption 2.1 is required to ensure a convex program with compact feasible set. Assumption 2.2 ensures the existence of a solution for the optimization problem (2). Let \(\varvec{x}^*\) be such a (possibly not unique) solution (i.e., a minimizer) and let \(f^*\) be the corresponding (unique) optimal value. Assumption 2.3 is often required in dual decomposition approaches in order to guarantee zero duality gap and to be able to derive the optimal value of the optimization problem (2) by solving its dual. In addition, the Slater condition helps in bounding the dual variables, which is crucial in our convergence analysis. Assumption 2.4 is required to simplify the convergence analysis. One might be able to relax it to allow asynchronous communications, but this is left for future research since it is not the core idea of this paper. By Assumption 2.4, we can define an undirected communication graph \(\mathcal {G}\) consisting of a vertex set \( {V}\) as well as an edge set \( {E}\). For each node i, we call neighborhood, or \(N_i\), the set of the nodes it can communicate with.

The main research problem we tackle in this paper can be stated as follows.

Research Problem: We would like to devise an algorithm that enables each node, by communicating with its neighbors only, to construct a sequence of approximate local optimizers \(\{x_i^k\}\), whose primal objective sequence \(\{f(\varvec{x}^k)\}\) eventually converges to \(f^*\) (possibly) up to a bounded error floor.

Our approach toward this problem is to devise a consensus-based dual decomposition with approximate primal recovery.

Sample Applications Problems such as (2) appear in many contexts. The first example we cite is the network utility maximization (NUM) problem, where a group of communication nodes try to maximize their utility subject to a resource allocation constraint [23, 24]. NUM problems are very relevant in communication systems. Generalizations of NUM problems, where the cost function is separable and the variables are constrained by linear inequalities, can also be handled by (2), and have been considered, e.g., in model predictive controller design [25] (which is one of the workhorses of modern control theory). Another sample application is sensor selection, where a set of nodes try to decide which of them should be activated to perform a certain task based on a given metric. This is in general a combinatorial problem, yet it can be relaxed to a semi-definite program, which is a generalization of (2) [26, 27]. In the latter example, the constraint (2c) plays an important role.

Multi-agent/Multiuser/Networked Problems If the constraints (2b) and (2c) involve only local functions, that is, the sum is only over the neighbors of a particular i, then we have what is known as multi-agent (or multiuser, or networked) problem. These problems can be further complicated by the presence of global decision variables. In all these cases, due to the presence of neighborhood constraint functions only, the dual variables associated with them can be computed locally in the neighborhood (we can refer to them as link dual variables). Therefore, by a proper use of dual decomposition, we can devise distributed algorithms that can be implemented on nodes and connecting links. Relevant recent work on these problems is reported in [28–35]. In our case, the constraints (2b)–(2c) involve constraint functions from all the nodes, in all the decision variables together; therefore, the proposed methods for multi-agent problems cannot be directly applied in our case. In general, the link dual variables become a network-wide dual variable in our case, and we retrieve the standard dual decomposition scheme with the need for a master node to compute such a global network-wide dual variable.

3 Dual Decomposition

The Lagrangian function \(L(\varvec{x}, \mu , \varvec{G}): {\mathbb {R}}^n \times {\mathbb {R}}_+ \times {\mathbb {S}}_+^d \rightarrow {\mathbb {R}}\) is formed, as a first step of dual decomposition,

where \(\mu \in {\mathbb {R}}_+\) is the dual variable associated with the constraint (2b), and \(\varvec{G}\in {\mathbb {S}}_+^d\) is the dual variable associated with (2c). Further, the dual function \(q(\mu , \varvec{G}): {\mathbb {R}}_{+} \times {\mathbb {S}}_+^d \rightarrow {\mathbb {R}}\) can be defined as

The set \( {X}\) is compact, which means that the function \(q(\mu , \varvec{G})\) is continuous on \({\mathbb {R}}_{+} \times {\mathbb {S}}_+^d\). Furthermore, the function \(q(\mu , \varvec{G})\) is concave. For any pair of dual variables \((\mu ,\varvec{G})\), we can compute the value of the primal minimizers and their set:

Given the compactness of X and the form of the dual function (4), we can define the subdifferential of \(q(\mu , \varvec{G})\) at \(\mu \) and \(\varvec{G}\) as the following sets

Subgradient choices for \(q(\mu , \varvec{G})\) are therefore

for any choice of \(\tilde{\varvec{x}}\in \tilde{X}\). In addition, since X is compact and the constraints (2b)–(2c) are represented by continuous functions, the subgradients are bounded, and we set, for all \(i\in {V}\)

where we have defined \(h_i(\varvec{x}): = g_i(\varvec{x}_i)\), and \(\varvec{Q}_i(\varvec{x}): = -\varvec{A}_0/n- \varvec{A}_{i} {x}_i\). Finally, the Lagrangian dual problem can be written as

and by Slater condition (Assumption 2.3), strong duality holds: \(q^* = f^*\).

Since the original convex optimization problem (2) is decomposable, the Lagrangian function is separable as

and so is the dual function

and its subgradients.
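For concreteness, one way to write this separable structure is sketched below. It is a reconstruction that assumes the trace inner product for the matrix constraint and the sign convention implied by the local subgradients \(g_i(\tilde{x}_i)\) and \(-\varvec{A}_0/n-\varvec{A}_i \tilde{x}_i\) used in the algorithm that follows; the grouping into \(L_i\) and \(q_i\) mirrors the notation of (12a).

$$\begin{aligned} L(\varvec{x}, \mu , \varvec{G})&= \sum _{i\in {V}} f_i(x_i) + \mu \sum _{i\in {V}} g_i(x_i) - {\mathrm {tr}}\Big [\varvec{G}\Big (\varvec{A}_0 + \sum _{i\in {V}} \varvec{A}_i x_i\Big )\Big ] = \sum _{i\in {V}} L_i(x_i, \mu , \varvec{G}),\\ L_i(x_i, \mu , \varvec{G})&:= f_i(x_i) + \mu \, g_i(x_i) - {\mathrm {tr}}\big [\varvec{G}\,(\varvec{A}_0/n + \varvec{A}_i x_i)\big ],\\ q(\mu , \varvec{G})&= \sum _{i\in {V}} q_i(\mu , \varvec{G}), \qquad q_i(\mu , \varvec{G}) := \min \limits _{x_i\in X_i} L_i(x_i, \mu , \varvec{G}). \end{aligned}$$

Under this form, each node can evaluate \(q_i\) and its minimizer using only its own data and the current dual variables, which is what makes the local step of the algorithm parallelizable.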

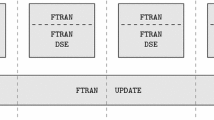

Dual decomposition with approximate primal recovery as defined in [4] is summarized in the following algorithm.

Dual decomposition with primal recovery

1. Initialize \(\mu ^0\in {\mathbb {R}}_+\), \(\varvec{G}^0\in {\mathbb {S}}_+^d\), choose a constant stepsize \(\alpha \);

2. Local dual optimization: compute in parallel the local dual functions and their primal optimizers

$$\begin{aligned} q_i(\mu ^k, \varvec{G}^k) = \min \limits _{x_i\in {X}_i} \left\{ L_{i}\left( x_i, \mu ^k, \varvec{G}^{k} \right) \right\} , \quad \tilde{x}_i^k = \mathop {\hbox {argmin}}\limits _{x_i\in {X}_i} \left\{ L_{i} \left( x_i, \mu ^k, \varvec{G}^{k} \right) \right\} , \qquad \hbox {(12a)} \end{aligned}$$

as well as their subgradients \(g_i(\tilde{x}_i^k)\) and \(-\varvec{A}_0/n-\varvec{A}_i \tilde{x}_i^k\);

3. Primal recovery step: compute in parallel the ergodic mean, for \(k\geqslant 1\)

$$\begin{aligned} x_i^k = \frac{1}{k} \sum \limits _{t=1}^{k} \tilde{x}_i^t; \qquad \hbox {(12b)} \end{aligned}$$

4. Dual update: update the variables \(\mu ^k, \varvec{G}^k\) as

$$\begin{aligned} \mu ^{k+1}&= {\mathsf {P}}_{{\mathbb {R}}_+}\Big [\mu ^{k} + \alpha \sum \limits _{i\in {V}} g_i(\tilde{x}_i^k)\Big ], \qquad \hbox {(12c)}\\ \varvec{G}^{k+1}&= {\mathsf {P}}_{{\mathbb {S}}_+^d}\Big [\varvec{G}^{k} - \alpha \Big (\varvec{A}_0+\sum \limits _{i\in {V}}\varvec{A}_i \tilde{x}_i^k\Big ) \Big ]. \qquad \hbox {(12d)} \end{aligned}$$

This algorithm generates a converging sequence \(\{x_i^k\}\) as detailed in the following theorem.

Theorem 3.1

Let the sequence \(\{\mu ^k, \varvec{G}^k, \varvec{x}^k\}\) be generated by the iterations (12). Let L and Q be defined as in (8). Under Assumptions 2.1 till 2.3,

(a) the dual variables are bounded, i.e., \(\Vert \mu ^k\Vert _2 \leqslant \varLambda _0 < \infty \), \(\Vert \varvec{G}^k\Vert _{{\mathrm {F}}} \leqslant \varGamma _0 < \infty \), for all \(k\geqslant 1\);

(b) an upper bound on the primal cost of the vector \(\varvec{x}^k\), \(k\geqslant 1\), is given by

$$\begin{aligned} f(\varvec{x}^k)\leqslant f^* + \frac{\varLambda _0^2 + \varGamma _0^2}{2 \alpha k} + \frac{\alpha n^2 (L^2+Q^2)}{2}; \end{aligned}$$

(c) a lower bound on the primal cost of the vector \(\varvec{x}^k\), \(k\geqslant 1\), is given by

$$\begin{aligned} f(\varvec{x}^k)\geqslant f^* - \frac{\varLambda _0^2 + \varGamma _0^2}{ \alpha k}. \end{aligned}$$
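To make the role of the stepsize in parts (b) and (c) concrete, the following minimal sketch evaluates the two bounds on \(f(\varvec{x}^k) - f^*\). The function name and the numerical values in the usage lines are illustrative assumptions; only the two formulas come from the theorem.

```python
def primal_gap_bounds(k, alpha, n, lam0, gam0, L, Q):
    """Bounds on f(x^k) - f* from Theorem 3.1(b) and (c).

    lam0, gam0 stand for Lambda_0, Gamma_0 (dual variable bounds);
    L, Q are the subgradient bounds. All inputs are placeholders.
    """
    if k < 1 or alpha <= 0:
        raise ValueError("need k >= 1 and alpha > 0")
    dual_norm_sq = lam0 ** 2 + gam0 ** 2
    transient = dual_norm_sq / (2.0 * alpha * k)       # vanishes as O(1/k)
    floor = alpha * n ** 2 * (L ** 2 + Q ** 2) / 2.0   # fixed by the stepsize
    upper = transient + floor              # f(x^k) - f* <= upper
    lower = -dual_norm_sq / (alpha * k)    # f(x^k) - f* >= lower
    return upper, lower


# Illustrative values only: the floor alpha * n^2 * (L^2 + Q^2) / 2 remains
# while the 1/k terms shrink.
for k in (10, 100, 1000):
    print(k, primal_gap_bounds(k, alpha=0.1, n=2, lam0=1.0, gam0=1.0, L=1.0, Q=1.0))
```

A smaller \(\alpha \) lowers the floor in (b) but slows the decay of the \(1/(\alpha k)\) terms, which is the trade-off mentioned in the Introduction.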

Proof

The proof follows from [4, Lemma 3 and Proposition 1]. Since our optimization problem involves also a linear matrix inequality, some extra steps are needed in the proof of part (c). To be more specific, by following the same steps in the proof of [4, Proposition 1(c)], we arrive at the following inequality

where \(\mu ^*\geqslant 0\) and \(\varvec{G}^*\succeq 0\) are the optimal dual variables. We now need to find a lower bound for the rightmost term of (13). By similar arguments of the proof of [4, Proposition 1(a)], we obtain for all \(k \geqslant 1\)

Given the two positive semi-definite matrices \(\varvec{X}\) and \(\varvec{Y}\) of dimension \(n\times n\), we know that \({\mathrm {tr}}[\varvec{X}\,\varvec{Y}]\geqslant \lambda _{\min }(\varvec{X}) {\mathrm {tr}}[\varvec{Y}] \geqslant 0\), [36, Lemma 1], which means

This implies that for \(k\geqslant 1\)

where we have used Cauchy–Schwarz inequality [37]. By combining (15) and (14) with (13), we obtain the lower bound

and the claim is proven. \(\square \)

Although the dual decomposition method of [4] presents several advantages, in practice, the nodes will need to sum the subgradients coming from the whole network in Step 4 in order to maintain common dual variables. This is often not practical in large networks, because it would call for a significant communication overhead.
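The master-node structure of iterations (12) can be illustrated on a toy problem. The sketch below is not the setting of [4] or of this paper in full: it assumes quadratic local costs \(f_i(x_i) = \frac{1}{2}(x_i - c_i)^2\), a single coupling constraint \(\sum _i (x_i - b/n) \leqslant 0\), box sets \(X_i\), and no matrix inequality, so that the local minimizers are available in closed form. All names are hypothetical.

```python
def clip(value, lo, hi):
    """Project a scalar onto the interval [lo, hi]."""
    return max(lo, min(hi, value))


def dual_decomposition(c, b, lo, hi, alpha, iters):
    """Master-node dual decomposition with ergodic primal recovery.

    Toy problem (an assumption for illustration):
        minimize   sum_i 0.5 * (x_i - c_i)^2
        subject to sum_i (x_i - b / n) <= 0,  x_i in [lo, hi].
    """
    n = len(c)
    mu = 0.0             # common dual variable, held by the master
    x_avg = [0.0] * n    # ergodic mean of the local minimizers
    for k in range(iters + 1):
        # Step 2: each node minimizes its local Lagrangian (closed form here).
        x_tilde = [clip(ci - mu, lo, hi) for ci in c]
        # Step 3: running ergodic mean over t = 1, ..., k.
        if k >= 1:
            x_avg = [xa + (xt - xa) / k for xa, xt in zip(x_avg, x_tilde)]
        # Step 4: the master sums ALL local subgradients g_i(x_tilde_i).
        global_subgradient = sum(xt - b / n for xt in x_tilde)
        mu = max(0.0, mu + alpha * global_subgradient)
    return mu, x_avg


mu, x_avg = dual_decomposition(c=[1.0, 2.0, 3.0], b=3.0, lo=0.0, hi=5.0,
                               alpha=0.1, iters=500)
print(mu, x_avg, sum(x_avg))
```

The line computing `global_subgradient` is the step that requires network-wide information: every node's \(g_i(\tilde{x}_i^k)\) must reach one place before the dual variable can be updated.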

In the following sections, (i) we propose a consensus-based dual decomposition with primal recovery mechanism to modify Step 4 in order to make it suitable for limited information exchange (i.e., communication only with neighboring nodes); (ii) we prove dual and primal objective convergence of the proposed method up to a bounded error floor which depends (among other things) on the number of communication exchanges with the neighboring nodes for each iteration k.

4 Basic Relations

Lemma 4.1

Suppose Assumption 2.1 till 2.3 hold. Let \(\bar{\mu } \geqslant 0\), \(\bar{\varvec{G}}\succeq 0\) be a pair of dual variables for which the set \(\bar{D} := \{({\mu } \geqslant 0, {\varvec{G}}\succeq 0)| q({\mu }, {\varvec{G}}) \geqslant q(\bar{\mu }, \bar{\varvec{G}}) \} \) is nonempty. Then, the set \(\bar{D}\) is bounded and we have

where \(\gamma := \min \Big \{\sum _{i\in {V}} -g_i(\bar{x}_i), \lambda _{\min }\big (\varvec{A}_0 + \sum _{i\in {V}} \varvec{A}_i \bar{x}_i\big ) \Big \}\), \(\lambda _{\min }(\cdot )\) is the smallest eigenvalue and \(\bar{\varvec{x}}\) is a vector satisfying the Slater condition.

Proof

The lemma follows from [4, Lemma 1] with minor modifications. In particular, we use [36, Lemma 1] to bound the inner product

and the fact that \(\Vert \varvec{G}\Vert _{{\mathrm {F}}} \leqslant {\text {tr}}[\varvec{G}]\), [37]. The remaining steps are omitted since they are similar to [4, Lemma 1]. \(\square \)

It follows from the result of the preceding lemma that under Slater, the dual optimal set \(D^*\) is nonempty. Since \(D^* := \{({\mu } \geqslant 0, {\varvec{G}}\succeq 0)| q({\mu }, {\varvec{G}}) \geqslant q^*\}\), by using Lemma 4.1, we obtain

Furthermore, although the dual optimal value \(q^*\) is not a priori available, one can compute a looser bound by computing the dual function for some couple \((\tilde{\mu } \geqslant 0, \tilde{\varvec{G}}\succeq 0)\). Owing to optimality, \(q^* \geqslant q(\tilde{\mu },\tilde{\varvec{G}})\), thus

This result is quite useful to render the dual decomposition method easier to study. In fact, as in [4], we can modify the sets over which we project in Step 4 by considering a bounded superset of the dual optimal solution set. This means that we can substitute Step 4 in (12) with

for a given scalar \(r > 0\). The nice feature of this modification is that both \(D_{\mu }\) and \(D_{\varvec{G}}\) are now compact convex sets. This does not increase computational complexity, and it is a useful modification, for it provides leverage to derive the convergence rate results. In the following, for convergence purposes, we will use \(r \geqslant \frac{f(\bar{\varvec{x}}) - q(\tilde{\mu }, \tilde{\varvec{G}})}{\gamma }\).

5 Consensus-Based Dual Decomposition

We now consider a consensus-based update that adapts the update rule of dual decomposition in (16) to the constraints of a limited communication network. Our approach is inspired by the one of [18] but applied to the dual domain. First of all, we define a consensus matrix \({\varvec{W}}\in {\mathbb {R}}^{n\times n}\), with the following properties:

where \(\rho [\cdot ]\) returns the spectral radius and \(\nu \) is an upper bound on the value of the spectral radius. It is a common practice to generate such consensus matrices; a possible choice is the Metropolis-Hastings weighting matrix [38, 39].
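As an illustration of how such a matrix can be built from purely local information, the sketch below uses one common form of the Metropolis weights, \([{\varvec{W}}]_{ij} = 1/(1+\max \{d_i, d_j\})\) for neighboring nodes with degrees \(d_i, d_j\), and checks on a small ring that repeated multiplication by \({\varvec{W}}\) drives every entry toward the average. The specific weight formula, the ring topology, and the function names are assumptions for illustration and are not taken from [38, 39].

```python
def metropolis_weights(neighbors):
    """Build Metropolis weights from an undirected adjacency list.

    neighbors[i] is the set of nodes adjacent to node i (no self-loops).
    Node i only needs its own degree and the degrees of its neighbors.
    """
    n = len(neighbors)
    degree = [len(neighbors[i]) for i in range(n)]
    W = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in neighbors[i]:
            W[i][j] = 1.0 / (1.0 + max(degree[i], degree[j]))
        # The diagonal entry makes each row sum to one.
        W[i][i] = 1.0 - sum(W[i][j] for j in neighbors[i])
    return W


def consensus(W, x, steps):
    """Apply x <- W x `steps` times; W[i][j] is zero for non-neighbors."""
    n = len(x)
    for _ in range(steps):
        x = [sum(W[i][j] * x[j] for j in range(n)) for i in range(n)]
    return x


# Ring of five nodes: node i talks to i-1 and i+1 (indices modulo 5).
ring = [{(i - 1) % 5, (i + 1) % 5} for i in range(5)]
W = metropolis_weights(ring)
x0 = [1.0, 2.0, 3.0, 4.0, 5.0]
x20 = consensus(W, x0, steps=20)
print(x20)  # every entry should be close to the average of x0, which is 3
```

The resulting matrix is symmetric with rows summing to one, and its sparsity pattern matches the communication graph, so each multiplication by \({\varvec{W}}\) corresponds to one round of neighbor-to-neighbor exchange.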

A consensus iteration is a linear mapping \(\mathcal {C}(\varvec{x}): \varvec{x}\mapsto {\varvec{W}}\varvec{x}\) with the property that the result of its repeated application converges to the mean of the initial vector, i.e., for \(\varvec{x}\in {\mathbb {R}}^n\)

This averaging property is ensured, for example, by conditions such as those in (17). In addition, given the structure of \({\varvec{W}}\) in (17), each consensus iteration involves only local communications (only neighboring nodes share their local variables), which is the key point of our modification. In the following, we will study multiple consensus steps, in the sense that the computing nodes run multiple consensus iterations (each of which involves only local communications) between subsequent iterations k. We let the number of consensus steps be \(\varphi \in \mathbb {N}\). In this case, the consensus mapping will be of the form \(\varvec{x}\mapsto {\varvec{W}}^\varphi \varvec{x}\). Since we will enable each node to generate its own dual variables on which consensus will be enforced, we start by defining local versions of \(\mu \) and \(\varvec{G}\) as \(\mu _i \in {\mathbb {R}}_+\) and \(\varvec{G}_i\in {\mathbb {S}}_+^d\), respectively. Next, we define our consensus-based dual decomposition as the following algorithm.

Consensus-based dual decomposition with primal recovery (CoBa-DD)

1. Initialize \(\mu ^0_i\in {\mathbb {R}}_+\), \(\varvec{G}^0_i\in {\mathbb {S}}_+^d\), \(i\in {V}\), choose \(\alpha > 0\), determine a Slater vector \(\bar{\varvec{x}}\) and the sets \(D_{\mu }\) and \(D_{\varvec{G}}\) of (16) with an arbitrarily picked \(\tilde{\mu }, \tilde{\varvec{G}}\) and a scalar \(r\geqslant \frac{f(\bar{\varvec{x}}) - q(\tilde{\mu }, \tilde{\varvec{G}})}{\gamma }\); pick a number of consensus steps \(\varphi \);

2. Local dual optimization: compute in parallel the local dual functions and their primal optimizers

$$\begin{aligned} q_i(\mu _i^k, \varvec{G}_i^k) = \min \limits _{x_i\in {X}_i} \{L_i(x_i, \mu _i^k, \varvec{G}_i^k)\}, \quad \tilde{x}_i^k = \mathop {\hbox {argmin}}\limits _{x_i\in {X}_i} \{L_i(x_i, \mu _i^k, \varvec{G}_i^k)\}, \qquad \hbox {(18a)} \end{aligned}$$

as well as their subgradients \(g_i(\tilde{x}_i^k)\) and \(-\varvec{A}_0/n-\varvec{A}_{i} \tilde{x}_i^k\);

3. Primal recovery step: compute in parallel the ergodic mean, for \(k\geqslant 1\)

$$\begin{aligned} x_{i}^{k} = \frac{1}{k} \sum \limits _{t=1}^{k} \tilde{x}_i^t; \qquad \hbox {(18b)} \end{aligned}$$

4. Update the dual variables \(\mu _i^k, \varvec{G}_i^k\) as

$$\begin{aligned} \mu _i^{k+1}&= {\mathsf {P}}_{D_{\mu }}\Big [\sum \limits _{j\in {V}} [{\varvec{W}}^\varphi ]_{ij} \Big ( \mu _j^{k} + \alpha g_j(\tilde{x}_j^k)\Big )\Big ], \qquad \hbox {(18c)}\\ \varvec{G}_i^{k+1}&= {\mathsf {P}}_{D_{\varvec{G}}}\Big [\sum \limits _{j\in {V}} [{\varvec{W}}^\varphi ]_{ij} \Big (\varvec{G}_j^{k} - \alpha (\varvec{A}_0/n + \varvec{A}_j \tilde{x}_j^k) \Big ) \Big ]. \qquad \hbox {(18d)} \end{aligned}$$

We highlight that the proposed algorithm CoBa-DD (or (18)) involves only local communication. The only communication involved is in the \(\varphi \) consensus steps, each of which requiring the nodes to share information with their neighbors. Also, note that computing \((f(\bar{\varvec{x}})-q(\tilde{\mu }, \tilde{\varvec{G}}))/\gamma \) (for the definition of \(D_\mu \) and \(D_{\varvec{G}}\)) is not a very difficult task, since a Slater vector is usually easy to find by inspection, and both \(f(\bar{\varvec{x}})\) and \(\gamma \) can be computed by a consensus algorithm run in the initialization step of CoBa-DD.
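The scalar part of iterations (18) can be sketched on a toy problem as follows. This is an illustration under simplifying assumptions, not the full method: the local costs are \(f_i(x_i) = \frac{1}{2}(x_i - c_i)^2\), the only coupling constraint is \(\sum _i (x_i - b/n) \leqslant 0\), the matrix inequality and \(\varvec{G}_i\) are omitted, the network is a ring, and \(D_{\mu }\) is replaced by an interval \([0, \texttt{mu\_max}]\) with a hand-picked bound. All names are hypothetical.

```python
def clip(value, lo, hi):
    """Project a scalar onto the interval [lo, hi]."""
    return max(lo, min(hi, value))


def ring_weights(n):
    """Metropolis weights on a ring with n >= 3 nodes: every node has
    degree 2, so its two neighbors and itself each get weight 1/3."""
    W = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in (i - 1, i, i + 1):
            W[i][j % n] = 1.0 / 3.0
    return W


def coba_dd(c, b, lo, hi, W, alpha, phi, mu_max, iters):
    """Consensus-based dual decomposition, scalar constraint only."""
    n = len(c)
    mu = [0.0] * n       # one local dual variable per node
    x_avg = [0.0] * n    # ergodic means of the local minimizers
    for k in range(iters + 1):
        # Step 2: node i minimizes its local Lagrangian using its own mu_i.
        x_tilde = [clip(c[i] - mu[i], lo, hi) for i in range(n)]
        # Step 3: running ergodic mean over t = 1, ..., k.
        if k >= 1:
            x_avg = [xa + (xt - xa) / k for xa, xt in zip(x_avg, x_tilde)]
        # Step 4: local ascent step with the LOCAL subgradient only,
        # then phi consensus rounds, then projection onto [0, mu_max].
        v = [mu[i] + alpha * (x_tilde[i] - b / n) for i in range(n)]
        for _ in range(phi):
            v = [sum(W[i][j] * v[j] for j in range(n)) for i in range(n)]
        mu = [clip(vi, 0.0, mu_max) for vi in v]
    return mu, x_avg


c = [1.0, 2.0, 3.0, 4.0, 5.0]
mu, x_avg = coba_dd(c, b=10.0, lo=0.0, hi=10.0, W=ring_weights(5),
                    alpha=0.1, phi=10, mu_max=5.5, iters=2000)
print(max(mu) - min(mu), sum(x_avg))
```

In this sketch no node ever forms the network-wide sum of subgradients. Averaging the local steps yields an effective stepsize of \(\alpha /n\) on the summed subgradient, which appears consistent with the factor \(\alpha /n\) in the bounds of Theorem 5.3.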

In order to analyze dual and primal convergence of (18), we start with some basic results. First, given that the sets \(D_{\mu }\) and \(D_{\varvec{G}}\) are compact, and that \(\mu _i^0\) and \(\varvec{G}_i^0\) are picked to be bounded, the dual variables \(\mu _i^k\) and \(\varvec{G}_i^k\) are bounded for each \(k\geqslant 0\). In particular, we have

Lemma 5.1

Let \(q(\varvec{x}): {X} \rightarrow {\mathbb {R}}\) be a concave function. Let the set \( {X} \subset {\mathbb {R}}^n\) be convex and compact, and in particular \(\max _{\varvec{x}\in {X}} \Vert \varvec{x}\Vert _2 \leqslant \eta \). There exist two finite scalars \(\zeta > 0\) and \(\tau > 0\) such that, for all \(\varvec{x}\in {X}\), for all \(g(\varvec{x})\in \partial q_{\varvec{x}}(\varvec{x})\), and for all vectors \(\varvec{\nu }\in {\mathbb {R}}^n\) with \(\Vert \varvec{\nu }\Vert _2\leqslant \tau \), the following holds

Proof

The claim is proven by using the definition of subgradient of a concave function (1). Since q is a concave function, for all \(\varvec{x},\varvec{y}\in {X}, \varvec{\nu } \in {\mathbb {R}}^n\),

For \(\tau \leqslant \zeta /(2 \eta )\), the claim follows. \(\square \)

Lemma 5.2

Let the initial dual variables in (18), \(\mu _i^0\) and \(\varvec{G}^0_i\) for all \(i\in {V}\), be bounded. Let \({\varvec{W}}\) satisfy the conditions (17). Then, the following quantity is bounded by a certain \(c_0 \geqslant 0\),

Proof

The proof follows given the compactness of X and (therefore) the boundedness of the subgradients. \(\square \)

We now present the main convergence results.

Theorem 5.1

(Dual variable agreement) Let \(\bar{\mu }^k, \bar{\varvec{G}}^k\) be the mean values of the dual variables generated via the algorithm (18), i.e.,

Let Assumptions 2.1 till 2.3 hold and let \({\varvec{W}}\) satisfy the conditions (17). Let \(\mu _i^0\) and \(\varvec{G}^0_i\) for \(i\in {V}\) be bounded and let \(\beta _0\geqslant c_0\), with \(c_0\) defined as in (20). Define L and Q as in (8) and let

There exists a number of consensus iterations \(\bar{\varphi }\), such that if \(\varphi \geqslant \bar{\varphi } + \delta \), \(\delta \geqslant 0\), \(k \geqslant 1\), then the dual variables reach consensus as

Furthermore,

Corollary 5.1

Under the same conditions of Theorem 5.1, we obtain

Theorem 5.1 and Corollary 5.1 specify how the consensus is reached among the nodes on the value of the dual variables while the algorithm (18) is running. Specifically, the consensus is reached exponentially fast up to a steady-state bounded error floor. This bounded error depends on \(\alpha \) (which can be tuned), and on p, which can also be tuned by varying \(\varphi \). In particular, for \(\varphi \rightarrow \infty \), since \(\nu < 1\) in conditions (17), we have \(p \rightarrow 0\) and we recover the usual dual decomposition scheme with perfect agreement among the nodes.

Remark 5.1

Computing the lower bound on the number of consensus steps \(\bar{\varphi }\) can be done during the initialization of the algorithm. We can always pick \(\beta _0\) big enough so that \(\beta _0 \gg \alpha M\), which means that \(\bar{\varphi }\) can be simplified as \(\bar{\varphi } = \frac{\log (1/(4 n (1+d^2)))}{\log (\nu )}, \) which can be determined in a distributed way [40].
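The simplified expression for \(\bar{\varphi }\) in Remark 5.1 is straightforward to evaluate once \(n\), \(d\), and \(\nu \) are known. The sketch below does so; rounding up to the next integer is an assumption made here because the number of consensus steps must be an integer, and the function name is hypothetical.

```python
import math


def min_consensus_steps(n, d, nu):
    """Simplified lower bound on consensus steps from Remark 5.1.

    n: number of nodes, d: size of the matrices A_i,
    nu: bound on the spectral radius in (17), with 0 < nu < 1.
    """
    if not 0.0 < nu < 1.0:
        raise ValueError("nu must lie strictly between 0 and 1")
    phi_bar = math.log(1.0 / (4.0 * n * (1.0 + d ** 2))) / math.log(nu)
    return math.ceil(phi_bar)  # rounding up is an assumption of this sketch


# Illustrative values: a better-connected network (smaller nu) needs fewer steps.
for nu in (0.9, 0.5, 0.2):
    print(nu, min_consensus_steps(n=5, d=2, nu=nu))
```

The bound grows only logarithmically with \(n\) and \(d\), but it blows up as \(\nu \) approaches one, i.e., for poorly connected networks.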

Theorem 5.2

(Dual objective convergence) Let \({\mu }^k, {\varvec{G}}^k\) be the dual variables generated via the algorithm (18). Let \(\mu _i^0\) and \(\varvec{G}^0_i\) for all \(i\in {V}\) be bounded and let \(\beta _0\) be defined as in Theorem 5.1. Define L and Q as in (8) and let \(M:= L+Q\). Choose a scalar \(\tau \) such that \(\beta _0 / \alpha \leqslant \tau \). Let \(\zeta \) be defined as in Lemma 5.1 for the concave function \(q(\mu ,\varvec{G})\) and the choice of \(\tau \). Let \(q^*\) be the optimal value of \(q(\mu ,\varvec{G})\). Let Assumptions 2.1 till 2.3 hold and let \({\varvec{W}}\) satisfy the conditions (17). Let \(\varphi \geqslant \bar{\varphi } + \delta , \delta \geqslant 0\) and let \(\bar{\varphi }\) be defined as in Theorem 5.1. The following holds true.

If \(q^* = \infty \), then

If \(q^* < \infty \), then

with \(\beta _{\infty } = \frac{p\,\alpha M}{1-p}\) and \(p = \frac{\nu ^\delta \beta _0}{\beta _0 + \alpha M}\).

Theorem 5.2 implies dual objective convergence up to a bounded error floor. Convergence is even more evident if we remember that, owing to optimality, \(q(\mu ^k_i, \varvec{G}_i^k) \leqslant q^*\), and therefore, if we define \(q_i^{\infty } : = \limsup _{k\rightarrow \infty } q(\mu ^k_i, \varvec{G}_i^k)\), we obtain

Note that the rightmost term \((-\varepsilon ^2)\) represents a measure of sub-optimality of the approximate solution.

Theorem 5.3

(Primal objective convergence) Let \({\mu }^k, {\varvec{G}}^k, \varvec{x}^k\) be the dual and primal variables generated via the algorithm (18). Let \(\mu _i^0\) and \(\varvec{G}^0_i\) for all \(i\in {V}\) be bounded and let \(\beta _0\) be defined as in Theorem 5.1. Define L and Q as in (8), \(\varLambda \) and \(\varGamma \) as in (19), and let \(M:= L+Q\). Choose a scalar \(\tau \) such that \(\beta _0 / \alpha \leqslant \tau \). Let \(\zeta \) be defined as in Lemma 5.1 for the concave function \(q(\mu ,\varvec{G})\) and the choice of \(\tau \). Let \(f^*\) be the optimal value of \(f(\varvec{x})\). Let Assumptions 2.1 to 2.3 hold and let \({\varvec{W}}\) satisfy the conditions (17). Let \(\varphi \geqslant \bar{\varphi } + \delta \), with \(\delta \geqslant 0\), and let \(\bar{\varphi }\) be defined as in Theorem 5.1. Then the following holds.

(a) An upper bound on the primal cost of the vector \(\varvec{x}^k\), \(k\geqslant 1\), is given by

$$\begin{aligned} f(\varvec{x}^k) \leqslant f^* + \frac{\varLambda ^2 + \varGamma ^2}{2 k \alpha /n} +e_k; \end{aligned}$$

(b) A lower bound on the primal cost of the vector \(\varvec{x}^k\), \(k\geqslant 1\), is given by

$$\begin{aligned} f(\varvec{x}^k) \geqslant f^* - \frac{9(\varLambda ^2 + \varGamma ^2)}{2 k \alpha /n} - e_k; \end{aligned}$$

where

Theorem 5.3 formulates convergence of the primal cost up to an error bound \(e_k\). The rate of convergence is O(1 / k). We can also distinguish the error terms that come from the constant stepsize \(\alpha \) and the terms that come from the finite number of consensus steps \(\varphi \). In particular, we can write

and see that the term (1) is due to the constant stepsize, while the term (2) is due to the finite number of consensus steps. Furthermore, if \(\varphi \rightarrow \infty \), then \(c_0 = 0\), and we can set \(\beta _0 = \tau = \zeta = 0\), yielding

This is similar to the error level we obtain for the dual decomposition method in (12), and Theorem 3.1. Theorem 5.3 defines the main trade-offs in designing the algorithm’s parameters \(\alpha \) and \(\varphi \). The larger the stepsize \(\alpha \) is, the faster the convergence is, even though the steady-state error becomes larger. If we increase \(\varphi \), then the communication effort increases and the error \(e_k\) decreases.
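Theorem 5.3 concerns the recovered primal vector \(\varvec{x}^k\), which this paper obtains through an ergodic mean of the local minimizers. A minimal sketch of how a node could maintain such a mean without storing past iterates is given below. It assumes \(\varvec{x}^k = \frac{1}{k}\sum _{t=1}^k \tilde{\varvec{x}}^t\); the exact indexing used in (18) is not reproduced here, and the random iterates are only stand-ins.

```python
import numpy as np

def ergodic_mean_update(x_avg, x_new, k):
    """Mean of the first k iterates, given the mean of the first k - 1."""
    return x_avg + (x_new - x_avg) / k

rng = np.random.default_rng(0)
iterates = rng.normal(size=(50, 3))   # stand-ins for the local minimizers

x_avg = np.zeros(3)
for k, x_tilde in enumerate(iterates, start=1):
    x_avg = ergodic_mean_update(x_avg, x_tilde, k)
```

The recursive form needs only the current average and the iteration counter, so the memory cost per node does not grow with k.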

6 Proof of Theorems 5.1 and 5.2

6.1 Preliminaries

We start our analysis by rewriting Step 4 of (18) in a more compact way. Let \(\varvec{z}_i \in {\mathbb {R}}^{1+d^2}\) be the vector defined as \(\varvec{z}_i := (\mu _i, {\mathrm {vec}}(\varvec{G}_i)^{\mathsf {T}})^{\mathsf {T}}\), and let \(\varvec{z}_{{\mathrm {sv}}}\) be the stacked vector of all the \(\varvec{z}_i\), \(i\in {V}\). Similarly, let \(\varvec{h}_i(\varvec{x})\) be the vector \(\varvec{h}_i(\varvec{x}):= (g_i(x_i), {\mathrm {vec}}(-\varvec{A}_0/n - \varvec{A}_i x_i)^{\mathsf {T}})^{\mathsf {T}}\), and let \(\varvec{h}_{{\mathrm {sv}}}(\varvec{x})\) be the stacked vector of all the \(\varvec{h}_i(\varvec{x})\), \(i\in {V}\). Let Z be the convex set

and let \(Z_{{\mathrm {sv}}}= \prod _{i=1}^n Z\). The iterations in Step 4 of (18) can be rewritten as

The iteration (22) represents a consensus-based subgradient method to maximize the dual function \(q(\mu , \varvec{G})\), i.e., the maximization problem

In particular, (22) assigns to each node a copy of \(\varvec{z}\), \(\varvec{z}_i\), and enforces consensus among them. Furthermore, by (8), by triangle inequality, and by (19),

Lemma 6.1

([18, Lemma 1]) Let \(\varvec{x}_i\in {\mathbb {R}}^m, i\in {V}\) be m-dimensional vectors. Let \(\bar{\varvec{x}}\) be the average value of \(\varvec{x}_i, i\in {V}\), i.e., \(\bar{\varvec{x}} = \frac{1}{n}\sum _{i\in {V}}\varvec{x}_i\). The following basic relations hold,

(a) if \(\Vert \varvec{x}_i - \varvec{x}_j\Vert _2 \leqslant \beta ,\, \forall i,j\in {V}\), then \(\Vert \varvec{x}_i - \bar{\varvec{x}}\Vert _2 \leqslant \frac{n-1}{n} \beta \);

(b) if \(\Vert \varvec{x}_i - \bar{\varvec{x}}\Vert _2 \leqslant \beta , \, \forall i\in {V}\), then \(\Vert \varvec{x}_i - \varvec{x}_j\Vert _2 \leqslant 2\beta \).
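The two relations of Lemma 6.1 can be checked numerically on random data. The sketch below is only a sanity check with hypothetical dimensions and helper names, not part of the proof: it takes \(\beta \) as the largest pairwise distance for part (a) and as the largest distance to the mean for part (b).

```python
import numpy as np

def max_pairwise_distance(X):
    """Largest Euclidean distance between any two rows of X."""
    diffs = X[:, None, :] - X[None, :, :]
    return np.linalg.norm(diffs, axis=2).max()

def max_distance_to_mean(X):
    """Largest Euclidean distance between a row of X and the row average."""
    return np.linalg.norm(X - X.mean(axis=0), axis=1).max()

rng = np.random.default_rng(1)
n, m = 8, 4                       # hypothetical sizes
X = rng.normal(size=(n, m))       # row i plays the role of x_i

beta_pair = max_pairwise_distance(X)
beta_mean = max_distance_to_mean(X)
holds_a = beta_mean <= (n - 1) / n * beta_pair + 1e-12   # part (a)
holds_b = beta_pair <= 2 * beta_mean + 1e-12             # part (b)
```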

Lemma 6.2

([18, Lemma 2]) Let \(\varvec{x}^k \in {\mathbb {R}}^n\) be an n-dimensional vector, with components \(x_i\in {\mathbb {R}}, i=1,\ldots ,n\). Let \(\varvec{x}^{k+1} = {\varvec{W}}^\varphi \varvec{x}^k\), with \({\varvec{W}}\in {\mathbb {R}}^{n\times n}\) fulfilling conditions (17). Let \(\Vert x^k_i - x^k_j\Vert _2 \leqslant \sigma \), for a bounded \(\sigma \), and for all \(i,j = 1,\ldots ,n\). Then \(\Vert x^{k+1}_i - x^{k+1}_j\Vert _2 \leqslant 2 \nu ^\varphi n \sigma \) for all \(i,j = 1,\ldots ,n\).
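A small numerical illustration of Lemma 6.2 follows. Conditions (17) are not reproduced in this part of the text, so the sketch assumes a symmetric, doubly stochastic \({\varvec{W}}\) and takes \(\nu \) to be the spectral radius of \({\varvec{W}} - \mathbf {1}\mathbf {1}^{\mathsf {T}}/n\); the ring network and all numerical values are hypothetical.

```python
import numpy as np

def ring_weights(n):
    """Symmetric, doubly stochastic weights on a ring with n >= 3 nodes."""
    W = np.zeros((n, n))
    for i in range(n):
        for j in (i - 1, i, i + 1):
            W[i, j % n] = 1.0 / 3.0
    return W

def spread(x):
    """Largest pairwise difference among scalar entries."""
    return x.max() - x.min()

n, phi = 6, 12
W = ring_weights(n)
# Assumed reading of nu: spectral radius of W minus the averaging matrix.
nu = np.abs(np.linalg.eigvalsh(W - np.ones((n, n)) / n)).max()

rng = np.random.default_rng(2)
x = rng.uniform(-1.0, 1.0, size=n)
sigma = spread(x)
x_next = np.linalg.matrix_power(W, phi) @ x   # phi consensus rounds
bound = 2 * nu ** phi * n * sigma             # right-hand side of Lemma 6.2
```

In this example the observed spread after consensus is well below the bound, in line with the later remark that the bound on \(\varphi \) derived from this lemma is conservative.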

Lemma 6.3

Let \(\{\varvec{z}_{{\mathrm {sv}}}^k\}\) be generated by (22) under Assumptions 2.1 to 2.3. Let \(\varvec{v}^k_i\in {\mathbb {R}}^{1+d^2}\), for all \(i\in {V}\), be defined as

and let \(\bar{\varvec{v}}^k\) be the average value of \(\varvec{v}^k_i, i\in {V}\), i.e., \(\bar{\varvec{v}}^k = \frac{1}{n}\sum _{i\in {V}}\varvec{v}_i^k\). There exists a \(\bar{\varphi }\geqslant 1\), such that if \(\varphi \geqslant \bar{\varphi }+\delta \) with \(\delta \geqslant 0\), then

Proof

The proof is an adaptation of [18, Lemma 3]. In particular, we can show that for all \(i,j\in V\)

Therefore, if we choose,

then

and the claim follows from Lemma 6.1 (a). In order to prove (24), we proceed as follows.

where \([\cdot ]_\ell \) extracts the \(\ell \)-th component of a vector. Define

Prior to the consensus, the distance between the iterates can be bounded as

which also implies \(\Vert [\varvec{u}_{i}^k - \varvec{u}_j^k]_\ell \Vert _2\leqslant 2(\beta +\alpha M)\). Given that \(\varvec{z}_i^{k+1} = {\mathsf {P}}[\varvec{v}_i^k], \forall i\), after consensus, we have

where \(\tilde{\varvec{u}}_\ell ^{k+1} = ([\varvec{u}^{k+1}_{1}]_\ell , \ldots ,[\varvec{u}^{k+1}_{n}]_\ell )^{\mathsf {T}}\). As noted above, \(\Vert [\varvec{u}_{i}^k - \varvec{u}_j^k]_\ell \Vert _2\leqslant 2(\beta +\alpha M)\), which means \(\Vert [\tilde{\varvec{u}}_\ell ^k]_i - [\tilde{\varvec{u}}_\ell ^k]_j\Vert _2\leqslant 2(\beta +\alpha M)\). Thus, by using Lemma 6.2, we can bound (25) as

which is the rightmost term in (24) and the claim is proven. \(\square \)
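The three stages discussed in this proof (a local ascent step, \(\varphi \) rounds of consensus, and a local projection) can be sketched as one centralized simulation step. This is an illustration under stated assumptions, not the authors' implementation: the update form \(\varvec{u}_i = \varvec{z}_i + \alpha \varvec{h}_i\), the box used in place of Z, the ring network, and the random data are all hypothetical. In a real network each node would exchange values with its neighbors \(\varphi \) times instead of forming \({\varvec{W}}^\varphi \).

```python
import numpy as np

def ring_weights(n):
    """Symmetric, doubly stochastic weights on a ring (hypothetical network)."""
    W = np.zeros((n, n))
    for i in range(n):
        for j in (i - 1, i, i + 1):
            W[i, j % n] = 1.0 / 3.0
    return W

def project_box(V, upper=10.0):
    """Stand-in projection; the paper's set Z is not reproduced here."""
    return np.clip(V, 0.0, upper)

def consensus_dual_step(Z, H, W, phi, alpha):
    U = Z + alpha * H                        # pre-consensus iterates, row i is u_i
    V = np.linalg.matrix_power(W, phi) @ U   # phi consensus rounds, row i is v_i
    Z_next = project_box(V)                  # z_i^{k+1} = P[v_i^k], applied per row
    return U, V, Z_next

def max_pairwise_distance(X):
    return np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2).max()

rng = np.random.default_rng(4)
n, d, phi, alpha = 5, 2, 3, 0.1
Z = rng.uniform(0.0, 10.0, size=(n, 1 + d * d))
H = rng.normal(size=(n, 1 + d * d))          # stand-in subgradient information
U, V, Z_next = consensus_dual_step(Z, H, ring_weights(n), phi, alpha)
```

Two properties used in the analysis are visible here: averaging with a doubly stochastic matrix preserves the network-wide mean, and the projection cannot increase the distance between any two nodes.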

6.2 Proof of Theorem 5.1

The quantity \(\Vert \varvec{v}^{0}_i - \bar{\varvec{v}}^{0}\Vert _2\) is upper bounded by \(\beta _0\geqslant c_0\) by Lemma 5.2 (inequality (20)), thus, \(\Vert \varvec{v}^{0}_i - \bar{\varvec{v}}^{0}\Vert _2\leqslant \beta _0\). Let us choose \(\varphi \geqslant \bar{\varphi } + \delta \), \(\delta \geqslant 0\), with \(\bar{\varphi }\) determined as in Theorem 5.1. Then, by Lemma 6.3 and (24), it follows that,

Let \(p:= \frac{\nu ^\delta \beta _0 }{\beta _0 + \alpha M}\). Since \(p < 1\), we have

and by Lemma 6.1 (b), we derive \(\Vert \varvec{v}^{k}_i - {\varvec{v}}^{k}_j\Vert _2 \leqslant 2 \beta _k\).
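The geometric decay of the disagreement bound can be sketched numerically. The display defining \(\beta _k\) is not reproduced here, so the recursion \(\beta _{k} = p\,(\beta _{k-1} + \alpha M)\) used below is an assumption, chosen because its fixed point coincides with \(\beta _\infty = p\,\alpha M/(1-p)\) from Theorem 5.2. The parameter values are hypothetical.

```python
def disagreement_bounds(beta0, p, alpha, M, num_iters):
    """Iterate the assumed recursion beta_k = p * (beta_{k-1} + alpha * M)."""
    betas = [beta0]
    for _ in range(num_iters):
        betas.append(p * (betas[-1] + alpha * M))
    return betas

# Hypothetical parameters.
alpha, M, beta0, nu, delta = 0.1, 5.0, 50.0, 0.8, 5
p = nu ** delta * beta0 / (beta0 + alpha * M)
betas = disagreement_bounds(beta0, p, alpha, M, 200)
beta_inf = p * alpha * M / (1.0 - p)
```

Starting from \(\beta _0 > \beta _\infty \), the sequence decreases at rate p toward the floor, which matches the exponential convergence to a bounded error described after Corollary 5.1.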

By using the nonexpansive property of the projection operator, since \(\varvec{z}_i^{k+1} = {\mathsf {P}}[\varvec{v}_i^k]\), for all i, we can write

and by Lemma 6.1 (a) the claim follows.

6.3 Proof of Theorem 5.2

We define an average value for \(\varvec{z}_{{\mathrm {sv}}}^k\) as \(\bar{\varvec{z}}^{k} = \frac{1}{n}\sum _{i\in {V}} \varvec{z}_i^k\). For convergence purposes, we need to keep track of the difference \(\bar{\varvec{z}}^{k+1} - {\mathsf {P}}_{Z}[\bar{\varvec{v}}^k]\), and thus we define the vectors \(\varvec{y}^k\in {\mathbb {R}}^{1+d^2}\) and \(\varvec{d}^k\in {\mathbb {R}}^{1+d^2}\) as

The main idea of the proof is to show that \(\varvec{y}\) is updated via an approximate \(\epsilon \)-subgradient method and, then, by using [41, Proposition 4.1], the theorem follows. The first part is formalized in the following lemma.

Lemma 6.4

Let \(\varvec{y}^k\) be defined as in (28). Under the same conditions of Theorem 5.2, for all \(k\geqslant 1\),

(a) The quantity \(\Vert {\varvec{d}^k}/{\alpha }\Vert _2\) is upper bounded by \(\beta _{k-1}/\alpha \leqslant \tau \) (where \(\beta _k\) is defined in (26));

(b) The following inequalities are true, for all \(i\in V\),

$$\begin{aligned} q(\varvec{y}^k)&\leqslant q(\varvec{z}_i^k) + 3 n M \beta _{k-1}, \end{aligned} \qquad (29)$$

$$\begin{aligned} q_i(\varvec{y})&\leqslant q_i(\varvec{y}^k) + \langle \varvec{h}_i(\tilde{\varvec{x}}^k)+\varvec{\nu }, \varvec{y}- \varvec{y}^k\rangle + \epsilon _{k}/n, \quad \forall \varvec{y}\in Z; \end{aligned} \qquad (30)$$

(c) The quantity \(\varvec{g}(\tilde{\varvec{x}}^k) := \sum _{i\in {V}}\left( \varvec{h}_i(\tilde{\varvec{x}}^k) + \frac{\varvec{d}^k}{\alpha } \right) \) is an \(\epsilon _k\)-subgradient of \(q (\varvec{y}^k)\) with respect to \(\varvec{y}\);

(d) The variable \(\varvec{y}^k\) is updated via an \(\epsilon \)-subgradient method

$$\begin{aligned} \varvec{y}^{k+1} = {\mathsf {P}}_{Z} \Big [\varvec{y}^k + \frac{\alpha }{n} \varvec{g}(\tilde{\varvec{x}}^k) \Big ], \quad \varvec{g}(\tilde{\varvec{x}}^k) \in \partial _{\epsilon _k}q_{\varvec{y}} (\varvec{y}^k), \end{aligned} \qquad (31)$$

where \(\epsilon _k = n (\beta _{k-1}(6 M + 3\tau ) +\zeta )\).
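To make the \(\epsilon \)-subgradient notion in (30) and (31) concrete, the sketch below uses a toy concave function \(q(y) = -y^2\), which is not the dual function of this paper. Perturbing its gradient by a scalar, here called nu_pert, yields a vector that satisfies the concave \(\epsilon \)-subgradient inequality with \(\epsilon = \text{nu\_pert}^2/4\) and fails it for smaller \(\epsilon \). The helper epsilon_k simply evaluates the expression stated in Lemma 6.4; all names and values are hypothetical.

```python
import numpy as np

def q(y):
    """Toy concave function used only for illustration."""
    return -y ** 2

def is_eps_subgradient(g, y0, eps, grid):
    """Check q(y) <= q(y0) + g * (y - y0) + eps on a grid of test points."""
    return bool(np.all(q(grid) <= q(y0) + g * (grid - y0) + eps + 1e-12))

def epsilon_k(n, beta_prev, M, tau, zeta):
    """eps_k = n * (beta_{k-1} * (6 M + 3 tau) + zeta), as in Lemma 6.4."""
    return n * (beta_prev * (6 * M + 3 * tau) + zeta)

y0, nu_pert = 0.7, 0.4
g = -2.0 * y0 + nu_pert              # exact gradient plus a perturbation
grid = np.linspace(-5.0, 5.0, 2001)
eps_needed = nu_pert ** 2 / 4.0      # tight value for this toy function
```

The same mechanism is at work in part (c) of the lemma: a bounded perturbation of the subgradient is absorbed into the accuracy level \(\epsilon _k\).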

Proof

(a) We start by bounding \(\Vert \varvec{d}^k\Vert _2\),

where we have used the inequality (26) to bound the term \(\Vert \varvec{v}_i^{k-1}-\bar{\varvec{v}}^{k-1} \Vert _2\).

(b) Since \(\varvec{y}^k\in Z\) and \(\varvec{z}_i^k \in Z\), by the concavity of \(q_i(\varvec{z})\) and the definition of subgradient of a concave function (1), we can write for all \(i,j \in V\)

In particular, we have used the fact that any subgradient vector of \(q_j(\varvec{z})\) is bounded by M (23a), and inequality (27). If we sum the last relation over \(j\in {V}\), we obtain (29). In addition for any \(\varvec{y}\in Z\), by using Lemma 5.1

We use the fact that \(\Vert \varvec{\nu }\Vert _2\leqslant \tau \) by construction in Lemma 5.1, \(\Vert \varvec{h}_i(\tilde{\varvec{x}}^k)\Vert _2 \leqslant M\) by (23a), \(\Vert \varvec{z}_i^k - \bar{\varvec{z}}^k\Vert _2 \leqslant 2\beta _{k-1}\) by (27), and \(\Vert \varvec{d}^k\Vert _2\leqslant \beta _{k-1}\) by the preceding proof. By using these inequalities, we can bound

and we obtain

which is (30).

(c) By using the definition of subdifferential (1), the inequality (30) implies \((\varvec{h}_i(\tilde{\varvec{x}})+\varvec{\nu }) \in \partial _{\epsilon _k/n} q_{i,\varvec{y}}(\varvec{y})\) with \(\epsilon _k/n = (\beta _{k-1}(6M +3\tau ) + \zeta )\). Summation over i yields,

for any \(\varvec{\nu }\), such that \(\Vert \varvec{\nu }\Vert \leqslant \tau \). Since \(\Vert \varvec{d}^k/\alpha \Vert _2 \leqslant \tau \) by construction, then we can choose \(\varvec{\nu } = \varvec{d}^k/\alpha \), from which the claim follows.

(d) It is sufficient to write explicitly the update rule for \(\varvec{y}^k\). Starting from the definition of \(\varvec{y}^{k+1}\) in (28) and the definition of \(\varvec{v}_i^k\) in Lemma 6.3, we obtain

Given part (c) of this Lemma, the claim follows. \(\square \)

Proof

(of Theorem 5.2) By Lemma 6.4, the sequence \(\{\varvec{y}^k\}\) is generated via an \(\epsilon _k\)-subgradient algorithm to maximize \(q(\varvec{y})\). In particular, for \(k\geqslant 1\),

Therefore, we can use any standard result on the convergence of approximate subgradient algorithms. For example, by using [41, Proposition 4.1] (with \(m=1\)), the following holds for the sequence \(\{\varvec{y}^k\}\),

If \(q^* = \infty \), then

If \(q^* < \infty \), then

where \(\beta _\infty = \lim _{k\rightarrow \infty } \beta _{k-1}\). Then, from the inequality (29), the claim is proven. \(\square \)

7 Primal Recovery: Proof of Theorem 5.3

7.1 Some Basic Facts

Lemma 7.1

Let \(\varvec{y}^k\) be defined as (28). Under the same assumptions and notation of Theorem 5.2,

(a) For any \(\varvec{y}\in Z\),

$$\begin{aligned} \sum _{t=1}^k \langle \varvec{g}(\tilde{\varvec{x}}^t),\varvec{y}-\varvec{y}^{t}\rangle \leqslant \frac{\Vert \varvec{y}^{1} - \varvec{y}\Vert ^2_2}{2 \alpha /n} + k\,\frac{\alpha n(M+\tau )^2}{2}; \end{aligned}$$

(b) For any \(\varvec{y}\in Z\),

$$\begin{aligned} \sum _{t=1}^k \langle \varvec{g}(\tilde{\varvec{x}}^t),\varvec{y}-\varvec{y}^{*}\rangle \leqslant \frac{\Vert \varvec{y}^{1} - \varvec{y}\Vert ^2_2}{2 \alpha /n} + k\,\frac{\alpha n(M+\tau )^2}{2} + \sum _{t=1}^k \epsilon _t, \end{aligned}$$

where \({\epsilon _{t}} = n (\beta _{t-1} (6M + 3\tau )+\zeta )\).

Proof

We start from the update rule (31). For any \(\varvec{y}\in Z\),

where we use the fact that \(\Vert \varvec{g}(\tilde{\varvec{x}}^k)\Vert _2 = \Vert \sum _{i\in {V}}(\varvec{h}_i(\tilde{\varvec{x}}^k)+\varvec{d}^k/\alpha ) \Vert _2 \leqslant n (M+\tau )\). Therefore, for any \(\varvec{y}\in Z\)

and by summing over k, part (a) follows. Since \(\varvec{g}(\tilde{\varvec{x}}^k)\) is an \(\epsilon _k\)-subgradient of the dual function q at \(\varvec{y}^k\), using the subgradient inequality (1),

where the last inequality comes from the optimality condition \(q(\varvec{y}^k) \leqslant q(\varvec{y}^*)\), which is valid for any \(\varvec{y}^k\in {Z}\). In particular, \(\epsilon _k\) is defined in Lemma 6.4 (c). We then have

From the preceding relation and (32), we obtain

and summing over k part (b) follows as well. In particular, we remark that \(\varvec{y}^1 = {\mathsf {P}}_Z[\bar{\varvec{v}}^0]\), which is bounded, since Z is a compact set. \(\square \)
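Part (a) of Lemma 7.1 relies only on the nonexpansiveness of the projection and on a bound on \(\Vert \varvec{g}\Vert _2\), so it can be checked numerically with arbitrary bounded directions. In the sketch below, step stands for \(\alpha /n\) and g_max for \(n(M+\tau )\), so that step * g_max**2 / 2 equals \(\alpha n (M+\tau )^2/2\). The Euclidean ball used in place of Z, the random directions, and all values are hypothetical.

```python
import numpy as np

def project_ball(y, radius):
    """Projection onto a Euclidean ball, a stand-in for the set Z."""
    norm = np.linalg.norm(y)
    return y if norm <= radius else y * (radius / norm)

rng = np.random.default_rng(3)
dim, k, step, g_max, radius = 4, 200, 0.05, 2.0, 3.0

y = project_ball(rng.normal(size=dim), radius)       # plays the role of y^1
y_ref = project_ball(rng.normal(size=dim), radius)   # arbitrary point of the set
y_first = y.copy()

lhs = 0.0
for _ in range(k):
    g = rng.normal(size=dim)
    g *= min(1.0, g_max / np.linalg.norm(g))         # enforce ||g|| <= g_max
    lhs += g @ (y_ref - y)                           # accumulate <g^t, y - y^t>
    y = project_ball(y + step * g, radius)           # update of the form (31)

rhs = np.linalg.norm(y_first - y_ref) ** 2 / (2 * step) + k * step * g_max ** 2 / 2
```

The inequality lhs <= rhs follows by expanding \(\Vert \varvec{y}^{t+1} - \varvec{y}\Vert _2^2\) after the projection and telescoping over t, which is the argument used in the proof.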

7.2 Proof of Theorem 5.3 (a)

Proof

By convexity of the primal cost \(f(\varvec{x})\) and the definition of \(\tilde{x}^k_i\) as a minimizer of the local Lagrangian functions over \(x_i\in {X}_i\), we have,

By Lemma 6.4 inequality (30) with \(\varvec{y}= \varvec{z}_i^t \in Z\),

with \(\epsilon _t/n = \beta _{t-1}(6 M + 3\tau ) + \zeta \). Summing over \(i\in {V}\),

hence,

We can use Lemma 7.1 (a) with \(\varvec{y}= \mathbf {0} \in Z\) to upper bound \(-\langle \varvec{g}(\tilde{\varvec{x}}^t), \varvec{y}^t\rangle \), while we bound \(\Vert \langle \varvec{\nu },\varvec{z}_i^t\rangle \Vert _2\) as \(\Vert \langle \varvec{\nu }, \varvec{z}_i^t\rangle \Vert _2 \leqslant \tau (\varLambda + \varGamma )\). The latter bound comes from the fact that by construction \(\Vert \varvec{\nu }\Vert _2\leqslant \tau \), and \(\Vert \varvec{z}_i^t\Vert _2\leqslant \varLambda + \varGamma \) by (23a). With this in place, we can write (34) as

If we now compute

and remember that by optimality \(q(\varvec{y}^t) \leqslant q^*\), \(q^* = f^*\) by strong duality (Assumption 2.3), and \(\Vert \varvec{y}^1\Vert _2^2 \leqslant \varLambda ^2+\varGamma ^2\), then the claim follows. \(\square \)

7.3 Proof of Theorem 5.3 (b)

Proof

Given any dual optimal solution \(\varvec{y}^*\), we have

We also know that,

where we used the fact that \(\varvec{h}_i(\tilde{\varvec{x}}^t)\) is a convex function of \(\tilde{\varvec{x}}^t\) and therefore,

and the Cauchy–Schwarz inequality to bound

Furthermore, by the saddle point property of the Lagrangian function, i.e., for any \(\varvec{x}\in X, \varvec{y}\in Z\)

and the fact that under strong duality (Assumption 2.3) \(L(\varvec{x}^*, \varvec{y}^*) = q^* = f^*\), we can write

We can now upper bound \(\Big \langle \varvec{y}^*,\frac{1}{k}\sum _{t=1}^k \varvec{g}(\tilde{\varvec{x}}^t) \Big \rangle \) in (36) as in Lemma 7.1 (b), with \(\varvec{y}= 2 \varvec{y}^* \in Z \) (by the definition of r). By substituting this bound in (36) and by combining it with (37) and (38), we get

From the upper bound (35), and \(\Vert \varvec{y}^1 - 2\varvec{y}^*\Vert ^2_2 \leqslant \Vert \varvec{y}^1\Vert ^2_2 + 4\Vert \varvec{y}^1\Vert _2\Vert \varvec{y}^*\Vert _2 + 4\Vert \varvec{y}^*\Vert ^2_2\) (by the Cauchy–Schwarz inequality), which can be upper bounded by \(9(\varLambda ^2+\varGamma ^2)\), the claim follows. \(\square \)

8 Numerical Results

In this section, we present some numerical results to assess the proposed algorithm for different \(\varphi \) values in comparison with the standard dual decomposition. We choose the following simple yet representative sample problem,

where each \(\sigma _i\in [0,1]\) is drawn from a uniform random distribution. This type of problem has been considered, e.g., in network utility maximization contexts [23]. We solve the problem in Matlab with Yalmip and SDPT3 [42, 43], where we also implement the proposed algorithm (the code is available at the link given in the Notes).

For this problem a Slater vector is \(x_i = 0\) for all i; furthermore \(\gamma = 10\), while q(0) is solvable by inspection \((x_i = 1)\) and gives (for our realization of \(\sigma _i\)) \(r = 8.62\). The communication network is randomly selected, and the average number of neighbors is 3.12.

Figure 1 depicts convergence, and it is in line with our theoretical findings: The error decreases as O(1 / k) till it reaches a bounded error floor. This bounded error floor depends on both \(\varphi \) and \(\alpha \) as captured in Theorem 5.3. We have also plotted the performance of the standard dual decomposition, which (in the absence of a master node) requires reaching complete consensus at each iteration (in theory \(\varphi \rightarrow \infty \), but we have set \(\varphi = 26\), which yields a full \({\varvec{W}}^\varphi \)).
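The number of consensus steps after which \({\varvec{W}}^\varphi \) becomes full can be found from the sparsity pattern of \({\varvec{W}}\) alone. The sketch below assumes that \({\varvec{W}}\) has nonnegative entries and a positive diagonal (our reading, since conditions (17) are not reproduced here), in which case the answer equals the diameter of the communication graph. The 6-node ring is a hypothetical example, not the network used in the experiments.

```python
import numpy as np

def ring_weights(n):
    """Symmetric, doubly stochastic weights on a ring (hypothetical network)."""
    W = np.zeros((n, n))
    for i in range(n):
        for j in (i - 1, i, i + 1):
            W[i, j % n] = 1.0 / 3.0
    return W

def min_steps_for_full_power(W, max_steps=1000):
    """Smallest phi such that W^phi has no zero entry (nonnegative W assumed)."""
    pattern = (np.abs(W) > 0).astype(int)
    reach = np.eye(len(W), dtype=int)
    for phi in range(1, max_steps + 1):
        # Boolean matrix product: which nodes are reachable within phi hops.
        reach = ((reach @ pattern) > 0).astype(int)
        if reach.all():
            return phi
    raise ValueError("W^phi never became full; is the graph connected?")

W = ring_weights(6)
phi_full = min_steps_for_full_power(W)
```

For the ring example the function returns the graph diameter; on a sparse random network the same computation gives the kind of value that is used above to emulate complete consensus.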

Figure 2 shows the relative error with respect to the total number of messages the nodes are exchanging. We can see that, in the absence of a master node, the proposed consensus-based algorithm requires significantly fewer messages than the standard dual decomposition for the same accuracy level (up to a 1 % error). This is very important in real-life applications.

9 Future Research Questions

Future research encompasses the following points.

First of all, we have used the ergodic mean to recover the primal solution. The reason for it is mainly technical: It helps to derive convergence rate results, via a telescopic cancelation argument. Other convex combinations have been advocated, e.g., in [12], but the results they can offer are typically asymptotic and require vanishing stepsizes. An open question is whether other combinations for primal recovery are possible using constant stepsizes.

Then, in the derivation, we have limited ourselves to objective convergence. It would be relevant to investigate convergence of the ergodic mean to the optimizer set, either in the general convex case or in the strongly convex scenario.

Finally, the bound on \(\varphi \), i.e., \(\bar{\varphi }\), has been derived in such a way that we could use \(\epsilon \)-subgradient arguments in the rest of the convergence proofs. However, it is quite conservative (in fact, in practice, \(\varphi \) can be as small as 1, but this is often not captured by the bound in Theorem 5.1). This is due to Lemma 6.2 and the use of the spectral radius as an upper bound. A thorough investigation is left for future research.

10 Conclusions

A consensus-based dual decomposition scheme has been proposed to enable a network of collaborative computing nodes to generate approximate dual and primal solutions of a distributed convex optimization problem. We have proven convergence of the scheme both in the dual and the primal objective senses up to a bounded error floor. The proposed scheme is of theoretical and applied importance since it eliminates the need for a centralized entity (i.e., a master node) to collect the local subgradient information, by distributing this task among the nodes. This need has been a major hurdle in the use of dual decomposition for solving certain classes of distributed optimization problems.

Notes

The code is available at: http://ens.ewi.tudelft.nl/~asimonetto/NumericalExample.zip.

References

Bertsekas, D.P.: Nonlinear Programming, 2nd edn. Athena Scientific (1999)

Johansson, B.: On Distributed Optimization in Networked Systems. Ph.D. thesis, KTH, Stockholm, Sweden (2008)

Boyd, S., Xiao, L., Mutapcic, A., Mattingley, J.: Notes on Decomposition Methods. Tech. rep., Stanford University (2008)

Nedić, A., Ozdaglar, A.: Approximate primal solutions and rate analysis for dual subgradient methods. SIAM J. Optim. 19(4), 1757–1780 (2009)

Polyak, B.T.: Introduction to Optimization. Optimization Software Inc (1987)

Kiwiel, K.: Convergence of approximate and incremental subgradient methods for convex optimization. SIAM J. Optim. 14(3), 807–840 (2004)

Nemirovskii, A.S., Yudin, D.B.: Cesaro convergence of gradient method approximation of saddle points for convex-concave functions. Doklady Akademii Nauk SSSR 239, 1056–1059 (1978)

Shor, N.Z.: Minimization Methods for Nondifferentiable Functions. Springer, Berlin (1985)

Sherali, H.D., Choi, G.: Recovery of primal solutions when using subgradient optimization methods to solve Lagrangian duals of linear programs. Oper. Res. Lett. 19, 105–113 (1996)

Larsson, T., Patriksson, M., Strömberg, A.B.: Ergodic primal convergence in dual subgradient schemes for convex programming. Math. Program. 86, 283–312 (1999)

Ma, J.: Recovery of Primal Solution in Dual Subgradient Schemes. Master’s thesis, MIT (2007)

Gustavsson, E., Patriksson, M., Strömberg, A.B.: Primal Convergence from Dual Subgradient Methods for Convex Optimization. Math. Program. 150(2), 365–390 (2015)

Necoara, I., Nedelcu, V.: Rate analysis of inexact dual first-order methods: application to dual decomposition. IEEE Trans. Autom. Control 59(5), 1232–1243 (2014)

Nedić, A., Ozdaglar, A.: Subgradient methods for saddle-point problems. J. Optim. Theory Appl. 142(1), 205–228 (2009)

Jakubiec, F.Y., Ribeiro, A.: D-MAP: distributed maximum a posteriori probability estimation of dynamic systems. IEEE Trans. Signal Process. 61(2), 450–466 (2013)

Simonetto, A., Leus, G.: Distributed asynchronous time-varying constrained optimization. In: Proceedings of the Asilomar Conference on Signals, Systems, and Computers. Pacific Grove, USA (2014)

Simonetto, A., Kester, L., Leus, G.: Distributed Time-Varying Stochastic Optimization and Utility-based Communication (2014). arxiv.org/abs/1408.5294

Johansson, B., Keviczky, T., Johansson, M., Johansson, K.H.: Subgradient Methods and Consensus Algorithms for Solving Convex Optimization Problems. In: Proceedings of the 47th IEEE Conference on Decision and Control, pp. 4185–4190. Cancun, Mexico (2008)

Srivastava, K., Nedić, A.: Distributed asynchronous constrained stochastic optimization. IEEE Trans. Sel. Top. Signal Process. 5(4), 772–790 (2011)

Nedić, A., Ozdaglar, A., Parrilo, P.A.: Constrained consensus and optimization in multi-agent networks. IEEE Trans. Autom. Control 55(4), 922–938 (2010)

Duchi, J.C., Agarwal, A., Wainwright, M.: Dual averaging for distributed optimization: convergence analysis and network scaling. IEEE Trans. Autom. Control 57(3), 592–606 (2012)

Bürger, M., Notarstefano, G., Allgöwer, F.: A polyhedral approximation framework for convex and robust distributed optimization. IEEE Trans. Autom. Control 59(2), 384–395 (2014)

Palomar, D., Chiang, M.: A tutorial on decomposition methods for network utility maximization. IEEE J. Sel. Areas Commun. 24(8), 1439–1451 (2006)

Wei, E., Ozdaglar, A., Jadbabaie, A.: A distributed Newton method for network utility maximization. In: Proceedings of the 49th IEEE Conference on Decision and Control, pp. 1816–1821. Orlando, FL, USA (2010). doi:10.1109/CDC.2010.5718026

Doan, M.D.: Distributed Model Predictive Controller Design Based on Distributed Optimization. Ph.D. thesis, Delft University of Technology (2012)

Jamali-Rad, H., Simonetto, A., Leus, G.: Sparsity-aware sensor selection: centralized and distributed algorithms. IEEE Signal Process. Lett. 21(2), 217–220 (2014)

Joshi, S., Boyd, S.: Sensor selection via convex optimization. IEEE Trans. Signal Process. 57(2), 451–462 (2009)

Necoara, I., Suykens, J.: Application of a smoothing technique to decomposition in convex optimization. IEEE Trans. Autom. Control 53(11), 2674–2679 (2008)

Koshal, J., Nedić, A., Shanbhag, U.Y.: Multiuser optimization: distributed algorithms and error analysis. SIAM J. Optim. 21(3), 1046–1081 (2011)

Lu, J., Johansson, M.: A Multi-agent projected dual gradient method with primal convergence guarantees. In: Proceedings of the 51st Allerton Conference, pp. 275–281. Monticello, IL, USA (2013)

Lu, J., Johansson, M.: Convergence analysis of approximate primal solutions in dual first-order methods. Math. Program. (submitted) (2013). arXiv:1502.06368

Necoara, I., Nedelcu, V.: Distributed Dual Gradient Methods and Error Bound Conditions (2014). arXiv:1401.4398

Simonetto, A., Leus, G.: Distributed maximum likelihood sensor network localization. IEEE Trans. Signal Process. 62(6), 1424–1437 (2014)

Beck, A., Nedić, A., Ozdaglar, A., Teboulle, M.: An \(O(1/k)\) gradient method for network resource allocation problems. IEEE Trans. Control Netw. Syst. 1(1), 64–73 (2014)

Simonetto, A., Leus, G.: Double smoothing for time-varying distributed multi-user optimization. In: Proceedings of the IEEE Global Conference on Signal and Information Processing, Atlanta, USA (2014)

Wang, S.D., Kuo, T.S., Hsu, C.F.: The bounds on the solution of the algebraic Riccati and Lyapunov equations. IEEE Trans. Autom. Control 31(7), 654–656 (1986)

Golub, G.H., Van Loan, C.F.: Matrix Computations, 3rd edn. Johns Hopkins University Press, Baltimore (1996)

Xiao, L., Boyd, S.: Fast linear iterations for distributed averaging. Syst. Control Lett. 53(1), 65–78 (2003)

Xiao, L., Boyd, S.: Optimal scaling of a gradient method for distributed resource allocation. J. Optim. Theory Appl. 129(3), 469–488 (2006)

Kempe, D., McSherry, F.: A decentralized algorithm for spectral analysis. J. Comput. Syst. Sci. 74(1), 70–83 (2008)

Nedić, A.: Subgradient Methods for Convex Minimization. Ph.D. thesis, MIT (2002)

Löfberg, J.: YALMIP: A toolbox for modeling and optimization in MATLAB. In: Proceedings of the CACSD Conference, Taipei, Taiwan (2004)

Toh, K.C., Todd, M.J., Tutuncu, R.H.: SDPT3—a Matlab software package for semidefinite programming. Optim. Methods Softw. 11, 545–581 (1999)

Acknowledgments

This work was supported in part by STW under the D2S2 project from the ASSYS program (10561) and in part by NWO-STW under the VICI program (10382).

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Simonetto, A., Jamali-Rad, H. Primal Recovery from Consensus-Based Dual Decomposition for Distributed Convex Optimization. J Optim Theory Appl 168, 172–197 (2016). https://doi.org/10.1007/s10957-015-0758-0

DOI: https://doi.org/10.1007/s10957-015-0758-0

Keywords

- Distributed convex optimization

- Dual decomposition

- Primal recovery

- Consensus algorithm

- Subgradient optimization

- Epsilon-subgradient

- Ergodic convergence