Abstract

We study random compositions of transformations having certain uniform fiberwise properties and prove bounds which in combination with other results yield a quenched central limit theorem equipped with a convergence rate, also in the multivariate case, assuming fiberwise centering. For the most part we work with non-stationary randomness and non-invariant, non-product measures. Independently, we believe our work sheds light on the mechanisms that make quenched central limit theorems work, by dissecting the problem into three separate parts.


1 The Problem

In the following we will study random compositions \(T_{\omega _n}\circ \cdots \circ T_{\omega _1}\) of maps where \(\omega = (\omega _n)_{n\ge 1}\) is a sequence drawn randomly from a probability space \((\Omega ,{{\mathcal {F}}},{{\mathbb {P}}}) = (\Omega _0^{{{\mathbb {Z}}}_+},{{\mathcal {E}}}^{{{\mathbb {Z}}}_+},{{\mathbb {P}}}).\) Here \((\Omega _0,{{\mathcal {E}}})\) is a measurable space and \({{\mathbb {Z}}}_+ = \{1,2,\ldots \}.\) For each \(\omega _0\in \Omega _0,\,T_{\omega _0}:X\rightarrow X\) is a measurable self-map on the same measurable space \((X,{{\mathcal {B}}}).\) Consider the shift transformation

\[\tau :\Omega \rightarrow \Omega ,\qquad \tau (\omega _1,\omega _2,\omega _3,\ldots ) = (\omega _2,\omega _3,\ldots ).\]

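We assume that \(\tau \) is \({{\mathcal {F}}}\)-measurable, but does not necessarily preserve the probability measure \({{\mathbb {P}}}.\) Next, define the map

\[\varphi :{{\mathbb {N}}}\times \Omega \times X\rightarrow X,\qquad \varphi (n,\omega ,x) = T_{\omega _n}\circ \cdots \circ T_{\omega _1}(x),\]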

with the convention \(\varphi (0,\omega ,x) = x.\) We assume that the map \(\varphi (n,\,\cdot \,,\,\cdot \,)\) is measurable from \({{\mathcal {F}}}\otimes {{\mathcal {B}}}\) to \({{\mathcal {B}}}\) for every \(n\in {{\mathbb {N}}}= \{0,1,\ldots \}.\) The maps \(\varphi (n,\omega ) = \varphi (n,\omega ,\,\cdot \,) : X\rightarrow X\) form a cocycle over the shift \(\tau ,\) which means that the identities \(\varphi (0,\omega ) = {\mathrm {id}}_X\) and \(\varphi (n+m,\omega ) = \varphi (n,\tau ^m\omega )\circ \varphi (m,\omega )\) hold.
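As a sanity check of the cocycle identity, the following Python sketch composes a hypothetical family of circle maps \(T_s(x) = sx \bmod 1,\ s\in \{2,3\},\) along a random word \(\omega ,\) with \(\varphi (n,\omega ,x) = T_{\omega _n}\circ \cdots \circ T_{\omega _1}(x),\) and compares \(\varphi (n+m,\omega )\) with \(\varphi (n,\tau ^m\omega )\circ \varphi (m,\omega ).\) The maps, the word length and the names `T`, `phi` and `shift` are illustrative assumptions; `omega[0]` plays the role of \(\omega _1.\)

```python
import random

def T(s, x):
    """Hypothetical fiber map T_s(x) = s*x mod 1 on X = [0, 1)."""
    return (s * x) % 1.0

def phi(n, omega, x):
    """phi(n, omega, x) = T_{omega_n} o ... o T_{omega_1}(x); phi(0, omega, x) = x."""
    for s in omega[:n]:
        x = T(s, x)
    return x

def shift(omega, m):
    """tau^m omega: discard the first m symbols of the word."""
    return omega[m:]

random.seed(0)
omega = [random.choice([2, 3]) for _ in range(20)]   # a finite piece of omega
x0 = 0.123456

n, m = 5, 7
lhs = phi(n + m, omega, x0)
rhs = phi(n, shift(omega, m), phi(m, omega, x0))
# Both sides apply exactly the same maps in the same order, so they agree exactly.
print(lhs == rhs)  # True
```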

Remark 1.1

There is no fundamental reason for working with one-sided time other than that the randomness in our paper is mostly non-stationary—a context in which the concept of an infinite past is perhaps unnatural. For stationary randomness there is no obstacle for two-sided time. The other reason is plain philosophy: our concern will be the future, and whether the observed system has been running before time 0 we choose to ignore—without damage as long as our assumptions (specified later) hold from time 0 onward.

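Consider an observable \(f:X\rightarrow {{\mathbb {R}}}.\) Introducing notation, we write

\[f_i = f\circ \varphi (i,\omega ),\qquad i\ge 0,\]

as well as

\[S_n = \sum _{i=0}^{n-1} f_i\qquad \text {and}\qquad W_n = \frac{S_n}{\sqrt{n}}.\]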
Given an initial probability measure \(\mu ,\) we write \({\bar{f}}_i\) and \({\bar{W}}_n\) for the corresponding fiberwise-centered random variables:

\[{\bar{f}}_i = f_i - \mu (f_i),\qquad {\bar{W}}_n = W_n - \mu (W_n).\]
Note that all of these depend on \(\omega .\) Next, we define

\[\sigma _n^2 = \sigma _n^2(\omega ) = {\mathrm {Var}}_\mu (W_n) = \mu ({\bar{W}}_n^2).\]
Note that \(\sigma _n^2\) depends on \(\omega .\)

It is said that a quenched CLT equipped with a rate of convergence holds if there exists \(\sigma >0\) such that \(d({\bar{W}}_n, \sigma Z)\) tends to zero with some (in our case, uniform) rate for almost every \(\omega .\) Here \(Z\sim {{\mathcal {N}}}(0,1)\) and the limit variance \(\sigma ^2\) is independent of \(\omega .\) Moreover, d is a distance of probability distributions which we assume to satisfy

\[d({\bar{W}}_n,\sigma Z)\le d({\bar{W}}_n,\sigma _n Z) + d(\sigma _n Z,\sigma Z)\]

and

\[d(\sigma _n Z,\sigma Z)\le C|\sigma _n - \sigma |\]

at least when \(\sigma >0\) and \(\sigma _n\) is close to \(\sigma ;\) and that \(d({\bar{W}}_n,\sigma Z)\rightarrow 0\) implies weak convergence of \({\bar{W}}_n\) to \({{\mathcal {N}}}(0,\sigma ^2).\) One can find results in the recent literature that allow one to bound \(d(\bar{W}_n,\sigma _n Z);\) see Nicol–Török–Vaienti [19] and Hella [13]. In this paper we supplement those by providing conditions which allow one to identify a non-random \(\sigma \) and to obtain a bound on \(|\sigma _n(\omega ) - \sigma |\) which tends to zero at a certain rate for almost every \(\omega ,\) which is a key feature of quenched CLTs.

Our strategy is to find conditions such that \(\sigma _n^2(\omega )\) converges almost surely to

\[\sigma ^2 = \lim _{n\rightarrow \infty }{\mathbb {E}}\sigma _n^2.\]
This is motivated by two observations: (i) if \(\lim _{n\rightarrow \infty }\sigma _n^2 = \sigma ^2\) almost surely, dominated convergence should yield the equation above, and (ii) \({{\mathbb {E}}}\sigma _n^2\) is the variance of \({\bar{W}}_n\) with respect to the product measure \({{\mathbb {P}}}\otimes \mu ,\) since \(\mu ({\bar{W}}_n) = 0\):

\[{\mathbb {E}}\sigma _n^2 = {\mathbb {E}}\mu ({\bar{W}}_n^2) = {\mathrm {Var}}_{{\mathbb {P}}\otimes \mu }{\bar{W}}_n.\]
Remark 1.2

One has to be careful and note that \({\bar{W}}_n\) has been centered fiberwise, with respect to \(\mu \) instead of the product measure. Therefore, \({{\,\mathrm{\mathrm {Var}}\,}}_{{{\mathbb {P}}}\otimes \mu } {\bar{W}}_n\) and \({{\,\mathrm{\mathrm {Var}}\,}}_{{{\mathbb {P}}}\otimes \mu } W_n\) differ by \({{\,\mathrm{\mathrm {Var}}\,}}_{{\mathbb {P}}}\mu (W_n)\):

\[{\mathrm {Var}}_{{\mathbb {P}}\otimes \mu } W_n = {\mathrm {Var}}_{{\mathbb {P}}\otimes \mu }{\bar{W}}_n + {\mathrm {Var}}_{{\mathbb {P}}}\mu (W_n).\]
In special cases it may happen that \({{\,\mathrm{\mathrm {Var}}\,}}_{{\mathbb {P}}}\mu (W_n)\rightarrow 0,\) or even \({{\,\mathrm{\mathrm {Var}}\,}}_{{\mathbb {P}}}\mu (W_n) = 0\) if all the maps \(T_{\omega _i}\) preserve the measure \(\mu ,\) whereby the distinction vanishes and the use of a non-random centering becomes feasible. We will briefly return to this point in Remark C.2 motivated by a result in [1]. A related observation is made in Remark A.3 which answers a question raised in [2] concerning the trick of “doubling the dimension”.
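The decomposition in Remark 1.2 is the law of total variance applied fiberwise: writing \(W_n = {\bar{W}}_n + \mu (W_n)\) and using \(\mu ({\bar{W}}_n) = 0\) for every \(\omega ,\) one gets \({\mathrm {Var}}_{{\mathbb {P}}\otimes \mu }W_n = {\mathrm {Var}}_{{\mathbb {P}}\otimes \mu }{\bar{W}}_n + {\mathrm {Var}}_{{\mathbb {P}}}\mu (W_n).\) The Python sketch below checks this exactly on a small finite toy model. The state space, the two maps, \(\mu ,\) f and the Markov law of \(\omega \) are all hypothetical choices, made so that \(\mu \) is not invariant and \({{\mathbb {P}}}\) is not a product measure.

```python
import itertools
import math

# Hypothetical toy model: X = {0,...,4}, Omega_0 = {0, 1}.
X = range(5)
maps = {0: [1, 2, 3, 4, 0],            # T_0: a rotation of X
        1: [0, 0, 2, 2, 4]}            # T_1: a non-invertible map
mu = [0.1, 0.3, 0.2, 0.25, 0.15]       # initial measure (not invariant)
f = [1.0, -2.0, 0.5, 3.0, -1.0]        # observable f: X -> R
n = 4

# Non-product P: omega_1, omega_2, ... is a two-state Markov chain.
init = {0: 0.7, 1: 0.3}
trans = {0: {0: 0.9, 1: 0.1}, 1: {0: 0.4, 1: 0.6}}

def prob(word):
    p = init[word[0]]
    for a, b in zip(word, word[1:]):
        p *= trans[a][b]
    return p

def W(word, x):
    """W_n = n^{-1/2} sum_{i<n} f(phi(i, omega, x)); only omega_1..omega_{n-1} matter."""
    total = 0.0
    for i in range(n):
        total += f[x]
        if i < len(word):
            x = maps[word[i]][x]
    return total / math.sqrt(n)

words = list(itertools.product([0, 1], repeat=n - 1))
Pw = [prob(w) for w in words]
mu_W = [sum(mu[x] * W(w, x) for x in X) for w in words]            # mu(W_n) per omega
sigma2 = [sum(mu[x] * (W(w, x) - c) ** 2 for x in X)               # sigma_n^2(omega)
          for w, c in zip(words, mu_W)]
mean_W = sum(p * c for p, c in zip(Pw, mu_W))

var_total = sum(p * mu[x] * (W(w, x) - mean_W) ** 2 for p, w in zip(Pw, words) for x in X)
var_centered = sum(p * s for p, s in zip(Pw, sigma2))   # E sigma_n^2 = Var of the centered W
var_means = sum(p * (c - mean_W) ** 2 for p, c in zip(Pw, mu_W))  # Var_P mu(W_n)
print(abs(var_total - (var_centered + var_means)) < 1e-12)       # True
```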

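To implement the strategy, we handle the terms on the right side of

\[|\sigma _n^2(\omega ) - \sigma ^2|\le |\sigma _n^2(\omega ) - {\mathbb {E}}\sigma _n^2| + |{\mathbb {E}}\sigma _n^2 - \sigma ^2|\]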
separately, obtaining convergence rates for both. Note that these are of fundamentally different type: the first one concerns almost sure deviations of \(\sigma ^2_n\) about the mean, while the second one concerns convergence of said mean together with the identification of the limit.

Remark 1.3

That the required bounds can be obtained illuminates the following pathway to a quenched central limit theorem:

- (1)

\(d({\bar{W}}_n,\sigma _n Z) \rightarrow 0\) almost surely,

- (2)

\(\sigma ^2_n - {{\mathbb {E}}}\sigma _n^2 \rightarrow 0\) almost surely,

- (3)

\({{\mathbb {E}}}\sigma _n^2 \rightarrow \sigma ^2\) for some \(\sigma ^2>0,\)

where the last step involves the identification of \(\sigma ^2.\)

Remark 1.4

Let us emphasize that in general we do not assume \({{\mathbb {P}}}\) to be stationary or of product form; \(\mu \) to be invariant for any of the maps \(T_{\omega _i};\) or \({{\mathbb {P}}}\otimes \mu \) (or any other measure of similar product form) to be invariant for the random dynamical system (RDS) associated to the cocycle \(\varphi .\)

Quenched limit theorems for RDSs are abundant in the literature, going back at least to Kifer [14]. Nevertheless they remain a lively topic of research to date: Recent central limit theorems and invariance principles in such a setting include Ayyer–Liverani–Stenlund [4], Nandori–Szasz–Varju [18], Aimino–Nicol–Vaienti [2], Abdelkader–Aimino [1], Nicol–Török–Vaienti [19], Dragičević et al. [9, 10], and Chen–Yang–Zhang [8]. Moreover, Bahsoun et al. [5,6,7] establish important optimal quenched correlation bounds with applications to limit results, and Freitas–Freitas–Vaienti [11] establish interesting extreme value laws which have attracted plenty of attention during the past years.

Structure of the paper. The main result of our paper is Theorem 4.1 in Sect. 4. It is an immediate corollary of Theorem 2.14 of Sect. 2, which concerns \(|\sigma _n^2(\omega ) - {{\mathbb {E}}}\sigma _n^2|,\) and of Theorem 3.9 of Sect. 3, which concerns \(|{{\mathbb {E}}}\sigma _n^2 - \sigma ^2|.\) In Sect. 4 we also explain how the results of this paper extend to the vector-valued case \(f:X\rightarrow {{\mathbb {R}}}^d.\) As the conditions of our results may appear a bit abstract, Remark 4.5 in Sect. 4 contains examples of systems where these conditions have been verified.

At the end of the paper the reader will find several appendices, which are integral parts of the paper: in Appendix A we interpret the limit variance \(\sigma ^2\) in the language of RDSs and skew products. In Appendix B we present conditions for \(\sigma ^2>0.\) In Appendix C, we discuss how the fiberwise centering in the definition of \({\bar{W}}_n\) affects the limit variance. For completeness, in Appendix D we elaborate on the structure of an invariant measure intimately related to the problem.

2 The Term \(|\sigma _n^2(\omega ) - {{\mathbb {E}}}\sigma _n^2|\)

In this section we identify conditions which guarantee that, almost surely, \(|\sigma _n^2(\omega ) - {{\mathbb {E}}}\sigma _n^2|\) tends to zero at a specific rate.

Standing Assumption (SA1). Throughout this paper we will assume that f is a bounded measurable function and \(\mu \) is a probability measure. We also assume that a uniform decay of correlations holds in that

almost surely, where \(\eta :{{\mathbb {N}}}\rightarrow [0,\infty )\) is such that

\(\blacksquare \)

Note already that

because \(\lim _{i\rightarrow \infty }i\eta (i) = 0.\) For the most part, we shall require additional conditions on \(\eta .\)

For future convenience, let us introduce the random variables

(where \(\delta _{ij} = 1\) if \(i=j,\) and \(\delta _{ij} = 0\) otherwise) and their centered counterparts

Note that these are uniformly bounded. We also denote

Thus, our objective is to show \({\tilde{\sigma }}_n^2 \rightarrow 0\) at some rate.

The following lemma is readily obtained by a well-known computation:

Lemma 2.1

Assuming (2), there exists a constant \(C>0\) such that

for all \(\omega .\)

Proof

First, we compute

Here

The last sums tend to zero by assumption. \(\square \)

Suppose that \(\eta (0)=A\) and \(\eta (n) = An^{-\psi },\,n\ge 1,\) for some constants \(A\ge 0,\psi >0.\) We then use the shorthand notation \(\eta (n) = An^{-\psi },\) i.e., we interpret \(0^{-\psi }=1.\)

Corollary 2.2

Suppose \(\eta (n) = An^{-\psi },\) where \(\psi >1.\) Then

We skip the elementary proof based on Lemma 2.1.

Remark 2.3

Of course, the upper bounds in the preceding results apply equally well to

The following result, which has been used in dynamical systems papers including Melbourne–Nicol [17], will be used to obtain an almost sure convergence rate of \(\frac{1}{n}\sum _{i=0}^{n-1} {\tilde{v}}_i\) to zero:

Theorem 2.4

(Gál–Koksma [12]; see also Philipp–Stout [20]) Let \((X_n)\) be a sequence of centered, square-integrable random variables. Suppose there exist \(C>0\) and \(q>0\) such that

for all \(m\ge 0\) and \(n\ge 1.\) Let \(\delta >0\) be arbitrary. Then, almost surely,

Remark 2.5

In this paper the theorem is applied in the range \(1\le q<2.\) In particular, \(n^q + m^q \le (n+m)^q,\) i.e., \(n^q\le (n+m)^q - m^q,\) then holds, so it suffices to establish an upper bound of the form \(Cn^q.\)
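The inequality in Remark 2.5 is superadditivity of \(t\mapsto t^q\) on \([0,\infty )\) for \(q\ge 1.\) A quick numerical check in Python (the function name is ours) also shows that it fails for \(q<1,\) which is why the range of q matters:

```python
def superadditivity_gap(n, m, q):
    """(n + m)^q - m^q - n^q, which is >= 0 for all n, m >= 0 when q >= 1."""
    return (n + m) ** q - m ** q - n ** q

# Check the range 1 <= q < 2 used in the paper on a grid of (n, m).
for q in (1.0, 1.25, 1.5, 1.99):
    assert all(superadditivity_gap(n, m, q) >= -1e-9
               for n in range(1, 60) for m in range(60))

# For q < 1 the inequality can fail, e.g. q = 1/2, n = m = 1:
print(superadditivity_gap(1, 1, 0.5))  # 2**0.5 - 2, about -0.586
```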

Our application of Theorem 2.4 will be based on the following standard lemma:

Lemma 2.6

Suppose \(|{{\mathbb {E}}}[X_iX_k]| \le r(|k-i|)\) where \(r(k) = O(k^{-\beta }).\) There exists a constant \(C>0\) such that

Proof

Note that

Bounding the last sum in each case yields the result. \(\square \)
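The computation behind Lemma 2.6 is presumably the standard one: the second moment of a block sum of length n is bounded by the double sum of \(r(|k-i|),\) which, grouped by the lag \(d=|k-i|,\) equals \(n\,r(0) + 2\sum _{d=1}^{n-1}(n-d)\,r(d).\) For \(r(d)=d^{-\beta }\) this grows like \(n,\) \(n\log n\) or \(n^{2-\beta }\) according as \(\beta >1,\) \(\beta =1\) or \(\beta <1.\) The Python sketch below only illustrates these three regimes for the hypothetical envelope \(r(0)=1,\ r(d)=d^{-\beta };\) it is not the lemma's exact statement.

```python
import math

def r(d, beta):
    """Hypothetical covariance envelope r(d) = d^{-beta}, with r(0) = 1."""
    return 1.0 if d == 0 else d ** (-beta)

def double_sum(n, beta):
    """Sum of r(|k - i|) over 0 <= i, k < n."""
    return sum(r(abs(k - i), beta) for i in range(n) for k in range(n))

def collapsed(n, beta):
    """Same sum grouped by lag d = |k - i|: n r(0) + 2 sum_{d=1}^{n-1} (n - d) r(d)."""
    return n * r(0, beta) + 2 * sum((n - d) * r(d, beta) for d in range(1, n))

def rate(n, beta):
    """Growth order of the sum: n, n log n or n^(2 - beta)."""
    if beta > 1:
        return n
    if beta == 1:
        return n * math.log(n)
    return n ** (2 - beta)

for beta in (1.5, 1.0, 0.5):
    ratios = [collapsed(n, beta) / rate(n, beta) for n in (100, 1000, 10000)]
    print(beta, [round(x, 3) for x in ratios])   # ratios stay bounded in n
```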

2.1 Dependent Random Selection Process

It is most interesting to study the case where the sequence \(\omega = (\omega _i)_{i\ge 1}\) is generated by a non-trivial stochastic process such that the measure \({{\mathbb {P}}}\) is not the product of its one-dimensional marginals. Essentially without loss of generality, we pass directly to the so-called canonical version of the process, which corresponds to the point of view that the sequence \(\omega \) is the seed of the random process. In the following we briefly review some standard details.

Let \(\pi _i:\Omega \rightarrow \Omega _0\) be the projection \(\pi _i(\omega ) = \omega _i.\) The product sigma-algebra \({{\mathcal {F}}}\) is the smallest sigma-algebra with respect to which all the latter projections are measurable. For any \(I = (i_1,\ldots ,i_p)\subset {{{\mathbb {Z}}}_+},\, p\in {{{\mathbb {Z}}}_+}\cup \{\infty \},\) we may define the sub-sigma-algebra \({{\mathcal {F}}}_I = \sigma (\pi _i:i\in I)\) of \({{\mathcal {F}}}.\) (In particular, \({{\mathcal {F}}}= {{\mathcal {F}}}_{{{\mathbb {Z}}}_+}.\)) We also recall that a function \(u:\Omega \rightarrow {{\mathbb {R}}}\) is \({{\mathcal {F}}}_I\)-measurable if and only if there exists an \({{\mathcal {E}}}^p\)-measurable function \({\tilde{u}}:\Omega _0^p\rightarrow {{\mathbb {R}}}\) such that \(u = {\tilde{u}}\circ (\pi _{i_1},\ldots ,\pi _{i_p}),\) i.e., \(u(\omega ) = \tilde{u}(\omega _{i_1},\ldots ,\omega _{i_p}).\) With slight abuse of language, we will say below that the sigma-algebra \({{\mathcal {F}}}_I\) is generated by the random variables \(\omega _i,\, i\in I,\) instead of the projections \(\pi _i.\) In particular, we denote

for \(1\le i\le j\le \infty .\)

Denote

In the following \((\alpha (n))_{n\ge 1}\) will denote a sequence such that

for each \(n\ge 1.\)

Standing Assumption (SA2). Throughout the rest of the paper we assume that the random selection process is strongly mixing: \(\alpha (n)\) can be chosen so that¹

\(\blacksquare \)

Suppose that \(u = u(\omega _1,\ldots ,\omega _i)\) and \(v = v(\omega _{i+n},\omega _{i+n+1},\ldots )\) are \(L^\infty \) functions. Then

as is well known. Ultimately, we will impose a rate of decay on \(\alpha (n).\)
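A simple dependent selection process of the kind allowed by (SA2) is a stationary two-state Markov chain; for finite, irreducible and aperiodic chains \(\alpha (n)\) is known to decay geometrically. The Python sketch below, with hypothetical switching probabilities p and q, checks the closed form \({\mathrm {Cov}}(1\{\omega _1=0\},1\{\omega _{1+n}=0\}) = \pi _0\pi _1\lambda ^n,\ \lambda = 1-p-q.\) These indicators are functions of the type u and v above, and since they are indicators of events in \({{\mathcal {F}}}_1^1\) and \({{\mathcal {F}}}_{1+n}^\infty ,\) their covariance is at most \(\alpha (n)\) in absolute value by the definition of the strong-mixing coefficient.

```python
def mat_mul(A, B):
    """Product of two 2x2 matrices given as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(2)) for j in range(2)] for i in range(2)]

def mat_pow(A, n):
    R = [[1.0, 0.0], [0.0, 1.0]]
    for _ in range(n):
        R = mat_mul(R, A)
    return R

p, q = 0.1, 0.4                        # hypothetical probabilities of 0 -> 1 and 1 -> 0
P = [[1 - p, p], [q, 1 - q]]           # transition matrix, rows sum to 1
pi0, pi1 = q / (p + q), p / (p + q)    # stationary distribution
lam = 1 - p - q                        # second eigenvalue of P

def cov_indicators(n):
    """Cov(1{omega_1 = 0}, 1{omega_{1+n} = 0}) when omega_1 ~ (pi0, pi1)."""
    return pi0 * mat_pow(P, n)[0][0] - pi0 * pi0

for n in range(6):
    print(n, cov_indicators(n), pi0 * pi1 * lam ** n)   # the two columns agree
```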

We denote by \(T_*\) the pushforward of a map T, acting on a probability measure m, i.e., \((T_*m)(A) = m(T^{-1}A)\) for measurable sets A. We write

and

for \(k\ge r.\) We also write

for \(l\ge k.\) Note that all of these objects depend on \(\omega \) through the maps \(T_{\omega _i}.\) We use the conventions \(\mu _0 = \mu ,\, \mu _{r,r+1} = \mu \) and \(f_{k,k+1} = f\) here.
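For discrete measures the pushforward \((T_*m)(A) = m(T^{-1}A)\) simply moves each atom to its image. The Python sketch below, with hypothetical maps on a three-point space, checks two facts that are used implicitly with this notation: pushforwards compose, \((T_2\circ T_1)_*m = T_{2*}(T_{1*}m),\) and \((T_*m)(g) = m(g\circ T)\) for observables g.

```python
from collections import defaultdict

def pushforward(T, m):
    """(T_* m)(A) = m(T^{-1} A) for a discrete measure m = {point: mass}."""
    out = defaultdict(float)
    for x, mass in m.items():
        out[T(x)] += mass          # the atom at x moves to T(x)
    return dict(out)

def integrate(m, g):
    """m(g) = sum over atoms of mass * g(point)."""
    return sum(mass * g(x) for x, mass in m.items())

m = {0: 0.2, 1: 0.3, 2: 0.5}           # hypothetical initial measure on X = {0, 1, 2}
T1 = lambda x: (x + 1) % 3             # hypothetical maps
T2 = lambda x: 0 if x < 2 else 2
g = lambda x: x * x - 1.0              # hypothetical observable

composed = pushforward(lambda x: T2(T1(x)), m)
stepwise = pushforward(T2, pushforward(T1, m))
print(composed, stepwise)              # the same measure twice
print(integrate(stepwise, g), integrate(m, lambda x: g(T2(T1(x)))))
```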

Standing Assumption (SA3). Throughout the rest of the paper we assume the following uniform memory-loss condition: there exists a constant \(C\ge 0\) such that

for all

whenever \(k\ge r.\) The bound holds uniformly for (almost) all \(\omega .\)\(\blacksquare \)

In the cocycle notation, (4) reads

Note that, setting

we have

Lemma 2.7

There exists a constant \(C\ge 0\) such that

In particular, for \(i\le j \le k\le l,\)

Proof

The first bound holds, because

Suppose \( i\le j \le r \le k\le l\) holds. By (SA3), the choice \(g=f f_{l,k+1}\) yields

while the choices \(g = f\) and \(g = f_{l,k+1}\) together yield

Hence

Note that here the expression in the curly braces only depends on the random variables \(\omega _{r+1},\ldots ,\omega _l\) while \(\mu ({\bar{f}}_i {\bar{f}}_j)\) only depends on \(\omega _1,\ldots ,\omega _j.\) More precisely, denoting \(u = \mu ({\bar{f}}_i {\bar{f}}_j)\) and \(v = \mu _{k,r+1}(ff_{l,k+1}) - \mu _{k,r+1}(f)\mu _{k,r+1}(f_{l,k+1}),\) we have \(u\in L^\infty ({{\mathcal {F}}}_1^j)\) and \(v\in L^\infty ({{\mathcal {F}}}_{r+1}^l)\subset L^\infty ({{\mathcal {F}}}_r^\infty ).\) Therefore,

by (6). On the other hand, the strong-mixing bound (3) implies

Moreover,

Collecting the bounds leads to the estimate

Note that (6) immediately yields the estimate

which by the boundedness of \(\alpha \) results in

Taking the minimum with respect to r proves the lemma. \(\square \)

The upper bound \(|{{\mathbb {E}}}[{\tilde{c}}_{ij}{\tilde{c}}_{kl}]| \le C\eta (j-i)\eta (l-k)\) of Lemma 2.7 yields the following intermediate result:

Lemma 2.8

For \(i\le k,\)

where we have denoted \(m=k-i.\)

Proof

We can estimate

In the third line we used the upper bound \(|{{\mathbb {E}}}[\tilde{c}_{ij}{\tilde{c}}_{kl}]| \le C\eta (j-i)\eta (l-k)\) of Lemma 2.7.

\(\square \)

Next we investigate the remaining term \( \sum _{j=i}^{k-1}\sum _{l=k}^{2k-j-1} |{{\mathbb {E}}}[{\tilde{c}}_{ij}\tilde{c}_{kl}]| \) appearing in Lemma 2.8. Since \(i\le j\le k\le l,\) we have

by choosing \(r = j.\) Suppose furthermore that \(k-j \ge l-k\) and recall \(\eta \) is non-increasing. Then the right side of the above display is bounded above by \(C\eta (l-k).\) In other words, if \(i\le j\le k\le l \le 2k-j,\) then \(C\eta (j-i)\min _{r:j\le r\le k}\{\eta (k-r) + \alpha (r-j)\eta (l-k)\}\) is the tightest bound on \(|{{\mathbb {E}}}[\tilde{c}_{ij}{\tilde{c}}_{kl}]|\) that Lemma 2.7 can provide. This observation motivates the following lemma.

Lemma 2.9

Define

(i) There exists a constant \(C\ge 0\) such that

whenever \(i\le k.\)

(ii) There exist constants \(C_1\ge 0\) and \(C_2\ge 0\) such that

whenever \(i<k.\) (Note also that \(S(i,i) = 0.\))

Proof

Part (i) is an immediate corollary of Lemma 2.7. As for part (ii), let us first prove the lower bound. Since all the terms in S(i, k) are nonnegative and \(\alpha \) is non-increasing, we have for \(i<k\) that

and

Setting \(C_1 = \frac{1}{2}\eta (0)^{2} +\frac{1}{2} \eta (0)\) gives an overall bound

for all \(i<k.\)

It remains to prove the upper bound in part (ii). We choose \(r=\lfloor (k+j)/2\rfloor .\) Since \(\eta \) is summable, we have

Next we split the last sum above into two parts, keeping in mind that \(\alpha \) and \(\eta \) are non-increasing and \(\eta \) is also summable:

This completes the proof. \(\square \)

The next two lemmas concern the case when \(\eta \) and \(\alpha \) are polynomial.

Lemma 2.10

Let \(\eta (n)=Cn^{-\psi },\,\psi >1\) and \(\alpha (n)=Cn^{-\gamma },\, \gamma >0.\) Then

whenever \(i<k.\)

Proof

The lower bound follows immediately from Lemma 2.9(ii). Write \(m=k-i\) and suppose first that \(m\ge 8.\) Then \(\lfloor m/4\rfloor \ge m/8.\) Thus Lemma 2.9(ii) yields

when \(m\ge 8.\) Since \(S(i,k)\le C(k-i)^2 = Cm^2\) by counting terms, we can choose a large enough \(C_{2}\) such that the claimed upper bound holds also for \(1\le m<8.\)\(\square \)
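The elementary inequality \(\lfloor m/4\rfloor \ge m/8\) used above follows from \(\lfloor m/4\rfloor \ge (m-3)/4,\) which is at least \(m/8\) once \(m\ge 6.\) A brute-force check in Python shows that it in fact holds for every \(m\ge 4,\) so the threshold 8 leaves room to spare:

```python
def floor_bound_holds(m):
    """True if floor(m/4) >= m/8; integer division is the floor for m >= 0."""
    return m // 4 >= m / 8

failures = [m for m in range(1, 100_000) if not floor_bound_holds(m)]
print(failures)  # [1, 2, 3]
```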

Lemma 2.11

Let \(\eta (n)=Cn^{-\psi },\,\psi >1\) and \(\alpha (n)=Cn^{-\gamma },\, \gamma >0.\) Then

whenever \(i<k.\)

Proof

Firstly,

Secondly,

Regarding the last sum appearing above, observe that

while

In other words, also

Now, by Lemmas 2.9(i) and 2.10 we have

Thus, Lemma 2.8 and bounds (7) and (8) yield

The proof is complete. \(\square \)

Lemma 2.12

Suppose \(|{{\mathbb {E}}}[\tilde{v}_i\tilde{v}_k]| \le C(k-i)^{-\beta }\) for all \(i<k.\) Let \(\delta >0\) be arbitrary. Then

almost surely.

Proof

Applying Lemma 2.6 we get

Notice that for any \(\varepsilon >0\) we have \(n\log n= O(n^{1+\varepsilon }).\) Applying Theorem 2.4 with

yields the claim. \(\square \)

Proposition 2.13

Let \(\eta (n)=Cn^{-\psi },\,\psi >1\) and \(\alpha (n)=Cn^{-\gamma },\, \gamma >0.\) Then, for any \(\delta >0,\)

almost surely.

Proof

By Lemma 2.11, we have \(|{{\mathbb {E}}}[{\tilde{v}}_i{\tilde{v}}_k]| \le Cm^{-\min \{\psi -1,\gamma \}}\) with \(m = k-i.\) Applying Lemma 2.12 with \(\beta =\min \{\psi -1,\gamma \}\) yields the claim. \(\square \)

We are now in position to prove the main result of this section:

Theorem 2.14

Assume (SA1) and (SA3) with \(\eta (n)=Cn^{-\psi },\,\psi >1.\) Assume (SA2) with \(\alpha (n)=Cn^{-\gamma },\,\gamma >0.\) Then, for arbitrary \(\delta >0,\)

almost surely.

Proof

By Corollary 2.2,

Combining this with Proposition 2.13 yields the following upper bounds on \(|\sigma _{n}^{2}-{{\mathbb {E}}}\sigma _{n}^{2}|\):

In each case the first term is the largest, so the proof is complete. \(\square \)

3 The Term \(|{{\mathbb {E}}}\sigma _n^2 - \sigma ^2|\)

In this section we formulate general conditions that allow one to identify the limit \(\sigma ^2 = \lim _{n\rightarrow \infty }{{\mathbb {E}}}\sigma _n^2\) and to obtain a rate of convergence.

Write

for brevity. Then

Setting²

we arrive at

Recall that

3.1 Asymptotics of Related Double Sums of Real Numbers

In this subsection we consider double sequences of uniformly bounded numbers \(a_{ik},\,(i,k)\in {{\mathbb {N}}}^2,\) with the objective of controlling the sequence

for large values of n. In this subsection, we make the following assumption tailored to our later needs:

Standing Assumption. There exists \(\eta :{{\mathbb {N}}}\rightarrow [0,\infty )\) such that

We also denote the tail sums of \(\eta \) by

We begin with a handy observation:

Lemma 3.1

There exists \(C>0\) such that

whenever \(0<K\le n\) and \(K\le L\le \infty .\)

Proof

For all choices of \(0<K\le n\) we have

The error is uniform because of the uniform condition \(|a_{ik}|\le \eta (k).\) For \(L\ge K,\)

uniformly, which concludes the proof. \(\square \)

The following lemma helps identify the limit of \(B_n\) and the rate of convergence under certain circumstances:

Lemma 3.2

Suppose the limit

exists for all \(k\ge 0.\) Then

The series on the right side converges absolutely. Furthermore, denoting

there exists \(C>0\) such that

holds whenever \(0<K\le n.\)

Proof

Since

also \(|b_k|\le \eta (k),\) so the series \(\sum _{k=0}^\infty b_k\) converges absolutely. Lemma 3.1 with \(L=K\) yields

uniformly for all \(0<K\le n.\) Thus, the definition of \(r_k(n)\) gives

Now \(|b_k|\le \eta (k)\) yields (11). To prove the convergence of \(B_n,\) consider (11) and fix an arbitrary \(\varepsilon >0.\) Fix K so large that \(R(K)<\varepsilon /(2C),\) where C is the constant in (11). Since \(\bigl |\sum _{k = 0}^{K}r_k(n)\bigr | + Kn^{-1}\) tends to zero with increasing n, it is bounded by \(\varepsilon /(2C)\) for all large n. Then \(|B_n - \sum _{k = 0}^{\infty } b_{k} | < \varepsilon .\)

\(\square \)

3.2 Convergence of \({{\mathbb {E}}}\sigma _n^2\): A General Result

In this subsection we apply the results of the preceding subsection to the sequence

where

Recall from (9) and (2) of (SA1) that the standing assumption in (10) is satisfied: \( |{{\mathbb {E}}}v_{ik}| \le 2\eta (k) \) and \(\sum _{k=0}^\infty \eta (k)<\infty .\) The next theorem is nothing but a rephrasing of Lemma 3.2 in the case \(a_{ik} = {{\mathbb {E}}}v_{ik}\) at hand.

Theorem 3.3

Suppose the limit

exists for all \(k\ge 0.\) The series

is absolutely convergent, and

In particular, \(\sigma ^2\ge 0.\) Furthermore, there exists a constant \(C>0\) such that

holds whenever \(0<K\le n.\)

3.3 Convergence of \({{\mathbb {E}}}\sigma _n^2\): Asymptotically Mean Stationary \({{\mathbb {P}}}\)

For the rest of the section we assume \({{\mathbb {P}}}\) is asymptotically mean stationary, with mean \({\bar{{{\mathbb {P}}}}}.\) In other words, there exists a measure \({\bar{{{\mathbb {P}}}}}\) such that, given a bounded measurable \(g:\Omega \rightarrow {{\mathbb {R}}},\)

The measure \({\bar{{{\mathbb {P}}}}}\) is then \(\tau \)-invariant. We denote \({\bar{{{\mathbb {E}}}}} g=\int g\,\mathrm {d}{\bar{{{\mathbb {P}}}}}.\) We will shortly impose additional rate conditions; see (15).
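A standard example of an asymptotically mean stationary but non-stationary \({{\mathbb {P}}}\) is an irreducible, aperiodic finite-state Markov chain started away from its stationary law; then \({\bar{{{\mathbb {P}}}}}\) is the law of the stationary chain. The Python sketch below, with hypothetical transition probabilities, computes the Cesàro averages \(n^{-1}\sum _{i=0}^{n-1}{{\mathbb {E}}}\,g\circ \tau ^i\) for the single-coordinate function \(g(\omega ) = 1\{\omega _1 = 0\}\) and shows them approaching \({\bar{{{\mathbb {E}}}}}g = \pi _0\) at rate \(O(n^{-1}),\) because the marginals converge geometrically.

```python
p, q = 0.1, 0.4                   # hypothetical probabilities of 0 -> 1 and 1 -> 0
P = [[1 - p, p], [q, 1 - q]]      # transition matrix, rows sum to 1
pi0 = q / (p + q)                 # stationary probability of state 0, i.e. Ebar g

def marginals(init, n):
    """Distributions of omega_1, ..., omega_n for the chain started from `init`."""
    dist, out = list(init), []
    for _ in range(n):
        out.append(dist)
        dist = [dist[0] * P[0][0] + dist[1] * P[1][0],
                dist[0] * P[0][1] + dist[1] * P[1][1]]
    return out

def cesaro_average(init, n):
    """n^{-1} sum_{i=0}^{n-1} E[g o tau^i] with g(omega) = 1{omega_1 = 0}."""
    return sum(d[0] for d in marginals(init, n)) / n

start = [0.0, 1.0]                # non-stationary start: omega_1 = 1 almost surely
for n in (10, 100, 1000, 10000):
    err = cesaro_average(start, n) - pi0
    print(n, err, n * err)        # n * err settles near a constant
```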

Recall the cocycle property of the random compositions. In what follows, it will be convenient to use the notations

and

For the results of this section we need the following preliminary lemma, which crucially relies on the memory-loss property (SA3), assumed to hold throughout this text.

Lemma 3.4

There exists a constant \(C>0\) such that

for all \(0\le r\le i,\,k\ge 0\) and \(a\in \{1,2\}.\)

Proof

Note that we may rewrite the memory-loss property in (5) as

for all \(r\le j.\) Thus, choosing \(g=f\) (recall \(f = f_{j,j+1}\in {{\mathcal {G}}}_j\) for all j) yields

On the other hand, choosing \(g=ff_{i+k,i+1}=f f\circ \varphi (k,\tau ^{i}\omega )\) gives

which completes the proof. \(\square \)

The following lemma guarantees that both limits \(\lim _{n\rightarrow \infty }n^{-1}\sum _{i=0}^{n-1} {{\mathbb {E}}}\mu (f_i f_{i+k})\) and \(\lim _{n\rightarrow \infty }n^{-1}\sum _{i=0}^{n-1} {{\mathbb {E}}}\mu (f_i)\mu (f_{i+k})\) exist and can be expressed in terms of \(\bar{{{\mathbb {P}}}}.\)

Lemma 3.5

For all \(i, k\ge 0\) and \(a\in \{1,2\}\)

In particular, the limits exist.

Proof

First we make the observation that since \({\bar{{{\mathbb {P}}}}}\) is stationary, (13) implies

whenever \(i\ge r.\) From assumption (2) it follows that \(\lim _{r\rightarrow \infty }\eta (r)=0.\) The sequence \(({\bar{{{\mathbb {E}}}}} g_{ik}^a)_{i=0}^\infty \) is therefore Cauchy, so \(\lim _{i\rightarrow \infty } \bar{{{\mathbb {E}}}}g_{ik}^a\) exists and respects the same bound, i.e.,

We are now ready to show that \(\lim _{n\rightarrow \infty } n^{-1}\sum _{i=0}^{n-1}{{\mathbb {E}}}g_{ik}^a\) exists and in the process we see that it is equal to \(\lim _{j\rightarrow \infty } \bar{{{\mathbb {E}}}}g_{jk}^{a}.\)

Let \(\varepsilon >0.\) Choose \(r\in {{\mathbb {N}}}\) such that \(C\eta (r)< \varepsilon /5,\) where C is the same constant as above. Then choose \(n_{0}\in {{\mathbb {N}}}\) that satisfies the following two conditions. First, \(\Vert f\Vert _{\infty }^{2}r/n_{0}< \varepsilon /5.\) Second, by (12), \(\left| n^{-1}\sum _{i=0}^{n-1}{{\mathbb {E}}}g_{rk}^{a}\circ \tau ^{i}-\bar{{{\mathbb {E}}}}g_{rk}^{a}\right| < \varepsilon /5\) for all \(n\ge n_{0}.\) Next we show that \(\left| n^{-1}\sum _{i=0}^{n-1}{{\mathbb {E}}}g_{ik}^{a}- \lim _{j\rightarrow \infty }\bar{{{\mathbb {E}}}}g_{jk}^{a}\right| <\varepsilon \) for all \(n\ge n_{0}.\)

The following five estimates yield the desired result:

In this first estimate, note that \(\Vert g_{ik}^{a}\Vert _{\infty }\le \Vert f\Vert _{\infty }^{2}\) for all \(i,k\in {{\mathbb {N}}}\) and \(a\in \{1,2\}\):

In the second estimate, we apply (13):

The third estimate follows the same reasoning as the first:

The fourth estimate follows by the definition of \(n_{0}\):

The last estimate holds by (14):

These estimates combined yield \(\left| n^{-1}\sum _{i=0}^{n-1}{{\mathbb {E}}}g_{ik}^a -\lim _{j\rightarrow \infty } \bar{{{\mathbb {E}}}}g_{jk}^{a}\right| < \varepsilon \) for all \(n\ge n_{0}.\) Since \(\lim _{j\rightarrow \infty } \bar{{{\mathbb {E}}}}g_{jk}^{a}\) exists, also \(\lim _{n\rightarrow \infty }n^{-1}\sum _{i=0}^{n-1}{{\mathbb {E}}}g_{ik}^a\) exists and is equal to it. \(\square \)

Theorem 3.3 yields the next result as a corollary.

Theorem 3.6

The series

where

is absolutely convergent, and

Proof

Recall that \({{\mathbb {E}}}v_{ik} = (2-\delta _{k0})\,{{\mathbb {E}}}[\mu (f_i f_{i+k}) - \mu (f_i)\mu (f_{i+k})].\) By Lemma 3.5 the limits \(\lim _{n\rightarrow \infty } n^{-1}\sum _{i=0}^{n-1}{{\mathbb {E}}}\mu (f_i f_{i+k})=\lim _{j\rightarrow \infty } \bar{{{\mathbb {E}}}} \mu (f_j f_{j+k})\) and \(\lim _{n\rightarrow \infty } n^{-1}\sum _{i=0}^{n-1}{{\mathbb {E}}}\mu (f_i) \mu (f_{i+k})=\lim _{j\rightarrow \infty } \bar{{{\mathbb {E}}}}\mu (f_j) \mu (f_{j+k})\) exist. Therefore

Now the rest of the claim follows from Theorem 3.3. \(\square \)

Standing Assumption (SA4) for the rest of the paper we assume that \({{\mathbb {P}}}\) is asymptotically mean stationary, and there exist \(C_0>0\) and \(\zeta >0\) such that

for all \(n\ge 1.\) Here the \(\sup \) is taken over all \(r,k\ge 0\) and \(a\in \{1,2\}.\)\(\blacksquare \)

Lemma 3.7

For all integers \(0<n_{1}< n_{2},\)

where C is uniform.

Proof

By Lemma 3.4 we have

By (15), it follows that

Finally (14) gives

Now the claim follows from (16), (17) and (18). \(\square \)

Next we use Lemma 3.7 to provide an upper bound on \(\left| n^{-1}\sum _{i=0}^{n-1}{{\mathbb {E}}}g_{ik}^{a}- \lim _{r\rightarrow \infty } \bar{{{\mathbb {E}}}} g_{rk}^{a}\right| .\) Note that just making the substitutions \(n_{1}=0\) and \(n_{2}=n\) in Lemma 3.7 does not yield a good result. Instead we divide the sum \(\sum _{i=0}^{n-1}{{\mathbb {E}}}g_{ik}^{a}\) into an increasing number of partial sums and then apply Lemma 3.7 separately to those parts.

Before proceeding to the next lemma, we define a function \(h_{\zeta }:{{\mathbb {N}}}\rightarrow {{\mathbb {R}}}\) which depends on the parameter \(\zeta \) in the following way

Lemma 3.8

Suppose \(\eta (n)=Cn^{-\psi },\,\psi >1.\) Then a uniform bound

holds.

Proof

Denote \(n^{*}=\lfloor \log _{2} n \rfloor .\) We split the sum \(\frac{1}{n}\sum _{i=0}^{n-1}{{\mathbb {E}}}g_{ik}^{a}\) as

Obviously

Lemma 3.7 yields

Therefore

Lemma 3.7 also gives

In the last line we used the fact that \(n/2 \le 2^{n^{*}}\le n,\) implying \(n-2^{n^{*}}\le n/2.\) Collecting the estimates (20), (21) and (22), we deduce \(\left| \frac{1}{n}\sum _{i=0}^{n-1}{{\mathbb {E}}}g_{ik}^{a}-\lim _{r\rightarrow \infty } \bar{{{\mathbb {E}}}} g_{rk}^{a}\right| \le C h_{\zeta }(n).\)\(\square \)
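One natural reading of the splitting in the proof above, sketched in Python under that assumption (the exact display is not reproduced here), partitions \(\{0,\ldots ,n-1\}\) into \(\{0\},\) the dyadic blocks \([2^j,2^{j+1}),\ 0\le j<n^*,\) and the remainder \([2^{n^*},n).\) The check confirms that the blocks are disjoint and exhaustive, that there are at most \(n^*+2\) of them, and that \(n/2\le 2^{n^*}\le n,\) hence \(n-2^{n^*}\le n/2.\)

```python
def dyadic_blocks(n):
    """Split range(n), n >= 1, into {0}, [2^j, 2^(j+1)) for j < n*, and [2^n*, n)."""
    n_star = n.bit_length() - 1            # floor(log2 n), computed without floats
    blocks = [(0, 1)] + [(2 ** j, 2 ** (j + 1)) for j in range(n_star)]
    if 2 ** n_star < n:
        blocks.append((2 ** n_star, n))    # remainder block, of length <= n/2
    return n_star, blocks

for n in (1, 2, 5, 16, 100):
    print(n, dyadic_blocks(n))
```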

We are finally ready to state and prove the main result of this section:

Theorem 3.9

Assume (SA1) and (SA3) with \(\eta (n)=Cn^{-\psi },\,\psi >1.\) Assume (SA4) with \(\zeta >0.\) Then

Here \(\sigma ^2\) is the quantity appearing in Theorem 3.6.

Proof

Let \(k\ge 0.\) The previous lemma applied to the case \(a=1\) yields

Similarly in the case \(a=2\)

Equations (23), (24) and Theorem 3.6 imply that

We apply Theorem 3.3, which yields

for all \(0<K\le n.\) The estimate on the right side of (25) is minimized when \(h_{\zeta }(n)=K^{-\psi }.\) Therefore choosing

in (25) completes the proof. \(\square \)

4 Conclusions

4.1 Main Result and Consequences

Theorems 2.14 and 3.9 immediately yield the main result of the paper, given next. The bounds shown are elementary combinations of these theorems, so we leave the details to the reader. Let us remind the reader of the Standing Assumptions (SA1)–(SA4) in Sects. 2 and 3. At the end of the section we also comment on the case of vector-valued observables.

Theorem 4.1

Assume (SA1) and (SA3) with \(\eta (n)=Cn^{-\psi },\,\psi >1;\) (SA2) with \(\alpha (n)=Cn^{-\gamma },\,\gamma >0;\) and (SA4) with \(\zeta >0.\) Fix an arbitrarily small \(\delta >0.\) Then there exists \(\Omega _*\subset \Omega ,\,{{\mathbb {P}}}(\Omega _*)=1,\) such that all of the following hold: the non-random number³

is well defined, nonnegative, the series is absolutely convergent, and

for every \(\omega \in \Omega _*.\) Moreover, the absolute difference

has the following upper bounds, for any \(\omega \in \Omega _*\):

Let us reiterate that Theorem 4.1 facilitates proving quenched central limit theorems with convergence rates for the fiberwise centered \({\bar{W}}_n.\) Recalling the discussion from the beginning of the paper, we namely have the following trivial lemma (thus presented without proof):

Lemma 4.2

Suppose \(d(\,\cdot \,,\,\cdot \,)\) is a distance of probability distributions with the following property: given \(b>0,\) there exist an open neighborhood \(U\subset {{\mathbb {R}}}_+\) of b and a constant \(C>0\) such that

for all \(a\in U.\) Here \(Z\sim {{\mathcal {N}}}(0,1).\) If \(\sigma ^2>0,\) then for every \(\omega \in \Omega _*,\)

In other words, once a bound on the first term on the right side has been established (e.g., using methods cited earlier), one can use Theorem 4.1 to bound the second term almost surely. Typical metrics satisfying (26) are the 1-Lipschitz (Wasserstein) and Kolmogorov distances.
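For the 1-Lipschitz (Wasserstein) distance, property (26) holds with an explicit constant: for \(a,b>0\) the coupling \((aZ,bZ)\) is monotone, hence optimal in dimension one, so \(d(aZ,bZ) = |a-b|\,{{\mathbb {E}}}|Z| = |a-b|\sqrt{2/\pi }.\) For the Kolmogorov distance the ratio \(d(aZ,bZ)/|a-b|\) stays bounded as a approaches b. The Python sketch below (function names are ours; the Kolmogorov distance is approximated on a grid) illustrates both for \(b=1:\)

```python
import math

def Phi(x):
    """Standard normal CDF."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def wasserstein1_scaled_normals(a, b):
    """W_1(aZ, bZ) for a, b > 0: the monotone coupling (aZ, bZ) gives |a - b| E|Z|."""
    return abs(a - b) * math.sqrt(2.0 / math.pi)

def kolmogorov_scaled_normals(a, b, grid=20000, xmax=10.0):
    """sup_x |P(aZ <= x) - P(bZ <= x)|, approximated on a grid of [0, xmax].
    The difference Phi(x/a) - Phi(x/b) is odd in x, so x >= 0 suffices."""
    return max(abs(Phi(x / a) - Phi(x / b))
               for x in (xmax * k / grid for k in range(grid + 1)))

b = 1.0
for a in (1.2, 1.1, 1.01, 1.001):
    print(a,
          wasserstein1_scaled_normals(a, b) / abs(a - b),   # constant sqrt(2/pi)
          kolmogorov_scaled_normals(a, b) / abs(a - b))     # bounded ratio
```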

The results presented above allow us to formulate some sufficient conditions for \(\sigma ^2 > 0.\) For simplicity, we proceed in the ideal parameter regime

Generalizations of the next result involving any of the other parameter regimes of Theorem 4.1 are straightforward, and left to the reader.

Corollary 4.3

Let (27) hold with all the other assumptions of Theorem 4.1. Suppose that either (i) there exists \(\omega \in \Omega _*\) such that

or (ii) and

Then \(\sigma ^2 > 0.\)

Proof

Suppose \(\sigma ^2 = 0.\) We will derive a contradiction in each case.

(i) Let \(\omega \in \Omega _*\) be arbitrary. By Theorem 4.1, there exists \(C>0\) such that \(n^{-1}{{\,\mathrm{\mathrm {Var}}\,}}_\mu (S_n) = \sigma _n^2 \le C n^{-\frac{1}{2}} \log ^{\frac{3}{2}+\delta }n\) for all \(n\ge 1.\) This violates the assumption of part (i), so \(\sigma ^2>0.\)

(ii) By Theorem 3.9, there exists \(C>0\) such that \(n^{-1}{{\mathbb {E}}}{{\,\mathrm{\mathrm {Var}}\,}}_\mu (S_n) = {{\mathbb {E}}}\sigma _n^2 \le Cn^{\frac{1}{\psi }-1}\) for all \(n\ge 1.\) This violates the assumption of part (ii), so \(\sigma ^2>0.\)\(\square \)

We will return to the question of whether \(\sigma ^2 = 0\) or \(\sigma ^2>0\) in Lemma B.1.

4.2 Vector-Valued Observables

Let us conclude by explaining, as promised, how the results extend with ease to the case of a vector-valued observable \(f:X\rightarrow {{\mathbb {R}}}^d.\) This time \(\sigma _n^2\) is a \(d\times d\) covariance matrix and, if the limit exists, so is \(\sigma ^2 = \lim _{n\rightarrow \infty }\sigma _n^2.\) Define the functions \(\ell _n:{{\mathbb {R}}}^d\rightarrow {{\mathbb {R}}}\) by

and denote the standard basis vectors of \({{\mathbb {R}}}^d\) by \(e_\alpha ,\, \alpha = 1,\ldots ,d.\) Observe that \(\ell _n(v)\) is the \(\mu \)-variance of \(W_n\) with the scalar-valued observable \(v^T f\) in place of f.

Lemma 4.4

Suppose there exists \(\kappa >0\) such that, almost surely, the limit \(\ell (e_\alpha +e_\beta ) = \lim _{n\rightarrow \infty }\ell _n(e_\alpha +e_\beta )\) exists and

as \(n\rightarrow \infty \) for all \(\alpha ,\beta =1,\ldots ,d.\) Then, almost surely, \(\sigma ^2 = \lim _{n\rightarrow \infty }\sigma _n^2\) exists and

for all matrix norms.

Proof

Note that the matrix elements of \(\sigma _n^2\) are given by

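Dropping the subindex n yields the limit matrix elements \(\sigma ^2_{\alpha \beta }.\) Since \(\alpha \) and \(\beta \) take only finitely many values, and a finite intersection of almost sure events is again almost sure, the matrix elements converge simultaneously almost surely with the claimed rate. Since all norms on the finite-dimensional space of \(d\times d\) matrices are equivalent, the rate holds for every matrix norm. \(\square \)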
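The polarization step in the proof can be made explicit. The displayed definition of \(\ell _n\) is not reproduced here, so we assume, in line with the observation after it, that \(\ell _n(v) = v^T\sigma _n^2 v\).

Using \(\ell _n(2e_\alpha ) = 4(\sigma _n^2)_{\alpha \alpha }\), one has \((\sigma _n^2)_{\alpha \beta } = \frac{1}{2}\big (\ell _n(e_\alpha +e_\beta ) - \frac{1}{4}\ell _n(e_\alpha +e_\alpha ) - \frac{1}{4}\ell _n(e_\beta +e_\beta )\big )\). Hence the finitely many values \(\ell _n(e_\alpha +e_\beta )\) determine the whole matrix.

The minimal Python sketch below performs this recovery with exact rational arithmetic. The helper names are hypothetical.

```python
from fractions import Fraction

def quad_form(S, v):
    """Return v^T S v for a square matrix S given as nested lists."""
    d = len(v)
    return sum(v[a] * S[a][b] * v[b] for a in range(d) for b in range(d))

def covariance_from_ell(ell, d):
    """Recover a symmetric d x d matrix S from ell(v) = v^T S v, using only the
    values ell(e_a + e_b) for a, b = 0, ..., d-1 (polarization identity)."""
    def e_sum(a, b):
        v = [0] * d
        v[a] += 1
        v[b] += 1  # when a == b this is 2 e_a
        return v

    S = [[Fraction(0)] * d for _ in range(d)]
    for a in range(d):
        for b in range(d):
            diag_a = Fraction(ell(e_sum(a, a))) / 4  # ell(2 e_a) = 4 S_aa
            diag_b = Fraction(ell(e_sum(b, b))) / 4
            S[a][b] = (Fraction(ell(e_sum(a, b))) - diag_a - diag_b) / 2
    return S
```

For instance, feeding `lambda v: quad_form([[2, 1], [1, 3]], v)` with `d = 2` returns the matrix `[[2, 1], [1, 3]]`, with entries as `Fraction` objects.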

According to the lemma, the rate of convergence of the covariance matrix \(\sigma _n^2\) to \(\sigma ^2\) can be established by applying the earlier results to the finite family of scalar-valued observables \((e_\alpha + e_\beta )^T f.\) Further, one may apply Corollary 4.3 (or Lemma B.1) to the observables \(v^T f\) for all unit vectors v to obtain conditions for \(\sigma ^2\) to be positive definite. Assuming now that it is, for certain metrics (e.g., the 1-Lipschitz metric) one has

where \(Z\sim {{\mathcal {N}}}(0,I_{d\times d})\) and \(C=C(\sigma ),\) which again yields an estimate of the type

We refer the reader to Hella [13] for details, including the hard part of establishing an almost sure, asymptotically decaying bound on \(d({\bar{W}}_n,\sigma _n Z)\) in the vector-valued case.

Remark 4.5

As an application, Hella [13] establishes the convergence rate \(n^{-\frac{1}{2}}\log ^{\frac{3}{2}+\delta }n\) for random compositions of uniformly expanding circle maps in the regime (27). Furthermore, Leppänen and Stenlund [16] establish the same result for random compositions of non-uniformly expanding Pomeau–Manneville maps.

Notes

It would be standard to denote \(\sup _{i\ge 1}\alpha ({{\mathcal {F}}}_1^i,{{\mathcal {F}}}_{i+n}^\infty )\) by \(\alpha (n).\) We prefer to let \(\alpha (n)\) stand for an upper bound, so that the non-increasing assumption makes sense. This is a choice of technical convenience.

The relationship with earlier notations is that \(\sum _{k=0}^\infty v_{ik} = v_i.\)

Here \(\delta _{k0} = 1\) if \(k=0,\) and \(\delta _{k0} = 0\) if \(k\ne 0.\)

References

Abdelkader, M., Aimino, R.: On the quenched central limit theorem for random dynamical systems. J. Phys. A 49(24), 244002 (2016). https://doi.org/10.1088/1751-8113/49/24/244002

Aimino, R., Nicol, M., Vaienti, S.: Annealed and quenched limit theorems for random expanding dynamical systems. Probab. Theory Relat. Fields 162(1–2), 233–274 (2015). https://doi.org/10.1007/s00440-014-0571-y

Arnold, L.: Random Dynamical Systems. Springer Monographs in Mathematics. Springer, Berlin (1998). https://doi.org/10.1007/978-3-662-12878-7

Ayyer, A., Liverani, C., Stenlund, M.: Quenched CLT for random toral automorphism. Discrete Contin. Dyn. Syst. 24(2), 331–348 (2009). https://doi.org/10.3934/dcds.2009.24.331

Bahsoun, W., Bose, C.: Corrigendum: mixing rates and limit theorems for random intermittent maps (2016 Nonlinearity 29 1417). Nonlinearity 29(12), C4 (2016). https://doi.org/10.1088/0951-7715/29/12/C4

Bahsoun, W., Bose, C., Duan, Y.: Decay of correlation for random intermittent maps. Nonlinearity 27(7), 1543–1554 (2014). https://doi.org/10.1088/0951-7715/27/7/1543

Bahsoun, W., Bose, C., Ruziboev, M.: Quenched decay of correlations for slowly mixing systems. Preprint. arXiv:1706.04158 (2017)

Chen, J., Yang, Y., Zhang, H.-K.: Non-stationary almost sure invariance principle for hyperbolic systems with singularities. J. Stat. Phys. (2018). https://doi.org/10.1007/s10955-018-2107-9

Dragičević, D., Froyland, G., González-Tokman, C., Vaienti, S.: A spectral approach for quenched limit theorems for random expanding dynamical systems. Commun. Math. Phys. 360(3), 1121–1187 (2018). https://doi.org/10.1007/s00220-017-3083-7

Dragičević, D., Froyland, G., González-Tokman, C., Vaienti, S.: Almost sure invariance principle for random piecewise expanding maps. Nonlinearity 31(5), 2252–2280 (2018). https://doi.org/10.1088/1361-6544/aaaf4b

Freitas, A.C.M., Freitas, J.M., Vaienti, S.: Extreme value laws for non stationary processes generated by sequential and random dynamical systems. Ann. Inst. Henri Poincaré Probab. Stat. 53(3), 1341–1370 (2017). https://doi.org/10.1214/16-AIHP757

Gál, I.S., Koksma, J.F.: Sur l’ordre de grandeur des fonctions sommables. Nederl. Akad. Wetensch., Proc. 53, 638–653 = Indagationes Math. 12, 192–207 (1950)

Hella, O.: Central limit theorems with a rate of convergence for sequences of transformations. Preprint. arXiv:1811.06062 (2018)

Kifer, Y.: Limit theorems for random transformations and processes in random environments. Trans. Am. Math. Soc. 350(4), 1481–1518 (1998). https://doi.org/10.1090/S0002-9947-98-02068-6

Leonov, V.P.: On the dispersion of time means of a stationary stochastic process. Teor. Verojatnost. i Primen. 6, 93–101 (1961)

Leppänen, J., Stenlund, M.: Sunklodas’ approach to normal approximation for time-dependent dynamical systems. Preprint. arXiv:1906.03217 (2019)

Melbourne, I., Nicol, M.: A vector-valued almost sure invariance principle for hyperbolic dynamical systems. Ann. Probab. 37(2), 478–505 (2009). https://doi.org/10.1214/08-AOP410

Nándori, P., Szász, D., Varjú, T.: A central limit theorem for time-dependent dynamical systems. J. Stat. Phys. 146(6), 1213–1220 (2012). https://doi.org/10.1007/s10955-012-0451-8

Nicol, M., Török, A., Vaienti, S.: Central limit theorems for sequential and random intermittent dynamical systems. Ergod. Theory Dyn. Syst. 38(3), 1127–1153 (2018). https://doi.org/10.1017/etds.2016.69

Philipp, W., Stout, W.: Almost sure invariance principles for partial sums of weakly dependent random variables. Mem. Am. Math. Soc. 2 (issue 2, 161), iv+140 (1975)

Acknowledgements

Open access funding provided by University of Helsinki including Helsinki University Central Hospital.


Additional information

Communicated by Alessandro Giuliani.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendices

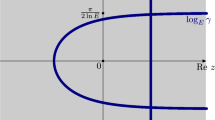

Appendix A. Random Dynamical Systems

In this section we interpret the limit variance of Theorems 3.6 and 3.9 from the point of view of RDSs. As elsewhere in the paper, we assume that the system has the good, uniform fiberwise properties of the Standing Assumptions.

Recall that \(\tau \) preserves the probability measure \({\bar{{{\mathbb {P}}}}}\) in (12), i.e., \(\tau ^{-1} F\in {{\mathcal {F}}}\) and \({\bar{{{\mathbb {P}}}}}(\tau ^{-1} F) = {\bar{{{\mathbb {P}}}}}(F)\) for all \(F\in {{\mathcal {F}}}.\) One says that \(\varphi (\,\cdot \,,\,\cdot \,,\,\cdot \,)\) in (1) is a measurable RDS on the measurable space \((X,{{\mathcal {B}}})\) over the measure-preserving transformation \((\Omega ,{{\mathcal {F}}},{\bar{{{\mathbb {P}}}}},\tau ).\) The map

is called the skew product of the measure-preserving transformation \((\Omega ,{{\mathcal {F}}},{\bar{{{\mathbb {P}}}}},\tau )\) and the cocycle \(\varphi (n,\omega )\) on X. It is a measurable self-map on \((\Omega \times X,{{\mathcal {F}}}\otimes {{\mathcal {B}}}).\) In general, RDSs and skew products are in one-to-one correspondence; in particular, the measurability of one implies the measurability of the other.
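The minimal Python sketch below makes the cocycle and skew-product notation concrete. It rests on purely illustrative assumptions:

- The fiber maps are hypothetical uniformly expanding circle maps \(T_a(x) = 2x + a \bmod 1\), with \(a\) drawn from a three-point set.
- A finite prefix of \(\omega \) stands in for the infinite sequence, so `omega[0]` plays the role of \(\omega _1\).
- Exact rational arithmetic avoids rounding.

The sketch checks the cocycle identity \(\varphi (n+m,\omega ) = \varphi (n,\tau ^m\omega )\circ \varphi (m,\omega )\). It also checks that the n-th skew-product iterate \((\tau ^n\omega ,\varphi (n,\omega )x)\) agrees with n applications of the one-step map.

```python
from fractions import Fraction
from typing import Sequence

def T(a: Fraction, x: Fraction) -> Fraction:
    """Hypothetical fiber map T_a(x) = 2x + a (mod 1) on X = [0, 1)."""
    return (2 * x + a) % 1

def tau(omega: Sequence[Fraction], m: int = 1) -> Sequence[Fraction]:
    """Left shift: (tau^m omega)_i = omega_{i+m}, on a finite prefix."""
    return omega[m:]

def phi(n: int, omega: Sequence[Fraction], x: Fraction) -> Fraction:
    """Cocycle phi(n, omega) x = T_{omega_n} o ... o T_{omega_1} x; phi(0, omega) = id."""
    for a in omega[:n]:
        x = T(a, x)
    return x

def skew(n: int, omega: Sequence[Fraction], x: Fraction):
    """n-th iterate of the skew product: (tau^n omega, phi(n, omega) x)."""
    return tau(omega, n), phi(n, omega, x)
```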

We are interested in the skew product because of the identity

Thus, our task is to study the statistics of the projection of \(\Phi ^n(\omega ,x)\) to X. It now becomes interesting to study the invariant measures of \(\Phi .\) However, the class of all invariant measures of \(\Phi \) is unnatural, for we must incorporate the fact that \(\tau \) preserves the measure \({\bar{{{\mathbb {P}}}}}.\) For this reason, it is said that a probability measure \(\mathbf {P}\) on \({{\mathcal {F}}}\otimes {{\mathcal {B}}}\) is an invariant measure for the RDS \(\varphi \) if it is invariant for \(\Phi \) and the marginal of \(\mathbf {P}\) on \(\Omega \) coincides with \({\bar{{{\mathbb {P}}}}}.\) In other words,

where \(\Pi _1:\Omega \times X\rightarrow \Omega :(\omega ,x)\mapsto \omega .\)

We will also need to consider the cocycle

on the product space \(X\times X.\) The corresponding skew product is

The invariant measures of the RDS \(\varphi ^{(2)}\) are defined analogously to above. Without danger of confusion, we define the projections \(\Pi _1(\omega ,x,y) = \omega ,\, \Pi _2(\omega ,x,y) = x\) and \(\Pi _3(\omega ,x,y) = y\) on \(\Omega \times X\times X.\) We also write \((\Pi _1\times \Pi _2)(\omega ,x,y) = (\omega ,x)\) and \((\Pi _1\times \Pi _3)(\omega ,x,y) = (\omega ,y).\)

Of particular interest will be the sequence of functions \(Z_n:\Omega \times X\times X\rightarrow {{\mathbb {R}}}\) defined by

For then

Notice already that writing

yields the identity

Standing Assumption (SA5). Assume that there exists an invariant measure \(\mathbf {P}^{(2)}\) for the RDS \(\varphi ^{(2)}\) that is symmetric in the sense that

for all bounded measurable \(h:\Omega \times X\times X\rightarrow {{\mathbb {R}}}.\) The common marginal

is then trivially an invariant measure for the RDS \(\varphi .\) Moreover, assume

and

are satisfied. \(\blacksquare \)

From the point of view of the initial setup of our problem, Standing Assumption (SA5) may seem mysterious at first glance, but it is quite natural. In Appendix D we provide an example of a more concrete condition that implies (SA5); for now we stick to the abstract setting.

The following lemma lists useful properties of F in view of (SA5).

Lemma A.1

The function F satisfies

and

The latter has the upper bound

Proof

That F is centered is due to the symmetry property (30) of \(\mathbf {P}^{(2)}\) in (SA5). Since

the same symmetry property also yields (35). The upper bound in (36) then follows from (33) and (34) in (SA5) together with (SA1). \(\square \)

Recall that in Theorems 3.6, 3.9 and 4.1 we have

The next lemma connects this expression to the RDS notions when also (SA5) is assumed.

Lemma A.2

The limit variance \(\sigma ^2\) in Theorems 3.6, 3.9 and 4.1 satisfies

Proof

The first line is just the expression of \(\sigma ^2\) rewritten using (33) and (34). The second line then follows by (35). The last line holds by (29) together with (36) and (SA1). \(\square \)

Remark A.3

Note that the expression of \(\sigma ^2\) in (38) is exactly one half of the Green–Kubo formula in terms of the skew product \(\Phi ^{(2)},\) its invariant measure \(\mathbf {P}^{(2)},\) and the observable F. This trick of “doubling the dimension” is not new. To our knowledge, however, (38) is a new observation at this level of generality. It answers a question raised in [2, Sect. 7] by Aimino, Nicol and Vaienti (who studied the special case where \({{\mathbb {P}}},\,\mathbf {P}\) and \(\mathbf {P}^{(2)}\) are product measures, allowing for a non-random centering of \(S_n\)): the key that makes (38) an algebraic fact is the symmetry property (30) of the measure \(\mathbf {P}^{(2)}.\)

It deserves a separate remark that even though \(\sigma ^2\) does not in general (see Remark C.2) admit a classical Green–Kubo formula in terms of \(\Phi ,\,\mathbf {P},\) and f, “doubling the dimension” still yields (38).
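The elementary identity behind “doubling the dimension” is the following. For independent x and y with common law \(\mu \), \({{\,\mathrm{\mathrm {Var}}\,}}_\mu (S) = \frac{1}{2}\iint (S(x)-S(y))^2\,\mathrm {d}\mu (x)\,\mathrm {d}\mu (y)\). This is consistent with the factor one half in (38).

The defining display of \(Z_n\) is not reproduced above. Suppose, as we assume here for illustration, that \(Z_n(\omega ,x,y)\) is the difference of the fiberwise sums \(S_n\) evaluated at x and at y. Then fiberwise variances of \(S_n\) become integrals of \(Z_n^2\) on the doubled space.

The sketch below takes \(\mu \) to be a discrete measure given by exact rational weights. The values and weights are arbitrary test data.

```python
from fractions import Fraction

def variance(values, weights):
    """Variance of a random variable taking values[i] with probability weights[i]."""
    mean = sum(w * s for s, w in zip(values, weights))
    return sum(w * (s - mean) ** 2 for s, w in zip(values, weights))

def half_doubled_second_moment(values, weights):
    """(1/2) E[(S(x) - S(y))^2] for x, y independent, each with law mu (i.e. under mu x mu)."""
    return Fraction(1, 2) * sum(
        wx * wy * (sx - sy) ** 2
        for sx, wx in zip(values, weights)
        for sy, wy in zip(values, weights)
    )
```

The doubled observable \(S(x)-S(y)\) is antisymmetric in \((x,y)\). It therefore has mean zero under the symmetric measure \(\mu \otimes \mu \), whatever the mean of S is.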

Appendix B. Positivity of \(\sigma ^2\)

In this section we return to the question of positivity of the limit variance \(\sigma ^2.\) We shall assume (SA1) and (SA3)–(SA5); the strong-mixing assumption (SA2) is not needed here. For simplicity of the statements, we again assume favorable parameters (e.g., \(\psi >2\)).

The foregoing discussion allows us to give some characterizations of the cases \(\sigma ^2 = 0\) and \(\sigma ^2>0\) on various levels of abstraction:

Lemma B.1

Suppose \(\eta (n) = Cn^{-\psi },\, \psi >2.\)

- (i) \(\sigma ^2 = 0\) is equivalent to each of the following conditions:
  - (a) \(\sup _{n\ge 0}\int Z_n^2\,\mathrm {d}\mathbf {P}^{(2)}<\infty .\)
  - (b) There exists \(G\in L^2(\mathbf {P}^{(2)})\) such that \(F = G-G\circ \Phi ^{(2)}.\)
- (ii) \(\sigma ^2>0\) is equivalent to each of the following conditions:
  - (a) \(\sup _{n\ge 0}\int Z_n^2\,\mathrm {d}\mathbf {P}^{(2)}=\infty .\)
  - (b) There exist \(c>0\) and \(N>0\) such that \(\int Z_n^2\,\mathrm {d}\mathbf {P}^{(2)} \ge cn\) for all \(n\ge N.\)
- (iii) If \(\zeta >1,\) then \(\sigma ^2>0\) is equivalent to each of the following conditions:
  - (a) \(\sup _{n\ge 1}n^{-\frac{1}{\psi }} \int Z_n^2\,\mathrm {d}({{\mathbb {P}}}\otimes \mu \otimes \mu ) = \infty .\)
  - (b) \(\sup _{n\ge 1}n^{-\frac{1}{\psi }}\, {{\mathbb {E}}}{{\,\mathrm{\mathrm {Var}}\,}}_\mu (S_n) = \infty .\)
  - (c) There exist \(c>0\) and \(N>0\) such that \(\int Z_n^2\,\mathrm {d}({{\mathbb {P}}}\otimes \mu \otimes \mu ) \ge cn\) for all \(n\ge N.\)
  - (d) There exist \(c>0\) and \(N>0\) such that \({{\mathbb {E}}}{{\,\mathrm{\mathrm {Var}}\,}}_\mu (S_n) \ge cn\) for all \(n\ge N.\)
- (iv) If \({{\mathbb {P}}}\) is stationary, then \(\sigma ^2=0\) is equivalent to each of the following conditions:
  - (a) \(\sup _{n\ge 1}\int Z_n^2\,\mathrm {d}({{\mathbb {P}}}\otimes \mu \otimes \mu ) < \infty .\)
  - (b) \(\sup _{n\ge 1} {{\mathbb {E}}}{{\,\mathrm{\mathrm {Var}}\,}}_\mu (S_n) < \infty .\)
- (v) If \({{\mathbb {P}}}\) is stationary, then \(\sigma ^2>0\) is equivalent to each of the following conditions:
  - (a) \(\sup _{n\ge 1} \int Z_n^2\,\mathrm {d}({{\mathbb {P}}}\otimes \mu \otimes \mu ) = \infty .\)
  - (b) \(\sup _{n\ge 1} {{\mathbb {E}}}{{\,\mathrm{\mathrm {Var}}\,}}_\mu (S_n) = \infty .\)
  - (c) There exist \(c>0\) and \(N>0\) such that \(\int Z_n^2\,\mathrm {d}({{\mathbb {P}}}\otimes \mu \otimes \mu ) \ge cn\) for all \(n\ge N.\)
  - (d) There exist \(c>0\) and \(N>0\) such that \({{\mathbb {E}}}{{\,\mathrm{\mathrm {Var}}\,}}_\mu (S_n) \ge cn\) for all \(n\ge N.\)

From the point of view of applications, parts (iii)(b and d), (iv)(b) and (v)(b and d) may be the most relevant ones as they involve the measures \({{\mathbb {P}}}\) and \(\mu ,\) and the process \((S_n)_{n\ge 1},\) which are immediately apparent from the definition of the system. Note that (iii)(b) is the same condition as in Corollary 4.3(ii).
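Condition (i)(b) of Lemma B.1 says that F is a coboundary. The implication from (i)(b) to (i)(a) is a telescoping argument. We sketch it under the assumption, made only for this illustration, that \(Z_n = \sum _{k=0}^{n-1}F\circ (\Phi ^{(2)})^k\).

If \(F = G-G\circ \Phi ^{(2)},\) then \(Z_n = G - G\circ (\Phi ^{(2)})^n\). By invariance, \(\Vert Z_n\Vert _{L^2(\mathbf {P}^{(2)})}\le 2\Vert G\Vert _{L^2(\mathbf {P}^{(2)})}\) for all n.

The Python check below replaces \(\Phi ^{(2)}\) by a hypothetical rational circle rotation, which preserves Lebesgue measure. It also uses a bounded G, so the sums are even uniformly bounded pointwise.

```python
from fractions import Fraction

ALPHA = Fraction(3, 11)  # hypothetical rotation angle

def rot(x):
    """Toy Lebesgue-preserving map Phi(x) = x + ALPHA (mod 1), a stand-in for Phi^(2)."""
    return (x + ALPHA) % 1

def G(x):
    """Bounded transfer function on [0, 1); its values lie in [0, 1/4]."""
    return x * (1 - x)

def F(x):
    """Coboundary observable F = G - G o Phi."""
    return G(x) - G(rot(x))

def Z(n, x):
    """Birkhoff sum Z_n(x) = sum_{k=0}^{n-1} F(Phi^k x)."""
    total, y = Fraction(0), x
    for _ in range(n):
        total += F(y)
        y = rot(y)
    return total
```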

Proof of Lemma B.1

By (36) we can appeal to a well-known result due to Leonov [15], which guarantees that the limit \( b = \lim _{n\rightarrow \infty }\int Z_n^2\,\mathrm {d}\mathbf {P}^{(2)} \) exists in \([0,\infty ],\) and \(b<\infty \) if and only if \(\sup _{n\ge 0}\int Z_n^2\,\mathrm {d}\mathbf {P}^{(2)}<\infty .\) Moreover, the last condition is equivalent to the existence of \(G\in L^2(\mathbf {P}^{(2)})\) such that \(F = G-G\circ \Phi ^{(2)}.\) On the other hand, standard computations and the formula for \(\sigma ^2\) in (38) yield

Here \(\psi >2\) was used. Thus, \(\sigma ^2>0\) is equivalent to linear growth of \(\int Z_n^2\,\mathrm {d}\mathbf {P}^{(2)}\) to infinity, while \(\sigma ^2 = 0\) is equivalent to \(\sup _{n\ge 0}\int Z_n^2\,\mathrm {d}\mathbf {P}^{(2)}<\infty .\) Parts (i) and (ii) are proved.
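The “standard computations” can be sketched under two assumptions, made only for this illustration:

- \(Z_n = \sum _{k=0}^{n-1}F\circ (\Phi ^{(2)})^k\).
- \(c_k = \int F\cdot F\circ (\Phi ^{(2)})^k\,\mathrm {d}\mathbf {P}^{(2)}\) satisfies \(|c_k|\le Ck^{-\psi }\) for \(k\ge 1\).

Invariance of \(\mathbf {P}^{(2)}\) gives \(\int F\circ (\Phi ^{(2)})^i\cdot F\circ (\Phi ^{(2)})^j\,\mathrm {d}\mathbf {P}^{(2)} = c_{|i-j|}\). Hence \(\int Z_n^2\,\mathrm {d}\mathbf {P}^{(2)} = \sum _{i,j=0}^{n-1}c_{|i-j|} = n\big (c_0+2\sum _{k=1}^{n-1}c_k\big ) - 2\sum _{k=1}^{n-1}k\,c_k\).

With \(\psi >2\), both \(\sum _{k\ge 1}k|c_k|\) and \(n\sum _{k\ge n}|c_k| = O(n^{2-\psi })\) are bounded. The growth is therefore \(n\big (c_0+2\sum _{k\ge 1}c_k\big )+O(1)\). Under this reading, the slope is the Green–Kubo sum of F, which equals \(2\sigma ^2\) by Remark A.3.

The Python sketch checks the finite identity exactly for the arbitrary summable test sequence \(c_k=(k+1)^{-3}\).

```python
from fractions import Fraction

def second_moment_of_sum(c, n):
    """E[(sum_{k<n} X_k)^2] for a stationary sequence with E[X_i X_j] = c(|i - j|)."""
    return sum(c(abs(i - j)) for i in range(n) for j in range(n))

def toeplitz_collapse(c, n):
    """Same quantity grouped by lag: n c(0) + 2 sum_{k=1}^{n-1} (n - k) c(k)."""
    return n * c(0) + 2 * sum((n - k) * c(k) for k in range(1, n))

def remainder(c, n):
    """Second moment minus n times the truncated Green-Kubo sum c(0) + 2 sum_{k=1}^{n-1} c(k)."""
    gk = c(0) + 2 * sum(c(k) for k in range(1, n))
    return second_moment_of_sum(c, n) - n * gk
```

The remainder equals \(-2\sum _{k=1}^{n-1}k\,c_k\) exactly. It stays bounded in n whenever \(\sum _k k|c_k|<\infty \), which is where \(\psi >2\) enters.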

As for part (iii), (28) and Theorem 3.9 with \(\zeta >1\) yield

If \(\sigma ^2 = 0,\) the right side of the estimate is \(O\left( n^{\frac{1}{\psi }}\right) ,\) and each of the conditions (a)–(d) fails. If \(\sigma ^2>0,\) the right side grows asymptotically linearly in n, and (a)–(d) are all satisfied.

Finally, parts (iv) and (v) follow from (i) and (ii), respectively, because in the stationary case it holds that \(\int Z_n^2\,\mathrm {d}({{\mathbb {P}}}\otimes \mu \otimes \mu ) = \int Z_n^2\,\mathrm {d}\mathbf {P}^{(2)} + O(1);\) see Lemma B.2 below. \(\square \)

We close the section with the lemma below, which was needed in the last part of the preceding proof.

Lemma B.2

Suppose \({{\mathbb {P}}}\) is stationary and \(\eta (n) = Cn^{-\psi },\, \psi >2.\) Then

Proof

We have

and

Denote

and

Note that \(|a_{ik}|\le 2\eta (k)\) by (SA1) and \(|b_k|\le 2\eta (k)\) by (36). Thus \(|a_{ik}-b_k|\le Ck^{-\psi }\) for all i and k. By stationarity (\({{\mathbb {P}}}= {\bar{{{\mathbb {P}}}}}\)) and (SA5),

Again by stationarity, (14) implies that \(|a_{ik}-b_k|\le C\eta (i)=Ci^{-\psi }.\) Thus

Collecting (40), (41) and (42) we get the estimate

for all n. The proof is complete. \(\square \)
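The following explains why \(\psi >2\) makes the two bounds \(|a_{ik}-b_k|\le Ck^{-\psi }\) and \(|a_{ik}-b_k|\le Ci^{-\psi }\) jointly summable over both indices. The computation is for \(i,k\ge 1\); the row \(i=0\) and the column \(k=0\) are summable by whichever single bound remains meaningful there.

There are exactly \(2m-1\) pairs \((i,k)\in {{\mathbb {Z}}}_+^2\) with \(\max \{i,k\}=m\), so

$$\begin{aligned} \sum _{i\ge 1}\sum _{k\ge 1}\min \{i^{-\psi },k^{-\psi }\} = \sum _{m\ge 1}(2m-1)\,m^{-\psi } \le 2\sum _{m\ge 1}m^{1-\psi } < \infty \qquad (\psi >2). \end{aligned}$$

This illustrates the mechanism only and is not a reconstruction of (40)–(42).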

Appendix C. Effect of the Fiberwise Centering of \(W_n\)

In this section we discuss Remark 1.2 concerning the variance of \(W_n,\) as opposed to the fiberwise-centered \({\bar{W}}_n = W_n - \mu (W_n).\) Note that

and

The difference of (43) and (44) equals

Under the assumptions of our paper

Therefore \({{\,\mathrm{\mathrm {Var}}\,}}_{{{\mathbb {P}}}\otimes \mu } W_n\) and \({{\,\mathrm{\mathrm {Var}}\,}}_{{\mathbb {P}}}\mu (W_n)\) either converge or diverge simultaneously. We now derive their asymptotic expressions in terms of series, restricting to the case where the law \({{\mathbb {P}}}\) of the selection process is stationary.
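The decomposition \({{\,\mathrm{\mathrm {Var}}\,}}_{{{\mathbb {P}}}\otimes \mu } W_n = {{\,\mathrm{\mathrm {Var}}\,}}_{{{\mathbb {P}}}\otimes \mu } {\bar{W}}_n + {{\,\mathrm{\mathrm {Var}}\,}}_{{\mathbb {P}}}\mu (W_n)\), used at the end of the proof of Lemma C.1, is the law of total variance applied fiberwise. Since \({\bar{W}}_n = W_n-\mu (W_n)\) has zero \(\mu \)-mean in every fiber, \({{\,\mathrm{\mathrm {Var}}\,}}_{{{\mathbb {P}}}\otimes \mu } {\bar{W}}_n = {{\mathbb {E}}}{{\,\mathrm{\mathrm {Var}}\,}}_\mu (W_n)\).

The sketch below checks this on a hypothetical finite product space \(\Omega \times X\) with exact rational weights.

```python
from fractions import Fraction

def total_variance_decomposition(P, mu, W):
    """P: weights on Omega, mu: weights on X, W[w][x]: value of W_n at (w, x).
    Returns (Var_{P x mu} W, E_P Var_mu W, Var_P mu(W))."""
    fiber_mean = [sum(m * W[w][x] for x, m in enumerate(mu)) for w in range(len(P))]
    fiber_var = [sum(m * (W[w][x] - fiber_mean[w]) ** 2 for x, m in enumerate(mu))
                 for w in range(len(P))]
    grand_mean = sum(p * fm for p, fm in zip(P, fiber_mean))
    total_var = sum(p * m * (W[w][x] - grand_mean) ** 2
                    for w, p in enumerate(P) for x, m in enumerate(mu))
    mean_fiber_var = sum(p * v for p, v in zip(P, fiber_var))            # E_P Var_mu(W_n)
    var_of_fiber_mean = sum(p * (fm - grand_mean) ** 2 for p, fm in zip(P, fiber_mean))
    return total_var, mean_fiber_var, var_of_fiber_mean
```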

Lemma C.1

Let \({{\mathbb {P}}}\) be stationary. Let (SA1)–(SA3) hold with \(\eta (n) = Cn^{-\psi },\,\psi >1,\) and \(\alpha (n) = n^{-\gamma },\,\gamma >1.\) Then

and

Here the limits exist and the series converge absolutely. If also (SA5) holds, then

and

in the RDS notation.

Remark C.2

Note that in the latter case

This is the classical Green–Kubo formula in terms of the skew product \(\Phi ,\) its invariant measure \(\mathbf {P},\) and the observable f. Let us stress that it is not the expression of \(\sigma ^2,\) except in the special case \(\lim _{n\rightarrow \infty }{{\,\mathrm{\mathrm {Var}}\,}}_{{\mathbb {P}}}\mu (W_n) = 0.\) This is precisely the special case in which Abdelkader and Aimino [1] establish a quenched central limit theorem with non-random centering, assuming in particular i.i.d. randomness (\({{\mathbb {P}}}= {{\mathbb {P}}}_0^{{{\mathbb {Z}}}_+}\)); see also Remark A.3.

Proof of Lemma C.1

We prove the statements concerning \({{\,\mathrm{\mathrm {Var}}\,}}_{{\mathbb {P}}}\mu (W_n)\) first. We have

where

We will apply Lemma 3.2 to show convergence as \(n\rightarrow \infty .\) To that end, we need control of \(a_{ik}\) in the limits \(i\rightarrow \infty \) and \(k\rightarrow \infty .\) We begin with the first limit.

By (5) below (SA3), we have a uniform bound

whenever \(r\le i.\) Since \({{\mathbb {P}}}\) is stationary, this yields

Thus, \(({{\mathbb {E}}}\mu (f_{i}))_{i= 0}^\infty \) is Cauchy, so its limit exists and

Since \({\bar{{{\mathbb {P}}}}} = {{\mathbb {P}}}\) by stationarity, (14) gives

Thus, the limit

exists and

as \(i\rightarrow \infty .\) Since \(\eta \) is summable,

as \(n\rightarrow \infty .\) Both of the preceding bounds are uniform in k.

In order to bound \(a_{ik}\) as \(k\rightarrow \infty ,\) first note that (45) allows us to estimate

for \(r\le k,\) where the function \(v(\omega ) = \mu (f\circ \varphi (k-r,\tau ^{i+r}\omega ))\) is \({{\mathcal {F}}}_{i+r+1}^\infty \)-measurable and bounded; see Sect. 2.1 for terminology. Thus

the last estimate being true by strong mixing. Picking \(r\asymp k/2\) yields

uniformly in i. Since \(\gamma >1\) and \(\psi >1,\) this bound is summable, so Lemma 3.2 can now be applied; recall (10). The bound in (11) becomes

Now, choosing \(K\asymp n^{1/\min \{\gamma ,\psi \}}\) yields the claimed upper bound \(Cn^{1/\min \{\gamma ,\psi \}-1}\).

The expressions of the limits \(b_k\) in terms of the RDS notation are obtained with the help of (32)–(34), recalling again that \({{\mathbb {P}}}= {\bar{{{\mathbb {P}}}}}\) by stationarity.

Finally, the claims regarding \({{\,\mathrm{\mathrm {Var}}\,}}_{{{\mathbb {P}}}\otimes \mu } W_n = {{\,\mathrm{\mathrm {Var}}\,}}_{{{\mathbb {P}}}\otimes \mu } {\bar{W}}_n + {{\,\mathrm{\mathrm {Var}}\,}}_{{\mathbb {P}}}\mu (W_n)\) follow since we already have control of both terms on the right side: in the stationary case at hand, Theorem 3.9 applies with any \(\zeta >1\), yielding \({{\,\mathrm{\mathrm {Var}}\,}}_{{{\mathbb {P}}}\otimes \mu } {\bar{W}}_n = \sigma ^2 + O\left( n^{\frac{1}{\psi }-1}\right) .\)\(\square \)

Appendix D. (SA5\('\)): A Less Abstract Substitute for (SA5)

Standing Assumption (SA5) is abstract in that it involves the invariant measure \(\mathbf {P}^{(2)}\) of the RDS \(\varphi ^{(2)},\) and a number of properties of the measure, which are not obvious from the setup of the system at the beginning of the paper. For that reason we give in this section, as an example, another assumption which (i) is more concrete in that it involves only the initial measure \(\mu \) and the basic cocycle \(\varphi ,\) and (ii) is stronger than (SA5).

Standing Assumption (SA5\('\)). Throughout this section we assume the following: the measures \(\varphi (n,\omega )_*\mu \) have uniformly square-integrable densities with respect to \(\mu ,\) i.e., there exists \(K>0\) such that

for all n and \(\omega .\) Moreover, for every bounded measurable \(g:X\rightarrow {{\mathbb {R}}}\) and \(\varepsilon >0\) there exists \(N\ge 0\) such that the memory-loss property

holds for \(n\ge N,\,m\ge 0\) and all \(\omega .\)\(\blacksquare \)

The rest of the section is devoted to investigating some consequences of (SA5\('\)).

Note that (47) asks that the integrals of \(x\mapsto g(\varphi (n,\tau ^m\omega )x)\) with respect to the two measures \(\varphi (m,\omega )_*\mu \) and \(\mu \) be essentially the same for large n, uniformly in m and \(\omega .\) The role of (46) is to allow uniform approximation of the compositions \(h\circ (\Phi ^{(2)})^n,\,n\ge 0,\) by compositions \({\hat{h}}\circ (\Phi ^{(2)})^n,\) where h is measurable and \({\hat{h}}\) is “simple”. Without some additional assumption, \((h-{\hat{h}})\circ (\Phi ^{(2)})^n\) need not be small in \(L^1({\bar{{{\mathbb {P}}}}}\otimes \mu \otimes \mu )\) uniformly in n, even if \(h-{\hat{h}}\) is small. To that end, we first prove a short lemma:

Lemma D.1

Let \(h:\Omega \times X\times X\rightarrow {{\mathbb {R}}}\) belong to \(L^2({\bar{{{\mathbb {P}}}}}\otimes \mu \otimes \mu )\). Then

holds for all \(n\ge 0\) with K as in (46).

Proof

Write \(\lambda = {\bar{{{\mathbb {P}}}}}\otimes \mu \otimes \mu \) for brevity. Observe that

by Hölder’s inequality. Here

since \({\bar{{{\mathbb {P}}}}}\) is stationary. On the other hand,

by (46). Combining the estimates and taking square roots yields the result. \(\square \)

D.1 Standing Assumption (SA5\('\)) Implies (SA5)

Lemma D.2

There exists an invariant measure \(\mathbf {P}^{(2)}\) for the RDS \(\varphi ^{(2)}\) such that

for all bounded measurable \(h:\Omega \times X\times X\rightarrow {{\mathbb {R}}}.\) Moreover, (30) holds, and \(\mathbf {P}\) in (31) is an invariant measure for the RDS \(\varphi \) such that

for all bounded measurable \({\tilde{h}}:\Omega \times X\rightarrow {{\mathbb {R}}}.\)

Proof

Let \(u:\Omega \rightarrow {{\mathbb {R}}}\) and \(g^1,g^2:X\rightarrow {{\mathbb {R}}}\) be bounded measurable. Let \(\varepsilon >0.\) Then there exists \(N\ge 0\) such that

for all \(n\ge N\) and \(m\ge 0.\) Here the third line uses (47) and the fourth line uses stationarity. Thus, we see that the sequence \(\left( \iiint (u\otimes g^1\otimes g^2)\circ (\Phi ^{(2)})^{n}(\omega ,x,y)\, \mathrm {d}\mu (x)\, \mathrm {d}\mu (y)\,\mathrm {d}{\bar{{{\mathbb {P}}}}}(\omega ) \right) _n\) is Cauchy and therefore convergent. We will show using the monotone class theorem that the convergence property extends to an arbitrary bounded measurable function in place of \(u\otimes g^1\otimes g^2.\)

Let \({{\mathcal {H}}}\) denote the set of all measurable functions \(h:\Omega \times X\times X\rightarrow {{\mathbb {R}}}\) such that \(\lim _{n\rightarrow \infty }\int h\circ (\Phi ^{(2)})^n\,\mathrm {d}({\bar{{{\mathbb {P}}}}}\otimes \mu \otimes \mu )\) exists. Let \({{\mathcal {A}}}\) denote the set of all measurable rectangles \(E\times B_1\times B_2\) with \(E\in {{\mathcal {F}}}\) and \(B_1,B_2\in {{\mathcal {B}}}.\) Clearly \({{\mathcal {A}}}\) is nonempty and closed under finite intersections, and it contains the product space \(\Omega \times X\times X.\) Clearly \({{\mathcal {H}}}\) is closed under linear combinations. Furthermore, the argument above (with \(u = 1_E\) and \(g^j = 1_{B_j}\)) shows \(1_A\in {{\mathcal {H}}}\) for all \(A\in {{\mathcal {A}}}.\) Suppose now that \(h_k\in {{\mathcal {H}}}\) are nonnegative functions increasing to a bounded function h. By the monotone class theorem, showing \(h\in {{\mathcal {H}}}\) proves that \({{\mathcal {H}}}\) contains all bounded functions that are measurable with respect to the sigma-algebra \(\sigma ({{\mathcal {A}}}) = {{\mathcal {F}}}\otimes {{\mathcal {B}}}\otimes {{\mathcal {B}}}.\) We show \(h\in {{\mathcal {H}}}\) next.

Let \(\varepsilon >0\) be fixed. Since \(0\le h_k\uparrow h\) where h is bounded, by the bounded convergence theorem there exists \(k_0 = k_0(\varepsilon )\) such that \( \Vert h-h_{k_0}\Vert _{L^2({\bar{{{\mathbb {P}}}}}\otimes \mu \otimes \mu )} < \varepsilon . \) Thus, by Lemma D.1,

for all \(n\ge 1.\) Since \(h_{k_0}\in {{\mathcal {H}}},\) there exists \(n_0 = n_0(\varepsilon )\) such that

for all \(n\ge n_0.\) A combination of the estimates yields

for all \(n\ge n_0.\) Hence \(h\in {{\mathcal {H}}}.\) Therefore, by the monotone class theorem \({{\mathcal {H}}}\) contains all bounded measurable functions.

By the Vitali–Hahn–Saks theorem there exists a probability measure \(\mathbf {P}^{(2)}\) satisfying

for all bounded measurable \(h:\Omega \times X\times X\rightarrow {{\mathbb {R}}}.\) The symmetry property (30) of \(\mathbf {P}^{(2)}\) is an immediate consequence. By the same token \(\mathbf {P}^{(2)}\) is invariant for \(\Phi ^{(2)}\):

Furthermore, taking h of the form \(h(\omega ,x,y) = u(\omega ),\)

shows \((\Pi _1)_*\mathbf {P}^{(2)} = {\bar{{{\mathbb {P}}}}}.\) Thus, \(\mathbf {P}^{(2)}\) is an invariant measure for the RDS \(\varphi ^{(2)}.\)

Suppose that either \(h(\omega ,x,y) = {\tilde{h}}(\omega ,x)\) or \(h(\omega ,x,y) = {\tilde{h}}(\omega ,y)\) holds identically. Then

This yields the claims concerning \(\mathbf {P}.\)\(\square \)

We are now in a position to prove the promised fact:

Lemma D.3

Standing Assumption \((\mathrm{SA}5')\) implies \((\mathrm{SA}5).\)

Proof

By Lemma D.2 it remains to verify (32)–(34). Using Lemma D.2,

and

Likewise

The proof is complete. \(\square \)

D.2 Disintegration of the Invariant Measure \(\mathbf {P}^{(2)}\)

In this subsection we shed some light on the invariant measure \(\mathbf {P}^{(2)}\) of the RDS \(\varphi ^{(2)}\) with the aid of disintegrations. The mathematical constructions here are well known, and we include this part for completeness. The results require some regularity of the measurable spaces: we assume that both \((X,{{\mathcal {B}}})\) and \((\Omega _0,{{\mathcal {E}}})\) are standard measurable spaces.

We begin by stating a basic fact:

Lemma D.4

There exists a family of set functions \(\nu ^{(2)}_\omega :{{\mathcal {B}}}\otimes {{\mathcal {B}}}\rightarrow [0,1],\,\omega \in \Omega ,\) such that

- (i)

the map \(\omega \mapsto \nu ^{(2)}_\omega (B)\) is measurable for all \(B\in {{\mathcal {B}}}\otimes {{\mathcal {B}}};\)

- (ii)

\(\nu ^{(2)}_\omega \) is a probability measure for \({\bar{{{\mathbb {P}}}}}\)-a.e. \(\omega \in \Omega ;\)

- (iii)

for all \(h\in L^1(\mathbf {P}^{(2)}),\)

$$\begin{aligned} \int h\,\mathrm {d}\mathbf {P}^{(2)} = \int _\Omega \int _{X\times X} h(\omega ,x,y)\,\mathrm {d}\nu ^{(2)}_{\omega }(x,y)\,\mathrm {d}{\bar{{{\mathbb {P}}}}}(\omega ). \end{aligned}$$

The disintegration is essentially unique: if \({\tilde{\nu }}^{(2)}_\omega ,\, \omega \in \Omega ,\) is another family of such set functions, then \(\nu ^{(2)}_\omega = {\tilde{\nu }}^{(2)}_\omega \) for \({\bar{{{\mathbb {P}}}}}\)-a.e. \(\omega \in \Omega .\)

Proof

Since the product space \((X\times X, {{\mathcal {B}}}\otimes {{\mathcal {B}}})\) is also a standard measurable space and \((\Pi _1)_*\mathbf {P}^{(2)} = {\bar{{{\mathbb {P}}}}},\) classical results yield the lemma; see, e.g., Arnold [3, Proposition 1.4.3]. \(\square \)

It is helpful to think of \(\nu ^{(2)}_\omega \) as the conditional measure \(\mathbf {P}^{(2)}(\,\cdot \,|\,\omega ).\) In the following we will characterize the conditional measures \(\nu ^{(2)}_{\omega }.\)

Next, we extend \({\bar{{{\mathbb {P}}}}}\) to a stationary measure on the space of two-sided sequences. To that end define \(\Omega ^- = \Omega _0^{\{\ldots ,-2,-1,0\}}\) and \(\Omega ^+ = \Omega _0^{\{1,2,3,\ldots \}} = \Omega .\) The sigma-algebras \({{\mathcal {F}}}^-\) and \({{\mathcal {F}}}^+={{\mathcal {F}}}\) denote the corresponding products of \({{\mathcal {E}}}.\) Write also

Let \({\bar{\tau }}:{\bar{\Omega }}\rightarrow {\bar{\Omega }}\) denote the two-sided shift: \(({\bar{\tau }}^k{\bar{\omega }})_i = {\bar{\omega }}_{i+k}\) for all \(i,k\in {{\mathbb {Z}}}.\) Finally, let \(\Pi ^\pm :{\bar{\Omega }}\rightarrow \Omega ^\pm \) denote the canonical projections: \(\Pi ^-({\bar{\omega }}) = \omega ^-\) and \(\Pi ^+({\bar{\omega }}) = \omega ^+\) for all \({\bar{\omega }} = (\omega ^-,\omega ^+)\in \Omega ^-\times \Omega ^+.\)

We are ready to state another basic fact:

Lemma D.5

(1) There exists a unique probability measure \({\bar{{{\mathbb {Q}}}}}\) on \(({\bar{\Omega }},{\bar{{{\mathcal {F}}}}})\) which is invariant for \({\bar{\tau }}\) and satisfies \((\Pi ^+)_*{\bar{{{\mathbb {Q}}}}} = {\bar{{{\mathbb {P}}}}}.\)

(2) There exists an essentially unique family of set functions \(q_\omega :{{\mathcal {F}}}^-\rightarrow [0,1], \,\omega \in \Omega ,\) such that

- (i)

the map \(\omega \mapsto q_\omega (E)\) is measurable for all \(E\in {{\mathcal {F}}}^-;\)

- (ii)

\(q_\omega \) is a probability measure for \({\bar{{{\mathbb {P}}}}}\)-a.e. \(\omega \in \Omega ;\)

- (iii)

for all \(h\in L^1({\bar{{{\mathbb {Q}}}}}),\)

$$\begin{aligned} \int _{{\bar{\Omega }}} h({\bar{\omega }})\,\mathrm {d}{\bar{{{\mathbb {Q}}}}}({\bar{\omega }}) = \int _\Omega \int _{\Omega ^-} h(\omega ^-,\omega )\,\mathrm {d}q_{\omega }(\omega ^-)\,\mathrm {d}{\bar{{{\mathbb {P}}}}}(\omega ). \end{aligned}$$

Proof

(1) Since \((\Omega _0,{{\mathcal {E}}})\) is a standard measurable space, the shift-invariant measure \({\bar{{{\mathbb {Q}}}}}\) having \({\bar{{{\mathbb {P}}}}}\) as its marginal is uniquely constructed with the aid of Kolmogorov’s extension theorem by requiring that the finite dimensional distributions are translation invariant and coincide with those of \({\bar{{{\mathbb {P}}}}}.\) See, e.g., Arnold [3, Appendix A.3] for details.

(2) Since \((\Omega ^-,{{\mathcal {F}}}^-)\) is a standard measurable space and \((\Pi ^+)_*{\bar{{{\mathbb {Q}}}}} = {\bar{{{\mathbb {P}}}}},\) the result is classical as in Lemma D.4. \(\square \)

The resulting dynamical system \(({\bar{\Omega }},{\bar{{{\mathcal {F}}}}},{\bar{{{\mathbb {Q}}}}},{\bar{\tau }})\) is the natural extension of \((\Omega ,{{\mathcal {F}}},{\bar{{{\mathbb {P}}}}},\tau )\) with homomorphism \(\Pi ^+.\) The intuition behind the measures in Lemma D.5 is the following: Think of \(\omega = (\omega _1,\omega _2,\ldots )\) as a stochastic process with law \({\bar{{{\mathbb {P}}}}}.\) Due to stationarity, it is possible to glue a history \(\omega ^- = (\ldots ,\omega _{-1},\omega _0)\) to \(\omega \) in a consistent and unique way such that the law \({\bar{{{\mathbb {Q}}}}}\) of \({\bar{\omega }} = (\omega ^-,\omega ) = (\ldots ,\omega _{-1},\omega _0,\omega _1,\omega _2,\ldots )\) is stationary and the marginal law corresponding to the future part \(\omega \) is \({\bar{{{\mathbb {P}}}}}.\) The measure \(q_\omega \) can be thought of as the conditional law \({\bar{{{\mathbb {Q}}}}}(\,\cdot \,|\,\omega ),\) the distribution of the past \(\omega ^{-}\) given the future \(\omega .\)
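Consider the special case where \(\omega \) is a stationary Markov chain on a finite alphabet. This is an assumption made only for this illustration; Lemma D.5 needs no Markov structure.

In that case the conditional law \(q_\omega \) of the past given the future depends only on \(\omega _1\). It is the law of the time-reversed chain started at \(\omega _1\), whose kernel is \({\hat{P}}(i,j) = \pi _jP(j,i)/\pi _i\) with \(\pi \) the stationary distribution.

The sketch builds \({\hat{P}}\) for a hypothetical non-reversible three-state chain. It then checks that gluing the reversed past onto the future reproduces the stationary law of every finite window, which is the consistency required of \({\bar{{{\mathbb {Q}}}}}\).

```python
from fractions import Fraction as Fr

def reversed_kernel(P, pi):
    """Time reversal of a stationary Markov kernel P with stationary law pi:
    Phat[i][j] = pi[j] * P[j][i] / pi[i], the law of omega_0 given omega_1 = i."""
    n = len(pi)
    if any(sum(pi[i] * P[i][j] for i in range(n)) != pi[j] for j in range(n)):
        raise ValueError("pi is not stationary for P")
    return [[pi[j] * P[j][i] / pi[i] for j in range(n)] for i in range(n)]

def forward_prob(P, pi, states):
    """Probability of the chronological window `states` under the stationary forward chain."""
    prob = pi[states[0]]
    for a, b in zip(states, states[1:]):
        prob *= P[a][b]
    return prob

def glued_prob(Phat, pi, states):
    """Same window built from the future backwards: start from the law pi of the
    latest state, then glue on each earlier state with the reversed kernel."""
    prob = pi[states[-1]]
    for a, b in zip(states, states[1:]):
        prob *= Phat[b][a]  # probability of the earlier state a given the later state b
    return prob

# Hypothetical non-reversible chain; its stationary law is (1/4, 1/2, 1/4).
P = [[Fr(0), Fr(1), Fr(0)], [Fr(0), Fr(1, 2), Fr(1, 2)], [Fr(1), Fr(0), Fr(0)]]
pi = [Fr(1, 4), Fr(1, 2), Fr(1, 4)]
Phat = reversed_kernel(P, pi)
```

The agreement of `forward_prob` and `glued_prob` is a telescoping identity in the ratios \(\pi _{s_t}/\pi _{s_{t+1}}\).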

For the following it will be convenient to introduce the notations

and

for any finite sequence \((\omega ^-_{-n+1},\ldots ,\omega ^-_0)\in \Omega _0^n.\)

Now, for all bounded measurable functions \(h(\omega ,x,y) = u(\omega )g(x,y)\) we have

On the other hand, Lemma D.2 yields

In order to disintegrate \({\bar{{{\mathbb {Q}}}}},\) let us write \({\bar{\omega }} = (\omega ^-,\omega )\) in the obvious manner, noting that \(u(\Pi ^+({\bar{\omega }})) = u(\omega )\) and \(\varphi ^{(2)}(n,\Pi ^+({\bar{\tau }}^{-n}{\bar{\omega }})) = \varphi ^{(2)}(\omega ^-_{-n+1},\ldots ,\omega ^-_0).\) Thus, Lemma D.5 yields

The following observation is now key:

Lemma D.6

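Given \(\omega ^-\in \Omega ^-,\) there exists a probability measure \(\mu _{\omega ^{-}}\) on \((X,{{\mathcal {B}}})\) such that

\[\lim _{n\rightarrow \infty }\int g\circ \varphi ^{(2)}(\omega ^-_{-n+1},\ldots ,\omega ^-_0)\,\mathrm {d}(\mu \otimes \mu ) = \int g\,\mathrm {d}(\mu _{\omega ^-}\otimes \mu _{\omega ^-}) \tag{50}\]

for all bounded measurable \(g:X\times X\rightarrow {{\mathbb {R}}}.\)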

Note that \(\mu _{\omega ^-}\) can be interpreted as the pushforward of \(\mu \) from the infinitely distant past along the history \(\omega ^- = (\ldots ,\omega ^-_{-1},\omega ^-_0).\)
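A toy computation shows how the pushforward from the distant past can stabilize. The model is a hypothetical assumption, far stronger than (SA5\('\)). It takes \(X=\{0,1,2\}\), lets symbol 0 act as a rotation and symbol 1 as the constant map to 0. Once a constant map appears in the history, memory of \(\mu \) is lost exactly. The history is read most recent first, because extending it one step into the past precomposes with one more map. The function names are illustrative.

```python
import numpy as np

def pushforward(table, mu):
    """Pushforward of a probability vector mu on X = {0, ..., k-1} under x -> table[x]."""
    out = np.zeros(len(mu))
    for x, mass in enumerate(mu):
        out[table[x]] += mass
    return out

def pullback_sequence(maps, past_recent_first, mu):
    """phi(w_{-n+1}, ..., w_0)_* mu for n = 1, 2, ..., with the history given as w_0, w_{-1}, ...

    phi(w_{-n}, ..., w_0) = phi(w_{-n+1}, ..., w_0) o T_{w_{-n}}: each older map is precomposed.
    """
    k = len(mu)
    table = list(range(k))  # identity map, the case n = 0
    measures = []
    for symbol in past_recent_first:
        T = maps[symbol]
        table = [table[T[x]] for x in range(k)]
        measures.append(pushforward(table, mu))
    return measures

# Toy assumption: symbol 0 is a rotation of X = {0, 1, 2}, symbol 1 the constant map to 0.
maps = {0: (1, 2, 0), 1: (0, 0, 0)}
mu = np.full(3, 1 / 3)
seq = pullback_sequence(maps, [0, 0, 1, 0, 1, 0], mu)
for n, m in enumerate(seq, start=1):
    print(n, m)  # from n = 3 on, every entry is the point mass at 2
```

In this model the sequence is eventually constant, so it is trivially Cauchy and its limit plays the role of \(\mu _{\omega ^-}\). Under (SA5\('\)) the stabilization is only approximate.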

Proof of Lemma D.6

Consider first a bounded measurable \(g^1:X\rightarrow {{\mathbb {R}}}.\) Let \(\varepsilon >0.\) By (47) of (SA5\('\)) there exists \(N\ge 0\) such that

for all \(n\ge N\) and \(m\ge 0.\) We see that \((\int g^1\circ \varphi (\omega ^-_{-n+1},\ldots ,\omega ^-_0)\,\mathrm {d}\mu )_{n=1}^{\infty }\) is a Cauchy sequence and thus converges. Since \(g^1\) was arbitrary, the Vitali–Hahn–Saks theorem yields the existence of a probability measure \(\mu _{\omega ^-}\) such that

This yields (50) for all \(g(x,y) = g^1(x)g^2(y)\) with both \(g^1,g^2:X\rightarrow {{\mathbb {R}}}\) bounded and measurable. Similarly to the proof of Lemma D.2, a straightforward application of the monotone class theorem extends (50) to all bounded measurable \(g:X\times X\rightarrow {{\mathbb {R}}}.\)\(\square \)
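The step from one-point functions to product functions rests on one fact: the two-point map sends a product measure to a product measure, \((f\times f)_*(\mu \otimes \mu ) = f_*\mu \otimes f_*\mu \). Hence \(\int g^1(x)g^2(y)\,\mathrm {d}(f\times f)_*(\mu \otimes \mu ) = \int g^1\,\mathrm {d}f_*\mu \int g^2\,\mathrm {d}f_*\mu \), and each factor converges separately. A finite-\(X\) check in Python follows. The map `table` and the test functions are arbitrary hypothetical choices.

```python
import numpy as np

def pushforward(table, mu):
    """Pushforward of a probability vector mu on X = {0, ..., k-1} under x -> table[x]."""
    out = np.zeros(len(mu))
    for x, mass in enumerate(mu):
        out[table[x]] += mass
    return out

def pushforward_pair(table, mu2):
    """Pushforward of a probability matrix mu2 on X x X under (x, y) -> (table[x], table[y])."""
    k = len(table)
    out = np.zeros((k, k))
    for x in range(k):
        for y in range(k):
            out[table[x], table[y]] += mu2[x, y]
    return out

table = (0, 2, 0)              # an arbitrary map X -> X, X = {0, 1, 2}
mu = np.array([0.2, 0.5, 0.3])
g1 = np.array([1.0, -2.0, 4.0])
g2 = np.array([0.5, 3.0, -1.0])

lhs = pushforward_pair(table, np.outer(mu, mu))
rhs = np.outer(pushforward(table, mu), pushforward(table, mu))
print(np.allclose(lhs, rhs))  # True
# integral of g1(x) g2(y) against lhs equals the product of the one-point integrals
print(np.isclose(g1 @ lhs @ g2, (g1 @ pushforward(table, mu)) * (g2 @ pushforward(table, mu))))  # True
```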

We finally arrive at the characterization of the conditional measure \(\nu ^{(2)}_\omega \) as the expected pushforward of \(\mu \otimes \mu \) from the infinitely distant past along all histories consistent with \(\omega \):

Corollary D.7

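For \({\bar{{{\mathbb {P}}}}}\)-a.e. \(\omega \in \Omega ,\)

\[\nu ^{(2)}_\omega = \int _{\Omega ^-}\mu _{\omega ^-}\otimes \mu _{\omega ^-}\,\mathrm {d}q_\omega (\omega ^-).\]
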
Proof

Equating first the expressions of \(\int h\,\mathrm {d}\mathbf {P}^{(2)}\) in (48) and (49), and then applying (50) to the latter, we obtain

Since the conditional measures \(\nu ^{(2)}_\omega \) are unique up to a \({\bar{{{\mathbb {P}}}}}\)-null set of \(\omega ,\) the claim follows. \(\square \)
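Corollary D.7 describes \(\nu ^{(2)}_\omega \) as an average of product measures, and such an average is in general not a product. A Monte Carlo sketch illustrates this under several hypothetical assumptions. The symbols are i.i.d., so the past is independent of the future and \(q_\omega \) is the same i.i.d. law for every \(\omega \). The space is \(X=\{0,1,2\}\), with symbol 0 acting as a rotation and symbol 1 as the constant map to 0, each chosen with probability 1/2. The infinite past is truncated at a finite depth. Function names are illustrative.

```python
import numpy as np

def pushforward_along(maps, past_recent_first, mu):
    """phi(w_{-n+1}, ..., w_0)_* mu on X = {0, ..., k-1}; the history is read as w_0, w_{-1}, ..."""
    k = len(mu)
    table = list(range(k))
    for symbol in past_recent_first:
        T = maps[int(symbol)]
        table = [table[T[x]] for x in range(k)]  # precompose with the next older map
    out = np.zeros(k)
    for x, mass in enumerate(mu):
        out[table[x]] += mass
    return out

def estimate_nu2(maps, probs, mu, depth, samples, rng):
    """Monte Carlo estimate of E[mu_{w^-} (x) mu_{w^-}] over i.i.d. pasts truncated at `depth`."""
    symbols = list(maps)
    k = len(mu)
    nu2 = np.zeros((k, k))
    for _ in range(samples):
        past = rng.choice(symbols, size=depth, p=probs)
        m = pushforward_along(maps, past, mu)
        nu2 += np.outer(m, m)
    return nu2 / samples

maps = {0: (1, 2, 0), 1: (0, 0, 0)}  # toy assumption: rotation and constant map
rng = np.random.default_rng(1)
nu2 = estimate_nu2(maps, [0.5, 0.5], np.full(3, 1 / 3), depth=60, samples=4000, rng=rng)
marginal = nu2.sum(axis=1)
print(np.round(nu2, 3))                                # all mass on the diagonal x = y
print(np.allclose(nu2, np.outer(marginal, marginal)))  # False: not a product measure
```

In this model every \(\mu _{\omega ^-}\) is a point mass, so \(\nu ^{(2)}\) lives on the diagonal. The number of rotations since the most recent constant map is geometric, which gives the diagonal law \((4/7,2/7,1/7)\).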

Finally, let us point out that the invariance of \(\mathbf {P}^{(2)}\) is equivalent to

holding for almost all \(\omega \) with respect to \({\bar{{{\mathbb {P}}}}},\) for all \(m\ge 1.\) The equation means that

holds for all bounded measurable functions \(g:X\times X\rightarrow {{\mathbb {R}}}\) and \(u:\Omega \rightarrow {{\mathbb {R}}}.\) It is a good exercise for the interested reader to reprove the invariance of \(\mathbf {P}^{(2)}\) by verifying the equation above directly, using Corollary D.7, Lemma D.6 and Lemma D.5.
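The exercise can be checked numerically in a special case. Suppose the symbols are i.i.d., \(X=\{0,1,2\}\), symbol 0 acts as a rotation and symbol 1 as the constant map to 0, each with probability 1/2. Then \(\nu ^{(2)}_\omega \) does not depend on \(\omega \). Suppose also that \(\mathbf {P}^{(2)}\) is invariant under the two-point skew product \((\omega ,x,y)\mapsto (\tau \omega ,T_{\omega _1}x,T_{\omega _1}y)\). Because \(\omega _1\) is independent of \(\tau \omega \), invariance then reduces to the averaged identity \(\sum _a p_a\,(T_a\times T_a)_*\nu ^{(2)} = \nu ^{(2)}\). All of this is a hypothetical setup. The Python sketch computes \(\nu ^{(2)}\) from the formula of Corollary D.7, averaging exactly over all histories of a truncated depth, and then tests the identity.

```python
import itertools
import numpy as np

def pushforward_pair(table, nu2):
    """Pushforward of a probability matrix on X x X under (x, y) -> (table[x], table[y])."""
    k = len(table)
    out = np.zeros((k, k))
    for x in range(k):
        for y in range(k):
            out[table[x], table[y]] += nu2[x, y]
    return out

def nu2_truncated(maps, probs, mu, depth):
    """Exact average of phi^{(2)}(history)_*(mu x mu) over all i.i.d. histories of length `depth`."""
    symbols = list(maps)
    k = len(mu)
    nu2 = np.zeros((k, k))
    for past in itertools.product(range(len(symbols)), repeat=depth):  # most recent symbol first
        weight = np.prod([probs[i] for i in past])
        table = list(range(k))
        for i in past:
            T = maps[symbols[i]]
            table = [table[T[x]] for x in range(k)]  # precompose with the next older map
        nu2 += weight * pushforward_pair(table, np.outer(mu, mu))
    return nu2

def averaged_step(maps, probs, nu2):
    """One step of the averaged two-point chain: sum_a p_a (T_a x T_a)_* nu2."""
    return sum(p * pushforward_pair(maps[s], nu2) for s, p in zip(maps, probs))

maps = {0: (1, 2, 0), 1: (0, 0, 0)}  # toy assumption: rotation and constant map
probs = [0.5, 0.5]
nu2 = nu2_truncated(maps, probs, np.full(3, 1 / 3), depth=12)
print(np.abs(averaged_step(maps, probs, nu2) - nu2).max())  # small, of order 2**-12
```

The residual is not exactly zero. It comes only from truncating the past at depth 12, since histories without a constant map carry total weight \(2^{-12}\).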

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Hella, O., Stenlund, M. Quenched Normal Approximation for Random Sequences of Transformations. J Stat Phys 178, 1–37 (2020). https://doi.org/10.1007/s10955-019-02390-5

DOI: https://doi.org/10.1007/s10955-019-02390-5