Abstract

This paper illustrates how a deterministic approximation of a stochastic process can be usefully applied to analyse the dynamics of many simple simulation models. To demonstrate the type of results that can be obtained using this approximation, we present two illustrative examples which are meant to serve as methodological references for researchers exploring this area. Finally, we prove some convergence results for simulations of a family of evolutionary games, namely, intra-population imitation models in n-player games with arbitrary payoffs.
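The central idea, that a stochastic process with small step size can be tracked by a deterministic trajectory, can be illustrated with a minimal sketch. The field f(x) = −x, the uniform noise on [−1, 1], and every function name below are hypothetical choices made only for illustration; they are not the paper's models. The process moves by γ times a random increment whose conditional mean is f(x_n), and its path is compared with the solution x0·exp(−t) of dx/dt = f(x) over a finite horizon.

```python
import math
import random


def f(x: float) -> float:
    # Hypothetical expected-motion field (assumption): dx/dt = -x.
    return -x


def stochastic_path(x0: float, gamma: float, steps: int, rng: random.Random) -> list[float]:
    """Iterate x_{n+1} = x_n + gamma * (f(x_n) + noise_n), with zero-mean noise_n ~ U(-1, 1)."""
    xs = [x0]
    x = x0
    for _ in range(steps):
        x = x + gamma * (f(x) + rng.uniform(-1.0, 1.0))
        xs.append(x)
    return xs


def max_deviation(x0: float, gamma: float, horizon: float, seed: int = 0) -> float:
    """Largest gap on [0, horizon] between the stochastic path and the ODE solution x0*exp(-t)."""
    rng = random.Random(seed)
    steps = int(horizon / gamma)
    xs = stochastic_path(x0, gamma, steps, rng)
    # Step n of the process is compared with time t = n * gamma of the ODE.
    return max(abs(x - x0 * math.exp(-n * gamma)) for n, x in enumerate(xs))


if __name__ == "__main__":
    for gamma in (0.1, 0.01, 0.001):
        print(gamma, max_deviation(1.0, gamma, horizon=5.0))
```

Shrinking γ typically shrinks the printed deviation: the noise averages out over many small steps, while the mean motion accumulates along the ODE trajectory.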

Notes

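A function \(f(x,\gamma)\) is \(O(\gamma)\) as \(\gamma \to 0\) uniformly in \(x \in I\) iff there exist constants \(\delta > 0\) and \(M > 0\), independent of x, such that \(|f(x,\gamma)| \le M \cdot |\gamma|\) for \(|\gamma| < \delta\) and for every \(x \in I\).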
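A short worked example may help show what "uniformly" adds. The two functions below are illustrative choices, not taken from the paper: the first is uniformly O(γ) on the whole real line, while the second is O(γ) for each fixed x but not uniformly on ℝ.

```latex
% f(x,\gamma) = \gamma \sin x on I = \mathbb{R}: uniformly O(\gamma), with M = 1 and any \delta
|\gamma \sin x| \le 1 \cdot |\gamma| \quad \text{for all } x \in \mathbb{R}.
% g(x,\gamma) = \gamma x on I = \mathbb{R}: no single M works for every x
\forall M > 0:\quad x = M + 1 \ \Rightarrow\ |\gamma x| = (M+1)\,|\gamma| > M\,|\gamma| \quad (\gamma \neq 0).
% On a bounded set I \subseteq [-R, R] with R > 0, g is uniformly O(\gamma) with M = R
|\gamma x| \le R\,|\gamma| \quad \text{for all } x \in I.
```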

References

Banisch, S., Lima, R., Araújo, T.: Agent based models and opinion dynamics as Markov chains. Social Networks. doi:10.1016/j.socnet.2012.06.001

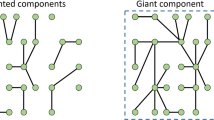

Barrat, A., Barthélemy, M., Vespignani, A.: Dynamical Processes on Complex Networks. Cambridge University Press, Cambridge (2008)

Beggs, A.W.: Stochastic evolution with slow learning. J. Econ. Theory 19(2), 379–405 (2002)

Beggs, A.W.: On the convergence of reinforcement learning. J. Econ. Theory 122(1), 1–36 (2005)

Beggs, A.W.: Large deviations and equilibrium selection in large populations. J. Econ. Theory 132(1), 383–410 (2007)

Benaïm, M., Le Boudec, J.-Y.: A class of mean field interaction models for computer and communication systems. Perform. Eval. 65(11–12), 823–838 (2008)

Benaim, M., Weibull, J.W.: Deterministic approximation of stochastic evolution in games. Econometrica 71(3), 873–903 (2003)

Benveniste, A., Priouret, P., Metivier, M.: Adaptive Algorithms and Stochastic Approximations. Springer, New York (1990)

Binmore, K.G., Samuelson, L., Vaughan, R.: Musical chairs: modeling noisy evolution. Games Econ. Behav. 11(1), 1–35 (1995)

Börgers, T., Sarin, R.: Learning through reinforcement and replicator dynamics. J. Econ. Theory 77(1), 1–14 (1997)

Borkar, V.S.: Stochastic Approximation: A Dynamical Systems Viewpoint. Cambridge University Press, New York (2008)

Borrelli, R.L., Coleman, C.S.: Differential Equations: A Modeling Approach. Prentice-Hall, Englewood Cliffs (1987)

Boylan, R.T.: Laws of large numbers for dynamical systems with randomly matched individuals. J. Econ. Theory 57(2), 473–504 (1992)

Boylan, R.T.: Continuous approximation of dynamical systems with randomly matched individuals. J. Econ. Theory 66(2), 615–625 (1995)

Erev, I., Roth, A.E.: Predicting how people play games: reinforcement learning in experimental games with unique, mixed strategy equilibria. Am. Econ. Rev. 88(4), 848–881 (1998)

Ethier, S.N., Kurtz, T.G.: Markov Processes: Characterization and Convergence. Wiley Series in Probability and Statistics (2005)

Fudenberg, D., Kreps, D.M.: Learning mixed equilibria. Games Econ. Behav. 5(3), 320–367 (1993)

Fudenberg, D., Levine, D.K.: The Theory of Learning in Games. MIT Press, Cambridge (1998)

Galán, J.M., Latek, M.M., Rizi, S.M.M.: Axelrod’s metanorm games on networks. PLoS ONE 6(5), e20474 (2011)

Gilbert, N., Troitzsch, K.G.: Simulation for the Social Scientist. McGraw-Hill, New York (2005)

Gintis, H.: Markov models of social dynamics: theory and applications. ACM Trans. Intell. Syst. Technol. (in press). Available at http://www.umass.edu/preferen/gintis/acm-tist-markov.pdf, http://tist.acm.org/papers/TIST-2011-05-0076.R2.html

Gleeson, J.P., Melnik, S., Ward, J.A., Porter, M.A., Mucha, P.J.: Accuracy of mean-field theory for dynamics on real-world networks. Phys. Rev. E 85(2), 026106 (2012)

Hairer, E., Nørsett, S., Wanner, G.: Solving Ordinary Differential Equations I: Nonstiff Problems. Springer, Berlin (1993)

Hofbauer, J., Sandholm, W.H.: On the global convergence of stochastic fictitious play. Econometrica 70(6), 2265–2294 (2002)

Hopkins, E.: Two competing models of how people learn in games. Econometrica 70(6), 2141–2166 (2002)

Huet, S., Deffuant, G.: Differential equation models derived from an individual-based model can help to understand emergent effects. J. Artif. Soc. Soc. Simul. 11(2), 10 (2008)

Izquierdo, L.R., Izquierdo, S.S., Gotts, N.M., Polhill, J.G.: Transient and asymptotic dynamics of reinforcement learning in games. Games Econ. Behav. 61(2), 259–276 (2007)

Izquierdo, S.S., Izquierdo, L.R., Gotts, N.M.: Reinforcement learning dynamics in social dilemmas. J. Artif. Soc. Soc. Simul. 11(2), 1 (2008)

Izquierdo, L., Izquierdo, S., Galán, J.M., Santos, J.I.: Techniques to understand computer simulations: Markov chain analysis. J. Artif. Soc. Soc. Simul. 12(1), 6 (2009)

Izquierdo, S.S., Izquierdo, L.R., Vega-Redondo, F.: The option to leave: conditional dissociation in the evolution of cooperation. J. Theor. Biol. 267(1), 76–84 (2010)

Kotliar, G., Savrasov, S.Y., Haule, K., Oudovenko, V.S., Parcollet, O., Marianetti, C.A.: Electronic structure calculations with dynamical mean-field theory. Rev. Mod. Phys. 78(3), 865–951 (2006)

Kulkarni, V.G.: Modeling and Analysis of Stochastic Systems. Chapman & Hall/CRC, London (1995)

Kushner, H.: Stochastic approximation: a survey. WIREs: Comput. Stat. 2(1), 87–96 (2010)

Kushner, H.J., Yin, G.G.: Stochastic Approximation Algorithms and Applications. Springer, New York (2003)

Lambert, M.F.: Numerical Methods for Ordinary Differential Systems. Wiley, Chichester (1991)

Ljung, L.: Analysis of recursive stochastic algorithms. IEEE Trans. Autom. Control AC-22 (4), 551–575 (1977)

López-Pintado, D.: Diffusion in complex social networks. Games Econ. Behav. 62(2), 573–590 (2008)

Macy, M.W., Flache, A.: Learning dynamics in social dilemmas. Proc. Natl. Acad. Sci. USA 99(3), 7229–7236 (2002)

Morozov, A., Poggiale, J.C.: From spatially explicit ecological models to mean-field dynamics: the state of the art and perspectives. Ecol. Complex. 10, 1–11 (2012)

Norman, M.F.: Some convergence theorems for stochastic learning models with distance diminishing operators. J. Math. Psychol. 5(1), 61–101 (1968)

Norman, M.F.: Markov Processes and Learning Models. Academic Press, New York (1972)

Perc, M., Szolnoki, A.: Coevolutionary games—a mini review. Biosystems 99(2), 109–125 (2010)

Rozonoer, L.I.: On deterministic approximation of Markov processes by ordinary differential equations. Math. Probl. Eng. 4(2), 99–114 (1998)

Sandholm, W.H.: Deterministic evolutionary dynamics. In: Durlauf, S.N., Blume, L.E. (eds.) The New Palgrave Dictionary of Economics, vol. 2. Palgrave Macmillan, New York (2008)

Sandholm, W.H.: Stochastic imitative game dynamics with committed agents. J. Econ. Theory 147(5), 2056–2071 (2012)

Szabó, G., Fáth, G.: Evolutionary games on graphs. Phys. Rep. 446(4–6), 97–216 (2007)

Vega-Redondo, F.: Complex Social Networks. Cambridge University Press, Cambridge (2007)

Vega-Redondo, F.: Economics and the Theory of Games. Cambridge University Press, Cambridge (2003)

Weiss, P.: L’hypothèse du champ moléculaire et la propriété ferromagnétique. J. Phys. 6, 661–690 (1907)

Young, H.P.: Individual Strategy and Social Structure: An Evolutionary Theory of Institutions. Princeton University Press, Princeton (1998)

Acknowledgements

The authors gratefully acknowledge financial support from the Spanish Ministry of Education (JC2009-00263) and MICINN (CONSOLIDER-INGENIO 2010: CSD2010-00034, and DPI2010-16920). We also thank two anonymous reviewers for their useful comments.

Appendix: Approximation of Difference Equations by Differential Equations

This appendix discusses the relation between a discrete-time difference equation of the form \(\Delta x_n = \gamma f(x_n)\), where \(\Delta x_n = x_{n+1} - x_n\), with initial point \(x_0\), and the solution \(x(t, x_0)\) of the corresponding continuous-time differential equation \(\dot{x} = f(x)\) with \(x(t=0) = x_0\).
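A one-step Taylor expansion shows why the difference equation is Euler's method with step size γ. This short derivation assumes f is continuously differentiable, so that x(t) is twice differentiable; that is a convenient assumption for the sketch, not the weakest condition under which convergence holds.

```latex
% Assumption: f is C^1, so x(t) is C^2 and the remainder is O(\gamma^2) on compact time intervals.
x(t+\gamma) = x(t) + \gamma\,\dot{x}(t) + O(\gamma^{2})
            = x(t) + \gamma\, f\big(x(t)\big) + O(\gamma^{2}).
% Dropping the O(\gamma^2) remainder and writing x_n for the approximation of x(n\gamma):
x_{n+1} = x_n + \gamma f(x_n) \quad\Longleftrightarrow\quad \Delta x_n = \gamma f(x_n).
% Step n therefore corresponds to time t = n\gamma, and time T to step n = \operatorname{int}(T/\gamma).
```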

This relationship can be neatly formalised using Euler’s method [12]. If f(x) satisfies some conditions that guarantee convergence [23, 35], e.g. if f(x) is globally Lipschitz or if it is smooth on a forward-invariant compact domain of interest D, the difference between the solution \(x(t, x_0)\) of the differential equation at time \(t = T < \infty\) and the solution \(x_n\) of the difference equation at step \(n = \operatorname{int}(T/\gamma)\) converges to 0 as the step-size parameter γ tends to 0:

\[ \lim_{\gamma \to 0} \big\| x_{\operatorname{int}(T/\gamma)} - x(T, x_0) \big\| = 0, \]

and, for every T, with step size \(\gamma = T/N\), \(\max_{n = 0,1,\ldots,N}\|x_{n} - x(n\frac{T}{N},x_{0})\| \to 0\) as \(N \to \infty\).
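The second statement can be checked numerically with a minimal sketch. It assumes the scalar test equation dx/dt = −x, chosen only because its exact solution x0·exp(−t) is known; the function names are hypothetical. The step size is γ = T/N and the error is the maximum over all grid points n = 0, …, N.

```python
import math
from typing import Callable


def euler(f: Callable[[float], float], x0: float, gamma: float, steps: int) -> list[float]:
    """Iterate the difference equation x_{n+1} = x_n + gamma * f(x_n)."""
    xs = [x0]
    for _ in range(steps):
        xs.append(xs[-1] + gamma * f(xs[-1]))
    return xs


def max_error(N: int, T: float = 2.0, x0: float = 1.0) -> float:
    """max_{n=0..N} |x_n - x(n*T/N, x0)| for dx/dt = -x (assumed example), exact solution x0*exp(-t)."""
    gamma = T / N
    xs = euler(lambda x: -x, x0, gamma, N)
    return max(abs(xn - x0 * math.exp(-n * gamma)) for n, xn in enumerate(xs))


if __name__ == "__main__":
    for N in (10, 100, 1000, 10000):
        print(N, max_error(N))
```

For this smooth example the printed error shrinks roughly in proportion to 1/N, which is the first-order accuracy expected of Euler's method.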

As an example, consider a vector \(x = [x_1, x_2]\), the function \(f(x) = [x_2, -x_1]\), the differential equation \(\dot{x} = f(x)\) and its associated (deterministic) difference equation \(\Delta x_n = \gamma f(x_n)\). Figure 7 shows a trajectory map of the differential equation \(\dot{x} = f(x)\), together with several trajectories of discrete processes that follow the difference equation \(\Delta x_n = \gamma f(x_n)\), for different values of γ and for the same initial value \(x_0 = [x_1, x_2]_0\). As γ decreases and the finite number of steps \(n = \operatorname{int}(T/\gamma)\) correspondingly increases, the discrete process gets closer and closer to the trajectory of the differential equation that goes through \(x_0\). The reader can confirm this by running simulations with the interactive version of Fig. 7.
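The example can be reproduced with a minimal sketch. The exact solution through x0 is a clockwise rotation by angle t, which is easy to verify by differentiating. The horizon T = 2π, the initial point [1, 0] and the function names are illustrative assumptions, not values from the figure.

```python
import math

Vec = tuple[float, float]


def f(x: Vec) -> Vec:
    """Vector field of the example: f([x1, x2]) = [x2, -x1]."""
    return (x[1], -x[0])


def euler_endpoint(x0: Vec, gamma: float, T: float) -> Vec:
    """Apply x_{n+1} = x_n + gamma * f(x_n) for n = int(T / gamma) steps."""
    x = x0
    for _ in range(int(T / gamma)):
        fx = f(x)
        x = (x[0] + gamma * fx[0], x[1] + gamma * fx[1])
    return x


def exact(x0: Vec, t: float) -> Vec:
    """Solution of dx/dt = f(x) through x0: a clockwise rotation of x0 by angle t."""
    c, s = math.cos(t), math.sin(t)
    return (c * x0[0] + s * x0[1], -s * x0[0] + c * x0[1])


if __name__ == "__main__":
    x0, T = (1.0, 0.0), 2.0 * math.pi
    target = exact(x0, T)
    for gamma in (0.1, 0.01, 0.001):
        print(gamma, math.dist(euler_endpoint(x0, gamma, T), target))
```

Each Euler step multiplies the norm by exactly \(\sqrt{1+\gamma^2}\), so the discrete process spirals slowly outward while the true trajectories are circles. This is why, for a fixed γ, the discrete process stays close to the continuous trajectory only over a finite horizon, and why the gap at time T shrinks as γ decreases.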

Fig. 7 Convergence of difference and differential equations for small step size. Let \(x = [x_1, x_2]\) be a generic point in the real plane. The figure shows a trajectory map of the differential equation \(\dot{x} = f(x) = [x_2, -x_1]\), together with several trajectories of the discrete process that follows the difference equation \(x_{n+1} - x_n = \gamma f(x_n)\), for different values of the step-size parameter γ and for a chosen initial value \(x_0 = [x_1, x_2]_0\). The background is coloured using the norm of the expected motion, rescaled to lie in the interval (0,1). As γ decreases, the discrete process tends to follow the trajectory of the differential equation that goes through \(x_0\) more closely, although only temporarily, i.e. over a finite time horizon. Interactive figure at http://demonstrations.wolfram.com/DifferenceEquationVersusDifferentialEquation/

Cite this article

Izquierdo, S.S., Izquierdo, L.R. Stochastic Approximation to Understand Simple Simulation Models. J Stat Phys 151, 254–276 (2013). https://doi.org/10.1007/s10955-012-0654-z