Abstract

The administrative burden for physicians in the hospital can affect the quality of patient care. The Service Center Medical Informatics (SMI) of the University Hospital Würzburg developed and implemented the smartphone-based mobile application (MA) ukw.mobile, which uses speech recognition for the point-of-care ordering of radiological examinations. The aim of this study was to examine the usability of the MA workflow for the point-of-care ordering of radiological examinations. All physicians at the Department of Trauma and Plastic Surgery at the University Hospital Würzburg, Germany, were asked to participate in a survey including the short version of the User Experience Questionnaire (UEQ-S) and the Unified Theory of Acceptance and Use of Technology (UTAUT). For the analysis of the different domains of user experience (overall attractiveness, pragmatic quality and hedonic quality), we used a two-sided dependent sample t-test. For the determinants of the acceptance model, we employed regression analysis. Twenty-one of 30 physicians (mean age 34 ± 8 years, 62% male) completed the questionnaire. Compared to the conventional desktop application (DA) workflow, the new MA workflow showed superior overall attractiveness (mean difference 2.15 ± 1.33), pragmatic quality (mean difference 1.90 ± 1.16), and hedonic quality (mean difference 2.41 ± 1.62; all p < .001). The user acceptance measured by the UTAUT (mean 4.49 ± 0.41; min. 1, max. 5) was also high. Performance expectancy (beta = 0.57, p = .02) and effort expectancy (beta = 0.36, p = .04) were identified as predictors of acceptance; the full predictive model explained 65.4% of its variance. Point-of-care mHealth solutions using innovative technology such as speech recognition seem to address the users’ needs and to offer higher usability in comparison to conventional technology. Implementation of user-centered mHealth innovations might therefore help to facilitate physicians’ daily work.

Introduction

In most western countries, there is a growing number of administrative tasks, such as diagnostic ordering (e.g. imaging) and patient management, which divert time and distract the focus from a physician’s genuine clinical work, i.e. patient care. Physicians spend almost one-quarter of their working hours and up to 10.6 h per week on administrative duties as well as up to 15 h per week on quality measurement and reporting [1,2,3,4]. For every hour clinicians spend on direct clinical face time with patients, almost two additional hours are spent on electronic health records and deskwork [3]. Indeed, the burden of administrative tasks can affect the quality of patient care: in a nationwide survey in 2013 in the United States, 73% of physicians reported compromises in patient care due to documentation requirements [5]. Consequently, the American College of Physicians founded the “Patients before Paperwork” initiative in 2015 and published the position paper “Putting Patients First by Reducing Administrative Tasks in Health Care” in 2017. There, the authors suggested the “innovative use of health IT” as one possible solution to reduce the number of administrative tasks [6]. In 2018, the World Health Organization proposed a new classification of digital health interventions (DHI) that categorizes four complementary digital and mobile technologies addressing health system needs: DHI for clients, health care providers, health system managers, and data services [7]. Accordingly, DHI for health care providers including the sub-category Laboratory and Diagnostics Imaging Management can play an important role in overcoming the challenges described above [7].

Since 2010, the Service Center Medical Informatics (SMI) at the University Hospital of Würzburg has developed and implemented the mobile application (MA) ukw.mobile (see Notes), which grants access to all important features of the electronic health records (EHR) of patients. The MA was implemented in a stepwise and iterative fashion and is deeply integrated into the daily inpatient ward workflows. Apart from access to the EHR, the MA also offers photographic wound documentation, which has already been shown to improve the quality of billing [8]. The technical stack is implemented in three layers (see Fig. 1). The first layer is a native iPhone Operating System (iOS) app running on Apple iPads (nursing teams) and iPhones (all physicians), which are controlled by the hospital’s mobile device management. For privacy reasons, no data is stored on the devices but only in the hospital information system (HIS) i.s.h.med (Cerner Health Services Deutschland GmbH, Berlin, Germany). Secondly, all data is exchanged via middleware with the connected subsystems, e.g. the picture archiving and communication system (PACS) for radiological imaging data. Authentication and authorization are handled via the third, backend layer against the HIS. Like the desktop application (DA), the MA offers embedded speech recognition via Dragon Medical (Nuance Communications, Burlington, USA).

Fig. 1 Technical stack of the mobile application (MA) ukw.mobile. The iPhone Operating System (iOS) application frontend communicates with the hospital information system (HIS) and clinical subsystems via the middleware layer. ABAP denotes advanced business application programming language; ASP.NET, Active Server Pages.NET; API, application programming interface; HTML, hypertext markup language; IS-H, industry solution healthcare; JSON, JavaScript object notation; PACS, picture archiving and communication system; PDF, portable document format; XML, eXtensible markup language

In this study, we compare the conventional DA workflow with the new MA workflow for medical test ordering, e.g. ordering a conventional X-ray directly during the daily ward round.

The DA workflow, which serves as the reference, is a conventional personal computer process established in and with the tools of the clinical workplace system i.s.h.med. Professional documentation is carried out in parametrizable forms (see Appendix). As physicians need to locate a workstation first, they typically complete a series of documentation or order entry tasks at once. This might carry the risk of information loss or poor data quality. The full workflow is depicted in Fig. 2.

In the MA workflow (see Fig. 3), physicians use their personal mobile device, which is available anytime at the point of care, i.e. at the patient bedside. After logging in to the MA ukw.mobile via PIN or FaceID, physicians select the patient via the wristband and enter the radiological test order using speech recognition. The order is posted in the HIS at the radiological department, where the radiological personnel complete the ordering process by checking the forms and arranging the appointment. If relevant clinical information is missing or the order entry is ambiguous, medical assistants in the department of radiology reach out to the ordering physician through their mobile device. The frontend of the MA ukw.mobile for the radiological test ordering workflow is visualized in Fig. 4.

Fig. 4 MA ukw.mobile frontend with the steps of radiological test ordering: (1) Overview of the patient’s documents; (2) Start of a new workflow by choosing the clinical order form “Anordnung ZLM”; (3) Clinical order form “Anordnung ZLM” with free text field and order instructions for speech recognition; (4) After speech-to-text conversion, the order is ready to revise or save/send

The implementation of the MA workflow was not restricted to technical aspects alone, but also involved organisational change, i.e. letting radiological personnel fill out the structured HIS form. This relieves physicians of the documentation burden and is necessary for the workflow’s next iteration, which will be the automation of the form-filling process through artificial intelligence methods.

In a recently submitted publication, we showed that this MA workflow saved time in comparison to the conventional desktop application (DA) workflow. Both the time from medical indication to completion of the diagnostic test order and the duration of the test ordering itself were significantly reduced.

Besides these timesaving aspects, the subjective perspective and perception of each user is essential as it can either impede or promote the actual use of medical software solutions. In an international survey among medical and nursing directors of German and Austrian hospitals in 2020, the insufficient usability and user experience of most products was identified as the leading barrier for the implementation of new IT solutions in hospitals by almost 25% of the participants [9]. It is therefore important to verify that a particular software solution is perceived as useful, intuitive, and helpful.

Consequently, we aimed to investigate the user experience of the above-described new MA for medical test ordering via speech recognition and focused on overall attractiveness, pragmatic and hedonic quality, as well as user acceptance and its determinants (e.g. performance expectancy). We compared the user experience of the two workflows.

The overall attractiveness measured by the Short Version of the User Experience Questionnaire (UEQ-S) was defined as the primary outcome. The comparison of the UEQ-S sub-dimensions and the MA’s user acceptance measured by the Unified Theory of Acceptance and Use of Technology (UTAUT) were secondary outcomes. Our primary research hypotheses were:

- H0: There is no difference in the overall attractiveness of the DA workflow and the MA workflow.
- H1: There is a difference in the overall attractiveness of the DA workflow and the MA workflow.

Methods

Setting

The study was conducted at the University Hospital Würzburg. Since the MA ukw.mobile workflow had been implemented in the Department of Trauma and Plastic Surgery first, we chose this department for our research project. As the medical test, we chose radiological examinations, because X-ray, magnetic resonance imaging (MRI), and computed tomography (CT) are diagnostic tests frequently requested by physicians of this discipline.

Survey instrument I: Short version of the user experience questionnaire (UEQ-S)

Assessing the user experience of technologies remains challenging because of the theoretical complexity and multidimensionality of user experience in general. Users not only expect a highly pragmatic, performative quality, but also appreciate a product’s novelty and stimulation (hedonic quality). Schrepp et al. could show that both the hedonic (e.g. “Is it exciting and motivating to use the product?” or “Is the product innovative and creative?”) and the pragmatic quality (e.g. “Can users solve their tasks without unnecessary effort?”) influence the attractiveness of and preference for a product [10].

The Short Version of the User Experience Questionnaire (UEQ-S) was designed to offer a simple but effective tool to measure the overall attractiveness of a product as well as its pragmatic and hedonic quality. As shown in Fig. 5, it consists of eight items in the two above-mentioned dimensions (four items for each dimension). Each item is rated on a 7-point scale anchored by a pair of opposite terms (e.g. inefficient and efficient), ranging from -3 (fully agree with negative term) to +3 (fully agree with positive term) [11].
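The scoring described above can be illustrated with a minimal sketch. It assumes that items 1 to 4 form the pragmatic dimension and items 5 to 8 the hedonic dimension, and that the overall score is the mean of all eight items; the function name `score_ueq_s` and the example ratings are hypothetical and not taken from the study.

```python
from statistics import mean

# Assumed item layout: items 1-4 pragmatic, items 5-8 hedonic (0-based indices).
PRAGMATIC_ITEMS = range(0, 4)
HEDONIC_ITEMS = range(4, 8)


def score_ueq_s(responses):
    """Return pragmatic, hedonic and overall scores for one participant.

    `responses` holds the eight item ratings, already coded from -3 to +3.
    """
    if len(responses) != 8:
        raise ValueError("UEQ-S needs exactly eight item ratings")
    if any(r < -3 or r > 3 for r in responses):
        raise ValueError("ratings must lie between -3 and +3")
    return {
        "pragmatic": mean(responses[i] for i in PRAGMATIC_ITEMS),
        "hedonic": mean(responses[i] for i in HEDONIC_ITEMS),
        "overall": mean(responses),  # mean of all eight items (assumption)
    }


# Made-up ratings for a single participant, for illustration only.
example = score_ueq_s([3, 2, 2, 1, 1, 0, 2, 1])
print(example)
```

Averaging these per-participant scores across respondents would give workflow-level means of the kind reported for each dimension.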

Survey instrument II: Unified theory of acceptance and use of technology (UTAUT)

There are several theoretical frameworks and corresponding instruments to assess the acceptance of information and telecommunication technology (ICT) in medicine. In 2003, Venkatesh et al. conducted a comprehensive research project and combined eight different theories (e.g. the Technology Acceptance Model, TAM, and the Theory of Reasoned Action, TRA) into one unified theoretical framework: the Unified Theory of Acceptance and Use of Technology (UTAUT). It holds four key constructs that influence the behavioral intention to use the new technology and its actual use: performance expectancy (PE), effort expectancy (EE), social influence (SI) and facilitating conditions (FC). While the first three variables indirectly influence the actual use, facilitating conditions is a direct determinant of user behavior. Acceptance of technology is operationalized as behavioral intention to use. The UTAUT has proven to be very robust and accounts for 70% of the variance in acceptance and about 50% in actual use [12]. Since its introduction in 2003, the UTAUT has been applied extensively in the context of telemedicine, digital medicine, mobile health (mHealth) and electronic health (eHealth) [13,14,15,16,17]. All UTAUT items are measured on a 5-point Likert scale ranging from “strongly disagree” to “strongly agree”. In 2012, Venkatesh et al. extended the original UTAUT framework to the consumer context (UTAUT2) by adding aspects such as consumer affect, consumer habit and monetary costs [18]. In 2018, the UTAUT2 questionnaire was translated into German and validated by Harborth & Pape [19]. Since our research project did not fit the consumer context, we used a research model (see Fig. 6) based on the original UTAUT constructs as proposed by Hennemann et al. [13], together with the validated German UTAUT2 items of Harborth & Pape [19].

Comprehensive survey and data collection

We designed a comprehensive survey, which consisted of sociodemographic data, the UTAUT items for the MA workflow, and the UEQ-S items for both the usual DA workflow and the MA workflow. At the end of the survey, the participants could give free-text answers on why they did or did not use the MA. The survey was made available to all physicians of the Department of Trauma and Plastic Surgery at University Hospital Würzburg in a paper version. The data were collected anonymously in December 2020.

Statistical analysis

For statistical analysis, SPSS Statistics 26 (IBM, Armonk, New York, USA) was used. Normality of distribution was inspected using Q-Q plots, histograms, and the Shapiro–Wilk test. To compare the quality of the two workflows, a dependent sample t-test was used, as suggested by the authors of the questionnaire [10, 20]. To test the predictive model of acceptance and its determinants, we performed hierarchical regression following the approach described by, e.g., Hennemann et al. or Apolinario-Hagen et al. [13, 17]. The significance level was set at α = 0.05. All tests were two-sided. No adjustment was made for multiple testing.
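To make the dependent sample t-test concrete, the following is a minimal sketch of how the test statistic is obtained from per-participant score pairs. It is not the SPSS procedure used in the study; the function name `paired_t` and the example scores are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def paired_t(ma_scores, da_scores):
    """Dependent-sample t statistic for per-participant score pairs."""
    if len(ma_scores) != len(da_scores):
        raise ValueError("each participant needs one score per workflow")
    # The test works on the within-participant differences (MA minus DA).
    diffs = [m - d for m, d in zip(ma_scores, da_scores)]
    n = len(diffs)
    if n < 2:
        raise ValueError("at least two pairs are required")
    mean_diff = mean(diffs)
    sd_diff = stdev(diffs)  # sample SD, n - 1 in the denominator
    t = mean_diff / (sd_diff / sqrt(n))  # undefined if all differences are equal
    return {"mean_diff": mean_diff, "sd_diff": sd_diff, "t": t, "df": n - 1}


# Made-up scores of four participants, for illustration only.
result = paired_t([2.0, 1.5, 1.0, 2.5], [0.0, -0.5, 0.5, -1.0])
print(result)
```

The two-sided p-value follows from comparing `t` with the t distribution with `df` degrees of freedom; only participants with complete scores for both workflows can enter such a comparison.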

Results

Study population

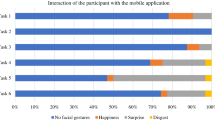

The comprehensive questionnaire was handed out to all physicians of the Department of Trauma and Plastic Surgery (n = 30) at the University Hospital Würzburg, Germany. With 21 of the 30 physicians completing the questionnaire, the response rate of the survey was 70%. The mean age of the participants was 34 ± 8 years (range from 26 to 46 years). The majority of the participating physicians were male (62%) and 71% were medical interns in training. Of the participants, 81.0% (17/21) had prior experience in using the MA.

Instrument I (UEQ-S): User experience – MA workflow vs. DA workflow

Sixteen of the 21 participants answered all items for the MA workflow, while nineteen of the 21 participants answered all the items for the DA workflow. The distribution of the mean values of the eight items is shown in Fig. 7.

Fig. 7 Distribution of the mean values of the eight items of the UEQ-S for the mobile application (MA) workflow and the desktop application (DA) workflow. The y-axis describes the eight opposing items of the UEQ-S. The x-axis describes the level of agreement ranging from -3 (fully agree with negative term) to +3 (fully agree with positive term)

The overall attractiveness was significantly higher for the MA workflow (1.65 ± 0.70) than for the DA workflow (-0.50 ± 1.26), with a mean difference of 2.15 ± 1.33 (p < 0.001). Looking at the two dimensions of the UEQ-S, the pragmatic quality (MA: 2.08 ± 0.59 vs. DA: 0.17 ± 1.40; mean difference 1.90 ± 1.16; p < 0.001) and the hedonic quality (MA: 1.23 ± 1.06 vs. DA: -1.17 ± 1.32; mean difference 2.41 ± 1.62; p < 0.001) were also both higher for the MA workflow than for the DA workflow.

For the MA workflow, the highest score was reached for the first item (pragmatic quality: obstructive vs. supportive) with a mean value of 2.4 ± 0.8, and the lowest score for the fifth item (hedonic quality: boring vs. exciting) with a mean value of 0.7 ± 1.3. The mean values of all eight items were positive. Three of the four items in the dimension of pragmatic quality had mean values greater than 2.

For the DA workflow, the highest score was reached at the fourth item (pragmatic quality: confusing vs. clear) with an almost neutral mean value of 0.3 ± 1.8 and the lowest score was reached at the seventh item (hedonic quality: conventional vs. inventive) with a mean value of -1.6 ± 1.4. In total, the mean values of only two items were positive, and all mean values of the hedonic dimension were negative. In general, the hedonic quality was rated lower than the pragmatic quality for both workflows.

Instrument II (UTAUT): User acceptance and its determinants for the MA workflow

Nineteen of the 21 participants answered the UTAUT items; one of them left a single item (FC2) in the category of facilitating conditions unanswered. The descriptive results for each item are shown in Table 1.

The overall acceptance measured on the 5-point Likert scale was high (4.5 ± 0.4), as were the scores for the predictors of user acceptance: performance expectancy (4.3 ± 0.6), effort expectancy (4.4 ± 0.7), social influence (3.7 ± 0.8) and facilitating conditions (4.3 ± 0.7). The item with the highest score overall was BI1 (“I intend to use the MA in the future”) with a mean value of 4.7 ± 0.5. Social influence was the only domain with a mean score below 4.0 (3.7 ± 0.8), with the item SI4 (“In general, I receive support in using the MA”) and the item SI2 (“People who are important to me think that I should use the MA”) exhibiting the lowest mean values (3.6 ± 1.2 and 3.7 ± 1.0). The other three constructs (performance expectancy, effort expectancy and facilitating conditions) all had high agreement levels of around 4.3 (maximum = 5.0).
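As an illustration of how construct-level scores can be derived from item ratings, the sketch below averages the 1 to 5 ratings per construct for one participant. The item codes follow the pattern used in the text (e.g. BI1, SI2, FC2); skipping an unanswered item is an assumption, since the handling of the missing FC2 answer is not described here, and the function name `construct_scores` is hypothetical.

```python
from statistics import mean


def construct_scores(answers):
    """Average the 1-5 item ratings per construct for one participant.

    `answers` maps item codes such as "PE1" or "FC2" to a rating, or to
    None when the item was left blank. Blank items are skipped (assumption).
    """
    grouped = {}
    for item, rating in answers.items():
        if rating is None:
            continue
        if not 1 <= rating <= 5:
            raise ValueError(f"rating for {item} must lie between 1 and 5")
        construct = item.rstrip("0123456789")  # "FC2" -> "FC"
        grouped.setdefault(construct, []).append(rating)
    return {construct: mean(values) for construct, values in grouped.items()}


# Made-up answers of one participant, for illustration only.
scores = construct_scores({"PE1": 5, "PE2": 4, "FC1": 4, "FC2": None, "BI1": 5})
print(scores)
```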

To test how well the selected determinants explained user acceptance, we performed a hierarchical regression by including the predictors of our research model block-wise. In total, we fitted five regression models (model 1: sociodemographic data (gender, age); model 2: + performance expectancy; model 3: + effort expectancy; model 4: + social influence; model 5: + facilitating conditions). The explained variance for the sociodemographic data alone was very low (model 1: R² = -0.05, p = 0.59). When entering the UTAUT predictors, the explained variance increased (e.g. R² = 0.58 for model 2, p < 0.01). The full model 5 explained 65.4% of the variance (R² = 0.65, p < 0.01). Performance expectancy (beta = 0.57, p = 0.02) and effort expectancy (beta = 0.36, p = 0.04) significantly predicted user acceptance, whereas social influence and facilitating conditions did not reach significance.
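The block-wise procedure can be sketched as follows. This is a minimal pure-Python illustration under stated assumptions, not the SPSS analysis of the study: the helper names (`solve`, `r_squared`, `hierarchical`) are hypothetical, the data are made up, and only R² and adjusted R² are computed (no standardized betas or p-values).

```python
def solve(a, b):
    """Solve the linear system a·x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("predictors are collinear")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def r_squared(columns, y):
    """R² and adjusted R² of an ordinary least squares fit with intercept."""
    n, p = len(y), len(columns)
    rows = [[1.0] + [col[i] for col in columns] for i in range(n)]
    k = p + 1
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    beta = solve(xtx, xty)  # normal equations
    fitted = [sum(b * v for b, v in zip(beta, r)) for r in rows]
    y_bar = sum(y) / n
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    r2 = 1 - ss_res / ss_tot
    adjusted = 1 - (1 - r2) * (n - 1) / (n - p - 1)
    return r2, adjusted


def hierarchical(blocks, y):
    """Enter predictor blocks cumulatively; return (label, R², adjusted R²) per model."""
    entered, results = [], []
    for label, columns in blocks:
        entered.extend(columns)
        r2, adjusted = r_squared(entered, y)
        results.append((label, r2, adjusted))
    return results


# Made-up data for eight participants, for illustration only.
age = [28, 31, 35, 40, 29, 45, 33, 38]
pe = [4.0, 4.5, 3.5, 5.0, 4.0, 3.0, 4.5, 5.0]
ee = [4.5, 4.0, 3.0, 5.0, 3.5, 3.5, 4.0, 4.5]
bi = [4.2, 4.6, 3.6, 5.0, 4.0, 3.2, 4.4, 4.9]
models = hierarchical(
    [("model 1: age", [age]), ("model 2: + PE", [pe]), ("model 3: + EE", [ee])], bi
)
for label, r2, adjusted in models:
    print(f"{label}: R2 = {r2:.2f}, adjusted R2 = {adjusted:.2f}")
```

For a least squares model with an intercept, plain R² cannot fall below zero, whereas adjusted R² can. A value such as -0.05 for model 1 would therefore be consistent with adjusted R² being reported, although the text does not state this.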

Discussion

Principal results

To the best of our knowledge, this is the first study that investigated user experience and user acceptance of a smartphone-based in-hospital mHealth application, which offers diagnostic imaging management via speech recognition. We could show that physicians were very satisfied with the MA including the corresponding workflow. Compared to the conventional DA workflow, the overall attractiveness and the pragmatic and hedonic qualities of the MA workflow were considerably higher. Correspondingly, the user acceptance as an essential driver or barrier of actual use behavior was also very high. Performance expectancy and effort expectancy were identified as significant predictors for the high user acceptance.

Comparison with prior work

There is limited evidence in the field of user experience and user acceptance analysis of DHI for health care providers using speech recognition in general and especially for the subcategory of Laboratory and Diagnostics Imaging Management. The only corresponding DHI mentioned in the WHO lead document is the laboratory test registration tool Bahmni-OpenELIS: “When a patient is registered in Bahmni using the registration module, the patient name and demographic information is synced automatically to the lab system. When the patient goes to the lab, the lab technician collecting the sample can look up the patient and add tests for that patient” [7]. Even though the features of this DHI seem to have been expanded, it has not been studied with regard to user experience and user acceptance [21]. Furthermore, it does not offer a speech recognition service, which constitutes the main innovative feature of the mobile application studied here.

The authors of the UEQ-S offer an online database and benchmarking tool to compare the results with more than 240 other product evaluations, which cumulatively comprise around 1400 study participants [22]. The feedback per scale is grouped into five categories: excellent (the evaluated product is among the best 10% of results), good (10% of the results in the benchmark are better than the evaluated product, 75% of the results are worse), above average (25% of the results in the benchmark are better than the evaluated product, 50% of the results are worse), below average (50% of the results in the benchmark are better than the evaluated product, 25% of the results are worse) and bad (the evaluated product is among the worst 25% of results). According to this tool, the MA workflow achieved above-average or better scores for all components (pragmatic quality: excellent; hedonic quality: above average; overall attractiveness: good). By contrast, the DA workflow achieved low scores (pragmatic quality: bad; hedonic quality: bad; overall attractiveness: bad). Yet, the applicability of this benchmarking tool remains limited since the database to date primarily contains evaluation results of business applications, web shops or services and social networks. However, since there is a lack of further validated benchmarking tools for medical applications, the UEQ-S benchmark offers further evidence on the effectiveness and acceptance of the MA.
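The five benchmark categories can be expressed as a simple lookup on the share of benchmark results that score worse than the evaluated product. The sketch below is an illustration only: the function name `benchmark_category` is hypothetical, the handling of values exactly on a boundary is an assumption, and the actual benchmark tool works from scale means rather than from a precomputed percentage.

```python
def benchmark_category(share_worse):
    """Map the share (0-100 %) of benchmark results that score worse
    than the evaluated product to one of the five feedback categories."""
    if not 0 <= share_worse <= 100:
        raise ValueError("share_worse must lie between 0 and 100")
    if share_worse >= 90:
        return "excellent"      # among the best 10% of results
    if share_worse >= 75:
        return "good"           # 10% better, 75% worse
    if share_worse >= 50:
        return "above average"  # 25% better, 50% worse
    if share_worse >= 25:
        return "below average"  # 50% better, 25% worse
    return "bad"                # among the worst 25% of results


print(benchmark_category(80))
```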

Limitations

Several limitations need to be considered. Despite a response rate of 70%, the resulting sample size of 21 physicians limits the generalizability of our findings. Furthermore, the results are limited to the particularities of our hospital, its infrastructure and corresponding processes. The length of individual experience with the MA, which might have played an important role for the user experience, was not assessed in our survey. This should be considered in future investigations. In contrast to the UEQ-S, a validated German version of the original UTAUT published by Venkatesh et al. in 2003 still does not exist. However, there is the validated German version of the UTAUT2 by Harborth and Pape, in which the main predictor variables are very similar to those of the original UTAUT framework. Since the UTAUT2 consumer perspective does not fit our research project, we used a modified research model of the original UTAUT framework by adopting the validated German UTAUT2 items. In general, relying solely on questionnaires does not cover all relevant usability and user experience aspects. Accordingly, we recently confirmed our findings through qualitative analysis using contextual interviews [23].

Conclusions

High-quality patient care requires a rigorous implementation of the “Patients before Paperwork” initiative and the “Putting Patients First by Reducing Administrative Tasks in Health Care” policy as proposed by the American College of Physicians. With this study, we could illustrate that physicians seem to be more than willing to use innovative mHealth solutions when these are developed and implemented in a user-centered design. We see considerable potential for reducing the physicians’ burden of administrative tasks by adopting user-centered innovative DHI such as the ukw.mobile mHealth solution, which uses speech recognition for point-of-care diagnostic management.

Data Availability

The data that support the findings of this study are available from the corresponding author, FK, upon reasonable request.

Notes

Idea and concept: Helmut Greger (SMI), technical concept and development: Ulrich Trampe (SMI).

Abbreviations

- BI: Behavioral intention
- DA: Desktop application
- DHI: Digital health intervention
- EE: Effort expectancy
- eHealth: Electronic health
- EHR: Electronic health record
- FC: Facilitating conditions
- HIS: Hospital information system
- ICT: Information and telecommunication technology
- iOS: iPhone Operating System
- IT: Information technology
- MA: Mobile application
- mHealth: Mobile health
- PE: Performance expectancy
- RCT: Randomized controlled trial
- SD: Standard deviation
- SI: Social influence
- SMI: Service Center Medical Informatics
- TAM: Technology Acceptance Model
- TRA: Theory of Reasoned Action
- UEQ-S: Short Version of the User Experience Questionnaire
- UTAUT: Unified Theory of Acceptance and Use of Technology
- WHO: World Health Organization

References

1. Nova Scotia Office of Regulatory Affairs and Service Effectiveness, Physician Administrative Burden Survey – Final Report. 2020. Online available from: https://doctorsns.com/sites/default/files/2020-11/admin-burden-survey-results.pdf

2. Rao, S.K., et al., The Impact of Administrative Burden on Academic Physicians: Results of a Hospital-Wide Physician Survey. Academic Medicine, 2017. 92(2).

3. Sinsky, C., et al., Allocation of Physician Time in Ambulatory Practice: A Time and Motion Study in 4 Specialties. Ann Intern Med, 2016. 165(11): p. 753-760.

4. Arndt, B.G., et al., Tethered to the EHR: Primary Care Physician Workload Assessment Using EHR Event Log Data and Time-Motion Observations. Ann Fam Med, 2017. 15(5): p. 419-426.

5. Christino, M.A., et al., Paperwork versus patient care: a nationwide survey of residents' perceptions of clinical documentation requirements and patient care. J Grad Med Educ, 2013. 5(4): p. 600-4.

6. Erickson, S.M., et al., Putting Patients First by Reducing Administrative Tasks in Health Care: A Position Paper of the American College of Physicians. Ann Intern Med, 2017. 166(9): p. 659-661.

7. World Health Organization (WHO), Classification of Digital Health Interventions v1.0 - A shared language to describe the uses of digital technology for health. 2018. p. 7. Online available from: https://apps.who.int/iris/handle/10665/260480.

8. Jordan, M.C., et al., Qualitätssteigerung der Abrechnungsprüfung durch Smartphone-basierte Fotodokumentation in der Unfall‑, Hand- und plastischen Chirurgie. Der Unfallchirurg, 2021. 124(5): p. 366-372.

9. Hübner, U., Esdar, M., Hüsers, J., Liebe, J.-D., Naumann, L., Thye, J., & Weiß, J., IT-Report Gesundheitswesen. Wie reif ist die Gesundheits-IT aus Anwenderperspektive?, H. Osnabrück, Editor. 2020. Online available from: https://www.hs-osnabrueck.de/fileadmin/HSOS/Homepages/IT-Report_Gesundheitswesen/IT-Report_Gesundheitswesen_2020_final.pdf

10. Schrepp, M., Held, T., and Laugwitz, B., The influence of hedonic quality on the attractiveness of user interfaces of business management software. Interacting with Computers, 2006. 18(5): p. 1055–1069.

11. Schrepp, M., Hinderks, A., and Thomaschewski, J., Design and Evaluation of a Short Version of the User Experience Questionnaire (UEQ-S). IJIMAI, 2017. 4(6): p. 103.

12. Venkatesh, V., Morris, M. G., Davis, G. B., and Davis, F. D., User acceptance of information technology: toward a unified view. MIS Q, 2003. 27: p. 425-478.

13. Hennemann, S., M.E. Beutel, and R. Zwerenz, Drivers and Barriers to Acceptance of Web-Based Aftercare of Patients in Inpatient Routine Care: A Cross-Sectional Survey. J Med Internet Res, 2016. 18(12): p. e337.

14. Cimperman, M., M. Makovec Brencic, and P. Trkman, Analyzing older users' home telehealth services acceptance behavior-applying an Extended UTAUT model. Int J Med Inform, 2016. 90: p. 22–31.

15. Hoque, R. and G. Sorwar, Understanding factors influencing the adoption of mHealth by the elderly: An extension of the UTAUT model. Int J Med Inform, 2017. 101: p. 75-84.

16. Rost, T., et al., User Acceptance of Computerized Cognitive Behavioral Therapy for Depression: Systematic Review. J Med Internet Res, 2017. 19(9): p. e309.

17. Apolinario-Hagen, J., et al., Acceptance of Mobile Health Apps for Disease Management Among People With Multiple Sclerosis: Web-Based Survey Study. JMIR Form Res, 2018. 2(2): p. e11977.

18. Venkatesh, V., Thong, J., and Xu, X., Consumer Acceptance and Use of Information Technology: Extending the Unified Theory of Acceptance and Use of Technology. MIS Quarterly, 2012. 36(1): p. 157–178.

19. Harborth, D. and Pape, S., German Translation of the Unified Theory of Acceptance and Use of Technology 2 (UTAUT2) Questionnaire. SSRN Electronic Journal, 2018. Online available from: https://doi.org/10.2139/ssrn.3147708.

20. Schrepp, M., User Experience Questionnaire Handbook Version 8 - All you need to know to apply the UEQ successfully in your projects. 2019. Online available from: https://www.ueq-online.org/Material/Handbook.pdf.

21. Bahmni [cited 2022 11–17–2022]; Online available from: https://www.bahmni.org/feature-list/.

22. Schrepp, M., Hinderks, A., and Thomaschewski, J., Construction of a Benchmark for the User Experience Questionnaire (UEQ). International Journal of Interactive Multimedia and Artificial Intelligence, 2017. 4: p. 40-44.

23. Krop, P., et al., IT-Supported Request Management for Clinical Radiology: Contextual Design and Remote Prototype Testing. Extended Abstracts of the 2022 CHI Conference on Human Factors in Computing Systems, April 2022. Article no. 45: p. 1–8.

Acknowledgements

We cordially thank all members of the Digitalisierungszentrum Präzisions- und Telemedizin (DZ.PTM), the Service Center Medical Informatics and the design team at the University Hospital Würzburg for their support.

Funding

Open Access funding enabled and organized by Projekt DEAL. This research was conducted within the “Digitalisierungszentrum Präzisions- und Telemedizin” (DZ.PTM), a project funded by the Bavarian State Ministry for Science and Art as part of the “Masterplan BAYERN DIGITAL II”. Fabian Kerwagen holds a scholarship of the “DFG-UNION-CVD Clinician Scientist Program” at the Integrative Clinician Scientist College (ICSC) Würzburg, a clinician scientist program funded by the DFG (German Research Foundation).

Author information

Contributions

Fabian Kerwagen: conception and design, analysis and interpretation of the data, drafting of the paper, final approval of the paper submitted. Konrad F. Fuchs: analysis and interpretation of data, revising the manuscript critically for important intellectual content, final approval of the paper submitted. Melanie Ullrich: analysis and interpretation of data, revising the manuscript critically for important intellectual content, final approval of the paper submitted. Andres Schulze: analysis and interpretation of data, revising the manuscript critically for important intellectual content, final approval of the paper submitted. Samantha Straka: conception and design, revising the manuscript critically for important intellectual content, final approval of the paper submitted. Philipp Krop: conception and design, revising the manuscript critically for important intellectual content, final approval of the paper submitted. Marc E. Latoschik: conception and design, revising the manuscript critically for important intellectual content, final approval of the paper submitted. Fabian Gilbert: revising the manuscript critically for important intellectual content, final approval of the paper submitted. Andres Kunz: revising the manuscript critically for important intellectual content, final approval of the paper submitted. Georg Fette: revising the manuscript critically for important intellectual content, final approval of the paper submitted. Stefan Störk: revising the manuscript critically for important intellectual content, final approval of the paper submitted. Maximilian Ertl: conception and design, revising the manuscript critically for important intellectual content, final approval of the paper submitted.

Ethics declarations

Competing interests

The authors have no other relevant competing financial or non-financial interests to disclose.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Kerwagen, F., Fuchs, K.F., Ullrich, M. et al. Usability of a mHealth Solution using Speech Recognition for Point-of-care Diagnostic Management. J Med Syst 47, 18 (2023). https://doi.org/10.1007/s10916-022-01896-y

DOI: https://doi.org/10.1007/s10916-022-01896-y