Abstract

Diabetes mellitus is a group of metabolic diseases in which blood sugar levels are too high. About 8.8% of the world was diabetic in 2017. It is projected that this will reach nearly 10% by 2045. The major challenge is that when machine learning-based classifiers are applied to such data sets for risk stratification, leads to lower performance. Thus, our objective is to develop an optimized and robust machine learning (ML) system under the assumption that missing values or outliers if replaced by a median configuration will yield higher risk stratification accuracy. This ML-based risk stratification is designed, optimized and evaluated, where: (i) the features are extracted and optimized from the six feature selection techniques (random forest, logistic regression, mutual information, principal component analysis, analysis of variance, and Fisher discriminant ratio) and combined with ten different types of classifiers (linear discriminant analysis, quadratic discriminant analysis, naïve Bayes, Gaussian process classification, support vector machine, artificial neural network, Adaboost, logistic regression, decision tree, and random forest) under the hypothesis that both missing values and outliers when replaced by computed medians will improve the risk stratification accuracy. Pima Indian diabetic dataset (768 patients: 268 diabetic and 500 controls) was used. Our results demonstrate that on replacing the missing values and outliers by group median and median values, respectively and further using the combination of random forest feature selection and random forest classification technique yields an accuracy, sensitivity, specificity, positive predictive value, negative predictive value and area under the curve as: 92.26%, 95.96%, 79.72%, 91.14%, 91.20%, and 0.93, respectively. This is an improvement of 10% over previously developed techniques published in literature. The system was validated for its stability and reliability. RF-based model showed the best performance when outliers are replaced by median values.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

Introduction

Diabetes mellitus (DM) is known as diabetes in which blood glucose levels are too high [1]. As a result, the disease increases the risk of cardiovascular diseases such as heart attack and stroke etc. [2]. There were about 1.5 million deaths directly due to diabetes and 2.2 million deaths due to cardiovascular diseases, chronic kidney disease, and tuberculosis in 2012 [3]. Unfortunately, the disease is never cured but can be managed by controlling glucose. About 8.8% of adults worldwide were diabetic in 2017 and this number is projected to be 9.9% in 2045 [4]. There are three kinds of diabetes disease: (i) juvenile diabetes (type I diabetes), (ii) type II diabetes, and (iii) type III diabetes (gestational diabetes) [5]. In type I diabetes, the body does not produce proper insulin. Usually, it is diagnosed in children and young adults [6]. Type II diabetes usually develops in adults over 45 years, but also in young age children, adolescents and young adults. With type II diabetes, the pancreas does not produce enough insulin. Almost 90% of all diabetes is type II [7]. The third type of diabetes is gestational diabetes. Pregnant women, who never had diabetes before, but have high blood glucose levels during pregnancy are diagnosed with gestational diabetes.

Diabetic classification is an important and challenging issue for the diagnosis and the interpretation of diabetic data [8]. This is because the medical data is nonlinear, non-normal, correlation structured, and complex in nature [9]. Further, the data has missing values or has outliers, which further affects the performance of machine learning systems for risk stratification. A variety of different machine learning techniques have been developed for the prediction and diagnosis of diabetes disease such as: linear discriminant analysis (LDA), quadratic discriminant analysis (QDA), naïve Bayes (NB), support vector machine (SVM), artificial neural network (ANN), feed-forward neural network (FFNN), decision tree (DT), J48, random forest (RF), Gaussian process classification (GPC), logistic regression (LR), and k-nearest neighborhood (KNN) [9, 10]. These classifiers cannot correctly classify diabetic patients when the data contains missing values or has outliers, and therefore, when the machine learning-based classifiers are used for risk stratification, it does not yield higher accuracy [10,11,12,13,14,15,16].

In statistics, outlier removal and the handling of missing values is an important issue and have never been ignored. Previous machine learning techniques [10] have been unsuccessful mainly because their classifications are either (a) directly on the raw data without feature extraction or (b) on raw data without outlier removal or (c) without adding replacement values for missing values or (d) filling missing values simply with the mean value. Moreover, outlier replacements using computed mean is very sensitive [11]. As a result, their classification accuracy is low. Several authors tried outlier removal or the filling of missing values, but in the non-classification framework [12,13,14,15,16]. Our techniques were motivated by the spirit of these statistical measures embedded in a classification framework. To improve the classification accuracy, we adapted a missing value approach based on group median, outlier removal using medians, and further optimizing the data set by choosing the combination of best feature selection criteria and classification model among the set of six feature selection techniques and ten classification models.

The hypothesis has been laid out in Fig. 1, where input diabetic data undergoes two stage process of data preparation: (i) missing value process to replace the missing value by the group median and (ii) removal of the outliers by the median values. The filtered data then undergoes machine learning risk stratification paradigm, given the set of classifiers. The comparator helps in comparing the classification accuracy when the data has (a) no missing values but has outliers against classification accuracy when the data (b) has no missing values and no outliers.

Among the set of classifiers, we adapted RF [17] to extract and select significant features and also predict diabetic disease using the RF-based classifier. RF-based classifier is the most powerful machine learning technique in both classification and regression [18]. Some key strengths of RF are: (i) suites nonlinear and non-normal data; (ii) avoids over fitting of the data; (iii) provides robustness to noise; (iv) possesses an internal mechanism to estimate error rates; (v) provides the rank of variable importance; (vi) adaptable on both continuous and categorical variables; and (vii) fits well for data imputation and cluster analysis. In our current study, we hypothesize that by (a) replacing missing values with group median and outliers by median, and (b) using feature extraction by RF combined with the RF-based classifier will lead to the highest accuracy and sensitivity compared to conventional techniques like: LDA, QDA, NB, GPC, SVM, ANN, Adaboost, LR, and DT. The performances of these classifiers have been evaluated by using accuracy (ACC), sensitivity (SE), specificity (SP), positive predictive value (PPV), negative predictive value (NPV) and area under the curve (AUC).

Thus, following are the novelties of our current study compared to the previous studies:

-

1.

Design of ML system where, one can remove missing values using group median, check outliers by using inter-quartile range (IQR) and if there exit outliers, replace outliers with the median values.

-

2.

Optimizing the ML system by selecting the best combination of feature selection and classification model among the six features selection techniques (random forest (RF), logistic regression (LR), mutual information (MI), principal component analysis (PCA), analysis of variance (ANOVA), and Fisher discriminant ratio (FDR)) and ten classification models (RF, LDA, QDA, NB, GPC, SVM, ANN, AB, LR, and DT).

-

3.

Understanding the different cross-validation protocols (K2, K4, K5, K10, and JK) for determining the generalization of the ML system and computing the performance parameters such as: ACC, SE, SP, PPV, NPV, and AUC.

-

4.

Demonstration of automated reliability index (RI) and stability index, which are used to check the validity of our study and further, benchmarking our ML system against the existing literature.

-

5.

Demonstration of an improvement in classification accuracy compared against current techniques available in literature by 10% using K10 protocol and 18% using JK protocol under the combination of current framework.

The overall layout of this paper is as follows: Section 2 represents the patient’s demographics, section 3 represents methodology, including feature selection methods and classification methods are discussed in this section. Experimental protocols are given in section 4. Results are discussed in section 5. Section 6 represents the hypothesis validations and performance evaluation. Section 7 represents the discussions in detail and finally conclusion is presented in section 8.

Patient demographics

The diabetic dataset has been taken from the University of California, Irvine (UCI) Repository. This dataset consists of 768 female patients, at least 21 years old of Pima Indian heritage, having 268 diabetic patients and 500 controls. In this dataset, five patients have zero glucose level, diastolic blood pressure is zero in 35 patients, 27 patients have zero body mass indexes, 227 patients have zero skin fold thickness and 374 patients have zero serum insulin level. These zero values have no meaning and is treated as missing values. As a preprocessing step, we divide the dataset into two parts: diabetic and control, and then the missing values are replaced by the median of each group. We also check the outliers by inter-quartile range (IQR). If outliers exist, we have replaced outliers by the median. The flow chart of data preparations is described in Fig. 1. The descriptions of the attributes and brief statistical summary are shown in Table 1.

Methodology

The idea of proposed overall machine learning system is presented in Fig. 2. This follows the conventional model of ML; however the input data is now preprocessed by taking care of missing values and outlier removal. The dotted line divides the system into two segments: training diabetic data or offline (shown on the left) and testing diabetic data or online system (shown on right). The basic difference between the training and testing protocol is that the training system works on the basis of a priori ground truth and testing protocols perform prediction of diabetes. The next stage is the feature extraction followed by feature selection block, whose role is to diminish the system complexity while choosing the dominant features. Six types of feature selection techniques have been adapted, i.e., RF, LR, MI, PCA, ANOVA, and FDR. The features are trained based on the binary class framework model. Using the training database and ground truth, the machine learning parameters use online classifiers (classifier types) such as: LDA, QDA, NB, GPC, SVM, ANN, Adaboost, LR, DT, and RF. These training-based machine learning parameters and dominant features extracted from the test datasets are transformed to predict of diabetic patients.

Feature selection methods

Feature selection is important in the field of machine learning. Often in data science, we have hundreds or even millions of features and we want a way to create a model that only includes the most informative features. It has three benefits as (i) we easily run our model to interpret; (ii) reduce the variation of the model; and (iii) reduce the computational cost and time of the training model. The optimal feature selection removes the complexity of the system and increases the reliability, stability, and classification accuracy. The main feature selections methods are used: PCA, ANOVA, FDR, MI, LR, and RF, presented below:

Principal component analysis

Feature selection technique (FST) always removes the less dominant features and improves the classification accuracy and reduces the computational cost and time consumption of machine learning algorithm. Principal component analysis (PCA) is one of the popular dimension reduction technique. In this study, we adapted pooling methodology along with PCA [19] which extract the important features. The PCA algorithm of feature selection is given below:

-

1.

Calculate the mean vectors across each feature space dimension as:

Here, X is a matrix of N × P, where, N is a total number of patients, P is the total number of attributes, and I is a vector of 1’s of size N × 1.

-

2.

To make normalize the data (i.e., zero mean and unit variance), we subtract mean vectors from data matrix as:

-

3.

Compute the covariance matrix of the dataset by using formula

-

4.

Compute the eigenvalues (λ1, λ2, …, λP) and eigenvectors (e1, e2, …, eP) of the covariance matrix (S).

-

5.

Sort the eigenvalues in descending order and arrange the corresponding eigenvectors in the same order.

-

6.

Choose the number of principal components (m) to be considered using the following criterion:

where, R is the cutoff point varying from 0.90 to 0.95, P is the total number of eigenvalues.

-

7.

Compute the contribution of each feature as the following dominance indices:

where, ezn indicates the nth entry of en which is the zth eigenvectors, n = 1, 2… P and |ezn| shows the absolute value of ezn.

Sort the indices bn in descending order and select first m features which will give the reduced number of features (m) (without modifying original feature values) with their dominance level from highest to lowest.

Analysis of variance

The main goal of one-way analysis of variance (ANOVA) test is to perform tests whether or not all the different classes of Y have the same mean as X. To perform ANOVA-test, the following notations are used.

- Nj:

-

Number of classes with Y = j.

- μ j :

-

The sample mean of the predictors X for the target variables Y = j.

- \( {\mathbf{S}}_{\mathrm{j}}^2 \) :

-

The sample variance of the predictors X for the target variables Y = j:

μ= The overall mean of the predictors X: \( \boldsymbol{\upmu} =\frac{\sum_{\mathrm{j}=1}^{\mathrm{N}}{\mathrm{N}}_{\mathrm{j}}{\mathrm{X}}_{\mathrm{j}}}{\mathrm{N}} \), where N is the total number of patients and J are the total number of classes. The p-value is calculated based on the F-statistic which p-value is = Prob.{F (J-1, N-1) > F} where, \( \mathrm{F}=\frac{\frac{\sum_{\mathrm{j}=1}^{\mathrm{J}}{\mathrm{N}}_{\mathrm{j}}{\left({\boldsymbol{\upmu}}_{\mathrm{j}}-\boldsymbol{\upmu} \right)}^2}{\left(\mathrm{J}-1\right)}}{\frac{\sum_{\mathrm{j}=1}^{\mathrm{J}}\left({\mathrm{N}}_{\mathrm{j}}-1\right){\mathrm{S}}_{\mathrm{j}}^2}{\left(\mathrm{N}-1\right)}} \) which follows F-distribution with (J-1) and (N-1) degrees of freedom respectively. We select the features whose p-values are less than 0.0001.

Fisher discriminant ratio

Fisher discriminant ratio (FDR) selects the most informative features in such a way that the distance between the data points of within-class should be as large as possible, while the distance between the data points between-class should be as small as possible [20]. The general algorithm of FDR in details is given below.

-

1.

Calculate the sample mean vectors μj of the different class:

-

2.

Compute the scatter matrices (in-between-class and within-class scatter matrix). The within-class scatter matrix Sw is calculated by the following formula:

-

3.

The between-class scatter matrix SB is computed by the following:

where, μ is the overall mean vectors, μj is the jth sample mean vectors and Nj is the number of classes the respective patients.

-

4.

Finally, the FDR is computed by comparing the relationship between the within-class scatter and between-class scatter matrix by the following formula:

-

5.

Compute the eigenvalues (λ1, λ2, …, λP) and the corresponding eigenvectors (e1, e2, …, eP) for the scatter matrices (FDR=\( {\mathbf{S}}_{\mathrm{W}}^{-1}{\mathbf{S}}_{\mathrm{B}} \)).

-

6.

Sort the eigenvectors by decreasing eigenvalues and choose number of K eigenvectors with the largest eigenvalues to form a P× K dimensional weighted matrix W (where every column represents an eigenvector).

Use this P× K eigenvector matrix to transform the samples into the new subspace. This can be summarized as follows:

where, X is a N× P-dimensional matrix representing the N samples, and Y is the N× K-dimensional samples in the new spaces.

Mutual information

Mutual information (MI) is a well-known dependence measure in information theory. It detects a subset of most informative features [21]. It requires two parameters as its input i.e., the numbers of most informative features to be selected for classification and the number of quantization levels into which the continuous features are binned. Due to redundancy in features, there is over-fitting, and therefore dominant features are selected via this technique. In our current study, the numbers of the important features are selected for our classifier by using t-test based on p-values which are less than 0.0001. For two discrete variables x and y, the mutual information is denoted my MI (x, y) and is defined as:

where, p(x, y) is the joint probability distributions of x and y, p(x) and p(y) are the marginal probability distribution of x and y.

Logistic regression

Logistic regression (LR) is used when the dependent variable is categorical. The logistic model is used to estimate the probability of a binary response based on one or more predictor variables. We estimate the coefficients of the logistic regression by applying maximum likelihood estimator (MLE) and test the coefficients by applying the z-test. We select the features corresponding to the coefficients where p-values are less than 0.0001.

Random forest

Random forest (RF) directly performs feature selection while the classification rules are built. There are two methods used for variable importance measurements as (i) Gini importance index (GIM), and (ii) permutation importance index (PIM) [22]. In this study, we have used two steps to select the important features: (i) PIM index is used to order the features and (ii) RF is used to select the best combination of features for classification [17]. These same techniques are used on both types of data: data with outlier O1 and data without outlier O2. These reduced features are used for classification.

Ten classification models

Ten classification techniques have been adapted for risk stratification in machine learning framework. They are adapted as per their simplicity and popularity: LDA, QDA, NB, GPC, SVM, ANN, Adaboost, LR, DT, and RF. We also adapted five sets of cross-validation protocols as K2, K4, K5, K10, and JK, respectively, and repeated these protocol 10 trials (T). These above systems are implemented under two different sets of paradigms: while outliers (O1) are present and impute outliers by median (O2). Monitoring outputs of the performance system yields ACC, SE, SP, PPV, NPV, and AUC of ROC which is shown in Fig. 3. Brief discussions on the classifiers are presented here:

Classifier type 1: Linear discriminant analysis

Ronald Aymer Fisher introduced the linear discriminant analysis (LDA) in 1936. It is an effective classification technique. It classifies n-dimensional space into two-dimensional space that is separated by a hyper-plane. The main objective of this classifier is to find the mean function for every class. This function is projected on the vectors that maximizes the between-groups variance and minimizes the within-group variance [23].

Classifier type 2: Quadratic discriminant analysis

Quadratic discriminant analysis (QDA) is used in machine learning and statistical learning to classify two or more classes by a quadric surface. It is distance based classification techniques and it is an extension of LDA. Unlike LDA, there is no assumption that the covariance matrix for every class is identical. When the normality assumption is true, the best possible test for the hypothesis that a given measurement is from a given class is the likelihood ratio test [24].

Classifier type 3: Naïve bayes

Naïve Bayes (NB) classifier is a powerful and straightforward classifier and particularly useful in large-scale dataset. It is used on both machine learning and medical science (especially, diagnosis of diabetes). It is a probabilistic classifier based on Bayes’ theorem with the strong independent assumption between the features. It is assumed that the presence of particular features in a class is unrelated to any other features [25].

Classifier type 4: Gaussian process classification

In the last decade, Gaussian process (GP) has become a powerful, nonparametric tool that is not only used in regression but also in classification problems in order to handle various problems such as insufficient capacity of the classical linear method, complex data types, the curse of dimension, etc. The main advantages of this method are the ability to provide uncertainty estimates and to learn the noise and smoothness parameters from training data. A GP-based supervised learning technique attempts to take benefit of the better of two different schools of techniques: SVM developed by Vapnik in the early nineties of the last century and Bayesian methods. A GP is a collection of random variables, any finite number of which has a joint Gaussian distribution. A GP is a Gaussian random function and is fully specified by a mean function and covariance function [26]. In our current study, we have used the radial basis kernel (RBF).

Classifier type 5: Support vector machine

Support vector machine (SVM) is a supervised learning technique and widely used in medical diagnosis for classification and regression [27]. SVM minimizes the empirical classification error and maximizes the margin, called hyper-plane between two parallel hyper-planes. The classification of a non-linear data is performed using the kernel trick that maps the input features into high-dimensional space. In our current study, we have used the radial basis kernel (RBF).

Classifier type 6: Artificial neural network

The concept of the artificial neural network (ANN) [28] is inspired by the biological nervous system. The ANN has following key advantage: (i) it is a data driven, self-adaptive method, i.e., it can adjust themselves to the data and (ii) it is a non-linear model, which makes it flexible in modeling real-world problem. In our current study, we have used back propagation algorithm for training ANN and 10 hidden layers to find better results.

Classifier type 7: Adaboost

Adaboost means adaptive boosting, is a machine learning technique. Yoav Freund and Robert Schapire formulated Adaboost algorithm and won golden prizes in 2003 for their work. It can be used in conjunction with different types of algorithm to improve classifier’s performance. Adaboost is very sensitive to handle noisy data and outliers. In some problems, it can be less susceptible to the over fitting problem than other learning algorithms. Every learning algorithm tends to suit some problem types better than others, and typically has many different parameters and configurations to adjust before it achieves optimal performance on a dataset. Adaboost is known as the best out-of-the-box classifier [29].

Classifier type 8: Logistic regression

Logistic regression (LR) is basically a linear model for classification rather than regression. It is a basic model which describes dummy output variables and can be extended for diabetes disease classification [30]. The main advantages of LR are that it is more robust and it may handle non-linear data. Let us consider there are N input features like X1, X2…, XN, and P is the probability of the event that will occur and 1-P is the probability of the event that is not occurred. The mathematical expression of the model as follows:

where, β0 is the intercept term and βi (i = 1, 2, 3,…, N) is the regression coefficients.

Classifier type 9: Decision tree

A decision tree (DT) classifier is a decision support tool that uses a tree structure this is built using input features. The main objective of this classifier is to build a model that predicts the target variables based on several input features. One can easily extract decision rules for a given input data which makes this classifier suitable for any kinds of application [31].

Classifier type 10: Random forest

Random forest (RF) is one of the popular supervised techniques in the field of machine learning. It is also an ensemble a multitude of decision trees at training time that outputs the class that is the mode of the classes for classification or average mean prediction for regression of the individual trees [18]. The algorithm of RF is given as follows.

-

Step 1:

For a given training dataset, extract a new sample set by repeated N time’s using bootstrap method. For example, we sample of (X1, Y1),…, (XN, YN) from a given training dataset (X1, Y1),…,(Xn, Yn). Samples are not extracted consisting of out of bag data (OOB).

-

Step 2:

Build a decision tree based on the results of step 1.

-

Step 3:

Repeat step 1 and step 2 and results in many trees (here 100 trees used) and comprise a forest.

-

Step 4:

Let every tree in the forest to vote for Xi.

-

Step 5:

Calculate the average of votes for every class and the class with the highest number of votes is the classification label for X.

-

Step 6:

The percentage of correct classification is the accuracy of RF.

Statistical evaluation

Performances of all classifiers are evaluated by different measurement factors as accuracy (ACC), sensitivity (SE), specificity (SP), positive predictive value (PPV), negative predictive value (NPV) etc. These measurement factors are calculated by using true positive (TP), true negative (TN), false positive (FP), and false negative (FN). Using these measures, the performance measures can be defined as

-

Accuracy

It is the proportion of the sum of the true positive and true negative against total number of population. It can be expressed mathematically as follows:

-

Sensitivity

It is the proportion of the positive condition against the predicted condition is positive. It can be expressed mathematically as follows:

-

Specificity

It is the proportion of the negative condition against the predicted condition is negative. It can be expressed mathematically as follows:

-

Positive predictive value

The positive predictive value is the proportion of the predicted positive condition against the true condition is positive. It can be expressed mathematically as follows:

-

Negative predictive value

It is the proportion of the predicted negative condition against the true condition is negative. It can be expressed mathematically as follows:

Experimental protocols

In this study, we adapted six feature selection techniques (FST), two outlier removal techniques (ORT), and six cross-validation (CV) protocols: K2, K4, K5, K10, and JK-fold CV protocols, and ten different classifiers. We have performed two experimental protocols such as (i) to select best FST over CV protocols and ORT and (ii) comparison of the classifiers. Since the partitions K are random, we repeated the protocols with T = 10 trials in K2, K4, K5, and K10-folds CV protocols.

Experiment 1: Select best cross-validation over outlier removal technique

The main objective of this section is to select the best CV protocols for both O1 and O2. The best CV protocols selection formula can be expressed as follows. Where, \( \mathcal{A} \) (f, c, p) represents the mean accuracy of over different protocols when feature selection technique is “f”, classifier types is “c”, and data types is “p”, and total number of feature selection techniques, classifier types, and data types are F, C, and P, respectively.

Experiment 2: Best feature selection techniques over K-fold CV and ORT

The experiment presented in this section chooses the optimal FST over CV protocols and ORT’s on the basis of classification accuracy, where, \( \mathcal{A}\left(\mathrm{k},\mathrm{c},\mathrm{p},\right) \) represents the accuracy of the classifer computed when protocol type is “k”, classifier type is “c”, patient number is “p”, and total number of protocols types, classifiers, and patients are: K, C, and P, then the mean accuracy of the performance of classification algorithms are evaluated in terms of measures.

Experiment 3: Comparison of the classifiers

The main objective of this experiment is to compare classification techniques based on classification accuracy and then select the best classifier. In this experiment, we adapted ten classifiers on both data: (i) data that contains outlier (O1) and (ii) impute outlier by the median (O2). For each dataset same FST and five sets of CV protocols are used. And compute the mean accuracy of all classifiers over protocols for both O1 and O2 datasets.

Where, \( \mathcal{A}\left(\mathrm{k},\mathrm{f},\mathrm{p}\right) \) represents the accuracy of the classifer computed when protocol types is “k”, feature selection methods is “f”, and number of patients is “p”, and total number of protocols types, feature selection techniques, and number of patients are: K, F, and P. then the mean accuracy of the performance of classification algorithms are evaluated in terms of measures.

Results

This section presents the results using the above two experimental protocol setup as discussed in section 4.1 (select best FST and protocols over and ORT) and section 4.2 (comparison of the classifiers). In the first experiment, best FST and CV protocols are estimated based on the criteria of the highest accuracy. The second experiment is to understand the behavior based the variation of the classification accuracy with respect to the different CV protocols. The results of these two experiments are shown in section 5.1 and section 5.2, respectively.

Experiment 1: Select best feature selection techniques over K-fold CV and ORT

In this study, we adapted six FST as RF (F1), LR (F2), MI (F3), PCA (F4), ANOVA (F5), and FDR (F6) on both O1 and O2 datasets. For O1 and K2-protocol, F5-based feature selection technique gives the highest accuracy (81.94%). Increasing the value of K, ACC is also increased for both O1 and O2. On the contrary, F2 gives the highest ACC 84.66% of the same protocols for O2. In the same way, for K4, F4 and F2 give the highest ACC 82.73% and 86.16% for O1 and O2. For O2, RF gives the ACC (85.86%) for K10 and ACC (88.45%) for JK. There are also same results for O1. The details are given in Table 2. So we say that RF is the best FST for both O1 and O2.

Experiment 2: Comparison of the classifiers

For notational simplicity, we call the ten classifiers as: LDA (C1), QDA (C2), NB (C3), GPC (C4), SVM (C5), ANN (C6), Adaboost (C7), LR (C8), DT (C9), and RF (C10). This experiment is performed to investigate the comparison of performance of all classifiers with changing the K-folds CV protocols over ORT. Tables 3 and 4 show that increasing the value of K, classification accuracy is also increased for both O1 and O2 dataset. From these results, we intercept as (i) for K2 protocols, F1 and C10 classifier combination gives the highest accuracy (89.09% for O1 and 88.98 for O2) against the other classifiers because F1 extracts the most important features, (ii) increasing the value of K (2 to 4), the accuracy of C10 also increase. Tables 3 and 4 also show that F1 and C10 combination also gives the highest accuracy (89.79% for O1 and 89.58% for O2). Similarly it can be showed that for K10 protocols F1-C10 gives the accuracy 90.91% for and 92.26% for O2. JK protocols all feature selection based RF-based classifier combination gives 99.99~100.00% accuracy (both O1 and O2 datasets). So we say that F1 and C10 is the best combination for both O1 and O2 datasets.

Hypothesis validation and performance evaluation

Hypothesis validation

As discussed in introduction section that the spirit of this study requires that when the missing values are replaced by the group median along with the replacement of the outliers by the median values, while using the random forest in ML framework should give the highest accuracy against the case when the outliers are either not removed or replaced by means. We demonstrate the results in Table 5, where we compared classification accuracy with outliers (O1) and without outliers (O2). We thus demonstrate that the hypothesis has been validated.

Performance evaluation

Reliability

Reliability and stability index of the ML system is required for evaluation of the performance of the ML system. This can be seen in Fig. 4. The reliability index (RI) has been calculated by the ratio of the standard deviation of the classification accuracy and mean of the classification accuracy over data size (N). The system reliability index (ξN) is calculated by the following formula as:

where, σN is the standard deviation and μN is the mean of all accuracies for FST and ORT’s. The system reliability index of \( \overline{\upxi} \) by taking the mean of all data can be expressed as follows:

Figures 5 and 6 show that the reliability index (RI) for all Fi-Cj (i = 1, 2…, 6 and j = 1, 2… 10) based 60 combinations as data size increases for O1 and O2 datasets. Further, the system reliability index has been computed by averaging the reliability indexes corresponding to all data sizes as shown Table 6 for O1 and Table 7 for O2 which confirms the best performance of F1 and C10 based combination for O1 and O2.

Stability analysis

Stability analysis defines the dynamics of control system. Here in our analysis data size can control the dynamics of overall system. We observed that at data system is stable within 2% tolerance limit.

Discussion

This paper represents the risk stratification system to accurately classify diabetes disease into two classes namely: diabetic and control while input diabetic data contains outliers and replaced outliers by median. Moreover, sixty systems have been designed by cross combination of ten classifiers (LDA, QDA, NB, GPC, SVM, ANN, Adaboost, LR, DT, and RF) and six feature selection techniques (RF, LR,MI, PCA, ANOVA, and FDR) and their performances have been compared. The number of features has been selected with help of 0.90 cutoffs points for PCA while t-test has been adopted for LR, MI, FDR, respectively, and also F-test for ANOVA. The classification of diabetes disease has been implemented using one-against all approach for ten classifiers, i.e., LDA, QDA, NB, GPC, SVM, ANN, Adaboost, LR, DT, and RF. Furthermore, four sets (K2, K4, K5, and K10) of cross-validation protocols has been applied for generalization of classification and this process has been repeated for T = 10 times to reduce the variability. For all sixty combinations, the experiments have been performed in one scenario as comparisons of outlier’s removal techniques varying different protocols. Performance evaluations of all classifiers are compared on the basis of ACC, SE, SP, PPV, NPV, and AUC in experiments with varying FST and CV protocols. The ML system was validated for stability and reliability.

The main focus of our study the following components: Comprehensive analysis of RF-based classifier against nine sets of classifiers: LDA, QDA, NB, GPC, SVM, ANN, Adaboost, LR, and DT, respectively while in input diabetic data, is replaced outliers by median and extract features. Our study shows that the classification must be improved if we replaced the missing values by group median and outliers by median and extract features by random forest and classification of diabetes disease by random forest. There are two reasons to improve the classification accuracy as (i) median missing values imputation while in existing papers, several authors were not using any missing imputation techniques and someone replaced missing values by mean; (ii) replaced outliers by median while in previous papers, authors did not use any methods to check outliers.

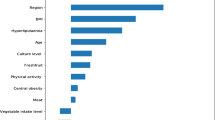

Benchmarking different machine learning systems

There are several papers in literature on the diagnosis and classification of diabetic patients. Karthikeyani et al. [32] applied SVM with radial basis kernel on diabetes dataset. The dataset consisted of 8 attributes and 768 patients having 268 diabetes and 500 controls. They replaced these meaningless values with their mean and applied SVM to classify diabetes disease and demonstrated a classification accuracy of 74.80%. The same authors (Karthikeyani et al. [33]) extracted three features out of eight using partial least square (PLS) and applied LDA method to classify diabetes leading to an accuracy of 74.40%. Kumari and Chitra [34] introduced SVM with radial basis kernel function for classification. After deleting meaningless observations (zero contained observations), there were 460 observations. From those observations, 200 were used as training and rest of observations were used as a testing dataset, while the algorithm achieved a low accuracy of 75.50%. Parashar et al. [35] applied LDA to select the most importance features of diabetic disease and then selected two best features out of eight features. They also applied SVM and FFNN to classify diabetes disease and SVM gave the accuracy of 75.65%. Bozkurt et al. [36] introduced two ML techniques: AIS and ANN. ANN obtained higher accuracy of 76% compared to AIS. Iyer et al. [37] applied NB and DT for classification of diabetic patients. They replaced missing values with the mean and extracted two features out of eight using the correlation based feature selection (CFS) algorithm. They showed that DT obtained accuracy of 74.79%. Kumar Dewangan and Agrawal [38] used MLP and Bayes net classifiers, where MLP gave the highest accuracy of 81.19%. Bashir et al. [10] introduced Hierarchical Multi-level classifiers bagging with Multi-objective optimized Voting (HM-Bag Moov) technique to classify diabetes and compared to various classification techniques such as NB, SVM, LR, QDA, KNN, RF and ANN. They showed that HM-Bag Moov obtained an accuracy of 77.21%. Sivanesan et al. [39] proposed J48 algorithm to classify diabetic patients and obtained an accuracy of 76.58%. Meraj Nabi et al. [40] applied four different classifiers such as NB, LR, J48, RF, and obtained the best accuracy of 80.43% using LR. Recently, Suri’s team (Maniruzzaman et al. [9]) also applied four different classifiers such as LDA, QDA, NB, and GPC. They showed that GPC-based radial basis kernel gave the highest classification accuracy (~82%) with respect to others. From the above discussion, Table 8 and Fig. 7 confirm that our proposed F1 and C10 method is to identify the better diagnosis with an accuracy of 92.26% for K10 and nearly 100% for JK protocols compared to others. So our proposed system can be used to cross check of diagnosis of diabetes with the doctor’s assessment.

Random forest showed encouraging results and identified the most significant features and classify of diabetes disease. It works well on both nonlinear and high dimensional data. In previous study, ML-based DM research has focused on only classification and prediction of diabetic patients. Here, RF capabilities to detect the relevant pattern in the data produced very meaningful results that correlate well with the criteria for diabetes diagnosis and with known risk factors. When we replaced the missing values and outliers are replaced by group mean and mean, then the RF yields 89% classification accuracy. This RF classification accuracy increased by 3% when missing values and outliers are replaced by group median and median, respectively.

Strengths, weakness and extensions

This paper represents the risk stratification system to accurately classify of diabetes disease while there are 768 pregnant patients having two class diabetes and controls. Our study shows that RF-based feature selection technique along with RF-based classifiers with median based outlier’s removal techniques gives a classification accuracy of 92.26% for K10 protocols and nearly 99.99~100% for JK protocols (see Fig. 7). Nevertheless, the presented system can still be improved. Further, preprocessing techniques may be used to replace meaningless values by mean or median and outliers by mean or median. There are many other techniques of feature extraction, feature selection, and classification, and performances of presented combinations of system may be compared the other systems.

Conclusion

Diabetes Mellitus (DM) is a group metabolic diseases in which blood sugar levels are too high. Our hypothesis was that if missing values and outliers are removed by group median and median values, respectively and such a data when used in ML framework using RF-RF combination for feature selection and classification should yield higher accuracy. We demonstrated our hypothesis by showing a 3% improvement and reaching an accuracy of nearly 100% in JK-based cross-validation protocol. Comprehensive data analysis was conducted consisting of ten classifiers, six feature selection methods and five sets of protocols, two outlier’s removal techniques leading to six hundred (600) experiments. Through benchmarking was analyzed and clear improvement was demonstrated. It would be interesting in future to see classification of other kinds of medical data to be adapted in such a framework creating a cost-effective and time-saving option for both diabetic patients and doctors.

References

Muntner, P., Colantonio, L. D., Cushman, M., Goff, D. C., Howard, G., Howard, V. J., and Safford, M. M., Validation of the atherosclerotic cardiovascular disease pooled cohort risk equations. JAMA 311(14):1406–1415, 2014.

American Diabetes Association, Diagnosis and classification of diabetes mellitus. Diabetes Care 37(Supplement 1):S81–S90, 2014.

Bharath, C., Saravanan, N., and Venkatalakshmi, S., Assessment of knowledge related to diabetes mellitus among patients attending a dental college in Salem city-A cross sectional study. Braz. Dent. Sci. 20(3):93–100, 2017.

Fitzmaurice, C., Allen, C., Barber, R. M., Barregard, L., Bhutta, Z. A., Brenner, H., and Fleming, T., Global, regional, and national cancer incidence, mortality, years of life lost, years lived with disability, and disability-adjusted life-years for 32 cancer groups, 1990 to 2015: a systematic analysis for the global burden of disease study. JAMA Oncol. 3(4):524–548, 2017.

Danaei, G., Finucane, M. M., Lu, Y., Singh, G. M., Cowan, M. J., Paciorek, C. J., and Rao, M., National, regional, and global trends in fasting plasma glucose and diabetes prevalence since 1980: systematic analysis of health examination surveys and epidemiological studies with 370 country-years and 2.7 million participants. Lancet 378(9785):31–40, 2011.

Canadian Diabetes Association, Diabetes: Canada at the tipping point 2011. Canadian Diabetes Association: Toronto, 2013.

Shi, Y., and Hu, F. B., The global implications of diabetes and cancer. Lancet 9933(383):1947–1948, 2014.

Barakat, N., Bradley, A. P., and Barakat, M. N. H., Intelligible support vector machines for diagnosis of diabetes mellitus. IEEE Trans. Inf. Technol. Biomed. 14(4):1114–1120, 2010.

Maniruzzaman, M., Kumar, N., Abedin, M. M., Islam, M. S., Suri, H. S., El-Baz, A. S., and Suri, J. S., Comparative approaches for classification of diabetes mellitus data: Machine learning paradigm. Comput. Methods Prog. Biomed. 152:23–34, 2017.

Bashir, S., Qamar, U., and Khan, F. H., IntelliHealth: a medical decision support application using a novel weighted multi-layer classifier ensemble framework. J. Biomed. Inform. 59:185–200, 2016.

Manikandan, S., Measures of dispersion. J. Pharmacol. Pharmacother. 2(4):315–316, 2011.

Zainuri, N. A., Jemain, A. A., and Muda, N., A Comparison of various imputation methods for missing values in air quality data. Sains Malays. 44(3):449–456, 2015.

Cokluk, O., and Kayri, M., The effects of methods of imputation for missing values on the validity and reliability of scales. Educ. Sci. Theory Pract. 11(1):303–309, 2011.

Baneshi, M. R., and Talei, A. R., Does the missing data imputation method affect the composition and performance of prognostic models? Iran Red Crescent Med J 14(1):30–31, 2012.

Kaiser, J., Dealing with missing values in data. J. Syst. Integr. 5(1):42–43, 2014.

Leys, C., Ley, C., Klein, O., Bernard, P., and Licata, L., Detecting outliers: do not use standard deviation around the mean, use absolute deviation around the median. J. Exp. Soc. Psychol. 49(4):764–766, 2013.

Hasan, M. A. M., Nasser, M., Ahmad, S., and Molla, K. I., Feature selection for intrusion detection using random forest. J. Inf. Secur. 7(3):129–140, 2016.

Breiman, L., Random forests. Mach. Learn. 45(1):5–32, 2001.

Shrivastava, V. K., Londhe, N. D., Sonawane, R. S., and Suri, J. S., Computer-aided diagnosis of psoriasis skin images with HOS, texture and color features: a first comparative study of its kind. Comput. Methods Prog. Biomed. 126(2):98–109, 2016.

Shrivastava, V. K., Londhe, N. D., Sonawane, R. S., and Suri, J. S., A novel and robust Bayesian approach for segmentation of psoriasis lesions and its risk stratification. Comput. Methods Prog. Biomed. 150(2):9–22, 2017.

Peng, H., Long, F., and Ding, C., Feature selection based on mutual information criteria of max-dependency, max-relevance, and min-redundancy. IEEE Trans. Pattern Anal. Mach. Intell. 27(8):1226–1238, 2005.

Al Mehedi Hasan, M., Nasser, M., and Pal, B., On the KDD’99 dataset: support vector machine based intrusion detection system (ids) with different kernels. Int. J. Electron. Commun. Comput. Eng. 4(4):1164–1170, 2013.

Sapatinas, T., Discriminant analysis and statistical pattern recognition. J. R. Stat. Soc. A. Stat. Soc. 168(3):635–636, 2005.

Webb, G. I., Boughton, J. R., and Wang, Z., Not so naive Bayes: aggregating one- dependence estimators. Mach. Learn. 58(1):5–24, 2005.

Cover, T. M., Geometrical and statistical properties of systems of linear inequalities with applications in pattern recognition. IEEE Trans. Electron. Comput. 14(3):326–334, 1965.

Brahim-Belhouari, S., and Bermak, A., Gaussian process for nonstationary time series prediction. Comput. Stat. Data Anal. 47(4):705–712, 2004.

Cortes, C., and Vapnik, V., Support-vector networks. Mach. Learn. 20(2):273–297, 1995.

Reinhardt, T. H., Using neural networks for prediction of the subcellular location of proteins. Nucleic Acids Res. 26(9):2230–2236, 1998.

Kégl, B. The return of AdaBoost. MH: multi-class Hamming trees. arXiv preprint arXiv:1312.6086, 2013.

Tabaei, B., and Herman, W., A Multivariate logistic regression equation to screen for diabetes. Diabetes Care 25:1999–2003, 2002.

Acharya, U. R., Molinari, F., Sree, S. V., Chattopadhyay, S., Ng, K. H., and Suri, J. S., Automated diagnosis of epileptic EEG using entropies. Biomed. Signal Process. Control 7(4):401–408, 2012.

Karthikeyani, V., Begum, I. P., Tajudin, K., and Begam, I. S., Comparative of data mining classification algorithm in diabetes disease prediction. Int. J. Comput. Appl. 60(12):26–31, 2012.

Karthikeyani, V., and Begum, I. P., Comparison a performance of data mining algorithms in prediction of diabetes disease. Int. J. Comput. Sci. Eng. 5(3):205–210, 2013.

Kumari, V. A., and Chitra, R., Classification of diabetes disease using support vector machine. Int. J. Eng. Res. Appl. 3(2):1797–1801, 2013.

Parashar, A., Burse, K., and Rawat, K., A Comparative approach for Pima Indians diabetes diagnosis using lda-support vector machine and feed forward neural network. Int. J. Adv. Res. Comput. Sci. Softw. Eng. 4(4):378–383, 2014.

Bozkurt, M. R., Yurtay, N., Yilmaz, Z., and Sertkaya, C., Comparison of different methods for determining diabetes. Turk. J. Electr. Eng. Comput. Sci. 22(4):1044–1055, 2014.

Iyer, A., Jeyalatha, S., and Sumbaly, R., Diagnosis of diabetes using classification mining techniques. Int. J. Data Min. Knowl. Manag. Process. 5(1):1–14, 2015.

Kumar Dewangan, A., and Agrawal, P., Classification of diabetes mellitus using machine learning techniques. Int. J. Eng. Appl. Sci. 2(5):145–148, 2015.

Sivanesan, R., and Dhivya, K. D. R., A Review on diabetes mellitus diagnoses using classification on Pima Indian diabetes data set. Int. J. Adv. Res. Comput. Sci. Manag. Stud. 5(1):12–17, 2017.

Nabi, M., Wahid, A., and Kumar, P., Performance analysis of classification algorithms in predicting diabetes. Int. J. Adv. Res. Comput. Sci. 8(3):456–461, 2017.

Author information

Authors and Affiliations

Corresponding author

Ethics declarations

Conflict of interest

None declared.

Ethics approval

We used secondary dataset taken from the UCI website (https://archive.ics.uci.edu/ml/datasets/Pima+Indians+Diabetes). No ethics approval is required for this dataset.

Additional information

This article is part of the Topical Collection on Education & Training

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Maniruzzaman, M., Rahman, M.J., Al-MehediHasan, M. et al. Accurate Diabetes Risk Stratification Using Machine Learning: Role of Missing Value and Outliers. J Med Syst 42, 92 (2018). https://doi.org/10.1007/s10916-018-0940-7

Received:

Revised:

Accepted:

Published:

DOI: https://doi.org/10.1007/s10916-018-0940-7