Abstract

Recent machine learning algorithms dedicated to solving semi-linear PDEs are improved by using different neural network architectures and different parameterizations. These algorithms are compared to a new one that solves a fixed point problem by using deep learning techniques. This new algorithm appears to be competitive in terms of accuracy with the best existing algorithms.

References

Pardoux, E., Peng, S.: Adapted solution of a backward stochastic differential equation. Syst. Control Lett. 14(1), 55–61 (1990)

Bouchard, B., Touzi, N.: Discrete-time approximation and Monte-Carlo simulation of backward stochastic differential equations. Stoch. Process. Appl. 111(2), 175–206 (2004)

Gobet, E., Lemor, J.-P., Warin, X., et al.: A regression-based Monte Carlo method to solve backward stochastic differential equations. Ann. Appl. Prob. 15(3), 2172–2202 (2005)

Lemor, J.-P., Gobet, E., Warin, X., et al.: Rate of convergence of an empirical regression method for solving generalized backward stochastic differential equations. Bernoulli 12(5), 889–916 (2006)

Gobet, E., Turkedjiev, P.: Linear regression MDP scheme for discrete backward stochastic differential equations under general conditions. Math. Comput. 85(299), 1359–1391 (2016)

Fahim, A., Touzi, N., Warin, X.: A probabilistic numerical method for fully nonlinear parabolic PDEs. Ann. Appl. Prob. 21, 1322–1364 (2011)

Cheridito, P., et al.: Second-order backward stochastic differential equations and fully nonlinear parabolic PDEs. Commun. Pure Appl. Math. 60(7), 1081–1110 (2007)

Longstaff, F.A., Schwartz, E.S.: Valuing American options by simulation: a simple least-squares approach. Rev. Financ. Stud. 14(1), 113–147 (2001)

Bouchard, B., Warin, X.: Monte-Carlo valuation of American options: facts and new algorithms to improve existing methods. In: Numerical Methods in Finance. Springer, Berlin, pp. 215–255 (2012)

Henry-Labordere, P., et al.: Branching Diffusion Representation of Semilinear PDEs and Monte Carlo Approximation. arXiv preprint arXiv:1603.01727 (2016)

Bouchard, B., et al.: Numerical approximation of BSDEs using local polynomial drivers and branching processes. Monte Carlo Methods Appl. 23(4), 241–263 (2017)

Bouchard, B., Tan, X., Warin, X.: Numerical Approximation of General Lipschitz BSDEs with Branching Processes. arXiv preprint arXiv:1710.10933 (2017)

Warin, X.: Variations on Branching Methods for Non-linear PDEs. arXiv preprint arXiv:1701.07660 (2017)

Fournié, E., et al.: Applications of Malliavin calculus to Monte Carlo methods in finance. Finance Stoch. 3(4), 391–412 (1999)

Warin, X.: Nesting Monte Carlo for High-Dimensional Non Linear PDEs. arXiv preprint arXiv:1804.08432 (2018)

Warin, X.: Monte Carlo for High-Dimensional Degenerated Semi Linear and Full Non Linear PDEs. arXiv preprint arXiv:1805.05078 (2018)

Weinan, E., et al.: Linear scaling algorithms for solving high-dimensional nonlinear parabolic differential equations. In: SAM Research Report 2017 (2017)

Weinan, E., et al.: On multilevel Picard numerical approximations for high-dimensional nonlinear parabolic partial differential equations and high-dimensional nonlinear backward stochastic differential equations, vol. 46. arXiv preprint arXiv:1607.03295 (2016)

Hutzenthaler, M., Kruse, T.: Multi-level Picard Approximations of High Dimensional Semilinear Parabolic Differential Equations with Gradient-Dependent Nonlinearities. arXiv preprint arXiv:1711.01080 (2017)

Hutzenthaler, M., et al.: Overcoming the Curse of Dimensionality in the Numerical Approximation of Semilinear Parabolic Partial Differential Equations. arXiv preprint arXiv:1807.01212 (2018)

Han, J., Jentzen, A., Weinan, E.: Overcoming the Curse of Dimensionality: Solving High-Dimensional Partial Differential Equations Using Deep Learning. arXiv:1707.02568 (2017)

Weinan, E., Han, J., Jentzen, A.: Deep learning-based numerical methods for high-dimensional parabolic partial differential equations and backward stochastic differential equations. Commun. Math. Stat. 5(4), 349–380 (2017)

Beck, C., Weinan, E., Jentzen, A.: Machine Learning Approximation Algorithms for High-Dimensional Fully Nonlinear Partial Differential Equations and Second-order Backward Stochastic Differential Equations. arXiv preprint arXiv:1709.05963 (2017)

Raissi, M.: Forward–Backward Stochastic Neural Networks: Deep Learning of High Dimensional Partial Differential Equations. arXiv preprint arXiv:1804.07010 (2018)

Fujii, M., Takahashi, A., Takahashi, M.: Asymptotic Expansion as Prior Knowledge in Deep Learning Method for High Dimensional BSDEs. arXiv preprint arXiv:1710.07030 (2017)

Ioffe, S., Szegedy, C.: Batch normalization: accelerating deep network training by reducing internal covariate shift. In: Proceedings of the 32nd International Conference on Machine Learning, vol. 37. ICML’15, JMLR.org, Lille, pp. 448–456. http://dl.acm.org/citation.cfm?id=3045118.3045167 (2015)

Cooijmans, T., et al.: Recurrent Batch Normalization. arXiv preprint arXiv:1603.09025 (2016)

Clevert, D.-A., Unterthiner, T., Hochreiter, S.: Fast and Accurate Deep Network Learning by Exponential Linear Units (elus). arXiv preprint arXiv:1511.07289 (2015)

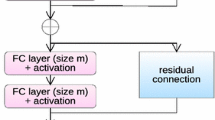

He, K., et al.: Deep residual learning for image recognition. In: 2016 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 770–778 (2016). https://doi.org/10.1109/CVPR.2016.90

Hochreiter, S., Schmidhuber, J.: Long short-term memory. Neural Comput. 9, 1735–1780 (1997)

Olah, C.: Understanding LSTM Networks, Blog, http://colah.github.io/posts/2015-08-Understanding-LSTMs/ (2015)

Karpathy, A.: The Unreasonable Effectiveness of Recurrent Neural Networks. Blog, http://karpathy.github.io/2015/05/21/rnn-effectiveness/ (2015)

Han, J., Jentzen, A., et al.: Solving High-Dimensional Partial Differential Equations Using Deep Learning. arXiv preprint arXiv:1707.02568 (2017)

Sirignano, J., Spiliopoulos, K.: DGM: a deep learning algorithm for solving partial differential equations. J. Comput. Phys. 375, 1339–1364 (2018)

Richou, A.: Étude théorique et numérique des équations différentielles stochastiques rétrogrades. Thèse de doctorat dirigée par Hu, Ying et Briand, Philippe Mathématiques et applications Rennes 1 2010, Ph.D. thesis (2010)

Ruder, S.: An Overview of Gradient Descent Optimization Algorithms. arXiv preprint arXiv:1609.04747 (2016)

Bengio, Y.: Practical recommendations for gradient-based training of deep architectures. In: Grégoire, M., Geneviève, B.O., Klaus-Robert, M. (eds.) Neural Networks: Tricks of the Trade, 2nd edn. Springer, Berlin, pp. 437–478 (2012). ISBN: 978-3-642-35289-8. https://doi.org/10.1007/978-3-642-35289-8_26

Han, J., Weinan, E.: Deep Learning Approximation for Stochastic Control Problems. arXiv preprint arXiv:1611.07422 (2016)

Kingma, D.P., Ba, J.: Adam: A Method for Stochastic Optimization. arXiv preprint arXiv:1412.6980 (2014)

Glorot, X., Bengio, Y.: Understanding the difficulty of training deep feedforward neural networks. In: Proceedings of the 13th International Conference on Artificial Intelligence and Statistics, pp. 249–256 (2010)

Braun, S.: LSTM Benchmarks for Deep Learning Frameworks. arXiv preprint arXiv:1806.01818 (2018)

Touzi, N.: Optimal Stochastic Control, Stochastic Target Problems, and Backward SDE, vol. 29. Springer, Berlin (2012)

Hornik, K.: Approximation capabilities of multilayer feedforward networks. Neural Netw. 4(2), 251–257 (1991)

Cybenko, G.: Approximation by superpositions of a sigmoidal function. Math. Control Signals Syst. 2(4), 303–314 (1989)

Acknowledgements

The authors would like to thank Simon Fécamp for useful discussions and technical advice.

Appendix

1.1 Some Test PDEs

We recall our notations for the semilinear PDEs:

where \(\mathcal{L}u(t, x) := \frac{1}{2} \mathrm{Tr}\left( \sigma^\top \sigma (t, x) \nabla^2 u(t, x)\right) + \mu(t, x)^\top \nabla u(t, x)\). For each example, we thus give the corresponding \(\mu\), \(\sigma\), f and g. In the implementation, we rather use a function

for convenience, as this does not influence the results and allows for a more direct formulation for some PDEs.
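
As an illustration of the operator \(\mathcal{L}\) defined above, the following minimal Python sketch evaluates \(\mathcal{L}u(t, x)\) for user-supplied `u`, `mu` and `sigma` using central finite differences. The function name `generator` and the step size `h` are hypothetical choices made for this example only; this is not the implementation used for the experiments.

```python
import numpy as np

def generator(u, mu, sigma, t, x, h=1e-3):
    """Approximate L u(t, x) = 0.5 * Tr(sigma^T sigma Hess u) + mu^T grad u.

    u(t, x) -> float, mu(t, x) -> (d,) array, sigma(t, x) -> (d, d) array.
    Derivatives are approximated by central finite differences of step h.
    """
    x = np.asarray(x, dtype=float)
    d = x.size
    eye = np.eye(d)
    # Central-difference gradient of u with respect to x.
    grad = np.array([(u(t, x + h * eye[i]) - u(t, x - h * eye[i])) / (2 * h)
                     for i in range(d)])
    # Central-difference Hessian of u with respect to x.
    hess = np.empty((d, d))
    for i in range(d):
        for j in range(d):
            hess[i, j] = (u(t, x + h * eye[i] + h * eye[j])
                          - u(t, x + h * eye[i] - h * eye[j])
                          - u(t, x - h * eye[i] + h * eye[j])
                          + u(t, x - h * eye[i] - h * eye[j])) / (4 * h * h)
    s = sigma(t, x)
    return 0.5 * np.trace(s.T @ s @ hess) + mu(t, x) @ grad

# Example: u(t, x) = |x|^2 with constant diffusion and linear drift.
x0 = np.array([1.0, 0.5, 1.0])
value = generator(lambda t, x: np.sum(x**2),
                  lambda t, x: 0.1 * x,
                  lambda t, x: 0.5 * np.eye(x.size),
                  0.0, x0)
```

For this quadratic test function the Hessian is \(2 I_d\) and the gradient is \(2x\), so the result can be checked by hand against \(0.25\, d + 0.2\, \Vert x \Vert ^2\).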

1.1.1 A Black–Scholes Equation with Default Risk

From [21, 22]. If not otherwise stated, the parameters take values: \(\overline{\mu }=0.02\), \(\overline{\sigma }=0.2\), \(\delta =2/3\), \(R=0.02\), \(\gamma _h=0.2\), \(\gamma _l=0.02\), \(v_h=50\), \(v_l=70\). We use the initial condition \(X_0 = (100, \ldots , 100)\).

We used the closed-form solution of the SDE dynamics:
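
A minimal Python sketch of such an exact simulation is given here, under the assumption that the forward process is the geometric Brownian motion of [21], that is \(\mu (t, x) = \overline{\mu } x\) and \(\sigma (t, x) = \overline{\sigma }\, \mathrm{diag}(x)\), so that \(X^i_t = X^i_0 \exp \left( (\overline{\mu } - \overline{\sigma }^2/2) t + \overline{\sigma } W^i_t\right) \). The function name `simulate_gbm`, the time grid and the number of paths are hypothetical.

```python
import numpy as np

def simulate_gbm(x0, mu_bar, sigma_bar, times, n_paths, rng):
    """Sample a d-dimensional geometric Brownian motion exactly.

    Assumed dynamics: dX = mu_bar * X dt + sigma_bar * diag(X) dW.
    `times` must be increasing and non-negative.
    Returns an array of shape (n_paths, len(times), d).
    """
    x0 = np.asarray(x0, dtype=float)
    times = np.asarray(times, dtype=float)
    dt = np.diff(times, prepend=0.0)
    # Independent Brownian increments, accumulated along the time axis.
    dw = rng.standard_normal((n_paths, times.size, x0.size))
    dw *= np.sqrt(dt)[None, :, None]
    w = np.cumsum(dw, axis=1)
    drift = (mu_bar - 0.5 * sigma_bar**2) * times[None, :, None]
    # Closed-form solution: no time-discretization error is introduced.
    return x0 * np.exp(drift + sigma_bar * w)

rng = np.random.default_rng(0)
paths = simulate_gbm(np.full(3, 100.0), 0.02, 0.2,
                     np.linspace(0.25, 1.0, 4), 50_000, rng)
```

Sampling from the closed form keeps the simulated process strictly positive and removes the Euler discretization bias from the forward process.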

Baseline (from [21]):

1.1.2 A Black–Scholes–Barenblatt Equation

From [24]. If not otherwise stated, the parameters take values: \(\overline{\sigma }=0.4\), \(r=0.05\). We use the initial condition \(X_0 = (1.0, 0.5, 1.0, \ldots )\).

We used the closed-form solution of the SDE dynamics:

Exact solution:
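
As a hedged illustration, the sketch below assumes the formulation of this benchmark given in [24]: terminal condition \(g(x) = \Vert x \Vert ^2\), exact solution \(u(t, x) = e^{(r + \overline{\sigma }^2)(T - t)} g(x)\), and PDE \(\partial _t u + \frac{1}{2} \overline{\sigma }^2 \sum _i x_i^2 \partial _{x_i x_i} u - r (u - x^\top \nabla u) = 0\). The names `bsb_exact` and `bsb_residual`, and the value \(T = 1\), are assumptions made for the example.

```python
import numpy as np

def bsb_exact(t, x, T=1.0, sigma_bar=0.4, r=0.05):
    """Candidate exact solution exp((r + sigma_bar^2)(T - t)) * |x|^2."""
    x = np.asarray(x, dtype=float)
    return np.exp((r + sigma_bar**2) * (T - t)) * np.sum(x**2, axis=-1)

def bsb_residual(t, x, h=1e-3, T=1.0, sigma_bar=0.4, r=0.05):
    """Finite-difference residual of the assumed PDE at (t, x)."""
    x = np.asarray(x, dtype=float)
    u = lambda s, y: bsb_exact(s, y, T, sigma_bar, r)
    eye = np.eye(x.size)
    u_t = (u(t + h, x) - u(t - h, x)) / (2 * h)
    grad = np.array([(u(t, x + h * e) - u(t, x - h * e)) / (2 * h)
                     for e in eye])
    # Only the diagonal of the Hessian enters, since sigma is diagonal.
    diag_hess = np.array([(u(t, x + h * e) - 2 * u(t, x) + u(t, x - h * e))
                          / h**2 for e in eye])
    return (u_t + 0.5 * sigma_bar**2 * np.sum(x**2 * diag_hess)
            - r * (u(t, x) - x @ grad))

x0 = np.array([1.0, 0.5, 1.0, 0.5])
residual = bsb_residual(0.3, x0)
```

A residual close to zero at a few sample points is a quick sanity check that the closed form and the PDE coefficients are coded consistently.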

1.1.3 A Hamilton–Jacobi–Bellman Equation

From [21, 22]. If not otherwise stated: \(\lambda =1.0\) and \(X_0 = (0, \ldots , 0)\).

Monte-Carlo solution:

where

Baseline (computed using 10 million Monte-Carlo realizations, \(d=100\)):
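
A Monte-Carlo reference value of this kind can be sketched as follows. The sketch assumes the setting of [21]: \(\mu = 0\), \(\sigma = \sqrt{2}\, I_d\), \(g(x) = \log \left( (1 + \Vert x \Vert ^2)/2\right) \), \(T = 1\), and the representation \(u(0, x) = -\frac{1}{\lambda } \log \mathbb{E}\left[ \exp \left( -\lambda g(x + \sqrt{2} W_T)\right) \right] \). The function names and the sample size are hypothetical, and far fewer samples are used than for the baseline quoted above.

```python
import numpy as np

def g(x):
    """Assumed terminal condition log((1 + |x|^2) / 2), applied row-wise."""
    return np.log(0.5 * (1.0 + np.sum(x**2, axis=-1)))

def hjb_reference(x0, T, lam, n_samples, rng):
    """Monte-Carlo estimate of u(0, x0) = -log(E[exp(-lam * g(X_T))]) / lam."""
    x0 = np.asarray(x0, dtype=float)
    # Terminal value of X_T = x0 + sqrt(2) * W_T, sampled exactly.
    w_T = np.sqrt(T) * rng.standard_normal((n_samples, x0.size))
    x_T = x0 + np.sqrt(2.0) * w_T
    return -np.log(np.mean(np.exp(-lam * g(x_T)))) / lam

rng = np.random.default_rng(0)
estimate = hjb_reference(np.zeros(100), T=1.0, lam=1.0,
                         n_samples=20_000, rng=rng)
```

Because only the terminal value of the Brownian motion is needed, no time stepping is required and the only error is the statistical one.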

1.1.4 An Oscillating Example with a Square Non-linearity

From [15]. If not otherwise stated, the parameters take values: \(\mu _0=0.2\), \(\sigma _0=1.0\), \(a=0.5\), \(r=0.1\). The intended effect of the \(\min \) and \(\max \) in f is to make f Lipschitz. We used the initial condition \(X_0=(1.0, 0.5, 1.0, \ldots )\).

where

Exact solution:

1.1.5 A Non-Lipschitz Terminal Condition

From [35]. If not otherwise stated: \(\alpha =0.5\), \(X_0 = (0, \ldots , 0)\).

Monte-Carlo solution:

where

Baseline (computed using 10 million Monte-Carlo realizations, \(d=10\)):

1.1.6 An Oscillating Example with Cox–Ingersoll–Ross Propagation

From [16]. If not otherwise stated: \(a=0.1\), \(\alpha =0.2\), \(T=1.0\), \(\hat{k}=0.1\), \(\hat{m}=0.3\), \(\hat{\sigma }=0.2\). We used the initial condition \(X_0 = (0.3, \ldots , 0.3)\). Note that \(2 \hat{k} \hat{m} = 0.06 > \hat{\sigma }^2 = 0.04\), so the Feller condition holds and X remains positive.

where

Exact solution:

1.1.7 An Oscillating Example with Inverse Non-linearity

If not stated otherwise, we took parameters \(\mu _0 = 0.2\), \(\sigma _0 = 1.0\), \(a=0.5\), \(r=0.1\) and the initial condition \(X_0=(1.0, 0.5, 1.0, \ldots )\).

where

Exact solution:

1.2 Proof of Proposition 4.6

In the sequel, C will only depend on u, f, g and may vary from one line to another.

First, note that due to the Lipschitz property in Assumption 4.3, the boundedness of f and g and the regularity of u due to Assumption 4.5, the solution u of (1) and Du satisfy a Feynman–Kac relation (see an adaptation of Proposition 1.7 in [42]):

Picking \((t,y) \in [0,T] \times \Omega _\epsilon ^{0,x}\), we obtain from Eq. (39):

Using Jensen's inequality, the boundedness of g and f, the fact that \(\rho \) is bounded from below and Assumption 4.3, we get:

Then using the results in [43, 44], we know that there exists \( (u(\theta ^{*},t,x), v(\theta ^*,t,x )) \in \kappa \) such that:

Then we have that

Similarly introducing \({\hat{W}}^{t}_\tau = \sigma ^{-\top } \frac{W_{(t+ (\tau \wedge \Delta t )) \wedge T}-W_{t}}{\tau \wedge (T-t) \wedge \Delta t}\),

where we have used the Jensen and Cauchy–Schwarz inequalities.

Introducing \(G\in \mathbb{R}^d\) composed of independent standard Gaussian random variables

when \(u<1\) in Eq. (11).

Similarly using the fact that g is Lipschitz,

Then using Eq. (40) in Eq. (42), we get

Injecting (41) and (43) in Eq. (31) and using the definition of \(\Omega ^{0,x}_{\epsilon }\) completes the proof.

1.3 Proof of Proposition 4.7

Let \(\bar{K}\) be a compact set. It is always possible to find \(n_0\) such that for \(n> n_0\), \( \bar{K} \subset \Omega ^{0,x}_{\epsilon _n}\).

Let u be a function from \(\mathbb{R}\times \mathbb{R}^d\) to \(\mathbb{R}\) and v a function from \(\mathbb{R}\times \mathbb{R}^d\) to \(\mathbb{R}^d\). Let

We note that for \(\zeta \) an independent uniform random variable in [0, T]

Using Eq. (39), the expression of \(D_n\), the Lipschitz property of f, Jensen's inequality, the boundedness of f and g, and the fact that \(\rho \) is bounded from below by \(\hat{\rho }(T)\):

Then, if K is small enough

which completes the proof.

Cite this article

Chan-Wai-Nam, Q., Mikael, J. & Warin, X. Machine Learning for Semi Linear PDEs. J Sci Comput 79, 1667–1712 (2019). https://doi.org/10.1007/s10915-019-00908-3
