Abstract

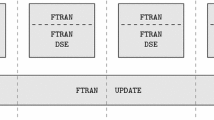

This paper provides a block coordinate descent algorithm to solve unconstrained optimization problems. Our algorithm uses only pairwise comparisons of function values, which reveal only the order of the function values at two points, and does not require computing a function value or a gradient. Our algorithm iterates two steps: the direction estimate step and the search step. In the direction estimate step, a Newton-type search direction is estimated through a block coordinate descent-based computation method with the pairwise comparison. In the search step, the numerical solution is updated along the estimated direction. The computation in the direction estimate step can be easily parallelized, and thus, the algorithm works efficiently to find the minimizer of the objective function. Also, we theoretically derive an upper bound of the convergence rate for our algorithm and show that our algorithm achieves the optimal query complexity for specific cases. In numerical experiments, we show that our method finds the optimal solution more efficiently than some existing methods based on the pairwise comparison.


Notes

“Sufficiently” means that \(\eta \) is smaller than or equal to the quantity on the right-hand side of (5). Although this quantity cannot be explicitly computed (since L and \(\sigma \) are unknown), we can achieve the order-optimal rate by taking \(\eta \) smaller and smaller.

References

Audet, C., Dennis Jr., J.E.: Analysis of generalized pattern searches. SIAM J. Optim. 13(3), 889–903 (2002)

Audet, C., Dennis Jr., J.E., Le Digabel, S.: Parallel space decomposition of the mesh adaptive direct search algorithm. SIAM J. Optim. 19(3), 1150–1170 (2008)

Audet, C., Ianni, A., Le Digabel, S., Tribes, C.: Reducing the number of function evaluations in mesh adaptive direct search algorithms. SIAM J. Optim. 24(2), 621–642 (2014)

Boyd, S.P., Vandenberghe, L.: Convex Optimization. Cambridge University Press, Cambridge (2004)

Conn, A.R., Scheinberg, K., Vicente, L.N.: Introduction to Derivative-Free Optimization, vol. 8. SIAM, Philadelphia (2009)

Conn, A.R., Le Digabel, S.: Use of quadratic models with mesh-adaptive direct search for constrained black box optimization. Optim. Methods Softw. 28(1), 139–158 (2013)

Custódio, A., Dennis, J., Vicente, L.N.: Using simplex gradients of nonsmooth functions in direct search methods. IMA J. Numer. Anal. 28(4), 770–784 (2008)

Flaxman, A.D., Kalai, A.T., McMahan, H.B.: Online convex optimization in the bandit setting: gradient descent without a gradient. In: Proceedings of the sixteenth annual ACM-SIAM symposium on discrete algorithms. SODA ’05, pp. 385–394. Society for Industrial and Applied Mathematics, Philadelphia, PA, USA (2005)

Fu, M.C.: Gradient estimation. In: Henderson, S.G., Nelson, B.L. (eds.) Handbooks in Operations Research and Management Science: Simulation, Chap. 19. Elsevier, Amsterdam (2006)

Gao, F., Han, L.: Implementing the Nelder–Mead simplex algorithm with adaptive parameters. Comput. Optim. Appl. 51(1), 259–277 (2012)

Jamieson, K.G., Nowak, R.D., Recht, B.: Query complexity of derivative-free optimization. In: NIPS, pp. 2681–2689 (2012)

Kääriäinen, M.: Active learning in the non-realizable case. In: Algorithmic Learning Theory, pp. 63–77. Springer (2006)

Lagarias, J.C., Reeds, J.A., Wright, M.H., Wright, P.E.: Convergence properties of the Nelder–Mead simplex method in low dimensions. SIAM J. Optim. 9, 112–147 (1998)

Luenberger, D., Ye, Y.: Linear and Nonlinear Programming. Springer, Berlin (2008)

Mohri, M., Rostamizadeh, A., Talwalkar, A.: Foundations of Machine Learning. MIT Press, London (2012)

Nelder, J.A., Mead, R.: A simplex method for function minimization. Comput. J. 7(4), 308–313 (1965)

R Core Team: R: A Language and Environment for Statistical Computing. R Foundation for Statistical Computing, Vienna, Austria (2014). http://www.R-project.org/

Ramdas, A., Singh, A.: Algorithmic connections between active learning and stochastic convex optimization. In: Algorithmic Learning Theory, pp. 339–353. Springer (2013)

Richtárik, P., Takáč, M.: Parallel coordinate descent methods for big data optimization. Math. Program. 156(1–2), 433–484 (2016)

Rios, L.M., Sahinidis, N.V.: Derivative-free optimization: a review of algorithms and comparison of software implementations. J. Glob. Optim. 56(3), 1247–1293 (2013)

Yue, Y., Joachims, T.: Interactively optimizing information retrieval systems as a dueling bandits problem. In: Proceedings of the 26th Annual International Conference on Machine Learning, pp. 1201–1208. ACM (2009)

Yue, Y., Broder, J., Kleinberg, R., Joachims, T.: The k-armed dueling bandits problem. J. Comput. Syst. Sci. 78(5), 1538–1556 (2012)

Zoghi, M., Whiteson, S., Munos, R., de Rijke, M.: Relative upper confidence bound for the K-armed dueling bandit problem. In: ICML 2014: Proceedings of the Thirty-First International Conference on Machine Learning, pp. 10–18 (2014)

Acknowledgments

This work was supported by JSPS KAKENHI Grant No. 16K00044.


Appendices

Appendix 1: Proof of Theorem 1

Proof

The optimal solution of f is denoted as \({\varvec{x}}^*\). Let us define \(\varepsilon '\) as \(\varepsilon /(1+\frac{n}{m\gamma })\). If \(f({\varvec{x}}_t)-f({\varvec{x}}^*)<\varepsilon '\) holds in the algorithm, we obtain \(f({\varvec{x}}_{t+1})-f({\varvec{x}}^*)<\varepsilon '\), since the function value is non-increasing in each iteration of the algorithm.

Next, we assume \(\varepsilon '\le {}f({\varvec{x}}_t)-f({\varvec{x}}^*)\). In the following, we use the inequality

that is proved in [11]. For the ith coordinate, let us define the functions \(g_{\mathrm {low},i}(\alpha )\) and \(g_{\mathrm {up},i}(\alpha )\) as

Then, we have

Let \(\alpha _{\mathrm {up},i}\) and \(\alpha _i^*\) be the minimizers of \(g_{\mathrm {up},i}(\alpha )\) and \(f({\varvec{x}}_t+\alpha {{\varvec{e}}_i})\) with respect to \(\alpha \), respectively. In particular, \(\alpha _{\mathrm {up},i}\) can be written explicitly. Then, we obtain

The inequality \(g_{\mathrm {low},i}(\alpha _i^*)\le {} g_{\mathrm {up},i}(\alpha _{\mathrm {up},i})\) and the concrete form of \(\alpha _{\mathrm {up},i}\) yield that \(\alpha _i^*\) lies between \(-c_0\frac{\partial {f}(x_t)}{\partial {x_i}}\) and \(-c_1\frac{\partial {f}(x_t)}{\partial {x_i}}\), where \(c_0\) and \(c_1\) are defined as

Here, \(0<c_0\le {}c_1\) holds. Each component of the search direction \({\varvec{d}}_t=(d_1,\ldots ,d_n)\ne {\varvec{0}}\) in Algorithm 1 satisfies \(|d_i-\alpha _i^*|\le \eta \) if \(i=i_k\) for some \(k\in \{1,\ldots ,m\}\), and \(d_i=0\) otherwise. For \(I=\{i_1,\ldots ,i_m\}\subset \{1,\ldots ,n\}\) and a vector \({\varvec{a}}\in \mathbb {R}^n\), we define \(\Vert {\varvec{a}}\Vert _{I}^2=\sum _{i\in {I}}a_{i}^2\).
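To illustrate the accuracy property \(|d_i-\alpha _i^*|\le \eta \) on the selected coordinates, the following minimal Python sketch estimates coordinate-wise minimizers using only pairwise comparisons. It is an illustration under stated assumptions, not a transcription of Algorithm 1: the names `make_oracle`, `coordinate_step`, and `estimate_direction` are hypothetical, the one-dimensional search is a plain ternary search chosen here for simplicity, f is assumed convex along each coordinate, and the bracket `[-radius, radius]` is assumed to contain each \(\alpha _i^*\).

```python
import random


def make_oracle(f):
    """Pairwise comparison oracle: reveals only whether f(x) <= f(y)."""
    def no_worse(x, y):
        return f(x) <= f(y)
    return no_worse


def coordinate_step(no_worse, x, i, eta, radius=1.0):
    """Estimate the minimizer of alpha -> f(x + alpha * e_i) to within eta.

    Uses ternary search driven only by comparisons. Assumes (hypothetically)
    that the minimizer lies in [-radius, radius] and that f is convex along
    coordinate i.
    """
    def shifted(alpha):
        y = list(x)
        y[i] += alpha
        return y

    lo, hi = -radius, radius
    # Stop once the bracket is at most 2 * eta wide, so that its midpoint
    # is within eta of the minimizer.
    while hi - lo > 2.0 * eta:
        a = lo + (hi - lo) / 3.0
        b = hi - (hi - lo) / 3.0
        if no_worse(shifted(a), shifted(b)):
            hi = b  # a minimizer lies in [lo, b]
        else:
            lo = a  # a minimizer lies in [a, hi]
    return 0.5 * (lo + hi)


def estimate_direction(no_worse, x, m, eta, rng, radius=1.0):
    """Build d with m estimated coordinates (chosen uniformly) and zeros elsewhere."""
    n = len(x)
    d = [0.0] * n
    for i in rng.sample(range(n), m):
        # Each call is independent of the others, so the m line searches
        # could be run in parallel.
        d[i] = coordinate_step(no_worse, x, i, eta, radius)
    return d
```

For a separable quadratic, \(\alpha _i^*\) is known in closed form, so the returned vector can be checked directly against it. The search step along \({\varvec{d}}_t\) is not sketched here.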

The vector \((\alpha _1^*,\ldots ,\alpha _n^*)\) is denoted as \({\varvec{\alpha }}^*\). Then, the triangle inequality leads to

The assumption \(\varepsilon '\le {}f({\varvec{x}}_t)-f({\varvec{x}}^*)\) leads to

in which the second inequality is derived from (9.9) in [4].

The above inequality and \(1/4L^2\le {c_0}^2\) yield

Hence, we obtain

where \([x]_+=\max \{0,x\}\) for \(x\in \mathbb {R}\). Let \(Z=\sqrt{\frac{n}{m}}\Vert \nabla {f}(x_t)\Vert _I/\Vert \nabla {f}(x_t)\Vert \) be a non-negative valued random variable defined from the random set I, and define the non-negative value k as \(k=c_0/c_1\le {1}\). A lower bound of the expectation of \((|\nabla {f}({\varvec{x}}_t)^{\top }{\varvec{d}}_t|/\Vert {\varvec{d}}_t\Vert )^2\) with respect to the distribution of I is given as

Here, we recall that \(i_k \in I\) is uniformly distributed. The random variable Z is non-negative, and \(\mathbb {E}_I[Z^2]=1\) holds. Thus, Lemma 2 below leads to
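The identity \(\mathbb {E}_I[Z^2]=1\) can be checked by exact enumeration. The sketch below assumes that I is drawn uniformly from all subsets of size m (sampling without replacement), which is our reading of the uniform distribution mentioned above; the function name `mean_z_squared` and the integer test vector are hypothetical. Under that assumption each coordinate belongs to I with probability \(m/n\), so \(\mathbb {E}_I[\Vert \nabla {f}\Vert _I^2]=\frac{m}{n}\Vert \nabla {f}\Vert ^2\) and the factor \(n/m\) in \(Z^2\) cancels it.

```python
from fractions import Fraction
from itertools import combinations


def mean_z_squared(grad, m):
    """Exact E_I[Z^2] for Z = sqrt(n/m) * ||grad||_I / ||grad||.

    I ranges over all size-m subsets of coordinates with equal probability.
    grad must have integer entries (not all zero) so that the rational
    arithmetic is exact.
    """
    n = len(grad)
    total = sum(g * g for g in grad)
    subsets = list(combinations(range(n), m))
    acc = Fraction(0)
    for subset in subsets:
        block = sum(grad[i] * grad[i] for i in subset)
        acc += Fraction(n, m) * Fraction(block, total)
    return acc / len(subsets)
```

Exact rational arithmetic is used so that the equality can be asserted without a floating-point tolerance.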

Combining the above inequality with the case of \(f({\varvec{x}}_t)-f({\varvec{x}}^*)<\varepsilon '\), we obtain the conditional expectation of \(f({\varvec{x}}_{t+1})-f({\varvec{x}}^*)\) for given \({\varvec{d}}_0,{\varvec{d}}_1,\ldots ,{\varvec{d}}_{t-1}\) as follows.

Taking the expectation with respect to all \({\varvec{d}}_0,\ldots ,{\varvec{d}}_t\) yields

Since \(0<\gamma <1\) and \(\max \{L\eta ^2/2,\,\varepsilon '\}=\varepsilon '\) hold, for \(\varDelta _T=\mathbb {E}[f({\varvec{x}}_T)-f({\varvec{x}}^*)]\) we have

When T is greater than \(T_0\) in (6), we obtain \(\left( 1 - \frac{m}{n}\gamma \right) ^{T} \varDelta _{0}\le \varepsilon '\) and
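As a numerical illustration of the condition \(\left( 1 - \frac{m}{n}\gamma \right) ^{T} \varDelta _{0}\le \varepsilon '\), the following sketch computes the smallest integer T that satisfies it, with \(\varepsilon '=\varepsilon /(1+\frac{n}{m\gamma })\) as defined at the start of the proof. This is not the expression for \(T_0\) in (6), which is not reproduced here; the function names `eps_prime` and `min_iterations` and the sample parameter values are hypothetical, and \(0<\gamma <1\), \(1\le m\le n\) are assumed.

```python
import math


def eps_prime(eps, n, m, gamma):
    """Threshold eps' = eps / (1 + n / (m * gamma))."""
    return eps / (1.0 + n / (m * gamma))


def min_iterations(delta0, eps, n, m, gamma):
    """Smallest T >= 0 with (1 - m * gamma / n) ** T * delta0 <= eps'."""
    target = eps_prime(eps, n, m, gamma)
    if delta0 <= target:
        return 0
    rate = 1.0 - (m / n) * gamma  # lies in (0, 1) under the assumptions
    T = math.ceil(math.log(target / delta0) / math.log(rate))
    # Guard against floating-point rounding in the logarithms.
    while rate ** T * delta0 > target:
        T += 1
    return T


if __name__ == "__main__":
    # Hypothetical values: n = 10 coordinates, block size m = 2.
    print(min_iterations(delta0=1.0, eps=1e-3, n=10, m=2, gamma=0.5))
```

Since the contraction factor is \(1-\frac{m}{n}\gamma \), a larger block size m reduces the number of iterations needed, at the cost of more comparisons per iteration.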

\(\square \)

Remark 7

For \(m=1\), the expectation \(\mathbb {E}[(\nabla {f}({\varvec{x}}_t)^{\top }{\varvec{d}}_t/\Vert {\varvec{d}}_t\Vert )^2]\) can be evaluated exactly. Hence, we do not need to introduce the threshold \(\varepsilon '\) to control the perturbation of the norm \(\Vert {\varvec{d}}_t\Vert \) as in (18). An arbitrarily small \(\varepsilon '\) can be used, and \(\max \{L\eta ^2/2,\,\varepsilon '\}\) in the above proof becomes \(L\eta ^2/2\). As a result, the faster convergence rate shown in [11] is obtained for \(m=1\).

Lemma 2

Let Z be a non-negative random variable satisfying \(\mathbb {E}[Z^2]=1\). Then, we have

Proof

For \(z\ge 0\) and \(\delta \ge 0\), we have the inequality

Then, we get

By setting \(\delta \) appropriately, we obtain

\(\square \)

Appendix 2: Proof of Corollary 1

Proof

Let us replace \(T_0\) and \(K_0\) with

respectively. Note that \(e^{m\gamma /n}\varDelta _0(1+\frac{n}{m\gamma }) \le 2^{13.5}(L/\sigma )^3n\varDelta _0(1+\frac{n}{m\gamma })\) holds because of \(\sigma \le L\), \(\gamma < 1\) and \(1 \le m \le n\). Suppose that

holds. Then we have

and thus,

holds. Theorem 1 leads to

where \(c_0 = \sqrt{\frac{\log 2}{8}}\). The condition (21) is expressed as

where \([a]_+=\max \{a,0\}\). Note that the left-hand side of the above inequality is determined from the problem setup (i.e. \(\sigma , L, n\)), initial point \({\varvec{x}}_0\), and the parallelization parameter m of Algorithm 1. \(\square \)

Appendix 3: Proof of Corollary 2

Proof

For the output \(\hat{{\varvec{x}}}_Q\) of BlockCD[n, m], \(f(\hat{{\varvec{x}}}_Q)\ge f(\hat{{\varvec{x}}}_{Q+1})\) holds, and thus, the sequence \(\{\hat{{\varvec{x}}}_Q\}_{Q\in {\mathbb {N}}}\) is included in

Since f is convex and continuous, \(C({\varvec{x}}_0)\) is convex and closed. Moreover, since f is convex and it has non-degenerate Hessian, the Hessian is positive definite, and thus, f is strictly convex. Then \(C({\varvec{x}}_0)\) is bounded as follows. We set

the minimal directional derivative along the radial direction from \({\varvec{x}}^*\) over the unit sphere around \({\varvec{x}}^*\) as

Then, b is strictly positive and the following holds for any \({\varvec{x}}\in C(x_0)\) such that \(\Vert {\varvec{x}}-{\varvec{x}}^*\Vert \ge 1\),

Thus we have

Since the right-hand side of (22) is a bounded ball, \(C({\varvec{x}}_0)\) is also bounded.

Thus, \(C({\varvec{x}}_0)\) is a convex compact set.

Since f is twice continuously differentiable, the Hessian matrix \(\nabla ^2f({\varvec{x}})\) is continuous with respect to \({\varvec{x}}\in {\mathbb {R}}^n\). By the positive definiteness of the Hessian matrix, the minimum and maximum eigenvalues \(e_{min}({\varvec{x}})\) and \(e_{max}({\varvec{x}})\) of \(\nabla ^2f({\varvec{x}})\) are continuous and positive.

Therefore, \(e_{min}({\varvec{x}})\) attains a positive minimum value \(\sigma \) and \(e_{max}({\varvec{x}})\) attains a maximum value L on the compact set \(C({\varvec{x}}_0)\). This means that f is \(\sigma \)-strongly convex and has an L-Lipschitz gradient on \(C({\varvec{x}}_0)\). Thus, the same argument used to obtain (9) can be applied to f. \(\square \)


Cite this article

Matsui, K., Kumagai, W. & Kanamori, T. Parallel distributed block coordinate descent methods based on pairwise comparison oracle. J Glob Optim 69, 1–21 (2017). https://doi.org/10.1007/s10898-016-0465-x


DOI: https://doi.org/10.1007/s10898-016-0465-x