Abstract

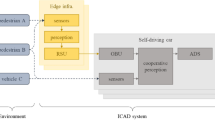

The idea of cooperative perception for navigation assistance was introduced about a decade ago with the aim of increasing safety in dangerous areas such as intersections. In this context, roadside infrastructure has only recently emerged, providing a new point of view on the scene. In this paper, we propose to combine the Vehicle-To-Vehicle (V2V) and Vehicle-To-Infrastructure (V2I) approaches to take advantage of both the elevated points of view offered by the infrastructure and the in-scene points of view offered by the vehicles, in order to build a semantic grid map of the moving elements in the scene. To create this map, we chose to use camera information and 2-Dimensional (2D) bounding boxes in order to minimize the impact on the network, and, as opposed to all state-of-the-art methods, we ignore any possible depth information. We propose a framework based on two fusion methods, one based on Bayesian theory and the other on Dempster-Shafer Theory (DST), to merge the information and choose a label for each cell of the semantic grid, in order to determine which fusion method performs best. Finally, we evaluate our approach on a set of datasets that we generated with the CARLA simulator, varying the proportion of Connected Vehicles (CV) and the traffic density. We show that the DST-based method outperforms the Bayesian one, with a gain in mean Intersection over Union (mIoU) of up to 23.35%.
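To make the contrast between the two fusion methods concrete, the following Python sketch fuses the evidence of two agents for a single grid cell. It is a minimal illustration under stated assumptions, not the authors' implementation (their code is in the Coop-Evidential-Semantic-Grid repository). The class names (`vehicle`, `pedestrian`, `free`), the mass and probability values, and the helper names `dempster_combine`, `pignistic` and `bayes_combine` are hypothetical. The DST branch combines mass functions with Dempster's rule and, as an assumed decision rule, picks the label with the highest pignistic probability; the Bayesian branch assumes independent agents and a uniform prior, so fusion reduces to a normalized product of per-class probabilities.

```python
from itertools import product
from typing import Dict, FrozenSet

# Hypothetical frame of discernment for one grid cell; the class names are
# illustrative assumptions, not the label set used in the paper.
OMEGA: FrozenSet[str] = frozenset({"vehicle", "pedestrian", "free"})

# A mass function maps focal sets (subsets of OMEGA) to masses summing to 1.
Mass = Dict[FrozenSet[str], float]


def dempster_combine(m1: Mass, m2: Mass) -> Mass:
    """Dempster's rule: conjunctive combination followed by conflict normalization."""
    combined: Mass = {}
    conflict = 0.0
    for (a, wa), (b, wb) in product(m1.items(), m2.items()):
        inter = a & b
        if inter:
            combined[inter] = combined.get(inter, 0.0) + wa * wb
        else:
            conflict += wa * wb  # mass that would fall on the empty set
    if conflict >= 1.0:
        raise ValueError("total conflict: the two sources cannot be combined")
    return {focal: w / (1.0 - conflict) for focal, w in combined.items()}


def pignistic(m: Mass) -> Dict[str, float]:
    """Pignistic transform (BetP): share each focal set's mass equally among its classes."""
    betp = {label: 0.0 for label in OMEGA}
    for focal, w in m.items():
        for label in focal:
            betp[label] += w / len(focal)
    return betp


def bayes_combine(p1: Dict[str, float], p2: Dict[str, float]) -> Dict[str, float]:
    """Bayesian fusion assuming independent agents and a uniform prior: product, then renormalize."""
    joint = {label: p1[label] * p2[label] for label in OMEGA}
    total = sum(joint.values())
    if total == 0.0:
        raise ValueError("the two distributions share no support")
    return {label: v / total for label, v in joint.items()}


if __name__ == "__main__":
    # Infrastructure camera (hypothetical values): fairly confident the cell holds a vehicle.
    m_infra = {frozenset({"vehicle"}): 0.6, OMEGA: 0.4}
    # Connected vehicle (hypothetical values): cannot separate vehicle from pedestrian.
    m_cv = {frozenset({"vehicle", "pedestrian"}): 0.7, OMEGA: 0.3}

    betp = pignistic(dempster_combine(m_infra, m_cv))
    label = max(betp, key=betp.get)
    print(label, round(betp[label], 2))  # prints: vehicle 0.78

    # Similar evidence forced into per-class probabilities for the Bayesian variant.
    p_infra = {"vehicle": 0.8, "pedestrian": 0.1, "free": 0.1}
    p_cv = {"vehicle": 0.45, "pedestrian": 0.45, "free": 0.1}
    p = bayes_combine(p_infra, p_cv)
    print(max(p, key=p.get))  # prints: vehicle
```

The main representational difference is that a mass function can assign belief to sets of classes: an agent that cannot see the cell can put all of its mass on `OMEGA` (total ignorance), and an ambiguous detection can put mass on `{vehicle, pedestrian}`, whereas the Bayesian variant must split such evidence across individual classes.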

Availability of data and materials

Basic datasets are available at https://zenodo.org/record/7637904. More will be released later.

Code availability

The code is available on GitHub: the dataset generation code at https://github.com/caillotantoine/carla-V2X-dataset-generator, and the multi-agent grid generation code at https://github.com/caillotantoine/Coop-Evidential-Semantic-Grid.

Acknowledgements

This work is supported by ESIGELEC, Rouen, France through an internal Ph.D. grant.

Funding

This work is supported by ESIGELEC, Rouen, France through an internal Ph.D. grant.

Author information

Contributions

All authors contributed to the study’s methodology, conception and design. Formal analysis: Antoine Caillot and Safa Ouerghi; Software and experimental validation: Antoine Caillot, Safa Ouerghi and Yohan Dupuis; Resources: Antoine Caillot; Writing: the first draft of the manuscript was written by Antoine Caillot, all authors commented on previous versions of the manuscript, and all authors read and approved the final manuscript; Supervision and administration: Safa Ouerghi, Yohan Dupuis, Pascal Vasseur and Rémi Boutteau.

Ethics declarations

Conflicts of interest

The authors have no conflicts of interest to declare that are relevant to the content of this article.

Ethics approval

Not applicable.

Consent to participate

Informed consent was obtained from all participants.

Consent for publication

All participants consented to the submission of this article.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Caillot, A., Ouerghi, S., Dupuis, Y. et al. Multi-Agent Cooperative Camera-Based Semantic Grid Generation. J Intell Robot Syst 110, 64 (2024). https://doi.org/10.1007/s10846-024-02093-4

DOI: https://doi.org/10.1007/s10846-024-02093-4