Abstract

Handling long input sequences remains a challenge for deep learning models that predict remaining useful life (RUL). In this work, we propose a deep learning module called attention long short-term memory projected (ALSTMP) for RUL estimation, designed to mitigate the loss of information across long-term dependencies. ALSTMP incorporates attention mechanisms into the traditional long short-term memory (LSTM) so that it collects the key features of the dataset more effectively. Moreover, the time-window length method is applied to improve feature extraction. The proposed model outperforms not only the traditional LSTM and its extension but also the latest existing approaches, while using fewer parameters than recent deep learning approaches.

References

Arias Chao, M., Kulkarni, C., Goebel, K., & Fink, O. (2021). Aircraft engine run-to-failure dataset under real flight conditions for prognostics and diagnostics. Data, 6(1), 5.

Ayodeji, A., Wang, Z., Wang, W., Qin, W., Yang, C., Xu, S., & Liu, X. (2022). Causal augmented convnet: A temporal memory dilated convolution model for long-sequence time series prediction. ISA transactions,123, 200–217.

Azadeh, A., Asadzadeh, S., Salehi, N., & Firoozi, M. (2015). Condition-based maintenance effectiveness for series-parallel power generation system-a combined markovian simulation model. Reliability Engineering & System Safety,142, 357–368.

Bahdanau, D., Cho, K., & Bengio, Y. (n.d.). Neural machine translation by jointly learning to align and translate. arXiv preprint. arXiv:1409.0473

Benkedjouh, T., Medjaher, K., Zerhouni, N., & Rechak, S. (2013). Remaining useful life estimation based on nonlinear feature reduction and support vector regression. Engineering Applications of Artificial Intelligence,26(7), 1751–1760.

Cao, H., Wang, Y., Chen, J., Jiang, D., Zhang, X., Tian, Q., Wang, M. (n.d.). Swin-Unet: Unet-like pure transformer for medical image segmentation. arXiv preprint. arXiv:2105.05537

Chao, M. A., Kulkarni, C., Goebel, K., & Fink, O. (2022). Fusing physics-based and deep learning models for prognostics. Reliability Engineering & System Safety,217, 107961.

Chen, J., Jing, H., Chang, Y., & Liu, Q. (2019). Gated recurrent unit based recurrent neural network for remaining useful life prediction of nonlinear deterioration process. Reliability Engineering & System Safety,185, 372–382.

Dai, Z., Yang, Z., Yang, Y., Carbonell, J., Le, Q. V., & Salakhutdinov, R. (n.d.). Transformer-XL: Attentive language models beyond a fixed-length context. arXiv preprint. arXiv:1901.02860

Dong, L., Xu, S., & Xu, B. (2018). Speech-transformer: A no-recurrence sequence-to-sequence model for speech recognition. In 2018 IEEE international conference on acoustics, speech and signal processing (ICASSP), 2018 (pp. 5884–5888). IEEE.

Dong, M., & He, D. (2007). A segmental hidden semi-markov model (hsmm)-based diagnostics and prognostics framework and methodology. Mechanical systems and signal processing,21(5), 2248–2266.

Duan, Y., Li, H., & Zhang, N. (2022). Mechanical health indicator construction and similarity remaining useful life prediction based on natural language processing model. Measurement Science and Technology,33(9), 094008.

Ellefsen, A. L., Bjørlykhaug, E., Æsøy, V., Ushakov, S., & Zhang, H. (2019). Remaining useful life predictions for turbofan engine degradation using semi-supervised deep architecture. Reliability Engineering & System Safety,183, 240–251.

Fahad, S. A., & Yahya, A. E. (2018). Inflectional review of deep learning on natural language processing. In International conference on smart computing and electronic enterprise (ICSCEE), 2018 (pp. 1–4). IEEE.

Guo, J., Li, Z., & Li, M. (2019). A review on prognostics methods for engineering systems. IEEE Transactions on Reliability,69(3), 1110–1129.

Heimes, F. O. (2008). Recurrent neural networks for remaining useful life estimation. In International conference on prognostics and health management, 2008 (pp. 1–6). IEEE.

Heng, A., Zhang, S., Tan, A. C., & Mathew, J. (2009). Rotating machinery prognostics: State of the art, challenges and opportunities. Mechanical systems and signal processing,23(3), 724–739.

Hochreiter, S., & Schmidhuber, J. (1997). Long short-term memory. Neural computation,9(8), 1735–1780.

Hou, G., Xu, S., Zhou, N., Yang, L., & Fu, Q. (2020). Remaining useful life estimation using deep convolutional generative adversarial networks based on an autoencoder scheme. Computational Intelligence and Neuroscience, 2020, 3:1.

Jiang, Y., Dai, P., Fang, P., Zhong, R. Y., Zhao, X., & Cao, X. (2022). A2-lstm for predictive maintenance of industrial equipment based on machine learning. Computers & Industrial Engineering,172, 108560.

Kim, T. S., & Sohn, S. Y. (2021). Multitask learning for health condition identification and remaining useful life prediction: deep convolutional neural network approach. Journal of Intelligent Manufacturing,32(8), 2169–2179.

Lee, D., Lim, M., Park, H., Kang, Y., Park, J.-S., Jang, G.-J., & Kim, J.-H. (2017). Long short-term memory recurrent neural network-based acoustic model using connectionist temporal classification on a large-scale training corpus. China Communications,14(9), 23–31.

Lee, J., Wu, F., Zhao, W., Ghaffari, M., Liao, L., & Siegel, D. (2014). Prognostics and health management design for rotary machinery systems-reviews, methodology and applications. Mechanical systems and signal processing,42(1–2), 314–334.

Li, H., Zhao, W., Zhang, Y., & Zio, E. (2020). Remaining useful life prediction using multi-scale deep convolutional neural network. Applied Soft Computing,89, 106113.

Li, X., Ding, Q., & Sun, J.-Q. (2018). Remaining useful life estimation in prognostics using deep convolution neural networks. Reliability Engineering & System Safety,172, 1–11.

Lim, P., Goh, C. K., & Tan, K. C. (2016). A time window neural network based framework for remaining useful life estimation. In International joint conference on neural networks (IJCNN), 2016 (pp. 1746–1753). IEEE.

Liu, H., Liu, Z., Jia, W., & Lin, X. (2020). Remaining useful life prediction using a novel feature-attention-based end-to-end approach. IEEE Transactions on Industrial Informatics,17(2), 1197–1207.

Liu, L., Song, X., & Zhou, Z. (2022). Aircraft engine remaining useful life estimation via a double attention-based data-driven architecture. Reliability Engineering & System Safety,221, 108330.

Malhi, A., Yan, R., & Gao, R. X. (2011). Prognosis of defect propagation based on recurrent neural networks. IEEE Transactions on Instrumentation and Measurement,60(3), 703–711.

Mo, H., & Iacca, G. (2022). Multi-objective optimization of extreme learning machine for remaining useful life prediction. In International conference on the applications of evolutionary computation (Part of EvoStar), 2022 (pp. 191–206). Springer.

Mo, Y., Wu, Q., Li, X., & Huang, B. (2021). Remaining useful life estimation via transformer encoder enhanced by a gated convolutional unit. Journal of Intelligent Manufacturing,32(7), 1997–2006.

Nguyen, K. A., Chen, W., Lin, B.-S., & Seeboonruang, U. (2020). Using machine learning-based algorithms to analyze erosion rates of a watershed in northern taiwan. Sustainability,12(5), 2022.

Nguyen, K. T., & Medjaher, K. (2019). A new dynamic predictive maintenance framework using deep learning for failure prognostics. Reliability Engineering & System Safety,188, 251–262.

Nieto, P. G., García-Gonzalo, E., Lasheras, F. S., & de Cos Juez, F. J. (2015). Hybrid PSO–SVM-based method for forecasting of the remaining useful life for aircraft engines and evaluation of its reliability. Reliability Engineering & System Safety, 138, 219–231.

Park, J., Ha, J. M., Oh, H., Youn, B. D., Choi, J.-H., & Kim, N. H. (2016). Model-based fault diagnosis of a planetary gear: A novel approach using transmission error. IEEE Transactions on Reliability,65(4), 1830–1841.

Pecht, M., & Gu, J. (2009). Physics-of-failure-based prognostics for electronic products. Transactions of the Institute of Measurement and Control,31(3–4), 309–322.

Qian, Y., Yan, R., & Gao, R. X. (2017). A multi-time scale approach to remaining useful life prediction in rolling bearing. Mechanical Systems and Signal Processing,83, 549–567.

Qin, Y., Chen, D., Xiang, S., & Zhu, C. (2020). Gated dual attention unit neural networks for remaining useful life prediction of rolling bearings. IEEE Transactions on Industrial Informatics,17(9), 6438–6447.

Sateesh Babu, G., Zhao, P., & Li, X.-L. (2016). Deep convolutional neural network based regression approach for estimation of remaining useful life. In International conference on database systems for advanced applications, 2016 (pp. 214–228). Springer.

Saxena, A., Goebel, K., Simon, D., & Eklund, N. (2008). Damage propagation modeling for aircraft engine run-to-failure simulation. In International conference on prognostics and health management, 2008 (pp. 1–9). IEEE.

Sha, Y., Zhang, Y., Ji, X., & Hu, L. (n.d.). Transformer-Unet: Raw image processing with Unet. arXiv preprint. arXiv:2109.08417

Si, X.-S., Wang, W., Hu, C.-H., & Zhou, D.-H. (2011). Remaining useful life estimation—A review on the statistical data driven approaches. European Journal of Operational Research, 213(1), 1–14.

Vaswani, A., Shazeer, N., Parmar, N., Uszkoreit, J., Jones, L., Gomez, A. N., Kaiser, Ł., & Polosukhin, I. (2017). Attention is all you need. In Advances in neural information processing systems 30, 2017.

Voulodimos, A., Doulamis, N., Doulamis, A., & Protopapadakis, E. (2018). Deep learning for computer vision: A brief review. Computational Intelligence and Neuroscience. https://doi.org/10.1155/2018/7068349

Wang, H.-K., Cheng, Y., & Song, K. (2021). Remaining useful life estimation of aircraft engines using a joint deep learning model based on tcnn and transformer. Computational Intelligence and Neuroscience, 2021, 3:1.

Wang, J., Wen, G., Yang, S., & Liu, Y. (2018). Remaining useful life estimation in prognostics using deep bidirectional LSTM neural network. In Prognostics and system health management conference (PHM-Chongqing), 2018 (pp. 1037–1042). IEEE.

Wu, Y., Yuan, M., Dong, S., Lin, L., & Liu, Y. (2018). Remaining useful life estimation of engineered systems using vanilla lstm neural networks. Neurocomputing,275, 167–179.

Xia, J., Feng, Y., Lu, C., Fei, C., & Xue, X. (2021). Lstm-based multi-layer self-attention method for remaining useful life estimation of mechanical systems. Engineering Failure Analysis,125, 105385.

Xia, T., Song, Y., Zheng, Y., Pan, E., & Xi, L. (2020). An ensemble framework based on convolutional bi-directional lstm with multiple time windows for remaining useful life estimation. Computers in Industry,115, 103182.

Xiang, S., Qin, Y., Zhu, C., Wang, Y., & Chen, H. (2020). Lstm networks based on attention ordered neurons for gear remaining life prediction. ISA transactions,106, 343–354.

Yan, J., He, Z., & He, S. (2022). A deep learning framework for sensor-equipped machine health indicator construction and remaining useful life prediction. Computers & Industrial Engineering,172, 108559.

Yu, W., Kim, I. Y., & Mechefske, C. (2019). Remaining useful life estimation using a bidirectional recurrent neural network based autoencoder scheme. Mechanical Systems and Signal Processing,129, 764–780.

Zhang, Z., Song, W., & Li, Q. (2022). Dual-aspect self-attention based on transformer for remaining useful life prediction. IEEE Transactions on Instrumentation and Measurement,71, 1–11.

Zhao, C., Huang, X., Li, Y., & Yousaf Iqbal, M. (2020). A double-channel hybrid deep neural network based on cnn and bilstm for remaining useful life prediction. Sensors, 20(24), 7109.

Zheng, S., Ristovski, K., Farahat, A., & Gupta, C. (2017). Long short-term memory network for remaining useful life estimation. In IEEE international conference on prognostics and health management (ICPHM), 2017 (pp. 88–95). IEEE.

Ethics declarations

Conflict of interest

The authors have no relevant financial or non-financial interests to disclose. The authors have no competing interests to declare that are relevant to the content of this article. All authors certify that they have no affiliations with or involvement in any organization or entity with any financial interest or non-financial interest in the subject matter or materials discussed in this manuscript. The authors have no financial or proprietary interests in any material discussed in this article. We don’t have any conflicts of interest.

Ethical approval

This study did not involve human participants or animals.

Informed consent

Informed consent is not applicable to this study.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendices

Appendix A: Ablation study

To substantiate our contribution, we conducted experiments on an alternative structure, referred to as ALSTMP(1), in which the attention mechanism is applied to the forget gate \({{f}_{t}}\) of the traditional LSTM. We did not investigate applying attention to the output gate \({{o}_{t}}\) because it does not contribute to the cell memory \({{C}_{t}}\). The RUL prediction is made on the two most difficult sub-datasets of the C-MAPSS dataset, FD002 and FD004. We investigated the two structures with different time window lengths. The results are presented in Tables 6 and 7.

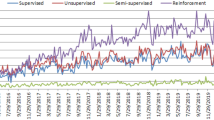

The results of ALSTMP are generally better than those of ALSTMP(1), as described in the two figures. Furthermore, the RMSE and Score values of ALSTMP(1) tend to increase with a larger time window length (the smaller the RMSE and Score, the better the model). In conclusion, the results indicate that our proposed ALSTMP is the better of the two designs and is well suited to the time window length method. The results on RMSE and Score values are depicted in Fig. 10.
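For reference, the two metrics compared above can be sketched as follows. This is a minimal sketch that assumes the Score is the asymmetric scoring function commonly used with C-MAPSS, in which late predictions are penalized more heavily than early ones. The appendix does not restate the definition, so the constants 13 and 10 and the function names `rmse` and `score` are assumptions.

```python
import math


def rmse(y_true, y_pred):
    """Root-mean-square error between true and predicted RUL values."""
    n = len(y_true)
    return math.sqrt(sum((p - t) ** 2 for t, p in zip(y_true, y_pred)) / n)


def score(y_true, y_pred):
    """Asymmetric score (assumed C-MAPSS form): late predictions cost more."""
    total = 0.0
    for t, p in zip(y_true, y_pred):
        d = p - t  # d > 0 means the predicted RUL is too optimistic (late)
        if d < 0:
            total += math.exp(-d / 13.0) - 1.0
        else:
            total += math.exp(d / 10.0) - 1.0
    return total


# Illustrative values only, not taken from the paper's tables.
y_true = [100.0, 50.0, 20.0]
early = [90.0, 40.0, 10.0]   # under-estimates RUL by 10 cycles
late = [110.0, 60.0, 30.0]   # over-estimates RUL by 10 cycles

print("RMSE  early/late:", rmse(y_true, early), rmse(y_true, late))
print("Score early/late:", score(y_true, early), score(y_true, late))
```

Both toy predictions have the same RMSE, but the late one receives the larger Score, which is why the two metrics are reported together.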

Appendix B: N-CMAPSS dataset description

Arias Chao et al. (2021) further improved the original CMAPSS dataset to generate a new dataset, referred to as N-CMAPSS. In this dataset, realistic flight conditions, such as those recorded on board a commercial jet, were used as inputs to the original CMAPSS model (Saxena et al., 2008). The major difference from the original CMAPSS dataset is that the data in N-CMAPSS were significantly enlarged, to millions (M) of samples. The N-CMAPSS dataset covers eight aircraft fleets and seven different failure modes, and it contains run-to-failure data that include operating conditions, monitoring sensors, degradation information, and auxiliary information. The operating conditions comprise four variables: altitude, flight Mach number, throttle-resolver angle, and total temperature at the fan inlet. The monitoring data consist of 14 sensor signals and 14 virtual sensor signals, and other variables, such as flight class, RUL label, and binary health state, are also provided. Finally, the degradation information describes multiple latent fault factors in the dynamic model.

We validated the performance of our proposed model on the N-CMAPSS dataset. In particular, we used the DS02 sub-dataset of N-CMAPSS, which was developed for data-driven problems (Saxena et al., 2008). The DS02 sub-dataset includes run-to-failure degradation trajectories from nine different turbofan engines with unknown initial healthy conditions. Following Chao et al. (2022), we used six engines (\({{u}_{2}}\), \({{u}_{5}}\), \({{u}_{10}}\), \({{u}_{16}}\), \({{u}_{18}}\), and \({{u}_{20}}\)) for the training set (\({{D}_{train}}\)) and three engines (\({{u}_{11}}\), \({{u}_{14}}\), and \({{u}_{15}}\)) for the test set (\({{D}_{test}}\)). The evaluation results on the test set (\({{D}_{test}}\)) can implicitly reflect the overall effectiveness of the RUL models because two of its units (\({{u}_{11}}\) and \({{u}_{14}}\)) contain shorter and lower-altitude flights than the units in the training set (\({{D}_{train}}\)).

The details of the DS02 sub-dataset are described in Table 8. The training and test sets contain 5.26M and 1.25M samples in total, respectively. The end-of-life time (\({{t}_{EOL}}\)) marks the final cycle of an engine’s lifespan and is also used as the initial labeled RUL for that engine. The table also lists the two different failure modes, the abnormal high-pressure turbine (HPT) and low-pressure turbine (LPT). Note that a unit with combined failure modes is subject to more complicated degradation than a unit with a single failure mode.

Appendix C: Experimental results on the DS02 sub-dataset of N-CMAPSS

We used the same setup as Chao et al. (2022). In particular, the data are sampled at 0.1 Hz, which gives 0.526M and 0.125M samples for the training and test sets, respectively. Moreover, we chose the same 20 signals from the multivariate time series as our selected features \({{N}_{f}}\). We also applied the time window length method to the DS02 sub-dataset to investigate the effectiveness of our proposed model. No data were deleted from the test set because all the recorded data cycles are long enough for the time window length method. We then trained our proposed model with a set of six different window lengths to identify the most effective window for the test set of DS02. Thereafter, we made the RUL prediction on each unit of the test set and on the whole test set (Average) to examine how the predictions are affected by the time window length. The evaluation results are described in Table 9.
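The pre-processing described above can be sketched as follows. This is a minimal illustration rather than the authors' pipeline: the downsampling factor of 10 is inferred from the sample counts dropping from 5.26M to 0.526M, and the stride of one time step, the choice to label each window with the RUL at its last time step, and the helper names `downsample` and `make_windows` are assumptions.

```python
def downsample(series, factor):
    """Keep every `factor`-th time step (factor=10 is assumed for 0.1 Hz)."""
    return series[::factor]


def make_windows(features, rul, window_len):
    """Slice one run into overlapping windows of `window_len` time steps.

    features: list of per-time-step feature vectors (each of length N_f)
    rul:      list of RUL labels, one per time step
    Each window is labelled with the RUL at its last time step, which is an
    assumption of this sketch.
    """
    windows, labels = [], []
    for end in range(window_len, len(features) + 1):
        windows.append(features[end - window_len:end])
        labels.append(rul[end - 1])
    return windows, labels


# Toy run: 1000 raw time steps with N_f = 20 features per step.
n_raw, n_features = 1000, 20
raw_features = [[float(t)] * n_features for t in range(n_raw)]
raw_rul = [n_raw - 1 - t for t in range(n_raw)]

features = downsample(raw_features, 10)   # 100 time steps remain
rul = downsample(raw_rul, 10)

windows, labels = make_windows(features, rul, window_len=40)
print(len(windows), len(windows[0]), len(windows[0][0]))
```

A run shorter than the window length produces no windows, which is why the text checks that every test-set run is long enough before applying the method.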

The table shows that the RMSE and Score values of unit \({{u}_{11}}\) decrease as the time window length increases, indicating that a larger time window yields better RUL prediction for this unit. Meanwhile, the RUL estimation for the other units (\({{u}_{14}}\) and \({{u}_{15}}\)) is better with a smaller time window length, with the best results obtained at a length of 30. Unit \({{u}_{11}}\) has the longest life cycle of the three units, which suggests that a larger time window length is more suitable for units with longer life cycles. For the RUL prediction on the whole test set (Average), the best results cluster at time window lengths of 40, 50, 60, and 70, with the best result achieved at a length of 40. Finally, we used the best result in Table 9 for comparison with the benchmarks.

For the comparison, we set the time window length \({{L}_{tw}}=40\) for all the compared models at the pre-processing stage. The compared architectures include a simple multilayer perceptron (MLP) structure from Mo and Iacca (2022) and the CNN structure proposed in Chao et al. (2022). The MLP network consists of four hidden layers: the first three have 200 neurons each, and the fourth has 50 neurons. The ReLU activation function is applied to all hidden nodes. The CNN structure consists of three convolution layers followed by a fully connected (FC) layer. The kernel size is 10 for all CNN layers. The output size of the first two layers is 10, and it is reduced to one for the last CNN layer. The output size of the FC layer is 50, and the ReLU activation function is applied to all layers. The results are presented in Table 10.
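To give a sense of the size of the MLP benchmark described above, the following sketch counts its trainable parameters. The hidden-layer widths come from the text; the flattened input of \({{L}_{tw}}\times {{N}_{f}}=40\times 20\) values and the single output neuron are assumptions of this sketch, since the appendix does not state them, and `count_dense_params` is a hypothetical helper.

```python
def count_dense_params(layer_sizes):
    """Weights plus biases for a stack of fully connected layers."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:]))


window_len, n_features = 40, 20        # L_tw and N_f from the text
input_dim = window_len * n_features    # assumption: the window is flattened
hidden = [200, 200, 200, 50]           # hidden layers described in the text
output_dim = 1                         # assumption: one RUL output neuron

mlp_sizes = [input_dim] + hidden + [output_dim]
print("MLP parameters under these assumptions:", count_dense_params(mlp_sizes))
```

Under these assumptions the first layer dominates the count, so the figure changes substantially if the input is not a flattened window.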

Our proposed ALSTMP model outperformed the best-performing benchmarks on the RUL prediction of units \({{u}_{11}}\) and \({{u}_{15}}\) and of the whole test set \({{D}_{test}}\) (Average). Compared with the MLP method, our proposed model reduces the RMSE values by 20.55%, 10.51%, 22.06%, and 17.07% and the Score values by 26.43%, 18.24%, 26.59%, and 22.15% for units \({{u}_{11}}\), \({{u}_{14}}\), \({{u}_{15}}\), and the whole test set \({{D}_{test}}\), respectively. These results show the benefit of incorporating the attention mechanism inside the LSTM and the effectiveness of the time window length method. Although the results of our proposed model on unit \({{u}_{14}}\) are inferior to those of the best approach (CNN), its overall performance on the other units and on the whole test set remains strong. Lastly, we evaluated each specific unit for all models in Figs. 10, 11, and 12.
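The percentage reductions quoted above are presumably relative improvements over the baseline, that is, (baseline − proposed) / baseline × 100. The sketch below shows that calculation; the numbers in it are illustrative and are not taken from Table 10, and `percent_reduction` is a hypothetical helper name.

```python
def percent_reduction(baseline, proposed):
    """Relative reduction of a metric with respect to a baseline, in percent."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return 100.0 * (baseline - proposed) / baseline


# Illustrative values only: a baseline RMSE of 8.0 reduced to 6.0.
print(percent_reduction(8.0, 6.0))
```

A negative result would mean the proposed model is worse than the baseline on that metric.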

Appendix D: Statistical discussion

In addition to using the RMSE and Score values to validate the performance of our proposed model, we investigated whether the obtained results are statistically significant. We used the Wilcoxon signed-rank test to compare the errors of the RUL predictive models in pairs. Following the setup in Nguyen et al. (2020), the absolute errors of the two RUL estimates being compared are defined as follows:

\[{{A}_{1}}=\left| {{Y}_{t}}-{{Y}_{p1}} \right| ,\qquad {{A}_{2}}=\left| {{Y}_{t}}-{{Y}_{p2}} \right| \]

where \({{Y}_{t}}\) is the true labeled RUL, \({{Y}_{p1}}\) is the predicted RUL of model 1, and \({{Y}_{p2}}\) is the predicted RUL of model 2. We applied a one-tailed hypothesis test to determine whether the prediction of model 1 is better than that of model 2. The null hypothesis (\({{H}_{o}}\)) is that “the two models have the same predictive error” (\({{A}_{1}}={{A}_{2}}\)). The alternative hypothesis (\({{H}_{a}}\)) is that “the first model has a smaller error than the second model” (\({{A}_{1}}<{{A}_{2}}\)). The Wilcoxon signed-rank test is run using the SciPy library in Python with a significance level of 0.05. The test outputs a \(p\)-value, which determines whether we fail to reject the null hypothesis (\({{H}_{o}}\)) \((p{\text {-}}value>0.05)\) or reject it in favor of the alternative hypothesis (\({{H}_{a}}\)) \((p{\text {-}}value<0.05)\). The results of the Wilcoxon signed-rank test on the test set (\({{D}_{test}}\)) for each combination of the three models are summarized in Table 11.

The table reports the results of the Wilcoxon signed-rank test, in which the errors of the different models are compared. In particular, the absolute error of each model is calculated and denoted as \({{A}_{MLP}}\), \({{A}_{CNN}}\), and \({{A}_{ALSTMP}}\), corresponding to the compared models in Table 10. The \(p\)-values between MLP and CNN on units \({{u}_{14}}\) and \({{u}_{15}}\) are smaller than the threshold value (0.05), while those for the other two sets (unit \({{u}_{11}}\) and Average) are not small enough to reject the null hypothesis. Meanwhile, the \(p\)-values between our proposed model and each of the compared models (ALSTMP–MLP and ALSTMP–CNN) are small enough to reject the null hypothesis, except for the \(p\)-value of ALSTMP–MLP on unit \({{u}_{11}}\). Based on the Wilcoxon signed-rank test on the full test set (Average), we conclude that the errors of our proposed model are statistically significantly smaller than those of the MLP and CNN.
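The one-tailed test described in this appendix can be sketched with SciPy as follows. This is a minimal sketch on synthetic data, not the authors' script: the RUL values and the noise levels of the two hypothetical models are invented for illustration, and only `scipy.stats.wilcoxon` with `alternative="less"` reflects the procedure named in the text.

```python
import numpy as np
from scipy.stats import wilcoxon

# Synthetic example: model 1 is constructed to be more accurate than model 2.
rng = np.random.default_rng(0)
y_true = rng.uniform(0, 100, size=200)          # true labeled RUL (synthetic)
y_p1 = y_true + rng.normal(0, 3, size=200)      # predictions of model 1
y_p2 = y_true + rng.normal(0, 6, size=200)      # predictions of model 2

# Absolute errors A_1 and A_2 of the paired predictions.
a1 = np.abs(y_true - y_p1)
a2 = np.abs(y_true - y_p2)

# H_o: the two models have the same error; H_a: model 1 has the smaller error.
# alternative="less" tests whether the paired differences a1 - a2 tend to be
# negative.
statistic, p_value = wilcoxon(a1, a2, alternative="less")

alpha = 0.05
reject_h0 = p_value < alpha
print(f"statistic={statistic:.1f}, p-value={p_value:.3g}, reject H_o: {reject_h0}")
```

Swapping the argument order, or using `alternative="greater"`, tests the opposite direction, so the order of `a1` and `a2` must match the stated alternative hypothesis.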

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Tseng, SH., Tran, KD. Predicting maintenance through an attention long short-term memory projected model. J Intell Manuf 35, 807–824 (2024). https://doi.org/10.1007/s10845-023-02077-5

Received:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s10845-023-02077-5