Abstract

Understanding neural computation on the mechanistic level requires models of neurons and neuronal networks. To analyze such models one typically has to solve coupled ordinary differential equations (ODEs), which describe the dynamics of the underlying neural system. These ODEs are solved numerically with deterministic ODE solvers that yield single solutions with either no, or only a global scalar error indicator on precision. It can therefore be challenging to estimate the effect of numerical uncertainty on quantities of interest, such as spike-times and the number of spikes. To overcome this problem, we propose to use recently developed sampling-based probabilistic solvers, which are able to quantify such numerical uncertainties. They neither require detailed insights into the kinetics of the models, nor are they difficult to implement. We show that numerical uncertainty can affect the outcome of typical neuroscience simulations, e.g. jittering spikes by milliseconds or even adding or removing individual spikes from simulations altogether, and demonstrate that probabilistic solvers reveal these numerical uncertainties with only moderate computational overhead.

Similar content being viewed by others

Avoid common mistakes on your manuscript.

1 Introduction

Computational neuroscience is built around computational models of neurons that allow the simulation and analysis of signal processing in the central nervous system. These models can describe neural computations on different levels of abstraction. On the statistical level, e.g. generalized linear models have been used to provide a probabilistic model mapping environmental variables to neural activity (Pillow et al., 2008). For such statistical models, quantifying the uncertainty of the parameters can be achieved using Bayesian approaches (Gerwinn et al., 2008). On the mechanistic level, the models typically take the form of systems of coupled ordinary differential equations (ODEs), which describe the dynamics of the membrane potential and give rise to the spike-times (Gerstner & Kistler, 2002; Izhikevich, 2007). Recently, likelihood-free inference approaches have made it possible to perform uncertainty-aware inference even for such complicated mechanistic models (Gonçalves et al., 2020; Oesterle et al., 2020; Papamakarios et al., 2018).

However, mechanistic models of neurons are subject to an additional source of uncertainty: the numerical error caused by the solution of the model’s ODEs with a concrete algorithm (Hennig et al., 2015). This arises because all numerical solvers are necessarily run with finite time and limited resources, so their estimate diverges from the true solution of the ODE, even if the problem is well-posed. When simulating neurons, one would like to compute a numerical solution close to the true solution of the ODE, to ensure that conclusions drawn from the simulations are based on the mechanisms described by the model rather than the specific choice, setting and implementation of the ODE solver.

Many of the well-established numerical solvers do report a global error estimate and a corresponding tolerance that can be set by the user (Hairer et al., 1993, Chapter II.4). This global scalar error, though, does not capture how the numerical error arising from finite step-sizes used in practice affects crucial quantities of interest in the simulation, such as spike-times or the number of spikes. In practice, it can therefore be challenging to select a tolerance that strikes a good balance between run time and accuracy.

For some of the most common mechanistic models in neuroscience like the Hodgkin–Huxley or Izhikevich neuron model, errors in numerical integration have been studied in detail for a range of solvers and different integration step-sizes (Stewart & Bair, 2009; Borgers & Nectow, 2013; Chen et al., 2020). These studies have shown that standard solvers are often not the best choice in terms of accuracy or the accuracy vs. run time tradeoff. Therefore, the authors of these studies proposed to use specific solvers for the analyzed models, e.g. the Parker–Sochacki method for the Hodgkin–Huxley and Izhikevich neuron (Stewart & Bair, 2009), an exponential midpoint method (Borgers & Nectow, 2013) or second-order Strang splitting (Chen et al., 2020) for Hodgkin–Huxley-like models. While improving computations for the specific problems, applying these to other scenarios requires a detailed understanding of the kinetics of the neuron model of interest; and while choosing a “good” solver for a given model is important, it is typically not necessary to choose the “best” ODE solver. In many cases, it can be sufficient to ensure that the computed solution is within a certain accuracy.

As a more general approach to quantify the numerical uncertainty in mechanistic models in neuroscience, we therefore propose to use probabilistic ODE solvers (Hennig et al., 2015; Oates & Sullivan, 2019; Cockayne et al., 2019). In contrast to classical ODE solvers, this class of solvers does not only yield a single solution, but instead a distribution over solutions that quantifies the numerical uncertainty.

Several frameworks for probabilistic ODE solvers have been proposed, which differ mostly in the tradeoff between computational cost and flexibility of the posterior, from fast Gaussian filters (Schober et al., 2019; Tronarp et al., 2019; Krämer et al., 2021) to sampling-based approaches (Conrad et al., 2017; Chkrebtii et al., 2016; Teymur et al., 2016, 2018; Abdulle & Garegnani, 2020). These solvers have been mostly tested for well-behaved systems with well-behaved solutions, but the ODEs used to simulate neural activity model the non-linear membrane dynamics that underlie the all-or-none nature of an action potential. Here, we use two related approaches of probabilistic ODE integration, the state perturbation proposed by Conrad et al. (2017) and the step-size perturbation of Abdulle and Garegnani (2020). Both build on existing explicit, iterative ODE solvers and stochastically perturb the numerical integration of individual steps taken by the underlying solvers. These perturbations make the solution of every step probabilistic and therefore also the solution as a whole. The magnitude of the perturbation has to be calibrated, such that the solver’s output distribution reflects the numerical uncertainty in the solution.

Here, we explore the potential of probabilistic ODE solvers for neuron models. We show how the state and step-size perturbation methods can be used to quantify and reveal numerical uncertainty caused by the numerical ODE integration and demonstrate that the solver outputs are easy to interpret. For this, we simulate typical neuron models, namely the Izhikevich neuron model (Izhikevich, 2004), as a representative of leaky-integrate-and-fire neuron models, single-compartment Hodgkin–Huxley models (Hodgkin & Huxley, 1952) and a model with three synaptically coupled Hodgkin–Huxley-like neurons (Prinz et al., 2004) as an example of a neuronal network model. Lastly, we discuss practical considerations and limitations of these probabilistic solvers such as the calibration of the perturbation and the computational overhead.

Taken together, our results suggest that probabilistic ODE solvers should be considered as a useful tool for the simulation of neuronal systems, to increase the quality and reliability of such simulations over those achieved with classic solvers.

2 Methods and models

2.1 Probabilistic solvers

Illustration of probabilistic ODE solvers. A Left: A single step with a state perturbed forward Euler (FE) method (Conrad et al., 2017) for the ODE \(f(t,x(t)) = 3 \cdot x(t) \cdot \sin (t+3)\) and the exact solution \(x(t) = \exp (-3 \cdot \cos (t+3))\) (black curve). We set \(t_0=0\) and the step-size to \(\Delta t=0.1\). The exact solution at \(t=t_0 + \Delta t\) is highlighted (black dot). A first order solution is computed using FE: \(x_\text {det.}(t +\Delta t)=x_0 + \Delta t \cdot f(t_0, x_0)\) (\(x_\text {det.}\): green dot, f: blue arrow). Right: The probability density function \(\rho\) of \(x_\text {prb}(t +\Delta t)\), where \(x_\text {prb}(t +\Delta t)\) is the output of the probabilistic step. In the state perturbation, \(\rho\) is a normal distribution with mean \(x_\text {det.}(t +\Delta t)\) and a standard deviation based on a local error estimator (see Sect. 2.1). A sample is shown for illustration (red dot). B As in A, but for the step-size perturbation (Abdulle & Garegnani, 2020). Instead of integrating from \(t_0\) to \(t_0 + \Delta t\), the ODE is integrated from \(t_0\) to \(t_0 + \zeta _t\), where \(\zeta _t\) is randomly drawn from a log-normal distribution (top panel). The solution of this perturbed integration \(x_\text {det.}(t +\zeta _t)\) (green circle) is then used as the solution \(x_\text {prb}(t +\Delta t)\) of the probabilistic step (red dot), making \(x_\text {prb}(t +\Delta t)\) a random variable with a distribution \(\rho (x_\text {prb}(t +\Delta t))\) (right panel), that has no general analytical form and is therefore shown as a histogram and a kernel density estimate. C Simulations of an Izhikevich neuron with a deterministic (green) and a state perturbed FE method (two samples: red and orange)

Simulating neuron models typically amounts to solving an initial value problem (IVP) based on a set of coupled ODEs. In abstract form, an initial value problem is given by

where f, \(\mathbf {x}_0\) and \(t_0\) are known and \(\mathbf {x}(t)\) for \(t>t_0\) is the quantity of interest. The solution to the initial value problem at time \(t + \Delta t\) provided the solution at time t, is given by integrating Eq. (1) from t to \(t + \Delta t\):

Except for special cases, this integral has no analytic form and must be solved numerically. For example, the forward Euler method approximates the integral as \(\int _{t}^{t + \Delta t} f(s, \mathbf {x}(s)) \,\text {d} s \approx \Delta t \cdot f(t, \mathbf {x}(t))\). To simulate a neuron, Eq. (2) is solved iteratively, which results in a sequence of solutions \(X = [\mathbf {x}(t_0), \mathbf {x}(t_1), \mathbf {x}(t_2), ..., \mathbf {x}(t_M)]\) for a set of time points with \(t_{i+1} > t_i\) and a maximum time point \(t_M\). Standard solvers yield a deterministic solution in every step, and therefore for the solution X as a whole. In contrast, the probabilistic solvers used in this study stochastically perturb the numerical integration used to approximate Eq. (2), which makes the solution of every step—and therefore of the whole solution—probabilistic. For a given IVP and solver, one can therefore generate a sample distribution of solutions X by repeating the iterative numerical integration from \(t_0\) to \(t_M\) multiple times. To create these probabilistic solvers, we implemented the state perturbation algorithm of Conrad et al. (2017) and the step-size perturbation algorithm of Abdulle and Garegnani (2020).

In the state perturbation algorithm (Conrad et al., 2017), in each step of the numerical integration, a small independently drawn noise term \(\varvec{\xi }_t\) is added to the solution \(\mathbf {x}_\text {det.}(t+\Delta t)\) of a corresponding deterministic integration scheme:

where \(\varvec{\nu }_t\) controls the magnitude of the perturbation. The perturbation is only efficient when \(\varvec{\nu }_t\) is of the right order: if chosen too small, the uncertainty will be underestimated; if chosen too large, it will render the solver output useless. Conrad et al. (2017) suggested calibrating \(\varvec{\nu }_t\) to replicate the amount of error introduced by the numerical scheme. We chose \(\varvec{\nu }_t = \sigma \varvec{\varepsilon }_t\) using the error estimator \(\varvec{\varepsilon }_t\) readily available in methods that were developed for step-size adaptation (see Sect. 5.1), and a scalar perturbation parameter \(\sigma\) that can be adjusted to calibrate the perturbation. If not stated otherwise, we used \(\sigma =1\). An example of this perturbation method is shown in Fig. 1A for a single integration step and in Fig. 1C for an Izhikevich neuron model.

A related approach to stochastically perturbing the numerical integration was proposed by Abdulle and Garegnani (2020), where noise is added to the integration step-size (i.e. to the “input” of the solver, rather than the “output”, cf. Fig. 1B). The numerical integration is performed using the perturbed step-size \(\zeta _t\), but the computed solution is treated as the solution for the original step-size \(\Delta t\):

where \(\zeta _t\) is the perturbed step-size drawn from a distribution \(\mathcal {P}\) and \(\mathbf {x}_\text {det.}(\bullet )\) is a deterministic integration scheme that approximates Eq. (2). For example, for the forward Euler method Eq. (4) would be computed as \(\mathbf {x}_\text {prb}(t+\Delta t) = \mathbf {x}_\text {det.}(t) + \zeta _t \cdot f(t, \mathbf {x}_\text {det.}(t))\). Abdulle and Garegnani (2020) defined three properties the i.i.d. random variables \(\zeta _t\) should fulfill:

-

\(\mathcal {P}(\zeta _t > 0) = 1\),

-

there exists \(\Delta t\) such that \(\mathbb {E}[\zeta _t] = \Delta t\), and

-

there exist \(p \ge 0.5\) and \(C > 0\) independent of t such that \(\mathbb {E}[(\zeta _t - \Delta t)^2] = C \cdot {\Delta t}^{2p+1}\).

Based on these restrictions, they proposed, as an example, to use a log-normal distribution:

where m and s are the mean and standard deviation of the underlying normal distribution, which are given as:

Using \(p \le O\), where O is the order of the method, ensures that the mean-squared convergence order of the method is not changed. We used \(p=O\) throughout to maximize the effect of the perturbation without changing the convergence order. We further generalized the example provided by Abdulle and Garegnani (2020) in which \(C=1\) to a parametrized distribution by setting \(C = \sigma ^2\), i.e. setting \(\phi = \sqrt{{\Delta t}^2 + \sigma ^2 \cdot {\Delta t}^{2 O+1}}\). The introduction of the perturbation parameter \(\sigma\) allows to—similarly to the perturbation parameter used in the state-perturbation—adjust and calibrate the magnitude of perturbation. If not stated otherwise, we used \(\sigma =1\). The perturbation of a single step is illustrated in Fig. 1B.

2.2 Choice of solvers

We used the perturbation methods described above to create probabilistic versions of the solvers listed in Table 1.

The usage of fixed (f) and adaptive (a) step-sizes is indicated with subscripts, and the perturbation method is indicated using the superscripts—x for the state perturbation (Conrad et al., 2017) and t for the step-size perturbation (Abdulle & Garegnani, 2020)—meaning that e.g. FEfx is referring to a forward Euler method using fixed step-sizes and the state perturbation. For the exponential integrators, we chose to only use the step-size perturbation because it preserves the important property of these solvers that the activation and inactivation variables cannot leave the interval [0, 1], and also because there are no established methods for local error estimation for these methods.

The second order exponential integrator EEMP was implemented based on the version by Börgers and Nectow (2013) (Sect. 5.2), which is a modification of the midpoint method by Oh and French (2006). Computation of Runge–Kutta steps and step-size adaptation were based on the respective scipy implementations (Virtanen et al., 2020). To avoid computational overhead, we only computed the local error estimates when necessary, i.e. for adaptive step-sizes or the state perturbation.

2.3 Interpolation

The iterative solvers used in this study yield solutions for \(\mathbf {x}(t)\) on either a fixed and equidistant grid of time points T or, in the case of adaptive step-size solvers, on a finite set of time points T automatically chosen by the solver. To interpolate these solutions for example for spike-time estimation (see Sect. 2.4), we used linear interpolation for FE, EE and EEMP between solutions of single steps. To interpolate the steps of the Runge–Kutta methods we utilized the “dense output” implemented in the respective scipy methods (Virtanen et al., 2020). These “dense outputs” allow evaluating the solution between two steps \(\mathbf {x}(t_i)\) and \(\mathbf {x}(t_{i+1})\) for any t with \(t_i \le t \le t_{i+1}\) without any additional ODE evaluation. To not discard the effect of the state perturbation during interpolation, we defined the dense output \(\hat{d}_\text {RK}(t, t_i, t_{i+1})\) for a state perturbed Runge–Kutta step from time \(t_i\) to \(t_{i+1}\) as:

where \(d_\text {RK}(t, t_i, t_{i+1})\) is the dense output of the respective deterministic Runge–Kutta step and \(\varvec{\xi }_{t_i}\) is the perturbation noise that was added to this step to compute \(\mathbf {x}(t_{i+1})\) (see Eq. (3)). This is a simplified version of the continuous-time output proposed by Conrad et al. (2017).

2.4 Spike-time estimation

To determine spike-times based on simulated voltage traces v(t), we interpolated the ODE solutions for all steps where v(t) started from below and ended above a certain threshold voltage \(v_\text {th}\). For lineally interpolated solutions (Sect. 2.3) we computed spike-times as follows. For every step from a time \(t_i\) to \(t_{i+1}\) with \(v(t_i) < v_\text {th} \le v(t_{i+1})\) we estimated the respective spike-time \(t_\text {spike}\) as:

To estimate spike-times for Runge–Kutta methods with “dense-outputs”, we utilized scipy’s “brentq” root finding algorithm to determine the time point \(t_\text {spike}\) when the threshold is reached, i.e. \(|v(t_\text {spike}) - v_\text {th}| < \epsilon\), with \(\epsilon =1e-12\).

2.5 Common ODE models in computational neuroscience

In this study, we use probabilistic ODE solvers to analyze the effect of numerical uncertainty in the following neuroscience models:

-

The Izhikevich neuron model with a range of dynamics,

-

the Hodgkin–Huxley neuron model,

-

and a small network of Hodgkin–Huxley neurons.

We picked these models to cover both single neuron models and models of neuronal networks.

2.5.1 Single Izhikevich neurons

The Izhikevich neuron (IN) model is a simplified non-linear single neuron model that has been used e.g. to build large-scale models of the brain (Izhikevich & Edelman, 2008) and to understand oscillatory phenomena in the cortex (Izhikevich, 2003; Domhof & Tiesinga, 2021) and the olfactory bulb (Galán et al., 2006). An attractive property of the IN is that a whole range of different response dynamics can be simulated (Fig. S1) depending on the setting of the parameters \(\varvec{\theta } = [a, b, c, d]\) (Izhikevich, 2004). The IN is described by the following pair of ODEs (Izhikevich, 2003):

where v is the membrane potential, u is a recovery variable and \(I_{\text {Stim}}\) is a given input current. Whenever the spike threshold, a spike is triggered and the neuron is reset in the next time step of the simulation:

where \(\Delta t_\text {Sp} \ge 0\). Following the original implementation, we set the threshold to 30. Typically, \(\Delta t_\text {Sp} = \Delta t\) is used, but to facilitate the comparison between different step-sizes we used \(\Delta t_\text {Sp} = 0\) instead. In the original implementation, the reset can only be triggered after a full integration step. So, whenever \(v(t_{i+1}) \ge 30\) after a step from \(t_{i}\) and \(t_{i+1}\), the neuron is reset as described above, i.e. \(v(t_{i+1}+\Delta t_\text {Sp}) = c\) and \(u(t_{i+1}+\Delta t_\text {Sp}) = u(t_{i+1})+d\). This is problematic, because it introduces an error of order \(O(\Delta t)\) (Stewart & Bair, 2009), independent of the solver scheme.

Therefore, in addition to this discrete version of resetting the neuron, we implemented a continuous version based on two complementary strategies. Fist, we adapted Eq. (9) such that whenever \(\dot{v}\) and \(\dot{u}\) would have been evaluated for \(v(t) \ge 30\)—which can only happen for multi-stage methods—the derivatives were evaluated for \(v(t)=30\) instead. Second, we implemented the strategy suggested by Stewart and Bair (2009): Every step resulting in a reset is split into two intermediate steps, a step until the threshold is reached, and a step after the reset. For this, the spike-time \(t_\text {spike}\) during such as step was estimated as described in Sect. 2.4 with a threshold of \(v_\text {th} = 30\). Then, the pre-reset step solution \(\mathbf {x}(t_\text {spike})\) was approximated based on the interpolation strategies described in Sect. 2.3. And finally, the post-reset step solution \(\mathbf {x}(t_{i+1})\) was computed by resetting (see Eq. (10)) and integrating \(\mathbf {x}\) from \(t_\text {spike}\) to \(t_{i+1}\).

2.5.2 Single Hodgkin–Huxley neurons

Hodgkin–Huxley (HH) models (Hodgkin & Huxley, 1952) are widely used to simulate single and multi-compartment neurons. We study both the classical HH neuron (Hodgkin & Huxley, 1952) and a single compartment HH-like neuron model (Prinz et al., 2003) prominently used to study the stomatogastric ganglion (STG) (Prinz et al., 2004). Both models are described by ODEs that include, among other state variables, the membrane potential v(t):

where C is the membrane capacitance, \(I_{\text {Stim}}\) is the stimulation current and \(I_i\) are membrane currents. These membrane currents are described by the following equation:

where \(E_i\) is the reversal potential of the current, \(\bar{g}_i\) is the maximum channel conductance, \(p_i\) are integer exponents, and \(m_i\) and \(h_i\) are activation and inactivation functions. \(m_i\) and \(h_i\) were modeled by the following differential equations:

where \(m_\infty\), \(\tau _m\), \(h_\infty\), and \(\tau _h\) are voltage dependent functions defining the channel’s kinetics. For non-inactivating channels, \(h_i\) is removed from Eq. (12). In the classical HH model, this amounts to a 4-dimensional ODE (Ermentrout & Terman, 2010). For the STG neuron, which has eight instead of two membrane currents and also implements a model for the intracellular calcium concentration, the ODE is 13-dimensional (Prinz et al., 2003). The respective parametrizations can be found in Sect. 5.3.

We simulated the HH neuron’s response to two different input currents \(I_{\text {Stim}}\), a step and a noisy step stimulus. Both stimuli were 200 ms long, with \(I_{\text {Stim}}(t) = 0\) for \(t < t_\text {onset}\) and \(t \ge t_\text {offset}\), where \(t_\text {onset} = 10 \text { ms}\) and \(t_\text {offset} = 190 \text{ ms}\). The amplitude of the step stimulus for \(t_\text {onset} \ge t < t_\text {offset}\) was \(I_{\text {Stim}}(t) = 0.2 \text { mA}\). The amplitude of the noisy step stimulus were created by drawing 100 values from a uniform distribution between 0.0 mA and 0.4 mA that were spaced equidistantly between \(t_\text {onset}\) and \(t_\text {offset}\). These points were interpolated using a cubic spline with endpoints at \(t_\text {onset}\) and \(t_\text {offset}\). At the endpoints both \(I_{\text {Stim}}\) and its derivative were set to zero. The single STG neuron was simulated for 3 s using a step stimulus starting at \(t_\text {onset} = 0.9\text { s}\) with an amplitude of \(I_{\text {Stim}}(t) = 3 \text { nA}\).

2.5.3 STG model

The STG neuron model described above was used by Prinz et al. (2004) in a network of three synaptically coupled neurons, ABPD, LP and PY, to study their firing patterns in dependence of the synaptic and neuronal parametrizations. In the model, there are seven synapses connecting the neurons, that are either modeled as slow or fast synapses. The postsynaptic input current \(I_i\) to a neuron is described by:

where, similarly to Eq. (12), \(E_i\) is the reversal potential of the current, \(\bar{g}_i\) is the synapse’s maximum conductance, v is the membrane potential of the postsynaptic neuron and s is the activation function of the synapse. s is described by the following differential equation:

where \(v_\text {pre}\) is the membrane potential of the presynaptic neuron and \(\tau _s\) and \(f_s\) are constants (see Sect. 5.3).

2.6 Quantifying numerical uncertainty

2.6.1 Reference solutions

None of the aforementioned neuron models has an analytical solution. It is therefore not possible to compare simulations to the true solutions of the respective IVPs. As a substitute, we computed reference solutions using a deterministic RKDPa solver with a tolerance of \(\kappa ={1}\mathrm {e}{-12}\) and a maximum step-size dependent on the model investigated (0.01 ms for IN and HH; 0.1 ms for the STG model). To obtain a reference solution at the same time points of a given fixed step-size solution \(X = [\mathbf {x}(t_0), ..., \mathbf {x}(t_M)]\), we forced the reference solver to evaluate \(\mathbf {x}(t)\) at least at all time points \(T = [t_0, ..., t_M]\) of the given solution. For this, in every step in which the adaptive reference solver automatically picked a step-size that would skip any \(t_i\) in T by taking a too large step-size \({\Delta t}_{i-1}\), the step-size \({\Delta t}_{i-1}\) was clipped such that the step was evaluated exactly at \(\mathbf {x}(t_i)\). All solutions \(\mathbf {x}(t)\) for t not in T were dropped before the comparison. To compare adaptive step-size solvers to reference solutions, we also forced these solvers to evaluate time points on a grid \(T = [t_0, ..., t_M]\) with time points space equidistantly using a distance of 1 ms.

2.6.2 Distance metrics

To estimate the uncertainty for a given neuron model and solver, we computed multiple solutions (samples) with the same probabilistic solver to obtain a distribution of solutions. Based on these sample distributions and the respective reference solutions, we evaluated the distributions of sample-sample distances and sample-reference distances using two different distance measures. As a general measure, we computed Mean Absolute Errors (MAEs) between single traces. If not stated otherwise, MAEs were computed on the simulated membrane potentials v(t), because this is typically the quantity of interest. For two traces of equal size \(\mathbf {a}=[a_0, ..., a_M]\) and \(\mathbf {b}=[b_0, ..., b_M]\) the MAE was defined as:

For n samples from a probabilistic solver, we computed the sample-sample distance distribution MAESM as the n MAEs between single samples and the mean trace of the other \(n-1\) samples. Sample-reference distance distributions MAESRwere computed as the n MAEs between single samples and the reference solution. In some cases, we also computed the distance between the solution of a corresponding deterministic solver to the reference solution, abbreviated as MAEDR.

As a second metric, we computed “SPIKE-distances” between the spike-times of different solutions (Kreuz et al., 2013). The SPIKE-distance is a bounded distance measure between zero and one that quantifies the dissimilarity between two (or more) spike-trains based on the distances between neighboring spikes. Here, we used an open-source python implementation (Mulansky & Kreuz, 2016).

For plotting, we also computed spike density functions (SDFs) of sample distributions as Gaussian kernel density estimates with a bandwidth optimized through grid-search and tenfold cross-validation using the Scikit-learn toolbox (Pedregosa et al., 2011).

3 Results

In this study, we explored the potential of probabilistic ODE solvers in computational neuroscience. First, we study the effect of numerical uncertainty on simulations of neuron models and qualitatively show that probabilistic solvers can reveal this uncertainty in a way that is easy to interpret. Second, we provide examples and guidelines where probabilistic solvers can be useful when conducting a new study. Third, we analyze potential drawbacks of probabilistic solvers, such as computational overhead.

3.1 Probabilistic solvers can reveal numerical uncertainty in neuron models

Neuron simulations can be subject to substantial numerical uncertainty. A Simulations of the classical HH model for the step stimulus \(I_\text {Stim}\) (normalized stimulus in gray). Solutions for v(t) are shown for a reference solver (black) and a deterministic EE solver with \(\Delta t = 0.25 \text { ms}\) (orange) together with a spike density function (SDF) estimated from \(100\) samples from a probabilistic EEft solver using the same step-size (blue). Bottom panel: Spike-times of the reference (black), the deterministic EE solution (orange) and for \(5\) randomly selected samples from the probabilistic solver (blue). B, C As in A, but for simulations of the IN model for two different parametrizations \(\theta _i\) (see Sect. 5.3) and stimuli \(I_\text {Stim}\) (normalized stimuli in gray). Here, a FEft solver and its deterministic version were used. Additionally, a solution was computed using the original solver (green). Based on the original publication, the step-size \(\Delta t\) was set to 0.5 ms for all but the reference solver. For plotting, v(t) were clipped at 30. In C, the number of spikes of the \(100\) samples are shown as a histogram. D, E As in A, but for simulations of the STG neuron network model for two different synaptic parametrizations \(\varvec{\theta }_\text {syn}^\text {b}\) and \(\varvec{\theta }_\text {syn}^\text {d}\) (see Sect. 5.3), respectively. The membrane potential v(t) and spike-times are shown for the LP neuron. solutions were computed using an EEft solver and its deterministic counterpart with a step-size of \(\Delta t=0.1 \text { ms}\)

To demonstrate the effect of numerical uncertainty on simulations of single neuron models, we first simulated the classical HH neuron with the step stimulus (Fig. 2A). We computed solutions with a deterministic and probabilistic EE solver for a step-size of \(\Delta t=0.25 \text { ms}\). Additionally, we computed a reference solution. We found that the exact spike-times of the deterministic EE solver differed substantially from the reference solution (spike-time difference \(t^\text {det.}_\text {spike} - t^\text {ref.}_\text {spike}\) of the first three spikes: 0.6 ms, 2.3 ms, 4.0 ms). The probabilistic solver revealed this numerical uncertainty with spike-times varying substantially between samples (standard deviation (SD) of the spike-time \(t_\text {spike}\) for the first three spikes over all \(100\) samples: 0.2 ms, 0.9 ms, 1.1 ms).

Next we simulated single INs with different parametrizations \(\theta _i\) and response dynamics (Izhikevich, 2004). Using the original step-sizes \(\Delta t\) and input currents \(I_i\), we compute solutions with the original solver scheme—which is related to a FEf solver (Sect. 5.2)—a deterministic FE scheme and a probabilistic FEft solver. We found, that for the “Inhibition-induced spiking” neuron all solvers produced similar spiking patterns in response to a negative current step (Fig. 2B). However, the original solver produced longer intervals between the spikes compared to the reference, resulting in only three instead of four spikes. The deterministic FE solution matched the reference better (e.g. both had four spikes), but the spike-times were still off by several milliseconds (spike-time difference \(t^\text {det.}_\text {spike} - t^\text {ref.}_\text {spike}\) of the last two spikes: 8.2 ms, 3.9 ms). The probabilistic solver revealed this numerical uncertainty (SD of the spike-time \(t_\text {spike}\) of the first three spikes: 3.0 ms, 5.6 ms, 7.1 ms).

Similarly, for the “Inhibition-induced bursting” neuron the solution from the original solver and the deterministic FE solver were qualitatively broadly consistent with the reference solution (Fig. 2C). In all simulations, the neuron responded with spike bursts to a negative stimulus current step. The spike-times and the number of spikes of the original solution (\(n_\text {spikes} = 11\)) and the deterministic FE solution (\(n_\text {spikes} = 14\)) differed substantially from the reference (\(n_\text {spikes} = 33\)) though, with the FE solution having only two bursts instead of three during the simulated period. Here, the probabilistic solver revealed the substantial uncertainty in the spike-times and number of spikes (\(\overline{n}_\text {spikes}=13.8\) (SD 2.5), where \(\overline{n}\) denotes the sample mean. See also histogram in Fig. 2C), with around \(33\%\) of the samples having a third burst (Fig. 2C, bottom). All 16 simulated parametrizations are shown in Fig. S1.

To provide an example of a neuronal network, we simulated the STG model for two parametrizations (Fig. 2D and E) that only differ in their synaptic conductances (see Sect. 2.5.3). We computed solutions with a reference solver, a deterministic and a probabilistic EE solver. We focused the analysis on the LP neuron for simplicity. For the first parametrization (Fig. 2D), the LP neuron showed continuous spiking in all simulations. Similar to the HH neuron, we found differences in the exact spike-times and number of spikes between the reference (\(n_\text {spikes}=17\)) and the deterministic EE solution (\(n_\text {spikes}=13\)). The uncertainty was again revealed by the probabilistic solver (\(\overline{n}_\text {spikes} = 14.6\) (SD 1.3), Fig. 2D). The second parametrization resulted in a different spiking behavior of the LP neuron (Fig. 2E). Here, the neuron started to fire at a high frequency for a prolonged time after approximately two seconds. In the reference solution, the neuron continued to fire. In contrast, in the deterministic solution, the neuron stopped after about another two seconds to then start another burst shortly later. While this also happened in all generated samples from the probabilistic solvers, the sample distribution still indicated a high uncertainty about the duration of the firing periods (Fig. 2E). Simulations of all five synaptic parametrizations from the original paper (Prinz et al., 2004) are shown in Fig. S2.

Numerical uncertainty can vary between state variables. A, B Simulations of a single STG neuron in response to a step-stimulus (gray, top row). Solutions were computed using a reference solver and by drawing \(100\) samples from an EEft solver with \(\Delta t=0.1 \text{ ms}\). The reference solution (black) and the mean over the samples (blue) is shown for two state variables: the membrane potential v(t) (A, second row) and the intracellular calcium \(\text {Ca}(t)\) (B, second row). For both state variables, the absolute error \(\text {AE}(t) = |x_\text {sample}(t) - x_\text {ref.}(t) |\) (third row) and the relative error \(\text {RE}(t) = |x_\text {sample}(t) - x_\text {ref.}(t) |/ \max (|x_\text {sample}(t) |, |x_\text {ref.}(t) |)\) (bottom row) between sample and reference traces are shown as means and the 10th and 90th percentiles over all samples, respectively

Finally, we turned to a single STG neuron and stimulated the response to a step stimulus (Fig. 3) based on the original publication (Prinz et al., 2003). Here, we compared the numerical uncertainty in two different state variables, namely the voltage v(t) (Fig. 3A) and the intracellular calcium \(\text {Ca}(t)\) (Fig. 3B). We found that the numerical uncertainty differed strongly between these state variables, and was much higher for v(t) (Fig. 3). While this is expected because of the transient and brief nature of spikes in contrast to the slower changing calcium, it highlights the power of probabilistic ODE solvers, as they can guide the choice of the solver and step-size parameter dependent on the quantity of interest and the desired accuracy without requiring detailed knowledge about the model and its kinetics.

Numerical uncertainty affects also higher order methods. A-C Simulations of the classical HH model for a step stimulus \(I_\text {Stim}\) with an amplitude of 0.022406 mA (normalized stimulus in gray). Solutions for v(t) are shown for a reference solver (black) and a deterministic RKDPa solver with \(\kappa ={1}\mathrm {e}{-3}\), \(\kappa ={1}\mathrm {e}{-5}\) and \(\kappa ={1}\mathrm {e}{-7}\), respectively (orange). Bottom panels: Spike-times of the reference (black), the deterministic solutions (orange) and for 10 samples from probabilistic RKDPax solvers with \(\kappa ={1}\mathrm {e}{-3}\), \(\kappa ={1}\mathrm {e}{-5}\) and \(\kappa ={1}\mathrm {e}{-7}\), respectively (blue). D Fraction of samples (\(n=100\)) from the probabilistic solvers in (A-C) that had no spike, shown as mean and standard error. All other samples had exactly one spike

All the examples in Fig. 2 used first order methods. To also provide an example where higher order solvers with low tolerances yield solutions qualitatively different from the reference solution, we simulated the classical HH neuron’s response for 50 ms to a step stimulus with an amplitude of \(0.022406\text { mA}\) and \(t_\text {onset}=10\text { ms}\) and \(t_\text {offset}=40\text { ms}\). This amplitude did not evoke a single spike in the reference solver (Fig. 4A), but was very close to the threshold, i.e. slightly larger amplitudes (e.g. \(0.022410\text { mA}\)) did produce a spike for the reference solver. When simulating this model with a RKDPa solver, we found that for tolerances of \(\kappa ={1}\mathrm {e}{-3}\) and \(\kappa ={1}\mathrm {e}{-5}\) the solutions did contain a spike (Fig. 4A and B). Only a tolerance as small as \(\kappa ={1}\mathrm {e}{-7}\) yielded a solution with no spike for this solver (Fig. 4C). Simulating the model with probabilistic solvers revealed this numerical uncertainty for both \(\kappa ={1}\mathrm {e}{-3}\) and \(\kappa ={1}\mathrm {e}{-5}\), with a fraction of samples containing one and a fraction containing zero spikes in both cases (Fig. 4D).

3.2 Probabilistic solvers can guide solver selection

Probabilistic solvers can be used to compare different solver schemes. A Reference solutions of v(t) (black) for the Hodgkin–Huxley model stimulated with the noisy step stimulus (normalized stimulus in gray). B Mean Absolute Errors \(\text {MAE}_\text {SR}\) between sample traces of v(t) and the respective reference solutions for different solver schemes (legend) and step-sizes / tolerances. Mean Absolute Errors from \(100\) samples are shown as medians (dots) and 10th to 90th percentiles (vertical lines) as a function of the number of ODE evaluations of a corresponding deterministic solver (x-axis). C As in B, but for sample-sample Mean Absolute Errors \(\text {MAE}_\text {SM}\). D As in B, but for sample-sample Mean Absolute Errors \(\text {MAE}_\text {SM}\) vs. sample-reference Mean Absolute Errors \(\text {MAE}_\text {SR}\). E-G As in B-D, but for using the distance measure SPIKE-distance (see Sect. 2.6.2) instead of Mean Absolute Errors

To demonstrate how probabilistic ODE solvers can be used to compare the accuracy vs. run time tradeoff between different solver schemes, we simulated the HH neuron’s response to the noisy step stimulus (Fig. 5A) using the following probabilistic solvers: EEft, EEMPft, FEft and RKBSax. To this end, we computed sample-reference Mean Absolute Errors (\(\text {MAE}_\text {SR}\)) and sample-reference SPIKE-distances (see Sect. 2.6.2) for each solver as an estimate of the numerical error induced. We compared these errors to the number of ODE evaluations a corresponding deterministic solver would need. We found that the exponential integrators EE and EEMP allowed computing solutions with the fewest ODE evaluations, as they terminated successfully even for the relatively large step-size \(\Delta t=0.5\text { ms}\) (Fig. 5B). In contrast, when using the FE solver, all step-sizes \(\Delta t \gg {0.05}\text { ms}\) resulted in floating-point overflow errors and therefore in both useless and incomplete solutions. However, when choosing a sufficiently small step-size of \(\Delta t \le {0.05}\text { ms}\) the samples obtained with the FE method had on average a smaller error compared to the EE method (Fig. 5B and E). From the methods tested, the adaptive RKBS method was the most efficient one, i.e. it produced the most accurate solutions for the fewest number of ODE solutions, but it also required a substantially higher number of minimum ODE evaluations to successfully terminate compared to the exponential integrators (Fig. 5B and E).

In principle, a very similar analysis could also have been done with deterministic solvers. However, probabilistic solvers have two advantages. First, they yield sample distributions instead of single solutions which make it possible to compute confidence intervals etc. when comparing different solver outputs. Second, and more crucially, probabilistic solvers do not require a reference solution to estimate how numerical errors in a solution affect quantities of interest such as spike-times. For a sufficiently calibrated probabilistic solver, the sample distribution, i.e. the solver’s output, can be used to estimate the numerical error of the solver. In Fig. 5C and F we computed the sample-sample distances which are independent of the reference, for the same samples used in Fig. 5B and E. We found that the mean sample-reference distances were highly similar to the respective mean sample-sample distances for all solvers for both the Mean Absolute Error (Fig. 5B-D) and the SPIKE-distance (Fig. 5E-G). Therefore, the solver comparison described above could have also been based on sample-sample distance instead of the sample-reference distances (e.g. \(\text {MAE}_\text {SM}\) instead of \(\text {MAE}_\text {SR}\)), and thus would not have required a reference solution.

3.3 Calibration of probabilistic solvers

The mean sample-sample distance (e.g. measured as \(\overline{\text {MAE}}_\text {SM}\)) is only then a good approximation to the mean sample-reference distance (e.g. measured as \(\overline{\text {MAE}}_\text {SR}\)), when the probabilistic solver is well calibrated. Ideally, the magnitude of the perturbation is large enough to capture the numerical uncertainty of the underlying numerical integration, but it is not too large to severely reduce the accuracy of the integration scheme. To quantify the calibration of different solvers, we therefore defined two metrics, the ratio \(R_S=\overline{\text {MAE}}_\text {SM}/\overline{\text {MAE}}_\text {SR}\) and the ratio \(R_D = \text {MAE}_\text {DR} / \overline{\text {MAE}}_\text {SR}\), where \(\text {MAE}_\text {DR}\) is the distance between a corresponding deterministic solution and the reference. \(R_S\) is close to zero if the perturbation is too small (i.e. the sample-sample distance is much smaller than the sample-reference distance) and close to one if the perturbation is sufficiently large to not underestimate the numerical uncertainty (i.e. the sample-sample distance can be used as an approximate measure of the sample-reference distance)Footnote 1. However, \(R_S\) is also close to one, when the perturbation is too large and the solver output is dominated by the perturbation rather than the underlying ODE. This is why we also consider \(R_D\) to evaluate the calibration. \(R_D\) is close to one if the perturbation is either too small to affect the model output (i.e. all samples are approximately equal to the deterministic solution) or if samples are on average approximately equally close to the reference than the deterministic solution. \(R_D\) is close to zero, if the perturbation is too large and the perturbation severely reduces the solver accuracyFootnote 2.

For a well calibrated solver, \(R_S\) is close to one, such that the sample-reference distance \(\overline{\text {MAE}}_\text {SR}\) can be estimated from sample-sample distance \(\overline{\text {MAE}}_\text {SM}\) while \(R_D\) is close to or larger than one, such that the perturbation does not decrease the solver accuracy.

Calibration of probabilistic solvers. Simulations of the HH model with different perturbation parameters \(\sigma\). A MAE distributions for \(100\) samples computed with an EEft solver (\(\Delta t={0.025}\text { ms}\)) as \(\text {MAE}_\text {SR}\) (blue), \(\text {MAE}_\text {SM}\) (red) and \(\text {MAE}_\text {DR}\) (grey line). Distribution means are highlighted (triangles). B \(R_S=\overline{\text {MAE}}_\text {SM}/\overline{\text {MAE}}_\text {SR}\) as a function of \(\sigma\). C As in B, but for \(R_D=\text {MAE}_\text {DR}/\overline{\text {MAE}}_\text {SR}\). D “Goodness of calibration” \(R^c_S R^c_D\) for different solvers (column titles) and step-sizes \(\Delta t\) / tolerances \(\kappa\) (panel titles) for two stimuli (legend) as a function of \(\sigma\). E, F Simulations using an EEft and an EEMPft solver, respectively. The model’s ODEs were simulated in microseconds (green), milliseconds (orange) and seconds (blue), with step-sizes \(\Delta t\) of \({25}\text{ }\mu\text{s}\), \({0.025}\text { ms}\) and \({0.000025} \text { s}\), respectively. MAEs were computed for perturbation parameters \(\sigma\) ranging from \({1}\mathrm {e}{-5}\) to \({1}\mathrm {e}{7}\): (left) \(R^c_S R^c_D\), (middle) \(R_S=\overline{\text {MAE}}_\text {SM}/\overline{\text {MAE}}_\text {SR}\), (right) \(R_D=\text {MAE}_\text {DR}/\overline{\text {MAE}}_\text {SR} \text{}\)

The magnitude of the perturbation can be adjusted with the perturbation parameter \(\sigma\) that we defined for both the state and step-size perturbation (see Sect. 2.1). To analyze how the parameter \(\sigma\) affects the calibration of the perturbation and to test for which \(\sigma\) the solvers are well calibrated, we simulated the classical HH neuron in response to the noisy and the step stimulus with probabilistic solvers for a range of perturbation parameters (Fig. 6). First, we used a probabilistic EEft solver and computed MAESM, MAESRand MAEDRand the ratios of the distribution means \(R_S\) and \(R_D\) for different \(\sigma\) ranging from 0.0625 to 16 for the noisy stimulus (Fig. 6A-C). As expected, we found that with increasing \(\sigma\), the mean sample-sample distance \(\overline{\text {MAE}}_\text {SM}\) converged to the mean sample-reference distance \(\overline{\text {MAE}}_\text {SR}\), and for sufficiently large \(\sigma\) the mean sample-sample distance \(\overline{\text {MAE}}_\text {SM}\) could therefore be used as an approximate measure of mean sample-reference distance \(\overline{\text {MAE}}_\text {SR}\) (Fig. 6A and B). For example, for \(\sigma =0.25\), the perturbation magnitude was too small and the solver was underestimating the numerical uncertainty: Here, the mean sample-sample distances was much smaller \(\overline{\text {MAE}}_\text {SM}\) (0.33) than the mean sample-reference distance \(\overline{\text {MAE}}_\text {SR}\) (4.35) (Fig. 6A) with all sample-reference distances distributed narrowly (\(\text {MAE}_\text {SR}\) 10th to 90th percentiles: [4.14, 4.56]) around the deterministic-reference distance MAEDR(4.36), indicating that all samples were very close to the deterministic solution, despite the numerical error. When using \(\sigma \ge 4\), the mean sample-reference distance was higher than the deterministic-reference distance (Fig. 6A and C), indicating a loss of solver accuracy caused by the perturbation (e.g. \(R_D = 0.51\) for \(\sigma =8\)). Here, the best calibration was achieved with \(\sigma = 2\), with distributions of MAESMclose to MAESR(\(R_S\): 0.83; Fig. 6A and B) and with the mean sample accuracy close to the accuracy of the deterministic solution (\(R_D\): 1.08; Fig. 6A and C).

To provide an overview of the calibration for different solvers settings, we defined a scalar measure for the “goodness of calibration” \(R^c_S R^c_D\), where \(R^c_D = \min (R_D, 1)\) and \(R^c_S = 1 - |1 - R_S |\), which is ideally close to one. \(R^c_S R^c_D\) is close to zero for either an underestimation of the numerical uncertainty (\(R^c_S \approx 0\)) or for a too strong perturbation that renders the solver output useless (\(R^c_D \approx 0\)). We used \(R^c_S\) and \(R^c_D\) instead of \(R_S\) and \(R_D\), because in some cases \(R_S\) and \(R_D\) took values larger than one (for example see Fig. S3) which would make their product more difficult to interpret. We simply clipped \(R_D\), because here we only cared about the samples being at least as close to the reference solution as the deterministic solution. For \(R_S\) on the other hand, values larger than one are not beneficial, as \(R_S\) approximates how well the sample-reference distance can be estimated from the sample distribution alone. \(R^c_S\) therefore measures the deviance of \(R_S\) from one.

We computed \(R^c_S R^c_D\) for different probabilistic solvers and step-sizes—including the EEft solver with \(\Delta t={0.025}\text { ms}\) used above—for the HH neuron stimulated with the step and noisy step stimulus (Fig. 6D). The respective values for \(R_S\) and \(R_D\) are shown in Fig. S3. We found that using a perturbation parameter of \(\sigma =1\), which we used as default in other experiments, produced reasonably calibrated solutions overall. However, in most cases \(\sigma =1\) was also not ideal. For example for EEft, larger values (e.g. \(\sigma =2\) or \(\sigma =4\)) resulted in better calibration, whereas for FEft the calibration was improved using smaller values (e.g. \(\sigma =0.5\) or \(\sigma =0.25\)). For the state perturbed Runge–Kutta methods RKBS and RKDP with fixed step-sizes, the best \(\sigma\) were close to one, but it was both step-size and stimulus dependent if slightly larger or smaller values would result in better calibration. The same Runge–Kutta methods with adaptive step-sizes were well calibrated for a wide range of perturbation parameters \(\sigma\), including very small ones (e.g. \(\sigma =0.0625\)), especially in the high tolerance case (\(\kappa =1e-2\)). This is likely because even small perturbations cause the solvers to take different step-sizes and therefore to evaluate the ODE at different time points. When using the state perturbation on a new neuron model, setting \(\sigma =1\) may therefore be a good strategy to get a solver with reasonable, though often not ideal, calibration.

For the step-size perturbation on the other hand, setting \(\sigma =1\) can also result in extremely poor calibration. To illustrate this, we simulated the Hodgkin–Huxley model with different units for the time t, meaning that we rescaled the values for t and \(\dot {\mathbf {x}}\) equally. As expected, this rescaling did not affect the solutions of deterministic or state perturbed solvers. For the step-size perturbation method, however, the step-size \(\Delta t\) is treated as a unit-less quantity during the perturbation step (Eq. (4)) which makes the method sensitive to such unit changes. We simulated the model in milliseconds, microseconds and seconds for a wide range of perturbation parameters \(\sigma\) and computed the goodness of calibration \(R^c_S R^c_D\) for an EEft (Fig. 6E) and an EEMPft solver (Fig. 6F). As expected, the perturbation parameter \(\sigma\) resulting in the best calibration differed by orders of magnitude (Fig. 6E and F). This is, because here the best calibration did always result in the same ratio between the step-size \(\Delta t\) and the standard deviation of the perturbation distribution \(\mathcal {P}\). Obviously, the step-size perturbation could be made invariant to such unit changes, but this would not address the underlying problem: A priori it is not clear how \(\sigma\), or more generally the distribution \(\mathcal {P}\), should be set. Given that the time unit in which a model is simulated is a relatively arbitrary choice, the reasonable calibration achieved with \(\sigma =1\) in examples above was at least partially a side effect of all models being simulated in milliseconds with comparable kinetic and is not a generally applicable rule.

Therefore, especially in the case of step-size perturbation, it is advisable to calibrate the perturbation before simulating a new model. In many practical applications, such as simulation-based parameter inference for neuron models (Oesterle et al., 2020), models are simulated repeatedly with slight modifications in their parametrization. Here, it would be impractical to recalibrate the solvers for every evaluation, but on the other hand, it would cause only little overhead to calibrate the perturbation once in the beginning. We therefore tested how transferable the calibration for one model parametrization is to a wider range of other parametrizations. First, we calibrated an EEft solver by maximizing the goodness of calibration \(R^c_S R^c_D\) through a grid-search over \(\sigma\) (Fig. S4A) on a HH neuron model using the default parameters for the conductances (Sect. 5.3) and a low frequency noisy step stimulus (Fig. S4). Using the optimized perturbation parameter \(\sigma\), we computed the goodness of calibration for 100 different neuron parameter sets (Fig. S4B) which resulted in a variety of spiking patterns (Fig. S4C and D). For the vast majority of parametrizations, the perturbation was well calibrated (Fig. S4E): For almost half the parametrizations (39 out of 100) \(R^c_S R^c_D\) was even higher than for the original parametrization and in all cases it was larger than 0.3.

3.4 Computational overhead

Probabilistic solvers based on state or step-size perturbation increase the computational costs for two reasons. First, they are sampling based and require computing multiple solutions for a single IVP. While this process can be parallelized, it nevertheless comes with a computational overhead, especially if it conflicts with other computations using parallelized model evaluation, e.g. in simulation-based inference where the same model is evaluated for different model parameters (Cranmer et al., 2020; Gonçalves et al., 2020). Second, probabilistic solvers induce a computational overhead per solution computed relative to their deterministic counterparts. We analyzed both aspects in the following.

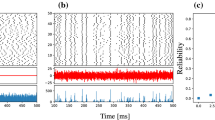

The computational overhead of probabilistic solver is moderate. A, B Bootstrapped distributions of normalized sample-sample distances \(\Psi _\text {MAE} = \overline{\text {MAE}}_\text {SM-n} / \overline{\text {MAE}}_\text {SM-300}\) as a function of the numbers of samples n. \(\Psi _\text {MAE}\) were computed for the classical HH neuron simulated with an EEft with \(\Delta t={0.1}\text { ms}\) (A) and a RKBSax solver with \(\kappa ={1}\mathrm {e}{-2}\) (B) for the step (grey) and noisy step (black) stimulus, respectively. Top: Distributions over \(\Psi _\text {MAE}\) computed by \(10000\) times repeated random sub-sampling of \(\overline{\text {MAE}}_\text {SM-n}\) from all \(300\) generated samples. Bottom: Percentages of the bootstrapped \(\Psi _\text {MAE}\) distributions in [0.5, 2.0]. C, D As in A, B, but for \(\Psi _\text {SpD} = \overline{\text {SpD}}_\text {SS-n} / \overline{\text {SpD}}_\text {SS-300}\), where \(\overline{\text {SpD}}_\text {SS-n}\) is the mean of the pairwise SPIKE-distance distribution. E Relative run times for different solver schemes measured for the HH neuron with the noisy step stimulus. For every solver, \(100\) samples were simulated for both a probabilistic and a corresponding deterministic solver. Relative run times were computed by dividing the run times of the probabilistic samples by the run times of the respective deterministic samples. Distributions were computed by bootstrapping \(10000\) ratios and are shown as medians and the 10th to 90th percentiles. The step-size of fixed step-size methods was \(\Delta t ={0.01}\text { ms}\) and the tolerance of adaptive methods was \(\kappa ={1}\mathrm {e}{-4}\). For EE and EEMP, only the step-size perturbation was used

3.4.1 Required number of samples

To empirically determine the number of samples necessary to obtain a reliable measure of numerical uncertainty, we simulated the classical HH neuron with probabilistic solvers for the step and noisy step stimulus. To this end, we computed mean sample-sample distances \(\overline{\text {MAE}}_\text {SM-n}\) for small numbers of samples n, and divided them by the mean sample-sample distances for a much larger number of samples (\(300\)) to obtain normalized sample-sample distances \(\Psi _\text {MAE}(n) = \overline{\text {MAE}}_\text {SM-n} / \overline{\text {MAE}}_\text {SM-300}\). Similarly, we computed normalized sample-sample SPIKE distances \(\Psi _\text {SpD}\) for the spike times. We bootstrapped distributions of \(\Psi _\text {MAE}\) (Fig. 7A and B) and \(\Psi _\text {SpD}\) (Fig. 7C and D) to see how likely a certain number of samples n would result in a good estimate of the true sample-sample distance. We found that two samples were often already sufficient to estimate the sample-sample MAE. For example, for the step stimulus and \(n=2\), more than \(80\%\) of the bootstrapped \(\Psi _\text {MAE}\) were in [0.5, 2.0] with little difference between the solvers EEft (Fig. 7A) and RKBSax (Fig. 7B). Reliably estimating the sample-sample SPIKE-distance required more samples, especially for the noisy stimulus. Still, only 10 samples were sufficient to obtain a \(\Psi _\text {SpD}\) in [0.5, 2.0] with high probability (\(>80\%\)) for both solvers and stimuli.

3.4.2 Overhead per sample

In addition to the computational overhead caused by the computation of multiple samples, probabilistic methods also come with a computational overhead per solution. For the state perturbation this overhead has three components. First, one needs to compute the local error estimator, which only causes overhead for fixed step-sizes since for adaptive methods the local error estimator needs to be computed anyway. The second potential source of overhead is that the “First Same As Last” property—i.e. that the last stage in one step can be used as the first stage of the next step, which is used in RKBS and RKDP—is not applicable. This is because the last stage is computed before the perturbation, and after the perturbation the evaluation of the ODE is not valid anymore. Lastly, the perturbation itself, which includes sampling form a Gaussian, needs to be computed.

In total, this overhead is relatively small for higher order methods optimized for step-size adaptation like RKBS, RKCK and RKDP. For example, the state perturbation for RKDPa increases the number of ODE evaluations per step from six to seven (\(+16\%\)) due to the loss of the First Same As Last property, and for RKCKa—which does not make use of this property—no additional ODE evaluation is required. However, for first order methods like FE this overhead severely reduces the computational efficiency because instead of a single ODE evaluation per step, a state perturbed version needs two (\(+100\%\)). Additionally, lower order methods typically require more steps in total compared to higher order methods, because they are typically used in combination with smaller step-sizes. This increases the total computational costs of the perturbation itself, which is done once per step. For the step-size perturbation, the overhead is reduced to the perturbation and, for adaptive step-size methods, the loss of the First Same As Last property.

To quantify this overhead empirically, we simulated the HH neuron with different probabilistic solvers and their deterministic counterparts and compared the run times relative to each other. As expected, for the state perturbation, the computational overhead was larger for the lower order methods (Fig. 7E; on average for FEfx: \(+113\%\), RKBSfx: \(+50\%\), RKCKfx: \(+6\%\), RKDPfx: \(+23\%\)). The adaptive methods—where the local error estimates were computed not only for the probabilistic, but also for the deterministic methods—showed the smallest increase in run times (\(+14\%\) on average across all adaptive methods), with RKCKax, not using the First Same As Last property, having the least overhead (\(+5\%\)). For the step-size perturbation, the increase in run times was on average smaller (\(+16\%\) on average across all methods) and without large differences between the solver schemes and the usage of adaptive or fixed step-sizes.

3.5 Errors beyond numerical integration

Probabilistic solver only account for errors in numerical integration. A Simulations of the “DAP” IN model with a pulse stimulus \(I_\text {Stim}\) (normalized stimulus in gray). Solutions for v(t) are shown for a reference solver (black), the original solver scheme (green) and a deterministic FE solver (orange). Based on the original publication, the step-size \(\Delta t\) was set to 0.1 ms. For plotting, v(t) were clipped at 30. Bottom panel: Spike-times are shown for the reference (black), the original solver solution (green), the deterministic FE solution (orange) and for \(100\) samples from a probabilistic FEft solver (blue). Samples were sorted by the number of spikes. B, C Number of spikes dependent on the step-size for different solver methods (legend) for fixed A and pseudo-fixed B step-sizes, respectively. Number of spikes are shown for \(40\) samples as medians, 10th to 90th percentiles (vertical lines) and outliers (stars). The dashed horizontal line refers to the reference solution

Finally, we turned back to the “DAP” IN model (Fig. S1), to illustrate numerical uncertainties that cannot be fully captured by the perturbation methods because they are not caused by the numerical approximation of the integral in Eq. (2). For this neuron model we had found a large difference in the number of spikes for the fixed step-size methods, like the original solver, compared to the reference solution (Fig. 8A). While the reference solution had eight spikes during the simulated period, the original solution had only one and a deterministic FE solver had two. While the probabilistic solver FEft arguably indicated some numerical uncertainty (\(\overline{n}_\text {spikes}=2.3\) (SD 0.6)), the number of spikes was still much lower compared to the reference. To better understand the source of this numerical uncertainty, we simulated the “DAP” neuron model with different probabilistic solvers, FEft, RKBSfx and RKDPfx.

First, we simulated the neuron for different fixed step-sizes. We found that all probabilistic solvers underestimated the true number of spikes when using relatively large fixed step-sizes (Fig. 6B). For the largest step-size tested, \(\Delta t=0.5 \text { ms}\), only the FEft solver indicated uncertainty in the number of spikes, whereas for RKBSfx and RKDPfx all samples had only a single spike. When using smaller step-sizes, the probabilistic solvers’ outputs were more indicative of the numerical uncertainty. For \(\Delta t={0.02}\text { ms}\), all solvers produced outputs that were closer (\(\overline{n}_\text {spikes} = 5.9\) for FE, \(\overline{n}_\text {spikes} = 6.5\) for RKBS and \(\overline{n}_\text {spikes} = 6.7\) for RKDP) to the reference solution and all methods indicated uncertainty in the number of spikes. With the very small step-size \(\Delta t={0.002}\text { ms}\), all samples from all solvers showed the same number of spikes as the reference and the probabilistic solvers indicated no remaining uncertainty about the number of spikes here.

While these results may be unsatisfactory at first glance, they are not necessarily unexpected. The probabilistic solvers used here can only capture the uncertainty arising through the numerical integration; they cannot capture the error that is introduced by restricting spikes to occur only on a fixed time grid, which is the case for the fixed step-size solvers. We therefore simulated the neuron for the same solvers and step-sizes again, but allowed the solver to take intermediate steps (see Eq. (10)) every time a reset occurred. When using these “pseudo-fixed” step-sizes, we found that RKDPfx still did not indicate uncertainty in the number of spikes for any step-size tested, but now all samples had the same number of spikes as the reference (Fig. 8C). And while FEft and RKBSfx still underestimated the number of spikes for larger step-sizes on average (e.g. for \(\Delta t={0.5}\text { ms}\): \(\overline{n}_\text {spikes}=3.5\) for FE and \(\overline{n}_\text {spikes} = 6.3\) for RKBS), both indicated high numerical uncertainty (e.g. for \(\Delta t={0.5}\text { ms}\): \(q_{90}(n_\text {spikes})=6\) for FE and \(q_{90}(n_\text {spikes}) = 8\) for RKBS, where \(q_{90}\) is the 90th percentile).

4 Discussion

The outcome of neuron simulations is affected by numerical uncertainty arising from the inevitably finite step-sizes used in numerical ODE integration. With standard solvers there is no straightforward way to quantify how this uncertainty affects quantities of interest such as spike-times and the number of spikes.

In this study, we demonstrated how probabilistic solvers can be used to quantify and reveal numerical uncertainty in commonly used neuron models. Crucially, these solvers can be easily implemented and do not require a detailed understanding of the underlying kinetics of the neuron model of interest.

Further, we showed that numerical uncertainty can affect the precise timing and the number of spikes in simulations of neuron models commonly used in neuroscience. We also found that some models and parametrizations are more susceptible to numerical uncertainty than others, and that some solvers employed in the neuroscience literature yield rather large uncertainties. These findings highlight the need for a thorough quantification of numerical uncertainty in neuroscience simulations to strike an informed balance between simulation time and tolerated uncertainty.

The idea to quantify the accuracy or numerical errors of different solvers for mechanistic models in neuroscience is not new. For example, Butera and McCarthy (2004) showed that for small step-sizes, the forward Euler method produces more accurate solutions than the exponential Euler method, which is in agreement with our findings. Börgers and Nectow (2013) on the other hand argued that for Hodgkin–Huxley-like systems exponential integrators—such as exponential Euler and the exponential midpoint Euler—are often the best choice, as they allow for much larger step-sizes especially when high accuracy is not necessary, which is again what we observed. Stewart and Bair (2009) argued in favor of the Parker–Sochacki integration method and showed that it can be used to generate highly accurate solutions for both the Izhikevich and Hodgkin–Huxley model. However, this method has the disadvantage that the ODE system at hand has to be put into the proper form and therefore requires specific knowledge about the model and solver. In a more recent study, Chen et al. (2020) recommended to use splitting methods, such as second-order Strang splitting, instead of exponential integrators.

In contrast to these studies, probabilistic solvers offer a more general approach to tackle the problem of numerical uncertainty. Instead of finding the “best” solver for a specific problem, they produce an easy-to-interpret uncertainty measure that can be analyzed without specific knowledge about the solver or solved neuron model. This allows to easily assess if a solver is sufficiently accurate for a given research question. It can therefore facilitate both the choice of the solver and choice of solver settings such as the step-size.

In this study, we used two simple probabilistic solvers that build on deterministic solver and stochastically perturb the numerical integration. For both, the state (Conrad et al., 2017) and the step-size perturbation (Abdulle & Garegnani, 2020) method, it is crucial that the perturbation is of the right order to neither underestimate the numerical uncertainty nor to reduce the solver accuracy unnecessarily. To be able to adjust the perturbation we introduced a perturbation parameter for both the state and step-size perturbation. We found that using the default value for this parameter yielded good calibration for most of the models we simulated, but slight adjustments often improved the calibration further. However, especially for the step-size perturbation, the calibration can be strongly dependent on the model and a certain level of calibration by means of adapting the perturbation distribution to the problem is always recommended. Fortunately, calibration may often be transferable between models with similar kinetics, for example in simulation-based inference where models are repeatably evaluated for different model parametrizations.

A downside of the step-size perturbation is, that it requires a local error estimator to be calibrated. This can introduce a relatively large computational overhead per solution for lower order methods like FE. The step-size perturbation may therefore a better choice for lower order methods. However, for higher order methods like RKDP this difference vanishes and both approaches require an equally small computational overhead per solution. Another advantage of the step-size perturbation is that it preserves desirable properties of the underlying solver schemes (Abdulle & Garegnani, 2020). For example, when Hodgkin–Huxley-like models are solved with exponential integrators like EE or EEMP, the state variables of the activation and inactivation cannot leave their domain [0, 1] by design of the solvers, a property preserved by the step-size but not the state perturbation.

Beyond the two perturbation methods used and discussed in this study, there are other classes of probabilistic numerical algorithms applicable to the problem studied herein. For instance, Teymur et al. (2021) recently proposed a method that builds on Richardson’s deferred approach to the limit and replaces the deterministic interpolant with a stochastic process. Similar to the perturbation methods, this approach can be used to create probabilistic ODE solvers from established deterministic ones. Another class of probabilistic ODE solvers is constructed using techniques from (nonlinear) Gaussian filtering and smoothing (Schober et al., 2019; Kersting et al., 2019; Tronarp et al., 2019). These methods have the advantage that instead of repeatedly integrating the initial value problem, they only require a single forward integration and return local uncertainty estimates that are proportional to the local truncation error. The disadvantage of Gaussian ODE filters and smoothers is that the uncertainty estimates are Gaussian. This restriction can be lifted by replacing Gaussian filters and smoothers with particle filters and smoothers (Tronarp et al., 2019). These and other methods may further extend the applicability of probabilistic numerics in computational neuroscience. In particular for large neural network simulations, more efficient methods like the filtering approaches will be key in quantifying uncertainty. However, because these methods are not sampling based, their success for simulating neurons will depend on how well they can capture the numerical uncertainty arising from the all-or-none behavior of spikes.

Another aspect that will be crucial for the success of probabilistic numerics in computational neuroscience is to develop and test implicit probabilistic solvers for neuron models. For example, the ODEs of multi-compartment neuron models are typically stiff which makes implicit solvers the better choice for such models (Mascagni et al., 1989). A priori, it is often not easy to judge whether a ODE system is stiff or not. A noteworthy attempt to tackle this problem is the algorithm by Blundell et al. (2018) that automatically determines whether an implicit or an explicit solver should be used.

Code availability

The probabilistic solvers and models were implemented in Python and Cython. The code is publicly available at https://github.com/berenslab/neuroprobnum.

Change history

26 June 2023

A Correction to this paper has been published: https://doi.org/10.1007/s10827-023-00856-w

Notes

\(R_S\) can take values larger than one, for example when the sample distribution is bimodal, the majority of samples is much closer to one of the modes (e.g. a fraction of samples has two and the larger fraction has three spike bursts as in Fig. 2C) and the reference solution is close to the larger mode.

In some cases, \(R_D\) takes values larger than one, which means that the perturbation increases the solver accuracy on average (e.g. see Fig. 2C). This happens, for example, when the deterministic solution is missing a spike but almost reaches the model’s spike threshold, and the perturbation is strong enough to generate the missing spike in some samples.

References

Abdulle, A., & Garegnani, G. (2020). Random time step probabilistic methods for uncertainty quantification in chaotic and geometric numerical integration. Statistics and Computing.

Blundell, I., Plotnikov, D., Eppler, J. M., & Morrison, A. (2018). Automatically selecting a suitable integration scheme for systems of differential equations in neuron models. Frontiers in neuroinformatics, 12, 50.

Bogacki, P., & Shampine, L. F. (1989). A 3 (2) pair of Runge-Kutta formulas. Applied Mathematics Letters, 2, 321–325.

Borgers, C., & Nectow, A. R. (2013). Exponential time differencing for hodgkin-huxley-like odes. SIAM Journal on Scientific Computing, 35, B623–B643.

Butera, R. J., & McCarthy, M. L. (2004). Analysis of real-time numerical integration methods applied to dynamic clamp experiments. Journal of Neural Engineering, 1, 187.

Cash, J. R., & Karp, A. H. (1990). A variable order runge-kutta method for initial value problems with rapidly varying right-hand sides. ACM Transactions on Mathematical Software (TOMS), 16, 201–222.

Chen, Z., Raman, B., & Stern, A. (2020). Structure-preserving numerical integrators for hodgkin-huxley-type systems. SIAM Journal on Scientific Computing, 42, B273–B298.

Chkrebtii, O. A., Campbell, D. A., Calderhead, B., & Girolami, M. A. (2016). Bayesian solution uncertainty quantification for differential equations. Bayesian Analysis, 11, 1239–1267.

Cockayne, J., Oates, C. J., Sullivan, T. J., & Girolami, M. (2019). Bayesian probabilistic numerical methods. SIAM Review, 64, 756–789.

Conrad, P. R., Girolami, M., Särkkä, S., Stuart, A., & Zygalakis, K. (2017). Statistical analysis of differential equations: introducing probability measures on numerical solutions. Statistics and Computing, 27, 1065–1082.

Cranmer, K., Brehmer, J., & Louppe, G. (2020). The frontier of simulation-based inference. Proceedings of the National Academy of Sciences, 117, 30055–30062.

Dayan, P., & Abbott, L. F. (2001). Theoretical neuroscience: computational and mathematical modeling of neural systems.

Domhof, J. W., & Tiesinga, P. H. (2021). Flexible frequency switching in adult mouse visual cortex is mediated by competition between parvalbumin and somatostatin expressing interneurons. Neural Computation, 33, 926–966.

Dormand, J. R., & Prince, P. J. (1980). A family of embedded Runge-Kutta formulae. Journal of Computational and Applied Mathematics, 6, 19–26.

Ermentrout, G. B., & Terman, D. H. (2010). The hodgkin–huxley equations. (pp. 1–28).

Galán, R. F., Fourcaud-Trocmé, N., Ermentrout, G. B., & Urban, N. N. (2006). Correlation-induced synchronization of oscillations in olfactory bulb neurons. Journal of Neuroscience, 26, 3646–3655.

Gerstner, W., & Kistler, W. M. (2002). Spiking neuron models: Single neurons, populations, plasticity.

Gerwinn, S., Bethge, M., Macke, J. H., & Seeger, M. (2008). Bayesian inference for spiking neuron models with a sparsity prior. In Advances in Neural Information Processing Systems (pp. 529–536).

Gonçalves, P. J., Lueckmann, J.-M., Deistler, M., Nonnenmacher, M., Öcal, K., Bassetto, G., et al. (2020). Training deep neural density estimators to identify mechanistic models of neural dynamics. Elife, 9, e56261.

Hairer, E., Nørsett, S. P., & Wanner, G. (1993). Solving ordinary differential equations i – nonstiff problems.

Hennig, P., Osborne, M. A., & Girolami, M. (2015). Probabilistic numerics and uncertainty in computations. Proceedings of the Royal Society of London A: Mathematical, Physical and Engineering Sciences, 471.

Hodgkin, A. L., & Huxley, A. F. (1952). A quantitative description of membrane current and its application to conduction and excitation in nerve. The Journal of Physiology, 117, 500–544.

Izhikevich, E. M. (2003). Simple model of spiking neurons. IEEE Transactions on Neural Networks, 14, 1569–1572.

Izhikevich, E. M. (2004). Which model to use for cortical spiking neurons? IEEE Transactions on Neural Networks, 15, 1063–1070.

Izhikevich, E. M. (2007). Dynamical systems in neuroscience.

Izhikevich, E. M., & Edelman, G. M. (2008). Large-scale model of mammalian thalamocortical systems. Proceedings of the National Academy of Sciences, 105, 3593–3598.

Kersting, H., Sullivan, T. J., & Hennig, P. (2020). Convergence rates of Gaussian ODE filters. Statistics and Computing, 30(6), 1791–1816.

Krämer, N., Bosch, N., Schmidt, J., & Hennig, P. (2022, June). Probabilistic ODE solutions in millions of dimensions. In International Conference on Machine Learning (pp. 11634–11649). PMLR.

Kreuz, T., Chicharro, D., Houghton, C., Andrzejak, R. G., & Mormann, F. (2013). Monitoring spike train synchrony. Journal of neurophysiology, 109, 1457–1472.

Mascagni, M. V., Sherman, A. S. et al. (1989). Numerical methods for neuronal modeling. Methods in neuronal modeling, 2.

Mulansky, M., & Kreuz, T. (2016). Pyspike–a python library for analyzing spike train synchrony. SoftwareX, 5, 183–189.

Oates, C. J., & Sullivan, T. J. (2019). A modern retrospective on probabilistic numerics. Statistics and Computing, 29, 1335–1351.

Oesterle, J., Behrens, C., Schröder, C., Hermann, T., Euler, T., Franke, K., et al. (2020). Bayesian inference for biophysical neuron models enables stimulus optimization for retinal neuroprosthetics. Elife, 9, e54997.

Oh, J., & French, D. A. (2006). Error analysis of a specialized numerical method for mathematical models from neuroscience. Applied mathematics and computation, 172, 491–507.

Papamakarios, G., Sterratt, D. C., & Murray, I. (2018). Sequential neural likelihood: Fast likelihood-free inference with autoregressive flows. arXiv:1805.07226.

Pedregosa, F., Varoquaux, G., Gramfort, A., Michel, V., Thirion, B., Grisel, O., et al. (2011). Scikit-learn: Machine learning in Python. Journal of Machine Learning Research, 12, 2825–2830.

Pillow, J. W., Shlens, J., Paninski, L., Sher, A., Litke, A. M., Chichilnisky, E., & Simoncelli, E. P. (2008). Spatio-temporal correlations and visual signalling in a complete neuronal population. Nature, 454, 995–999.

Prinz, A. A., Billimoria, C. P., & Marder, E. (2003). Alternative to hand-tuning conductance-based models: construction and analysis of databases of model neurons. Journal of Neurophysiology, 90, 3998–4015.

Prinz, A. A., Bucher, D., & Marder, E. (2004). Similar network activity from disparate circuit parameters. Nature Neuroscience, 7, 1345–1352.

Schober, M., Särkkä, S., & Hennig, P. (2019). A probabilistic model for the numerical solution of initial value problems. Statistics and Computing, 29, 99–122.