Abstract

Difference constraints have been used for termination analysis in the literature, where they denote relational inequalities of the form \(x' \le y + c\), and describe that the value of x in the current state is at most the value of y in the previous state plus some constant \(c \in \mathbb {Z}\). We believe that difference constraints are also a good choice for complexity and resource bound analysis because the complexity of imperative programs typically arises from counter increments and resets, which can be modeled naturally by difference constraints. In this article we propose a bound analysis based on difference constraints. We make the following contributions: (1) Our analysis handles bound analysis problems of high practical relevance which current approaches cannot handle: we extend the range of bound analysis to a class of challenging but natural loop iteration patterns which typically appear in parsing and string-matching routines. (2) We advocate the idea of using bound analysis to infer invariants: our provably sound algorithm obtains invariants through bound analysis, and the inferred invariants are in turn used for obtaining bounds. Our bound analysis therefore does not rely on external techniques for invariant generation. (3) We demonstrate that difference constraints are a suitable abstract program model for automatic complexity and resource bound analysis: we provide efficient abstraction techniques for obtaining difference constraint programs from imperative code. (4) We report on a thorough experimental comparison of state-of-the-art bound analysis tools: we set up a tool comparison on (a) a large benchmark of real-world C code, (b) a benchmark built of examples taken from the bound analysis literature and (c) a benchmark of challenging iteration patterns which we found in real source code. (5) Our analysis is more scalable than existing approaches: we discuss how we achieve scalability.


1 Introduction

Automated program analysis for inferring program complexity and resource bounds is a very active area of research. Amongst others, approaches have been developed for analyzing functional programs [16], C# [15], C [2, 7, 29, 35], Java [1] and Integer Transition Systems [6, 10]. Below we sketch applications in the areas of verification and program understanding. For additional motivation we refer the reader to the cited papers.

Verification In many applications such as embedded systems there is a hard constraint on the availability of resources such as CPU time, memory, bandwidth, etc. It is an important part of functional correctness that programs stay within their given resource limits. As a concrete example we mention that considerable effort has been invested to analyze the worst case execution time (WCET) of hard real-time systems [33]. Another application domain is security, where the goal is to derive a bound on how much secret information is leaked in order to decide whether this leakage is acceptable [31].

Static Profiling and Program Understanding Standard profilers report numbers such as how often certain program locations are visited and how much time is spent inside certain functions; however, no information is provided on how these numbers are related to the program input. Recently, new profiling approaches have been proposed that apply curve fitting techniques for deriving a cost function, which relates size measures on the program input to the measured program performance [9, 34]. We believe that automated complexity and resource bound analysis lends itself naturally as a static profiling technique, because it provides the user with a symbolic expression that relates the program performance to the program input. In the same way, complexity and resource bound analysis can be used to explore unfamiliar code or to annotate library functions with their performance characteristics; we note that a substantial number of performance bugs can be attributed to a “wrong understanding of API performance features” [22].

As a final remark we discuss the relationship to termination analysis, which has been intensively studied in the last decade in the computer-aided verification community: complexity and resource bound analysis can be understood as a quantitative variant of termination analysis, where not only a qualitative “yes” answer is provided, but also a symbolic upper bound on the run-time of the program.

Difference constraints (\( DCs \)) have been introduced by Ben-Amram for termination analysis in [4], where they denote relational inequalities of the form \(x' \le y + c\), and describe that the value of x in the current state is at most the value of y in the previous state plus some constant \(c \in \mathbb {Z}\). We call a program whose transitions are given by a set of difference constraints a difference constraint program (\( DCP \)).

We advocate the use of \( DCs \) for program complexity and resource bound analysis. Our key insight is that \( DCs \) provide a natural abstraction of the standard manipulations of counters in imperative programs: counter increments and decrements, i.e., \(x := x + c\), and resets, i.e., \(x := y\), can be modeled by the \( DCs \) \(x' \le x + c\) and \(x' \le y\), respectively (see Sect. 6 on program abstraction). The approach we discuss in this article exploits the expressive strength of \( DCs \) and distinguishes between counter resets and counter increments in the reasoning. In contrast, previous approaches [1, 2, 6, 10, 15, 29, 35] to bound analysis are not able to track increments and resets at the same level of precision and therefore often fail to infer tight bounds for a class of nested loop constructs which we identified during our experiments on real-world code (demonstrated by our experimental evaluation in Sect. 8.3). In this article we make the following contributions:

1. Our analysis handles bound analysis problems of high practical relevance which current approaches cannot handle: we extend the range of bound analysis to a class of challenging but natural loop iteration patterns which typically appear in parsing and string-matching routines, as we discuss in Sect. 2. At the same time our analysis is general and can handle most of the bound analysis problems which are discussed in the literature. Both claims are supported by our experiments.

2. We advocate the idea of using bound analysis to infer invariants: we state a clear and concise formulation of invariant analysis by bound analysis on the basis of our abstract program model: our provably sound algorithm (Sect. 3) obtains invariants through bound analysis, and the inferred invariants are in turn used for obtaining bounds. Our bound analysis therefore does not rely on external techniques for invariant generation.

3. We demonstrate that difference constraints are a suitable abstract program model for automatic complexity and resource bound analysis: we develop appropriate techniques for abstracting imperative programs to \( DCPs \) in Sect. 6.

4. We report on a thorough experimental comparison of state-of-the-art bound analysis tools (Sect. 8): we set up a tool comparison on (a) a large benchmark of real-world C code (Sect. 8.1), (b) a benchmark built of examples taken from the bound analysis literature (Sect. 8.2) and (c) a benchmark of challenging iteration patterns which we found in real source code (Sect. 8.3).

5. We have designed our analysis with the goal of scalability: our experiments demonstrate that our implementation outperforms the state-of-the-art with respect to scalability. We give a detailed discussion on how we achieve scalability in Sect. 10.

This article is an extension of the conference version presented at FMCAD 2015 [27]. Besides making the material more accessible through additional explanations and discussions, it adds the following contributions: (1) a discussion on the instrumentation of our analysis for resource bound analysis (Sect. 2.2). (2) A more detailed discussion and presentation of our context-sensitive bound algorithm (Sect. 3.3). (3) A more detailed discussion on how we determine local bounds and an extension to sets of local bounds (Sect. 4). (4) A complete example (Sect. 7). (5) A discussion on the relation to amortized complexity analysis (Sect. 9). (6) Additional experimental results (Sects. 8.2 and 8.3). (7) In Electronic Supplementary Material we state the soundness proofs omitted in the conference version.

2 Motivation and Related Work

Example xnuSimple stated in Fig. 1 is representative of a class of loops that we found in parsing and string matching routines during our experiments. In these loops the inner loop iterates over disjoint partitions of an array or string, where the partition sizes are determined by the program logic of the outer loop. For an illustration of this iteration scheme see Example xnu in Fig. 9 (Sect. 7), which contains a snippet of the source code after which we have modeled Example xnuSimple. Example xnuSimple has the linear complexity 2n (we define complexity here as the total number of loop iterations; for alternative definitions see the discussion in Sect. 2.2), because the inner loop as well as the outer loop can be iterated at most n times (as argued below). In the following, we give an overview of how our approach infers the linear complexity for Example xnuSimple:

1. Program Abstraction We abstract the program to a \( DCP \) over \(\mathbb {N}\) as shown in Fig. 1. The abstract variable \([x]\) represents the program expression \(\max (x,0)\). We discuss our algorithm for abstracting imperative programs to \( DCP \)s based on symbolic execution in Sect. 6.

2. Finding Local Bounds We identify \([p]\) as a variable that limits the number of executions of transition \(\tau _3\): we have that \([p]\) decreases on each execution of \(\tau _3\) (\([p]\) takes values over \(\mathbb {N}\)). We call \([p]\) a local bound for \(\tau _3\). Accordingly we identify \([x]\) as a local bound for the transitions \(\tau _1,\tau _{2},\tau _{4},\tau _{5},\tau _{6}\).

3. Bound Analysis Our algorithm (stated in Sect. 3) computes transition bounds, i.e., (symbolic) upper bounds on the number of times program transitions can be executed, and variable bounds, i.e., (symbolic) upper bounds on variable values. For both types of bounds, the main idea of our algorithm is to reason about how much and how often the value of the local bound resp. the variable value may increase during a program run. Our algorithm is based on a mutual recursion between variable bound analysis (“how much”, function \( V\mathcal {B} (\mathtt {v})\)) and transition bound analysis (“how often”, function \( T\mathcal {B} (\tau )\)). Next, we give an intuition of how our algorithm computes transition bounds: for \(\tau \in \{\tau _1,\tau _{2},\tau _{4},\tau _5,\tau _6\}\) our algorithm computes \( T\mathcal {B} (\tau ) = [n] = n\) (note that \([n] = n\) because n has type unsigned) because the local bound \([x]\) is initially set to \([n]\) and never increased or reset. Our algorithm computes \( T\mathcal {B} (\tau _3)\) (\(\tau _3\) corresponds to the loop at \(l_3\)) as follows: \(\tau _3\) has local bound \([p]\); \([p]\) is reset to \([r]\) on \(\tau _{2}\); our algorithm detects that before each execution of \(\tau _{2}\), \([r]\) is reset to \([0]\) on either \(\tau _0\) or \(\tau _4\), which we call the context under which \(\tau _{2}\) is executed; our algorithm establishes that between being reset and flowing into \([p]\) the value of \([r]\) can be incremented up to \( T\mathcal {B} (\tau _1)\) times by 1; our algorithm obtains \( T\mathcal {B} (\tau _1) = n\) by a recursive call; finally, our algorithm calculates \( T\mathcal {B} (\tau _3) = [0] + T\mathcal {B} (\tau _1) \times 1 = 0 + n \times 1 = n\). We give an example of the mutual recursion between \( T\mathcal {B} \) and \( V\mathcal {B} \) in Sect. 2.1.
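The amortized behaviour argued for in the three steps above can be observed on a small C sketch. The code is only a reconstruction that is consistent with the textual description of Example xnuSimple (Fig. 1 is not reproduced in this section): the names xnu_simple, xnu_counts and flush are assumptions made for illustration, and the array flush stands in for the non-deterministic branch of the outer loop.

```c
/* Iteration counts observed on one run. */
typedef struct {
    unsigned outer; /* iterations of the outer loop */
    unsigned inner; /* iterations of the inner loop, summed over the run */
} xnu_counts;

/*
 * Assumed shape of Example xnuSimple (hypothetical reconstruction).
 * flush[i] != 0 resolves the non-deterministic branch in outer
 * iteration i; flush must hold at least n entries.
 */
xnu_counts xnu_simple(unsigned n, const int *flush)
{
    xnu_counts cnt = {0, 0};
    unsigned x = n; /* counter of the outer loop, never increased */
    unsigned r = 0; /* size of the current partition */
    unsigned i = 0;

    while (x > 0) {
        x--;
        r++;                 /* r grows by 1 per outer iteration */
        if (flush[i]) {
            unsigned p = r;  /* p is reset to r */
            while (p > 0) {  /* inner loop, limited by p */
                p--;
                cnt.inner++;
            }
            r = 0;           /* r is reset to 0 */
        }
        i++;
        cnt.outer++;
    }
    return cnt;
}
```

Whatever choices flush encodes, r is incremented at most n times in total, so the inner loop runs at most n times over the whole run and the total number of iterations stays within 2n, even though a single outer iteration may trigger up to n inner iterations.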

2.1 Invariants and Bound Analysis

We motivate the need for invariants in bound analysis and sketch how our algorithm infers invariants by bound analysis. Consider Example twoSCCs in Fig. 2. It is easy to infer x as a bound for the possible number of iterations of the loop at \(l_3\). However, in order to obtain a bound in the function parameters the difficulty lies in finding an invariant of form \(x \le \mathtt {expr}(n,{m_1},{m_2})\), where \(\mathtt {expr}(n,{m_1},{m_2})\) denotes an expression over the function parameters \(n,{m_1},{m_2}\). We show how our algorithm obtains the invariant \(x \le \max (m_1,m_2) + 2n\) by means of bound analysis:

Our algorithm computes a transition bound for the loop at \(l_3\) (with the single transition \(\tau _5\)) by \( T\mathcal {B} (\tau _5) = T\mathcal {B} (\tau _4) \times V\mathcal {B} ([x]) = 1 \times V\mathcal {B} ([x]) = V\mathcal {B} ([x]) = T\mathcal {B} (\tau _3) \times 2 + \max ([m_1],[m_2]) = ( T\mathcal {B} (\tau _0) \times [n]) \times 2 + \max ([m_1],[m_2]) = (1 \times [n]) \times 2 + \max ([m_1],[m_2]) = 2n + \max (m_1,m_2)\) (note that \([n]=n\), \([m_1]=m_1\) and \([m_2] = m_2\) because \(n, m_1, m_2\) have type unsigned). We point out the mutual recursion between \( T\mathcal {B} \) and \( V\mathcal {B} \): \( T\mathcal {B} (\tau _5)\) has called \( V\mathcal {B} (x)\), which in turn called \( T\mathcal {B} (\tau _3)\). We highlight that the variable bound \( V\mathcal {B} (x)\) (corresponding to the invariant \(x \le \max (m_1,m_2) + 2n\)) has been established during the computation of \( T\mathcal {B} (\tau _5)\).

We call the kind of invariants that our algorithm infers upper bound invariants (Definition 6). We compare our reasoning to classical invariant analysis in Sect. 2.3.
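The following C sketch lets the reader check the invariant \(x \le \max (m_1,m_2) + 2n\) on concrete runs. It is a hypothetical reconstruction that is merely consistent with the bound computation above (Fig. 2 is not reproduced in this section); the names two_sccs, two_sccs_result, two_sccs_bound and the flag pick_m1, which resolves the non-deterministic choice between the two resets, are assumptions.

```c
typedef struct {
    unsigned long x_at_l3; /* value of x when the second loop is entered */
    unsigned long second;  /* iterations of the second loop */
} two_sccs_result;

/* Assumed shape of Example twoSCCs (hypothetical reconstruction). */
two_sccs_result two_sccs(unsigned n, unsigned m1, unsigned m2, int pick_m1)
{
    two_sccs_result res;
    unsigned long x = pick_m1 ? m1 : m2; /* x is reset to m1 or to m2 */
    unsigned y = n;

    while (y > 0) {   /* first loop: at most n iterations */
        y--;
        x += 2;       /* x is incremented by 2 per iteration */
    }
    res.x_at_l3 = x;

    unsigned long z = x; /* counter of the second loop is reset to x */
    res.second = 0;
    while (z > 0) {
        z--;
        res.second++;
    }
    return res;
}

/* The bound max(m1, m2) + 2n stated in the text. */
unsigned long two_sccs_bound(unsigned n, unsigned m1, unsigned m2)
{
    unsigned long m = m1 > m2 ? m1 : m2;
    return m + 2UL * n;
}
```

For n = 3, m1 = 5 and m2 = 9, the run that picks m2 reaches the bound 15 exactly, while the run that picks m1 stays below it with 11 iterations.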

2.2 Resource Bound Analysis

We briefly discuss how resource bound analysis can be naturally formulated within our framework. We introduce a fresh variable c and add the initialization \(c = 0\) to the beginning of the program under scrutiny. We add an increment/decrement \(c = c + k\) at program locations where a resource of cost k is consumed (k is positive) or freed (k is negative). Resource bound analysis is then equivalent to computing an upper bound on the value of the variable c. We can run our algorithm \( V\mathcal {B} (c)\) to compute a symbolic upper bound for c.

In the same way we can encode related bound analysis problems: reachability bounds [15] (visits to a single location), visits to multiple transitions, loop bounds or complexity analysis. For each of these bound analysis problems one can add a counter increment at the program locations of interest.

We illustrate the suggested encoding on the problem of computing loop bounds: for a given loop we add increments of the counter variable c to every back edge of the loop. Calling \( V\mathcal {B} (c)\) then returns the sum of the transition bounds of all back edges of the loop. This example also illustrates how transition bounds are used for computing variable bounds in our approach.
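As a minimal sketch of the counter instrumentation, consider the following C function. The program itself, the costs 3 and 1, and the name peak_cost are purely hypothetical; only the scheme (initialize c to 0, add \(c = c + k\) where cost k is consumed or freed) is taken from the text.

```c
/*
 * Hypothetical program instrumented with a cost counter c.
 * Returns the largest value that c takes during the run.
 */
long peak_cost(unsigned n)
{
    long c = 0;    /* initialization c = 0 at the program start */
    long peak = 0; /* bookkeeping for this sketch only */

    for (unsigned i = 0; i < n; i++) {
        c = c + 3;           /* a resource of cost 3 is consumed */
        if (c > peak)
            peak = c;
        if (i % 2 == 1)
            c = c - 1;       /* a resource of cost 1 is freed (k = -1) */
    }
    return peak;
}
```

Since c is incremented by 3 at most n times and never reset, 3n is a valid upper bound on every value of c in this sketch; a resource bound analysis would aim to report a symbolic expression of this kind.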

2.3 Related Work

Termination In [4] it is shown that termination of \( DCPs \) is undecidable in general but decidable for the natural syntactic subclass of fan-in free \( DCPs \) (see Definition 12), which is the class of \( DCPs \) we use in this article. It is an open question for future work whether there is a complete algorithm for bound analysis of fan-in free \( DCPs \).

Bound Analysis In [35] a bound analysis based on so-called size-change constraints \(x' \lhd y\) is proposed, where \(\lhd \in \{<,\le \}\). Size-change constraints form a strict syntactic subclass of \( DCs \). However, termination is decidable even for size-change programs that are not fan-in free and a complete algorithm for deciding the complexity of size-change programs has been developed [8]. For reasoning about inner loops [35] computes disjunctive loop summaries while such summaries are not computed by the approach discussed in this work.

In [29] a bound analysis based on constraints of the form \(x' \le x + c\) is proposed, where c is either an integer or a symbolic constant. Because the constraints in [29, 35] cannot model both increments and resets, the resulting bound analyses cannot infer the linear complexity of Example xnuSimple and need to rely on external techniques for invariant analysis.

The COSTA project (e.g. [1]) obtains recurrence relations from so-called cost equations using invariant analysis based on the polyhedra abstract domain and approaches from the literature for synthesizing linear ranking functions. Closed-form solutions for the obtained recurrence relations are inferred by means of computer algebra.

The technique discussed in [10] is based on the COSTA approach and formulated in terms of cost equations. Further, paper [10] is inspired by the counter instrumentation-based approach [14] and applies the techniques [3, 25] for inferring linear ranking functions. The technique of [10] achieves a high precision of the inferred bounds by means of control-flow refinement (see also Ref. [11]).

The technique discussed in [2] over-approximates the reachable states by abstract interpretation based on the polyhedra abstract domain. This information is used for generating a linear constraint problem from which a multi-dimensional linear ranking function is obtained. A bound on the number of values which can be taken by the ranking function is then obtained from the previously computed approximation of the reachable states. Importantly, the number of dimensions of the ranking function determines the degree of the bound polynomial. The approach of [2] therefore aims at inferring a ranking function with a minimal number of dimensions and thus depends on a minimal solution to the linear constraint problem which is obtained by linear optimization (Technique [2] instruments the LP-solver with an objective function).

The technique discussed in [6] applies approaches from the literature for synthesizing ranking functions thereby inferring bounds on the number of times the execution of isolated program parts can be repeated. These bounds, called time bounds, are then used to compute bounds on the absolute value of variables, so-called variable size bounds. Additional information is inferred through abstract interpretation based on the octagon abstract domain. An overall complexity bound is deduced by alternating between time bound and variable size bound analysis. In each alternation bounds for larger program parts are obtained based on the previously computed information.

In Sect. 8 we compare our implementation against the techniques [2, 6, 7, 10, 29].

Amortized Complexity Analysis We note that inferring the linear complexity 2n for Example xnuSimple, even though the inner loop can already be iterated n times within one iteration of the outer loop, is an instance of amortized complexity analysis [32]: the cost of executing the inner loop, averaged over all n iterations of the outer loop, is 1. Most previous approaches [1, 6, 10, 15, 29, 35] can establish only a quadratic bound for Example xnuSimple. A typical line of reasoning which fails to establish the linear complexity of Example xnuSimple is as follows: (1) the outer loop can be iterated at most n times, (2) the inner loop can be iterated at most n times within one iteration of the outer loop (because the inner loop has a local loop bound p and \(p \le n\) is an invariant), (3) the loop bound \(n^2\) is obtained from (1) and (2) by multiplication.

The recent paper [7] discusses an interesting alternative for amortized complexity analysis of imperative programs: a system of linear inequalities is derived using Hoare-style proof-rules. Solutions to the system represent valid linear resource bounds. Since bound analysis typically does not aim at some bound but tries to infer a tight bound, Ref. [7] uses linear optimization (an LP-solver instrumented by an objective function) in order to obtain a minimum solution to the problem. Interestingly, Ref. [7] is able to compute the linear bound for \(l_3\) of Example xnuSimple but fails to deduce the bound for the original source code (discussed in Sect. 7). Moreover, Ref. [7] is restricted to linear bounds, while our approach derives bounds which are polynomial (see, e.g., the results in Table 12) and which contain the maximum operator (e.g., Example twoSCCs). We compare our implementation to the implementation of Ref. [7] in Sect. 8.

Invariants and Bound Analysis The powerful idea of expressing locally computed bounds in terms of the function parameters by alternating between bound analysis and variable upper bound analysis has previously been applied in [6, 12, 28]. Since Refs. [12, 28] do not give a general algorithm but deal with specific cases, we focus our discussion on [6] and highlight some important differences. The technique discussed in [6] computes upper bound invariants only for the absolute values of variables; in many cases, this makes it impossible to distinguish between variable increments and decrements: consider the program foo(int x, int y) {while(y > 0) {x--; y--;} while(x > 0) x--;}. The algorithm described in [6] infers the bound \(|x| + |y|\) for the second loop, whereas our analysis infers the bound \(\max (x, 0)\). The approach of [6] depends on global invariant analysis. E.g., given a decrement \(x := x - 1\), the technique of [6] needs to check whether \(x \ge 0\) holds. If \(x \ge 0\) cannot be ensured, the decrement can actually increment the absolute value of x, and will thus be interpreted as \(|x| = |x| + 1\). This can lead either to gross over-approximations or to failure of the bound computation if the increment of |x| cannot be bounded. Since our approach does not track the absolute value but the value, it is not concerned with this problem. The technique discussed in [6] does not support amortized analysis: e.g., the technique of [6] fails to compute the linear bounds for Example xnuSimple (Fig. 1), Example xnu (Fig. 9) and other examples we discuss in this article (see also the results in Sect. 8.3). On the other hand, Ref. [6] can infer bounds for functions with multiple recursive calls, which is not supported by the analysis we present in this article.
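The difference between the two bounds for the program foo can be checked on concrete inputs with the sketch below. The loop structure is that of foo; the iteration counter and the helper names foo_second_loop, bound_max0 and bound_abs are assumptions added for illustration.

```c
#include <stdlib.h>

/* Runs foo and returns the number of iterations of its second loop. */
long foo_second_loop(long x, long y)
{
    long count = 0;
    while (y > 0) { x--; y--; }      /* first loop of foo */
    while (x > 0) { x--; count++; }  /* second loop of foo, instrumented */
    return count;
}

/* The bound max(x, 0), evaluated on the initial value of x. */
long bound_max0(long x)
{
    return x > 0 ? x : 0;
}

/* The bound |x| + |y|, evaluated on the initial values of x and y. */
long bound_abs(long x, long y)
{
    return labs(x) + labs(y);
}
```

For the initial values x = -4 and y = 3 the second loop is never entered; \(\max (x, 0)\) evaluates to 0 whereas \(|x| + |y|\) evaluates to 7. For x = 5 and y = -2 the second loop runs exactly 5 times, so the bound \(\max (x, 0)\) is attained.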

Comparison to Invariant Analysis We contrast our previously discussed approach for computing a bound for the loop at \(l_3\) of Example xnuSimple with classical invariant analysis: assume that we have added a counter c which counts the number of inner loop iterations (i.e., c is initialized to 0 and incremented in the inner loop). For inferring \(c \le n\) through invariant analysis the invariant \(c+x+r\le n\) is needed for the outer loop, and the invariant \(c+x+p\le n\) for the inner loop. Both relate 3 variables and cannot be expressed as (parametrized) octagons (e.g., [26]). Further, the expressions \(c+x+r\) and \(c+x+p\) do not appear in the program, which is challenging for template based approaches to invariant analysis.

We now contrast our variable bound analysis (function \( V\mathcal {B} \)) with classical invariant analysis: reconsider Example twoSCCs in Fig. 2. We have discussed how our algorithm obtains the invariant \(x \le \max (m_1,m_2) + 2n\) by means of bound analysis in the course of computing a bound for the loop at \(l_3\). Note that the invariant \(x \le \max (m_1,m_2) + 2n\) cannot be computed by standard abstract domains such as octagon or polyhedra: these domains are convex and cannot express non-convex relations such as maximum. The most precise approximation of x in the polyhedra domain is \(x \le m_1 + m_2 + 2n\). Unfortunately, it is well-known that the polyhedra abstract domain does not scale to larger programs and needs to rely on heuristics for termination. Standard abstract domains such as octagon or polyhedra propagate information forward until a fixed point is reached, greedily computing all possible invariants expressible in the abstract domain at every location of the program. In contrast, our method \( V\mathcal {B} (x)\) infers the invariant \(x \le \max (m_1,m_2) + 2n\) by modular reasoning: local information about the program (i.e., local bounds and increments/resets of variables) is combined into a global program property. Moreover, our variable and transition bound analysis is demand-driven: our algorithm performs only those recursive calls that are indeed needed to derive the desired bound. We believe that our analysis complements existing techniques for invariant analysis and will find applications outside of bound analysis.

3 Program Model and Algorithm

In this section we present our algorithm for computing worst-case upper bounds on the number of executions of a given transition (transition bound) and on the value of a given program expression (variable bound and upper bound invariant).

Definition 1

(Program) Let \({\varSigma }\) be a set of states. A program over \({\varSigma }\) is a directed labeled graph \(\mathcal {P}= (L, T, l_b,l_e)\), where \(L\) is a finite set of locations, \(l_b \in L\) is the entry location, \(l_e \in L\) is the exit location and \(T\subseteq L\times 2^{{\varSigma }\times {\varSigma }} \times L\) is a finite set of transitions. We write \(l_1 \xrightarrow {\lambda } l_2\) to denote a transition \((l_1,\lambda ,l_2) \in T\). We call \(\lambda \in 2^{{\varSigma }\times {\varSigma }}\) a transition relation. A path of \(\mathcal {P}\) is a sequence \(l_0 \xrightarrow {\lambda _0} l_1 \xrightarrow {\lambda _1} \cdots \) with \(l_i \xrightarrow {\lambda _i} l_{i+1} \in T\) for all i. A run of \(\mathcal {P}\) is a sequence \(\rho = (l_b,\sigma _0) \xrightarrow {\lambda _0} (l_1,\sigma _1) \xrightarrow {\lambda _1} \cdots \) such that \(l_b \xrightarrow {\lambda _0} l_1 \xrightarrow {\lambda _1} \cdots \) is a path of \(\mathcal {P}\) and for all \(0 < i\) it holds that \((\sigma _{i-1},\sigma _i) \in \lambda _{i-1}\). A run \(\rho \) is complete if it ends at \(l_e\).

Note that a run of \(\mathcal {P}= (L, T, l_b,l_e)\) starts at location \(l_b\). Further note that we call an edge \({l_1 \xrightarrow {\lambda } l_2} \in T\) of the program a transition, whereas \(\lambda \) is its transition relation. In the following we will refer to transitions by \(\tau \) and to transition relations by \(\lambda \).

Transition bounds are at the core of our analysis: we infer bounds on the number of loop iterations, on computational complexity, on resource consumption, etc., by computing bounds on the number of times that one or several transitions can be executed. Before we formally define our notion of a transition bound we have to introduce some notation.

Definition 2

(Counter Notation I) Let \(\mathcal {P}= (L, T,l_b, l_e)\) be a program over \({\varSigma }\). Let \(\tau \in T\). Let \(\rho = (l_b,\sigma _0) \xrightarrow {\lambda _0} (l_1,\sigma _1) \xrightarrow {\lambda _1} \cdots \) be a run of \(\mathcal {P}\). By \(\sharp (\tau , \rho )\) we denote the number of times that \(\tau \) occurs on \(\rho \).

In the following, we denote by ‘\(\infty \)’ a value s.t. \(a < \infty \) for all \(a \in \mathbb {Z}\) (infinity).

Definition 3

(Transition Bound) Let \(\mathcal {P}= (L, T, l_b,l_e)\) be a program over states \({\varSigma }\). Let \(\tau \in T\). A value \(\mathtt {b}\in \mathbb {N}_0 \cup \{\infty \}\) is a bound for \(\tau \) on a run \(\rho = (l_{b}, \sigma _0) \xrightarrow {\lambda _0} (l_{1}, \sigma _1) \xrightarrow {\lambda _1} (l_{2}, \sigma _2) \xrightarrow {\lambda _2} \cdots \) of \(\mathcal {P}\) iff \(\sharp (\tau ,\rho ) \le \mathtt {b}\), i.e., iff \(\tau \) appears not more than \(\mathtt {b}\) times on \(\rho \). A function \(\mathtt {b}: {\varSigma }\rightarrow \mathbb {N}_{0} \cup \{\infty \}\) is a bound for \(\tau \) iff for all runs \(\rho \) of \(\mathcal {P}\) it holds that \(\mathtt {b}(\sigma _0)\) is a bound for \(\tau \) on \(\rho \), where \(\sigma _0\) denotes the initial state of \(\rho \).

Given a program transition \(\tau \), our bound algorithm (which we define below) computes a bound for \(\tau \). If possible, the bound computed by our algorithm should be precise or tight, in particular the trivial bound \({\varSigma }\rightarrow \infty \) is (most often) of no value to us.

Definition 4

(Precise Transition Bound) Let \(\mathcal {P}= (L, T,l_b, l_e)\) be a program over states \({\varSigma }\). Let \(\tau \in T\). We say that a transition bound \(\mathtt {b}: {\varSigma }\rightarrow \mathbb {N}_{0} \cup \{\infty \}\) for \(\tau \) is precise iff for each \(\sigma _0 \in {\varSigma }\) there is a run \(\rho = (l_b,\sigma _0) \xrightarrow {\lambda _0} (l_1,\sigma _1) \xrightarrow {\lambda _1} \cdots \) such that \(\sharp (\tau ,\rho ) = \mathtt {b}(\sigma _0)\).

Informally A transition bound is precise if it can be reached for all initial states \(\sigma _0\). Note that there is exactly one precise transition bound.

Definition 5

(Tight Transition Bound) Let \(\mathcal {P}= (L, T,l_b, l_e)\) be a program over states \({\varSigma }\). Let \(\tau \in T\). We say that a transition bound \(\mathtt {b}: {\varSigma }\rightarrow \mathbb {N}_{0} \cup \{\infty \}\) for \(\tau \) is tight iff there is a \(c > 0\) such that either (1) for all \(\sigma \in {\varSigma }\) we have \(\mathtt {b}(\sigma ) < c\) (\(\mathtt {b}\) is bounded), or (2) there is a family of states \((\sigma _{i})_{i\in \mathbb {N}}\) with \(\lim \nolimits _{i\mapsto {\infty }}\mathtt {b}(\sigma _i) = \infty \) (\(\mathtt {b}\) is unbounded) such that for all \(\sigma _i\) there is a run \(\rho _i\) starting in \(\sigma _i\) with \(\mathtt {b}(\sigma _i) \le c \times \sharp (\tau ,\rho _i)\).

Informally A transition bound is tight if it is in the same asymptotic class as the precise transition bound: let \(\tau \in T\). For the special case \({\varSigma }= \mathbb {N}\) we have the following: let \(f: \mathbb {N} \rightarrow \mathbb {N}\) denote the precise transition bound for \(\tau \). Let \(g: \mathbb {N} \rightarrow \mathbb {N}\) be some transition bound for \(\tau \). Trivially \(f \in O(g)\) (f does not grow faster than g). Now, g is tight if also \(f \in {\varOmega }(g)\) (f does not always grow slower than g). With \(f \in O(g)\) and \(f \in {\varOmega }(g)\) we have that \(f \in {\varTheta }(g)\). The same can be formulated for general state sets \({\varSigma }\) by mapping \({\varSigma }\) to the natural numbers.

We discussed in Sect. 2.1 that in the course of computing transition bounds, our analysis computes invariants of a special shape. We now formally define the form of the invariants that our analysis infers.

Definition 6

(Upper Bound Invariant) Let \(\mathcal {P}= (L, T,l_b,l_e)\) be a program over \({\varSigma }\). Let \(e: {\varSigma }\rightarrow \mathbb {Z}\). Let \(l\in L\). Let \(\rho = (l_{b}, \sigma _0) \xrightarrow {\lambda _0} (l_{1}, \sigma _1) \xrightarrow {\lambda _1} (l_{2}, \sigma _2) \xrightarrow {\lambda _2} \cdots \) be a run of \(\mathcal {P}\). A value \(\mathtt {b}\in \mathbb {Z} \cup \{\infty \}\) is an upper bound invariant for \(e\) at \(l\) on \(\rho \) iff \(e(\sigma _i) \le \mathtt {b}\) holds for all i on \(\rho \) with \(l_i = l\). A function \(\mathtt {b}: {\varSigma }\rightarrow \mathbb {Z} \cup \{\infty \}\) is an upper bound invariant for \(e\) at \(l\) iff for all runs \(\rho \) of \(\mathcal {P}\) it holds that \(\mathtt {b}(\sigma _0)\) is an upper bound invariant for \(e\) at \(l\) on \(\rho \), where \(\sigma _0\) denotes the initial state of \(\rho \).

We now formally define the notion of a local bound that we motivated in Sect. 2.

Definition 7

(Counter Notation II) Let \(\mathcal {P}= (L, T,l_b, l_e)\) be a program over \({\varSigma }\). Let \(\rho = (l_b,\sigma _0) \xrightarrow {\lambda _0} (l_1,\sigma _1) \xrightarrow {\lambda _1} \cdots \) be a run of \(\mathcal {P}\). Let \(e: {\varSigma }\rightarrow \mathbb {Z}\) be a norm. By \({\downarrow }(e, \rho )\) we denote the number of times that the value of \(e\) decreases on \(\rho \), i.e., \({\downarrow }(e,\rho ) = |\{i \mid {e(\sigma _i) > e(\sigma _{i+1}) }\}|\).

Definition 8

(Norm) Let \({\varSigma }\) be a set of states. A norm \(e: {\varSigma }\rightarrow \mathbb {Z}\) over \({\varSigma }\) is a function that maps the states to the integers.

Definition 9

(Local Bound) Let \(\mathcal {P}(L, T,l_b, l_e)\) be a program over \({\varSigma }\). Let \(\tau \in T\). Let \(e: {\varSigma }\rightarrow \mathbb {N}\) be a norm that takes values in the natural numbers. Let \(\rho = (l_b,\sigma _0) \xrightarrow {u_0} (l_1,\sigma _1) \xrightarrow {u_1} \cdots \) be a run of \(\mathcal {P}\). \(e\) is a local bound for \(\tau \) on \(\rho \) if it holds that \(\sharp (\tau , \rho ) \le {\downarrow }(e, \rho )\). We call \(e\) a local bound for \(\tau \) if \(e\) is a local bound for \(\tau \) on all runs of \(\mathcal {P}\).

Discussion A natural number valued norm \(e\) is a local bound for \(\tau \) on a run \(\rho \) if \(\tau \) appears no more often on \(\rho \) than the number of times the value of \(e\) decreases. I.e., a local bound \(e\) for \(\tau \) limits the number of executions of \(\tau \) on a run \(\rho \) as long as certain program parts (those where \(e\) increases) are not executed. We argue in Sect. 9 that in our analysis local bounds play the role of potential functions in classical amortized complexity analysis [32]. We discuss how we obtain local bounds in Sect. 4.
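Definitions 7 and 9 can be checked directly on a finite run prefix. The following is a minimal sketch under our own assumptions: the run encoding, the function names and the transition names `t_reset` and `t_loop` are hypothetical and serve illustration only.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, int]
# A finite run prefix: the initial state plus a list of (transition name, successor state).
Run = Tuple[State, List[Tuple[str, State]]]


def count_transition(tau: str, run: Run) -> int:
    """Number of occurrences of transition `tau` on the run."""
    _, steps = run
    return sum(1 for name, _ in steps if name == tau)


def count_decreases(e: Callable[[State], int], run: Run) -> int:
    """Number of steps i with e(sigma_i) > e(sigma_{i+1})."""
    sigma0, steps = run
    states = [sigma0] + [state for _, state in steps]
    return sum(1 for a, b in zip(states, states[1:]) if e(a) > e(b))


def is_local_bound_on(e: Callable[[State], int], tau: str, run: Run) -> bool:
    """Definition 9 restricted to one run: tau occurs at most as often as e decreases."""
    return count_transition(tau, run) <= count_decreases(e, run)


def norm_p(state: State) -> int:
    return state["p"]


# Hypothetical run: p is set to 2 and then decremented twice by t_loop.
run: Run = ({"p": 0}, [("t_reset", {"p": 2}), ("t_loop", {"p": 1}), ("t_loop", {"p": 0})])
print(is_local_bound_on(norm_p, "t_loop", run))
```

Note that the check is per run; a norm is a local bound for a transition only if the check succeeds on all runs of the program.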

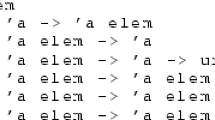

3.1 Difference Constraint Programs

As discussed in the introduction, we base our algorithm on the abstract program model of difference constraint programs, which we formally define in Definition 12. We discuss in Sect. 6 how we abstract a given program to a \( DCP \).

Definition 10

(Variables, Symbolic Constants, Atoms) By \(\mathcal {V}\) we denote a finite set of variables. By \(\mathcal {C}\) we denote a finite set of symbolic constants. \(\mathcal {A}= \mathcal {V}\cup \mathcal {C}\) is the set of atoms.

Definition 11

(Difference Constraints) A difference constraint over \(\mathcal {A}\) is an inequality of form \(x^\prime \le y + c\) with \(x \in \mathcal {V}\), \(y \in \mathcal {A}\) and \(c \in \mathbb {Z}\). By \(\mathcal {DC}(\mathcal {A})\) we denote the set of all difference constraints over \(\mathcal {A}\).

Notation We often write \(x^\prime \le y\) as a shorthand for the difference constraint \(x^\prime \le y + 0\).

Definition 12

(Difference Constraint Program, Syntax) A difference constraint program (\( DCP \)) over \(\mathcal {A}\) is a directed labeled graph \({\varDelta }\mathcal {P}= (L, E, l_b,l_e)\), where \(L\) is a finite set of vertices, \(l_b \in L\) and \(l_e \in L\) and \(E\subseteq L\times 2^{\mathcal {DC}(\mathcal {A})} \times L\) is a finite set of edges. We write \(l_1 \xrightarrow {u} l_2\) to denote an edge \((l_1, u, l_2) \in E\) labeled by a set of difference constraints \(u\in 2^{\mathcal {DC}(\mathcal {A})}\). We use the notation \(l_1 \xrightarrow {} l_2\) to denote an edge that is labeled by the empty set of difference constraints. \({\varDelta }\mathcal {P}\) is fan-in-free, if for every edge \(l_1 \xrightarrow {u} l_2 \in E\) and every \(\mathtt {v}\in \mathcal {V}\) there is at most one \(\mathtt {a}\in \mathcal {A}\) and \(\mathtt {c}\in \mathbb {Z}\) s.t. \(\mathtt {v}^\prime \le \mathtt {a}+ \mathtt {c}\in u\).

Example Figure 10b shows a fan-in free \( DCP \).
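The fan-in-free condition of Definition 12 is a purely syntactic check. A minimal sketch, assuming an encoding of our own in which a difference constraint \(x^\prime \le y + c\) is the triple `(x, y, c)` and an edge is the triple `(source, constraints, target)`:

```python
from collections import Counter
from typing import FrozenSet, List, Tuple

DC = Tuple[str, str, int]               # (x, y, c) encodes x' <= y + c
Edge = Tuple[str, FrozenSet[DC], str]   # (l1, u, l2) encodes l1 --u--> l2


def is_fan_in_free(edges: List[Edge]) -> bool:
    """True iff no edge carries two constraints with the same primed variable."""
    for _, u, _ in edges:
        primed = Counter(x for x, _, _ in u)
        if any(count > 1 for count in primed.values()):
            return False
    return True


# Hypothetical edges: `ok` constrains x' and y' once each, `bad` constrains x' twice.
ok = [("l1", frozenset({("x", "x", -1), ("y", "n", 0)}), "l1")]
bad = [("l1", frozenset({("x", "y", 0), ("x", "z", 1)}), "l1")]
print(is_fan_in_free(ok), is_fan_in_free(bad))
```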

Definition 13

(Difference Constraint Program, Semantics) The set of valuations of \(\mathcal {A}\) is the set \( Val _\mathcal {A}= \mathcal {A}\rightarrow \mathbb {N}\) of mappings from \(\mathcal {A}\) to the natural numbers. Let \(u\in 2^{\mathcal {DC}(\mathcal {A})}\). We define \(\llbracket u\rrbracket \in 2^{( Val _\mathcal {A}\times Val _\mathcal {A})}\) s.t. \((\sigma ,\sigma ^\prime ) \in \llbracket u\rrbracket \) iff for all \(x^\prime \le y + c \in u\) it holds that (i) \(\sigma ^\prime (x) \le \sigma (y) + c\) and (ii) for all \(\mathtt {s}\in \mathcal {C}\) \(\sigma ^\prime (\mathtt {s}) = \sigma (\mathtt {s})\). A \( DCP \) \({\varDelta }\mathcal {P}= (L, E, l_b,l_e)\) is a program over the set of states \( Val _\mathcal {A}\) with locations \(L\), entry location \(l_b\), exit location \(l_e\) and transitions \(T= \{l_1 \xrightarrow {\llbracket u\rrbracket } l_2 \mid l_1 \xrightarrow {u} l_2 \in E\}\).

Discussion A \( DCP \) is a program (Definition 1) whose transition relations are solely specified by conjunctions of difference constraints. Note that variables in difference constraint programs take values only over the natural numbers. Further note that we refer to the syntactic representation of the transition relation in form of a set of difference constraints by \(u\), whereas by \(\llbracket u\rrbracket \) we refer to the transition relation itself.
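The membership test \((\sigma ,\sigma ^\prime ) \in \llbracket u\rrbracket \) of Definition 13 can be sketched as follows. The encoding of constraints as triples `(x, y, c)` and the function name `in_relation` are assumptions made for illustration.

```python
from typing import Dict, Iterable, Set, Tuple

DC = Tuple[str, str, int]     # (x, y, c) encodes x' <= y + c
Valuation = Dict[str, int]    # maps every atom to a natural number


def in_relation(u: Set[DC], constants: Iterable[str],
                sigma: Valuation, sigma_next: Valuation) -> bool:
    """Check (sigma, sigma_next) in [[u]] following Definition 13."""
    # Valuations range over the natural numbers.
    naturals = all(val >= 0 for val in list(sigma.values()) + list(sigma_next.values()))
    # (i) every difference constraint holds between the two states.
    constraints = all(sigma_next[x] <= sigma[y] + c for x, y, c in u)
    # (ii) symbolic constants keep their value.
    frozen = all(sigma_next[s] == sigma[s] for s in constants)
    return naturals and constraints and frozen


# Hypothetical constraint set: x' <= x - 1 and y' <= n.
u = {("x", "x", -1), ("y", "n", 0)}
print(in_relation(u, ["n"], {"x": 3, "y": 0, "n": 5}, {"x": 2, "y": 4, "n": 5}))
```

Because valuations are natural numbers, a constraint such as \(x^\prime \le x - 1\) has no successor state when \(\sigma (x) = 0\); in this sense a decrement also acts as a guard.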

Definition 14

(Well-defined \( DCP \)) Let \({\varDelta }\mathcal {P}= (L, E, l_b,l_e)\) be a \( DCP \) over atoms \(\mathcal {A}\). We say that a variable \(x\in \mathcal {V}\) is defined at \(l\) if \(x \in \mathtt {def}(l)\), where \(\mathtt {def}: L\rightarrow 2^\mathcal {A}\) is defined by \(\mathtt {def}(l) = \bigcap \nolimits _{{l_1 \xrightarrow {u} l} \in E} \{x \mid \exists y \in \mathcal {A}~\exists \mathtt {c}\in \mathbb {Z} \text { s.t. } {x^\prime \le y + \mathtt {c}\in u}\} \cup \mathcal {C}\).

We say that a variable x is used at \(l\) if \(x \in \mathtt {use}(l)\), where \(\mathtt {use}: L\rightarrow 2^\mathcal {A}\) is defined by \(\mathtt {use}(l) = \bigcup \nolimits _{{l\xrightarrow {u} l_1} \in E} \{y \mid \exists x \in \mathcal {V}~\exists \mathtt {c}\in \mathbb {Z} \text { s.t. } {x^\prime \le y + \mathtt {c}\in u}\}\).

\({\varDelta }\mathcal {P}\) is well-defined iff \(l_b\) has no incoming edges and for all \(l\in L\) it holds that \(\mathtt {use}(l) \subseteq \mathtt {def}(l)\).

Discussion A \( DCP \) \({\varDelta }\mathcal {P}\) is well-defined if \(l_b\) has no incoming edges and for all \(\mathtt {v}\in \mathcal {V}\) it holds that \(\mathtt {v}\) is defined at all locations at which \(\mathtt {v}\) is used (symbolic constants are always defined). Note that for well-defined programs we in particular require \(\mathtt {use}(l_b) \subseteq \mathtt {def}(l_b)\). Because \(l_b\) has no incoming edges we have \(\mathtt {def}(l_b) = \mathcal {C}\). Thus only symbolic constants can be used at \(l_b\).
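The well-definedness check can be sketched in a few lines. The encoding below (edges as `(source, constraints, target)` with constraints `(x, y, c)` for \(x^\prime \le y + c\)) is an assumption of ours. For a location without incoming edges the sketch returns only the symbolic constants, which matches the observation \(\mathtt {def}(l_b) = \mathcal {C}\) made above.

```python
from typing import FrozenSet, Iterable, List, Set, Tuple

DC = Tuple[str, str, int]               # (x, y, c) encodes x' <= y + c
Edge = Tuple[str, FrozenSet[DC], str]   # (l1, u, l2)


def def_at(l: str, edges: List[Edge], constants: Iterable[str]) -> Set[str]:
    """Atoms defined at l: primed on every incoming edge, plus all constants."""
    incoming = [u for _, u, target in edges if target == l]
    if not incoming:
        return set(constants)
    return set.intersection(*({x for x, _, _ in u} for u in incoming)) | set(constants)


def use_at(l: str, edges: List[Edge]) -> Set[str]:
    """Atoms occurring on the right-hand side of a constraint on an outgoing edge."""
    return {y for source, u, _ in edges if source == l for _, y, _ in u}


def is_well_defined(edges: List[Edge], constants: Iterable[str], l_b: str) -> bool:
    locations = {l for source, _, target in edges for l in (source, target)}
    no_incoming = all(target != l_b for _, _, target in edges)
    return no_incoming and all(
        use_at(l, edges) <= def_at(l, edges, constants) for l in locations)


# Hypothetical DCP: x is set to n on entry and then decremented in a loop.
edges = [("lb", frozenset({("x", "n", 0)}), "l1"),
         ("l1", frozenset({("x", "x", -1)}), "l1")]
# Adding an edge that reads the never-defined variable y breaks well-definedness.
broken = edges + [("l1", frozenset({("x", "y", 0)}), "l1")]
print(is_well_defined(edges, ["n"], "lb"), is_well_defined(broken, ["n"], "lb"))
```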

Throughout this work we will only consider \( DCP \)s that are fan-in free and well-defined.

Let \({\varDelta }\mathcal {P}(L, E,l_b, l_e)\) be a \( DCP \) over \(\mathcal {A}\). Our bound algorithm, which we start to develop in the next section, computes a bound for a given transition \(\tau \in E\) in form of an expression over \(\mathcal {A}\) which involves the operators \(+\),\(\times \), / ,\(\min \),\(\max \) and the floor function \(\lfloor \cdot \rfloor \). However, note that the norms, which are treated as atoms (elements of \(\mathcal {A}\)) in the abstraction, can involve arbitrary operators (see Sect. 6).

Definition 15

(Expressions over \(\mathcal {A}\)) By \( Expr (\mathcal {A})\) we denote the set of expressions over \(\mathcal {A}\cup \mathbb {Z} \cup \{\infty \}\) that are formed using the arithmetical operators addition (\(+\)), multiplication (\(\times \)), maximum (\(\max \)), minimum (\(\min \)) and integer division of form \(\lfloor \frac{\mathtt {expr}}{\mathtt {c}}\rfloor \) where \(\mathtt {expr}\in Expr (\mathcal {A})\) and \(\mathtt {c}\in \mathbb {N}\). The semantics function \(\llbracket \cdot \rrbracket : Expr (\mathcal {A}) \rightarrow ( Val _\mathcal {A}\rightarrow \mathbb {Z} \cup \{\infty \})\) evaluates an expression \(\mathtt {expr}\in Expr (\mathcal {A})\) over a state \(\sigma \in Val _\mathcal {A}\) using the usual operator semantics (we have \(a + \infty = \infty \), \(\min (a, \infty ) = a\), etc.).

Our bound algorithm, which we define next, computes a special case of an upper bound invariant which we call a variable bound.

Definition 16

(Variable Bound) Let \({\varDelta }\mathcal {P}(L, E,l_b, l_e)\) be a \( DCP \) over \(\mathcal {A}\). Let \(\mathtt {a}\in \mathcal {A}\). We call \(\mathtt {b}\) s.t. \(\mathtt {b}\) is an upper bound invariant for \(\llbracket \mathtt {a}\rrbracket \) at all \(l\in L\) with \(\mathtt {a}\in \mathtt {def}(l)\) a variable bound for \(\mathtt {a}\).

Let variable x of the abstract program represent the expression \(\mathtt {expr}\) of the concrete program. Note that by computing a variable bound for x in the abstract program, we compute an upper bound invariant for \(\mathtt {expr}\) in the concrete program.

3.2 Algorithm

Our bound algorithm computes a bound for a given transition \(\tau \in E\) based on a mapping \(\zeta : E\rightarrow Expr (\mathcal {A})\) (called local bound mapping) which assigns to each transition \(\tau \in E\) either (1) a bound for \(\tau \) in form of an expression over the symbolic constants (i.e., \(\zeta (\tau ) \in Expr (\mathcal {C})\)) or (2) a local bound for \(\tau \) in form of a variable (i.e., \(\zeta (\tau ) \in \mathcal {V}\)). Note that \(\mathcal {V}\cap Expr (\mathcal {C}) = \emptyset \). In Case (1) our algorithm (Definition 19) returns \( T\mathcal {B} (\tau ) = \zeta (\tau )\). In Case (2) a transition bound \( T\mathcal {B} (\tau ) \in Expr (\mathcal {C})\) is computed by inferring how often and by how much the local bound \(\zeta (\tau ) \in \mathcal {V}\) of \(\tau \) may increase during a program run.

Definition 17

(Local Bound Mapping) Let \({\varDelta }\mathcal {P}(L, E,l_b, l_e)\) be a \( DCP \) over \(\mathcal {A}\). Let \(\rho = (l_b,\sigma _0) \xrightarrow {u_0} (l_1,\sigma _1) \xrightarrow {u_1} \cdots \) be a run of \({\varDelta }\mathcal {P}\). We call a function \(\zeta : E\rightarrow Expr (\mathcal {A})\) a local bound mapping for \(\rho \) if for all \(\tau \in E\) it holds that either

1. \(\zeta (\tau ) \in Expr (\mathcal {C})\) and \(\llbracket \zeta (\tau )\rrbracket (\sigma _0)\) is a bound for \(\tau \) on \(\rho \), or

2. \(\zeta (\tau ) \in \mathcal {V}\) and \(\llbracket \zeta (\tau )\rrbracket \) is a local bound for \(\tau \) on \(\rho \).

We say that \(\zeta \) is a local bound mapping for \({\varDelta }\mathcal {P}\) if \(\zeta \) is a local bound mapping for all runs of \({\varDelta }\mathcal {P}\).

Further, our bound algorithm is based on a syntactic distinction between two kinds of updates that modify the value of a given variable \(\mathtt {v}\in \mathcal {V}\): we identify transitions which increment \(\mathtt {v}\) and transitions which reset \(\mathtt {v}\).

Definition 18

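(Resets and Increments) Let \({\varDelta }\mathcal {P}= (L, E, l_b,l_e)\) be a \( DCP \) over \(\mathcal {A}\). Let \(\mathtt {v}\in \mathcal {V}\). We define the resets \(\mathcal {R}(\mathtt {v})\) and increments \(\mathcal {I}(\mathtt {v})\) of \(\mathtt {v}\) as follows:

\[\mathcal {R}(\mathtt {v}) = \{(l_1 \xrightarrow {u} l_2, \mathtt {a}, \mathtt {c}) \in E\times \mathcal {A}\times \mathbb {Z} \mid \mathtt {v}^\prime \le \mathtt {a}+ \mathtt {c}\in u, \mathtt {a}\ne \mathtt {v}\}\]

\[\mathcal {I}(\mathtt {v}) = \{(l_1 \xrightarrow {u} l_2, \mathtt {c}) \in E\times \mathbb {Z} \mid \mathtt {v}^\prime \le \mathtt {v}+ \mathtt {c}\in u, \mathtt {c}> 0\}\]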

Given a path \(\pi \) of \({\varDelta }\mathcal {P}\) we say that \(\mathtt {v}\) is reset on \(\pi \) if there is a transition \(\tau \) on \(\pi \) such that \((\tau , \mathtt {a}, \mathtt {c}) \in \mathcal {R}(\mathtt {v})\) for some \(\mathtt {a}\in \mathcal {A}\) and \(\mathtt {c}\in \mathbb {Z}\). We say that \(\mathtt {v}\) is incremented on \(\pi \) if there is a transition \(\tau \) on \(\pi \) such that \((\tau , \mathtt {c}) \in \mathcal {I}(\mathtt {v})\) for some \(\mathtt {c}\in \mathbb {N}\).

I.e., we have that \((\tau , \mathtt {a}, \mathtt {c}) \in \mathcal {R}(\mathtt {v})\) if variable \(\mathtt {v}\) is reset to a value smaller or equal to \(\mathtt {a}+ \mathtt {c}\) when executing the transition \(\tau \). Accordingly we have \((\tau , \mathtt {c}) \in \mathcal {I}(\mathtt {v})\) if variable \(\mathtt {v}\) is incremented by a value smaller or equal to \(\mathtt {c}\) when executing the transition \(\tau \).
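The distinction between resets and increments can be read off the constraints syntactically. The sketch below assumes the following reading, consistent with the explanation above: a constraint \(\mathtt {v}^\prime \le \mathtt {a}+ \mathtt {c}\) with \(\mathtt {a}\ne \mathtt {v}\) is a reset, and a constraint \(\mathtt {v}^\prime \le \mathtt {v}+ \mathtt {c}\) with \(\mathtt {c}> 0\) is an increment. The dictionary encoding and the transition names are hypothetical.

```python
from typing import Dict, Set, Tuple

DC = Tuple[str, str, int]   # (x, y, c) encodes x' <= y + c


def resets(v: str, edges: Dict[str, Set[DC]]) -> Set[Tuple[str, str, int]]:
    """Triples (tau, a, c) such that tau carries v' <= a + c with a != v."""
    return {(tau, a, c) for tau, u in edges.items() for x, a, c in u
            if x == v and a != v}


def increments(v: str, edges: Dict[str, Set[DC]]) -> Set[Tuple[str, int]]:
    """Pairs (tau, c) such that tau carries v' <= v + c with c > 0."""
    return {(tau, c) for tau, u in edges.items() for x, a, c in u
            if x == v and a == v and c > 0}


# Hypothetical transitions: r is reset to n and incremented, p is reset to r and decremented.
edges = {
    "t0": {("r", "n", 0)},
    "t1": {("r", "r", 1)},
    "t2": {("p", "r", 0)},
    "t3": {("p", "p", -1)},
}
print(resets("p", edges), increments("r", edges))
```

A constraint such as \(p^\prime \le p - 1\) is neither a reset nor an increment, so it contributes to neither set.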

Our algorithm in Definition 19 is built on a mutual recursion between the two functions \( V\mathcal {B} (\mathtt {v})\) and \( T\mathcal {B} (\tau )\), where \( V\mathcal {B} (\mathtt {v})\) infers a variable bound for variable \(\mathtt {v}\) and \( T\mathcal {B} (\tau )\) infers a transition bound for the transition \(\tau \).

Definition 19

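(Bound Algorithm) Let \({\varDelta }\mathcal {P}(L, E, l_b, l_e)\) be a \( DCP \) over \(\mathcal {A}\). Let \(\zeta : E\rightarrow Expr (\mathcal {A})\). We define \( V\mathcal {B} : \mathcal {A}\mapsto Expr (\mathcal {A})\) and \( T\mathcal {B} : E\mapsto Expr (\mathcal {A})\) as:

\[ V\mathcal {B} (\mathtt {a}) = \mathtt {a}\text { if } \mathtt {a}\in \mathcal {C}, \text { else } V\mathcal {B} (\mathtt {v}) = \mathtt {Incr}(\mathtt {v}) + \max \limits _{( t ,\mathtt {a},\mathtt {c}) \in \mathcal {R}(\mathtt {v})} ( V\mathcal {B} (\mathtt {a}) + \mathtt {c})\]

\[ T\mathcal {B} (\tau ) = \zeta (\tau ) \text { if } \zeta (\tau ) \not \in \mathcal {V}, \text { else } T\mathcal {B} (\tau ) = \mathtt {Incr}(\zeta (\tau )) + \sum \limits _{( t ,\mathtt {a},\mathtt {c}) \in \mathcal {R}(\zeta (\tau ))} T\mathcal {B} ( t ) \times \max ( V\mathcal {B} (\mathtt {a}) + \mathtt {c}, 0)\]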

where \(\mathtt {Incr}(\mathtt {v}) = \sum \nolimits _{(\tau ,\mathtt {c}) \in \mathcal {I}(\mathtt {v})}{ T\mathcal {B} (\tau ) \times \mathtt {c}}\) (we set \(\mathtt {Incr}(\mathtt {v}) = 0\) for \(\mathcal {I}(\mathtt {v}) = \emptyset \))

Discussion We first explain the subroutine \(\mathtt {Incr}(\mathtt {v})\): with \((\tau , \mathtt {c}) \in \mathcal {I}(\mathtt {v})\) we have that a single execution of \(\tau \) increments the value of \(\mathtt {v}\) by not more than \(\mathtt {c}\). \(\mathtt {Incr}(\mathtt {v})\) multiplies the bound for \(\tau \) with the increment \(\mathtt {c}\) in order to summarize the total amount by which \(\mathtt {v}\) may be incremented over all executions of \(\tau \). \(\mathtt {Incr}(\mathtt {v})\) thus computes a bound on the total amount by which the value of \(\mathtt {v}\) may be incremented during program run.

The function \( V\mathcal {B} (\mathtt {v})\) computes a variable bound for \(\mathtt {v}\): after executing a transition \(\tau \) with \((\tau , \mathtt {a}, \mathtt {c}) \in \mathcal {R}(\mathtt {v})\), the value of \(\mathtt {v}\) is bounded by \( V\mathcal {B} (\mathtt {a}) + \mathtt {c}\). As long as \(\mathtt {v}\) is not reset, its value cannot increase by more than \(\mathtt {Incr}(\mathtt {v})\).

The function \( T\mathcal {B} (\tau )\) computes a transition bound for \(\tau \) based on the following reasoning: (1) the total amount by which the local bound \(\zeta (\tau )\) of transition \(\tau \) can be incremented is bounded by \(\mathtt {Incr}(\zeta (\tau ))\). (2) We consider a reset \(( t , \mathtt {a}, \mathtt {c}) \in \mathcal {R}(\zeta (\tau ))\); in the worst case, a single execution of \( t \) resets the local bound \(\zeta (\tau )\) to \( V\mathcal {B} (\mathtt {a}) + \mathtt {c}\), adding \(\max ( V\mathcal {B} (\mathtt {a}) + \mathtt {c},0)\) to the potential number of executions of \(\tau \); in total all \( T\mathcal {B} ( t )\) possible executions of \( t \) add up to \( T\mathcal {B} ( t ) \times \max ( V\mathcal {B} (\mathtt {a}) + \mathtt {c},0)\) to the potential number of executions of \(\tau \).

Example We want to infer a bound for the loop at \(l_3\) in Fig. 2. We thus compute a transition bound for \(\tau _5\) (the single back edge of the loop at \(l_3\)). See Table 1 for details on the computation. We get \( T\mathcal {B} (\tau _5) = \max ([m1],[m2]) + [n] \times 2\). Thus \(\max (m1,m2) + 2n\) is a bound for the loop at \(l_3\) (\(m_1\), \(m_2\) and n have type unsigned).

Termination Our algorithm does not terminate iff recursive calls cycle, i.e., if a call to \( T\mathcal {B} (\tau )\) resp. \( V\mathcal {B} (\mathtt {v})\) (indirectly) leads to a recursive call to \( T\mathcal {B} (\tau )\) resp. \( V\mathcal {B} (\mathtt {v})\). Such a cycle is easily detected, in which case we return the expression ‘\(\infty \)’.

We distinguish three cases of cyclic computation: (1) there is a variable \(\mathtt {v}\in \mathcal {V}\) such that the computation of \( V\mathcal {B} (\mathtt {v})\) ends up calling \( V\mathcal {B} (\mathtt {v})\) over a number of recursive calls to \( V\mathcal {B} \). (2) There is a transition \(\tau \in E\) such that the computation of \( T\mathcal {B} (\tau )\) ends up calling \( T\mathcal {B} (\tau )\) over a number of recursive calls to \( T\mathcal {B} \). (3) There is a variable \(\mathtt {v}\in \mathcal {V}\) and a transition \(\tau \in E\) such that the computation of \( T\mathcal {B} (\tau )\) calls \( V\mathcal {B} (\mathtt {v})\) which in turn ends up calling \( T\mathcal {B} (\tau )\) over a number of recursive calls to \( V\mathcal {B} \) and \( T\mathcal {B} \).

Case (1) occurs iff there is a cycle in the reset graph (Definition 20 in Sect. 3.3) of \({\varDelta }\mathcal {P}\). In Sect. 3.4 we discuss a preprocessing that ensures absence of cycles in the reset graph and thus absence of Case (1) by renaming the program variables appropriately.

Case (2) occurs iff there is a transition \(\tau _1\) with local bound x that increases the local bound y of a transition \(\tau _2\) which in turn increases x. We conclude that absence of Case (2) is ensured if for all strongly connected components (SCC) \(\mathtt {SCC}\) of \({\varDelta }\mathcal {P}\) we can find an ordering \(\tau _1,\ldots ,\tau _n\) of the transitions of \(\mathtt {SCC}\) such that the local bound of transition \(\tau _i\) is not increased on any transition \(\tau _j\) with \(n \ge j > i \ge 1\). Note that the existence of such an ordering for each SCC of \({\varDelta }\mathcal {P}\) proves termination of \({\varDelta }\mathcal {P}\): it allows us to directly compose a termination proof in form of a lexicographic ranking function by ordering the respective local transition bounds accordingly.

An example of Case (3) is given in Fig. 3a. Let \(\tau _1\) be the transition on which y is reset to a. Let \(\tau _2\) be the single transition of the inner loop. Assume we want to compute a loop bound for the inner loop, i.e., a transition bound for \(\tau _2\) with local bound y. This triggers a variable bound computation for a because y is reset to a. Since a is incremented on \(\tau _2\), the variable bound computation for a will in turn trigger a transition bound computation for \(\tau _2\). Note, however, that the loop bound for the inner loop is exponential (\(2^n\)). We consider exponential loop bounds very rare; we did not encounter an exponential loop bound during our experiments.
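The mutual recursion between \( V\mathcal {B} \) and \( T\mathcal {B} \) and the detection of cyclic calls can be sketched as follows. This is a minimal illustration under several assumptions of our own, not the implementation evaluated in this article: bounds are evaluated numerically for one fixed valuation of the symbolic constants instead of being built as expressions, the class and method names are hypothetical, \(0 \times \infty \) is taken to be 0, and the example \( DCP \) is merely modeled on the description of Fig. 4b (constraints of the form \(x^\prime \le x\) are omitted because they are neither resets nor increments).

```python
import math
from typing import Dict, Set, Tuple, Union

DC = Tuple[str, str, int]    # (x, y, c) encodes x' <= y + c
Num = Union[int, float]      # float is only used for math.inf


def mul(a: Num, b: Num) -> Num:
    # Convention of this sketch (an assumption): 0 * inf = 0.
    return 0 if a == 0 or b == 0 else a * b


class BoundAnalysis:
    def __init__(self, edges: Dict[str, Set[DC]], zeta: Dict[str, Union[str, int]],
                 consts: Dict[str, int]) -> None:
        # zeta maps a transition to a variable name (local bound) or to a number.
        self.edges, self.zeta, self.consts = edges, zeta, consts
        self.active: Set[Tuple[str, str]] = set()   # calls on the recursion stack

    def resets(self, v):
        return [(t, a, c) for t, u in self.edges.items() for x, a, c in u
                if x == v and a != v]

    def increments(self, v):
        return [(t, c) for t, u in self.edges.items() for x, a, c in u
                if x == v and a == v and c > 0]

    def _guarded(self, key, compute):
        if key in self.active:        # cyclic recursion detected: give up with infinity
            return math.inf
        self.active.add(key)
        try:
            return compute()
        finally:
            self.active.discard(key)

    def incr(self, v):
        return sum(mul(self.tb(t), c) for t, c in self.increments(v))

    def vb(self, a):
        if a in self.consts:
            return self.consts[a]
        # Without any reset no bound is derived (conservative choice of this sketch).
        return self._guarded(("VB", a), lambda: self.incr(a) + max(
            (self.vb(b) + c for _, b, c in self.resets(a)), default=math.inf))

    def tb(self, tau):
        z = self.zeta[tau]
        if not isinstance(z, str):    # Case (1): zeta already provides a bound
            return z
        return self._guarded(("TB", tau), lambda: self.incr(z) + sum(
            mul(self.tb(t), max(self.vb(a) + c, 0)) for t, a, c in self.resets(z)))


# Hypothetical DCP modeled on the description of Fig. 4b, evaluated for n = 5.
edges = {
    "t0": {("i", "n", 0), ("r", "n", 0)},
    "t1": {("i", "i", -1)},
    "t2": {("p", "r", 0)},
    "t3": {("p", "p", -1)},
    "t4": {("r", "0", 0)},
}
zeta = {"t0": 1, "t1": "i", "t2": "i", "t3": "p", "t4": "i"}
analysis = BoundAnalysis(edges, zeta, consts={"n": 5, "0": 0})
print(analysis.tb("t3"))   # 25, i.e., n * n for n = 5
```

For this valuation the sketch yields \(n \times n\) for the inner loop, which is the imprecision that the reasoning based on reset chains is designed to remove.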

Complexity Our algorithm can be implemented efficiently (polynomial in the number of variables and transitions of the abstract program) using caches (dynamic programming): we set \(\zeta (\tau ) = T\mathcal {B} (\tau )\) after having computed \( T\mathcal {B} (\tau )\). Accordingly we introduce a cache to store the result of a \( V\mathcal {B} \)-computation. When \( V\mathcal {B} (\mathtt {v})\) is called we first check if the result is already in the cache before performing the computation. The computed bound expressions, however, can be of exponential size: consider the \( DCP \) \({\varDelta }\mathcal {P}=(\{l_b,l\}, \{\tau _0,\tau _1,\ldots ,\tau _n\},l_b,l_e)\) over variables \(\{x_1,x_2,\ldots ,x_n\}\) and constants \(\{m_1,m_2,\ldots ,m_n\}\) shown in Fig. 3b. In fact, \( T\mathcal {B} (\tau _n) = \sum \nolimits _{S \in 2^{\{1,2,\ldots ,n\}}} m_0 \times \prod \nolimits _{i \in S} m_i\) is precise for Fig. 3b. However, the example is artificial. In our experience the computed bound expressions can, in practice, be reduced to human readable size by applying basic rules of arithmetic.

Theorem 1

(Soundness) Let \({\varDelta }\mathcal {P}(L, E, l_b, l_e)\) be a well-defined and fan-in free \( DCP \) over atoms \(\mathcal {A}\). Let \(\zeta : E\mapsto Expr (\mathcal {A})\) be a local bound mapping for \({\varDelta }\mathcal {P}\). Let \(\mathtt {a}\in \mathcal {A}\) and \(\tau \in E\). Let \( T\mathcal {B} (\tau )\) and \( V\mathcal {B} (\mathtt {a})\) be as defined in Definition 19. We have: (1) \(\llbracket T\mathcal {B} (\tau )\rrbracket \) is a transition bound for \(\tau \). (2) \(\llbracket V\mathcal {B} (\mathtt {a})\rrbracket \) is a variable bound for \(\mathtt {a}\).

In the following we describe two straightforward improvements of the algorithm stated in Definition 19.

Improvement I Let \(\tau \in E\). Let \(\mathtt {v}\in \mathcal {V}\) be a local bound for \(\tau \), i.e., for all runs \(\rho \) of \({\varDelta }\mathcal {P}\) we have that \(\sharp (\tau ,\rho ) \le {\downarrow }(\mathtt {v},\rho )\). Let \(\mathtt {c}\in \mathbb {N}\). Let \({\downarrow }(\mathtt {v}, \mathtt {c}, \rho )\) denote the number of times that the value of \(\mathtt {v}\) decreases on a run \(\rho \) of \({\varDelta }\mathcal {P}\) by at least \(\mathtt {c}\) (refines Definition 7). If for all runs \(\rho \) of \({\varDelta }\mathcal {P}\) we have that \(\sharp (\tau ,\rho ) \le {\downarrow }(\mathtt {v},\mathtt {c},\rho )\) (refines Definition 9) then \(\llbracket \lfloor \frac{ T\mathcal {B} (\tau )}{\mathtt {c}}\rfloor \rrbracket \) is a bound for \(\tau \) (assuming \(\zeta (\tau ) = \mathtt {v}\)). In our simple abstract program model, \(\mathtt {c}\in \mathbb {N}\) is obtained syntactically from a constraint \(\mathtt {v}^\prime \le \mathtt {v}- \mathtt {c}\). See Sect. 4 on how we determine relevant constraints. More details on the discussed improvement are given in [30].

Improvement II Let \(\tau _1,\tau _2 \in E\) be two transitions with the same local bound, i.e., \(\zeta (\tau _1) = \zeta (\tau _2)\). If \(\tau _1\) and \(\tau _2\) cannot be executed without decreasing the common local bound \(\zeta (\tau _1)\) twice, once for \(\tau _1\) and once for \(\tau _2\) (e.g., \(\tau _2\) and \(\tau _5\) in xnuSimple, Fig. 1), we have that \(\sharp (\tau _1,\rho ) + \sharp (\tau _2,\rho ) \le \llbracket T\mathcal {B} (\tau _1)\rrbracket (\sigma _0) = \llbracket T\mathcal {B} (\tau _2)\rrbracket (\sigma _0)\). Thus, \( T\mathcal {B} (\tau _1)\) is a bound on the number of times that \(\tau _1\) and \(\tau _2\) can be executed on any run of \({\varDelta }\mathcal {P}\). We exploit this observation: assume some \(\mathtt {v}\in \mathcal {V}\) is incremented by \(\mathtt {c}_1\) on \(\tau _1\) and by \(\mathtt {c}_2\) on \(\tau _2\). For computing \(\mathtt {Incr}(\mathtt {v})\) we only add \( T\mathcal {B} (\tau _1) \times \max \{\mathtt {c}_1,\mathtt {c}_2\}\) instead of \( T\mathcal {B} (\tau _1) \times \mathtt {c}_1 + T\mathcal {B} (\tau _2) \times \mathtt {c}_2\). This idea can be generalized to multiple transitions. Further details on the discussed improvement are given in [30].

3.3 Reasoning Based on Reset Chains

Consider Fig. 4. The precise bound for the loop at \(l_3\) is n: initially r has value n, and after the loop at \(l_3\) has been iterated, r is set to 0. Thus the loop can be executed in at most one iteration of the outer loop. However, our algorithm from Definition 19 infers a quadratic bound for the loop at \(l_3\): as shown in Table 2 we have \( T\mathcal {B} (\tau _3) = [n] \times [n]\). We thus get \(n^2\) (n has type unsigned) as bound for the loop at \(l_3\) in the concrete program.

Our algorithm from Definition 19 does not take into account that r is reset to 0 after executing the loop at \(l_3\). In the following we discuss an extension of our algorithm which overcomes this imprecision by taking the context under which a transition is executed into account: we say that a transition \(\tau _2\) is executed under context \(\tau _1\) if transition \(\tau _1\) was executed before the current execution of \(\tau _2\) and after the previous execution of \(\tau _2\) (if any).

As an example, consider Fig. 4b, the abstraction of Fig. 4a. We have that \(\tau _2\) is always executed either under context \(\tau _0\) or under context \(\tau _4\). When executing \(\tau _2\) under context \(\tau _0\), p is set to n. But when executing \(\tau _2\) under context \(\tau _4\), p is set to 0. Moreover, \(\tau _2\) can only be executed once under context \(\tau _0\) because \(\tau _0\) is executed only once.

We define the notion of a reset graph as a means to reason systematically about the context under which resets can be executed.

Definition 20

(Reset Chain Graph) Let \({\varDelta }\mathcal {P}(L, E,l_b,l_e)\) be a \( DCP \) over \(\mathcal {A}\). The reset chain graph or reset graph of \({\varDelta }\mathcal {P}\) is the directed graph \(\mathcal {G}\) with node set \(\mathcal {A}\) and edges \(\mathcal {E}= \{(y, \tau , \mathtt {c}, x) \mid {(\tau , y, \mathtt {c}) \in \mathcal {R}(x)}\} \subseteq \mathcal {A}\times E\times \mathbb {Z} \times \mathcal {V}\), i.e., each edge has a label in \(E\times \mathbb {Z}\). We call \(\mathcal {G}(\mathcal {A},\mathcal {E})\) a reset chain DAG or reset DAG if \(\mathcal {G}(\mathcal {A},\mathcal {E})\) is acyclic. We call \(\mathcal {G}(\mathcal {A},\mathcal {E})\) a reset chain forest or reset forest if the sub-graph \(\mathcal {G}(\mathcal {V},\mathcal {E})\) (recall that \(\mathcal {V}\subset \mathcal {A}\)) is a forest. We call a finite path \(\kappa = \mathtt {a}_n \xrightarrow {\tau _n, c_n} \mathtt {a}_{n-1} \xrightarrow {\tau _{n-1}, c_{n-1}} \cdots \mathtt {a}_0\) in \(\mathcal {G}\) with \(n > 0\) a reset chain of \({\varDelta }\mathcal {P}\). We say that \(\kappa \) is a reset chain from \(\mathtt {a}_n\) to \(\mathtt {a}_0\). Let \(n \ge i \ge j \ge 0\). By \(\kappa _{[i,j]}\) we denote the sub-path of \(\kappa \) that starts at \(\mathtt {a}_i\) and ends at \(\mathtt {a}_j\). We define \( in (\kappa ) = \mathtt {a}_n\), \( c (\kappa ) = \sum \nolimits _{i=1}^n c_i\), \( trn (\kappa ) = \{\tau _n,\tau _{n-1},\ldots ,\tau _1\}\), and \( atm (\kappa ) = \{a_{n-1},\ldots ,a_0\}\). \(\kappa \) is sound if for all \(1 \le i < n\) it holds that \(\mathtt {a}_i\) is reset on all paths from the target location of \(\tau _1\) to the source location of \(\tau _1\) in \({\varDelta }\mathcal {P}\). \(\kappa \) is optimal if \(\kappa \) is sound and there is no sound reset chain \(\varkappa \) of length \(n+1\) s.t. \(\varkappa _{[n,0]} = \kappa \).
Let \(\mathtt {v}\in \mathcal {V}\), by \(\mathfrak {R}(\mathtt {v})\) we denote the set of optimal reset chains ending in \(\mathtt {v}\).

Example Figure 4c shows the reset graph of Fig. 4b.
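The construction of the reset graph and the enumeration of reset chains can be sketched as follows. The encoding and all function names are assumptions of ours, the transitions are modeled on the description of Fig. 4b, and the sketch enumerates all reset chains of an acyclic reset graph without checking soundness or optimality.

```python
from typing import Dict, List, Set, Tuple

DC = Tuple[str, str, int]               # (x, y, c) encodes x' <= y + c
GEdge = Tuple[str, str, int, str]       # (y, tau, c, x): x is reset to y + c on tau
Chain = List[GEdge]                     # edges listed from a_n down to a_0


def reset_graph(edges: Dict[str, Set[DC]]) -> Set[GEdge]:
    """One graph edge (y, tau, c, x) per reset (tau, y, c) of x."""
    return {(a, tau, c, x) for tau, u in edges.items() for x, a, c in u if a != x}


def chains_ending_in(v: str, graph: Set[GEdge]) -> List[Chain]:
    """All reset chains ending in v; assumes that the reset graph is acyclic."""
    chains: List[Chain] = []
    for edge in sorted(graph):
        source, _, _, target = edge
        if target == v:
            chains.append([edge])
            chains.extend(prefix + [edge] for prefix in chains_ending_in(source, graph))
    return chains


def chain_in(kappa: Chain) -> str:
    return kappa[0][0]


def chain_c(kappa: Chain) -> int:
    return sum(c for _, _, c, _ in kappa)


def chain_trn(kappa: Chain) -> Set[str]:
    return {tau for _, tau, _, _ in kappa}


def chain_atm(kappa: Chain) -> Set[str]:
    return {target for _, _, _, target in kappa}


# Resets modeled on the description of Fig. 4b: r' <= n, p' <= r, r' <= 0.
edges = {"t0": {("r", "n", 0)}, "t2": {("p", "r", 0)}, "t4": {("r", "0", 0)}}
graph = reset_graph(edges)
chains = chains_ending_in("p", graph)
print(len(chains))   # 3
```

The three chains correspond to the reset of p to r on its own and to its two extensions by the resets of r.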

We elaborate on the notions sound and optimal below. Let us first state a basic intuition on how we employ reset chains to enhance the precision of our reasoning:

For a given reset \((\tau , \mathtt {a}, \mathtt {c}) \in \mathcal {R}(\mathtt {v})\), the reset graph determines which atom flows into variable \(\mathtt {v}\) under which context. For example, consider Fig. 4b and its reset graph in Fig. 4c: when executing the reset \((\tau _2,[r],0) \in \mathcal {R}([p])\) under the context \(\tau _4\), \([p]\) is set to \([0]\), if the same reset is executed under the context \(\tau _0\), \([p]\) is set to \([n]\). Note that the reset graph does not represent increments of variables. We discuss how we handle increments in Sect. 3.3.1.

Let \(\mathtt {v}\in \mathcal {V}\). Given a reset chain \(\kappa \) of length n that ends at \(\mathtt {v}\), we say that \(( trn (\kappa ), in (\kappa ), c (\kappa ))\) is a reset of \(\mathtt {v}\) with context of length \(n-1\). I.e., \(\mathcal {R}(\mathtt {v})\) from Definition 18 is the set of context-free resets of \(\mathtt {v}\) (context of length 0), because \(( trn (\kappa ), in (\kappa ), c (\kappa )) \in \mathcal {R}(\mathtt {v})\) iff \(\kappa \) ends at \(\mathtt {v}\) and has length 1. Our previously defined algorithm from Definition 19 uses only context-free resets; we say that it reasons context free. For reasoning with context, we substitute the term

\[\sum \limits _{( t ,\mathtt {a},\mathtt {c}) \in \mathcal {R}(\zeta (\tau ))} T\mathcal {B} ( t ) \times \max ( V\mathcal {B} (\mathtt {a}) + \mathtt {c}, 0)\]

in Definition 19 by the term

\[\sum \limits _{\kappa \in \mathfrak {R}(\zeta (\tau ))} T\mathcal {B} ( trn (\kappa )) \times \max ( V\mathcal {B} ( in (\kappa )) + c (\kappa ), 0)\]

Note that we can compute a bound on the number of times that a sequence \(\tau _1,\tau _2,\ldots ,\tau _n\) of transitions may occur on a run by computing \(\min \nolimits _{1\le i \le n} T\mathcal {B} (\tau _i)\).

We now discuss how our algorithm infers the linear bound for \(\tau _3\) of Fig. 4 when applying the described modification to Definition 19: the reset graph of Fig. 4b is shown in Fig. 4c. There are 3 reset chains ending in \([p]\): \(\kappa _1 = [0] \xrightarrow {\tau _4,0} [r] \xrightarrow {\tau _2,0} [p]\), \(\kappa _2 = [n] \xrightarrow {\tau _0,0} [r] \xrightarrow {\tau _2,0} [p]\) and \(\kappa _3 = [r] \xrightarrow {\tau _2,0} [p]\). However, \(\kappa _3\) is a sub-path of \(\kappa _1\) and \(\kappa _2\). Note that \(\kappa _1\) and \(\kappa _2\) are sound by Definition 20 because \([r]\) is reset on all paths from the target location \(l_3\) of \(\tau _2\) to the source location \(l_2\) of \(\tau _2\) in Fig. 4b (namely on \(\tau _4\)). \(\kappa _1\) and \(\kappa _2\) are both optimal because they are sound and of maximal length (we discuss the notions sound and optimal next). Thus \(\mathfrak {R}([p]) = \{\kappa _1, \kappa _2\}\). Basing our analysis on \(\mathfrak {R}([p])\) rather than \(\mathcal {R}([p])\) our approach reasons as shown in Table 3. We get \( T\mathcal {B} (\tau _3) = [n]\), i.e., we get the bound n (n has type unsigned) for the loop at \(l_3\) in the concrete program (Fig. 4a).

Sound and Optimal Reset Paths A given reset chain \(\mathtt {a}_n \xrightarrow {\tau _n, \mathtt {c}_n} \mathtt {a}_{n-1} \xrightarrow {\tau _{n-1}, \mathtt {c}_{n-1}} \cdots \xrightarrow {\tau _1,\mathtt {c}_1} \mathtt {a}_0\) is sound if in between any two executions of \(\tau _1\) all atoms on the path (but not necessarily \(\mathtt {a}_n\) where the path starts and \(\mathtt {a}_0\) where it ends) are reset: Assume that r in Fig. 4a would not be reset after executing the inner loop. Then we could repeat the reset of p to r without resetting r to 0, and the inner loop would have a quadratic loop bound. For the abstract program the described modification replaces the constraint \([r]^\prime \le [0]\) on \(\tau _4\) in Fig. 4b by \([r]^\prime \le [r]\). In the modified program, \([r]\) is not reset between two executions of \(\tau _2\), i.e., the reset chain \([n] \xrightarrow {\tau _0} [r] \xrightarrow {\tau _2} [p]\) is not sound. Our algorithm therefore reasons based on the reset chain \([r] \xrightarrow {\tau _2} [p]\) and obtains a quadratic bound for \(\tau _3\): \( T\mathcal {B} (\tau _3) = T\mathcal {B} (\tau _2) \times V\mathcal {B} (r) = [n] \times [n]\). I.e., if r is not reset on the outer loop this is modeled in our analysis by considering the reset chain \([r] \xrightarrow {\tau _2} [p]\) rather than the maximal reset chain \([n] \xrightarrow {\tau _0} [r] \xrightarrow {\tau _2} [p]\). Considering the maximal reset chain \([n] \xrightarrow {\tau _0} [r] \xrightarrow {\tau _2} [p]\) would be unsound in the described scenario: \(\min ( T\mathcal {B} (\tau _0), T\mathcal {B} (\tau _2)) \times [n] = [n]\) is not a valid transition bound for \(\tau _3\) if r is not reset to 0 between two executions of the inner loop. The optimal reset chains are the sound reset chains with maximal context, i.e., those reset chains that are sound and cannot be extended without becoming unsound.

3.3.1 Algorithm Based on Reset Chain Forests

In the presence of cycles in the reset graph we get infinitely many reset chains. Let us for now assume that the given program has a reset forest, i.e., that the sub-graph of the reset graph, which has nodes only in \(\mathcal {V}\), is a forest (Definition 20). Then also the complete reset graph is acyclic because \(\mathcal {A}= \mathcal {V}\cup \mathcal {C}\) and the nodes in \(\mathcal {C}\) cannot have incoming edges (Definition 20).

Definition 21

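(Bound Algorithm using Reset Chains (reset forest)) Let \(\zeta : E\rightarrow Expr (\mathcal {A})\) be a local bound mapping for \({\varDelta }\mathcal {P}\). Let \( V\mathcal {B} : \mathcal {A}\mapsto Expr (\mathcal {A})\) be as defined in Definition 19. We override the definition of \( T\mathcal {B} : E\mapsto Expr (\mathcal {A})\) in Definition 19 by stating:

\[ T\mathcal {B} (\tau ) = \zeta (\tau ) \text { if } \zeta (\tau ) \not \in \mathcal {V}, \text { else } T\mathcal {B} (\tau ) = \mathtt {Incr}\Big (\bigcup \limits _{\kappa \in \mathfrak {R}(\zeta (\tau ))} atm (\kappa )\Big ) + \sum \limits _{\kappa \in \mathfrak {R}(\zeta (\tau ))} T\mathcal {B} ( trn (\kappa )) \times \max ( V\mathcal {B} ( in (\kappa )) + c (\kappa ), 0)\]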

where \( T\mathcal {B} (\{\tau _1,\tau _2,\ldots ,\tau _n\}) = \min \nolimits _{1 \le i \le n} T\mathcal {B} (\tau _i)\) and

\(\mathtt {Incr}(\{\mathtt {a}_1,\mathtt {a}_2,\ldots ,\mathtt {a}_n\}) = \sum \nolimits _{1 \le i \le n}\mathtt {Incr}(\mathtt {a}_i)\) with \(\mathtt {Incr}(\emptyset ) = 0\)

Discussion and Example We have discussed above why we replace the term \( T\mathcal {B} ( t ) \times \max ( V\mathcal {B} (\mathtt {a}) + \mathtt {c}, 0)\) from Definition 19 by the term \( T\mathcal {B} ( trn (\kappa )) \times \max ( V\mathcal {B} ( in (\kappa )) + c (\kappa ), 0)\). We further discuss the term \(\mathtt {Incr}(\bigcup \nolimits _{\kappa \in \mathfrak {R}(\zeta (\tau ))} atm (\kappa ))\) which replaces the term \(\mathtt {Incr}(\zeta (\tau ))\) from Definition 19: consider Example xnuSimple in Fig. 1. Note that r may be incremented on \(\tau _1\) between the reset of r to 0 on \(\tau _0\) resp. \(\tau _4\) and the reset of p to r on \(\tau _{2}\). The term \(\mathtt {Incr}(\bigcup \nolimits _{\kappa \in \mathfrak {R}(\zeta (\tau ))} atm (\kappa ))\) takes care of such increments which may increase the value that finally flows into \(\zeta (\tau )\) (in the example p) when the last transition on \(\kappa \) (in the example \(\tau _{2}\)) is executed. In Table 4 the details of the bound computation are given. We get \( T\mathcal {B} (\tau _3) = [n]\), i.e., we have the bound n for the loop at \(l_3\) in the concrete program (Fig. 1a, n has type unsigned).
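The computation with reset chains can be sketched numerically as follows. The sketch rests on assumptions of our own: the optimal reset chains are supplied as precomputed input (soundness and optimality are not checked), each chain is summarized by its \( trn \), \( in \), \( c \) and \( atm \) components, bounds are evaluated for one fixed valuation of the constants, \(0 \times \infty \) is taken to be 0, cyclic calls are assumed to be absent, and the example \( DCP \) is merely modeled on the description of xnuSimple rather than copied from Fig. 1.

```python
import math
from typing import Dict, List, Set, Tuple, Union

DC = Tuple[str, str, int]    # (x, y, c) encodes x' <= y + c
Num = Union[int, float]


def mul(a: Num, b: Num) -> Num:
    # Convention of this sketch (an assumption): 0 * inf = 0.
    return 0 if a == 0 or b == 0 else a * b


def make_bounds(edges: Dict[str, Set[DC]], zeta: Dict[str, Union[str, int]],
                consts: Dict[str, int], chains: Dict[str, List[dict]]):
    """Return the functions (tb, vb); `chains[v]` lists the optimal reset chains of v."""

    def increments(v):
        return [(t, c) for t, u in edges.items() for x, a, c in u
                if x == v and a == v and c > 0]

    def resets(v):
        return [(t, a, c) for t, u in edges.items() for x, a, c in u
                if x == v and a != v]

    def incr(atoms):
        # Incr of a set of atoms is the sum of the individual Incr values.
        return sum(mul(tb(t), c) for v in atoms for t, c in increments(v))

    def vb(a):
        if a in consts:
            return consts[a]
        return incr([a]) + max((vb(b) + c for _, b, c in resets(a)), default=math.inf)

    def tb(tau):
        z = zeta[tau]
        if not isinstance(z, str):          # zeta already provides a bound
            return z
        ks = chains[z]
        atoms = set().union(*(k["atm"] for k in ks))
        return incr(atoms) + sum(
            mul(min(tb(t) for t in k["trn"]), max(vb(k["in"]) + k["c"], 0)) for k in ks)

    return tb, vb


# Hypothetical DCP modeled on the description of xnuSimple, evaluated for n = 5.
edges = {
    "t0": {("i", "n", 0), ("r", "0", 0)},
    "t1": {("i", "i", -1), ("r", "r", 1)},
    "t2": {("p", "r", 0)},
    "t3": {("p", "p", -1)},
    "t4": {("r", "0", 0)},
}
zeta = {"t0": 1, "t1": "i", "t2": "i", "t3": "p", "t4": "i"}
chains = {
    "i": [{"trn": {"t0"}, "in": "n", "c": 0, "atm": {"i"}}],
    "p": [{"trn": {"t0", "t2"}, "in": "0", "c": 0, "atm": {"r", "p"}},
          {"trn": {"t4", "t2"}, "in": "0", "c": 0, "atm": {"r", "p"}}],
}
tb, vb = make_bounds(edges, zeta, {"n": 5, "0": 0}, chains)
print(tb("t3"))   # 5, i.e., n for n = 5
```

In this run both reset chains of p contribute 0 because they start in the constant 0; the result stems entirely from the increments of r, which are bounded by the transition bound of the outer loop.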

Soundness Definition 21 for \( DCP \)s with a reset forest is a special case of Definition 23 for \( DCP \)s with a reset DAG. We prove soundness of Definition 23 in the Electronic Supplementary Material.

Complexity The nodes of a reset forest are the variables and constants of the abstract program (the elements of \(\mathcal {A}\)). Since the number of paths of a forest is polynomial in the number of nodes, the run time of our algorithm remains polynomial.

3.3.2 Algorithm Based on Reset Chain DAGs

The examples we considered so far had reset forests. (Note that the definition of a reset forest (Definition 20) only requires the sub-graph over the variables, i.e., the reset graph without the nodes that are symbolic constants, to be a forest.) In the following we generalize Definition 21 to reset DAGs. We discuss in Sect. 3.4 how we ensure that the reset graph is acyclic.

Consider the example shown in Fig. 5. The outer loop (at \(l_1\)) can be executed n times. The loop at \(l_4\) resp. transition \(\tau _6\) can be executed 2n times, e.g., by executing the program as depicted in Table 5:

The first row counts the number of iterations of the outer loop, the second row shows the transitions that are executed and in the last two rows the values of r resp. p are tracked. The execution switches between two iteration schemes of the outer loop: an odd iteration increments r twice (by executing \(\tau _2\) twice) and afterward assigns r to p by executing \(\tau _5\). We can then execute \(\tau _6\) two times. Afterward the value of r is “saved” in p for the next (even) iteration of the outer loop before r is set to 0 on \(\tau _1\). Therefore \(\tau _6\) can be executed again two times in the next, even iteration though r is not incremented on that iteration.

Consider the abstracted \( DCP \) in Fig. 5b and its reset graph in Fig. 5c. We have that \(\kappa _2 = [0] \xrightarrow {\tau _1} [r] \xrightarrow {\tau _5} [p]\) and \(\kappa _3 = [0] \xrightarrow {\tau _1} [r] \xrightarrow {\tau _7} [p]\) are two reset chains ending in \([p]\) (see Fig. 5c). Observe that both are sound, i.e., between any two executions of \(\tau _7\) resp. \(\tau _5\), \([r]\) is reset. However, \([r]\) is not necessarily reset between the execution of \(\tau _5\) and \(\tau _7\), therefore the accumulated value 2 of r is used twice to increase the local bound \([p]\) of \(\tau _6\).

I.e., since there are two paths from \([r]\) to \([p]\) in the reset graph (Fig. 5c) we have to count the increments of \([r]\) twice: once for \(\kappa _2\) and once for \(\kappa _3\). Definition 22 distinguishes between nodes that have a single resp. multiple path(s) to a given variable in the reset graph. This is used in Definition 23 for a sound handling of the latter case.

Definition 22

(\( atm _1(\kappa )\) and \( atm _2(\kappa )\)) Let \({\varDelta }\mathcal {P}(L, E,l_b,l_e)\) be a \( DCP \) over \(\mathcal {A}\). Let \(P(\mathtt {a},\mathtt {v})\) denote the set of paths from \(\mathtt {a}\) to \(\mathtt {v}\) in the reset graph of \({\varDelta }\mathcal {P}\). Let \(\mathtt {v}\in \mathcal {V}\). Let \(\kappa \) be a reset chain ending in \(\mathtt {v}\). We define \( atm _1(\kappa ) = \{\mathtt {a}\in atm (\kappa ) \mid |P(\mathtt {a},\mathtt {v})| \le 1\}\) and \( atm _2(\kappa ) = \{\mathtt {a}\in atm (\kappa ) \mid |P(\mathtt {a},\mathtt {v})| > 1\}\), where |S| denotes the number of elements in S.
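
The split in Definition 22 only needs path counts in the reset DAG. The following Python sketch illustrates it under stated assumptions: the reset graph is encoded as a list of labeled edges (a hypothetical encoding), only the three edges of Fig. 5c that are named in the text are included, and a path is taken to have at least one edge. The function names `count_paths` and `split_atoms` are illustrative and not part of the article.

```python
from functools import lru_cache


def count_paths(edges, src, dst):
    """Count paths with at least one edge from src to dst in a DAG.

    edges is a list of (from_atom, transition_label, to_atom); parallel
    edges with different labels count as different paths.
    """
    succ = {}
    for a, _label, b in edges:
        succ.setdefault(a, []).append(b)

    @lru_cache(maxsize=None)
    def paths_from(node):
        total = 0
        for nxt in succ.get(node, []):
            # One path ends here if nxt is the target; longer paths continue.
            total += (1 if nxt == dst else 0) + paths_from(nxt)
        return total

    return paths_from(src)


def split_atoms(atoms, edges, v):
    """Split a given atom set atm(kappa) into (atm_1, atm_2) for target v."""
    atm1 = {a for a in atoms if count_paths(edges, a, v) <= 1}
    atm2 = {a for a in atoms if count_paths(edges, a, v) > 1}
    return atm1, atm2


# Edges of the reset graph of Fig. 5c as far as they are named in the text.
FIG5_EDGES = [("0", "tau1", "r"), ("r", "tau5", "p"), ("r", "tau7", "p")]

if __name__ == "__main__":
    print(count_paths(FIG5_EDGES, "r", "p"))
    print(split_atoms({"r"}, FIG5_EDGES, "p"))
```

With this encoding \([r]\) has two paths to \([p]\) and therefore lands in \( atm _2\), which matches the observation that the increments of \([r]\) have to be counted once per chain.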

Definition 23

(Bound Algorithm Based on Reset Chains (reset DAG)) Let \({\varDelta }\mathcal {P}(L, E,l_b,l_e)\) be a \( DCP \) over \(\mathcal {A}\). Let \(\zeta : E\rightarrow Expr (\mathcal {A})\) be a local bound mapping for \({\varDelta }\mathcal {P}\). Let \( V\mathcal {B} : \mathcal {A}\mapsto Expr (\mathcal {A})\) be as defined in Definition 19. We override the definition of \( T\mathcal {B} : E\mapsto Expr (\mathcal {A})\) in Definition 19 by stating:

where \( T\mathcal {B} (\{\tau _1,\tau _2,\ldots ,\tau _n\}) = \min \nolimits _{1 \le i \le n} T\mathcal {B} (\tau _i)\) and

\(\mathtt {Incr}(\{\mathtt {a}_1,\mathtt {a}_2,\ldots ,\mathtt {a}_n\}) = \sum \nolimits _{1 \le i \le n}\mathtt {Incr}(\mathtt {a}_i)\) with \(\mathtt {Incr}(\emptyset ) = 0\)

Discussion If \( atm _2(\kappa ) = \emptyset \) for all reset chains \(\kappa \), Definition 23 is equal to Definition 21. This is the case for all \( DCP \)s with a reset forest (all examples in this article except Fig. 5). Definition 23 thus is a generalization of Definition 21.

Example As shown in Table 6 we get \( T\mathcal {B} (\tau _6) = [n] + [n]\) for Fig. 5 by Definition 23. I.e., we get the precise bound 2n for the loop at \(l_4\) in Fig. 5 (n has type unsigned).

Theorem 2

(Soundness of Bound Algorithm using Reset Chains) Let \({\varDelta }\mathcal {P}(L, E, l_b, l_e)\) be a well-defined and fan-in free \( DCP \) over atoms \(\mathcal {A}\). Let \(\zeta : E\mapsto Expr (\mathcal {A})\) be a local bound mapping for \({\varDelta }\mathcal {P}\). Let \( T\mathcal {B} \) and \( V\mathcal {B} \) be defined as in Definition 23. Let \(\tau \in E\) and \(\mathtt {a}\in \mathcal {A}\). If \({\varDelta }\mathcal {P}\) has a reset DAG then (1) \(\llbracket T\mathcal {B} (\tau )\rrbracket \) is a transition bound for \(\tau \) and (2) \(\llbracket V\mathcal {B} (\mathtt {a})\rrbracket \) is a variable bound for \(\mathtt {a}\).

Proof

See Electronic Supplementary Material.

Complexity A DAG can have exponentially many paths in the number of nodes. Thus there can be exponentially many reset chains in \(\mathfrak {R}(\mathtt {v})\) (exponential in the number of variables and constants of the abstract program, i.e., the norms generated during the abstraction process, see Sect. 6). However, in our experiments enumeration of (optimal) reset chains did not affect performance. (See also our discussion on scalability in Sect. 10.1.)
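
To make the worst case concrete, the following Python sketch counts paths in a hypothetical DAG in which each node has two parallel edges to its successor (as \([r]\) has to \([p]\) in Fig. 5c). The graph family and the function names are assumptions chosen for illustration; they do not stem from the benchmarks of the article.

```python
def count_paths_dag(num_nodes, edges):
    """Count paths from node 0 to node num_nodes - 1.

    Nodes are numbered in topological order, i.e., every edge (u, v)
    satisfies u < v. Parallel edges are allowed and counted separately.
    """
    paths = [0] * num_nodes
    paths[0] = 1
    # Sorting by source guarantees that paths[u] is final before it is used.
    for u, v in sorted(edges):
        paths[v] += paths[u]
    return paths[-1]


def doubled_chain(k):
    """A chain of k + 1 nodes with two parallel edges between neighbors."""
    return [(i, i + 1) for i in range(k) for _ in range(2)]


if __name__ == "__main__":
    for k in (1, 5, 10):
        print(k + 1, "nodes:", count_paths_dag(k + 1, doubled_chain(k)), "paths")
```

A chain of k + 1 nodes of this shape has \(2^k\) paths, so the number of reset chains can grow exponentially while the graph itself grows only linearly.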

3.4 Preprocessing: Transforming a Reset Graph into a Reset DAG

Consider the \( DCP \) shown in Fig. 6a. Figure 6a has a cyclic reset graph as shown in Fig. 6b. In the following we describe an algorithm which transforms Fig. 6a into Fig. 6d by renaming the program variables. Figure 6d has an acyclic reset graph (a reset DAG).

Definition 24

(Variable Flow Graph) Let \({\varDelta }\mathcal {P}(L,E,l_b,l_e)\) be a \( DCP \) over \(\mathcal {A}\). We call the graph with node set \(\mathcal {V}\times L\) and edge set

the variable flow graph.

For an example see Fig. 6c.

Let \({\varDelta }\mathcal {P}(L,E,l_b,l_e)\) be a \( DCP \). Let \(\{\mathtt {SCC}_1,\mathtt {SCC}_2,\ldots ,\mathtt {SCC}_n\}\) be the strongly connected components of its variable flow graph. For each SCC \(\mathtt {SCC}_i\) we choose a fresh variable \(\mathtt {v}_i \in \mathcal {V}\). Let \(\varsigma : \mathcal {V}\times L\mapsto \mathcal {V}\) be the mapping \(\varsigma (\mathtt {v}, l) = \mathtt {v}_i\), where i is such that \((\mathtt {v}, l) \in \mathtt {SCC}_i\). We extend \(\varsigma \) to \(\mathcal {A}\times L\mapsto \mathcal {A}\) by defining \(\varsigma (\mathtt {s},l) = \mathtt {s}\) for all \(l\in L\) and \(\mathtt {s}\in \mathcal {C}\).

We obtain \({\varDelta }\mathcal {P}^\prime (L,E^\prime ,l_b,l_e)\) from \({\varDelta }\mathcal {P}\) by setting \(E^\prime = \{l_1 \xrightarrow {u^\prime } l_2 \mid l_1 \xrightarrow {u} l_2 \in E\}\), where \(u^\prime \) is obtained from \(u\) by generating the constraint \(\varsigma (x,l_2)^\prime \le \varsigma (y,l_1) + \mathtt {c}\) from a constraint \(x^\prime \le y + \mathtt {c}\in u\).

Examples Figure 6d is obtained from Fig. 6a by applying the described transformation using the mapping \(\varsigma (x,l_1) = \varsigma (y,l_2) = z\).

Soundness Soundness of the described variable renaming is obvious if there are no two (different) variables \(\mathtt {v}_1\) and \(\mathtt {v}_2\) that are renamed to the same fresh variable at some location \(l\). This is the case if in each SCC of the variable flow graph each location \(l\in L\) appears at most once, i.e., if there is no SCC \(\mathtt {SCC}\) in the variable flow graph of the program such that there is a location \(l\in L\) and variables \(\mathtt {v}_1,\mathtt {v}_2 \in \mathcal {V}\) with \(\mathtt {v}_1 \ne \mathtt {v}_2\) and \((\mathtt {v}_1,l) \in \mathtt {SCC}\) and \((\mathtt {v}_2,l) \in \mathtt {SCC}\). In the literature, a program with this property is called stratifiable (e.g., [5]). A fan-in free \( DCP \) that is not stratifiable can be transformed into a stratifiable and fan-in free \( DCP \) by introducing appropriate case distinctions into the control flow of the program. Details are given in [30]. In the worst case, however, this transformation can cause an exponential blow-up of the number of transitions in the program (the size of the control flow graph).
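
The renaming and the stratifiability check can be sketched in Python as follows. Several points are assumptions made for illustration: a transition is encoded as a triple `(l1, l2, constraints)` where a constraint `(x, y, c)` stands for \(x^\prime \le y + \mathtt {c}\); the variable flow graph is assumed to have an edge from \((y,l_1)\) to \((x,l_2)\) for each such constraint with \(y \in \mathcal {V}\); fresh variables are named `z0`, `z1`, and so on; and the two-transition example is hypothetical rather than a copy of Fig. 6a.

```python
def sccs(nodes, succ):
    """Tarjan's algorithm: strongly connected components as a list of sets."""
    index, low, on_stack, stack, out = {}, {}, set(), [], []

    def visit(n):
        index[n] = low[n] = len(index)
        stack.append(n)
        on_stack.add(n)
        for m in succ.get(n, ()):
            if m not in index:
                visit(m)
                low[n] = min(low[n], low[m])
            elif m in on_stack:
                low[n] = min(low[n], index[m])
        if low[n] == index[n]:  # n is the root of a component
            comp = set()
            while True:
                m = stack.pop()
                on_stack.discard(m)
                comp.add(m)
                if m == n:
                    break
            out.append(comp)

    for n in nodes:
        if n not in index:
            visit(n)
    return out


def rename_variables(variables, locations, transitions):
    """Return (sigma, renamed transitions); raise if not stratifiable."""
    nodes = [(v, l) for v in sorted(variables) for l in locations]
    succ = {}
    for l1, l2, constraints in transitions:
        for x, y, _c in constraints:
            if y in variables:  # constants are not nodes of the flow graph
                succ.setdefault((y, l1), []).append((x, l2))
    sigma = {}
    for i, comp in enumerate(sccs(nodes, succ)):
        locs = [l for _v, l in comp]
        if len(locs) != len(set(locs)):
            raise ValueError("DCP is not stratifiable")
        for node in comp:
            sigma[node] = f"z{i}"  # one fresh variable per SCC

    def s(atom, loc):
        return sigma.get((atom, loc), atom)  # constants map to themselves

    renamed = [(l1, l2, [(s(x, l2), s(y, l1), c) for x, y, c in cs])
               for l1, l2, cs in transitions]
    return sigma, renamed


# Hypothetical DCP: y' <= x on l1 -> l2 and x' <= y - 1 on l2 -> l1.
EXAMPLE = [("l1", "l2", [("y", "x", 0)]), ("l2", "l1", [("x", "y", -1)])]

if __name__ == "__main__":
    sigma, renamed = rename_variables({"x", "y"}, ["l1", "l2"], EXAMPLE)
    print(sigma[("x", "l1")] == sigma[("y", "l2")])
    print(renamed)
```

In the example, \((x,l_1)\) and \((y,l_2)\) form one SCC and are mapped to the same fresh variable, analogous to \(\varsigma (x,l_1) = \varsigma (y,l_2) = z\) above.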

4 Finding Local Bounds

In this section we describe our algorithm for finding local bounds.

Intuition Let \(\tau = {l_1 \xrightarrow {u} l_2} \in E\) and \(\mathtt {v}\in \mathcal {V}\). Clearly, \(\mathtt {v}\) is a local bound for \(\tau \) if \(\mathtt {v}\) decreases when executing \(\tau \), i.e., if \({\mathtt {v}^\prime \le \mathtt {v}+ \mathtt {c}} \in u\) for some \(\mathtt {c}< 0\). Moreover, \(\mathtt {v}\) is a local bound for \(\tau \), if every time \(\tau \) is executed also some other transition \( t \in E\) is executed and \(\mathtt {v}\) is a local bound for \( t \). This is, e.g., the case if \( t \) is always executed either before each execution of \(\tau \) or after each execution of \(\tau \).

Algorithm The above intuition can be turned into a simple three-step algorithm. Let \({\varDelta }\mathcal {P}(L,E,l_b,l_e)\) be a \( DCP \). (1) We set \(\zeta (\tau ) = 1\) for all transitions \(\tau \) that do not belong to a strongly connected component (SCC) of \({\varDelta }\mathcal {P}\). (2) Let \(\mathtt {v}\in \mathcal {V}\). We define \({\xi }(\mathtt {v}) \subseteq E\) to be the set of all transitions \(\tau = {l_1 \xrightarrow {u} l_2} \in E\) such that \({\mathtt {v}^\prime \le \mathtt {v}+ \mathtt {c}} \in u\) for some \(\mathtt {c}< 0\). For all \(\tau \in {\xi }(\mathtt {v})\) we set \(\zeta (\tau ) = \mathtt {v}\). (3) Let \(\mathtt {v}\in \mathcal {V}\) and \(\tau \in E\). Assume \(\tau \) was not yet assigned a local bound by (1) or (2). We set \(\zeta (\tau ) = \mathtt {v}\) if \(\tau \) does not belong to a strongly connected component (SCC) of the directed graph \((L,E^\prime )\) where \(E^\prime = E{\setminus }{\xi }(\mathtt {v})\) (the control flow graph of \({\varDelta }\mathcal {P}\) without the transitions in \({\xi }(\mathtt {v})\)).

If there are \(\mathtt {v}_1 \ne \mathtt {v}_2\) s.t. \(\tau \in {\xi }(\mathtt {v}_1) \cap {\xi }(\mathtt {v}_2)\) then \(\zeta (\tau )\) is assigned either \(\mathtt {v}_1\) or \(\mathtt {v}_2\) non-deterministically. An alternative way of handling this case is as follows: we generate two local bound mappings, \(\zeta _1\) and \(\zeta _2\), where \(\zeta _1(\tau ) = \mathtt {v}_1\) and \(\zeta _2(\tau ) = \mathtt {v}_2\). This way we can systematically enumerate all possible choices. We then apply our bound algorithm once based on \(\zeta _1\), once based on \(\zeta _2\), etc., and finally take the minimum over all computed bounds. In our implementation, however, we follow the aforementioned greedy approach based on non-deterministic choice.

Discussion on Soundness Soundness of Steps (1) and (2) is obvious. We discuss soundness of Step (3): let \(\tau \in E\). If \(\tau \) does not belong to an SCC of \((L,E{\setminus }{\xi }(\mathtt {v}))\) we have that some transition in \({\xi }(\mathtt {v})\) (which decreases \(\mathtt {v}\)) has to be executed in between any two executions of \(\tau \). It remains to ensure that there is a decrease of \(\mathtt {v}\) also for the last execution of \(\tau \): for special cases this is unfortunately not the case. Consider Fig. 8b (Sect. 5). The above stated algorithm sets \(\zeta (\tau _1) = [x]\). However, \([x]\) is not a local bound for \(\tau _1\) of Fig. 8b because there is no decrease of \([x]\) for the last execution of \(\tau _1\) (before executing \(\tau _3\)).

It is straightforward to ensure soundness of the algorithm: adding an edge from \(l_e\) to \(l_b\) forces the algorithm to take the last execution of a transition into account. I.e., we set \(E^\prime = (E\cup \{l_e \xrightarrow {\emptyset } l_b\}){\setminus }{\xi }(\mathtt {v})\). Now our algorithm fails to find a local bound for \(\tau _1\) of Fig. 8b, which is sound. We discuss how we handle the example in Fig. 8 in Sect. 4.1.

Complexity Steps (1) and (2) can be implemented in linear time. Step (3): for each \(\mathtt {v}\in \mathcal {V}\) we need to compute the SCCs of \((L,E{\setminus }{\xi }(\mathtt {v}))\). It is well known that SCCs can be computed in linear time (linear in the number of edges and nodes). Since we need to perform one SCC computation per variable, Step (3) is quadratic.
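
The three steps, including the additional edge from \(l_e\) to \(l_b\), can be sketched in Python as follows. This is a minimal illustration under assumptions, not the implementation evaluated in the article: transitions are given as a dictionary from a name to `(l1, l2, constraints)` with a constraint `(x, y, c)` standing for \(x^\prime \le y + \mathtt {c}\); membership in an SCC is tested by a plain reachability search instead of a linear-time SCC computation; ties are resolved greedily in favor of the first choice; and both example programs are hypothetical.

```python
def reaches(edges, src, dst):
    """True if dst is reachable from src (src == dst counts as reachable)."""
    seen, todo = set(), [src]
    while todo:
        n = todo.pop()
        if n == dst:
            return True
        if n in seen:
            continue
        seen.add(n)
        todo.extend(b for a, b in edges if a == n)
    return False


def local_bounds(transitions, variables, l_b, l_e, add_back_edge=True):
    """Greedy local bound mapping zeta; transitions without a bound are omitted."""
    cfg = [(l1, l2) for l1, l2, _cs in transitions.values()]
    zeta = {}
    # Step (1): a transition l1 -> l2 lies on a cycle iff l2 reaches l1.
    for name, (l1, l2, _cs) in transitions.items():
        if not reaches(cfg, l2, l1):
            zeta[name] = 1
    # Step (2): xi(v) collects the transitions with v' <= v + c and c < 0.
    xi = {v: {name for name, (_l1, _l2, cs) in transitions.items()
              if any(x == v and y == v and c < 0 for x, y, c in cs)}
          for v in variables}
    for v in sorted(variables):
        for name in xi[v]:
            zeta.setdefault(name, v)  # greedy: keep the first choice
    # Step (3): remove xi(v) and test whether tau still lies on a cycle.
    for v in sorted(variables):
        rest = [(l1, l2) for name, (l1, l2, _cs) in transitions.items()
                if name not in xi[v]]
        if add_back_edge:
            rest.append((l_e, l_b))  # accounts for the last execution of tau
        for name, (l1, l2, _cs) in transitions.items():
            if name not in zeta and not reaches(rest, l2, l1):
                zeta[name] = v
    return zeta


# Hypothetical loop: tau1 decreases x, tau2 closes the loop, tau3 exits at l1.
LOOP = {"tau0": ("lb", "l1", [("x", "n", 0)]),
        "tau1": ("l1", "l2", [("x", "x", -1)]),
        "tau2": ("l2", "l1", [("x", "x", 0)]),
        "tau3": ("l1", "le", [])}

# Hypothetical variant that exits after tau1, before the decrease on tau2.
EARLY_EXIT = {"tau0": ("lb", "l1", [("x", "n", 0)]),
              "tau1": ("l1", "l2", [("x", "x", 0)]),
              "tau2": ("l2", "l1", [("x", "x", -1)]),
              "tau3": ("l2", "le", [])}

if __name__ == "__main__":
    print(local_bounds(LOOP, {"x"}, "lb", "le"))
    print(local_bounds(EARLY_EXIT, {"x"}, "lb", "le", add_back_edge=False))
    print(local_bounds(EARLY_EXIT, {"x"}, "lb", "le"))
```

In the second program the variant without the back edge assigns x to `tau1` although `tau1` can be executed once more than `tau2`; with the back edge no local bound is reported for `tau1`, which mirrors the soundness discussion above.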

4.1 Generalizing Local Bounds to Sets of Local Bounds

Consider the example in Fig. 7. In Fig. 7b the \( DCP \) obtained by abstraction (Sect. 6) from the program in Fig. 7a is shown. We have that x is a local bound for \(\tau _1\) and y is a local bound for \(\tau _2\). However, it is not straightforward to find a local bound for \(\tau _3\): in order to form a local bound for \(\tau _3\) we need to combine x and y into a linear combination, e.g., \(2x + y\). It is unclear how to automatically come up with such expressions.

In the following we discuss a simple generalization of our algorithm by which we avoid an explicit composition of local bounds.

We generalize the local bound mapping \(\zeta : E\rightarrow Expr (\mathcal {A})\) (Definition 17) to a local bound set mapping \(\zeta : E\rightarrow 2^{ Expr (\mathcal {A})}\).

Definition 25