Abstract

We describe, in a very explicit way, a method for determining the spectra and bases of all the corresponding eigenspaces of arbitrary lifts of graphs (regular or not).

1 Introduction

For a graph \(\Gamma \) with adjacency matrix A, the spectrum of \(\Gamma \) is defined to be the spectrum of A. While the structure of a graph determines its spectrum, an important converse question in spectral graph theory is to what extent the spectrum determines the structure of a graph; see for example the classical textbooks of Biggs [2], and Cvetković, Doob, and Sachs [4]. In particular, a persisting problem is to show whether or not a given graph is completely determined by its spectrum (see van Dam and Haemers [18]), which has led to the following.

Conjecture 1.1

([18]) Almost every graph is determined by its spectrum.

The table below, showing the ratio of graphs on \(n\le 12\) vertices not determined by their spectrum (‘n-DS’) to all graphs of order n, may be taken as support for this conjecture.

| n | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 | 10 | 11 | 12 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| n-DS | 0 | 0 | 0 | 0 | 0.059 | 0.064 | 0.105 | 0.139 | 0.186 | 0.213 | 0.211 | 0.188 |

Considerable effort has thus been devoted to (partial or entire) identification of spectra of some interesting families of graphs. For instance, Godsil and Hensel [9] explicitly studied the problem of determining the spectrum of certain voltage graphs. Lovász [16] provided a formula that expresses the eigenvalues of a graph admitting a transitive group of automorphisms in terms of group characters. In the particular case of Cayley graphs (when the automorphism group contains a subgroup acting regularly on vertices), Babai [1] derived a handier formula by different methods (and, as a corollary, obtained the existence of arbitrarily many Cayley graphs with the same spectrum). In fact, Babai’s formula also applies to digraphs and arc-colored Cayley graphs. Following these findings, Dalfó, Fiol, and Širáň [6] considered a more general construction and derived a method for determining the spectrum of a regular lift of a ‘base’ (di)graph equipped with an ordinary voltage assignment, or, equivalently, the spectrum of a regular cover of a (di)graph. Recall, however, that far from all coverings are regular; a description of arbitrary graph coverings by the so-called permutation voltage assignments was given by Gross and Tucker [11].

In this paper, we generalize our previous results to arbitrary lifts of graphs (regular or not). Our method not only gives the complete spectra of lifts but also provides bases of the corresponding eigenspaces, both in a very explicit way.

Of course, as a consequence, our method also furnishes the associated characteristic polynomials. To summarize the work previously done in this direction, Kwak and Lee [14] and Kwak and Kwon [15] dealt with the cases of characteristic polynomials of lifts with voltages in Abelian or dihedral groups. Furthermore, Mizuno and Sato [17] obtained a formula for the characteristic polynomial of a regular covering with voltages in the symmetric group, whereas Feng, Kwak, and Lee [8] also solved the case of graph coverings that are not necessarily regular. In these papers, the characteristic polynomials are essentially given in terms of ‘large’ matrices (based on the results of Kwak and Lee [14], where the adjacency matrix of the lift graph was expressed in terms of Kronecker products).

Our approach, in contrast, uses relatively ‘small’ quotient-like matrices derived from the base graph to completely determine the spectrum of the lift (and eigenspace bases).

The paper is organized as follows. In the next section, we recall the notions of permutation voltage assignments on a graph together with the associated lifts, along with an equivalent description of these in terms of relative voltage assignments, and we also point out their relationship with regular coverings. In Sect. 3, we revisit some fundamental facts in representation theory of groups, and also present a new result on group representations, which could be of interest on its own. Section 4 deals with the main result, where the complete spectrum of a relative lift graph is determined, together with bases of the associated eigenspaces. Our method is illustrated by an example in Sect. 5. Finally, in the last section, we discuss the case of regular lifts of digraphs in the light of applications of group characters.

2 Lifts and permutation voltage assignments

Let \(\Gamma \) be an undirected graph (possibly with loops and multiple edges) and let n be a positive integer. As usual in algebraic and topological graph theory, we will think of every undirected edge joining vertices u and v (not excluding the case when \(u=v\)) as consisting of a pair of oppositely directed arcs; if one of them is denoted a, then the opposite one will be denoted \(a^-\). Let \(V=V(\Gamma )\) and \(X=X(\Gamma )\) be the sets of vertices and arcs of \(\Gamma \).

2.1 Permutation voltage assignments

Let G be a subgroup of the symmetric group \(\mathrm{Sym}(n)\), that is, a permutation group on the set \([n]=\{1,2, \ldots , n\}\). A permutation voltage assignment on the graph \(\Gamma \) is a mapping \(\alpha : X\rightarrow G\) with the property that \(\alpha (a^-)=(\alpha (a))^{-1}\) for every arc \(a\in X\). Thus, a permutation voltage assignment allocates a permutation of n letters to each arc of the graph, in such a way that a pair of mutually reverse arcs forming an undirected edge receives mutually inverse permutations.

The graph \(\Gamma \) together with the permutation voltage assignment \(\alpha \) determine a new graph \(\Gamma ^{\alpha }\), called the lift of \(\Gamma \), which is defined as follows. The vertex set \(V^{\alpha }\) and the arc set \(X^{\alpha }\) of the lift are simply the Cartesian products \(V\times [n]\) and \(X\times [n]\). As regards incidence in the lift, for every arc \(a\in X\) from a vertex u to a vertex v for \(u,v\in V\) (possibly, \(u=v\)) in \(\Gamma \) and for every \(i\in [n]\), there is an arc \((a,i)\in X^{\alpha }\) from the vertex \((u,i)\in V^{\alpha }\) to the vertex \((v,i\alpha (a))\in V^{\alpha }\). Note that we write the argument of a permutation to the left of the symbol of the permutation, with the composition being read from the left to the right.
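The incidence rule above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `lift_adjacency`, the encoding of a permutation as a tuple of images, and the toy base graph (a single vertex with one loop whose arcs carry a 3-cycle and its inverse) are hypothetical choices and do not come from the paper.

```python
import numpy as np

def lift_adjacency(vertices, arcs, n):
    """Adjacency matrix of the lift of a base graph.

    vertices: list of base vertex labels.
    arcs: list of (tail, head, perm) triples, one per arc; both arcs of every
          undirected edge must be listed, with mutually inverse permutations.
          perm is a tuple of length n with perm[i - 1] equal to the image of i.
    The lift vertex (u, i) is stored at index vertices.index(u) * n + (i - 1).
    """
    index = {u: t for t, u in enumerate(vertices)}
    size = len(vertices) * n
    A = np.zeros((size, size), dtype=int)
    for tail, head, perm in arcs:
        for i in range(1, n + 1):
            # The arc (a, i) runs from (tail, i) to (head, i * alpha(a)).
            A[index[tail] * n + i - 1, index[head] * n + perm[i - 1] - 1] += 1
    return A

# Hypothetical toy input: one vertex with a loop whose two arcs carry the
# 3-cycle (1 2 3) and its inverse (1 3 2).
cycle = (2, 3, 1)      # 1 -> 2, 2 -> 3, 3 -> 1
inverse = (3, 1, 2)    # 1 -> 3, 2 -> 1, 3 -> 2
A = lift_adjacency(["u"], [("u", "u", cycle), ("u", "u", inverse)], 3)
print(A)
```

In this toy case the lift is a triangle, and the matrix is symmetric because mutually reverse arcs carry mutually inverse permutations.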

If a and \(a^{-}\) are a pair of mutually reverse arcs forming an undirected edge of \(\Gamma \), then for every \(i\in [n]\) the pairs (a, i) and \((a^-,i\alpha (a))\) form an undirected edge of the lift \(\Gamma ^{\alpha }\), making the lift an undirected graph in a natural way. If \(\Gamma \) is connected, then the lift \(\Gamma ^{\alpha }\) is connected if and only if the local group at a vertex \(u\in V\) (that is, the subgroup of G generated by the voltage products on all closed walks rooted at u) is a transitive permutation group on the set [n], see Ezell [7].

2.2 Coverings and permutation voltage assignments

The mapping \(\pi :\Gamma ^{\alpha }\rightarrow \Gamma \) that is defined by erasing the second coordinate, that is, \(\pi (u,i)=u\) and \(\pi (a,i)=a\), for every \(u\in V\), \(a\in X\) and \(i\in [n]\), is a covering, in its usual meaning in algebraic topology. Since \(\pi \) consists of n-to-1 mappings \(V^{\alpha }\rightarrow V\) and \(X^{\alpha }\rightarrow X\), we speak about an n-fold covering; we note that this covering may not be regular, see, for instance, Gross and Tucker [11]. The graph \(\Gamma \) is often called the base graph of the covering.

Conversely, every n-fold covering \(\vartheta \) (regular or not) of our base graph \(\Gamma \) by some graph \({\tilde{\Gamma }}\) with vertex set \({\tilde{V}}\) and arc set \({\tilde{X}}\) is equivalent to a covering described as above. In more detail, being a covering means that \(\vartheta :{\tilde{\Gamma }} \rightarrow \Gamma \) induces n-to-1 mappings \({\tilde{V}}\rightarrow V\) and \({\tilde{X}}\rightarrow X\) with the property that for every vertex \(u\in V\) and for every vertex \({\tilde{u}}\in \vartheta ^{-1}(u)\subset {\tilde{V}}\) the set of arcs of \({\tilde{\Gamma }}\) emanating from \({\tilde{u}}\) is mapped bijectively by \(\vartheta \) onto the set of arcs of \(\Gamma \) emanating from u. Then, there is a permutation voltage assignment \(\alpha : X\rightarrow \mathrm{Sym}(n)\) inducing a covering \(\pi : \Gamma ^{\alpha }\rightarrow \Gamma \) as above, and a graph isomorphism \(\omega : {\tilde{\Gamma }}\rightarrow \Gamma ^{\alpha }\), such that \(\vartheta =\omega \pi \) (with the composition read from left to right), that is, the following diagram commutes.

$$\begin{aligned} {\tilde{\Gamma }}\ \xrightarrow {\ \omega \ }\ \Gamma ^{\alpha }\ \xrightarrow {\ \pi \ }\ \Gamma ,\qquad \vartheta =\omega \pi . \end{aligned}$$

2.3 Relative voltage assignments

Permutation voltage assignments and the corresponding lifts and covers can equivalently be described in the language of the so-called relative voltage assignments as follows. Let \(\Gamma \) be the graph considered above, K a group and L a subgroup of K of index n; we let K/L denote the set of right cosets of L in K. Furthermore, let \(\beta : X\rightarrow K\) be a mapping satisfying \(\beta (a^-)= (\beta (a))^{-1}\) for every arc \(a\in X\); in this context, one calls \(\beta \) a voltage assignment in K relative to L, or simply a relative voltage assignment. The relative lift \(\Gamma ^{\beta }\) has vertex set \(V^{\beta }= V\times K/L\) and arc set \(X^{\beta }=X\times K/L\). Incidence in the lift is given as expected: If a is an arc from a vertex u to a vertex v in \(\Gamma \), then for every right coset \(J\in K/L\) there is an arc (a, J) from the vertex (u, J) to the vertex \((v,J\beta (a))\) in \(\Gamma ^{\beta }\). We slightly abuse the notation and denote by the same symbol \(\pi \) the covering \(\Gamma ^{\beta }\rightarrow \Gamma \) given by suppressing the second coordinate.

There is a 1-to-1 correspondence between permutation and relative voltage assignments on a connected base graph. Namely, if \(\alpha \) is a permutation voltage assignment as in the first paragraph, then the corresponding relative voltage group is \(K=G\), with a subgroup L being the stabilizer in K of an arbitrary point from the set [n]. The corresponding relative assignment \(\beta \) is simply identical to \(\alpha \), but acting by right multiplication on K/L. Conversely, if \(\beta \) is a voltage assignment in a group K relative to a subgroup L of index n in K, then one may identify the set K/L with [n] in an arbitrary way, and then \(\alpha (a)\) for \(a\in X\) is the permutation of [n] induced (in this identification) by right multiplication on the set of (right) cosets K/L by \(\beta (a)\in K\).

We also note that a covering \(\Gamma ^{\alpha }\rightarrow \Gamma \), described in terms of a permutation voltage assignment \(\alpha \), is regular if and only if the group \(G = \langle \alpha (a):a\in X\rangle \) is a regular permutation group on [n]. If the covering is given by a voltage assignment \(\beta \) in a group K relative to a subgroup L, then it is equivalent to a regular covering if and only if L is a normal subgroup of K. In such a case, the covering admits a description in terms of an ordinary voltage assignment in the factor group K/L, with voltage \(L\beta (a)\) assigned to an arc \(a\in X\) with original relative voltage \(\beta (a)\). For more details about lifts and coverings, see Gross and Tucker [12].

At this point, it may be instructive to point out a relationship between ordinary and relative voltage assignments and the corresponding lifts. If \(\beta \) is a voltage assignment on our graph \(\Gamma \) in a group K relative to a subgroup L of index n, then the same \(\beta \) can also be considered to be an ordinary voltage assignment in the group K (with no reference to L or, equivalently, letting L be the trivial group). The ordinary lift of \(\Gamma \), which we distinguish by the notation \(\Gamma _0^\beta \), has vertex set \(V\times K\) and arc set \(X\times K\), and for every arc \(a\in X\) from u to v and for every \(g\in K\) there is an arc (a, g) from the vertex (u, g) to the vertex \((v,g\beta (a))\) in \(\Gamma _0^\beta \). Observe that this gives rise to two coverings: the covering \(\Gamma _0^{\beta }\rightarrow \Gamma ^\beta \) given on vertices and arcs of \(\Gamma _0^{\beta }\) by \((u,g)\mapsto (u,Lg)\) and \((a,g)\mapsto (a,Lg)\), followed by the covering \(\pi : \Gamma ^\beta \rightarrow \Gamma \) considered before and given by erasing the second coordinate. Their composition is a regular covering \(\Gamma _0^\beta \rightarrow \Gamma \) given, again, by suppressing the group coordinate.

2.4 An example

The following example is drawn from Gross and Tucker [11].

Example 2.1

Let \(\Gamma \) be the dumbbell graph with vertex set \(V=\{u,v\}\) and arc set \(X=\{a,a^-,b,b^-, c,c^-\}\), where the pairs \(\{a,a^-\}\), \(\{b,b^-\}\) and \(\{c,c^-\}\) correspond, respectively, to a loop at u, an edge joining u to v, and a loop at v. Let \(n=3\) and let \(\alpha \) be a voltage assignment on \(\Gamma \) with values in \(\mathrm{Sym}(3)\) given by \(\alpha (a)=(23)\), \(\alpha (b)=e\) (the identity element), and \(\alpha (c)=(12)\), so that the voltage group G is equal to \(\langle (12),(23)\rangle = \mathrm{Sym}(3)\).

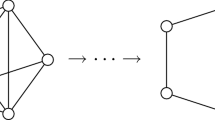

We may describe the situation in terms of a relative voltage assignment. To do so, we let K be equal to G, and for L we take the subgroup \(H=\mathrm{Stab}_G(1)= \{e,(23)\}\) of G. Furthermore, let the set \([3]=\{1,2,3\}\) be identified with G/H by \(1\mapsto H\), \(2\mapsto H(12)\), and \(3\mapsto H(13)\), and let \(\alpha \) be as above but acting by a right multiplication on the right cosets of H in G. Then, for example, in the lift \(\Gamma ^{\alpha }\) the arc (a, 2) emanates from the vertex (u, 2) and terminates at the vertex \((u,2\alpha (a))=(u,3)\) in the permutation voltage setting. Equivalently, in the relative voltage setting, with 2 and 3 identified with H(12) and H(13), the corresponding arc (a, H(12)) starting at the vertex (u, H(12)) points at the vertex \((u,H(12)\alpha (a)) =(u,H(13))\) because \(H(12)\alpha (a) = H(12)(23) = \{(13),(132)\} = H(13)\). The covering \(\pi : \Gamma ^{\alpha }\rightarrow \Gamma \) is irregular since H is not a normal subgroup of G; the situation is displayed in Fig. 1.
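The coset computation in Example 2.1 can be replayed mechanically. The following Python sketch is an illustration only: the tuple encoding of permutations and the helper names `compose`, `right_coset`, and `coset_times` are assumptions made here, not notation from the paper. It checks that, under the identification \(1\mapsto H\), \(2\mapsto H(12)\), \(3\mapsto H(13)\), the action \(i\mapsto i\alpha (a)\) on [3] agrees with right multiplication on the right cosets of H.

```python
from itertools import permutations

def compose(p, q):
    """Left-to-right product: first apply p, then q (p[i - 1] is the image of i)."""
    return tuple(q[p[i] - 1] for i in range(len(p)))

G = list(permutations((1, 2, 3)))                 # Sym(3)
e, t12, t13, t23 = (1, 2, 3), (2, 1, 3), (3, 2, 1), (1, 3, 2)
H = [g for g in G if g[0] == 1]                   # stabilizer of the point 1

def right_coset(g):
    """The right coset Hg, stored as a frozenset of permutations."""
    return frozenset(compose(h, g) for h in H)

def coset_times(J, g):
    """Right multiplication of the coset J by the group element g."""
    return frozenset(compose(x, g) for x in J)

# Identification of [3] with G/H used in Example 2.1.
coset_of_point = {1: right_coset(e), 2: right_coset(t12), 3: right_coset(t13)}

# The point action i -> i*g and the coset action J -> J*g agree for every g.
for g in G:
    for i in (1, 2, 3):
        assert coset_times(coset_of_point[i], g) == coset_of_point[g[i - 1]]

# The instance worked out in the text: H(12)(23) = H(13).
print(coset_times(coset_of_point[2], t23) == coset_of_point[3])
```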

3 Some results on group representations

In this section, we recall some basic results on representation theory that will be used in our study; see, for instance, the textbooks of Burrow [3], and James and Liebeck [13]. With the same purpose, we also give a new result on group representations, which may be of interest on its own.

We begin by stating a result well known as the Great Orthogonality Theorem. For a complex representation \(\rho \) of a group G, we let \(d_\rho =\dim (\rho )\) denote the dimension of \(\rho \); as usual, \(\rho (g)_{i,j}\) will be the (i, j)th entry of the matrix \(\rho (g)\) for \(g\in G\). We denote by \(z^*\) the complex conjugate of a complex number z. Furthermore, let \({{\,\mathrm{Irep}\,}}(G)\) denote a complete set of unitary irreducible representations of G. Let us also recall the Kronecker symbol \(\delta _{s,t}\), for s, t in any suitable domain, equal to 0 if \(s\ne t\) and to 1 if \(s=t\).

Theorem 3.1

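(The great orthogonality theorem) Let G be a finite group. Then, for any \(\rho ,\rho '\in {{\,\mathrm{Irep}\,}}(G)\), and for any indices \(1\le i,j\le d_\rho \) and \(1\le i',j'\le d_{\rho '}\),

$$\begin{aligned} \sum _{g\in G}\rho (g)_{i,j}\,\rho '(g)_{i',j'}^*=\frac{|G|}{d_\rho }\,\delta _{\rho ,\rho '}\,\delta _{i,i'}\,\delta _{j,j'}. \end{aligned}$$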
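As a numerical sanity check, the orthogonality relation among matrix entries of unitary irreducible representations (the sum over \(g\in G\) of \(\rho (g)_{i,j}\,\rho '(g)^*_{i',j'}\) equals \(|G|/d_\rho \) when \(\rho =\rho '\), \(i=i'\), \(j=j'\), and 0 otherwise) can be tested on \(\mathrm{Sym}(3)\). The sketch below is an illustration under assumptions: points are labelled 0, 1, 2, and the two-dimensional representation is realized, as one convenient choice, by restricting permutation matrices to the orthogonal complement of the all-ones vector.

```python
import numpy as np
from itertools import permutations

G = list(permutations(range(3)))      # Sym(3) on {0, 1, 2}; g[i] is the image of i

def perm_matrix(g):
    """P_g with a 1 in position (i, g[i]); then P_g P_h = P_{gh} when
    products are read from left to right, as in the text."""
    P = np.zeros((3, 3))
    for i, gi in enumerate(g):
        P[i, gi] = 1.0
    return P

def sign(g):
    inversions = sum(g[i] > g[j] for i in range(3) for j in range(i + 1, 3))
    return -1.0 if inversions % 2 else 1.0

# Orthonormal basis (columns of Q) of the complement of the all-ones vector.
Q = np.array([[1, -1, 0], [1, 1, -2]], dtype=float).T
Q = Q / np.linalg.norm(Q, axis=0)

irreps = {
    "trivial": lambda g: np.array([[1.0]]),
    "sign": lambda g: np.array([[sign(g)]]),
    "standard": lambda g: Q.T @ perm_matrix(g) @ Q,
}
dims = {name: rho(G[0]).shape[0] for name, rho in irreps.items()}

max_error = 0.0
for r1 in irreps:
    for r2 in irreps:
        for i in range(dims[r1]):
            for j in range(dims[r1]):
                for k in range(dims[r2]):
                    for l in range(dims[r2]):
                        total = sum(irreps[r1](g)[i, j] * np.conj(irreps[r2](g)[k, l])
                                    for g in G)
                        same = (r1 == r2 and i == k and j == l)
                        expected = len(G) / dims[r1] if same else 0.0
                        max_error = max(max_error, abs(total - expected))
print(max_error)   # should be at the level of rounding error
```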

The second result we will need later is also well known.

Theorem 3.2

(Orthogonality theorem for characters) Let G be a finite group and for \(g\in G\) let \(c_g\) denote the size of the conjugacy class of g in the group G. Furthermore, let \(\chi ^\rho \) be the character of an irreducible representation \(\rho \in {{\,\mathrm{Irep}\,}}(G)\).

- (i) For any \(\rho ,\rho '\in {{\,\mathrm{Irep}\,}}(G)\),

$$\begin{aligned} \sum _{g\in G}\chi ^\rho (g)\chi ^{\rho '}(g)^*=|G|\delta _{\rho ,\rho '}. \end{aligned}$$

- (ii) For any \(g,g'\in G\),

$$\begin{aligned} \sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}\chi ^\rho (g)\chi ^\rho (g')^*=\frac{|G|}{c_{g}}\delta _{g,g'} \end{aligned}$$

where \(\delta _{g,g'}=1\) if g and \(g'\) belong to the same conjugacy class of G, and \(\delta _{g,g'}=0\) otherwise.
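Both parts of Theorem 3.2 are easy to check on a small character table. The sketch below uses the standard character table of \(\mathrm{Sym}(3)\), with classes ordered as identity, transpositions, 3-cycles; the variable names are illustrative assumptions.

```python
import numpy as np

# Character table of Sym(3): rows are the trivial, sign, and two-dimensional
# characters; columns are the classes of e, the transpositions, the 3-cycles.
X = np.array([[1, 1, 1],
              [1, -1, 1],
              [2, 0, -1]], dtype=float)
class_sizes = np.array([1, 3, 2], dtype=float)
order = class_sizes.sum()                      # |G| = 6

# Part (i): summing over g in G means weighting each class by its size.
row_gram = (X * class_sizes) @ X.conj().T      # entry indexed by (rho, rho')
# Part (ii): summing over the irreducible characters for two class representatives.
col_gram = X.T @ X.conj()                      # entry indexed by (class of g, class of g')

print(np.allclose(row_gram, order * np.eye(3)))
print(np.allclose(col_gram, np.diag(order / class_sizes)))
```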

In the proof of our main result in Sect. 4, we will also need the following proposition, which we could not locate in the literature and which could be of interest on its own. For a subgroup H of a finite group G and for any \(\rho \in {{\,\mathrm{Irep}\,}}(G)\), we let \(\rho (H)=\sum _{h\in H}\rho (h)\); that is, \(\rho (H)\) is the sum of \(d_\rho \)-dimensional complex matrices \(\rho (h)\) taken over all elements h of the subgroup H. We note that, in general, it may happen that \(\rho (H)\) is the zero matrix, although, of course, all the matrices \(\rho (h)\) for \(h\in H\) are non-singular. As usual, the symbol \({{\,\mathrm{rank}\,}}(M)\) stands for the rank of a matrix M.

Proposition 3.3

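For every finite group G and every subgroup H of G of index n,

$$\begin{aligned} \sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}d_\rho \cdot {{\,\mathrm{rank}\,}}(\rho (H))=n. \end{aligned}$$ (1)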

Proof

We will freely use a few facts about irreducible representations and characters of groups, taken from James and Liebeck [13]. Let \(\rho |H\) be the representation of the subgroup H of G obtained by restricting \(\rho \) to H. If \(\sigma \) is any non-trivial irreducible constituent of the decomposition of \(\rho |H\) into irreducible representations of H, then, by elementary representation theory (a particular case of the Great Orthogonality Theorem), \(\sum _{h\, \in \, H} \sigma (h)=0\). On the other hand, for a trivial representation \(\tau \) of H, we have \(\sum _{h\, \in \, H}\tau (h)=|H|\ne 0\). It follows that \({{\,\mathrm{rank}\,}}(\sum _{h\, \in \, H}\rho (h)) = s\) if and only if the trivial representation appears exactly s times in the decomposition of \(\rho |H\) into irreducible constituents. Therefore, if the character \(\chi ^{\rho |H}\) decomposes into irreducible characters of H in the form

$$\begin{aligned} \chi ^{\rho |H}=\sum _{\sigma \, \in \, {{\,\mathrm{Irep}\,}}(H)}a_\sigma \chi ^{\sigma }, \end{aligned}$$

then, by the above argument, \(s=a_\iota \), where \(\iota =\iota _H\) denotes here the trivial representation of H. Moreover, by the first form of the Orthogonality Theorem for Characters, one has

$$\begin{aligned} a_\iota =\langle \chi ^{\rho |H},\chi ^{\iota }\rangle , \end{aligned}$$ (2)

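where \(\langle \cdot ,\cdot \rangle \) denotes the standard inner product of characters relative to H. We now invoke the last identity of the proof of Proposition 20.4 on page 213 of [13], by which

$$\begin{aligned} \sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}\chi ^\rho (e)\,\langle \chi ^{\rho |H},\psi \rangle =\frac{|G|}{|H|}\,\psi (e) \end{aligned}$$ (3)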

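for any character \(\psi \) of G; here e is the unit element of G. Letting \(\psi \) be the trivial character, using \(n=|G|/|H|\), and realizing that \(\chi ^\rho (e)=\dim (\rho )=d_\rho \), Eq. (3) reduces to

$$\begin{aligned} \sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}d_\rho \,\langle \chi ^{\rho |H},\chi ^{\iota }\rangle =n. \end{aligned}$$ (4)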

Finally, by (2) we know that \({{\,\mathrm{rank}\,}}(\rho (H))= {{\,\mathrm{rank}\,}}(\sum _{h\, \in \, H}\rho (h)) = s=\langle \chi ^{\rho |H},\chi ^{\iota } \rangle \), which in combination with (4) gives Eq. (1), as expected. \(\square \)

Note that Proposition 3.3 generalizes the well-known identity \(\sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)} (d_\rho )^2=|G|\) by letting \(H=1\), with \(\sum _{h\in H}\rho (h)\) reducing to the identity matrix of rank equal to \(d_\rho =\dim (\rho )\). In the proof, this shows up by the fact that \(\chi ^{\rho |H}\) is equal to \(d_\rho \) if H is trivial.
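Proposition 3.3 can be checked numerically in the setting of Example 2.1, where \(G=\mathrm{Sym}(3)\) and \(H=\mathrm{Stab}_G(1)\) has index 3. The following sketch is an illustration under assumptions: points are labelled 0, 1, 2 (so H is the stabilizer of 0), and the two-dimensional representation is realized on the orthogonal complement of the all-ones vector, which is one convenient choice among equivalent ones.

```python
import numpy as np
from itertools import permutations

G = list(permutations(range(3)))          # Sym(3) on {0, 1, 2}
H = [g for g in G if g[0] == 0]           # stabilizer of the first point, index 3

def perm_matrix(g):
    P = np.zeros((3, 3))
    for i, gi in enumerate(g):
        P[i, gi] = 1.0                    # row i, column "image of i"
    return P

def sign(g):
    inversions = sum(g[i] > g[j] for i in range(3) for j in range(i + 1, 3))
    return -1.0 if inversions % 2 else 1.0

Q = np.array([[1, -1, 0], [1, 1, -2]], dtype=float).T
Q = Q / np.linalg.norm(Q, axis=0)

irreps = {
    "trivial": lambda g: np.array([[1.0]]),
    "sign": lambda g: np.array([[sign(g)]]),
    "standard": lambda g: Q.T @ perm_matrix(g) @ Q,
}

ranks, total = {}, 0
for name, rho in irreps.items():
    rho_H = sum(rho(h) for h in H)        # the matrix rho(H)
    ranks[name] = int(np.linalg.matrix_rank(rho_H))
    total += rho_H.shape[0] * ranks[name] # d_rho * rank(rho(H))

print(ranks, total, len(G) // len(H))
```

Here \(\rho (H)\) vanishes for the sign representation, which illustrates the remark that \(\rho (H)\) may be the zero matrix.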

4 The spectrum of a relative lift

Let \(\Gamma \) be a connected graph on k vertices (with loops and multiple edges allowed), and let \(\alpha \) be a permutation voltage assignment on the arc set X of \(\Gamma \) in a transitive permutation group G of degree n. Letting, without loss of generality, \(H=\mathrm{Stab}_G(1)\), we will freely use the fact that \(\alpha \) is equivalent to the assignment in G relative to H given by the same mapping \(\alpha \), but it is considered to act on right cosets of G/H by right multiplication.

To the pair \((\Gamma ,\alpha )\) as above, we assign the \(k\times k\) base matrix B, a square matrix whose rows and columns are indexed by elements of the vertex set of \(\Gamma \), and whose (u, v)th entry \(B_{u,v}\) is determined as follows: If \(a_1,\ldots ,a_j\) are all the arcs of \(\Gamma \) emanating from u and terminating at v (not excluding the case \(u=v\)), then

$$\begin{aligned} B_{u,v}=\alpha (a_1)+\cdots +\alpha (a_j), \end{aligned}$$ (5)

the sum being an element of the complex group algebra \(\mathbb C(G)\); otherwise, we let \(B_{u,v}=0\).

As before, let \(\rho \in {{\,\mathrm{Irep}\,}}(G)\) be a unitary irreducible representation of G of dimension \(d_\rho \). For our graph \(\Gamma \) on k vertices, the assignment \(\alpha \) in G relative to H, and the base matrix B, we let \(\rho (B)\) be the \(d_\rho k\times d_\rho k\) matrix obtained from B by replacing every nonzero entry \(B_{u,v} = \alpha (a_1) +\cdots + \alpha (a_j) \in \mathbb C(G)\) as in (5) by the \(d_\rho \times d_\rho \) matrix \(\rho (B_{u,v})\) defined by

$$\begin{aligned} \rho (B_{u,v})=\rho (\alpha (a_1))+\cdots +\rho (\alpha (a_j)), \end{aligned}$$ (6)

and by replacing zero entries of B by all-zero \(d_\rho \times d_\rho \) matrices. We will refer to \(\rho (B)\) as the \(\rho \)-image of the base matrix B.
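The passage from the base matrix to its \(\rho \)-image amounts to summing, block by block, the matrices \(\rho (\alpha (a))\) over the arcs a from u to v. A minimal Python sketch follows, using the dumbbell graph and voltages of Example 2.1 with the two one-dimensional representations of \(\mathrm{Sym}(3)\); the function name `rho_image` and the arc-list encoding are hypothetical conventions introduced only for this illustration.

```python
import numpy as np

def rho_image(vertices, arcs, rho, d):
    """The (d*k) x (d*k) matrix rho(B): block (u, v) is the sum of
    rho(alpha(a)) over all arcs a from u to v, and zero if there are none."""
    index = {u: t for t, u in enumerate(vertices)}
    M = np.zeros((d * len(vertices), d * len(vertices)), dtype=complex)
    for tail, head, g in arcs:
        r, c = index[tail] * d, index[head] * d
        M[r:r + d, c:c + d] += rho(g)
    return M

def sign(g):
    inversions = sum(g[i] > g[j] for i in range(len(g)) for j in range(i + 1, len(g)))
    return -1.0 if inversions % 2 else 1.0

# Arcs of the dumbbell graph of Example 2.1 as (tail, head, voltage); a
# permutation is a tuple of images, and (23), (12) are their own inverses.
e, t12, t23 = (1, 2, 3), (2, 1, 3), (1, 3, 2)
arcs = [("u", "u", t23), ("u", "u", t23),     # loop arcs a and a^-
        ("u", "v", e), ("v", "u", e),         # edge arcs b and b^-
        ("v", "v", t12), ("v", "v", t12)]     # loop arcs c and c^-

M_trivial = rho_image(["u", "v"], arcs, lambda g: np.array([[1.0]]), 1)
M_sign = rho_image(["u", "v"], arcs, lambda g: np.array([[sign(g)]]), 1)
print(M_trivial.real)
print(M_sign.real)
```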

An important role in our consideration will be played by the matrix \(\rho (H)= \sum _{h\in H}\rho (h)\), that we have encountered in Proposition 3.3; let \(r_{\rho ,H}={{\,\mathrm{rank}\,}}(\rho (H))\). With this notation, we return to the \(\rho \)-image of the base matrix, and we let \({{\,\mathrm{Sp}\,}}(\rho (B))\) denote the spectrum of \(\rho (B)\), that is, the multiset of all the \(d_\rho k\) eigenvalues of the matrix \(\rho (B)\). Finally, let \(r_{\rho ,H}\cdot {{\,\mathrm{Sp}\,}}(\rho (B))\) be the multiset of \(d_\rho kr_{\rho ,H}\) values obtained by taking each of the \(d_\rho k\) entries of the spectrum \({{\,\mathrm{Sp}\,}}(\rho (B))\) exactly \(r_{\rho ,H}={{\,\mathrm{rank}\,}}(\rho (H))\) times. This, in particular, means that if \(r_{\rho ,H}={{\,\mathrm{rank}\,}}(\rho (H))=0\), the set \(r_{\rho ,H}\cdot {{\,\mathrm{Sp}\,}}(\rho (B))\) is empty.

With this terminology and notation, we are ready to state and prove our main result.

Theorem 4.1

Let \(\Gamma \) be a base graph of order k and let \(\alpha \) be a voltage assignment on \(\Gamma \) in a group G relative to a subgroup H of index n in G. Then, the multiset of the kn eigenvalues of the relative lift \(\Gamma ^{\alpha }\) is obtained by concatenation of the multisets \({{\,\mathrm{rank}\,}}(\rho (H))\cdot {{\,\mathrm{Sp}\,}}(\rho (B))\), ranging over all the irreducible representations \(\rho \in {{\,\mathrm{Irep}\,}}(G)\).
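The statement can be tested numerically on the lift of Example 2.1. The sketch below is an illustration under assumptions rather than the authors' computation: points are labelled 0, 1, 2 (so \((12)\) and \((23)\) become the tuples `(1, 0, 2)` and `(0, 2, 1)`), the cosets of H are identified with points as in Sect. 2.3, and the two-dimensional representation is realized on the orthogonal complement of the all-ones vector.

```python
import numpy as np
from itertools import permutations

G = list(permutations(range(3)))              # Sym(3) on {0, 1, 2}
e, t12, t23 = (0, 1, 2), (1, 0, 2), (0, 2, 1)
H = [g for g in G if g[0] == 0]               # stabilizer of the first point

def perm_matrix(g):
    P = np.zeros((3, 3))
    for i, gi in enumerate(g):
        P[i, gi] = 1.0                        # row i, column "image of i"
    return P

def sign(g):
    inversions = sum(g[i] > g[j] for i in range(3) for j in range(i + 1, 3))
    return -1.0 if inversions % 2 else 1.0

Q = np.array([[1, -1, 0], [1, 1, -2]], dtype=float).T
Q = Q / np.linalg.norm(Q, axis=0)
irreps = [lambda g: np.array([[1.0]]),
          lambda g: np.array([[sign(g)]]),
          lambda g: Q.T @ perm_matrix(g) @ Q]

# Dumbbell graph: vertex 0 is u, vertex 1 is v; arcs as (tail, head, voltage).
arcs = [(0, 0, t23), (0, 0, t23), (0, 1, e), (1, 0, e), (1, 1, t12), (1, 1, t12)]
k, n = 2, 3

# Adjacency matrix of the lift: arc (a, i) goes from (u, i) to (v, i*alpha(a)).
A = np.zeros((k * n, k * n))
for u, v, g in arcs:
    for i in range(n):
        A[u * n + i, v * n + g[i]] += 1
lift_spectrum = np.sort(np.linalg.eigvalsh(A))

# Concatenate rank(rho(H)) copies of Sp(rho(B)) over the irreducible representations.
predicted = []
for rho in irreps:
    d = rho(e).shape[0]
    rank = int(np.linalg.matrix_rank(sum(rho(h) for h in H)))
    M = np.zeros((d * k, d * k))
    for u, v, g in arcs:
        M[u * d:(u + 1) * d, v * d:(v + 1) * d] += rho(g)
    predicted.extend(list(np.linalg.eigvalsh(M)) * rank)
predicted = np.sort(predicted)

print(np.allclose(lift_spectrum, predicted))
```

Using `eigvalsh` relies on the matrices being symmetric here, which holds because every voltage in this example is an involution or the identity and the chosen representations are real orthogonal.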

Proof

Let \(A^{\alpha }\) be the adjacency matrix of the relative lift \(\Gamma ^{\alpha }\) with vertex set \(V^{\alpha }=V\times G/H\), where V is the vertex set of the base graph \(\Gamma \), and G/H is the set of right cosets of H in G. In more detail, \(A^{\alpha }\) is a \(kn\times kn\) matrix indexed by ordered pairs \((u,J)\in V^{\alpha }\) whose entries are given as follows. If there is no arc from u to \(u'\) for \(u,u'\in V\) in \(\Gamma \), then there is no arc from any vertex (u, J) to any vertex \((u',J')\) for \(J,J'\in G/H\) in the lift \(\Gamma ^{\alpha }\). In the case of existing adjacencies, if for some \(g\in G\), there are exactly t arcs \(a_1,\ldots ,a_t\) from some vertex u to some vertex v in \(\Gamma \) that carry the voltage \(g=\alpha (a_1)=\cdots =\alpha (a_t)\), then for every right coset \(J\in G/H\) the (u, J)(v, Jg)th entry of \(A^{\alpha }\) is equal to t.

Our aim is to show that the multiset of all the eigenvalues of the matrix \(A^{\alpha }\) is equal to a concatenation of multisets coming from very different matrices. Namely, those of the form \(\rho (B)\), where in addition each entry in their spectrum is taken \({{\,\mathrm{rank}\,}}(\rho (H))\) times, and with \(\rho \) ranging over all the irreducible representations of G. To do so, we proceed as follows.

Take a representation \(\rho \in {{\,\mathrm{Irep}\,}}(G)\) of dimension \(d=d_{\rho }\) and consider the matrix \(\rho (B)\), the \(\rho \)-image of the base matrix B of \(\Gamma \) relative to H. Let \(D=D_\rho \) be a diagonal matrix formed by the multiset \({{\,\mathrm{Sp}\,}}(\rho (B))\) of dk eigenvalues of \(\rho (B)\). Furthermore, let \(U=U_\rho \) be a \(dk\times dk\) matrix whose columns are eigenvectors of \(\rho (B)\) corresponding to the eigenvalues in the main diagonal of D, so that the three \(dk\times dk\) matrices \(\rho (B)\), D and U satisfy

$$\begin{aligned} \rho (B)\,U=U\,D. \end{aligned}$$ (7)

Assume that the base matrix B is indexed by the elements of the vertex set V of \(\Gamma \) in some fixed order, and that this indexing is lifted to the three matrices above, so that, say, for \(u\in V\) the matrix U is formed by k strips of width d, indexed by (u, i) for a particular \(u\in V\), where i ranges over the set \([d]=\{1,2,\ldots ,d\}\). For any \(u\in V\), let \(\mathbf{x}_u\) be such a \(d\times dk\) strip of U. The product \(\mathbf{x}_uD\) is a \(d\times dk\) strip taken from the right-hand side of (7), consisting of a ‘row’ of \(d\times d\) square matrices of the form \(\mathbf{x}_{u,w}D_w\), where, for each fixed vertex \(w\in V\), the square block \(\mathbf{x}_{u,w}\) corresponds to columns indexed by (w, j) for \(j\in [d]\), and \(D_w\) is the square diagonal block of D corresponding to w; one should keep in mind that these blocks depend on \(\rho \) that we omit from our notation.

Because of Eq. (7) the same square block \(\mathbf{x}_{u,w}D_w\) arises from the left-hand side of (7) as the product of the horizontal \(d\times dk\) strip of \(\rho (B)\) corresponding to the vertex u with the vertical \(dk\times d\) strip \(U_w\) of U corresponding to the vertex w. By (6), the horizontal \(d\times dk\) strip of \(\rho (B)\) consists of square \(d\times d\) blocks of the form \(\rho (B_{u,v})\) with v ranging over the set V. The vertical \(dk\times d\) strip \(U_w\) corresponding to w consists of square \(d\times d\) blocks \(\mathbf{x}_{v,w}\) mentioned above, again with v ranging over V. Using the symbol \(v\sim u\) to capture all arcs from u to v, it follows that the product of these two strips is a \(d\times d\) square block of the form \(\sum _{v\sim u} \rho (B_{u,v})\mathbf{x}_{v,w}\), and by the previous paragraph this square block is also equal to \(\mathbf{x}_{u,w}D_w\). Since \(w\in V\) was arbitrary, we conclude that, for every \(u\in V\),

$$\begin{aligned} \sum _{v\sim u}\rho (B_{u,v})\,\mathbf{x}_{v}=\mathbf{x}_{u}D. \end{aligned}$$ (8)

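Next, for every vertex (u, Hg) of the relative lift \(\Gamma ^{\alpha }\) consider the \(d\times dk\) matrix

$$\begin{aligned} \Phi _{(u,Hg)}=\rho (H)\rho (g)\,\mathbf{x}_u=\sum _{h\in H}\rho (hg)\,\mathbf{x}_u. \end{aligned}$$ (9)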

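A straightforward calculation using (8), in which \(\alpha (u,v)\) stands for the voltage of an arc from u to v, gives

$$\begin{aligned} \Phi _{(u,Hg)}D=\rho (H)\rho (g)\,\mathbf{x}_uD=\rho (H)\rho (g)\sum _{v\sim u}\rho (B_{u,v})\,\mathbf{x}_v=\sum _{v\sim u}\rho (H)\rho (g\alpha (u,v))\,\mathbf{x}_v, \end{aligned}$$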

where we emphasize again that the summation symbol \(v\sim u\) means summing, for every \(v\in V\), over all arcs from u to v, which is in accordance with \(B_{u,v}\) expressed earlier as in (5). But the last term in the chain of the equations above is, by (9), equal to \(\sum _{v\sim u} \Phi _{(v,Hg\alpha (u,v))}\), so that we have derived

$$\begin{aligned} \Phi _{(u,Hg)}D=\sum _{v\sim u}\Phi _{(v,Hg\alpha (u,v))}. \end{aligned}$$ (10)

To get insight into Eq. (10), recall that D consists of square diagonal blocks \(D_w\) for each vertex \(w\in V\); let \(\mu _{(w,i)}\) for \(i\in [d]\) be the eigenvalues of the matrix \(\rho (B)\) appearing as diagonal entries of \(D_w\). (We point out again that the eigenvalues depend on the representation \(\rho \) we have been working with, so that one should write \(D_w=D_{\rho ,w}\) and \(\mu _{(w,i)}=\mu _{(w,i)}(\rho )\), but we chose to keep things simple if no confusion is likely.) For any \(v\in V\), let \(\Phi _{(v,Hg),w}\) be the square \(d\times d\) block of \(\Phi _{(v,Hg)}\) corresponding to a vertex \(w\in V\). Equation (10) now states that, for any fixed \(i\in [d]\) and any fixed vertex \(w\in V\), the ith column of the square block \(\Phi _{(u,Hg),w}\) multiplied by \(\mu _{(w,i)}\) is equal to the sum of the ith columns of the square blocks \(\Phi _{(v,Hg\alpha (u,v)),w}\), the summation ranging over all \(v\sim u\).

The conclusion of the last paragraph means that for every pair of fixed elements \(j\in [d]\) and \((w,i)\in V\times [d]\), the kn-dimensional vector \(\mathbf{y}^H=\mathbf{y}^H_{j,(w,i)}(\rho )\), obtained by taking the (j, (w, i))th entry of every matrix \(\Phi _{(v,Hh)}\) for the kn vertices (v, Hh) of \(\Gamma ^{\alpha }\), has the property that for every \(u\in V\) the \(\mu _{(w,i)}\)-multiple of the (u, Hg)th coordinate of \(\mathbf{y}^H\) is equal to the sum of \((v,Hg\alpha (u,v))\)th coordinates of \(\mathbf{y}\) over all the adjacencies \(v\sim u\). In other words, \(\mathbf{y}^H\) can be identified with a function on the vertex set \(V^{\alpha }\) of the relative lift \(\Gamma ^{\alpha }\) with the property that, for any vertex \((u,J)\in V^{\alpha }\), the sum of the values of \(\mathbf{y}^H\) taken over all the adjacencies \(v\sim u\) is the \(\mu _{(w,i)}\)-multiple of the value of \(\mathbf{y}^H\) at (u, J). But, in the case of a nonzero vector, this is the defining property of an eigenvector of the adjacency matrix \(A^{\alpha }\) of our lift. Thus, if \(\mathbf{y}^H=\mathbf{y}^H_{j,(w,i)}(\rho )\ne \mathbf{0}\), then it is an eigenvector of the relative lift \(\Gamma ^{\alpha }\) corresponding to the eigenvalue \(\mu _{(w,i)}=\mu _{(w,i)} (\rho )\) in the spectrum of the matrix \(\rho (B)\).

The question now is whether, in this way, one can determine bases for all eigenspaces of the relative lift. The sum of their dimensions must be kn, the number of vertices of \(\Gamma ^{\alpha }\). Since the matrix \(\rho (H)\) appears as a factor on both sides of (10), one cannot produce more than \(r_{\rho ,H}= {{\,\mathrm{rank}\,}}(\rho (H))\) linearly independent vectors \(\mathbf{y}=\mathbf{y}_{j,(w,i)}(\rho )\) as described above for the eigenvalue \(\mu _{(w,i)}(\rho )\). Taking into account that w is one of the k elements of V and invoking Proposition 3.3, this means that our method furnishes at most \(k\cdot \sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)} d_{\rho } r_{\rho ,H} = kn\) linearly independent vectors \(\mathbf{y}\) as above. To prove that we obtain a multiset of eigenvalues and bases of eigenspaces with the dimension sum as expected, we only need to show that our procedure gives rise to kn independent eigenvectors of \(A^{\alpha }\).

To do so, let us begin with the regular case, which may be identified with the situation when H is the trivial group and \(n=|G|\), and let us re-examine (10) as follows. Recalling the ‘sections’ \(\mathbf{x}_{u,w}\), identity (10) may be rewritten with the help of (9) in the form

$$\begin{aligned} \rho (g)\,\mathbf{x}_{u,w}D_w=\sum _{v\sim u}\rho (g\alpha (u,v))\,\mathbf{x}_{v,w} \end{aligned}$$ (11)

for every \(u,w\in V\). And, as argued above, (11) means that for a given \(\rho \in {{\,\mathrm{Irep}\,}}(G)\), fixed row and column indices \(j,i\in [d]\) for \(d=d_\rho =\mathrm{dim}(\rho )\), and a given \(w\in V\), the collection of the (j, i)th entries of the product \(\rho (g)\mathbf{x}_{u,w}\) of the two \(d\times d\) matrices for varying \(g\in G\) and \(u\in V\) determine a k|G|-dimensional vector \(\mathbf{y} = \mathbf{y}_{j,(w,i)} = \mathbf{y}_{j,(w,i)}(\rho )\) that, if nonzero, is an eigenvector for the eigenvalue \(\mu _{(w,i)}= \mu _{(w,i)}(\rho )\).

This can be expressed in the following equivalent form. For \(j\in [d]\) let \(R_{\rho ,j}\) be the \(|G|\times d\) matrix whose row indexed by \(g\in G\) is equal to the jth row of \(\rho (g)\). We will be assuming the same indexing of the rows by elements \(g\in G\) in all \(R_{\rho ,j}\) for \(j\in [d]\). The ith column of the product \(R_{\rho ,j}{} \mathbf{x}_{u,w}\) will then account for the |G| coordinates of the vector \(\mathbf{y}\) that corresponds to a fixed \(u\in V\) but varying \(g\in G\). Furthermore, let \(S_{\rho ,j} = \mathrm{diag}(R_{\rho ,j},\ldots , R_{\rho ,j})\) be the \(k|G|\times kd\) block-diagonal matrix consisting of k diagonal blocks, each equal to \(R_{\rho ,j}\). Here we assume that the row- and column-indexing of the blocks of \(S_{\rho ,j}\) by elements of the set V is the same as used for the matrix \(U=U_\rho \) at the beginning of the proof. Last, recall the matrix \(U_w=U_{\rho ,w}\), which may also be viewed as a concatenation of k square d-dimensional sections \(\mathbf{x}_{u,w}\) into one ‘column of blocks’ for varying \(u\in V\). Then, the entire vector \(\mathbf{y}= \mathbf{y}_{j,(w,i)} (\rho )\) can be identified with the ith column of the product \(S_{\rho ,j} U_{\rho ,w}\). Since the matrix \(U = U_\rho \) is just a concatenation of the strips \(U_{\rho ,w}\) for \(w\in V\), extending the last finding over all \(w\in V\), we conclude that:

Claim 1. The collection of kd vectors \(\mathbf{y}= \mathbf{y}_{j,(w,i)}(\rho )\) for varying \(w\in V\) and \(i\in [d]\) can be identified with the collection of all the kd columns of the product \(S_{\rho ,j} U_\rho \).

Continuing in this fashion, let \(S_\rho \) be the \(|G|k\times d^2k\) block matrix of the form \(S_\rho =(S_{\rho ,1}, \ldots , S_{\rho ,j}, \ldots , S_{\rho ,d})\) for \(d=d_\rho \) obtained by concatenating the matrices \(S_{\rho ,j}\) for \(j\in [d]\). Furthermore, let \(T_\rho =\mathrm{diag}(U_\rho ,\ldots , U_\rho )\) be the \(kd^2\times kd^2\) block-diagonal matrix formed by d identical diagonal blocks equal to \(U_\rho \). By the same token as before, Claim 1 may then be extended to stating that the vectors \(\mathbf{y}= \mathbf{y}_{j,(w,i)}(\rho )\), for varying \(w\in V\) and \(i\in [d]\) and also for varying \(j\in [d]\), can be identified with the \(d^2k\) columns of the product \(S_\rho T_\rho \).

We are now ready to perform the final step of our procedure to determine all the eigenspaces of the lift. Let \(S = (S_{\rho })_{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}\) be the \(|G|k\times |G|k\) square matrix obtained by concatenating the \(|{{\,\mathrm{Irep}\,}}(G)|\) blocks \(S_\rho \) of dimension \(|G|k\times d_\rho ^2k\) for \(\rho \in {{\,\mathrm{Irep}\,}}(G)\) into one ‘thick row’. Finally, let \(T = \mathrm{diag}(T_\rho )_{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}\) be the block-diagonal \(|G|k\times |G|k\) matrix whose diagonal blocks are the \(d_\rho ^2k\times d_\rho ^2k\) matrices \(T_\rho \), each consisting in turn of \(d_\rho \) blocks of type \(d_\rho k \times d_\rho k\), all equal to \(U_\rho \); we will assume that both S and T arise from the same ordering of elements \(\rho \in {{\,\mathrm{Irep}\,}}(G)\). Note that the number of columns of S and the dimensions of T are indeed equal to |G|k since \(\sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)} d_{\rho }^2=|G|\). This allows us to extend Claim 1 and the subsequent statements as follows:

Claim 2. The collection of \(k|G| = k\sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)} d_{\rho }^2\) vectors \(\mathbf{y}= \mathbf{y}_{j,(w,i)}(\rho )\) for varying \(w\in V\), \(i,j\in [d_\rho ]\), and \(\rho \in {{\,\mathrm{Irep}\,}}(G)\), can be identified with the collection of all the k|G| columns of the product ST.

To prove that the k|G| vectors \(\mathbf{y}= \mathbf{y}_{j,(w,i)}(\rho )\) for varying \(w\in V\), \(i,j\in [d_\rho ]\) and \(\rho \in {{\,\mathrm{Irep}\,}}(G)\) are linearly independent, and hence constitute a complete set of representatives of the eigenspaces of the lift, it is sufficient to show that \(\mathrm{rank}(ST)=k|G|\). Our way of introducing T implies that it is a block-diagonal matrix consisting, for each \(\rho \in {{\,\mathrm{Irep}\,}}(G)\), of \(d_\rho \) diagonal \(d_\rho k\times d_\rho k\) blocks equal to \(U_\rho \). Recall now that \(U_\rho \) is a \(d_\rho k\times d_\rho k\) matrix whose columns are \(d_\rho k\) linearly independent eigenvectors of the matrix \(\rho (B)\) encountered at the beginning of the proof. This, in combination with our description of T, immediately implies that \(\mathrm{rank}(T)=k|G|\). Also, recall that S is a concatenation of \(k|G|\times kd_{\rho }^2\) blocks \(S_\rho \) for \(\rho \in {{\,\mathrm{Irep}\,}}(G)\), where \(S_\rho =(S_{\rho ,1}, \ldots , S_{\rho ,d_\rho })\) themselves are concatenations of the matrices \(S_{\rho ,j}=\mathrm{diag}(R_{\rho ,j},\ldots , R_{\rho ,j})\) for \(j\in [d_\rho ]\) consisting of k equal diagonal blocks, with \(R_{\rho ,j}\) being the \(|G|\times d_\rho \) matrix whose row indexed by \(g\in G\) is equal to the jth row of \(\rho (g)\). By the indexing convention introduced earlier, the diagonal blocks of \(S_{\rho ,j}\) can be assumed to be indexed by (v, v) for \(v\in V\); such a \(v\in V\) will be the position of the block \(R_{\rho ,j}\) in \(S_{\rho ,j}\).

With this preparation, we now show that any two distinct columns of S are orthogonal with respect to the Hermitian inner product (obviously, all these columns are nonzero vectors). Indeed, let us consider two distinct columns \(c=c(j,i,v,\rho )\) and \(c'=c'(j',i',v',\rho ')\) of S, obtained as extensions of the ith column of \(R_{\rho ,j}\) at position v and the \(i'\)th column of \(R_{\rho ',j'}\) at position \(v'\). If \(v\ne v'\), then, by construction of S, the product \(c\cdot c'\) is equal to zero simply because every nonzero coordinate of c meets a zero coordinate of \(c'\) and vice versa. If \(v=v'\), then one just needs to realize that elements of \(R_{\rho ,j}\) and \(R_{\rho ',j'}\) are entries \(\rho (g)_{j,i}\) and \(\rho '(g)_{j',i'}\) of the unitary matrices \(\rho (g)\) and \(\rho '(g)\) for \(g\in G\), respectively. The product \(c\cdot c'\) for \(v=v'\) thus reduces to \(\sum _{g\in G} \rho (g)_{j,i}\, \overline{\rho '(g)_{j',i'}}\) which, by the Great Orthogonality Theorem, is equal to zero whenever \(j\ne j'\), \(i\ne i'\) or \(\rho \ne \rho '\) (for real representations the conjugation may be omitted). This proves the orthogonality of the columns of S, and hence \(\mathrm{rank}(S)=k|G|\).

But then, the rank of ST is equal to k|G|, which means that the column vectors of ST give a complete set of representatives of the eigenspaces of the lift \(\Gamma ^{\alpha }\) in the regular case.
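The regular-case construction can be checked numerically on a small instance. The following Python sketch is only an illustration under stated assumptions: it uses the dumbbell graph with voltages \(g=(23)\), \(h=(12)\) in \(\mathrm{Sym}(3)\) treated in Sect. 5, takes the base matrix to have diagonal entries 2g, 2h and off-diagonal entries e (consistent with \({{\,\mathrm{tr}\,}}(B)=2g+2h\) in Example 6.2), and picks one particular real orthogonal model of the two-dimensional representation, which need not coincide with the matrices chosen in the paper. All function and variable names are hypothetical.

```python
import numpy as np

# Sym(3) as tuples p with p[i] the image of i; "p then q" (right action).
def compose(p, q):
    return tuple(q[p[i]] for i in range(3))

e, g, h = (0, 1, 2), (0, 2, 1), (1, 0, 2)          # g = (23), h = (12)
r, s, t = compose(compose(g, h), g), compose(g, h), compose(h, g)
G = [e, g, h, r, s, t]

def fixed(p):
    return sum(p[i] == i for i in range(3))

def perm_matrix(p):
    P = np.zeros((3, 3))
    for i in range(3):
        P[i, p[i]] = 1.0          # homomorphism for the product "p then q"
    return P

# Orthonormal basis of the plane orthogonal to (1,1,1): an assumed model
# of the 2-dimensional irreducible representation.
Q = np.array([[1, 1], [-1, 1], [0, -2]]) / np.sqrt([2, 6])

irreps = [
    (lambda p: np.array([[1.0]]), 1),                              # trivial
    (lambda p: np.array([[-1.0 if fixed(p) == 1 else 1.0]]), 1),   # sign
    (lambda p: Q.T @ perm_matrix(p) @ Q, 2),                       # 2-dim
]

def right_regular(x):
    M = np.zeros((6, 6))
    for a, p in enumerate(G):
        M[a, G.index(compose(p, x))] = 1.0
    return M

# Adjacency matrix of the regular lift; vertex (u, p) has index 6*u + G.index(p).
I6 = np.eye(6)
A0 = np.block([[2 * right_regular(g), I6], [I6, 2 * right_regular(h)]])

vectors, values = [], []
for rho, d in irreps:
    rhoB = np.block([[2 * rho(g), np.eye(d)], [np.eye(d), 2 * rho(h)]])
    mu, U = np.linalg.eigh(rhoB)              # columns of U: eigenvectors of rho(B)
    for col in range(2 * d):
        sections = [U[v * d:(v + 1) * d, col] for v in range(2)]
        for j in range(d):
            # coordinate (v, p) is the j-th row of rho(p) times the section at v
            y = np.array([rho(p)[j] @ sections[v] for v in range(2) for p in G])
            vectors.append(y)
            values.append(mu[col])

Y = np.column_stack(vectors)
max_residual = max(np.linalg.norm(A0 @ y - m * y) for y, m in zip(vectors, values))
rank = np.linalg.matrix_rank(Y)
print(len(vectors), rank, max_residual)
```

In this instance the 12 vectors produced are eigenvectors of the lift and have full rank, which is what Claim 2 and the rank argument assert for \(k|G|=12\).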

It remains to consider the case when H is an arbitrary subgroup of G of index n. Using the previously introduced notation and realizing that \(\rho (H)\rho (g)=\rho (Hg)\), in this general case identity (10) with the help of (9) may be rewritten in the form

for every \(u,w\in V\) and each right coset \(J\in G/H\). Recall that (12) now means that for a given \(\rho \in {{\,\mathrm{Irep}\,}}(G)\), fixed row and column indices \(j,i\in [d]\) for \(d=d_\rho \), and a given \(w\in V\), the collection of the (j, i)th entries of the product \(\rho (J)\mathbf{x}_{u,w}\) of the two \(d\times d\) matrices for varying \(J\in G/H\) and \(u\in V\) determines a kn-dimensional vector \(\mathbf{y}^H = \mathbf{y}^H_{j,(w,i)}(\rho )\) which, if nonzero, is an eigenvector of the relative lift for the eigenvalue \(\mu _{(w,i)}= \mu _{(w,i)}(\rho )\).

Bearing this in mind, it follows that one can go through the same individual steps of the procedure devised for the regular case. The only items in need of modification will be those in the description of the matrix S that refer to row coordinates indexed by elements of G; these need to be replaced by row sums indexed by the corresponding cosets of G/H. Thus, instead of \(R_{\rho ,j}\) we let \(R^H_{\rho ,j}\) be the \(n\times d_\rho \) matrix whose row with index \(J\in G/H\) is the jth row of the matrix \(\rho (J)=\sum _{g\in J}\rho (g)\). Similarly, \(S^H_{\rho ,j}=\mathrm{diag}(R^H_{\rho ,j})\) will now be the \(kn\times kd\) block-diagonal matrix with k blocks each equal to \(R^H_{\rho ,j}\), and the \(kn \times kd^2\) matrix \(S^H_\rho \) will be formed by the concatenation of the blocks \(S^H_{\rho ,j}\) taken over all \(j\in [d]\). Finally, the \(kn\times k|G|\) matrix \(S^H\) will be a concatenation of the blocks \(S^H_\rho \) taken over all \(\rho \in {{\,\mathrm{Irep}\,}}(G)\). In all these matrices, we assume the same indexing conventions as before. And, arguing exactly as in the regular case, we obtain:

Claim 3. The collection of k|G| vectors \(\mathbf{y}^H= \mathbf{y}^H_{j,(w,i)}(\rho )\) of dimension kn, for varying \(w\in V\), \(i,j\in [d_\rho ]\) and taken over all \(\rho \in {{\,\mathrm{Irep}\,}}(G)\) can be identified with the collection of all the k|G| columns of the product \(S^HT\).

To finish the argument, it remains to show that the rank of \(S^HT\) is equal to kn. From the previous part, we know that T is non-singular, and so it is sufficient to prove that \(\mathrm{rank}(S^H)=kn\). We derive this from the fact that \(\mathrm{rank}(S)=k|G|\), established earlier for the regular case. The way \(S^H\) has been defined implies that \(S^H\) is obtained from S by replacing, for every \(J\in G/H\) and every fixed \(v\in V\), the |H| rows corresponding to the row indices (g, v) for \(g\in J\) by the sum of these \(|J|=|H|\) rows. Since we have k possibilities for \(v\in V\) and n cosets, and adding all rows corresponding to a coset can be done by \(|H|-1\) summations of pairs of rows, creating \(S^H\) from S in this way can be done by performing \(kn(|H|-1)\) sums of pairs of rows. Replacing a pair of rows by their sum decreases the rank of the matrix by at most one. Since \(n|H|=|G|\), it follows that

$$\begin{aligned} \mathrm{rank}(S^H)\ge \mathrm{rank}(S)-kn(|H|-1)=k|G|-kn|H|+kn=kn, \end{aligned}$$

and, as we trivially have \(kn\ge \mathrm{rank}(S^H)\), we conclude that \(\mathrm{rank}(S^H) = kn\), as claimed. It means that the columns of \(S^HT\) indeed account for representatives of all the eigenspaces of the permutation lift \(\Gamma ^{\alpha }\). This completes the proof. \(\square \)

In the notation introduced in the above proof, we have the following consequence.

Corollary 4.2

All the eigenspaces corresponding to the permutation lift \(\Gamma ^{\alpha }\) are generated by a subset of kn independent columns of the product \(S^HT\). \(\square \)
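The passage from S to \(S^H\) by coset row sums can be illustrated on the \(\mathrm{Sym}(3)\) example with \(H=\{e,g\}\). The sketch below is a minimal check, not the authors' computation: it assumes \(k=2\), the element order e, g, h, r, s, t, and a particular real orthogonal model of the two-dimensional representation (any equivalent unitary choice would do). The names `R`, `C`, `S_H` and the helper functions are hypothetical.

```python
import numpy as np

def compose(p, q):                       # product "p then q" (right action)
    return tuple(q[p[i]] for i in range(3))

e, g, h = (0, 1, 2), (0, 2, 1), (1, 0, 2)          # g = (23), h = (12)
r, s, t = compose(compose(g, h), g), compose(g, h), compose(h, g)
G = [e, g, h, r, s, t]
k = 2                                    # number of vertices of the base graph

def fixed(p):
    return sum(p[i] == i for i in range(3))

def perm_matrix(p):
    P = np.zeros((3, 3))
    for i in range(3):
        P[i, p[i]] = 1.0
    return P

# Assumed real orthogonal model of the 2-dimensional irreducible representation.
Q = np.array([[1, 1], [-1, 1], [0, -2]]) / np.sqrt([2, 6])
irreps = [
    (lambda p: np.array([[1.0]]), 1),
    (lambda p: np.array([[-1.0 if fixed(p) == 1 else 1.0]]), 1),
    (lambda p: Q.T @ perm_matrix(p) @ Q, 2),
]

def R(rho, j):
    # |G| x d matrix whose row indexed by p is the j-th row of rho(p)
    return np.array([rho(p)[j] for p in G])

# S = (S_{rho,j}) with S_{rho,j} = diag(R_{rho,j}, ..., R_{rho,j}) (k blocks)
S = np.hstack([np.kron(np.eye(k), R(rho, j)) for rho, d in irreps for j in range(d)])

# Right cosets Hx = {x, gx} of H = {e, g}
cosets = []
for x in G:
    J = {x, compose(g, x)}
    if J not in cosets:
        cosets.append(J)

# C sums the rows of a |G|-row block over each coset; apply it in every vertex block.
C = np.array([[1.0 if p in J else 0.0 for p in G] for J in cosets])
S_H = np.kron(np.eye(k), C) @ S

gram = S.T @ S                           # real case: plain transpose suffices
print(np.linalg.matrix_rank(S), S_H.shape, np.linalg.matrix_rank(S_H))
```

Here the Gram matrix of S is diagonal, S has rank \(k|G|=12\), and \(S^H\) is a \(6\times 12\) matrix of rank \(kn=6\), in line with the rank bound used in the proof.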

5 An example

Following the method of Theorem 4.1 and keeping to the notation introduced in its proof, we will now work out the spectrum of the relative lift from Example 2.1. Referring to Fig. 1, recall that we consider the dumbbell graph \(\Gamma \) with vertex set \(V=\{u,v\}\) and arc set \(X=\{a,a^-,b,b^-, c,c^-\}\), where the pairs \(\{a,a^-\}\), \(\{b,b^-\}\) and \(\{c,c^-\}\) determine a loop at u, an edge joining u to v, and a loop at v, respectively. The permutation voltage assignment \(\alpha \) on \(\Gamma \) in the group \(\mathrm{Sym}(3)\) was given by \(\alpha (a)=(23)\), \(\alpha (b)=e\), and \(\alpha (c)=(12)\). An equivalent description is to regard \(\alpha \) as a relative voltage assignment, with values of \(\alpha \) on arcs acting on the right cosets of G/H for \(H=\mathrm{Stab}_G(1)=\{e,(23)\}\) by right multiplication. Letting \(g=(23)\) and \(h=(12)\), the base matrix B of \(\Gamma \) with entries in the group algebra \(\mathbb C(G)\) has the form

$$\begin{aligned} B=\begin{pmatrix} 2g &{} e \\ e &{} 2h \end{pmatrix}. \end{aligned}$$ (13)

The (transitive) voltage group \(G=\mathrm{Sym}(3)=\{e,g,h,r,s,t\}\) with \(r=ghg=(13)\), \(s=gh=(123)\) and \(t=hg=(132)\) has a complete set of irreducible unitary representations \({{\,\mathrm{Irep}\,}}(G)=\{\iota ,\pi ,\sigma \}\) corresponding to the symmetries of an equilateral triangle with vertices positioned at the complex points \(e^{i\frac{2\pi }{3}}\), 1, and \(e^{i\frac{4\pi }{3}}\), where

whence we obtain

Then, the images of B under these three representations are given by

with spectra \({{\,\mathrm{Sp}\,}}(\iota (B))=\{1,3\}\), \({{\,\mathrm{Sp}\,}}(\pi (B))=\{-3,-1\}\), and \({{\,\mathrm{Sp}\,}}(\sigma (B))=\{\pm \sqrt{3},\pm \sqrt{7}\}\). To determine the ‘multiplication factors’ appearing in front of the spectra in the statement of Theorem 4.1, we evaluate \(\iota (H)=\iota (e)+\iota (g)=1+1=2\), of rank 1, \(\pi (H)=\pi (e) + \pi (g) = 1-1=0\), of rank 0, and

of rank 1. By Theorem 4.1, the spectrum of the adjacency matrix \(A^{\alpha }\) of the relative lift \(\Gamma ^{\alpha }\) is obtained by concatenating the sets \(1\cdot \{1,3\}\), \(0\cdot \{-3,-1\}\), and \(1\cdot \{\pm \sqrt{3},\pm \sqrt{7}\}\), giving

$$\begin{aligned} {{\,\mathrm{Sp}\,}}(\Gamma ^{\alpha })=\{3,\sqrt{7},\sqrt{3},1,-\sqrt{3},-\sqrt{7}\}. \end{aligned}$$

Due to the complexity of determining the corresponding eigenspaces in the second part of the proof of Theorem 4.1, we illustrate the details of the process on the same example. Let us again begin by considering the regular case, that is, when H is the trivial group. Then, as explained in the proof, the eigenvectors of the ordinary lift \(\Gamma _0^{\alpha }\), shown in Fig. 2, are obtained from the matrix product ST, where, in the previously introduced notation, S is now a \(12\times 12\) matrix with block form

where

Note that, as required in the proof, \(S_{\sigma ,1}\) and \(S_{\sigma ,2}\) are formed, respectively, out of the first and second rows of the matrices \(\sigma (e)\) and \(\sigma (g)\), and so on. The matrix T in this case is a \(12\times 12\) matrix with block form \(T = \mathrm{diag}(T_\iota ,T_\pi ,T_\sigma )\), where \(T_\iota = U_\iota \), \(T_\pi =U_\pi \), and \(T_\sigma = \mathrm{diag}(U_\sigma ,U_\sigma )\), so that

Here one needs to be careful about the indexing of rows and columns to align eigenvectors with the corresponding eigenvalues. In accordance with the proof of Theorem 4.1, for each \(\rho \in \{\iota ,\pi ,\sigma \}\) of dimension \(d_\rho \), the \(2d_\rho \times 2d_\rho \) matrix \(U_\rho \) is formed by a choice of the corresponding eigenvectors of \(\rho (B)\). To proceed, we choose to list the eigenvalues \(\mu _{(w,i)}=\mu _{(w,i)}(\rho )\) for \(w\in V=\{u,v\}\), \(\rho \) as above, and \(i\in [d_{\rho }]\), in the form \(\mu _{(u,1)}(\iota )=3\), \(\mu _{(v,1)} (\iota )=1\), \(\mu _{(u,1)}(\pi )=-3\), \(\mu _{(v,1)}(\pi )=-1\), \(\mu _{(u,1)}(\sigma )=\sqrt{3}\), \(\mu _{(u,2)}(\sigma ) =\sqrt{7}\), \(\mu _{(v,1)}(\sigma )=-\sqrt{7}\), and \(\mu _{(v,2)}(\sigma )=-\sqrt{3}\), together with a choice of the corresponding eigenvectors as follows:

where, from left to right, the columns of \(U_\iota \) correspond to eigenvalues 3 and 1, the columns of \(U_\pi \) to the eigenvalues \(-3\) and \(-1\), and finally the columns of \(U_\sigma \) correspond to the eigenvalues \(\sqrt{3}, \sqrt{7},-\sqrt{7}\) and \(-\sqrt{3}\), respectively. Then, by the proof of Theorem 4.1, the product ST yields all the eigenvectors of the adjacency matrix \(A^{\alpha }\) of the regular lift, corresponding to the eigenvalues \(\pm 3\), \(\pm 1\), \(\pm \sqrt{3}\), and \(\pm \sqrt{7}\) (the last four with multiplicity 2); see also Sect. 6.

For the lift relative to H, note first that the right cosets of \(H=\{e,g\}\) are H itself, \(Hh=\{h,gh\}\) and \(Hghg=\{ghg,hg\}\). These correspond, respectively, to the rows \(\{1,2\}\), \(\{3,5\}\), and \(\{4,6\}\) out of the first 6 rows of the matrix S (and by the form of S it is clearly sufficient to restrict ourselves to its first 6 rows). Then, the new matrix \(S^H\) has blocks

As the product \(S^HT\), we now obtain the \(6\times 12\) matrix whose first four columns (the first two of which correspond to the eigenvalues 3 and 1) are

and the remaining eight columns of \(S^HT\) have the form

with columns from the left to the right corresponding to the eigenvalues \(\sqrt{3}\), \(\sqrt{7}\), \(-\sqrt{7}\), \(-\sqrt{3}\), \(\sqrt{3}\), \(\sqrt{7}\), \(-\sqrt{7}\) and \(-\sqrt{3}\), as dictated by our chosen indexation of S and T. Now, observe that the 9th and the 12th columns of \(S^HT\) are a \((-\sqrt{3})\)-multiple of the 5th and the 8th columns, respectively; similarly, the 10th and the 11th columns of \(S^HT\) are the same \((-\sqrt{3})\)-multiple of the 6th and the 7th columns. This means that the eigenspaces of the relative lift \(\Gamma ^{\alpha }\) for the 6 eigenvalues \(3,1,\sqrt{3},\sqrt{7},-\sqrt{7},-\sqrt{3}\) are all one-dimensional, and may be taken to be generated by the 6-dimensional vectors forming the 1st, 2nd, 5th, 6th, 7th and 8th columns, respectively, of the above matrix \(S^HT\). The sum of the dimensions of all the eigenspaces of \(\Gamma ^{\alpha }\) is \(6=2\cdot 3 = kn\), which is in agreement with Corollary 4.2.
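The spectrum of the relative lift obtained above can be cross-checked directly from the permutation voltages. The following sketch assumes that each loop of the dumbbell graph contributes both of its arcs (so the loop voltages appear with coefficient 2, in line with \({{\,\mathrm{tr}\,}}(B)=2g+2h\) in Example 6.2) and that the fibre over each base vertex is the set \(\{1,2,3\}\) on which \(\mathrm{Sym}(3)\) acts. The helper name `perm_matrix` is hypothetical.

```python
import numpy as np

def perm_matrix(images):
    # images[i] is the image of point i (0-indexed)
    n = len(images)
    P = np.zeros((n, n))
    for i, j in enumerate(images):
        P[i, j] = 1.0
    return P

P_a = perm_matrix([0, 2, 1])     # alpha(a) = (23), loop at u
P_c = perm_matrix([1, 0, 2])     # alpha(c) = (12), loop at v
I3 = np.eye(3)                   # alpha(b) = e, edge joining u to v

# Adjacency matrix of the relative lift on the 6 vertices (u,1..3), (v,1..3)
A = np.block([[2 * P_a, I3], [I3, 2 * P_c]])

eigenvalues = np.linalg.eigvalsh(A)          # ascending order
expected = np.sort([3, 1, 3 ** 0.5, 7 ** 0.5, -(7 ** 0.5), -(3 ** 0.5)])
min_gap = np.min(np.diff(eigenvalues))       # positive gap: all eigenvalues simple
print(eigenvalues, min_gap)
```

All six eigenvalues come out distinct, so each eigenspace is one-dimensional and the dimensions add up to \(kn=6\), as stated above.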

6 The regular case with the help of group characters

If the covering \(\Gamma ^{\alpha }\rightarrow \Gamma \) is regular, the multiset of eigenvalues of the lift can also be obtained using group characters, as was demonstrated in [6], even for regular lifts of digraphs. The eigenvalue multiplicities furnished by [6] are all algebraic (which, for digraphs, may be distinct from geometric multiplicities), and therefore the method of [6] gives no information about eigenspaces in general. To relate the techniques used in the previous sections with those of [6], we briefly sum up the essentials. This will also offer a complete argument for establishing the key equality (19) (see below) that appears, in an equivalent form, in the proof of Theorem 2.1 in [6].

The method of [6] is based on establishing a relationship between counting closed walks in a regular lift of a connected base graph \(\Gamma \) (now allowed to contain both undirected and directed edges) and characters of the voltage group G. As before, B will be the ‘voltage adjacency matrix’ for \(\Gamma \) indexed by its vertex set V, with elements \(B_{u,v}\) given as in (5). An important role in what follows is played by the diagonal elements of the powers \(B^\ell \) for \(\ell \ge 1\), evaluated on the group algebra \(\mathbb C(G)\). For \(u\in V\), every such diagonal element has the form \((B^\ell )_{u,u} = \sum _{g\in G}a^{(\ell )}_{(u,g)}g \in \mathbb C(G)\) for some integers \(a^{(\ell )}_{(u,g)}\). Next, for every \(\rho \in {{\,\mathrm{Irep}\,}}(G)\) and for the corresponding character \(\chi ^\rho \) of G we let

$$\begin{aligned} \chi ^\rho ((B^\ell )_{u,u})=\sum _{g\in G}a^{(\ell )}_{(u,g)}\,\chi ^\rho (g). \end{aligned}$$ (16)

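Note that, in this way, we only extend \(\chi ^\rho \) over particular linear combinations in \(\mathbb C(G)\). For the unit element \(e\in G\), we will derive a formula for the coefficients \(a^{(\ell )}_{(u,e)}\) from (16) by evaluating the inner product of the vectors \(\mathbf{z}=(\chi ^\rho ((B^\ell )_{u,u}))_{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}\) and \(\mathbf{z}'=(\chi ^\rho (e))_{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}\) in two ways (assuming the same indexing in both vectors). First, using the definition of \(\chi ^\rho ((B^\ell )_{u,u})\) and, in the last step, the second form of the Orthogonality Theorem for Characters from Sect. 3, one obtains

$$\begin{aligned} \mathbf{z}\cdot \mathbf{z}'=\sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}\chi ^\rho ((B^\ell )_{u,u})\,\chi ^\rho (e)=\sum _{g\in G}a^{(\ell )}_{(u,g)}\sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}\chi ^\rho (g)\,\chi ^\rho (e)=|G|\,a^{(\ell )}_{(u,e)}. \end{aligned}$$

On the other hand, realizing that \(\chi ^\rho (e)=d_\rho \) is the dimension of \(\rho \), one has

$$\begin{aligned} \mathbf{z}\cdot \mathbf{z}'=\sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}d_\rho \,\chi ^\rho ((B^\ell )_{u,u}), \end{aligned}$$

and a comparison of the two results gives

$$\begin{aligned} a^{(\ell )}_{(u,e)}=\frac{1}{|G|}\sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}d_\rho \,\chi ^\rho ((B^\ell )_{u,u}). \end{aligned}$$ (17)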

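The number of rooted closed walks of length \(\ell \) in the regular lift \(\Gamma ^\alpha \) is obviously equal to the trace \(\mathrm{tr}((A^\alpha )^\ell )\) of the \(\ell \)th power of the adjacency matrix of the lift. By Lemma 1.1 of [6], this trace (equal also to the sum of \(\ell \)th powers of entries in the spectrum of the lift) can be evaluated as follows, where we used (17) in the last step:

$$\begin{aligned} \mathrm{tr}((A^\alpha )^\ell )=|G|\sum _{u\in V}a^{(\ell )}_{(u,e)}=\sum _{u\in V}\sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}d_\rho \,\chi ^\rho ((B^\ell )_{u,u}). \end{aligned}$$ (18)

Developing now (18) with the help of elementary facts about traces and group characters, and also recalling earlier definitions such as (6) and (16), gives

$$\begin{aligned} \mathrm{tr}((A^\alpha )^\ell )=\sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}d_\rho \sum _{u\in V}\mathrm{tr}(\rho ((B^\ell )_{u,u}))=\sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}d_\rho \,\mathrm{tr}(\rho (B^\ell )), \end{aligned}$$

and realizing the meaning of the left-hand side (see also (18)) and the right-hand side (which is simply the sum of all eigenvalues of \(\rho (B^\ell )\), each taken \(d_\rho \) times), one obtains

$$\begin{aligned} \sum _{\lambda \in {{\,\mathrm{Sp}\,}}(\Gamma ^{\alpha })}\lambda ^\ell =\sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)}d_\rho \sum _{\mu \in {{\,\mathrm{Sp}\,}}(\rho (B))}\mu ^\ell . \end{aligned}$$ (19)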

Notice that, in the sums on the right-hand side of (19), we have a total of \(k\sum _{\rho \, \in \, {{\,\mathrm{Irep}\,}}(G)} d_\rho ^2= k|G|\) terms, which is the number of eigenvalues of the adjacency matrix \(A^{\alpha }\) of the digraph \(\Gamma ^{\alpha }\) obtained as a regular lift of \(\Gamma \). Then, as the above equality holds for every \(\ell =1,2,\ldots \), the multisets of eigenvalues on the left-hand and on the right-hand sides of (19) must coincide (see, for example, Gould [10]). This furnishes an alternative proof of the ‘eigenvalues’ part of Theorem 4.1 for regular lifts of digraphs.

As a consequence, in terms of group characters, the spectrum of a regular lift \(\Gamma ^{\alpha }\) of a digraph \(\Gamma \) with voltage group G is determined as follows (see [6] for a full account).

Proposition 6.1

([6]) Let \(P_{\ell }\) be the set of closed walks of length \(\ell \ge 1\) in a connected digraph \(\Gamma \) with vertex set V. For a (closed) walk \(p\in P_{\ell }\) of the form \(u_0{\rightarrow } u_1{\rightarrow } \cdots {\rightarrow } u_{\ell -1}{\rightarrow } u_{\ell }{=}u_0\) and for a \(\rho \in {{\,\mathrm{Irep}\,}}(G)\), we let \(\chi ^\rho (p)=\chi ^\rho \left( \prod _{j=0}^{\ell -1}\alpha (u_ju_{j+1})\right) \). Then, for each \(\rho \in {{\,\mathrm{Irep}\,}}(G)\), the eigenvalues \(\lambda _{u,j}\), for \(u\in V\) and \(j\in [d_\rho ]\), of the lift \(\Gamma ^{\alpha }\), are the solutions (each repeated \(d_\rho \) times) of the system

$$\begin{aligned} \sum _{u\in V}\sum _{j\in [d_\rho ]}\lambda _{u,j}^{\ell }=\sum _{p\in P_{\ell }}\chi ^\rho (p),\qquad \ell =1,\ldots ,d_\rho |V|. \end{aligned}$$ (20)

The right-hand side of (20) is equal to \(\sum _{u\in V} \chi ^\rho ((B^{\ell })_{u,u})\), a quantity we may denote \(\chi ^\rho ({{\,\mathrm{tr}\,}}(B^{\ell }))\) by extending \(\chi ^\rho \) as done in (16). In particular, when \(d_\rho =1\) for some \(\rho \), in the notation of the proof of Theorem 4.1, we simply have \(\lambda _{u,1} =\mu _{u,1}\) for every \(u\in V\). For instance, when G is Abelian, this holds for every irreducible representation of G. This case was dealt with by Miller and Ryan together with the first two and the last authors in [5].
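The quantities \(\chi ^\rho ({{\,\mathrm{tr}\,}}(B^{\ell }))\) can be computed mechanically by multiplying matrices over the group algebra. The sketch below does this for the dumbbell graph with voltages in \(\mathrm{Sym}(3)\); it assumes the base matrix with diagonal entries 2g, 2h and off-diagonal entries e, represents group-algebra elements as dictionaries from permutations to integer coefficients, and evaluates the three irreducible characters of \(\mathrm{Sym}(3)\) through fixed-point counts. All names are hypothetical.

```python
from collections import Counter

def compose(p, q):                       # product "p then q"
    return tuple(q[p[i]] for i in range(3))

E, g, h = (0, 1, 2), (0, 2, 1), (1, 0, 2)   # e, g = (23), h = (12)

def ga_mul(x, y):
    # product of two group-algebra elements given as {permutation: coefficient}
    out = Counter()
    for p, a in x.items():
        for q, b in y.items():
            out[compose(p, q)] += a * b
    return out

def mat_mul(X, Y):
    n = len(X)
    Z = [[Counter() for _ in range(n)] for _ in range(n)]
    for i in range(n):
        for j in range(n):
            for m in range(n):
                Z[i][j].update(ga_mul(X[i][m], Y[m][j]))   # Counter.update adds
    return Z

# Assumed base matrix of the dumbbell graph over the group algebra
B = [[Counter({g: 2}), Counter({E: 1})],
     [Counter({E: 1}), Counter({h: 2})]]

def fixed(p):
    return sum(p[i] == i for i in range(3))

characters = {
    "iota": lambda p: 1,
    "pi": lambda p: -1 if fixed(p) == 1 else 1,   # sign: -1 on transpositions
    "sigma": lambda p: fixed(p) - 1,              # 2-dimensional character
}

def char_of_trace(M, chi):
    # extend chi linearly over the diagonal entries of M and sum them
    return sum(c * chi(p) for u in range(len(M)) for p, c in M[u][u].items())

def power_sums(chi, max_len):
    out, P = [], B
    for _ in range(max_len):
        out.append(char_of_trace(P, chi))
        P = mat_mul(P, B)
    return out

print({name: power_sums(chi, 4) for name, chi in characters.items()})
```

For the two-dimensional character this yields the right-hand sides of the system (20) for \(\ell =1,\ldots ,4\); for the one-dimensional characters the first two values already determine the two eigenvalues.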

Example 6.2

Consider the dumbbell graph of Fig. 1 again, with regular voltages in the symmetric group \(\mathrm{Sym}(3)=\langle g, h \rangle \), and with the base matrix given by (13):

$$\begin{aligned} B=\begin{pmatrix} 2g &{} e \\ e &{} 2h \end{pmatrix}. \end{aligned}$$

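The corresponding regular lift \(\Gamma _0^{\alpha }\) is shown in Fig. 2. A set of irreducible characters for the group \(\mathrm{Sym}(3)\) can be taken from traces of the representations \(\iota ,\pi ,\sigma \) used in Sect. 5; these are shown in Table 1.

Table 1 (reconstructed from the traces of \(\iota ,\pi ,\sigma \) on the conjugacy classes of \(\mathrm{Sym}(3)\))

| | \(e\) | \(g,h,r\) | \(s,t\) |
|---|---|---|---|
| \(\chi ^\iota \) | 1 | 1 | 1 |
| \(\chi ^\pi \) | 1 | \(-1\) | 1 |
| \(\chi ^\sigma \) | 2 | 0 | \(-1\) |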

To construct the spectrum of the regular lift \(\Gamma _0^\alpha \) by Proposition 6.1, it remains to evaluate the quantities \(\chi ^\rho ({{\,\mathrm{tr}\,}}(B^\ell ))\) for our three characters and appropriate powers \(\ell \), which gives the following.

- For \(\chi ^\iota \): Since \(d_\iota =1\), the corresponding two eigenvalues of \(\Gamma _0^{\alpha }\) are

  $$\begin{aligned} \{3,1\}={{\,\mathrm{Sp}\,}}(\chi ^\iota (B)). \end{aligned}$$

- For \(\chi ^\pi \): Since \(d_\pi =1\), the two corresponding eigenvalues of \(\Gamma _0^{\alpha }\) are

  $$\begin{aligned} \{-3,-1\}={{\,\mathrm{Sp}\,}}(\chi ^\pi (B)). \end{aligned}$$

- For \(\chi ^\sigma \): Since \(d_\sigma =2\), we need to consider the traces of all the \(\ell \)th powers of B for \(\ell \in \{1,2,3,4{=}d_\sigma |V|\}\) in the group algebra \(\mathbb C(G)\), which turn out to be

  $$\begin{aligned} {{\,\mathrm{tr}\,}}(B)&=2 g + 2 h,\\ {{\,\mathrm{tr}\,}}(B^2)&=4g^2+4h^2+2e=10e,\\ {{\,\mathrm{tr}\,}}(B^3)&=8g^3+8h^3+6g+6h=14g+14h, \\ {{\,\mathrm{tr}\,}}(B^4)&= 16g^4+16h^4+16g^2+16h^2+8gh+8hg+2e=66e+8gh+8hg. \end{aligned}$$

  By Proposition 6.1 and the remark following it, the traces give the nonlinear system

  $$\begin{aligned} \lambda _{u,1}+\lambda _{u,2}+\lambda _{v,1}+\lambda _{v,2}&=\chi ^\sigma ({{\,\mathrm{tr}\,}}(B))=0, \\ \lambda _{u,1}^2+\lambda _{u,2}^2+\lambda _{v,1}^2+\lambda _{v,2}^2&=\chi ^\sigma ({{\,\mathrm{tr}\,}}(B^2))=20,\\ \lambda _{u,1}^3+\lambda _{u,2}^3+\lambda _{v,1}^3+\lambda _{v,2}^3&=\chi ^\sigma ({{\,\mathrm{tr}\,}}(B^3))=0,\\ \lambda _{u,1}^4+\lambda _{u,2}^4+\lambda _{v,1}^4+\lambda _{v,2}^4&=\chi ^\sigma ({{\,\mathrm{tr}\,}}(B^4))=116, \end{aligned}$$

  with solution \(\pm \sqrt{7}, \pm \sqrt{3}\). As these must be taken twice, the spectrum of \(\Gamma _0^{\alpha }\) is

  $$\begin{aligned} {{\,\mathrm{Sp}\,}}(\Gamma _0^{\alpha })=\{3^{(1)},\sqrt{7}^{(2)},\sqrt{3}^{(2)}, 1^{(1)},-1^{(1)},-\sqrt{3}^{(2)},-\sqrt{7}^{(2)},-3^{(1)}\}. \end{aligned}$$
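A system of power sums such as the one for \(\chi ^\sigma \) can be solved via the Girard-Newton identities (cf. Gould [10]): the power sums determine the elementary symmetric functions, and the unknowns are then the roots of the resulting monic polynomial. The following sketch applies this to the values 0, 20, 0, 116; the function name `spectrum_from_power_sums` is hypothetical, and the computation is numerical rather than symbolic.

```python
import numpy as np

def spectrum_from_power_sums(p):
    """Return the m numbers whose l-th power sums are p[l-1], l = 1..m."""
    m = len(p)
    e = [1.0]                                   # e[0] = 1
    for k in range(1, m + 1):
        # Newton's identity: k*e_k = sum_{i=1..k} (-1)^(i-1) * e_{k-i} * p_i
        acc = sum((-1) ** (i - 1) * e[k - i] * p[i - 1] for i in range(1, k + 1))
        e.append(acc / k)
    # Monic polynomial x^m - e_1 x^(m-1) + e_2 x^(m-2) - ...
    coeffs = [(-1) ** k * e[k] for k in range(m + 1)]
    return np.sort(np.roots(coeffs).real), coeffs

roots, coeffs = spectrum_from_power_sums([0, 20, 0, 116])
print(coeffs)   # coefficients of x^4 - 10 x^2 + 21 = (x^2 - 3)(x^2 - 7)
print(roots)
```

The polynomial factors as \((x^2-3)(x^2-7)\), which recovers the solution \(\pm \sqrt{3},\pm \sqrt{7}\) stated above.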

Note that, as expected, \({{\,\mathrm{Sp}\,}}(\Gamma )\subset {{\,\mathrm{Sp}\,}}(\Gamma ^{\alpha })\subset {{\,\mathrm{Sp}\,}}(\Gamma _0^{\alpha })\).

References

1. Babai, L.: Spectra of Cayley graphs. J. Combin. Theory Ser. B 27, 180–189 (1979)

2. Biggs, N.: Algebraic Graph Theory, 2nd edn. Cambridge Univ. Press, Cambridge (1993)

3. Burrow, M.: Representation Theory of Finite Groups. Dover, New York (1993)

4. Cvetković, D., Doob, M., Sachs, H.: Spectra of Graphs. Theory and Applications, 3rd edn. Johann Ambrosius Barth, Heidelberg (1995)

5. Dalfó, C., Fiol, M.A., Miller, M., Ryan, J., Širáň, J.: An algebraic approach to lifts of digraphs. Discrete Appl. Math. 269, 68–76 (2019)

6. Dalfó, C., Fiol, M.A., Širáň, J.: The spectra of lifted digraphs. J. Algebraic Combin. 50, 419–426 (2019)

7. Ezell, C.L.: Observations on the construction of covers using permutation voltage assignments. Discrete Math. 28(1), 7–20 (1979)

8. Feng, R., Kwak, J.H., Lee, J.: Characteristic polynomials of graph coverings. Bull. Austral. Math. Soc. 69, 133–136 (2004)

9. Godsil, C.D., Hensel, A.D.: Distance regular covers of the complete graph. J. Combin. Theory Ser. B 56(2), 205–238 (1992)

10. Gould, H.W.: The Girard-Waring power sum formulas for symmetric functions and Fibonacci sequences. Fibonacci Quart. 37(2), 135–140 (1999)

11. Gross, J.L., Tucker, T.W.: Generating all graph coverings by permutation voltage assignments. Discrete Math. 18, 273–283 (1977)

12. Gross, J.L., Tucker, T.W.: Topological Graph Theory. Wiley, New York (1987); Dover, New York (2001)

13. James, G., Liebeck, M.: Representations and Characters of Groups, 2nd edn. Cambridge Univ. Press, Cambridge (2001)

14. Kwak, J.H., Lee, J.: Characteristic polynomials of some graph bundles II. Linear Multilinear Algebra 32, 61–73 (1992)

15. Kwak, J.H., Kwon, Y.S.: Characteristic polynomials of graph bundles having voltages in a dihedral group. Linear Algebra Appl. 336, 99–118 (2001)

16. Lovász, L.: Spectra of graphs with transitive groups. Period. Math. Hungar. 6, 191–196 (1975)

17. Mizuno, H., Sato, I.: Characteristic polynomials of some graph coverings. Discrete Math. 142, 295–298 (1995)

18. van Dam, E.R., Haemers, W.H.: Developments on spectral characterizations of graphs. Discrete Math. 309, 576–586 (2009)

Acknowledgements

The research of C. Dalfó and M. A. Fiol has been partially supported by AGAUR from the Catalan Government under project 2017SGR1087 and by MICINN from the Spanish Government under project PGC2018-095471-B-I00. The research of C. Dalfó has also been supported by MICINN from the Spanish Government under project MTM2017-83271-R. The authors S. Pavlíková and J. Širáň acknowledge support from the APVV Research Grants 15-0220 and 17-0428, and the VEGA Research Grants 1/0142/17 and 1/0238/19.

Open Access

This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

Funding

Open Access funding provided thanks to the CRUE-CSIC agreement with Springer Nature.


The first author has received funding from the European Union’s Horizon 2020 research and innovation programme under the Marie Skłodowska-Curie Grant Agreement No. 734922.


About this article

Cite this article

Dalfó, C., Fiol, M.A., Pavlíková, S. et al. Spectra and eigenspaces of arbitrary lifts of graphs. J Algebr Comb 54, 651–672 (2021). https://doi.org/10.1007/s10801-021-01049-3
