Abstract

An involuntary international experiment, in which the entire student population was switched to digital remote learning as part of the measures to stop COVID-19, put the paradigm of "anytime, anywhere learning" to the test. Online survey responses were obtained from 281 preservice primary and subject teachers. Using Structural Equation Modelling, the connections between the constructs quality of personal digital technology, satisfaction, health, well-being, motivation, and physical activity were examined through their path coefficients. Problems with the quality of personal digital technology had a moderate influence on all constructs except motivation. Satisfaction influenced all constructs, most strongly well-being and health. When the responses of students in the bottom and top thirds by quality of personal digital technology were compared, students who lacked appropriate technology and workspace were found to be less satisfied and to suffer more. This is reflected in a higher incidence of problems related to health, well-being, and physical activity, along with lower motivation. At least for the technologically deprived, the paradigm of "anytime, anywhere learning" is a myth. The study highlights the need for educational institutions to provide adequate technology and workspaces for all students in order to support their well-being and motivation during remote learning.


1 Introduction

The social distancing triggered by COVID-19 has changed many established routines in activities associated with human gatherings, including education (Carrillo & Flores, 2020), by moving them from a public space to a private, individual space (De' et al., 2020). According to the United Nations (2020, p. 5), "By mid-April 2020, 94 percent of learners worldwide were affected by the pandemic, representing 1.58 billion children and youth, from pre-primary to higher education, in 200 countries." One possible solution for reducing the spread of the virus and the damage it caused was to move traditional teaching to an online virtual environment. This move amounted to a vast, unplanned "international experiment" (Thomas & Rogers, 2020) involving virtually the entire student population, which allows many postulates of online distance and mobile education to be tested (Bell, 2011; Ovčjak et al., 2015; Siemens, 2004), including the mantra of "anytime-anywhere learning" (Martin et al., 2013; Olcott, 2014). The shift of instruction from "brick-and-mortar" institutions to the home was accompanied by a surge in technology-based solutions, and the possession of digital technology became ubiquitous in teaching and learning. Because the transition was involuntary and happened within a short time, it was not coordinated with best practices and principles for online instruction (Crawford et al., 2020). Thus, it had a significant impact on struggling students (Gewin, 2020).

The terms that best describe this situation are Forced Remote Education and Forced Online Distance Education (FODE) (Dolenc et al., 2021). Because of a shortage of time and uncertainty about the duration of the measures, educators pragmatically transferred the content of their courses to an online form, using the tools they already had at hand and making adjustments as the courses progressed. The problem with this strategy is that neither the courses nor the technology (e.g. smartphones) were designed for online instruction (Chittaro, 2006), so unintended and unpredictable side effects may occur (Dolenc et al., 2021; Brainard & Watson, 2020) in addition to those that are already familiar (Mseleku, 2020). Because FODE is only possible with heavy use of digital technologies in distributed environments, educational technology in computer-mediated communication cannot be considered neutral. According to Zhao et al. (2004, p. 23), "perceiving technology as passive, neutral, and universal is inherently problematic in itself". Another factor that neither instructors nor students could control was the daily addition and replacement of functionalities in video-conferencing and online learning environments by their developers.

Consequently, sending students home was not just a solution to an epidemiological problem; it also created new inequalities or made existing ones more visible (Czerepaniak-Walczak, 2020). The main reason for the emergent inequalities was the dispersion of education from faculties to homes. Inside university buildings and classrooms, all students had more or less equal working conditions, but this was not so in their homes. For better or worse, some of these inequalities relate to the quality of digital technology, the accessibility of online platforms, and the home environment, hereafter referred to collectively as Personal Digital Technology (PDT), which is the topic of our research interest.

Because of the novelty of the situation, there are few sources available about the specifics of preservice teacher education going online (Kim, 2020). However, from the times before COVID-19 (e.g., Ball et al., 2008; Cochran et al., 1993), we know that the education of preservice teachers is based on three pillars. They should learn (1) the content of the discipline(s) they are going to teach; (2) pedagogy as an amalgam of psychology, theoretical pedagogy, didactics, and methodology (Shulman, 1986), with and without the use of technology (Mishra & Koehler, 2006); and (3) clinical practice, i.e., a practicum in both simulated (microteaching) and actual working conditions with primary and secondary school students (Ploj Virtič et al., 2021b). This last element, together with practical work in laboratories, was the most negatively affected by the lockdown, which meant transforming "the curriculum from a traditional pre-planned and structured syllabus to one that is more responsive, dynamic, and malleable" (Hadar et al., 2020).

The use of stationary and mobile digital technologies in preservice teacher education can be regarded as complex, with some aspects not present in the education of professionals in many other disciplines (Haydn, 2014; Howard et al., 2021). Preservice teachers should not be considered only as passive receivers of information provided via information technology and as users of tools for responding to tasks set by instructors, but also as creators of multimedia materials and administrators of learning platforms for their practice in working with primary and secondary school students (Bullock, 2013). Additionally, preservice science and technology teachers should learn how to use and apply hardware and software, such as data loggers and construction software, in hands-on laboratories and workshops at the schools where they will teach (Rusek et al., 2017). Therefore, preservice teachers should be regarded as (1) receivers and interpreters of the information offered by instructors (media); (2) participants in communication in virtual classrooms; (3) respondents to tasks; (4) creators of multimedia materials to be used in their preservice and in-service teaching practice; and (5) administrators of online learning platforms (e.g. video-conferencing systems, Moodle, etc.) in both synchronous and asynchronous formats. Accordingly, prospective teachers need to learn how best to use the capabilities of digital and communication technology in relation to the content and context of the subject(s) they will teach (Mishra & Koehler, 2006), and, not least, how to teach their students to use digital technology in both offline and online instruction.

Many studies have examined predictors thought to influence the prospective use of technology in teaching practice, most often based on the Technology Acceptance Model (Davis, 1989), UTAUT (Venkatesh et al., 2003), and their derivatives (e.g., Šumak & Šorgo, 2016; Šumak et al., 2017). However, given the novelty of the situation, at the time of study preparation we were unable to locate a study about the influence of the quality of personal digital technology, internet connection, and workplace on health, well-being, motivation, physical activity, and satisfaction with online learning among preservice primary teachers (PPT) and preservice subject teachers (PST) during the COVID-19 lockdown; to the best of our knowledge, no such study exists. Choosing the factors (latent variables) to use as predictors was difficult, as the researchers had to balance selection from an almost unlimited number of potential factors against the length of the instrument, in order to prevent attrition (Rolstad et al., 2011). Therefore, the authors took a pragmatic approach and relied on their own experience and observations, which had not yet been supported by research at the time the study was prepared.

As a side effect, the lockdown inevitably led to an upsurge of FODE in classrooms, even among teachers who had never imagined using video-conferencing and online learning environments. Confinement at home with an assortment of technology can be regarded as immersion (swim or drown) in first-hand experience with online conferencing systems, allowing students to test all of their possibilities and side effects (Jandrić et al., 2021). As educators of preservice teachers, we observed that some students struggled with attendance and with fulfilling their obligations simply because of problems with technology, so we decided to explore the connections (correlations) between the quality of personal digital technology and (1) satisfaction with video-conferencing; (2) health; (3) well-being; (4) motivation; and (5) physical activity.

The above constructs (reviewed and described in more detail in the Methodology section) were included in the hypothetical model as informed guesses based on the literature review and pre-pandemic findings. The inclusion of the construct 'quality of personal digital technology' was based on recognising the importance of the digital divide for education at the personal and public levels (e.g., Wei & Hindman, 2011). 'Satisfaction with video-conferencing' was included as a special case of satisfaction, a key construct in the theory of expectation confirmation and an indicator of intention to use a technology (Bhattacherjee, 2001). At the onset of the pandemic, it was noted that physical activity among the student population declined because of the lockdown, which was of concern because the association between physical activity and health benefits is well established (e.g., Warburton et al., 2006). Subjective 'well-being' can be viewed as “the feelings, experiences, and sentiments arising from what people do and how they think” (Dolan et al., 2017, p. 3). It was expected that subjective well-being would change due to the novel online educational experiences. The same holds for motivation, which is one of the most important factors for doing or not doing something in education (e.g., Lin et al., 2017). However, as with almost any study, it is possible that we missed some important variables and constructs, which can be considered a limitation of the study (Dearden et al., 2011).

1.1 Aims and Scope

In this study, we apply and build upon various theoretical models to understand how the quality of students' personal digital technology (PDT) and satisfaction with online learning platforms impact their health, well-being, motivation, and physical activity. Our research is grounded in the Continuance Intention Models of technology use (Bhattacherjee, 2001) and the Expectation Confirmation Theory (Oliver, 1980), which provide a foundation for understanding how users' experiences and satisfaction with technology influence their subsequent engagement. Additionally, we draw on Flow Theory (Csikszentmihalyi & Csikzentmihaly, 1990) to understand how seamless engagement with technology contributes to satisfaction. Our study extends these theories to the context of online learning, examining how students' home technology and satisfaction with learning platforms influence a range of personal and educational outcomes.

The technological factors influencing students' learning outcomes can be divided into two main groups. The first group comprises factors over which students have little or no influence and which depend on the provider of a program or lecture, e.g. the choice of video-conferencing system, the number of participants in a group, the weekly schedule, and the content and format of the video lectures. The second group comprises student-dependent factors, over which lecture providers have little or no influence, for example student-owned equipment, network accessibility outside faculty buildings or campuses, and working conditions at home, such as having one's own study room. Differences based on personal characteristics, traits, and socio-demographic factors are not part of this study, which by no means diminishes their importance.

We, therefore, began our research with five basic research questions:

RQ1: Do the means of home technology, quality of access, and working conditions at home (PDT) correlate with satisfaction while working in video-conferencing systems?

RQ2: Do the means of home technology, quality of access, and working conditions at home (PDT) correlate with health, well-being, motivation, and physical activity?

RQ3: Does satisfaction with working in video-conferencing systems correlate with health, well-being, motivation, and physical activity?

RQ4: Are there differences in all of the above between PPT and PST?

RQ5: Are there differences in all of the above between students depending on the quality of PDT?

2 Methodology

2.1 Questionnaire Design

The development and organization of our questionnaire were strongly influenced by several theoretical models that have been extensively used in the field of technology use and user satisfaction. Specifically, we draw on the Continuance Intention Models of technology use (Bhattacherjee, 2001), the Expectation Confirmation Theory (Oliver, 1980), and Flow Theory (Csikszentmihalyi & Csikzentmihaly, 1990). These models serve as the basis for our constructs and associated items, guiding our understanding of how students' experiences with their personal digital technology (PDT) and their satisfaction with online learning platforms may impact a range of personal and educational outcomes, including health, well-being, motivation, and physical activity.

The Continuance Intention Models and Expectation Confirmation Theory informed our constructs related to students' usage and satisfaction with their PDT. The questionnaire items associated with these constructs aim to capture students' experiences with PDT and their satisfaction levels. Flow Theory, on the other hand, informed the development of items designed to measure how seamless engagement with online learning platforms impacts students' satisfaction levels.

The online questionnaire for prospective teachers was designed as blocks of items organised in tables. The questionnaire was designed and maintained by the authors, all of them professional educators of prospective teachers. Through their professional work, they recognised early that remote online education has many unwanted side effects (Dolenc et al., 2021), and they sought to explore these patterns to provide evidence that could help reduce them. Because all study activities took place online during the research, an online questionnaire was the rational choice.

The initial paragraph, which addressed the purpose of the research and asked for informed consent, was followed by two demographic items asking for gender and the number of educational streams the students were taking. Blocks of questions related to the constructs addressed in the study followed; these are described in more detail later in the text. Briefly, they can be grouped as follows (Table 1): (a) quality of personal digital technology (abbreviated as PDT in the text), measured on a five-point scale; (b) satisfaction with remote online study; and (c) a group of items assessing the Health (HEA), Well-being (WEL), Motivation (MOT), and Physical activity (PHY) of the students. The last two groups, (b) and (c), were assessed on a seven-point scale.

2.1.1 Sampling Procedure

An online form was created using the open-source web survey application 1Ka (2021). The link was announced to university students through various channels, such as social networks and faculty mailing lists. Sampling started at the beginning of December 2020, about two months after the second closure of the University. For most students, this was their second experience with remote online work, following the first closure in the spring of 2020, which lasted until the end of the semester in mid-June 2020. Data collection was completed after two weeks. The instrument was anonymous, and submitting responses was taken as consent. Participants could opt out because no fields were marked as mandatory, and no participant was exposed to harm or could gain an advantage from responding. According to the University's rules, such research does not need the approval of an ethics body, as no sensitive personal data were collected.

2.1.2 Research Population and Sample

The research population of this study comprised full-time preservice teachers (students) from three faculties (Faculty of Arts, Faculty of Education, and Faculty of Natural Sciences and Mathematics) of the University of Maribor. Our choice of sampling population was informed by our research objective, which was to understand the impact of the quality of students' personal digital technology and of their satisfaction with online learning platforms on their health, well-being, motivation, and physical activity. We believe that preservice teachers are a suitable population for our study given their regular engagement with digital technologies for learning and teaching purposes.

The data cleaning process initially removed responses that only covered the introductory, consent, and demographic items, thereby ensuring that the retained data were relevant for subsequent statistical analyses. As a result, we were left with 281 partially usable datasets. The decision to work with partially usable datasets was guided by our aim to maximize the use of available data for richer and more comprehensive analyses and to avoid the loss of important information through listwise deletion, as recommended by Carter (2006). In line with Kline's (2010) recommendation for SEM analysis, we ensured that our dataset (N = 242 for the SEM models) was sufficiently large to produce reliable and robust results. Furthermore, our sample demographics reflect the gender distribution trend observed in the teaching profession (Drudy, 2008). The potential influence of gender on the study variables was considered during the analysis.

The division between primary preservice teachers and subject preservice teachers was intended to capture any potential differences between these groups in terms of their digital technology use and satisfaction with online learning platforms. A brief presentation of the educational system in Slovenia, which may further illuminate the context of our sample, is provided in "Appendix".

By employing these stringent selection and analysis procedures, we were able to achieve a high degree of statistical robustness while preserving the diversity and representativeness of our sample.

Hypotheses

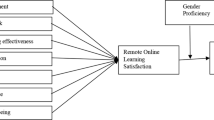

Six constructs are included in the Model(s) (Fig. 1). Descriptive statistics and PCA results for each of the constructs included in the models are provided in the Results section.

Based on the research questions, it was hypothesised that:

H1: The Construct Personal Digital Technology (PDT) will impact satisfaction with online experiences.

H2a,b,c,d: The construct Personal Digital Technology (PDT) will impact the health, well-being, motivation, and physical activity of the students.

H3a,b,c,d: Satisfaction with online experiences will impact the health, well-being, motivation, and physical activity of the students.

H4: There will be statistically significant differences between PPT and PST.

H5: The quality of PDT will statistically significantly moderate the relationships hypothesised in H1, H2, and H3.

2.1.3 Constructs in the Model

The constructs used in the model are listed in Table 1 and are described in detail in the following subsections. The results of the statistical analyses for each construct are presented in the Results section.

2.1.4 Personal Digital Technology (PDT)—Means of Personal Digital Technology, Quality of Access to the Educational Learning Systems and Workplace

The construct Personal Digital Technology (PDT) (Table 1; Fig. 1) measures the quality of the home technology used for online communication and for performing the tasks set by instructors. The rationale for its inclusion was that the PDT used in online interaction between instructors and students, and in students' ability to fulfil their obligations to finish a course, is not neutral (Clegg et al., 2003; Zhao et al., 2004). In the words of Selwyn (2007, p. 90): "education technology is not a new or neutral blank canvas (as is disingenuously claimed by many) but a site of intense conflict and choice." The construct PDT can be considered fluid due to the rapid changes in technologies and applications for home use. In addition, teachers may use several digital technologies in their school practice that are limited to their workplace, such as data loggers, i-Whiteboards, and the like, which cannot readily be transferred to the home environment. For the purposes of the study, PDT was considered to include possession of a stationary or mobile computer and an Internet-connected smartphone that allows connection to ODT, as well as a workplace. The construct consists of five items: two measure technology, two measure the internet connection, and one measures workplace quality. The instruction provided to the students was as follows: "Write down how suitable your infrastructure and equipment are for distance learning" on a five-point scale. The response options were DNAM = Does not apply to me (I do not have this device); Ins = Insufficient; BS = Barely sufficient; Sui = Suitable; Good; and Exc = Excellent. For some calculations, the values of all five items were summed; the results therefore fall in a range between 5 and 30 for those who have access to all the listed elements.
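The summation of the five PDT items, and the later split of respondents into thirds by PDT quality, can be sketched as follows. This is a minimal illustration, not the authors' procedure: it assumes that item scores are stored as integers and that "Does not apply to me" is stored as None and excluded from the sum, because the text does not state how DNAM was scored. The names `pdt_sum` and `tertile_groups` are hypothetical.

```python
import statistics
from typing import List, Optional, Sequence

# Assumption: each PDT item is an integer score, and "Does not apply to me"
# (DNAM) is stored as None; the coding actually used in the study is not stated.


def pdt_sum(items: Sequence[Optional[int]]) -> Optional[int]:
    """Sum the five PDT items; return None if any item is missing or DNAM."""
    if len(items) != 5 or any(value is None for value in items):
        return None
    return sum(items)


def tertile_groups(scores: Sequence[Optional[int]]) -> List[Optional[str]]:
    """Label each summed score as 'bottom', 'middle', or 'top' third.

    The two cut points are the tertiles of the complete scores;
    respondents without a summed score are labelled None.
    """
    complete = [s for s in scores if s is not None]
    lower, upper = statistics.quantiles(complete, n=3)
    labels: List[Optional[str]] = []
    for s in scores:
        if s is None:
            labels.append(None)
        elif s <= lower:
            labels.append("bottom")
        elif s >= upper:
            labels.append("top")
        else:
            labels.append("middle")
    return labels
```

With ties at the cut points the three groups can differ in size; a score lying exactly on a cut point is assigned to the outer (bottom or top) group here, which is a design choice of this sketch.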

2.1.5 Satisfaction with the Video-Conferencing Environment

Satisfaction is a key construct in several studies following the Continuance Intention Models of technology use (Bhattacherjee, 2001) and stemming from the Expectation Confirmation Theory (Oliver, 1980). It is based on personal experience, and both positive and negative incidents can influence it. Based on this assumption, we hypothesised that the quality of PDT could have an impact on satisfaction with a video-conferencing environment. Satisfaction is an important construct influencing continuance intentions, and it can be speculated that students' satisfaction with technology-mediated instruction may affect their future work when they go on to use technology in schools (Dolenc et al., 2022). Satisfaction (SAT) is a construct of five items based on flow theory (Csikszentmihalyi & Csikzentmihaly, 1990). Previous studies by the authors related to COVID-19 (Dolenc et al., 2022; Gabrovec et al., 2022; Ploj Virtič et al., 2021a) showed that the scale had appropriate content validity and reliability and could be successfully included in their models. SAT consisted of five statements referring to satisfaction with using the video-conferencing system in distance learning, rated in a 7-point format. Students were instructed to rate each statement on a scale from 1 (I do not agree at all) to 7 (I totally agree), indicating clearly (unambiguously) the extent to which it applied to them. The value 4 indicates the middle position on the issue.

2.1.6 Health (HEA), Well-being (WEL), Motivation (MOT), and Physical Activity (PHY)

Among the almost limitless range of factors that can be actually or potentially influenced by FODE, we chose health (HEA), well-being (WEL), motivation (MOT), and physical activity (PHY). This decision was made after consulting several papers (e.g., Meeter et al., 2020; Pfefferbaum & North, 2020; Tison et al., 2020; Zacher & Rudolph, 2020). Our attempt to find papers connecting these constructs with teacher education was unsuccessful. However, it was possible to support the inclusion of these constructs by transferring findings from other populations. For example, numerous studies reported that physical and mental health declined during the pandemic (e.g., Taylor, 2022), as did motivation for study (e.g., Smith et al., 2021), well-being (Hamilton & Gross, 2021), and physical activity (Wilke et al., 2021), and that these declines, separately or in combination, contributed to the devastating effects of lockdown (Pandey et al., 2021).

All four constructs (Fig. 1) are measured by 12 items loading on three principal components, presented later in Table 4: HEA (4 items), WEL (3 items), MOT (3 items), and PHY (2 items). These statements asked for responses in a 7-point format ranging from completely disagree (1) to completely agree (7), with respondents indicating clearly (unambiguously) the extent to which each statement applied to them. The value 4 indicates the middle position on the issue. Except for MOT 1 and MOT 2, all items were reverse-coded for inclusion in the SEM models.
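On a 1 to 7 scale, reverse-coding maps a score x to 8 - x (1 becomes 7, 4 stays 4), so that higher values point in the same direction for all items. A minimal sketch, assuming hypothetical item identifiers such as "MOT1" and one dictionary of responses per respondent:

```python
from typing import Dict, Optional

# Hypothetical item identifiers; only MOT1 and MOT2 keep their original direction.
NOT_REVERSED = {"MOT1", "MOT2"}


def reverse_code(value: int, scale_min: int = 1, scale_max: int = 7) -> int:
    """Mirror a Likert score around the scale midpoint (on 1-7: 1 <-> 7, 4 stays 4)."""
    return scale_min + scale_max - value


def recode_for_sem(responses: Dict[str, Optional[int]]) -> Dict[str, Optional[int]]:
    """Reverse-code every item except those in NOT_REVERSED; missing values stay None."""
    return {
        item: value if value is None or item in NOT_REVERSED else reverse_code(value)
        for item, value in responses.items()
    }
```

For example, `recode_for_sem({"MOT1": 2, "HEA1": 6})` keeps MOT1 at 2 and turns HEA1 into 2.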

During model building, the first principal component (Table 4) was divided into two subscales, separating health from well-being issues. With the second group of hypotheses, we seek to explore the influence of the quality of PDT and of satisfaction on HEA, WEL, MOT, and PHY, all factors that directly or indirectly influence the personal well-being and welfare of the students and, indirectly, their struggle to follow and fulfil instructions and, ultimately, their learning and grade outcomes.

Because of the post hoc nature of the study, initial conditions are unknown and cannot be measured by the instruments and means available. What can be hypothesised is that PDT can influence measured outcomes in a range from negative to positive.

2.2 Statistical Analysis

2.2.1 Calculation of Descriptive Statistics

Based on the frequency of responses, Means, Standard Deviations (SD), Mode, and Median were calculated and are reported in the Tables. Skewness and Kurtosis were also calculated to allow us to decide whether items in the constructs were appropriate for use in the SEM analyses (not reported).
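The descriptive statistics listed above can be computed with Python's standard library, as in the sketch below. It uses the moment-based skewness and excess kurtosis; statistical packages commonly apply small-sample corrections, so their values may differ slightly. The function name `describe` is illustrative.

```python
import statistics
from typing import Dict, Sequence


def describe(values: Sequence[float]) -> Dict[str, float]:
    """Mean, SD, median, mode, skewness, and excess kurtosis of one item.

    Assumes at least two responses that are not all identical
    (otherwise SD, skewness, and kurtosis are undefined).
    """
    n = len(values)
    mean = statistics.fmean(values)
    # Central moments in their population (divide-by-n) form.
    m2 = sum((x - mean) ** 2 for x in values) / n
    m3 = sum((x - mean) ** 3 for x in values) / n
    m4 = sum((x - mean) ** 4 for x in values) / n
    return {
        "mean": mean,
        "sd": statistics.stdev(values),        # sample SD with n - 1
        "median": statistics.median(values),
        "mode": statistics.mode(values),       # first mode encountered if tied (Python 3.8+)
        "skewness": m3 / m2 ** 1.5,
        "kurtosis": m4 / m2 ** 2 - 3.0,        # excess kurtosis: 0 for a normal distribution
    }
```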

2.2.2 Validity and Reliability of the Scales

The validity of the scales was assured in two ways. The first was by use of scales already tested in previous studies (e.g., satisfaction scale), and the second was by including experts from the field in content evaluation when the scales were not yet used. Cronbach's alpha was calculated as a measure of reliability.
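For k items, Cronbach's alpha is α = k/(k - 1) · (1 - Σ s²ᵢ / s²ₜ), where s²ᵢ are the item variances and s²ₜ is the variance of the summed score. A minimal sketch for complete cases only, including an alpha-if-item-deleted helper of the kind behind the SAT5 check reported in the Results; the function names are hypothetical:

```python
import statistics
from typing import List, Sequence


def cronbach_alpha(rows: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha; rows holds one list of item scores per respondent (no missing values)."""
    k = len(rows[0])
    item_vars = [statistics.variance([r[i] for r in rows]) for i in range(k)]
    total_var = statistics.variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


def alpha_if_deleted(rows: Sequence[Sequence[float]]) -> List[float]:
    """Alpha recomputed with each item left out in turn (needs at least three items)."""
    k = len(rows[0])
    return [
        cronbach_alpha([[v for j, v in enumerate(r) if j != i] for r in rows])
        for i in range(k)
    ]
```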

2.2.3 Evaluation of Correlations between Subgroups

Correlations between constructs were routinely calculated using AMOS software. They are expressed as Pearson's correlation coefficient r. The ranges for interpreting the magnitude of the observed correlation coefficient were adopted from Schober et al. (2018) as follows: 0.00–0.10 = negligible correlation; 0.10–0.39 = weak correlation; 0.40–0.69 = moderate correlation; 0.70–0.89 = strong correlation; and 0.90–1.00 = very strong correlation.
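A minimal sketch of Pearson's r together with the Schober et al. (2018) verbal labels follows. Because the published ranges share endpoints (0.10, for example, closes one range and opens the next), the sketch assumes half-open intervals in which a boundary value goes to the higher category; for instance, the health and well-being correlation of 0.79 reported in the Results falls in the "strong" band.

```python
import math
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson product-moment correlation of two equally long samples."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def schober_label(r: float) -> str:
    """Map |r| to the categories of Schober et al. (2018), using half-open intervals (assumption)."""
    a = abs(r)
    if a < 0.10:
        return "negligible"
    if a < 0.40:
        return "weak"
    if a < 0.70:
        return "moderate"
    if a < 0.90:
        return "strong"
    return "very strong"
```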

To calculate the effect size of differences between correlations in subgroups (preservice elementary versus preservice subject teachers; the lower third of respondents according to the quality of home digital equipment versus others), Cohen's q was used, computed with the Psychometrica online engine (Lenhard & Lenhard, 2017). Following the recommendations on their webpage, which cite Cohen (1988, p. 109), the following categories were used for interpretation: < 0.1: no effect; 0.1 to 0.3: small effect; 0.3 to 0.5: intermediate effect; > 0.5: large effect.
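Cohen's q compares two correlations after Fisher's z-transformation, q = |atanh(r₁) - atanh(r₂)|. The following is a minimal re-implementation of that standard formula, not the Psychometrica engine itself; assigning boundary values to the higher category is an assumption of the sketch.

```python
import math


def cohens_q(r1: float, r2: float) -> float:
    """Cohen's q: absolute difference of the Fisher z-transformed correlations."""
    return abs(math.atanh(r1) - math.atanh(r2))


def q_label(q: float) -> str:
    """Interpretation categories quoted in the text; boundary values go to the higher category."""
    if q < 0.1:
        return "no effect"
    if q < 0.3:
        return "small"
    if q < 0.5:
        return "intermediate"
    return "large"
```

For example, correlations of 0.6 and 0.3 in two subgroups give q ≈ 0.38, an intermediate effect.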

2.2.4 Principal Component Analysis

Principal Component Analysis (PCA) with Direct Oblimin rotation was applied in the initial exploratory phase of the research to check whether the constructs met the assumptions for use in the later CFA. The entry criteria for inclusion were the results of the KMO and Bartlett's tests, dimensionality, the factor structure of the scales, and component loadings higher than 0.4.
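The entry checks named above can be sketched with NumPy: the overall Kaiser-Meyer-Olkin (KMO) measure, Bartlett's test of sphericity, and the eigenvalues of the item correlation matrix with the eigenvalue > 1 count. This is an illustration, not the software used in the study. The Direct Oblimin rotation is not reproduced (rotation changes the loadings, not these eigenvalues), and the p-value of Bartlett's statistic, which follows a chi-square distribution with p(p - 1)/2 degrees of freedom, is left to a statistics library. The share of variance is the eigenvalue divided by the number of items; for the five PDT items, an eigenvalue of 2.81 corresponds to about 56% of the variance.

```python
import numpy as np


def correlation_matrix(data: np.ndarray) -> np.ndarray:
    """Item correlation matrix for an (n respondents x p items) array."""
    return np.corrcoef(data, rowvar=False)


def kmo(data: np.ndarray) -> float:
    """Overall Kaiser-Meyer-Olkin measure of sampling adequacy."""
    r = correlation_matrix(data)
    inv = np.linalg.inv(r)
    scale = np.sqrt(np.outer(np.diag(inv), np.diag(inv)))
    partial = -inv / scale                  # partial correlations given all other items
    off_diag = ~np.eye(r.shape[0], dtype=bool)
    r2 = np.sum(r[off_diag] ** 2)
    p2 = np.sum(partial[off_diag] ** 2)
    return float(r2 / (r2 + p2))


def bartlett_sphericity(data: np.ndarray):
    """Bartlett's chi-square statistic and degrees of freedom (H0: R is an identity matrix)."""
    n, p = data.shape
    _, logdet = np.linalg.slogdet(correlation_matrix(data))
    chi2 = -(n - 1 - (2 * p + 5) / 6) * logdet
    return float(chi2), p * (p - 1) // 2


def kaiser_components(data: np.ndarray):
    """Eigenvalues in descending order, number with eigenvalue > 1, and share of variance."""
    eig = np.sort(np.linalg.eigvalsh(correlation_matrix(data)))[::-1]
    return eig, int(np.sum(eig > 1.0)), eig / eig.size
```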

2.2.5 Structural Equation Modelling (SEM)

Structural Equation Modelling (SEM) was performed with AMOS 27 software. Among the available fit measures and indices for CFA (Byrne, 2013), we chose the following: (a) the likelihood-ratio chi-square index (a basic absolute fit measure) and the chi-square to degrees of freedom ratio (CMIN/DF, or χ2/df < 3); (b) the Comparative Fit Index (CFI), with values closer to one (1) indicating a better-fitting model; and (c) the Standardised Root Mean Square Residual (SRMR) and the Root Mean Square Error of Approximation (RMSEA), with an acceptable range of 0.08 or less.
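For reference, RMSEA and CFI can be computed from chi-square values as sketched below. The sketch assumes the common convention with N - 1 in the RMSEA denominator, so that, when only χ2/df is known, RMSEA = sqrt((χ2/df - 1)/(N - 1)). Plugging the χ2/df ratios reported in the Results (2.31, 4.91, 6.38, and 4.37) together with N = 242 reproduces the reported RMSEA values to three decimals (0.074, 0.127, 0.149, and 0.118). The baseline (independence) model needed for CFI is not reported in the text, so `cfi` is shown only as a formula.

```python
import math


def rmsea(chi2: float, df: int, n: int) -> float:
    """RMSEA from the model chi-square, its degrees of freedom, and sample size (N - 1 convention)."""
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def rmsea_from_ratio(chi2_over_df: float, n: int) -> float:
    """The same index expressed through the chi-square/df ratio (CMIN/DF)."""
    return math.sqrt(max(chi2_over_df - 1.0, 0.0) / (n - 1))


def cfi(chi2_model: float, df_model: int, chi2_base: float, df_base: int) -> float:
    """Comparative Fit Index of a model relative to the independence (baseline) model."""
    d_model = max(chi2_model - df_model, 0.0)
    d_base = max(chi2_base - df_base, d_model)
    return 1.0 if d_base == 0 else 1.0 - d_model / d_base
```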

To improve the models, two procedures proposed by Byrne (2013) were examined: inspection of the standardised residual covariance matrix and application of the modification indices. Based on the examination of these values, the error terms of some items within the same construct were allowed to covary.

2.3 Limitations of the Study

The limitations relate to the research methodology of online sampling: the respondents were self-selected, and some students without internet access may not have responded. However, this potential weakness cannot be corrected within the study design. Common method bias (Podsakoff et al., 2012) can affect the results of this type of study; therefore, a range of measures recommended in the literature were taken to prevent it. Owing to the limited population, it was not possible to test the CFA models on a new, "uncontaminated" population, a procedure that should be carried out in the future. The use of previously untested instruments can be considered an additional limitation.

3 Results

Results are reported in two parts. The first part presents the statistics for the individual constructs; the second presents a Model based on CFA. The tables present the data for all students who provided the relevant information; however, respondents with missing values were excluded from the SEM models.

3.1 Part 1: Overview of the Constructs (Latent Variables) of the Models

3.1.1 Personal Digital Technology (PDT)- Means of Personal Digital Technology, Quality of Access to the Educational Learning Systems, and Workplace

The frequencies of responses (as percentages) and the component loadings are given in Table 2. Measures of central tendency and dispersion for the summed data (n = 242) are: Mean = 19.4, SD = 3.6, Median = 20, and Mode = 19.

It can be seen (Table 2) that the weakest link is the internet connection, and the least problematic is the workspace, with the quality of computers and mobile phones in between. Cronbach's alpha of the scale without missing items (n = 242) was 0.81, a result that makes it appropriate for inclusion in the models. Skewness, Kurtosis, and inter-item correlations of all variables fall in ranges that are not considered problematic (Byrne, 2013). PCA shows the unidimensionality of the component (variance = 56.1%; eigenvalue = 2.81). The outcomes of the one-factor model test resulted in appropriate goodness-of-fit indices (χ2/df = 2.31; CFI = 0.986; RMSEA = 0.074; SRMR = 0.027).

3.1.2 Satisfaction with the Video-Conferencing Environment

Table 3 shows the data for all students who provided this information. Students described working in the video-conferencing environment most positively as understandable and easy, and mostly as educational and successful; however, they did not regard it as a fun experience.

Cronbach's alpha for respondents with no missing items was 0.84, rising to 0.86 if SAT5 was deleted; either value makes the scale appropriate for inclusion in the models. Skewness, kurtosis, and inter-item correlations of all variables fall within ranges not considered problematic (Byrne, 2013). PCA indicated a single component (variance = 62.5%; eigenvalue = 3.12). The one-factor model yielded the following goodness-of-fit indices: χ2/df = 4.91, CFI = 0.964, RMSEA = 0.127, and SRMR = 0.041 with all items, and χ2/df = 6.38, CFI = 0.978, RMSEA = 0.149, and SRMR = 0.028 with SAT5 deleted. Because deleting SAT5 improved alpha, CFI, and SRMR only slightly while worsening χ2/df and RMSEA, we decided to retain the item.
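The "alpha if item deleted" statistic used to weigh SAT5 can be sketched as follows: alpha is recomputed with each item removed in turn. The scores below are simulated, with a deliberately weak fifth item standing in for SAT5; the item labels, loadings, and function names are hypothetical illustrations, not the study's data or software.

```python
import numpy as np

def cronbach_alpha(items: np.ndarray) -> float:
    """Cronbach's alpha for an (n_respondents, k_items) score matrix."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    item_vars = items.var(axis=0, ddof=1).sum()
    total_var = items.sum(axis=1).var(ddof=1)
    return k / (k - 1) * (1 - item_vars / total_var)

def alpha_if_deleted(items: np.ndarray, labels: list[str]) -> dict[str, float]:
    """Recompute alpha with each item (column) removed in turn."""
    items = np.asarray(items, dtype=float)
    return {label: cronbach_alpha(np.delete(items, j, axis=1))
            for j, label in enumerate(labels)}

# Hypothetical (simulated, continuous) scores, not the study's data:
# SAT1-SAT4 load strongly on one factor, SAT5 only weakly.
rng = np.random.default_rng(1)
factor = rng.normal(size=(242, 1))
loadings = np.array([1.0, 1.0, 1.0, 1.0, 0.2])
scores = factor * loadings + rng.normal(scale=0.8, size=(242, 5))

labels = ["SAT1", "SAT2", "SAT3", "SAT4", "SAT5"]
print(f"alpha (all items) = {cronbach_alpha(scores):.2f}")
for label, a in alpha_if_deleted(scores, labels).items():
    print(f"  without {label}: {a:.2f}")
```

A higher alpha without an item only says the remaining items are more internally consistent; as the text notes, the decision to keep or drop SAT5 also rested on the model fit indices.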

3.1.3 Health, Well-being, Motivation, and Physical Activity

As Table 4 shows, students agreed most strongly with the statements that distance learning was exhausting, stressful, and less motivating. They disagreed with the statements that they were more motivated and more focused in the online setting. Although help-seeking ranked last, the fact that many students sought help should be a concern.

Cronbach's alpha for the entire instrument was 0.87. Using the eigenvalue > 1 criterion, PCA split the instrument into three components (Table 4), which together explain 66.5% of the variance. The first component (42.4% of variance) comprises seven items describing the influence of distance learning on health and well-being. For theoretical reasons, this component was split into two constructs in the models: four items indicating health (Cronbach's alpha = 0.83) and three items forming a construct called well-being (Cronbach's alpha = 0.83). The second component (15.3% of variance) comprises three items related to motivation, and the third (8.9% of variance) comprises two items related to physical activity and weight gain. According to the stricter parallel analysis, the third component should not be retained. Correlations between constructs are weak to moderate, except for the strong correlation between health and well-being (0.79); therefore, all constructs (latent variables) are appropriate for inclusion in the models. Interpreting the correlations among the four constructs is beyond the scope of this study. The one-factor model of the first component yielded the following goodness-of-fit indices: χ2/df = 4.37; CFI = 0.948; RMSEA = 0.118; SRMR = 0.063.
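The text contrasts the Kaiser (eigenvalue > 1) rule with the stricter parallel analysis. The sketch below shows one common variant of Horn's parallel analysis: a component is retained only while its observed eigenvalue exceeds the 95th percentile of the eigenvalues obtained from uncorrelated normal data of the same size. The number of simulations, the percentile, and the simulated 12-item instrument (7 + 3 + 2 items, mirroring the component structure described above) are assumptions; the authors' exact settings are not reported here.

```python
import numpy as np

def parallel_analysis(data, n_sim=500, percentile=95.0, seed=0):
    """Horn's parallel analysis for PCA on the item correlation matrix.

    Returns observed eigenvalues (descending), the random-data benchmark at the
    given percentile, the number of components retained by parallel analysis,
    and the number retained by the Kaiser (eigenvalue > 1) rule.
    """
    data = np.asarray(data, dtype=float)
    n, k = data.shape
    observed = np.linalg.eigvalsh(np.corrcoef(data, rowvar=False))[::-1]
    rng = np.random.default_rng(seed)
    random_eigs = np.empty((n_sim, k))
    for s in range(n_sim):
        noise = rng.normal(size=(n, k))  # uncorrelated data of the same shape
        random_eigs[s] = np.linalg.eigvalsh(np.corrcoef(noise, rowvar=False))[::-1]
    benchmark = np.percentile(random_eigs, percentile, axis=0)
    n_parallel = 0
    for obs, ref in zip(observed, benchmark):
        if obs <= ref:
            break  # stop at the first component that does not beat chance
        n_parallel += 1
    n_kaiser = int(np.sum(observed > 1.0))
    return observed, benchmark, n_parallel, n_kaiser

# Hypothetical 12-item instrument (simulated, not the study's data):
# 7 items on factor A, 3 on factor B, 2 loading weakly on factor C.
rng = np.random.default_rng(42)
n = 234
factors = rng.normal(size=(n, 3))
pattern = np.zeros((12, 3))
pattern[:7, 0] = 0.9
pattern[7:10, 1] = 0.9
pattern[10:, 2] = 0.4
items = factors @ pattern.T + rng.normal(scale=0.7, size=(n, 12))

obs, ref, n_pa, n_kaiser = parallel_analysis(items)
print("observed: ", np.round(obs[:4], 2))
print("benchmark:", np.round(ref[:4], 2))
print(f"Kaiser retains {n_kaiser}, parallel analysis retains {n_pa}")
```

Because the benchmark eigenvalues of random data exceed 1 for the first few positions, parallel analysis is stricter than the Kaiser rule, which is why a weak third component can pass one criterion and fail the other.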

3.2 Part 2: CFA Models

All three main hypotheses (H1; H2a, b, c, d; and H3a, b, c, d) were tested in a single model (Fig. 1). The model was estimated on the 234 respondents who answered all items; these complete data allowed the use of modification indices in AMOS to improve the model.

The model showed appropriate goodness-of-fit indices (χ2/df = 1.74; CFI = 0.94; RMSEA = 0.056; SRMR = 0.068). The model in Fig. 1 shows that the quality of home technology and working conditions (PDT) influenced all constructs except motivation, which confirms H1 and, except for motivation, H2. Satisfaction influences all constructs in the model (Table 5), confirming H3. In terms of effect size, these coefficients are small to medium, and they are larger for satisfaction than for PDT.
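The reported RMSEA values can be cross-checked against χ2/df with a common point-estimate formula, RMSEA = √(max(χ2/df − 1, 0)/(N − 1)). Applied to the structural model (χ2/df = 1.74, N = 234), it gives 0.056, as reported. Some programs divide by N rather than N − 1, so small discrepancies would not necessarily indicate an error. The sketch also checks the construct-level models, assuming N = 242 for each; the text states this N only for the PDT scale, so that value is an assumption for the other rows.

```python
import math

def rmsea_from_ratio(chi2_over_df: float, n: int) -> float:
    """Point estimate of RMSEA from chi2/df and sample size N (N - 1 convention)."""
    return math.sqrt(max(chi2_over_df - 1.0, 0.0) / (n - 1))

# (label, chi2/df, N, reported RMSEA).
# N = 242 is an assumption for the SAT and health/well-being rows.
checks = [
    ("Structural model", 1.74, 234, 0.056),
    ("PDT one-factor", 2.31, 242, 0.074),
    ("SAT one-factor", 4.91, 242, 0.127),
    ("SAT without SAT5", 6.38, 242, 0.149),
    ("Health/well-being component", 4.37, 242, 0.118),
]
for label, ratio, n, reported in checks:
    print(f"{label}: computed {rmsea_from_ratio(ratio, n):.3f}, reported {reported:.3f}")
```

Because χ2/df is itself rounded to two decimals in the text, agreement to the third decimal is the most that can be expected.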

In relation to the proposed hypotheses, we can reach the following conclusions:

H1: PDT correlates weakly, near the upper bound of the weak range (r = 0.35), with satisfaction with online experiences.

H2a,b,c,d: PDT correlates weakly, near the upper bound of the weak range, with well-being (r = 0.38) and physical activity (r = 0.34), weakly with health (r = 0.24), and not at all with motivation (r = 0.02).

H3a,b,c,d: Among the students, satisfaction with online experiences correlates moderately with health (r = 0.49), well-being (r = 0.49), and motivation (r = 0.41), and weakly with physical activity (r = 0.30).
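The verbal labels in H1 to H3 are consistent with the bands for correlation coefficients summarised by Schober et al. (2018): below 0.10 negligible, 0.10 to 0.39 weak, 0.40 to 0.69 moderate, 0.70 to 0.89 strong, and 0.90 or above very strong. Assuming those are the bands used, the labels can be generated mechanically, as in the sketch below (the function name is hypothetical). Under Cohen's (1988) conventions, by contrast, coefficients of 0.30 to 0.49 count as medium effects, which is why the same values are described above as small to medium in effect-size terms.

```python
def correlation_label(r: float) -> str:
    """Verbal label for |r|, assuming the bands summarised by Schober et al. (2018)."""
    a = abs(r)
    if a < 0.10:
        return "negligible"
    if a < 0.40:
        return "weak"
    if a < 0.70:
        return "moderate"
    if a < 0.90:
        return "strong"
    return "very strong"

# Path coefficients as reported for H1-H3.
reported = {
    "PDT-SAT": 0.35, "PDT-WEL": 0.38, "PDT-PHY": 0.34, "PDT-HEA": 0.24, "PDT-MOT": 0.02,
    "SAT-HEA": 0.49, "SAT-WEL": 0.49, "SAT-MOT": 0.41, "SAT-PHY": 0.30,
}
for pair, r in reported.items():
    print(f"{pair}: r = {r:.2f} -> {correlation_label(r)}")
```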

3.2.1 Differences Between Preservice Primary (PPT) and Subject (PST) Teachers

As already reported, PDT correlates with all constructs except MOT in the whole sample. The largest group difference was found in the correlation between PDT and SAT, which can be interpreted as moderate and is much higher for PSTs than for PPTs. PSTs also showed higher correlations, with differences in the small effect range, for the influence of PDT on PSY and HEA. There was no difference in effect size for the pair SAT–WEL. For the effect of PDT on MOT, the difference between the two non-significant correlations was small. It appears that PSTs are more affected than PPTs by the quality of their PDT, a finding that needs in-depth study before it can be interpreted. In contrast, the group differences are much smaller for correlations between SAT and the other constructs, even though in three pairs these correlations were higher than the corresponding correlations between PDT and the other constructs. A difference in the moderate range was found only for the pair SAT–PHY. As with PDT, PSTs appear to be more affected than PPTs, which also requires in-depth study to be fully interpreted.
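The paragraph above compares correlations from two independent groups in effect-size terms without naming the index. One common choice, assumed here, is Cohen's q, the absolute difference between Fisher z-transformed correlations, q = |atanh(r1) − atanh(r2)|, with 0.1, 0.3, and 0.5 as conventional thresholds for small, medium, and large effects (Cohen, 1988). The sketch also adds the standard z test for two independent correlations. The correlations and group sizes in the usage example are hypothetical, not the PPT and PST values.

```python
import math

def cohens_q(r1: float, r2: float) -> float:
    """Cohen's q: absolute difference between Fisher z-transformed correlations."""
    return abs(math.atanh(r1) - math.atanh(r2))

def q_label(q: float) -> str:
    """Cohen's (1988) conventional thresholds for q."""
    if q < 0.1:
        return "no effect"
    if q < 0.3:
        return "small"
    if q < 0.5:
        return "medium"
    return "large"

def compare_independent_r(r1: float, n1: int, r2: float, n2: int) -> tuple[float, float]:
    """Two-sided z test for the difference between correlations from independent samples."""
    se = math.sqrt(1 / (n1 - 3) + 1 / (n2 - 3))
    z = (math.atanh(r1) - math.atanh(r2)) / se
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p

# Hypothetical PDT-SAT correlations for two groups (not the study's values).
r_pst, n_pst = 0.50, 100
r_ppt, n_ppt = 0.15, 120
q = cohens_q(r_pst, r_ppt)
z, p = compare_independent_r(r_pst, n_pst, r_ppt, n_ppt)
print(f"q = {q:.2f} ({q_label(q)}), z = {z:.2f}, p = {p:.4f}")
```

Cohen's q describes the size of a difference and the z test its statistical significance; with small groups, a "medium" q can still be non-significant.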

The differences between the MOT, HEA, WEL, and PHY conditions are rather large, but interpreting them is beyond the scope of the study.

3.2.2 Differences Between the Lower (n = 70) and Upper (n = 68) Third of Respondents Based on the Quality of PDT

These results should be interpreted with caution because of the relatively small number of respondents available for the comparative analysis; nevertheless, they can point the way for follow-up studies. The lower-third group comprised 70 students (29.9%) who rated the quality of their PDT at 17 or below, and the upper-third group comprised 68 students (29.1%) who rated it at 22 or above, on a scale from 5 to 25.
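Forming the comparison groups amounts to applying the two cut-offs to the summed PDT score (5 to 25) and leaving out the middle band (18 to 21). A minimal sketch follows; the function name and the toy scores are hypothetical. The last line shows that the reported percentages are consistent with a base of the 234 respondents used in the structural model.

```python
def split_by_pdt(sum_scores: list[int], low_max: int = 17,
                 high_min: int = 22) -> tuple[list[int], list[int]]:
    """Return indices of respondents in the lower (<= low_max) and upper (>= high_min) groups."""
    lower = [i for i, s in enumerate(sum_scores) if s <= low_max]
    upper = [i for i, s in enumerate(sum_scores) if s >= high_min]
    return lower, upper

# Toy summed scores on the 5-25 scale (hypothetical respondents).
scores = [12, 17, 18, 20, 21, 22, 25, 16]
lower, upper = split_by_pdt(scores)
print(lower, upper)  # [0, 1, 7] [5, 6]

# Group shares relative to the 234 respondents in the structural model.
print(f"{70 / 234:.1%}, {68 / 234:.1%}")  # 29.9%, 29.1%
```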

The influence of PDT is much greater in the lower-third group and almost absent in the upper-third group; the effect size for PDT and WEL even falls into the large category. In contrast, SAT is more strongly connected to HEA, WEL, MOT, and PHY in students with better PDT; however, the differences in effect size are not evident, which suggests that SAT is also affected by other constructs and factors not considered in the study.

One interesting finding was that PDT did not influence SAT in the upper-third group but strongly affected it in the lower-third group. The difference may lie in homework assignments, which require better computers and connections to complete.

4 Discussion

The SARS-CoV-2 preventive measures have had far-reaching effects on various sectors, including education. The education sphere has faced considerable disruption, compelling an impromptu shift to online learning that presented many challenges at different levels: social, pedagogical, emotional, cognitive, and technological (Wilson et al., 2020). Unfortunately, these difficulties were not uniformly distributed among learners (Dolenc et al., 2021), leading to changes in online learning behaviour (Dolenc et al., 2022).

Existing literature from the pandemic period has examined these issues from multiple angles (e.g., Adedoyin & Soykan, 2020; Chick et al., 2020; Code et al., 2020; Lassoued et al., 2020; Ng et al., 2020; Tadesse & Muluye, 2020). Its central takeaway challenges the insights, findings, and claims of pre-pandemic studies (e.g., Abdal-Haqq, 1995; Brush et al., 2008; Goktas et al., 2009; Yildirim & Kiraz, 1999). Past research on preservice teachers in online environments commonly examined their perspectives on technology in connection with the educational goals and outcomes of university courses, but not the influence of technology on other aspects of their lives.

Our research centres on the key construct of Personal Digital Technology (PDT) and addresses the immediate and long-term impact of PDT quality on several aspects, including Health (HEA), Physical Activity (PHY), Well-being (WEL), and Satisfaction (SAT). The study is framed by a two-pronged approach: we explore immediate actions during the pandemic and long-term strategies to prevent disparities caused by differences in equipment quality and working conditions. As pandemic restrictions ease, this second perspective becomes even more relevant (e.g., Mahmud et al., 2022; Ng, 2022; Nuzzo & Gostin, 2022; Wannapiroon et al., 2022).

Because the University of Maribor endorsed MS Teams as its primary video-conferencing system, the responses primarily reflect experiences with this platform. As prospective teachers are expected to navigate a digitally driven educational landscape adeptly, it is disheartening to find that a quarter of future educators lack adequate computers (Table 2). Moreover, approximately 40% of students lack a stable internet connection and around 35% report poor working conditions, which points to potential difficulties in completing the school year if online education remains the primary mode of delivery.

The data suggest that quality computers, a stable internet connection, and a suitable workspace can substantially mitigate problems related to students' health, well-being, motivation, physical activity, and satisfaction. Although these findings align with previous studies, these obstacles became more pronounced during the lockdown (Cutri & Mena, 2020; Czerepaniak-Walczak, 2020; Radu et al., 2020).

Our study also suggests that Preservice Subject Teachers (PST) are more affected than Preservice Primary School Teachers (PPT), a result our study design cannot fully explain. It is also of concern that PDT's influence is stronger in the lower-third group and almost absent in the upper-third group. This finding echoes the well-established understanding that the use of technology in education is far from neutral (Clegg et al., 2003; Selwyn, 2007; Zhao et al., 2004). Providing adequate PDT is therefore the collective responsibility of faculties and society.

Surprisingly, our results show no correlation between PDT and motivation (MOT), despite the crucial role motivation plays in education (e.g., Deci et al., 1991; Dörnyei & Ushioda, 2013; Herzberg, 2017; Ryan & Deci, 2000). Instead, MOT correlates positively with all other constructs, which suggests that student motivation may depend more on course content and its perceived importance than on the technology in use. Lastly, the direct effect of PDT on satisfaction (SAT), and its indirect effects on HEA, WEL, and PHY through SAT, demand attention. SAT plays a central role in many behaviours and decisions and is key to the future use of educational technology (e.g., Dolenc et al., 2021).

In conclusion, while most students possess basic equipment for online courses, the quality of these resources is inadequate for a significant number. This shortcoming affects many aspects of their personal and academic lives, emphasizing the urgency to bridge digital gaps in education.

5 Conclusions and Recommendations

Conclusions and recommendations can be drawn from the implications for theory, methodology, and practice.

From a theoretical point of view, the proposed model links the constructs (latent variables) in a novel way, showing their interdependence or lack thereof. Now that the constraints introduced during the pandemic have been lifted, the model should be retested under "normal" conditions to determine whether the quality of PDT has the same effect in digitally enhanced education, which the ongoing digitisation of society makes necessary.

Methodologically, the conditions that prevailed during the closure, especially in its first phase, will very likely never occur again. It may therefore be important to preserve the most valuable experiences of all those involved in the educational process and to re-examine the validity of the results in the period after the closure. Throughout the period of Forced Remote Education and the transition to distance learning, professors at the University noticed an increase in questions about, and reports of, problems with technology (Dolenc et al., 2021; 2022). This study confirms these perceptions and offers insight into what happens from the student's perspective. Students who lack appropriate technology, workspace, or connections are more occupied with technological problems than with study and learning, which is reflected in more problems related to health, well-being, and physical activity, and in lower motivation. Additionally, problems with computers and connections influence satisfaction, one of the predictors of almost every aspect of life. In contrast, students with good or excellent conditions do not perceive such problems. With the transition to Forced Remote Education, not only did the form and methods of study change, but the social corrective was also lost. Before the pandemic, this corrective was provided by studying and attending lectures and workshops in the common areas of faculties, where equipment and materials were available to all students under the same conditions. According to our findings, the idea promoted by proponents of online digital learning that education can happen anytime, anywhere (Olcott, 2014) is a myth, at least for technologically deprived students. We do not deny that the anytime-anywhere concept can be achieved under small-scale, controlled conditions, but it did not survive the reality test of the COVID-19 lockdown. These outcomes could have been predicted from early warnings (e.g., Chittaro, 2006).

Recommendations for practice can be made at three levels: prospective teachers, instructors, and institutions. At the level of individual students, the obvious solution would be to advise them to acquire better equipment, but such advice can be regarded as cynical. Some students may, for one reason or another, oppose the use of smartphones and even computers in school, an argument that became obsolete with forced remote education. Being equipped with technology that allows them to master educational technology is therefore non-negotiable. This raises the question of what an individual teacher educator can do to help students in their professional careers. Since individual teacher educators cannot directly influence what technology students possess or the conditions in which they live, their options for action are as follows:

1. Recognition and internalisation of the problem;

2. Adjustment of the content, format, and expected outcomes of courses to avoid exacerbating the problem. Instructors could adjust course content by reducing file sizes and optimising digital resources for slower internet connections. The format of courses could be modified to offer both synchronous (live) and asynchronous (recorded) lessons to accommodate different access schedules and internet capabilities. Expected outcomes may need re-evaluation, shifting the emphasis towards understanding core concepts and developing critical thinking skills rather than mastering extensive digital tools that may not be accessible to all students;

3. Adjustment of the content, format, and expected outcomes of examinations;

4. Pressure on those who have access to resources to equip each student with, or help each student acquire, adequate home computers and internet access, so that all students can work from equal positions. Instructors and educational administrators should advocate for a more equitable distribution of resources by engaging in dialogue with local and national governments and with educational authorities. This could involve campaigning for policies that ensure all students have access to the necessary technology and internet capabilities, lobbying for additional funding for educational infrastructure, or partnering with community organisations or businesses to secure donations of, or discounts on, necessary equipment.

The final solution must lie with the institutions. For an individual student, it is irrelevant who is responsible for providing equipment and fast connections; however, any solution must be systemic and should not rely on charity organisations or one-off support actions. The most realistic solution would be a device rental programme that allows students to rent laptops or tablets equipped with the necessary licensed educational software, so that all prospective teachers can follow instruction and prepare their own lessons in both offline and online environments. Such a programme could be funded through a combination of institutional resources, grants, or subsidies from technology companies. It would require an effective logistics system to distribute and track the devices, a support system to help students with technical issues, and a financial model that keeps it affordable for all students.

Data Availability

The datasets used and/or analysed during the current study are available from the corresponding author on reasonable request. Primary anonymised data for secondary analyses is available on request from the authors in electronic form as an Excel file. In the case of data usage, it is expected that the publication source will be properly cited.

References

Abdal-Haqq, I. (1995). Infusing technology into preservice teacher education. ERIC Digest.

Adedoyin, O. B., & Soykan, E. (2020). Covid-19 pandemic and online learning: the challenges and opportunities. Interactive Learning Environments, 1–13.

Ball, D. L., Thames, M. H., & Phelps, G. (2008). Content knowledge for teaching: What makes it special? Journal of Teacher Education, 59(5), 389–407. https://doi.org/10.1177/0022487108324554

Bell, F. (2011). Connectivism: Its place in theory-informed research and innovation in technology-enabled learning. International Review of Research in Open and Distributed Learning, 12(3), 98–118. https://doi.org/10.19173/irrodl.v12i3.902

Bhattacherjee, A. (2001). Understanding information systems continuance: An expectation-confirmation model. MIS Quarterly, 25(3), 351–370. https://doi.org/10.2307/3250921

Brainard, R., & Watson, L. (2020). Zoom in the classroom: Transforming traditional teaching to incorporate real-time distance learning in a face-to-face graduate physiology course. The FASEB Journal, 34(S1), 1–1. https://doi.org/10.1096/fasebj.2020.34.s1.08665

Brush, T., Glazewski, K. D., & Hew, K. F. (2008). Development of an instrument to measure preservice teachers’ technology skills, technology beliefs, and technology barriers. Computers in the Schools, 25(1–2), 112–125.

Bullock, S. M. (2013). Using digital technologies to support self-directed learning for preservice teacher education. Curriculum Journal, 24(1), 103–120.

Byrne, B. M. (2013). Structural equation modeling with AMOS: Basic concepts, applications, and programming. Routledge.

Carrillo, C., & Flores, M. A. (2020). COVID-19 and teacher education: A literature review of online teaching and learning practices. European Journal of Teacher Education, 43(4), 466–487.

Carter, R. L. (2006). Solutions for missing data in structural equation modeling. Research & Practice in Assessment, 1, 4–7.

Chick, R. C., Clifton, G. T., Peace, K. M., Propper, B. W., Hale, D. F., Alseidi, A. A., & Vreeland, T. J. (2020). Using technology to maintain the education of residents during the COVID-19 pandemic. Journal of Surgical Education, 77(4), 729–732.

Chittaro, L. (2006). Visualising information on mobile devices. Computer, 39(3), 40–45. https://doi.org/10.1109/MC.2006.109

Clegg, S., Hudson, A., & Steel, J. (2003). The emperor’s new clothes: Globalisation and e-learning in higher education. British Journal of Sociology of Education, 24(1), 39–53. https://doi.org/10.1080/01425690301914

Cochran, K. F., DeRuiter, J. A., & King, R. A. (1993). Pedagogical content knowing: An integrative model for teacher preparation. Journal of Teacher Education, 44(4), 263–272. https://doi.org/10.1177/0022487193044004004

Code, J., Ralph, R., & Forde, K. (2020). Pandemic designs for the future: Perspectives of technology education teachers during COVID-19. Information and Learning Sciences, 121(5/6), 419–431.

Cohen, J. (1988). Statistical power analysis for the behavioral sciences (2nd ed.). Lawrence Erlbaum Associates.

Crawford, J., Butler-Henderson, K., Rudolph, J., Malkawi, B., Glowatz, M., Burton, R., Magni, P., & Lam, S. (2020). COVID-19: 20 countries’ higher education intra-period digital pedagogy responses. Journal of Applied Learning & Teaching, 3(1), 1–20. https://doi.org/10.37074/jalt.2020.3.1.7

Csikszentmihalyi, M. (1990). Flow: The psychology of optimal experience. Harper & Row.

Cutri, R. M., & Mena, J. (2020). A critical reconceptualisation of faculty readiness for online teaching. Distance Education, 41(3), 361–380. https://doi.org/10.1080/01587919.2020.1763167

Czerepaniak-Walczak, M. (2020). Respect for the right to education in the COVID-19 pandemic time. Towards reimagining education and reimagining ways of respecting the right to education. The New Educational Review, 62(4), 57–66. https://doi.org/10.15804/tner.2020.62.4.05

Davis, F. D. (1989). Perceived usefulness, perceived ease of use, and user acceptance of information technology. MIS Quarterly, 13(3), 319–340. https://doi.org/10.2307/249008

De’, R., Pandey, N., & Pal, A. (2020). Impact of digital surge during Covid-19 pandemic: A viewpoint on research and practice. International Journal of Information Management, 55, 102171. https://doi.org/10.1016/j.ijinfomgt.2020.102171

Dearden, L., Miranda, A., & Rabe-Hesketh, S. (2011). Measuring school value added with administrative data: The problem of missing variables. Fiscal Studies, 32(2), 263–278. https://doi.org/10.1111/j.1475-5890.2011.00136.x

Deci, E. L., Vallerand, R. J., Pelletier, L. G., & Ryan, R. M. (1991). Motivation and education: The self-determination perspective. Educational Psychologist, 26(3–4), 325–346. https://doi.org/10.1080/00461520.1991.9653137

Dolan, P., Kudrna, L., & Testoni, S. (2017). Definition and measures of subjective well-being. Centre for Economic Performance (Discussion paper 3), 1–9.

Dolenc, K., Šorgo, A., & Ploj Virtič, M. (2021). The difference in views of educators and students on forced online distance education can lead to unintentional side effects. Education and Information Technologies, 1–27.

Dolenc, K., Šorgo, A., & Ploj Virtič, M. (2022). Perspectives on lessons from the COVID-19 outbreak for post-pandemic higher education: Continuance intention model of forced online distance teaching. European Journal of Educational Research, 11(1), 163–177. https://doi.org/10.12973/eu-jer.11.1.163

Dörnyei, Z., & Ushioda, E. (2013). Teaching and researching: Motivation. Routledge.

Drudy, S. (2008). Gender balance/gender bias: The teaching profession and the impact of feminisation. Gender and Education, 20(4), 309–323. https://doi.org/10.1080/09540250802190156

Gabrovec, B., Selak, Š., Crnkovič, N., Cesar, K., & Šorgo, A. (2022). Perceived satisfaction with online study during COVID-19 lockdown correlates positively with resilience and negatively with anxiety, depression, and stress among Slovenian postsecondary students. International Journal of Environmental Research and Public Health, 19(12), 7024. https://doi.org/10.3390/ijerph19127024

Gewin, V. (2020). Five tips for moving teaching online as COVID-19 takes hold. Nature, 580(7802), 295–296. https://doi.org/10.1038/d41586-020-00896-7

Goktas, Y., Yildirim, S., & Yildirim, Z. (2009). Main barriers and possible enablers of ICTs integration into pre-service teacher education programs. Journal of Educational Technology & Society, 12(1), 193–204.

Hadar, L. L., Alpert, B., & Ariav, T. (2020). The response of clinical practice curriculum in teacher education to the Covid-19 breakout: A case study from Israel. Prospects. https://doi.org/10.1007/s11125-020-09516-8

Hamilton, L., & Gross, B. (2021). How has the pandemic affected students’ social-emotional well-being? Center on Reinventing Public Education.

Haydn, T. (2014). How do you get pre-service teachers to become ‘good at ICT’ in their subject teaching? The views of expert practitioners. Technology, Pedagogy and Education, 23(4), 455–469.

Herzberg, F. (2017). Motivation to work. Routledge.

Howard, S. K., Tondeur, J., Ma, J., & Yang, J. (2021). What to teach? Strategies for developing digital competency in preservice teacher training. Computers & Education, 165, 104149.

1KA. (2021). 1KA: Online survey software (version 21.11.16) [Computer software]. University of Ljubljana, Faculty of Social Sciences. https://www.1ka.si

Jandrić, P., Hayes, D., Levinson, P., Christensen, L. L., Lukoko, H. O., Kihwele, J. E., Brown, J. B., Reitz, C., Mozelius, P., Nejad, H. G., Martinez, A. F., & Hayes, S. (2021). Teaching in the age of Covid-19—1 year later. Postdigital Science and Education, 3(3), 1073–1223.

Kim, J. (2020). Learning and teaching online during Covid-19: Experiences of student teachers in an early childhood education practicum. International Journal of Early Childhood, 52(2), 145–158. https://doi.org/10.1007/s13158-020-00272-6

Kline, R. B. (2010). Principles and practice of structural equation modeling. The Guilford Press.

Lassoued, Z., Alhendawi, M., & Bashitialshaaer, R. (2020). An exploratory study of the obstacles for achieving quality in distance learning during the COVID-19 pandemic. Education Sciences, 10(9), 232.

Lenhard, W., & Lenhard, A. (2017). Calculation of effect sizes. Retrieved February 2, 2021, from: https://www.psychometrica.de/effect_size.html. https://doi.org/10.13140/RG.2.2.17823.92329

Lin, M. H., & Chen, H. G. (2017). A study of the effects of digital learning on learning motivation and learning outcome. Eurasia Journal of Mathematics, Science and Technology Education, 13(7), 3553–3564. https://doi.org/10.12973/eurasia.2017.00744a

Mahmud, M. M., Wong, S. F., & Ismail, O. (2022). Emerging learning environments and technologies post Covid-19 pandemic: What’s Next? Advances in Information, Communication and Cybersecurity: Proceedings of ICI2C’21 (pp. 308–319).

Martin, R., McGill, T., & Sudweeks, F. (2013). Learning anywhere, anytime: student motivators for m-learning. In Proceedings of the Informing Science and Information Technology Education Conference (pp. 51–67). Informing Science Institute.

Meeter, M., Bele, T., Den Hartogh, C. F., Bakker, T., De Vries, R. E., & Plak, S. (2020). College students' motivation and study results after COVID-19 stay-at-home orders [Internet document]. https://psyarxiv.com/kn6v9/

Mishra, P., & Koehler, M. J. (2006). Technological pedagogical content knowledge: A framework for teacher knowledge. Teachers College Record, 108(6), 1017–1054.

Mseleku, Z. (2020). A literature review of E-learning and E-teaching in the era of Covid-19 pandemic. International Journal of Innovative Science and Research Technology, 5(10), 588–597.

Ng, D. T. K. (2022). Online aviation learning experience during the COVID-19 pandemic in Hong Kong and Mainland China. British Journal of Educational Technology, 53(3), 443–474. https://doi.org/10.1111/bjet.13185

Ng, T. K., Reynolds, R., Chan, M. Y. H., Li, X., & Chu, S. K. W. (2020). Business (teaching) as usual amid the COVID-19 pandemic: A case study of online teaching practice in Hong Kong. Journal of Information Technology Education: Research, 19, 775–802. https://doi.org/10.28945/4620

Nuzzo, J. B., & Gostin, L. O. (2022). The first 2 years of COVID-19: Lessons to improve preparedness for the next pandemic. JAMA, 327(3), 217–218.

OECD. (2016). Education Policy Outlook: Slovenia [Internet document] www.oecd.org/education/policyoutlook.htm

Olcott, D. Jr. (2014). Transforming learning environments for anytime, anywhere learning for all. In Microsoft in Education Transformation Framework (9 ed., pp. 1–26). Microsoft [Internet document] https://researchoutput.csu.edu.au/ws/portalfiles/portal/9991085/9_MS_EDU_TransformingLearningEnvironments.pdf

Oliver, R. L. (1980). A cognitive model of the antecedents and consequences of satisfaction decisions. Journal of Marketing Research, 17(4), 460–469. https://doi.org/10.2307/3150499

Ovčjak, B., Heričko, M., & Polančič, G. (2015). Factors impacting the acceptance of mobile data services—A systematic literature review. Computers in Human Behavior, 53, 24–47. https://doi.org/10.1016/j.chb.2015.06.013

Pandey, D., Ogunmola, G. A., Enbeyle, W., Abdullahi, M., Pandey, B. K., & Pramanik, S. (2021). COVID-19: A framework for effective delivering of online classes during lockdown. Human Arenas, 1–15.

Pfefferbaum, B., & North, C. S. (2020). Mental health and the Covid-19 pandemic. New England Journal of Medicine, 383(6), 510–512. https://doi.org/10.1056/NEJMp2008017

Ploj Virtič, M., Dolenc, K., & Šorgo, A. (2021a). Changes in online distance learning behaviour of university students during the coronavirus disease 2019 outbreak, and development of the model of forced distance online learning preferences. European Journal of Educational Research, 10(1), 393–411. https://doi.org/10.12973/eu-jer.10.1.393

Ploj Virtič, M., Du Plessis, A., & Šorgo, A. (2021b). In the search for the ideal mentor by applying the ‘Mentoring for effective teaching practice instrument’. European Journal of Teacher Education, 1–19. https://doi.org/10.1080/02619768.2021.1957828

Podsakoff, P. M., MacKenzie, S. B., & Podsakoff, N. P. (2012). Sources of method bias in social science research and recommendations on how to control it. Annual Review of Psychology, 63, 539–569. https://doi.org/10.1146/annurev-psych-120710-100452

Radu, M. C., Schnakovszky, C., Herghelegiu, E., Ciubotariu, V. A., & Cristea, I. (2020). The impact of the COVID-19 pandemic on the quality of educational process: A student survey. International Journal of Environmental Research and Public Health, 17(21), 7770. https://doi.org/10.3390/ijerph17217770

Rolstad, S., Adler, J., & Rydén, A. (2011). Response burden and questionnaire length: Is shorter better? A review and meta-analysis. Value in Health, 14(8), 1101–1108.

Rusek, M., Stárková, D., Chytrý, V., & Bílek, M. (2017). Adoption of ICT innovations by secondary school teachers and pre-service teachers within chemistry education. Journal of Baltic Science Education, 16(4), 510.

Ryan, R. M., & Deci, E. L. (2000). Self-determination theory and the facilitation of intrinsic motivation, social development, and well-being. American Psychologist, 55(1), 68–78. https://doi.org/10.1037/0003-066X.55.1.68

Schober, P., Boer, C., & Schwarte, L. A. (2018). Correlation coefficients: appropriate use and interpretation. Anesthesia & Analgesia, 126(5), 1763–1768.

Selwyn, N. (2007). The use of computer technology in university teaching and learning: A critical perspective. Journal of Computer Assisted Learning, 23(2), 83–94.

Shulman, L. S. (1986). Those who understand: Knowledge growth in teaching. Educational Researcher, 15(2), 4–14. https://doi.org/10.3102/0013189X015002004

Siemens, G. (2004). Connectivism: A learning theory for the digital age [Internet document] URL http://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.1089.2000&rep=rep1&type=pdf. Accessed 01/03/2021.

Smith, J., Guimond, F. A., Bergeron, J., St-Amand, J., Fitzpatrick, C., & Gagnon, M. (2021). Changes in students’ achievement motivation in the context of the COVID-19 pandemic: A function of extraversion/introversion? Education Sciences, 11(1), 30.

Stockwell, S., Trott, M., Tully, M., Shin, J., Barnett, Y., Butler, L., ... & Smith, L. (2021). Changes in physical activity and sedentary behaviours from before to during the COVID-19 pandemic lockdown: A systematic review. BMJ Open Sport & Exercise Medicine. https://doi.org/10.1136/bmjsem-2020-000960

Šumak, B., & Šorgo, A. (2016). The acceptance and use of interactive whiteboards among teachers: Differences in UTAUT determinants between pre- and post-adopters. Computers in Human Behavior, 64, 602–620. https://doi.org/10.1016/j.chb.2016.07.037

Šumak, B., Pušnik, M., Heričko, M., & Šorgo, A. (2017). Differences between prospective, existing, and former users of interactive whiteboards on external factors affecting their adoption, usage and abandonment. Computers in Human Behavior, 72, 733–756. https://doi.org/10.1016/j.chb.2016.09.006

Tadesse, S., & Muluye, W. (2020). The impact of COVID-19 pandemic on education system in developing countries: A review. Open Journal of Social Sciences, 8(10), 159–170.

Tang, Y. M., Chen, P. C., Law, K. M., Wu, C. H., Lau, Y. Y., Guan, J., ... & Ho, G. T. (2021). Comparative analysis of Student's live online learning readiness during the coronavirus (COVID-19) pandemic in the higher education sector. Computers & Education. https://doi.org/10.1016/j.compedu.2021.104211

Taylor, S. (2022). The psychology of pandemics. Annual Review of Clinical Psychology, 18, 581–609.

Thomas, M. S., & Rogers, C. (2020). Education, the science of learning, and the COVID-19 crisis. Prospects, 49(1), 87–90. https://doi.org/10.1007/s11125-020-09468-z

Tison, G. H., Avram, R., Kuhar, P., Abreau, S., Marcus, G. M., Pletcher, M. J., & Olgin, J. E. (2020). Worldwide effect of COVID-19 on physical activity: A descriptive study. Annals of Internal Medicine, 173(9), 767–770. https://doi.org/10.7326/M20-2665

United Nations. (2020). Policy Brief: Education During COVID-19 and Beyond [Internet document]. https://www.un.org/development/desa/dspd/wp-content/uploads/sites/22/2020/08/sg_policy_brief_covid-19_and_education_august_2020.pdf

Venkatesh, V., Morris, M. G., Davis, G. B., & Davis, F. D. (2003). User acceptance of information technology: Toward a unified view. MIS Quarterly, 27(3), 425–478. https://doi.org/10.2307/30036540

Wannapiroon, P., Nilsook, P., Jitsupa, J., & Chaiyarak, S. (2022). Digital competences of vocational instructors with synchronous online learning in next normal education. International Journal of Instruction, 15(1), 293–310.

Warburton, D. E., Nicol, C. W., & Bredin, S. S. (2006). Health benefits of physical activity: The evidence. CMAJ, 174(6), 801–809. https://doi.org/10.1503/cmaj.051351

Wei, L., & Hindman, D. B. (2011). Does the digital divide matter more? Comparing the effects of new media and old media use on the education-based knowledge gap. Mass Communication and Society, 14(2), 216–235.

Wilke, J., Mohr, L., Tenforde, A. S., Edouard, P., Fossati, C., González-Gross, M., ... & Hollander, K. (2021). A pandemic within the pandemic? Physical activity levels substantially decreased in countries affected by COVID-19. International Journal of Environmental Research and Public Health, 18(5), 2235.

Wilson, M. L., Ritzhaupt, A. D., & Cheng, L. (2020). The impact of teacher education courses for technology integration on pre-service teacher knowledge: A meta-analysis study. Computers & Education, 156, 103941.

Yildirim, S., & Kiraz, E. (1999). Obstacles in integrating online communications tools into preservice teacher education: A case study. Journal of Computing in Teacher Education, 15(3), 23–28.

Zacher, H., & Rudolph, C. W. (2020). Individual differences and changes in subjective well-being during the early stages of the COVID-19 pandemic. American Psychologist, 76(1), 50–62. https://doi.org/10.1037/amp0000702

Zhao, Y., Alvarez-Torres, M. J., Smith, B., & Tan, H. S. (2004). The non-neutrality of technology: A theoretical analysis and empirical study of computer mediated communication technologies. Journal of Educational Computing Research, 30(1–2), 23–55. https://doi.org/10.2190/5N93-BJQR-3H4Q-7704

Acknowledgements

The authors acknowledge the help of Dr Michelle Gadpaille in polishing the language. The authors would like to thank the students who have been involved in the research, without whom this work would not have been possible.

Funding

This work was supported by the Slovenian Research Agency under the core projects: “Information Systems”, Grant No. P2-0057 (Šorgo, Andrej) and “Computationally Intensive Complex Systems”, Grant No. P1-0403 (Ploj Virtič, Mateja).

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest. The article is the original work of all the authors and has not been submitted elsewhere, nor is it under consideration for publication in any other journal.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix: Brief Presentation of the Educational System in Slovenia

Slovenian pre-tertiary education consists of 9 years of compulsory basic education, provided in elementary school, and 3- or 4-year secondary education (OECD, 2016). The nine years of compulsory elementary school are formally divided into three triads; from a teaching point of view, however, the class level (classes 1–5) is taught by primary teachers, with some subjects, such as sport, taught by a subject teacher. Primary teachers handle all the other subjects (e.g. mathematics, mother tongue, science, and music education), which means that primary teacher education covers a wide range of areas. In contrast, the subject level (classes 6–9), which corresponds to lower secondary school, is taught primarily by two-stream subject teachers, i.e. teachers qualified in two subjects. Upper secondary education is dominated by single-stream subject teachers, qualified in one subject.

To become a primary or subject teacher, a candidate must have completed a master’s degree (300 ECTS, which currently means at least 5 years of study) that includes at least 60 ECTS in pedagogical subjects and teaching practice. Because faculties are autonomous in organizing their study programs, a range of curricular models exists: (1) 3 years Bachelor + 2 years Master; (2) 4 years Bachelor + 1 year Master; (3) 0 + 5, in which Bachelor and Master studies are integrated.

Subject teacher programs usually offer two study options: one-subject study and two-subject study. Alternatively, someone who has completed Master’s studies in a non-pedagogical program (e.g. Biology, Mathematics, or Engineering) can enrol in a 60 ECTS pedagogical program to obtain a teaching licence. Regardless of the program, after about one year of apprenticeship in teaching, the candidate may sit the state examination, a professional examination that enables the candidate to obtain a lifetime teaching licence.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Šorgo, A., Ploj Virtič, M. & Dolenc, K. The Idea That Digital Remote Learning Can Happen Anytime, Anywhere in Forced Online Teacher Education is a Myth. Tech Know Learn 28, 1461–1484 (2023). https://doi.org/10.1007/s10758-023-09685-3

DOI: https://doi.org/10.1007/s10758-023-09685-3