Abstract

This research aims to address the current gaps in computer-assisted translation (CAT) courses offered in bachelor’s and master’s programmes in scientific and technical translation (STT). A multi-framework course design methodology is proposed to support CAT teachers from the computer engineering field, improve student engagement, and promote computer-supported education, together with a balanced coverage of the most relevant topics in the CAT domain. STT is currently in high demand in many fields, requiring translators with sector-specific language skills and considerable computer literacy in order to manage translation projects with complex structures and heterogeneous formats. However, many STT curricula lag behind current market demands, focusing primarily on language and translation theory, with less emphasis on CAT technologies and tools. Moreover, the lack of shared course design guidelines hinders the introduction of innovative teaching approaches based on collaborative learning. A novel multi-framework CAT course design methodology, named CATDeM, is proposed, based on the integration of an official European translation competence framework, laboratory activities that mimic real-life practice, and computer-supported collaborative learning, enriched with discussion case studies and role-playing experiences. A real-life case study is examined to illustrate and evaluate the implementation of CATDeM in two consecutive editions (2020/2021 and 2021/2022) of a one-semester compulsory CAT course in an M.A. degree in STT at the University of Salento (Italy). Students’ perceptions of translation technology and role-plays, as well as their attitudes towards the proposed CAT course, are evaluated through a post-grading self-assessment questionnaire. The results indicate successful student engagement and self-assessed improvement in translation, technical, and interpersonal skills. The importance given by students to role-playing experiences mimicking professional scenarios was also highlighted, paving the way for CATDeM to be adopted in similar contexts.

1 Introduction

Scientific and technical translation, or STT as defined by Olohan (2015), typically entails translating extensive volumes of data and documents, encompassing hundreds or even thousands of files in different formats, into multiple target languages (Garcia, 2015) in order to make them accessible to scientists, researchers, clinicians, students, technical specialists, and even ordinary users of electronic devices. Representative examples of technical documents include patents, user manuals, scientific articles and reports, engineering specifications, safety data sheets, medical guidelines, clinical procedures, product catalogues, specialist glossaries, as well as text strings from application software and content from Web pages. These documents typically contain technical jargon, many abbreviations, significant amounts of quantitative data along with units of measurement, visual plots and tables, pictures and graphics (Olohan, 2015, 2021).

In such a peculiar scenario, not only the correctness, quality, and consistency of the translation are crucial but also the role of technology, as it is simultaneously a driving factor and an essential enabler (Rothwell & Svoboda, 2019) that has shaped the scientific translator’s workflow (Bowker, 2015). As a direct consequence, over the last decade, STT teaching curricula and translator training programmes have included courses on technologies that support the translation workflow, with the aim of complementing translation competences with technological competences (PACTE group et al., 2018). Technologies are now perceived as fundamental components along with linguistic, translation, and intercultural skills for the education of students (Gaspari et al., 2015; Rico, 2017), thanks to the definition of specific, multi-skill competence frameworks (Toudic & Krause, 2017).

In addition to the fundamental and ever-present need for scientific translation skills, modern translators must acquire at least a sound operational knowledge of computer-assisted translation (CAT) tools (Krüger, 2016). Essentially, these software applications support the translator’s daily activities: the human translates digital documents, while the software manages a database of all the translations, preserves the graphical layout of the document, and automates repetitive tasks. These solutions are also known as Translation Management Systems (TMS) (Shuttleworth, 2015).
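The division of labour described above (the translator translates, the software stores and reuses past translations) can be illustrated with a minimal sketch of a translation memory. This is not the implementation of any actual CAT tool: the `TranslationMemory` class, its method names, and the 0.75 similarity threshold are assumptions introduced here for illustration, and Python's standard `difflib` module stands in for the fuzzy-matching algorithms used by real tools.

```python
from difflib import SequenceMatcher
from typing import Dict, Optional, Tuple


class TranslationMemory:
    """Hypothetical, minimal translation memory: source segment -> target segment."""

    def __init__(self) -> None:
        self._segments: Dict[str, str] = {}

    def add(self, source: str, target: str) -> None:
        """Store a segment pair once the human translator has confirmed it."""
        self._segments[source] = target

    def lookup(self, source: str, threshold: float = 0.75) -> Optional[Tuple[str, float]]:
        """Return the best stored translation and its similarity score,
        or None if no stored segment reaches the (assumed) threshold."""
        best_target: Optional[str] = None
        best_score = 0.0
        for stored_source, stored_target in self._segments.items():
            score = SequenceMatcher(None, source.lower(), stored_source.lower()).ratio()
            if score > best_score:
                best_target, best_score = stored_target, score
        if best_target is not None and best_score >= threshold:
            return best_target, best_score
        return None


tm = TranslationMemory()
tm.add("Press the power button.", "Premere il pulsante di accensione.")

exact = tm.lookup("Press the power button.")        # identical segment
fuzzy = tm.lookup("Press the power button twice.")  # similar segment, needs editing
miss = tm.lookup("Replace the battery.")            # no sufficiently similar segment
```

In this sketch an exact match is returned unchanged, a fuzzy match is offered to the translator as a starting point for editing, and an unmatched segment has to be translated from scratch.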

Realistic problem-based educational contexts (which are more widely used in STEM pedagogy) are also gaining importance in translation education (Mellinger, 2018). This is facilitated by the adoption of new technologies that allow the implementation of the long-theorised approach of situated translation (Bowker & Marshman, 2010; Risku, 2002), according to which the translator’s actions and problem-solving skills are intertwined with the overall situation in which these actions take place and these skills are required.

One might therefore assume that all the necessary conditions are in place for university translation courses to finally catch up with the surrounding technological landscape, but this is not yet the case. The heterogeneity of course programmes, content structures, and learning objectives is evidenced by the significant flow of scientific literature focusing on the teaching of specific topics, ranging from traditional CAT systems (Shuttleworth, 2017) and terminology management (Montero Martínez & Benítez, 2009) to machine translation post-editing (Arenas & Moorkens, 2019) and even to programming resources for machine translation (Krüger, 2021). Similarly, educational strategies and the assessment of pedagogical effectiveness vary considerably, from context-based approaches (Killman, 2018) to autonomous learning (Shuttleworth, 2017) and virtual learning (Wang & Wang, 2021). Moreover, CAT courses do not take sufficient advantage of the fruitful collaboration between the computer science sector and the applied translation fields, with the former perceiving the latter as secondary and the latter perceiving the former as too computer-dependent. Furthermore, several studies have pointed out that the gap between the current state of STT teaching and the market demand for skilled translators requires appropriate pedagogical interventions (Rothwell & Svoboda, 2019; Zhang & Vieira, 2021).

Based on these premises, this paper proposes a broader and more systematic perspective on how to design master’s-level CAT courses in which translation technology and STT are intertwined, without focusing only on a specific topic or sub-topic. In addition, special attention is given to how to provide students with more engaging opportunities to acquire the technical and professional skills required by the market and to bring them closer to the real working environment of STT.

More specifically, this work presents a multi-framework methodology, called CATDeM, to design CAT courses, enriched with discussion case studies and role-playing elements, with the aim of easing the workload of educators and instructors as well as improving student engagement and learning outcomes. CATDeM is aimed specifically at instructors in the field of computer engineering and has three objectives: (1) modelling: to leverage frameworks with which this type of instructor is already familiar, in order to speed up the course design process; (2) technology: to guide the instructor rigorously through the technical peculiarities of the STT and CAT fields; (3) education: to provide the instructor with a holistic perspective on STT and CAT teaching. To the author’s knowledge, the proposed methodology is, at the time of writing, the first of its kind to combine several frameworks.

Another important aim is to propose a balance between the different topics and sub-topics related to CAT in an M.A. degree in STT. In some cases, the translation technology component is reduced to a single course, while in others it is possible to benefit from several courses dedicated to vertical topics, where the introductory CAT course is then followed by courses on MT, terminology management, software localisation, and so on (Malenova, 2019; Motiejūnienė & Kasperavičienė, 2019; Zhang & Vieira, 2021). This diversity depends on the specific academic context and, therefore, the modular and self-consistent design methodology proposed in this paper is intended to be adaptable to the specific needs of the teacher designing a single CAT course, in order to take into account all possible scenarios.

Before introducing CATDeM, an analysis of the tools that populate the translation technology landscape will be presented according to functional categories that represent relevant topics for any CAT course. It will be explained how a pivotal component of CATDeM is borrowed from business process modelling (BPM Resource Center, 2014), to take advantage of a rigorous ICT perspective so that the CAT course can not only be modelled but also operated, monitored, and optimised to make it replicable in different academic contexts and compatible with professional STT workflows. CATDeM is further enriched with computer-supported collaborative learning and active learning elements (De Hei et al., 2016), such as discussion case studies and role-playing experiences, whose mapping to the course structure and learning goals will be thoroughly discussed, together with the proposed teaching approach and learning platforms.

A real-life case study will then be examined to illustrate the implementation of CATDeM in two consecutive editions of a one-semester compulsory course on computer-assisted scientific translation in an M.A. degree in STT and Interpreting at the University of Salento (Italy), covering the academic years 2020/2021 and 2021/2022. Students’ perceptions of translation technology and their attitudes towards the proposed CAT course will be evaluated through a post-grading self-assessment questionnaire.

The paper is structured as follows: extended motivations and research questions are detailed in the remaining part of Sect. 1. The theoretical background and the related works are presented in Sect. 2, while the ecosystem of translation technologies is sketched in Sect. 3. Course design strategies and their implementation in a CAT course acting as a pilot case study are thoroughly described in Sect. 4. The achieved results are discussed in Sect. 5. Section 6 provides the answers to the research questions. Conclusions are drawn in Sect. 7, together with limitations and further developments of the study. Finally, a list of acronyms is reported to support the reader, along with the list of software tools mentioned throughout the paper.

1.1 Motivations

The language services market, mainly driven by business globalisation, the need for customised digital content, and the ever-expanding medical sector, grew by 4.77% year-on-year in 2022 despite global economic uncertainties (Slator, 2023) and is expected to grow at a compound annual growth rate (CAGR) of 5.3% over the period 2023–2028 (Mordor Intelligence, 2023). In terms of market size, translation and localisation together represent the largest segment of language services (43%), followed by interpreting (15.1%), dubbing (10.7%), and machine translation (5.9%) (Hickey, 2023a). The translation and localisation segment, in turn, is driven by several industry verticals, in varying proportions, from government (18.1%) to healthcare (10.2%), from life sciences (9.5%) to retail and e-commerce (2%) (Hickey, 2023b). Some of these industry verticals are more closely related to scientific and technical translation than others, as certain sectors require accurate translation of specialised and technical content to ensure effective communication, compliance with standards, and global dissemination of knowledge within their respective fields. More specifically, technology, IT, software, automotive, aviation, manufacturing, healthcare, life sciences, financial and legal, when aggregated, account for 47.1% of the verticals listed by Hickey (2023b), thus representing one of the largest shares of the translation services market. In addition, several verticals (e.g., utilities, healthcare and pharmaceuticals, electronics and semiconductors, IT services and gaming, etc.) have shown steadily increasing demand in recent years (DePalma, 2021).
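To make the CAGR figure above more tangible, the following sketch computes the cumulative growth it implies. Two assumptions are made here that are not stated in the cited report: the period 2023–2028 is treated as five compounding years, and the rate is taken as constant. The function name `compound_growth` is introduced only for this illustration.

```python
def compound_growth(rate: float, years: int) -> float:
    """Cumulative growth factor for a constant annual growth rate."""
    return (1.0 + rate) ** years


# Assumption: 2023-2028 counted as five compounding years at a constant 5.3%.
factor = compound_growth(0.053, 5)
cumulative_pct = (factor - 1.0) * 100.0
print(f"Cumulative growth: {cumulative_pct:.1f}%")  # prints "Cumulative growth: 29.5%"
```

Under these assumptions, a 5.3% CAGR corresponds to a market roughly 29.5% larger at the end of the period than at its start.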

These insights clarify how much demand there is today for scientific and technical translators. This role, as anticipated in the introductory section, requires professionals skilled not only in the CAT field but also in Machine Translation (MT) engines (Alcina, 2008), which represent a powerful asset (if used properly by the translator) and typically require human post-editing intervention before automatically translated texts can be delivered to the client. Similarly, translation project managers have to deal with complex software systems that allow them to manage the entire service provisioning pipeline effectively, from the initial negotiation with the client up to the delivery of the final product (Shuttleworth, 2015). From a deployment-oriented perspective, a significant subset of these tools has shifted over the last decade to cloud-based software-as-a-service (SaaS) solutions that allow: (1) translators to benefit from collaborative functionalities and remote access to resources, and (2) language service providers (LSPs) to avoid the need for on-premises equipment, thus increasing accessibility, productivity, and market opportunities. Moreover, TMS and MT engines (or MTEs, for brevity) are only the best-known elements in a broader collection of technological enablers that translators, as well as trainers and educators, have to contend with: at least ten different categories, encompassing more than 800 IT tools, can be identified, according to a recent market report by Nimdzi (Akhulkova et al., 2022).

Designing and implementing a CAT course is, therefore, a multifaceted challenge, made more difficult by the lack of widely available curriculum design guidelines. It is necessary to identify carefully not only the enabling information and communication technology (ICT) tools but also the most appropriate topics and the most effective teaching strategies. Only in this way will students be able to meet the ever-increasing market demand for professionally qualified scientific translators, a trend that was already consolidating in the early 2000s (Bacon, 2002), but has now become even more strategic, as the flow of technical publications has doubled in the last decade (Olohan, 2015). Companies wishing to offer localised products on a global scale or requiring the secure translation of patents in foreign markets, businesses demanding complex translations compliant with safety instructions detailed in technical standards, IT vendors requiring adequate global localisation of the graphical user interface of their software products, all need professional translators capable of transposing technical-scientific knowledge from one language into another (Olohan, 2021; Scheibengraf, 2023).

Another challenge for STT students is to cope adequately with technological advances by learning how to use them effectively (Doherty, 2016) and without being locked into a specific commercial software solution or approach that could lead to high licensing costs and excessive dependency on proprietary formats, vendor-provided updates, and customer support. While in more ICT-driven areas such as engineering education students progressively acquire the skill set needed to adapt to different and rapidly evolving software environments or to use alternative free and open source tools (Álvarez Ariza & Nomesqui Galvis, 2023; Pinto et al., 2019; Srinivasa et al., 2021), the same effectiveness could be more difficult to reach for STT students. To this end, computer-supported collaborative learning (CSCL) (Lipponen et al., 2004) and active learning activities, largely exploited in scientific curricula at higher education level (Hmelo-Silver & Jeong, 2021; Ma et al., 2020) but still overlooked in translation teaching, can represent an effective educational asset to exploit.

1.2 Research Questions

As anticipated in the introductory section, this paper proposes a CAT course design methodology called CATDeM to systematise the STT educational context, where ICT and enabling software platforms are too often relegated to a secondary role or focused solely on vertical sub-topics (e.g., machine translation post-editing or terminology management). To achieve this goal, the following research questions (RQs) will be answered:

- (RQ1) What is the technological landscape from which educators should draw, depending on pedagogical requirements and market demands?
- (RQ2) How can computer scientists and researchers involved as instructors in CAT courses (in B.A./M.A. programmes in translation studies) be supported?
- (RQ3) How can computer-supported active learning and computer-supported collaborative learning strategies be brought from the computer science area (where they are usually adopted) to CAT courses?
- (RQ4) Are these activities effective and how are they perceived by students?

To this end, the rationale of a CAT course designed and implemented at the University of Salento (Italy) and incorporating collaborative and active learning activities will be proposed, together with a detailed data analysis of its effectiveness.

2 Theoretical Background and Related Works

2.1 Computer-Assisted Translation and Machine Translation Teaching

CAT courses are nowadays available in the majority of translation teaching curricula, at either bachelor’s or master’s level (Rothwell & Svoboda, 2019). A common format relies on laboratory-oriented courses, which are mainly dedicated to making students operationally competent in using a specific software tool (Malenova, 2019), with few courses focusing on machine translation and even fewer on software/videogame localisation. Collaborative learning practices are even rarer, and fully technology-driven training for modern translators is far from being achieved systematically on a large scale.

The inherently interdisciplinary nature of the CAT approach would require tight cooperation and interaction between educators from the computer science sector and educators from the applied-translation area, so as to provide students with a real competitive advantage, but CAT courses do not benefit from such a combined approach and these two categories of educators often operate separately.

Consequently, even though the wide availability of ICT tools has made their application in translation teaching inevitable (Ivanova, 2016) and even though TMSs have long been considered essential in translator education (Bowker, 2002), the real scenario has been mostly static for about two decades in terms of novel educational strategies for technology-assisted language teaching. Moreover, two typical obstacles can be identified.

The first hindrance is related to students’ digital competence (also referred to as digital literacy), understood as the ability to apply five basic digital skills (i.e., information and data literacy, online communication and collaboration, digital content creation, safety, problem-solving) in a confident, critical, and responsible way to a defined context (Brolpito, 2018). These basic skills vary significantly depending on geographical and social contexts, thus creating potentially relevant digital divides from country to country. According to Eurostat, digital skills of young Europeans (i.e., those aged 16–29) exhibit considerable differences, ranging from 93% of Finnish youngsters having at least one basic digital skill to 49% and 46% in Bulgaria and Romania, respectively (Eurostat, 2022). Moreover, digital skills of B.A./M.A. students are generally lower than those of computer science students and diversified among different humanities faculties (Vodă et al., 2022; Yoleri & Nur Anadolu, 2022), thus significantly limiting the possibility of covering additional topics as complex as software and video game localisation or technical foundations of MT. In order to incorporate those topics effectively in translation education curricula, more advanced digital skills are required, such as prior knowledge of programming languages, data structures, UI/UX (User Interface/User eXperience) design principles as detailed in Microsoft’s localisation guides (Microsoft, 2022), and AI/DL (Artificial Intelligence/Deep Learning) fundamentals (Maxwell Chandler & O’Malley Deming, 2011; O’Hagan, 2020).

The second obstacle is related to CAT educators, who usually come from computer science/engineering backgrounds. As a direct consequence, it is highly plausible that they master scientific and technical genres in written/spoken English as both text producers and text consumers, since they are specialists who need to communicate frequently with other specialists in the same field. However, it is far less likely that they know the translation strategies needed to deal with other languages and cultural contexts (Olohan, 2021), how to interact with LSPs, or the professional translation pipeline regulated by international standards (ISO, 2015). Therefore, the main challenge in this case is to prevent students from being trained more from a pure software tool-oriented operational perspective and less from an applied-translation perspective.

Limitations can also be observed with regard to MT topics in STT teaching (Wittkowsky, 2014). MT is usually referred to in terms of pre- and post-editing activities (Toledo Báez, 2018), with some introductory concepts supporting the basic distinction between statistical, neural, and hybrid MT (Zhang & Vieira, 2021), but without delving into the latest approaches, such as graph convolutional networks (GCN) (Suman Banerjee & Khapra, 2019; Zhang et al., 2023) or federated learning (FL) (Lu et al., 2022).

GCN allows achieving sentence-structure-aware NMT in densely connected deep architectures without suffering from performance degradation as the architecture scales, and is more suitable for hardware acceleration, thus promising to outperform traditional NMT approaches (Guo et al., 2019).

FL has proven to be beneficial for multilingual MT, as it allows machine-learning models to be trained across multiple decentralised devices (or edge computing nodes) without sharing raw data, thus preserving data privacy and security while enabling collaborative model training. As multilingual MT is often applied to large volumes of sensitive data that require complex training of mixed-domain translation models, FL can be used to overcome the need for costly data preparation techniques (Passban et al., 2022) and communication overhead between neural nodes (Roosta et al., 2021), achieving better performance than traditional MT approaches based on centralised learning (Weller et al., 2022). Although the working principles of FL are beyond the scope of a CAT course, STT students would benefit from discussing FL-related topics, such as centralised vs decentralised learning, data privacy, data distribution, and resource constraints.
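The centralised vs decentralised distinction mentioned above can be made concrete for classroom discussion with a toy example of federated averaging. The sketch below is purely illustrative and is not taken from the cited works: it trains a single scalar weight rather than an MT model, the data are synthetic (every client's targets follow y = 2x), and all function names and hyperparameters are assumptions. What it does show is the core idea that only model parameters, never the clients' raw data, are sent to the server.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (input x, target y)


def local_update(weight: float, data: List[Sample],
                 lr: float = 0.05, epochs: int = 5) -> float:
    """Run a few gradient-descent steps on one client's private data
    (mean squared error for the model y = weight * x)."""
    for _ in range(epochs):
        grad = sum(2.0 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight


def federated_average(weights: List[float], sizes: List[int]) -> float:
    """Server step: average client weights, weighted by local dataset size."""
    total = sum(sizes)
    return sum(w * n for w, n in zip(weights, sizes)) / total


def train(clients: List[List[Sample]], rounds: int = 20) -> float:
    global_weight = 0.0
    for _ in range(rounds):
        # Each client trains locally; only the updated weight leaves the client.
        updates = [local_update(global_weight, data) for data in clients]
        global_weight = federated_average(updates, [len(d) for d in clients])
    return global_weight


# Synthetic private datasets held by three hypothetical clients (y = 2x).
clients = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(3.0, 6.0)],
    [(0.5, 1.0), (1.5, 3.0), (2.5, 5.0)],
]
final_weight = train(clients)
```

With these synthetic data the shared weight converges to 2, the value that all three clients' data agree on, although the server never sees any individual sample.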

Another crucial challenge in MT is how MT models need to be trained differently for low-resource languages (i.e., languages for which limited corpora are available) and for high-resource languages. STT students should be taught about the technological approaches to cope with low-resource languages, such as model compression and knowledge distillation (Gou et al., 2023; F. Wang et al., 2021), which can increase MT accuracy and reduce the operational costs of MT service providers in the post-deployment phase (Jooste et al., 2022).

Finally, the emergence of Generative AI (GAI) technologies as alternative enablers of multilingual translation (GAI-MT) should also be considered a topic that can no longer be avoided in STT courses. GAI-MT promises accuracy, speed, and scalability (Hariri, 2023; Nguyen et al., 2022) but students should be adequately trained on how to interact properly with GAI-enabled systems through appropriate prompt engineering techniques (Roumeliotis & Tselikas, 2023) in order to improve the quality of the translation output.
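As a hedged illustration of what prompt engineering for translation might involve, the sketch below assembles a structured prompt that states the domain, the language pair, a glossary, and formatting constraints. The template wording, the function name `build_translation_prompt`, and the example glossary are assumptions made here for illustration; they are not taken from the cited works, and no specific GAI system or API is implied.

```python
from typing import Dict


def build_translation_prompt(source_text: str, source_lang: str, target_lang: str,
                             domain: str, glossary: Dict[str, str]) -> str:
    """Assemble a plain-text prompt (hypothetical template) for a GAI system."""
    glossary_lines = "\n".join(f"- {src} => {tgt}" for src, tgt in glossary.items())
    return (
        f"You are a professional {domain} translator.\n"
        f"Translate the text below from {source_lang} into {target_lang}.\n"
        "Use the following glossary consistently:\n"
        f"{glossary_lines}\n"
        "Preserve numbers, units of measurement, and formatting tags.\n"
        f"Text:\n{source_text}"
    )


prompt = build_translation_prompt(
    source_text="Tighten the bolt with a torque wrench to 25 Nm.",
    source_lang="English",
    target_lang="Italian",
    domain="technical",
    glossary={"torque wrench": "chiave dinamometrica"},
)
```

Students could compare the output obtained with such a structured prompt against the output of a bare "translate this" request, for example with respect to terminology consistency.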

2.2 Competence Frameworks: An Underestimated Opportunity

Competence models started to appear in the late 2000s as a way to schematise and combine students’ competences. In Göpferich and Jääskeläinen (2009), translation competence is associated with skills in communication, domain, strategy, psychomotricity, and translation-specific tools. Similarly, the European Master’s in Translation (EMT) (Toudic & Krause, 2017) is a framework for selecting European universities that offer translation courses satisfying a set of evaluation criteria in five competence areas: language and culture, translation, technology, personal and interpersonal, and service provision. Focusing specifically on the EMT technology competence, it requires students to be trained in:

1. Using relevant IT applications (e.g., office software) and IT resources (e.g., cloud-based applications).
2. Using effectively search engines, corpus-based tools, text analysis tools and TMSs.
3. Pre-processing, processing and managing files, media/multimedia sources.
4. Understanding the basics of MT and its impact on the translation process.
5. Assessing the relevance of MT in a translation workflow.
6. Using the appropriate MT system where relevant.
7. Applying adequate ICT tools to support language and translation (e.g., workflow management software).

European universities can apply for a renewable 5-year EMT quality label, which ascertains their effectiveness in incorporating all five competence areas in their curricula. Unfortunately, the evaluation process is extremely selective and only 3% of EU universities have been successful so far, which makes the framework only marginally effective in shaping the overall European translation teaching sector on the one hand, and splits universities into EMT and non-EMT ones on the other. Moreover, only a minority of international LSPs are currently aware of the EMT (ELIS Research, 2022). Since the potentially beneficial effects of EMT on CAT course design strategies can be directly observed in a very limited number of academic institutions, it does not yet represent a large-scale game-changer, but rather an interesting methodological reference on which to base new STT/CAT teaching approaches.

That is why, although the EMT concept seems to many little more than a missed opportunity and the path to its achievement a significant obstacle to overcome, it can represent the common grounding element on which to build rigorous and technology-driven translation teaching curricula, even without necessarily trying to apply for it. The validity of the EMT framework is also demonstrated by an interesting initiative promoted by nearly a dozen EMT universities that introduced in their CAT courses some laboratory activities that replicate the processes of real translation agencies, under the definition of Simulated Translation Bureaus (STBs) (Buysschaert et al., 2017). The STB concept may pave the way for the simulation of a cooperative learning context in translation teaching but, again, several challenges can be identified. Firstly, the number of institutions involved is very small and their geographical coverage is limited: no more than one or two institutions per European country are involved, with many countries not represented at all, and there are no similar initiatives in non-EU countries. Secondly, the STB design strategies adopted vary so much from one university to another that it is practically impossible for a non-participating CAT instructor to design and launch an STB, or to integrate it into a standard STT curriculum, without considerable preparatory work and without appropriate guidelines to support the adaptation of the STB to the specificities of the STT curriculum.

Moreover, in-depth knowledge of LSP workflows is needed. It is also noteworthy that computer scientists involved in CAT courses are usually not aware of competence frameworks or LSP/STB workflows. Similarly, a large proportion of translation teachers still perceive technology competences as being primarily associated with purely supportive computer tools needed to speed up translation.

In order to fill this gap, Sect. 4 will also propose a set of design principles for embedding an STB (as a role-playing experience) in STT curricula.

2.3 Computer-Supported Collaborative Learning (CSCL) and Active Learning

The CSCL approach has long been accepted as a useful educational strategy in universities, especially in STEM disciplines (Flores et al., 2015), as it supports group-learning activities with the aim of improving students’ individual knowledge as well as group and social skills (Ludvigsen & Mørch, 2010). Since the early 2000s, a considerable stream of scientific literature has been devoted to CSCL (Barron, 2000; Lipponen et al., 2004), thanks to technological advances favouring the collaboration among students who form a team (social constellation), physically or virtually, with the aim of achieving a specific set of learning objectives (Strijbos, 2011).

Effective CSCL requires a clear definition of individual student responsibility and a clear determination of whether a positive interdependence exists between individual outcomes and group performance (Wang, 2009). Both of these elements are supported by (at least) a teacher who plays a coordinating role, through scripting (Dillenbourg, 2002) and scaffolding (Rienties et al., 2012; Shin et al., 2020), which could progressively fade out in order to let students acquire greater autonomy. Teacher-led coordination is pivotal, especially at the beginning, as students must receive indications on how to carry out group tasks, activate problem-based learning dynamics, and exploit available software platforms properly (Hernández-Sellés et al., 2020).

When it comes to the design of CSCL experiences, several approaches are available, depending on whether educators focus more on knowledge, communication, collaboration, motivation, or pragmatism as learning objectives (Meijer et al., 2020). Very often, however, these elements are addressed separately, without fruitfully combining the different components of the learning environment. The Group Learning Activities Instructional Design (GLAID) framework was proposed in 2016 (Strijbos, 2016) in order to support educators in designing CSCL experiences with eight design components (Ci) and five stages (Si):

- S1. Analysis: profiling of students, teachers, and curriculum.
- S2. Design:
  o C1: interaction patterns among students to achieve pre-defined targets,
  o C2: individual and group learning objectives and outcomes,
  o C3: formative and/or summative assessment strategies focusing on both individual students and groups.
- S3A. Development (didactics):
  o C4: description of student task features (e.g., type, duration, frequency, etc.),
  o C5: structuring and timing of the CSCL experience,
  o C6: student guidance (i.e., scaffolding) policies and dynamics, along with the expected role of the educators.
- S3B. Development (logistics):
  o C7: organisation of student group constellation (e.g., composition, type, number of participants, level of heterogeneity, etc.),
  o C8: facilities enabling teaching and learning in the CSCL experience.
- S4. Implementation: performing and monitoring the instructional process.
- S5. Evaluation: analysis of learning processes and learning outcomes.

Group learning and active learning, some of the core elements in CSCL, need careful planning in order to avoid disappointing results when transferred to CAT courses in STT teaching. Group learning requires an orchestration between supporting technologies and pedagogy (Dillenbourg, 2013), but students must be familiar with those technologies, and teachers from the computer area should be trained in the most suitable pedagogical strategies when translation studies are involved. Similarly, active learning is much more widely exploited in computer science courses to promote student-to-student and student-to-teacher interactions and improve learning outcomes (Chilukuri, 2020; Sobral, 2021), while it does not represent a primary typology of learning activities in CAT courses, except for the STBs implemented in a few European universities (Loock et al., 2017), whose diversity, however, shows that no common design methodologies have been adopted so far. Therefore, the GLAID framework will be adopted to design one of the CSCL-based activities in the proposed CAT course, as explained in Sect. 4.

Table 1 summarises the various related studies, frameworks and the educational strategies and approaches discussed in Sect. 2, together with their positive aspects, limitations, and their prospected use in CATDeM.

3 Technological Landscape

According to Nimdzi’s 2022 annual technology report (Akhulkova et al., 2022), there are more than 800 software applications supporting scientific translators. These tools are grouped into nine categories, covering computer-assisted translation, machine translation, and audio-visual translation. Such a wide range of alternatives is somewhat intimidating for students and even for teachers, who very often decide, as a consequence, to focus primarily on the tool that is currently favoured by the market, since it is plausible to assume that, when students start their professional careers, most translation agencies and bureaus will require them to use that specific tool. Consequently, many CAT courses today cover Trados Studio (Footnote 3), the platform that holds 80% of the global translation market share (Verified Market Research, 2022). Moreover, it is worth noting that tools for corpus linguistics are not listed in Nimdzi’s report, although they should be included because of their role in training MT engines. This category alone consists of more than 200 additional applications, according to various lists and repositories directly maintained by communities of computational linguists (Corpus-Analysis.com, 2022).

The following subsections will now deal with the most relevant categories of tools for the CAT domain.

3.1 Translation Management Systems (TMS) and Translation Business Management Systems (TBMS)

These tools, which are fundamental to any modern translator (Shuttleworth, 2015), support the translation of language assets in multiple digital formats according to a rigorous processing pipeline (RWS Group, 2021). Once the documents to be translated (i.e., source documents) are provided as input, an automated workflow guides the translator through project configuration settings to a translation editor where source documents can be actually translated. The translator focuses solely on the textual content. The TMS controls all non-translation tasks and provides the final documents (i.e., target documents) as output, applying the graphical layout and structure of the original documents to the translated text.

The TMS also allows managing and reusing linguistic resources (RWS Group, 2021) via dictionaries, glossaries and, most importantly, translation memories (TMs), which are bilingual databases of aligned sentences (i.e., every source sentence is stored along with its translation in a given language, the first time it is delivered by the human translator). Each time a new sentence needs to be translated, the TMS searches the TM: if a match is found, the corresponding translation can be retrieved and reused or modified, thus saving considerable time and effort.
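The TM search described above can be illustrated with a minimal sketch. It assumes a toy in-memory TM (a plain dictionary) and uses the character-based similarity ratio from Python's standard `difflib` module as a stand-in for the proprietary fuzzy-match scoring of real TMS platforms. The function name `tm_lookup`, the 0.75 threshold, and the sample segments are illustrative assumptions.

```python
from difflib import SequenceMatcher


def tm_lookup(tm, source, threshold=0.75):
    """Return (score, stored_source, translation) for the best TM match, or None.

    The score is difflib's similarity ratio (1.0 means identical strings);
    commercial TMSs use their own, more refined, match scoring.
    """
    best = None
    for stored_source, translation in tm.items():
        score = SequenceMatcher(None, source.lower(), stored_source.lower()).ratio()
        if best is None or score > best[0]:
            best = (score, stored_source, translation)
    if best is not None and best[0] >= threshold:
        return best
    return None


# Toy English-to-Italian TM (illustrative data only).
tm = {
    "Press the power button.": "Premere il pulsante di accensione.",
    "Remove the battery cover.": "Rimuovere il coperchio della batteria.",
}

exact = tm_lookup(tm, "Press the power button.")   # identical segment
fuzzy = tm_lookup(tm, "Press the reset button.")   # similar segment, to be edited
miss = tm_lookup(tm, "Update the firmware.")       # nothing similar enough
```

In this sketch an exact match can be reused as it is, a fuzzy match is offered to the translator for modification, and a miss means that the segment has to be translated from scratch.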

The creation and management of translation projects is also an important feature of TMSs. Moreover, translation companies can rely on special TMSs specifically tailored to business aspects, known as Translation Business Management Systems (TBMSs), which offer quoting, invoicing, client history, personnel management and payment, and which can be integrated with TMSs. As can be easily guessed, translation students, and STT students in particular, need to be familiar with TMSs (Alkan, 2016; Tarasenko et al., 2019), while TBMSs are usually not covered.

3.2 Software Localisation Management Systems (SLMS)

Software localisation (Footnote 4) is the process of adapting a software product or video game for a specific market, so that all its components (e.g., UIs, graphics, navigational elements, dialogues, measurement units, character sets and fonts, local regulations, copyright claims, etc.) are not only translated but also perceived by end users as specifically designed for their linguistic and cultural context. Localisation is much more than just the translation of text documents: software localisers face many more challenges and pitfalls than translators. First, the timing is different: usually, digital documents are translated once the source text has been finalised, whereas software products are very often localised during the development process (or in the early stages of testing) so that they can be shipped to multiple markets simultaneously. Second, multiple adaptations may be required to the UI (e.g., form resizing, dialogue adaptation, etc.) as well as to any graphics containing visible and readable text. Third, the translated elements must fit back into the original software product, so it is necessary to check that all functionalities work properly. Software localisers are typically involved in close collaboration with software developers and have to adapt to different working schemes (e.g., Agile, DevOps) (Lenker et al., 2000).

SLMSs allow translators to integrate the translation workflow seamlessly into the software development pipeline, eliminating unnecessary code snippets that would only slow down the translation process and would pose significant risks of accidental code misplacement. Consequently, these platforms can be used only after appropriate training, which requires explaining in advance: (1) the file formats that a software localiser will encounter in daily activities (e.g., JSON, XML, YAML files, etc.), (2) the software development pipeline, (3) the structure of a software product and the placement of translatable assets. For these reasons, SLMSs are rarely addressed in CAT courses (Zhang & Vieira, 2021) and related hands-on activities are very difficult to implement, even though the market demand for software localisers is growing rapidly, especially in the mobile app sector (Omar et al., 2020; Schroeder, 2018).
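To give an idea of where translatable assets sit inside a typical resource file, the following minimal sketch flattens a nested JSON document into translator-friendly key-string pairs. The file content, the key names, and the helper `flatten_strings` are illustrative assumptions and do not reproduce the import logic of any specific SLMS.

```python
import json


def flatten_strings(node, prefix=""):
    """Yield (dotted_key, text) pairs for every string value in nested JSON objects.

    Non-string leaves (numbers, booleans, lists) are skipped in this sketch.
    """
    if isinstance(node, dict):
        for key, value in node.items():
            child = f"{prefix}.{key}" if prefix else key
            yield from flatten_strings(value, child)
    elif isinstance(node, str):
        yield prefix, node


# Hypothetical resource file of a small application.
resource = json.loads("""
{
  "menu": {"start": "Start game", "quit": "Quit"},
  "dialog": {"greeting": "Hello, {player}!"},
  "settings": {"max_saves": 5}
}
""")

pairs = dict(flatten_strings(resource))
for key, text in pairs.items():
    print(f"{key} = {text}")
```

Only the string values reach the translator, while keys, structure, and non-textual settings stay untouched, which is the separation between code and text that the platforms discussed above aim to guarantee.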

3.3 Translation Quality Management Systems (TQMS)

Their pivotal role is to support translators in identifying, tracking, and resolving issues hampering the quality of translated documents (Vela-Valido, 2021), according to specific standards such as ISO 17100:2015 (ISO, 2015) on regulatory requirements and client guidelines. TQMSs apply different types of evaluation criteria, such as spelling and grammar correctness, terminology consistency, text meaning and style, readability level, adaptation of culture-specific aspects, and adequacy of formats (e.g., dates, units of measurement, and currencies). They can focus on quality control (QC, basic quality controls to check whether requirements are met) or quality assurance (QA, planned procedures to manage quality controls). TQMSs are rarely covered in CAT courses (Zhang & Vieira, 2021), although they are a must for any professional LSP (especially those working on the international market). As an educational replacement, special TMSs with TQA functionalities, such as MemsourceQA (Memsource, 2021), can be used.
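Two of the evaluation criteria listed above (terminology consistency and adequacy of numeric formats) can be approximated with a few lines of code. The sketch below is a deliberately naive QC check under stated assumptions: the glossary, the sample segments, and the function `qc_segment` are hypothetical, and real TQMSs rely on far richer linguistic rules.

```python
import re


def qc_segment(source, target, glossary):
    """Return a list of QC issues found in one source/target segment pair."""
    issues = []
    # Terminology: each glossary term found in the source must be rendered
    # with its approved target term.
    for src_term, tgt_term in glossary.items():
        if src_term.lower() in source.lower() and tgt_term.lower() not in target.lower():
            issues.append(f"terminology: expected '{tgt_term}' for '{src_term}'")
    # Numbers: the digit groups in source and target should coincide.
    if sorted(re.findall(r"\d+", source)) != sorted(re.findall(r"\d+", target)):
        issues.append("numbers: source and target differ")
    return issues


glossary = {"translation memory": "memoria di traduzione"}

ok = qc_segment(
    "The translation memory holds 250 segments.",
    "La memoria di traduzione contiene 250 segmenti.",
    glossary,
)
bad = qc_segment(
    "The translation memory holds 250 segments.",
    "Il database contiene 205 segmenti.",
    glossary,
)
```

Checks of this kind belong to QC in the sense given above, since they only verify whether basic requirements are met on each segment.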

3.4 Machine Translation Engines (MTE)

These are software solutions capable of automatically translating a source text into another (set of) language(s) without any human intervention (Garg & Agarwal, 2018; Xu & Li, 2021). Statistical MT (SMT) methods have been studied for years, but today deep-learning-based MT (namely, neural MT or NMT) offers better performance when properly trained, especially when translating between languages with different word order. NMT relies on encoder-decoder architectures, where each source sentence is encoded into a numerical representation, which is then used by the decoder to output the target sentence.

In language courses, students leverage MTEs with profitable learning outcomes (Raad, 2020), while students in translation courses should focus on other aspects, such as: (1) evaluating or post-editing (i.e., correcting) the MTE output; (2) pre-editing (i.e., simplifying) source texts to help the MTE achieve better translation quality; (3) understanding how MT algorithms actually work; (4) appropriately using MT also in CAT contexts (Toledo Báez, 2018); (5) exploring MT functionalities when embedded in automatic subtitling and dubbing applications (Koponen et al., 2020). However, courses/modules dedicated to MT usually focus on manual post-editing techniques, overlooking a whole branch of translation quality assurance (TQA) and text annotation tools that would help students significantly.

3.5 Corpus Linguistics Tools (CLT)

Considerable quantities of texts (known as text corpora) are needed to train both NMT and SMT algorithms, especially for scientific texts (Tehseen et al., 2018). Usually, the larger the number of words/sentences in the corpus, the higher the resulting quality of the MT algorithm (Rikters, 2018). In order to build these corpora quickly, texts are automatically extracted from multiple sources (e.g., Web repositories, digitised documents, etc.). The problem with automatic text extraction is that the gathered data are noisy, because incomplete or wrong sentences are very often present alongside the correct ones. Since the quality of the training dataset directly affects the resulting quality of the MT algorithm (especially when NMT is involved) (Rikters, 2018), it is crucial to carefully select the sources, adopt correct corpus-building procedures, pre-process the resulting dataset whenever possible, and assess its overall quality.
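The pre-processing step mentioned above can be sketched with a few heuristic filters. The following example is only an illustration: the rules (empty sides, untranslated copies, implausible length ratios, duplicates), the threshold `max_ratio`, and the sample pairs are assumptions chosen for clarity, not the procedure of any specific corpus-building toolkit.

```python
def clean_pairs(pairs, max_ratio=2.5):
    """Filter noisy (source, target) sentence pairs with simple heuristics."""
    seen = set()
    kept = []
    for src, tgt in pairs:
        src, tgt = src.strip(), tgt.strip()
        if not src or not tgt:
            continue  # incomplete pair: one side is empty
        if src == tgt:
            continue  # probably an untranslated copy (heuristic)
        ratio = max(len(src), len(tgt)) / min(len(src), len(tgt))
        if ratio > max_ratio:
            continue  # implausible length mismatch, likely misaligned
        if (src, tgt) in seen:
            continue  # exact duplicate
        seen.add((src, tgt))
        kept.append((src, tgt))
    return kept


raw = [
    ("The antenna radiates energy.", "L'antenna irradia energia."),
    ("The antenna radiates energy.", "L'antenna irradia energia."),  # duplicate
    ("Figure 3", ""),                                                # empty target
    ("Click here", "Click here"),                                    # untranslated
    ("Yes.", "Il segnale viene trasmesso alla stazione base."),      # misaligned
]
clean = clean_pairs(raw)
```

Heuristics like these inevitably discard some valid pairs (e.g., segments that are legitimately identical in both languages), so thresholds should be tuned and the discarded material inspected.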

Even if translators are not involved directly in MTE configuration and training process, they can exploit CLTs, which essentially are speech and language processing software applications (Anthony, 2013), to create parallel text corpora usable as a source of translation suggestions for both MT engines and human translators. A parallel text corpus is a collection of monolingual source texts, stored together with their translation into one or more languages (parallel): each text is chunked into sentence-like segments linked in a 1:1 relationship (alignment) to the segments corresponding to their translation in another language stored in the corpus. In addition, CLTs allow the user to analyse collections of texts and to identify typical/unusual language use patterns, concordances, co-occurrences.
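A minimal sketch of a parallel corpus with 1:1 alignment and of a keyword-in-context (KWIC) concordance search is given below. The data structure (a list of source/target tuples), the function `concordance`, and the sample segments are illustrative assumptions and do not mirror the internals of any particular CLT.

```python
import re


def concordance(aligned, keyword, width=20):
    """Return (left, keyword, right, aligned_target) for each hit on the source side."""
    hits = []
    pattern = re.compile(rf"\b{re.escape(keyword)}\b", re.IGNORECASE)
    for source, target in aligned:
        for match in pattern.finditer(source):
            left = source[max(0, match.start() - width):match.start()]
            right = source[match.end():match.end() + width]
            hits.append((left, match.group(0), right, target))
    return hits


# Each tuple is one aligned segment pair (1:1 alignment), English-Italian.
aligned = [
    ("The signal is amplified before transmission.",
     "Il segnale viene amplificato prima della trasmissione."),
    ("Noise degrades the signal quality.",
     "Il rumore degrada la qualità del segnale."),
    ("The antenna is mounted on the roof.",
     "L'antenna è montata sul tetto."),
]

hits = concordance(aligned, "signal")
for left, word, right, target in hits:
    print(f"{left:>20}[{word}]{right:<20} | {target}")
```

Because every source segment is linked to its translation, each concordance line can show the keyword in context together with the way it was actually translated, which is what makes such corpora usable as a source of translation suggestions.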

Very rarely dealt with in CAT courses (Zhang & Vieira, 2021), CLTs represent an important addition to the so-called translator’s workbench (Delpech, 2014), as they can be used in several other language and translation-related activities. CLTs should also be considered when designing the educational offerings in this sector as they provide a convenient way to perform language data management (LDM) (Li & Rafiei, 2018), which is becoming increasingly important not only for MT (Eetemadi et al., 2015; Rikters, 2018), but also for automatic text labelling and annotation (Gries & Berez, 2017), text summarisation (Tawmo et al., 2022), sentiment analysis (Stuart et al., 2016), and the creation of natural language interfaces to data repositories (Quamar et al., 2022). Unfortunately, LDM is largely underestimated in translation studies (Zhang & Vieira, 2021).

Table 2 lists the most significant software tools for the CAT domain, grouped by the categories considered so far.

4 CATDeM

The second research question focuses on proposing a set of course design guidelines for computer scientists and researchers involved in teaching CAT courses (B.A./M.A. in translation studies). Normally, educators focus more on technical aspects and there is a risk of overlooking highly significant elements of the STT education as well as in-domain competence frameworks. In order to introduce a fundamental ICT-oriented perspective in translation studies, which is usually underestimated despite the growing use of cloud-based platforms by professional translators and language service providers (LSPs), this section presents course design guidelines and their implementation in a real course (a 1st-year, one-semester compulsory course in the M.A. in STT and interpreting at the University of Salento, Italy).

4.1 Design Methodology

In order to make the CAT course structure outputted by CATDeM replicable in other universities and compatible with the professional translation workflows (PTWs) adopted by LSPs, a multi-stage design methodology was borrowed from business process modelling (BPM), called the Define-Model-Execute-Monitor-Optimise (DMEMO) cycle (BPM Resource Center, 2014). The core concept in DMEMO is to identify goals, requirements, and constraints so that a process can be modelled and subsequently executed. The process execution must be monitored against pre-defined evaluation metrics and then improvement actions can be introduced depending on the monitoring outcomes. After that, the DMEMO cycle starts again.

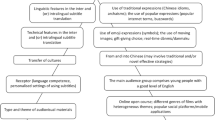

To transfer the DMEMO cycle to the CAT context, it has been assumed that the course represents the process and that each cycle coincides with an academic year. Each stage (Fig. 1) is defined as follows.

- Define: this is a teacher-only activity that needs to involve educators from both computer science and translation studies. It entails the definition of targeted skills (i.e., all EMT competences), purposes (i.e., learning objectives) and technological enablers (e.g., tools, needed educational software licences, etc.). The original DMEMO cycle requires that business modelling strategies are set during this stage: from the CAT-oriented perspective, such strategies coincide with the CSCL component needed to simulate a translation bureau (Sect. 4.5.2).
- Model: this stage defines (1) involved students and teachers, (2) required resources and technologies, (3) evaluation approaches, (4) collaborative/active learning strategies (i.e., discussion case studies, role-plays).
- Execute: this stage starts with a preparation period to set up the necessary tools and platforms, followed by the actual work period (i.e., classroom instructional hours and laboratory activities).
- Monitor: this stage covers evaluation and assessment. Formative pre-grading assessments are provided by CAT and translation teachers for scaffolding purposes, during case studies and role-playing activities. Summative regular grading covers course topics (2/3 of the overall score) and role-playing (1/3 of the overall score). Summative post-grading is carried out via an online survey and only involves students who have been assessed. Monitoring results are crucial to guide the final stage.
- Optimise: monitoring outcomes are examined to identify potential improvements for the next academic year. Collaborative thinking sessions among the educators involved are typically exploited to that end.

Fig. 1 Proposed CAT course design workflow based on the DMEMO cycle (BPM Resource Center, 2014)
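The weighting used for summative regular grading in the Monitor stage (2/3 for course topics, 1/3 for role-playing) can be written as a one-line computation. In the sketch below, the function name and the sample marks are hypothetical, and the assumption that both components are expressed on the same scale is introduced only for illustration.

```python
def final_score(topics_score, role_play_score):
    """Combine the two summative components with weights 2/3 and 1/3.

    Both scores are assumed to be expressed on the same scale.
    """
    return (2 * topics_score + role_play_score) / 3


# Hypothetical marks for one student.
score = final_score(27, 30)
print(score)
```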

4.2 Learning Goals

The proposed learning outcomes are grounded on the EMT framework (Toudic & Krause, 2017), so as to create an appropriate balance between the expectations of the educators from the field of translation studies and the typical teaching approach adopted in computer science, which is based on skills-lab-like experiences and on a strong presence of computer-supported activities. Therefore, the EMT is leveraged as the common knowledge ground where shared learning goals are defined and CSCL dynamics are activated (Clark et al., 2002). In this way, students who complete the course will be able to achieve the following learning goals (LGs):

- (LG1) mastering current technology-driven trends in CAT and MT, in order to be capable of working profitably and independently in business-like scenarios;
- (LG2) learning the advantages of TMS platforms for STT, along with their limitations with other translation typologies;
- (LG3) knowing and directly experiencing PTW, as well as contextualising TMS platforms in PTW;
- (LG4) understanding translation quality principles, industry reference standards, and resource sharing (e.g., translation memories, termbases, etc.) to boost cooperative work;
- (LG5) acquiring MT basics, along with pre-editing, post-editing, and MT-TMS coupling dynamics;
- (LG6) exploring advanced MT topics, such as technologies involved (statistical vs neural), training principles (e.g., corpus-based training), and translation quality assurance procedures;
- (LG7) experiencing software localisation tasks and examining how they differ from traditional translation;
- (LG8) approaching the cross-disciplinary importance of a data-oriented perspective when dealing with language data.

4.3 Content Structure

Students are introduced to a series of EMT-compliant topics (T) of increasing complexity from a computer-oriented perspective. As a second objective, those topics are designed to cover the broadest possible range of enabling tools, while at the same time stimulating a constant awareness of the targeted professional dimension. Current and future trends in the sector are constantly brought into focus, to stimulate student interest and motivation. Table 3 relates course topics to learning objectives and anticipates their relationship to collaborative learning activities (thoroughly explained in Sect. 4.5).

Topics are organised as follows.

- T1. CAT basics and terminology. The course begins with an introduction to the basic concepts and definitions of the CAT domain. The significance of adopting computer-assisted solutions to improve translation quality, efficiency, and consistency is discussed with real-world examples. All the necessary elements common to most TMSs are explained to students, so that they can understand the role and the importance of translation projects, translation memories, glossaries, and translation quality checkers. Contextual awareness is provided in the form of typical application scenarios, with the aim of fostering students’ critical thinking and situated learning.

- T2. TMS. These platforms represent the core content of any CAT course: here, several TMSs are presented, highlighting their common features and their ability to perform the same tasks (e.g., creating a translation project, populating a translation memory, exporting a terminology database, etc.), albeit with different approaches. Indeed, one of the most underestimated difficulties for students in this field is the risk of becoming “locked in” to a particular software product, so that they continue to use the same tool they were introduced to, even long after they have finished their studies. This issue is mainly due to the fact that students start CAT courses with little computer literacy and, therefore, do not possess enough operational autonomy to switch from one TMS to another with confidence depending on their needs (e.g., document availability, backward compatibility of file formats, translation delivery constraints, compliance with standards, etc.). For this reason, the TMS-specific course module addresses the market-leading, desktop-based TMS (i.e., Trados Studio), a cloud-based TMS used to perform collaborative learning activities (i.e., Memsource, Footnote 5; see Sect. 4.5.2), and a freeware desktop tool (i.e., OmegaT) that is a valid alternative to the other two (since Trados Studio is a commercial product and Memsource is free for academic purposes only).

- T3. MT: from training to post-editing. When it comes to MT, many students perceive it as a handy way of achieving quick and ready-to-use target documents. The first part of this topic aims to debunk this misconception in favour of a rigorous and systematic approach to how MTEs work and how they are trained, according to their type (e.g., neural vs statistical). Subsequently, the basics of pre-editing (Miyata & Fujita, 2016) and post-editing (Arenas & Moorkens, 2019) are introduced, involving students in practical discussion case studies (see Sect. 4.5.1). Specific attention is paid to comparing translators and post-editors, who differ in their methodological approaches and the software tools they need to accomplish their tasks.

- T4. TQA and standards. This topic is closely related to T2 and T3, since TQA is crucial for distributing machine-translated or human-translated texts. Both manual and automatic TQA procedures must be considered. To address the former, students must be instructed on error taxonomies (Lommel, 2018; Mariana et al., 2015; Vardaro et al., 2019), which help them detect and classify errors by type and severity (Shi et al., 2019). As for automatic procedures, reference metrics such as BLEU (Papineni et al., 2002), METEOR (Banerjee & Lavie, 2005), or TER (Snover et al., 2006) must be examined. Students may also benefit from the use of text annotation tools.

- T5. PTW. A detailed analysis of typical LSP activities is proposed to the students, with a constant focus on tools and real scenarios. Reference standards (ISO, 2015) are discussed and different professional roles (e.g., project manager, translator, reviewer, post-editor, etc.) are compared. This topic will be directly and thoroughly addressed during the role-playing activity (Sect. 4.5.2).

- T6. SW-L10N. This type of translation is proposed to the students by means of a 6-criteria comparison with document translation.
  - i. Process lifecycle: the two translation processes are compared and their key features are highlighted.
  - ii. Subject domain: students learn that a software application contains texts from different domains at the same time, such as IT (e.g., technical settings), in-domain content, laws and regulations (e.g., disclaimers), dialogues and narratives (e.g., in video games), and so on.
  - iii. Source text structure: in software localisation, the source text may change several times, depending on the development approach adopted (e.g., DevOps, Agile methodology, etc.) (Kasakliev et al., 2019). Therefore, students are involved in examining typical software development workflows in order to understand when and how frequently software localisation tasks are required.
  - iv. Text and file formats: these are examined as they normally depend either on the specific programming language or on the specific string file format (e.g., .xls/.xlsx, .json, .strings, etc.).
  - v. Context-awareness: students are instructed on how to manage decontextualised strings coming from a software app, whose logical order may be apparently missing and which usually contain a considerable number of variables and source-code comments. These aspects are conveyed and examined by means of translation kits (Footnote 6) prepared by the teacher.
  - vi. Localisation testing: students learn how to evaluate the quality of translated text assets (e.g., language testing checklists, software testing approaches and cycles, etc.) (Mangiron, 2018; Sharma & Bhatia, 2014). This topic is supported by a dedicated discussion case study (Sect. 4.5.1).

- T7. LDM fundamentals. The last topic introduces students to a concept with which they are not familiar. Considering texts as language-related data, instead of simple elements to translate, is something they are not able to perceive at the beginning of the course, although this aspect is becoming more and more crucial, especially in the EU (ELRC Consortium, 2019). However, previous topics (especially T1-T3) help to pave the way towards LDM from two perspectives. First, the language data lifecycle (Mattern, 2022), where data are collected from existing sources (e.g., text corpora) or created (e.g., by extracting them from interviews), catalogued and organised, annotated (to enrich them with additional information content) or described, processed (e.g., simply translated, or used to train MTEs), preserved/archived, shared or even exchanged in a dedicated marketplace (TAUS, 2021), and eventually deleted. Second, thanks to a dedicated case study (Sect. 4.5.1), it will be explained how to handle language data under specific conditions (e.g., translation of sensitive documents, use of cloud-based TMSs, localisation of text assets from unreleased video games), so that data ownership, copyright, and security requirements are considered (Moorkens & Lewis, 2019; Seinen & Van Der Meer, 2020).
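As a classroom-level illustration of the automatic TQA metrics named under T4, the sketch below computes a simplified edit rate between an MT output and a reference translation. It is not the full TER metric: TER as defined by Snover et al. (2006) also counts block shifts as single edits, whereas this simplified version only counts word insertions, deletions, and substitutions. The function names and the sample sentences are illustrative assumptions.

```python
def word_edit_distance(hyp, ref):
    """Levenshtein distance between two token lists (insert, delete, substitute)."""
    prev = list(range(len(ref) + 1))
    for i, h in enumerate(hyp, start=1):
        curr = [i]
        for j, r in enumerate(ref, start=1):
            cost = 0 if h == r else 1
            curr.append(min(prev[j] + 1,          # delete a hypothesis word
                            curr[j - 1] + 1,      # insert a reference word
                            prev[j - 1] + cost))  # keep or substitute
        prev = curr
    return prev[-1]


def simplified_edit_rate(hypothesis, reference):
    """Word edits needed to turn the hypothesis into the reference, per reference word."""
    hyp, ref = hypothesis.split(), reference.split()
    if not ref:
        raise ValueError("the reference must contain at least one word")
    return word_edit_distance(hyp, ref) / len(ref)


reference = "il segnale viene trasmesso alla stazione base"
mt_output = "il segnale è trasmesso alla stazione"

rate = simplified_edit_rate(mt_output, reference)
print(f"{rate:.3f}")
```

Here two edits (one substitution and one insertion) are needed against a seven-word reference, so lower values indicate an MT output that requires less post-editing effort.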

4.4 Teaching Approach and Learning Platforms

The instructional part of the course spans 14 consecutive weeks and involves a combination of complementary teaching strategies to keep students engaged throughout the entire period. To this end, the very first frontal lessons about CAT principles and definitions are soon followed by a series of practical, hands-on laboratory sessions enriched with computer-supported collaborative learning (CSCL) and active learning activities. These two approaches are triggered by group work on selected discussion case studies (Sect. 4.5.1) and a role-playing experience (Sect. 4.5.2). While the case studies are 1-week-long activities distributed regularly throughout the course, role-playing is carried out in the last three weeks of the course.

It is noteworthy that the collaborative and active learning dimensions are fundamental to allow students to perceive the proposed topics as an interesting landscape to explore in the light of their prospective professional careers, rather than just a stack of overly complex ICT-related notions that they will have to struggle with due to their initially scarce expertise in informatics.

Moreover, since the CAT educator may come from the computer-engineering sector (which is the norm in this scenario and which is also true for the proposed case study), there may be a provenance divide with students and other educators from traditional courses on translation. In order to bridge this gap and preserve the effectiveness of the course, the educator should assume the role of facilitator and appropriately leverage the advanced features offered by any online learning platform already available. Another relevant aspect is that the expected improvements in learning outcomes should be shared in advance and explicitly with students; otherwise, learning platforms will be perceived as an additional operational burden to bear during the course. Instead, online learning platforms have long been regarded as valuable teaching aids, especially when they enable cooperative work, under the definition of groupware platforms (Ellis & Wainer, 2004). To this end, Microsoft Teams is used, since it was adopted at this university to enable remote teaching and distance learning during the SARS-CoV-2 pandemic and, therefore, students are already familiar with it. The platform offers typical features such as document sharing, instant chat messaging, and audio-visual conferencing. Microsoft Teams also supports students via a course-specific Wiki and serves as a full groupware for the role-playing experience (Sect. 4.5.2).

4.5 Collaborative and Active Learning Activities

4.5.1 Discussion Case Studies

Three course topics are linked to typical case studies from the translation industry sector: MT, SW-L10N and LDM. The case studies are presented according to a pre-defined analysis pattern (Table 4), which specifies:

- (1) Involved stakeholders,
- (2) Initial motivations and requirements,
- (3) Enabling tools,
- (4) Needed tasks/interventions,
- (5) Required standards, process workflows, and guidelines,
- (6) Expected challenges,
- (7) Possible mitigation strategies.

Students learn how to deal with specific situations in real-life scenarios. Similarly, students are also encouraged to approach work contexts in a systematic and methodical way; they are provided with tools, supporting files, and teaching materials for each case study. The active learning component is initiated by the teacher/facilitator, who starts the discussion by explaining the scenario, then provides adequate context awareness, and finally guides the students through the different steps of the analysis pattern listed above. A demo session is also performed by the teacher for each case study and then the students are requested to try the same procedure on their own (possibly in groups). It is crucial for the teacher to encourage the students to develop critical-thinking and decision-making approaches, so that the expected challenges and mitigation actions elicited in the analysis pattern are properly considered. Finally, in order to boost student involvement, the case studies are incorporated into the course as non-evaluative activities, so that group discussions are stimulated without any anxiety due to evaluation expectations.

The first discussion case study refers to a translation agency that decides to use an MT pipeline to translate a set of source documents supplied by an engineering company from English into Italian. The documents describe 5G wireless communication systems and potential risks to human health. MT is required because the agency is assumed not to have any in-house or freelance translators with the needed expertise on that specific topic and language pair. A freely available MTE (i.e., DeepL) is selected and it must be used in conjunction with a typical TMS platform (i.e., Trados Studio), assuming that a valid DeepL API key is available (so that students have to check autonomously how the integration procedure is carried out). Since the adopted MT pipeline requires a full human post-editing intervention, students are required to refer to the corresponding TQA and MTPE guidelines (Lommel, 2018; Mariana et al., 2015; Vardaro et al., 2019), as well as to the ISO 17100:2015 standard (ISO, 2015). Students are also encouraged to reflect on the usefulness of a text annotation tool (i.e., CATMA) to post-edit the MT output. In the real-life scenario discussed, technical challenges and operational difficulties are expected: the former can be tackled by testing in advance how to integrate the APIs of the selected MTE with the agency’s TMS platform, while the latter can be mitigated by ensuring that a freelance human post-editor with adequate in-domain competence for (at least) the target language is available.
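The logic of coupling an MTE with a TMS can be sketched independently of any vendor. In the example below, each segment is first looked up in the TM and sent to the MT engine only when no exact match exists, while the origin of every draft translation is recorded for the post-editor. This is a conceptual sketch under stated assumptions: `pretranslate` and `fake_mt` are hypothetical names, `fake_mt` is a stand-in that does not call DeepL, and the code does not reproduce the actual Trados Studio plug-in mechanism or the DeepL API.

```python
def pretranslate(segments, tm, machine_translate):
    """Fill each segment from the TM when possible, otherwise from the MT callable.

    The 'origin' field tells the post-editor which drafts are raw MT output.
    """
    results = []
    for source in segments:
        if source in tm:
            results.append({"source": source, "target": tm[source], "origin": "TM"})
        else:
            results.append({"source": source,
                            "target": machine_translate(source),
                            "origin": "MT"})
    return results


def fake_mt(text):
    # Stand-in for a real MT engine call (assumption): it only tags the text.
    return f"[MT] {text}"


tm = {"5G base station": "Stazione base 5G"}
draft = pretranslate(["5G base station", "Exposure limits apply."], tm, fake_mt)
needs_post_editing = [seg["source"] for seg in draft if seg["origin"] == "MT"]
```

Passing the MT engine as a function argument keeps the sketch testable without an API key and mirrors the idea that the MTE is a replaceable component of the pipeline.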

In the second discussion case study, the localisation of a Python-based video game from English into Italian is discussed. Students are provided with a translation kit prepared by the teacher starting from an already published free open-source visual novel named “Under What?” (Gartman, 2019). The choice of a Python video game allows students to interact with code snippets with relatively low syntax complexity. The interaction with code snippets is facilitated by the teacher, who shows the differences between the original location of translatable strings in the source code and their typical translator-friendly appearance in an SLMS platform, where those strings are displayed as key-string pairs. Similarly, the selection of a visual novel means that the majority of the translatable text assets come from dialogues and narrative sections, so that the typical issues related to decontextualised and non-sequential strings can easily emerge during the discussion. In addition to the SLMS (i.e., Phrase), another useful tool allowing students to examine the software developer’s perspective is a game development environment: in this case, an IDE for Python visual novels is used (i.e., Ren’Py). Since this case study focuses on a client company that works according to the Agile software development lifecycle (Martin, 2014), the main challenge for students is to understand how Agile software localisation works, considering that developers typically push new code to the project codebase at the end of every Agile sprint and that translators/localisers are required to work in a continuous delivery mode. Students also have to learn how the SLMS platform synchronises the codebase with the translation project. In order to manage the non-sequential order of pushed strings, students are encouraged to understand the typical challenges encountered by software localisers and how to address them (Xia et al., 2013).
Typical game localisation issues such as string length boundaries, special fonts, and variables are also widely discussed (Mangiron, 2018). Suggested mitigation strategies include testing procedures and checklists. This case study can also be acted out with students involved as localisers and the teacher acting as both facilitator and software developer. An interesting variation (whose feasibility is under examination for the next academic year) would be to also involve students from an M.Sc. degree in computer science and have them play the role of a real software house.
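The contrast between strings embedded in source code and the key-string pairs shown to translators, together with the length and variable checks mentioned above, can be illustrated with a minimal Python sketch. Everything specific in it is an assumption made for the example and is not taken from the course translation kit or from any particular SLMS: the sample game code, the gettext-style `_("...")` marker, the `str_001` key naming scheme, the `{name}` variable pattern, and the 40-character limit.

```python
import re

# Hypothetical game code: translatable strings are wrapped in _("...").
SOURCE = '''
def greet(player):
    show_text(_("Welcome back, {player}!").format(player=player))
    show_button(_("Open the door"))
'''

STRING_RE = re.compile(r'_\("((?:[^"\\]|\\.)*)"\)')   # text inside _("...")
VARIABLE_RE = re.compile(r'\{[A-Za-z_]\w*\}')          # {name}-style variables


def extract_pairs(source, prefix="str"):
    """Return {key: source string} in order of appearance in the code."""
    return {f"{prefix}_{i:03d}": match.group(1)
            for i, match in enumerate(STRING_RE.finditer(source), start=1)}


def check_translation(source_text, target_text, max_length=40):
    """Return a list of issues found in one translated string."""
    issues = []
    if len(target_text) > max_length:
        issues.append(f"too long: {len(target_text)} > {max_length}")
    missing = (set(VARIABLE_RE.findall(source_text))
               - set(VARIABLE_RE.findall(target_text)))
    if missing:
        issues.append("missing variables: " + ", ".join(sorted(missing)))
    return issues


pairs = extract_pairs(SOURCE)
translations = {
    "str_001": "Bentornato, {giocatore}!",  # variable name wrongly translated
    "str_002": "Apri la porta",
}
report = {key: check_translation(pairs[key], translations[key]) for key in pairs}
for key, issues in report.items():
    print(key, issues or "ok")
```

In this toy run the first string is flagged because the variable was translated along with the text, which is the kind of error a localisation checklist is meant to catch before the strings are synchronised back to the codebase.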

The third discussion case study covers the LDM field and focuses on how to handle legal rights in a translation supply chain, where the client, the agency, and the translators hold different intellectual property rights. This scenario starts with the assumption that the agency receives a request for an English-to-Italian translation of a scientific manuscript on a novel data mining algorithm. The adopted research article (Yiwen, 2017) has indeed already been published and is openly accessible. The agency is supposed to rely solely on a cloud-based TMS (i.e., Memsource), whose servers are obviously not located on the agency’s premises. Students are first introduced to the basics of data protection requirements, represented in this case by the European General Data Protection Regulation (GDPR) (European Union, 2022), as well as to the core concept of personal data, which encompasses a broader range of data than its US counterpart PII (personally identifiable information) (Seinen & Van Der Meer, 2020). Students learn how to identify and manage personal data within language data and, then, they are challenged by the teacher to distinguish between copyright on the whole source text and copyright on individual source segments. The discussion continues by introducing, in an inquiry-based approach, the differences between copyright on source text and copyright on target text. Since the cloud-based TMS is the only tool needed in this case study, students are asked to think critically about the physical location of the source text, once it has been uploaded to the cloud repository, and of the target text, before it is downloaded from the same repository. Consequently, it is explained how to gather the necessary information from the technical documentation of the TMS.

4.5.2 Role-Playing

The STB was incorporated as the last part of the instructional period, weighted at one third of the overall examination grade and designed according to the GLAID framework (Table 5). Students were required to self-group into translation teams, each one representing a fictional translation bureau. Every team had one project manager (PM), at least two translators, and one reviewer. Team members were free to choose their PM. They also defined a fictional company logo and a fictional company name to be used throughout the role-playing experience. The free academic edition of Memsource was the enabling cloud-based collaborative platform for every team, as it provided a significant subset of functionalities typical of real LSPs.

The role-playing was initiated by translation/language teachers, who acted as fictional clients and asked each team to provide a translation service for a given set of source documents, within a given deadline, for a given language pair. Then, each PM negotiated a fictional quote (according to the typical per-word payment rate for the selected language pair), received the source documents from the fictional client, created a translation project in the available TMS platform, and assigned tasks to translators and reviewers. Upon translation and revision, a translation quality check was performed and the PM returned the target documents to the client with a fictional invoice.

5 Evaluation

5.1 Participants and Sampling Strategy

As anticipated in the previous sections, CATDeM has been evaluated in a real-life case study spanning two academic years (a.y.): it was used to design and implement two consecutive editions of a compulsory CAT course, which were delivered in 2020/2021 a.y. and 2021/2022 a.y., respectively. Students from 2021/2022 a.y. will be considered as the study group in this section, while students from 2020/2021 a.y. will be considered as the control group. The course was held in the M.A. in STT and Interpreting at the University of Salento (Italy). Students of other M.A. degrees in language studies at the same university could also choose it as an elective. However, none of these degrees offers any other course in translation technology to students, who only benefit from a compulsory module on basic computer science during the B.A. degree in order to build up their computer literacy. The instructional part (56 h, 9 ECTS) was hybrid: students mainly attended face-to-face, but they could also participate remotely via Microsoft Teams. These settings were the same in the two academic years considered. In both the study group and the control group, only students who actively participated in the instructional part of the course (i.e., attended more than 70% of the lessons) were included.

As detailed in Table 6, in 2021/2022 a.y., 24 out of 26 enrolled students (92.3%) actively participated, while 2 students did not show up after enrolling. The sample consisted of 19 female (79.2%) and 5 male (20.8%) participants. Only two of them (8.3%) attended the course outside prescribed periods. The control group is represented by the 31 actively participating students out of 40 enrolled in 2020/2021 a.y. and, even if absolute numbers are slightly different, the demographic breakdown is very similar in both years. As for the contents, LDM was not covered in 2020/2021, nor were the case studies. The role-playing experience was present, albeit with a different setup. In terms of scoring, the percentage of students who passed the exam after 2 out of 7 attempts was 91.6% in 2021/2022 and 64.5% in the previous year, while the average score was quite similar.

The role-playing experience, summarised in Table 7, was a compulsory, in-course, 3-week activity in 2021/2022 a.y., whereas in the previous a.y. it was an elective, post-course, 6-month activity. The grading mechanism was also different: it was part of the course grade in 2021/2022 and it was linked to additional ECTS in 2020/2021. As an elective, the role-playing in the previous a.y. was attended by 51.6% of active students, while the female-to-male gender ratio was 3.8 in 2021/2022 and 4.3 in 2020/2021.

5.2 Data Collection and Data Visualisation

A post-grading online survey was administered to the students in the study group, after they had passed the exam, to determine:

1. Student self-assessment of competence improvement (question Q1),
2. Student perception of the course topic’s usefulness with respect to future professional career (Q2),
3. Student opinion about the usefulness of collaborative/active learning activities in improving theoretical knowledge (Q3) and practical/interpersonal skills (Q4),
4. Student-perceived level of difficulty in learning how to use the proposed tools (Q5) and in using them (Q6),
5. Student feedback on the most interesting topic and collaborative/active learning activity (Q7),
6. Student feedback on topics and collaborative/active learning activities to improve and/or reduce (again Q7).

All questions were mandatory. Table 8 lists each question, the elements covered, and the corresponding Likert scale or request. Questions Q1 and Q3–Q6 adopted a 5-point Likert scale with a neutral central item but different assessment scales depending on the question (Vagias, 2006). The answers to these questions will be reported as simple diverging horizontal stacked bar charts, centred on the neutrality line, so as to make the distribution of respondents’ opinions immediately visible (Evergreen, 2019).
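How a diverging stacked bar is centred on the neutrality line can be made concrete with a short Python sketch. It assumes one common convention, in which the neutral category is split evenly across the zero line; the paper does not state which convention its charts follow, and the response counts below are hypothetical.

```python
def diverging_segments(counts):
    """Compute (start, width) pairs, in percent of respondents, for one bar.

    `counts` lists the responses per Likert item, ordered from the most
    negative to the most positive, with an odd number of items so that the
    middle one is the neutral item. The neutral segment straddles zero.
    """
    if len(counts) % 2 == 0:
        raise ValueError("an odd number of items is needed for a neutral centre")
    total = sum(counts)
    if total == 0:
        raise ValueError("no responses to plot")
    shares = [100.0 * c / total for c in counts]
    mid = len(shares) // 2
    # Shift the bar left by all negative shares plus half of the neutral share.
    start = -(sum(shares[:mid]) + shares[mid] / 2)
    segments = []
    for share in shares:
        segments.append((start, share))
        start += share
    return segments


# Hypothetical answers from 18 respondents to one 5-point sub-question.
segments = diverging_segments([1, 2, 4, 8, 3])
for start, width in segments:
    print(f"start={start:7.2f}  width={width:6.2f}")
```

Each `(start, width)` pair can then be passed to a plotting library as the left offset and the length of one horizontal bar segment.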

Question Q2, which asked respondents to quantify how useful the topics covered in the course could be in terms of a translator’s future professional career, used a progressive intensity 5-point Likert scale with the addition of a sixth item corresponding to the “unsure” option (score: 0). The option was deemed necessary in order not to force respondents to make such an assessment, as some of them may choose a different career at the end of their studies or may not yet have a clear idea of their professional expectations. For the “0” item in this particular scale, a decision had to be made whether to include these responses in the statistical analysis or to exclude them (i.e., complete case analysis), as they are missing valid responses. It was decided to exclude the 0-scores from the analysis as they correspond to respondents who do not actively express their opinion on the usefulness of a given topic, thus making these responses fundamentally different from the others and, therefore, not part of the overall distribution. From a data visualisation perspective, it was decided to use a chart with 100% horizontal stacked bars (Evergreen, 2019), since the assessment scale adopted does not have a neutrality line in the middle.
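The exclusion of the “unsure” responses can be sketched in Python as follows. The scores are hypothetical and the function name is introduced only for illustration; the sketch simply drops the 0-scores and then expresses each remaining score as a share of the valid responses, which is the quantity a 100% stacked bar displays.

```python
from collections import Counter


def valid_distribution(scores, unsure=0, scale=(1, 2, 3, 4, 5)):
    """Drop 'unsure' answers and return (shares in %, valid count, excluded count)."""
    valid = [s for s in scores if s != unsure]
    excluded = len(scores) - len(valid)
    counts = Counter(valid)
    shares = {
        level: (100.0 * counts[level] / len(valid) if valid else 0.0)
        for level in scale
    }
    return shares, len(valid), excluded


# Hypothetical answers of 18 respondents for one course topic (0 = unsure).
answers = [5, 4, 0, 3, 5, 4, 4, 0, 5, 2, 4, 5, 3, 4, 5, 4, 5, 4]
shares, n_valid, n_excluded = valid_distribution(answers)
print(n_valid, n_excluded, shares)
```

Because the shares are computed over valid responses only, the segments of each bar always add up to 100%, regardless of how many respondents chose the “unsure” option for that topic.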

Question Q7 asked students to indicate the most interesting topic as well as the most interesting collaborative and active learning activity. Similarly, they were asked to indicate what they would like to emphasise (in a forthcoming edition of the course) and what they would like to shorten. The visualisation chosen in this case is a three-column heatmap (Evergreen, 2019), where the elements of interest are placed side by side and the adopted colour gradient scale immediately shows those with the highest number of responses.

The response rate in the study group was 75%, with 18 respondents for each question. Although 18 respondents may seem relatively few, it is important to consider the context of the case study. Firstly, the study group had a limited number of members, as it consisted of 24 actively participating students out of a total of 26 enrolled students, as shown in Table 6. The questionnaire was only administered to actively participating students, so the maximum number of students invited to participate was actually 24. In general, such a relatively small sample size is not uncommon for specialised courses, particularly in niche areas such as STT, and especially considering the typical annual enrolment for the M.A. degree to which the CAT course covered in this case study belongs, which averages 30 students per year. Therefore, a response rate of 75% indicates a high level of student engagement and represents a significant proportion of the total eligible student population, thus suggesting a willingness to provide feedback on the course. Secondly, while a larger sample of respondents would be desirable in order to make generalisations, the goal of our case study was not to make broader assumptions but rather to gain insights into the self-perceived skills and attitudes of the students who had taken the course designed according to CATDeM. Therefore, by focusing on a specific cohort (i.e., the 2021/2022 cohort), we aimed at exploring the effectiveness of the course structure and at identifying areas for further improvement.

5.3 Results and Findings

The first six questions in the survey were all associated with Likert scales and each covered different aspects, one per sub-question, as shown in Table 8. More specifically, all five EMT competencies were covered by Q1, all seven course topics by Q2, role-playing and all three discussion case studies by Q3 and Q4, and the four main tool categories by Q5 and Q6, for a total of 28 sub-questions. Question Q7 asked for two responses about the perceived level of interest, need for improvement, and need for reduction in course topics and collaborative learning activities, for a total of 6 additional responses per respondent.

Before examining the corresponding Likert charts, a statistical analysis is now provided. Descriptive statistics are reported in Table 9. For each question and corresponding topic, the following elements are considered: number of valid/excluded responses, mean, median (MED), standard deviation (STD), interquartile range (IQR), range, skewness and kurtosis, minimum (MIN), and maximum (MAX) values.
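For readers who wish to reproduce this kind of summary on their own survey data, the listed statistics can be computed with the Python standard library, as in the minimal sketch below. Several choices are assumptions, since the paper does not specify them: the standard deviation is the sample (n − 1) version, quartiles use the inclusive interpolation method, and skewness and kurtosis are the plain moment-based population estimates, with kurtosis reported as excess kurtosis. Statistical packages often apply small-sample corrections, so their output may differ slightly.

```python
import statistics


def describe(scores):
    """Descriptive statistics for one list of valid Likert scores (needs >= 2 values)."""
    n = len(scores)
    mean = statistics.fmean(scores)
    q1, _, q3 = statistics.quantiles(scores, n=4, method="inclusive")
    # Central moments used for the moment-based skewness and kurtosis.
    m2 = sum((x - mean) ** 2 for x in scores) / n
    m3 = sum((x - mean) ** 3 for x in scores) / n
    m4 = sum((x - mean) ** 4 for x in scores) / n
    return {
        "n": n,
        "mean": mean,
        "median": statistics.median(scores),
        "std": statistics.stdev(scores),      # sample standard deviation
        "iqr": q3 - q1,
        "range": max(scores) - min(scores),
        "skewness": m3 / m2 ** 1.5 if m2 else 0.0,
        "kurtosis": m4 / m2 ** 2 - 3 if m2 else 0.0,   # excess kurtosis
        "min": min(scores),
        "max": max(scores),
    }


# Hypothetical scores for one sub-question on a 1-5 scale.
example = describe([1, 2, 3, 4, 5])
for name, value in example.items():
    print(f"{name:>8}: {value}")
```

Any responses excluded from the analysis, such as “unsure” answers, would need to be removed from the list before calling the function.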