Abstract

If there is one thing all university rankings have in common, it is that they are the target of widespread criticism. This article takes the many challenges university rankings face as its point of departure and asks how they navigate their hostile environment. The analysis proceeds in three steps. First, we identify two modes of ranking critique, one drawing attention to negative effects, the other to methodological shortcomings. Second, we explore how rankers respond to these challenges, showing that they either deflect criticism with a variety of defensive responses or respond confidently by drawing attention to the strengths of university rankings. In the last step, we examine mutual engagements between rankers and critics that are based on the entwinement of methodological critique and confident responses. While the way rankers respond to criticism generally explains how rankings continue to flourish, it is precisely the ongoing conversation with critics that facilitates what we call the discursive resilience of university rankings. The prevalence of university rankings is, in other words, a product of the mutual discursive work of their proponents and opponents.



University rankings: ubiquitous and contested

University rankings have gained a firm foothold in global higher education over the past decades. They have grown dramatically in number (Altbach, 2012), wield considerable influence (Hazelkorn, 2011), and receive attention from such diverse audiences as journalists, policymakers, consultants, university administrators, students, and the scientific community (Brankovic et al., 2018). With this increase in number, influence, and attention also came critique and contestation, especially from the scientific community: “the last years have brought an avalanche of articles, monographs and readers on the topic” (Hertig, 2016, p. 3). As a result, “writing about rankings has become a global business” (Amsler, 2014, p. 155). All things considered, university rankings have become institutionalized, but so has their critique (Kaidesoja, 2022).

A number of studies suggest that the prevalence of university rankings is an ongoing accomplishment in which both producers and critics of rankings are involved (Barron, 2017; Free et al., 2009; Lim, 2018; Ringel & Werron, 2020). These studies indicate that a better understanding of how rankings deal with legitimacy challenges in what appear to be hostile environments requires us to extend our focus: criticism, not least by the scientific community, should be treated as part of the empirical phenomenon and analyzed accordingly (cf. Bacevic, 2019; Boltanski, 2011). Drawing on and extending our previous research (Ringel et al., 2021), we take the struggles between rankers and critics as our point of departure. Specifically, we ask: how is it possible that university rankings increase in number, influence, and attention in spite of their frequent contestation by the scientific community? To tackle this question, we tentatively explore the discourse revolving around university rankings, particularly public criticism and responses by rankers. Attending to the mutual public engagement of rankers and critics allows us to reveal the source of what we call the discursive resilience of university rankings: their ability to navigate widespread criticism.

We start by taking stock of the state of research, discussing, first, the high levels of scholarly interest in the impact of university rankings and in their lack of scientific rigor and, second, the literature that focuses more specifically on how rankers actively try to render their evaluations credible. We then present an exploratory analysis that proceeds in three steps. First, we distinguish different arguments leveled against university rankings. Second, we show how producers of rankings respond to these challenges. Third, drawing inspiration from this rich material, we reveal an ongoing conversation between rankers and critics that emerges from their mutual engagement. In conclusion, we tentatively suggest that the discursive resilience of university rankings is co-produced by both rankers and their critics, who are co-opted as (involuntary) accomplices.

Research on rankings: beyond reactivity and methodological shortcomings

Fueled by the publication of the seminal study by Espeland and Sauder (2007) on a highly influential ranking of law schools in the USA, a significant body of literature has shown that public measures such as university rankings foster reactivity, making them more than just representations of something that is already “out there” (cf. O’Connell, 2013). The basic idea is that measures are reactive because they elicit responses that intervene in the objects being measured. University rankings, then, by virtue of being published, alter the behavior of their objects and, by extension, affect both national and global fields of higher education (cf. Chun & Sauder, 2022; Hazelkorn, 2011; Münch, 2013; Wedlin, 2006). Complementing studies on reactivity, a second body of literature investigates the degree to which university rankings adhere to methodological standards of scientific rigor, all too often concluding that they are inadequate representations of reality. Assessed in these terms, university rankings are effectively benchmarked against the norms of science, which stipulate that research involves generating new knowledge through the systematic use of methods of data collection and analysis (see Welsh, 2019 for a critical account). Studies in this line of research typically comment on matters of methodology, transparency, and data quality (cf. Johnes, 2018; Schmoch, 2015; Westerheijden, 2015).

Despite these differences, the majority of the research literature shares two premises (cf. O’Connell, 2013; Amsler, 2014): first, an overwhelmingly critical approach to university rankings, and second, a tendency to take their existence and prevalence for granted, albeit grudgingly. We intend to bracket both premises by focusing explicitly on the social processes by which university rankings are rendered credible public measures. In doing so, we want to contribute to a better understanding of how university rankings could increase in number, influence, and recognition despite ongoing criticism. The analysis combines research on organizations that produce university rankings with research on critique of rankings as a social practice. The following paragraphs introduce both lines of research.

Although producers of rankings have received considerably less scholarly attention than the rankings themselves, a handful of studies reveal different kinds of organizational practices geared towards sustaining evaluative credibility. Having become quite aware of the importance of self-presentation, rankers claim to “serve” the public and fashion themselves as impartial “arbiters” (Sauder & Fine, 2008) while simultaneously concealing the more “political” and value-driven aspects of their evaluations (Brankovic et al., 2018; Chirikov, 2022; Sauder, 2008). Part and parcel of this strategy are “scientized” documents such as reports or methodology statements (Barron, 2017; Leckert, 2021). In addition, rankers invoke the authority of external experts, whom they present as independent assessors attesting to the validity of the underlying methodology and calculations (Barron, 2017; Free et al., 2009). Even critique, it seems, is welcome (Brankovic et al., 2022; Lim, 2018; Ringel, 2021; Ringel & Werron, 2020).

Studies that treat ranking critique as a social practice in need of scholarly exploration are also important sources of inspiration. Some studies indicate that criticism occurs in episodes, with contestations having a start and an end: eventually, critics either succeed or fail; in the latter case, rankings retain their legitimacy and, basically, become taken for granted (cf. Barron, 2017; Free et al., 2009; Sauder, 2008; Sauder & Fine, 2008). Treating criticism as a regular feature of the field, other studies suggest that a more apt way to look at contestations is that they neither “succeed” nor strictly “fail” (cf. Kaidesoja, 2022; Lim, 2018; Ringel & Werron, 2020). Rather, given the public nature of criticism, university rankings are thought of as existing “in a state of fragile validity only through the continual production of legitimising discourses” (Amsler, 2014, pp. 159–160).

Combining both lines of research on producers of rankings and critique of rankings, we assume that in order to understand how university rankings remain prevalent evaluations despite ongoing criticism, we need to turn our attention to the mutual “discursive work that is being done” (Amsler, 2014, p. 157) by both rankers and their critics.

Methodological note

Drawing on the research literature, our own previous work, and empirical examples, we develop a tentative conceptual exploration of how university rankings proliferate in discursive environments that appear quite hostile. Before turning to our exploration, we set the stage by presenting the main elements of our story: university rankings (and the organizations that produce them), critics of rankings, and sites of mutual discursive work.

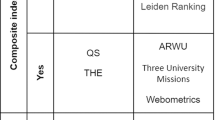

A considerable number of university rankings were published before the 1980s. Produced by individual scholars who used rank-ordered tables to organize their findings, these rankings usually appeared only once and did not address larger audiences (Ringel & Werron, 2020). Starting in the USA with the US News and World Report (USNWR), more and more organizations, many but not all of them for-profit, began to publish university rankings on a regular basis: first at the national level, in different Western and subsequently also in non-Western countries, then, since the early 2000s, also at the global level (Hazelkorn, 2011). Currently, the World University Rankings by Times Higher Education (THE Rankings), the QS World University Rankings by Quacquarelli Symonds (QS Rankings), and the Academic Ranking of World Universities by the Shanghai Ranking Consultancy (ARWU Ranking, sometimes also referred to as ‘Shanghai Rankings’) are arguably the most popular global university rankings. U-Multirank, initiated by the European Union and now produced by a consortium of think tanks and research institutes, and the CWTS Leiden Ranking by the Centre for Science and Technology Studies form the second tier. Overall, global higher education is nowadays densely populated by university rankings as well as by organizations like the IREG Observatory (IREG stands for “International Ranking Expert Group”), which are dedicated to the promotion of university rankings.

The majority of those who criticize rankings are scholars who voice their concerns in academic discourse as well as in the public domain. University rankings are a frequent topic in scientific debates at workshops and conferences and in journal articles, edited volumes, monographs, and reports. However, scholarly critics also make use of domains that allow them to address more diverse (and larger) audiences, such as in-person events (where they can engage with rankers and other stakeholders directly), the mass media (where they publish articles and op-eds or comment on rankings in interviews), personal websites, blogs, and social media. Popular blogs, such as Wonkhe, and social media accounts with a large following, such as the Twitter account University Wankings, increasingly seem to act as distributors and amplifiers of the critical discourse in the public domain. In an interview, the anonymous user who administers University Wankings says that the Twitter account “picked up a significant following quite quickly and we have obviously touched a nerve.” The user then continues:

It is clear that academics and other university staff, across disciplinary and national boundaries, are affected by rankings. Their effects make us laugh, cry, rant, and sigh, and it’s good to share this. Reflection and critical thinking are essential functions of higher education, and metrics are as worthy – and ripe – for analysis as any other aspect of the world we live in. (University Wankings, in Brankovic, 2019)

The mutual discursive work of producers and critics of rankings can be observed in their public communication. This includes communication taking place at in-person events (e.g., public lectures, panel discussions, conferences, workshops), in print (e.g., reports, scholarly publications, interviews, or op-eds in newspapers), or online (e.g., blog posts or social media, Twitter in particular). Sometimes rankers and their critics interact directly (e.g., in posts on social media), whereas other utterances might fuel discussions, but not between rankers and their critics (e.g., at academic conferences). Yet other communication consists of standalone statements that do not invite further interaction (e.g., online articles). Critique of rankings spreads across different sites and is ubiquitous. Of course, neither we, as authors, nor our article are exempt from the critical discourse and the dynamics we attend to in the following. We will come back to our own complicity at the end of this contribution.

Drawing selectively on a rich variety of sources, we present a tentative conceptual exploration that accounts for the different kinds of critique leveled against university rankings, how rankers respond to these challenges, and the emergence of a quality we refer to as discursive resilience. Because the public discourse revolving around university rankings has been a key source of inspiration for our exploration, we make frequent use of empirical examples to illustrate our arguments. Although our findings do not result from systematic sampling or analysis of data, we understand them as contributions towards a conceptual framework (Hamann & Kosmützky, 2021). By using the statements made by rankers and critics as a source of conceptual inspiration, we hope to encourage systematic empirical studies in the future.

Critique of rankings: negative effects and methodological shortcomings

Drawing on a distinction that we introduced in our previous work (Ringel et al., 2021), we identify two modes of ranking critique which can be found in both scholarly discourse and the public domain. We start with (1) criticism targeting the negative effects of university rankings and then continue with (2) criticism emphasizing methodological shortcomings.

(1) Within and beyond the confines of scientific discourse, critics draw attention to multiple negative effects of university rankings, such as (1a) rising levels of inequality, (1b) the spread of opportunistic behavior, and (1c) a restriction of scholarly autonomy (i.e., heteronomy). This distinction is analytical; empirically, the different types of critique can intersect. To illustrate them, we draw on statements by researchers (or organizations). The point is not to focus on individual positions but to convey discursive patterns.

(1a) The propensity of university rankings to produce new and consolidate existing inequalities is perhaps the most frequently criticized effect. Assessments often highlight that while university rankings create an aura of neutrality, they are, in fact, “politico-ideological technologies of valuation and hierarchisation that operate according to a […] logic of inclusion and exclusion” (Amsler & Bolsmann, 2012, p. 284; cf. Welsh, 2019). Inequalities are identified in both material and symbolic terms and concern the individual as well as the institutional level (Hamann, 2016). Studies that focus on material inequalities at the individual level suggest, for example, that rankings drive up tuition fees and thereby keep low-income students from applying to “elite” universities (Chu, 2021). At the institutional level, rankings are said to lead to a monopolization of research funding, fostering a system in which a select few universities command the majority of resources (Münch, 2014). Others argue that rankings (re-)produce symbolic inequalities by creating “multi-scalar geograph[ies] of institutional reputation” (Collins & Park, 2016, pp. 116–117), which are said to instill a never-ending “pursuit of world-class status” (Hazelkorn, 2011, p. 24) and “legitimize an institution’s placement in the global hierarchy” (Kauppinen et al., 2016, p. 38). Inequality-related criticism of university rankings also takes place in the public domain. A good example is an article published in Politico, which describes how rankings accelerate inequality, focusing specifically on tuition fees (Wermund, 2017).

(1b) Another type of critique draws attention to an increase in opportunism sparked by university rankings. The main concern is that, because they are ranked, universities have developed an obsession with reputation and adapt their behavior to fit the criteria of evaluation against which they are benchmarked (cf. Espeland & Sauder, 2007). Universities are increasingly seen to motivate academic staff by indulging in a “proliferating habit of connecting financial rewards to ranking positions” (Hallonsten, 2021, p. 14) and to engage in such practices as gaming or “jukin’ the stats” (Bush & Peterson, 2013, p. 1237; cf. Biagioli et al., 2019). These sorts of activities, critics argue, side-track universities from their core mission of providing institutional support for basic research and teaching (cf. Collins & Park, 2016; van Houtum & van Uden, 2022). This type of critique also extends beyond scholarly debates and has become a popular theme in public discourse. For example, an article published in Forbes quotes an anonymous university vice president who admits: “We are now numbers centered instead of people-centered. We are now results oriented rather than viewing ourselves as counselors” (Barnard, 2018). Some critics even call for more active measures to keep universities’ opportunism in check. In this sense, an article published on the website University World News states: “In the absence of global or regional standards or harmonisation of data collection and definition, the many ways in which institutions play the rankings games will persist” (Calderon, 2020).

(1c) A third negative effect of university rankings is expressed in criticism of their heteronomous influence. While a decrease in academic autonomy is to some extent implied in the previous two types, there is more to heteronomy. The following quote encapsulates the basic premise of this critique: “Rankings ultimately colonize education and science […]. They impose their own logic of the production of differences in rank upon the practice of research and teaching” (Münch, 2013, p. 201). From this point of view, rankings foster intrusive regimes of accountability in academia, including “new configurations of knowledge and power” (Shore & Wright, 2015, p. 430), which amount to infringements upon the professional autonomy of science and the primacy of professional judgment (Hamann & Schmidt-Wellenburg, 2020; Mollis & Marginson, 2002). The quantitative nature of rankings is often connected to economic rationalities, which are seen to present “a direct threat to the institutional autonomy of science, and the operational and organizational logic it entails” (Hallonsten, 2021, p. 13). Criticism of heteronomy sometimes adopts a militaristic rhetoric, invoking, for instance, a Battle for World-Class Excellence (Hazelkorn, 2011) or a “mercenary army of professional administrators, armed with spreadsheets, output indicators, and audit procedures, loudly accompanied by the Efficiency and Excellence March” (Halffman & Radder, 2015, pp. 165–166). Like the other two types, this critique has also found its way into public discourse. The following tweet is a prime example:

Universities’ acceptance of the @timeshighered academic reputation survey & rankings is shameful because universities are supposed to look after knowledge. Promoting empty marketing surveys & rankings as if they produce valid knowledge undermines HE’s central purpose. @paulashwin, 2021

(2) Complementing critique of negative effects, the second mode of critique is concerned with methodological shortcomings of university rankings. At its core, this critique evaluates “rankings as social science” (Marginson, 2014, p. 46). A typical example of this attitude is a “quality check” (Saisana et al., 2011, p. 174) of the ARWU and THE rankings: “A robustness assessment,” such as the one undertaken by the authors, is deemed necessary because it sheds light on the “methodological uncertainties that are intrinsic to the development of a ranking system” and makes it possible “to test whether the space of inference of the ranks for the majority of the universities is narrow enough to justify any meaningful classification” (ibid., p. 175). The most common methodological shortcomings to which critics attend are (2a) commensuration, (2b) transparency, and (2c) validity and reliability.

(2a) A popular argument made against rankings is that they break down concepts like “quality of teaching” or “research performance” into measures that are too simplistic and fail to do justice to the complex reality they profess to depict. Rankings are, in other words, criticized for how they commensurate (Espeland & Stevens, 1998). At its core, this type of critique questions “whether a single measure can truly reflect overall performance across a variety of production activities (relating to teaching and research)” (Johnes, 2018, p. 586). Because they aggregate a variety of indicators into overall scores, rankings are criticized for claiming a level of holistic precision that is not only unrealistic from a scientific point of view but also encourages their use “as a lazy proxy for quality, no matter the flaws” (Gadd, 2020, p. 523). Although some acknowledge that there are cases in which weights are assigned to single indicators (Westerheijden, 2015, p. 426), there still seems to be a consensus that “a profile of the different dimensions is much more meaningful” (Schmoch, 2015, p. 152). Criticism invoking commensuration can also be found in the public domain. For example, an article published in the Financial Times argues that:

[u]niversity rankings are inherently questionable because they use various pieces of data [...] to come up with an overall number. If football matches were judged similarly, rather than on one score, there would be chaos. (Gapper, 2021)

Some, such as the Twitter account University Wankings, even meet the purported level of precision with irony and open ridicule:

Dear Staff Member, Our in-house student satisfaction survey has found that every department scored 97%. However, within this, we have identified three groups: - Green: 97.7-97.99% - Amber: 97.4-97.69% - Red: 97.0-97.39%. As you can imagine, this is cause for concern. @Uwankings, 2019

(2b) Critics also point to a lack of transparency regarding the indicators, measures, and not least the data used by university rankings. These shortcomings are said to compromise the scientific credibility and integrity of rankings (Marginson, 2014; Surappa, 2016). For example, a study suggests that the USNWR ranking “is not entirely forthright when it comes to the data and actually suppresses the values for some of the attributes” (Bougnol & Dulá, 2015, pp. 864–865). Another study compares five rankings, which, according to the authors, “fail to provide a theoretical or empirical justification for the measures selected and the weights utilized to calculate their rankings” (Dill & Soo, 2005, p. 506). Such criticism of rankings’ lack of methodological transparency can also be found in the public domain—for instance, on the blog University Ranking Watch, dedicated to methodological commentary. In one post, the author finds it regrettable that “[t]he THE rankings are uniquely opaque: they combine eleven indicators into three clusters so it is impossible for a reader to figure out exactly why a university is doing so well or so badly for teaching or research” (Holmes, 2020). In a similar vein, a post to the blog Leiter Reports criticizes the QS rankings’ lack of transparency. Referring to an audit by the IREG Observatory on behalf of QS, the blogger states that “until the audit is published, and the independent ‘experts’ named, this is all public relations, and nothing more” (Leiter, 2013).

(2c) The validity and reliability of methods and data are a third type of criticism related to methodology. The basic rationale is that university rankings do not deliver the level of accuracy they promise; their measurements are seen not only as simplistic (cf. commensuration) but as fundamentally flawed. For example, university rankings are said to be “not sufficiently reliable measures of performance quality” and to fail to “provide the basis of checkable improvement activities” (Leiber, 2017, p. 47). It is thus not surprising that they are charged with delivering unreliable, even “arbitrary results”: “The results of the different rankings differ, as the underlying methodology differs” (Schmoch, 2015, p. 152). The fact that some university rankings rely on surveys raises particular concerns about their reliability and validity (Dill & Soo, 2005, p. 511). In such cases, critics suggest using other, more suitable indicators (Bougnol & Dulá, 2015, pp. 860–865; Kroth & Daniel, 2009, p. 554). Even though these discussions can be quite technical, validity and reliability have become popular themes in public discourse, as illustrated by an article published in Inside Higher Ed:

QS’s methodology seems to be particularly controversial [...] due in large part to its greater reliance on reputational surveys than other rankers. Combined with a survey of employers, which counts for 10 percent of the overall ranking, reputational indicators account for half of a university’s QS ranking. (Redden, 2013)

Thus far, we have distinguished two modes of ranking critique, one attending to negative effects, the other to methodological shortcomings. Our examples suggest that criticism of negative effects tends to be more fundamental, whereas discussions revolving around methodological shortcomings of rankings can also have a constructive spirit.

Responses to critique of rankings: defensive and confident

Although continuously targeted by challenges that emphasize negative effects or methodological shortcomings, university rankings seem to be quite resilient towards this criticism. Extending previous accounts (Ringel et al., 2021; see also Ringel, 2021), our tentative analysis suggests that rankers mobilize two modes of responding to criticism. We refer to the first mode as (3) defensive responses and to the second mode as (4) confident responses to critique of rankings.

(3) Defensive responses counter criticism and promote alternative narratives. As such, they are a core element of the discursive resilience of university rankings. We have found two common defensive responses: (3a) trivializing university rankings and (3b) emphasizing that they are inevitable. Although analytically distinct, these strategies can overlap empirically. As in the previous section, we use public statements not to focus on individual positions but to illustrate discursive patterns.

(3a) Although rankers have become proficient in leveraging public relations expertise to transform league tables into spectacular public performances (Brankovic et al., 2018; Ringel, 2021), they are also accomplished in trivializing university rankings by downplaying their influence. When they deem it necessary, for instance when they expect to encounter members of the scientific community, rankers strike a more modest tone and point to their competitors or to other types of university evaluation. The following quote from an article written by the Head of Research at QS (and published in a volume addressing both scholars and experts) is a good illustration:

Rankings results, as published, are just one (perhaps expert) interpretation of the data – a little like a film critic stating that one film is better than another. The critic may know far more about film and may be able to justify his viewpoint using a near scientific formulae, but his ultimate proclamation may bear no resemblance to the viewpoint of any given audience member. (Sowter, 2013, p. 64)

In an article published in Inside Higher Ed, the Chief Knowledge Officer of THE goes even further: “The rankings of the world’s top universities that my magazine has been publishing for the past six years […] are not good enough” (Baty, 2010). Other actors from the ranking industry, such as the influential publisher Elsevier (2021), which provides the data for the THE Rankings, also employ this defensive strategy: “Due to their limitations, you should not use rankings and league tables as a stand-alone measurement. They are best when used as decision-making tools in conjunction with other indicators and data.”

(3b) A second type of defensive response emphasizes that rankings are inevitable. This response seeks to render the agency of rankers invisible and provides them with “an aura of justified inevitability despite evidence that other interpretations are possible” (Amsler, 2014, p. 157). According to Amsler (ibid.), the most obvious manifestation of this response is the phrase “rankings are here to stay,” which often serves as a backdrop for appeals to critics that a more constructive approach is needed. The following statement by a former managing editor of America’s Best Colleges by USNWR is a typical example:

Given that the rankings are here to stay, what is needed are broadened efforts by colleges and universities to explain to the public – in an intellectually honest, not narrowly self-serving, way – what the rankings do and don’t do. (Sanoff, 1998)

The claim of inevitability is adopted by a broad range of actors such as Elsevier (2021)—“Most agree that rankings are here to stay”—or Alan Gilbert, president and vice-chancellor of the University of Manchester, who maintains that “rankings are here to stay” and “it is therefore worth the time and effort to get them right” (in Butler, 2007). Notably, scholars occasionally join rankers in their efforts to create the impression that there is no alternative to rankings. Statements such as the following are typical instances: “If rankings did not exist, someone would have to invent them” (Altbach, 2012, p. 26). “They are,” the author continues, “an inevitable result of higher education’s worldwide massification, which produced a diversified and complex academic environment, as well as competition and commercialization within it.”

(4) While defensive responses are given to critique of both the negative effects of rankings and their methodological shortcomings, the second mode of response is mainly given to methodological criticism. Responses are confident to the extent that they insist on the strengths and potential of university rankings. Broadly speaking, we can distinguish three types of confident responses: (4a) claiming demand, (4b) demonstrating scientific proficiency, and (4c) temporalizing rankings.

(4a) A first type of confident response portrays university rankings as satisfying a demand. Statements such as the following are typical examples of this rhetoric: “rankings address the growing demand for accessible, manageably packaged and relatively simple information on the ‘quality of higher education institutions’” (Marope & Wells, 2013, pp. 12–13). Rankers claim that rankings provide “much-needed – and clearly desired – comparative information to help make decisions on where to apply and enroll” (Morse, 2009). When issuing this type of response, rankers often cast aspersions on the higher education sector’s motivation to hold itself accountable (cf. Marope et al., 2013). In this view, university rankings are a “catalyst for the transparency agenda in higher education” (Sowter, 2013, p. 65) and their producers are “part of the rapidly growing higher education accountability movement” (Morse, in Byrne, 2015). The confident strategy of claiming that rankings satisfy specific demands is also employed by other actors. For example, Thompson’s legal editor attests to the informative value of the USNWR rankings, stating: “Students are not going to stop using the U.S. News rankings until law schools start to meet their ethical responsibility to provide applicants with meaningful information in a convenient format” (Berger, 2001, p. 502).

(4b) Rankers are eager to demonstrate scientific proficiency, which is why their public communication is replete with claims to “serious research and sound data” (Baty, 2013, p. 46), technical terminology such as “DataPoints tools” (Baty, 2018), accounts of “data gathering operations” (Sowter, 2013, p. 65), and promises to “remove any potential bias” (Quacquarelli Symonds, 2021). A study on the USNWR rankings indicates that rankers have developed and honed this skill over the years:

In addition to creating a quantification of law school quality, USN’s adoption of already legitimate methods of evaluation to justify these numbers, namely, statistical models, symbols, and language, was also a necessary ingredient in the creation of its appeal. (Sauder, 2008, p. 215)

A popular way of demonstrating scientific proficiency is to make confident claims about the thorough methodology of rankings. For example, responding to critique leveled against composite indicators, the Chief Data Strategist for USNWR rankings argues that their methodology has been improved over the years so that “you cannot make a meaningful rise in the rankings by tweaking one or two numbers” (Morse, in Bruni, 2016). The willingness to demonstrate scientific proficiency is also apparent in THE’s public account of its new ranking in 2009, which had for the first time been produced independently after several years of cooperation with QS. To convey the transparency and thoroughness of this process, THE claims to have worked with the academic community in what is called “the largest consultation exercise ever undertaken to produce world university rankings” (Baty, in Lim, 2018, p. 421). Those who demonstrate scientific proficiency on behalf of a ranking organization often hold positions with names that are supposed to signal scientific proficiency, for example, Chief Data Strategist, Chief Knowledge Officer, or Head of Research. When speaking in public, these actors take great care to present themselves as disinterested experts. For instance, an article published in The Chronicle of Higher Education refers to Robert Morse, the Chief Data Strategist for the USNWR rankings, as a “grandfatherly data-cruncher” with “a reputation as a rather dry speaker. Though the rankings involve high stakes, his annual presentation is technical and his delivery measured” (Diep, 2022).

(4c) A third confident response is temporalization. Rankers argue that university performance is inherently volatile and needs constant monitoring. This is why rankings are considered provisional snapshots, always to be revised and improved in the next iteration. This line of reasoning figures in claims such as the following by the Head of Research at QS: “We work tirelessly to improve the quality of our data and processes every year” (O’Malley, 2016). In another quote, QS highlights that improving the methodology for its World University Rankings is an ongoing concern:

We review our methodology annually and have a formal process for adjustments or additions. We seek advice from our Global Academic Advisory Board [...] and assess the evidence and impact that any adjustments would have. (Quacquarelli Symonds, 2021)

The temporalization of rankings tends to invoke the notion of scientific progress. From this point of view, there might actually never be a perfect ranking. In the words of THE’s Chief Knowledge Officer, it is quite possible that “rankings can never arrive at ‘the truth’”—all one can hope for is to “get closer to the truth, by being more rigorous, sophisticated and transparent” (Baty, 2010). Once updated on a regular basis, university rankings become “a serial practice of comparison” (Ringel & Werron, 2020, p. 144) and effectively institutionalize a temporal logic that resembles a calendar (Landahl, 2020) in which shortcomings are framed as matters that need to be addressed—but only in the future, that is, the next iteration.

Both defensive and confident responses explicitly refer to, and thus connect with, statements made by others. However, the responses discussed thus far mostly do not aim to initiate exchange with critics. Although they undoubtedly contribute to the discursive resilience of university rankings as they counter criticism and provide alternative narratives, such responses only account for part of the story. To arrive at a comprehensive understanding of how university rankings navigate what appear to be hostile environments, we need to extend the analytical scope and also explore mutual engagements between rankers and their critics.

Co-opting the critics: a never-ending conversation?

As the previous section has shown, producers of rankings react to criticism by deploying either defensive or confident responses. In this section, we turn our attention to confident responses that stimulate and maintain discussions between rankers and critics. This engagement emerges particularly from methodological criticism and confident responses to this mode of critique. We refer to the process by which rankers engage critics in mutual discursive work revolving around the improvement of university rankings as (5) co-optation. To illustrate this process, we use public statements by rankers and critics. In doing so, our aim is not to expose individuals but to illustrate that mutual engagement is a discourse effect that is systematically built into positions like ranker and critic.

(5) In the previous section, we showed how rankers respond to methodological criticism with confident claims regarding the scientific proficiency of rankings or with temporalizing assessments of university performance. From this discursive interplay between methodological critique and confident responses emerges the co-optation of critics for a conversation about how rankings can be improved. To this end, rankers regularly extend invitations that demonstrate their willingness to engage critics. The following quote from a methodology statement by THE on its Impact Rankings is a typical example:

Our goal is to be as open and transparent as possible, but also to engage with universities and higher education institutions more directly. If the guidance we have provided is unclear, or doesn’t reflect your local environment, please contact us so that we can help you, and so that we can improve the approach! (Times Higher Education, 2021, p. 2)

Another illustration of engaging the critics is provided in a statement by THE’s Chief Knowledge Officer: “Times Higher Education has registered notable progress in improving its methodology. Going forward, it will continue to engage its critics and take expert advice on further methodological modifications and innovations” (Baty, 2013, p. 51). Striking a similar tone, representatives of USNWR ostentatiously highlight the scientific community’s contribution to the improvement of their rankings over the years:

Although the methodology is the product of years of research, we continuously refine our approach based on user feedback, discussions with schools and higher education experts, literature reviews, trends in our own data, availability of new data, and engaging with deans and institutional researchers at higher education conferences. (Morse & Brooks, 2021)

Crucially, for the process of co-optation to work, critics need to take up the invitation to engage. In this regard, rankers can rely on the constructive spirit of methodological criticism, which oftentimes conveys a genuine interest in better assessments and more rigorous university rankings by proposing improved methodologies or alternative indicators (cf. Johnes, 2018; Kroth & Daniel, 2009). The premise of this constructive attitude could be summarized as follows:

If rankings are effectively grounded in real university activity there is potential for a virtuous constitutive relationship between university rank and university performance. (Marginson, 2014, p. 46)

Rankers leverage the constructive spirit of their critics, who are inclined to evaluate university rankings “in their own terms” (O’Connell, 2013, p. 270). Methodological criticism of a lack of scientific rigor is then utilized in the interest of reforming rankings. The outcome is a conversation between rankers and critics carried out in different venues and sites. Our preliminary analysis suggests three sites to be of most significance: (5a) publications, (5b) events, and (5c) social media.

(5a) Publications are an important site for conversations between proponents and opponents of university rankings. When critics point out methodological shortcomings in scholarly outlets, but also in blog posts or media articles, rankers often respond to these concerns in their own publications. This can result in a dense network of mutual references. Of particular interest are edited volumes and reports in which representatives of ranking organizations, think tanks, and consultancies publish alongside esteemed higher education researchers (cf. Kehm & Stensaker, 2009; Marope et al., 2013; Cai et al., 2021). The following quote by the Assistant Director-General for Education at UNESCO (from the foreword of one of the aforementioned edited volumes) illustrates the significance of mutual engagement via publications:

The current volume brings together both promoters and opposers of rankings, to reflect the wide range of views that exist in the higher education community on this highly controversial topic. If learners, institutions and policy-makers are to be responsible users of ranking data and league table lists, it is vital that those compiling them make perfectly clear what criteria they are using to devise them, how they have weighted these criteria, and why they made these choices. (Tang, 2013, p. 6)

Both the networks of mutual references across publications and joint publications represent a common discursive framework in which rankers and critics engage in a constructive dialogue on intended and unintended effects, uses and misuses, and, most importantly, room for improvement of university rankings. Not least, publications foster the appearance of symmetry or equivalence between proponents and scholarly opponents.

(5b) Events such as workshops, summits, or conferences are another site of mutual engagement. More than just being rituals, these events are also social spaces where critics are given the opportunity to voice concerns and offer (preferably constructive) feedback. A handful of studies, for example, on the IREG Observatory conferences (Brankovic et al., 2022) or on the World Academic Summit organized by THE (Lim, 2018) provide examples of how these events are geared towards nurturing relationships with scholarly audiences and establishing rankings as evaluations that, albeit flawed, can be improved, which is why they should be debated—at this and at the next event as well. The following quote from the IREG Observatory website suggests that events are indeed appreciated by (some members of) the academic community:

The IREG conferences have traditionally become a unique and neutral international platform, where university rankings are discussed in the presence of those who do the rankings, and those who are ranked: authors of the main global rankings, university managers, and experts on higher education. ‘There is at least one organization that serves as a base for communication among those concerned with rankings, the International Ranking Expert Group (IREG) Observatory on Academic Ranking and Excellence, which attracts hundreds to its conferences’. Philip G. Altbach in Research Handbook on University Rankings. (IREG, 2022)

The previously mentioned article on the annual conference of the Association for Institutional Research, where USNWR representatives meet representatives of the colleges that deliver the data for the rankings, describes how official sessions are dominated by methodology-related discussions, while other issues, such as negative effects, are relegated to informal gatherings:

Outside of the room, commentators have posed even bigger questions, and pitched more radical steps. Should college ranking even exist? Should colleges refuse to cooperate with them on ethical grounds? Within the room, however, tweaks seemed to be the preferred solution. Attendees concluded that they could put together a committee, with representatives from a diversity of college types, to stress-test rankings surveys, identifying which questions need clarification and where survey-takers might be tempted to cheat. (Diep, 2022)

(5c) A growing number of encounters take place on social media, especially Twitter, with some discussions spanning many tweets. In the following example, the Twitter account of U-Multirank, upon being addressed by a representative of the European University Association (EUA), uses the opportunity to promote its methodology:

What do universities think about @UMultirank? Tia Loukkola talks to @scibus about new @euatweets survey [link to blog post on U-Multirank metrics removed by the authors]. @Thomas_E_Jorgen, 2015

@Thomas_E_Jorgen @scibus @euatweets Recent EUA report shows top priority indicators for HEIs are those in @Umultirank [link to report removed by authors]. @Umultirank, 2015

.@UMultirank @scibus @euatweets that might be, but is the data good enough? Some scepticism came out. @Thomas_E_Jorgen, 2015

@Thomas_E_Jorgen @scibus @euatweets UMR uses a thorough data verification process detailed in our methodology: [link to document on methodology removed by the authors]. @Umultirank, 2015

Another example of conversations between rankers and critics is the following series of tweets, unfolding ahead of the 2016 Middle East and North Africa Universities Summit (MENAUS), organized by THE in cooperation with the United Arab Emirates University. Starting as a fundamental challenge to the ranking’s methodology, the conversation quickly turns into a productive exchange between the critic and THE’s Chief Knowledge Officer:

Saudi Arabia dominated Arab world university ranking #menaus #SaudiArabia [picture of a ranking entitled “Top 15 Arab Universities” removed by the authors]. @Phil_Baty, 2016

@Phil_Baty I would question the ranking credibility as it looks odd and unrealistic .. @HamadYaseen, 2016

@HamadYaseen it’s a balanced and comprehensive methodology, but it will evolve after consultation at #menaus [picture illustrating the methodology of THE rankings removed by the authors]. @Phil_Baty, 2016

@Phil_Baty just out of curiosity, do you have the scores of Kuwait university .. Many thanks. @HamadYaseen, 2016

@HamadYaseen Sadly it declined to participate. We’d love to get them in our database this year: [Link to THE article calling for universities to submit their data removed by the authors]. @Phil_Baty, 2016

@Phil_Baty I will see what I can do about that … Thanks. @HamadYaseen, 2016

@HamadYaseen Thank you. We will be in touch with the university very soon. Representatives welcome at #MENAUS to consult in metrics. @Phil_Baty, 2016

By engaging scholarly critics in publications, at events, and on social media, rankers make them involuntary accomplices in the process of building the discursive resilience of university rankings. The mutual discursive work of proponents and opponents comes full circle when rankers not only engage the scientific community but also draw attention to common ties. The following quotes by representatives of QS and USNWR illustrate how the co-optation of critics is used to promote the scholarly credibility of producers of rankings:

We spoke with nearly 8,000 academics in face-to-face seminars, who showed strong support for our focus on these primary goals of world class universities. Next, QS sought to develop a tried and tested approach to conducting an expert Peer Review Survey of academic quality. It brought in statistical and technical experts to ensure that our survey design could not be ‘gamed’ and provided valid results. (Quacquarelli Symonds, 2021)

Although the methodology is the product of years of research, we continuously refine our approach based on user feedback, discussions with schools and higher education experts, literature reviews, trends in our own data, availability of new data, and engaging with deans and institutional researchers at higher education conferences. (Morse & Brooks, 2021)

Conclusion: the discursive resilience of university rankings

We approached the critical discourse revolving around university rankings in three steps. First, we distinguished two modes of critique of rankings, one attending to negative effects, the other drawing attention to methodological shortcomings of university rankings. Second, we examined how rankers respond to these challenges. Our exploration suggests that rankers respond either defensively, by deflecting or countering criticism and providing alternative narratives, or confidently, by insisting on the strengths and potentials of university rankings. Defensive responses address both modes of ranking critique, whereas confident responses tend to be focused on methodological shortcomings. The third step of our analysis reveals how methodological critique and confident responses intersect at events, in (joint) publications, and on social media, facilitating an ongoing public conversation between rankers and critics. As a result, critics are co-opted and made involuntary accomplices in a common discursive endeavor: the development and improvement of university rankings. Although rankers’ defensive responses to criticism contribute to the institutionalization of rankings, it seems that the co-optation of critics is of even greater importance and should therefore be considered a major determining factor for the success of university rankings.

These findings are tentative because they are based neither on a systematic sampling strategy nor on a systematic data analysis. Our contribution is to be understood as an exploration towards a systematic conceptualization of the conditions that are conducive to the proliferation of university rankings in what appear to be hostile environments. In addressing this question, we have built on and extended our previous research (Ringel et al., 2021). We call upon future research to extend our conceptualization by systematically studying the discursive work done by rankers and critics. Specifically, we would like to discuss three avenues for future research which we find particularly promising. First, studies should take a more nuanced approach and account for variations between producers of rankings. There is evidence suggesting that it could be beneficial to distinguish types of organizations (such as for-profit corporations (Lim, 2018) or research centers (Leckert, 2021)) and their responses to criticism. Second, we emphasized rankers’ proficiency when dealing with criticism. In doing so, we neglected potential tensions producers of rankings are facing, especially between the affordances of scholarly sophistication and rigor, on the one hand, and the necessity of providing simplified explanations and aesthetically appealing visualizations for lay audiences, on the other (Ringel, 2021). Third, it is an open question whether the division of discursive labor between rankers and critics that we found in higher education also contributes to the success of rankings in other fields, for instance, in healthcare, international development, or the arts. Taking this step is critical to prevent university rankings from becoming “model cases” (Krause, 2021a) of research on rankings and to arrive at a more general understanding of rankings as a social practice across fields.

While we acknowledge its limitations, we maintain that our tentative exploration addresses a substantial gap in the literature: it suggests that the success of university rankings is not only due to macro-level trends such as the rise of neoliberal ideology, the transformation of universities into entrepreneurial actors, or the institutionalization of numbers as a rationalized mode of communication (cf. Jessop, 2017; Münch, 2014; Slaughter & Leslie, 1999). These perspectives have undoubtedly facilitated important insights into the success of rankings and their effects on academic fields. However, building on recent studies (cf. Barron, 2017; Kaidesoja, 2022; Lim, 2018) and our previous research (Ringel et al., 2021; see also Brankovic et al., 2022; Ringel, 2021), we contend that in order to fully understand the proliferation of university rankings, we also need to account for meso-level processes. Only then are we able to see how university rankings can prevail despite being exposed to frequent criticism. Our tentative answer to this question is that contemporary university rankings are equipped with a quality we refer to as discursive resilience.

What do we mean by discursive resilience? Resilience implies that rankers neither avoid criticism nor try to immunize rankings against it. A resilient approach means to not only tolerate challenges but also to accept and utilize them. As we have shown, criticism is turned into a valuable resource: in their effort to make rankings a legitimate form of evaluating university performance, rankers render improvement a joint endeavor pursued together with scholarly critics. The development of discursive resilience has several consequences: first, rankings are transformed from a heteronomous threat to scholarly autonomy into a scientized undertaking. They may be seen as imperfect but are not questioned as such. According to this logic, rankings, like any scientific project, can always be improved, whereas fundamental critique—especially when it concerns negative effects—appears inadequate and unproductive. Second, the discursive resilience of rankings also has consequences for their critics: we argue that critics’ mutual engagement with rankers is by no means a matter of individual flaws, naiveté, or dishonesty. Rather, the fact that scholars are co-opted and made involuntary accomplices is a function of the subject position of the ranking critic. Undoubtedly affected by the ubiquitous measurement of academic performance and often assessed in terms of societal outreach and policy impact, this subject position is hardly indifferent towards rankings and perhaps tempted to engage with media corporations and data companies. Such engagements tend to revolve around methodological critique, which seems natural to scholarly critics of rankings—after all, they are equipped with the expertise to monitor and enforce scientific standards.

These entanglements mandate a kind of reflexivity that transcends methodological issues (such as data quality, transparency, validity, or reliability) and calls upon us to carefully analyze the scholastic position of the ranking critic (cf. Bourdieu, 1990; see also Bacevic, 2019; Krause, 2021b). Whatever the intention, if it is voiced in the public domain and engages producers of rankings, scholarly criticism inevitably leaves behind the social presuppositions of the academic field, such as an interest in disinterested scientific knowledge production. Confronted with interests in practical applicability and exploitation, which differ from the interests that are taken for granted in the academic field, scholarly critique can be conveniently transformed into an (involuntary) accomplice in the institutionalization of university rankings. While our own critical approach is, of course, by no means exempt from such discursive dynamics and thus not immune to co-optation, we hope that our analysis contributes to a more comprehensive reflexivity.

References

Altbach, P. G. (2012). The globalization of college and university rankings. Change: The Magazine of Higher Learning, 44(1), 26–31. https://doi.org/10.1080/00091383.2012.636001

Amsler, S. S. (2014). University ranking: A dialogue on turning towards alternatives. Ethics in Science and Environmental Politics, 13(2), 155–166. https://doi.org/10.3354/esep00136

Amsler, S. S., & Bolsmann, C. (2012). University ranking as social exclusion. British Journal of Sociology of Education, 33(2), 283–301. https://doi.org/10.1080/01425692.2011.649835

Bacevic, J. (2019). Knowing neoliberalism. Social Epistemology, 33(4), 380–392. https://doi.org/10.1080/02691728.2019.1638990

Barnard, B. (2018). End college rankings: An open letter to the owners and editors of U.S. News And World Report. Forbes.com. Retrieved May 16, 2021, from https://www.forbes.com/sites/brennanbarnard/2018/09/28/end-college-rankings-an-open-letter-to-the-owners-and-editors-of-u-s-news-and-world-report/?sh=532acd2576cc

Barron, G. R. S. (2017). The Berlin principles on Ranking Higher Education Institutions: Limitations, legitimacy, and value conflict. Higher Education, 73(2), 317–333. https://doi.org/10.1007/s10734-016-0022-z

Baty, P. (2010). Ranking confession. Retrieved January 2, 2022, from https://www.insidehighered.com/views/2010/03/15/ranking-confession

Baty, P. (2013). An evolving methodology: The Times Higher Education World University Rankings. In M. Marope, P. J. Wells, & E. Hazelkorn (Eds.), Rankings and Accountability in Higher Education: Uses and Misuses (pp. 41–53). UNESCO.

Baty, P. (2018). This is why we publish the World University Rankings. Retrieved November 5, 2021, from https://www.timeshighereducation.com/blog/why-we-publish-world-university-rankings

Berger, M. (2001). Why the U.S. News and World Report Law School Rankings are both useful and important. Journal of Legal Education, 51(4), 487–502.

Biagioli, M., Kenney, M., Martin, B. R., & Walsh, J. P. (2019). Academic misconduct, misrepresentation, and gaming: A reassessment. Research Policy, 48(2), 401–413. https://doi.org/10.1016/j.respol.2018.10.025

Boltanski, L. (2011). On critique. A sociology of emancipation. Polity Press.

Bougnol, M.-L., & Dulá, J. H. (2015). Technical pitfalls in university rankings. Higher Education, 69(5), 859–866. https://doi.org/10.1007/s10734-014-9809-y

Bourdieu, P. (1990). The scholastic point of view. Cultural Anthropology, 5(4), 380–391. https://doi.org/10.1525/can.1990.5.4.02a00030

Brankovic, J. (2019). Satire, resignation and anger around higher education rankings and wankings. Retrieved January 2, 2022, from https://echer.org/higher-education-rankings-and-wankings

Brankovic, J., Ringel, L., & Werron, T. (2018). How rankings produce competition: The case of global university rankings. Zeitschrift für Soziologie, 47(4), 270–288. https://doi.org/10.1515/zfsoz-2018-0118

Brankovic, J., Ringel, L., & Werron, T. (2022). Spreading the gospel: Legitimizing university rankings as boundary work. Research Evaluation, Online First. https://doi.org/10.1093/reseval/rvac035

Bruni, F. (2016). Why college rankings are a joke. Retrieved January 2, 2022, from https://www.nytimes.com/2016/09/18/opinion/sunday/why-college-rankings-are-a-joke.html

Bush, D., & Peterson, J. (2013). Jukin’ the stats: The gaming of law school rankings and how to stop it. Connecticut Law Review, 45(4), 1236–1280.

Butler, D. (2007). Academics strike back at spurious rankings. Nature, 447, 514–515. https://doi.org/10.1038/447514b

Byrne, J. A. (2015). U.S. News disclosing less due to gaming. Retrieved January 2, 2022, from https://tippingthescales.com/2015/06/u-s-news-disclosing-less-because-of-gaming/

Cai Liu, N., Wu, Y., & Wang, Q. (Eds.). (2021). World-class universities. Global trends and institutional models. Leiden, Boston: Brill.

Calderon, A. (2020). New rankings results show how some are gaming the system. Retrieved December 23, 2021, from https://www.universityworldnews.com/post.php?story=20200612104427336

Chirikov, I. (2022). Does conflict of interest distort global university rankings? Higher Education, Online First. https://doi.org/10.1007/s10734-022-00942-5

Chu, J. (2021). Cameras of merit or engines of inequality? College ranking systems and the enrollment of disadvantaged students. American Journal of Sociology, 126(6), 1307–1346. https://doi.org/10.1086/714916

Chun, H., & Sauder, M. (2022). The power in managing numbers: Changing interdependencies and the rise of ranking expertise. Higher Education. https://doi.org/10.1007/s10734-022-00823-x

Collins, F. L., & Park, G.-S. (2016). Ranking and the multiplication of reputation: Reflections from the frontier of globalizing higher education. Higher Education, 72(1), 115–129. https://doi.org/10.1007/s10734-015-9941-3

Diep, F. (2022). Where the rankers meet the ranked. An annual conference illustrates college rankings’ enduring dominance. The Chronicle of Higher Education. Retrieved August 4, 2022, from https://www.chronicle.com/article/where-the-rankers-meet-the-ranked

Dill, D. D., & Soo, M. (2005). Academic quality, league tables, and public policy: A cross-national analysis of university ranking systems. Higher Education, 49(4), 495–533. https://doi.org/10.1007/s10734-004-1746-8

Elsevier. (2021). University rankings: A closer look for research leaders. Retrieved January 2, 2022, from https://www.elsevier.com/research-intelligence/university-rankings-guide

Espeland, W. N., & Sauder, M. (2007). Rankings and reactivity. How public measures recreate social worlds. American Journal of Sociology, 113(1), 1–40. https://doi.org/10.1086/517897

Espeland, W. N., & Stevens, M. L. (1998). Commensuration as a social process. Annual Review of Sociology, 24, 313–343. https://doi.org/10.1146/annurev.soc.24.1.313

Free, C., Salterio, S. E., & Shearer, T. (2009). The construction of auditability: MBA rankings and assurance in practice. Accounting, Organizations and Society, 34(1), 119–140. https://doi.org/10.1016/j.aos.2008.02.003

Gadd, E. (2020). University rankings need a rethink. Nature, 587, 523. https://doi.org/10.1038/d41586-020-03312-2

Gapper, J. (2021). University rankings are just an educated guess. Retrieved November 4, 2021, from https://www.ft.com/content/21da8a6b-d5e9-473a-86e0-056c489d55bf

Halffman, W., & Radder, H. (2015). The Academic Manifesto: From an occupied to a public university. Minerva, 53(2), 165–187. https://doi.org/10.1007/s11024-015-9270-9

Hallonsten, O. (2021). Stop evaluating science: A historical-sociological argument. Social Science Information, 60(1), 7–26. https://doi.org/10.1177/0539018421992204

Hamann, J. (2016). The visible hand of research performance assessment. Higher Education, 72(6), 761–779. https://doi.org/10.1007/s10734-015-9974-7

Hamann, J., & Kosmützky, A. (2021). Does higher education research have a theory deficit? Explorations on theory work. European Journal of Higher Education, 11(5), 468–488. https://doi.org/10.1080/21568235.2021.2003715

Hamann, J., & Schmidt-Wellenburg, C. (2020). The double function of rankings. Consecration and dispositif in transnational academic fields. In S. Bernhard & C. Schmidt-Wellenburg (Eds.), Charting transnational fields. Methodology for a political sociology of knowledge (pp. 160–177). London: Routledge.

Hazelkorn, E. (2011). Rankings and the reshaping of higher education: The battle for world class excellence. Palgrave Macmillan.

Hertig, H. P. (2016). Universities, rankings and the dynamics of global higher education: Perspectives from Asia, Europe and North America. Springer.

Holmes, R. (2020). Observations on the Indian ranking boycott. Retrieved January 2, 2022, from https://rankingwatch.blogspot.com/2020/05/observatinss-on-indian-ranking-boycott.html

IREG. (2006). Berlin Principles on Ranking of Higher Education Institutions. Retrieved January 2, 2022, from http://ireg-observatory.org/en_old/berlin-principles

IREG. (2022). IREG 2022 Warsaw conference. Academic rankings at the crossroads. Retrieved July 25, 2022, from https://ireg-observatory.org/en/events/ireg-2022-warsaw-conference/

Jessop, B. (2017). Varieties of academic capitalism and entrepreneurial universities. Higher Education, 73(6), 853–870. https://doi.org/10.1007/s10734-017-0120-6

Johnes, J. (2018). University rankings: What do they really show? Scientometrics, 115(1), 585–606. https://doi.org/10.1007/s11192-018-2666-1

Kaidesoja, T. (2022). A theoretical framework for explaining the paradox of university rankings. Social Science Information, 61(1), 128–153. https://doi.org/10.1177/05390184221079470

Kauppinen, I., Coco, L., & Brajkovic, L. (2016). Blurring boundaries and borders: Interlocks between AAU institutions and transnational corporations. In S. Slaughter & T. J. Barrett (Eds.), Higher education, stratification, and workforce development (pp. 33–57). Springer.

Kehm, B. M., & Stensaker, B. (Eds.). (2009). University rankings, diversity, and the new landscape of higher education. Sense Publishers.

Krause, M. (2021a). Model cases. On canonical research objects and sites. University of Chicago Press.

Krause, M. (2021b). On sociological reflexivity. Sociological Theory, 39(1), 3–18. https://doi.org/10.1177/0735275121995213

Kroth, A., & Daniel, H.-D. (2009). Internationale Hochschulrankings [International university rankings]. Zeitschrift für Erziehungswissenschaft, 11(4), 542. https://doi.org/10.1007/s11618-008-0052-0

Landahl, J. (2020). The PISA calendar: Temporal governance and international large-scale assessments. Educational Philosophy and Theory, 52(6), 625–639. https://doi.org/10.1080/00131857.2020.1731686

Leckert, M. (2021). (E-)Valuative metrics as a contested field: A comparative analysis of the Altmetrics- and the Leiden Manifesto. Scientometrics, 126, 9869–9903. https://doi.org/10.1007/s11192-021-04039-1

Leiber, T. (2017). University governance and rankings. The ambivalent role of rankings for autonomy, accountability and competition. Beiträge zur Hochschulforschung, 39(3–4), 30–51.

Leiter, B. (2013). Are the QS Rankings a fraud on the public? QS’s Head of Public Relations responds. Retrieved January 2, 2022, from https://leiterreports.typepad.com/blog/2013/05/are-the-qs-rankings-a-fraud-on-the-public-qss-head-of-public-relations-responds.html

Lim, M. A. (2018). The building of weak expertise: The work of global university rankers. Higher Education, 75(3), 415–430. https://doi.org/10.1007/s10734-017-0147-8

Marginson, S. (2014). University rankings and social science. European Journal of Education: Research, Development and Policy, 49(1), 45–59. https://doi.org/10.1111/ejed.12061

Marope, M., Wells, P. J., & Hazelkorn, E. (Eds.). (2013). Rankings and accountability in higher education. Uses and misuses. UNESCO.

Marope, M., & Wells, P. J. (2013). University rankings: The many sides of the debate. In M. Marope, P. J. Wells, & E. Hazelkorn (Eds.), Rankings and accountability in higher education. Uses and misuses (pp. 7–19). UNESCO.

Mollis, M., & Marginson, S. (2002). The assessment of universities in Argentina and Australia: Between autonomy and heteronomy. Higher Education, 43(3), 311–330. https://doi.org/10.1023/A:1014603823622

Morse, R. (2009). Do the rankings ‘punish’ law schools? Retrieved January 2, 2022, from https://www.usnews.com/education/blogs/college-rankings-blog/2009/02/02/do-the-rankings-punish-law-schools

Morse, R., & Brooks, E. (2021). How U.S. News calculated the 2022 Best Colleges Rankings. Retrieved January 2, 2022, from https://www.usnews.com/education/best-colleges/articles/how-us-news-calculated-the-rankings

Münch, R. (2013). The colonization of the academic field by rankings: Restricting diversity and obstructing the progress of knowledge. In T. Erkkilä (Ed.), Global university rankings. Challenges for European higher education (pp. 196–219). Palgrave.

Münch, R. (2014). Academic capitalism. Universities in the global struggle for excellence. Routledge.

O’Connell, C. (2013). Research discourses surrounding global university rankings: Exploring the relationship with policy and practice recommendations. Higher Education, 65(6), 709–723. https://doi.org/10.1007/s10734-012-9572-x

O’Malley, B. (2016). “Global university rankings data are flawed” – HEPI. Retrieved January 2, 2022, from https://www.universityworldnews.com/post.php?story=20161215001420225

Redden, E. (2013). Scrutiny of QS rankings. Retrieved January 2, 2022, from https://www.insidehighered.com/news/2013/05/29/methodology-qs-rankings-comes-under-scrutiny

Ringel, L. (2021). Challenging valuations: How rankings navigate contestation. Zeitschrift für Soziologie, 50(5), 289–305. https://doi.org/10.1515/zfsoz-2021-0020

Ringel, L., & Werron, T. (2020). Where do rankings come from? A historical-sociological perspective on the history of modern rankings. In A. Epple, W. Erhart, & J. Grave (Eds.), Practices of comparing: Ordering and changing the worlds (pp. 137–170). Bielefeld University Press.

Ringel, L., Hamann, J., & Brankovic, J. (2021). Unfreiwillige Komplizenschaft: Wie wissenschaftliche Kritik zur Beharrungskraft von Hochschulrankings beiträgt [Unwitting complicity: How scholarly critique contributes to the persistence of university rankings]. Leviathan, 49(special issue 38), 386–407. https://doi.org/10.5771/9783748911418-386

Saisana, M., d’Hombres, B., & Saltelli, A. (2011). Rickety numbers: Volatility of university rankings and policy implications. Research Policy, 40(1), 165–177. https://doi.org/10.1016/j.respol.2010.09.003

Sanoff, A. P. (1998). Rankings are here to stay; colleges can improve them. Chronicle of Higher Education, 45(2), 96–100.

Sauder, M. (2008). Interlopers and field change: The entry of U.S. News into the field of legal education. Administrative Science Quarterly, 53(2), 209–234. https://doi.org/10.2189/asqu.53.2.209

Sauder, M., & Fine, G. A. (2008). Arbiters, entrepreneurs, and the shaping of business school reputations. Sociological Forum, 23(4), 699–723. https://doi.org/10.1111/j.1573-7861.2008.00091.x

Schmoch, U. (2015). The informative value of international university rankings: Some methodological remarks. In I. M. Welpe, J. Wollersheim, S. Ringelhan, & M. Osterloh (Eds.), Incentives and performance: Governance of research organizations (pp. 141–154). Springer International Publishing. https://doi.org/10.1007/978-3-319-09785-5_9

Shore, C., & Wright, S. (2015). Audit culture revisited: Rankings, ratings, and the reassembling of society. Current Anthropology, 56(3), 421–444. https://doi.org/10.1086/681534

Slaughter, S., & Leslie, L. L. (1999). Academic capitalism: Politics, policies, and the entrepreneurial university. Johns Hopkins University Press.

Sowter, B. (2013). Issues of transparency and applicability in global university rankings. In M. Marope, P. J. Wells, & E. Hazelkorn (Eds.), Rankings and accountability in higher education. Uses and misuses (pp. 55–68). UNESCO.

Surappa, M. K. (2016). World university rankings and subject ranking in engineering and technology (2015–2016): A case for greater transparency. Current Science, 111(3), 461–464.

Quacquarelli Symonds. (2021). Understanding the methodology: QS World University Rankings. Retrieved January 2, 2022, from https://www.topuniversities.com/university-rankings-articles/world-university-rankings/understanding-methodology-qs-world-university-rankings

Tang, Q. (2013). Foreword. In M. Marope, P. J. Wells, & E. Hazelkorn (Eds.), Rankings and accountability in higher education. Uses and misuses (pp. 5–6). UNESCO.

Times Higher Education. (2021). Impact Rankings methodology 2021. THE.

van Houtum, H., & van Uden, A. (2022). The autoimmunity of the modern university: How its managerialism is self-harming what it claims to protect. Organization, 29(1), 197–208. https://doi.org/10.1177/1350508420975347

Wedlin, L. (2006). Ranking business schools: Forming fields, identities and boundaries in international management education. Edward Elgar.

Welsh, J. (2019). Ranking academics: Toward a critical politics of academic rankings. Critical Policy Studies, 13(2), 153–173. https://doi.org/10.1080/19460171.2017.1398673

Wermund, B. (2017). How U.S. News College Rankings promote economic inequality on campus. Retrieved January 9, 2021, from https://www.politico.com/interactives/2017/top-college-rankings-list-2017-us-news-investigation

Westerheijden, D. F. (2015). Global university rankings, an alternative and their impacts. In J. Huisman, H. de Boer, D. D. Dill, & M. Souto-Otero (Eds.), The Palgrave international handbook of higher education policy and governance (pp. 417–436). Palgrave Macmillan. https://doi.org/10.1007/978-1-137-45617-5_23

Funding

Open Access funding enabled and organized by Projekt DEAL.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher's note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Hamann, J., Ringel, L. The discursive resilience of university rankings. High Educ 86, 845–863 (2023). https://doi.org/10.1007/s10734-022-00990-x

DOI: https://doi.org/10.1007/s10734-022-00990-x