Abstract

In this article, an alternative to Tinto’s integration theory of student persistence is proposed and tested. In the proposed theory, time available for individual study is considered a major determinant of both study duration and graduation rate of students in a particular curriculum. In this view, other activities in the curriculum, in particular lectures, constrain self-study time and therefore must have a negative impact on persistence. To test this theory, we collected study duration and graduation rate information for all students (almost 14,000) enrolling in eight Dutch medical schools between 1989 and 1998. In addition, information was gathered regarding the timetables of each of these curricula in the particular period: lecture hours, hours spent in small-group tutorials, practicals, and time available for self-study. Structural equation modeling was used to study relations among these variables. In line with our predictions, time available for self-study was the only determinant of graduation rate and study duration. Lectures were negatively related to self-study time, negatively related to graduation rate, and positively related to study duration. The results suggest that extensive lecturing may be detrimental in higher education. However, in the curricula employing limited lecturing, considerable energy was spent in supporting self-study activities of students and preventing postponement of learning. Given our findings, both activities will likely have large payoffs, in particular in curricula with low graduation rates.

Introduction

Does the design of a curriculum play a role in how many students graduate? And does it influence how fast they do it? Answers to these two questions are important, because low graduation rates and study delays seem to be problems of higher education worldwide. For instance, in most European countries university graduation rates are 55% or less (in Italy even 35%), and those who graduate exceed the nominally available time by at least 35% on average (Van Den Berg and Hofman 2005).

What are the sources of delay and less than satisfactory graduation rates? Within curricula, most variation can be explained by differences in aptitude and background characteristics of students, such as socio-economic status and gender. For instance, in a study, involving more than 5,000 first-year students in six different subjects, it was demonstrated that a one point higher secondary education GPA doubled the chance of completing the first year within the allocated time frame (Jansen 2004). In a recent study of Dutch medical education, study delay was negatively related to the final examination GPA in secondary education: The lower the GPA, the more time needed to complete medical education (Cohen-Schotanus et al. 2006). A difference in initial aptitude often emerges as the most important determinant of whether a student fails or succeeds (Arulampalam et al. 2007; Astin 1997; Lindblom-Ylanne et al. 1999).

Less is known about the influence of curriculum characteristics on dropout. In this realm, dropout is almost universally explained by Tinto’s theory of student integration (Tinto 1987, 1997). According to this theory, persistence of students is primarily a function of the extent to which these students are enabled by the curriculum to feel socially and academically integrated (Lamport 1993; Pascarella and Terenzini 1991; Zepke et al. 2006). The question is, of course, which features of a curriculum are particularly conducive in bringing about this psychological state among students. According to Tinto, social and academic integration tend to emerge if a student has friends among his peers, if he knows some of the faculty personally, if he is active in student bodies on campus, if the curriculum provides opportunities to discuss subject matter with peers and faculty, or if students are allowed to engage in common projects. Under these conditions, dropout is less likely to occur. Tinto (1998) argues that colleges and universities would be best served by reorganizing themselves in ways that promote greater educational community among students and faculty, but is not very specific on how to go about reaching that goal.

Recently, the importance of active learning strategies in this respect has come to the fore. The assumption here is that classroom activities such as collaborative learning, classroom discussions, holding presentations, managing projects together, and other forms of active learning would involve students more in their own learning and, hence, foster social and academic integration. Braxton et al. (2000), for instance, demonstrated that the opportunity to have discussions in the classroom among students and with teachers positively influenced subsequent “institutional commitment” of their students. In a large-scale quantitative study, Van Den Berg and Hofman (2005) demonstrated that curricula emphasizing small-group instruction rather than lectures as the main instructional method generally had higher graduation rates than other curricula. This finding was corroborated in a study by Schmidt et al. (2009) demonstrating that three medical active learning curricula in The Netherlands had up to 10% higher retention rates than all other, more conventional curricula. Olds and Miller (2004) describe an experiment in which engineering students and faculty (1) worked in integrated project modules, (2) worked in small groups emphasizing discussion of subject matter and team teaching, and (3) were part of peer study groups for mutual support. Although this educational experiment only covered the first year of an undergraduate program, students who participated graduated at a significantly higher rate than their peers in a control group, and reflected retrospectively that the program had had a strong positive effect on their college careers. Lonka and Ahola (1995) demonstrated that students participating in activating instruction were more successful than those participating in lecture-based courses (although they needed more time in the first years of study).

While Tinto’s theory is significant for understanding persistence, it has two shortcomings. The first is that it does not clearly outline how feelings of being socially and academically integrated transform into higher levels of persistence. What is the causal intermediary mechanism between being integrated and persistence? It seems unlikely that integration itself is sufficient for higher completion to occur, irrespective of academic performance. As Tinto (1997) puts it: “… we have yet to explore the critical linkages between involvement in classrooms, student learning, and persistence (pp. 600–601).” The second shortcoming is that Tinto’s theory remains somewhat ambiguous as to the critical ingredient in the curriculum responsible for better learning. Given its emphasis on the community of learners (Tinto 1998), one is tempted to think that it is particularly the opportunity to collaborate in small groups that fosters learning and, hence, persistence. However, active learning curricula are also characterized by curricular practices that tend to co-vary with the emphasis on small-group instruction. For instance, small-group instruction often goes together with less emphasis on lecturing. Therefore, since the timetable with scheduled activities is reduced, more time for self-study is available. It may be that better learning and persistence are primarily a function of this latter phenomenon.

The theory we propose here as an alternative to Tinto’s integration assumptions takes as its point of departure not the student’s perception of integration, but the learning process itself. To pass examinations, students have to process often fairly large amounts of information. They usually do this through independent and active homework, using elaboration and memorization techniques that help them remember for the test. These learning activities take time. It is therefore not farfetched to assume that the more time is available for these activities (assuming that this time will be used appropriately), the better the learning. The better the learning, the better the performance on examinations, the shorter the study duration, and the lower the dropout. This is an extension to the curriculum level of the time-on-task hypothesis, stating that it is time spent on learning that determines its extent (Carroll 1963). Therefore, the more room the curriculum provides for such independent and active learning, the lower the dropout from that curriculum will be. However, time is a limited resource; it cannot be made available to students indefinitely. This leads to the counterintuitive prediction that activities in the curriculum that do not directly enable or support these learning processes actually impede learning by restricting time-on-task, and therefore must increase dropout. A case in point is lectures. Lectures may serve useful functions in the curriculum, but they cannot be a substitute for the self-directed learning activity of the student. In fact, listening to lectures may preclude actual learning from taking place, because necessary memorization activities such as rehearsal of, and elaboration upon, what the teacher is conveying are virtually impossible while one is at the same time trying to follow the teacher’s line of thought. Therefore, our prediction is that the number of lectures in a curriculum must be negatively associated with graduation rate. This view on the use of lectures in education seems to put us in direct opposition to the proponents of direct instruction (e.g., Kirschner et al. 2006; Klahr and Nigam 2004), who believe that learning cannot be effective if teachers only provide minimal guidance, a point of view, by the way, that is prevalent among university teachers as well.

The data to be discussed below came from eight medical schools in the Netherlands. In addition to studying the effect of self-study and lectures, they also allowed us to examine the influence of time spent on small-group tutorials and practicals as potential contributors to learning and graduation. This leads us to three hypotheses to be considered while attempting to explain potential differences in learning and, hence, graduation rates and study duration in the context of our theory: (1) an activity hypothesis, (2) a direct-instruction hypothesis, and (3) a collaboration hypothesis. The activity hypothesis suggests that it is crucial for achievement to be an active student spending long hours of independent learning to master the knowledge of his domain of study. Under this hypothesis, the student would need little guidance through lectures to succeed. Central to success is the amount of time spent on self-study. The second hypothesis proposes that subjects such as medicine are too complex to study on your own. You need direct instruction by experts who help you decide what to study and how to study it (Kirschner et al. 2006). Under this hypothesis, the student would need lectures to a sufficient extent to succeed in higher education. The third hypothesis focuses on the contributions of small-group instruction. This kind of instruction would enable students to engage themselves with peers and faculty around the topics to be mastered. If Tinto is right, one would expect that the amount of time spent in these small groups would affect learning positively and therefore would help students persist.

To test these hypotheses, we collected study duration and graduation rate information for all students (almost 14,000) enrolling in eight Dutch medical schools between 1989 and 1998. In addition, we analyzed evaluative reports about each of these curricula, reports that were written to provide information for external review committees of experts visiting these medical schools in 1992 and 1997. This enabled us to collect information indicating to what extent the three features mentioned above (self-study time, lectures, and small groups) characterized these curricula in that particular period. Subsequently, we studied the influence of these features on graduation rate and delay among these ten generations of students in these eight schools using structural equation modeling (SEM).

Method

Context: the medical curricula involved

The Netherlands has eight medical schools. Since the late 1980s and early 1990s, considerable variation has emerged in their approach to the training of doctors. Three of these schools can be characterized as having problem-based or “active learning” curricula. Tutorial groups form a central feature of their approach, and lectures are relatively few. In addition, students spend a relatively large amount of time on self-study. Two schools can be characterized by a combination of small-group work and lectures, while the remaining three medical schools can be described as conventional to varying degrees in their approach to teaching. For more details see Table 1, adapted from Schmidt et al. (2009).

Admission to Dutch medical schools is dealt with at the national level, employing a weighted lottery procedure based on achievement on a national university entrance examination. This procedure (inadvertently) results in groups of students in different schools that are similar in terms of past performance, age, gender, and motivation to study medicine (Roeleveld 1997). In addition, all schools employ a 6-year curriculum and the subject matter taught is largely overlapping.

Finally, all eight medical schools have agreed on a common framework of graduation criteria since the late 1980s (Metz et al. 1994). This framework describes in considerable detail the final objectives, the knowledge and skills that every graduate must be able to demonstrate. These final objectives are updated on a regular basis. Since there are no national licensing examinations in the Netherlands, each school takes care that the examination system covers the final objectives. An external review committee of experts visiting all medical schools on behalf of the government checks every 5 years to what extent this is the case. It may therefore come as no surprise that examination performance is highly similar, even in schools with different didactic philosophies, and that the curriculum comparison studies in which these schools were involved routinely fail to find differences in knowledge among students (Imbos et al. 1984; Prince et al. 2003; Van der Vleuten et al. 2004; Verhoeven et al. 1998; Verwijnen et al. 1990), suggesting that all graduates on average have a similar level of knowledge.

Participants

Participants in the study were 13,845 medical students, being the entire population of students entering one of the eight medical schools in The Netherlands between 1989 and 1998. These were the ten most recent generations for which data are available (the 9-year graduation rates of the 1998 generation only became available in 2007). The data were publicly accessible.

Measurement

For all medical schools in The Netherlands graduation rate data were computed, using data made available by the Dutch Association of Universities (VSNU). The graduation rate of a program is defined as the percentage succeeding of those students initially entering the program. This index was computed for each generation of medical students that entered university between 1989 and 1998 for the first time. Since students would often not complete their training in the nominally available time of 6 years, 9-year graduation rates were computed. The 9-year graduation rate was one of our two dependent variables. In addition, mean study duration in years was computed for those graduating.
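The two dependent variables defined above can be sketched in a few lines of Python. This is only an illustration of the definitions, not the authors' procedure: the function names and the cohort numbers are hypothetical and do not come from the VSNU data.

```python
from statistics import mean


def graduation_rate(n_entered: int, n_graduated: int) -> float:
    """Percentage of the students initially entering who graduated in the window."""
    if n_entered <= 0:
        raise ValueError("a generation must contain at least one entrant")
    if not 0 <= n_graduated <= n_entered:
        raise ValueError("graduates must be between 0 and the number of entrants")
    return 100.0 * n_graduated / n_entered


def mean_study_duration(durations_in_years: list[float]) -> float:
    """Mean study duration in years, computed over graduates only."""
    return mean(durations_in_years)


# Hypothetical generation: 200 entrants, 176 of whom graduated within 9 years.
rate_9_year = graduation_rate(200, 176)

# Hypothetical durations (in years) for a handful of graduates.
duration = mean_study_duration([6.0, 7.0, 7.5, 8.0])

print(rate_9_year, duration)
```

Note that the denominator is the number of students who initially entered, so students who drop out lower the graduation rate but do not enter the mean study duration.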

In 1992 and 1997, external review committees visited all schools to assess the quality of medical training in The Netherlands (Gezondheidswetenschappen 1992, 1997). To prepare for these visits, the eight schools had to produce a critical appraisal of their educational efforts called Self-Study. The resulting 16 Self-Study reports and the assessment reports of the expert committees contain detailed information about the contents and structure of the various curricula at various points in time. This information enabled the characterization of all eight medical schools on the three curricular facets mentioned in the Introduction. In addition, for the record we included number of hours spent on practicals. (1) Mean number of hours for individual study was computed by taking the total number of hours spent on independent study in the first four—preclinical—years, as reported by the external review committees and dividing these numbers by 4 (the number of years) and 40 (the number of weeks per year). The same was done for (2) number of lecturing hours, (3) number of hours spent in small-group tutorials, and (4) number of hours spent on practicals. The latter included medical skills training and activities in the health care system such as preclinical rotations. The findings were corroborated through interviews with most educators involved in these programs.
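The conversion from reported totals to mean weekly hours is a single division, sketched below. The function and constant names are our own; the input of 4,480 h is the total self-study time that the Discussion reports for the school with the most room for self-study.

```python
PRECLINICAL_YEARS = 4   # first four years of the 6-year curriculum
WEEKS_PER_YEAR = 40     # teaching weeks per year used in the computation


def mean_weekly_hours(total_hours: float) -> float:
    """Convert total hours over the preclinical years into mean hours per week."""
    return total_hours / (PRECLINICAL_YEARS * WEEKS_PER_YEAR)


# About 4,480 h of self-study over four years corresponds to 28 h per week.
print(mean_weekly_hours(4480))
```

The same function applies unchanged to lecture, tutorial, and practical hours, since all four measures were divided by the same 160 weeks.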

Analysis

The resulting data set consisted of 80 generations of students (eight schools times ten generations). In this sample, attempts were made to predict graduation rate and study duration based on knowledge of the particular curriculum. To that end, descriptive statistics and correlations among the variables of interest were computed. In addition, hypothesized structural relations among them were tested using structural equation modeling (SEM).

Results and discussion

Table 2 contains means and standard deviations of variables of interest, averaged over eight schools and ten generations of Dutch medical students entering medical school each year between 1989 and 1998.

Inspection of Table 2 suggests the following. Graduation rates of the eight Dutch medical schools are quite high, at least compared with other Dutch university curricula, where the average graduation rate is around 50% (Van Den Berg and Hofman 2005). Keep in mind, however, that the graduation rate reported for the medical students is the 9-year graduation rate, while nominal study duration is 6 years. The mean 6-year graduation rate is considerably lower, equalling 13%. Those who graduate need on average an additional 1.30 years to do so. Lectures made up 22% of the curricula these students were in, equal to about 9 h of lecturing per week, although with a large range of 12.35 h. Tutorials covered around 5 h per week, practicals 4 h. Students had on average 22 h available for homework; among curricula, however, the range was 11.60 h. The remaining time was consumed by less frequent activities such as examinations or learning to conduct empirical research.

Table 3 contains product-moment correlations between the various curriculum process measures and graduation rate and study duration.

As was predicted by the theory outlined in the Introduction section, time available for self-study was related to graduation rate: the more time was available, the higher the numbers of students graduating 5–8 years later. The number of lectures, on the other hand, showed a negative relationship with graduation rate: the more lectures were given, the lower the completion rate. A reverse pattern was found for these variables and study duration. Higher levels of self-study were associated with shorter study duration, whereas more lectures were associated with longer study duration. Numbers of tutorials and practicals were not related to the outcome variables; however, both showed a moderate negative relationship with number of lectures: the more lecturing in a curriculum, the fewer tutorials and practicals.
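For readers unfamiliar with the statistic, a product-moment (Pearson) correlation can be computed as sketched below. The weekly hours in the example are hypothetical values chosen only to show a strong negative relationship; they are not the data behind Table 3.

```python
from math import sqrt


def pearson_r(x: list[float], y: list[float]) -> float:
    """Product-moment correlation between two equally long sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length with at least 2 values")
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sums of cross-products and squared deviations from the means.
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


# Hypothetical weekly hours for five curricula (illustration only).
lecture_hours = [3.0, 6.0, 9.0, 12.0, 15.0]
self_study_hours = [28.0, 25.0, 22.0, 18.0, 16.0]

r = pearson_r(lecture_hours, self_study_hours)
print(round(r, 2))
```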

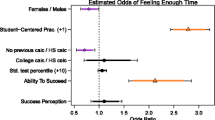

To study relationships among the variables of interest in greater depth, we conducted a series of path analyses, testing models suggested by the hypotheses outlined in the Introduction. We considered three hypotheses: an activity hypothesis in which self-study played a central role, a direct-instruction hypothesis in which lectures played the leading role, and a collaboration hypothesis stressing the importance of small-group work. When considered independently of the other factors, these three independent variables all did poorly as an explanation for persistence and study duration: the models’ Chi-squares were significantly different from zero, and other indicators of fit such as CFI were unsatisfactory. We therefore decided to deal only with combinations of these variables. We will only present models here that showed sufficient fit with the data. First, we tested models relating lectures and time for self-study with graduation rate and study duration, in accordance with our time-on-task theory. Second, models were tested in which time spent in small-group tutorials was the determining factor. Third, combinations of these models were attempted.

Table 4 suggests the following. The six models considered fit the data well, as witnessed by the fact that in almost all cases Chi-square did not significantly differ from zero, indicating that the particular model and its relations sufficiently fitted the data. (The only marginal exception is Model 4, delineating the relationship between lectures, time for self-study and study duration.) Interesting to note is that if the tutorials variable was paired with self-study without lectures being present, the path between tutorials and self-study was non-significant. Therefore, it is most useful to concentrate on models involving numbers of lectures and self-study time as explanatory variables. We focus on Models 3a and 3b, because they are the most comprehensive ones. Model 3a can be understood as follows: the number of lecture hours scheduled in a curriculum has a strong negative impact on the amount of time available for self-study; the more lectures, the less self-study time. Time for self-study, however, positively influences graduation rate. The more time a curriculum allows for self-study, the more students graduate. Numbers of tutorials scheduled have a marginally negative influence on time for self-study. Model 3b can be understood along the same lines, with the proviso that here self-study time is negatively related to study duration: the more time for self-study, the shorter the time needed to graduate.
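The structure of Model 3a (lectures and tutorials predicting self-study time, which in turn predicts graduation rate) can be illustrated with a minimal path-analysis sketch. Several assumptions apply: the data below are synthetic and generated with arbitrary coefficients, the paths are estimated with ordinary least squares on standardized variables rather than with the SEM software and estimation method used in the study, and no fit indices such as Chi-square or CFI are computed. The sketch shows only how an indirect effect arises as a product of path coefficients.

```python
import numpy as np


def standardize(x: np.ndarray) -> np.ndarray:
    """Return z-scores, so regression weights are standardized path coefficients."""
    return (x - x.mean()) / x.std(ddof=1)


def path_coefficients(y: np.ndarray, *predictors: np.ndarray) -> np.ndarray:
    """Least-squares weights of standardized y on standardized predictors."""
    X = np.column_stack([standardize(p) for p in predictors])
    beta, *_ = np.linalg.lstsq(X, standardize(y), rcond=None)
    return beta


# Synthetic stand-in for 80 school-by-generation observations (NOT the study data).
rng = np.random.default_rng(0)
n = 80
lectures = rng.uniform(2.0, 15.0, n)    # weekly lecture hours
tutorials = rng.uniform(2.0, 8.0, n)    # weekly tutorial hours
self_study = 30.0 - 0.9 * lectures - 0.3 * tutorials + rng.normal(0.0, 1.5, n)
graduation = 70.0 + 0.7 * self_study + rng.normal(0.0, 2.0, n)

# Path 1: lectures and tutorials -> self-study time.
b_lect, b_tut = path_coefficients(self_study, lectures, tutorials)
# Path 2: self-study time -> graduation rate.
(b_self,) = path_coefficients(graduation, self_study)
# Indirect effect of lectures on graduation rate via self-study time.
indirect_lectures = b_lect * b_self

print(f"lectures -> self-study:   {b_lect:.2f}")
print(f"tutorials -> self-study:  {b_tut:.2f}")
print(f"self-study -> graduation: {b_self:.2f}")
print(f"indirect effect of lectures on graduation: {indirect_lectures:.2f}")
```

In a mediation structure of this kind, lectures have no direct path to graduation rate; a negative path to self-study combined with a positive path from self-study to graduation yields a negative indirect effect.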

General discussion

In this article we propose a straightforward theory explaining graduation rate and study duration. In this theory, time available for self-study plays a central role. Since preparing for examinations at a sufficient level of depth requires extensive processing of often large amounts of information, summarizing, elaboration, and rehearsal are necessary individual activities that require time and call for being secluded from others. Our predictions were: (1) The more time is available for such self-directed learning activities, the more students will pass examinations and eventually graduate. (2) They will also do this in a shorter time, with fewer delays caused by repeats of examinations. (3) Activities in the curriculum that restrict time for self-directed learning, such as large numbers of lectures, may actually impede such learning and, therefore, negatively influence graduation rate and study duration. To test this theory, we analyzed graduation-rate and study-duration data of ten generations of students entering one of the eight medical schools in The Netherlands between 1989 and 1998. In addition, we collected information about the various curricula involved by analyzing reports written by the particular schools in preparation for the visit of external review committees (Gezondheidswetenschappen 1992, 1997). These reports contain estimates of the amount of time within the curriculum spent on lectures, tutorials, practicals, etc. In addition, these reports contained information about the amount of time available for independent study.

Our analyses clearly demonstrated the central role of self-study time in determining graduation rate and study duration. Generally, students who were part of a program that allowed for more time for self-study completed their training faster and in larger numbers. These effects were sizable. The availability of five additional hours of self-study per week would lead to almost 4% more graduates. These students would gain almost a quarter of a year before graduation. Our findings also demonstrate that number of lecturing hours has a strong negative effect on self-study time and, through it, on graduation rate. The more lecturing, the less time for self-study, and the fewer students completing their studies. A reverse effect was found for study duration: Students take longer to graduate when they receive more lectures. One might argue that the high negative correlation between lectures and self-study is trivial because it follows from the fact that a week has a fixed number of hours. Therefore, more hours of lecturing automatically lead to fewer hours available for self-study. However, other activities scheduled during the week, such as time for tutorials or practicals, correlate far lower with self-study time, suggesting that lectures indeed play a decisive role in the determination of how much time is left for self-study.

Why are lectures counterproductive to the extent documented here?

Let’s first agree that the finding that lectures negatively affect persistence is highly counterintuitive. Teachers generally believe that lectures are good for students. This is why, in many conventional curricula, students spend half of their time or more in lecture theatres. In the Netherlands, the government has recently ordained a minimum number of teaching hours in secondary education of 26 h per week in response to a perceived drop in the quality of high-school education. This belief is by no means restricted to teachers or governments, as witnessed by recent attacks in the scientific literature on those who are seen to promote active learning (e.g., Kirschner et al. 2006; Klahr and Nigam 2004; Mayer 2004). These proponents of direct instruction believe that learning cannot be effective if teachers only provide “minimal guidance.”

Our findings are not only counterintuitive but also seem to contradict a large body of research demonstrating that lecturing, if done well, in fact improves learning and performance (see for instance Light 2001; Perry and Smart 2007). However, the contradiction may not be as self-evident as it seems. Retention of students is multifactorially determined. Good teaching and too much teaching may be counteracting forces: while the quality of direct instruction may support learning and retention, the quantity of such instruction may work against it. Imagine two schools in which an equal number of excellent teachers work (and some mediocre lecturers). If one program provides 10 h of lectures per week, whereas the other program provides 30 h of lectures per week, then, based on our theory, the first program can be predicted to be doing a better job in terms of student retention, despite the fact that both programs have similar numbers of good teachers.

Our results seem to extend little known findings of the Dutch researcher Peter Vos (Van der Drift and Vos 1987, pp. 84–85). In a study of eighteen curricula, Vos was able to demonstrate a curvilinear relationship between the numbers of hours scheduled in these curricula and student-reported self-study time. Curricula that offered few lectures did not encourage students to spend much time on self-study. Self-study linearly increased with the number of scheduled hours. However, beyond a certain point the number of self-study hours reported decreased with further increases of scheduled hours. (The optimum lay between 8 and 10 h of timetabled activities per week.) His study shows that there is a trade-off between the two activities. Self-study time is not an unlimited resource that can be made available to students indefinitely; it is constrained by the scheduled activities. In his contribution, Vos implicitly assumes that students learn from lectures enough to make up for the loss of self-study time. Our study, however, shows that this is not the case. The trade-off between scheduled hours and self-study has serious negative consequences. If scheduled activities dominate the curriculum, student learning is hampered and chances of survival are diminished.

One may argue that it is only conceivable that attending lectures is counterproductive if lectures take up most of the students’ available time. The fact that lectures required on average 9 h per week of the students’ time, as is shown in Table 2, suggests that this is hardly the case here. However, Table 1 demonstrates that there were large differences between curricula in the number of hours dedicated to lectures; the school with the highest number had seven times more lectures than the school with the lowest number. The same applies to time available for self-study. At completion of the first four preclinical years, students from the school with the most room for self-study had had about 4,480 h available for self-study, whereas students in the school with the least room for self-study had had only slightly more than half of that time available. The school with the largest amount of time available for self-study graduated 92% of those enrolled. For this they needed an extra 0.94 years. The school with the lowest amount of self-study time graduated 83%, and these students needed an additional 1.56 years to accomplish this (Schmidt et al. 2009). So, large differences in lecturing hours and time for self-study indeed seem to produce fairly large differences in student retention and study duration.

What about Tinto’s integration theory of persistence (Tinto 1998)? We failed to find meaningful positive relations between amount of time scheduled for tutorials and persistence. It is important to stress at this point that our theory of how dropout and delay emerge focuses on the quantity of instruction rather than on its quality. It has nothing to say about how good or bad the teaching was in a particular program, but only how much time was spent on these teaching activities. It is therefore very well possible that the quality of the small-group encounters with peers and staff makes a difference beyond the amount of time spent on them. Again, it may very well be possible that tutorials have a positive effect on learning (in fact, there is ample evidence that they do; Springer et al. 1999), whereas too many tutorials constrain self-study time and therefore have a (slight) negative effect. In addition, the curricula studied here differed widely in terms of what was considered a small-group tutorial. Some of them applied groups of eight guided by a tutor. Others considered groups of 12–16 to be small groups. In some of these programs groups were student-driven, in particular in the problem-based curricula involved. In other programs, small groups were mainly used for additional instruction by teachers. These qualitative differences could not be included in this study, because the publicly available documents used for describing the curricula at hand did not allow for such distinctions.

A second point of caution pertains to our central concept: time available for self-study. In judging our findings, one should keep in mind that our study concerned time available, not time actually used by students. On the other hand, if time available were not appropriately used for self-study and, consequently, were not to lead to appropriate examination performance, our findings relating time available, graduation rate, and study duration would be entirely absurd.

A third constraint on our findings is that we were not able to reconstruct small year-to-year adaptations of curriculum timetables within and between student generations. The sources available 10 to 15 years after the fact simply do not allow for more detailed information than we were able to amass. On the other hand, we spoke with most educators involved in the curricula studied in the particular time frame (in fact, some of them are co-authoring this article), and ascertained that changes, if any, were small and non-consequential.

An important possible limitation of our study is that the data were gathered in the context of medical education. In most countries, students have to compete for a limited number of positions. Therefore, only the brightest students tend to get into medical school. It is possible that such students need much less guidance than less academically gifted students. This may limit the generalizability of our findings. On the other hand, a study by Van Den Berg and Hofman (2005), involving almost 9,000 Dutch students from a variety of curricula, found a similar effect: the more lectures were provided, the less study progress was made. A related issue may be the extent to which Dutch medical education is a representative model for medical education elsewhere. Our impression is that, at least in Europe, the number of lectures given is generally higher than in the Netherlands, increasing the potential relevance of the notions put forward in this article.

Concluding remarks

The study discussed in this article demonstrates positive effects of time available for self-study in a curriculum on students’ 6- to 9-year graduation rates. In addition, our study revealed negative effects of extensive lecturing on the persistence of students. Of course, taking the argument that lectures negatively influence student retention to its extreme would imply a curriculum without lectures. This would be absurd. Lectures serve a number of useful functions: (1) they guide students in what is important and what is not (and therefore help them allocate their self-study time appropriately); (2) they may play a motivational role, since good teachers are able to illustrate how interesting their subject is; and (3) they may help in the initial comprehension of difficult subjects. These are three important conditions for learning to take place. Not only is a minimum number of lectures necessary to stimulate sufficient self-study (Van der Drift and Vos 1987), teacher-directed activity also brings structure to the curriculum. Students, like all human beings, are just-in-time managers and need this structure in order not to postpone work on their studies. In fact, the curricula involved in this study were successful in terms of graduation rate and study duration not simply because they allowed sufficient time for self-study, but because they made certain that students used this self-study time appropriately through study assignments, problems to be understood and solved, and other means of encouraging students to work hard. Small-group work played a central role in this approach (Schmidt et al. 2009). Encouraging efficient use of self-study time and preventing students from postponing self-study seem to be crucial elements in the success of these curricula.

Notes

We presently have no specific hypothesis with regard to the influence of the number of practicals on student retention, but included it in the analyses because the curricula involved tended to differ on this variable as well.

References

Arulampalam, W., Naylor, R. A., & Smith, J. P. (2007). Dropping out of medical school in the UK: Explaining the changes over ten years. Medical Education, 41(4), 385–394.

Astin, A. W. (1997). How “good” is your institution’s retention rate? Research in Higher Education, 38(6), 647–658.

Braxton, J. M., Milem, J. F., & Sullivan, A. S. (2000). The influence of active learning on the college student departure process—Toward a revision of Tinto’s theory. Journal of Higher Education, 71(5), 569.

Carroll, J. (1963). A model of school learning. Teachers College Record, 64, 723–733.

Cohen-Schotanus, J., Muijtjens, A. M. M., Reinders, J. J., Agsteribbe, J., van Rossum, H. J. M., & van der Vleuten, C. P. M. (2006). The predictive validity of grade point average scores in a partial lottery medical school admission system. Medical Education, 40(10), 1012–1019.

Gezondheidswetenschappen, V.-V. G. e. (1992). Onderwijsvisitatie geneeskunde en gezondheidswetenschappen (Assessing quality of curricula in medicine and health sciences). Utrecht, The Netherlands: Vereniging van Samenwerkende Nederlandse Universiteiten.

Gezondheidswetenschappen, V.-V. G. e. (1997). Onderwijsvisitatie geneeskunde en gezondheidswetenschappen (Assessing quality of curricula in medicine and health sciences). Utrecht, The Netherlands: Vereniging van Samenwerkende Nederlandse Universiteiten.

Imbos, T., Drukker, J., Van Mameren, H., & Verwijnen, M. (1984). The growth of knowledge of anatomy in a problem-based curriculum. In H. G. Schmidt & M. L. De Volder (Eds.), Tutorials in problem-based learning: A new direction in teaching the health professions (pp. 106–115). Assen/Maastricht, The Netherlands: Van Gorcum.

Jansen, E. (2004). The influence of the curriculum organization on study progress in higher education. Higher Education, 47(4), 411–435.

Kirschner, P. A., Sweller, J., & Clark, R. E. (2006). Why minimal guidance during instruction does not work: An analysis of the failure of constructivist, discovery, problem-based, experiential, and inquiry-based teaching. Educational Psychologist, 41(2), 75–86.

Klahr, D., & Nigam, M. (2004). The equivalence of learning paths in early science instruction—Effects of direct instruction and discovery learning. Psychological Science, 15(10), 661–667.

Lamport, M. A. (1993). Student-faculty informal interaction and the effect on College-student outcomes—A review of the literature. Adolescence, 28(112), 971–990.

Light, R. J. (2001). Making the most of college: Students speak their minds. Cambridge, MA: Harvard University Press.

Lindblom-Ylanne, S., Lonka, K., & Leskinen, E. (1999). On the predictive value of entry-level skills for successful studying in medical school. Higher Education, 37(3), 239–258.

Lonka, K., & Ahola, K. (1995). Activating instruction: How to foster study and thinking skills in higher education. European Journal of Psychology of Education, 10(4), 351–368.

Mayer, R. E. (2004). Should there be a three-strikes rule against pure discovery learning? The case for guided methods of instruction. American Psychologist, 59(1), 14–19.

Metz, J. C. M., Pels-Rijcken-Van Erp Taalman Kip, E. H., & van den Brand-Valkenburg, B. W. M. (1994). Raamplan 1994 Artsopleiding (Framework 1994 for Medical Training). Nijmegen, The Netherlands: Universitair Publicatiebureau.

Olds, B. M., & Miller, R. L. (2004). The effect of a first-year integrated engineering curriculum on graduation rates and student satisfaction: A longitudinal study. Journal of Engineering Education, 93(1), 23–35.

Pascarella, E. T., & Terenzini, P. (1991). How college affects students. San Francisco, CA: Jossey-Bass.

Perry, R. P., & Smart, J. C. (Eds.). (2007). The scholarship of teaching and learning in higher education: An evidence-based perspective. Dordrecht, The Netherlands: Springer.

Prince, K., Van Mameren, H., Hylkema, N., Drukker, J., Scherpbier, A. J. J. A., & Van der Vleuten, C. P. M. (2003). Does problem-based learning lead to deficiencies in basic science knowledge? An empirical case on anatomy. Medical Education, 37(1), 15–21.

Roeleveld, J. (1997). Lotingscategorieën en studiesucces: Rapportage aan de adviescommissie Toelating numerus-Fixusopleidingen (Lottery categories and academic achievement: Report to the advisory committee admissions numerus-Fixus programs). Amsterdam, The Netherlands: University of Amsterdam: SCO/Kohnstamm Instituut.

Schmidt, H. G., Cohen-Schotanus, J., & Arends, L. (2009). Impact of problem-based, active learning on graduation rates of ten generations of Dutch medical students. Medical Education, 43(3), 211–218.

Springer, L., Stanne, M. E., & Donovan, S. S. (1999). Effects of small-group learning on undergraduates in science, mathematics, engineering, and technology: A meta-analysis. Review of Educational Research, 69(1), 21–51.

Tinto, V. (1987). Leaving college: Rethinking the causes and cures of student attrition. Chicago, IL: The University of Chicago Press.

Tinto, V. (1997). Classrooms as communities—Exploring the educational character of student persistence. Journal of Higher Education, 68(6), 599–623.

Tinto, V. (1998). Colleges as communities: Taking research on student persistence seriously. Review of Higher Education, 21(2), 167–177.

Van Den Berg, M. N., & Hofman, W. H. A. (2005). Student success in university education: A multi-measurement study of the impact of student and faculty factors on study progress. Higher Education, 50(3), 413–446.

Van der Drift, K. D. J., & Vos, P. (1987). Anatomie van een leeromgeving, een onderwijseconomische analyse van universitair onderwijs (Anatomy of a learning environment, an economic analysis of university education). Lisse, The Netherlands: Swets & Zeitlinger.

Van der Vleuten, C. P. M., Schuwirth, L. W. T., Muijtjens, A. M. M., Thoben, A. J. N. M., Cohen-Schotanus, J., & Van Boven, C. P. A. (2004). Cross institutional collaboration in assessment: A case on progress testing. Medical Teacher, 26(8), 719–725.

Verhoeven, B. H., Verwijnen, G. M., Scherpbier, A., Holdrinet, R. S. G., Oeseburg, B., Bulte, J. A., et al. (1998). An analysis of progress test results of PBL and non-PBL students. Medical Teacher, 20(4), 310–316.

Verwijnen, G. M., Van der Vleuten, C., & Imbos, T. (1990). A comparison of an innovative medical school with traditional schools: An analysis in the cognitive domain. In Z. Nooman, H. G. Schmidt, & E. Ezzat (Eds.), Innovation in medical education, an evaluation of its present status (pp. 40–49). New York, NY: Springer Publishing.

Zepke, N., Leach, L., & Prebble, T. (2006). Being learner centred: One way to improve student retention? Studies in Higher Education, 31(5), 587–600.

Open Access

This article is distributed under the terms of the Creative Commons Attribution Noncommercial License which permits any noncommercial use, distribution, and reproduction in any medium, provided the original author(s) and source are credited.

Cite this article

Schmidt, H.G., Cohen-Schotanus, J., van der Molen, H.T. et al. Learning more by being taught less: a “time-for-self-study” theory explaining curricular effects on graduation rate and study duration. High Educ 60, 287–300 (2010). https://doi.org/10.1007/s10734-009-9300-3

DOI: https://doi.org/10.1007/s10734-009-9300-3